A Comprehensive Guide to fNIRS-EEG Data Preprocessing Pipelines for Robust Multimodal Fusion

Abstract

This article provides a comprehensive overview of data preprocessing pipelines for the fusion of functional near-infrared spectroscopy (fNIRS) and electroencephalography (EEG), tailored for researchers and drug development professionals. It covers the foundational principles of both modalities, explores methodological approaches for integrated analysis—from early fusion to deep learning strategies—and addresses critical troubleshooting steps for artifact correction and data quality control. Furthermore, it outlines validation frameworks and comparative analyses of fusion techniques, highlighting their applications in brain-computer interfaces and clinical neurology. The goal is to serve as a technical guideline for implementing reproducible and effective fNIRS-EEG fusion to advance multimodal brain imaging research.

Understanding the Core Principles of fNIRS and EEG for Multimodal Integration

Fundamental Concepts of EEG

What is the neurophysiological basis of EEG signals?

Electroencephalography (EEG) measures electrical activity generated by the synchronized firing of neuronal populations in the brain. These signals represent the summation of post-synaptic potentials from pyramidal cells that are oriented in parallel, creating electrical fields strong enough to be detected at the scalp surface. The basis of EEG lies in the rhythmic, synchronized oscillations of these neural populations, which produce distinct brain wave patterns classified by their frequency ranges: delta (0.5-4 Hz), theta (4-8 Hz), alpha (8-13 Hz), beta (13-30 Hz), and gamma (>30 Hz) [1] [2].
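The band definitions above can be turned into a simple band-power estimate. The following is a minimal sketch using Welch's method from SciPy on a synthetic single-channel signal; the 45 Hz upper edge for gamma, the sampling rate, and the function name `band_powers` are assumptions made for illustration only.

```python
import numpy as np
from scipy.signal import welch

# Band edges in Hz as listed in the text; the 45 Hz gamma ceiling is an assumption.
BANDS = {"delta": (0.5, 4), "theta": (4, 8), "alpha": (8, 13),
         "beta": (13, 30), "gamma": (30, 45)}

def band_powers(signal, fs):
    """Return the mean power spectral density within each band for one channel."""
    freqs, psd = welch(signal, fs=fs, nperseg=int(2 * fs))  # 2 s windows
    return {name: psd[(freqs >= lo) & (freqs < hi)].mean()
            for name, (lo, hi) in BANDS.items()}

# Synthetic check: a 10 Hz oscillation plus weak noise should be alpha-dominated.
fs = 250.0
t = np.arange(0, 20, 1 / fs)
rng = np.random.default_rng(0)
eeg = np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(t.size)
powers = band_powers(eeg, fs)
dominant = max(powers, key=powers.get)
```

On real recordings the same function would be applied per channel after artifact removal, and relative rather than absolute power is often the more comparable quantity across subjects.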

What makes the alpha rhythm particularly significant in EEG research?

The alpha rhythm (8-13 Hz), first described by Hans Berger in 1929, remains one of the most studied EEG oscillations due to its prominent role in brain function [3]. This rhythm is most evident during wakeful relaxation with closed eyes and is thought to serve an inhibitory function by actively suppressing irrelevant brain regions during cognitive processing [3]. Recent research suggests the alpha rhythm may have played a pivotal role in the cognitive evolution of nocturnal mammals, potentially serving to maintain wakefulness during nighttime hours [3]. Studies have shown that alpha oscillations exhibit characteristic patterns across different states: reduced alpha power during cognitive engagement, increased alpha synchronization during internal processing, and specific spatial distributions that vary with age and cognitive status [3].

Technical Troubleshooting Guide & FAQs

EEG Recording Issues

Q: Why are my reference or ground electrodes showing persistent high impedance or oversaturation?

A: This issue commonly arises from improper electrode-skin contact or individual physiological factors [4].

- Step-by-Step Troubleshooting:

- Check Electrode Connections: Ensure all electrodes are properly plugged in, reapply them with proper skin preparation (cleaning, abrasion, and conductive paste), and check for "bridging" between electrodes due to excess gel [4].

- Test Alternative Placements: If issues persist, try alternative ground placements such as the participant's hand, collarbone area, or sternum [4].

- Isolate Hardware Issues: Restart the amplifier unit and acquisition software. If available, test with a different headbox or complete system in another room to rule out hardware failure [4].

- Remove Metal Objects: Ask the participant to remove all metal accessories, including necklaces and bracelets [4].

- Consider Individual Factors: Some individuals may carry more static electricity or have unique skin properties that cause oversaturation. In such cases, placing the ground further away (e.g., on the hand) may resolve the issue [4].

Q: How can I minimize jitter and latency when synchronizing EEG with other devices like fNIRS?

A: Precise temporal alignment is crucial for multimodal research [5] [6].

- Solution: Implement a Lab Streaming Layer (LSL)-based system for data acquisition [5] [6]. This open-source platform helps overcome two common issues:

- Jitter: Millisecond-order temporal variability that readily affects the signal-to-noise ratio of electrophysiological outcomes.

- Latency: Constant delays between different data streams.

LSL ensures precise time-alignment of datasets, which is particularly critical for detecting stimulus-induced transient neural responses or testing hypotheses about temporal relationships between different functional aspects [5] [6].
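Once every sample carries a timestamp on a shared clock, a constant latency can be subtracted and both streams can be interpolated onto one uniform time axis. The sketch below illustrates that post-hoc step with NumPy only; it does not use the LSL API, and the function name, the 0.25 s offset, and the synthetic streams are assumptions for illustration.

```python
import numpy as np

def align_to_common_clock(t_a, x_a, t_b, x_b, offset_b=0.0, fs_out=10.0):
    """Resample two timestamped streams onto one uniform time axis.

    offset_b is a known constant latency (seconds) that is subtracted from
    stream B's timestamps before interpolation.
    """
    t_b = t_b - offset_b
    start = max(t_a[0], t_b[0])
    stop = min(t_a[-1], t_b[-1])
    t_common = np.arange(start, stop, 1.0 / fs_out)
    return t_common, np.interp(t_common, t_a, x_a), np.interp(t_common, t_b, x_b)

# Synthetic example: both streams observe the same 0.2 Hz oscillation.
rng = np.random.default_rng(0)
f = 0.2
t_a = np.arange(3000) * 0.01 + rng.uniform(-0.002, 0.002, 3000)  # ~100 Hz, irregular sampling
x_a = np.sin(2 * np.pi * f * t_a)
t_true_b = np.arange(300) * 0.1                                  # 10 Hz stream
x_b = np.sin(2 * np.pi * f * t_true_b)
t_b = t_true_b + 0.25                                            # timestamps carry a 0.25 s latency

t_common, a_aligned, b_aligned = align_to_common_clock(t_a, x_a, t_b, x_b, offset_b=0.25)
max_err = np.max(np.abs(a_aligned - b_aligned))
```

Linear interpolation is adequate here because the example signal is slow relative to both sampling rates; faster EEG content would normally be kept at its native rate and only the event markers mapped across streams.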

Data Preprocessing & Fusion Challenges

Q: Which pre-processing steps have the most significant impact on EEG data quality?

A: Research indicates that signal segmentation and re-referencing methods are particularly critical [7].

- Segmentation: The approach to segmenting continuous data (into trials/epochs) significantly affects subsequent cleaning procedures [7].

- Re-referencing: Four common approaches show different impacts [7]:

- Common Averaged Reference (CAR)

- robust Common Averaged Reference (rCAR)

- Reference Electrode Standardization Technique (REST)

- Reference Electrode Standardization and Interpolation Technique (RESIT)

Studies found similar topographical representations after CAR, REST, and RESIT, while rCAR showed the most different event-related spectral perturbation (ERSP) topographical pattern [7].

- Artifact Removal: Interestingly, the choice of Independent Component Analysis (ICA) algorithm (e.g., SOBI vs. Extended Infomax) had relatively small effects on the cleaning procedure compared to segmentation and re-referencing [7].
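Of the re-referencing methods listed above, the common average reference is the simplest to state in code: the mean across channels is subtracted at every time point. The following is a minimal NumPy sketch on synthetic data; the array layout (channels by samples) and the function name are assumptions, and the robust and head-model-based variants (rCAR, REST, RESIT) are not shown.

```python
import numpy as np

def common_average_reference(data):
    """Subtract the across-channel mean at each time point.

    data: array of shape (n_channels, n_samples).
    """
    return data - data.mean(axis=0, keepdims=True)

rng = np.random.default_rng(1)
shared = rng.standard_normal(1000)              # activity common to all channels
raw = rng.standard_normal((8, 1000)) + shared   # 8 channels sharing that activity
car = common_average_reference(raw)
```

After this step the channel average is zero at every sample, which is why bad channels should be excluded or interpolated first: a single noisy electrode otherwise leaks into every re-referenced channel.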

Q: What are the primary challenges in fusing EEG with fNIRS data?

A: The fusion of EEG and fNIRS is complicated by their fundamentally different signal origins and artifact profiles [8].

- Complementary Properties: EEG captures fast electrical neural signals (millisecond resolution) with limited spatial precision, while fNIRS measures slower hemodynamic responses (reflecting blood oxygenation changes) with better spatial localization [8] [9].

- Artifact Challenges: Both modalities are contaminated by physiological artifacts, but these manifest differently [8]:

- EEG is susceptible to ocular (EOG) and muscle (EMG) artifacts.

- fNIRS is contaminated by systemic physiology (cardiac, respiratory, blood pressure) that affects hemodynamics in the scalp and brain.

- Fusion Complexity: The different temporal resolutions and physiological origins make fusion non-trivial. Most current methods rely on data concatenation, model-based approaches, or decision-level strategies, while more advanced source-decomposition techniques that could reveal complex neurovascular coupling processes remain underrepresented [8].

Experimental Protocols for Multimodal Research

Protocol 1: Motor Imagery and Mental Arithmetic Task Classification

This protocol outlines the methodology for acquiring a simultaneous EEG-fNIRS dataset for brain-computer interface applications, adapted from a publicly available benchmark dataset [9].

- Participants: 29 subjects (28 right-handed, 1 left-handed; 14 males, 15 females; average age 28.5 ± 3.7 years) [9].

- Experimental Tasks:

- Motor Imagery (MI): Imagination of left-hand versus right-hand movements without physical execution. Each trial consists of a rest period followed by an imagination period cued by visual stimuli [9].

- Mental Arithmetic (MA): Performing serial subtractions of a fixed number from a given starting number (e.g., repeatedly subtracting 7 from 1000). Each trial includes a rest period and a task period [9].

- Data Acquisition:

- EEG: Recorded using a specific electrode cap following the international 10-10 or 10-20 system.

- fNIRS: Recorded using optodes placed over relevant cortical areas (e.g., motor cortex for MI, prefrontal cortex for MA).

- Data Analysis (DeepSyncNet Framework):

- Preprocessing: Standard filtering and artifact removal for both modalities.

- Feature Extraction: 1D EEG and fNIRS signals are converted into 3D tensors to capture spatiotemporal information. A Receptive Field Block (RFB) is used for multi-scale feature extraction [9].

- Fusion: An Attentional Fusion (AF) mechanism with residual connections adaptively integrates EEG and fNIRS features at early network layers. Feature Attention Mechanisms (FAM) and Spatiotemporal Attention (STA) dynamically refine the fused representations [9].

- Classification: A learnable weighted fusion mechanism optimizes the contribution of each modality for final task classification [9].

Protocol 2: Investigating Emotional Processing with EEG Microstates

This protocol examines alpha rhythm dynamics during emotional experiences using EEG microstate analysis [3].

- Stimuli: Presentation of emotionally charged music videos categorized as "happy" or "sad" [3].

- EEG Recording: Standard high-density EEG recording from 64+ channels.

- Analysis Pipeline:

- Preprocessing: Standard filtering, artifact removal, and re-referencing.

- Microstate Analysis: Identification of prototypical topographic maps (classes A, B, C, D) that remain stable for ~60-120 ms before rapidly transitioning to another map.

- Source Localization: Use of eLORETA (exact Low Resolution Brain Electromagnetic Tomography) to estimate cortical sources of activity.

- Statistical Comparison: Compare microstate occurrence, duration, and functional connectivity between "happy" and "sad" conditions.

- Expected Outcomes: Increased class D microstate occurrence and current source density in the central parietal region during happy music (indicating enhanced attention), and elevated class C microstate occurrence and functional connectivity in the precuneus during sad music (associated with mind-wandering) [3].

Table 1: Impact of Different Re-referencing Methods on EEG Data Quality

| Re-referencing Method | Acronym | Key Characteristics | Effect on ERSP Topography |

|---|---|---|---|

| Common Averaged Reference [7] | CAR | Rereferences to the average of all electrodes | Similar to REST and RESIT |

| robust Common Averaged Reference [7] | rCAR | A variant of CAR less sensitive to outliers | Shows most different pattern |

| Reference Electrode Standardization Technique [7] | REST | Estimates reference at infinity using a head model | Similar to CAR and RESIT |

| Reference Electrode Standardization and Interpolation Technique [7] | RESIT | Combines standardization with interpolation | Similar to CAR and REST |

Table 2: Comparative Characteristics of EEG and fNIRS Neuroimaging Techniques

| Characteristic | EEG | fNIRS |

|---|---|---|

| Measured Signal | Electrical activity from synchronized neuronal firing [8] [9] | Hemodynamic response (blood oxygenation) [8] [9] |

| Temporal Resolution | Millisecond level [8] [9] | Slower (seconds) [8] [9] |

| Spatial Resolution | Limited [8] [9] | Better than EEG [8] [9] |

| Primary Artifacts | Ocular (EOG), muscle (EMG) [8] | Systemic physiology (cardiac, respiratory, blood pressure) [8] |

| Main Strength | Direct measure of neural electrical activity with high temporal precision [9] | Better spatial localization and less susceptible to movement artifacts [8] [9] |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Essential Materials for EEG-fNIRS Multimodal Research

| Item | Function & Application |

|---|---|

| EEG Electrode Cap | Holds electrodes in standardized positions (10-10/10-20 system) for consistent scalp coverage [9] [10]. |

| Conductive Electrode Gel/Paste | Improves electrical contact between scalp and electrodes, reducing impedance and signal noise [4]. |

| fNIRS Optodes | Sources emit near-infrared light into the head; detectors measure light intensity after tissue absorption [8]. |

| Abrasive Skin Prep Gel | Gently removes dead skin cells and oils to significantly reduce skin-electrode impedance [4]. |

| Lab Streaming Layer (LSL) | Open-source platform for synchronized multimodal data acquisition, critical for temporal alignment of EEG and fNIRS [5] [6]. |

| Reference & Ground Electrodes | Essential for creating a stable electrical reference point; often placed on mastoids or other locations [4] [10]. |

Core Theoretical Foundations

The Hemodynamic Response in fNIRS

What is the hemodynamic response and how does fNIRS measure it?

Functional near-infrared spectroscopy (fNIRS) is a non-invasive optical neuroimaging technique that measures brain activity by detecting hemodynamic changes associated with neuronal activation. This process relies on neurovascular coupling, where active neuronal tissue triggers a rapid delivery of blood, resulting in localized changes in blood oxygenation [11] [12].

During brain activation, a complex physiological sequence occurs: First, neuronal activity increases local energy demands, initially depleting oxygen and causing a brief rise in deoxygenated hemoglobin. This triggers a subsequent oversupply of cerebral blood flow through local arterial vasodilation. The resulting hemodynamic response typically shows an increase in oxygenated hemoglobin and a decrease in deoxygenated hemoglobin as oxygenated blood flushes through the active region [12] [13].

fNIRS Hemodynamic Response Pathway:

The typical adult hemodynamic response follows a characteristic pattern, often modeled using a canonical hemodynamic response function composed of two Gamma functions to characterize the positive response and undershoot [14]. However, this response can vary between brain regions, across trial repetitions, and among individuals. In newborn populations, for instance, studies have demonstrated a more mixed hemodynamic response compared to adults, potentially due to developing neurovascular coupling mechanisms [13].

The Modified Beer-Lambert Law

How does the Modified Beer-Lambert Law convert light attenuation into hemoglobin concentrations?

The Modified Beer-Lambert Law (MBLL) is the fundamental principle enabling fNIRS to quantify changes in hemoglobin concentrations from light attenuation measurements. This approach generalizes the traditional Beer-Lambert law to account for light scattering in biological tissues [15] [16].

Principles of the Modified Beer-Lambert Law

The MBLL is implemented through these key equations:

Optical Density: ( OD = -\log\left(\frac{I}{I_0}\right) = \varepsilon \cdot c \cdot d \cdot DPF + G ) [15], where ( I_0 ) is the incident light intensity, ( I ) is the detected light intensity, ( \varepsilon ) is the molar extinction coefficient, ( c ) is the chromophore concentration, ( d ) is the source-detector separation, ( DPF ) is the differential pathlength factor, and ( G ) accounts for light loss due to scattering.

Differential Form: ( \Delta OD = -\log\left(\frac{I(t)}{I_0}\right) \approx \langle L \rangle \, \Delta\mu_a(t) ) [16]. This differential form relates changes in optical density to changes in the absorption coefficient ( \mu_a ), where ( \langle L \rangle ) represents the mean photon pathlength.

For practical calculation of hemoglobin concentrations, the system of equations becomes:

[ \begin{bmatrix} \Delta OD_{\lambda_1} \\ \Delta OD_{\lambda_2} \end{bmatrix} = d \cdot DPF \cdot \begin{bmatrix} \varepsilon_{HbO_2}^{\lambda_1} & \varepsilon_{HbR}^{\lambda_1} \\ \varepsilon_{HbO_2}^{\lambda_2} & \varepsilon_{HbR}^{\lambda_2} \end{bmatrix} \cdot \begin{bmatrix} \Delta [HbO_2] \\ \Delta [HbR] \end{bmatrix} ]

This allows researchers to solve for the concentration changes of oxyhemoglobin (( \Delta [HbO_2] )) and deoxyhemoglobin (( \Delta [HbR] )) by measuring optical density changes at multiple wavelengths [15] [12].
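The two-wavelength system can be solved numerically as a 2x2 linear system. The sketch below is a round-trip check under stated assumptions: the extinction coefficients in `E` are illustrative placeholders rather than tabulated values, and the separation, DPF, and concentration values are arbitrary numbers chosen only to show that inversion recovers what the forward model produced.

```python
import numpy as np

# Illustrative extinction coefficients (placeholder values, NOT tabulated data).
# Rows: wavelengths (lambda_1, lambda_2); columns: (HbO2, HbR).
E = np.array([[0.6, 1.5],
              [1.1, 0.8]])

def mbll_invert(delta_od, d_cm, dpf, extinction=E):
    """Solve delta_OD = d * DPF * E @ [dHbO2, dHbR] for the concentration changes.

    delta_od: array of shape (2, n_samples), one row per wavelength.
    """
    return np.linalg.solve(d_cm * dpf * extinction, delta_od)

# Round trip: simulate delta_OD from known concentration changes, then invert.
true_conc = np.array([[1.0, 2.0, 3.0],       # dHbO2 over three samples
                      [-0.3, -0.6, -0.9]])   # dHbR over three samples
d_cm, dpf = 3.0, 6.0                         # assumed separation and DPF
delta_od = d_cm * dpf * E @ true_conc
recovered = mbll_invert(delta_od, d_cm, dpf)
```

In practice the coefficients would come from a published extinction table for the instrument's actual wavelengths, and a wavelength-specific DPF may be preferable to a single value.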

Technical Support & Troubleshooting

Frequently Asked Questions

FAQ 1: Why is my fNIRS signal showing an inverted hemodynamic response?

An inverted hemodynamic response (decrease in HbO₂ instead of increase) can result from several factors:

- Physiological Variability: Newborns and infants often exhibit mixed hemodynamic responses due to developing neurovascular coupling. Approximately 46% of studies in newborns report atypical responses [13].

- Improper Probe Placement: Ensure optodes are positioned over the cortical region of interest with adequate pressure without causing discomfort.

- Systemic Confounds: Physiological processes like blood pressure changes (Mayer waves), respiration, or cardiac pulsation can contaminate the signal. Implement short-separation channels to regress out systemic influences [12] [17].

- Task Design Issues: Overly complex paradigms or insufficient rest periods may cause atypical responses. Review your block/event-related design timing.

FAQ 2: How can I distinguish true neural activation from physiological noise?

Physiological noise is a common challenge in fNIRS experiments. Implement these strategies:

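- Frequency Filtering: Apply bandpass filters (typically 0.01-0.5 Hz) to remove slow drifts and cardiac (~1 Hz) oscillations [18] [12]. Note that respiratory (~0.3 Hz) oscillations lie inside this passband, so suppressing them requires a lower upper cutoff or a separate band-stop filter.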

- Short-Separation Regression: Use short-distance channels (<1 cm) to capture superficial contaminants that can be regressed from long-distance channels [17].

- Signal Quality Metrics: Calculate the Scalp Coupling Index (SCI) to identify poorly coupled channels. Remove channels with SCI <0.5 [18].

- Adaptive Filtering: Employ Kalman filters or principal component analysis to separate physiological noise from neural signals [14].
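Short-separation regression, in its simplest form, fits the short channel to the long channel by least squares and keeps the residual. The sketch below assumes one short channel per long channel and a purely linear, instantaneous relationship; the signal frequencies and mixing weight are synthetic choices for illustration.

```python
import numpy as np

def short_separation_regress(long_ch, short_ch):
    """Remove the least-squares fit of a short channel (plus offset) from a long channel."""
    X = np.column_stack([short_ch, np.ones_like(short_ch)])
    beta, *_ = np.linalg.lstsq(X, long_ch, rcond=None)
    return long_ch - X @ beta

fs = 10.0
t = np.arange(1000) / fs
rng = np.random.default_rng(0)
systemic = np.sin(2 * np.pi * 0.1 * t)      # Mayer-wave-like scalp signal
neural = np.sin(2 * np.pi * 0.03 * t)       # slow stand-in for an evoked response
long_ch = neural + 0.8 * systemic           # long channel sees both
short_ch = systemic + 0.05 * rng.standard_normal(t.size)

cleaned = short_separation_regress(long_ch, short_ch)
corr_before = np.corrcoef(long_ch, neural)[0, 1]
corr_after = np.corrcoef(cleaned, neural)[0, 1]
```

More elaborate variants add the regressor to a GLM design matrix or allow a lag between scalp and cortical signals; this sketch only shows the core idea.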

FAQ 3: What are the optimal parameters for the canonical hemodynamic response function in fNIRS?

The canonical HRF in fNIRS is typically modeled using two Gamma functions with these key parameters [14]:

| Parameter | Typical Value | Description |

|---|---|---|

| Response Delay | 2-4 seconds | Time to peak response after stimulus onset |

| Undershoot Delay | 8-12 seconds | Time to undershoot minimum |

| Response Dispersion | 1.0-1.5 | Width of the positive response |

| Undershoot Dispersion | 1.5-2.0 | Width of the undershoot |

| Response-to-Undershoot Ratio | 6:1 | Amplitude ratio of response to undershoot |

Optimal parameters vary by brain region, task paradigm, and population. For motor tasks, the peak HbO₂ response typically occurs around 6 seconds post-stimulus [18].
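A two-Gamma response function of this kind can be sketched with SciPy. The default arguments below follow a widely used SPM-style parameterisation (delays of 6 s and 16 s, unit dispersions, 6:1 ratio); they are an assumption for illustration and differ from the ranges in the table, which can be passed in instead.

```python
import numpy as np
from scipy.stats import gamma

def double_gamma_hrf(t, peak_delay=6.0, undershoot_delay=16.0,
                     peak_disp=1.0, undershoot_disp=1.0, ratio=6.0):
    """Difference of two Gamma densities, scaled to unit peak amplitude.

    Each lobe is a Gamma density with shape delay/dispersion and scale dispersion,
    so its maximum lies near (delay - dispersion) seconds.
    """
    positive = gamma.pdf(t, peak_delay / peak_disp, scale=peak_disp)
    undershoot = gamma.pdf(t, undershoot_delay / undershoot_disp, scale=undershoot_disp)
    hrf = positive - undershoot / ratio
    return hrf / hrf.max()

t = np.arange(0, 30, 0.1)
hrf = double_gamma_hrf(t)
t_peak = t[np.argmax(hrf)]       # time of the positive peak
t_trough = t[np.argmin(hrf)]     # time of the undershoot minimum
```

Convolving this kernel with a stimulus boxcar gives a regressor for GLM analysis; fitting the delay and dispersion per subject is one way to accommodate the variability noted above.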

Troubleshooting Common Experimental Issues

Problem: Poor signal quality across multiple channels

- Check optode-scalp coupling: Ensure adequate pressure and use appropriate amounts of gel if using electro-optical systems.

- Verify source-detector distance: Maintain 3-4 cm separation for adult cortical measurements. Distances <1 cm primarily sample extracerebral tissue [18].

- Assess ambient light contamination: Use opaque head caps and shield measurement environment from external light sources.

- Evaluate signal-to-noise ratio: Calculate coefficient of variation for each channel; remove channels with excessive noise (>15-20%).
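The coefficient-of-variation check in the last item can be sketched as follows. The 15% threshold is taken from the lower end of the range quoted above; the array layout and the synthetic intensities are assumptions for illustration.

```python
import numpy as np

def coefficient_of_variation(raw_intensity):
    """Per-channel CV in percent; raw_intensity has shape (n_channels, n_samples)."""
    return 100.0 * raw_intensity.std(axis=1) / raw_intensity.mean(axis=1)

rng = np.random.default_rng(4)
stable = 1.0 + 0.02 * rng.standard_normal(2000)   # roughly 2% variation
noisy = 1.0 + 0.30 * rng.standard_normal(2000)    # roughly 30% variation
cv = coefficient_of_variation(np.vstack([stable, noisy]))
keep = cv <= 15.0                                  # boolean mask of channels to retain
```

The CV is computed on raw intensity rather than on optical density or concentration, since those are baseline-relative quantities with a mean near zero.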

Problem: Inconsistent responses across subjects

- Standardize preprocessing pipeline: Apply identical filtering, motion correction, and quality thresholds across all subjects [12].

- Account for anatomical variability: Use 3D digitizer or MRI co-registration when possible to verify optode placement.

- Control physiological states: Standardize instructions regarding caffeine, food intake, and physical activity before experiments.

- Implement quality control metrics: Reject epochs with excessive motion artifacts (>80-100 μM amplitude) or poor scalp coupling [18].

Problem: Difficulty interpreting HbO₂ and HbR responses

- Expect canonical response pattern: Typically, HbO₂ increases while HbR decreases during neural activation.

- Check for cross-talk: Significant positive correlation between HbO₂ and HbR may indicate systemic contamination rather than neural activity.

- Validate with control conditions: Compare activation during task periods versus baseline or control conditions.

- Consider population-specific responses: Infant and clinical populations may show atypical response patterns [13].

Experimental Protocols & Methodologies

Standard fNIRS Processing Pipeline

fNIRS Data Processing Workflow:

Step-by-Step Processing Protocol

Step 1: Convert Raw Intensity to Optical Density

- Calculate optical density as: ( OD = -\log_{10}\left(\frac{I}{I_0}\right) )

- Where ( I ) is measured intensity and ( I_0 ) is reference intensity

- Perform this conversion for each wavelength [18]

Step 2: Quality Assessment and Channel Exclusion

- Calculate Scalp Coupling Index (SCI) for each channel

- Exclude channels with SCI <0.5

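- Handle channels by source-detector distance: set aside channels <1 cm (short channels) from the cortical analysis, keeping them as regressors if short-separation regression is planned, and remove channels >4.5 cm (excessive attenuation) [18]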
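The Scalp Coupling Index is commonly computed as the zero-lag correlation between the two wavelengths of a channel after band-passing both to the cardiac range, on the reasoning that a well-coupled optode shows the heartbeat at both wavelengths. The sketch below follows that idea; the 0.5-2.5 Hz band, the filter order, and the synthetic signals are assumptions for illustration, and the 0.5 threshold is the one given in the step above.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

def scalp_coupling_index(od_wl1, od_wl2, fs, band=(0.5, 2.5)):
    """Zero-lag correlation of the two wavelengths within the cardiac band."""
    sos = butter(3, band, btype="bandpass", fs=fs, output="sos")
    a = sosfiltfilt(sos, od_wl1)
    b = sosfiltfilt(sos, od_wl2)
    return float(np.corrcoef(a, b)[0, 1])

fs = 10.0
t = np.arange(600) / fs
rng = np.random.default_rng(2)
cardiac = np.sin(2 * np.pi * 1.1 * t)   # ~66 beats per minute

# Well-coupled channel: both wavelengths carry the cardiac pulsation.
sci_good = scalp_coupling_index(cardiac + 0.1 * rng.standard_normal(t.size),
                                0.8 * cardiac + 0.1 * rng.standard_normal(t.size), fs)
# Poorly coupled channel: independent noise at each wavelength.
sci_bad = scalp_coupling_index(rng.standard_normal(t.size),
                               rng.standard_normal(t.size), fs)
```

Analysis toolboxes typically provide their own implementation of this metric; the sketch is only meant to make the definition concrete.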

Step 3: Convert to Hemoglobin Concentrations

- Apply Modified Beer-Lambert Law: ( \begin{bmatrix} \Delta [HbO_2] \\ \Delta [HbR] \end{bmatrix} = \frac{1}{d \cdot DPF} \begin{bmatrix} \varepsilon_{HbO_2}^{\lambda_1} & \varepsilon_{HbR}^{\lambda_1} \\ \varepsilon_{HbO_2}^{\lambda_2} & \varepsilon_{HbR}^{\lambda_2} \end{bmatrix}^{-1} \begin{bmatrix} \Delta OD_{\lambda_1} \\ \Delta OD_{\lambda_2} \end{bmatrix} )

- Typical DPF values: 5-7 for adults at 700-850 nm wavelengths [15] [12]

Step 4: Filtering and Artifact Removal

- Apply bandpass filter (0.01-0.5 Hz) to remove physiological noise

- Implement motion artifact correction (e.g., wavelet-based, spline interpolation)

- Use short-separation regression if available [12]
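The band-pass step can be sketched with a zero-phase Butterworth filter from SciPy. The filter order, the sampling rate, and the synthetic signal are assumptions; the 0.01-0.5 Hz band is the one given in Step 4. The example checks that a slow 0.05 Hz component survives while a 1.2 Hz cardiac-like component is strongly attenuated.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt

def bandpass(x, fs, low=0.01, high=0.5, order=3):
    """Zero-phase Butterworth band-pass applied along the last axis."""
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, x, axis=-1)

fs = 10.0
t = np.arange(3000) / fs
raw = (np.sin(2 * np.pi * 0.05 * t)          # slow hemodynamic-like component
       + 0.5 * np.sin(2 * np.pi * 1.2 * t)   # cardiac-like component
       + 0.002 * t)                          # linear drift
filtered = bandpass(raw, fs)

def amplitude_at(x, freq):
    """Amplitude of the sinusoidal component of x at the given frequency."""
    return 2 * np.abs(np.mean(x * np.exp(-2j * np.pi * freq * t)))

slow_after = amplitude_at(filtered, 0.05)
cardiac_after = amplitude_at(filtered, 1.2)
```

Second-order sections are used because very low normalized cutoffs can make transfer-function coefficients numerically fragile, and forward-backward filtering avoids shifting the response in time.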

Step 5: Epoch Extraction and Analysis

- Extract epochs aligned to stimulus onset (typically -5 to +15 seconds)

- Apply baseline correction (pre-stimulus interval)

- Perform statistical analysis (GLM, t-tests) to identify significant responses [18]
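Epoch extraction with baseline correction, as described in Step 5, can be sketched as below. The window of -5 to +15 seconds comes from the step above; the function name, the policy of skipping onsets near the recording edges, and the synthetic signal are assumptions for illustration.

```python
import numpy as np

def extract_epochs(signal, fs, onsets_s, tmin=-5.0, tmax=15.0):
    """Cut baseline-corrected epochs around each stimulus onset.

    Returns an array of shape (n_epochs, n_samples). Onsets whose window would
    run past either end of the recording are skipped.
    """
    pre, post = int(round(-tmin * fs)), int(round(tmax * fs))
    epochs = []
    for onset in onsets_s:
        i = int(round(onset * fs))
        if i - pre < 0 or i + post > signal.size:
            continue
        seg = signal[i - pre:i + post]
        epochs.append(seg - seg[:pre].mean())   # subtract the pre-stimulus mean
    return np.array(epochs)

fs = 10.0
signal = np.full(1000, 3.0)                     # 100 s recording with a constant offset
for onset in (20, 50, 80):
    start = int(onset * fs)
    signal[start:start + 100] += 1.0            # 10 s response after each onset

epochs = extract_epochs(signal, fs, onsets_s=[20, 50, 80, 98])  # the last onset is too late
mean_response = epochs.mean(axis=0)
```

The averaged epochs can then feed a t-test against baseline, or the continuous signal can be analysed with a GLM instead.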

Common Preprocessing Techniques

Table: Frequency Filters for Physiological Noise Removal

| Noise Source | Frequency Range | Filter Type | Recommended Cutoff |

|---|---|---|---|

| Cardiac Pulsation | 0.8-2.0 Hz | Low-pass | 0.5-0.7 Hz |

| Respiratory Rate | 0.2-0.5 Hz | Band-stop | 0.2-0.5 Hz |

| Mayer Waves | 0.07-0.13 Hz | High-pass | 0.01-0.05 Hz |

| Very Low Frequency Drift | <0.01 Hz | High-pass | 0.01 Hz |

Research Reagent Solutions & Materials

Essential fNIRS Research Components

Table: Key Research Materials for fNIRS Experiments

| Component | Function | Specifications & Considerations |

|---|---|---|

| fNIRS Instrument | Measures light attenuation | CW (continuous wave) most common; FD (frequency domain) and TR (time-resolved) offer additional information [14] [12] |

| Optodes | Light emission and detection | Source-detector separation: 3-4 cm for adults; Material should ensure proper scalp coupling [18] |

| Wavelengths | Chromophore differentiation | Typically 760 nm (sensitive to HbR) and 830-850 nm (sensitive to HbO₂) [14] |

| Head Cap | Optode positioning | Should provide stable positioning while maintaining comfort; Various sizes for population-specific fit |

| Coupling Gel | Improves light transmission | Optional for some systems; Electro-optical gels improve signal quality |

| Digitization System | Spatial registration | 3D digitizers for co-registration with anatomical images; Essential for source localization |

| Quality Metrics | Signal validation | Scalp Coupling Index (SCI), coefficient of variation, signal-to-noise ratio [18] |

Advanced Methodologies for fNIRS-EEG Fusion

For researchers integrating fNIRS with EEG in multimodal studies:

Temporal Alignment

- Synchronize fNIRS and EEG clocks at experiment start

- Use common trigger pulses for stimulus presentation

- Account for inherent hemodynamic delay (4-6 seconds) in fNIRS compared to EEG [17]

Artifact Handling

- EEG: Apply robust artifact removal (ICA, template subtraction)

- fNIRS: Implement motion correction and short-separation regression

- Joint: Develop common artifact rejection criteria [17]

Data Fusion Approaches

- Concatenation-based: Simple feature concatenation before classification

- Model-based: Incorporate neurovascular coupling models

- Source-decomposition: Identify latent components across modalities [17]
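Of the three approaches, concatenation-based fusion is the most direct to illustrate. The sketch below z-scores each modality's trial-by-feature matrix and stacks them column-wise; the feature counts, value scales, and function names are assumptions, and the random features stand in for quantities such as EEG band power and mean HbO change.

```python
import numpy as np

def zscore(features):
    """Standardise each feature column across trials."""
    return (features - features.mean(axis=0)) / features.std(axis=0)

def concatenate_features(eeg_feats, fnirs_feats):
    """Feature-level fusion: z-score each modality, then stack the columns."""
    return np.hstack([zscore(eeg_feats), zscore(fnirs_feats)])

rng = np.random.default_rng(5)
eeg_feats = 100.0 * rng.random((40, 6))              # 40 trials x 6 EEG features
fnirs_feats = 1e-3 * rng.standard_normal((40, 4))    # 40 trials x 4 fNIRS features
fused = concatenate_features(eeg_feats, fnirs_feats)  # 40 trials x 10 features
```

Standardising first keeps the modality with the larger numeric range from dominating a downstream classifier. In a cross-validated setting the mean and standard deviation would be estimated on the training folds only.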

Experimental Design Considerations

- Include resting-state blocks for baseline signal characterization

- Implement control conditions to validate specific neural responses

- Balance task complexity with signal interpretability [12] [13]

This technical support guide provides the fundamental principles and practical methodologies essential for successful fNIRS research, with particular attention to integration with EEG in multimodal studies. The troubleshooting recommendations address the most common challenges encountered during fNIRS experimentation and data analysis.

Functional near-infrared spectroscopy (fNIRS) and electroencephalography (EEG) are two non-invasive neuroimaging techniques that, when combined, create a powerful tool for neuroscience research. The primary rationale for their fusion lies in their complementary spatiotemporal resolution profiles. EEG measures the electrical activity of neurons with a millisecond-scale temporal resolution, allowing it to capture fast neural dynamics. However, its spatial resolution is poor, on the order of centimeters, due to the blurring effect of the skull and scalp [19]. In contrast, fNIRS (or its high-density version, Diffuse Optical Tomography - DOT) measures hemodynamic changes related to neural activity. It offers a relatively high spatial resolution (millimeter-scale) but suffers from a fundamentally limited temporal resolution because the hemodynamic response it measures evolves over several seconds [19] [8].

This complementarity is crucial for neuroscientific investigations. For instance, if two spatially close neuronal sources are activated sequentially with only a small temporal separation (e.g., 50 ms), using either EEG or fNIRS alone would fail to resolve them correctly. EEG would blur them spatially, while fNIRS would smooth them temporally [19]. Multimodal fusion aims to overcome the inherent limitations of each standalone modality.

Technical FAQs and Troubleshooting

FAQ 1: Why can't I resolve two finger-tapping events that are close in time and space with a single modality?

- EEG Alone: The centimeter-scale point spread of EEG reconstruction makes the sources spatially indistinguishable.

- fNIRS Alone: The slow nature of hemodynamics (lasting a few seconds) smooths out the responses, making them temporally indistinguishable if they occur with a short separation (e.g., 1 second or less) [19].

- Recommended Solution: Perform joint EEG-fNIRS source reconstruction. The high spatial precision from fNIRS can be used as a spatial prior to constrain the high-temporal-resolution EEG inversion, yielding a reconstruction with enhanced spatiotemporal resolution [19].

FAQ 2: How do I handle the inherent temporal delay of fNIRS signals relative to EEG?

The hemodynamic response measured by fNIRS has an inherent delay of several seconds compared to the electrical activity captured by EEG. A fixed temporal offset (e.g., 2-8 seconds) is sometimes applied, but this is suboptimal as the delay can vary by subject and task [20].

- Advanced Solution: Implement a dynamic temporal alignment strategy. For example, use an EEG-guided Temporal Alignment (EGTA) layer, which employs a cross-attention mechanism to generate fNIRS signals that are temporally aligned with EEG, resolving the issue of temporal mismatch [20].
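A simpler, data-driven alternative to a fixed offset is to estimate the delay per recording by cross-correlation. The sketch below is not the EGTA layer; it is a plain lag search between a reference signal (for example an EEG band-power envelope) and a delayed signal (for example HbO), with the search range, sampling rate, and synthetic data all assumed for illustration.

```python
import numpy as np

def estimate_lag_seconds(reference, delayed, fs, max_lag_s=10.0):
    """Lag (s) at which `delayed` best matches `reference`, searched over 0..max_lag_s."""
    ref = (reference - reference.mean()) / reference.std()
    sig = (delayed - delayed.mean()) / delayed.std()
    lags = np.arange(0, int(max_lag_s * fs) + 1)
    # Mean product of the overlapping samples at each candidate lag.
    scores = [np.mean(ref[:ref.size - k] * sig[k:]) for k in lags]
    return lags[int(np.argmax(scores))] / fs

fs = 10.0
rng = np.random.default_rng(3)
# Smoothed noise as a stand-in for a slowly varying EEG power envelope.
envelope = np.convolve(rng.standard_normal(3000), np.ones(20) / 20, mode="same")
# Delayed copy (5 s, wrapping at the array edge) plus measurement noise.
hbo = np.roll(envelope, 50) + 0.05 * rng.standard_normal(3000)
lag = estimate_lag_seconds(envelope, hbo, fs)
```

A single global lag still ignores trial-to-trial variation, which is the limitation that learned alignment approaches aim to address.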

FAQ 3: What are the best practices for filtering my simultaneous EEG-fNIRS data to maximize fusion quality?

Both signals contain physiological noise, but they manifest differently and require specific filtering approaches. The table below summarizes recommended parameters based on the signal type.

Table 1: Standard Filtering Parameters for EEG-fNIRS Fusion

| Modality | Filter Type | Typical Frequency Bands | Primary Purpose |

|---|---|---|---|

| fNIRS | Band-Pass Filter | 0.01 - 0.1 Hz [21] or 0.05 - 0.7 Hz [22] | Preserve the hemodynamic response while removing cardiac (~1 Hz), respiratory (~0.3 Hz), and very low-frequency drifts. |

| EEG | Band-Pass Filter | 1 Hz (High-Pass) and above [21] | Remove slow drifts; line noise additionally needs a notch filter or a suitable upper cutoff. Specific frequency bands (e.g., alpha, beta) are often extracted for analysis. |

FAQ 4: My fNIRS signals are contaminated by strong systemic physiological noise. How can fusion with EEG help?

Cardiac activity, blood pressure changes, and respiration can create noise in fNIRS that masks neural activation. While EEG is also susceptible to physiological artifacts (like ECG and EMG), the same physiological source manifests with distinct characteristics in each modality. Data-driven, unsupervised symmetric fusion methods can exploit these differences to robustly model and reject shared physiological confounders, thereby enhancing the signal-to-noise ratio of the neurally-evoked activity in both modalities [8].

Key Experimental Protocols and Methodologies

Protocol: Joint EEG and DOT Source Reconstruction

This protocol uses fNIRS/DOT reconstruction as a spatial prior for EEG source localization to resolve spatiotemporally close neural events [19].

- Mesh Generation: A segmented brain atlas (e.g., ICBM152) is used to create a tetrahedral mesh of the head, typically comprising four tissue types: scalp, skull, cerebrospinal fluid (CSF), and brain.

- Forward Modeling:

- EEG: Calculate a leadfield matrix that models how electrical currents from neural sources propagate to electrodes placed on the scalp (e.g., according to the 10-20 system).

- DOT: Calculate a forward model that describes how light propagates through tissue from optical sources to detectors.

- Inverse Problem: Solve the joint inverse problem using a framework like Restricted Maximum Likelihood (ReML). The DOT reconstruction provides the spatial prior, which is then used to constrain the EEG reconstruction.

- Validation: The method can be validated with simulated data where two activation spots (e.g., ~8 mm in diameter, mimicking digit representations in the somatosensory cortex) are activated sequentially with a known temporal separation (e.g., 50 ms). The joint algorithm should accurately recover these sources, which neither modality could resolve in isolation [19].
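The idea of using a DOT-derived spatial prior to constrain EEG inversion can be illustrated with a prior-weighted minimum-norm estimate. This is a deliberately simplified stand-in for the ReML framework named above, not an implementation of it: the leadfield is random rather than computed from a head model, the prior is a hand-set vector, and the regularisation value is arbitrary.

```python
import numpy as np

def prior_weighted_min_norm(leadfield, y, prior, lam=1e-2):
    """Weighted minimum-norm estimate J = W L^T (L W L^T + lam I)^-1 y, with W = diag(prior)."""
    LW = leadfield * prior   # scales column j by prior[j], i.e. L @ diag(prior)
    gram = LW @ leadfield.T + lam * np.eye(leadfield.shape[0])
    return LW.T @ np.linalg.solve(gram, y)

rng = np.random.default_rng(6)
n_electrodes, n_sources = 16, 100
L = rng.standard_normal((n_electrodes, n_sources))  # placeholder leadfield (assumption)
true_source = 42
y = L[:, true_source]                               # noise-free scalp data from one active source

# Hypothetical DOT-derived prior: high variance at a few candidate locations only.
prior = np.full(n_sources, 1e-3)
prior[[40, 42, 44]] = 1.0

J = prior_weighted_min_norm(L, y, prior)
estimated_source = int(np.argmax(np.abs(J)))
```

With 16 electrodes and 100 sources the unweighted problem is heavily underdetermined; the prior restricts most of the solution to the candidate locations, which is what lets the estimate single out the active one.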

Diagram 1: Workflow for Joint EEG-DOT Source Reconstruction

Protocol: Spatial-Temporal Alignment Network (STA-Net) for BCI Decoding

This protocol is an end-to-end deep learning approach for fusing EEG and fNIRS for brain-computer interface (BCI) tasks, explicitly addressing spatial and temporal misalignment [20].

- Signal Preprocessing: Independently preprocess EEG and fNIRS signals according to standard pipelines (e.g., filtering, artifact removal).

- 3D Feature Extraction: Use 3D convolution to extract spatio-temporal features from both modalities.

- Spatial Alignment (FGSA Layer): The fNIRS-guided Spatial Alignment (FGSA) layer calculates spatial attention maps from fNIRS to identify sensitive brain regions. These maps are used to weight the corresponding EEG channels, spatially aligning EEG with fNIRS.

- Temporal Alignment (EGTA Layer): The EEG-guided Temporal Alignment (EGTA) layer generates temporal attention maps based on a cross-attention mechanism. This produces fNIRS signals that are dynamically aligned with the EEG, correcting for the variable hemodynamic delay.

- Classification: The spatio-temporally aligned features are fused and fed into a classifier for tasks like motor imagery (MI), mental arithmetic (MA), and word generation (WG) [20].
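The cross-attention operation underlying the temporal alignment step can be sketched as generic scaled dot-product attention. This is not the STA-Net implementation: there are no learned projections, the feature dimensions are arbitrary, and the random inputs merely stand in for per-time-step EEG and fNIRS feature vectors, with EEG supplying the queries and fNIRS the keys and values.

```python
import numpy as np

def softmax(x, axis=-1):
    """Numerically stable softmax along the given axis."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)

def cross_attention(queries, keys, values):
    """Scaled dot-product attention: each query row receives a weighted mix of value rows."""
    scores = queries @ keys.T / np.sqrt(queries.shape[-1])
    weights = softmax(scores, axis=-1)
    return weights @ values, weights

rng = np.random.default_rng(7)
eeg_feats = rng.standard_normal((50, 8))     # 50 EEG time steps x 8 features
fnirs_feats = rng.standard_normal((20, 8))   # 20 fNIRS time steps x 8 features

# For each EEG time step, build an fNIRS representation weighted by similarity.
aligned_fnirs, weights = cross_attention(eeg_feats, fnirs_feats, fnirs_feats)
```

The output has one row per EEG time step, so the fNIRS information is re-expressed on the EEG time axis; in a trained network the weights would be shaped by learned query, key, and value projections.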

Diagram 2: STA-Net Architecture for Spatiotemporal Alignment

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Materials and Tools for fNIRS-EEG Fusion Research

| Item / Solution | Function / Explanation |

|---|---|

| High-Density DOT (HD-DOT) | An advanced fNIRS setup using multiple source-detector separations with overlapping sensitivity profiles to enable 3D image reconstruction of functional activation with spatial resolution comparable to fMRI [8]. |

| Open-Source Analysis Toolboxes (HOMER3, MNE-Python, EEGLAB) | Software packages providing standardized pipelines for data preprocessing, including conversion to optical density/chromophore concentration (fNIRS) and artifact removal/re-referencing (EEG) [22] [21]. |

| SimBio/FieldTrip Toolbox | An environment for advanced EEG (and MEG) forward modeling, used for calculating the leadfield matrix in a realistic head model, which is critical for source reconstruction [19]. |

| ICBM152 Brain Atlas | A standardized, non-linear asymmetric brain template used for generating realistic head models for both EEG and DOT forward modeling in simulation studies [19]. |

| Short-Separation Channels | fNIRS source-detector pairs placed with a small separation (e.g., < 1 cm) to selectively measure systemic physiological noise from the scalp. These signals can be used as regressors to improve the recovery of cerebral signals [8] [23]. |

| Cross-Modal Attention Mechanisms | A deep learning component that allows a model to dynamically focus on the most relevant features from one modality based on the context provided by the other modality, enhancing fusion performance [20] [24]. |

Neurovascular coupling (NVC) describes the fundamental physiological process whereby neural activity triggers subsequent changes in local cerebral blood flow and hemodynamics [25]. This relationship forms the critical link between the electrical brain activity measured by electroencephalography (EEG) and the hemodynamic responses measured by functional near-infrared spectroscopy (fNIRS). In combined EEG-fNIRS studies, understanding NVC is paramount, as it allows researchers to interpret the two distinct signals not as separate phenomena, but as interconnected aspects of the same underlying brain activity. The EEG signal captures the direct, millisecond-scale electrical discharges of neurons, primarily from the cortical surface [26]. Conversely, fNIRS measures the slower, second-scale hemodynamic response—changes in oxygenated (HbO) and deoxygenated hemoglobin (HbR) concentrations—that serves as an indirect marker of this neural activity [27] [25]. This complementary relationship is the cornerstone of multimodal fusion research, enabling a more complete picture of brain function by combining excellent temporal resolution (EEG) with improved spatial localization (fNIRS) [8] [27].

Technical FAQs: Resolving Common Experimental Challenges

Q1: What are the most effective strategies to minimize motion artifacts in simultaneous EEG-fNIRS recordings?

Motion artifacts present a significant challenge, but their impact can be mitigated through a combination of hardware choices and signal processing techniques. fNIRS is generally more robust to movement than EEG [26]. Key strategies include:

- Secure Cap Fit: Use a tight but comfortable electrode cap to keep both EEG electrodes and fNIRS optodes in a fixed position, which is crucial for artifact-free data [28].

- Accelerometer Integration: For protocols involving significant movement (e.g., walking or treadmill running), attaching an accelerometer to the subject's head is recommended. The recorded motion data can then be used with advanced signal processing, like adaptive filtering, to clean the fNIRS signal [29].

- Active EEG Electrodes: Employ active EEG electrodes, which provide a higher signal-to-noise ratio and are less susceptible to environmental interference [28].

- Post-Processing Algorithms: Utilize motion correction algorithms during the preprocessing stage for both modalities. While many robust methods exist for EEG, confounder correction in fNIRS often relies on filtering or dedicated motion artifact removal techniques [8].

Q2: How can we reliably synchronize EEG and fNIRS data streams from separate devices?

Precise temporal synchronization is essential for valid NVC analysis. The recommended approach is:

- Master-Slave Configuration: Typically, the EEG amplifier, which usually has a higher sampling frequency, acts as the "master" device. The fNIRS system is then synchronized to it [28].

- Hardware Triggers: Use transistor-transistor logic (TTL) pulses sent via a parallel port or other digital I/O line to mark specific events in both data streams simultaneously. The delay for such triggers is generally very low (e.g., not exceeding 5 msec) [29].

- Software Synchronization: Employ protocols like the Lab Streaming Layer (LSL), which allows for synchronized data collection from multiple devices by sharing a common clock signal across a network [27].
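As a rough illustration of post-hoc alignment, suppose the same trigger pulses were logged by both devices. A straight-line fit between the two sets of marker timestamps then gives an estimate of the clock offset and drift. This is a minimal sketch, not a vendor API: the function names and the example timestamps are hypothetical.

```python
def fit_clock_map(fnirs_marker_times, eeg_marker_times):
    """Least-squares line: eeg_time = drift * fnirs_time + offset.

    Both inputs are timestamps (seconds) of the SAME trigger pulses,
    as logged by each device. Names are hypothetical.
    """
    n = len(fnirs_marker_times)
    if n != len(eeg_marker_times) or n < 2:
        raise ValueError("need at least two matched markers")
    mean_x = sum(fnirs_marker_times) / n
    mean_y = sum(eeg_marker_times) / n
    sxx = sum((x - mean_x) ** 2 for x in fnirs_marker_times)
    if sxx == 0:
        raise ValueError("marker times must not all be identical")
    sxy = sum((x - mean_x) * (y - mean_y)
              for x, y in zip(fnirs_marker_times, eeg_marker_times))
    drift = sxy / sxx
    offset = mean_y - drift * mean_x
    return drift, offset


def to_eeg_time(fnirs_times, drift, offset):
    """Map fNIRS timestamps onto the EEG (master) clock."""
    return [drift * t + offset for t in fnirs_times]


def max_alignment_error(fnirs_marker_times, eeg_marker_times, drift, offset):
    """Largest remaining mismatch (seconds) at the shared markers."""
    mapped = to_eeg_time(fnirs_marker_times, drift, offset)
    return max(abs(m - e) for m, e in zip(mapped, eeg_marker_times))


# Made-up example: fNIRS clock runs slightly slow and starts 0.25 s late.
fnirs_markers = [10.0, 70.0, 130.0]
eeg_markers = [1.0001 * t + 0.25 for t in fnirs_markers]
drift, offset = fit_clock_map(fnirs_markers, eeg_markers)
residual = max_alignment_error(fnirs_markers, eeg_markers, drift, offset)
```

The residual at the markers is a convenient number to report as the synchronization error of a recording.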

Q3: Our fNIRS signals are contaminated by strong systemic physiology (e.g., heart rate, respiration). How can this be addressed in the context of NVC analysis?

Systemic physiological interference is a common confounder in fNIRS, as the technique is sensitive to cardiac pulsation, respiratory fluctuations, and blood pressure changes [8] [30]. Several data-driven approaches can help isolate the NVC-related signal:

- Filtering: Applying band-pass filters can remove high-frequency cardiac noise and very low-frequency drift.

- Advanced Decomposition: Techniques like Principal Component Analysis (PCA) can be used to identify and remove components of the signal that correlate with systemic physiology [29].

- Short-Separation Channels: Incorporating fNIRS channels with a very short source-detector distance (e.g., < 1 cm) is a highly effective method. These channels are predominantly sensitive to systemic artifacts in the scalp and not to cerebral hemodynamics. Their signals can be used as regressors to clean the standard, long-separation channels [8]. Note that this powerful technique remains underutilized.
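The short-separation idea can be sketched as an ordinary least-squares regression with a single regressor: estimate how strongly the short channel appears in the long channel, then subtract that scaled copy. This is a simplified illustration with hypothetical names. Real pipelines typically use several short channels and may embed the regressors in a GLM.

```python
def regress_short_channel(long_signal, short_signal):
    """Remove the part of a long channel explained by one short channel.

    Both signals are mean-centered first, so the returned signal is also
    mean-centered. Returns (cleaned_signal, beta).
    """
    n = len(long_signal)
    if n == 0 or n != len(short_signal):
        raise ValueError("signals must be non-empty and of equal length")
    mean_long = sum(long_signal) / n
    mean_short = sum(short_signal) / n
    long_c = [v - mean_long for v in long_signal]
    short_c = [v - mean_short for v in short_signal]
    denom = sum(s * s for s in short_c)
    if denom == 0:
        # A flat short channel carries no information to regress out.
        return long_c, 0.0
    beta = sum(l * s for l, s in zip(long_c, short_c)) / denom
    cleaned = [l - beta * s for l, s in zip(long_c, short_c)]
    return cleaned, beta


# Toy data: the long channel is a "brain" pattern plus twice the scalp signal.
scalp = [1.0, -1.0, 1.0, -1.0]
brain = [1.0, 1.0, -1.0, -1.0]
long_channel = [b + 2.0 * s for b, s in zip(brain, scalp)]
cleaned, beta = regress_short_channel(long_channel, scalp)
```

In this toy case the brain pattern is uncorrelated with the scalp signal, so the regression recovers it exactly. With real data, any cerebral activity correlated with the scalp signal is partly removed as well.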

Q4: What is the optimal sensor placement strategy to avoid interference between EEG electrodes and fNIRS optodes?

The goal is to achieve co-registration without physical or signal interference.

- Integrated Caps: The most straightforward solution is to use a high-density EEG cap that has pre-defined, fNIRS-compatible openings or holder rings, allowing for interleaved placement [28] [26].

- Interleaving Pattern: A standard method is to "put the EEG electrodes in between the optodes" since EEG electrodes are typically smaller [28].

- Material Considerations: The cap material should be dark to prevent ambient light from contaminating the fNIRS signal [28]. It is also vital to keep the combined weight of the sensors low, both to reduce the risk of movement artifacts and to ensure subject comfort.

Q5: Why might we observe a decoupling between EEG and fNIRS signals, and what does it signify?

Observing a decoupling—where the expected correlation between electrical and hemodynamic activity breaks down—is not always an artifact; it can be a significant physiological finding. For example, a study on cognitive-motor interference found that divided attention during a dual-task led to a decreased neurovascular coupling across theta, alpha, and beta EEG rhythms [31]. Furthermore, research on retired athletes with a history of mild traumatic brain injury (mTBI) showed a reduced hemodynamic response compared to controls, suggesting altered cerebral metabolic demands and potentially impaired NVC due to past injuries [25]. Before concluding a physiological decoupling, however, technical causes like those addressed in FAQs Q1-Q3 must be rigorously excluded.

Table 1: Troubleshooting Common Artifacts in EEG-fNIRS Fusion Studies

| Artifact Type | Primary Affected Modality | Root Cause | Preventive Solutions | Corrective Processing Methods |

|---|---|---|---|---|

| Motion Artifacts | Both (EEG more susceptible) [26] | Head movement, loose cap fit | Secure, lightweight cap; accelerometer use [28] [29] | Adaptive filtering, motion correction algorithms [8] [29] |

| Systemic Physiology | fNIRS [8] | Cardiac, respiratory, blood pressure cycles | Controlled environment, subject relaxation | Filtering, PCA/ICA, short-separation regression [8] [29] |

| Scalp Hemodynamics | fNIRS | Blood flow changes in skin/scalp | Proper optode pressure & coupling | Use of short-separation channels [8] |

| Ocular/Muscle Artifacts | EEG [8] | Eye blinks (EOG), head/neck muscle (EMG) | Instruct subject to minimize movement | Blind source separation (e.g., ICA), regression [8] |

| Synchronization Errors | Data Fusion | Separate device clocks, software lag | Hardware TTL triggers, Lab Streaming Layer (LSL) [27] [28] | Post-hoc alignment using event markers |

Essential Experimental Protocols for NVC Investigation

Protocol 1: The Cognitive-Motor Interference (CMI) Task

This protocol is designed to study how the brain allocates resources when cognitive and motor tasks are performed simultaneously, a paradigm known to modulate NVC [31].

- Objective: To investigate the neurovascular correlates of divided attention and cognitive-motor interference.

- Task Design:

- Single Motor Task: Participants perform an isolated upper limb motor task.

- Single Cognitive Task: Participants perform an isolated cognitive task.

- Cognitive-Motor Dual Task: Participants perform both the motor and cognitive tasks simultaneously [31].

- Measured Signals: Simultaneous recording of EEG and fNIRS bimodal signals.

- Key NVC Analysis: The correlation between task-related EEG components (in theta, alpha, and beta rhythms) and the concurrent fNIRS hemodynamic responses is computed. A decrease in this correlation during the dual-task condition indicates CMI-induced decoupling of neurovascular activity [31].
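One simple way to express the coupling measure described above is a Pearson correlation between a per-trial EEG feature (for example, band power) and a per-trial fNIRS feature (for example, mean HbO change), computed separately for each condition. The sketch below assumes this per-trial formulation. The exact features used in [31] are not specified here, and all numbers are made up.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return sxy / math.sqrt(sxx * syy)


# Hypothetical per-trial values for one channel pair.
alpha_power_single = [1.0, 2.0, 3.0, 4.0]
hbo_single = [2.0, 4.0, 6.0, 8.0]
alpha_power_dual = [1.0, 2.0, 3.0, 4.0]
hbo_dual = [2.0, 1.0, 4.0, 3.0]

r_single = pearson_r(alpha_power_single, hbo_single)
r_dual = pearson_r(alpha_power_dual, hbo_dual)
coupling_drop = r_single - r_dual  # positive value: weaker coupling in dual task
```

A lag between the EEG and fNIRS series, reflecting the hemodynamic delay, could be added by shifting one series before correlating.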

Protocol 2: The "Where's Wally" Neurovascular Coupling Test

This protocol is a validated method for assessing the integrity of the NVC response itself and has been used to study populations with suspected NVC impairment, such as those with a history of concussion [25].

- Objective: To elicit and measure a standardized hemodynamic response to a visual cognitive task.

- Task Design:

- Baseline: The participant sits quietly for 5 minutes, breathing normally with eyes open.

- Stimulation: The participant performs five cycles of a visual search task. Each cycle consists of 20 seconds with eyes closed, followed by 40 seconds with eyes open searching for the character "Wally" (or "Waldo") in a complex image. If found quickly, the image is advanced [25].

- Measured Signals: fNIRS over the prefrontal cortex (covering dorsolateral and orbitofrontal cortices) is essential. Simultaneous EEG can add valuable electrical correlates.

- Key NVC Analysis: In healthy controls, the task should induce a relative increase in O2Hb and a decrease in HHb in the prefrontal cortex. A blunted or altered response, such as a reduction in O2Hb increase, suggests impaired NVC [25].
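The block timing of this protocol can be written down as an event list, which is useful for generating triggers or for epoching later. The sketch below encodes only the durations stated above (5-minute baseline, five cycles of 20 s eyes closed and 40 s visual search). The function name and the event labels are hypothetical.

```python
def wally_schedule(baseline_s=300, cycles=5, eyes_closed_s=20, eyes_open_s=40):
    """Return a list of (label, start_s, end_s) tuples for the protocol."""
    events = [("baseline", 0, baseline_s)]
    t = baseline_s
    for _ in range(cycles):
        events.append(("eyes_closed", t, t + eyes_closed_s))
        t += eyes_closed_s
        events.append(("visual_search", t, t + eyes_open_s))
        t += eyes_open_s
    return events


schedule = wally_schedule()
search_onsets = [start for label, start, _ in schedule if label == "visual_search"]
total_duration_s = schedule[-1][2]
```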

Table 2: Core Experimental Protocols for NVC Research

| Protocol Name | Primary Research Application | Task Paradigm | Key NVC Metrics | Typical Participant Groups |

|---|---|---|---|---|

| Cognitive-Motor Interference (CMI) [31] | Divided attention, dual-task cost | Sequential single and dual tasks | EEG-fNIRS correlation in theta, alpha, beta bands | Healthy young adults, elderly, clinical populations with attention deficits |

| "Where's Wally" NVC Test [25] | NVC integrity, metabolic demand | Repeated cycles of visual search/rest | Prefrontal O2Hb increase, HHb decrease | Populations with suspected NVC impairment (e.g., mTBI, concussion) |

| n-Back Working Memory | Cognitive workload, executive function | Continuous performance task with varying memory load | Prefrontal HbO amplitude, latency; EEG theta/gamma power | Broad cognitive neuroscience, neuroergonomics, clinical studies |

The Data Preprocessing Pipeline for Robust NVC Analysis

A rigorous preprocessing pipeline is critical for cleaning the data and enabling a valid analysis of the relationship between EEG and fNIRS signals. The following workflow outlines the key steps for each modality before data fusion.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Essential Materials for Combined EEG-fNIRS Experiments

| Item Name | Function/Application | Technical Specifications |

|---|---|---|

| Integrated EEG-fNIRS Cap | Holds sensors in co-registered positions for simultaneous measurement. | Compatible with 10-20 system; openings for EEG electrodes & fNIRS optodes; dark-colored material to block ambient light [28] [26]. |

| Active EEG Electrodes | Measure electrical brain activity with high signal-to-noise ratio. | Active electrodes (e.g., g.SCARABEO) for reduced preparation time and motion resilience [28]. |

| fNIRS Optodes | Emit near-infrared light and detect reflected light to measure hemodynamics. | Sources (LEDs/lasers) and detectors; typical source-detector separation of 20-30 mm for cerebral measurement [28] [29]. |

| Short-Separation Channels | A specialized type of fNIRS channel for measuring and removing scalp hemodynamics. | Source-detector separation of < 1 cm; critical for robust artifact removal in data-driven analysis [8]. |

| Conductive Electrolyte Gel | Ensures low impedance electrical contact for EEG electrodes. | Saline-based or abrasive gel for wet EEG systems; not required for dry electrodes. |

| Accelerometer | Records head movement to assist in motion artifact correction. | Small, lightweight sensor attached to the cap; provides reference signal for adaptive filtering [29]. |

| Synchronization Hardware/Software | Temporally aligns EEG and fNIRS data streams from the start of recording. | TTL pulse generator, parallel port, or software platform like Lab Streaming Layer (LSL) [27] [28]. |

Advantages of Integrated fNIRS-EEG over Single-Modality Neuroimaging

Technical FAQs & Troubleshooting Guide

FAQ 1: What are the primary technical advantages of integrating fNIRS with EEG? The integration of fNIRS and EEG creates a synergistic system that overcomes the inherent limitations of each modality when used alone. Electroencephalography (EEG) records electrical activity from neuronal firing, providing excellent temporal resolution on the order of milliseconds, but suffers from relatively low spatial resolution and sensitivity to electrical noise and motion artifacts [32] [33]. Conversely, functional near-infrared spectroscopy (fNIRS) measures hemodynamic changes (changes in oxyhemoglobin (HbO) and deoxyhemoglobin (HbR) concentrations), providing good spatial resolution and being less susceptible to motion artifacts, but has lower temporal resolution due to the slow nature of the hemodynamic response [32] [34]. By combining them, the dual-modal system provides simultaneous information on both the electrical neural activity and the hemodynamic metabolic response without electromagnetic interference, offering a more complete picture of brain function [32] [35].

FAQ 2: How do I address motion artifacts in my fNIRS-EEG data? Motion artifacts (MAs) are a common challenge that can significantly degrade signal quality. The table below summarizes various correction techniques.

Table 1: Motion Artifact Correction Techniques for fNIRS and EEG

| Technique Category | Specific Methods | Description | Applicability |

|---|---|---|---|

| Algorithmic (fNIRS & EEG) | Wavelet Packet Decomposition (WPD) [36] | Decomposes signals using wavelet packets; effective for single-channel artifact correction. | fNIRS EEG |

| Algorithmic (fNIRS & EEG) | WPD with Canonical Correlation Analysis (WPD-CCA) [36] | A two-stage method; shown to improve ΔSNR by 11.28% (EEG) and 56.82% (fNIRS) over WPD alone [36]. | fNIRS EEG |

| Hardware-Based (fNIRS) | Accelerometer-based Active Noise Cancelation (ANC) [37] | Uses accelerometer data as a noise reference for an adaptive filter to clean the fNIRS signal. | fNIRS EEG |

| Data Handling | Channel Rejection [37] | Discarding data segments or entire channels that are heavily corrupted by motion artifacts. | fNIRS EEG |

FAQ 3: What are the main strategies for fusing fNIRS and EEG data? Data fusion can be implemented at three primary levels, each with its own advantages.

Table 2: Data Fusion Strategies for fNIRS-EEG

| Fusion Level | Description | Advantages | Examples |

|---|---|---|---|

| Data-Level Fusion | Direct combination of raw or preprocessed data from both modalities [33]. | Potentially retains the most complete information. | - |

| Feature-Level Fusion | Extracting features from each modality (e.g., EEG band powers, fNIRS HbO/HbR slopes) and concatenating them into a combined feature vector [33] [38]. | Often provides high classification accuracy; widely used and effective. | Combining EEG band powers and fNIRS signal peaks/means for BCI [38]. |

| Decision-Level Fusion | Each modality is processed and classified independently, and the final results are combined (e.g., by voting or weighted averaging) [33]. | Can eliminate redundant information; provides robustness. | Combining SVM classifier outputs for EEG and fNIRS for mental stress detection [33]. |
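The difference between feature-level and decision-level fusion can be illustrated with two small functions. This is a minimal sketch with hypothetical names and made-up numbers: feature-level fusion concatenates the feature vectors before a single classifier sees them, whereas decision-level fusion combines the class probabilities produced by two separate classifiers.

```python
def feature_level_fusion(eeg_features, fnirs_features):
    """Concatenate per-trial feature vectors into one combined vector."""
    return list(eeg_features) + list(fnirs_features)


def decision_level_fusion(p_eeg, p_fnirs, w_eeg=0.5):
    """Weighted average of per-class probabilities from two classifiers.

    Returns (fused_probabilities, predicted_class_index).
    """
    if len(p_eeg) != len(p_fnirs) or not p_eeg:
        raise ValueError("probability vectors must be non-empty and equal length")
    if not 0.0 <= w_eeg <= 1.0:
        raise ValueError("w_eeg must lie in [0, 1]")
    fused = [w_eeg * a + (1.0 - w_eeg) * b for a, b in zip(p_eeg, p_fnirs)]
    predicted = max(range(len(fused)), key=fused.__getitem__)
    return fused, predicted


# Feature level: e.g. two EEG band powers plus one fNIRS HbO mean.
combined = feature_level_fusion([0.1, 0.2], [1.0])

# Decision level: the classifiers disagree; equal weights favor class 1.
fused, predicted = decision_level_fusion([0.6, 0.4], [0.2, 0.8], w_eeg=0.5)
```

In practice, features from the two modalities usually need to be brought to a comparable scale (for example, by z-scoring) before concatenation.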

FAQ 4: What are the key design considerations for an fNIRS-EEG acquisition helmet? The helmet design is critical for signal quality and co-registration. Key considerations include:

- Probe Integration: EEG electrodes and fNIRS optodes can be integrated on a shared substrate or arranged separately. A common approach is to use a flexible EEG cap as a base and create punctures for fNIRS probe fixtures [32].

- Customization: Standard elastic caps can lead to inconsistent probe-scalp contact pressure due to head shape variations. 3D-printed custom helmets or those made from cryogenic thermoplastic sheets offer a better, customized fit, improving signal quality and spatial alignment, though at a higher cost or potential comfort trade-off [32].

- Co-registration: Precise spatial localization is essential. The arrangement should allow for co-registering fNIRS channels and EEG electrodes to standard brain atlas coordinates (e.g., Montreal Neurological Institute - MNI space) for accurate interpretation [32] [35].

FAQ 5: My synchronized data shows temporal misalignment. How can I improve synchronization? Precise synchronization is challenging. There are two primary methods:

- Separate Systems with Software Sync: Using separate commercial systems (e.g., NIRScout for fNIRS and BrainAMP for EEG) and synchronizing them via host computer software. This is simpler but may lack the microsecond precision needed for some EEG analyses [32].

- Unified Hardware Processor: Using a single, unified hardware processor to acquire and process both EEG and fNIRS signals simultaneously. This method is more complex but achieves highly precise synchronization, streamlining subsequent analysis [32].

Whichever method is used, always check and report the synchronization error, which should ideally be less than 100 ms [35].

Essential Experimental Protocols & Methodologies

Protocol 1: Mental Arithmetic (MA) and Motor Imagery (MI) Task for BCI Classification

This is a common paradigm for testing hybrid BCI systems [33].

- Participants: Healthy subjects.

- Task Design:

- Data Acquisition: Simultaneously record EEG (e.g., 64-channel system) and fNIRS (e.g., system with multiple sources and detectors over the prefrontal and motor cortices) [33].

- Data Processing:

- Fusion & Classification: Fuse EEG and fNIRS features at the feature-level (e.g., by simple concatenation) and feed into a classifier (e.g., Support Vector Machine - SVM, Linear Discriminant Analysis - LDA). This approach has achieved classification accuracies over 96% for discriminating between tasks [33].

Protocol 2: Neurovascular Coupling (NVC) Analysis in Substance Use Disorder

This protocol investigates the relationship between electrical and hemodynamic brain activity [35].

- Participants: Two groups: individuals with a specific substance use disorder (e.g., etomidate) and healthy controls [35].

- Task Design: Resting-state measurement with eyes closed for 5 minutes [35].

- Data Acquisition: Simultaneous high-density EEG and fNIRS recording, with precise co-registration of channels [35].

- Data Processing & Fusion:

- EEG Source Localization: Reconstruct the source of EEG signals to match the locations of fNIRS channels [35].

- Multi-Band Local Neurovascular Coupling (MBLNVC): Analyze the coupling between EEG rhythms (δ, θ, α, β, γ) and the fNIRS HbO signal at specific brain locations [35].

- Network-Based Feature Fusion: Map the multi-modal features to well-established brain networks (e.g., Yeo 7 networks) to identify network-specific coupling alterations [35].

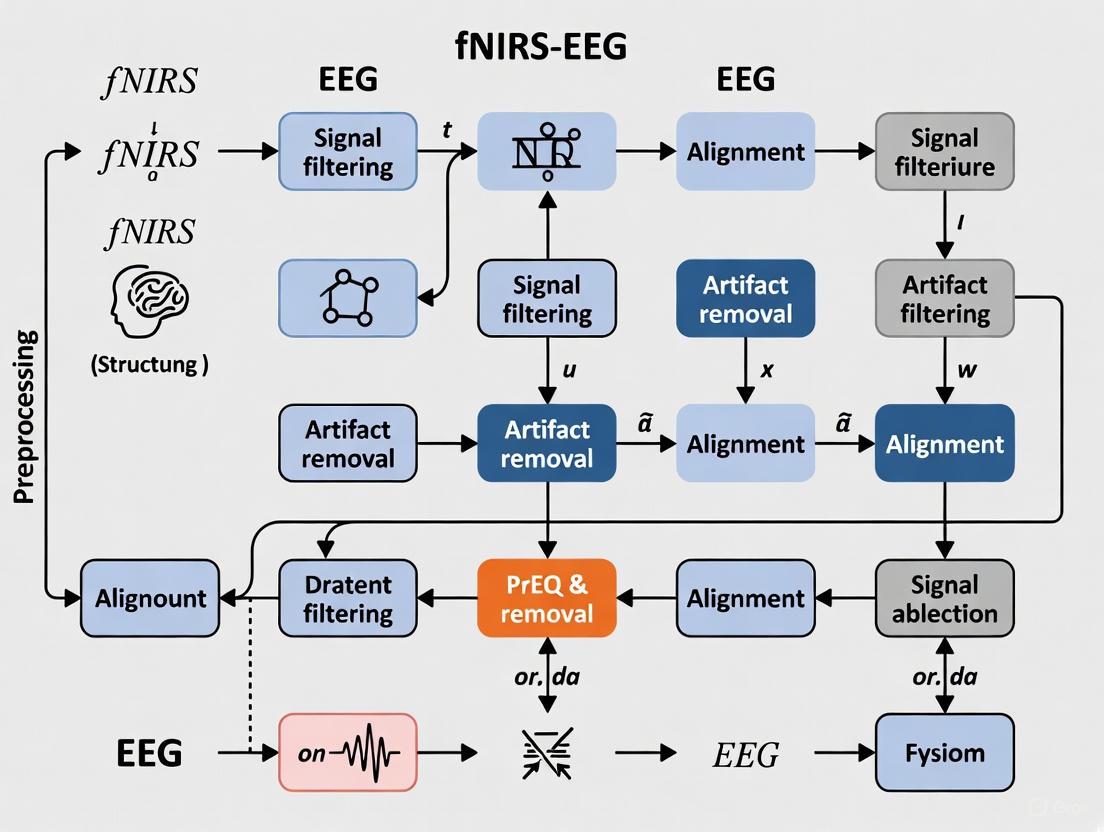

The following diagram illustrates a generalized workflow for a multimodal fNIRS-EEG data preprocessing pipeline, integrating the key steps from the protocols above.

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Materials and Software for fNIRS-EEG Research

| Item Name | Type | Primary Function | Key Details |

|---|---|---|---|

| Multi-modal Acquisition Helmet | Hardware | Holds EEG electrodes and fNIRS optodes in stable, co-registered positions on the scalp. | Custom 3D-printed or thermoplastic designs are superior to standard caps for ensuring consistent probe contact [32]. |

| Unified Data Acquisition System | Hardware | Simultaneously acquires and time-stamps EEG and fNIRS data streams. | Critical for minimizing synchronization error; preferred over loosely coupling two separate systems [32]. |

| Accelerometer | Hardware | Records head movement data concurrently with brain signals. | Serves as a reference signal for hardware-based motion artifact correction algorithms in fNIRS [37]. |

| NIRS Toolbox | Software | A MATLAB-based suite for fNIRS data analysis. | Supports GLM, functional connectivity, and multi-modal analysis; compatible with NIRx data for automatic 3D probe import [39]. |

| Homer2 / Homer3 | Software | Widely used fNIRS analysis packages. | Provide GUI and script-level processing streams; compatible with data in the common *.nirs file format [39]. |

| EEGLAB | Software | A MATLAB toolbox for processing EEG data. | Used for standard preprocessing (filtering, ICA-based artifact removal) and rhythm extraction [35] [40]. |

| Turbo-Satori | Software | Real-time fNIRS analysis software. | Optimized for brain-computer interface (BCI) and neurofeedback research; integrates with NIRx acquisition systems [39]. |

The logical relationships between the core components of an integrated fNIRS-EEG system and the synergistic advantages they create are summarized below.

Building and Applying fNIRS-EEG Preprocessing and Fusion Pipelines

Functional Near-Infrared Spectroscopy (fNIRS) is a non-invasive neuroimaging technique that measures changes in cerebral blood oxygenation by shining near-infrared light through the skull and into the brain tissue. This light is absorbed differently by oxygenated hemoglobin (HbO) and deoxygenated hemoglobin (HbR), allowing researchers to infer changes in blood flow and oxygenation in specific brain regions. Preprocessing raw fNIRS data is crucial for cleaning the signal and ensuring that subsequent analyses accurately reflect underlying neural activity rather than physiological noise or motion artifacts. This guide outlines standardized steps for converting raw light intensity signals to meaningful HbO and HbR concentrations, with particular attention to the context of multimodal fNIRS-EEG fusion research.

Frequently Asked Questions (FAQs)

Q1: What is the purpose of converting raw light intensity to optical density?

The initial raw light intensity measurements are influenced by various factors unrelated to brain activity, including instrument properties and ambient light. Converting to optical density (OD) standardizes the signal and provides a more stable baseline for subsequent calculations. The conversion uses the formula: OD = -log10(I/I0), where I is the detected light intensity and I0 is the emitted light intensity [41]. This step is a prerequisite for applying the Modified Beer-Lambert Law.
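A minimal sketch of the conversion is shown below. One assumption is made loudly: the emitted intensity I0 is often not available, so many pipelines use the channel's mean intensity as the reference instead, and the function falls back to that when no reference is given. The function name is hypothetical.

```python
import math


def intensity_to_od(intensity, reference=None):
    """Convert raw intensity samples to optical density: OD = -log10(I / I0).

    If `reference` (I0) is None, the mean of the samples is used as a
    practical stand-in for the emitted intensity (an assumption).
    """
    if not intensity:
        raise ValueError("intensity must not be empty")
    if reference is None:
        reference = sum(intensity) / len(intensity)
    if reference <= 0 or any(i <= 0 for i in intensity):
        raise ValueError("intensities must be strictly positive")
    return [-math.log10(i / reference) for i in intensity]


# A tenfold drop in detected light corresponds to an OD of about 1.
od = intensity_to_od([100.0, 10.0], reference=100.0)
```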

Q2: Why must I apply a bandpass filter to my fNIRS data?

Bandpass filtering helps isolate the hemodynamic response related to neural activity by attenuating drift and unwanted physiological noise. The useful fNIRS signal is typically confined to frequencies below 0.5 Hz, whereas the main physiological noise sources are heartbeat (~1-2 Hz), respiration (~0.4 Hz), and Mayer waves related to blood pressure (~0.1 Hz) [41]. A typical bandpass filter with cutoffs of 0.01 Hz (or 0.05 Hz) to 0.5 Hz removes slow drift and the cardiac component while preserving the signal of interest [22] [41]. Respiration and Mayer waves lie inside or close to this passband, so they usually call for additional measures such as a lower upper cutoff, short-channel regression, or source separation.

Q3: What are motion artifacts, and how can I correct for them?

Motion artifacts are sudden, large shifts in the signal caused by head movements, muscle contractions, or other physical activities during recording [41]. They are a major source of noise and can obscure the true hemodynamic response. Several correction methods are available, and the choice depends on your data and software. Common algorithms include:

- CBSI (Correlation-Based Signal Improvement): A method that leverages the negative correlation between HbO and HbR signals to correct for motion [41].

- PCA (Principal Component Analysis): Identifies and removes components of the signal that correlate with motion [41].

- Spline Correction: Models and interpolates over motion-corrupted segments [41].

- Wavelet-Based Correction: Identifies and removes artifacts in the wavelet domain [41].

It is recommended to visualize your data before and after applying any motion correction method to ensure its effectiveness.

Q4: How does the Modified Beer-Lambert Law work?

The Modified Beer-Lambert Law (MBLL) relates changes in optical density to changes in the concentration of chromophores (HbO and HbR) in the tissue. It modifies the classic law to account for light scattering in biological tissues. The formula for the change in optical density at a given wavelength is:

ΔOD(λ) = α(λ) * Δc * l * DPF(λ)

Where:

- ΔOD(λ) is the change in optical density at wavelength λ.

- α(λ) is the molar extinction coefficient of the chromophore at wavelength λ.

- Δc is the change in chromophore concentration.

- l is the source-detector separation (physical distance).

- DPF(λ) is the Differential Pathlength Factor, which accounts for the increased path length due to scattering [42] [41].
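With two chromophores and two wavelengths, the relation above is commonly extended to a sum over HbO and HbR at each wavelength, which gives a 2x2 linear system that can be solved for the two concentration changes. The sketch below assumes that two-chromophore form. The extinction coefficients in the example are placeholder numbers for illustration only and must be replaced by values from a published look-up table.

```python
def mbll_two_wavelengths(d_od, ext, separation, dpf):
    """Solve the two-wavelength MBLL for (delta_HbO, delta_HbR).

    d_od:       (dOD at wavelength 1, dOD at wavelength 2)
    ext:        ((alpha_HbO_w1, alpha_HbR_w1), (alpha_HbO_w2, alpha_HbR_w2))
    separation: source-detector separation l
    dpf:        (DPF at wavelength 1, DPF at wavelength 2)
    Units must be mutually consistent; none are enforced here.
    """
    path1 = separation * dpf[0]  # effective path length at wavelength 1
    path2 = separation * dpf[1]
    a11, a12 = ext[0][0] * path1, ext[0][1] * path1
    a21, a22 = ext[1][0] * path2, ext[1][1] * path2
    det = a11 * a22 - a12 * a21
    if det == 0:
        raise ValueError("extinction matrix is singular")
    # Cramer's rule for the 2x2 system.
    d_hbo = (d_od[0] * a22 - a12 * d_od[1]) / det
    d_hbr = (a11 * d_od[1] - a21 * d_od[0]) / det
    return d_hbo, d_hbr


# Round trip with PLACEHOLDER coefficients (not real extinction values).
placeholder_ext = ((1.0, 3.0), (2.5, 1.8))
true_hbo, true_hbr = 0.002, -0.001
l_sep, dpf = 3.0, (6.0, 6.0)
d_od = tuple(
    (e_hbo * true_hbo + e_hbr * true_hbr) * l_sep * d
    for (e_hbo, e_hbr), d in zip(placeholder_ext, dpf)
)
est_hbo, est_hbr = mbll_two_wavelengths(d_od, placeholder_ext, l_sep, dpf)
```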

Q5: Why are short source-detector channels important?

Short-distance channels (typically with a separation of less than 1 cm) are primarily sensitive to physiological noise in the scalp and skull rather than brain activity [22]. By measuring this superficial noise, they provide a regressor that can be used to remove it from the standard channels (which contain a mixture of brain signal and superficial noise), thereby enhancing the brain-specific signal [43]. This is a key step in improving the quality of fNIRS data.

Q6: What specific considerations exist for fNIRS-EEG fusion?

Successful fusion of fNIRS and EEG data requires careful preprocessing to account for the different nature of the signals. fNIRS measures slow hemodynamic changes (requiring high-pass filtering around 0.01-0.05 Hz), while EEG measures fast electrical potentials (often filtered between 0.5-70 Hz) [17] [24]. A major challenge is that many studies incorporate robust artifact handling for EEG, but confounder correction in fNIRS remains limited to basic filtering or motion removal [17]. Furthermore, short-separation measurements for fNIRS are still underutilized in fusion studies [17]. Fusion methods themselves can be categorized as:

- Early Fusion: Combining raw data or low-level features before analysis.

- Late Fusion: Integrating the outputs or decisions of separate analyses [24].

Advanced methods like cross-modal attention mechanisms are also being developed to dynamically weight the importance of each modality [24].

Standardized Preprocessing Workflow

The following diagram illustrates the complete, standardized workflow for preprocessing fNIRS data, from raw measurements to analysis-ready hemoglobin concentrations.

Step-by-Step Protocol

- Raw Data Input: Begin with raw light intensity data for (typically) two wavelengths (e.g., 730 nm and 850 nm) [29].

- Convert to Optical Density (OD): Transform the raw intensity to optical density to create a stable baseline for analysis using the formula: OD = -log10(I/I0) [22] [41].

- Signal Cleaning: This critical step involves several sub-procedures to remove noise.

- Short Channel Regression: Use signals from short source-detector pairs (<1 cm) to regress out superficial, non-cerebral physiological noise [22] [43].

- Motion Artifact Correction: Apply algorithms like CBSI, PCA, Spline, or Wavelet to identify and correct for motion-induced signal shifts [41].

- Bandpass Filtering: Use a zero-phase bandpass filter (e.g., 0.05 - 0.7 Hz or 0.01 - 0.5 Hz) to remove high-frequency noise (heartbeat) and low-frequency drift [22] [41]. Respiration (~0.4 Hz) lies within these passbands and is only suppressed if a lower upper cutoff is chosen.

- Convert to Hemoglobin: Apply the Modified Beer-Lambert Law to the cleaned OD data to calculate relative concentration changes of HbO and HbR [22] [42].

- Epoch and Analyze: Segment the continuous HbO/HbR data into epochs time-locked to experimental events for statistical analysis and visualization [22].
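The final epoching step can be sketched as follows: cut a window around each event onset and subtract the mean of the pre-stimulus samples as a baseline. This is a simplified single-channel illustration with hypothetical names. Toolboxes such as MNE-Python provide their own, more complete epoching routines.

```python
def extract_epochs(signal, fs_hz, event_times_s, t_min_s, t_max_s):
    """Cut baseline-corrected epochs from one continuous channel.

    signal:        list of samples (e.g. HbO concentration changes)
    fs_hz:         sampling rate in Hz
    event_times_s: event onsets in seconds
    t_min_s:       window start relative to onset (negative = pre-stimulus)
    t_max_s:       window end relative to onset (exclusive)
    Epochs that would run past either end of the recording are skipped.
    """
    start_offset = round(t_min_s * fs_hz)
    stop_offset = round(t_max_s * fs_hz)
    n_baseline = max(0, -start_offset)  # number of pre-stimulus samples
    epochs = []
    for t in event_times_s:
        onset = round(t * fs_hz)
        start, stop = onset + start_offset, onset + stop_offset
        if start < 0 or stop > len(signal):
            continue
        segment = list(signal[start:stop])
        if n_baseline > 0:
            baseline = sum(segment[:n_baseline]) / n_baseline
            segment = [v - baseline for v in segment]
        epochs.append(segment)
    return epochs


# Toy ramp sampled at 1 Hz; the second event is too close to the end.
ramp = [float(i) for i in range(20)]
epochs = extract_epochs(ramp, fs_hz=1, event_times_s=[5, 18],
                        t_min_s=-2, t_max_s=3)
```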

Technical Specifications & Parameters

Key Parameters for the Modified Beer-Lambert Law

Table 1: Parameters and typical values for converting optical density to hemoglobin concentration.

| Parameter | Symbol | Description | Typical Value / Formula |

|---|---|---|---|

| Molar Extinction Coefficient | ε | Wavelength-specific absorption property of HbO and HbR. | Look-up tables (e.g., for 730 nm & 850 nm) [42]. |

| Source-Detector Separation | l | Physical distance between light source and detector on the scalp. | 2.5 - 3.0 cm [29]. |

| Differential Pathlength Factor | DPF | Factor correcting for increased photon pathlength due to scattering. | Wavelength- and age-dependent (e.g., ~6 for adults) [42] [41]. |

| Partial Pathlength Factor | PPF | Combined factor (DPF * PVF) accounting for scattering and the fraction of path in brain tissue. | Used in some advanced models [41]. |

Physiological Noise Characteristics

Table 2: Frequency ranges of common physiological noise sources in fNIRS signals.

| Noise Source | Frequency Range | Notes |

|---|---|---|

| Heartbeat | ~1 - 2 Hz | Can be suppressed by low-pass filtering [41]. |

| Respiration | ~0.4 Hz | Can be suppressed by low-pass filtering [41]. |

| Mayer Waves (Blood Pressure) | ~0.1 Hz | Very close to the signal of interest; may require careful filtering or source separation [41]. |

| Hemodynamic Response | < 0.1 Hz | The target signal for functional brain activation studies [41]. |

Table 3: A selection of key software tools for fNIRS data preprocessing and analysis.

| Tool Name | Primary Function | Key Feature | URL/Location |

|---|---|---|---|

| HOMER2 / HOMER3 | Comprehensive fNIRS analysis | GUI and scripting; extensive processing stream including MBLL, motion correction, and filtering. | homer-fnirs.org [44] |

| MNE-Python | Multimodal neuroimaging (EEG/MEG/fNIRS) | Python-based; integrates fNIRS preprocessing with EEG analysis, ideal for fusion research. | mne.tools [22] [44] |

| NIRSLab | Complete fNIRS data analysis | Modules for registration, preprocessing, 3D projection, and GLM analysis. | nirs-lab.com [44] |

| NIRS-SPM | Statistical parametric mapping | SPM-based toolbox for statistical analysis of fNIRS signals. | bisp.kaist.ac.kr [44] |

| fnirsSOFT (BIOPAC) | Process, analyze, and visualize fNIRS | Stand-alone software with a graphical user interface. | nirx.net/fnirssoft [44] |

How do I establish a robust EEG re-referencing procedure for my preprocessing pipeline?

A statistically robust re-referencing procedure is crucial for mitigating the effect of reference electrode activity, which can contaminate all EEG channels. The common average reference (CAR) is widely used but can be biased by neural activity present at the reference site. A robust maximum-likelihood type estimator can be adapted to mitigate this issue.

Methodology for Robust Re-referencing:

- Model the observed voltage $d_{t,k}$ at channel $k$ and time $t$ as $d_{t,k} = s_{t,k} - r_t + n_{t,k}$, where $s_{t,k}$ is the ideal silent-reference signal, $r_t$ is the unknown reference voltage, and $n_{t,k}$ is sensor noise [45].

- Apply a robust estimator, such as the median or a trimmed mean, to the data from all channels at each time point to calculate the reference estimate $\hat{r}_t$. This approach reduces the influence of channels with high-amplitude neural activity, which act as outliers in the reference estimation [45].

- Add the estimated reference back to each channel to obtain the re-referenced signal: $\hat{s}_{t,k} = d_{t,k} + \hat{r}_t$ [45].

This procedure is simple, fast, and avoids the substantial bias that can occur with traditional methods like CAR, especially when working with low-density EEG setups [45].
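The three steps can be sketched with NumPy as follows. This is a minimal illustration using the median as the robust estimator, not the implementation from [45]. It assumes the sign convention of the model above, under which the cross-channel median of the observed data estimates the negative of the reference voltage. The function name and the synthetic data are hypothetical.

```python
import numpy as np

def robust_rereference(d):
    """Median-based re-referencing.

    d: array of shape (n_times, n_channels) holding observed voltages,
       modelled as d[t, k] = s[t, k] - r[t] + n[t, k].
    Returns (s_hat, r_hat).
    """
    # With the sign convention above, the median across channels estimates -r[t].
    r_hat = -np.median(d, axis=1)
    # Add the estimated reference back to every channel.
    s_hat = d + r_hat[:, None]
    return s_hat, r_hat

# Synthetic low-density example (8 channels); all values are assumptions.
rng = np.random.default_rng(0)
n_times, n_channels, fs = 1000, 8, 250.0
t = np.arange(n_times) / fs
s = 0.1 * rng.standard_normal((n_times, n_channels))  # background activity
s[:, 0] += 5.0 * np.sin(2 * np.pi * 10 * t)           # one high-amplitude channel
r = 2.0 * np.sin(2 * np.pi * 1 * t)                   # unknown reference voltage
d = s - r[:, None]

s_hat, r_hat = robust_rereference(d)
r_car = -d.mean(axis=1)                               # common average estimate
err_median = np.mean(np.abs(r_hat - r))
err_mean = np.mean(np.abs(r_car - r))
print(f"mean abs. reference error: median={err_median:.3f}, average={err_mean:.3f}")
```

In this toy setup the single high-amplitude channel pulls the average-based estimate away from the true reference, while the median is barely affected. A trimmed mean could be substituted for the median in the same place.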

What are the standard parameters for filtering continuous EEG data?

Filtering is essential for removing unwanted biological and line noise artifacts from the EEG signal. The table below summarizes standard parameters for a basic preprocessing pipeline, with examples from recent research.

Table 1: Standard EEG Filtering Parameters and Applications

| Filter Type | Standard Frequency Bands | Purpose | Example from Literature | Key Considerations |

|---|---|---|---|---|

| High-Pass Filter | ≥ 0.5 Hz or 1 Hz [46] [47] | Removes slow drifts and DC offset; improves ICA decomposition quality [46]. | A 1 Hz high-pass filter is recommended before ICA [46]. | Overly aggressive high-pass filtering (e.g., >0.5 Hz) can distort ERPs [47]. |

| Low-Pass Filter | ≤ 30 Hz to 40 Hz [35] [48] | Attenuates high-frequency muscle noise and other high-frequency artifacts. | A 40 Hz low-pass filter was used in an etomidate study to focus on classic EEG rhythms [35]. | The cutoff should be above the highest frequency of interest for your analysis. |

| Band-Stop (Notch) Filter | 50 Hz or 60 Hz (region-dependent) | Removes mains line noise. | A 50 Hz notch filter was applied in a visual evoked potential study [48]. | As an alternative, consider adaptive methods like the CleanLine plugin for line noise removal [46]. |

| Band-Pass for Rhythms | δ (1-3 Hz), θ (3-8 Hz), α (8-13 Hz), β (13-30 Hz), γ (30-40 Hz) [35] | Isolates specific neural oscillatory rhythms for analysis. | Rhythms were extracted using band-pass filters in a neurovascular coupling study [35]. | Use basic FIR filters and avoid causal filters if phase preservation is critical [35] [46]. |

Experimental Protocol: A typical filtering sequence for continuous data, as implemented in EEGLAB, involves:

- High-pass filter at 1 Hz using a basic FIR filter [46].

- Low-pass filter at 40 Hz in a separate step to avoid unnecessarily steep filter slopes [46].

- Notch filter at 50 Hz (or 60 Hz) [48].

It is recommended to filter continuous data before epoching to minimize artifacts at epoch boundaries [46].
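To make the sequence concrete, the sketch below applies the three stages to a synthetic single-channel signal using Hamming-windowed sinc FIR kernels written in plain NumPy. It is an illustration only and does not reproduce EEGLAB's filter design: the tap counts, the 47-53 Hz notch width, the sampling rate, and all helper names are assumptions.

```python
import numpy as np

def sinc_lowpass(cutoff_hz, fs, numtaps):
    """Hamming-windowed sinc low-pass kernel (odd numtaps, unity gain at DC)."""
    n = np.arange(numtaps) - (numtaps - 1) / 2
    h = np.sinc(2 * cutoff_hz / fs * n) * np.hamming(numtaps)
    return h / h.sum()

def sinc_highpass(cutoff_hz, fs, numtaps):
    """High-pass kernel obtained by spectral inversion of the low-pass kernel."""
    h = -sinc_lowpass(cutoff_hz, fs, numtaps)
    h[(numtaps - 1) // 2] += 1.0
    return h

def apply_fir(x, h):
    """Filter with a symmetric kernel; 'same' mode removes the group delay."""
    return np.convolve(x, h, mode="same")

def amplitude_at(x, fs, freq):
    """Amplitude of the sinusoidal component of x at the given frequency."""
    n = np.arange(x.size)
    return 2 * np.abs(np.mean(x * np.exp(-2j * np.pi * freq * n / fs)))

# Synthetic channel: DC offset, slow drift, 10 Hz rhythm, 50 Hz line noise.
fs = 250.0
t = np.arange(5000) / fs
raw = (50.0 + 5.0 * np.sin(2 * np.pi * 0.1 * t)
       + 1.0 * np.sin(2 * np.pi * 10 * t)
       + 2.0 * np.sin(2 * np.pi * 50 * t))

x = apply_fir(raw, sinc_highpass(1.0, fs, 827))   # step 1: 1 Hz high-pass
x = apply_fir(x, sinc_lowpass(40.0, fs, 83))      # step 2: 40 Hz low-pass, separate pass
notch = sinc_lowpass(47.0, fs, 413) + sinc_highpass(53.0, fs, 413)
x = apply_fir(x, notch)                           # step 3: band-stop around 50 Hz

core = x[1000:4000]                               # skip zero-padding edge transients
amp_10 = amplitude_at(core, fs, 10.0)
amp_50 = amplitude_at(core, fs, 50.0)
print(f"10 Hz amplitude: {amp_10:.3f}, 50 Hz amplitude: {amp_50:.4f}")
```

The high-pass needs far more taps than the low-pass because its transition band is much narrower, which is one practical reason to run the two as separate steps. The first and last few hundred samples are affected by edge transients and are excluded from the check.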

What steps should I take to ensure proper epoch extraction for Event-Related Potentials (ERPs)?

Epoch extraction involves segmenting the continuous EEG signal into time-locked windows around events of interest. The key is to balance sufficient baseline and post-stimulus periods while managing data dimensionality.

Detailed Methodology for Epoch Extraction:

- Define Epoch Boundaries: A typical epoch might span from 200 ms before the event to 800 ms after it (-200 to +800 ms), but this depends on the cognitive component under study. The baseline period (pre-event) is used for baseline correction [49] [47].

- Perform Baseline Correction: This process removes the DC offset from each epoch by subtracting the average amplitude of the baseline period. For example, a baseline window of -190 ms to -10 ms might be used [47]. Note: If a high-pass filter (e.g., 0.5 Hz) has been applied, additional baseline correction may be redundant or even distort the signal [47].

- Address Dimensionality: ERP data can be conceptualized as a hypercube with dimensions: sensors × time × conditions × subjects × trials. No single plot can visualize all dimensions, so researchers must strategically slice or average across dimensions [49]. Common practices include:

- Plotting a single channel or region of interest (ROI) across time for one condition, averaging over subjects and trials.

- Creating a butterfly plot to show all channels for a single condition and time window.

- Using a topoplot to visualize spatial voltage distribution across the scalp at a specific time point [49].
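The epoching and baseline-correction steps can be sketched in NumPy as follows. This is a minimal illustration under stated assumptions: the function name, the synthetic data, the event positions, and the 250 Hz sampling rate are hypothetical, and the window defaults simply mirror the example values given above.

```python
import numpy as np

def extract_epochs(data, fs, event_samples, tmin=-0.2, tmax=0.8,
                   baseline=(-0.19, -0.01)):
    """Cut time-locked epochs and subtract the mean of the baseline window.

    data: array (n_channels, n_times); event_samples: event onsets in samples.
    Returns (epochs, times) with epochs shaped (n_events, n_channels, n_samples).
    """
    start = int(round(tmin * fs))
    stop = int(round(tmax * fs))
    times = np.arange(start, stop + 1) / fs
    base_mask = (times >= baseline[0]) & (times <= baseline[1])
    epochs = []
    for ev in event_samples:
        if ev + start < 0 or ev + stop >= data.shape[1]:
            continue  # skip events too close to the recording edges
        seg = data[:, ev + start: ev + stop + 1].astype(float)
        seg -= seg[:, base_mask].mean(axis=1, keepdims=True)  # baseline correction
        epochs.append(seg)
    return np.stack(epochs), times

# Synthetic continuous data with a DC offset; the last event is too late to epoch.
rng = np.random.default_rng(1)
fs = 250.0
data = 10.0 + rng.standard_normal((4, 5000))
events = [500, 1500, 2500, 4900]

epochs, times = extract_epochs(data, fs, events)
erp = epochs.mean(axis=0)   # average over trials: (n_channels, n_samples)
print(epochs.shape, erp.shape)
```

The resulting array is one slice of the hypercube described above (trials × sensors × time for one subject and condition); averaging over the first axis yields the ERP for plotting.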

Table 2: Common ERP Visualization Types and Their Uses

| Plot Type | Dimensions Visualized | Best Use Case | Common Tools / Functions |

|---|---|---|---|

| ERP Plot | Time (sliced), Condition (sliced) | Showing the amplitude time-course of a specific component at a selected channel. | Standard in all ERP toolboxes. |

| Butterfly Plot | Sensors (all), Time (sliced) | Overview of all channel activities for a single condition; identifying widespread artifacts. | evoked.plot() in MNE-Python (epochs.plot(butterfly=True) for single trials). |

| Topoplot | Sensors (all, spatial), Time (sliced) | Visualizing the spatial distribution of voltage at a specific latency. | topoplot in EEGLAB, plot_topomap in MNE. |

| Channel Image | Time, Sensors | Depicting trial-by-trial and channel-by-channel activity as a heatmap; useful for identifying consistent patterns. | Used in the LIMO toolbox [49]. |

How can I troubleshoot inconsistent results after applying my preprocessing pipeline?

Inconsistencies often arise from subtle differences in parameter choices, software tools, or the order of operations.

Troubleshooting Guide:

Problem: Inconsistent PSDs or peaks after filtering.

- Potential Cause: Different software libraries (e.g., PyLSL, BrainFlow vs. native hardware software) may apply filters or handle data scaling differently, even with identical nominal parameters [48].

- Solution: Verify the filter functions and all input parameters (e.g., filter type, order, roll-off) are identical across software. Check for built-in preprocessing in the hardware's native software that isn't replicated in your custom script [48].

Problem: Poor baseline in epoched data after high-pass filtering.

- Potential Cause: The high-pass filter may not have been applied effectively, or an additional baseline correction (DC offset removal) might be causing a conflict [47].

- Solution: Visually inspect the power spectrum before and after filtering to confirm the filter worked. If a high-pass filter (≥0.5 Hz) was successfully applied, avoid applying a separate baseline correction, as the filter should have already centered the data [47].

Problem: Low classification accuracy or signal quality after artifact removal.

- Potential Cause: Overly aggressive artifact rejection can remove valuable neural signal along with the artifact, biasing your final dataset [50].

- Solution: Assess the impact of artifact removal on your final results (e.g., classification accuracy or correlation with an external variable). Compare results with and without stringent artifact rejection. It may be better to use a more conservative threshold or a method like ICA to preserve more of the true neural signal [50].
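One simple way to see how strongly a rejection criterion shapes the final dataset is to count how many epochs survive at different thresholds. The sketch below uses a peak-to-peak amplitude criterion, which is an assumption made for illustration and not the method of [50]; the thresholds, the microvolt units, and the synthetic data are likewise hypothetical.

```python
import numpy as np

def reject_by_peak_to_peak(epochs, threshold):
    """Keep epochs whose peak-to-peak amplitude is below threshold on every channel.

    epochs: array (n_epochs, n_channels, n_samples).
    Returns (kept_epochs, keep_mask).
    """
    ptp = epochs.max(axis=2) - epochs.min(axis=2)   # (n_epochs, n_channels)
    keep = (ptp < threshold).all(axis=1)
    return epochs[keep], keep

# Synthetic epochs in assumed microvolts; the first 8 contain a large step artifact.
rng = np.random.default_rng(2)
epochs = 5.0 * rng.standard_normal((40, 4, 251))
epochs[:8, 0, 125:] += 150.0

_, keep_conservative = reject_by_peak_to_peak(epochs, 100.0)
_, keep_stringent = reject_by_peak_to_peak(epochs, 30.0)
print(f"kept at 100 uV: {keep_conservative.sum()} of {len(epochs)}")
print(f"kept at  30 uV: {keep_stringent.sum()} of {len(epochs)}")
```

Running the downstream analysis (for example, a classifier) on both retained sets shows whether the stricter threshold improves the result or merely discards usable trials.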

What essential materials and reagents are required for a synchronous fNIRS-EEG experiment?

Integrating fNIRS and EEG requires specific hardware and software to enable synchronous data acquisition and analysis.

Table 3: Research Reagent Solutions for fNIRS-EEG Fusion

| Item | Function / Description | Example from Literature |

|---|---|---|

| EEG Acquisition System | Records electrical activity from the scalp with high temporal resolution. | 64-channel EEG system (e.g., NeuSen W) with sampling frequency ≥ 250 Hz [35]. |

| fNIRS Acquisition System | Measures hemodynamic changes by detecting near-infrared light attenuation, providing spatial resolution. | System with multiple emitters and detectors (e.g., 23 emitters, 16 detectors) using dual-wavelength lasers (730 & 850 nm) [35]. |

| Integrated Probe Cap | A custom helmet or cap that holds both EEG electrodes and fNIRS optodes in a co-registered spatial arrangement. | Flexible EEG cap with punctures for fNIRS fixtures; 3D-printed custom helmet for better fit and stability [32]. |

| Synchronization Hardware/Software | Ensures precise temporal alignment of EEG and fNIRS data streams. | Unified processor for simultaneous acquisition; or synchronization of separate systems via host computer [32]. |

| Software for Multimodal Analysis | Tools for preprocessing, feature fusion, and joint analysis of the two data modalities. | Custom scripts in MATLAB/Python; toolboxes like EEGLAB for EEG and Homer2 for fNIRS [35] [32]. |

Figure 1: A standardized preprocessing pipeline for EEG data within an fNIRS-EEG fusion framework. Dashed lines indicate the integration point for synchronized fNIRS data prior to multimodal analysis.

In functional near-infrared spectroscopy (fNIRS) and electroencephalography (EEG) fusion research, artifacts represent non-neural signals that can significantly corrupt data quality and interpretation. These unwanted signals originate from multiple sources: motion artifacts from subject movement, physiological artifacts from cardiac pulsation, respiration, and blood pressure changes, and environmental artifacts from instrumental and external interference [12] [8]. Effective artifact handling is particularly crucial in fNIRS-EEG studies because both modalities are susceptible to different artifact types with distinct characteristics, and the fusion process can amplify artifacts if not properly addressed [8] [51]. The portability of fNIRS and EEG systems enables brain monitoring in naturalistic settings, but this advantage comes with increased vulnerability to artifacts, making robust preprocessing pipelines essential for data validity [8] [52].

Troubleshooting Guides

Motion Artifacts

Q: What are the primary causes and characteristics of motion artifacts?

Motion artifacts (MAs) arise from imperfect contact between sensors and the scalp during subject movement. In fNIRS, optode displacement causes sudden, high-amplitude signal shifts due to changes in light coupling efficiency [37]. Specific movements causing MAs include head movements (nodding, shaking, tilting), facial muscle movements (raising eyebrows), body movements (limb movements causing head motion), and jaw movements (talking, eating) [37]. In EEG, motion creates similar signal disruptions but manifests as electrical potential changes from electrode movement relative to skin [52] [36]. Motion artifacts typically exhibit higher amplitude and different frequency characteristics compared to the underlying neural signals in both modalities.

Q: What methods effectively correct for motion artifacts?

Multiple algorithmic approaches exist for motion artifact correction, each with distinct advantages:

Table 1: Motion Artifact Correction Methods for fNIRS and EEG

| Method | Modality | Principle | Performance Metrics | Limitations |

|---|---|---|---|---|

| Wavelet Packet Decomposition (WPD) | fNIRS & EEG | Signal decomposition into wavelet packets with artifact component identification and removal [36] | ΔSNR: 29.44 dB (EEG), 16.11 dB (fNIRS); η: 53.48% (EEG), 26.40% (fNIRS) [36] | Wavelet selection affects performance |

| WPD with Canonical Correlation Analysis (WPD-CCA) | fNIRS & EEG | Two-stage approach: WPD followed by CCA for enhanced artifact separation [36] | ΔSNR: 30.76 dB (EEG), 16.55 dB (fNIRS); η: 59.51% (EEG), 41.40% (fNIRS) [36] | Increased computational complexity |

| Accelerometer-Based Methods (ABAMAR/ABMARA) | fNIRS | Use accelerometer data as noise reference for adaptive filtering [37] | Enables real-time rejection; improves classification accuracy [37] | Requires additional hardware; placement affects performance |

| Moving Average & Spline Interpolation | fNIRS | Identifies artifact periods and interpolates using clean data segments [37] | Simple implementation; effective for isolated artifacts [37] | Can distort signal morphology near artifacts |
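As a rough illustration of the detect-then-interpolate idea in the last row, the sketch below flags samples whose moving standard deviation is unusually high and replaces them by interpolating between neighbouring clean samples. It is a deliberately simplified stand-in: it uses linear rather than spline interpolation, and the window length, threshold rule, function name, and synthetic data are all assumptions rather than parameters from [37].

```python
import numpy as np

def correct_motion_artifacts(x, fs, window_s=1.0, z_thresh=4.0):
    """Flag high-variance samples and linearly interpolate across them.

    Returns (corrected_signal, bad_mask).
    """
    win = max(3, int(round(window_s * fs)) | 1)     # odd window length in samples
    kernel = np.ones(win) / win
    xp = np.pad(x, win // 2, mode="edge")           # edge padding avoids border bias
    mean = np.convolve(xp, kernel, mode="valid")
    mean_sq = np.convolve(xp ** 2, kernel, mode="valid")
    mov_std = np.sqrt(np.clip(mean_sq - mean ** 2, 0.0, None))

    # Robust threshold: median plus z_thresh times the scaled MAD of the moving std.
    med = np.median(mov_std)
    mad = 1.4826 * np.median(np.abs(mov_std - med))
    bad = mov_std > med + z_thresh * mad

    clean_idx = np.flatnonzero(~bad)
    corrected = x.copy()
    corrected[bad] = np.interp(np.flatnonzero(bad), clean_idx, x[clean_idx])
    return corrected, bad

# Synthetic fNIRS-like trace with a brief spike artifact (illustrative values).
rng = np.random.default_rng(3)
fs = 10.0
t = np.arange(600) / fs
clean = 0.5 * np.sin(2 * np.pi * 0.05 * t) + 0.02 * rng.standard_normal(t.size)
contaminated = clean.copy()
contaminated[300:305] += 5.0

corrected, bad = correct_motion_artifacts(contaminated, fs)
print(f"flagged samples: {bad.sum()}, "
      f"max residual error: {np.max(np.abs(corrected - clean)):.3f}")
```

Linear interpolation works here because the artifact is short relative to the hemodynamic signal; for longer contaminated segments it would flatten genuine signal changes, which is the morphology distortion noted in the table.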

Figure: Motion Artifact Management Workflow, a systematic approach to motion artifact management.

Physiological Artifacts

Q: What physiological processes cause artifacts and how do they differ between modalities?

Physiological artifacts originate from various bodily functions with distinct manifestations in fNIRS and EEG:

Table 2: Physiological Artifacts in fNIRS and EEG

| Source | fNIRS Manifestation | EEG Manifestation | Frequency Characteristics |

|---|---|---|---|

| Cardiac Pulsation | Pulsatile oscillations from blood volume changes | Electrical spikes from heart muscle activity (ECG) [8] | fNIRS: ~1-1.5 Hz; EEG: ~1-1.5 Hz with sharper peaks |

| Respiration | Slow oscillations from blood pressure and volume changes | Minimal direct impact | fNIRS: ~0.2-0.3 Hz; EEG: Less prominent |

| Mayer Waves | Very low-frequency oscillations from blood pressure regulation | Not typically detectable | fNIRS: ~0.1 Hz; EEG: Not applicable |

| Blood Pressure Changes | Systemic hemodynamic fluctuations | Not typically detectable | fNIRS: <0.1 Hz; EEG: Not applicable |