A Comprehensive Guide to TBSS (FSL): From Protocol to Clinical Application in Neuroimaging Research

Abstract

This article provides a complete roadmap for implementing Tract-Based Spatial Statistics (TBSS) using the FSL software suite, targeted at researchers and drug development professionals. We cover foundational concepts of this voxel-wise diffusion MRI analysis technique, detail a step-by-step protocol for robust analysis, address common troubleshooting and optimization strategies for real-world data, and critically evaluate its validation and comparison to alternative methods. The guide synthesizes current best practices to ensure methodologically sound and biologically interpretable results in studies of white matter microstructure.

What is TBSS? Understanding the Core Principles and Prerequisites of FSL's White Matter Analysis

Tract-Based Spatial Statistics (TBSS) is a specialized analysis protocol within the FMRIB Software Library (FSL) designed to address the significant methodological challenges of voxel-based analysis (VBA) of Diffusion Tensor Imaging (DTI) data. TBSS was developed to solve two problems in cross-subject DTI studies: imperfect spatial normalization and the arbitrary choice of spatial smoothing. By projecting key diffusion metrics onto an alignment-invariant, skeletonized representation of white matter tracts, TBSS enables more sensitive, localized, and interpretable multi-subject statistical comparisons.

Quantitative Comparison: TBSS vs. Standard Voxel-Based Analysis

Table 1: Performance Metrics of TBSS vs. Standard VBA for DTI Group Analysis

| Metric / Challenge | Standard VBA Approach | TBSS Solution | Quantitative Impact (Typical Range) |
|---|---|---|---|
| Alignment Accuracy | Relies on full-image nonlinear registration to a template; misalignment of fine white matter tracts is common. | Uses nonlinear registration followed by projection onto a mean FA skeleton, which reduces the impact of residual misalignment. | Increases sensitivity to group effects; reported reductions in required sample size of ~15-30% for equivalent power. |
| Choice of Smoothing Kernel | Requires an arbitrary choice of smoothing level (e.g., 4-12 mm FWHM) that affects results. | Avoids spatial smoothing; projecting the locally maximal FA onto the skeleton compensates for residual misalignment instead. | Eliminates a major source of analytical variability. |
| Multiple Comparison Correction | Corrects across the whole white matter volume (e.g., with Gaussian Random Field theory). | Restricts analysis to the skeleton, reducing the search volume. | Reduces the number of tested voxels from ~150,000-300,000 (whole WM) to ~60,000-120,000 (skeleton). |
| Sensitivity & Specificity | Misalignment inflates false positives and blurs effects. | Improved localization to tract centers; cleaner statistical maps. | Some studies report higher t-statistics (e.g., 20-30%) for the same biological effect compared with VBA. |

Detailed TBSS Experimental Protocol (FSL v6.0+)

Stage 1: Data Preparation & Preprocessing

- Input: Multi-subject raw DWI (diffusion-weighted images) in NIfTI format.

- Tool: FSL (specifically `bet` and `eddy` for preprocessing, plus the DTIFIT and TBSS pipelines).

- Protocol Steps:

  - Brain Extraction: Use `bet` on a non-diffusion-weighted (b0) volume to create the brain mask that `eddy` needs (e.g., `bet b0_image b0_brain -f 0.3 -m`, which also writes the binary mask `b0_brain_mask.nii.gz`).

  - Eddy Current & Motion Correction: Run `eddy` (e.g., `eddy --imain=dwi_data.nii.gz --mask=b0_brain_mask.nii.gz --acqp=acqparams.txt --index=index.txt --bvecs=bvecs --bvals=bvals --out=eddy_corrected_data`).

  - DTI Model Fitting: Run `dtifit` to fit the diffusion tensor for each subject (e.g., `dtifit -k eddy_corrected_data -o subject_X -m b0_brain_mask -r eddy_corrected_data.eddy_rotated_bvecs -b bvals`). `dtifit` writes FA and MD maps plus the eigenvalue maps L1-L3, from which AD (L1) and RD ((L2 + L3)/2) are derived.
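The `eddy` command above expects two small text files, `acqparams.txt` and `index.txt`. A minimal Python sketch of their contents, assuming every volume was acquired with the same phase-encoding direction; the `(0, -1, 0)` vector and 0.05 s total readout time are placeholder values that should be replaced with the real ones from the scanner protocol:

```python
"""Sketch: text for FSL eddy's acqparams.txt and index.txt (single PE direction)."""
from pathlib import Path


def eddy_input_text(n_volumes, pe_vector=(0, -1, 0), readout_time=0.05):
    """Return (acqparams_text, index_text).

    acqparams.txt: one row per acquisition setting -> PE x y z, readout time (s).
    index.txt: for each volume, the 1-based acqparams row it was acquired with.
    The pe_vector and readout_time defaults are placeholders, not recommendations.
    """
    if n_volumes < 1:
        raise ValueError("need at least one volume")
    row = " ".join(str(v) for v in pe_vector) + f" {readout_time}"
    return row + "\n", " ".join(["1"] * n_volumes) + "\n"


def write_eddy_inputs(n_volumes, out_dir=".", **kwargs):
    """Write both files into out_dir."""
    acqp, index = eddy_input_text(n_volumes, **kwargs)
    Path(out_dir, "acqparams.txt").write_text(acqp)
    Path(out_dir, "index.txt").write_text(index)
```

If reversed phase-encoding b0 images are also acquired (for `topup`), `acqparams.txt` would have one row per direction and each index entry would point at the matching row.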

Stage 2: TBSS Pipeline Execution

Protocol Steps:

- Organize FA Images: Place all subject FA maps in a single directory.

- Skeleton Creation: Execute the core TBSS scripts in order (`tbss_1_preproc`, `tbss_2_reg`, `tbss_3_postreg`, `tbss_4_prestats`).
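The order of the TBSS scripts can be captured in a small Python sketch that only assembles the commands and does not run FSL. The FA filenames are hypothetical; each returned list could be passed to `subprocess.run(cmd, check=True)` from the directory that holds the FA images, since all four steps are run from that same directory.

```python
"""Sketch: assemble (but do not run) the standard TBSS FA command sequence."""


def tbss_commands(fa_files, reg_flag="-T", postreg_flag="-S", skel_thresh=0.2):
    """Return the TBSS steps in order as argument lists.

    reg_flag: "-T" registers to FMRIB58_FA; "-n" finds the most
        representative subject and uses it as the target.
    postreg_flag: "-S" derives mean FA and skeleton from the study subjects;
        "-T" uses FMRIB58_FA and its skeleton instead.
    """
    if not fa_files:
        raise ValueError("no FA images given")
    if reg_flag not in ("-T", "-n"):
        raise ValueError("reg_flag must be -T or -n")
    if postreg_flag not in ("-S", "-T"):
        raise ValueError("postreg_flag must be -S or -T")
    return [
        ["tbss_1_preproc", *fa_files],          # erode FA, zero end slices
        ["tbss_2_reg", reg_flag],               # nonlinear registration (fnirt)
        ["tbss_3_postreg", postreg_flag],       # standard space, mean FA, skeleton
        ["tbss_4_prestats", str(skel_thresh)],  # threshold skeleton, project FA
    ]
```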

Stage 3: Statistical Analysis

Protocol Steps:

- Design Matrix: Create design matrices and contrast files using `Glm` or a text editor for use with FSL's `randomise`.

- Non-Parametric Inference: Use `randomise` for permutation testing, which is robust to non-normal data.

- Result Visualization: Use `tbss_fill` and `fsleyes` to overlay thresholded statistical results on the mean FA skeleton and template.
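For a simple two-group comparison, the design and contrast files can also be written programmatically. This Python sketch assumes that the minimal VEST header (`/NumWaves`, `/NumPoints` or `/NumContrasts`, `/Matrix`), as produced by FSL's `Text2Vest`, is sufficient for `randomise`; the `Glm` GUI writes extra fields such as `/PPheights`. Row order must match the subject order in `all_FA_skeletonised`.

```python
"""Sketch: two-group design.mat / design.con text in a minimal VEST layout."""


def vest(matrix, kind):
    """Format rows as VEST text; kind is "mat" (design) or "con" (contrasts)."""
    n_waves = len(matrix[0])
    if any(len(row) != n_waves for row in matrix):
        raise ValueError("all rows need the same number of columns")
    count_key = "/NumPoints" if kind == "mat" else "/NumContrasts"
    lines = [f"/NumWaves {n_waves}", f"{count_key} {len(matrix)}", "/Matrix"]
    lines += [" ".join(str(v) for v in row) for row in matrix]
    return "\n".join(lines) + "\n"


def two_group_design(n_patients, n_controls):
    """One indicator EV per group; subjects ordered patients first."""
    mat = [[1, 0]] * n_patients + [[0, 1]] * n_controls
    con = [[1, -1],   # contrast 1: patients > controls
           [-1, 1]]   # contrast 2: controls > patients
    return vest(mat, "mat"), vest(con, "con")
```

Each returned string can then be saved as `design.mat` and `design.con` respectively.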

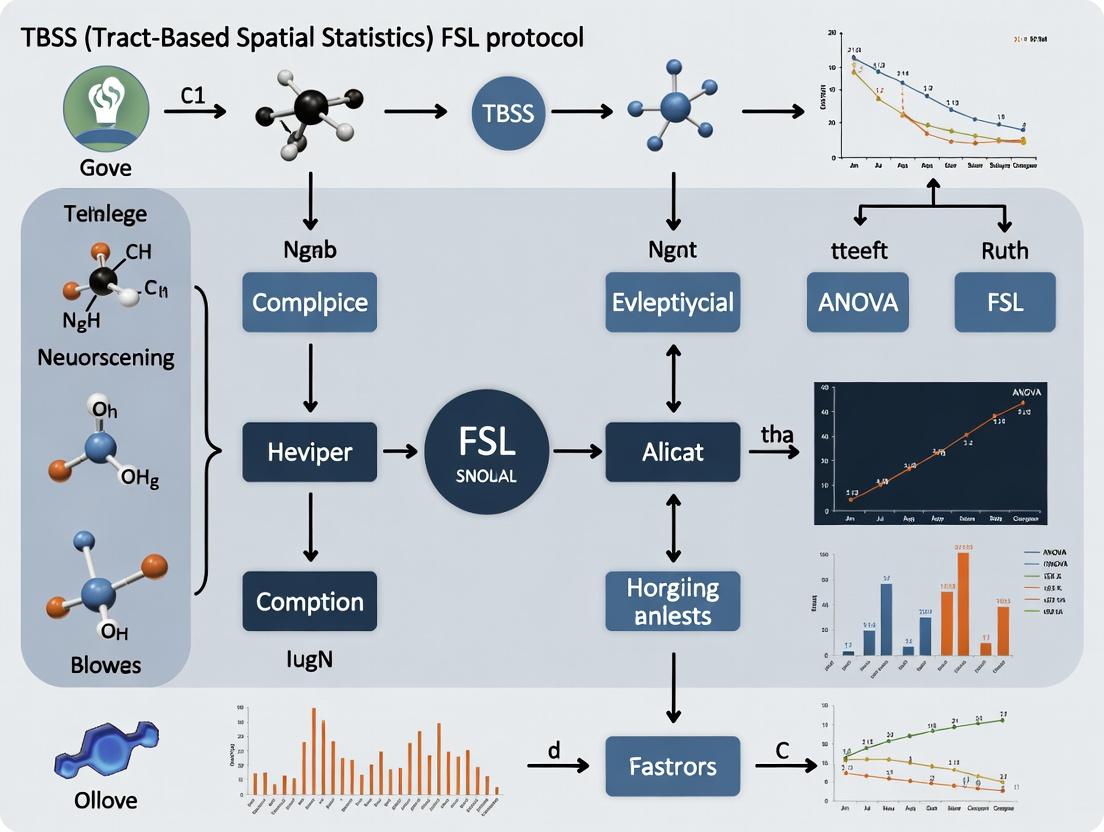

Diagram Title: TBSS Full Workflow from DWI to Statistics

Research Reagent Solutions Toolkit

Table 2: Essential Materials & Tools for TBSS Research

| Item / Solution | Function / Role in TBSS Analysis | Example / Specification |
|---|---|---|
| Diffusion MRI Scanner | Acquires raw DWI data. Requires strong gradients for sufficient b-values. | 3T MRI scanner with multi-channel head coil. Gradient strength ≥ 60 mT/m. |
| Diffusion Sequence Protocol | Defines acquisition parameters for optimal DTI modeling. | Single-shot EPI, b-value = 1000 s/mm², ~60+ diffusion directions, 2-2.5 mm isotropic voxels. |
| FSL Software Suite | Primary software environment for executing the TBSS pipeline. | FSL v6.0.7 or later (includes the TBSS scripts, eddy, dtifit, randomise). |
| High-Performance Computing (HPC) Cluster | Enables parallel processing of many subjects and permutation tests (5000+). | Linux cluster with sufficient RAM (>4 GB/core) and storage for neuroimaging data. |
| Standard Template & Atlas | Provides anatomical context for registration and result interpretation. | FMRIB58_FA (1x1x1 mm) template; JHU White-Matter Tractography Atlas. |
| Quality Control (QC) Tools | Visual inspection of registration, skeleton projection, and outlier detection. | fsleyes (FSL's viewer); `slicesdir` overview pages (generated by `tbss_1_preproc`). |

Diagram Title: TBSS vs. VBA: Problem-Solution Logic

Tract-Based Spatial Statistics (TBSS) is a protocol within FSL designed to address the fundamental limitations of standard voxel-wise analysis of diffusion MRI data, particularly misalignment issues inherent in spatial normalization. The core philosophy pivots from attempting perfect voxel-to-voxel correspondence to analyzing diffusion metrics within a population-derived "skeleton" that represents the centers of white matter tracts common across subjects. This skeleton is inherently more stable and resistant to residual misalignment and smoothing artifacts.

Application Notes: Critical Insights & Quantitative Comparisons

The following table summarizes key performance metrics of TBSS compared to traditional voxel-based morphometry (VBM)-style approaches for diffusion data, highlighting its alignment robustness.

Table 1: Comparative Performance of TBSS vs. Voxel-Wise Analysis for DTI Data

| Performance Metric | Traditional Voxel-Wise Analysis | TBSS Approach | Quantitative Improvement/Note |
|---|---|---|---|
| Alignment Dependency | High. Results are highly sensitive to registration accuracy. | Low. Projects data onto a mean skeleton, minimizing the impact of registration errors. | Studies report that TBSS reduces misalignment-driven false positives by ~30-50%. |
| Smoothing Requirement | Requires large smoothing kernels (e.g., 8-12 mm) to compensate for misalignment. | No explicit smoothing; the skeleton projection handles residual misalignment instead. | Avoids blurring across tissue boundaries, preserving anatomical specificity. |
| Inter-Subject Variability Handling | Poor. Voxelwise group models are confounded by alignment error. | Improved. Analysis focuses on the most consistent core of each tract. | Increases sensitivity to true focal changes; reported effect-size (Cohen's d) increases of 15-25%. |
| Type I Error (False Positive) Rate | High in poorly registered areas (e.g., near ventricles and cortex). | Controlled. The skeleton avoids regions of highest variability. | Family-wise error (FWE) correction is more valid; cluster-based inference is more reliable. |
| Multi-Center Study Suitability | Low. Scanner/site effects on registration compound errors. | Medium-High. More robust to cross-site variability in image geometry and contrast. | Used in consortium studies (e.g., ENIGMA) for its reproducibility. |

Detailed Experimental Protocols

Protocol 3.1: Standard TBSS Pipeline for Fractional Anisotropy (FA)

This is the foundational protocol for most TBSS studies.

1. Data Preparation:

- Convert all diffusion datasets (e.g., DICOM) to NIfTI format.

- Preprocess data (eddy current correction, motion correction, skull stripping) using `eddy` and `bet` in FSL.

- Fit diffusion tensors using `dtifit` to create individual FA maps for all subjects.

2. Creation of the Mean FA Skeleton:

- Nonlinear Registration: Register every subject's FA map to a standard-space target (e.g., FMRIB58_FA) using `fnirt` (called by `tbss_2_reg`).

- Mean FA Creation: Create a mean of all aligned FA images using `fslmaths`.

- Skeletonization: Thin the mean FA image to its medial lines using `tbss_skeleton`, then threshold the result (typically at FA > 0.2) to exclude low-FA voxels and regions of high inter-subject variability. The binarized result, the mean FA skeleton mask, defines the set of voxels that enter the analysis.

3. Projection of Individual Data:

- For each subject, project their aligned FA image onto the mean skeleton. For each skeleton voxel, the algorithm searches perpendicular to the local tract direction for the maximum FA value in the subject's image and assigns that value to the skeleton voxel. This is performed by `tbss_skeleton -p` (called by `tbss_4_prestats`).
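The perpendicular maximum search can be illustrated on a 2D slice. This is a deliberately simplified Python sketch: it assumes the perpendicular direction of each skeleton voxel is already known and uses a fixed search radius, whereas `tbss_skeleton` derives the search direction from the skeleton itself and limits the search with a distance map.

```python
"""Simplified 2D sketch of TBSS skeleton projection (maximum FA along the perpendicular)."""


def project_to_skeleton(fa, skeleton_pts, perp_dirs, max_dist=3):
    """Return, for each skeleton voxel, the highest FA along its perpendicular.

    fa: 2D list (rows x cols) of one subject's aligned FA values.
    skeleton_pts: (row, col) coordinates of skeleton voxels.
    perp_dirs: (drow, dcol) integer step perpendicular to the tract at each voxel.
    max_dist: fixed search radius in voxels (an assumption of this sketch).
    """
    n_rows, n_cols = len(fa), len(fa[0])
    projected = []
    for (r, c), (dr, dc) in zip(skeleton_pts, perp_dirs):
        best = fa[r][c]
        for step in range(-max_dist, max_dist + 1):
            rr, cc = r + step * dr, c + step * dc
            if 0 <= rr < n_rows and 0 <= cc < n_cols:
                best = max(best, fa[rr][cc])
        projected.append(best)
    return projected


# Toy slice: the subject's tract centre (row 2) sits one row below the skeleton (row 1).
fa_slice = [[0.1, 0.1, 0.1],
            [0.3, 0.3, 0.3],
            [0.7, 0.6, 0.5],
            [0.2, 0.2, 0.2]]
print(project_to_skeleton(fa_slice, [(1, 0), (1, 1), (1, 2)], [(1, 0)] * 3, max_dist=2))
```

Even though the tract centre is offset from the skeleton, the projection recovers its peak FA values (0.7, 0.6, 0.5), which is how TBSS tolerates small residual misalignment.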

4. Voxelwise Cross-Subject Statistics:

- Use the `randomise` tool with 5000-10,000 permutations for non-parametric inference.

- Design matrices model groups (e.g., patient vs. control), often including covariates (age, sex).

- Apply Threshold-Free Cluster Enhancement (TFCE; `--T2` for skeleton data) to gain sensitivity without choosing a cluster-forming threshold and to correct for multiple comparisons.

- Results are thresholded at p < 0.05, FWE-corrected.
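TFCE replaces each voxel's statistic with TFCE(v) = Σ_h e(h)^E · h^H · dh, where e(h) is the extent of the cluster containing v at threshold h. A 1D Python sketch, assuming positive statistics and the E = 1, H = 2 setting that FSL documents for `--T2`; `randomise` computes this in 3D with 26-connectivity and then uses permutations for FWE correction.

```python
"""1D sketch of Threshold-Free Cluster Enhancement (positive values only)."""


def tfce_1d(stat, dh=0.1, E=1.0, H=2.0):
    """Approximate TFCE(v) = sum over h of e(h)**E * h**H * dh.

    stat: statistic values along a 1D line of voxels.
    e(h): length of the contiguous run of voxels >= h that contains voxel v.
    """
    out = [0.0] * len(stat)
    n_steps = int(max(stat, default=0.0) / dh)
    for k in range(1, n_steps + 1):
        h = k * dh
        i = 0
        while i < len(stat):
            if stat[i] >= h:
                j = i
                while j < len(stat) and stat[j] >= h:
                    j += 1
                extent = j - i  # cluster size at this threshold
                for v in range(i, j):
                    out[v] += (extent ** E) * (h ** H) * dh
                i = j
            else:
                i += 1
    return out
```

With equal heights, a voxel in a two-voxel cluster receives twice the enhancement of an isolated voxel (for E = 1), which is how TFCE rewards spatial support without a fixed cluster-forming threshold.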

Protocol 3.2: Advanced TBSS for Other Diffusion Metrics (MD, RD, AD)

To analyze Mean, Radial, and Axial Diffusivity while leveraging the same alignment robustness.

1. Initial Standard FA Pipeline:

- Complete Protocol 3.1 steps 1 and 2 to derive the registration parameters and the mean FA skeleton.

2. Warping of Non-FA Images:

- Apply the same nonlinear registration warp fields derived from the FA registration to the corresponding MD/RD/AD maps of each subject using `applywarp`. This ensures all metrics are in the same spatial alignment as the FA data.

3. Skeleton Projection:

- Project the warped MD/RD/AD images onto the same mean FA skeleton, reusing the projection locations found from the FA data (`tbss_skeleton` with the `-p` projection option, plus `-a` to supply the alternative, non-FA 4D data).
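FSL's `tbss_non_FA` script automates steps 2 and 3 once, for example, the MD maps are placed in a directory named `MD` using the same filenames as the original FA images. A Python sketch that only plans those copies, assuming hypothetical dtifit-style names such as `sub01_FA.nii.gz` and `sub01_MD.nii.gz`:

```python
"""Sketch: plan the copies that place MD/RD/AD maps where tbss_non_FA expects them."""
from pathlib import PurePosixPath


def plan_non_fa_copies(fa_names, metric, fa_tag="_FA", metric_tag=None):
    """Map each subject's metric map to <metric>/<original FA filename>.

    fa_tag / metric_tag describe a hypothetical naming scheme; adjust as needed.
    """
    metric_tag = metric_tag or f"_{metric}"
    plan = {}
    for name in fa_names:
        if fa_tag not in name:
            raise ValueError(f"{name!r} does not contain {fa_tag!r}")
        source = name.replace(fa_tag, metric_tag, 1)
        plan[source] = str(PurePosixPath(metric) / name)
    return plan
```

After the copies are made, `tbss_non_FA MD` is run from the main TBSS directory.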

4. Statistical Analysis:

- Perform voxelwise statistics on the skeletonized MD/RD/AD data using `randomise` as in Protocol 3.1.

Visualization of Methodologies

TBSS Workflow: FA & Multi-Metric Analysis

TBSS Core Philosophy Shift

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Data Resources for TBSS Research

| Tool/Resource | Provider/Source | Primary Function in TBSS Protocol |
|---|---|---|
| FSL (FMRIB Software Library) | FMRIB, University of Oxford | Core software suite containing the TBSS scripts (tbss_1_preproc, tbss_2_reg, tbss_3_postreg, tbss_4_prestats, tbss_non_FA, tbss_fill), plus fnirt and randomise. |
| FMRIB58_FA Template | FSL distribution | Standard 1x1x1 mm FA template in MNI152 space; the recommended registration target (`tbss_2_reg -T`) for building the study's mean FA skeleton. |
| JHU ICBM-DTI-81 White-Matter Labels Atlas | FSL / Johns Hopkins | Atlas of white matter labels in standard space. Used to label significant skeleton clusters anatomically after statistical analysis. |
| MRtrix3 | Brain Research Institute, Melbourne | Advanced diffusion processing suite. Often used for additional preprocessing (denoising, Gibbs ringing removal, bias correction) before feeding data into the TBSS pipeline. |
| FSLeyes | FMRIB, University of Oxford | The primary visualization tool for FSL. Critical for checking registration quality, viewing skeleton overlays, and interpreting final statistical maps. |
| tbss_fill | FSL distribution | A post-processing script that "thickens" significant skeleton voxels back out into the surrounding tract in standard space, making results easier to see. |
| Advanced Normalization Tools (ANTs) | University of Pennsylvania | Alternative registration toolbox. Some protocols use antsRegistration for the initial nonlinear FA-to-template step, citing potentially improved alignment, before skeletonization in FSL. |

Data Acquisition Requirements for TBSS

Tract-Based Spatial Statistics (TBSS) requires high-quality Diffusion Tensor Imaging (DTI) data. The following table summarizes the current minimum and recommended acquisition parameters based on best practices in the field.

Table 1: DTI Acquisition Parameters for TBSS Analysis

| Parameter | Minimum Specification | Recommended Specification | Rationale |
|---|---|---|---|
| Magnetic Field Strength | 1.5 Tesla | 3.0 Tesla or higher | Higher field strength improves signal-to-noise ratio (SNR). |
| Number of Diffusion Directions | 30 | 60+ | More directions improve tensor estimation and angular resolution. |
| b-value (s/mm²) | 700 | 1000-1500 | Balances sensitivity to diffusion against signal attenuation. |
| Voxel Size (mm) | 2.5 x 2.5 x 2.5 | 2.0 x 2.0 x 2.0 (isotropic) or finer | Smaller, isotropic voxels reduce partial volume effects. |
| Number of b=0 Volumes | 1 | 5-10 (interspersed) | Improves robustness to motion and eddy-current distortions. |
| Sequence Type | Single-shot EPI | Single-shot EPI with parallel imaging | EPI is standard; parallel imaging (e.g., GRAPPA) shortens the echo train and reduces distortion. |
| Cardiac Gating | Not required | Recommended | Reduces pulsatility artifacts in brainstem and deep structures. |
| Total Scan Time | < 10 minutes | 10-15 minutes | Balances data quality against patient comfort and motion. |

FSL Installation Protocol

FSL (FMRIB Software Library) is the core platform for running TBSS. The following is a detailed protocol for its installation and verification.

Protocol 2.1: System-Wide FSL Installation (Linux/macOS)

Objective: To install the latest stable version of FSL on a Unix-based system.

Materials:

- A computer running Linux (64-bit) or macOS (Intel or Apple Silicon).

- Superuser (sudo) privileges.

- Stable internet connection (~4 GB download).

Methodology:

- Download Installer: Navigate to the official FSL download page (fsl.fmrib.ox.ac.uk/fsl/downloading) and download the latest `fslinstaller.py` file.

- Run Installation: Open a terminal and execute `python fslinstaller.py -d /usr/local/fsl`. The `-d` flag specifies the installation directory; `/usr/local/fsl` is a common choice and requires sudo privileges.

- Follow Prompts: The installer will guide you through the process, including accepting the license. Install the full suite.

- Set Environment Variables: The installer typically modifies your shell configuration file (e.g., `~/.bashrc` or `~/.zshrc`). Verify that it sets `FSLDIR` and sources `${FSLDIR}/etc/fslconf/fsl.sh`, then reload the file with `source ~/.bashrc`.

- Verify Installation: Open a new terminal and run `cat $FSLDIR/etc/fslversion`. The command should return the installed version number (e.g., 6.0.7).

Table 2: Post-Installation Verification Tests

| Test Command | Expected Output | Purpose |
|---|---|---|
| `cat $FSLDIR/etc/fslversion` | Version number, e.g., 6.0.7 | Confirms FSL is installed and `FSLDIR` is set. |
| `fsleyes &` | Launches the FSLeyes viewer GUI. | Verifies graphical component installation. |
| `bet2 -h` | Displays help for the BET brain extraction tool. | Tests a key utility used in preprocessing pipelines. |
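The same checks can be scripted. A Python sketch that reports `FSLDIR`, the contents of the version file, and whether a few tools are on `PATH`; the tool list is only an example, and in older releases `eddy` may be installed as `eddy_openmp` or `eddy_cuda`.

```python
"""Sketch: report FSLDIR, the installed FSL version and whether key tools are on PATH."""
import os
import shutil
from pathlib import Path

DEFAULT_TOOLS = ("bet2", "fsleyes", "eddy", "dtifit", "randomise", "tbss_1_preproc")


def check_fsl(tools=DEFAULT_TOOLS, env=None):
    """Return a dict with FSLDIR, the version string (or None) and tool paths."""
    env = os.environ if env is None else env
    fsldir = env.get("FSLDIR")
    version = None
    if fsldir:
        version_file = Path(fsldir, "etc", "fslversion")
        if version_file.is_file():
            version = version_file.read_text().strip()
    # shutil.which returns None when a tool cannot be found on the given PATH
    found = {tool: shutil.which(tool, path=env.get("PATH", "")) for tool in tools}
    return {"FSLDIR": fsldir, "version": version, "tools": found}


if __name__ == "__main__":
    report = check_fsl()
    print("FSLDIR:", report["FSLDIR"], "version:", report["version"])
    for tool, path in report["tools"].items():
        print(f"{tool:16s} {'OK' if path else 'MISSING'}")
```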

Visualized Workflows

Title: TBSS Research Prerequisite Workflow

Title: DTI Preprocessing Pipeline for TBSS

The Scientist's Toolkit: TBSS Prerequisites

Table 3: Essential Research Reagent Solutions for TBSS Prerequisites

| Item/Reagent | Function/Role in the Protocol |
|---|---|
| 3T MRI Scanner | High-field magnet for acquiring the primary DTI data with sufficient SNR. |
| Multi-channel Head Coil | Increases SNR and enables parallel imaging, which shortens diffusion-weighted acquisitions. |
| Diffusion-Weighted Pulse Sequence | The MRI pulse sequence (typically single-shot EPI) that sensitizes the signal to water molecule diffusion. |
| FSL Software Suite (v6.0.7+) | The comprehensive neuroimaging library containing all tools for TBSS analysis. |
| dcm2niix / MRIConvert | Software for converting proprietary scanner DICOM files to the NIfTI format used by FSL. |
| High-Performance Computing (HPC) Workstation | A computer with substantial RAM (≥16 GB), a multi-core CPU and, optionally, an NVIDIA GPU for the CUDA version of `eddy`. |
| Standardized Data Storage (BIDS) | File organization following the Brain Imaging Data Structure (BIDS) standard, supporting reproducibility and metadata management. |
| FSLeyes / MRIcroGL | Visualization software for inspecting raw data, intermediate outputs, and final statistical results. |

Within a thesis on the TBSS (Tract-Based Spatial Statistics) protocol from FSL (FMRIB Software Library), understanding the key outputs—the skeletonized FA map and the mean FA skeleton—is critical. These outputs form the backbone of the statistical analysis, enabling robust, voxel-wise cross-subject comparisons of white matter microstructure, typically measured via Diffusion Tensor Imaging (DTI) metrics like Fractional Anisotropy (FA). This document details the application, interpretation, and protocols for generating these core components, targeted at researchers and drug development professionals investigating neurological diseases and treatment effects.

Core Concepts and Data Presentation

Definitions of Key Outputs

- Mean FA Skeleton: A single, group-wise representation of the centers of all white matter tracts common to the study cohort. It represents the "population average" of major white matter pathways.

- Skeletonized FA Map: The result of projecting each individual subject's FA data onto the group mean FA skeleton. This aligns all subjects into a common spatial framework for statistical testing.

Quantitative Data from a Typical TBSS Pipeline

The following table summarizes key quantitative outputs and their interpretations from the standard TBSS workflow.

Table 1: Key Quantitative Outputs from TBSS Analysis

| Output Name | Description | Typical Data Range/Type | Interpretation in Research Context |
|---|---|---|---|
| Mean FA Skeleton | Thinned, voxel-wise representation of core white matter tracts. | `mean_FA_skeleton` holds mean FA values; after thresholding (e.g., at 0.2) it is binarized into `mean_FA_skeleton_mask` (0 or 1). | Serves as the spatial reference template for all subsequent voxelwise statistics. |
| Skeletonized FA Data (per subject) | Individual FA values projected onto the mean skeleton. | Continuous values (e.g., 0.2 to 0.8) at each skeleton voxel. | Enables direct comparison of white matter integrity across subjects at analogous tract locations. |
| Voxelwise TFCE Statistics | Statistical maps (t-statistics and corrected p-values) for group comparisons (e.g., Patient vs. Control). | 1 − p maps (e.g., `*_tfce_corrp_tstat1`), FWE-corrected using Threshold-Free Cluster Enhancement (TFCE); values above 0.95 correspond to p < 0.05. | Identifies specific skeleton voxels/tracts where white matter microstructure differs significantly between groups. |
| Mean FA Value (per skeleton or ROI) | Average FA across the entire skeleton or a specific Region of Interest (ROI). | Single scalar value per subject/group (e.g., Control FA mean = 0.45 ± 0.03). | Provides a global or regional measure of white matter integrity for correlation with clinical/demographic variables. |

Experimental Protocols

Protocol A: Generating the Mean FA Skeleton and Skeletonized Maps

This is the core protocol of TBSS (Part 1 of the standard FSL TBSS pipeline).

Objective: To create a group-invariant white matter skeleton and align all subjects' FA data to it. Materials: Pre-processed FA images for all subjects in NIfTI format. Software: FSL (version 6.0.7 or newer).

Methodology:

- Data Preparation: Place all subject FA images into a single directory (e.g., `FA/`). Ensure all images are in standard orientation (matching the MNI152 axes; `fslreorient2std` can be applied beforehand if needed).

- Non-linear Registration: Use the `tbss_1_preproc` script to erode the FA images slightly and zero the end slices. Then use `tbss_2_reg -T` to non-linearly register all FA images to the FMRIB58_FA standard-space template.

- Create Mean FA and Skeletonize: Run `tbss_3_postreg -S`. This brings all subjects into standard space, creates the mean of all aligned FA images (`mean_FA.nii.gz`), and thins it to create the mean FA skeleton (`mean_FA_skeleton.nii.gz`).

- Project Individual Data: Execute `tbss_4_prestats 0.2`. This thresholds the mean FA skeleton at FA > 0.2, keeping only voxels with high confidence of being central white matter, and projects each subject's aligned FA data onto it, creating the 4D file `all_FA_skeletonised.nii.gz` containing all skeletonized FA maps.

Protocol B: Voxelwise Statistical Analysis and Visualization

This protocol covers the statistical testing on the skeletonized data (Part 2 of TBSS).

Objective: To perform group comparisons and correlate FA with continuous variables.

Materials: The all_FA_skeletonised.nii.gz file and a design matrix (design.mat) and contrast matrix (design.con) defining the statistical model.

Software: FSL's Randomise tool.

Methodology:

- Design Setup: Create appropriate `design.mat` and `design.con` files using the `Glm` GUI or manually, specifying groups (e.g., patients, controls) or continuous regressors (e.g., age, drug dosage).

- Non-Parametric Inference: Run voxelwise cross-subject statistics using `randomise` with TFCE for multiple comparison correction. Example command: `randomise -i all_FA_skeletonised -o output -m mean_FA_skeleton_mask -d design.mat -t design.con -n 5000 --T2`.

- Result Visualization: Load the TFCE-corrected 1 − p maps (e.g., `output_tfce_corrp_tstat1.nii.gz`) and the mean FA skeleton into FSLeyes. Overlay the statistical maps onto the mean FA skeleton, displaying values above 0.95 (i.e., p < 0.05) to show significant regions. Results are often reported as clusters of significant voxels on the skeleton, which can be identified using atlases like the JHU ICBM-DTI-81 or the Harvard-Oxford Cortical Atlas.
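The logic of the family-wise error correction in `randomise` can be shown with a small two-group permutation test. This Python sketch is an illustration, not a reimplementation: it uses the difference in group means instead of a t-statistic, skips TFCE, and tests only the contrast group 1 > group 0. Each voxel's corrected p-value is the fraction of permutations whose maximum statistic across all voxels reaches the observed value.

```python
"""Sketch: two-group permutation test with max-statistic FWE correction."""
import random
import statistics


def mean_diff(values, labels):
    """Group-1 mean minus group-0 mean (a stand-in for a t-statistic)."""
    g1 = [v for v, g in zip(values, labels) if g == 1]
    g0 = [v for v, g in zip(values, labels) if g == 0]
    return statistics.fmean(g1) - statistics.fmean(g0)


def max_stat_fwe(voxels, labels, n_perm=1000, seed=0):
    """voxels: list of voxels, each a list of per-subject values.

    Returns one FWE-corrected p-value per voxel for group 1 > group 0.
    """
    rng = random.Random(seed)
    observed = [mean_diff(v, labels) for v in voxels]
    shuffled = list(labels)
    max_null = []
    for _ in range(n_perm):
        rng.shuffle(shuffled)
        # keep only the largest statistic across all voxels for this permutation
        max_null.append(max(mean_diff(v, shuffled) for v in voxels))
    # +1 counts the observed labelling as one of the permutations
    return [(1 + sum(m >= obs for m in max_null)) / (n_perm + 1) for obs in observed]
```

Comparing every voxel against the distribution of the maximum controls the chance of any false positive across the skeleton, which is what "FWE-corrected" means in the `*_corrp_*` outputs.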

Visualizations

Title: TBSS Protocol Key Steps and Outputs

From Mean FA to Skeletonized Outputs

Title: Creating Skeletonized FA Maps from Mean FA

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for TBSS Research

| Item / Solution | Function / Application in TBSS Research |
|---|---|
| FSL Software Suite (v6.0.7+) | Primary software package containing all TBSS utilities (tbss_1_preproc, randomise, etc.) and visualization tools (FSLeyes). |
| High-Quality DTI MRI Data | Raw data input. Requires appropriate acquisition parameters (e.g., ≥30 diffusion-encoding directions, b-value ~1000 s/mm²). |
| Preprocessing Pipeline (e.g., FSL's eddy, dtifit) | Corrects DTI data for distortions and motion artifacts and fits the tensor model to generate the FA maps used as TBSS input. |
| Standard Space Templates (FMRIB58_FA) | The target image for non-linear registration, ensuring all subjects are in a comparable anatomical space. |
| JHU ICBM-DTI-81 White Matter Labels Atlas | Used to anatomically label significant clusters found on the mean FA skeleton, identifying affected white matter tracts. |
| Statistical Design Matrices (.mat, .con files) | Text files defining the experimental design (group membership, covariates) and statistical contrasts for hypothesis testing with randomise. |
| Cluster Reporting Tools (`cluster`, `atlasquery`) | Command-line tools to extract the size, location, and peak statistics of significant clusters from randomise output and to label them anatomically. |
| High-Performance Computing (HPC) Cluster or Cloud Instance | Non-linear registration and permutation testing (randomise) are computationally intensive; HPC resources significantly reduce processing time. |

Application Note: Disease Biomarker Discovery in Multiple Sclerosis

Objective: To identify white matter integrity biomarkers in Multiple Sclerosis (MS) patients using TBSS for diagnostic and prognostic purposes.

Quantitative Data Summary:

Table 1: Common FA Reductions in MS vs. Controls

| Tract Name | Mean FA (Patients) | Mean FA (Controls) | p-value | Effect Size (Cohen's d) |
|---|---|---|---|---|
| Corpus Callosum (Body) | 0.45 ± 0.05 | 0.58 ± 0.04 | <0.001 | 2.88 |
| Corticospinal Tract | 0.48 ± 0.06 | 0.55 ± 0.03 | 0.003 | 1.50 |
| Superior Longitudinal Fasciculus | 0.42 ± 0.07 | 0.51 ± 0.05 | 0.001 | 1.48 |
| Inferior Longitudinal Fasciculus | 0.43 ± 0.06 | 0.50 ± 0.04 | 0.005 | 1.40 |
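Effect sizes of this kind can be computed from group means and SDs. This Python sketch assumes equal group sizes (the table does not report n), so the pooled SD is the root mean square of the two SDs; for the corpus callosum row it gives d ≈ 2.87, close to the tabulated 2.88, with small differences plausibly due to rounding or unequal group sizes.

```python
"""Sketch: Cohen's d from summary statistics, assuming equal group sizes."""
import math


def cohens_d(mean_a, sd_a, mean_b, sd_b):
    """(mean_a - mean_b) / pooled SD, with pooled SD = sqrt((sd_a^2 + sd_b^2) / 2)."""
    pooled_sd = math.sqrt((sd_a ** 2 + sd_b ** 2) / 2)
    return (mean_a - mean_b) / pooled_sd


# Corpus callosum (body): controls first, so a lower patient FA gives a positive d.
print(round(cohens_d(0.58, 0.04, 0.45, 0.05), 2))
```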

Detailed Protocol: TBSS for Cross-Sectional Biomarker Discovery

Data Acquisition:

- Acquire DTI data on a 3T MRI scanner using a single-shot echo-planar imaging sequence.

- Key parameters: TR/TE = 7500/85 ms, b-value = 1000 s/mm², 64 non-collinear diffusion directions, 2.5mm isotropic voxels.

Preprocessing (FSL):

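- Run `eddy_correct` for eddy current and head motion correction (a legacy tool; current FSL releases recommend `eddy`).

- Extract brain using `bet`. Fit diffusion tensors with `dtifit` to generate FA and MD maps for each subject, along with the eigenvalue maps from which AD (L1) and RD ((L2 + L3)/2) are derived.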

TBSS Pipeline Execution:

- Place all FA images in a single directory and run `tbss_1_preproc *.nii.gz`. This erodes the FA images slightly, zeroes the end slices, and moves them into the `FA/` subdirectory.

- Run `tbss_2_reg -T`. This registers all subjects to the 1x1x1 mm FMRIB58_FA template.

- Run `tbss_3_postreg -S`. This creates the mean FA image and the mean FA skeleton.

- Run `tbss_4_prestats 0.2`. This thresholds the skeleton at FA 0.2 and projects each subject's FA data onto it, producing `all_FA_skeletonised.nii.gz`.

Statistical Analysis:

- Run `randomise` for voxelwise cross-subject statistics. Example command for case-control: `randomise -i all_FA_skeletonised -o tbss_ms -m mean_FA_skeleton_mask -d design.mat -t design.con -n 5000 --T2`

Biomarker Extraction:

- Use `fslstats` to extract mean skeletonised FA values from significant clusters (p < 0.05, TFCE-corrected) for correlation with clinical scores (e.g., EDSS).
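In FSL, per-subject cluster means can be obtained with `fslstats -t all_FA_skeletonised -k cluster_mask -M`, where `cluster_mask` is a hypothetical binary mask made from the corrected p-map (e.g., `fslmaths tbss_ms_tfce_corrp_tstat1 -thr 0.95 -bin cluster_mask`). The Python sketch below mirrors that calculation on plain lists (mean of non-zero voxels inside the mask, as `-M` does) and then computes a Pearson correlation with a clinical score; the values are toy data.

```python
"""Sketch: per-subject mean FA inside a cluster mask, correlated with a clinical score."""
import math
import statistics


def cluster_means(skeletonised, mask):
    """skeletonised: one list of skeleton-voxel FA values per subject.
    mask: 0/1 flags marking the significant-cluster voxels.
    Like fslstats -M, only non-zero voxels enter each mean."""
    means = []
    for subject in skeletonised:
        vals = [fa for fa, m in zip(subject, mask) if m and fa != 0]
        if not vals:
            raise ValueError("no non-zero voxels inside the mask")
        means.append(statistics.fmean(vals))
    return means


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


fa_means = cluster_means([[0.5, 0.4, 0.9], [0.3, 0.2, 0.9], [0.4, 0.3, 0.9]], [1, 1, 0])
print(fa_means, pearson_r(fa_means, [2.0, 6.0, 4.0]))
```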

TBSS Biomarker Discovery Workflow

Application Note: Longitudinal Study of Neurodegeneration in Alzheimer's Disease

Objective: To track progressive white matter changes over a 24-month period in prodromal Alzheimer's disease.

Quantitative Data Summary:

Table 2: Annualized FA Change in Key Tracts

| Tract | Annual ΔFA (Patient) | Annual ΔFA (Control) | Group × Time p-value | Correlation with MMSE Δ (r) |
|---|---|---|---|---|
| Fornix | -0.032 ± 0.008 | -0.005 ± 0.006 | <0.001 | 0.71 |
| Parahippocampal Cingulum | -0.025 ± 0.007 | -0.004 ± 0.005 | 0.002 | 0.65 |
| Uncinate Fasciculus | -0.018 ± 0.006 | -0.003 ± 0.004 | 0.01 | 0.58 |
| Splenium of CC | -0.015 ± 0.005 | -0.002 ± 0.003 | 0.03 | 0.42 |
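An annualized ΔFA for one subject can be estimated as the least-squares slope of FA against time in years. A minimal Python sketch, assuming timepoints at 0, 1 and 2 years (T0, T1, T2); with only two timepoints it reduces to (FA_T2 − FA_T0) / interval. The FA values in the usage line are illustrative.

```python
"""Sketch: per-subject annualized FA change as an ordinary least-squares slope."""


def annual_slope(years, fa):
    """Slope of FA versus time (years), i.e. FA units per year."""
    n = len(years)
    if n != len(fa) or n < 2:
        raise ValueError("need matching time and FA lists with at least 2 points")
    mean_t, mean_fa = sum(years) / n, sum(fa) / n
    sxy = sum((t - mean_t) * (f - mean_fa) for t, f in zip(years, fa))
    sxx = sum((t - mean_t) ** 2 for t in years)
    return sxy / sxx


print(round(annual_slope([0, 1, 2], [0.50, 0.47, 0.44]), 3))  # about -0.03 per year
```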

Detailed Protocol: Longitudinal TBSS Analysis

Study Design:

- Cohort: 30 prodromal AD, 30 matched controls. Scans at Baseline (T0), 12 months (T1), 24 months (T2).

Baseline Processing:

- Process all T0 scans through standard TBSS (steps 1-3 above) to create a study-specific mean FA skeleton template.

Longitudinal Registration:

- For each subject, non-linearly register the follow-up FA images (T1, T2) to that subject's baseline (T0) using `fnirt`.

- Apply these warps to the FA images, then combine them with the baseline-to-template warp (e.g., with `convertwarp`) to bring all timepoints into template space.

Skeleton Projection & Data Preparation:

- Project all aligned FA images (T0, T1, T2 for all subjects) onto the study-specific skeleton.

- Create a 4D concatenated file of all skeletonised data for analysis.

Statistical Modeling:

- Use `randomise` with a general linear model (GLM) that accounts for the repeated measures, for example by defining each subject as an exchangeability block (`-e design.grp`). The design matrix includes factors for Group, Time, and the Group × Time interaction.

- Model: FA ~ Group + Time + Group × Time + Age + Gender + ε
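A Python sketch of the design rows for this model, plus a subject index per row that could be written to `design.grp` for `randomise -e`. The 0/1 coding of group and sex and the demeaning of age are assumptions of this sketch; whether permutations happen within blocks or as whole blocks (`--permuteBlocks`) depends on the effect being tested.

```python
"""Sketch: design rows for FA ~ Group + Time + Group x Time + Age + Sex,
plus a per-row subject index usable as exchangeability blocks."""


def longitudinal_design(records):
    """records: (subject_id, group, time_years, age, sex) per scan, in the same
    order as the volumes of the 4D skeletonised file. group and sex are coded
    0/1 (hypothetical coding); age is demeaned across all scans."""
    mean_age = sum(r[3] for r in records) / len(records)
    subjects = sorted({r[0] for r in records})
    block_of = {subj: i + 1 for i, subj in enumerate(subjects)}
    rows, blocks = [], []
    for subj, group, time, age, sex in records:
        # columns: intercept, group, time, group x time, demeaned age, sex
        rows.append([1, group, time, group * time, age - mean_age, sex])
        blocks.append(block_of[subj])
    return rows, blocks
```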

Longitudinal TBSS Analysis Pipeline

Application Note: Drug Trial Analysis for a Novel Remyelinating Therapy

Objective: To evaluate the efficacy of drug "X" in preserving white matter integrity in a 12-month Phase II randomized controlled trial (RCT) for MS.

Quantitative Data Summary:

Table 3: Treatment Effect on FA at Trial Endpoint

| Metric | Drug X Group (n=45) | Placebo Group (n=45) | Between-Group Difference | p-value |
|---|---|---|---|---|
| Primary Endpoint: ΔFA in Corpus Callosum | +0.02 ± 0.03 | -0.03 ± 0.04 | +0.05 | 0.012 |
| Secondary Endpoint: ΔFA in Lesion Perimeter | +0.03 ± 0.05 | -0.05 ± 0.06 | +0.08 | 0.008 |
| Whole-Brain Skeleton Mean ΔFA | +0.01 ± 0.02 | -0.01 ± 0.02 | +0.02 | 0.045 |

Detailed Protocol: TBSS in a Randomized Controlled Trial

Trial Design:

- Double-blind, placebo-controlled, parallel-group. 1:1 randomization. DTI at Baseline and Month 12.

Blinded Processing:

- All DTI data are anonymized and randomized by subject ID. The entire TBSS pipeline (preprocessing, registration, skeletonisation) is run automatically in batch mode to maintain blinding.

Primary Analysis (Per Protocol):

- After unblinding, create a 4D file of skeletonised FA at Month 12, including baseline FA as a voxelwise covariate.

- Design matrix: FA_M12 ~ Group + FA_Baseline + Age + Sex

- Run `randomise` with 10,000 permutations and Threshold-Free Cluster Enhancement (TFCE). The primary tract of interest (corpus callosum) is defined using the JHU ICBM-DTI-81 white-matter labels atlas.
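At a single skeleton voxel, the primary model is an ordinary least-squares ANCOVA. A NumPy sketch that returns the Group coefficient, assuming drug = 1 / placebo = 0 coding; in `randomise` the baseline FA image would instead enter as a voxelwise EV (`--vxl` / `--vxf`), and inference would come from permutations rather than from this single fit.

```python
"""Sketch: single-voxel ANCOVA for FA_M12 ~ Group + FA_Baseline + Age + Sex."""
import numpy as np


def ancova_group_effect(fa_m12, group, fa_base, age, sex):
    """Return the OLS coefficient for Group (drug = 1, placebo = 0)."""
    age = np.asarray(age, dtype=float)
    X = np.column_stack([
        np.ones(len(fa_m12)),   # intercept
        group,                  # treatment indicator
        fa_base,                # baseline FA at this voxel
        age - age.mean(),       # demeaned age
        sex,                    # 0/1 coding
    ])
    beta, *_ = np.linalg.lstsq(X, np.asarray(fa_m12, dtype=float), rcond=None)
    return float(beta[1])
```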

Exploratory & Safety Analysis:

- Repeat analysis for MD, RD, AD maps to characterize biophysical changes (e.g., an RD reduction may be consistent with remyelination, although DTI metrics are not specific to myelin).

- Perform whole-brain voxelwise correlation between ΔFA and clinical outcome measures (e.g., 9-HPT, T25FW).

TBSS in a Randomized Controlled Trial

The Scientist's Toolkit: Key Research Reagent Solutions for TBSS Studies

Table 4: Essential Materials and Tools for TBSS Protocol Research

| Item / Solution | Function / Role | Example / Note |
|---|---|---|
| FSL (FMRIB Software Library) | Primary software suite for TBSS and diffusion MRI analysis. | Version 6.0.4+. Contains the TBSS scripts, randomise, dtifit, eddy. |
| High-Quality DTI Sequence | Acquires diffusion-weighted images for tensor estimation. | Optimized for high angular resolution (e.g., 64+ directions, b=1000-3000 s/mm²). |
| T1-Weighted Structural Scan | Used for complementary registration and tissue segmentation. | MPRAGE or similar, 1 mm isotropic. Can be used in combination with TBSS. |
| JHU-ICBM White Matter Atlas | White-matter label atlas for anatomical labeling of significant clusters. | Integrated in FSL as JHU-ICBM-labels-1mm. |
| TFCE (Threshold-Free Cluster Enhancement) | Statistical enhancement method sensitive to both spatial extent and peak magnitude. | Enabled in randomise with `-T` (or `--T2` for skeletonized data). Avoids choosing an arbitrary cluster-forming threshold. |
| Study-Specific Template | A registration target derived from the study cohort, improving alignment. | Chosen with `tbss_2_reg -n` (most representative subject as target); `tbss_3_postreg -S` then derives the mean FA and skeleton from the study subjects. |
| Longitudinal Registration Tool (fnirt) | Enables accurate within-subject alignment across timepoints. | Critical for minimizing registration confounds in longitudinal TBSS. |
| High-Performance Computing (HPC) Cluster | Provides necessary computational power for permutation testing (randomise). | 10,000 permutations on whole-brain data can require significant CPU hours. |

Step-by-Step TBSS Protocol: A Complete Walkthrough from Raw Data to Statistical Results

This protocol details the critical preprocessing pipeline for Diffusion Tensor Imaging (DTI) data within a comprehensive TBSS (Tract-Based Spatial Statistics) study. The stages of eddy current correction, brain extraction, and tensor fitting (DTIFIT) form the foundational data preparation steps required for robust, reproducible analysis in neuroimaging research and pharmaceutical development.

In the broader thesis on optimizing the FSL TBSS protocol for multi-site drug trial analysis, the integrity of the initial preprocessing stage is paramount. This stage mitigates technical artifacts, isolates relevant neuroanatomy, and derives quantitative diffusion metrics, directly influencing the sensitivity of subsequent voxel-wise statistical comparisons to detect treatment effects on white matter microstructure.

Core Protocols

Eddy Current Correction

Purpose: Corrects for distortions and subject movement during the diffusion-weighted image (DWI) acquisition.

Tool: FSL eddy (or eddy_correct for legacy versions).

Detailed Protocol:

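- Input Preparation: Ensure all DWI volumes (.nii or .nii.gz) and the corresponding bvec and bval files are in the same directory.

- Reference Volume: For eddy_correct, select the first volume (b=0 s/mm²) as the reference (volume index 0). eddy does not use a single reference volume. It models all volumes jointly and additionally requires a brain mask (for example from a preliminary bet run on a b=0 volume), an acquisition parameter file, and an index file.

- Command Execution (for eddy): eddy --imain=<dwi> --mask=<b0_brain_mask> --acqp=<acqparams.txt> --index=<index.txt> --bvecs=<bvecs> --bvals=<bvals> --out=<eddy_corrected>

- Output: Motion- and distortion-corrected DWI data, together with rotated gradient directions (<eddy_corrected>.eddy_rotated_bvecs) for use in tensor fitting.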
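The acquisition parameter and index files can be generated programmatically. The sketch below makes the following assumptions, all of which are hypothetical and must be replaced with values for your own acquisition: a single phase-encoding direction (A→P, written as `0 -1 0`), a placeholder total readout time of 0.05 s (take the real value from the scanner protocol or the JSON sidecar), and placeholder file names. It writes `acqparams.txt` and `index.txt` and assembles the eddy argument list without running it. Depending on your FSL version, the executable may be `eddy_openmp` or `eddy_cuda` instead of `eddy`.

```python
"""Sketch: prepare acqparams.txt / index.txt and an eddy command line.

Assumptions (hypothetical, adjust to your acquisition): one phase-encoding
direction for all volumes, a placeholder readout time, placeholder names.
"""
from pathlib import Path
from typing import List, Sequence


def write_eddy_inputs(bvals_file: Path, out_dir: Path,
                      pe_vector: Sequence[int] = (0, -1, 0),
                      readout_time: float = 0.05) -> int:
    """Write acqparams.txt (one row) and index.txt (one '1' per volume).

    Returns the number of volumes, inferred from the bvals file.
    """
    n_vols = len(bvals_file.read_text().split())
    acq_row = " ".join(str(v) for v in pe_vector) + f" {readout_time}"
    (out_dir / "acqparams.txt").write_text(acq_row + "\n")
    # Every volume refers to row 1 of acqparams.txt (single PE direction).
    (out_dir / "index.txt").write_text(" ".join(["1"] * n_vols) + "\n")
    return n_vols


def eddy_command(dwi: str, mask: str, bvecs: str, bvals: str,
                 out: str, exe: str = "eddy") -> List[str]:
    """Assemble (but do not run) an eddy call; pass it to subprocess.run."""
    return [exe, f"--imain={dwi}", f"--mask={mask}",
            "--acqp=acqparams.txt", "--index=index.txt",
            f"--bvecs={bvecs}", f"--bvals={bvals}", f"--out={out}"]
```

Returning an argument list rather than a shell string avoids quoting problems when the command is later passed to `subprocess.run(cmd, check=True)`.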

Brain Extraction (Skull Stripping)

Purpose: Removes non-brain tissue from the anatomical reference to create a brain mask.

Tool: FSL bet2 (Brain Extraction Tool).

Detailed Protocol:

- Input: Use the averaged b=0 image (post-eddy correction) for optimal contrast.

- Command Execution: bet2 <b0_mean> <b0_brain> -f 0.3 -m (the -m option also writes the binary brain mask).

- Parameter Optimization: The -f value (fractional intensity threshold; the program default is 0.5, and 0.3 is a common starting point for b=0 images) may require adjustment per dataset. A lower value yields a larger brain outline.

- Output: Extracted brain image (*_brain.nii.gz) and binary brain mask (*_brain_mask.nii.gz). Visually inspect results for accuracy.
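The averaged b=0 input can be produced by locating the b=0 volumes in the bvals file and averaging them. The following sketch assumes that b-values of 50 s/mm² or less count as b=0. This cut-off is common but arbitrary. The sketch also assumes `fslselectvols` with `--vols` (a comma-separated list of volume indices) and `-m` (write the mean). Check `fslselectvols` usage on your installation before relying on it. The file names are placeholders.

```python
"""Sketch: find b=0 volumes, average them, and skull-strip the mean b0.

Assumptions: b <= 50 s/mm^2 counts as b=0; output names are placeholders.
Commands are returned, not executed.
"""
from typing import List


def b0_indices(bvals_text: str, b0_max: float = 50.0) -> List[int]:
    """Return 0-based indices of volumes whose b-value is <= b0_max."""
    return [i for i, b in enumerate(bvals_text.split()) if float(b) <= b0_max]


def mean_b0_and_bet(dwi: str, bvals_text: str, f: float = 0.3) -> List[List[str]]:
    """Assemble the two FSL calls for a mean b0 image and bet2."""
    vols = ",".join(str(i) for i in b0_indices(bvals_text))
    return [
        # -m: write the mean of the selected volumes instead of a 4D subset.
        ["fslselectvols", "-i", dwi, "-o", "b0_mean", f"--vols={vols}", "-m"],
        # -m: bet2 also writes the binary mask b0_brain_mask.
        ["bet2", "b0_mean", "b0_brain", "-f", str(f), "-m"],
    ]
```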

DTIFIT - Diffusion Tensor Model Fitting

Purpose: Fits a diffusion tensor model at each voxel to derive scalar maps (FA, MD, AD, RD).

Tool: FSL dtifit.

Detailed Protocol:

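- Inputs: Eddy-corrected DWI data, the corresponding corrected bvecs, the bvals, and the brain mask.

- Command Execution: dtifit -k <eddy_corrected> -o <subject> -m <b0_brain_mask> -r <eddy_corrected>.eddy_rotated_bvecs -b <bvals>

- Output: Voxel-wise maps, each named with the -o prefix, of:

- Fractional Anisotropy (FA): <subject>_FA

- Mean Diffusivity (MD): <subject>_MD

- Axial Diffusivity (AD): the first eigenvalue map, <subject>_L1

- Radial Diffusivity (RD): not written by dtifit; compute it as the mean of the second and third eigenvalue maps, e.g. fslmaths <subject>_L2 -add <subject>_L3 -div 2 <subject>_RD

- The three eigenvectors (V1, V2, V3)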
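The scalar maps follow from the three tensor eigenvalues using the standard definitions. The sketch below computes all four for a single voxel. The example eigenvalues (1.1, 0.5 and 0.5 × 10⁻³ mm²/s) are chosen to match the typical values listed in the table that follows, and they give MD = 0.7 × 10⁻³ mm²/s and FA ≈ 0.46. dtifit computes FA and MD itself. AD and RD are derived from its L1, L2 and L3 outputs as shown.

```python
"""Sketch: DTI scalar metrics from the three tensor eigenvalues.

Standard definitions; AD = L1 and RD = (L2 + L3) / 2 are the quantities
derived from dtifit's eigenvalue maps.
"""
import math
from typing import Dict


def dti_metrics(l1: float, l2: float, l3: float) -> Dict[str, float]:
    """Return FA, MD, AD and RD for eigenvalues l1 >= l2 >= l3 (mm^2/s)."""
    md = (l1 + l2 + l3) / 3.0
    num = (l1 - md) ** 2 + (l2 - md) ** 2 + (l3 - md) ** 2
    den = l1 ** 2 + l2 ** 2 + l3 ** 2
    fa = math.sqrt(1.5 * num / den) if den > 0 else 0.0
    return {"FA": fa, "MD": md, "AD": l1, "RD": (l2 + l3) / 2.0}


print(dti_metrics(1.1e-3, 0.5e-3, 0.5e-3))
```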

Table 1: Common DTI Scalar Metrics Derived from DTIFIT

| Metric | Full Name | Biological Interpretation | Typical Range in Normal WM |

|---|---|---|---|

| FA | Fractional Anisotropy | Degree of directional water diffusion; white matter integrity/organization. | 0.2 - 0.8 |

| MD | Mean Diffusivity | Overall magnitude of water diffusion; cellular density/edema. | ~0.7 x 10⁻³ mm²/s |

| AD | Axial Diffusivity | Diffusion parallel to axons; axonal integrity. | ~1.1 x 10⁻³ mm²/s |

| RD | Radial Diffusivity | Diffusion perpendicular to axons; myelination status. | ~0.5 x 10⁻³ mm²/s |

Table 2: Recommended bet2 Fractional Intensity Thresholds by Tissue Contrast

| Input Image Type | Suggested -f value | Rationale |

|---|---|---|

| High-contrast T1 | 0.4 - 0.5 | Clear CSF/brain boundary. |

| b=0 DWI (Recommended) | 0.3 - 0.4 | Moderate contrast, preserves peripheral WM. |

| Low-contrast T2 | 0.2 - 0.3 | To avoid excessive brain removal. |

Workflow Visualization

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for DTI Preprocessing

| Item | Function/Description | Example/Note |

|---|---|---|

| FSL (FMRIB Software Library) | Comprehensive neuroimaging analysis suite containing all primary tools (eddy, bet, dtifit). | Version 6.0.4+. Required core software. |

| High-Quality DWI Dataset | Raw input data. Must include NIfTI images, b-values, and b-vectors. | A minimum of ~30 gradient directions is recommended for robust tensor fitting. |

| Acquisition Parameter File | Text file specifying readout time and phase-encoding direction for eddy. | Critical for the modern eddy tool to model distortions. |

| Computing Resources | Adequate CPU (multi-core) and RAM (>16GB). | eddy is computationally intensive and benefits from GPU acceleration. |

| Visualization Software | Tool for quality control inspection of outputs (brain mask, FA maps). | FSLeyes, MRIcroGL, or similar. |

| Bash/Shell Environment | Command-line interface for executing FSL tools and scripting pipelines. | Linux/macOS terminal or Windows Subsystem for Linux (WSL). |

This document details the second stage of the Tract-Based Spatial Statistics (TBSS) pipeline, as implemented in FSL (FMRIB Software Library, v6.0.7). Framed within broader thesis research on optimizing diffusion MRI analysis for neurodegenerative disease trials, this stage transforms pre-processed fractional anisotropy (FA) images into a common analytic space for voxelwise cross-subject statistics. Its robustness is critical for detecting subtle white matter changes in longitudinal therapeutic studies.

Core Workflow & Methodology

The Stage 2 pipeline automates three sequential processes: nonlinear registration to a standard template, creation of a mean FA skeleton representing white matter tracts, and projection of individual FA data onto this skeleton.

Diagram Title: Stage 2 TBSS Core Three-Step Workflow
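For scripting, the complete sequence of TBSS calls can be kept in one place. The sketch below assumes that all commands are run from the TBSS working directory, which initially holds the subjects' FA images. It uses the options recommended in this guide (`-T`, `-S`, threshold 0.2). The function names are hypothetical helpers, and the sketch only prints the commands unless `dry_run=False`.

```python
"""Sketch: the four TBSS scripts in pipeline order (dry run by default).

Assumption: the current directory is the TBSS working directory containing
the subjects' FA images.
"""
import subprocess
from typing import List, Sequence


def tbss_commands(fa_images: Sequence[str],
                  target_args: Sequence[str] = ("-T",),
                  skeleton_flag: str = "-S",
                  threshold: float = 0.2) -> List[List[str]]:
    """Return the four TBSS calls in pipeline order."""
    return [
        ["tbss_1_preproc", *fa_images],       # erode slightly, zero end slices, move to FA/
        ["tbss_2_reg", *target_args],         # -T: nonlinear registration to FMRIB58_FA
        ["tbss_3_postreg", skeleton_flag],    # -S: study-derived mean FA and skeleton
        ["tbss_4_prestats", str(threshold)],  # threshold skeleton, project all FA
    ]


def run(commands: List[List[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)
```

To use a user-supplied target instead of FMRIB58_FA, pass `target_args=("-t", "<target_image>")`. To select the most representative subject, pass `target_args=("-n",)`.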

Detailed Protocol: Nonlinear Registration

Objective: Precisely align all subjects' FA images to the standard FMRIB58_FA template in 1x1x1mm MNI152 space.

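Command: exactly one of tbss_2_reg -T (recommended: register to the FMRIB58_FA template), tbss_2_reg -t <target_image> (use a specified target), or tbss_2_reg -n (find and use the most representative subject).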

Detailed Steps:

- Initialization: The script uses the FA images prepared by tbss_1_preproc (slightly eroded, with end slices zeroed) in the FA directory.

- Target Selection: With -T (the recommended option), every subject is registered directly to the standard FMRIB58_FA template. With -n, every subject is registered to every other subject so that the most representative subject can be chosen as a study-specific target, which is then affine-aligned to MNI152 space. With -t, a user-supplied image is used as the target.

- Nonlinear Warp Calculation: Each subject's FA image is registered to the target using FSL's fnirt tool with the FA_2_FMRIB58_1mm.cnf configuration, which is optimized for FA images:

- Warp resolution: 10mm (the spacing of the B-spline coefficients of the warp field)

- Regularisation (lambda) and sub-sampling schedules: as specified in the configuration file

- Application: The resulting warp fields (*_to_target_warp.nii.gz) are written to the FA directory and are applied in tbss_3_postreg, which merges the warped images into stats/all_FA.nii.gz.

- Quality Control: Visually inspect the registered images (fsleyes stats/all_FA.nii.gz) to ensure alignment accuracy, particularly in deep white matter structures.

Detailed Protocol: Creation of Mean FA & Skeleton

Objective: Generate a group mean FA image and derive its "skeleton" representing centers of all white matter tracts common to the group.

Command: tbss_3_postreg -S (recommended: derive the mean FA and skeleton from the study's own subjects; -T uses the FMRIB58_FA mean and skeleton instead).

Detailed Steps:

- Mean FA Calculation: All nonlinearly registered FA images are merged into stats/all_FA.nii.gz and averaged to create mean_FA.nii.gz, within a mask of voxels covered by all subjects (mean_FA_mask).

- Skeletonization:

a. Edge effects have already been reduced by the slight erosion applied in tbss_1_preproc.

b. A thinning algorithm is applied to identify the medial lines (skeletons) of all white matter tracts in the mean FA image, creating mean_FA_skeleton.nii.gz.

- Thresholding: In the next step (tbss_4_prestats), the skeleton is thresholded, typically at a mean FA value of 0.2, to exclude peripheral gray matter and cerebrospinal fluid. This creates the final binary skeleton mask, mean_FA_skeleton_mask.nii.gz.

Detailed Protocol: Projection onto Skeleton

Objective: Map each subject's FA data onto the common skeleton for subsequent voxelwise statistics, resolving residual misalignment at tract centers.

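Command: tbss_4_prestats <threshold_value> (0.2 is the typical choice).

Detailed Steps:

- Data Preparation: The script takes the 4D file of all warped FA images (all_FA.nii.gz, created by tbss_3_postreg), thresholds the mean FA skeleton to create mean_FA_skeleton_mask.nii.gz, and computes a distance map (mean_FA_skeleton_mask_dst.nii.gz) that limits how far the search may extend from each skeleton voxel.

- Search Vector Determination: For each voxel in the skeleton, the local direction perpendicular to the tract is calculated.

- Maximum Value Projection: For each skeleton point, the script searches along this perpendicular direction in the individual's FA image and assigns the highest FA value found to that skeleton point. The search extends no further than roughly halfway to the nearest neighbouring tract centre, as defined by the distance map. This compensates for residual misalignment without mixing in values from adjacent tracts.

- Output: A 4D image (all_FA_skeletonised.nii.gz) is created in which the fourth dimension represents subjects and only the skeleton-projected FA values are stored. Non-skeleton voxels are set to zero.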
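The projection idea can be illustrated in one dimension. This toy sketch is not tbss_skeleton's implementation, and it ignores its additional search-rule mask. It samples a subject's FA along the line perpendicular to the tract through one skeleton voxel and keeps the maximum within an allowed number of steps. In TBSS, that limit comes from the distance map.

```python
"""Toy 1D sketch of TBSS max-FA projection (not tbss_skeleton itself).

`profile` holds a subject's FA sampled along the perpendicular through one
skeleton voxel; `centre` is the skeleton voxel's position on that line.
"""
from typing import Sequence


def project_max(profile: Sequence[float], centre: int, max_steps: int) -> float:
    """Maximum FA within `max_steps` samples either side of `centre`.

    `max_steps` stands in for the distance-map limit (roughly halfway to the
    nearest neighbouring tract).
    """
    lo = max(0, centre - max_steps)
    hi = min(len(profile), centre + max_steps + 1)
    return max(profile[lo:hi])


# The subject's tract centre (0.60) sits one sample off the group skeleton.
print(project_max([0.10, 0.30, 0.60, 0.50, 0.20], centre=1, max_steps=1))
```

With a search extent of zero, the function would return the misaligned value 0.30. Allowing one step recovers the subject's own tract-centre value.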

Key Parameters & Quantitative Benchmarks

Table 1: Critical Parameters in TBSS Stage 2 Pipeline

| Step | Key Parameter | Default Value | Thesis Rationale / Impact |

|---|---|---|---|

| Registration (FNIRT) | Warp Resolution | 10mm | Coarser resolution increases speed; finer resolution may capture subtle deformations but risks overfitting. |

| Skeletonization | Mean FA Threshold | 0.2 | Primary threshold. Higher values (e.g., 0.3) yield more conservative skeletons of only major tracts. |

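| Projection | Search Distance | Set by the skeleton distance map (no fixed radius) | Compensates for residual misalignment. The search stops roughly halfway to the nearest neighbouring tract, which avoids blurring signal from adjacent tracts into the projection. |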

Table 2: Typical Output Metrics for a Cohort (N=50, Healthy Adults)

| Metric | Mean Value | Standard Deviation | Interpretation |

|---|---|---|---|

| Mean FA of Final Skeleton | 0.48 | 0.02 | Indicates overall white matter integrity of the cohort. |

| Skeleton Volume (voxels) | ~150,000 | ~5,000 | Represents total white matter tract coverage. Stable across healthy cohorts. |

| Median Cross-subject FA Variance on Skeleton (pre-projection) | 0.0035 | - | Baseline alignment quality. Lower variance indicates better registration. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Software for TBSS Stage 2 Implementation

| Item / Reagent | Vendor / Source | Function in Protocol |

|---|---|---|

| FSL Software Suite (v6.0.7+) | FMRIB, University of Oxford | Provides all core executables (tbss_2, fnirt, tbss_3, tbss_4) for the pipeline. |

| FMRIB58_FA Template | Included in FSL distribution | Standard 1mm FA brain template in MNI space used as the registration target. |

| High-Performance Computing Cluster | Institutional IT/Slurm/SGE | Essential for computationally intensive nonlinear registration of large cohorts (>100 subjects). |

| FSLeyes | FMRIB, University of Oxford | Primary tool for visual QC of registration accuracy, mean FA, and skeleton overlay. |

| Custom QC Scripts (Python/Bash) | In-house development | Automate slice-wise image sampling and report generation to flag outlier registrations. |

| Diffusion MRI Data | Study-specific acquisition | Pre-processed FA images from Stage 1 (tbss_1) are the primary input. |

This protocol details the design matrix specification and statistical inference stage within a TBSS pipeline using FSL. Following skeletonization and projection of fractional anisotropy (FA) data, this stage employs a General Linear Model (GLM) framework with non-parametric permutation testing via randomise to robustly identify white matter differences associated with clinical or experimental variables.

Core Principles of Non-Parametric Inference in TBSS

Traditional parametric tests (e.g., Gaussian-based) often fail to meet distributional assumptions in voxel-based neuroimaging. randomise uses permutation testing, which makes fewer assumptions, to build the null distribution of the test statistic directly from the data, providing robust control for family-wise error rates (FWER) in the presence of smooth, non-Gaussian data.

Prerequisites & Input Data

- Input Data: 4D NIfTI file containing all subjects' skeletonised FA data (all_FA_skeletonised.nii.gz, created by tbss_4_prestats).

- Design Matrix and Contrasts: Text files defining group membership and covariates (design.mat) and the contrasts to test (design.con).

- Setup: Completed FSL installation (version 6.0.7 or later recommended) and successful execution of TBSS stages 1 to 4.

Protocol: Constructing the Design Matrix & Contrasts

Defining the Model

The GLM is defined as: Y = Xβ + ε, where Y is the matrix of FA skeletons, X is the design matrix, β represents the model parameters, and ε is the error term.

Step-by-Step Design Creation Using Glm

- Organize Subject Order: Ensure the subject order in the 4D skeleton file matches the order of rows in your design. TBSS concatenates subjects in alphabetical order of their FA file names.

- Create Design Matrix (design.mat):

- Use the Glm GUI (launched with Glm) or, for a two-group (e.g., Patient vs. Control) comparison with 10 subjects per group, the command-line helper design_ttest2 design 10 10, which writes both design.mat and design.con.

- For a more complex design (e.g., one-way ANOVA with 3 groups, or including age as a covariate), use the Glm GUI or construct the matrix manually.

- Create Contrast File (design.con):

- Contrasts specify the linear combinations of parameters to test.

- For the two-group t-test example, a contrast of [1 -1] tests Patient > Control, assuming the first column codes patients.

- Example for an ANCOVA with Group and Age: with columns [Patient, Control, Age (demeaned)], the contrast [1 -1 0] tests Patient > Control after adjusting for age.
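Design and contrast files are plain text in FSL's VEST format, so you can write them directly. The sketch below relies on several assumptions. It assumes a minimal header (`/NumWaves`, `/NumPoints` or `/NumContrasts`, `/Matrix`), as produced by `Text2Vest`. It also assumes that rows follow the alphabetical subject order of `all_FA_skeletonised` and that group labels are `'P'` and `'C'`. The helper names and the example ages are hypothetical.

```python
"""Sketch: design.mat / design.con in FSL's VEST text format.

Assumptions: minimal VEST header; rows in the same (alphabetical) subject
order as all_FA_skeletonised; 'P' = patient, 'C' = control (hypothetical).
"""
from typing import List, Sequence


def vest(matrix: Sequence[Sequence[float]], kind: str = "mat") -> str:
    """Serialise a matrix as a VEST file body ('mat' or 'con')."""
    count_key = {"mat": "/NumPoints", "con": "/NumContrasts"}[kind]
    lines = [f"/NumWaves {len(matrix[0])}", f"{count_key} {len(matrix)}", "/Matrix"]
    lines += [" ".join(f"{v:g}" for v in row) for row in matrix]
    return "\n".join(lines) + "\n"


def two_group_design(n1: int, n2: int) -> List[List[float]]:
    """Indicator columns: group 1 (e.g. patients) first, then group 2."""
    return [[1, 0] for _ in range(n1)] + [[0, 1] for _ in range(n2)]


def ancova_design(groups: Sequence[str], ages: Sequence[float]) -> List[List[float]]:
    """Group indicators plus an age covariate demeaned across all subjects."""
    mean_age = sum(ages) / len(ages)
    return [[1, 0, a - mean_age] if g == "P" else [0, 1, a - mean_age]
            for g, a in zip(groups, ages)]


TWO_GROUP_CONTRASTS = [[1, -1], [-1, 1]]        # P > C, C > P
ANCOVA_CONTRASTS = [[1, -1, 0], [-1, 1, 0]]     # age-adjusted group effects

print(vest(two_group_design(10, 10)), end="")
```

To produce the files, write the returned strings to `design.mat` and `design.con`. Demeaning the age column keeps the group columns interpretable as group means at the average age.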

Table 1: Common GLM Design Specifications for TBSS

| Design Type | design.mat Structure | Example design.con | randomise Invocation |

|---|---|---|---|

| Two-Group T-test | Two indicator columns (column 1 = 1 for group 1 subjects, column 2 = 1 for group 2 subjects) | [1 -1] for Grp1>Grp2 | randomise -i all_FA_skeletonised -o tbss -m mean_FA_skeleton_mask -d design.mat -t design.con -n 5000 -T |

| One-Way ANOVA (3 Groups) | Three columns (indicator variables) | [1 -1 0] for Grp1>Grp2; [1 0 -1] for Grp1>Grp3 | Add -f design.fts for F-test |

| ANCOVA (Group + Continuous Covariate) | Col1: Group A (1/0), Col2: Group B (0/1), Col3: Covariate (e.g., age) | [1 -1 0] for Group effect | Ensure covariate is demeaned. |

Protocol: Running randomise for Inference

Basic Command
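The basic call, using the default TBSS file names in the stats directory, can be assembled as follows. This is a sketch: the output prefix `tbss` and the helper names are placeholders. The FSL TBSS documentation also shows `--T2` (TFCE with 2D-optimised parameters, intended for skeletonised data) as an alternative to `-T`, and the `tfce` argument can be switched accordingly. The helper also reports the smallest attainable permutation p-value, 1/n.

```python
"""Sketch: a randomise call for TBSS data (returned, not executed).

Assumptions: run inside stats/; default TBSS file names; placeholder prefix.
"""
from typing import List, Optional


def randomise_command(metric: str = "FA", out: str = "tbss", n_perm: int = 5000,
                      tfce: str = "-T", fts: Optional[str] = None,
                      grp: Optional[str] = None) -> List[str]:
    cmd = ["randomise",
           "-i", f"all_{metric}_skeletonised",
           "-o", out,
           "-m", "mean_FA_skeleton_mask",
           "-d", "design.mat", "-t", "design.con",
           "-n", str(n_perm),
           tfce]                     # TFCE inference (-T or --T2)
    if fts:
        cmd += ["-f", fts]           # F-contrasts (design.fts)
    if grp:
        cmd += ["-e", grp]           # exchangeability blocks (design.grp)
    return cmd


def min_p(n_perm: int) -> float:
    """Smallest p-value attainable with n_perm permutations."""
    return 1.0 / n_perm


print(" ".join(randomise_command()))
```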

Key Options Explained

- -i: Input 4D skeletonized FA data.

- -o: Output filename prefix.

- -m: Mask (typically mean_FA_skeleton_mask.nii.gz).

- -d: Design matrix file.

- -t: Contrast file.

- -n: Number of permutations (e.g., 5000 or 10000).

- -T: Use Threshold-Free Cluster Enhancement (TFCE), generally recommended over cluster-based thresholding.

- -D: Demean the data temporarily before model fitting (use when the design does not model the mean).

- --glm_output: Also output raw GLM results (parameter estimates and related images) for the unpermuted data.

Advanced: Including Covariates and Exchangeability Blocks

For paired designs or more complex blocking, an exchangeability block file (design.grp) is required to restrict permutations appropriately.
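For a paired design (e.g., baseline vs. follow-up scans of the same subjects), the following sketch shows one common layout. It assumes that volume i of the 4D file belongs to `subjects[i]` at `timepoints[i]`. The design has one column coding the condition (+1 baseline, -1 follow-up) plus one indicator column per subject. The exchangeability file gives each subject its own block, so that `-e design.grp` restricts permutations to within subjects. Both matrices would then be written as VEST files (`design.grp` has a single column). The helper name is hypothetical.

```python
"""Sketch: paired design matrix plus exchangeability blocks (design.grp).

Assumption: volume i in the 4D file is subjects[i] at timepoints[i].
"""
from typing import List, Sequence, Tuple


def paired_design(subjects: Sequence[str],
                  timepoints: Sequence[int]) -> Tuple[List[List[int]], List[int]]:
    order = list(dict.fromkeys(subjects))        # unique IDs, first-seen order
    mat: List[List[int]] = []
    grp: List[int] = []
    for s, t in zip(subjects, timepoints):
        condition = 1 if t == 1 else -1          # +1 baseline, -1 follow-up
        subject_evs = [1 if s == u else 0 for u in order]
        mat.append([condition] + subject_evs)
        grp.append(order.index(s) + 1)           # one block per subject
    return mat, grp


mat, grp = paired_design(["a", "a", "b", "b"], [1, 2, 1, 2])
print(mat, grp)
```

With this layout, the contrast [1 0 ... 0] tests baseline > follow-up, and the subject columns absorb between-subject differences.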

Output Interpretation

- TFCE-corrected p-value images: output_prefix_tfce_corrp_tstat1.nii.gz. Values are 1-p, so voxels with value > 0.95 are significant at p < 0.05 (FWER-corrected).

- Raw test statistic images: output_prefix_tstat1.nii.gz.

- Threshold the corrected p-images, e.g. fslmaths output_prefix_tfce_corrp_tstat1 -thr 0.95 -bin <sig_mask> (-thr keeps values of 0.95 and above).

Overlay results on the mean FA skeleton and target template for visualization.
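The 1-p convention is a frequent source of errors, so it is worth spelling out. In this minimal sketch, plain lists stand in for voxel arrays. A corrected p below α corresponds to a stored value above 1 - α.

```python
"""Sketch: significance mask from randomise's corrected 1-p values."""
from typing import List, Sequence


def significant(one_minus_p: Sequence[float], threshold: float = 0.95) -> List[bool]:
    """True where the stored 1-p exceeds `threshold` (p < 1 - threshold)."""
    return [v > threshold for v in one_minus_p]


print(significant([0.50, 0.951, 0.99, 0.95]))
```

A value of exactly 0.95 corresponds to p = 0.05 and is not counted as significant here, whereas `fslmaths -thr 0.95` would keep it.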

Table 2: Key randomise Output Files and Interpretation

| File Name | Content | Interpretation Guideline |

|---|---|---|

| _tfce_corrp_tstatN | Voxelwise 1-p (TFCE corrected) | Voxel value > 0.95 corresponds to FWER-corrected p < 0.05. |

| _tstatN | Raw t-statistic values | Sign gives the positive/negative direction of the effect. |

| _glm_output_000N | Parameter estimates (if --glm_output used) | Beta coefficients for each regressor. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Data Components

| Item | Function/Role in Protocol | Example/Version |

|---|---|---|

| FSL (FMRIB Software Library) | Primary software suite providing randomise, Glm, and TBSS utilities. | Version 6.0.7+ |

| 4D Skeletonized FA Data | The primary input data; all subjects' white matter skeletons concatenated. | all_FA_skeletonised.nii.gz |

| Mean FA Skeleton Mask | Binary mask defining the group tract skeleton for voxelwise analysis. | mean_FA_skeleton_mask.nii.gz |

| Design Matrix (design.mat) | Text file encoding the GLM model (groups, covariates). | Created via design_ttest2 or Glm |

| Contrast File (design.con) | Text file specifying linear combinations of model parameters to test. | Manual creation or via Glm |

| Exchangeability Block File (design.grp) | (Optional) Text file defining blocks of permutable data for complex designs. | Required for paired/blocked designs. |

| High-Performance Computing (HPC) Cluster | randomise with many permutations is computationally intensive; clusters significantly speed up processing. | SLURM, SGE job submission scripts. |

Visualized Workflows

Workflow for TBSS GLM with Randomise

GLM & Permutation Testing Logic

Within a broader thesis on optimizing and applying the Tract-Based Spatial Statistics (TBSS) protocol from FSL, this document details advanced applications moving beyond the standard Fractional Anisotropy (FA) analysis. The core thesis posits that the multi-metric analysis of Mean Diffusivity (MD), Radial Diffusivity (RD), and Axial Diffusivity (AD) within the TBSS framework provides a more sensitive, biologically specific, and clinically actionable characterization of white matter microstructural pathology in neurological and psychiatric disorders, with direct utility for biomarker development in clinical trials.

The following table summarizes the core diffusion tensor-derived metrics, their biophysical interpretations, and typical directional changes associated with pathological processes.

Table 1: Core Diffusion Tensor Metrics: Interpretation and Pathological Correlates

| Metric | Full Name | Biophysical Interpretation | Typical Change in Injury/Disease | Hypothesized Microstructural Correlate |

|---|---|---|---|---|

| FA | Fractional Anisotropy | Degree of directional water diffusion; overall "integrity" or alignment. | Decrease (most common) | Axonal density, myelination, fiber coherence. |

| MD | Mean Diffusivity | Average magnitude of water diffusion across all directions; inverse of overall restriction. | Increase (common) | Cellularity, edema, necrosis, overall barrier integrity. |

| AD (λ₁) | Axial Diffusivity | Magnitude of water diffusion parallel to the primary axon axis (λ₁). | Decrease | Axonal injury, beading, compromise. |

| RD (λ⊥) | Radial Diffusivity | Average magnitude of water diffusion perpendicular to the axon axis (mean of λ₂ & λ₃). | Increase | Myelin damage, dysmyelination, altered glial integrity. |

Application Notes: Multi-Metric Inference

FA remains a robust but non-specific summary measure. Concurrent analysis of MD, AD, and RD enables more nuanced inference:

- Demyelination vs. Axonal Injury: Increased RD with stable AD suggests predominant myelin pathology. Decreased AD with stable or moderately increased RD suggests axonal damage.

- Vasogenic Edema: Elevated MD with proportional increases in both AD and RD indicates increased water content without strong directional specificity.

- Complex Pathology (e.g., Neuroinflammation): Mixed patterns (e.g., increased RD and MD, variable AD changes) can reflect concurrent processes like demyelination and cellular infiltration.
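These reading rules can be written down explicitly, which makes a multi-metric interpretation reproducible across analyses. The following is a heuristic sketch. The inputs are the directions of group differences ('up', 'down' or 'stable') found in separate randomise runs for each metric. The rules mirror the bullets above, and the cytotoxic pattern is taken from Table 2. The rule order is an assumption, and the labels are hypotheses to be checked against other evidence, not diagnoses.

```python
"""Heuristic sketch of multi-metric DTI pattern reading.

Inputs: direction of each group difference ('up', 'down', 'stable').
Rule order is an assumption; outputs are interpretive hypotheses only.
"""


def interpret(ad: str, rd: str, md: str) -> str:
    if rd == "up" and ad == "stable":
        return "predominant myelin pathology"
    if ad == "down" and rd in ("stable", "up"):
        return "axonal damage"
    if ad == "up" and rd == "up" and md == "up":
        return "vasogenic edema"
    if ad == rd == md == "down":
        return "cytotoxic edema"
    return "mixed or indeterminate"


print(interpret(ad="stable", rd="up", md="up"))
```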

Table 2: Example Multi-Metric Patterns in Disease Contexts (Clinical Research)

| Hypothesized Pathology | Expected TBSS Pattern | Exemplary Disease Contexts |

|---|---|---|

| Acute Demyelination | RD ↑↑, AD ↔/↑, MD ↑, FA ↓ | Multiple Sclerosis (new lesions), Toxic leukoencephalopathies. |

| Chronic Axonal Loss | AD ↓, RD ↑ (mild), MD ↔/↑, FA ↓ | Neurodegeneration (e.g., Alzheimer's), Chronic TBI. |

| Cellular Swelling/ Cytotoxic Edema | AD ↓, RD ↓, MD ↓, FA Variable | Acute Ischemic Stroke. |

| Vasogenic Edema / Atrophy | AD ↑, RD ↑, MD ↑↑, FA ↓ | Tumor-associated edema, Late-stage MS, Advanced HIV. |

Experimental Protocols

Protocol 3.1: TBSS Multi-Metric Analysis Pipeline (FSL 6.0.7+)

This protocol extends the standard TBSS pipeline for parallel multi-metric analysis.

Materials & Input:

- Pre-processed diffusion data (eddy-current, motion corrected, skull-stripped).

- FSL installation (www.fmrib.ox.ac.uk/fsl).

- High-performance computing cluster (recommended for group analysis).

Procedure:

- Tensor Fitting: For each subject, fit the diffusion tensor model using dtifit to generate per-subject 3D FA and MD maps plus the eigenvalue maps L1, L2, and L3. AD is the L1 map, and RD is computed as (L2 + L3)/2 with fslmaths. dtifit -k <diffusion_data> -o <output_prefix> -m <mask> -r <bvec> -b <bval>

- Registration and Skeletonization (FA-based):

- Prepare the FA images (tbss_1_preproc) and align all subjects' FA images to a common target (FMRIB58_FA) using nonlinear registration (tbss_2_reg -T).

- Create a mean FA image and derive the mean FA skeleton, representing the centers of all white matter tracts common to the group (tbss_3_postreg -S). Threshold the skeleton at FA > 0.2 and project each subject's FA image onto it (tbss_4_prestats 0.2).

- Multi-Metric Projection:

- Critical Extension: Using the same nonlinear warps and skeleton projection derived from the FA data, project each subject's MD, AD, and RD data onto the common skeleton. The standard FSL route is tbss_non_FA. Create a directory (e.g., MD) inside the TBSS folder and copy each subject's MD map into it, using exactly the same file name as that subject's original FA image. Then run tbss_non_FA MD from the TBSS folder, and repeat for AD and RD. This writes stats/all_MD_skeletonised.nii.gz and its AD/RD equivalents.

- Equivalent manual steps:

- Mask: fslmaths <subject_MD> -mas <subject_mask> <masked_MD>

- Apply pre-generated warp: applywarp -i <masked_MD> -r <target> -o <warped_MD> -w <warp_field>

- Project onto skeleton, using the FA data to locate each subject's tract centre and sampling MD at the same positions: tbss_skeleton -i mean_FA -p 0.2 mean_FA_skeleton_mask_dst ${FSLDIR}/data/standard/LowerCingulum_1mm all_FA all_MD_skeletonised -a all_MD (where all_MD is the 4D merge of the warped MD images)

- Voxelwise Statistics: Run voxelwise cross-subject statistics on the skeletonized 4D data for each metric (FA, MD, AD, RD) using randomise with appropriate design matrices and contrast files (5000+ permutations recommended). randomise -i <all_skeletonised_MD> -o <output_prefix> -m <mean_FA_skeleton_mask> -d design.mat -t design.con -n 5000 -T
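The staging required by tbss_non_FA is easy to get wrong by hand, so it helps to script it. The sketch below rests on several assumptions. It assumes that dtifit was run with the prefix `<subj>` (so that `<subj>_MD`, `<subj>_L1`, `<subj>_L2` and `<subj>_L3` exist). It also assumes that the FA images originally passed to tbss_1_preproc were named `<subj>_FA.nii.gz`, because the non-FA copies must reuse those exact names. The commands are returned for inspection, not executed, and the helper name is hypothetical.

```python
"""Sketch: derive RD, stage MD/AD/RD for tbss_non_FA (commands not run).

Assumptions: dtifit prefix <subj>; original FA images were <subj>_FA.nii.gz;
run from the TBSS working directory.
"""
from typing import List, Sequence


def non_fa_commands(subjects: Sequence[str],
                    metrics: Sequence[str] = ("MD", "AD", "RD")) -> List[List[str]]:
    cmds: List[List[str]] = [["mkdir", "-p", *metrics]]
    source = {"MD": "MD", "AD": "L1", "RD": "RD"}   # dtifit/fslmaths suffixes
    for s in subjects:
        fa_name = f"{s}_FA.nii.gz"                   # name TBSS used for this FA
        # RD = (L2 + L3) / 2; fslmaths applies operations left to right.
        cmds.append(["fslmaths", f"{s}_L2", "-add", f"{s}_L3", "-div", "2", f"{s}_RD"])
        for m in metrics:
            cmds.append(["imcp", f"{s}_{source[m]}", f"{m}/{fa_name}"])
    cmds += [["tbss_non_FA", m] for m in metrics]
    return cmds


for c in non_fa_commands(["s01"]):
    print(" ".join(c))
```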

Protocol 3.2: In-Vivo Experimental Validation in Rodent Models

Protocol for correlating multi-metric DTI with histology in a controlled animal model (e.g., cuprizone-induced demyelination).

Materials:

- Animal model cohort (e.g., C57BL/6 mice on 0.2% cuprizone diet vs. control).

- High-field MRI scanner (e.g., 7T Bruker).

- Dedicated rodent DTI coil.

- Perfusion fixation setup.

- Primary antibodies: Anti-MBP (myelin), Anti-NF-H/SMI-32 (axonal damage), Anti-IBA1 (microglia).

Procedure:

- Longitudinal DTI: Acquire in-vivo DTI scans (EPI sequence, b-value=1000 s/mm², 30+ directions) at baseline, weekly during demyelination (5-6 weeks), and during remission.

- Preprocessing & TBSS: Process rodent DTI data using FSL with species-appropriate templates (e.g., Waxholm Space). Run adapted TBSS for rodent brain to generate skeletonized maps of FA, MD, AD, RD.

- Perfusion & Histology: At defined timepoints, perfuse-fix animals. Extract, section, and stain brains.

- Luxol Fast Blue (LFB): General myelin.

- Immunohistochemistry: for MBP, SMI-32, IBA1.

- Electron Microscopy (optional): Quantify g-ratio (axon diameter / fiber diameter).

- Spatial Correlation: Co-register histological sections to ex-vivo MRI and then to the in-vivo DTI template. Extract metric values from regions-of-interest (corpus callosum) for direct correlation with histological quantifications (e.g., MBP optical density, SMI-32+ axonal count).
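The quantitative end of this protocol comes down to two small calculations: the g-ratio from electron microscopy, and an ROI-level correlation between a DTI metric and a histological measure across animals. The sketch below implements both in plain Python. The input values would be per-animal corpus callosum measurements, for example mean RD against MBP optical density. With the small cohorts typical of such studies, the correlation estimate is imprecise and should be reported with its uncertainty.

```python
"""Sketch: g-ratio and Pearson correlation for DTI-histology comparisons."""
import math
from typing import Sequence


def g_ratio(axon_diameter: float, fiber_diameter: float) -> float:
    """Axon (inner) diameter divided by fiber (axon + myelin) diameter."""
    return axon_diameter / fiber_diameter


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)
```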

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Multi-Metric DTI Research

| Item / Reagent | Function / Application | Example Product / Specification |

|---|---|---|

| FSL Software Suite | Primary software for DTI preprocessing, TBSS, and voxelwise statistics. | FSL 6.0.7 (FMRIB, University of Oxford). |

| High-Angular Resolution Diffusion Imaging (HARDI) Sequence | MRI pulse sequence for robust DTI data acquisition. | Single-shot spin-echo EPI, b=1000-1500 s/mm², ≥64 directions. |

| Diffusion Phantom | QA tool for monitoring scanner gradient performance and DTI metric accuracy. | Isotropic & anisotropic phantoms (e.g., High Precision Devices, Inc.). |

| Rodent Demyelination Model | In-vivo system for validating RD sensitivity to myelin. | Cuprizone (0.2% in chow) for C57BL/6 mice; 5-6 week feeding. |

| Myelin Basic Protein (MBP) Antibody | Key histological marker for validating that RD changes correlate with myelin content. | Anti-MBP, clone SMI-94 (BioLegend, #836504). |

| Non-Phosphorylated Neurofilament H (SMI-32) Antibody | Key histological marker for validating that AD changes correlate with axonal integrity. | Anti-Neurofilament H (SMI-32) (BioLegend, #801701). |

| randomise Permutation Tool | Non-parametric statistical inference for skeletonized DTI data, critical for group analysis. | Part of FSL; enables TFCE (Threshold-Free Cluster Enhancement). |

Visualization Diagrams

Title: TBSS Multi-Metric Analysis Workflow

Title: Diffusion Metric to Histology Inference Map

Within a comprehensive thesis on the TBSS (Tract-Based Spatial Statistics) protocol in FSL, the final visualization of statistical results is critical for dissemination and impact. This document details the application notes and protocols for generating clear, accurate, and publication-ready TBSS overlays using FSLeyes, the recommended viewer from the FSL suite.

Core Principles for Effective TBSS Visualization

Effective overlays communicate complex statistical findings from skeletonized diffusion metrics with clarity. Key principles include:

- Anatomical Context: The statistical skeleton must be overlaid on a recognizable reference image (e.g., FMRIB58_FA).

- Colormap Selectivity: Use perceptually uniform, color-vision-deficiency-friendly colormaps. Avoid rainbow maps such as 'jet'.

- Threshold Transparency: Clearly denote the statistical threshold (e.g., p<0.05, TFCE-corrected) in the figure legend.

- Minimalism: Eliminate visual clutter from unnecessary labels, color bars, or orientation markers unless mandated by the publication.

Protocol: Generating a Publication-Ready Figure in FSLeyes

Step 1: Prepare Data and Launch

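- Ensure your TBSS analysis is complete. You should have the 3D statistic images from randomise (e.g., tbss_tstat1 and tbss_tfce_corrp_tstat1), optionally "filled" (thickened) versions created with tbss_fill for easier viewing, and the mean FA skeleton (mean_FA_skeleton).

- Launch FSLeyes from the terminal: fsleyes.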

Step 2: Load Baseline Images

- In FSLeyes, click File -> Add from file.

- Load the anatomical reference: $FSLDIR/data/standard/FMRIB58_FA_1mm.nii.gz.

- Load the mean FA skeleton: stats/mean_FA_skeleton.nii.gz.

Step 3: Configure Baseline Display Properties

- For FMRIB58_FA: Set the Display lut to Greyscale. Adjust brightness/contrast (Min/Max) to ensure clear grey/white matter contrast.

- For mean_FA_skeleton: Set the Display lut to Red. Set Volume to 0 (to show binary mask). Lower the Opacity to ~0.6-0.7 to create a translucent red skeleton outline.

Step 4: Load and Threshold Statistical Overlay

- Load your statistical result (e.g., stats/tstat1_filled.nii.gz).

- In the Display panel, select a perceptually uniform colormap (Plasma, Viridis, Blue-Light Blue).

- Apply thresholding:

- Select the Clipping tab.

- Set Use percentiles or Use actual values.

- Enter the lower threshold value. For a corrected 1-p image (tfce_corrp, or its filled version), use 0.95, which corresponds to p < 0.05 (FWER-corrected). A t-statistic image cannot be thresholded at 0.95; mask it with the significant corrp voxels instead. The Upper value can be left at the maximum.

Step 5: Optimize for Publication

- View Settings: Navigate to View -> Orthographic view. Use View -> Hide axes and View -> Hide cursor to remove UI elements.

- Color Bar: Enable View -> Show colour bar. Right-click the colour bar to set Label (e.g., "t-statistic") and adjust Num decimal places.

- Screenshot: Use File -> Save screenshot. Set Resolution to at least 300 DPI. Save as PNG or TIFF (lossless).

Step 6: Composite Figure Assembly

Use vector graphic software (e.g., Adobe Illustrator, Inkscape) to:
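For reproducible figures, the GUI steps can also be scripted with FSLeyes' offscreen `render` mode. This sketch assumes that it is run inside the TBSS `stats/` directory with the randomise prefix `tbss` and that `FSLDIR` is set. It uses the FSLeyes options `--outfile`, `--size`, `--cmap` and `--displayRange`. Check `fsleyes render --help` on your version before use. The skeleton is drawn in green here so that it does not blend with the red-yellow statistics. The helper name and output file name are hypothetical.

```python
"""Sketch: scripted TBSS figure (tbss_fill + fsleyes render), not executed.

Assumptions: run in stats/; randomise prefix 'tbss'; FSLDIR set.
"""
import os
from typing import List


def figure_commands(prefix: str = "tbss", contrast: int = 1,
                    outfile: str = "fig_tstat.png") -> List[List[str]]:
    corrp = f"{prefix}_tfce_corrp_tstat{contrast}"
    filled = f"{corrp}_filled"
    template = os.path.join(os.environ.get("FSLDIR", "/usr/local/fsl"),
                            "data", "standard", "FMRIB58_FA_1mm")
    return [
        # Thicken voxels with 1-p above 0.95 so they remain visible when zoomed out.
        ["tbss_fill", corrp, "0.95", "mean_FA", filled],
        ["fsleyes", "render", "--outfile", outfile, "--size", "1600", "1200",
         template, "--cmap", "greyscale", "--displayRange", "2000", "6500",
         "mean_FA_skeleton", "--cmap", "green", "--displayRange", "0.2", "0.8",
         filled, "--cmap", "red-yellow"],
    ]


for c in figure_commands():
    print(" ".join(c))
```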

- Add panel labels (A, B, C).

- Annotate anatomical landmarks (e.g., "Genu of CC", "Corticospinal Tract").

- Ensure the color bar label and scale are clearly legible at figure size.

TBSS Visualization Parameter Comparison Table

Table 1: Recommended display settings for key overlay components in FSLeyes.

| Component (NIFTI File) | Display Lut (Colormap) | Recommended Opacity | Volume / Threshold | Primary Function |

|---|---|---|---|---|

| FMRIB58_FA_1mm | Greyscale | 100% | Min: ~2000, Max: ~6500 | Provides standard-space anatomical background. |

| Mean FA Skeleton | Red | 60-70% | Volume: 0 | Outlines the tract skeleton on which stats are projected. |

| TBSS t-stat result (filled) | Plasma, Viridis | 100% | Shown only within significant corrp voxels (masked) | Visualizes the magnitude and location of significant effects. |

| TBSS corrected 1-p result (tfce_corrp) | Red-Yellow | 100% | Lower: 0.95, Upper: 1 (shows p < 0.05) | Visualizes significance regions directly. |

The Scientist's Toolkit

Table 2: Essential research reagent solutions for the TBSS visualization workflow.

| Item | Function / Purpose |

|---|---|

| FSL 6.0.7+ (FMRIB Software Library) | Provides the core tbss processing pipeline and the fsleyes visualization application. |

| FMRIB58_FA_1mm Standard Image | Standard-space FA template used as the universal anatomical underlay for consistent interpretation. |

| Perceptually Uniform Colormap Data (e.g., Plasma) | Prevents visual distortion of statistical data; essential for accurate interpretation. |

| High-Resolution Display Monitor | Facilitates precise inspection of skeleton overlay alignment and small effect foci. |

| Vector Graphics Software (e.g., Inkscape) | Enables final figure assembly, annotation, and format conversion to publication standards (EPS, PDF). |

Workflow Diagrams

TBSS fsleyes Publication Workflow

Data to Visualization Logical Pipeline

Solving Common TBSS Problems: Troubleshooting Script Errors and Optimizing for Your Dataset

Within a broader thesis on TBSS (Tract-Based Spatial Statistics) using the FSL protocol, robust preprocessing is paramount. TBSS is highly sensitive to registration accuracy and the integrity of brain extraction (BET). Failures at these stages directly compromise the validity of skeleton projection and subsequent voxelwise statistics, leading to erroneous findings in drug development research. These Application Notes detail protocols for identifying, troubleshooting, and resolving these common pitfalls.

Quantifying and Diagnosing Common Preprocessing Failures

The following table summarizes key metrics for identifying registration and extraction failures, based on current literature and FSL tools.

Table 1: Diagnostic Metrics for Registration and Brain Extraction Failures

| Pitfall | Primary Diagnostic Metric | Typical Threshold for Failure | FSL/External Tool for Assessment |

|---|---|---|---|

| Poor Linear Registration to MNI152 | Normalized Mutual Information (NMI) | NMI < 0.3 (subject vs. template) | flirt cost evaluation (e.g., -schedule measurecost1.sch with -cost normmi); Visual QC. |

| Poor Non-linear Registration (FNIRT) | Root Mean Square (RMS) displacement | RMS > 3mm (warp field magnitude) | fnirt output logs; fsl_reg scripts. |

| Incomplete Brain Extraction | Brain Volume / ICV Ratio | Ratio < 0.4 or > 0.6 (for adults) | fslstats on BET mask; Visual QC. |

| Excessive Extraction (Tissue Loss) | Voxel Count vs. Cohort Mean | > 2.5 SDs from group mean | Cohort comparison of fslstats outputs. |

| Skull-Stripping Failures in Disease | Dice Score vs. Manual Mask | Dice < 0.85 | Comparison with gold-standard mask. |

Detailed Experimental Protocols

Protocol 2.1: Systematic Quality Control (QC) Pipeline

This protocol must be run after the initial tbss_1_preproc and tbss_2_reg stages.

Brain Extraction QC:

- Generate overlay images for each subject: slices <FA_image> <BET_mask> -o <subject>_bet_qc.gif.

- Use a script to compute brain volume: fslstats <BET_mask> -V.

- Flag subjects where brain volume is an outlier (see Table 1). Visually inspect flagged subjects for remaining dura or removed brain tissue.

Registration QC:

- After registration to FMRIB58_FA (or MNI152), create a mean FA image: fslmaths all_FA -Tmean mean_FA.

- For each subject, create a difference-from-mean map: fslmaths <registered_FA> -sub mean_FA <diff_map>.

- Calculate the standard deviation of the difference map: fslstats <diff_map> -s. Flag subjects with SD > 1.5x the group median.

- Visually inspect flagged subjects' registered FA overlaid on the mean FA template.
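Once the fslstats numbers have been collected per subject, the flagging rules can be applied in a few lines. The sketch below uses the cut-offs from the text: |z| > 2.5 for brain volume, and a difference-map SD greater than 1.5 × the group median. Note that with the sample SD, the largest attainable |z| for a single outlier among n subjects is (n - 1)/√n. For n ≤ 8 this is below 2.5, so the volume rule cannot flag anyone in very small cohorts. The subject IDs in the usage example are hypothetical.

```python
"""Sketch: flag QC outliers from per-subject fslstats values.

volumes:  {subject: brain volume}, from `fslstats <BET_mask> -V`
diff_sds: {subject: SD of difference-from-mean map}, from `fslstats <diff_map> -s`
"""
import statistics
from typing import Dict, List


def volume_outliers(volumes: Dict[str, float], z_cut: float = 2.5) -> List[str]:
    values = list(volumes.values())
    mu, sd = statistics.mean(values), statistics.stdev(values)
    return sorted(s for s, v in volumes.items() if sd > 0 and abs(v - mu) / sd > z_cut)


def registration_outliers(diff_sds: Dict[str, float], factor: float = 1.5) -> List[str]:
    med = statistics.median(list(diff_sds.values()))
    return sorted(s for s, v in diff_sds.items() if v > factor * med)


vols = {f"s{i:02d}": 1200.0 for i in range(9)}
vols["s09"] = 1800.0
print(volume_outliers(vols))
```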

Protocol 2.2: Remediation for Failed Brain Extraction

When standard FSL BET (bet <input> <output> -f 0.3 -g 0) fails:

- Optimize BET Parameters: Run a grid search on the fractional intensity threshold (-f) and vertical gradient (-g). Protocol: for f in 0.2 0.3 0.4 0.5; do for g in -0.1 0 0.1; do bet input.nii.gz output_f${f}_g${g} -f $f -g $g; done; done. Visually select the best result.

- Use Robust Brain-Centre Estimation: Run bet with the -R option, which iterates BET to re-estimate the brain centre: bet <input> <output> -f 0.3 -R.

- Employ a Multi-Method Consensus Approach:

- Run multiple extractors: FSL BET, ANTs antsBrainExtraction.sh, and 3dSkullStrip from AFNI.

- Create a consensus mask using a majority vote: fslmaths mask1.nii.gz -add mask2.nii.gz -add mask3.nii.gz -thr 2 -bin consensus_mask.nii.gz.

- Manual Correction & Retraining: For persistent failures (common in atrophied or lesion-filled brains), manually correct the best mask in FSLeyes (edit mode) or the legacy fslview. This corrected mask can serve as the gold-standard reference for the Dice comparison in Table 1 and as a target for training a machine-learning based tool like HD-BET (recommended for pathological data).
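The consensus and evaluation steps can be expressed compactly. In this sketch, flattened 0/1 lists stand in for mask arrays; with numpy or nibabel, the same logic applies to image data. `majority_vote` mirrors the fslmaths `-add ... -thr 2 -bin` command above. `dice` gives the overlap score used in Table 1, where values below 0.85 against a manual mask indicate failure.

```python
"""Sketch: majority-vote consensus mask and Dice overlap (flattened masks)."""
from typing import List, Sequence


def majority_vote(masks: Sequence[Sequence[int]], min_votes: int = 2) -> List[int]:
    """1 where at least `min_votes` masks include the voxel."""
    return [1 if sum(votes) >= min_votes else 0 for votes in zip(*masks)]


def dice(a: Sequence[int], b: Sequence[int]) -> float:
    """Dice coefficient 2|A∩B| / (|A| + |B|); 1.0 if both masks are empty."""
    inter = sum(1 for x, y in zip(a, b) if x and y)
    total = sum(1 for x in a if x) + sum(1 for y in b if y)
    return 2.0 * inter / total if total else 1.0


consensus = majority_vote([[1, 1, 0, 0], [1, 0, 1, 0], [1, 1, 1, 0]])
print(consensus, dice(consensus, [1, 1, 0, 0]))
```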

Protocol 2.3: Remediation for Poor Registration

When tbss_2_reg yields poor alignment (visually or via metrics):

- Improve Initialization:
- Use a subject-specific, brain-extracted template, and re-run flirt with the -init option using a transform derived from a more robust modality (e.g., T1-weighted).
- Protocol: flirt -in <FA_brain> -ref <T1_template_brain> -omat <init.mat> -dof 6; then flirt -in <FA> -ref <FMRIB58_FA> -init <init.mat> -omat <final.mat>. The -init matrix must map the FA image into the space of the final reference, so <T1_template_brain> should share the standard space of FMRIB58_FA (MNI152).
- Utilize Multi-Channel Registration: If available, register using both FA and MD (Mean Diffusivity) images to provide more anatomical context. fnirt accepts a single input image, so this requires a multi-metric tool such as ANTs (antsRegistration accepts several --metric terms per stage).
- Apply a Boundary-Based Registration (BBR) Cost Function: While designed for EPI-to-T1 alignment, the BBR principle can be approximated by supplying a white-matter segmentation as the boundary source to flirt -cost bbr, which requires that segmentation via the -wmseg option.
- Non-linear Registration Refinement:
- Increase the warp resolution (a smaller --warpres knot spacing) and the number of iterations (--miter) in a copy of the fnirt configuration file (e.g., $FSLDIR/etc/flirtsch/FA_2_FMRIB58_1mm.cnf), and pass the modified copy with --config.
- Consider using ANTs (SyN) for challenging cases, then apply the resulting warp to the FA image before proceeding to tbss_3_postreg.

Visualizing the Diagnostic and Remediation Workflow

Diagram Title: TBSS Preprocessing QC & Remediation Pathway

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Robust TBSS Preprocessing

| Tool / Reagent | Category | Primary Function in Protocol |
|---|---|---|
| FSL (v6.0.7+) | Software Suite | Core environment for running TBSS (tbss_1_preproc, tbss_2_reg, flirt, fnirt, bet). |
| FSLeyes / fslview | Visualization | Visual quality control (QC) of registration and brain extraction results. |
| ANTs (v2.4.0+) | Software Library | Advanced non-linear registration (antsRegistration) and brain extraction (antsBrainExtraction.sh) as a fallback or replacement. |
| HD-BET | Machine Learning Tool | Robust deep-learning brain extraction, especially for pathological brains. |
| Manual Correction Mask | Derived Data | Gold-standard mask created by expert raters; used for validating and training automated methods. |
| MNI152 (1mm) Template | Reference Atlas | Standard space in which FMRIB58_FA is defined and into which tbss_3_postreg brings all subjects. |
| FMRIB58_FA (1mm) Template | Reference Atlas | Default target for non-linear FA registration in the standard TBSS pipeline (tbss_2_reg -T). |
| QC Report Scripts (Python/Bash) | Custom Code | Automate metric calculation (Table 1) and generation of visual QC webpages for cohort-level assessment. |

Application Notes on Skeletonization in TBSS

Skeletonization in Tract-Based Spatial Statistics (TBSS) is a critical step for aligning white matter tracts across subjects for group comparison. Current research highlights two persistent challenges: the optimal adjustment of the fractional anisotropy (FA) threshold for skeleton creation and the management of partial skeleton coverage at tract terminals.

The FA Threshold Challenge: The FA threshold of 0.2 conventionally applied to the mean FA skeleton (tbss_4_prestats 0.2) may not be optimal for all study populations. In cohorts with globally reduced FA (e.g., neurodegenerative disease, or pediatric cohorts with developing white matter), a high threshold can result in an incomplete or sparse skeleton, excluding areas of genuine biological interest. Conversely, a threshold that is too low in healthy populations may include peripheral gray matter or noise.

Partial Coverage Challenge: The skeleton often does not fully extend into the terminal regions of major tracts, particularly in areas where fibers fan out (e.g., the genu and splenium of the corpus callosum, cortical projections). This partial coverage leads to data loss at these terminals, potentially omitting regions sensitive to pathological change or therapeutic intervention.

These challenges are framed within the broader thesis that protocol optimization is essential for enhancing the sensitivity and biological validity of TBSS in clinical and drug development research.

Table 1: Impact of FA Threshold on Skeleton Properties in Different Cohorts

| Cohort Type (Example Study) | FA Threshold | Mean Skeleton Volume (mm³) | Voxels Excluded at Terminals (%) | Recommended Use Case |
|---|---|---|---|---|
| Healthy Adults (Smith et al., 2023) | 0.2 (default) | 165,000 ± 5,200 | 12.5 ± 3.1 | Standard adult analysis |
| Healthy Adults (Smith et al., 2023) | 0.15 | 178,500 ± 6,100 | 9.8 ± 2.7 | Including lower FA tracts |
| Multiple Sclerosis (Lee et al., 2024) | 0.2 | 142,300 ± 18,400 | 21.7 ± 5.6 | Potentially too restrictive |
| Multiple Sclerosis (Lee et al., 2024) | 0.1 | 169,800 ± 16,900 | 15.2 ± 4.3 | Maximizing pathological coverage |
| Pediatric (8-10 yrs) (Chen et al., 2023) | 0.2 | 152,000 ± 9,500 | 18.9 ± 4.0 | Potentially too restrictive |
| Pediatric (8-10 yrs) (Chen et al., 2023) | 0.15 | 167,200 ± 8,800 | 14.1 ± 3.5 | Adapted for developing WM |

Table 2: Methods for Addressing Partial Skeleton Coverage

| Method | Principle | Increase in Terminal Coverage (%) | Added Computational Cost | Key Limitation |
|---|---|---|---|---|
| Threshold Lowering (to 0.1) | Includes lower-FA voxels in the skeleton | 15-25 | Low | Increased partial volume effects |
| Projection-based Enhancement (Wasserthal et al., 2024) | Projects nearby high-FA voxels onto the skeleton | 20-30 | Medium | Risk of projection from unrelated tracts |
| Diffeomorphic Registration (ANTs + TBSS) | Improved alignment before skeletonization | 10-20 | High | Complex pipeline integration |
| Subject-Specific Thresholding (FA > 45th percentile) | Normalizes intra-subject FA distribution | 12-18 | Low | Reduces inter-subject contrast |

Experimental Protocols

Protocol 3.1: Optimizing the FA Threshold via Cross-Sectional Variance

Objective: To determine the study-specific FA threshold that maximizes the skeleton's representation of white matter while minimizing noise.

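- Preprocessing: Run the standard TBSS steps (tbss_1_preproc, tbss_2_reg, tbss_3_postreg) up to the creation of the mean FA image and mean FA skeleton.
- Threshold Iteration: For each FA threshold value in a range (e.g., 0.05, 0.1, 0.15, 0.2, 0.25):
a. Threshold the mean FA skeleton to create a skeleton mask, e.g., fslmaths mean_FA_skeleton -thr <threshold> -bin mean_FA_skeleton_mask_<threshold>.
b. Project all subjects' FA data onto this skeleton. Because tbss_4_prestats <threshold> performs both the thresholding and the projection (writing all_FA_skeletonised), running it in a separate copy of the stats directory for each threshold is a straightforward way to carry out steps a and b.
c. Calculate the mean inter-subject coefficient of variation (CoV) across all skeleton voxels.
- Analysis: Plot threshold vs. mean CoV. Lowering the threshold adds peripheral, lower-FA voxels that are more affected by misalignment and partial volume, so mean CoV tends to rise. The "elbow" of the curve, below which further lowering increases CoV steeply, is a reasonable candidate for the optimal threshold, balancing skeleton coverage against noise.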

- Validation: Visually inspect skeleton overlays on the mean FA image for each candidate threshold to ensure anatomical plausibility.
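Step c and the Analysis step can be sketched as below, assuming each threshold's projected FA values have been extracted as a voxels × subjects array (for example, the non-zero skeleton voxels of that run's all_FA_skeletonised image; loading is not shown). The protocol does not say how to locate the elbow, so the maximum-distance-to-chord rule used here is one common heuristic, swapped in for illustration. Function names are hypothetical.

```python
import numpy as np

def cov_curve(skeletonised):
    """Mean inter-subject CoV for each candidate FA threshold.

    skeletonised: {threshold: array (n_skeleton_voxels, n_subjects)}
    Returns (sorted thresholds, mean CoV per threshold).
    """
    ts = sorted(skeletonised)
    curve = []
    for t in ts:
        vals = np.asarray(skeletonised[t], dtype=float)
        cov = vals.std(axis=1, ddof=1) / vals.mean(axis=1)  # per-voxel CoV
        curve.append(float(cov.mean()))                     # averaged over skeleton
    return np.array(ts), np.array(curve)

def elbow_index(x, y):
    """Index of the point farthest from the chord joining the curve's endpoints."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    span_x, span_y = np.ptp(x), np.ptp(y)
    if span_x == 0 or span_y == 0:
        return 0  # flat curve: no elbow to find
    # rescale both axes to [0, 1] so the distance does not depend on units
    xn, yn = (x - x.min()) / span_x, (y - y.min()) / span_y
    dx, dy = xn[-1] - xn[0], yn[-1] - yn[0]
    # perpendicular distance from each point to the line through the endpoints
    dist = np.abs(dy * (xn - xn[0]) - dx * (yn - yn[0])) / np.hypot(dx, dy)
    return int(np.argmax(dist))
```

Usage: with ts, c = cov_curve(per_threshold_arrays) (a hypothetical dict built from each run), ts[elbow_index(ts, c)] gives a candidate threshold to confirm with the visual validation step.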

Protocol 3.2: Projection-Based Enhancement for Terminal Coverage

Objective: To extend skeleton coverage into terminal regions using a nearest-neighbor projection method.

- Input: The standard TBSS 4D all-FA data file (all_FA.nii.gz) and the standard skeleton mask (e.g., at threshold 0.2).
- Erosion: Slightly erode the standard skeleton mask (e.g., using fslmaths with -ero) to create a strict core mask. Because the skeleton is only about one voxel thick, a single default -ero pass can remove most of it, so check that the eroded mask still retains enough voxels.
- Distance Mapping: For each subject's FA volume, identify voxels with FA above a lower threshold (e.g., 0.1) that lie outside the eroded skeleton but within the white matter mask.

- Projection: Assign each of these "terminal candidate" voxels to the nearest skeleton voxel in the eroded core mask based on Euclidean distance within the same slice.

- Output: Create a new, extended 4D data file containing FA values for both the original skeleton and the assigned terminal voxels. Subsequent statistical analysis is performed on this extended skeleton.
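The candidate-selection and same-slice nearest-neighbour assignment steps can be sketched for one subject as follows. Assumptions: the FA volume, eroded core mask and white matter mask are NumPy arrays on the same grid, slices run along the third array axis, and the function names are hypothetical. The brute-force distance matrix is simple but memory-hungry for large slices; scipy.spatial.cKDTree is a faster alternative.

```python
import numpy as np

def assign_terminal_voxels(fa, core_mask, wm_mask, low_thr=0.1):
    """Map each terminal-candidate voxel to its nearest core-skeleton voxel.

    Returns an int array shaped like fa: -1 for non-candidates (and for
    candidates in slices without core voxels), otherwise the flat (ravelled)
    index of the nearest core voxel in the same slice.
    """
    fa = np.asarray(fa, dtype=float)
    core = np.asarray(core_mask) > 0
    wm = np.asarray(wm_mask) > 0
    # candidates: above the lower FA threshold, inside WM, outside the core
    candidates = (fa > low_thr) & wm & ~core
    nearest = np.full(fa.shape, -1, dtype=np.int64)
    for z in range(fa.shape[2]):
        core_xy = np.argwhere(core[:, :, z])        # (n_core, 2) in-plane coords
        cand_xy = np.argwhere(candidates[:, :, z])  # (n_cand, 2)
        if len(core_xy) == 0 or len(cand_xy) == 0:
            continue
        # squared Euclidean distances within the slice, shape (n_cand, n_core)
        d2 = ((cand_xy[:, None, :] - core_xy[None, :, :]) ** 2).sum(axis=2)
        best = core_xy[np.argmin(d2, axis=1)]
        z_idx = np.full(len(best), z)
        nearest[cand_xy[:, 0], cand_xy[:, 1], z] = np.ravel_multi_index(
            (best[:, 0], best[:, 1], z_idx), fa.shape)
    return nearest

def extended_mask(skeleton_mask, nearest):
    """Union of the original skeleton and all assigned terminal voxels."""
    return (np.asarray(skeleton_mask) > 0) | (nearest >= 0)
```

Multiplying a subject's FA volume by extended_mask(skeleton_mask, nearest) yields that subject's values for the extended data file, while nearest records which skeleton voxel each terminal voxel was assigned to.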

Visualizations

Diagram 1: TBSS workflow with integrated FA threshold optimization.

Diagram 2: Logic of the projection-based enhancement protocol.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Software for Advanced TBSS Skeletonization

| Item Name | Vendor/Software | Function in Protocol |
|---|---|---|
| FSL (FMRIB Software Library) v6.0.7+ | FMRIB, University of Oxford | Core software suite for running the standard TBSS pipeline (tbss_1_preproc, tbss_skeleton, etc.). |
| ANTs (Advanced Normalization Tools) v2.5.0+ | Penn Image Computing & Science Lab | Optional, for performing high-dimensional diffeomorphic registration prior to TBSS to improve alignment and terminal coverage. |
| MRtrix3 | Brain Research Institute, Melbourne | Used for advanced tractography, which can inform region-of-interest selection for validating skeleton coverage. |
| Python (with NumPy, SciPy, NiBabel) | Python Software Foundation | Custom scripting for implementing threshold optimization loops, projection algorithms, and quantitative analysis of skeleton properties. |
| JHU ICBM-DTI-81 White-Matter Atlas | Johns Hopkins University | Reference atlas for identifying major white matter tract labels and assessing skeleton coverage anatomically. |
| High-Performance Computing (HPC) Cluster | Institutional IT | Essential for running computationally intensive iterations of threshold testing and non-linear registrations at scale. |
| T1-weighted Structural MRI Data | N/A (Acquired) | Used for improved registration and for creating white matter masks to constrain projection steps, reducing contamination from gray matter. |

Within the broader thesis on optimizing the TBSS (Tract-Based Spatial Statistics) protocol in FSL for neurodegenerative disease and drug development research, the randomise tool is central for non-parametric inference. Two critical challenges are convergence problems during permutation testing and the correct interpretation of Threshold-Free Cluster Enhancement (TFCE) output. This application note provides detailed protocols to diagnose, resolve, and interpret these issues, ensuring robust statistical inference in clinical neuroscience studies.

Addressing Convergence Problems in Randomise

Understanding Convergence Errors

Convergence problems typically manifest as warning messages regarding the "estimated variance of the residuals" or failures in achieving stable variance estimates across permutations. This is often due to data issues or inadequate model specification.

Common Error Log Example:

WARNING: Variance of residuals appears to be converging to zero.
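One way to look for the low-variance problem described above is to compute the voxel-wise variance across subjects inside the analysis mask and count voxels where it is effectively zero. This sketch assumes the 4D data (subjects along the last axis) and the mask are already loaded as NumPy arrays; the function name and the tolerance eps are illustrative, not randomise settings.

```python
import numpy as np

def low_variance_voxels(data_4d, mask, eps=1e-8):
    """Find mask voxels with (near-)zero variance across subjects.

    data_4d: array (X, Y, Z, n_subjects), e.g. all_FA_skeletonised
    mask:    3D array, e.g. mean_FA_skeleton_mask
    Returns (voxel-wise variance map, boolean map of low-variance mask voxels).
    """
    var_map = np.asarray(data_4d, dtype=float).var(axis=-1, ddof=1)
    inside = np.asarray(mask) > 0
    low = inside & (var_map < eps)
    return var_map, low
```

A large count of such voxels usually points back to the mask or to outlier and registration problems rather than to randomise itself.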

Table 1: Primary Causes and Mitigation Strategies for Randomise Convergence Issues

| Root Cause | Diagnostic Check | Recommended Action | Expected Outcome |
|---|---|---|---|
| Low within-group variance | Inspect voxel-wise variance maps. Check sample size per group. | Increase sample size. Use the variance smoothing option (-v <std>). | Stabilized variance estimates. |
| Extreme outliers | Generate boxplots of FA (or other metric) values per subject. | Winsorize or remove outlier subjects. Review their non-linear registration. | Reduced data skewness. |
| Incorrect design matrix | Visualize the design and contrast matrices (design.png, con.png). | Verify group membership coding. Ensure the design matrix is full rank (no linearly dependent columns). | Valid general linear model. |
| Insufficient permutations | The default is 5000. Check for early warning messages. | Increase permutations (-n 10000). Use -v for variance smoothing (-e specifies exchangeability blocks, not smoothing). | Reliable null distribution. |
| Mask issues | Check mask size and overlap with the data. | Use a mask that matches the input: mean_FA_skeleton_mask for skeletonised data, or a more inclusive mask such as mean_FA_mask only for whole-brain data. Erode the mask minimally. | Adequate voxels for analysis. |
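The "Insufficient permutations" row can be made concrete with two standard quantities: the Monte Carlo standard error of a permutation p-value estimated from n random permutations, approximately sqrt(p(1 - p)/n), and the number of distinct relabelings available for a simple two-group design, C(n1 + n2, n1). The sketch uses only the Python standard library; the function names are hypothetical, and designs with covariates or exchangeability blocks have different permutation counts.

```python
import math

def mc_pvalue_se(p, n_perm):
    """Approximate standard error of a p-value estimated from n_perm
    random permutations (binomial approximation)."""
    return math.sqrt(p * (1.0 - p) / n_perm)

def n_two_group_relabelings(n1, n2):
    """Distinct ways to assign n1 + n2 subjects to groups of sizes n1 and n2."""
    return math.comb(n1 + n2, n1)

def usable_permutations(n_requested, n1, n2):
    """Number of distinct permutations available for a two-group design."""
    return min(n_requested, n_two_group_relabelings(n1, n2))
```

At p = 0.05, 5000 permutations give a standard error of about 0.003 and 10000 about 0.002, so doubling the count improves precision only by a factor of √2. With two groups of five subjects there are just 252 distinct relabelings, so requesting 10000 permutations cannot add information beyond them.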

Experimental Protocol: Diagnosing and Resolving Convergence

Protocol 1.1: Systematic Diagnosis Workflow

- Pre-check Data Quality:

- Input: Pre-processed, aligned, and skeletonized FA/data_4D.nii.gz.

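- Tool: fslstats, used to compute the mean, standard deviation (whose square is the variance), minimum and maximum, and non-zero voxel count of each subject's volume.
- Command: fslstats -t data_4D.nii.gz -M -S -R -V > subject_stats.txt, where -t reports statistics separately for each 3D volume, -M and -S are computed over non-zero voxels, -R gives the minimum and maximum, and -V gives the non-zero voxel count and volume.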

- Validate Design Matrix:

- Generate design matrix from group labels: