Advancing Episodic Memory Assessment in Neurodegenerative Diseases: Digital Tools, Biomarkers, and Clinical Applications



Abstract

This article synthesizes current advancements in episodic memory assessment for neurodegenerative diseases, targeting researchers and drug development professionals. It explores the foundational role of episodic memory as a core early deficit in conditions like Alzheimer's disease, examines the shift toward digital, remote, and unsupervised assessment methodologies, addresses key challenges in implementation and optimization, and provides a comparative analysis of validation evidence for novel tools against traditional benchmarks. The content highlights how integrating digital cognitive assessments with fluid biomarkers creates new opportunities for scalable case-finding, clinical trial enrichment, and high-frequency monitoring of therapeutic outcomes.

The Critical Role of Episodic Memory in Early Neurodegenerative Disease Detection and Staging

Episodic Memory as a Primary Cognitive Indicator in Preclinical and Prodromal Alzheimer's Disease

Within the landscape of neurodegenerative disease research, detecting Alzheimer's disease (AD) pathology at its earliest, most treatable stages is paramount. AD pathology begins accumulating years, even decades, before the onset of overt clinical dementia [1]. The disease continuum spans from a preclinical stage (asymptomatic but with biomarker evidence of pathology) to a prodromal stage (characterized by subtle cognitive symptoms, often termed Mild Cognitive Impairment or MCI, that do not yet meet dementia criteria) [1] [2]. Within this framework, episodic memory, the ability to recall unique personal experiences in terms of their content (what), temporal occurrence (when), and location (where), has emerged as a primary cognitive indicator. Its decline is a hallmark of early AD because its neural substrates, particularly the medial temporal lobe circuit that includes the entorhinal cortex and hippocampus, are vulnerable to early pathology [3] [2] [4]. This Application Note details the protocols and underlying evidence for using episodic memory assessment to identify individuals in the preclinical and prodromal stages of AD, giving researchers actionable tools for clinical trials and longitudinal studies.

The following tables summarize key quantitative findings from recent research, highlighting the sensitivity of episodic memory measures in early AD detection.

Table 1: Progression to Symptomatic AD by Preclinical Stage (Over 5 Years) [5]

| Preclinical AD Stage | CSF Biomarker Profile | Proportion of Cohort at Baseline | 5-Year Progression Rate to Symptomatic AD (CDR ≥0.5) |
|---|---|---|---|
| Normal | Normal Aβ and tau | 41.5% (129/311) | 2% |
| Stage 1 | Abnormal Aβ only | 15% (47/311) | 11% |
| Stage 2 | Abnormal Aβ and tau | 12% (36/311) | 26% |
| Stage 3 | Abnormal Aβ, tau, and subtle cognitive changes | 4% (13/311) | 56% |
| SNAP | Abnormal tau only | 23% (72/311) | 5% |

Table 2: Sequence and Timing of Presymptomatic Cognitive Decline in Familial AD [6]

| Estimated Years to Symptom Onset | Key Episodic Memory and Cognitive Measures Found to Be Abnormal |
|---|---|
| -10 years | Accelerated Long-Term Forgetting (ALF) |
| -10 to -7 years | Subjective Cognitive Decline (Everyday Memory Questionnaire) |
| -7 to -5 years | Timed Executive Function (Digit Symbol Substitution Test), Working Memory (Digit Span) |
| -5 to 0 years | General Intelligence (Performance IQ, Verbal IQ), Standard Episodic Memory Tests (Recognition Memory Test, Paired Associate Learning) |

Table 3: Sensitivity of Novel Episodic Memory Tasks in Preclinical AD [7]

| Participant Group | Conceptual Matching Task (CMT) Performance | Preclinical Alzheimer Cognitive Composite (PACC5) Sensitivity |
|---|---|---|
| Aβ-negative Cognitively Unimpaired (Aβ-CU) | Reference (Normal) | Reference (Normal) |
| Aβ-positive Cognitively Unimpaired (Aβ+CU, Preclinical AD) | Significantly Lower | Less sensitive than CMT |
| Aβ-positive Mildly Cognitively Impaired (Aβ+MCI, Prodromal AD) | Significantly Lower | Less sensitive than CMT |

Experimental Protocols for Episodic Memory Assessment

Below are detailed protocols for key episodic memory assessments featured in the cited research.

Protocol: Accelerated Long-Term Forgetting (ALF) Assessment

Background: ALF probes the integrity of hippocampal memory consolidation. Individuals learn new information normally and retain it over short delays (e.g., 30 minutes), but then forget it at an accelerated rate over days. It is a highly sensitive marker of presymptomatic hippocampal dysfunction [6].

Materials:

- Verbal Learning Stimuli: A word list (e.g., 10-15 unrelated nouns), a short story, or a complex figure.

- Recording sheets.

- Quiet testing environment.

Procedure:

- Encoding (Day 1):

- Present the learning material (list, story, figure) to the participant.

- For a word list, use a multi-trial procedure (e.g., three consecutive learning trials).

- Ensure initial learning criterion is met (e.g., 90% correct recall after a 5-minute delay).

- Initial Recall (30-minute Delay):

- After a 30-minute delay filled with non-memory tasks, administer a free recall test for the material.

- Record the number of correct units recalled. This score serves as the baseline for calculating forgetting rates.

- Delayed Recall (7-Day Delay):

- After a 7-day delay, without any warning or rehearsal, administer a second free recall test for the original material.

- Record the number of correct units recalled.

- Data Analysis:

- Calculate the percentage of material retained: (7-day recall score / 30-minute recall score) × 100.

- Compare the retention percentage to normative data. A significantly lower percentage in study participants than in controls indicates Accelerated Long-Term Forgetting.
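
The retention calculation above can be sketched in a few lines of Python. This is a minimal illustration, not the scoring procedure of the cited study: the function names, the use of a control-sample z-score as the comparison against norms, and all scores are assumptions made for the example.

```python
from statistics import mean, stdev


def retention_percent(recall_30min: float, recall_7day: float) -> float:
    """Percentage of the 30-minute recall score still recalled at 7 days."""
    if recall_30min <= 0:
        raise ValueError("30-minute recall score must be positive")
    return 100.0 * recall_7day / recall_30min


def retention_z(participant_pct: float, control_pcts: list) -> float:
    """Z-score of one participant's retention relative to a control sample."""
    return (participant_pct - mean(control_pcts)) / stdev(control_pcts)


# Hypothetical scores for illustration only (not data from the cited study):
# every control recalled 12 units at 30 minutes and 8-11 units at 7 days.
controls = [retention_percent(12, r) for r in (9, 10, 8, 9, 11)]
participant = retention_percent(12, 4)

print(f"retention: {participant:.1f}%")
print(f"z vs controls: {retention_z(participant, controls):.2f}")
```

A strongly negative z-score would flag retention well below the control range; the threshold that counts as "significantly lower" depends on the normative data in use.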

Protocol: The Doors and People Test

Background: This tool provides a comprehensive and ecologically valid assessment of verbal and visual episodic memory, measuring recall and recognition across different modalities. It is highly sensitive for differentiating between early amnestic MCI (aMCI), late aMCI, and mild AD dementia [2].

Materials:

- Doors and People Test kit (manual, record forms, stimulus cards).

Procedure: The test consists of four subtests administered in a single session:

- People Test (Verbal Recall):

- Participants are shown four line drawings of people paired with first names.

- After a brief delay, they are asked to recall the names when shown the pictures (free recall).

- If unable to recall, semantic cues are provided (cued recall).

- Shapes Test (Visual Recall):

- Participants are shown four simple geometric shapes.

- After a delay, they are asked to draw these shapes from memory.

- Doors Test (Visual Recognition):

- Participants are shown a series of photographs of doors.

- Subsequently, they are shown triplets of doors and must identify the one they saw previously.

- Names Test (Verbal Recognition):

- Participants are shown a series of names presented as if on a hotel mailbox.

- Subsequently, they are shown pairs of names and must identify the one they saw previously.

Data Analysis:

- Scores for each subtest are converted to scaled scores based on age-adjusted norms.

- A total weighted recall score (from People and Shapes) and a total weighted recognition score (from Doors and Names) can be calculated.

- Profile analysis of strengths and weaknesses across subtests, particularly a dissociation between preserved recognition and impaired recall, can help stage the severity of medial temporal lobe dysfunction.

Protocol: Conceptual Matching Task (CMT)

Background: The CMT assesses the ability to discriminate between conceptually confusable items. It is hypothesized to be a cognitive marker of early rhinal cortex atrophy, one of the first regions affected by AD pathology [7].

Materials:

- A set of stimulus pairs (e.g., images, words) that are semantically related (e.g., hammer-wrench, cup-mug) and unrelated.

Procedure:

- Task Administration:

- Participants are presented with a target stimulus.

- They are then shown two or more choice stimuli and must select the one that is conceptually identical to the target.

- The critical trials are those where the distractors are highly semantically similar to the target, requiring fine-grained conceptual discrimination.

- Data Analysis:

- The primary outcome measure is the accuracy (percentage of correct trials) on the conceptually confusable trials.

- Reaction time can be a secondary measure. Lower accuracy and/or slower reaction times on confusable trials indicate impairment and are associated with preclinical AD pathology [7].
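
As a rough sketch of the CMT outcome measures described above, the snippet below computes accuracy on the conceptually confusable trials and a median reaction time. The `Trial` structure, the field names, and the choice to summarize reaction time over correct confusable trials only are assumptions for illustration; the cited work may score the task differently.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Trial:
    confusable: bool  # True when distractors are semantically close to the target
    correct: bool
    rt_ms: float


def cmt_summary(trials):
    """Accuracy (%) and median RT (ms) on conceptually confusable trials."""
    critical = [t for t in trials if t.confusable]
    if not critical:
        raise ValueError("no conceptually confusable trials supplied")
    accuracy = 100.0 * sum(t.correct for t in critical) / len(critical)
    # Assumption: RT is summarized over correct critical trials only.
    correct_rts = [t.rt_ms for t in critical if t.correct]
    return {
        "accuracy_pct": accuracy,
        "median_rt_ms": median(correct_rts) if correct_rts else None,
    }


# Hypothetical trial log for one participant.
trials = [
    Trial(True, True, 900.0),
    Trial(True, False, 1500.0),
    Trial(True, True, 1100.0),
    Trial(True, True, 1000.0),
    Trial(False, True, 700.0),
]
print(cmt_summary(trials))
```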

Visualization of Episodic Memory Assessment Workflow

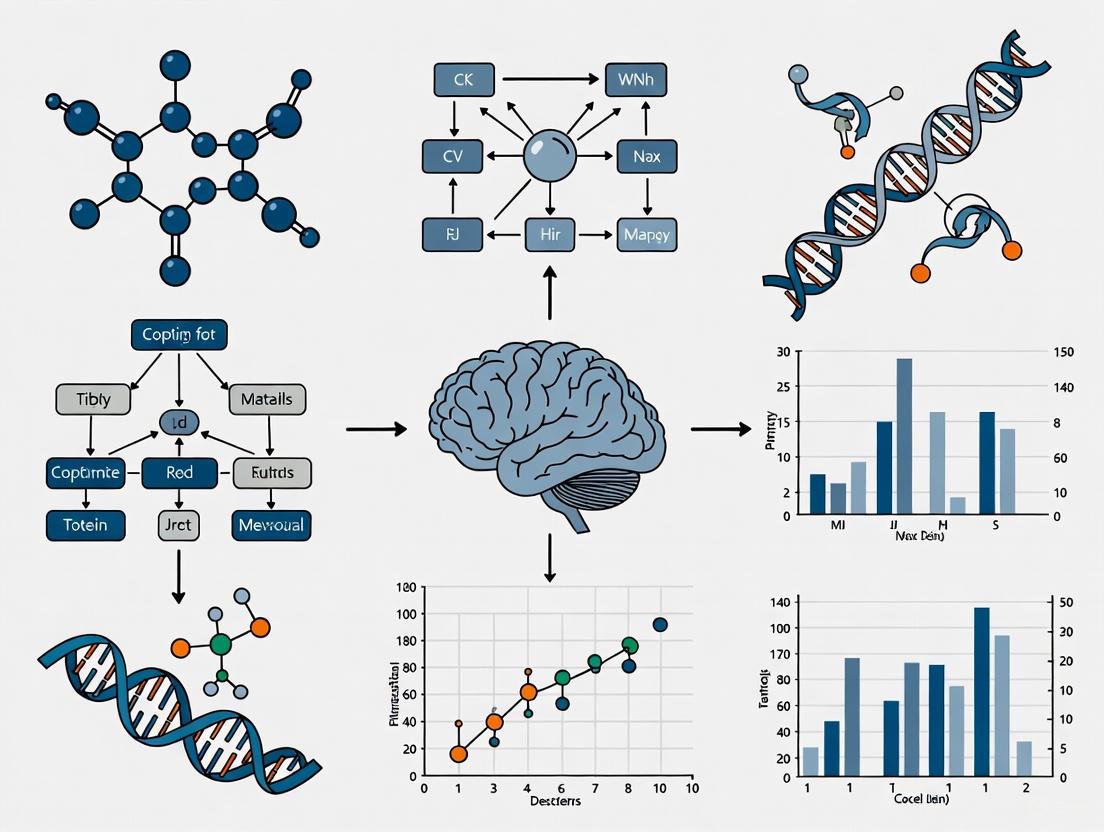

The following diagram illustrates the integrated workflow for assessing episodic memory in the context of the AD continuum, from participant screening to data interpretation.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials and Digital Tools for Episodic Memory Research

| Item / Tool Name | Type | Primary Function in Research | Key Rationale |
|---|---|---|---|
| Cerebrospinal Fluid (CSF) Immunoassays (e.g., INNOTEST) | Biochemical Assay | Quantify core AD biomarkers (Aβ42, t-tau, p-tau) for participant staging [5]. | Provides pathological confirmation of preclinical AD; essential for correlating cognitive measures with biomarker status. |
| Amyloid & Tau PET Tracers (e.g., Florbetapir, Flortaucipir) | Neuroimaging Ligand | Visualize and quantify fibrillar Aβ and tau NFT burden in the brain in vivo [1]. | Allows for topographic mapping of pathology and its relationship to regional brain atrophy and function. |
| The Doors and People Test | Neuropsychological Test | Comprehensive assessment of verbal and visual recall and recognition [2]. | High ecological validity and sensitivity for differentiating early aMCI, late aMCI, and mild AD. |
| Accelerated Long-Term Forgetting (ALF) Paradigm | Behavioral Task | Detect subtle consolidation deficits by testing memory retention over days [6]. | Highly sensitive to presymptomatic hippocampal dysfunction, extending the detectable window of cognitive decline. |
| Conceptual Matching Task (CMT) | Behavioral Task | Assess integrity of conceptual discrimination and rhinal cortex function [7]. | Shows promise in detecting cognitive changes in Aβ-positive CU individuals earlier than standard cognitive composites. |
| Digital Biomarkers / App-Based Assessments | Digital Health Technology | Enable frequent, remote, and objective cognitive monitoring using AI-driven analysis [8] [9]. | Reduces rater variability; allows for high-frequency data collection to detect subtle, real-world cognitive fluctuations. |

Within neurodegenerative disease research, a critical challenge lies in linking specific cognitive deficits, particularly in episodic memory, to their underlying neuropathological drivers. The integration of fluid biomarkers into clinical research protocols has enabled a more precise mapping of memory performance onto specific proteinopathies, such as amyloid-beta (Aβ), hyperphosphorylated tau, and neurofilament light chain (NfL). These biomarkers provide a window into the core pathological processes of Alzheimer's disease (AD), frontotemporal dementia (FTD), and other neurodegenerative disorders, offering objective measures to complement cognitive assessments. This document provides detailed application notes and experimental protocols for researchers and drug development professionals aiming to elucidate the relationship between episodic memory performance and underlying pathology, thereby accelerating therapeutic development and improving diagnostic accuracy.

The following tables consolidate quantitative findings on the diagnostic performance of key plasma biomarkers, providing a reference for interpreting experimental results.

Table 1: Diagnostic Performance of Plasma P-tau217 in Differentiating Alzheimer's Disease

| Comparison Group | Sample Size (AD/Comparator) | Accuracy | Key Findings |
|---|---|---|---|
| Behavioral Variant FTD (bvFTD) | 40 AD / 15 bvFTD | 96% | P-tau217 was significantly elevated in AD compared to bvFTD [10] |
| Primary Psychiatric Disorders (PPD) | 40 AD / 69 PPD | 93% | P-tau217 effectively distinguished AD from common psychiatric mimics [10] |

Table 2: Performance of Neurofilament Light Chain (NfL) and Glial Fibrillary Acidic Protein (GFAP)

| Biomarker | Primary Utility | Performance Summary |
|---|---|---|
| Neurofilament Light Chain (NfL) | Distinguishing neurodegenerative disorders (NDs) from primary psychiatric disorders (PPDs) [10] | Best marker for differentiating all NDs from PPDs; a non-specific marker of neurodegeneration and acute neuronal injury [10] |
| Glial Fibrillary Acidic Protein (GFAP) | Marker of astrocytic activation and neuroinflammation [10] | Added limited diagnostic value compared to p-tau217 and NfL in differentiating AD, bvFTD, and PPDs [10] |

Experimental Protocols for Integrated Memory and Biomarker Assessment

Protocol: High-Frequency Episodic Memory Assessment

Objective: To capture rich, longitudinal data on episodic memory decline, optimized for detecting subtle changes in interventional studies.

Background: Episodic memory decline is a strong marker of neurodegenerative diseases, and high-frequency assessment can capture variability and richer data trajectories [11].

Materials:

- Computerized testing platform (e.g., CANTAB)

- Pre-defined word lists or visual stimuli sets (e.g., animal emojis, abstract shapes)

Procedure:

- Tutorial and Encoding: Participants first complete a tutorial test. Subsequently, they are presented with two sets of four items (e.g., one set of animal emojis and another of abstract shapes) for encoding.

- Immediate Recall: Participants are immediately asked to recall the presented items.

- Delayed Recall: After a significant delay (e.g., 2 hours or 6 hours), participants are tested again for their recall of the two sets [11].

- High-Frequency Schedule: For intensive longitudinal assessment, the task can be administered once in the morning and again in the afternoon after a minimum six-hour delay. This can be repeated across multiple sessions (e.g., 14 sessions) [11].

Data Analysis:

- The primary outcomes are delayed recall metrics, which show the strongest age-related effects [11].

- Performance can be correlated with biomarker levels (e.g., plasma p-tau217, NfL) to link memory decline with pathology.
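
One way to sketch the analysis above is to summarize each participant's delayed recall across sessions (mean and within-person variability) and then rank-correlate the summary with a biomarker level. The choice of Spearman correlation, the helper names, and all values are assumptions for illustration; the cited study does not prescribe this pipeline.

```python
from statistics import mean, stdev


def summarize_sessions(scores):
    """Mean and within-person SD of delayed recall across sessions."""
    return mean(scores), (stdev(scores) if len(scores) > 1 else 0.0)


def average_ranks(values):
    """Ranks starting at 1; tied values share the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x, y):
    mx, my = mean(x), mean(y)
    num = sum((a - mx) * (b - my) for a, b in zip(x, y))
    den = (sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y)) ** 0.5
    return num / den


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical data: delayed-recall scores (0-8 items) per session and a
# plasma p-tau217 value in arbitrary units for each participant.
participants = {
    "P01": ([7, 8, 7, 8], 0.21),
    "P02": ([6, 5, 7, 6], 0.35),
    "P03": ([4, 5, 3, 4], 0.80),
    "P04": ([5, 6, 6, 5], 0.52),
}
mean_recall = [summarize_sessions(s)[0] for s, _ in participants.values()]
ptau = [b for _, b in participants.values()]
print(f"Spearman rho: {spearman(mean_recall, ptau):.2f}")
```

The within-person SD returned by `summarize_sessions` is not used in the correlation here, but it is the kind of variability metric that high-frequency schedules make available.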

Protocol: Blood-Based Biomarker Analysis for Differential Diagnosis

Objective: To utilize plasma biomarkers for accurate differentiation of Alzheimer's disease from other neurodegenerative and psychiatric disorders in a research cohort.

Background: Plasma p-tau217 distinguishes AD from non-AD disorders with strong diagnostic performance and specificity, while NfL is a useful marker of general neurodegeneration [10].

Materials:

- EDTA blood collection tubes

- Centrifuge and -80°C freezer for plasma storage

- Quanterix Simoa HD-X Analyzer

- Simoa NfL and GFAP assay kits (Quanterix Corp.)

- Reagents for the in-house University of Gothenburg (UGOT) p-tau217 assay [10]

Procedure:

- Sample Collection and Processing:

- Collect blood from participants into EDTA tubes during their diagnostic work-up.

- Centrifuge samples to isolate plasma.

- Aliquot and store plasma at -80°C until analysis.

- Biomarker Measurement:

- Measure plasma NfL and GFAP using commercially available N2PB kits on the Simoa HD-X analyzer according to the manufacturer's instructions.

- Measure plasma p-tau217 using the validated in-house UGOT assay.

- All measurements should be performed in a single batch to minimize batch effects, by analysts blinded to clinical data and diagnoses to limit bias [10].

- Data Interpretation and Profiling:

- Interpret p-tau217 levels using predefined cut-offs.

- Interpret NfL levels using age-adjusted z-score reference range models [10].

- Create biomarker profiles (e.g., high p-tau217 / high NfL, high p-tau217 / low NfL) to characterize the study population.
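
The profiling step above could be sketched as follows. The cut-off values, the simple mean/SD form of the age-adjusted z-score, and the function names are placeholders chosen for illustration; real cut-offs and reference models must come from the assay validation and the reference-range work cited in [10].

```python
from collections import Counter


def nfl_z(nfl_value: float, ref_mean: float, ref_sd: float) -> float:
    """Z-score against a reference mean/SD for the participant's age band.

    Assumption: a plain mean/SD reference; published models may instead
    work on log-transformed NfL or use continuous age adjustment.
    """
    return (nfl_value - ref_mean) / ref_sd


def biomarker_profile(ptau217, nfl_zscore, ptau_cutoff, nfl_z_cutoff=1.96):
    """Label a participant as high/low on p-tau217 and NfL (placeholder cut-offs)."""
    ptau = "high" if ptau217 >= ptau_cutoff else "low"
    nfl = "high" if nfl_zscore >= nfl_z_cutoff else "low"
    return f"{ptau} p-tau217 / {nfl} NfL"


# Hypothetical cohort: (p-tau217, NfL, reference mean, reference SD).
cohort = [
    (1.4, 32.0, 18.0, 6.0),
    (1.2, 19.0, 18.0, 6.0),
    (0.3, 35.0, 20.0, 7.0),
    (0.2, 15.0, 16.0, 5.0),
]
profiles = Counter(
    biomarker_profile(p, nfl_z(n, m, s), ptau_cutoff=1.0) for p, n, m, s in cohort
)
for label, count in profiles.items():
    print(label, count)
```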

Visualizing the Relationship Between Memory, Pathology, and Biomarkers

Diagram 1: From Memory Assessment to Pathological Insight. This workflow illustrates how episodic memory performance in a research participant is linked to underlying proteinopathies, which are quantified through specific plasma biomarkers to yield a definitive research outcome.

Diagram 2: Biomarker-Guided Differential Diagnosis. This decision pathway shows how distinct plasma biomarker profiles can guide the differentiation of Alzheimer's disease from other conditions with high accuracy, based on established clinical research.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Integrated Studies

| Item | Function/Application | Example/Specification |
|---|---|---|
| EDTA Plasma Samples | Standardized sample matrix for biomarker analysis ensuring consistency across studies. | Collected during diagnostic work-up; stored at -80°C [10]. |
| Simoa HD-X Analyzer | Ultra-sensitive digital immunoassay platform for quantifying low-abundance neurological biomarkers in blood. | Used for measuring plasma NfL and GFAP [10]. |
| Simoa NfL & GFAP Kits | Validated reagent kits for precise measurement of neurofilament light chain and glial fibrillary acidic protein. | Quanterix N2PB kits [10]. |
| P-tau217 Assay | Critical assay for detecting phospho-tau217, a highly specific biomarker for Alzheimer's disease pathology. | In-house University of Gothenburg (UGOT) assay [10]. |
| CANTAB Cognitive Battery | Computerized neuropsychological assessment suite including validated tests of episodic memory like Paired Associates Learning (PAL). | Established tasks for cross-validation of novel memory tests [11]. |
| High-Frequency Memory Test | Novel tool for assessing episodic memory over short intervals, capturing variability and sensitive to decline. | Utilizes animal emojis and abstract shapes; optimized for high-frequency use [11]. |
| Tabular Foundation Model (TabPFN) | A transformer-based foundation model for analyzing small- to medium-sized tabular datasets, potentially useful for integrating multimodal data (cognitive, biomarker, demographic). | Can outperform gradient-boosted decision trees on datasets with up to 10,000 samples, enabling rapid, powerful predictive modeling [12]. |

Within neurodegenerative disease research, establishing clinically meaningful change is paramount for evaluating disease progression and therapeutic efficacy in clinical trials. This is particularly critical for assessing episodic memory, a core cognitive domain affected early in Alzheimer's disease (AD). Traditional cognitive composites, such as the Preclinical Alzheimer Cognitive Composite (PACC), have served as benchmarks in this endeavor. The integration of semantic memory into the PACC5 variant underscores the continuous evolution of these tools to enhance sensitivity to early, amyloid-related decline [13]. However, the rapid emergence of digital biomarkers and remote assessment technologies presents a new frontier. This document provides application notes and protocols for benchmarking novel digital outcomes against established composites like PACC5, ensuring that new tools are sensitive, valid, and capable of capturing change that matters to patients and researchers [14] [15] [16].

Background and Rationale

The Established Benchmark: PACC and PACC5

The PACC is a multi-domain cognitive composite designed to track the earliest cognitive changes in preclinical AD. It typically includes tests of episodic memory, executive function, and global cognition [13]. Research has demonstrated that adding a measure of semantic memory, specifically Category Fluency (CAT), to create the PACC5 provides unique information about early amyloid-β (Aβ)-related cognitive decline not fully captured by the original PACC [13] [17]. Semantic fluency decline occurs early in the preclinical AD trajectory, and the inclusion of more than one semantic category (e.g., animals, fruits, vegetables) maximizes Aβ group differentiation [13]. Studies have shown that the PACC5 is sensitive to cross-sectional differences between Aβ+ and Aβ- individuals, with effect sizes (Cohen's d) that are marginally larger in magnitude than those of other composites like the Repeatable Battery for the Assessment of Neuropsychological Status (RBANS) [17].

The Digital Shift: Opportunities and Challenges

Remote and unsupervised digital assessments offer a paradigm shift in cognitive evaluation for neurodegenerative diseases. Their potential benefits include:

- Improved Scalability and Accessibility: Enabling frequent testing in participants' natural environments, reducing patient burden and travel costs [16].

- Enhanced Measurement Precision: Capturing high-fidelity data like reaction time and enabling automated scoring [16].

- Novel Digital Biomarkers: Facilitating the measurement of novel metrics, such as acoustic and linguistic features from speech, which can differentiate between subtypes of mild cognitive impairment (MCI) and correlate with structural brain changes [18] [19].

- Increased Ecological Validity: Potentially providing a more representative measure of everyday cognitive function than clinic-based testing, which is subject to the "white-coat effect" [16].

Despite this potential, digital tools must be rigorously validated against established endpoints to demonstrate their utility in clinical trials. Key challenges include ensuring reliability and validity in unsupervised environments, overcoming variable digital literacy in older populations, and addressing data privacy concerns [16] [20].

Quantitative Benchmarking of Cognitive Composites

Sensitivity to Aβ status is a key metric for evaluating cognitive composites in preclinical AD populations. The following table summarizes cross-sectional effect sizes from a large clinical trial screening sample, illustrating the performance of traditional composites.

Table 1: Sensitivity of Traditional Cognitive Composites to Amyloid Status in Preclinical AD

| Cognitive Composite | Component Tests (Examples) | Aβ+/− Effect Size (Cohen's d) | Key Sensitive Domains within Composite |
|---|---|---|---|
| PACC | MMSE, Logical Memory Delayed Recall, Digit-Symbol Substitution Test (DSST), Free and Cued Selective Reminding Test (FCSRT) [13] [17] | -0.15 [17] | Episodic Memory (FCSRT), Speeded Processing (DSST) [17] |
| PACC5 | All PACC components + Category Fluency (Animals, Fruits, Vegetables) [13] [17] | -0.139 [17] | Semantic Memory (Category Fluency), Episodic Memory, Speeded Processing [13] [17] |
| RBANS | Immediate and Delayed Memory, Visuospatial/Constructional, Language, Attention [17] | -0.097 [17] | Figure Recall (Memory), Coding (Speeded Processing) [17] |

The next table outlines the properties of emerging digital tools that are candidates for benchmarking against composites like PACC5.

Table 2: Properties of Emerging Digital and Remote Cognitive Assessments

| Assessment Type / Tool Class | Key Metrics Captured | Reported Advantages & Use Cases | Challenges |
|---|---|---|---|
| AI-assisted Digital Protocol [18] | Serial list learning, free recall, recognition hits, backward digit span, semantic fluency, error patterns, process variables (e.g., latencies) [18] | ~10-minute administration; classifies CU, amnestic MCI, dysexecutive MCI, dementia with >90% agreement vs. traditional protocols [18] | Requires validation in diverse, real-world populations. |
| Remote & Unsupervised Digital Cognitive Tests [16] | Conventional cognitive constructs (digitized); novel learning curves; high-frequency within-person variability [16] | Enables scalable case-finding, longitudinal monitoring (daily/monthly), and individualized risk assessment; improves measurement reliability [16] | Variable digital literacy; environmental distractions; data privacy and infrastructure [16] |
| Digital Speech Biomarkers [19] | Linguistic (content density, syntax, lexical repetition); Acoustic (pausing, prosody) [19] | Non-invasive, scalable; predicts MoCA with ~10% error; provides complementary info to clinical scales [19] | Speech recordings are subject to privacy and security laws; requires specialized processing. |

Experimental Protocols for Benchmarking Studies

Protocol 1: Validating Digital Tools Against the PACC5

Objective: To establish the concurrent validity and relative sensitivity of a novel digital cognitive assessment against the traditional PACC5 composite.

Population: Cognitively unimpaired older adults (CDR = 0), with oversampling for Aβ+ individuals confirmed via PET or CSF biomarkers [13] [17]. A target sample of ~3000 participants is recommended for adequate power, as in large preclinical trials [17].

Design: A cross-sectional study with a longitudinal follow-up component (e.g., annual assessments for 3-4 years) to track decline [13].

Procedure:

- Baseline Assessment:

- Administer the full PACC5 battery [13]:

- Mini-Mental State Examination (MMSE)

- Logical Memory Delayed Recall (LMDR) from the WMS-R

- Digit Symbol Substitution Test (DSST)

- Free and Cued Selective Reminding Test (FCSRT)

- Category Fluency Test (CAT): Three 1-minute trials for animals, fruits, and vegetables. Sum the number of correct words [13].

- Administer the candidate digital assessment in a controlled, supervised setting (e.g., clinic) using a standardized device. The digital tool should aim to capture analogous cognitive domains (e.g., episodic memory, executive function, processing speed).

- Collect biomarker data (Aβ status) for stratification.


Data Processing:

- Calculate PACC and PACC5 z-scores using the baseline sample's mean and standard deviation for all component tests [13].

- Extract primary and secondary digital metrics from the digital assessment (e.g., accuracy, reaction time, novel composite scores).
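
The composite calculation above can be sketched as below: each component test is standardized against the baseline sample and the z-scores are combined. Averaging the z-scores is one common convention and is an assumption here, as are the dictionary keys and all scores; the cited studies should be consulted for the exact scoring rules (including any score transformations).

```python
from statistics import mean, stdev

PACC_TESTS = ("mmse", "lmdr", "dsst", "fcsrt")
PACC5_TESTS = PACC_TESTS + ("cat",)  # PACC plus summed category fluency


def baseline_norms(baseline_rows, tests=PACC5_TESTS):
    """Mean and SD of each component test in the baseline sample."""
    norms = {}
    for test in tests:
        scores = [row[test] for row in baseline_rows]
        norms[test] = (mean(scores), stdev(scores))
    return norms


def composite_z(row, norms, tests):
    """Average of the component z-scores for one participant."""
    z_scores = [(row[t] - norms[t][0]) / norms[t][1] for t in tests]
    return mean(z_scores)


# Hypothetical baseline scores for three participants.
baseline = [
    {"mmse": 29, "lmdr": 14, "dsst": 50, "fcsrt": 46, "cat": 45},
    {"mmse": 28, "lmdr": 10, "dsst": 42, "fcsrt": 44, "cat": 38},
    {"mmse": 30, "lmdr": 16, "dsst": 55, "fcsrt": 48, "cat": 52},
]
norms = baseline_norms(baseline)
for row in baseline:
    pacc = composite_z(row, norms, PACC_TESTS)
    pacc5 = composite_z(row, norms, PACC5_TESTS)
    print(f"PACC z = {pacc:.2f}, PACC5 z = {pacc5:.2f}")
```

Follow-up visits would reuse the same `norms` so that change is expressed relative to the baseline distribution.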

Statistical Analysis:

- Concurrent Validity: Calculate Pearson or Spearman correlations between the digital outcome scores and the PACC5 total score.

- Sensitivity to Aβ Status: Use Analysis of Covariance (ANCOVA) models, controlling for age, sex, and education, to examine differences in both the digital score and the PACC5 between Aβ+ and Aβ- groups. Compare the effect sizes (Cohen's d) between the digital tool and PACC5 [17].

- Longitudinal Sensitivity: Use Linear Mixed Models (LMM) to assess the annual rate of change in both the digital tool and PACC5, and compare the effect sizes for the Aβ*time interaction [13].

Figure 1: Workflow for validating digital tools against PACC5.
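
To illustrate the effect-size comparison in the statistical analysis, the sketch below computes an unadjusted Cohen's d with a pooled standard deviation for a digital score and for PACC5. It is a simplification: the protocol calls for ANCOVA models that adjust for age, sex, and education, which this example does not do, and all scores are invented.

```python
from statistics import mean, variance


def cohens_d(group_a, group_b):
    """Standardized mean difference (a minus b) using the pooled SD."""
    na, nb = len(group_a), len(group_b)
    pooled_var = (
        (na - 1) * variance(group_a) + (nb - 1) * variance(group_b)
    ) / (na + nb - 2)
    return (mean(group_a) - mean(group_b)) / pooled_var ** 0.5


# Hypothetical z-scored outcomes for Aβ+ and Aβ- participants.
digital_pos, digital_neg = [-0.4, -0.1, -0.6, 0.2], [0.1, 0.3, -0.2, 0.4]
pacc5_pos, pacc5_neg = [-0.2, 0.0, -0.3, 0.3], [0.0, 0.2, -0.1, 0.3]

d_digital = cohens_d(digital_pos, digital_neg)
d_pacc5 = cohens_d(pacc5_pos, pacc5_neg)
print(f"digital d = {d_digital:.2f}, PACC5 d = {d_pacc5:.2f}")
```

A larger absolute d for the digital score would suggest greater cross-sectional sensitivity to Aβ status, subject to confidence intervals and covariate adjustment.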

Protocol 2: Integrating Digital Speech Biomarkers

Objective: To determine if digital speech biomarkers provide complementary information to the PACC5 and enhance classification of early neurodegenerative disease.

Population: Cohorts including healthy controls (HC), amnestic Mild Cognitive Impairment (MCI-AD), and other MCI subtypes (e.g., MCI with Lewy bodies) [19]. Sample sizes of ~50-70 per group have been used in initial studies [19].

Design: Cross-sectional case-control study.

Procedure:

- Data Collection:

- Administer the PACC5 (as in Protocol 1).

- Speech Recording: Collect a 90-second spontaneous monologue from each participant in a quiet room. Use a high-quality digital audio recorder. The prompt can be an open-ended instruction such as "please speak spontaneously for one and a half minutes" or a standardized topic [19].

- Collect structural MRI data if available for correlation.

Data Processing and Feature Extraction:

- Automatically transcribe speech recordings. Manually correct transcripts for accuracy.

- Use Natural Language Processing (NLP) to extract linguistic biomarkers [19]:

- Content density

- Use of function words

- Sentence syntax complexity

- Lexical repetition (n-grams)

- Extract acoustic biomarkers [19]:

- Pause duration and frequency

- Speech rate and prosody (pitch, jitter, shimmer)
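
A few of the features listed above can be approximated with the standard library alone. The sketch below is illustrative only: the tiny function-word list, the bigram repetition ratio, the 0.25-second pause threshold, and the word-timestamp format are all assumptions, and it does not reproduce the NLP or acoustic pipeline of the cited study (content density, syntax, pitch, jitter, and shimmer need dedicated tools).

```python
import re
from collections import Counter

# Small illustrative list; a real pipeline would use a fuller lexicon or a tagger.
FUNCTION_WORDS = {
    "the", "a", "an", "and", "or", "but", "of", "to", "in", "on",
    "is", "was", "it", "i", "that",
}


def tokenize(transcript):
    """Lowercase word tokens from a corrected transcript."""
    return re.findall(r"[a-z']+", transcript.lower())


def function_word_ratio(tokens):
    return sum(t in FUNCTION_WORDS for t in tokens) / len(tokens)


def repeated_ngram_ratio(tokens, n=2):
    """Share of n-gram occurrences that repeat an n-gram already used."""
    ngrams = [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]
    if not ngrams:
        return 0.0
    counts = Counter(ngrams)
    return sum(c - 1 for c in counts.values()) / len(ngrams)


def pause_stats(word_times, min_pause_s=0.25):
    """Pause count, mean duration, and rate from (start_s, end_s) word timings."""
    gaps = [nxt[0] - cur[1] for cur, nxt in zip(word_times, word_times[1:])]
    pauses = [g for g in gaps if g >= min_pause_s]
    total_s = word_times[-1][1] - word_times[0][0]
    return {
        "n_pauses": len(pauses),
        "mean_pause_s": sum(pauses) / len(pauses) if pauses else 0.0,
        "pauses_per_min": 60.0 * len(pauses) / total_s,
    }


tokens = tokenize("I went to the shop and I went to the park")
print(function_word_ratio(tokens), repeated_ngram_ratio(tokens))
print(pause_stats([(0.0, 0.4), (0.5, 0.9), (1.5, 2.0)]))
```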

Statistical and Machine Learning Analysis:

- Group Differences: Use non-parametric tests (e.g., Mann-Whitney U) to compare speech features across diagnostic groups (HC vs. MCI-AD) [19].

- Correlation Analysis: Examine Spearman correlations between speech features and PACC5 component scores (especially Category Fluency) and MRI measures [19].

- Classification Modeling: Train machine learning models (e.g., XGBoost) to evaluate classification performance [19]:

- Model 1: Using only clinical scores (e.g., PACC5 components).

- Model 2: Using only speech biomarkers.

- Model 3: Combining clinical scores and speech biomarkers.

- Compare the accuracy, sensitivity, and specificity of the models to determine if speech adds complementary information.
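
The final comparison step can be sketched independently of the classifier used. The helper below computes accuracy, sensitivity, and specificity from held-out predictions; the label coding (1 = MCI-AD, 0 = healthy control) and the three prediction vectors are hypothetical stand-ins for the outputs of Models 1-3, not results from the cited study.

```python
def binary_metrics(y_true, y_pred):
    """Accuracy, sensitivity, and specificity for labels coded 1 (case) / 0 (control)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one case and one control")
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }


# Hypothetical held-out labels and predictions from the three models.
y_true = [1, 1, 1, 0, 0, 0]
predictions = {
    "clinical only": [1, 0, 0, 0, 0, 1],
    "speech only": [1, 1, 0, 0, 1, 0],
    "combined": [1, 1, 0, 0, 0, 0],
}
for name, y_pred in predictions.items():
    print(name, binary_metrics(y_true, y_pred))
```

With samples of ~50-70 per group, these metrics are best estimated with cross-validation rather than a single split, so that the model comparison is not driven by one partition of the data.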

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Benchmarking Studies

| Item Name | Function/Description | Example Use in Protocol |
|---|---|---|
| PACC5 Component Tests [13] | Standardized paper-and-pencil tests assessing global cognition, episodic memory, executive function, and semantic memory. | Serves as the gold-standard benchmark for validation studies (Protocol 1). |
| Category Fluency Test (CAT) [13] | A specific measure of semantic memory where participants name items from categories (animals, fruits, vegetables) in one minute each. | Key component added to PACC to create PACC5; sensitive to early Aβ-related decline. |
| Amyloid-β Biomarker Assays (e.g., PiB-PET, CSF Aβ42) [13] [17] | Methods to quantify brain amyloid burden for stratifying participants into Aβ+ and Aβ- groups. | Critical for establishing group sensitivity of both traditional and digital tools (Protocol 1). |
| Digital Assessment Platform [18] [16] | A software system (tablet, web-based, or mobile) for administering and scoring cognitive tests digitally. | The candidate tool being validated; captures core cognitive domains and novel metrics (Protocol 1). |
| High-Fidelity Audio Recorder [19] | Equipment to capture clean, high-quality digital speech recordings for subsequent biomarker analysis. | Essential for collecting raw data for digital speech biomarker studies (Protocol 2). |
| Natural Language Processing (NLP) Pipeline [19] | Software tools for automated speech transcription, manual correction, and extraction of linguistic features. | Used to process speech recordings and generate quantitative linguistic biomarkers (Protocol 2). |
| Acoustic Feature Extraction Toolbox (e.g., Praat, OpenSMILE) | Software libraries for analyzing digital audio signals to extract prosodic and acoustic features. | Used to generate quantitative acoustic biomarkers from speech recordings (Protocol 2). |

Benchmarking digital outcomes against established composites like PACC5 is a critical step in the evolution of Alzheimer's disease assessment. The rigorous experimental protocols outlined here provide a framework for demonstrating that digital tools are not merely convenient replacements, but can offer superior sensitivity, richer data, and complementary information. As the field moves toward more frequent, remote, and patient-centered assessment, the integration of validated digital biomarkers into clinical trial endpoints will be essential for capturing clinically meaningful change and evaluating the efficacy of next-generation therapies.

Application Notes

The medial temporal lobe (MTL) is a core neural structure for episodic memory, and its functional integrity is a critical biomarker in neurodegenerative disease research. The MTL supports distinct mnemonic processes: pattern separation reduces interference by orthogonalizing similar memories; pattern completion retrieves complete memories from partial cues; and recognition memory allows for the identification of previously encountered stimuli, supported by complementary processes of recollection (context-rich retrieval) and familiarity (context-free sense of prior encounter) [21] [22]. Research indicates that these processes are supported by a distributed yet hierarchically organized network within the MTL. Converging evidence from neuropsychological, neuroimaging, and neurophysiological studies suggests that the hippocampus is critical for recollection but not familiarity, whereas perirhinal cortex contributes to and is necessary for familiarity-based recognition [21]. In the context of neurodegenerative diseases such as Alzheimer's disease (AD), the precise mapping of these cognitive processes to MTL substructures allows for the development of sensitive diagnostic tools and the identification of targeted therapeutic interventions [11] [23].

Quantitative Correlates of MTL Structure and Function

The following tables summarize key quantitative findings on the structural and functional correlates of memory processes within the MTL, providing a reference for biomarker development and assessment.

Table 1: Structural and Functional Correlates of MTL Subregions

| MTL Subregion | Primary Mnemonic Process | Key Supporting Evidence | Impact of Aging/Atrophy |
|---|---|---|---|
| Dentate Gyrus (DG)/CA3 | Pattern Separation [24] [22] | Volume of left CA3/DG predicts lure discrimination performance [24]. | Atrophy contributes to age-related performance decline [24]. |
| Hippocampus (General) | Recollection [21] | Necessary for recollection; supports high-confidence recognition responses [21]. | Critical for recollection, which is disproportionately affected in aging and AD [21]. |
| Perirhinal Cortex | Familiarity [21] | Necessary for familiarity-based recognition [21]. | Atrophy may lead to early deficits in item recognition [21]. |
| Parahippocampal Cortex | Recollection (Context) [21] | Contributes to recollection via representation of contextual (e.g., spatial) information [21]. | Atrophy disrupts binding of items to their spatial context [21]. |
| Entorhinal Cortex | Gateway to Hippocampus | Provides major input to the hippocampal formation. | Early tau pathology in AD originates here, disrupting input to the hippocampus. |

Table 2: Behavioral and Psychophysical Metrics of Memory Processes

| Memory Process | Common Assessment Paradigms | Key Behavioral Metrics | Neurophysiological Correlates |
|---|---|---|---|
| Pattern Separation | Continuous Recognition Task (Lure Discrimination) [24] [22] | Accuracy and reaction time for discriminating "similar lures" from targets [22]. | Increased fMRI BOLD signal in DG/CA3 for lures vs. repeats [24]. |
| Recollection | Remember/Know, Source Memory, ROC Analysis [21] | High-confidence correct responses, retrieval of contextual details [21]. | U-shaped zROC curves; late-onset (∼500-700ms) parietal ERP component [21]. |
| Familiarity | Remember/Know, ROC Analysis [21] | Intermediate-confidence recognition in the absence of contextual detail [21]. | Linear zROC curves; early-onset (∼300-500ms) frontal ERP component [21]. |

Figure 1: MTL Memory Network. This diagram illustrates the flow of information through the medial temporal lobe, highlighting the primary pathways and the putative loci for pattern separation and pattern completion. The entorhinal cortex serves as the major gateway, relaying highly processed information from the perirhinal and parahippocampal cortices into the hippocampal trisynaptic circuit (DG → CA3 → CA1).

Experimental Protocols

Protocol: fMRI of Pattern Separation during Lure Discrimination

This protocol details a functional Magnetic Resonance Imaging (fMRI) experiment designed to probe pattern separation in the hippocampus, optimized for detecting changes in older adults or preclinical Alzheimer's populations [24].

2.1.1 Objectives and Rationale

To measure hippocampal subfield (particularly DG/CA3) activation during a mnemonic discrimination task that parametrically manipulates the level of interference, providing a functional biomarker of pattern separation integrity.

2.1.2 Materials and Reagents

- 3T or Higher MRI Scanner: Essential for sufficient resolution to differentiate hippocampal subfields.

- High-Resolution T1-Weighted Structural Scan: For anatomical registration and volumetric analysis (e.g., MPRAGE sequence).

- T2*-Weighted fMRI Sequence: For BOLD signal acquisition (e.g., EPI sequence).

- Visual Stimulus Presentation System: MRI-compatible goggles or projection screen.

- Response Recording Device: MRI-compatible button box.

- Stimulus Set: Hundreds of high-quality, color images of common objects or scenes.

2.1.3 Experimental Procedure

- Participant Preparation: Screen for MRI contraindications. Obtain informed consent. Instruct participants on the task.

- Task Design (Within Scanner): Use a continuous recognition task with three trial types:

- First Presentations (New): Novel images presented for the first time.

- Lures (Similar): Images that are highly similar but not identical to a previously presented image.

- Repeats (Old): Images that are identical to a previously presented image.

- Task Procedure: On each trial, an image is presented. The participant indicates via button press whether the item is "New," "Similar," or "Old."

- Data Acquisition: Acquire high-resolution structural scans followed by T2*-weighted BOLD fMRI scans during task performance. A typical session lasts 60-90 minutes.

2.1.4 Data Analysis

- Preprocessing: Standard fMRI preprocessing (realignment, coregistration to structural scan, normalization, smoothing).

- First-Level Analysis: Model BOLD response for different trial types (Correctly Identified Near Lure > Correctly Identified Old Item). This contrast is theorized to isolate pattern separation demand [24].

- Second-Level Analysis: Conduct group-level analyses (e.g., t-tests, ANCOVA) to compare activation in DG/CA3 and other MTL subregions between groups (e.g., Healthy Controls vs. MCI) or to correlate activation strength with behavioral performance (lure discrimination accuracy) or clinical measures.

Protocol: Assessment of Recollection and Familiarity using ROC

This protocol describes the use of Receiver Operating Characteristic (ROC) analysis to derive quantitative, behavior-based estimates of recollection and familiarity, which can be used in conjunction with or independently of neuroimaging [21].

2.2.1 Objectives and Rationale

To behaviorally dissociate and quantify the independent contributions of recollection and familiarity processes to recognition memory performance, providing a sensitive cognitive endpoint for clinical trials.

2.2.2 Materials and Reagents

- Standard Computer: For stimulus presentation and data collection.

- Psychophysics Software: E-Prime, PsychoPy, or equivalent.

- Stimulus Set: Several hundred words, nameable objects, or faces.

2.2.3 Experimental Procedure

- Encoding Phase: Participants study a list of items (e.g., 150 words). To enhance subsequent recollection, use a "deep" encoding task (e.g., rate the pleasantness of each word).

- Retrieval Phase: After a delay (e.g., 10-45 minutes), present participants with a mixed list of 150 old (studied) and 150 new words. For each test item, participants indicate their confidence that the item is old using a 6-point scale (1="Sure New" to 6="Sure Old").

- Data Collection: Record the confidence rating for every test item.

2.2.4 Data Analysis

- Construct the ROC: Calculate the cumulative proportion of "old" responses for each confidence level for both old (Hits) and new (False Alarms) items. Plot Hit rate against False Alarm rate.

- Model Fitting: Fit the data with the Dual-Process Signal Detection (DPSD) model [21]:

- Recollection (R): Estimated as the y-intercept of the ROC in probability space (the hit rate as the false-alarm rate approaches zero).

- Familiarity (d'): Estimated as the degree of curvature of the ROC, representing the strength of the familiarity signal.

- Interpretation: Compare the parameters R and d' across experimental conditions or between participant groups (e.g., Healthy Older Adults vs. Amnestic MCI). A selective reduction in R is indicative of hippocampal dysfunction.
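The ROC construction and model-fitting steps above can be sketched in a few lines of Python. This is a minimal illustration rather than the fitting procedure of [21]: the function names are hypothetical, the model is written in one common parametrization (new items distributed as N(0, 1), old items as N(d', 1), plus a recollection probability R), and a coarse least-squares grid search stands in for the maximum-likelihood fitting that dedicated ROC tools typically use.

```python
from statistics import NormalDist

STD_NORMAL = NormalDist()  # standard normal used for the familiarity component


def roc_points(old_ratings, new_ratings, n_levels=6):
    """Cumulative (false-alarm, hit) pairs, strictest criterion first.

    Ratings run from 1 ("Sure New") to n_levels ("Sure Old").
    The trivial final point (1, 1) is omitted.
    """
    points = []
    for criterion in range(n_levels, 1, -1):
        hit = sum(r >= criterion for r in old_ratings) / len(old_ratings)
        fa = sum(r >= criterion for r in new_ratings) / len(new_ratings)
        points.append((fa, hit))
    return points


def dpsd_hit_rate(fa, recollection, d_prime):
    """Hit rate predicted at a given false-alarm rate (requires 0 < fa < 1)."""
    criterion = -STD_NORMAL.inv_cdf(fa)
    return recollection + (1 - recollection) * STD_NORMAL.cdf(d_prime - criterion)


def fit_dpsd(points, step=0.01, max_d=3.0):
    """Grid search for (R, d') minimizing squared error on the hit rates.

    Assumption: least squares on a fixed grid, chosen for brevity only.
    """
    # Convert each usable false-alarm rate to its response criterion once.
    usable = [(-STD_NORMAL.inv_cdf(fa), hit) for fa, hit in points if 0 < fa < 1]
    best = None
    for i in range(round(1 / step)):
        r = i * step
        for j in range(round(max_d / step) + 1):
            d = j * step
            sse = sum(
                (hit - (r + (1 - r) * STD_NORMAL.cdf(d - c))) ** 2
                for c, hit in usable
            )
            if best is None or sse < best[0]:
                best = (sse, r, d)
    return best[1], best[2]
```

In this parametrization the predicted hit rate tends to R as the false-alarm rate approaches zero, which is why recollection appears as the y-intercept of the ROC, while d' controls how strongly the curve bows away from the diagonal.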

Protocol: High-Frequency Episodic Memory Testing

This protocol summarizes a novel, brief episodic memory test designed for high-frequency, remote assessment, which is critical for capturing longitudinal change and measuring intervention effects in clinical studies [11].

2.3.1 Objectives and Rationale

To frequently assess episodic memory with minimal practice effects, enabling dense data collection for tracking cognitive trajectories or response to therapy in neurodegenerative disease research.

2.3.2 Materials and Reagents

- Computer or Tablet: For test administration.

- CANTAB or Equivalent Cognitive Testing Platform: The protocol is based on a novel test optimized for the CANTAB platform [11].

- Standardized Stimulus Sets: Abstract shapes or animal emojis.

2.3.3 Experimental Procedure

- Task Design: The test involves learning two sets of four items (e.g., one set of animal emojis, one set of abstract shapes).

- Immediate Recall: Participants are shown the items and tested immediately.

- Delayed Recall: After a standardized delay (e.g., 2-hour or 6-hour delay), recall for the two sets is tested again.

- High-Frequency Schedule: The test can be administered multiple times per day (e.g., once in the morning, once in the afternoon) over days or weeks.

2.3.4 Data Analysis

- Primary Metrics: The key outcome measures are delayed recall scores, which show the strongest age-related effects and are most sensitive to episodic memory decline [11].

- Longitudinal Analysis: Use linear mixed-effects models to analyze the trajectory of delayed recall scores over time, assessing the impact of interventions or disease progression.

Figure 2: Integrated Experimental Workflow. A proposed workflow for a comprehensive study linking neuroanatomy, function, and behavior. Participants undergo baseline assessment and are then evaluated with the three key protocols in parallel. The integrated analysis correlates data across levels (e.g., linking DG/CA3 volume from structural MRI, recollection estimates from ROC analysis, and delayed recall slope from high-frequency testing).

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for MTL Memory Research

| Reagent / Material | Primary Function / Application | Specific Examples / Notes |
|---|---|---|
| High-Field MRI Scanner (3T+) | High-resolution structural and functional imaging of MTL subregions. | Essential for differentiating hippocampal subfields (DG, CA3, CA1) [24]. |
| Diffusion Tensor Imaging (DTI) | Assess white matter integrity and connectivity of MTL networks. | Measured via Fractional Anisotropy (FA); can assess perforant path integrity [24]. |
| Resting-State fMRI | Measure functional connectivity of MTL networks without a task. | Assesses hippocampal-cortical connectivity strength, a potential biomarker [24]. |
| CANTAB Cognitive Battery | Computerized cognitive assessment, including episodic memory tests. | Includes Paired Associates Learning (PAL) and novel high-frequency tests [11]. |
| Standardized Neuropsychological Battery | Comprehensive assessment of multiple cognitive domains. | Critical for phenotyping patients (e.g., memory vs. executive impairment profiles) [23]. |
| E-Prime / PsychoPy | Precisely controlled presentation of behavioral paradigms. | Used for administering ROC, Remember/Know, and continuous recognition tasks [21]. |
| FreeSurfer / FSL | Automated volumetric segmentation and cortical thickness analysis. | Quantifies hippocampal subfield volumes and cortical thickness across the brain [23]. |
| ALZ-NET Registry | Source for real-world data on patients receiving new Alzheimer's treatments. | Tracks long-term safety and cognitive outcomes of drugs like lecanemab [25]. |

Digital Transformation: Methodologies for Remote and Unsupervised Episodic Memory Assessment

The accurate assessment of episodic memory is a critical objective in neurodegenerative disease research, serving as a sensitive marker for conditions like Alzheimer's disease. Traditional paper-based cognitive assessments, while foundational, face limitations in standardization, granular data capture, and ecological validity. The digital transformation of clinical neuroscience enables a shift from merely digitized classic tests (direct translations of paper tasks to digital formats) to truly digital-native, anatomically-informed protocols. These novel paradigms leverage computational frameworks and neuroanatomical insights to create more precise, engaging, and biologically-grounded tools for measuring memory function. This document provides application notes and detailed protocols for implementing such advanced digital assessments, framed within the context of a multi-modal research program on neurodegenerative diseases.

Application Notes: Foundations of Digital Episodic Memory Assessment

The Neuroanatomical Basis of Episodic Memory

Episodic memory relies on a distributed neural network, and digital protocols can be designed to target specific components of this circuitry with greater precision than classical tests. Key neuroanatomical structures include the hippocampus, parahippocampal cortex, and prefrontal regions, which act in concert to encode, consolidate, and retrieve experiences [26]. The advent of large-scale digital brain atlases, such as the Allen Brain Atlas, provides a common coordinate framework and detailed neuroanatomical reference for understanding the organization of these memory-relevant structures [27]. These resources integrate extensive gene expression data, connectivity maps, and neuroanatomical information, offering an unprecedented view of the brain's architecture.

Modern analytical approaches can identify individuals based on their unique neuroanatomical fingerprints with high accuracy, even over extended periods. For instance, one study achieved perfect participant identification using a set of 14 neuroanatomical features derived from structural MRI, demonstrating the high individuality of brain structure [28]. This personalization potential is crucial for tracking individual trajectories of cognitive decline.

The Critical Role of Test Specifications in Measurement

When translating classic tests, researchers must carefully consider how test specifications interact with participant characteristics. A large longitudinal study on word recall tests revealed that education level strongly influences test performance through its interaction with test format and word-list complexity [29]. Key findings include:

- All participants performed better in test forms with multiple practice trials.

- Increased word-list complexity negatively affected all education groups, but lower-educated individuals were more vulnerable.

- Higher-educated respondents gained more improvement from extra practice trials when complexity was constant.

These findings underscore that simply digitizing a classic word-list task without considering these dynamics can introduce measurement bias, potentially confounding the assessment of true cognitive decline in heterogeneous patient populations. The study successfully applied equating techniques to adjust for these effects, thereby enhancing the validity of longitudinal measurement [29].

A Framework for Protocol Complexity in Digital Trials

The transition to digital protocols necessitates a structured approach to manage complexity. The Protocol Complexity Tool (PCT) offers a validated framework to assess complexity across five domains [30]:

- Study Design: Endpoints, learnings from previous studies, and design complexity.

- Patient Burden: Visit frequency, procedures, and travel requirements.

- Site Burden: Number of sites, data management, and monitoring load.

- Regulatory Oversight: Number of countries and regulatory bodies involved.

- Operational Execution: Drug supply chain and data collection complexity.

The PCT uses a 3-point scale (low=0, mid=0.5, high=1) for 26 questions across these domains, generating a Total Complexity Score (TCS) between 0 and 5 [30]. This tool can drive simplification in digital protocol design, creating studies that are simpler to execute without compromising scientific quality.
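The scoring arithmetic can be illustrated with a short Python sketch. It assumes that each domain score is the mean of that domain's question scores, which is one reading consistent with a total ranging from 0 to 5 across five domains; the function name, the domain keys, and the example answers are hypothetical and are not taken from [30].

```python
LEVEL_SCORES = {"low": 0.0, "mid": 0.5, "high": 1.0}  # 3-point scale from the text


def total_complexity_score(domain_answers):
    """Return (total score, per-domain scores) for a dict of domain -> answers.

    Assumption: a domain score is the mean of its question scores, so each
    domain contributes between 0 and 1 and five domains sum to at most 5.
    """
    domain_scores = {
        domain: sum(LEVEL_SCORES[a] for a in answers) / len(answers)
        for domain, answers in domain_answers.items()
    }
    return sum(domain_scores.values()), domain_scores


# Hypothetical ratings for a remote memory-assessment protocol.
example = {
    "study_design": ["low", "high"],
    "patient_burden": ["mid", "mid"],
    "site_burden": ["low"],
    "regulatory_oversight": ["high"],
    "operational_execution": ["mid", "high", "low", "mid"],
}
total, per_domain = total_complexity_score(example)
```

Keeping the per-domain scores alongside the total makes it easier to see which domain drives complexity, for example whether a digital protocol has lowered site burden at the cost of operational execution.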

Table 1: Domains of the Protocol Complexity Tool (PCT). Adapted from [30].

| Domain | Description | Example Complexity Factors |
|---|---|---|
| Study Design | Complexity inherent in the scientific protocol. | Multiple primary endpoints, unvalidated design, adaptive trial features. |
| Patient Burden | Demands placed on trial participants. | Frequent site visits, numerous procedures per visit, complex patient-reported outcomes. |
| Site Burden | Demands placed on investigative sites. | Complex data entry, extensive source data verification, stringent recruitment targets. |
| Regulatory Oversight | Complexity of regulatory and compliance landscape. | Submission to numerous countries with differing requirements, complex safety reporting. |
| Operational Execution | Logistical challenges of trial implementation. | Complex drug supply chain, multi-modal data collection, numerous vendors. |

Experimental Protocols

Protocol: Anatomically-Informed Virtual Navigation Task (A-VNT)

The A-VNT is a digital-native paradigm designed to specifically target and challenge the hippocampal-entorhinal circuit, which is critically affected in early Alzheimer's disease.

1. Primary Objective

To assess hippocampal-dependent spatial memory and navigation in a high-fidelity virtual environment, providing a sensitive measure of early neurodegenerative change.

2. Experimental Workflow

3. Materials and Reagents

Table 2: Research Reagent Solutions for the A-VNT Protocol.

| Item Name | Function/Description | Specifications |
|---|---|---|
| Virtual Environment Software | Renders the 3D navigable arena and records behavioral data. | Custom-built or modified game engine (e.g., Unity); records x,y,z coordinates, head direction, and interaction logs. |
| High-Performance Computer | Runs the virtual environment smoothly to prevent motion sickness. | Dedicated graphics card (e.g., NVIDIA GeForce RTX series), ≥16GB RAM. |
| Large Monitor or VR Headset | Displays the virtual environment to the participant. | Provides immersive visual field; VR headset preferred for depth perception. |
| Response Input Device | Allows participant to navigate and interact. | Game controller or keyboard. |
| Data Pre-processing Scripts | Converts raw logs into analyzable features. | Custom Python/R scripts for path smoothing, feature calculation (e.g., path length, dwell time). |

4. Procedure

- Step 1: Participant Setup. Seat the participant comfortably and provide standardized instructions. For VR setups, ensure the headset is properly fitted.

- Step 2: Encoding Phase (10 minutes). Instruct the participant to freely explore the virtual arena to learn the locations of several distinct, non-cueable objects. The arena should contain distal visual cues on the walls.

- Step 3: Distractor Task (5 minutes). Engage the participant in a non-spatial task (e.g., verbal fluency) to prevent active rehearsal.

- Step 4: Recall Phase (5 minutes).

- Wayfinding: The participant starts at a random novel location and must navigate directly to a specified object as quickly as possible.

- Object Location: The participant is presented with objects and must place them in their correct original locations on a map of the empty arena.

- Step 5: Data Collection. The software automatically records: Path Efficiency (ratio of actual path length to shortest possible path), Heading Error (degrees off the optimal direction), Object Location Error (Euclidean distance from correct position), and Dwell Time in target zones.
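The metrics listed in Step 5 could be derived from logged coordinates roughly as follows. This is a sketch under stated assumptions: positions are 2D (x, y) tuples, the arena is open so the shortest possible path is a straight line from start to target, and heading is taken from the first logged movement. All function names are hypothetical and do not correspond to any particular A-VNT software.

```python
import math


def path_length(points):
    """Total distance travelled along a sequence of (x, y) positions."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def path_efficiency(points, target):
    """Actual path length divided by the straight-line start-to-target distance.

    Follows the definition in Step 5, so 1.0 is a perfectly direct route and
    larger values indicate detours.
    """
    return path_length(points) / math.dist(points[0], target)


def heading_error(start, next_point, target):
    """Absolute angle in degrees between the initial heading and the optimal one."""
    actual = math.atan2(next_point[1] - start[1], next_point[0] - start[0])
    optimal = math.atan2(target[1] - start[1], target[0] - start[0])
    diff = math.degrees(actual - optimal)
    return abs((diff + 180) % 360 - 180)  # wrap into [0, 180]


def object_location_error(placed, correct):
    """Euclidean distance between the placed and the correct object position."""
    return math.dist(placed, correct)
```

With a route of (0, 0) → (3, 0) → (3, 4) toward a target at (3, 4), the travelled length is 7 and the direct distance is 5, giving a ratio of 1.4. In an arena with obstacles, the straight-line denominator would need to be replaced by a shortest navigable path.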

5. Data Analysis

The extracted features are analyzed using machine learning classifiers (e.g., linear discriminant analysis or random forest) to distinguish between diagnostic groups (e.g., healthy control vs. mild cognitive impairment) [28]. A participant's performance is also compared to a normative model built from healthy control data, generating an individual deviation score as a potential biomarker.
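In its simplest form, the individual deviation score can be expressed as a z-score against the healthy control distribution. The sketch below assumes exactly that; a fuller normative model would usually also adjust for covariates such as age, sex, and education, which are omitted here. The function name and the example values are hypothetical.

```python
from statistics import mean, stdev


def deviation_score(value, control_values):
    """Standardized distance of one participant's feature from the control mean.

    Assumption: an unadjusted z-score using the sample standard deviation of
    the healthy control group.
    """
    return (value - mean(control_values)) / stdev(control_values)


# Hypothetical path-efficiency ratios for three healthy controls.
controls = [1.0, 1.2, 1.4]
z = deviation_score(1.6, controls)
```

For a metric where larger values mean worse performance, such as the path-efficiency ratio defined above, a positive score indicates performance below the control norm.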

Protocol: Adaptive Word List Recall Test (A-WLRT)

This protocol digitizes and enhances the classic auditory verbal learning test using an adaptive algorithm to control for the confounding effects of education and word-list complexity [29].

1. Primary Objective

To provide an equated measure of verbal episodic memory that is robust to differences in educational background and specific test form characteristics.

2. Experimental Workflow

3. Materials and Reagents

Table 3: Research Reagent Solutions for the A-WLRT Protocol.

| Item Name | Function/Description | Specifications |
|---|---|---|
| Stimulus Presentation Software | Presents word lists and records responses. | E-Prime, PsychoPy, or web-based JS library; allows millisecond precision timing. |
| Calibrated Word Pool Database | A large set of words with pre-rated properties. | Words rated for familiarity, concreteness, imageability; organized into lists of equivalent and varying complexity. |
| Audio Recording Equipment | Records verbal responses for later scoring. | High-quality microphone and digital recorder; optional speech-to-text software. |
| Scoring Interface / Software | Allows trained rater to score audio recordings. | Custom interface that presents audio files and allows marking of correct/incorrect recalls. |

4. Procedure

- Step 1: Baseline Assessment. Collect demographic data, with particular attention to years of education.

- Step 2: List Presentation. A list of 10 words is presented auditorily (or visually on a screen) at a rate of one word every 2 seconds. The initial list complexity (e.g., word frequency, semantic relatedness) is tiered based on the participant's education level.

- Step 3: Immediate Recall. The participant is given 60 seconds to verbally recall as many words as possible in any order. Responses are audio-recorded.

- Step 4: Adaptive List Selection. Based on the immediate recall score, an algorithm selects the word list for the second trial. If recall is high, a more complex list is chosen; if low, a less complex list is used. This targets a similar performance level across participants to reduce floor/ceiling effects.

- Step 5: Delayed Recall and Recognition. After a 20-minute delay filled with non-verbal tasks, the participant is again asked to freely recall the words. This is followed by a recognition test where the original words are mixed with foils.
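The adaptive rule in Step 4 can be sketched as a simple step-up/step-down procedure. The thresholds (70% and 40% recall), the five complexity levels, and the function name are illustrative assumptions; the protocol itself does not specify them.

```python
def next_list_level(current_level, n_recalled, list_length=10,
                    low=0.4, high=0.7, min_level=1, max_level=5):
    """Choose the complexity level of the next word list.

    Hypothetical rule: move one level up after high recall, one level down
    after low recall, otherwise stay, always within [min_level, max_level].
    """
    proportion = n_recalled / list_length
    if proportion >= high:
        step = 1       # recall was high: choose a more complex list
    elif proportion <= low:
        step = -1      # recall was low: choose a less complex list
    else:
        step = 0       # recall was in the target range: keep the level
    return max(min_level, min(max_level, current_level + step))
```

For example, a participant who recalls 8 of 10 words at level 3 would move to level 4, while one who recalls 3 of 10 would move to level 2. Because participants then see lists of different difficulty, raw scores are not directly comparable, which is why the equating step in the data analysis is needed.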

5. Data Analysis

Raw scores (immediate recall total, delayed recall, recognition discriminability index) are calculated. Crucially, equated scores are then computed using frequency-estimation or equipercentile equating techniques to adjust for the differential difficulty of the administered word lists, as described in [29]. This creates a fair metric for longitudinal comparison, even if test forms change over time.
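The core idea of equipercentile equating is to map a score on one form to the score on another form that has the same percentile rank. The sketch below is a simplified, unsmoothed version that assumes a reference sample is available for each form; it is not the specific procedure of [29], and the function names and sample values are hypothetical.

```python
def percentile_rank(score, sample):
    """Midpoint percentile rank of a score within a reference sample (0 to 1)."""
    below = sum(s < score for s in sample)
    equal = sum(s == score for s in sample)
    return (below + 0.5 * equal) / len(sample)


def equate(score, form_x_sample, form_y_sample):
    """Map a Form X score to the Form Y scale by matching percentile ranks.

    Linear interpolation between observed Form Y scores; values outside the
    observed range are clamped to the lowest or highest Form Y score.
    """
    target = percentile_rank(score, form_x_sample)
    y_values = sorted(set(form_y_sample))
    y_ranks = [percentile_rank(y, form_y_sample) for y in y_values]
    if target <= y_ranks[0]:
        return float(y_values[0])
    if target >= y_ranks[-1]:
        return float(y_values[-1])
    for i in range(1, len(y_values)):
        if target <= y_ranks[i]:
            frac = (target - y_ranks[i - 1]) / (y_ranks[i] - y_ranks[i - 1])
            return y_values[i - 1] + frac * (y_values[i] - y_values[i - 1])


# Hypothetical reference samples: Form Y is about two words easier than Form X.
form_x = [2, 3, 4, 5, 6]
form_y = [4, 5, 6, 7, 8]
equated = equate(4, form_x, form_y)
```

In this toy example a Form X score of 4 sits at the median of its reference sample and is mapped to the Form Y median. Real applications use much larger samples and typically smooth the score distributions before equating.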

The Scientist's Toolkit

Table 4: Essential Digital Resources for Anatomically-Informed Protocol Design.

| Tool / Resource | Function | Relevance to Episodic Memory Research |
|---|---|---|
| Allen Brain Atlas [27] | Integrated public resource providing gene expression, connectivity, and neuroanatomical data. | Informs target region selection for task design (e.g., hippocampal subfields); provides a standard 3D reference space for aligning functional findings. |
| INCF Digital Atlasing Infrastructure [27] | Enables integration of data from genetic, anatomical, and functional studies into a common coordinate system (Waxholm Space). | Facilitates multi-site data harmonization and comparison of findings across different digital task platforms. |
| FreeSurfer Software Suite [28] | Automated MRI processing tool for computing brain morphometry metrics (cortical thickness, subcortical volumes). | Generates participant-specific neuroanatomical features (e.g., hippocampal volume) for correlation with digital task performance. |
| Unified Study Definitions Model (USDM) [31] | A reference architecture for digitizing clinical trial protocols in a standardized, machine-readable format. | Ensures that digital episodic memory protocols are implemented consistently across different clinical trial systems, enhancing reproducibility. |
| Protocol Complexity Tool (PCT) [30] | Objectively measures the complexity of a study protocol across five domains to drive simplification. | Helps optimize digital protocol design to reduce patient and site burden, potentially improving recruitment and retention in long-term neurodegenerative studies. |

Application Notes on Episodic Memory Assessment in Neurodegenerative Disease Research

Episodic memory, the ability to recall specific personal experiences, is one of the earliest cognitive domains affected in Alzheimer's disease and related neurodegenerative conditions. Digital paradigms for assessing episodic memory components—mnemonic discrimination, associative recall, and long-term recognition—provide sensitive, quantitative, and scalable tools for detecting subtle memory deficits in clinical research and therapeutic development. These behavioral measures correspond to specific hippocampal computational processes, offering crucial insights into early disease pathology that often originates in medial temporal lobe structures [32] [33].

Mnemonic Discrimination Tests

Mnemonic discrimination, the behavioral ability to distinguish between similar memories, stems from the neural process of pattern separation primarily occurring in the hippocampal dentate gyrus and CA3 subregion [33] [34]. This function is particularly vulnerable in early Alzheimer's disease pathology, which first affects entorhinal cortex and hippocampal areas [33].

The Mnemonic Similarity Task (MST) has emerged as a benchmark assessment, with specific utility in discriminating between healthy aging, subjective cognitive complaints (SCC), and mild cognitive impairment (MCI) [33]. In clinical studies, the MST effectively discriminates patients with SCC from those with MCI with moderate accuracy (AUC = 0.77-0.78), performing equivalently to standard paper-and-pencil screening tests like the MMSE and Frontal Assessment Battery [33].

Table 1: Mnemonic Similarity Task Performance Across Clinical Populations

| Patient Group | Lure Discrimination Index (LDI) | Corrected Recognition Score | Diagnostic Accuracy (AUC) |
|---|---|---|---|
| Subjective Cognitive Complaint (SCC) | 0.37 (median) | 0.80 (median) | Reference group |
| Non-amnestic MCI (naMCI) | 0.24 (median) | 0.70 (median) | 0.78 vs. SCC |
| Amnestic MCI (aMCI) | 0.21 (median) | 0.58 (median) | 0.77 vs. SCC |
| Mild Dementia | 0.16 (median) | 0.46 (median) | Not reported |

Table 2: Correlation Between Mnemonic Discrimination and Cognitive Domains

| Cognitive Domain | Correlation with Lure Discrimination Index | Statistical Significance |
|---|---|---|
| Global Cognitive Function (MMSE) | Spearman's r = 0.39 | p < 0.0035 |
| Executive Function (FAB) | Spearman's r = 0.41 | p < 0.0035 |
| Visual Memory (ROCF recall) | Spearman's r = 0.44 | p < 0.0035 |
| Verbal Memory (FCSRT) | Spearman's r = 0.36 | p < 0.0035 |

Recent research has extended mnemonic discrimination assessment to more complex paradigms such as the Object-in-Context (MDOC) task, which evaluates pattern separation for composite stimuli containing both object and contextual features [35]. Studies indicate that object overgeneralization is specifically associated with mental health symptoms, suggesting that domain-specific pattern separation deficits may have different clinical implications [35].

Associative Recall Tests

Associative recall measures the ability to bind and retrieve multiple elements of an experience (e.g., object-place associations), a core function of the hippocampal circuit. This paradigm is particularly sensitive to early Alzheimer's pathology as it depends on intact hippocampal connectivity.

High-Frequency Episodic Memory Tests represent an advancement in digital assessment, optimized for repeated administration in clinical trial settings. One novel paradigm involves recall of two sets of four items (animal emojis and abstract shapes) after a two-hour delay, with demonstrated strong age-related effects and minimal task-learning effects despite high-frequency administration [11]. This enables richer longitudinal data capture for tracking disease progression or treatment response.

Table 3: High-Frequency Associative Recall Task Characteristics

| Parameter | Specification | Research Application |
|---|---|---|
| Test Frequency | Up to twice daily (6-hour interval) | Clinical trial monitoring |
| Session Completion Rate | 75% across 14 sessions | Feasibility for longitudinal studies |
| Learning Effects | No evidence of practice effects | Suitable for repeated measures |
| Age Sensitivity | Strongest in delayed metrics | Cross-sectional age comparisons |

Long-Term Recognition Tests

Long-term recognition tests evaluate the retention of previously encoded information over extended delays (hours to days), assessing both hippocampal and cortical memory systems. These tests are particularly valuable for detecting the accelerated forgetting characteristic of early neurodegenerative processes.

Digital implementations enable precise measurement of both recognition accuracy and memory quality through continuous recall paradigms that separate memory precision from overall recognition likelihood [32]. Research demonstrates that behavioral estimates of pattern separation are significantly correlated with both short-term memory (STM) and long-term memory (LTM) precision, irrespective of recall success likelihood [32].

Detailed Experimental Protocols

Protocol 1: Standardized Mnemonic Similarity Task (MST)

Purpose: To assess pattern separation ability by measuring lure discrimination performance [33].

Materials: Computerized MST (freely available at: http://faculty.sites.uci.edu/starklab/mnemonicsimilarity-task-mst/), standard computer with monitor, quiet testing environment.

Procedure:

- Incidental Encoding Phase (∼5 minutes):

- Present 128 pictures of everyday objects sequentially

- Each display: 2000 ms presentation with 500 ms interstimulus interval

- Participant task: Make indoor/outdoor judgment for each object via button press

- No memory instruction provided to ensure incidental encoding

- Immediate Test Phase (∼8 minutes):

- Present 192 pictures in same timing parameters

- Stimulus types: 64 Targets (exact repetitions), 64 Lures (similar objects), 64 Foils (completely new objects)

- Participant task: Identify each object as "Old," "Similar," or "New" via button press

Data Analysis:

- Calculate Lure Discrimination Index (LDI): [proportion of "Similar" responses to Lures] - [proportion of "Similar" responses to Foils]

- Calculate Corrected Recognition: [proportion of "Old" responses to Targets] - [proportion of "Old" responses to Foils]

- Total test time: ∼13 minutes [33]
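The two summary scores can be computed directly from trial-level responses. The sketch below assumes each test trial is stored as a (stimulus type, response) pair using lowercase labels; this data layout and the function name are illustrative and do not reflect the output format of the MST software.

```python
def mst_scores(trials):
    """Return (LDI, corrected recognition) from a list of (stimulus, response) pairs.

    stimulus is "target", "lure", or "foil"; response is "old", "similar", or "new".
    """
    def rate(stimulus, response):
        # Proportion of trials of this stimulus type that received this response.
        responses = [r for s, r in trials if s == stimulus]
        return sum(r == response for r in responses) / len(responses)

    # Subtracting the foil rate corrects for a general bias to respond
    # "similar" (for LDI) or "old" (for corrected recognition).
    ldi = rate("lure", "similar") - rate("foil", "similar")
    corrected_recognition = rate("target", "old") - rate("foil", "old")
    return ldi, corrected_recognition


# Hypothetical participant with four trials per stimulus type.
example_trials = (
    [("target", "old")] * 3 + [("target", "similar")]
    + [("lure", "similar")] * 2 + [("lure", "old")] * 2
    + [("foil", "new")] * 3 + [("foil", "similar")]
)
ldi, corrected = mst_scores(example_trials)
```

Here half of the lures are called "similar" and one foil in four also is, so the bias-corrected LDI is 0.25, while corrected recognition is 0.75 because no foil was called "old".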

Protocol 2: Object-in-Context Mnemonic Discrimination (MDOC)

Purpose: To assess pattern separation for complex object-context associations and their relationship to mental health symptoms [35].

Materials: Computerized task with object-context pairs, standardized response interface.

Procedure:

- Encoding Phase:

- Present composite images of objects superimposed on background scenes

- Participant task: Indoor/outdoor judgment for the object only (incidental encoding of context)

- Multiple object-context pairings presented

- Test Phase:

- Present objects in one of four conditions: Target Object+Target Context, Target Object+Lure Context, Lure Object+Target Context, Lure Object+Lure Context

- Participant task: "Old," "Similar," or "New" judgments

- Explicit instruction to focus on objects only (context is irrelevant background)

Data Analysis:

- Calculate lure rejection rates separately for object and context domains

- Quantify overgeneralization rates (false "old" responses to lures)

- Analyze cross-domain effects (how object status affects context judgments and vice versa)

Protocol 3: High-Frequency Associative Recall Test

Purpose: To monitor episodic memory changes with frequent assessment intervals suitable for clinical trials [11].

Materials: Digital testing platform (e.g., CANTAB), two distinct stimulus sets (animal emojis, abstract shapes).

Procedure:

- Tutorial Session:

- Familiarize participant with test interface and requirements

- Ensure understanding of delayed recall instructions

- Immediate Encoding and Recall:

- Present four items from a category (animal emojis OR abstract shapes)

- Immediate recall test to establish baseline performance

- Delayed Recall (2-hour or 6-hour delay):

- Assess retention after specified delay period

- Counterbalance testing order across participants

- High-Frequency Administration:

- Administer once daily or twice daily (minimum 6-hour intervals)

- Continue for extended period (e.g., 14 sessions) to track longitudinal changes
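
The administration rules above (once or twice daily, at least 6 hours between sessions, two stimulus sets, counterbalanced order) can be turned into a per-participant session schedule. The following Python sketch is one possible interpretation, not the platform's own scheduler: `build_schedule`, its parameters, and the choice to counterbalance the starting stimulus set by participant index are all assumptions made for illustration.

```python
from datetime import datetime, timedelta

STIMULUS_SETS = ("animal_emojis", "abstract_shapes")

def build_schedule(participant_index, first_session, n_sessions=14,
                   sessions_per_day=2, gap_hours=6):
    """Return a list of (start_time, stimulus_set) tuples for one participant."""
    if sessions_per_day not in (1, 2):
        raise ValueError("protocol allows once-daily or twice-daily testing")
    if not 6 <= gap_hours <= 18:
        # Below 6 h violates the minimum interval; above 18 h the second
        # session would fall less than 6 h before the next day's first one.
        raise ValueError("gap_hours must be between 6 and 18")

    # Assumed counterbalancing: odd-indexed participants start with the
    # second stimulus set, even-indexed participants with the first.
    offset = participant_index % 2
    gap = timedelta(hours=gap_hours)
    schedule = []
    for i in range(n_sessions):
        day, slot = divmod(i, sessions_per_day)
        start = first_session + timedelta(days=day) + slot * gap
        # Rotating by day as well as slot keeps each stimulus set from
        # being tied to one time of day.
        stimulus_set = STIMULUS_SETS[(offset + day + slot) % 2]
        schedule.append((start, stimulus_set))
    return schedule


schedule = build_schedule(participant_index=0,
                          first_session=datetime(2025, 1, 6, 9, 0))
for start, stimulus_set in schedule[:4]:
    print(start.isoformat(), stimulus_set)
```

With the defaults, 14 sessions are spread over seven days at 09:00 and 15:00; the delayed-recall prompts (2 or 6 hours after encoding) would be scheduled relative to each start time.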

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for Digital Episodic Memory Assessment

| Research Reagent | Function/Application | Specifications |
|---|---|---|
| Mnemonic Similarity Task (MST) Software | Assessing lure discrimination ability | Free download (Mac OS X, Windows); ∼13 minute administration; automated scoring [33] |
| CANTAB Cognitive Battery | Comprehensive cognitive assessment including PAL (paired associates learning) | Validated digital platform; standardized normative data; multiple parallel forms [11] |
| Object-in-Context Stimulus Sets | Evaluating complex pattern separation for object-context associations | Customizable object-background pairs; controls for visual similarity [34] [35] |
| High-Frequency Assessment Platform | Frequent episodic memory monitoring for clinical trials | Minimal practice effects; engaging interface; cloud-based data collection [11] |
| ACT-R Cognitive Architecture | Computational modeling of memory processes | Simulates pattern separation/completion; theoretical framework for task design |

Integration in Clinical Trials and Neurodegenerative Research

These digital paradigms are increasingly incorporated into clinical trials for Alzheimer's disease and related dementias. The Mnemonic Similarity Task has been proposed as part of cognitive composite scores in major clinical trials of anti-amyloid therapies, including the A4 study (Anti-Amyloid Treatment in Asymptomatic Alzheimer's) [33]. The sensitivity of these measures to early hippocampal dysfunction makes them particularly valuable for:

- Subject Selection: Identifying individuals with subtle episodic memory deficits indicative of prodromal AD

- Treatment Monitoring: Detecting subtle cognitive changes in response to disease-modifying therapies

- Differential Diagnosis: Discriminating between normal aging, subjective cognitive decline, and early neurodegenerative processes

- Prevention Trials: Monitoring high-risk populations in primary prevention studies

Recent real-world evidence collection initiatives, such as the Alzheimer's Network for Treatment and Diagnostics (ALZ-NET), are incorporating these digital paradigms to track long-term outcomes in patients receiving novel therapies [25]. The combination of digital cognitive assessment with biomarker data provides powerful insights into the relationship between pathological changes and functional memory deficits throughout the Alzheimer's disease continuum.

The assessment of episodic memory, a core cognitive domain defined by the ability to acquire and recollect personally experienced events within their spatial and temporal context, is a cornerstone of neurodegenerative disease research [36]. Its decline is the clinical hallmark of typical Alzheimer's disease (AD), often preceding other cognitive deficits [36]. Traditional, in-clinic neuropsychological assessments, while comprehensive, face significant limitations including high costs, limited accessibility, and an inability to capture high-frequency, real-world data [37] [38]. These challenges have catalyzed the development and validation of remote administration modalities, which leverage ubiquitous technologies like smartphones, tablets, and telephones to enable decentralized, scalable, and ecologically valid cognitive assessment [37] [38]. This document outlines application notes and detailed protocols for implementing these remote modalities within clinical and research settings focused on neurodegenerative diseases.

Smartphone-Based Applications

Smartphone-based applications represent a transformative modality for remote cognitive assessment, capable of capturing both interactive task performance and passive behavioral data [38].

Application Notes

Smartphone apps facilitate fully remote and unsupervised assessments, allowing participants to complete tests in their own homes using personal devices. This approach provides a realistic view of everyday cognitive function, free from the anxiety of a clinical environment [39] [37]. A key advantage is the ability to conduct high-frequency testing, which can account for day-to-day performance variability and detect subtle, early declines that might be missed by single, in-clinic assessments [37]. These platforms can deliver non-verbal, anatomically informed tasks that tap specific cognitive processes like pattern separation and completion, which are relevant to early Alzheimer's pathology [37]. Large-scale validation studies, such as the "Intuition" study (NCT05058950) with 23,004 US adults, have demonstrated the feasibility, reliability, and validity of using iPhones and a custom research application for robustly capturing cognitive data and classifying Mild Cognitive Impairment (MCI) in demographically diverse populations [38].

Protocol: Unsupervised Remote Digital Memory Assessment

Objective: To remotely assess episodic memory components (mnemonic discrimination, cued recall, and recognition) in an unsupervised setting using a smartphone application.

Materials:

- Software: A validated smartphone application such as the neotiv digital platform [37].

- Device: Participant's personal smartphone (iOS or Android).

- Environment: Participants should choose a quiet, well-lit space with minimal distractions and a stable internet connection.

Procedure:

- Participant Onboarding: Participants download the research application and provide informed consent electronically. They complete a demographic and health history profile within the app.

- Instruction Phase: Clear, on-screen instructions guide participants through each task. Practice trials may be included to ensure understanding.

- Task Execution (Encoding & Retrieval): Participants complete a battery of memory tests in a single session. A sample battery is described below:

- Mnemonic Discrimination Test for Objects and Scenes (MDT-OS):

- Encoding: Participants view a series of object and scene images and make simple perceptual judgments (e.g., "Is this an indoor or outdoor scene?").

- Retrieval (Short-term): After a brief delay (minutes), participants are shown the original images mixed with highly similar "lure" images and entirely new "foil" images. They must identify each as "Old," "Similar," or "New."

- Objects-in-Room Recall Test (ORR):

- Encoding: Participants learn arbitrary object-scene associations.

- Retrieval: They are tested on immediate cued recall (within the session) and delayed cued recall after a longer interval (e.g., ~67 minutes ± 36 minutes) [37].

- Complex Scene Recognition Test (CSR):

- Encoding: Participants view a series of complex photographic scenes.

- Retrieval (Long-term): After an extended delay (e.g., ~92 minutes ± 23 minutes) [37], participants are shown the original scenes mixed with novel ones and must identify them as "Old" or "New."

- Data Collection: The application automatically records accuracy, reaction times, corrected hit rates, and self-reported measures of concentration and distraction.

Data Analysis:

- Primary Outcome: A Remote Digital Memory Composite (RDMC) score can be calculated by z-scoring the key outcomes from each test (TotalRecall from ORR, TotalCorrectedHitRate from MDT-OS, and corrected hit rate from CSR) and averaging them. This composite has shown good retest reliability (ICC = 0.8) and high diagnostic accuracy for discriminating cognitive impairment (AUC = 0.83) [37].
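
The composite described above (z-score each key outcome, then average) can be sketched in a few lines of Python. The source does not state which sample the z-scores are computed against, so this example assumes a reference sample supplied by the analyst and uses the sample standard deviation; the dictionary keys and the function name `rdmc` are illustrative, not the platform's export format.

```python
from statistics import mean, stdev

# One key outcome per test, following the names used in the protocol text.
KEY_OUTCOMES = (
    "ORR_TotalRecall",
    "MDTOS_TotalCorrectedHitRate",
    "CSR_CorrectedHitRate",
)

def rdmc(participant, reference):
    """Average of z-scored key outcomes for one participant.

    participant: {outcome_name: value}
    reference:   {outcome_name: list of values from a reference sample}
                 (which sample to use is an assumption left to the analyst)
    """
    z_scores = []
    for outcome in KEY_OUTCOMES:
        ref_values = reference[outcome]
        z = (participant[outcome] - mean(ref_values)) / stdev(ref_values)
        z_scores.append(z)
    return mean(z_scores)


# Toy reference sample and participant, for illustration only.
reference = {
    "ORR_TotalRecall": [10, 12, 14],
    "MDTOS_TotalCorrectedHitRate": [0.25, 0.5, 0.75],
    "CSR_CorrectedHitRate": [0.5, 0.75, 1.0],
}
participant = {
    "ORR_TotalRecall": 14,                # one SD above the reference mean
    "MDTOS_TotalCorrectedHitRate": 0.5,   # at the reference mean
    "CSR_CorrectedHitRate": 0.5,          # one SD below the reference mean
}
composite = rdmc(participant, reference)
```

Z-scoring before averaging puts the three outcomes on a common scale, so a count-based recall score and two hit-rate scores contribute equally to the composite.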

Table 1: Key Validated Smartphone Apps for Episodic Memory Assessment

| Platform / App Name | Primary Cognitive Focus | Key Features | Validation & Evidence |
|---|---|---|---|
| neotiv digital platform [37] | Episodic Memory (Pattern Separation, Recall, Recognition) | Three non-verbal memory tests; Remote Digital Memory Composite (RDMC); Fully unsupervised. | AUC = 0.83 for detecting MCI; Good retest reliability (ICC = 0.8) [37]. |
| Intuition Brain Health App [38] | Multimodal Brain Health (including Cognition) | Integrates with Apple Watch for passive data; Uses CANTAB cognitive battery; Large-scale decentralized trial. | Used in a cohort of 23,004 adults; Validated for MCI classification [38]. |
| TAS Test [40] | Motor-Cognitive Link (Tapping) | Keyboard tapping tests (single-key, alternate-key); Self-administered online. | Predicts episodic memory performance in asymptomatic older adults (adjusted R² = 9.1%) [40]. |

Tablet Platforms

Tablets offer an intermediate platform, blending the portability of smartphones with a larger screen that is well-suited for more complex visual tasks and older adult populations.

Application Notes

Tablets are particularly effective for administering immersive and interactive cognitive assessments. The larger screen facilitates the use of Virtual Reality (VR) and gamified paradigms, which can enhance ecological validity by simulating real-world scenarios [36]. Studies have shown that VR-based tasks, such as navigating a virtual town or shopping in a virtual grocery store, can effectively assess the binding of "what," "where," and "when" information that is central to episodic memory [36]. These tasks are more reliably associated with general cognitive functioning and subjective memory complaints than some standard neuropsychological tools [36]. Furthermore, tablet-based assessments can be integrated with design and analytics platforms (e.g., Figma, Google Analytics) to streamline the research workflow [39].

Protocol: Tablet-Based Virtual Reality Memory Assessment

Objective: To assess episodic memory binding and spatial navigation in an immersive, interactive virtual environment using a tablet.

Materials:

- Hardware: A tablet (e.g., iPad, Android tablet) with adequate processing power and screen size.

- Software: A custom-built or commercially available VR environment (e.g., a virtual town or a Virtual Grocery Store).

Procedure:

- Setup: The application is installed on the tablet. Participants are instructed to use the tablet in a comfortable setting, holding it in a way that allows for intuitive interaction.

- Encoding Phase (Active Navigation):

- Participants are instructed to actively navigate the virtual environment (e.g., by tapping and swiping the screen) for a fixed period (e.g., 10-15 minutes).

- During navigation, they encounter specific target events (e.g., a car passing, a person waving) in unique locations.

- Distractor Task: Participants engage in a non-memory related task (e.g., a simple puzzle) for 5-10 minutes to prevent rehearsal.

- Retrieval Phase:

- Free Recall: Participants verbally report everything they remember from the navigation, which is recorded by the device or a researcher via video call.

- Cued Recall & Recognition: Participants answer specific questions about the events (e.g., "What happened near the fountain?"). They may also be shown images and asked if they were present in the environment.

- Data Collection: The application records navigation paths, interaction logs, and retrieval accuracy. It can also calculate scores for item memory, spatial context, and temporal order.

Data Analysis:

- Scoring: Transcribed verbal recalls are scored for the number of correct items, spatial contexts, and temporal associations recalled. Recognition tasks are scored for accuracy.

- Key Metric: A Feature Binding Score can be computed by assessing the number of items correctly associated with their spatial and temporal context. This has been shown to be selectively impaired in aging [36].
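
The scoring and binding steps above can be illustrated with a short Python sketch. It assumes each recalled event has already been hand-scored for whether the item, its spatial context, and its temporal position were correct; the `RecalledEvent` structure, the function name, and the rule that an event counts as bound only when all three are correct are assumptions for illustration, not the scoring scheme of a specific published task.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class RecalledEvent:
    """Hand-scored recall of one target event (hypothetical structure)."""
    item_correct: bool    # "what": the event itself was recalled
    where_correct: bool   # spatial context recalled correctly
    when_correct: bool    # temporal order recalled correctly

def score_recall(events):
    """Return item, spatial, temporal, and feature-binding counts."""
    item = sum(e.item_correct for e in events)
    # Context is only credited when the item itself was recalled.
    spatial = sum(e.item_correct and e.where_correct for e in events)
    temporal = sum(e.item_correct and e.when_correct for e in events)
    # Assumed binding rule: what, where, and when must all be correct.
    bound = sum(e.item_correct and e.where_correct and e.when_correct
                for e in events)
    return {
        "item_memory": item,
        "spatial_context": spatial,
        "temporal_order": temporal,
        "feature_binding": bound,
    }


example_events = [
    RecalledEvent(True, True, True),     # fully bound
    RecalledEvent(True, True, False),    # what + where only
    RecalledEvent(True, False, False),   # item only
    RecalledEvent(False, False, False),  # not recalled
]
scores = score_recall(example_events)
print(scores)
```

Reporting the binding count alongside the item count makes it possible to separate a binding deficit (items recalled but detached from context) from a general recall deficit.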

Telephone-Based Tests

While less technologically complex, telephone-based assessments remain a valuable tool for reaching populations with limited access to smartphones or internet connectivity.

Application Notes