Beyond Human-Centered Science: Identifying and Mitigating Anthropocentric Bias in Cognitive Research and Drug Development

This article provides a comprehensive framework for researchers and drug development professionals to understand, identify, and mitigate anthropocentric bias—the systematic human-centered perspective that can limit scientific validity and translational success.


Abstract

This article provides a comprehensive framework for researchers and drug development professionals to understand, identify, and mitigate anthropocentric bias—the systematic human-centered perspective that can limit scientific validity and translational success. Drawing on current research, we explore the foundational concepts of this bias, present methodological strategies for its mitigation in preclinical and clinical research, address troubleshooting in complex models, and outline validation techniques. By integrating perspectives from cognitive science, biomedical ethics, and translational research, this guide aims to enhance the robustness, generalizability, and ethical foundation of scientific inquiry, ultimately fostering more reliable and effective therapeutic developments.

Defining the Problem: Unpacking Anthropocentric Bias in Scientific Inquiry

What is Anthropocentric Bias? From Philosophical Concept to Research Pitfall

Definition and Core Concept

What is anthropocentric bias?

Anthropocentric bias is the tendency to interpret the world primarily from a human-centered perspective, often unconsciously prioritizing human values, experiences, and cognitive models while overlooking broader ecological, biological, or systemic factors [1] [2]. The term originates from the Greek words "ánthrōpos" (human) and "kéntron" (center), literally meaning "human-centered" [1].

In scientific research, this bias manifests when researchers:

- Assume human biological processes are universal across species

- Design experiments and interpret results through a human-exceptionalist lens

- Overlook important non-human mechanisms or perspectives

- Develop technologies optimized primarily for human cognitive patterns

Why is this problematic in research? Anthropocentric bias can lead to flawed experimental designs, inaccurate conclusions, and technologies that work well for human models but fail when applied to broader biological systems or artificial intelligence [3] [4]. In drug development, it may cause researchers to overestimate the applicability of animal model results to humans, or vice versa.

Troubleshooting Guides

Guide 1: Identifying Anthropocentric Bias in Experimental Design

Symptoms:

- Consistent performance differences between human data and other biological models

- Unexplained failure of algorithms when applied to non-human systems

- Terminology that implicitly assumes human characteristics in non-human entities

- Difficulty interpreting results that don't align with human-centric expectations

Diagnostic Steps:

| Step | Procedure | Expected Outcome |
|---|---|---|
| 1. Terminology Audit | Review all descriptive terminology for human-centric metaphors (e.g., "virgin" cell, "husbandry") [3] | Identification of potentially biased terminology affecting interpretation |
| 2. Model Reversal Test | Apply the same experimental framework to humans and non-human models simultaneously | Revelation of asymmetrical assumptions in experimental design |
| 3. Auxiliary Factor Analysis | Identify non-essential task demands that may impede performance in non-human systems [4] | Separation of core competence from performance limitations |
| 4. Cross-Species Validation | Test hypotheses across multiple species with different evolutionary trajectories | Confirmation of whether findings reflect universal principles or human-specific traits |

Resolution: Replace human-centric terminology with neutral alternatives. For example:

- Instead of "fallopian tube," use "oviduct" when referring to non-human mammals [3]

- Replace "egg" with specific terms like "oocyte," "female gamete," or "zygote" depending on context [3]

- Use "menstrual cycle" specifically for humans and "estrous cycle" for appropriate non-human species [3]

Guide 2: Mitigating Anthropocentric Bias in AI and Cognitive Research

Problem: AI systems appear to perform poorly on tasks because the evaluation frameworks themselves are human-centered [4].

Symptoms:

- AI models fail on tasks humans find simple, but succeed on computationally complex tasks

- Performance metrics that prioritize human-like strategies over effective solutions

- Dismissal of non-human problem-solving approaches as "incorrect"

Solution Framework:

- Distinguish performance from competence: poor performance on human-designed tests doesn't necessarily indicate a lack of underlying capacity [4]

- Identify auxiliary factors: separate core capabilities from performance limitations caused by:

  - Task demands irrelevant to the capacity being tested

  - Computational limitations (e.g., output length restrictions)

  - Mechanistic interference (competing cognitive circuits) [4]

- Develop species-fair comparisons: create evaluation frameworks that account for different cognitive architectures

Experimental Protocols

Protocol 1: Testing for Terminology-Induced Bias

Purpose: Determine whether anthropocentric terminology affects experimental interpretation and hypothesis generation.

Materials:

- Research datasets (biological, ecological, or AI)

- Alternative terminology frameworks (anthropocentric vs. neutral)

Methodology:

- Select two equivalent researcher groups (Group A and Group B)

- Provide Group A with materials using standard anthropocentric terminology

- Provide Group B with materials using neutral terminology alternatives

- Both groups analyze the same dataset and generate hypotheses

- Compare hypothesis diversity, interpretation variance, and conclusion validity

Neutral Terminology Alternatives:

| Anthropocentric Term | Neutral Alternative | Context |
|---|---|---|
| "Fertilization" | "Gamete fusion" or "Syngamy" | Cellular biology [3] |
| "Dominance" | "Behavioral priority" or "Resource control" | Animal behavior studies |
| "Marriage" | "Pair bonding" or "Partnership formation" | Biological anthropology |
| "Prostitute" | "Sex worker" | Human studies |
| "Harem" | "Multi-female group" or "Polygynous group" | Primatology |

Validation Metrics:

- Inter-group hypothesis diversity index

- Interpretation consensus scores

- Predictive accuracy of generated models
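Protocol 1 names an "inter-group hypothesis diversity index" but does not define it. The sketch below uses one common choice, Shannon entropy over hypothesis categories. It assumes that blinded raters have already coded each generated hypothesis into a category. The function name `shannon_diversity` and the category labels are hypothetical.

```python
import math
from collections import Counter


def shannon_diversity(categories):
    """Shannon entropy (natural log) of a list of coded hypothesis categories.

    Higher values mean hypotheses are spread more evenly over more categories.
    """
    counts = Counter(categories)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    return -sum((n / total) * math.log(n / total) for n in counts.values())


# Hypothetical coded hypotheses from each group (category labels are illustrative).
group_a = ["human-analogy", "human-analogy", "human-analogy", "hormonal"]
group_b = ["human-analogy", "hormonal", "ecological", "life-history"]

h_a = shannon_diversity(group_a)
h_b = shannon_diversity(group_b)
print(f"Group A H = {h_a:.3f}, Group B H = {h_b:.3f}")
```

Group B's four distinct, evenly used categories give the maximum possible value for four categories (ln 4). Group A's skew toward human analogies lowers its score.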

Protocol 2: Cross-Species Framework Validation

Purpose: Ensure research frameworks don't privilege human-specific mechanisms.

Materials:

- Multiple model organisms or AI systems with diverse architectures

- Core research question translatable across systems

Procedure:

- Formulate research question in mechanism-neutral terms

- Design parallel experimental frameworks for each model system

- Identify and minimize auxiliary task demands specific to each system [4]

- Implement cross-system calibration to ensure equivalent task complexity

- Analyze results for system-specific vs. universal patterns
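The last step of the procedure separates system-specific from universal patterns. It can be sketched as a simple decision rule, assuming each system yields an effect estimate with a confidence interval in a shared, mechanism-neutral unit. The `Effect` type, the labels, and the numbers are hypothetical. A formal analysis would more likely use a meta-analytic heterogeneity test.

```python
from dataclasses import dataclass


@dataclass
class Effect:
    system: str      # model organism or AI architecture
    estimate: float  # effect size in a shared, mechanism-neutral unit
    ci_low: float    # lower bound of the confidence interval
    ci_high: float   # upper bound of the confidence interval


def classify_pattern(effects):
    """Label a cross-system result using only the direction of each system's CI."""
    if not effects:
        raise ValueError("need at least one system")
    signs = []
    for e in effects:
        if e.ci_low > 0:
            signs.append(1)
        elif e.ci_high < 0:
            signs.append(-1)
        else:
            signs.append(0)  # CI spans zero: no clear effect in this system
    if 0 in signs:
        return "inconclusive-in-some-systems"
    return "universal" if len(set(signs)) == 1 else "system-specific"


# Hypothetical estimates from three model systems.
effects = [
    Effect("mouse", 0.42, 0.10, 0.74),
    Effect("zebrafish", 0.35, 0.05, 0.65),
    Effect("transformer model", -0.20, -0.45, -0.02),
]
print(classify_pattern(effects))      # system-specific
print(classify_pattern(effects[:2]))  # universal
```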

Key Consideration: In natural populations, most mammals do not cycle continuously; continuous estrous cycling is typically an artifact of captivity. Design experiments that account for natural reproductive cycles rather than assuming continuous cycling is the norm [3].

FAQ

Q1: Isn't some anthropocentric bias inevitable since humans conduct research?

A: While researchers naturally bring human perspectives, this doesn't make bias inevitable or acceptable. Through conscious methodology and terminology choices, researchers can minimize its effects. The goal isn't to eliminate human perspective but to recognize its limitations and actively compensate for them [4] [5].

Q2: How does anthropocentric bias specifically affect drug development?

A: In drug development, anthropocentric bias can manifest as:

- Over-reliance on human biological models when non-human models might be more appropriate

- Assumption that human metabolic pathways are universal

- Underestimation of species-specific differences in drug metabolism

- Terminology that obscures important biological differences between model organisms and humans [3] [6]

Q3: What's the difference between anthropocentrism and anthropomorphism?

A: Anthropocentrism is evaluating non-human systems from a human-centered perspective, while anthropomorphism is attributing human characteristics to non-human entities. Both are problematic but distinct: anthropocentrism prioritizes human interests, while anthropomorphism misrepresents non-human nature [1] [4].

Q4: How can I identify anthropocentric bias in my research questions?

A: Use the "perspective reversal" test: reformulate your research question from the perspective of another species or system. If the question becomes meaningless or significantly changes, it may contain anthropocentric bias. Also audit your terminology for hidden human-centric assumptions [3].

The Scientist's Toolkit: Research Reagent Solutions

| Tool/Reagent | Function | Application Notes |
|---|---|---|
| Neutral Terminology Framework | Reduces interpretive bias in experimental design | Implement as a laboratory standard operating procedure [3] |
| Cross-Species Validation Protocol | Tests hypothesis universality beyond human models | Requires adaptation for specific research domains |
| Auxiliary Factor Analysis Matrix | Identifies performance barriers unrelated to core competence | Particularly valuable in AI and cognitive research [4] |
| Perspective-Taking Framework | Cultivates ability to interpret results from multiple viewpoints | Can be developed through training and practice [7] |
| Bias Audit Checklist | Systematic review of potential anthropocentric assumptions | Should be applied at all research stages: design, execution, and interpretation |

Implementation Protocol:

- Establish laboratory-wide terminology standards at project inception

- Incorporate cross-species validation early in experimental design

- Schedule regular bias audits at project milestones

- Document and review terminology choices in publications and internal reports

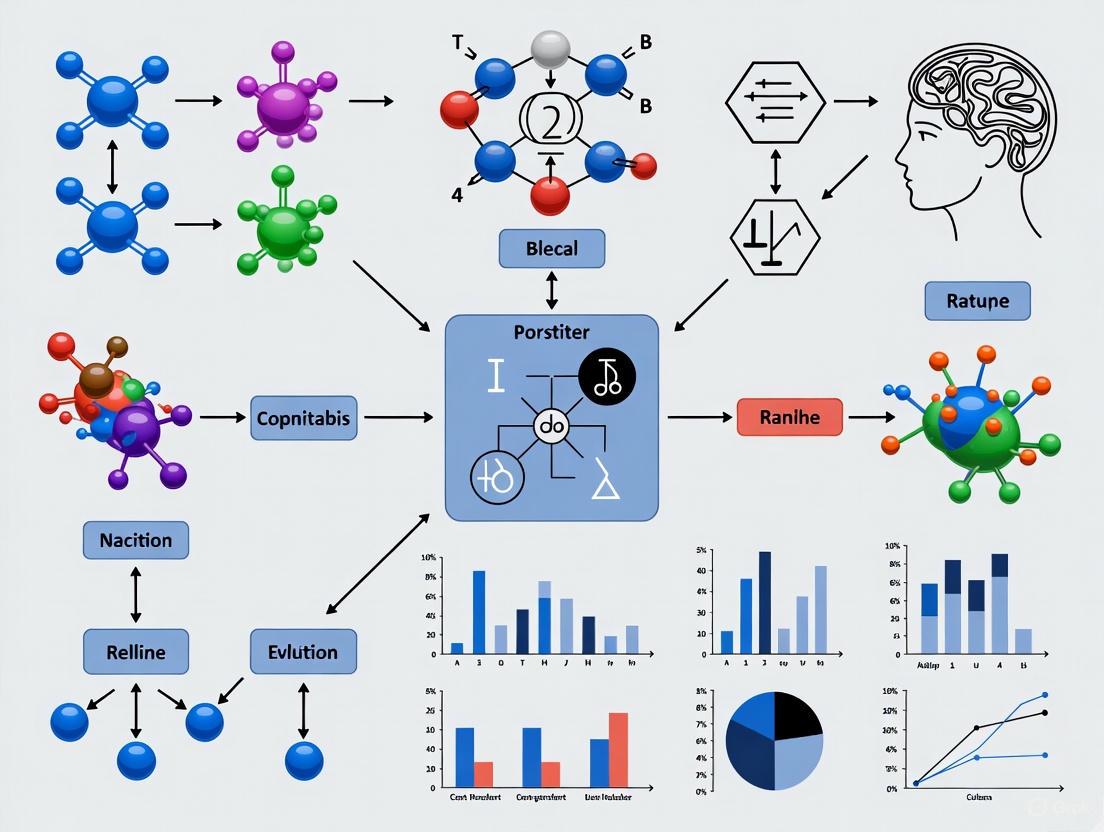

Visualizing the Bias Mitigation Workflow

Theoretical Foundation: Understanding the Biases

This technical support guide is framed within a broader thesis on addressing anthropocentric bias in cognitive and machine learning research. A recent study identifies two specific, often neglected, types of this bias that can significantly impede accurate model evaluation [8].

- Auxiliary Oversight: This occurs when evaluators overlook how non-core, auxiliary factors can impede a model's performance despite its underlying competence. A model might fail not because it lacks the core capability, but due to issues with data preprocessing, feature encoding, or hyperparameter tuning that are unrelated to its fundamental cognitive capacity [8].

- Mechanistic Chauvinism: This bias involves dismissing a model's mechanistic strategies simply because they differ from human cognitive processes, deeming them not "genuinely competent" [8]. It privileges human-like reasoning paths over other valid, and potentially more effective, computational approaches.

Mitigating these biases requires an empirically-driven approach that maps cognitive tasks to model-specific capacities through careful behavioral experiments and mechanistic studies [8]. The following guides and FAQs are designed to help researchers implement this approach.

Troubleshooting Guide: A Practical Framework

Guide 1: Diagnosing Auxiliary Oversight

Objective: To systematically rule out auxiliary factors before concluding a model lacks a core competency.

Experimental Protocol:

- Isolate the Variable: Identify a specific performance bottleneck (e.g., low recall for a specific class).

- Audit Data Quality: Check for label consistency, outliers, and imbalances specifically for the problematic subset [9] [10]. For example, if a model for fruit classification misclassifies apples as pears, scrutinize the training data for mislabeled or ambiguous images of apples and pears [9].

- Check Feature Engineering: Analyze if the feature representation is poor for the failing cases. For tabular data, examine if continuous features have been improperly scaled or if categorical features have high cardinality for the error-prone group [9].

- Test Hyperparameter Sensitivity: Conduct a small-scale hyperparameter search focused on the metric that is underperforming (e.g., optimizing for F1-score instead of accuracy) [10].

- Validate on a Clean Subset: Create a small, manually verified "golden dataset" from the problematic data subset. If performance is high on this clean set, auxiliary data issues are the likely culprit [9].
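The sketch below covers one narrow version of the clean-subset check for the apple/pear example. It rescores the same predictions against the stored labels and against manually verified golden labels. If recall recovers on the golden labels, evaluation-label noise is a likely auxiliary factor. This does not test whether noisy training labels degraded the model itself; that would require retraining on cleaned data. The helper name `diagnose_subset` and the toy labels are hypothetical.

```python
from sklearn.metrics import confusion_matrix, recall_score


def diagnose_subset(y_recorded, y_golden, y_pred, labels):
    """Per-class recall of the same predictions scored against two label sets.

    y_recorded: labels as stored in the dataset (possibly noisy)
    y_golden:   manually re-verified labels for the same examples
    y_pred:     model predictions for those examples
    Returns {label: (recall_vs_recorded, recall_vs_golden)}.
    """
    r_rec = recall_score(y_recorded, y_pred, labels=labels, average=None, zero_division=0)
    r_gold = recall_score(y_golden, y_pred, labels=labels, average=None, zero_division=0)
    return {lab: (float(a), float(b)) for lab, a, b in zip(labels, r_rec, r_gold)}


# Hypothetical apple/pear subset: two pears were stored with the label "apple".
labels = ["apple", "pear"]
y_pred = ["apple", "pear", "pear", "apple", "pear", "pear"]
y_recorded = ["apple", "apple", "apple", "apple", "pear", "pear"]
y_golden = ["apple", "pear", "pear", "apple", "pear", "pear"]

print(diagnose_subset(y_recorded, y_golden, y_pred, labels))
# {'apple': (0.5, 1.0), 'pear': (1.0, 1.0)}

# Rows = verified class, columns = predicted class, in the order of `labels`.
print(confusion_matrix(y_golden, y_pred, labels=labels))
```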

Diagnostic Table: Common Auxiliary Issues and Solutions

| Auxiliary Factor | Symptom | Diagnostic Check | Corrective Action |
|---|---|---|---|
| Data Imbalance [10] | High accuracy but poor recall for minority class. | Calculate per-class precision and recall. Check confusion matrix [11] [9]. | Use resampling techniques (SMOTE), class weights, or collect more data for the minority class [10]. |
| Labeling Errors [10] | High loss on a specific data segment; poor performance despite model complexity. | Perform error analysis: manually inspect a sample of misclassified instances [9]. | Implement iterative labeling with human-in-the-loop verification [10]. |
| Data Drift [10] | Model performance degrades over time on new data. | Use statistical tests (e.g., Kolmogorov-Smirnov) to compare feature distributions of training vs. current data [10]. | Retrain model with recent data; implement continuous monitoring [10]. |
| Inadequate Hyperparameters | Model underfits (high bias) or overfits (high variance) [10]. | Plot learning curves (training & validation error vs. training size). | For overfitting: increase regularization, use dropout, reduce model complexity. For underfitting: increase model complexity, reduce regularization [10]. |
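The drift check in the table can be run per feature with SciPy's two-sample Kolmogorov-Smirnov test (`scipy.stats.ks_2samp`). This minimal sketch runs on simulated data. The helper name `detect_drift` and the `alpha=0.01` threshold are assumptions. With many features, a multiple-testing correction (for example, Bonferroni) would be advisable.

```python
import numpy as np
from scipy.stats import ks_2samp


def detect_drift(train_features, current_features, alpha=0.01):
    """Two-sample Kolmogorov-Smirnov test per feature column.

    Both inputs are 2-D arrays with the same columns. Returns a list of
    (column_index, ks_statistic, p_value, drifted) tuples.
    """
    results = []
    for j in range(train_features.shape[1]):
        stat, p = ks_2samp(train_features[:, j], current_features[:, j])
        results.append((j, float(stat), float(p), bool(p < alpha)))
    return results


rng = np.random.default_rng(0)
train = rng.normal(0.0, 1.0, size=(1000, 2))
current = np.column_stack([
    rng.normal(0.0, 1.0, 1000),  # feature 0: same distribution as training
    rng.normal(0.8, 1.0, 1000),  # feature 1: mean has shifted
])
for j, stat, p, drifted in detect_drift(train, current):
    print(f"feature {j}: KS={stat:.3f}, p={p:.2g}, drifted={drifted}")
```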

Guide 2: Mitigating Mechanistic Chauvinism

Objective: To evaluate a model based on its performance and the validity of its internal mechanisms, not their similarity to human cognition.

Experimental Protocol:

- Define Competency by Output: Clearly define the task success criteria based on outputs and behavioral benchmarks, independent of the method used to achieve them.

- Perform Mechanistic Interpretability: Use techniques like saliency maps, attention visualization, or probing classifiers to understand how the model makes its decisions [8].

- Compare Strategies, Not Just Scores: Instead of just comparing accuracy, analyze the decision boundaries or feature importances. A non-human-like strategy that relies on robust but unexpected features (e.g., background texture in image classification) is not inherently wrong if it is consistently effective and generalizable [8].

- Stress-Test Generalization: Evaluate the model on out-of-distribution (OOD) datasets or adversarial examples. A robust mechanistic strategy should generalize well, even if it is non-anthropomorphic [8].
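Before comparing a model's strategy with human strategies, that strategy must be made explicit. Permutation importance is one way to do this: it measures how much held-out accuracy drops when a single feature is shuffled. It is a swapped-in technique here; the guide itself names saliency maps, attention visualization, and probing classifiers. The sketch uses scikit-learn's `permutation_importance` on synthetic data in which a hypothetical "texture" feature carries most of the signal.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.inspection import permutation_importance
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(42)
n = 2000
# Synthetic data: the label depends mostly on "texture", weakly on "shape";
# "noise" is irrelevant. Feature names are illustrative.
shape = rng.normal(size=n)
texture = rng.normal(size=n)
noise = rng.normal(size=n)
y = (0.3 * shape + 2.0 * texture + rng.normal(scale=0.5, size=n) > 0).astype(int)
X = np.column_stack([shape, texture, noise])
feature_names = ["shape", "texture", "noise"]

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=0)
model = RandomForestClassifier(n_estimators=100, random_state=0).fit(X_train, y_train)

# Importance = mean drop in held-out accuracy when one feature's values are shuffled.
result = permutation_importance(model, X_test, y_test, n_repeats=10, random_state=0)
for name, mean, std in zip(feature_names, result.importances_mean, result.importances_std):
    print(f"{name:>8}: {mean:.3f} +/- {std:.3f}")
```

A large importance for a feature humans would not prioritize is a finding to investigate, not grounds for dismissing the model.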

Diagnostic Table: Signs of Mechanistic Chauvinism vs. Robust Evaluation

| Scenario | Evidence of Mechanistic Chauvinism | Unbiased, Empirically-Driven Approach |
|---|---|---|
| A model achieves high accuracy using a non-intuitive feature. | Dismissing the model as a "hack" or "cheating" because humans don't use that feature. | Investigating if the feature is consistently informative and robust. Validating the model's performance on OOD data where that feature is decorrelated from the true label [8]. |
| A language model solves a reasoning task without an explicit step-by-step "chain of thought." | Concluding the model lacks reasoning abilities because its internal process is not human-interpretable. | Using behavioral experiments to test the limits of this capability. Does it fail on problems of a specific type or complexity? The focus is on mapping the model's cognitive capacities, not its processes [8]. |
| A computer vision model classifies objects based on texture rather than shape (vs. human bias for shape). | Deeming the model's approach flawed because it is not shape-biased. | Acknowledging texture as a valid statistical feature in its training data and evaluating its real-world effectiveness and failure modes on various datasets. |
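The first row recommends checking whether a non-intuitive feature stays informative once it is decorrelated from the label. The sketch below does this with synthetic data. A hypothetical "shortcut" feature agrees with the label 95% of the time in training but is unrelated to it in the out-of-distribution split. A large accuracy drop indicates a brittle strategy; a small drop would suggest the unexpected feature is genuinely robust. The data generator and split sizes are assumptions for illustration.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

rng = np.random.default_rng(1)


def make_split(n, shortcut_agreement):
    """Synthetic data: 'core' is noisily predictive; 'shortcut' equals the label
    with probability `shortcut_agreement` (0.5 means it carries no information)."""
    y = rng.integers(0, 2, size=n)
    core = y + rng.normal(scale=1.0, size=n)  # genuinely (but noisily) informative
    agree = rng.random(n) < shortcut_agreement
    shortcut = np.where(agree, y, 1 - y).astype(float)
    return np.column_stack([core, shortcut]), y


X_train, y_train = make_split(2000, shortcut_agreement=0.95)
X_iid, y_iid = make_split(1000, shortcut_agreement=0.95)  # same correlation as training
X_ood, y_ood = make_split(1000, shortcut_agreement=0.5)   # shortcut decorrelated from label

model = LogisticRegression().fit(X_train, y_train)
iid_acc = model.score(X_iid, y_iid)
ood_acc = model.score(X_ood, y_ood)
print(f"in-distribution accuracy: {iid_acc:.3f}")
print(f"decorrelated (OOD) accuracy: {ood_acc:.3f}")
```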

Frequently Asked Questions (FAQs)

Q1: My model's performance is poor on a specific subgroup. How can I tell if it's an auxiliary data issue or a fundamental model limitation?

A1: Follow the diagnostic protocol for Auxiliary Oversight. First, create a balanced, clean golden dataset for that subgroup. If the model performs well on this curated set, the issue is likely auxiliary (e.g., data imbalance or noise). If performance remains poor even on perfect data, it may indicate a fundamental limitation in the model's architecture or learning algorithm for that specific task [9].

Q2: What is a concrete example of Mechanistic Chauvinism in practice?

A2: In sentiment analysis, a model might learn to heavily weight the presence of certain emoticons for classification. A researcher succumbing to mechanistic chauvinism might dismiss this as a "shallow" heuristic, unlike "deep" human understanding of language. However, a robust evaluation would test if this strategy leads to high, generalizable accuracy across diverse text corpora. If it does, the strategy is valid, even if non-human [8] [9].

Q3: My model is achieving "too good to be true" results. Could this be related to these biases?

A3: Yes. This can be a strong indicator of data leakage, an auxiliary issue where information from the test set inadvertently influences the training process [10]. This creates a false impression of high competence. To diagnose this, ensure rigorous validation practices: withhold the validation dataset until the final model is complete and perform all data preparation (like scaling) within cross-validation folds [10].
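Performing data preparation within cross-validation folds maps directly onto a scikit-learn `Pipeline`. The scaler is refit on each training fold, so statistics from the held-out fold never reach preprocessing. The minimal sketch below runs on synthetic, imbalanced data and also uses the `StratifiedKFold` splitter listed in the toolkit.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic, imbalanced data for illustration (about 10% positives).
X, y = make_classification(n_samples=1000, n_features=20, weights=[0.9, 0.1], random_state=0)

# Scaling lives inside the pipeline, so each fold's scaler is fit on that fold's
# training split only; the held-out fold never influences the preprocessing.
pipeline = make_pipeline(StandardScaler(), LogisticRegression(max_iter=1000))
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
scores = cross_val_score(pipeline, X, y, cv=cv, scoring="f1")
print(f"F1 per fold: {scores.round(3)}; mean = {scores.mean():.3f}")
```

By contrast, calling `StandardScaler().fit_transform(X)` on the full dataset before cross-validation would let every test fold influence the scaling statistics, which is a mild form of the leakage described above.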

Experimental Workflow and Visualization

The following diagram illustrates the integrated, bias-aware evaluation workflow detailed in the guides above.

The Scientist's Toolkit: Key Research Reagents

This table details essential "reagents" — datasets, software, and metrics — for conducting rigorous, bias-aware model evaluation.

| Research Reagent | Function / Purpose in Evaluation | Example / Implementation Note |
|---|---|---|
| "Golden" Datasets [9] | A small, meticulously labeled subset of data used as a ground truth benchmark to diagnose auxiliary issues and test specific hypotheses. | Manually curate 100-500 examples representing a challenging or error-prone subgroup to test if a model fails due to data noise or a core limitation. |
| Stratified Cross-Validation [11] [10] | A resampling procedure that preserves the percentage of samples for each class in each fold. Crucial for reliably evaluating models on imbalanced datasets and detecting overfitting. | Use StratifiedKFold in scikit-learn. Essential for obtaining realistic performance estimates for minority classes. |
| Confusion Matrix [11] [9] | A table layout that visualizes model performance, allowing the detailed breakdown of true positives, false negatives, etc. Fundamental for moving beyond simple accuracy. | Analyze to calculate metrics like Precision, Recall (Sensitivity), and Specificity for each class, revealing biases against specific subgroups [11]. |
| SHAP / LIME | Post-hoc model interpretability tools. They help explain individual predictions and understand which features the model deems important, addressing Mechanistic Chauvinism by making strategies explicit. | Use SHAP (SHapley Additive exPlanations) for a consistent global view of feature importance, or LIME (Local Interpretable Model-agnostic Explanations) for local, instance-level explanations. |
| Performance Metrics Suite [11] [9] | A collection of metrics that provide a holistic view of model performance, preventing over-reliance on a single number like accuracy. | Essential metrics include: F1-Score (harmonic mean of precision/recall) [11], AUC-ROC (model ranking ability) [11], and Kolmogorov-Smirnov (K-S) statistic (degree of separation between positive/negative distributions) [11]. |
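The three metrics in the last row can be computed together from a vector of predicted scores. Here the K-S statistic is the maximum distance between the empirical score distributions of positives and negatives, computed with `scipy.stats.ks_2samp`. The helper name `metrics_suite`, the 0.5 threshold, and the toy scores are assumptions.

```python
import numpy as np
from scipy.stats import ks_2samp
from sklearn.metrics import f1_score, roc_auc_score


def metrics_suite(y_true, y_score, threshold=0.5):
    """F1 at a fixed threshold, AUC-ROC, and the K-S separation of class score distributions."""
    y_true = np.asarray(y_true)
    y_score = np.asarray(y_score)
    y_pred = (y_score >= threshold).astype(int)
    ks = ks_2samp(y_score[y_true == 1], y_score[y_true == 0]).statistic
    return {
        "f1": float(f1_score(y_true, y_pred)),
        "auc_roc": float(roc_auc_score(y_true, y_score)),
        "ks": float(ks),
    }


y_true = [0, 0, 0, 0, 1, 1, 1, 1]
y_score = [0.1, 0.2, 0.3, 0.6, 0.4, 0.7, 0.8, 0.9]
print(metrics_suite(y_true, y_score))
```

In this toy example, one negative (0.6) outranks one positive (0.4). AUC therefore falls short of 1.0 even though most scores separate cleanly, and the K-S statistic shows the largest gap between the two class distributions.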

Frequently Asked Questions

Q1: What does the shift from "Trial-and-Error" to "By-Design" mean in drug development? The shift represents a fundamental change in philosophy. Historically, drug discovery relied heavily on serendipity and testing thousands of compounds (trial-and-error). The modern "By-Design" approach uses advanced computational methods, detailed knowledge of biological targets, and systematic principles like Quality by Design (QbD) to build quality and efficacy into drugs from the very beginning of the development process [12] [13] [14].

Q2: How can principles like Quality by Design (QbD) help address bias in my research? QbD emphasizes proactively identifying and controlling the factors critical to quality. This structured approach helps researchers define objectively what matters most to their decisions, reducing the risk that unconscious anthropocentric bias shapes experimental design or data interpretation. It directs attention to the errors that actually affect scientific conclusions rather than to untested human assumptions [13].

Q3: What are the main advantages of rational drug design over traditional methods? Rational drug design is more targeted and can be more efficient and cost-effective. It reduces reliance on chance by using knowledge of the biological target's structure and function to design molecules that interact with it specifically. This can substantially improve success rates compared with the traditional low-efficiency model, in which only one in thousands of tested compounds might become a drug [12].

Q4: My experimental results are inconsistent. How can a "By-Design" approach help? Inconsistency often stems from uncontrolled variables. A "By-Design" framework uses mathematical models and systematic parameter analysis to understand and control the experimental process. For example, in drug crystallization, mathematical models can define precise "recipes" that consistently produce the desired crystal size and properties, reducing guesswork and variability [14].

Troubleshooting Guides

Issue: High Attrition Rate in Early Drug Discovery

Problem Statement: A high percentage of potential drug candidates are failing in early-stage testing due to lack of efficacy or poor pharmacokinetic properties.

Symptoms

- Drug candidates show promise in initial in vitro assays but fail in animal models.

- Compounds have poor water solubility, leading to precipitation in biological fluids.

- Molecules are metabolized too quickly or produce toxic metabolites.

- Difficulty in achieving target engagement in vivo.

Possible Causes

- Lack of Efficacy: The drug is effective in simple cell systems but cannot engage the target in a complex living organism [12].

- Poor Pharmacokinetics (PK): Low bioavailability, toxic metabolites, or unsuitable half-life (too short or too long) [12].

- Insufficient Specificity: The compound interacts with off-target proteins, causing adverse effects.

- Inadequate Solubility/Permeability: The molecule lacks the necessary balance of water and lipid solubility to reach its target site [12].

Step-by-Step Resolution Process

- Implement Early PK/PD Modeling: Use computational models to predict absorption, distribution, metabolism, and excretion (ADME) properties before synthesis. Prioritize compounds with favorable predicted profiles [12].

- Apply Structure-Based Drug Design: If the 3D structure of the target is known, use molecular docking and dynamics simulations to design ligands that are sterically, electrostatically, and hydrophobically complementary to the binding site [12].

- Utilize Ligand-Based Methods: If the target structure is unknown, use Quantitative Structure-Activity Relationship (QSAR) models to optimize the structure of lead compounds for better affinity and selectivity [12].

- Conduct Virtual Screening: Use computational tools to screen large virtual compound databases for hits with a high probability of success, reducing the need for physical HTS of every candidate [12].

- Integrate Explainable AI (xAI): Use AI models that provide transparent reasoning for their predictions (e.g., highlighting which molecular features drive activity) to avoid "black box" decisions and uncover hidden biases in the data [6].
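Lipinski's rule of five can serve as a simple stand-in for the in silico ADME triage in the first step. It is a widely used heuristic for oral absorption, not mentioned in the source, and it ranks candidates by predicted descriptors before synthesis. This sketch assumes an upstream cheminformatics tool has already computed the descriptors. The `Candidate` type and the compound values are hypothetical. A rule-of-thumb filter is no substitute for dedicated PK/ADME models.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    mol_weight: float  # molecular weight, Da
    clogp: float       # calculated octanol-water partition coefficient
    h_donors: int      # hydrogen-bond donors
    h_acceptors: int   # hydrogen-bond acceptors


def ro5_violations(c):
    """Count rule-of-five violations (MW > 500, cLogP > 5, HBD > 5, HBA > 10)."""
    return sum([
        c.mol_weight > 500,
        c.clogp > 5,
        c.h_donors > 5,
        c.h_acceptors > 10,
    ])


def triage(candidates, max_violations=1):
    """Keep candidates with at most `max_violations`, ordered by fewest violations."""
    passing = [c for c in candidates if ro5_violations(c) <= max_violations]
    return sorted(passing, key=ro5_violations)


# Hypothetical predicted descriptors for three virtual compounds.
pool = [
    Candidate("cmpd-A", 350.0, 2.1, 2, 5),  # 0 violations
    Candidate("cmpd-B", 620.0, 6.3, 3, 8),  # 2 violations (MW, cLogP)
    Candidate("cmpd-C", 480.0, 5.4, 1, 6),  # 1 violation (cLogP)
]
print([c.name for c in triage(pool)])  # ['cmpd-A', 'cmpd-C']
```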

Escalation Path: If attrition remains high despite in silico optimization, re-evaluate the fundamental biological hypothesis behind the target. Consider whether the in vitro assays adequately represent the human disease state and whether they are biased by the limitations of the model system.

Validation Step: Confirm that the optimized lead compound shows improved efficacy and PK in relevant, predictive animal models that have been validated for translational relevance.

Issue: Managing Bias in AI-Driven Drug Discovery

Problem Statement: AI/ML models used for target identification or compound screening are producing skewed or unreliable predictions, potentially due to biased training data.

Symptoms

- AI-generated drug candidates perform poorly for specific patient demographics.

- Model predictions are difficult to interpret or verify ("black box" problem).

- Outputs appear to reinforce existing, potentially flawed, scientific patterns.

Possible Causes

- Anthropocentric or Demographic Bias: Training datasets underrepresent certain biological contexts, sexes, or ethnic groups, leading to models that work poorly for those populations [6].

- Data Silos: Fragmented and non-diverse data sources limit the representativeness of the training input [6].

- Black-Box Models: Use of AI systems that do not reveal the reasoning behind their decisions, making it impossible to audit for bias [6].

Step-by-Step Resolution Process

- Audit Training Data: Proactively analyze the composition of datasets for diversity and representation across key biological and demographic variables [6].

- Implement Explainable AI (xAI): Shift from opaque models to those that provide clear, interpretable explanations for their predictions (e.g., counterfactual explanations) [6].

- Apply Data Augmentation: Use techniques like synthetic data generation to carefully balance underrepresented scenarios in training datasets without compromising patient privacy [6].

- Continuous Monitoring & Algorithmic Audits: Regularly test AI systems for fairness and performance across different subpopulations, and refine models accordingly [6].
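The continuous-monitoring step can start with a per-subgroup audit. The audit reports accuracy and sensitivity for each population and flags groups that trail the best one. This is a minimal sketch: the group labels, the helper `subgroup_audit`, and the 0.1 sensitivity gap are assumptions. A production audit would add confidence intervals, because small subgroups give noisy estimates.

```python
import math
from collections import defaultdict


def subgroup_audit(y_true, y_pred, groups, max_gap=0.1):
    """Per-group accuracy and recall; flag groups whose recall trails the best group by > max_gap."""
    counts = defaultdict(lambda: {"n": 0, "correct": 0, "pos": 0, "tp": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c["n"] += 1
        c["correct"] += int(t == p)
        if t == 1:
            c["pos"] += 1
            c["tp"] += int(p == 1)

    report = {
        g: {
            "n": c["n"],
            "accuracy": c["correct"] / c["n"],
            # Recall is undefined for a group with no positive cases.
            "recall": c["tp"] / c["pos"] if c["pos"] else math.nan,
        }
        for g, c in counts.items()
    }
    defined = [r["recall"] for r in report.values() if not math.isnan(r["recall"])]
    best = max(defined, default=math.nan)
    for r in report.values():
        r["flagged"] = (not math.isnan(r["recall"])) and (best - r["recall"] > max_gap)
    return report


# Hypothetical predictions for two subpopulations, "A" and "B".
y_true = [1, 1, 1, 1, 0, 0, 1, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 1, 0, 0, 1, 0, 0, 1, 0, 1]
groups = ["A"] * 6 + ["B"] * 6
for group, row in subgroup_audit(y_true, y_pred, groups).items():
    print(group, row)
```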

Escalation Path: For AI systems classified as "high-risk" under regulations like the EU AI Act, ensure compliance with transparency mandates. Engage with ethics boards and regulatory affairs specialists.

Validation Step: Validate AI-prioritized targets or compounds in orthogonal experimental systems that are independent of the training data.

Experimental Data & Protocols

| Era | Dominant Paradigm | Key Milestone | Primary Method |
|---|---|---|---|
| Late 19th Century | Serendipity & Trial-and-Error | Emil Fischer's "lock-and-key" analogy for drug-receptor interaction. | Chemical modification of natural products; random screening. |
| Early-Mid 20th Century | Expansion of Trial-and-Error | Discovery of penicillin and sulfonamides by serendipity and screening. | Mass screening of natural and synthetic compound libraries. |
| Late 20th Century | Rise of Rational Design | Daniel Koshland's "Induced Fit" hypothesis; advent of computational chemistry. | Structure-Activity Relationships (SAR), early molecular modeling. |
| 21st Century | Systematic "By-Design" | Integration of AI, QbD principles, and high-throughput structural biology. | Structure-based design, virtual screening, AI, and QbD frameworks. |

| Attrition Reason | Historical Attrition Rate | Current Attrition Rate | Key Mitigation Strategy |
|---|---|---|---|
| Lack of Efficacy | High (Primary Reason) | High (Primary Reason) | Better target validation; more predictive disease models. |
| Pharmacokinetics (PK) | ~39% | ~1% | Widespread use of in silico ADME prediction tools. |
| Animal Toxicity | Significant Contributor | Reduced | Early screening for hepatotoxicity and cardiotoxicity. |
| Commercial/Other Issues | Minor Contributor | Variable | Portfolio optimization and early market analysis. |

Objective: To design a robust and consistent manufacturing process for a drug compound (e.g., crystallization) using mathematical models instead of trial-and-error.

Methodology:

- Data Collection & Sensor Integration: Install sensors to collect real-time data (e.g., temperature, concentration, particle size) during small-scale experimental batches.

- Model Development: Analyze the sensor data to develop mathematical models that describe the relationship between process parameters (e.g., cooling rate, stir speed) and critical quality attributes (e.g., crystal size distribution).

- Algorithm Creation: Create a series of integrated algorithms that combine designed experiments with the mathematical models. These algorithms will define an optimal "recipe" or path to achieve the desired output.

- Automated Control Implementation: Integrate the algorithmic recipe into an automated control system that adjusts process parameters in real-time to maintain the desired path and output, even in the presence of minor disturbances.
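The model-development and algorithm-creation steps can be illustrated with a deliberately simple case. The sketch fits a linear process model relating cooling rate to mean crystal size, inverts it to choose a rate for a target size, and emits temperature set-points for a controller. The batch data, units, and helper names are hypothetical. Real crystallization models (for example, population-balance or supersaturation-control models) are considerably richer, and the real-time feedback control of the last step is not shown.

```python
import numpy as np

# Hypothetical small-scale batch data: cooling rate (K/min) vs. measured
# mean crystal size (micrometres). Values are illustrative only.
cooling_rate = np.array([0.2, 0.5, 1.0, 1.5, 2.0])
mean_size_um = np.array([190.0, 175.0, 150.0, 125.0, 100.0])

# Model development: least-squares fit of a linear model, size = a * rate + b.
a, b = np.polyfit(cooling_rate, mean_size_um, deg=1)


def rate_for_target(target_size_um, lo=0.2, hi=2.0):
    """Invert the fitted model for a target size, clipped to the explored rate range
    (the model is not trusted outside the conditions actually tested)."""
    rate = (target_size_um - b) / a
    return float(np.clip(rate, lo, hi))


def cooling_profile(t_start_c, rate_k_per_min, duration_min, step_min=5):
    """Linear cooling 'recipe': (time, temperature) set-points for an automated controller."""
    times = np.append(np.arange(0, duration_min, step_min), duration_min)
    return [(float(t), float(t_start_c - rate_k_per_min * t)) for t in times]


rate = rate_for_target(150.0)
print(f"fitted model: size = {a:.1f} * rate + {b:.1f}; chosen rate = {rate:.2f} K/min")
print(cooling_profile(60.0, rate, 30))
```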

Key Materials:

- Process Sensors: For in-situ monitoring of critical parameters.

- Data Acquisition System: To log and process sensor data.

- Modeling Software: Platform for developing and running mathematical simulations.

- Automated Bioreactor/Crystallizer: System capable of receiving control signals and adjusting parameters.

The Scientist's Toolkit

Research Reagent Solutions

| Item | Function in "By-Design" Research |
|---|---|
| QSAR Software | Predicts biological activity and physicochemical properties based on compound structure, enabling virtual optimization before synthesis [12]. |
| Molecular Docking Tools | Virtually screens and ranks compounds based on their predicted fit and interactions with a 3D target structure [12]. |
| AI/xAI Platforms | Identifies novel targets and compounds from large datasets; explainable AI provides rationale for predictions to audit and reduce bias [6]. |
| Process Modeling Software | Applies mathematical models to design and control manufacturing processes (e.g., crystallization) for consistent, high-quality output [14]. |
| Critical-to-Quality (CTQ) Factors Framework | A QbD tool to proactively identify and focus resources on factors most essential to trial integrity and decision-making [13]. |

Experimental Workflows and Pathways

Drug Discovery Methodology Evolution

This technical support center provides resources for researchers aiming to identify and overcome anthropocentric bias in cognitive and drug discovery research. The following guides and FAQs address specific experimental issues rooted in human-centered assumptions.

FAQ: Understanding Anthropocentric Bias in Research

Q1: What is anthropocentric bias in the context of cognitive research? Anthropocentric bias is the tendency to evaluate non-human systems, like artificial intelligence (AI) or animal models, primarily by human standards, potentially overlooking genuine competencies or unique mechanisms that differ from our own [4]. In research, this can manifest as designing experiments and interpreting results through a uniquely human lens, thereby limiting the scope of discovery.

Q2: What are the practical types of this bias I might encounter? Researchers should be particularly aware of two types:

- Type-I Anthropocentrism: Overlooking how auxiliary, non-core factors can impede a system's performance, leading to the incorrect conclusion that the system lacks a specific competence [4]. For example, an AI might fail a task due to the way the task is prompted, not because it lacks the underlying capability.

- Type-II Anthropocentrism: Dismissing a system's mechanistic strategies simply because they differ from human cognitive processes, rather than evaluating if the strategy is genuinely competent [4].

Q3: How does this bias limit scientific discovery? Anthropocentric bias can restrict discovery by causing researchers to:

- Prioritize human-like pathways and mechanisms, neglecting viable alternatives [15].

- Misinterpret performance failures in AI or animal models, incorrectly attributing them to a lack of underlying ability [4].

- Produce only incremental, human-like hypotheses while failing to generate truly original ones or to detect anomalies that could lead to fundamental breakthroughs [16].

Q4: What is the alternative to a human-centered approach? The alternative is an empirically driven approach that maps tasks to system-specific capacities and mechanisms [4]. This involves combining carefully designed behavioral experiments with mechanistic studies to understand how a system operates on its own terms, rather than just how well it mimics human performance. The goal is a balanced collaboration where AI, for instance, serves as a tool to increase productivity, while human oversight ensures ethical rigor and creative exploration [17].

Troubleshooting Guides: Common Experimental Scenarios

Scenario 1: AI-Generated Hypotheses Lack Originality

Problem: Your AI model only produces incremental variations of known hypotheses and fails to propose novel, fundamental discoveries.

| Potential Cause | Diagnostic Check | Corrective Action |
|---|---|---|
| Training Data Limitation | Analyze if the training corpus consists only of established literature, creating a "knowledge monoculture." [17] | Curate a more diverse dataset, including preprint articles, negative results, and data from unconventional sources. |
| Algorithmic Overfitting | Check if the model excels at interpolation but fails at extrapolation beyond its training domain. | Employ or develop algorithms designed for outlier detection and exploration of low-probability spaces. |
| Human Feedback Bias | Review whether human-in-the-loop feedback consistently rewards conservative, known-correct answers. | Implement feedback mechanisms that explicitly reward novelty and risk-taking, even if some outputs are incorrect. |

Underlying Epistemological Issue: This scenario often stems from Type-II anthropocentric bias, in which the AI is expected to mimic the human process of hypothesis generation. The solution requires acknowledging that AI might reach discoveries through different, potentially non-intuitive mechanistic strategies [4]. Current generative AI (GenAI) tends to succeed only at discovery tasks for which a representation of the domain knowledge already exists, and it struggles to make fundamental discoveries from scratch as humans can [16].

Scenario 2: Unexplained Phenomena in Animal Model Behavior

Problem: Observed behaviors in your animal model do not align with predictions based on human cognitive or neurological pathways.

Troubleshooting Protocol:

- Repeat the experiment: Unless cost- or time-prohibitive, repeat it to rule out simple mistakes or random variation [18].

- Re-evaluate controls: Ensure you have the appropriate positive and negative controls. A positive control can confirm that the experimental setup is capable of producing a known result, helping to isolate the cause of the unexpected behavior [18].

- Challenge initial assumptions: Conduct a literature review to see if the unexpected result has a plausible biological basis you hadn't considered. Is the behavior truly an anomaly, or does it reflect a valid, non-human mechanism? [18]

- Systematically vary one variable at a time: Generate a list of variables that could explain the divergence (e.g., environmental factors, sensory modalities, social structures). Change only one variable at a time to isolate the causal factor [18].

- Document everything: Take detailed notes on all changes and outcomes for you and your team to review [18].

Key Reflection: Before concluding the model is invalid, consider if you are applying a Type-I anthropocentric bias. The animal's performance "failure" relative to human standards might be caused by an auxiliary factor, such as a difference in motivation or sensory perception, rather than a lack of the cognitive function you are studying [4].

Scenario 3: Inconsistent Results with AI-Assisted Data Analysis

Problem: An AI tool for analyzing experimental data (e.g., cell imaging) produces inconsistent or unreliable results, raising concerns about its utility.

Troubleshooting Workflow: The following diagram outlines a systematic workflow to diagnose and correct issues with AI-assisted data analysis tools, focusing on moving beyond the assumption that the tool should perform perfectly with human-curated data.

Core Principle: The instability of AI models is often a transparency issue. Inconsistent results can stem from opaque models where the impact of poor data quality is not visible, directly affecting reproducibility and reliability [17]. Implementing Explainable AI (XAI) frameworks is crucial to address this, allowing researchers to understand the model's decision-making process and identify the root cause of inconsistencies [17].

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and their functions in experiments designed to minimize anthropocentric bias.

| Item/Reagent | Primary Function | Role in Mitigating Bias |
|---|---|---|
| Computer-Simulated Laboratory (e.g., SAMGL) | Provides an environment for AI or researchers to conduct genetics experiments and records all manipulations and results [16]. | Allows AI models to perform goal-guided experimental design without human physical intervention, testing non-human generated hypotheses. |
| Causal Directed Acyclic Graphs (DAGs) | Visual tools that formalize assumptions about causal relations among variables in a system [19]. | Helps research teams make causal assumptions explicit, revealing and resolving disagreements based on different (and potentially biased) mental models. |
| Explainable AI (XAI) Frameworks | Emerging methods and tools designed to make the decisions of AI models transparent and interpretable to human researchers [17]. | Addresses the "black box" problem, allowing scientists to audit AI reasoning for non-human strategies or hidden biases, ensuring critical evaluation. |
| Diverse Training Datasets | Data corpora that include negative results, cross-disciplinary studies, and non-mainstream sources. | Counters "knowledge monocultures" and algorithmic bias by preventing AI tools from being shaped solely by existing, human-centric literature [17]. |
| Species-Fair Behavioral Assays | Experimental tasks designed with equivalent auxiliary demands (instructions, motivation) for both humans and non-human systems [4]. | Enables valid cross-species and human-AI comparisons by ensuring performance differences are not due to mismatched experimental conditions. |

Technical Support Center: Troubleshooting Animal-to-Human Translation

Frequently Asked Questions (FAQs)

FAQ 1: What is the typical success rate for therapies transitioning from animal studies to human clinical use?

Based on a comprehensive 2024 umbrella review of 122 articles and 367 therapeutic interventions, the translation rates are as follows [20]:

| Transition Stage | Success Rate | Typical Timeframe |
|---|---|---|
| Any Human Study | 50% | 5 years |
| Randomized Controlled Trial (RCT) | 40% | 7 years |
| Regulatory Approval | 5% | 10 years |

This review found 86% concordance between positive results in animal and clinical studies, suggesting that when animal studies show efficacy, human studies are likely to show it as well. However, the low final approval rate indicates significant challenges in later development stages [20].
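The percentages in the table are cumulative: each is a share of all therapies that entered animal testing. Assuming the stages are nested (every approved therapy first reached an RCT, and every RCT therapy first reached some human study), a short calculation converts them into stage-to-stage conditional rates and shows where attrition concentrates. The nesting assumption is ours, not a figure reported by the review.

```python
# Cumulative share of therapies (out of all those with animal data) reaching each
# stage, copied from the table above. Dict order follows the development sequence.
cumulative = {
    "Any human study": 0.50,
    "RCT": 0.40,
    "Regulatory approval": 0.05,
}


def stage_conditional_rates(cumulative_rates):
    """Convert cumulative stage shares into stage-to-stage conditional rates.

    Assumes the stages are nested, so that
    P(stage k | stage k-1) = cumulative[k] / cumulative[k-1].
    """
    conditional = {}
    previous = 1.0  # baseline: every therapy starts with animal data
    for stage, rate in cumulative_rates.items():
        conditional[stage] = rate / previous
        previous = rate
    return conditional


for stage, rate in stage_conditional_rates(cumulative).items():
    print(f"{stage}: {rate:.1%} of therapies that reached the previous stage")
```

Under that assumption, about 80% of therapies that reach any human study go on to an RCT, but only about 12.5% of those reaching an RCT are approved, which places most of the loss in late-stage development.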

FAQ 2: What are the main factors contributing to the translational gap in animal research?

The translational gap stems from issues with both internal validity (study design) and external validity (generalizability) [21]:

| Validity Type | Common Issues | Impact |
|---|---|---|
| Internal Validity | Lack of randomization, blinding, low statistical power | Unreliable data, irreproducible results |
| External Validity | Species differences, irrelevant endpoints, poor model selection | Limited human applicability |

FAQ 3: How can researchers improve the translational value of animal studies?

Implement these evidence-based strategies [21]:

- Use systematic frameworks like FIMD (Framework to Identify Models of Disease) for model selection

- Follow ARRIVE and PREPARE guidelines for study design and reporting

- Conduct systematic reviews and meta-analyses of existing animal data

- Validate animal models against multiple human disease domains (genetics, histology, pharmacology, etc.)

Troubleshooting Common Experimental Issues

Issue: Inconsistent results between animal and human studies

Diagnosis Steps:

- Verify internal validity of animal studies using SYRCLE risk of bias tool [21]

- Assess how well your animal model replicates key human disease aspects using FIMD [21]

- Check for species differences in drug metabolism and pathophysiology

- Evaluate whether endpoints directly translate to human clinical outcomes

Solutions:

- Select animal models with validated predictive validity for your specific disease area

- Incorporate human-relevant biomarkers and functional endpoints

- Use multiple animal models to confirm findings before human trials

Issue: Failed translation despite promising animal data

Diagnosis Steps:

- Analyze whether auxiliary factors (dosage, timing, administration route) differed between species [4]

- Review clinical trial design for mismatches with animal study conditions

- Assess whether the animal model accurately captured human disease complexity

Solutions:

- Implement the "IB-derisk" tool to integrate preclinical PK/PD data into early clinical development [21]

- Use humanized animal models where appropriate

- Consider programmable virtual human technologies as complementary approaches [22]

Research Reagent Solutions

| Reagent/Framework | Function | Application |
|---|---|---|
| FIMD (Framework to Identify Models of Disease) | Standardizes assessment and validation of disease models | Selecting optimal animal models with highest translational potential [21] |
| SYRCLE Risk of Bias Tool | Evaluates internal validity of animal studies | Identifying methodological flaws in study design [21] |
| ARRIVE Guidelines | Reporting standards for animal research | Improving transparency and reproducibility [21] |
| Programmable Virtual Humans | Computational models simulating human physiology | Predicting drug behavior before human trials [22] |
| Meta-analysis Protocols | Quantitative synthesis of multiple animal studies | Determining overall evidence strength and generalizability [20] |

Experimental Protocols

Protocol: Systematic Assessment of Animal Model Validity Using FIMD

Purpose: To objectively evaluate how well an animal model replicates human disease characteristics [21]

Methodology:

- Domain Identification: Assess eight core domains: Epidemiology, Symptomatology and Natural History, Genetics, Biochemistry, Aetiology, Histology, Pharmacology, and Endpoints

- Validation Sheet Creation: Document answers to standardized questions about each domain with supporting references

- Scoring System Application: Weight all domains equally and calculate similarity scores

- Radar Plot Visualization: Generate comparative visualization of domain scores

- Pharmacological Validation: Include reporting quality and risk of bias assessment for drug intervention studies

Expected Outcomes: Quantitative assessment of which human disease aspects are replicated in the animal model, facilitating model selection and interpretation of translational potential.
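The scoring step can be sketched in a few lines. The sketch assumes that each domain carries an equal share of 100 points (12.5 each), that the questions within a domain share that domain's points equally, and that an unanswered domain scores zero; the answers below are hypothetical, and the published FIMD validation sheets should govern any real assessment. The per-domain scores are the values a radar plot would display.

```python
FIMD_DOMAINS = (
    "Epidemiology", "Symptomatology and Natural History", "Genetics",
    "Biochemistry", "Aetiology", "Histology", "Pharmacology", "Endpoints",
)


def fimd_scores(answers, total_points=100.0):
    """Return per-domain scores and their total for one animal model.

    `answers` maps a domain name to a list of booleans, one per validation-sheet
    question (True = the model replicates that feature of the human disease).
    Equal domain weights follow the protocol; equal question weights within a
    domain and zero for unanswered domains are simplifying assumptions.
    """
    domain_weight = total_points / len(FIMD_DOMAINS)  # 12.5 points per domain
    scores = {}
    for domain in FIMD_DOMAINS:
        responses = answers.get(domain, [])
        fraction = sum(responses) / len(responses) if responses else 0.0
        scores[domain] = fraction * domain_weight
    return scores, sum(scores.values())


# Hypothetical validation-sheet answers for a single animal model.
example_answers = {
    "Epidemiology": [True, False],
    "Symptomatology and Natural History": [True, True, False],
    "Genetics": [True],
    "Biochemistry": [False, False],
    "Aetiology": [True, False],
    "Histology": [True, True],
    "Pharmacology": [True, False, False, True],
    "Endpoints": [True, False],
}

scores, total = fimd_scores(example_answers)
for domain, score in scores.items():  # these values would feed the radar plot
    print(f"{domain:<36}{score:6.2f}")
print(f"{'Total':<36}{total:6.2f}")
```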

Protocol: Conducting Meta-analysis of Animal-to-Human Translation

Purpose: To quantitatively evaluate concordance between animal and human studies [20]

Methodology:

- Literature Search: Systematic search of Medline, Embase, and Web of Science using predefined strings

- Study Selection: Apply inclusion/exclusion criteria for systematic reviews evaluating animal-to-human translation

- Data Extraction: Extract proportions of therapies advancing to each development stage and concordance rates

- Quality Assessment: Evaluate included studies using 10-item checklist for systematic reviews

- Statistical Analysis: Pool relative risks using random-effects models, calculate heterogeneity statistics

Expected Outcomes: Quantitative estimates of translation rates and concordance between animal and human results across multiple therapeutic areas.
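The pooling step named in the protocol can be illustrated with the DerSimonian-Laird estimator, one common random-effects method (the review itself may have used a different estimator). The sketch works on log relative risks and their variances and returns the pooled relative risk, a 95% confidence interval, the between-study variance tau^2, Cochran's Q, and I^2. The study values in the example are invented for illustration.

```python
import math


def random_effects_pool(log_rr, variances):
    """DerSimonian-Laird random-effects pooling of per-study log relative risks.

    `variances` are the within-study variances of the log relative risks.
    Returns the pooled relative risk, its 95% confidence interval, the
    between-study variance tau^2, Cochran's Q, and I^2.
    """
    k = len(log_rr)
    if k < 2 or k != len(variances):
        raise ValueError("need at least two studies, each with a variance")
    w = [1.0 / v for v in variances]                        # fixed-effect weights
    fixed = sum(wi * yi for wi, yi in zip(w, log_rr)) / sum(w)
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, log_rr))  # Cochran's Q
    df = k - 1
    scale = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / scale)                       # between-study variance
    w_re = [1.0 / (v + tau2) for v in variances]            # random-effects weights
    pooled = sum(wi * yi for wi, yi in zip(w_re, log_rr)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0           # share of variation from heterogeneity
    return {
        "rr": math.exp(pooled),
        "ci95": (math.exp(pooled - 1.96 * se), math.exp(pooled + 1.96 * se)),
        "tau2": tau2,
        "q": q,
        "i2": i2,
    }


# Invented relative risks and log-scale variances from four hypothetical studies.
study_rr = [0.8, 1.1, 0.6, 0.9]
study_var = [0.04, 0.09, 0.05, 0.02]
result = random_effects_pool([math.log(r) for r in study_rr], study_var)
print(result)
```

When tau^2 is estimated as zero, the random-effects weights collapse to the fixed-effect ones, so the two models agree whenever the studies look homogeneous.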

Visualizing the Translational Workflow

Figure: Animal to Human Translation Pathway

Anthropocentric Bias Considerations in Translation Research

When evaluating animal models, avoid these anthropocentric biases that parallel those in AI cognition research [4]:

Type-I Anthropocentrism: Assuming performance failures in animal models always indicate lack of predictive validity, overlooking auxiliary factors like dosage, administration routes, or endpoint measurements that may differ from human trials.

Type-II Anthropocentrism: Dismissing mechanistic strategies in animal models that differ from human pathophysiology as invalid, rather than considering they may represent genuine but different biological pathways.

Mitigation Strategies:

- Implement species-fair comparisons that account for fundamental biological differences

- Develop evaluation frameworks specific to animal model capabilities rather than imposing human-centric standards

- Consider how auxiliary task demands in experimental designs may disproportionately affect animal versus human outcomes

Future Directions: Programmable Virtual Humans

Emerging technologies like programmable virtual humans offer complementary approaches to bridge the translational gap [22]. These computational models integrate:

- Physics-based physiology models

- Biological and clinical knowledge graphs

- Machine learning trained on multi-omics datasets

- Simulation of drug effects from molecular to organ-level

This approach could reduce reliance on animal testing while improving prediction of human responses before clinical trials begin.

Diligent, end-to-end bias awareness is essential in research. It not only improves the accuracy and robustness of your results but also assists in recognizing and appropriately communicating the limitations of your models and outputs [23]. This self-assessment checklist is designed to help your team identify, evaluate, and manage the risks associated with a variety of biases that can occur before and throughout your research project workflow. The content is framed within the context of addressing anthropocentric bias, the human-centered thinking that can skew the evaluation of non-human systems like artificial cognition [4] [2]. This is particularly pertinent for researchers in cognitive science and drug development, where fair, species-fair, or system-fair comparisons are vital.

A Framework for Understanding Bias

Biases can be grouped according to the project workflow stage where they have the biggest impact. Reflecting on them early and throughout your project allows for proactive mitigation [23].

Anthropocentric Bias

Anthropocentric bias entails evaluating non-human systems, such as large language models (LLMs), according to human standards without adequate justification. It can lead to two types of errors [4]:

- Type-I Anthropocentrism: Overlooking how auxiliary factors can impede a system's performance despite its underlying competence.

- Type-II Anthropocentrism: Dismissing a system's mechanistic strategies that differ from human ones as not genuinely competent.

Other Common Biases in Research

The following table summarizes other common biases that can affect research validity [23] [24].

| Bias Category | Description | Potential Impact on Research |
|---|---|---|
| Selection Bias | Systematic error introduced by how participants or data are selected. | Non-representative samples, reduced generalizability of findings. |
| Reporting Bias | The selective revealing or suppression of information or outcomes. | Overestimation of effect sizes, distorted meta-analyses. |
| Measurement Bias | Systematic error introduced during data collection or measurement. | Inaccurate measurement of variables, compromised internal validity. |
| Confounding Bias | Distortion caused by a third variable that influences both the independent and dependent variables. | Spurious associations, incorrect conclusions about causality. |
| Confirmation Bias | The tendency to search for, interpret, and recall information in a way that confirms one's preexisting beliefs. | Ignoring contradictory evidence, reinforcing erroneous hypotheses. |

Self-Assessment Checklist: Identifying and Mitigating Bias

Use the following deliberative prompts for each stage of your research workflow. These questions are designed to help you evaluate the extent to which potential bias is relevant for your data, analysis, and research methods [23].

Stage 1: Project Scoping & Hypothesis Formulation

- Have we explicitly defined what constitutes "competence" versus "performance" for the system we are studying, following the competence/performance distinction central to cognitive science? [4]

- Have we challenged our assumption that tasks trivial for humans are similarly trivial for the non-human system (e.g., an AI model or animal subject) under evaluation? [4]

- Have we examined our hypothesis for anthropocentric assumptions that might lead us to overlook a system's genuine but non-humanlike cognitive strategy (Type-II anthropocentrism)? [4]

- Are our research questions framed in a way that allows for the discovery of non-human-typical capacities?

Stage 2: Experimental Design & Data Collection

- Have we designed "species-fair" or "system-fair" comparisons by ensuring that humans and non-human systems are subject to similar auxiliary task demands (e.g., instructions, examples, motivation)? [4]

- Have we identified and minimized auxiliary factors (e.g., task demands, computational limitations, mechanistic interference) that could cause performance failure in a non-human system despite underlying competence (Type-I anthropocentrism)? [4]

- What is our strategy for ensuring a representative sample and minimizing selection bias? [24]

- Are our data collection methods and instruments standardized to prevent measurement bias?

Stage 3: Data Analysis & Interpretation

- When a system fails a task, is our first instinct to investigate auxiliary failure factors (Type-I) rather than immediately concluding a lack of core competence? [4]

- When a system succeeds, do we investigate whether its strategy differs from the human one, rather than automatically assuming human-like cognition? [4]

- Are we actively looking for and accounting for confounding variables? [24]

- Have we established and committed to a data analysis plan before examining the data to reduce confirmation bias and selective reporting? [24]

Stage 4: Reporting & Communication

- Do we clearly communicate the limitations of our experimental design, including any residual auxiliary task demands that might have impacted performance? [23]

- Do we accurately report the system's mechanistic strategies, even when they differ from human cognition, and avoid anthropomorphic language unless justified? [4]

- Have we reported all pre-specified outcomes and analyses to minimize reporting bias? [24]

- Have we clearly stated the assumptions behind our methodological choices and their potential impact on the risk of bias? [23]

Troubleshooting Guide: FAQs on Addressing Bias

This section directly addresses specific issues research teams might encounter.

Q: Our AI model failed a syntactic competence test that involved making grammaticality judgments. Does this mean it lacks syntactic understanding?

A: Not necessarily. This could be a classic case of Type-I anthropocentrism where an auxiliary task demand is masking competence. The demand to generate explicit metalinguistic judgments is conceptually independent of the underlying capacity to track grammaticality. You can troubleshoot this by using a different evaluation method, such as direct probability estimation on minimal pairs, which may more validly measure the target capacity [4].
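Direct probability estimation on minimal pairs can be sketched as follows. A model passes a pair when it assigns higher probability to the grammatical sentence than to its ungrammatical twin, so no metalinguistic prompt is involved. To keep the example self-contained, a toy add-one-smoothed bigram model trained on an invented eight-sentence corpus stands in for the system under test; with a real LLM, `log_prob` would instead sum the model's own token log-probabilities.

```python
import math
from collections import Counter

# Invented training corpus for a toy language model that stands in for the system under test.
CORPUS = [
    "the dog runs", "the dogs run", "a cat sleeps", "the cats sleep",
    "the dog sleeps", "a dog runs", "the cat runs", "the cats run",
]


def train_bigram(sentences):
    """Count bigram histories and bigrams, with sentence boundary tokens."""
    histories, bigrams = Counter(), Counter()
    for sentence in sentences:
        tokens = ["<s>"] + sentence.split() + ["</s>"]
        histories.update(tokens[:-1])
        bigrams.update(zip(tokens[:-1], tokens[1:]))
    vocab_size = len({t for s in sentences for t in s.split()} | {"</s>"})
    return histories, bigrams, vocab_size


def log_prob(sentence, model):
    """Add-one smoothed bigram log-probability of a complete sentence."""
    histories, bigrams, vocab_size = model
    tokens = ["<s>"] + sentence.split() + ["</s>"]
    return sum(
        math.log((bigrams[(prev, word)] + 1) / (histories[prev] + vocab_size))
        for prev, word in zip(tokens[:-1], tokens[1:])
    )


def minimal_pair_accuracy(pairs, model):
    """Share of (grammatical, ungrammatical) pairs where the grammatical one is more probable."""
    wins = sum(log_prob(good, model) > log_prob(bad, model) for good, bad in pairs)
    return wins / len(pairs)


model = train_bigram(CORPUS)
PAIRS = [("the dogs run", "the dogs runs"), ("the cat sleeps", "the cat sleep")]
print(minimal_pair_accuracy(PAIRS, model))
```

Because the score is read directly from the model's probabilities, a failure on this measure cannot be blamed on the auxiliary demand of producing an explicit grammaticality judgment.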

Q: We observed an animal model successfully solving a problem but using a strategy completely different from the human approach. Should we consider this a valid cognitive capacity?

A: Yes. Dismissing a genuine competence solely because the mechanistic strategy differs from humans is Type-II anthropocentrism. Your experimental focus should be on whether the system reliably achieves the goal under ideal conditions, not on whether its process is human-like. Fair assessment requires acknowledging the possibility of diverse cognitive architectures [4].

Q: How can we systematically assess the risk of bias in the individual studies we are including in our systematic review?

A: You must apply a formal quality assessment using a tool appropriate to the study design. For example:

- Use the Cochrane RoB 2 tool for randomized trials [25].

- Use the ROBINS-I tool for non-randomized studies of interventions [25].

- Use the Newcastle-Ottawa Scale (NOS) for case-control and cohort studies [25].

This process involves judging each study for potential biases across specific domains (e.g., selection, performance, detection) to decide how to weight or include its data in your synthesis [24].
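Once the five RoB 2 domain judgments have been made from the signalling questions, the overall judgment follows a simple rule: any high-risk domain makes the study high risk, some concerns in any domain (with no high-risk domain) yield "some concerns", and only all-low domains yield low risk. RoB 2 also allows reviewers to rate a study high risk when several domains raise some concerns that together substantially lower confidence; that is a judgment call, represented in this sketch by an explicit flag. The enum and function names are illustrative, not part of any official tool.

```python
from enum import IntEnum


class Judgment(IntEnum):
    LOW = 0
    SOME_CONCERNS = 1
    HIGH = 2


# The five RoB 2 domains for randomized trials.
ROB2_DOMAINS = (
    "randomization process",
    "deviations from intended interventions",
    "missing outcome data",
    "measurement of the outcome",
    "selection of the reported result",
)


def rob2_overall(domain_judgments, concerns_substantially_lower_confidence=False):
    """Aggregate five domain-level judgments into an overall RoB 2 judgment.

    `concerns_substantially_lower_confidence` stands in for the reviewer's
    decision that several 'some concerns' domains together warrant high risk.
    """
    missing = set(ROB2_DOMAINS) - set(domain_judgments)
    if missing:
        raise ValueError(f"missing domain judgments: {sorted(missing)}")
    values = [domain_judgments[d] for d in ROB2_DOMAINS]
    if Judgment.HIGH in values:
        return Judgment.HIGH
    n_concerns = values.count(Judgment.SOME_CONCERNS)
    if n_concerns > 1 and concerns_substantially_lower_confidence:
        return Judgment.HIGH
    if n_concerns:
        return Judgment.SOME_CONCERNS
    return Judgment.LOW


trial = {d: Judgment.LOW for d in ROB2_DOMAINS}
trial["missing outcome data"] = Judgment.SOME_CONCERNS
print(rob2_overall(trial).name)
```

Automating only this last step keeps the substantive domain judgments with the reviewers while making the aggregation rule explicit and auditable.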

Q: A reviewer criticized our evaluation of an LLM's reasoning capacity as "anthropomorphic." How do we balance this with the risk of being anthropocentric?

A: Striking this balance requires rigorous empiricism. To counter charges of anthropomorphism, provide clear mechanistic explanations or behavioral evidence for your claims. To avoid anthropocentrism, design experiments that do not automatically attribute performance failures to a lack of competence. The goal is an impartial, empirically driven approach that maps tasks to a system's specific capacities without presupposing human-like internals or unfairly applying human standards [4].

Experimental Workflow for Bias-Conscious Evaluation

The following diagram outlines a rigorous, iterative methodology for evaluating cognitive capacities in non-human systems while mitigating anthropocentric bias.

The table below details essential tools and resources for identifying and managing bias in research.

| Tool / Resource | Function | Applicability |
|---|---|---|
| Cochrane RoB 2 Tool [25] | Assesses risk of bias in randomized trials across five domains (e.g., randomization, deviations). | Randomized Controlled Trials (RCTs) |
| ROBINS-I Tool [25] | Evaluates risk of bias in non-randomized studies of interventions by assessing confounders. | Non-randomized Studies |
| Newcastle-Ottawa Scale (NOS) [25] | Quality assessment star-rating system for case-control and cohort studies. | Observational Studies |
| AGREE-II Instrument [25] | Appraises the quality and reporting of clinical practice guidelines. | Guideline Development |
| Performance/Competence Distinction [4] | Conceptual framework for distinguishing a system's ideal capacity from its observed behavior. | Cognitive Science, AI Evaluation |
| Bias Self-Assessment Framework [23] | Provides deliberative prompts to identify, evaluate, and manage bias risks throughout a project. | General Research Projects |

Practical Strategies: Implementing Bias-Aware Research Methodologies

Principled Approaches for Data Bias Mitigation in Research Datasets

FAQs: Addressing Data Bias in Your Research

What is the most critical stage for bias mitigation in a research dataset? While bias can enter at any stage, the pre-processing phase is often considered most critical. Proactively creating a fair dataset, for instance by using causal models to adjust cause-and-effect relationships, addresses bias at its source before it can be learned and amplified by analytical models [26]. Ensuring representative data collection prevents the "bias in, bias out" problem that is difficult to fully correct later [27].

How can I tell if my dataset is biased? Begin by analyzing the data distribution to check whether certain groups are over- or underrepresented [28]. For instance, a facial recognition system trained mostly on lighter-skinned individuals will struggle with darker-skinned faces. Use bias detection tools like AIF360 (IBM), Fairlearn (Microsoft), or Google's What-If Tool to systematically measure imbalances and disproportionate impacts that may be challenging to spot manually [28].
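A first distribution check needs no special library: compare each group's share of the dataset with its share in a reference population. In the sketch below, the group labels, the 50/50 reference distribution, and the 0.8 flagging cut-off are all hypothetical choices for illustration; the appropriate reference depends on the population the research is meant to generalize to.

```python
from collections import Counter


def representation_ratios(groups, reference_shares):
    """Ratio of each group's share in the dataset to its share in a reference population.

    Ratios well below 1 suggest under-representation; well above 1, over-representation.
    """
    if not groups:
        raise ValueError("dataset is empty")
    counts = Counter(groups)
    n = len(groups)
    return {g: (counts[g] / n) / share for g, share in reference_shares.items()}


# Hypothetical skin-tone labels for an image dataset and an assumed 50/50 reference.
dataset_groups = ["lighter"] * 80 + ["darker"] * 20
reference = {"lighter": 0.5, "darker": 0.5}

ratios = representation_ratios(dataset_groups, reference)
flagged = [g for g, r in ratios.items() if r < 0.8]  # 0.8 cut-off chosen for illustration only
print(ratios, flagged)
```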

My model performs well on average but fails for a specific subgroup. Is this bias? Yes, this is a classic sign of bias, potentially representation bias. Good average performance can mask poor performance for underrepresented groups. This necessitates analysis of performance metrics disaggregated across different demographic groups to uncover these hidden disparities [28] [27].
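Disaggregation can be sketched with plain counting: tally the confusion matrix separately for each group and report accuracy, true positive rate, and false positive rate per group. The labels and group names below are invented so that overall accuracy looks acceptable (0.85) while one group is served poorly. Fairlearn's `MetricFrame` offers a maintained way to run the same kind of grouped evaluation.

```python
from collections import defaultdict


def disaggregated_metrics(y_true, y_pred, groups):
    """Per-group accuracy, true positive rate (TPR), and false positive rate (FPR).

    Labels are binary, with 1 as the positive class.
    """
    tallies = defaultdict(lambda: {"tp": 0, "fn": 0, "fp": 0, "tn": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        if t:
            tallies[g]["tp" if p else "fn"] += 1
        else:
            tallies[g]["fp" if p else "tn"] += 1
    report = {}
    for g, c in tallies.items():
        positives, negatives = c["tp"] + c["fn"], c["fp"] + c["tn"]
        report[g] = {
            "n": positives + negatives,
            "accuracy": (c["tp"] + c["tn"]) / (positives + negatives),
            "tpr": c["tp"] / positives if positives else float("nan"),
            "fpr": c["fp"] / negatives if negatives else float("nan"),
        }
    return report


# Invented predictions: group A is large and well served, group B is small and is not.
y_true = [1, 1, 1, 1, 0, 0, 0, 0] * 2 + [1, 1, 0, 0]
y_pred = [1, 1, 1, 1, 0, 0, 0, 0] * 2 + [0, 0, 0, 1]
groups = ["A"] * 16 + ["B"] * 4

overall = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
print(f"overall accuracy: {overall:.2f}")
for group, metrics in disaggregated_metrics(y_true, y_pred, groups).items():
    print(group, metrics)
```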

Can I mitigate bias if I only have access to a pre-trained model (and not the training data)? Yes, post-processing methods are designed for this scenario. Techniques like the Reject Option based Classification (ROC) or the Randomized Threshold Optimizer can be applied to the model's outputs to adjust predicted labels and improve fairness, even without access to the underlying training data or model internals [29].
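The core idea of Reject Option based Classification can be sketched without a library: around the decision threshold lies a "critical region" of low-confidence scores, and only there are labels reassigned, favorably for the unprivileged group and unfavorably for the privileged one. The margin `theta`, the 0.5 threshold, and the group labels are hypothetical; AIF360's `RejectOptionClassification` implements the method and, unlike this sketch, searches for the threshold and margin against a chosen fairness metric.

```python
def reject_option_classify(scores, groups, unprivileged, theta=0.1, threshold=0.5):
    """Post-process scores with a simplified Reject Option based Classification rule.

    Outside the critical region |score - threshold| <= theta the usual decision
    is kept. Inside it, members of the unprivileged group receive the favorable
    label (1) and everyone else the unfavorable label (0).
    """
    labels = []
    for score, group in zip(scores, groups):
        if abs(score - threshold) <= theta:
            labels.append(1 if group == unprivileged else 0)
        else:
            labels.append(1 if score > threshold else 0)
    return labels


# Hypothetical scores from a pre-trained model and group memberships.
scores = [0.95, 0.55, 0.45, 0.20, 0.55, 0.45]
groups = ["privileged"] * 3 + ["unprivileged"] * 3
print(reject_option_classify(scores, groups, unprivileged="unprivileged"))
```

Only the model's output scores and the group labels are needed, which is why this family of methods suits the case where training data and model internals are unavailable.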

What is anthropocentric bias in cognitive research, and how does it relate to data bias? Anthropocentric bias involves evaluating non-human systems, like AI, according to human standards without adequate justification and dismissing different strategies as incompetent [4]. In research, this can lead to systemic biases in dataset creation—for example, over-representing human-like behaviors or cognitive strategies while under-representing valid non-human alternatives. This can skew what your model learns as "correct" [4] [30].

Troubleshooting Guides

Problem: Underperformance on Minority Subgroups

This indicates potential representation or historical bias.

Steps to Mitigate:

- Diagnose: Disaggregate your performance metrics (e.g., accuracy, F1-score) by sensitive attributes like race, gender, or age to quantify the performance gap [27].

- Pre-process Data:

- In-Process Solution: Implement fairness constraints during algorithm development. Add a regularization term to your loss function that penalizes discrimination against protected groups [29].
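A fairness regularizer can be sketched with a one-feature logistic regression whose loss adds a demographic-parity penalty: lambda times the squared difference between the two groups' mean predicted probabilities. This is one possible choice of penalty, assumed here for illustration. The data are invented; the group attribute is kept out of the features, while the single feature is correlated with it.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def group_mean(values, idx):
    return sum(values[i] for i in idx) / len(idx)


def train_fair_logreg(xs, ys, groups, lam=0.0, lr=0.1, epochs=3000):
    """Full-batch gradient descent on
    mean binary cross-entropy + lam * (mean p in group 0 - mean p in group 1) ** 2.
    """
    idx0 = [i for i, g in enumerate(groups) if g == 0]
    idx1 = [i for i, g in enumerate(groups) if g == 1]
    n = len(xs)
    w, b = 0.0, 0.0
    for _ in range(epochs):
        p = [sigmoid(w * x + b) for x in xs]
        # Cross-entropy gradient.
        gw = sum((pi - yi) * xi for pi, yi, xi in zip(p, ys, xs)) / n
        gb = sum(pi - yi for pi, yi in zip(p, ys)) / n
        # Penalty gradient: 2 * lam * gap * d(gap), using dp/dz = p * (1 - p).
        slope = [pi * (1 - pi) for pi in p]
        slope_x = [s * x for s, x in zip(slope, xs)]
        gap = group_mean(p, idx0) - group_mean(p, idx1)
        gw += 2 * lam * gap * (group_mean(slope_x, idx0) - group_mean(slope_x, idx1))
        gb += 2 * lam * gap * (group_mean(slope, idx0) - group_mean(slope, idx1))
        w -= lr * gw
        b -= lr * gb
    return w, b


def parity_gap(w, b, xs, groups):
    """Absolute difference in mean predicted probability between groups 0 and 1."""
    p = [sigmoid(w * x + b) for x in xs]
    idx0 = [i for i, g in enumerate(groups) if g == 0]
    idx1 = [i for i, g in enumerate(groups) if g == 1]
    return abs(group_mean(p, idx0) - group_mean(p, idx1))


XS = [1.0, 0.8, 0.6, 0.4, 0.2, 0.0, 0.0, -0.2, -0.4, -0.6, -0.8, -1.0]
YS = [1, 1, 1, 1, 0, 0, 1, 0, 0, 0, 0, 0]
GROUPS = [0] * 6 + [1] * 6

for lam in (0.0, 5.0):
    w, b = train_fair_logreg(XS, YS, GROUPS, lam=lam)
    print(f"lambda={lam}: w={w:.2f}, parity gap={parity_gap(w, b, XS, GROUPS):.3f}")
```

Raising lambda trades some predictive fit for a smaller gap between groups; where that trade-off should sit is a substantive research decision, not only a tuning choice.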

Problem: Model Perpetuates Historical Inequalities

Your model may be learning from data that reflects past societal biases.

Steps to Mitigate:

- Identify Bias Source: Examine the dataset for correlations between outcomes and sensitive attributes. Use techniques like Learning Fair Representations (LFR) to find a latent representation of the data that encodes the useful information while obfuscating information about protected attributes [29].

- Mitigate with Causal Modeling: Use a mitigated causal model, such as a Bayesian network, to explicitly adjust cause-and-effect relationships and probabilities, creating a de-biased version of your dataset for training [26].

- Post-processing: Apply classifier correction methods. The Calibrated Equalized Odds technique, for example, adjusts the output probabilities of a trained model to satisfy equalized odds constraints across groups [29].

Problem: Suspected Anthropocentric Bias in Comparative Studies

This occurs when experimental designs unfairly disadvantage non-human systems (like AI) due to mismatched auxiliary task demands [4].

Steps to Mitigate:

- Audit Experimental Design: Ensure "species-fair" comparisons. If human subjects received detailed instructions, training, or feedback, provide equivalent context to the AI (e.g., through carefully designed few-shot prompts) [4].

- Level the Playing Field: For AI evaluation, consider using direct probability estimation instead of metalinguistic judgment prompts when possible. The latter introduces an auxiliary task (e.g., explaining a judgment) that may be trivial for humans but challenging for AI and irrelevant to the core competence being tested [4].

- Reframe the Question: Instead of asking "Does the AI perform a task like a human?", investigate "What is the AI's specific capacity and what mechanistic strategy does it use?". This avoids Type-II anthropocentrism, which dismisses different but competent strategies [4].

Quantitative Data on Bias in Research

Table 1: Burden of Bias in Contemporary Healthcare AI Models (as of 2023) [27]

| Model Data Type | % of Studies with High Risk of Bias (ROB) | % of Studies with Low ROB | Primary Sources of High ROB |
|---|---|---|---|
| All Types (Sample) | 50% | 20% | Absent sociodemographic data; Imbalanced datasets; Weak algorithm design |
| Neuroimaging (Psychiatry) | 83% | Not Specified | Lack of external validation; Subjects primarily from high-income regions |

Table 2: Performance Improvement from Debiasing in a Drug Approval Prediction Model [31]

| Model Type | R² Score | True Positive Rate | True Negative Rate |
|---|---|---|---|
| Standard (Biased) Model | 0.25 | 15% | 99% |
| Debiased (DVAE) Model | 0.48 | 60% | 88% |
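Table 2 shows the debiased model giving up some true negative rate (99% to 88%) in exchange for a much higher true positive rate (15% to 60%). Balanced accuracy, the mean of the two rates, is one way to summarize that trade-off; it is not reported in the source and is computed here only from the table's figures.

```python
# True positive and true negative rates copied from Table 2.
MODELS = {
    "Standard (Biased) Model": (0.15, 0.99),
    "Debiased (DVAE) Model": (0.60, 0.88),
}


def balanced_accuracy(tpr, tnr):
    """Mean of sensitivity (TPR) and specificity (TNR); does not depend on class prevalence."""
    return (tpr + tnr) / 2


for name, (tpr, tnr) in MODELS.items():
    print(f"{name}: balanced accuracy = {balanced_accuracy(tpr, tnr):.3f}")
```

This gives about 0.57 for the standard model and 0.74 for the debiased one, so the lower true negative rate is more than offset by the gain in true positives.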

Table 3: Evolution of Bias Mitigation Strategies (2025-2035) [32]

| Aspect | 2025 | 2030 | 2035 |
|---|---|---|---|
| Awareness | Limited formal training | Increased focus on bias awareness | Comprehensive training programs |
| Technology | Basic data analysis tools | AI-driven pattern recognition | VR/AR for immersive data interaction |
| Decision-Making | Traditional hierarchical structures | Collaborative interdisciplinary teams | Dynamic teams with real-time feedback |

Experimental Protocols for Bias Mitigation

Protocol 1: Pre-processing with Causal Fair Data Generation

This protocol creates a mitigated bias dataset using causal models before main model training [26].

Methodology:

- Causal Graph Construction: Define a Bayesian network that maps the cause-and-effect relationships between variables in your dataset, including sensitive attributes.

- Bias Mitigation Algorithm: Apply a mitigation training algorithm to this causal model. This algorithm adjusts the conditional probabilities and relationships within the Bayesian network to reduce unfair dependencies on sensitive attributes.

- Fair Dataset Generation: Use the modified causal model to generate a new, synthetic dataset. This dataset maintains the underlying structure and transparency of the original data but with reduced bias.

- Validation: Train your target AI model on this generated fair dataset and evaluate fairness metrics on a hold-out test set.
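Protocol 1 can be sketched on a three-node causal Bayesian network in which a sensitive attribute A influences a feature X, and both influence an outcome Y. The source does not specify its mitigation algorithm, so this sketch substitutes one simple adjustment: it removes the direct A to Y dependency by replacing P(Y | A, X) with P(Y | X), then samples a synthetic dataset from the modified network. All probabilities are hypothetical.

```python
import random

# Hypothetical conditional probability tables for A -> X -> Y with a direct A -> Y edge.
P_A1 = 0.3                                   # P(A = 1)
P_X1_GIVEN_A = {0: 0.6, 1: 0.4}              # P(X = 1 | A = a)
P_Y1_GIVEN_AX = {(0, 0): 0.3, (0, 1): 0.7,   # P(Y = 1 | A = a, X = x)
                 (1, 0): 0.1, (1, 1): 0.5}


def remove_direct_effect(p_y1_ax, p_x1_a, p_a1):
    """Replace P(Y | A, X) with P(Y | X), averaging over P(A | X) from Bayes' rule.

    The result no longer depends on A once X is known, so the direct A -> Y
    edge is removed while the original network's P(Y | X) is preserved.
    """
    mitigated = {}
    for x in (0, 1):
        joint = {}  # P(A = a, X = x)
        for a in (0, 1):
            p_a = p_a1 if a == 1 else 1.0 - p_a1
            p_x = p_x1_a[a] if x == 1 else 1.0 - p_x1_a[a]
            joint[a] = p_a * p_x
        p_y1_x = sum(joint[a] * p_y1_ax[(a, x)] for a in (0, 1)) / sum(joint.values())
        for a in (0, 1):
            mitigated[(a, x)] = p_y1_x
    return mitigated


def sample_dataset(n, p_a1, p_x1_a, p_y1_ax, seed=0):
    """Ancestral sampling of n (a, x, y) rows from the network."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        a = int(rng.random() < p_a1)
        x = int(rng.random() < p_x1_a[a])
        y = int(rng.random() < p_y1_ax[(a, x)])
        rows.append((a, x, y))
    return rows


fair_cpt = remove_direct_effect(P_Y1_GIVEN_AX, P_X1_GIVEN_A, P_A1)
fair_rows = sample_dataset(10_000, P_A1, P_X1_GIVEN_A, fair_cpt, seed=42)
print(fair_cpt)
```

The indirect path from A through X to Y is left intact, so some group difference in Y can remain in the generated data; whether that path is legitimate is a domain judgment rather than a statistical one.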

Protocol 2: In-Processing Mitigation with Adversarial Debiasing

This technique modifies the training algorithm itself to increase fairness [29].

Methodology:

- Model Architecture: Set up two competing models:

- Predictor: A model trained to accurately predict the true label (e.g., "loan approval").

- Adversary: A model trained to predict the sensitive attribute (e.g., "gender") from the Predictor's predictions or internal state.

- Adversarial Training: Train both models simultaneously. The Predictor aims to minimize its prediction error while also maximizing the Adversary's prediction error for the sensitive attribute. This forces the Predictor to learn features that are informative for the main task but uninformative for discriminating based on the sensitive attribute.

- Output: The resulting Predictor model should make accurate predictions that are fair with respect to the specified sensitive attribute.
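A minimal sketch of the two-model setup follows, using logistic regression for both the Predictor and the Adversary and plain alternating gradient steps; the Adversary sees only the Predictor's logit. This is a simplification assumed for illustration: published adversarial debiasing (for example AIF360's `AdversarialDebiasing`, built on TensorFlow) uses neural networks and additional stabilizing terms, and naive min-max training like this can oscillate. The data are invented, and feature `x2` acts as a proxy for the sensitive attribute.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def adversarial_debias(X, ys, sensitive, alpha=1.0, lr=0.05, epochs=2000):
    """Alternating gradient training of a logistic Predictor and Adversary.

    Predictor: y_hat = sigmoid(w . x + b), with logit z = w . x + b.
    Adversary: a_hat = sigmoid(u * z + c), so it sees only the Predictor's logit.
    The Adversary minimizes its cross-entropy on the sensitive attribute; the
    Predictor minimizes its own cross-entropy minus alpha times the Adversary's.
    """
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0  # Predictor parameters
    u, c = 0.0, 0.0        # Adversary parameters
    for _ in range(epochs):
        z = [sum(wj * xj for wj, xj in zip(w, x)) + b for x in X]
        # 1) Adversary step: gradient descent on its own cross-entropy.
        a_hat = [sigmoid(u * zi + c) for zi in z]
        u -= lr * sum((ah - a) * zi for ah, a, zi in zip(a_hat, sensitive, z)) / n
        c -= lr * sum(ah - a for ah, a in zip(a_hat, sensitive)) / n
        # 2) Predictor step against the updated Adversary. Per example,
        #    d/dz [BCE(y, y_hat) - alpha * BCE(a, a_hat)] = (y_hat - y) - alpha * (a_hat - a) * u.
        a_hat = [sigmoid(u * zi + c) for zi in z]
        g = [(sigmoid(zi) - y) - alpha * (ah - a) * u
             for zi, y, ah, a in zip(z, ys, a_hat, sensitive)]
        w = [wj - lr * sum(gi * x[j] for gi, x in zip(g, X)) / n for j, wj in enumerate(w)]
        b -= lr * sum(g) / n
    return w, b, u, c


# Invented data: (x1, x2, sensitive attribute a, label y). x2 is a proxy for a.
ROWS = [
    (1.0, 1.0, 1, 1), (0.5, 1.0, 1, 1), (-0.5, 1.0, 1, 1), (-1.0, 1.0, 1, 0), (-0.5, 1.0, 1, 1),
    (1.0, -1.0, 0, 1), (0.5, -1.0, 0, 0), (-0.5, -1.0, 0, 0), (-1.0, -1.0, 0, 0), (1.0, -1.0, 0, 0),
]
X = [(r[0], r[1]) for r in ROWS]
AS = [r[2] for r in ROWS]
YS = [r[3] for r in ROWS]

for alpha in (0.0, 1.0):
    w, b, u, c = adversarial_debias(X, YS, AS, alpha=alpha)
    print(f"alpha={alpha}: weight on x1={w[0]:.2f}, weight on x2 (proxy)={w[1]:.2f}")
```

Comparing the weight on `x2` at `alpha=0` and `alpha=1` is a quick way to see whether the adversarial term is steering the Predictor away from the proxy feature on a given dataset.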

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Tools for Data Bias Mitigation

| Tool / Solution | Type | Primary Function |
|---|---|---|
| AIF360 (IBM) | Software Library | An open-source Python library containing over 70 fairness metrics and 10 mitigation algorithms to test for and reduce bias in datasets and models [28]. |
| Fairlearn (Microsoft) | Software Toolkit | A Python package that enables the assessment and improvement of fairness in AI systems, focusing on metrics and mitigation algorithms for group fairness [28]. |
| What-If Tool (Google) | Visualization Tool | An interactive visual interface for probing model behavior without coding, allowing researchers to analyze model performance on different data slices and simulate mitigation strategies [28]. |
| Debiasing VAE | Algorithm | A variational autoencoder (VAE)-based model for automated debiasing, used for tasks such as predicting drug approvals to correct for historical biases in development data [31]. |
| Stratified Sampling | Methodology | A sampling technique that divides the population into homogeneous subgroups (strata) and then draws a random sample from each, ensuring all groups are adequately represented [28]. |
| Causal Bayesian Network | Modeling Framework | A graphical model that represents causal relationships. Can be explicitly modified with mitigation algorithms to generate fair synthetic datasets for training [26]. |
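The stratified-sampling entry in Table 4 can be sketched in a few lines of standard-library Python. This version assumes equal allocation: the same number of records is drawn from every stratum, capped at the stratum size. This protects small subgroups. Proportional allocation is the usual alternative when the sample should mirror population shares. The record fields and group labels are hypothetical.

```python
import random
from collections import defaultdict


def stratified_sample(records, key, n_per_stratum, seed=0):
    """Equal-allocation stratified sampling: group records into strata by `key`,
    then draw up to `n_per_stratum` records at random from each stratum."""
    strata = defaultdict(list)
    for record in records:
        strata[key(record)].append(record)
    rng = random.Random(seed)
    sample = []
    for label in sorted(strata):                  # sorted for reproducible order
        members = strata[label]
        sample.extend(rng.sample(members, min(n_per_stratum, len(members))))
    return sample


# Hypothetical, heavily imbalanced population of 1,000 records.
records = [{"id": i, "group": g}
           for i, g in enumerate(["A"] * 900 + ["B"] * 80 + ["C"] * 20)]
picked = stratified_sample(records, key=lambda r: r["group"], n_per_stratum=30)
counts = {g: sum(r["group"] == g for r in picked) for g in "ABC"}
print(counts)  # {'A': 30, 'B': 30, 'C': 20}
```

Stratum `C` contributes all 20 of its members because it is smaller than the requested 30. A simple random sample of 80 records would be expected to include fewer than 2 of them.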

Workflow and Relationship Diagrams

[Figures: Bias Mitigation Workflow; Anthropocentric Bias Taxonomy]

Frequently Asked Questions (FAQs)

Q1: What is "mechanistic chauvinism" in the context of cognitive research? Mechanistic chauvinism is the bias of dismissing the problem-solving strategies of non-human systems (such as AI or animals) as invalid or inferior simply because the underlying mechanisms differ from those used by humans [4]. It is a specific form of anthropocentric bias that can lead to underestimating genuine cognitive competencies.

Q2: Why is overcoming this bias important for drug development and research? Overcoming this bias is crucial for fair and accurate evaluation of novel research tools, including AI and animal models. In drug development, this allows researchers to properly value non-human data and computational models, which can accelerate discovery and prevent the dismissal of valid, non-human-centric results [4].

Q3: My AI model failed a task designed to test a cognitive ability. Does this prove it lacks that ability? Not necessarily. Treating the failure as proof of absent competence is a classic case of Type-I anthropocentric bias [4]. Performance failures can stem from auxiliary factors unrelated to the core competence, such as:

- Task Demands: The test may require metalinguistic judgments or other skills irrelevant to the capacity being studied [4].

- Computational Limitations: Constraints like output length can bottleneck performance [4].

- Mechanistic Interference: Internal circuit competition can impede task execution [4].
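One way to act on the performance/competence distinction is to run the same items in two versions. The first is the original task; the second removes the suspected auxiliary demand, for example by asking for a direct choice instead of a metalinguistic judgment. You then test whether the success rate changes. The sketch below uses a pooled two-proportion z-test. The counts are hypothetical, and for small samples an exact test such as Fisher's would be more appropriate.

```python
from math import erfc, sqrt


def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Two-sided pooled z-test for a difference between two success rates.
    Returns (z, p_value); z > 0 means condition B succeeded more often."""
    p_a, p_b = successes_a / n_a, successes_b / n_b
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:                       # all successes or all failures: no evidence of a difference
        return 0.0, 1.0
    z = (p_b - p_a) / se
    return z, erfc(abs(z) / sqrt(2))  # erfc(|z|/sqrt(2)) equals 2 * (1 - Phi(|z|))


# Hypothetical counts: A = original task with a metalinguistic judgment,
# B = same items answered directly, with that auxiliary demand removed.
z_stat, p_value = two_proportion_z_test(18, 50, 41, 50)
print(f"z = {z_stat:.2f}, p = {p_value:.1e}")
```

A clear improvement in the reduced-demand version suggests that the original failure reflected the auxiliary demand rather than a missing competence. A null result, by contrast, does not by itself prove that the competence is absent.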

Q4: What are the first steps in troubleshooting an experiment that may be affected by this bias? Begin by systematically challenging your assumptions about what constitutes a "correct" problem-solving strategy [18] [33]:

- Repeat the experiment to rule out simple mistakes [18].

- Consider whether the experiment actually failed, or if you are misinterpreting a valid non-human strategy as a failure [18].

- Ensure you have the appropriate controls, including a positive control for the cognitive capacity you are testing [18].

- Check for anthropocentric assumptions in your experimental design that may impose unfair demands on the non-human subject [4].

Q5: How can I design a "species-fair" or "system-fair" comparative experiment? To level the playing field, ensure that humans and non-human systems are subject to similar auxiliary task demands [4]. This can involve providing comparable instructions, examples, and motivational contexts. The goal is to map cognitive tasks to the specific capacities and mechanisms of the system you are testing, rather than forcing it to adhere to a human standard [4].

Troubleshooting Guide: Diagnosing Anthropocentric Bias in Experimental Design

Follow this workflow to identify and correct for mechanistic chauvinism in your research protocols. The diagram below outlines the key diagnostic steps.

Experimental Protocol: The Multi-Access Box (MAB) Approach

The MAB is a paradigm from comparative cognition designed to study problem-solving flexibility in a standardized way while mitigating biases. It allows you to observe how different systems discover and prefer solutions without presuming a single "correct" mechanistic strategy [34].

1.0 Objective

To examine species or system differences in how novel problems are explored, approached, and solved, thereby collecting standardized data on problem-solving ability, innovativeness, and flexibility [34].

2.0 Key Components of the MAB Setup

The core apparatus presents a problem that can be solved in multiple, equally valid ways. The subject must extract a reward (e.g., food for an animal, a data token for an AI) from a central location using one of several available methods [34].

3.0 Procedure

- Apparatus Familiarization: Allow the subject to explore the apparatus without a solvable problem to assess baseline exploration and neophobia.

- Problem Presentation: Introduce the problem with all potential solutions available.

- Data Collection: Record the following:

  - The order in which potential solutions are explored.

  - The latency to first contact with a solution mechanism.

  - The latency to first successful solution.

  - Which solution is discovered first.

  - Which solution becomes the preferred method.

  - Whether the subject flexibly switches between multiple solutions.

- Criterion and Testing: Continue testing until a predetermined criterion is met (e.g., a number of consecutive successes) or for a fixed number of trials.
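The data-collection items in the procedure map directly onto a timestamped event log. This sketch assumes a hypothetical log format of `(time_s, solution, kind)` tuples, where `kind` is `"contact"` or `"success"` and times are measured from problem presentation. The solution names are placeholders for whatever mechanisms your apparatus offers.

```python
from collections import Counter


def summarize_mab(events):
    """Summarize one MAB session from (time_s, solution, kind) events, where kind
    is "contact" or "success" and time_s is measured from problem presentation."""
    events = sorted(events)                                   # chronological order
    contacts = [e for e in events if e[2] == "contact"]
    successes = [e for e in events if e[2] == "success"]
    order = []                                                # first-contact order
    for _, solution, _ in contacts:
        if solution not in order:
            order.append(solution)
    used = [solution for _, solution, _ in successes]
    return {
        "exploration_order": order,
        "latency_first_contact": contacts[0][0] if contacts else None,
        "latency_first_success": successes[0][0] if successes else None,
        "first_solution": used[0] if used else None,
        "preferred_solution": Counter(used).most_common(1)[0][0] if used else None,
        "solutions_used": sorted(set(used)),
        # A switch is a success that uses a different solution from the previous one.
        "n_switches": sum(prev != cur for prev, cur in zip(used, used[1:])),
    }


# Hypothetical session with two placeholder mechanisms, "lever" and "door".
session = [
    (4.2, "lever", "contact"), (9.8, "door", "contact"), (15.1, "door", "success"),
    (31.0, "lever", "contact"), (40.5, "lever", "success"),
    (52.3, "lever", "success"), (60.0, "lever", "success"),
]
summary = summarize_mab(session)
print(summary["first_solution"], summary["preferred_solution"], summary["n_switches"])
# door lever 1
```

In this example the first solution discovered ("door") is not the one that becomes preferred ("lever"). That is exactly the kind of distinction the MAB protocol is designed to capture.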

The workflow for implementing the MAB approach is visualized below.

4.0 Interpretation and Analysis

The key is to interpret the results without imposing a human-centric hierarchy of solutions. Focus on the overall problem-solving profile:

- Innovation: The discovery of any solution is a success.

- Flexibility: The use of multiple solutions indicates a lack of mechanistic rigidity.

- Efficiency: Preference for a solution can be analyzed in terms of energy expenditure or time, not its similarity to a human approach.
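The interpretation criteria can be made explicit in code so that no solution is scored by its resemblance to a human strategy. In this sketch, innovation is any success, and flexibility is the Shannon entropy (in bits) of how often each solution was used. Efficiency is the median latency per solution. The entropy measure and the input format of `(solution, latency_s)` pairs are assumptions chosen for illustration.

```python
from collections import Counter, defaultdict
from math import log2
from statistics import median


def problem_solving_profile(successes):
    """Profile one subject from (solution, latency_s) pairs, one per success.
    No solution is weighted by how closely it resembles a human strategy."""
    counts = Counter(solution for solution, _ in successes)
    total = sum(counts.values())
    # Shannon entropy (bits) of solution use: 0 = a single solution, higher = more flexible.
    flexibility = sum((c / total) * log2(total / c) for c in counts.values())
    latencies = defaultdict(list)
    for solution, latency in successes:
        latencies[solution].append(latency)
    return {
        "innovated": total > 0,                     # any discovered solution counts
        "n_solutions": len(counts),
        "flexibility_bits": flexibility,
        "median_latency_s": {s: median(v) for s, v in latencies.items()},
    }


# Hypothetical subjects: A always uses one mechanism, B splits evenly between two.
subject_a = problem_solving_profile([("lever", 20.0), ("lever", 12.0), ("lever", 9.0)])
subject_b = problem_solving_profile([("lever", 30.0), ("door", 8.0), ("door", 6.0), ("lever", 18.0)])
print(subject_a["flexibility_bits"], subject_b["flexibility_bits"])  # 0.0 1.0
print(subject_b["median_latency_s"])  # {'lever': 24.0, 'door': 7.0}
```

Both subjects count as innovators. Subject B is more flexible, and its per-solution latencies show which mechanism is more time-efficient for that subject, without ranking either mechanism against a human benchmark.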

Research Reagent Solutions: A Toolkit for Bias-Aware Evaluation

The following table details key conceptual "reagents" essential for experiments designed to overcome mechanistic chauvinism.

| Research Reagent | Function & Explanation |
|---|---|
| Performance/Competence Distinction [4] | A conceptual framework to separate a system's observable behavior (performance) from its underlying computational capacity (competence). Prevents incorrect conclusions from performance failures caused by auxiliary factors. |
| Auxiliary Factor Audit [4] | A checklist to identify and control for non-core task demands (e.g., metalinguistic prompting, output length) that may unfairly impede a non-human system's performance. |
| Multi-Access Paradigm [34] | An experimental apparatus or design that allows a problem to be solved in multiple, mechanistically distinct ways. It directly tests for flexibility and helps reveal a system's inherent solution preferences. |
| Species-/System-Fair Controls [4] | Control conditions that are adapted to the perceptual, motivational, and anatomical realities of the test subject, rather than being imported directly from human experimental psychology. |
| Mechanistic Strategy Analysis | A commitment to describing the problem-solving strategies employed by a system on their own terms, rather than solely as a deviation from a human benchmark. |

The table below summarizes the two main types of anthropocentric bias to guard against in your research.

| Bias Type | Definition | Risk to Research Validity |
|---|---|---|
| Type-I Anthropocentrism [4] | Assuming that a system's performance failure on a task always indicates a lack of underlying competence. | Leads to underestimating the capabilities of non-human systems (AI, animal models) by ignoring the role of auxiliary factors and mismatched experimental conditions. |
| Type-II Anthropocentrism [4] | Dismissing a system's successful performance because its mechanistic strategy differs from the human strategy. | Leads to a failure to recognize genuine, non-human-like competencies and innovative problem-solving strategies, stifling innovation and understanding. |

FAQs: Navigating the Transition to Human-Relevant Models

Q1: What are the main scientific drivers for transitioning to New Approach Methodologies (NAMs)?

The transition is driven by the high failure rate of drugs that appear safe and effective in animals but then fail in human trials. Over 90% of drug candidates fail in human trials because of safety or efficacy problems that animal testing did not predict [35]. This is largely because traditional animal models, such as inbred rodent strains, often fall short of predicting human outcomes owing to fundamental species differences in biology and pharmacogenomics [36] [37]. For example, the superagonist antibody TGN1412 (theralizumab) appeared promising in preclinical animal studies. It nonetheless caused a severe cytokine storm in healthy human volunteers at a fraction of the dose found safe in animals [36].

Q2: What is the regulatory status of non-animal methods?

Recent legislative changes have paved the way for alternatives. The FDA Modernization Act 2.0, signed into law in December 2022, permits the use of specific alternatives to animal testing for safety and effectiveness assessments. This includes cell-based assays and advanced computational models [36]. The FDA has also published a "Roadmap to Reducing Animal Testing," though achieving its vision within the set timeframe remains a challenge for the industry [35].

Q3: What are iPSC-derived models and why are they promising?

Induced Pluripotent Stem Cells (iPSCs) are created by reprogramming adult somatic cells (e.g., from skin or blood) into a pluripotent state, allowing them to be differentiated into almost any human cell type [36] [37]. Their key advantages include:

- Human-Relevance: They provide a more accurate representation of human disease mechanisms and drug responses [37].

- Personalization: They can be derived from individuals with specific diseases or genetic backgrounds, enabling personalized drug screening [36] [37].

- Ethical & Sustainable: They offer an ethically sound and sustainable long-term source of human cells for research [37].

Q4: What are the common practical challenges when working with iPSC-based models?

Researchers often encounter several technical hurdles, summarized in the table below.

Table 1: Common Challenges and Potential Solutions in iPSC-Based Research

| Challenge | Description | Potential Mitigation Strategies |
|---|---|---|
| Differentiation Variability | Sensitivity of differentiation protocols to small changes, leading to inconsistent cell types and performance across experiments [37]. | Use of high-quality, rigorously tested differentiation reagents and protocols to promote reliable, reproducible outcomes [37]. |
| Biological Variation | Differences in donor genetics or reprogramming techniques can impact cell performance and data interpretation [37]. | Sourcing cells from diverse, well-characterized donors and using quality control tools to reduce variability. |
| Scalability & Throughput | Difficulty in scaling up bioengineered 3D models (like organoids) for high-throughput screening while maintaining physiological relevance [36]. | Employing innovative methods like single-cell technologies and "cell villages," where multiple barcoded cell lines are cultured and analyzed simultaneously [36]. |
| Complex Data Management | Working with complex qualitative data from human-relevant models requires standardized processing and coding protocols [38]. | Implementing detailed, step-by-step procedures for data categorization and coding, often involving multiple expert judges [38]. |
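When multiple expert judges code the same qualitative observations, their agreement can be quantified before the codes are used downstream. Cohen's kappa, sketched below for two judges, corrects raw agreement for the agreement expected by chance. It is one common choice rather than a method prescribed by [38], and Fleiss' kappa extends the idea to more than two judges. The category labels and codes are hypothetical.

```python
from collections import Counter


def cohens_kappa(codes_a, codes_b):
    """Chance-corrected agreement between two judges who coded the same items."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both judges must code the same, non-empty set of items")
    n = len(codes_a)
    observed = sum(a == b for a, b in zip(codes_a, codes_b)) / n
    freq_a, freq_b = Counter(codes_a), Counter(codes_b)
    # Agreement expected if each judge assigned categories independently at their own rates.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1:
        return float("nan")          # both judges used one identical category: kappa undefined
    return (observed - expected) / (1 - expected)


# Hypothetical morphology codes from two judges for the same ten samples.
judge_1 = ["normal", "normal", "abnormal", "abnormal", "normal",
           "abnormal", "normal", "normal", "abnormal", "normal"]
judge_2 = ["normal", "abnormal", "abnormal", "abnormal", "normal",
           "abnormal", "normal", "normal", "normal", "normal"]
kappa = cohens_kappa(judge_1, judge_2)
print(round(kappa, 3))  # 0.583
```

Here the judges agree on 80% of items, but because 52% agreement would be expected by chance, kappa is noticeably lower than the raw figure.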

Troubleshooting Guides for NAMs Experimentation

Issue: Low Yield or Purity in iPSC Differentiation

Problem: Differentiated cell populations have low yield or high contamination from off-target cell types.

Recommendations:

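- Verify Reprogramming and Pluripotency: Ensure starting iPSC lines are fully reprogrammed and pluripotent. OCT4, SOX2, KLF4, and c-MYC are the classic reprogramming factors [36]. To confirm pluripotency, check for endogenous expression of core markers such as OCT4, SOX2, and NANOG, together with surface markers such as SSEA-4 and TRA-1-60.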

- Quality Control of Reagents: Use high-quality, validated differentiation reagents. Small changes in growth factors or small molecules can significantly impact outcomes. Consider reagents specifically designed for enhanced consistency, such as those that improve cell survival and genomic stability [37].

- Optimize Protocol Parameters: Systematically test and optimize critical parameters such as seeding density, the timing of media changes, and growth factor concentrations. Document any deviations meticulously.
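Systematic parameter optimization can start from an explicit, documented list of conditions. The sketch below enumerates a full-factorial design over hypothetical levels of the three parameters named above. It also defines purity and yield in one common way: marker-positive cells over total cells, and marker-positive cells per seeded iPSC. The levels, units, and definitions are assumptions to adapt to your protocol.

```python
from itertools import product

# Hypothetical parameter levels; substitute the ranges relevant to your protocol.
levels = {
    "seeding_density_per_cm2": [2.5e4, 5.0e4, 1.0e5],
    "media_change_interval_h": [24, 48],
    "growth_factor_ng_per_ml": [10, 50],
}


def full_factorial(levels):
    """Every combination of parameter levels, one dict per experimental condition."""
    names = list(levels)
    return [dict(zip(names, combo)) for combo in product(*(levels[name] for name in names))]


def purity(target_positive, total_cells):
    """Fraction of differentiated cells that express the target-lineage marker."""
    return target_positive / total_cells


def yield_per_seeded_cell(target_positive, cells_seeded):
    """Target-lineage cells recovered per iPSC seeded."""
    return target_positive / cells_seeded


conditions = full_factorial(levels)
print(len(conditions))  # 12 (3 x 2 x 2)
for condition_id, condition in enumerate(conditions[:2]):
    print(condition_id, condition)
```

Numbering each condition gives a stable identifier for logging deviations. When the full grid is too large, fractional-factorial or other design-of-experiments approaches can reduce the number of runs.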

Issue: High Variability in Experimental Readouts