Beyond the Jargon: Solving Cognitive Terminology Operationalization Challenges in Biomedical Research



Abstract

This article addresses the critical challenge of operationalizing cognitive terminology in biomedical and clinical research. For researchers and drug development professionals, inconsistent definitions and measurement approaches for cognitive constructs create significant barriers to reproducibility, data synthesis, and clinical translation. We explore the foundational roots of these issues, evaluate current methodological applications, and provide a troubleshooting framework for optimizing cognitive assessment. By comparing validation strategies and highlighting emerging technologies like AI, this guide offers a pathway toward more reliable, valid, and clinically meaningful measurement of cognition in research and practice.

The Conceptual Maze: Defining Cognitive Constructs in Scientific Research

This whitepaper examines the fundamental challenges in operationalizing cognitive terminology within contemporary research. Despite advances in neuroscience and psychology, a unified definition of "cognition" remains elusive, creating significant methodological inconsistencies across studies. We analyze current operationalization approaches, present empirical data highlighting measurement disparities, and propose standardized frameworks for future research. The content specifically addresses implications for translational research and drug development, where precise cognitive assessment is critical for evaluating therapeutic efficacy. By synthesizing findings from recent large-scale studies and methodological research, this paper provides researchers with concrete tools to enhance measurement validity in cognitive studies.

The Operationalization Challenge in Cognitive Research

The Definitional Dilemma

Operationalization, the process of translating abstract concepts into measurable variables, is a cornerstone of empirical cognitive research [1] [2]. This process transforms theoretical constructs like "memory" or "attention" into quantifiable observations through specific measurement techniques. In cognitive science, this translation faces unique challenges because cognitive processes are complex and multi-dimensional: they cannot be observed directly and must be inferred from behavior or physiological markers [3].

The fundamental contention in defining cognition stems from competing theoretical frameworks that emphasize different aspects of cognitive processes. While some researchers focus on computational models of information processing, others prioritize neurobiological substrates or phenomenological experiences. This divergence manifests in what researchers term the "concept-as-intended" versus "concept-as-determined" gap [3], where the theoretical construct (cognition-as-intended) often misaligns with its measured manifestation (cognition-as-determined). This validity gap is particularly problematic in drug development, where inconsistent operationalization can lead to conflicting results in clinical trials targeting cognitive enhancement.

Current Landscape of Cognitive Assessment

Recent research reveals alarming disparities in how cognitive difficulties are identified and measured. A decade-long study analyzing over 4.5 million survey responses found that self-reported cognitive disability—defined as "serious difficulty concentrating, remembering, or making decisions"—has increased significantly among U.S. adults, with rates rising from 5.3% to 7.4% between 2013 and 2023 [4] [5]. Strikingly, the most dramatic increase occurred among young adults (ages 18-39), whose rates nearly doubled from 5.1% to 9.7% during the same period [6].

Table 1: Demographic Variations in Self-Reported Cognitive Disability (2013-2023)

| Demographic Factor | 2013 Rate | 2023 Rate | Change (percentage points) | Measurement Approach |
|---|---|---|---|---|
| Overall | 5.3% | 7.4% | +2.1 | CDC Behavioral Risk Factor Surveillance System question |
| Age: 18-39 | 5.1% | 9.7% | +4.6 | Self-reported serious difficulty with memory, concentration, decision-making |
| Age: 70+ | 7.3% | 6.6% | -0.7 | Same as above |
| Income: <$35K | 8.8% | 12.6% | +3.8 | Same as above |
| Income: >$75K | 1.8% | 3.9% | +2.1 | Same as above |
| Education: No HS diploma | 11.1% | 14.3% | +3.2 | Same as above |
| Education: College graduate | 2.1% | 3.6% | +1.5 | Same as above |

These findings underscore how operationalization choices significantly impact identified prevalence rates and demographic patterns. The measurement instrument—a single question in an annual phone survey—captures subjective perception rather than objective cognitive performance, highlighting the critical distinction between self-reported cognitive difficulties and clinically diagnosed impairment [4].
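How a change in prevalence is expressed is itself an operationalization choice. The sketch below contrasts the absolute change in percentage points with the relative change in percent for a few rows of Table 1. The function name `change_summary` is illustrative, and the rates are copied from the table rather than recomputed from survey data.

```python
def change_summary(rate_start: float, rate_end: float) -> tuple[float, float]:
    """Return (absolute change in percentage points, relative change in percent)."""
    absolute_pp = rate_end - rate_start
    relative_pct = (rate_end - rate_start) / rate_start * 100
    return round(absolute_pp, 1), round(relative_pct, 1)


# Rates in percent, copied from Table 1 as (2013, 2023).
rates = {
    "Overall": (5.3, 7.4),
    "Age 18-39": (5.1, 9.7),
    "Age 70+": (7.3, 6.6),
    "Income >$75K": (1.8, 3.9),
}

for group, (start, end) in rates.items():
    pp, rel = change_summary(start, end)
    print(f"{group}: {pp:+.1f} pp, {rel:+.1f}% relative")
```

The same +2.1 percentage-point change corresponds to very different relative increases depending on the baseline rate, which is why reports should state which of the two is meant.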

Methodological Frameworks and Experimental Approaches

Contemporary Research Paradigms

Cognitive research employs diverse methodological approaches to operationalize specific cognitive domains. The following experimental protocols represent current standards in the field:

Protocol 1: Eye-Tracking Assessment of Attention and Memory Deficits

- Purpose: To objectively measure attention and memory impairments in clinical populations, distinguishing between encoding and retrieval deficits [7]

- Procedure: Participants with frontal lobe epilepsy (FLE) and matched controls complete visual memory tasks while eye movements are recorded. The paradigm involves encoding phases where stimuli are presented, followed by retrieval phases where participants identify previously seen items [7]

- Measures: Fixation duration, saccadic patterns, and attention allocation during encoding and retrieval phases

- Key Finding: FLE patients exhibit prolonged fixation times and reduced visual attention efficiency, primarily during retrieval phases rather than encoding [7]

- Advantage: Provides quantitative, continuous data on visual attention without relying solely on behavioral responses

Protocol 2: Event-Related Potential (ERP) Measurement of Cognitive Load

- Purpose: To quantify neural correlates of cognitive load during visual working memory tasks and establish objective physiological markers of cognitive effort [7]

- Procedure: Participants complete n-back tasks with varying difficulty levels while EEG recordings capture neural activity. The paradigm systematically manipulates cognitive demand by adjusting memory load requirements [7]

- Measures: P300 amplitude and latency, which reflect attention allocation and memory updating processes

- Key Finding: Higher cognitive load reduces P300 amplitude, indicating greater difficulty in attention allocation and memory processing [7]

- Application: Particularly valuable for assessing cognitive effects of pharmacological interventions without relying on subjective reports
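The P300 measures in Protocol 2 can be illustrated with a minimal peak-picking sketch. It assumes an already averaged ERP waveform and a 250-500 ms search window; the window, the function names, and the synthetic Gaussian-shaped waveforms are assumptions for illustration, not parameters reported in the cited study.

```python
import math


def peak_in_window(times_ms, amplitudes_uv, start_ms=250.0, end_ms=500.0):
    """Return (amplitude, latency) of the largest positive value inside the window."""
    candidates = [
        (amp, t) for t, amp in zip(times_ms, amplitudes_uv) if start_ms <= t <= end_ms
    ]
    if not candidates:
        raise ValueError("no samples fall inside the search window")
    amplitude, latency = max(candidates)
    return amplitude, latency


def synthetic_erp(peak_uv, peak_ms, width_ms=60.0, step_ms=4.0, duration_ms=800.0):
    """Hypothetical averaged ERP: one Gaussian-shaped positive component."""
    times = [i * step_ms for i in range(int(duration_ms / step_ms) + 1)]
    amps = [peak_uv * math.exp(-((t - peak_ms) ** 2) / (2 * width_ms ** 2)) for t in times]
    return times, amps


# Made-up waveforms mimicking the reported pattern (smaller P300 under higher load).
low_load = synthetic_erp(peak_uv=8.0, peak_ms=340.0)
high_load = synthetic_erp(peak_uv=5.0, peak_ms=380.0)

for label, (times, amps) in {"low load": low_load, "high load": high_load}.items():
    amplitude, latency = peak_in_window(times, amps)
    print(f"{label}: P300 amplitude {amplitude:.1f} uV at {latency:.0f} ms")
```

Real pipelines typically filter, epoch, baseline-correct, and average the EEG before this step, and many use mean amplitude over a window rather than a single peak. The choice between these is another operationalization decision that should be reported.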

Protocol 3: Dual-Task Assessment of Cognitive-Physical Interference

- Purpose: To examine competition for neural resources between cognitive tasks and postural control [7]

- Procedure: Participants perform visual working memory tasks while maintaining upright posture on force platforms. Cognitive load is systematically varied while postural sway is quantified [7]

- Measures: Body sway parameters, task performance accuracy, and ERP components

- Key Finding: While upright posture enhances early selective attention, it interferes with later memory encoding, demonstrating direct competition for neural resources [7]

- Implication: Challenges simplistic modular theories of cognition by demonstrating integrated neural resource allocation
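One common way to quantify cognitive-physical interference is a dual-task cost, the percent decrement from single-task to dual-task performance. The source does not specify how interference is scored, so the formula, the function name, and the example values below are assumptions for illustration.

```python
def dual_task_cost(single_task: float, dual_task: float, higher_is_better: bool = True) -> float:
    """Percent decrement under dual-task conditions (positive means worse under dual task)."""
    if single_task == 0:
        raise ValueError("single-task value must be non-zero")
    change = (single_task - dual_task) / single_task * 100
    # For measures where larger values mean worse performance (e.g. sway), flip the sign.
    return change if higher_is_better else -change


# Hypothetical values: memory accuracy (proportion correct) and sway path length (mm).
accuracy_cost = dual_task_cost(single_task=0.90, dual_task=0.81)
sway_cost = dual_task_cost(single_task=400.0, dual_task=460.0, higher_is_better=False)

print(f"Accuracy cost: {accuracy_cost:.1f}%")
print(f"Postural sway cost: {sway_cost:.1f}%")
```

Reporting costs for both the cognitive and the postural measure makes it visible whether participants traded one task off against the other.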

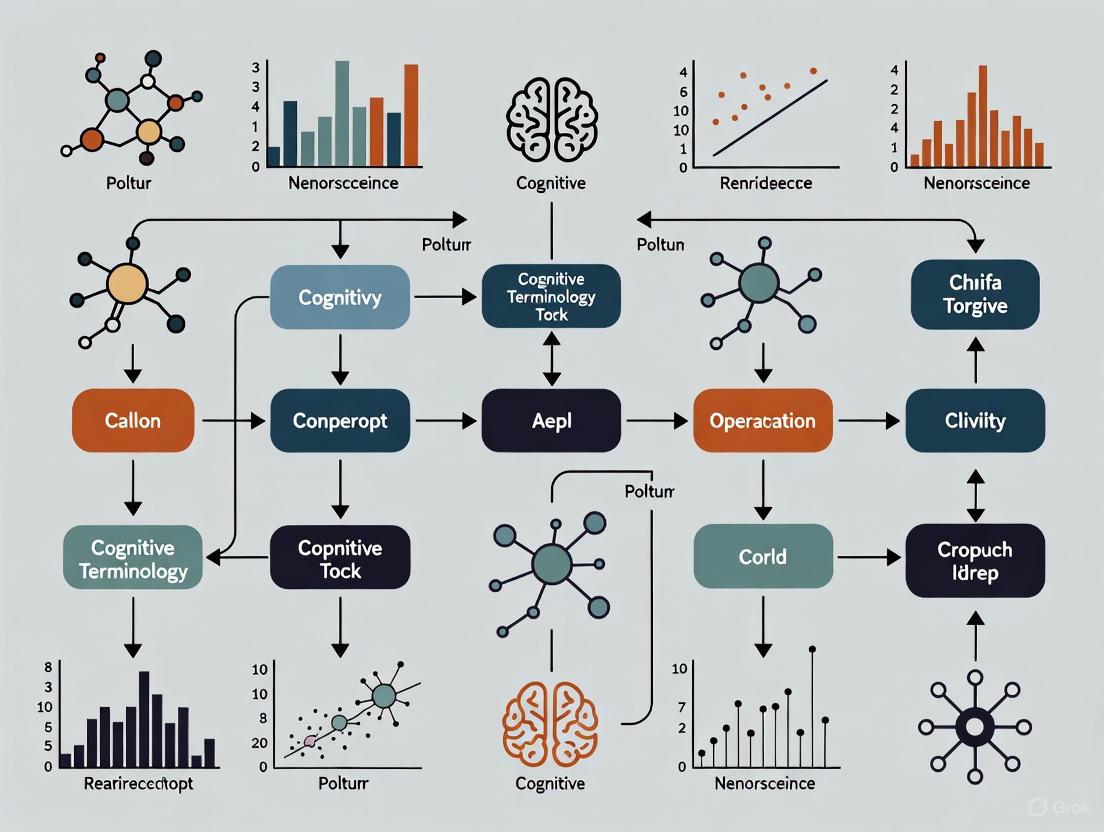

Visualizing Cognitive Assessment Methodologies

Diagram 1: Cognitive Construct Operationalization Workflow

The diagram above illustrates the iterative process of operationalizing cognitive constructs, highlighting the critical translation from theoretical concepts to measurable variables. This workflow underscores how validity assessment continuously informs conceptual refinement—a crucial but often overlooked aspect of cognitive research methodology [3].

Core Measurement Approaches and Instrumentation

Table 2: Cognitive Research Reagent Solutions and Methodological Tools

| Method Category | Specific Tool/Technique | Primary Application | Key Considerations |
|---|---|---|---|
| Behavioral Assessment | n-back task | Working memory capacity | Adjustable difficulty; sensitive to practice effects |
| Behavioral Assessment | Visual search task | Attention and perceptual processing | Configurable complexity; measures efficiency |
| Behavioral Assessment | Retro-cue paradigm | Visual working memory management | Examines internal attention shifts |
| Physiological Recording | EEG/ERP with P300 component | Cognitive load assessment | Excellent temporal resolution; limited spatial precision |
| Physiological Recording | Eye-tracking (pupillometry/fixation) | Visual attention allocation | Objective measure of overt attention |
| Physiological Recording | Postural sway measurement | Dual-task resource competition | Quantifies cognitive-physical interference |
| Self-Report Measures | CDC BRFSS cognitive disability item | Population-level cognitive difficulty screening | Subjective but practical for large-scale assessment |
| Self-Report Measures | Cognitive failure questionnaires | Daily functional limitations | Ecological validity but subject to bias |
| Clinical Populations | Frontal lobe epilepsy eye-tracking protocol | Differentiating attention vs. memory deficits | Specific to neurological disorders |

Emerging Frontiers and Innovative Approaches

The field of cognitive-digital interaction (CDI) represents a promising frontier for operationalization innovation. CDI research systematically studies "the regularities of cognitive processes under the influence of digital environment" [8], examining fundamental differences between cognitive performance in digital versus real-world environments. Empirical findings indicate these differences cannot be reduced to simple quantitative explanations but involve complex interactions related to "cognitive and perceptual load/offload and depth of information processing" [8].

Diagram 2: Cognitive-Digital Interaction Framework

This emerging research domain highlights how environmental context fundamentally influences cognitive processes in ways that resist simple quantitative measurement, further complicating the operationalization landscape [8].

Implications for Research and Development

Consequences for Basic Research

The operationalization challenges in cognitive science have profound implications for theoretical advancement. Inconsistent definitions and measurement approaches create significant barriers to comparing findings across studies, potentially slowing scientific progress. Research indicates that "the lack of a theoretically founded measure makes it easier to report those specific outcome variables that happened to be statistically significant, thus increasing the occurrence of false-positive findings in the literature" [3].

The fundamental limitation of language itself further complicates cognitive research. As noted in analyses of cognitive science methodologies, "language by its very nature splits the world of experience into discrete, commonly understood, recurring entities and events" [9], while actual cognitive processes may be more fluid and continuous than linguistic representations can capture. This creates what might be termed the "linguistic reduction problem" in cognitive operationalization.

Applications in Pharmaceutical Development

For drug development professionals, inconsistent cognitive operationalization presents both methodological and regulatory challenges. Clinical trials targeting cognitive enhancement require precise, sensitive, and validated measures that can detect subtle treatment effects. The disconnect between laboratory-based cognitive measures and real-world functioning remains a significant hurdle in demonstrating meaningful clinical benefits.

The demographic patterns identified in recent research—particularly the steep increases in self-reported cognitive difficulties among younger adults and economically disadvantaged populations—suggest potential market expansions for cognitive-enhancing interventions but also highlight the need for culturally and socioeconomically sensitive assessment approaches [4] [5] [6].

Defining cognition remains contentious precisely because different research questions demand different operational approaches. Rather than seeking a universal definition, the field may benefit from developing a structured framework that explicitly matches operationalization choices to research goals and contexts.

Future research should prioritize:

- Developing cross-environment cognitive assessments that account for digital versus real-world differences [8]

- Establishing standardized protocols for specific research domains while maintaining methodological flexibility for novel questions

- Enhancing translational validity by bridging laboratory measures with real-world cognitive functioning

- Addressing demographic disparities in cognitive assessment to ensure equitable application across populations

The continuing controversy around defining cognition reflects not scientific failure but appropriate acknowledgment of the complexity of human mental processes. By embracing this complexity through sophisticated operationalization frameworks, researchers can advance both theoretical understanding and practical applications in cognitive science.

The proliferation of "mentalist terms" — psychological constructs such as cognitive load, engagement, and mental effort — presents a fundamental challenge for empirical research in cognitive science and mental health. These terms reference subjective, internal states that lack direct observability, creating significant operationalization challenges when imported into scientific literature. Without careful conceptual grounding and methodological rigor, this proliferation risks creating a facade of scientific precision over constructs that remain poorly defined and variably measured.

The operationalization challenge exists within a broader thesis on cognitive terminology, wherein the very language used to describe mental processes often lacks the precise mapping to empirical referents required for robust scientific investigation. As mental and behavioral disorders continue to represent a leading cause of global disease burden — with recent studies showing significant increases, particularly among youth populations — the imperative for precise measurement and consistent operationalization becomes increasingly critical for both basic research and intervention science [10]. This case study examines the current landscape of mentalist term usage, analyzes specific operationalization challenges through quantitative and methodological lenses, and proposes structured approaches to enhance terminological precision and methodological rigor.

Quantitative Landscape: Measuring the Measurement Problem

Global Burden and Research Trends

The expanding prevalence of mental health challenges is mirrored in the scientific literature's increasing focus on mentalist constructs. Quantitative analysis of research trends reveals both the scale of the problem and specific gaps in measurement methodology.

Table 1: Global Mental Health Burden & Research Trends

| Metric | 2019-2021 Data | 2024-2025 Trends | Measurement Implications |
|---|---|---|---|
| Global Prevalence | 970 million people with mental disorders (2019) [10] | 25% global increase in anxiety/depression post-pandemic [11] | Increased use of "anxiety," "depression" without consistent operationalization |
| Research Activity | GBD 2021 analyzing 9 mental disorders [10] | 13% increase in "Mental/Behavioral Disorders" study category (2023-2024) [11] | Proliferation of disorder-specific terminology without measurement standardization |
| Economic Impact | $2.5 trillion (2010) to $6 trillion (projected 2030) [10] | Mental health claims: fastest-growing condition (48% of insurers) [12] | Pressure for quantifiable outcomes drives potentially premature operationalization |
| Cognitive Research | EMR model (2020) cited 140+ times by 2025 [13] | Rising studies on "cognitive load," "self-regulation" in digital contexts [14] [13] | Multiple competing operationalizations for the same mentalist terms |

Measurement Tool Gaps in Mental Health Policy Implementation

A systematic review of quantitative measures used in mental health policy implementation research reveals specific deficiencies in how mentalist constructs are operationalized in applied settings. The review examined 34 measurement tools from 25 articles and found that most lacked comprehensive psychometric validation, with frequent omissions in test-retest reliability, structural validity, and sensitivity to change [15]. The most frequently assessed implementation determinants were "readiness for implementation" (training and resources) and "actor relationships/networks," while the most common implementation outcomes were "fidelity" and "penetration." All of these constructs require careful operationalization to avoid mentalist pitfalls [15].

Beyond psychometric concerns, the review found that most measures provided minimal information regarding score interpretation, handling of missing data, or training required for proper administration. This absence of methodological detail exacerbates the operationalization challenge, as researchers adopt existing measures without sufficient guidance to ensure consistent application across studies and contexts [15].

Operationalization Challenges: From Construct to Measurement

Theoretical and Methodological Divergence

The translation of mentalist terms from theoretical constructs to empirical measurements encounters several fundamental challenges that contribute to the operationalization crisis in cognitive terminology research.

The Definitional Problem

Mentalist terms often suffer from multiple, conflicting definitions across theoretical traditions. For example, "cognitive engagement" has been defined both as the "mental effort and strategies students use to process, understand, and apply learning content" [14] and as "deep learning strategies and self-regulation" [14]. Similarly, "mental effort" itself has been categorized through multiple frameworks, including "effort-by-complexity," "effort-by-need frustration," and "effort-by-allocation" [13], with each framing carrying distinct measurement implications.

The Measurement Discordance Problem

Even when definitional consensus exists, mentalist constructs often suffer from discordance between measurement approaches. For instance, the Effort Monitoring and Regulation (EMR) model highlights how learners may misinterpret subjective effort experiences, with studies demonstrating only a moderate negative association between perceived mental effort and monitoring judgments, and a moderate indirect association between perceived mental effort and learning outcomes [13]. This discordance between subjective experiences (self-report), behavioral manifestations (task performance), and physiological correlates (EEG, biomarkers) creates fundamental operationalization challenges.

The Contextual Variability Problem

Many mentalist terms demonstrate significant contextual dependence, further complicating their operationalization. Research on cognitive-digital interactions reveals that cognitive processes differ meaningfully between digital and real-world environments, with these differences "related to cognitive and perceptual load/offload and depth of information processing" [8]. This suggests that operationalizations valid in one context (e.g., traditional learning environments) may not transfer cleanly to others (e.g., digital learning platforms), creating a proliferation of context-specific operationalizations that undermine construct coherence.

Case Examples: Cognitive Load and Engagement

Cognitive Load Operationalization Challenges

Cognitive load theory exemplifies the operationalization challenges facing mentalist terminology. Despite the construct's central importance to educational psychology and instructional design, its measurement remains heterogeneous and methodologically contested:

Table 2: Cognitive Load Measurement Approaches

| Method Category | Specific Measures | Strengths | Limitations |
|---|---|---|---|
| Self-Report | Rating scales (e.g., 7-point mental effort scale); NASA-TLX | Easy administration; Direct access to subjective experience | Vulnerable to interpretation differences; Context-dependent biases |
| Behavioral | Task performance; Error rates; Opt-out choices | Objective; Quantifiable; Less susceptible to bias | Indirect measure; Confounded by multiple factors |
| Physiological | EEG; Heart rate variability; Eye-tracking | Continuous measurement; Minimal conscious control | Complex equipment; Uncertain construct specificity |
| Metacognitive | Judgments of learning; Confidence ratings | Links monitoring to regulation | Subject to same biases as self-report |

Recent research has further complicated cognitive load operationalization by revealing that self-reported mental effort is significantly influenced by motivational states. Studies manipulating performance feedback demonstrate that "negative performance feedback prompted higher expectations of future mental effort compared to positive or no feedback," with these effects mediated by "participants' levels of self-efficacy and feelings of threat" [13]. This suggests that commonly used self-report measures may confound cognitive and motivational factors, fundamentally challenging the validity of existing operationalizations.

Engagement Operationalization Challenges

The construct of "engagement" exemplifies the proliferation problem, with the term expanding to encompass behavioral, cognitive, emotional, and social dimensions [14]. This conceptual expansion has not been matched by methodological precision, creating significant operationalization challenges:

- Behavioral engagement has been operationalized through observable participation metrics (attendance, task completion) [14], LMS behavioral indicators (login frequency, forum participation) [14], and time management behaviors [14].

- Cognitive engagement has been measured through self-reported learning strategies [14], information processing depth [14], and academic skill application [14].

- Emotional and social engagement dimensions have been assessed through various self-report instruments measuring attitudes, feelings, and relationship qualities [14].

The disconnect between these multiple dimensions creates significant challenges for coherent construct operationalization, with different studies measuring different facets of engagement while using the same umbrella terminology.

Experimental Protocols and Methodological Solutions

Protocol 1: Multimethod Cognitive Load Assessment

This protocol provides a comprehensive approach to cognitive load operationalization that addresses limitations of single-method approaches through methodological triangulation.

Participant Recruitment and Design

Recruit at least 40 participants per experimental group as a starting point, and confirm the target with an a priori power analysis, because the sample needed to detect moderate effects depends on the design, the analysis, and the expected effect size. Employ a between-subjects design with random assignment to conditions that systematically vary cognitive load demands (e.g., simple vs. complex problem-solving tasks, varied instructional formats) [13].
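The adequacy of a per-group sample size can be checked with a quick power approximation. The sketch below uses a normal approximation to a two-sided, two-sample comparison with equal group sizes. Treating a "moderate" effect as Cohen's d = 0.5, with alpha = 0.05 and a target power of 80%, is an assumption not stated in the source, and the function names are illustrative.

```python
import math
from statistics import NormalDist


def approx_power(d: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Approximate power of a two-sided, two-sample test (normal approximation)."""
    z = NormalDist()
    z_crit = z.inv_cdf(1 - alpha / 2)
    noncentrality = d * math.sqrt(n_per_group / 2)
    # Probability of landing beyond either critical value under the alternative.
    return z.cdf(noncentrality - z_crit) + z.cdf(-noncentrality - z_crit)


def n_per_group_for_power(d: float, power: float = 0.80, alpha: float = 0.05) -> int:
    """Approximate per-group sample size needed to reach the target power."""
    z = NormalDist()
    n = 2 * ((z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)) / d) ** 2
    return math.ceil(n)


print(f"Power with n=40 per group, d=0.5: {approx_power(0.5, 40):.2f}")
print(f"n per group for 80% power, d=0.5: {n_per_group_for_power(0.5)}")
```

Under these assumptions, 40 participants per group gives power of roughly 0.6 for d = 0.5, and about 63 per group would be needed for 80% power. An exact t-based calculation gives a slightly larger number, and designs with repeated measures or covariates can need fewer participants.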

Multimethod Assessment Procedure

- Self-Report Measures: Administer a standardized mental effort rating scale immediately following each primary task. Use a 9-point symmetric category scale with anchors "very, very low mental effort" (1) and "very, very high mental effort" (9). Supplement with the NASA-TLX for multidimensional assessment [13].

- Behavioral Measures: Record task performance accuracy, response time, and opt-out frequency (when participants choose to skip challenging items). Code behavioral indicators of frustration or disengagement from video recordings using standardized coding schemes [13].

- Physiological Measures: Collect EEG data with emphasis on theta/alpha power ratio as an indicator of cognitive load. Monitor heart rate variability (HRV) through wearable sensors, with decreased HRV indicating higher cognitive load [16].
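As one example of turning a physiological recording into a cognitive load indicator, the sketch below computes RMSSD, a widely used time-domain heart rate variability metric, from successive RR intervals. The source does not name a specific HRV metric, so the choice of RMSSD is an assumption, and the RR intervals are made-up values.

```python
import math


def rmssd(rr_intervals_ms):
    """Root mean square of successive differences between RR intervals (ms)."""
    if len(rr_intervals_ms) < 2:
        raise ValueError("need at least two RR intervals")
    diffs = [b - a for a, b in zip(rr_intervals_ms, rr_intervals_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))


# Hypothetical RR intervals (ms) for one participant in two conditions.
rest_rr = [800, 850, 790, 860, 810]
high_load_rr = [700, 710, 705, 712, 708]

print(f"RMSSD at rest: {rmssd(rest_rr):.1f} ms")
print(f"RMSSD under load: {rmssd(high_load_rr):.1f} ms")
```

In practice, RR series are cleaned of ectopic beats and artifacts first, and recording length is held constant across conditions, since both affect the resulting value.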

Data Integration and Analysis

Calculate correlation patterns between measurement modalities to assess convergent validity. Conduct factor analysis to examine whether different operationalizations load on common latent constructs. Test predictive validity of each measurement approach against transfer task performance [13].
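The convergent validity check described above can be sketched as a set of pairwise correlations between measurement modalities. The measure names and per-participant scores below are hypothetical, and Pearson's r is an assumed choice of coefficient; rank-based coefficients may suit ordinal rating scales better.

```python
import math
from itertools import combinations


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences with at least 2 values")
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)


def pairwise_correlations(measures):
    """Correlate every pair of measures; keys are (name_a, name_b) tuples."""
    return {
        (a, b): pearson_r(measures[a], measures[b])
        for a, b in combinations(measures, 2)
    }


# Hypothetical scores for six participants, one value per participant per modality.
measures = {
    "self_report_effort": [3, 5, 6, 7, 8, 4],
    "response_time_ms": [610, 720, 760, 840, 900, 650],
    "theta_alpha_ratio": [0.9, 1.1, 1.0, 1.4, 1.5, 1.0],
}

for pair, r in pairwise_correlations(measures).items():
    print(pair, round(r, 2))
```

High correlations across modalities support the claim that they index a common construct. Weak or inconsistent correlations are themselves a finding worth reporting, because they mark where the operationalizations diverge.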

Protocol 2: Longitudinal Cognitive Outcome Assessment

This protocol addresses operationalization challenges in longitudinal studies of cognitive functioning, particularly relevant for mental health intervention research.

Baseline Assessment Protocol

Recruit participants from defined populations (e.g., ICU survivors, individuals with mood disorders) with careful attention to inclusion/exclusion criteria. At baseline (T0), conduct comprehensive assessment including:

- Neuropsychological Testing: Administer the Repeatable Battery for the Assessment of Neuropsychological Status (RBANS) and Trail Making Test Part B as primary cognitive outcomes. Include additional tests covering memory, executive function, processing speed, and attention domains [16].

- Biomarker Collection: Collect blood samples for APOE genotyping (associated with cognitive vulnerability) and inflammatory markers. Process samples according to standardized protocols and store at -80°C [16].

- Quantitative EEG: Record resting-state EEG using standardized 10-20 system placement. Analyze spectral power in delta, theta, alpha, and beta frequency bands, particularly focusing on the ratio of slow to fast wave activity [16].

- Sleep Assessment: Conduct 7-day actigraphy monitoring to objectively measure sleep patterns, including sleep efficiency, fragmentation, and circadian rhythms [16].
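Sleep efficiency, one of the actigraphy outcomes listed above, is conventionally the share of time in bed that is spent asleep. The sketch below averages it over a 7-night recording; the nightly values are invented for illustration and the function name is an assumption.

```python
def sleep_efficiency(minutes_asleep: float, minutes_in_bed: float) -> float:
    """Percent of time in bed spent asleep."""
    if minutes_in_bed <= 0:
        raise ValueError("time in bed must be positive")
    return minutes_asleep / minutes_in_bed * 100


# Hypothetical 7-night actigraphy summary: (minutes asleep, minutes in bed).
nights = [(420, 480), (390, 470), (405, 450), (360, 480), (430, 490), (410, 460), (380, 475)]

nightly = [sleep_efficiency(asleep, in_bed) for asleep, in_bed in nights]
weekly_mean = sum(nightly) / len(nightly)
print(f"Mean sleep efficiency over {len(nights)} nights: {weekly_mean:.1f}%")
```

How sleep onset and wake periods are scored differs between devices and algorithms, so the scoring rules should be fixed and reported before baseline data collection.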

Longitudinal Follow-up Protocol

Readminister the neuropsychological battery, EEG, and sleep assessment at 6-month (T1) and 12-month (T2) follow-ups. Maintain consistent testing conditions, time of day, and examiner training across assessment points to minimize measurement variance. Implement rigorous tracking procedures to minimize attrition, including regular contact updates, flexible scheduling, and compensation for participation [16].

Data Analysis Plan

Calculate composite cognitive scores from neuropsychological tests using confirmatory factor analysis. Employ linear mixed-effects models to examine cognitive trajectories over time, with primary analyses testing interactions between predictors (e.g., APOE status, sleep parameters) and time on cognitive outcomes. Control for potential confounders including age, education, and baseline clinical characteristics [16].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Measures for Mental Construct Research

| Tool Category | Specific Tools | Primary Application | Key Considerations |
|---|---|---|---|
| Psychometric Instruments | PHQ-9, GAD-7 [17] | Depression and anxiety symptom severity | Require validation for specific populations; Sensitive to administration context |
| Neuropsychological Tests | RBANS, Trail Making Test [16] | Multi-domain cognitive function assessment | Need standardized administration; Practice effects in longitudinal designs |
| Physiological Recording | EEG systems, Actigraphy devices [16] | Objective brain function and sleep measurement | Require technical expertise; Signal artifact management challenges |
| Genetic Analysis | APOE genotyping kits [16] | Genetic vulnerability to cognitive impairment | Ethical considerations; Population-specific allele frequencies |
| Digital Assessment Platforms | LMS log data, Telehealth systems [14] [12] | Behavioral engagement metrics | Privacy protections; Data processing standardization needs |

Discussion: Toward Precision in Mentalist Terminology

Integrative Approaches to Operationalization

Addressing the proliferation of mentalist terms requires integrative approaches that acknowledge the complexity of cognitive phenomena while insisting on methodological rigor. The multimethod protocols presented in this case study represent promising directions, as they explicitly recognize that complex mental constructs cannot be adequately captured through single-method approaches. Rather, they employ methodological triangulation to develop more robust operationalizations that account for the multifaceted nature of mental processes [13] [8].

Future research should aim to develop unified theoretical models that can accommodate the complex interplay of factors influencing mental processes across different contexts. As cognitive-digital interaction research suggests, differences between environments "cannot be reduced to a quantitative principle alone," requiring models that account for qualitative differences in how cognitive processes unfold across contexts [8]. Such theoretical advances must be matched by improved measurement practices that explicitly address the limitations of current operationalizations.

Recommendations for the Field

Based on this analysis, we propose three key recommendations for enhancing the precision of mentalist terminology in scientific literature:

- Adopt Transparent Multimethod Reporting: Research publications should explicitly document the convergence (or divergence) between different operationalizations of the same mentalist construct, helping to establish the boundaries of valid measurement.

- Develop Context-Specific Validation Standards: Rather than seeking universal operationalizations, the field should develop and adhere to validation standards specific to research contexts (e.g., digital learning environments, clinical assessment, neurophysiological research).

- Implement Preregistered Operationalization Protocols: To combat flexibility in measurement and analysis, researchers should preregister their operationalization strategies, including detailed rationales for measure selection and planned analytical approaches.

The continued proliferation of mentalist terms need not undermine scientific progress if accompanied by increased methodological sophistication and theoretical precision. By acknowledging the operationalization challenges inherent in studying mental phenomena and implementing rigorous approaches to address them, researchers can enhance the validity and cumulative value of cognitive terminology research.

The theory-practice gap represents a fundamental challenge across scientific disciplines, where abstract theoretical constructs fail to translate effectively into measurable, observable phenomena. This gap is particularly problematic in fields requiring precise measurement and regulatory oversight, such as drug development and cognitive science, where ambiguous definitions can impede research progress, regulatory evaluation, and practical application. Operationalization—the process of turning abstract concepts into measurable observations—serves as the critical bridge between theoretical frameworks and empirical investigation [18]. When this process is hindered by poorly defined constructs, the entire scientific enterprise suffers from reduced reliability, invalid measurements, and compromised comparability across studies.

The core issue lies in the linguistic ambiguity of theoretical constructs and the methodological underspecification of how these constructs should manifest in observable reality. In drug development, for instance, terms like "efficacy" or "safety" may carry different operational meanings across regulatory jurisdictions, creating significant barriers to global therapeutic development [19]. Similarly, in cognitive and educational research, constructs like "engagement" or "resilience" encompass multiple dimensions that are frequently operationalized inconsistently across studies [14] [20]. This paper examines the nature and consequences of this theory-practice gap, provides a framework for effective operationalization, and offers concrete strategies for bridging this divide in rigorous scientific research.

Theoretical Foundations: The Nature of the Gap

Conceptualizing the Theory-Practice Divide

The theory-practice gap manifests when abstract conceptualizations cannot be effectively translated into empirical measurements. It stems from two problems that philosophers of science term conceptual vagueness (the boundaries of a concept are poorly defined) and operational divergence (the same concept is measured differently across contexts) [18]. In scientific practice, the gap appears when theoretical definitions lack the precision necessary to guide measurement selection or when multiple competing operationalizations yield incompatible findings.

The problem is particularly pronounced in complex, multifaceted constructs. For example, in resilience research, a systematic review of 193 longitudinal studies found that most studies lacked an explicit resilience definition, with only 32% explicitly defining it as a trait (6%), an outcome (19%), or a process (8%) [20]. This definitional inconsistency directly impacts how resilience is measured and interpreted, with variable-centered approaches predominating (85% of studies) while potentially overlooking important subgroup differences that person-centered approaches might capture [20]. The conceptual-methodological mismatch occurs when theoretical complexity meets methodological oversimplification, creating a gap between what researchers conceptualize and what they actually measure.

Dimensions of the Operationalization Problem

The theory-practice gap in operationalization manifests across several distinct dimensions:

- Definitional ambiguity: Core constructs lack precise boundaries or have multiple conflicting definitions across the literature. In drug development, even the definition of "artificial intelligence" varies across regulatory bodies, creating challenges for consistent oversight [19].

- Contextual insensitivity: Operationalizations developed in one context are inappropriately applied to another without validation. For example, poverty manifests differently across countries, but operational definitions based solely on income level may miss crucial contextual factors [18].

- Temporal instability: Construct meanings and appropriate operationalizations may evolve, but measurement approaches remain static. Educational engagement frameworks developed before digital learning became prevalent may not adequately capture online learning behaviors [14].

- Methodological constraint: Available methods dictate what aspects of a construct are measured rather than theoretical importance. Overreliance on self-report measures for complex psychological constructs exemplifies this problem [20].

These dimensions collectively contribute to what researchers term operationalization bias—when the method of measurement systematically distorts the understanding of the underlying construct.

Consequences of Inadequate Operationalization

Scientific and Methodological Consequences

Poor operationalization directly undermines scientific progress through several mechanisms:

- Threats to validity: When operational definitions do not adequately capture theoretical constructs, both construct validity and content validity are compromised. In resilience research, the residualization approach to measuring resilience outcomes suffers from non-independence with outcome variables, potentially creating statistical artifacts rather than measuring true resilience processes [20].

- Reduced reliability: Inconsistent operationalizations across studies decrease measurement reliability and make direct comparisons problematic. A systematic review of resilience research found significant heterogeneity in how protective factors were defined and measured, limiting the ability to synthesize findings across studies [20].

- Impeded replicability: The replication crisis across many scientific fields is partly attributable to vague operational definitions that prevent exact replication of experimental conditions and measurements [18].

- Theoretical confusion: When different studies operationalize the same construct in different ways, it becomes difficult to determine whether conflicting results stem from theoretical inadequacies or methodological differences.

Practical and Regulatory Consequences

In applied contexts like drug development and healthcare, operationalization failures have tangible consequences:

- Regulatory fragmentation: In drug development, differing operational definitions of AI and its applications across regulatory agencies like the FDA and EMA create substantial barriers to global therapeutic development [19]. This fragmentation is exacerbated when agencies provide differing guidance on similar technologies based on application context rather than technical characteristics.

- Barriers to innovation: Regulatory uncertainty stemming from definitional ambiguity can impede adoption of novel technologies. The FDA's Context of Use (CoU) framework, while valuable, faces challenges when applied to AI-generated therapeutics that present novel mechanisms or outcomes that cannot be fully understood or explained using existing frameworks [19].

- Resource inefficiency: In educational research, inadequate operationalization of student engagement leads to ineffective interventions. Studies show that cognitive challenges such as processing complex content, information overload, and limited academic writing skills persist when operational definitions fail to guide appropriate support measures [14].

Table 1: Documented Consequences of Operationalization Gaps Across Fields

| Field | Operationalization Challenge | Documented Consequence |
|---|---|---|
| Drug Development | Differing definitions of AI applications | Regulatory fragmentation; impeded global therapeutic development [19] |
| Educational Research | Multidimensional construct of student engagement | Ineffective support measures; persistent cognitive challenges in ODL [14] |
| Resilience Research | Variable definitions (trait, outcome, process) | Heterogeneous findings; limited comparability across 193 studies [20] |
| Clinical Simulation | Variation in "clinical competence" measures | Inconsistent preparation of nursing students for real-world practice [21] |

Frameworks for Effective Operationalization

The Operationalization Process: A Systematic Approach

Effective operationalization requires a systematic, transparent process for moving from abstract constructs to concrete measurements. This process involves three critical steps [18] [22]:

- Identify the main concepts: Begin with clear conceptual definitions of the constructs of interest. In drug development, this might involve precisely defining what constitutes "AI-enabled" versus traditional approaches [19].

- Choose specific variables: Determine which measurable properties represent each concept. For example, in educational research, "cognitive engagement" might be represented by variables such as "mental effort" or "learning strategies" [14].

- Select appropriate indicators: Identify concrete, observable measurements for each variable. These indicators should have clear relationships to the theoretical construct and practical feasibility for data collection.

Table 2: Operationalization Examples Across Research Domains

| Concept | Variable | Indicator Examples | Field |
|---|---|---|---|
| Cognitive Engagement | Mental effort | Self-report ratings; LMS interaction patterns; response time measures [14] | Educational Research |
| Resilience | Positive adaptation | Deviation from expected functioning; trajectory analysis; absence of psychopathology [20] | Psychology |
| Clinical Competence | Skill transfer | Performance in simulated scenarios; clinical decision-making accuracy; patient care metrics [21] | Nursing Education |
| AI Enablement | Model autonomy | Degree of human oversight; complexity of tasks automated; adaptability to new data [19] | Drug Development |

This systematic approach enhances what methodological experts term operational transparency—the clear documentation of how abstract concepts are translated into specific measurements [18]. This transparency is essential for evaluating validity, facilitating replication, and enabling scientific consensus.
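One way to practice operational transparency is to record the concept, variable, and indicator chain as a structured object that travels with the analysis code. The following is a minimal sketch under stated assumptions: the class name `Operationalization`, its fields, and the placeholder definition and context strings are hypothetical and introduced only for illustration. The example values come from the Cognitive Engagement row of Table 2.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Operationalization:
    """Documents how one abstract concept is translated into measurements."""
    concept: str                 # step 1: the construct of interest
    definition: str              # step 1: its conceptual definition
    variable: str                # step 2: the measurable property
    indicators: tuple[str, ...]  # step 3: concrete, observable measurements
    context_of_use: str          # where this operationalization is meant to apply

    def __post_init__(self):
        # An operationalization without indicators cannot be measured.
        if not self.indicators:
            raise ValueError("At least one indicator is required.")

    def summary(self) -> str:
        """Return a one-line concept -> variable -> indicators trace."""
        return f"{self.concept} -> {self.variable} -> [{', '.join(self.indicators)}]"

engagement = Operationalization(
    concept="Cognitive Engagement",
    definition="(study-specific conceptual definition goes here)",
    variable="Mental effort",
    indicators=(
        "Self-report ratings",
        "LMS interaction patterns",
        "Response time measures",
    ),
    context_of_use="(hypothetical) online and distance learning study",
)

print(engagement.summary())
```

Keeping the record immutable (`frozen=True`) is a design choice: if the operational definition changes during a study, a new record is created, which leaves a visible trail of revisions.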

Fit-for-Purpose Framework in Drug Development

The drug development field offers a sophisticated framework for addressing operationalization challenges through what is termed "fit-for-purpose" (FFP) modeling [23]. This approach emphasizes aligning methodological choices with specific research questions and the context of use (CoU). The FFP framework requires:

- Explicit context specification: Clearly defining the specific circumstances and decisions the operationalization is intended to support. For AI in drug development, this means using the CoU framework to state the specific circumstances under which an AI application is intended to be used [19].

- Methodological alignment: Selecting operationalization approaches that match the question of interest, stage of development, and available data. In model-informed drug development (MIDD), this means selecting quantitative tools that align with development milestones from discovery through post-market surveillance [23].

- Risk-proportionate validation: Implementing validation strategies commensurate with the decision stakes. Higher-stakes applications (e.g., primary efficacy endpoints) require more rigorous validation than exploratory measures.

- Dynamic refinement: Updating operational definitions as new information emerges throughout the development process.

The FFP approach explicitly acknowledges that operationalization is not one-size-fits-all; rather, the appropriateness of an operational definition depends on its intended use and the consequences of potential misclassification [23].

Case Studies and Experimental Protocols

Case Study: Operationalizing Resilience in Longitudinal Research

A systematic review of 193 longitudinal psychosocial resilience studies, covering 805,660 participants, reveals the profound consequences of operationalization decisions [20]. The review documented three primary conceptualizations of resilience (as a trait, an outcome, or a process), each leading to distinct methodological approaches:

Experimental Protocol: Resilience Operationalization Comparison

- Research Design: Systematic review with standardized data extraction

- Sample Characteristics: 193 studies, 805,660 participants across all age groups

- Operationalization Categories:

  - Trait resilience (6% of studies): Measured via psychometric scales assessing recovery, persistence, adaptability, and social cohesion

  - Outcome resilience (19% of studies): Operationalized via residualization (deviation from expected functioning) or trajectory analysis

  - Process resilience (8% of studies): Measured through dynamic person-environment interactions across multiple timepoints

- Analysis Approach: Comparison of statistical methods (variable-centered vs. person-centered), protective/promotive effects, and adversity-outcome relationships

- Key Finding: Operationalization choice significantly influenced findings, with trait approaches showing limited sensitivity to temporal changes and outcome approaches potentially oversimplifying multidimensional adaptation processes [20]

This case study demonstrates how fundamental conceptualization decisions directly shape methodological approaches and ultimately influence scientific understanding of complex phenomena.

Case Study: AI Operationalization in Drug Regulation

The rapid integration of artificial intelligence in drug development has exposed significant operationalization challenges at the regulatory level [19]. A 2025 analysis of regulatory frameworks reveals substantial fragmentation in how AI is defined and evaluated:

Experimental Protocol: Regulatory Framework Analysis

- Data Sources: FDA guidance documents, EMA regulations, White House AI Action Plan, international regulatory policies

- Analysis Method: Comparative framework analysis of definitions, oversight approaches, and validation requirements

- Key Operationalization Variables:

  - AI definition scope: Broad vs. narrow definitions of AI systems

  - Oversight mechanism: Direct evaluation of AI vs. product-focused evaluation

  - Validation standards: Requirements for transparency, data quality, and human oversight

- Findings: Significant operationalization differences were identified, with the FDA applying differing regulatory frameworks to AI depending on application context (medical devices vs. therapeutics) and providing unclear guidance on evaluating "unprecedented AI methodologies" that don't fit existing frameworks [19]

This case highlights how operationalization challenges at the conceptual level can directly impact regulatory coordination, innovation adoption, and ultimately patient access to novel therapies.

Visualizing Operationalization Frameworks

Operationalization Workflow Diagram

Operationalization Workflow: This diagram visualizes the systematic process for translating abstract concepts into measurable constructs, emphasizing the iterative validation and refinement steps essential for bridging the theory-practice gap.

Regulatory Operationalization Framework

Regulatory Operationalization Framework: This diagram maps the challenges and proposed solutions for operationalizing AI concepts in therapeutic development, highlighting the ecosystem approach needed to address regulatory fragmentation.

Research Reagent Solutions: Operationalization Tools

Table 3: Essential Methodological Tools for Addressing Operationalization Challenges

| Tool Category | Specific Method/Instrument | Function in Operationalization | Field Applications |
|---|---|---|---|
| Conceptual Definition Tools | Systematic literature reviews; Delphi expert panels; Conceptual framework analysis | Clarify construct boundaries; Identify core dimensions; Establish conceptual consensus | Drug development (AI definitions); Resilience research (trait vs. process) [19] [20] |
| Measurement Validation Tools | Factor analysis; Reliability testing (test-retest, inter-rater); Correlation with gold standards | Establish measurement properties; Evaluate construct validity; Assess measurement invariance | Educational research (engagement measures); Psychology (resilience scales) [14] [20] |
| Statistical Modeling Approaches | Latent variable modeling; Growth mixture models; Moderation analysis | Capture multidimensional constructs; Identify heterogeneous trajectories; Test protective vs. promotive effects | Resilience research (person-centered approaches); Drug development (MIDD) [23] [20] |
| Regulatory Alignment Tools | Context of Use frameworks; Fit-for-purpose criteria; Risk-based classification | Align operationalization with decision context; Establish appropriate validation level; Support regulatory review | AI therapeutic development; Model-informed drug development [19] [23] |

The theory-practice gap in operationalization represents a fundamental challenge across scientific disciplines, with documented consequences for research validity, regulatory coordination, and practical application. This analysis reveals that effective operationalization requires more than methodological precision—it demands explicit attention to conceptual clarity, contextual appropriateness, and iterative validation. The frameworks and case studies presented demonstrate that bridging this gap requires systematic approaches that align theoretical constructs with empirical measurements while acknowledging the dynamic, context-dependent nature of many scientific concepts.

Moving forward, researchers and practitioners should prioritize operational transparency—clearly documenting and justifying operationalization decisions—and methodological pluralism—employing multiple operationalizations to capture complex constructs. In regulatory contexts, greater international harmonization of definitions and standards will be essential for advancing fields like AI-enabled drug development. Ultimately, recognizing operationalization as an ongoing process rather than a one-time decision may represent the most important step toward bridging the theory-practice gap and advancing scientific progress across diverse fields of inquiry.

The study of human cognition is defined by a fundamental theoretical divide between the classical, amodal approach and the increasingly influential grounded cognition framework. This division is not merely technical but represents a profound disagreement about the very nature of how knowledge is represented and processed. Within the context of research on cognitive terminology operationalization challenges, this debate becomes critically important, as each framework operationalizes core cognitive constructs—such as concepts, memory, and reasoning—in fundamentally different ways. The classical approach views cognition as an autonomous module in the brain that processes abstract, symbolic representations largely independent of sensory and motor systems [24]. In stark contrast, grounded cognition proposes that there is no central module for cognition, and that all cognitive phenomena are ultimately grounded in bodily, affective, perceptual, and motor processes [25]. This paper examines this theoretical divide, its implications for operationalizing cognitive terminology, and its practical consequences for research design and interpretation, providing researchers with a clear framework for navigating these competing paradigms.

Theoretical Foundations

The Classical Amodal Approach

The classical approach to cognition, which dominated cognitive science for much of the 20th century, is rooted in the computational theory of mind and the modular view of brain organization. This perspective is often termed the "sandwich model," with cognition neatly positioned between perception and action, yet functionally separate from them [24]. Its core principles can be summarized as follows:

- Amodal Symbol Systems: Cognitive representations are based on abstract, language-like symbols that are arbitrary and bear no resemblance to their referents. These symbols are amodal because they are not represented in the same systems responsible for perception and action [25] [24].

- Modular Architecture: Cognition operates as a distinct module, separate from perceptual and motor systems. While modules exchange information, their internal computations remain autonomous and impenetrable to influence from other systems [24].

- Discrete Categorization: Following the classical view of categories, concepts are defined by lists of necessary and sufficient features, have clear boundaries, and all category members possess equal status [26].

This framework aligns with Marr's (1982) tri-level hypothesis, which proposes that cognitive systems can be understood at three distinct levels of analysis: the computational level (the goal), the algorithmic level (the procedure), and the implementational level (the physical instantiation) [27]. The classical approach has provided valuable models but faces the persistent challenge of the "grounding problem"—explaining how abstract, amodal symbols acquire their meaning and become connected to the perceptual world and bodily experiences they represent [24].

The Grounded Cognition Framework

Grounded cognition challenges the classical view by proposing that cognition is intrinsically tied to the body's interactions with its physical and social environment. This perspective is part of a broader movement often called 4E cognition—cognition that is embodied, embedded, enactive, and extended [24] [28]. Rather than being an autonomous process, cognition emerges from the dynamic interaction of the brain, body, and environment [25] [29].

Key principles of this framework include:

- Simulation as Core Mechanism: Conceptual knowledge consists of partial re-enactments or simulations of sensory, motor, and affective states. Understanding the word "kick," for example, involves simulating the experience of kicking [29].

- Bodily States as Constituent: Bodily and affective states are not merely modulators of cognition but are constitutive of cognitive processes. For instance, emotional states are re-enacted during social perception and judgment [25].

- Situated Action: Cognition is for action, and cognitive processes are deeply situated in specific contexts and environments. The Situated Action Cycle describes how perception, self-relevance assessment, affect, motivation, and action form a continuous loop [24].

Grounded cognition thus serves as a unifying perspective that stresses dynamic brain-body-environment interactions as the basis for both simple behaviors and complex cognitive skills [25].

Comparative Analysis: Core Theoretical Distinctions

Table 1: Core Theoretical Distinctions Between Classical and Grounded Frameworks

| Theoretical Feature | Classical Approach | Grounded Approach |
|---|---|---|
| Nature of Representation | Amodal, abstract symbols | Modal simulations, grounded in perception, action, and affect |
| Relationship to Modalities | Separate from, and independent of, sensory-motor systems | Intrinsically dependent on and integrated with sensory-motor systems |
| Role of the Body | Peripheral (input/output system) | Central (constitutive of cognitive processes) |
| Concept Boundaries | Discrete, defined by necessary and sufficient features | Fuzzy, based on family resemblance and typicality [26] |
| Primary Function | Abstract reasoning and symbol manipulation | Situated action and adaptive behavior |

Operationalization and Methodological Manifestations

The theoretical divide between classical and grounded approaches directly translates into fundamentally different research strategies and operational definitions. This is particularly evident in how each framework conceptualizes and measures cognitive phenomena.

Operationalizing Core Constructs

The challenge of operationalization—defining abstract concepts in measurable terms—is tackled differently by each paradigm [1]:

- Categorization: The classical view operationalizes categorization as a process of applying defining features. In contrast, the grounded approach, informed by prototype theory and exemplar theory, views it as a process of similarity comparison to typical examples or specific stored instances [26].

- Memory: Classical operationalizations focus on recall accuracy and reaction times in laboratory tasks. Grounded operationalizations might instead measure the reinstatement of sensory-motor brain patterns during memory retrieval.

- Abstract Concepts: This remains a significant challenge for grounded cognition. While concrete concepts like "apple" can be easily simulated sensorially, abstract concepts like "freedom" require more complex explanations, potentially involving situated conceptualizations across diverse contexts [29].
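The contrast between the two operationalizations of categorization can be made concrete with a toy example. The sketch below is an assumption-laden illustration: the binary feature encoding, the choice of defining features, and the exponential similarity function are all hypothetical modeling choices, not taken from [26]. It shows that a defining-features rule gives every member equal status, while a prototype comparison yields graded typicality.

```python
import math

# Hypothetical binary feature encoding, chosen only for illustration.
DEFINING_FEATURES = {"has_feathers": 1, "lays_eggs": 1}
BIRD_PROTOTYPE = {"has_feathers": 1, "can_fly": 1, "lays_eggs": 1}

def classical_is_member(item, defining=DEFINING_FEATURES):
    """Classical view: all-or-none membership based on defining features."""
    return all(item.get(feature) == value for feature, value in defining.items())

def prototype_typicality(item, prototype=BIRD_PROTOTYPE):
    """Prototype view: graded typicality that decays with distance from the prototype."""
    distance = math.sqrt(sum((item[f] - prototype[f]) ** 2 for f in prototype))
    return math.exp(-distance)

robin = {"has_feathers": 1, "can_fly": 1, "lays_eggs": 1}
penguin = {"has_feathers": 1, "can_fly": 0, "lays_eggs": 1}

for name, animal in [("robin", robin), ("penguin", penguin)]:
    member = classical_is_member(animal)
    typicality = prototype_typicality(animal)
    print(f"{name}: classical member={member}, prototype typicality={typicality:.2f}")
```

Under the classical rule both animals are simply birds, whereas the prototype measure ranks the robin as more typical than the penguin. The two operationalizations therefore predict different patterns in tasks such as typicality ratings or categorization speed.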

Representative Experimental Protocols

The following protocols illustrate how the grounded perspective is operationalized in laboratory research, providing concrete methodologies for investigating its claims.

Protocol 1: Vigilance Task with Thought Probes for Spontaneous Cognition

This protocol, designed to study involuntary thoughts like mind-wandering and involuntary autobiographical memories, exemplifies the grounded emphasis on spontaneous, situated cognition [30].

- Objective: To elicit and measure involuntary past and future thoughts in controlled laboratory conditions without contamination by deliberate retrieval.

- Key Components:

  - Low-Demand Ongoing Task: Participants perform a minimally demanding vigilance task (e.g., identifying infrequent target slides of vertical lines among many non-target horizontal lines). This undemanding context allows spontaneous thoughts to arise.

  - Incidental Cues: A pool of 270 short verbal phrases is presented during the task. Some phrases may serve as incidental triggers for task-unrelated thoughts.

  - Thought Probes: At 23 random intervals during the vigilance task, participants are interrupted and prompted to write down the content of their thoughts just before the probe. They also indicate whether the thought occurred spontaneously or deliberately.

- Procedure:

  - Participants are tested in a controlled laboratory setting to minimize distractions.

  - They complete the computerized vigilance task (average duration: 75 minutes).

  - After the task, participants review their thought descriptions and categorize them further (e.g., as referring to past or future events).

  - The collected qualitative data undergoes systematic coding by expert judges to ultimately identify involuntary autobiographical memories (IAMs) and involuntary future thoughts (IFTs).

- Rationale: The protocol avoids instructing participants to deliberately retrieve memories, thereby capturing the spontaneous, grounded nature of cognition as it occurs in the context of a simple, ongoing activity [30].
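The thought-probe component of this protocol depends on an unpredictable probe schedule. The sketch below shows one possible way to generate such a schedule. It uses the figures given above (23 probes, a task of about 75 minutes), but the fixed task duration, the minimum gap of 60 seconds, and the function name `schedule_probes` are assumptions for illustration; the source does not describe how probe timing was actually implemented in [30].

```python
import random

def schedule_probes(n_probes, duration_s, min_gap_s, seed=None):
    """Return sorted probe onset times (in seconds), each at least min_gap_s apart."""
    # Time left over once the mandatory gaps between probes are reserved.
    slack = duration_s - (n_probes - 1) * min_gap_s
    if slack <= 0:
        raise ValueError("Duration is too short for the requested probes and gap.")
    rng = random.Random(seed)
    # Draw sorted offsets within the slack, then shift each by its reserved gaps.
    base = sorted(rng.uniform(0, slack) for _ in range(n_probes))
    return [offset + i * min_gap_s for i, offset in enumerate(base)]

# 23 probes across a 75-minute session; the 60 s minimum gap is hypothetical.
probes = schedule_probes(n_probes=23, duration_s=75 * 60, min_gap_s=60, seed=1)

for i, onset in enumerate(probes[:3], start=1):
    print(f"probe {i}: {onset / 60:.1f} min")
```

Fixing the seed makes a given participant's schedule reproducible, which helps when the probe times later need to be aligned with coded thought reports.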

Protocol 2: Action-Compatibility and Motor Resonance Paradigms

These paradigms operationalize the core grounded claim that language understanding involves simulating actions and perceptual experiences.

- Objective: To demonstrate that processing words or sentences about actions activates motor programs congruent with those actions.

- Key Components:

  - Stimulus-Response Compatibility Tasks: Participants perform actions (e.g., power grasp vs. precision grip) while categorizing objects or sentences. Reaction times are faster when the response action is congruent with the action typically associated with the stimulus (e.g., responding with a power grasp to a large object like an apple) [25].

  - Neuroimaging and Brain Stimulation: Using fMRI to measure activity in specific motor regions, or TMS to probe motor-system excitability, while participants process action-related language (e.g., understanding "kick" activates leg areas of the motor cortex).

- Procedure (Example):

  - Participants are seated before a computer screen and a response device that can record different types of grips.

  - They are presented with words or pictures of objects that afford specific actions (e.g., a cherry - precision grip; a hammer - power grasp).

  - Their task is to make a categorical judgment (e.g., natural vs. man-made) using different grip responses.

  - The dependent variable is reaction time. The grounded prediction, supported by the studies reviewed in [25], is that responses are faster when the grip used for the response is compatible with the object's affordances.

- Rationale: This provides direct evidence for the automatic involvement of the motor system in conceptual processing, countering the classical view of an amodal, abstract conceptual system.
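The dependent measure in this paradigm is usually summarized as a compatibility effect: the difference in mean reaction time between incompatible and compatible trials. The sketch below computes that difference for one participant. The trial format, condition labels, and reaction times are invented for illustration, and restricting the analysis to correct trials is a common convention assumed here rather than a detail reported in the source.

```python
import statistics

def compatibility_effect(trials):
    """Mean RT difference (incompatible minus compatible), in ms, for one participant.

    trials: iterable of (condition, rt_ms, correct) tuples, where condition is
    "compatible" or "incompatible".
    """
    rts = {"compatible": [], "incompatible": []}
    for condition, rt_ms, correct in trials:
        if correct:  # assumption: only correct responses enter the RT analysis
            rts[condition].append(rt_ms)
    if not all(rts.values()):
        raise ValueError("Need at least one correct trial per condition.")
    return statistics.mean(rts["incompatible"]) - statistics.mean(rts["compatible"])

# Hypothetical trials: e.g., a power grasp response to a hammer is "compatible".
trials = [
    ("compatible", 520, True),
    ("compatible", 540, True),
    ("incompatible", 580, True),
    ("incompatible", 600, True),
    ("incompatible", 900, False),  # error trial, excluded from the means
    ("compatible", 560, True),
]

effect_ms = compatibility_effect(trials)
print(f"compatibility effect: {effect_ms} ms")
```

A positive value means slower responses on incompatible trials, which is the direction the grounded account predicts. In a full study these per-participant effects would then be tested across the sample.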

The Scientist's Toolkit: Key Research Reagents

Table 2: Essential Materials and Tools for Grounded Cognition Research

| Research Tool / Material | Primary Function in Research |
|---|---|
| Eye-Tracking Apparatus | Measures visual attention patterns as a window into cognitive processes; e.g., revealing how eye movements are part of the insight process in problem-solving [25]. |
| Neuroimaging (fMRI, EEG, MEG) | Identifies neural correlates of simulation; e.g., reactivation of visual areas during visual imagery or motor areas during action language comprehension [31] [7]. |
| Physiological Recorders (EDA, HRV) | Measures emotional arousal (EDA) and autonomic regulation (HRV) as embodied components of affective cognition [31]. |
| Virtual Reality (VR) Systems | Creates controlled, immersive environments to study situated cognition and the role of environmental context in guiding behavior and thought. |
| Vigilance Task Software | Provides the low-demand ongoing task context necessary for studying spontaneous thoughts like mind-wandering and involuntary memories [30]. |

Visualization of Theoretical Frameworks

The following diagrams, generated using Graphviz DOT language, illustrate the core architectural differences between the classical and grounded models of cognition.

The Classical "Sandwich" Model

The Situated Action Cycle in Grounded Cognition
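The two architectures named in the headings above can be approximated as simple Graphviz DOT graphs. The sketch below is a reconstruction from the prose only, not the original figures: the stage names and their order follow the descriptions of the sandwich model (perception, cognition, action) and the Situated Action Cycle (perception, self-relevance assessment, affect, motivation, action), and the helper `chain_to_dot` is a hypothetical name introduced here. The code builds DOT text as a string and does not require Graphviz to be installed.

```python
def chain_to_dot(name, stages, closed=False):
    """Render an ordered list of stages as a Graphviz DOT digraph string."""
    edges = list(zip(stages, stages[1:]))
    if closed:
        # Close the loop by linking the last stage back to the first.
        edges.append((stages[-1], stages[0]))
    lines = [f"digraph {name} {{", "  rankdir=LR;"]
    lines += [f'  "{src}" -> "{dst}";' for src, dst in edges]
    lines.append("}")
    return "\n".join(lines)

# Classical "sandwich" model: cognition sits between perception and action.
sandwich_dot = chain_to_dot("SandwichModel", ["Perception", "Cognition", "Action"])

# Situated Action Cycle: the same kind of chain, but closed into a loop.
cycle_dot = chain_to_dot(
    "SituatedActionCycle",
    ["Perception", "Self-relevance assessment", "Affect", "Motivation", "Action"],
    closed=True,
)

print(sandwich_dot)
print(cycle_dot)
```

The only structural difference between the two graphs is the closing edge from action back to perception, which captures the contrast drawn in the text: a linear pipeline with cognition in the middle versus a continuous loop with no separate cognitive stage.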

Implications for Research and Application

The theoretical divide between classical and grounded approaches has profound implications for research design, measurement, and application, particularly in fields like drug development where cognitive assessment is crucial.

Navigating Operationalization Challenges

Researchers face significant challenges in operationalizing cognitive terminology across these paradigms:

- Variable Definition: A construct like "attention" is operationalized as a resource allocation mechanism in classical frameworks but as an embodied, perception-action loop in grounded frameworks (e.g., via eye-tracking) [25] [7].

- Measurement Validity: Tasks developed from a classical perspective (e.g., list learning) may lack ecological validity from a grounded perspective, which emphasizes context-dependent cognition.

- Interdisciplinary Integration: The grounded framework necessitates combining methods from neuroscience, psychology, and even anthropology, requiring researchers to be fluent in multiple methodological languages [27] [31].

Application in Drug Development and Clinical Research

For professionals in drug development, the choice of cognitive framework directly impacts how cognitive outcomes are measured in clinical trials:

- Endpoint Selection: A classical approach might favor standardized neuropsychological batteries. A grounded approach might incorporate ecological momentary assessment or measures of sensorimotor integration to better capture real-world cognitive function.

- Mechanism of Action: A drug's effect on cognition could be reinterpreted through a grounded lens. For example, a drug that improves "memory" might not be enhancing an abstract storage system but rather facilitating the fidelity of sensory simulations.

- Individual Differences: The grounded emphasis on how the Situated Action Cycle "manifests itself differently across individuals" encourages a move away from one-size-fits-all cognitive assessments toward more personalized measures [24].

The theoretical divide between classical and grounded cognition represents a fundamental schism in how researchers conceptualize and study the mind. The classical approach, with its amodal symbols and modular architecture, offers a clean, computable model of cognition. The grounded approach, with its emphasis on simulation, embodiment, and situated action, presents a more biologically plausible and context-rich model. For researchers operationalizing cognitive terminology, this divide is inescapable. It influences every aspect of the research process, from hypothesis generation and task design to data interpretation and clinical application. Navigating this divide requires a clear understanding of the underlying assumptions of each framework and a thoughtful approach to selecting methodologies that align with one's theoretical commitments. As the field progresses, the most productive path forward may lie not in choosing one framework exclusively, but in developing integrative models that can account for the strengths of both perspectives, ultimately leading to a more complete understanding of human cognition.

The replication crisis, characterized by the failure to reproduce influential scientific findings, poses a significant challenge to research credibility across disciplines. While statistical shortcomings such as p-hacking and low power have received substantial attention, this whitepaper argues that conceptual confusion—the failure to develop and operationalize coherent theoretical frameworks—represents a fundamental, yet underappreciated, driver of this crisis. Drawing on evidence from social psychology, consciousness studies, and methodological research, we examine how vague constructs and unvalidated assumptions undermine the reliability of empirical evidence. By framing these issues within the context of cognitive terminology operationalization challenges, this analysis provides researchers, particularly in drug development, with diagnostic frameworks and methodological solutions to enhance theoretical rigor and empirical trustworthiness.

The replication crisis has predominantly been diagnosed as a statistical problem, with solutions focusing on increasing sample sizes, adopting stricter p-value thresholds, and eliminating questionable research practices [32] [33]. However, this technical focus often overlooks a more foundational issue: the quality and clarity of the theoretical concepts being tested. Statistical reforms, while valuable, treat symptoms rather than causes when studies investigate poorly conceptualized phenomena.

As one analysis notes, "the fundamental problem with a lot of this bad research is not the bad statistics but rather the bad substantive theory, along with bad connections between theory and data. The bad statistics enables the bad science to appear successful; it does not in itself make the science bad" [34]. This whitepaper examines how conceptual confusion manifests across research domains, creates cognitive challenges for operationalization, and ultimately fuels the replication crisis. We propose that addressing these theoretical weaknesses is a prerequisite for producing reliable, replicable science, particularly in high-stakes fields like drug development, where the costs of irreproducibility are substantial.

Theoretical Foundations: The Conceptual Architecture of Research

Defining Conceptual Confusion in Scientific Research

Conceptual confusion refers to the lack of clarity, precision, and consensus regarding the fundamental constructs underlying a research domain. This phenomenon manifests in several ways:

- Ambiguous Construct Definitions: Core concepts lack operational specificity, allowing different researchers to interpret and measure the same concept in divergent ways [35]

- Poor Theory-Data Alignment: Theoretical frameworks fail to specify testable predictions or clear connections between abstract concepts and empirical observations [34]

- Unvalidated Assumptions: Research builds upon foundational premises that remain untested or inadequately specified [33]

In consciousness studies, for example, researchers ostensibly agree they are studying "what it is like to be" in a conscious state [35]. However, deeper examination reveals "widespread disagreement about what exactly what it is like amounts to, 'how much' there is of it, what we can take from how it subjectively appears, where to look for it, what it takes to solve the hard problem, what theories of consciousness (should) attempt to explain, and what counts as an explanation" [35]. This conceptual fragmentation persists despite surface consensus on terminology.

The Cognitive Psychology of Operationalization Challenges

The process of translating abstract theoretical concepts into measurable variables presents significant cognitive demands that amplify conceptual confusion. The Effort Monitoring and Regulation (EMR) model integrates self-regulated learning and cognitive load theory to explain how researchers manage complex cognitive tasks [13]. Several factors contribute to operationalization challenges:

- Cognitive Load Management: Complex theoretical frameworks with multiple interacting components exceed working memory capacity, leading to simplification that sacrifices conceptual precision [36]

- Metacognitive Biases: Researchers may misinterpret their subjective experience of conceptual understanding, overestimating their grasp of theoretical mechanisms [13]

- Heuristic Decision-Making: Under conditions of conceptual ambiguity, researchers default to familiar measurement approaches rather than developing optimally aligned operationalizations [13]

These cognitive challenges are particularly acute in interdisciplinary research, where teams must negotiate terminology and conceptual frameworks across disciplinary boundaries.

Domain Analysis: Conceptual Confusion Across Research Fields

Social Psychology and the Theoretical Integrity Deficit

Social psychology represents a canonical example of how conceptual confusion drives replication failures. The field's experience with social priming research illustrates this dynamic. Initial dramatic findings captured scientific and public imagination, suggesting that subtle environmental cues could unconsciously influence complex behaviors [34].

However, theoretical underpinnings proved inadequate upon scrutiny. Priming researchers "were repeatedly snared by conceptual and theoretical traps of their own devising" [34]. For instance, when initial effects failed to replicate, theorists introduced "moderators" such as desires to affiliate or gender differences to explain discrepancies. While theoretically possible, these post-hoc adjustments "undermined the generalizability of their experimental results" without providing falsifiable theoretical refinements [34].

The central theoretical claim—that "automaticity, not free will or intentionality, powerfully governs behavior"—proved too vague and expansive to generate specific, testable predictions [34]. This conceptual ambiguity enabled the persistence of research programs despite accumulating contradictory evidence.

Consciousness Studies: Proliferation Without Progress

Consciousness research exemplifies how conceptual confusion can persist as a field matures, with the domain characterized by "an abundance of theories and no good way to decide between them" [35]. The field currently offers approximately two dozen viable theories, each with some empirical support, yet lacks established parameters for theoretical evaluation [35].

This theoretical proliferation stems from foundational disagreements about the explanatory target itself. As researchers note, "as a field, we do agree that there is something about which we can know something (i.e., we agree that there is a phenomenon). But we do not agree on the characteristics of the phenomenon or the parameters for investigating it. Consequently, we do not agree on what a theory should explain" [35].

The absence of conceptual consensus manifests in divergent research approaches that produce non-comparable evidence, fundamentally limiting theoretical progress. Unlike natural sciences where empirical anomalies drive theoretical refinement, consciousness research lacks the conceptual coordination necessary for such cumulative progress.

Quantitative Research: Statistical Sophistication Masks Conceptual Weakness

Even highly quantitative fields face conceptual challenges, particularly when sophisticated statistical methods obscure theoretical deficiencies. The replication crisis has revealed how technical expertise can outpace conceptual clarity, with researchers sometimes deploying advanced statistical techniques without adequate attention to theoretical foundations [33].

Statistical misspecification—"invalid probabilistic assumptions imposed on one's data"—represents a frequent consequence of conceptual confusion [33]. When researchers lack clear theoretical models of causal mechanisms, they often default to conventional statistical models that misrepresent underlying processes. This problem is exacerbated by "the uninformed and recipe-like implementation of frequentist statistics without proper understanding of (a) the invoked probabilistic assumptions and their validity for the data used, (b) the reasoned implementation and interpretation of the inference procedures and their error probabilities, and (c) warranted evidential interpretations of inference results" [33].
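To make the idea of a misspecified model concrete, the sketch below fits a straight line to simulated data and then asks whether a nonlinear term of the fitted values explains additional variance. It is a simplified, RESET-style check, not a method taken from the cited sources. The simulated data, the function name `reset_f_statistic`, and the choice of a single squared term are all illustrative assumptions.

```python
import numpy as np


def reset_f_statistic(x: np.ndarray, y: np.ndarray) -> float:
    """F statistic for adding the squared fitted values to a linear model.

    A large value suggests the straight-line model omits structure
    that is present in the data (a sign of misspecification).
    """
    n = len(y)
    # Restricted model: y = b0 + b1 * x
    X_restricted = np.column_stack([np.ones(n), x])
    beta, *_ = np.linalg.lstsq(X_restricted, y, rcond=None)
    fitted = X_restricted @ beta
    rss_restricted = np.sum((y - fitted) ** 2)

    # Augmented model: add fitted**2 as one extra regressor
    X_full = np.column_stack([X_restricted, fitted ** 2])
    beta_full, *_ = np.linalg.lstsq(X_full, y, rcond=None)
    rss_full = np.sum((y - X_full @ beta_full) ** 2)

    df_resid = n - X_full.shape[1]
    # One added regressor, so the numerator has 1 degree of freedom
    return float((rss_restricted - rss_full) / (rss_full / df_resid))


# Hypothetical data: one truly linear process, one with curvature
rng = np.random.default_rng(0)
x = rng.uniform(0.0, 4.0, size=300)
noise = rng.normal(0.0, 1.0, size=300)
y_linear = 1.0 + 2.0 * x + noise
y_curved = 1.0 + 2.0 * x + 1.5 * x ** 2 + noise

f_linear = reset_f_statistic(x, y_linear)
f_curved = reset_f_statistic(x, y_curved)
print(f"linear process: F = {f_linear:.2f}")
print(f"curved process: F = {f_curved:.2f}")
```

A straight line fitted to the curved process will often still yield a highly significant slope. This illustrates the point made above: a conventional model can look successful while misrepresenting the underlying process.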

Table 1: Manifestations of Conceptual Confusion Across Research Domains

| Research Domain | Primary Conceptual Challenge | Impact on Replicability | Example |
|---|---|---|---|
| Social Psychology | Overly flexible theoretical constructs | Enables post-hoc explanations for failed replications | Social priming theories incorporating unlimited moderators [34] |
| Consciousness Studies | Lack of agreement on explanatory target | Precludes meaningful theory comparison | Proliferation of theories without consensus on what constitutes consciousness [35] |
| Quantitative Research | Statistical models disconnected from theoretical mechanisms | Produces statistically significant but theoretically meaningless findings | Imposing invalid probabilistic assumptions on data [33] |
| Drug Development | Inadequate disease mechanism models | High failure rates in clinical translation | Target validation based on incomplete pathological models |

Cognitive and Methodological Mechanisms

How Conceptual Confusion Generates Replication Failure

Conceptual confusion drives replication failure through several interconnected mechanisms:

- Theoretical Flexibility: Vague constructs allow researchers to explain both positive and negative results through post-hoc adjustments, protecting theories from falsification [34]

- Operational Heterogeneity: Different research groups operationalize the same concept in divergent ways, producing non-comparable results [35]

- Cognitive Load Mismanagement: Complex, poorly specified theories overwhelm researchers' cognitive capacity, leading to methodological shortcuts and errors [13] [36]

- Incentive Misalignment: Academic reward systems prioritize novel, statistically significant findings over theoretical precision, exacerbating conceptual drift [32]

These mechanisms create a research environment where studies systematically produce unreliable evidence, as the cognitive and institutional structures fail to promote conceptual clarity.
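The cost of theoretical flexibility can be shown with a short calculation. Suppose a theory permits any of several post-hoc moderators to "rescue" a null result, and each moderator is tested separately at the conventional 0.05 level. The sketch below computes the chance that at least one test comes out significant when no true effect exists. It assumes the tests are independent, which is a simplification, and the function name is illustrative.

```python
def familywise_false_positive_rate(n_tests: int, alpha: float = 0.05) -> float:
    """Probability of at least one false positive across independent tests.

    Assumes every null hypothesis is true and the tests are independent.
    """
    return 1.0 - (1.0 - alpha) ** n_tests


for k in (1, 5, 10, 20):
    rate = familywise_false_positive_rate(k)
    print(f"{k:>2} candidate moderators -> {rate:.3f}")
```

Under these assumptions the rate rises from 0.050 with one test to about 0.226 with five, 0.401 with ten, and 0.642 with twenty. A theory that leaves the set of admissible moderators open therefore makes some "confirming" result likely even when no effect exists.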

The Role of Cognitive Load in Research Quality

Cognitive Load Theory (CLT) provides a framework for understanding how conceptual complexity impacts research quality. CLT distinguishes between three types of cognitive load that influence researchers' capacity to conduct rigorous science:

- Intrinsic Load: The inherent complexity of the research concept itself

- Extraneous Load: Additional cognitive demands imposed by poorly designed theoretical frameworks or methodological approaches

- Germane Load: Productive cognitive effort devoted to constructing coherent theoretical understanding [36]

When theoretical frameworks impose excessive extraneous load through conceptual confusion, researchers have diminished capacity for the germane processing necessary for rigorous operationalization and interpretation. This dynamic is particularly problematic for interdisciplinary research, where teams must integrate terminology and conceptual frameworks across fields.

Table 2: Cognitive Load Components in Research Operationalization

| Load Type | Definition | Impact on Research Quality | Mitigation Strategies |
|---|---|---|---|
| Intrinsic Load | Inherent complexity of research concepts | Unavoidable, but can be managed through conceptual decomposition | Break complex constructs into component processes; develop intermediate theories |
| Extraneous Load | Cognitive demands from poorly structured theoretical frameworks | Reduces capacity for rigorous methodology; increases errors | Simplify theoretical presentations; clarify construct relationships; use visual conceptual maps |
| Germane Load | Effort devoted to schema construction and theoretical integration | Enhances depth of understanding and methodological alignment | Provide conceptual scaffolding; encourage explicit theory-data linking; implement collaborative conceptual refinement |

Solutions and Methodological Recommendations

Conceptual Clarity Framework for Research Design

Addressing conceptual confusion requires systematic approaches to theoretical development and operationalization. The following framework provides a structured approach to enhancing conceptual clarity:

- Construct Specification: Precisely define theoretical constructs, specifying both what they include and exclude. Establish clear boundaries between related concepts [35]

- Assumption Auditing: Systematically identify and test foundational assumptions underlying theoretical frameworks before building research programs upon them [33]

- Operational Alignment: Ensure measurement approaches directly reflect the theoretical construct rather than convenient proxies [34]

- Theoretical Falsifiability: Develop theories that generate specific, testable predictions and specify conditions under which they would be considered falsified [34]
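One lightweight way to apply the four steps above is to record each construct in a structured form and flag whatever is still unspecified before data collection begins. The sketch below is only an illustration of that idea. The class `ConstructSpec`, its field names, and the working-memory example are hypothetical and do not come from the cited sources.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class ConstructSpec:
    """A written specification for one theoretical construct."""
    name: str
    definition: str
    includes: List[str] = field(default_factory=list)     # what the construct covers
    excludes: List[str] = field(default_factory=list)     # neighbouring concepts ruled out
    assumptions: List[str] = field(default_factory=list)  # premises to audit
    measures: List[str] = field(default_factory=list)     # planned operationalizations
    falsifiers: List[str] = field(default_factory=list)   # results that would count against it

    def gaps(self) -> List[str]:
        """Return the framework steps that are still unspecified."""
        checks = {
            "construct specification: no inclusion criteria": self.includes,
            "construct specification: no exclusion criteria": self.excludes,
            "assumption auditing: no assumptions listed": self.assumptions,
            "operational alignment: no measures listed": self.measures,
            "theoretical falsifiability: no falsifying outcomes listed": self.falsifiers,
        }
        return [message for message, value in checks.items() if not value]


# Hypothetical, deliberately incomplete specification
spec = ConstructSpec(
    name="working memory",
    definition="Short-term maintenance and manipulation of task-relevant information",
    includes=["maintenance under interference"],
    measures=["n-back accuracy"],
)

for gap in spec.gaps():
    print("missing ->", gap)
```

For this example, the check reports three gaps: exclusion criteria, assumptions, and falsifying outcomes. Any format that forces the same fields to be written down before measurement begins would serve the same purpose.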

Implementing this framework requires dedicating substantial resources to theoretical development before empirical investigation, a shift from current practices that often prioritize rapid data collection over conceptual refinement.
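Operational alignment can be checked empirically with a multitrait-multimethod comparison. Measures of the same construct obtained by different methods should correlate strongly (convergent validity), while measures of different constructs should not (discriminant validity). The sketch below simulates two traits, each measured by two methods, and summarizes both kinds of correlation. The trait names, method names, and loadings are invented for illustration, and the simulated data stand in for real measurements.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 2000

# Hypothetical latent traits and method-specific variance (all independent)
traits = {"memory": rng.normal(size=n), "attention": rng.normal(size=n)}
methods = {"task": rng.normal(size=n), "survey": rng.normal(size=n)}

# Each observed measure = trait signal + shared method variance + noise
measures = {}
for trait_name, trait_scores in traits.items():
    for method_name, method_scores in methods.items():
        measures[(trait_name, method_name)] = (
            0.8 * trait_scores + 0.3 * method_scores + 0.5 * rng.normal(size=n)
        )

keys = list(measures)
# Rows are variables, so corrcoef returns a 4 x 4 correlation matrix
corr = np.corrcoef(np.array([measures[k] for k in keys]))


def mean_corr(select) -> float:
    """Average correlation over the measure pairs chosen by `select`."""
    values = [
        corr[i, j]
        for i in range(len(keys))
        for j in range(i + 1, len(keys))
        if select(keys[i], keys[j])
    ]
    return float(np.mean(values))


# Same trait, different method
convergent = mean_corr(lambda a, b: a[0] == b[0] and a[1] != b[1])
# Different traits, regardless of method
discriminant = mean_corr(lambda a, b: a[0] != b[0])

print(f"convergent (same trait, different method): {convergent:.2f}")
print(f"discriminant (different traits):           {discriminant:.2f}")
```

In real data, a small gap between the two averages would suggest that the measures track method variance or a neighbouring construct rather than the intended one.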

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Methodological Tools for Addressing Conceptual Confusion

| Tool Category | Specific Method/Approach | Function | Application Context |
|---|---|---|---|
| Conceptual Specification Tools | Construct decomposition diagrams | Visualize theoretical components and relationships | Early theory development; interdisciplinary collaboration |
| Assumption Validation Methods | Specification tests; robustness checks | Verify statistical model assumptions; test theoretical premises | Model building; experimental design |
| Operational Alignment Frameworks | Multi-trait multi-method matrices | Establish convergent and discriminant validity | Measurement development; construct validation |