Beyond the Jingle-Jangle Fallacy: Establishing Discriminant Validity in Cognitive Terminology Measures for Clinical Research and Drug Development

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on establishing and validating the discriminant validity of cognitive terminology measures. It explores the fundamental principles that distinguish related constructs like cognitive frailty, food addiction, and binge eating. The content delves into advanced methodological approaches, including structural equation modeling and multi-trait multi-method analysis, for testing discriminant validity. It addresses common challenges such as poor psychometric reporting and low ecological validity, offering practical solutions for optimization. Through comparative analysis of tools like the Reading the Mind in the Eyes Test (RMET) and digital cognitive batteries, the article provides a framework for selecting and validating precise measurement tools, which is critical for ensuring accurate diagnosis, treatment efficacy assessment, and successful clinical trials in neurology and psychiatry.

What is Discriminant Validity? Core Principles and Critical Importance for Cognitive Assessment

In the rigorous world of cognitive terminology research, the precision of measurement tools can determine the success or failure of scientific endeavors. Discriminant validity stands as a fundamental psychometric principle ensuring that assessment instruments measure distinct, non-overlapping constructs. For researchers, scientists, and drug development professionals, establishing discriminant validity provides confidence that a cognitive test genuinely captures its intended construct—whether it be working memory, processing speed, or executive function—rather than inadvertently measuring unrelated traits or abilities. Without robust evidence of discriminant validity, clinical trials may draw flawed conclusions, cognitive screening tools may misclassify patients, and pharmacological interventions may target misidentified cognitive processes.

This guide examines how discriminant validity is defined, tested, and established across cognitive assessment methodologies, providing objective comparisons of measurement approaches and their empirical support.

Theoretical Foundations: What is Discriminant Validity?

Discriminant validity (sometimes called divergent validity) provides evidence that a test or measurement is not correlated too highly with measures from which it should differ. It verifies that an assessment tool is cleanly measuring its intended construct without unexpected overlap with theoretically distinct concepts [1].

The importance of this form of validity becomes clear when considering its counterpart—convergent validity. While convergent validity demonstrates that measures of similar constructs are positively correlated, discriminant validity establishes that measures of unrelated constructs show minimal relationship [1]. A trustworthy cognitive measure must demonstrate both: it should correlate with tests measuring similar cognitive functions while remaining distinct from assessments measuring unrelated abilities or traits.

In practical research terms, if a new test for quantitative reasoning also requires high reading comprehension, it lacks discriminant validity between these abilities. A student with strong math skills but reading challenges might perform poorly not due to mathematical deficiency, but because the test inadvertently measures reading ability [1]. Similarly, in clinical settings, a diagnostic questionnaire must successfully discriminate between anxiety and depression—conditions that often co-occur but require different treatment approaches [1].

Methodological Approaches: Testing for Discriminant Validity

Researchers employ several statistical methods to evaluate discriminant validity, each with distinct strengths and applications in cognitive research.

Table 1: Statistical Methods for Establishing Discriminant Validity

| Method | Procedure | Interpretation | Common Applications |
|---|---|---|---|
| Correlation Analysis | Calculating correlation coefficients between measures of different constructs | Correlations near zero indicate good discriminant validity | Initial validation studies; screening measures [1] |
| Fornell-Larcker Criterion | Comparing the square root of AVE for each construct with correlations between constructs | Each construct should share more variance with its measures than with other constructs | Structural equation modeling; latent variable analyses [1] |
| Heterotrait-Monotrait Ratio (HTMT) | Ratio of between-construct correlations to within-construct correlations | Values below 0.85-0.90 indicate good discriminant validity | Modern validation studies; confirmatory factor analysis [1] |
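The HTMT row in Table 1 can be made concrete with a small calculation. The sketch below is a minimal illustration, not a validated implementation: the item names (`wm1`, `ps1`, and so on) and every correlation value are hypothetical, and it assumes all items are keyed in the same direction so that raw correlations can be averaged. It divides the mean between-construct item correlation by the geometric mean of the two mean within-construct item correlations.

```python
from itertools import combinations, product
from math import sqrt


def mean(values):
    values = list(values)
    return sum(values) / len(values)


def htmt(corr, items_a, items_b):
    """Heterotrait-monotrait ratio for two constructs.

    corr maps an item pair (i, j) to its correlation; either key order works.
    """
    def r(i, j):
        return corr[(i, j)] if (i, j) in corr else corr[(j, i)]

    between = mean(r(i, j) for i, j in product(items_a, items_b))
    within_a = mean(r(i, j) for i, j in combinations(items_a, 2))
    within_b = mean(r(i, j) for i, j in combinations(items_b, 2))
    return between / sqrt(within_a * within_b)


# Hypothetical item correlations for two constructs (illustrative values only).
wm_items = ["wm1", "wm2", "wm3"]   # working memory items
ps_items = ["ps1", "ps2", "ps3"]   # processing speed items
item_corr = {}
for pair in combinations(wm_items, 2):
    item_corr[pair] = 0.60          # within working memory
for pair in combinations(ps_items, 2):
    item_corr[pair] = 0.50          # within processing speed
for pair in product(wm_items, ps_items):
    item_corr[pair] = 0.30          # between the two constructs

ratio = htmt(item_corr, wm_items, ps_items)
below_cutoff = ratio < 0.85         # the stricter cutoff listed in Table 1
print(f"HTMT = {ratio:.2f}, below 0.85: {below_cutoff}")
```

In practice the item correlations would come from real response data, and dedicated SEM software would usually report HTMT alongside confidence intervals.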

Discriminant Validity in Action: Cognitive Assessment Case Studies

Case Study 1: Social Anxiety Interpretation Bias Measures

Research on interpretation bias measures in social anxiety provides a compelling example of discriminant validity testing. A 2025 study evaluated four cognitive bias measures ranging from implicit/automatic to explicit/reflective processes [2]. The researchers examined whether these measures, while related, captured distinct aspects of interpretation bias.

The Scrambled Sentences Task (SST) and Interpretation and Judgmental Bias Questionnaire (IJQ) demonstrated good reliability and strong correlations with social anxiety symptoms, supporting their convergent validity. However, crucial for discriminant validity, each measure accounted for unique variance in anxiety symptoms beyond what was captured by the other measures [2]. This suggests that while related, these instruments tap into meaningfully distinct cognitive processes—a finding with direct implications for both research and clinical assessment.

Case Study 2: Functional Cognition in Older Adults

A 2025 cross-sectional study with 259 community-dwelling older adults compared performance-based instrumental activities of daily living (IADL) assessments with the Montreal Cognitive Assessment (MoCA) [3]. While the assessments showed expected relationships (supporting convergent validity), the performance-based IADL measures identified functional difficulties not captured by the MoCA alone in some borderline or unimpaired individuals [3].

This demonstrates discriminant validity at a clinical level—the performance-based assessments measure related but distinct constructs (functional cognition versus pure cognitive screening), providing unique information essential for comprehensive evaluations and care planning for older adults [3].

Case Study 3: Digital Cognitive Assessment in Dementia Staging

A 2025 retrospective study of a digital cognitive assessment (BrainCheck) examined its relationship with the Dementia Severity Rating Scale (DSRS) and Katz Index of Independence in activities of daily living (ADL) [4]. While moderate correlations were found between the digital cognitive overall score and both DSRS (r = -0.53) and ADL (r = 0.37), the modest strength of these relationships provides evidence of discriminant validity [4].

The digital cognitive measure shares variance with functional assessments but clearly captures distinct constructs, supporting its use as a complementary tool rather than a redundant measure in dementia staging.

Experimental Protocols for Establishing Discriminant Validity

Protocol 1: Multi-Trait Multi-Method Matrix

The multi-trait multi-method (MTMM) matrix represents a comprehensive approach for simultaneously evaluating convergent and discriminant validity [1].
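As a rough sketch of how an MTMM matrix is read, the example below sorts the correlations among four hypothetical measures (two traits, each assessed by two methods) into the classic blocks and checks whether the convergent values exceed every heterotrait value. The measure names, traits, methods, and correlations are all invented for illustration, and the single comparison shown is only one of the checks usually applied to an MTMM matrix.

```python
from itertools import combinations

# (trait, method) for each measure; all names and values are hypothetical.
measures = {
    "WM_paper": ("working_memory", "paper"),
    "WM_digital": ("working_memory", "digital"),
    "PS_paper": ("processing_speed", "paper"),
    "PS_digital": ("processing_speed", "digital"),
}

corr = {
    ("WM_paper", "WM_digital"): 0.70,
    ("WM_paper", "PS_paper"): 0.35,
    ("WM_paper", "PS_digital"): 0.20,
    ("WM_digital", "PS_paper"): 0.25,
    ("WM_digital", "PS_digital"): 0.40,
    ("PS_paper", "PS_digital"): 0.65,
}


def block_of(a, b):
    """Name the MTMM block that the pair (a, b) belongs to."""
    same_trait = measures[a][0] == measures[b][0]
    same_method = measures[a][1] == measures[b][1]
    if same_trait:
        # Distinct measures of one trait differ in method in this design.
        return "monotrait-heteromethod"
    if same_method:
        return "heterotrait-monomethod"
    return "heterotrait-heteromethod"


blocks = {}
for a, b in combinations(measures, 2):
    blocks.setdefault(block_of(a, b), []).append(corr[(a, b)])

convergent = blocks["monotrait-heteromethod"]
heterotrait = blocks["heterotrait-monomethod"] + blocks["heterotrait-heteromethod"]

# Convergent correlations should exceed all heterotrait correlations.
pattern_supported = min(convergent) > max(heterotrait)
print(blocks)
print("Convergent > heterotrait:", pattern_supported)
```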

Protocol 2: Cognitive Domain Specificity Testing

In neuropsychological assessment, establishing that tests measure specific cognitive domains rather than general cognitive impairment requires careful discriminant validity testing [5].

Comparative Analysis: Cognitive Measures with Established Discriminant Validity

Table 2: Cognitive Assessment Measures with Empirical Discriminant Validity Evidence

| Assessment Tool | Primary Construct Measured | Established Discrimination From | Statistical Evidence |
|---|---|---|---|
| Scrambled Sentences Task (SST) [2] | Interpretation bias in social anxiety | Other interpretation bias measures; general anxiety | Accounts for unique variance in social anxiety (p < .05) |
| Weekly Calendar Planning Activity (WCPA-17) [3] | Functional cognition & executive function | Pure cognitive screening (MoCA) | Identifies functional deficits not captured by MoCA in some cases |
| BrainCheck Digital Assessment [4] | Cognitive performance across multiple domains | Functional ability measures (DSRS, ADL) | Moderate correlations with DSRS (r = -0.53) and ADL (r = 0.37) |
| Mini-q Speeded Reasoning Test [6] | General cognitive abilities | Processing speed; working memory (as separate constructs) | Working memory accounts for 54% of association with g-factor |

Table 3: Essential Methodological Resources for Discriminant Validity Research

| Resource Type | Specific Tools/Techniques | Application in Discriminant Validity |
|---|---|---|
| Statistical Software | R (lavaan package), Mplus, SPSS AMOS, Python (SciPy) | Implementation of HTMT, Fornell-Larcker, factor analysis |
| Cognitive Assessment Batteries | WAIS, CANTAB, BrainCheck, MoCA, PASS | Source measures for establishing discriminant relationships |
| Psychometric Methods | Confirmatory Factor Analysis, Structural Equation Modeling, Multi-Trait Multi-Method Matrix | Statistical frameworks for testing discriminant validity |
| Reference Databases | BrainCheck normative database [4], Population-based cognitive norms | Age- and device-specific reference values for accurate comparison |

Establishing discriminant validity remains a fundamental requirement for developing cognitively precise assessment tools in basic research and clinical trials. The case studies and methodologies presented demonstrate that even highly correlated cognitive measures can provide unique information when proper discriminant validation procedures are followed.

For drug development professionals, these validation approaches are particularly crucial when evaluating whether cognitive endpoints in clinical trials represent specific target engagement versus generalized cognitive effects. The statistical frameworks and experimental protocols outlined provide practical pathways for strengthening measurement precision, ultimately supporting more accurate assessment of cognitive functioning and treatment efficacy across research and clinical applications.

In psychological science, creative efforts to propose new constructs have often outpaced rigorous investigation into how these constructs relate to existing ones. This has led to the proliferation of jingle and jangle fallacies—conceptual errors that undermine scientific communication and knowledge accumulation [7]. The jingle fallacy occurs when researchers assume that two measures labeled with the same name assess the same construct, when they actually measure different phenomena. Conversely, the jangle fallacy occurs when different labels are used for measures that essentially capture the same underlying construct [8]. These fallacies emerge from the vague linkage between psychological theories and their operationalization in empirical studies, compounded by variations in study designs, methodologies, and statistical procedures [9].

For cognitive terminology measures in particular, these fallacies present significant threats to validity. They can lead to bifurcated literatures, wasted research efforts, and constructs without unique psychological importance [7]. In drug development, where precise cognitive assessment is critical for evaluating treatment efficacy and safety, such conceptual confusion can have direct implications for patient care and regulatory decision-making [10] [11]. This guide examines the identification and prevention of these fallacies through the lens of discriminant validity, providing researchers with methodological frameworks to enhance conceptual clarity in cognitive research.

Defining the Fallacies and Their Consequences

Historical Foundations and Modern Manifestations

The two fallacies were named more than two decades apart. Thorndike (1904) described the jingle fallacy as assuming that measures sharing the same label capture the same construct [7]. Truman Lee Kelley then coined the complementary term in his 1927 book Interpretation of Educational Measurements, defining the jangle fallacy as the inference that two measures with different names measure different constructs [8].

These fallacies remain pervasive across psychological disciplines. In cognitive research, they manifest when operationalizations diverge from theoretical constructs or when methodological variations create the illusion of distinct constructs where none exist [9]. For example, different measures all purporting to assess "metacognition" may actually capture related but distinct cognitive processes, while measures labeled as "metacognitive ability," "cognitive monitoring," and "meta-reasoning" might essentially assess the same underlying construct [12].

Implications for Drug Development and Cognitive Safety Assessment

In clinical drug development, jingle-jangle fallacies present particular challenges for cognitive safety assessment and efficacy evaluation [10] [11]. When cognitive constructs lack clear definitional boundaries:

- Regulatory decisions may be based on inconsistent or misleading cognitive endpoints

- Cross-study comparisons become problematic, hindering meta-analyses

- Clinical trial design may incorporate redundant or irrelevant cognitive measures

- Risk-benefit assessments of medications may be compromised by measurement unreliability

The U.S. Food and Drug Administration has emphasized the importance of sensitive cognitive measurements, especially for drugs with potential central nervous system effects [10]. However, without clear resolution of jingle-jangle fallacies in cognitive terminology, such assessments remain challenging.

Empirical Examples of Jingle-Jangle Fallacies in Cognitive Research

Case Study 1: Self-Belief Constructs

A comprehensive investigation of nine self-belief constructs (self-efficacy, self-competence, self-confidence, self-esteem, self-worth, self-value, self-regard, self-liking, and self-respect) revealed significant overlap suggestive of jangle fallacies [13]. Factor analyses indicated that a two-factor solution best fit the data, with self-efficacy constituting one factor and all other constructs loading on a second factor. This suggests that many supposedly distinct self-belief constructs may represent conceptual redundancies, with self-efficacy potentially being the exception.

Table 1: Factor Loadings of Self-Belief Constructs

| Construct | Factor 1 (Self-Efficacy) | Factor 2 (Global Self-Evaluation) |
|---|---|---|
| Self-efficacy | 0.82 | 0.24 |
| Self-esteem | 0.18 | 0.79 |
| Self-worth | 0.22 | 0.76 |
| Self-confidence | 0.31 | 0.71 |
| Self-competence | 0.41 | 0.58 |

Case Study 2: Metacognition Measures

A comprehensive assessment of 17 different measures of metacognition found that while all measures were valid, they showed varying dependencies on nuisance variables such as task performance, response bias, and metacognitive bias [12]. This illustrates the jingle fallacy risk—different measures all purporting to assess "metacognitive ability" may actually be influenced by different confounding factors, potentially capturing different aspects of the metacognitive process.

Table 2: Properties of Selected Metacognition Measures

| Measure | Dependence on Task Performance | Dependence on Response Bias | Dependence on Metacognitive Bias | Split-Half Reliability | Test-Retest Reliability |
|---|---|---|---|---|---|
| AUC2 | High | Low | Low | 0.95 | 0.42 |
| Gamma | High | Medium | Medium | 0.94 | 0.38 |
| Meta-d' | Medium | Low | Low | 0.96 | 0.45 |
| M-Ratio | Low | Low | Low | 0.93 | 0.41 |
| Meta-noise | Low | Low | Low | 0.91 | 0.39 |

Case Study 3: Cognitive Ability and Judgment

Research on cognitive skills in strategic behavior has demonstrated distinctions between cognitive ability (fluid intelligence) and judgment as separate constructs [14]. While both predicted strategic behavior in beauty contest games, they exhibited different behavioral patterns: higher cognitive ability predicted more frequent choices of zero (the Nash equilibrium), while better judgment predicted less frequent choices of zero. When both were included in models, cognitive ability remained a significant predictor while judgment became insignificant, suggesting that fluid intelligence drives strategic thinking, while other facets of judgment influence different aspects of behavior.

Methodological Approaches for Detecting and Preventing Fallacies

Extrinsic Convergent Validity (ECV)

Extrinsic convergent validity provides a formal approach to evaluating construct overlap by testing whether two measures of the same construct—or two measures of seemingly different constructs—have comparable correlations with external criteria [7] [15]. ECV evidence is demonstrated when two measures not only correlate highly with each other but also show similar patterns of correlation with a set of external variables.

The statistical framework for testing ECV involves hypothesis tests for dependent correlations, which can be implemented through:

- Analytical approaches using tests of dependent correlations

- Resampling approaches (e.g., bootstrapping)

- Structural equation modeling with model comparisons
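The resampling option above can be sketched as a percentile bootstrap for the difference between two dependent correlations, r(A, criterion) minus r(B, criterion). Everything in this example is an assumption made for illustration: the data are simulated, the function names are hypothetical, and the number of resamples is kept small. A confidence interval that includes zero is consistent with, but does not prove, comparable external correlations.

```python
import random
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def bootstrap_diff_ci(a, b, criterion, n_boot=1000, alpha=0.05, seed=0):
    """Percentile CI for r(a, criterion) - r(b, criterion).

    Participants are resampled as whole rows, which preserves the
    dependence between the two correlations.
    """
    rng = random.Random(seed)
    n = len(a)
    diffs = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]
        crit = [criterion[i] for i in idx]
        r_a = pearson([a[i] for i in idx], crit)
        r_b = pearson([b[i] for i in idx], crit)
        diffs.append(r_a - r_b)
    diffs.sort()
    low = diffs[int((alpha / 2) * n_boot)]
    high = diffs[int((1 - alpha / 2) * n_boot) - 1]
    return low, high


# Simulated data: two differently labeled measures driven by one latent trait.
sim = random.Random(42)
latent = [sim.gauss(0, 1) for _ in range(200)]
measure_a = [t + sim.gauss(0, 0.5) for t in latent]
measure_b = [t + sim.gauss(0, 0.5) for t in latent]
external = [t + sim.gauss(0, 1.0) for t in latent]

ci_low, ci_high = bootstrap_diff_ci(measure_a, measure_b, external)
print(f"95% CI for the correlation difference: [{ci_low:.3f}, {ci_high:.3f}]")
```

Analytical tests of dependent correlations or SEM model comparisons address the same question with different assumptions; the bootstrap is shown here only because it needs no distributional formula.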

Specification Curve and Multiverse Analyses

Novel approaches such as specification curve analysis and multiverse analysis involve delineating all reasonable methodological and analytical choices for addressing a research question [9]. These methods systematically examine how variations in theoretical frameworks, measurement approaches, and analytical decisions affect research outcomes.

A jingle fallacy detector can be implemented by identifying situations where the same specifications lead to different results, while a jangle fallacy detector would flag when different specifications consistently yield overly similar results [9].

Advanced Computational Approaches

Natural Language Processing (NLP) and machine learning tools offer promising approaches for detecting jingle-jangle fallacies at scale [9]. These methods can:

- Analyze semantic similarity between construct definitions across studies

- Identify consistent patterns in item content despite different labels

- Detect conceptual drift in construct definitions over time

- Facilitate large-scale literature reviews and meta-analyses

Larsen and Bong (2016) developed six construct identity detectors for literature reviews and meta-analyses using different NLP algorithms, while recent approaches have utilized GPT to analyze item content and scale assignments in personality taxonomies [9].
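To give a feel for the item-content idea, the sketch below compares the wording of questionnaire items with a simple bag-of-words cosine similarity. This is a deliberately crude stand-in, not the method used by the detectors cited above: the item texts and scale labels are hypothetical, common function words are not removed, and real applications would likely rely on trained language models rather than raw word counts.

```python
from collections import Counter
from math import sqrt


def cosine_similarity(text_a, text_b):
    """Cosine similarity between word-count vectors of two texts."""
    a = Counter(text_a.lower().split())
    b = Counter(text_b.lower().split())
    dot = sum(a[word] * b[word] for word in a)
    norm = sqrt(sum(v * v for v in a.values())) * sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Hypothetical items from differently labeled scales.
items = {
    "metacognitive_ability": "I know when I have made a mistake on a task",
    "cognitive_monitoring": "I notice when I have made a mistake on a task",
    "processing_speed": "I finish timed puzzles faster than other people",
}

sim_overlap = cosine_similarity(items["metacognitive_ability"],
                                items["cognitive_monitoring"])
sim_distinct = cosine_similarity(items["metacognitive_ability"],
                                 items["processing_speed"])

# High similarity across different labels is a prompt for closer review,
# not proof of a jangle fallacy.
print(f"different labels, similar wording: {sim_overlap:.2f}")
print(f"different labels, different wording: {sim_distinct:.2f}")
```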

Experimental Protocols for Establishing Discriminant Validity

Protocol 1: Factor Analytic Approach

Purpose: To examine the underlying factor structure of multiple potentially overlapping constructs and test whether they load on distinct factors.

Procedure:

- Participant Recruitment: Secure a sufficiently large sample (N > 500) for stable factor analysis

- Measure Administration: Administer all measures of potentially overlapping constructs in counterbalanced order

- Factor Analysis:
  - Conduct exploratory factor analysis (EFA) with oblique rotation
  - Follow with confirmatory factor analysis (CFA) to test hypothesized structure
  - Compare fit indices for different factor solutions

- Discriminant Validity Assessment:
  - Calculate average variance extracted (AVE) for each construct
  - Compare AVE values with squared correlations between constructs
  - Discriminant validity is supported when AVE > squared correlation

Interpretation: Evidence of jangle fallacies emerges when measures with different labels load highly on the same factor, while jingle fallacies are suggested when measures with the same label load on different factors.
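The discriminant validity assessment step in this protocol can be illustrated with a short calculation. The sketch assumes standardized factor loadings are already available from a fitted CFA; the loadings, construct names, and latent correlations below are hypothetical. AVE is computed as the mean squared standardized loading, and the criterion is met when both AVEs exceed the squared correlation between the two constructs, which is equivalent to comparing the square root of AVE with the correlation itself.

```python
def average_variance_extracted(loadings):
    """Mean of the squared standardized loadings for one construct."""
    return sum(loading ** 2 for loading in loadings) / len(loadings)


def fornell_larcker_ok(loadings_a, loadings_b, latent_corr):
    """True when both AVEs exceed the squared inter-construct correlation."""
    shared_variance = latent_corr ** 2
    return (average_variance_extracted(loadings_a) > shared_variance
            and average_variance_extracted(loadings_b) > shared_variance)


# Hypothetical standardized loadings from a two-factor CFA.
working_memory = [0.80, 0.75, 0.70]
processing_speed = [0.70, 0.65, 0.72]

ave_wm = average_variance_extracted(working_memory)
ave_ps = average_variance_extracted(processing_speed)

# Two hypothetical latent correlations: one moderate, one high.
supported_moderate = fornell_larcker_ok(working_memory, processing_speed, 0.60)
supported_high = fornell_larcker_ok(working_memory, processing_speed, 0.75)

print(f"AVE working memory = {ave_wm:.3f}, AVE processing speed = {ave_ps:.3f}")
print("criterion met at r = 0.60:", supported_moderate)
print("criterion met at r = 0.75:", supported_high)
```

With these illustrative numbers the criterion holds at the moderate correlation and fails at the high one, because the squared correlation then exceeds the lower of the two AVEs.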

Protocol 2: External Correlates Approach

Purpose: To examine whether measures with similar labels show divergent patterns of correlation with external criteria, or whether measures with different labels show convergent patterns.

Procedure:

- Variable Selection:
  - Identify multiple measures of potentially overlapping constructs
  - Select a battery of external criteria theoretically related to the constructs

- Data Collection:
  - Administer all measures to a representative sample
  - Ensure sufficient power for correlation analysis

- Statistical Analysis:
  - Calculate correlation matrices between all measures and criteria
  - Use tests of dependent correlations to compare patterns
  - Apply structural equation modeling to test equality constraints on paths

- Visualization:
  - Create heatmaps of correlation patterns
  - Plot confidence intervals for correlation differences

Interpretation: Similar correlation profiles for differently labeled measures suggest jangle fallacies, while divergent profiles for similarly labeled measures suggest jingle fallacies.
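A descriptive first pass at this interpretation step might look like the sketch below, which compares each measure's profile of correlations with the same external criteria and flags differently labeled measures whose profiles nearly coincide. The measure names, profile values, and the 0.10 screening threshold are all hypothetical choices made for illustration. A flag is only a prompt for the inferential tests listed in the procedure, not a substitute for them.

```python
from itertools import combinations

# Correlations of each measure with the same four external criteria
# (hypothetical values, listed in the same criterion order).
profiles = {
    "metacognitive_ability": [0.45, 0.30, -0.20, 0.10],
    "cognitive_monitoring": [0.43, 0.32, -0.18, 0.12],
    "processing_speed": [0.10, 0.55, 0.05, -0.30],
}


def max_profile_gap(profile_a, profile_b):
    """Largest absolute difference between two correlation profiles."""
    return max(abs(a - b) for a, b in zip(profile_a, profile_b))


THRESHOLD = 0.10  # arbitrary screening value, not an established cutoff

flagged = [
    (name_a, name_b)
    for name_a, name_b in combinations(profiles, 2)
    if max_profile_gap(profiles[name_a], profiles[name_b]) < THRESHOLD
]

# Pairs listed here carry different labels but nearly identical profiles,
# which is the pattern described above as suggestive of a jangle fallacy.
print(flagged)
```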

Table 3: Research Reagent Solutions for Jingle-Jangle Fallacy Detection

| Tool/Technique | Primary Function | Application Context | Key References |
|---|---|---|---|
| Confirmatory Factor Analysis (CFA) | Tests hypothesized factor structure | Establishing discriminant validity between constructs | [13] |
| Multitrait-Multimethod Matrix (MTMM) | Examines convergent and discriminant validity | Assessing construct distinctiveness across methods | [7] |
| Specification Curve Analysis | Maps all reasonable analytical choices | Identifying robustness of findings to analytical decisions | [9] |
| Tests of Dependent Correlations | Compares correlation patterns with external criteria | Extrinsic convergent validity assessment | [7] [15] |
| Natural Language Processing (NLP) | Analyzes semantic similarity in constructs | Large-scale literature analysis | [9] |
| Structural Equation Modeling (SEM) | Tests equality constraints in nomological networks | Modeling relationships among multiple constructs | [7] |

Applications in Drug Development and Cognitive Safety Assessment

Cognitive Performance Outcomes (Cog-PerfOs) in Clinical Trials

The validation of cognitive performance outcomes in drug development faces particular challenges related to jingle-jangle fallacies [11]. Key considerations include:

- Content Validity: Ensuring Cog-PerfOs comprehensively represent the cognitive concepts relevant to the condition and context of use

- Ecological Validity: Establishing congruence between cognitive assessments and real-world functioning

- Cross-Cultural Validity: Addressing cultural variations in cognitive test performance and interpretation

Involvement of cognitive psychologists in content validation and task selection is essential for proper conceptual alignment between measured cognitive constructs and therapeutic targets [11].

Cognitive Safety Assessment

Regulatory guidance increasingly emphasizes sensitive cognitive measurements for drugs with potential CNS effects [10]. However, jingle-jangle fallacies in cognitive terminology can compromise:

- Dose-response characterization if cognitive endpoints lack specificity

- Risk-benefit assessments if cognitive safety measures lack clarity

- Drug differentiation claims if cognitive profiles are muddled by measurement unreliability

Establishing clear discriminant validity among cognitive constructs used in safety assessment is therefore critical for regulatory decision-making and appropriate risk communication.

Jingle-jangle fallacies represent significant threats to the accumulation of knowledge in cognitive science and its applications in drug development. Addressing these conceptual pitfalls requires methodological rigor, theoretical precision, and systematic approaches to establishing discriminant validity.

The methodological frameworks outlined in this guide—including extrinsic convergent validity, specification curve analysis, and advanced computational approaches—provide researchers with tools to detect and prevent these fallacies. As cognitive terminology continues to evolve in complexity, maintaining conceptual clarity becomes increasingly important for valid measurement, theoretical progress, and applied outcomes in both basic research and clinical applications.

By adopting these approaches, researchers can strengthen the validity of cognitive terminology measures, enhance communication across scientific disciplines, and ensure that cognitive assessment in drug development accurately captures the intended constructs of interest.

Discriminant validity is a cornerstone of construct validity, providing critical evidence that a measurement tool is truly assessing its intended concept and not merely reflecting other, related constructs [16]. In essence, it demonstrates that a test is distinct from measures of different constructs, even those that might be theoretically related. For researchers, clinicians, and drug development professionals, establishing discriminant validity is not merely a statistical formality but a fundamental prerequisite for ensuring that collected data yield meaningful and interpretable results. Without it, findings can become confounded, leading to flawed conclusions, misdirected resources, and, in clinical settings, potential risks to patient care. This guide examines the tangible consequences of poor discriminant validity across cognitive and clinical research, supported by experimental data and comparative analyses of measurement instruments.

Theoretical Framework and Key Concepts

The principle of discriminant validity requires demonstrating that a measurement is not overly correlated with constructs from which it should theoretically differ [16]. This is often evaluated using the multitrait-multimethod matrix (MTMM), which assesses the relationships between different traits measured by different methods [17]. Confirmatory Factor Analysis (CFA) is a common statistical method used to provide this evidence [17].

A key challenge lies in the conceptual heterogeneity of many psychological constructs. For instance, mentalizing (the capacity to understand behavior through underlying mental states) is a complex construct assessed by various self-report instruments. Questions have been raised about whether these instruments adequately capture the theoretical complexity of mentalizing across its multiple dimensions or if they instead measure related concepts like general cognitive ability or emotion dysregulation [18]. Similarly, in creativity research, a critical psychometric issue is ensuring that tests of divergent thinking are distinct from measures of traditional intelligence (IQ) [16].

Consequences of Poor Discriminant Validity: Evidence from Research Settings

Impaired Construct Interpretation and Confounded Findings

When discriminant validity is not established, it becomes impossible to determine what a test is truly measuring. This clouds the interpretation of research findings and can lead to incorrect theoretical conclusions.

- The Case of Social Cognition Measures: A systematic review of social cognition measures highlights significant concerns with construct, discriminant, and convergent validity [16]. The widely used Reading the Mind in the Eyes Test (RMET), for instance, has been criticized for its unclear factor structure. However, some commentators argue that despite these structural issues, the RMET demonstrates substantial external validity, correlating with other tests of emotion recognition ability and differentiating between clinical groups (e.g., autistic individuals) and non-clinical groups [19]. This tension between structural and external validity underscores the complexity of establishing what a test truly measures. If the RMET partly reflects general cognitive ability, its use as a pure measure of "theory of mind" is compromised [19] [16].

- Stage of Change Assessments: A study compared three popular measures of readiness to change substance use: the University of Rhode Island Change Assessment (URICA), the Stages of Change Readiness and Treatment Eagerness Scale (SOCRATES), and the Readiness to Change Questionnaire (RCQ). It found questionable convergent validity among them [17]. Confirmatory Factor Analysis suggested that these instruments, all designed to measure aspects of the same theoretical model, may not be equivalent. This lack of convergence between measures of ostensibly the same construct creates confusion in the literature and makes it difficult to compare findings across studies.

Compromised Cross-Cultural and Cross-Group Comparisons

Measurement instruments must perform equivalently across different populations to allow for valid comparisons. Poor discriminant validity can indicate that a test is not measuring the same construct in the same way across groups.

- Global Cognitive Performance in Older Adults: A large-scale study using data from the Survey of Health, Ageing, and Retirement in Europe (SHARE) tested the measurement invariance of a Global Cognitive Performance (GCP) measure across 27 European countries and Israel [20]. The researchers found widespread non-invariance, with 31.85% of factor loadings and 54.81% of item intercepts showing significant deviations. This indicates that the cognitive tests (word recall, verbal fluency, orientation, numeracy) were not being interpreted consistently across different national contexts. The consequence was clear: the study authors strongly recommended that researchers abstain from making direct cross-country comparisons of GCP using this data, as observed differences could reflect measurement artifacts rather than true cognitive differences [20].

Table 1: Documented Consequences of Poor Discriminant Validity in Research

| Research Area | Measurement Instrument | Consequence of Poor Discriminant Validity |
|---|---|---|
| Social Cognition | Reading the Mind in the Eyes Test (RMET) | Inability to determine if the test measures pure theory of mind, general cognitive ability, or emotion recognition, confounding research findings [19] [16]. |
| Substance Use Treatment | URICA, SOCRATES, RCQ | Inability to directly compare results from studies using different instruments, hindering the accumulation of a coherent knowledge base [17]. |
| Cross-Cultural Gerontology | SHARE Global Cognitive Performance (GCP) Measure | Invalidity of cross-country comparisons due to measurement non-invariance, potentially leading to false conclusions about international cognitive differences [20]. |

Consequences of Poor Discriminant Validity: Evidence from Clinical and Drug Development Settings

Inaccurate Assessment of Treatment Efficacy and Patient Outcomes

In clinical trials, poorly discriminating measures can fail to detect meaningful changes in the specific construct targeted by an intervention, leading to incorrect conclusions about a treatment's efficacy.

- Assessing Work Productivity in Rheumatoid Arthritis: The Rheumatoid Arthritis-specific Work Productivity Survey (WPS-RA) was developed to measure the impact of RA on productivity both within and outside the home. During its validation, researchers assessed its known-groups validity, a form of discriminant validity, by comparing scores across groups with different levels of physical disability (as measured by the HAQ-DI) [21]. They found that subjects with lower physical function (higher HAQ-DI scores) generally had significantly greater RA-associated productivity losses. If the WPS-RA had poor discriminant validity from general quality-of-life measures, it would not be sensitive enough to capture these specific, clinically meaningful differences, potentially causing a drug's true benefit on patient functioning to be overlooked [21].

Biased Trial Results and Reduced Generalizability

The broader issue of bias in clinical trials is a major concern for drug development. While not exclusively a measurement issue, poor discriminant validity in key endpoints can contribute to detection bias and threaten both the internal and external validity of a study [22].

- Ensuring Valid and Generalizable Outcomes: Clinical trials employ strategies like randomization and blinding to minimize biases such as selection and performance bias [22]. However, if the outcome measures themselves lack discriminant validity, detection bias can occur, where outcomes are systematically influenced by expectations. Furthermore, a lack of diverse representation in trials—a threat to external validity—can be exacerbated by measurement issues. If a cognitive test performs differently across racial, ethnic, or gender groups (i.e., lacks measurement invariance), its use in a homogeneous trial population will generate results that are not generalizable to real-world, diverse patient populations [22] [23]. This can lead to approved drugs having unexpected safety or efficacy profiles in certain demographic groups.

Table 2: Consequences of Poor Measurement Validity in Clinical Trials

| Clinical Setting | Type of Bias or Error | Consequence for Drug Development and Patient Care |

|---|---|---|

| Rheumatoid Arthritis Trials | Use of a non-discriminant outcome measure | Failure to detect a treatment's true effect on a specific domain like work productivity, leading to a potential undervaluation of an effective therapy [21]. |

| General Clinical Trials | Detection Bias & Reporting Bias | Systematic errors in how outcomes are determined or reported, threatening the internal validity of the trial and the reliability of its conclusions [22]. |

| Drug Development Pipeline | Lack of Diverse Representation & Measurement Non-Invariance | Trial results that do not generalize to the broader population, potentially resulting in drugs with unpredictable effectiveness or side effects in underrepresented groups [22] [23]. |

Methodological Protocols for Establishing Discriminant Validity

Core Experimental and Statistical Workflows

Establishing discriminant validity is a methodological imperative. The following workflow outlines the key steps, from study design to statistical analysis.

The Researcher's Toolkit: Essential Reagents for Robust Validity Assessment

The following table details key methodological "reagents"—tools and techniques—required for conducting a rigorous discriminant validity assessment.

Table 3: Essential Research Reagents for Discriminant Validity Analysis

| Research Reagent | Function & Purpose | Application Example |

|---|---|---|

| Confirmatory Factor Analysis (CFA) | Tests a pre-specified factor structure to see if items load strongly on their intended factor and weakly on others. | Used to validate the proposed dimensions of the Digital Mindset Scale, confirming its three-factor structure (digital consciousness, expertise, business acumen) [24]. |

| Multitrait-Multimethod Matrix (MTMM) | A framework for evaluating convergent and discriminant validity by examining correlations between different traits measured by different methods. | Employed to assess the construct validity of three stage-of-change measures (URICA, SOCRATES, RCQ) in substance use research [17]. |

| Alignment Optimization | A statistical method for testing approximate measurement invariance in large-scale cross-cultural studies when full invariance is not achieved. | Applied in the SHARE cognitive performance study to handle non-invariance across 28 countries after full invariance was dismissed [20]. |

| Heterotrait-Monotrait Ratio (HTMT) | A modern criterion for assessing discriminant validity; values above a threshold (e.g., 0.85) suggest a lack of discriminant validity. | Commonly used in scale development and validation studies in psychology and management (e.g., Digital Mindset Scale validation) [24]. |

| Known-Groups Validation | Tests if a measure can differentiate between groups known to differ on the construct of interest. | Used to validate the WPS-RA by comparing scores between groups with high and low physical disability [21]. |
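As a rough illustration of the HTMT entry in the table above, the following sketch computes the ratio for two constructs from an item-level correlation matrix. The matrix values, the item groupings, and the function name `htmt` are hypothetical and chosen only for illustration; the code assumes all items are coded in the same direction so that correlations are positive.

```python
import math
from itertools import combinations, product

def htmt(corr, items_a, items_b):
    """Heterotrait-monotrait ratio for two constructs.

    corr    : square item correlation matrix (nested lists)
    items_a : row/column indices of the items of construct A
    items_b : row/column indices of the items of construct B
    """
    mean = lambda values: sum(values) / len(values)
    # Correlations between items of different constructs.
    hetero = [corr[i][j] for i, j in product(items_a, items_b)]
    # Correlations among items of the same construct.
    mono_a = [corr[i][j] for i, j in combinations(items_a, 2)]
    mono_b = [corr[i][j] for i, j in combinations(items_b, 2)]
    return mean(hetero) / math.sqrt(mean(mono_a) * mean(mono_b))

# Hypothetical correlations: items 0-2 belong to construct A, items 3-5 to B.
R = [
    [1.00, 0.70, 0.65, 0.30, 0.25, 0.35],
    [0.70, 1.00, 0.60, 0.28, 0.32, 0.30],
    [0.65, 0.60, 1.00, 0.33, 0.27, 0.30],
    [0.30, 0.28, 0.33, 1.00, 0.75, 0.70],
    [0.25, 0.32, 0.27, 0.75, 1.00, 0.65],
    [0.35, 0.30, 0.30, 0.70, 0.65, 1.00],
]

value = htmt(R, items_a=[0, 1, 2], items_b=[3, 4, 5])
THRESHOLD = 0.85  # example cutoff mentioned in the table; other cutoffs are in use
print(f"HTMT = {value:.3f}; below threshold: {value < THRESHOLD}")
```

In practice the ratio would be computed from observed item data, usually with a dedicated SEM package; the hand-written function here only makes the arithmetic visible.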

The consequences of poor discriminant validity permeate every stage of research and clinical practice, from muddying theoretical frameworks to producing non-generalizable clinical trial results. As evidenced by challenges in cognitive assessment, social cognition, and patient-reported outcomes, failing to ensure that a tool measures what it claims—and nothing else—compromises data integrity, wastes resources, and ultimately impedes scientific and clinical progress. For drug development professionals and researchers, a rigorous and ongoing commitment to establishing the discriminant validity of measurement instruments is not merely a methodological nicety but a fundamental pillar of generating reliable, interpretable, and actionable evidence.

In the assessment of cognitive constructs, whether in neuropsychological research, drug development, or digital health, establishing robust measurement validity is paramount. This guide objectively compares two fundamental subtypes of construct validity: convergent and discriminant validity. Convergent validity confirms that measures designed to assess the same construct are strongly related, while discriminant validity proves that measures of different constructs are distinct and not unduly correlated. Through experimental data, methodological protocols, and visualizations, this article delineates their unique and complementary roles in validating cognitive terminology measures, providing a critical framework for researchers and drug development professionals.

In scientific research and clinical trials, particularly those involving cognitive assessment, the validity of the measurement tools is a foundational concern. Construct validity is the degree to which a test measures the theoretical construct it claims to measure. Within this framework, convergent validity and discriminant validity (also known as divergent validity) serve as two essential, interdependent pillars [25] [26] [27].

Their simultaneous evaluation is crucial because neither alone is sufficient for establishing construct validity [26]. A test must simultaneously demonstrate that it correlates with what it should (convergent validity) and does not correlate with what it should not (discriminant validity) [25] [28]. This is especially critical in high-stakes fields like drug development, where cognitive performance outcomes (Cog-PerfOs) are used as primary endpoints to evaluate the efficacy of new treatments for conditions like Alzheimer's disease [11]. Misleading results from an instrument with poor discriminant validity can lead to faulty conclusions about a treatment's effect on a specific cognitive domain.

Conceptual Definitions and Theoretical Frameworks

Convergent Validity

Convergent validity is the extent to which a measure correlates with other measures that are designed to assess the same or a highly similar construct [25] [26]. It is supported by evidence showing that different instruments intended to capture the same underlying trait (e.g., working memory) yield strongly positive, correlated results [28] [27].

- Example: A new, brief computerized test of verbal memory would demonstrate high convergent validity if participants' scores show a strong positive correlation with their scores on a well-established, longer verbal memory test [25].

Discriminant Validity

Discriminant validity is the extent to which a measure does not correlate strongly with measures of different, unrelated constructs [25] [27]. It provides evidence that the test is uniquely measuring its intended construct and is not contaminated by other, distinct abilities or traits.

- Example: A test designed to measure mathematical reasoning should not correlate highly with a test designed to measure spelling proficiency. A lack of correlation between the results of these two exams indicates high discriminant validity [25] [27].

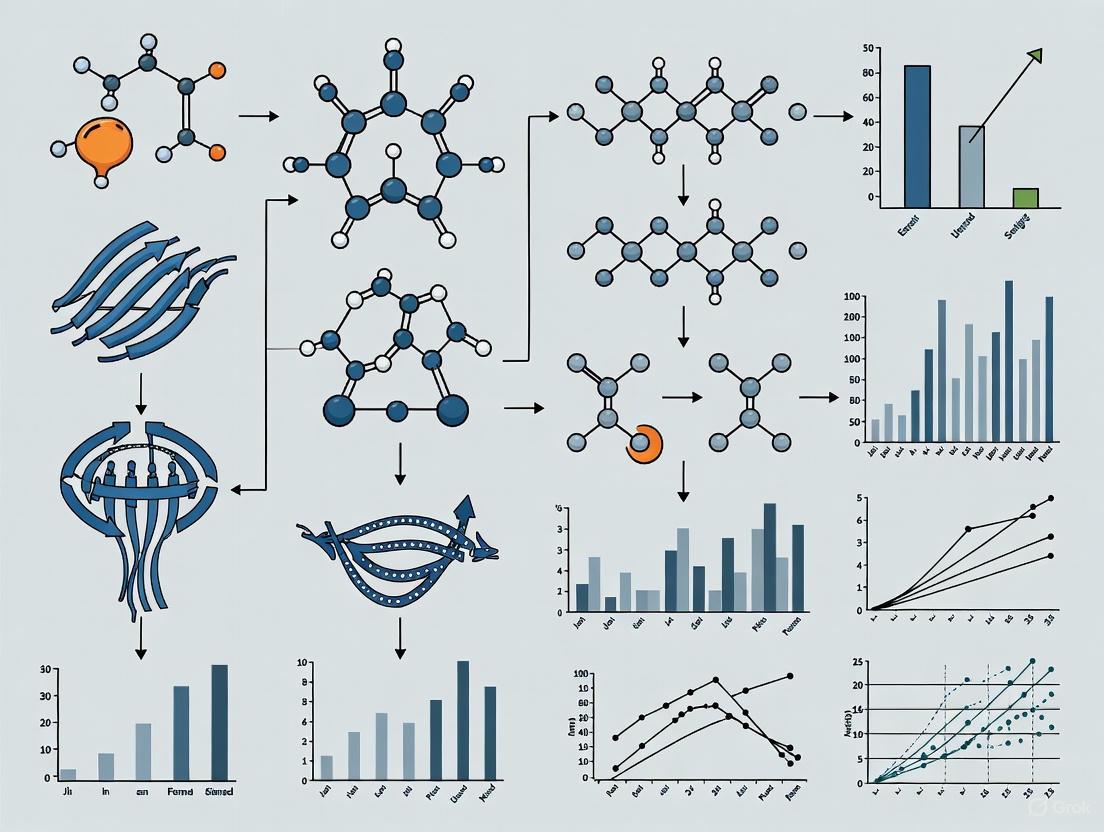

The following diagram illustrates the fundamental logical relationship between these two concepts in establishing the overall construct validity of a measurement.

Methodological Protocols for Evaluation

Researchers employ standardized methodologies to gather quantitative evidence for convergent and discriminant validity.

Correlation Analysis

The most fundamental method involves calculating correlation coefficients (e.g., Pearson's r) between measures [28] [27].

- Protocol for Convergent Validity: Administer the target test and one or more previously validated tests of the same construct to a relevant sample. Calculate the correlation matrix. A strong positive correlation (e.g., r above roughly 0.5 to 0.6, though the appropriate cutoff is context-dependent) provides evidence for convergence [28].

- Protocol for Discriminant Validity: Administer the target test alongside tests of theoretically distinct constructs. Calculate the correlation matrix. Weak correlations (e.g., < 0.3) between the target test and measures of different constructs support discriminant validity [27]. A key rule of thumb is that convergent correlations should be higher than discriminant correlations [28].
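The two correlation protocols above can be sketched in a few lines of Python. The scores, the measure names, and the helper `pearson_r` are hypothetical, and the cutoffs are simply the example values quoted in the protocols, not fixed standards.

```python
import math

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(ss_x * ss_y)

# Hypothetical scores for eight participants (illustrative only).
new_verbal_memory = [12, 15, 9, 20, 17, 11, 14, 18]
established_verbal_memory = [30, 36, 25, 44, 40, 27, 33, 41]  # same construct
motor_speed = [52, 47, 49, 50, 46, 53, 48, 51]                # distinct construct

r_convergent = pearson_r(new_verbal_memory, established_verbal_memory)
r_discriminant = pearson_r(new_verbal_memory, motor_speed)

print(f"convergent r   = {r_convergent:.2f}")
print(f"discriminant r = {r_discriminant:.2f}")
# Rule of thumb from the protocol: convergent correlations should exceed
# discriminant correlations in absolute size.
print("pattern supports validity:", r_convergent > abs(r_discriminant))
```

With a sample this small the coefficients are very imprecise, so a real study would also report confidence intervals around each correlation.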

Factor Analysis

Factor analysis, including both exploratory (EFA) and confirmatory (CFA) techniques, is a powerful statistical method for evaluating validity [29] [27].

- Protocol: Administer a battery of tests measuring several different constructs to a large sample. In EFA, researchers examine whether items or tests from the same theoretical construct load highly onto the same factor. In CFA, a predefined model is tested, and good model fit is indicated when tests load strongly on their intended factor (convergent validity) and weakly on other factors (discriminant validity) [29]. For instance, a study on cognitive tests used EFA on 18 tests and found a clear three-factor structure (verbal/working memory, inhibitory control, memory), supporting the convergent validity of tests within those factors [29].
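Once a factor solution has been estimated, the loading pattern described above can be screened programmatically. The sketch below assumes a table of standardized loadings is already available from a factor-analysis package; the loadings, test names, and the 0.50 and 0.30 cutoffs are hypothetical conventions used for illustration and are not taken from the cited study.

```python
# Hypothetical standardized loadings for four tests on three factors.
loadings = {
    "digit_span":        {"working_memory": 0.78, "inhibitory_control": 0.10, "memory": 0.15},
    "spatial_capacity":  {"working_memory": 0.71, "inhibitory_control": 0.05, "memory": 0.22},
    "scene_recognition": {"working_memory": 0.12, "inhibitory_control": 0.08, "memory": 0.69},
    "stop_signal":       {"working_memory": 0.21, "inhibitory_control": 0.35, "memory": 0.18},
}

# The factor each test is theoretically intended to measure.
intended = {
    "digit_span": "working_memory",
    "spatial_capacity": "working_memory",
    "scene_recognition": "memory",
    "stop_signal": "inhibitory_control",
}

PRIMARY_MIN = 0.50  # assumed minimum loading on the intended factor
CROSS_MAX = 0.30    # assumed maximum loading on any other factor

def check_test(name):
    """Return (strong primary loading?, weak cross-loadings?) for one test."""
    row = loadings[name]
    target = intended[name]
    primary_ok = abs(row[target]) >= PRIMARY_MIN
    cross_ok = all(abs(v) <= CROSS_MAX for f, v in row.items() if f != target)
    return primary_ok, cross_ok

results = {name: check_test(name) for name in loadings}
for name, (primary_ok, cross_ok) in results.items():
    print(f"{name:18s} primary loading ok: {primary_ok}  cross-loadings ok: {cross_ok}")
```

A test that fails the first check has weak evidence of convergence with its intended factor; one that fails the second is loading on a factor it should be distinct from.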

The Multitrait-Multimethod Matrix (MTMM)

The MTMM is a classic, rigorous approach that assesses validity by measuring multiple traits (constructs) using multiple methods [27] [30].

- Protocol: As shown in the workflow below, researchers measure at least two different constructs using at least two different methods (e.g., self-report, parent-report, performance-based). The resulting correlation matrix is inspected for specific patterns: high correlations in the validity diagonals (convergent validity) and lower correlations elsewhere, especially between different traits measured by the same method (discriminant validity) [30]. A study of the Strengths and Difficulties Questionnaire (SDQ) used this approach with parent, teacher, and child reports and found good convergent validity but poor discriminant validity among some subscales [30].
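The pattern inspection described in this protocol can be sketched as a simple classification of each correlation by whether the two measures share a trait, a method, both, or neither. All trait names, informants, and correlation values below are hypothetical and are not results from the SDQ study.

```python
from statistics import mean

# Each measure is a (trait, method) pair; values are hypothetical correlations.
corr = {
    (("hyperactivity", "parent"), ("hyperactivity", "teacher")): 0.62,
    (("emotional", "parent"), ("emotional", "teacher")): 0.58,
    (("hyperactivity", "parent"), ("emotional", "parent")): 0.35,
    (("hyperactivity", "teacher"), ("emotional", "teacher")): 0.31,
    (("hyperactivity", "parent"), ("emotional", "teacher")): 0.18,
    (("hyperactivity", "teacher"), ("emotional", "parent")): 0.15,
}

def classify(m1, m2):
    """Label a correlation by the MTMM block it belongs to."""
    same_trait = m1[0] == m2[0]
    same_method = m1[1] == m2[1]
    if same_trait and not same_method:
        return "monotrait-heteromethod"   # validity diagonal (convergent)
    if not same_trait and same_method:
        return "heterotrait-monomethod"   # shared method variance
    return "heterotrait-heteromethod"     # neither trait nor method shared

blocks = {}
for (m1, m2), r in corr.items():
    blocks.setdefault(classify(m1, m2), []).append(r)

summary = {label: mean(values) for label, values in blocks.items()}
for label, avg in summary.items():
    print(f"{label:26s} mean r = {avg:.2f}")
```

Under the classic reading of the matrix, the validity-diagonal correlations should be the largest; heterotrait-monomethod values that approach them point to shared method variance rather than distinct traits.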

The following workflow outlines the steps involved in this comprehensive method.

Experimental Data and Comparative Evidence

The following tables summarize real-world experimental findings that illustrate the evaluation of convergent and discriminant validity.

Table 1: Evidence from a Factor Analysis of Cognitive Tests [29]

This study administered 23 traditional and experimental cognitive tests to 1,059 community volunteers and 137 patients. The analysis revealed distinct patterns of convergent and discriminant validity.

| Cognitive Test Domain | Evidence of Convergent Validity | Evidence of Discriminant Validity |

|---|---|---|

| Working Memory | Spatial and Verbal Capacity Tasks factored together with traditional working memory measures (e.g., Digit Span). | Working memory tests loaded on a factor distinct from inhibitory control and memory factors. |

| Inhibitory Control | Several experimental measures (e.g., Stop-Signal Task, Reversal Learning) had weak relationships with all other tests, including traditional inhibitory measures, indicating poor convergent validity. | The same measures showed poor discriminant validity as they did not form a coherent factor separate from other constructs. |

| Memory | Experimental tests of memory (Remember–Know, Scene Recognition) factored together with traditional memory measures. | Memory tests loaded on a factor distinct from working memory and inhibitory control. |

Table 2: Evidence from a Multitrait-Multimethod Study of the SDQ [30]

This study examined the Strengths and Difficulties Questionnaire (SDQ) using parent, teacher, and peer reports across five traits.

| Trait (Subscale) | Evidence of Convergent Validity | Evidence of Discriminant Validity |

|---|---|---|

| Hyperactivity–Inattention | Strong correlations across different informants (parent, teacher, peer). | Weak correlations with theoretically distinct traits like Prosocial Behaviour. |

| Emotional Symptoms | Strong correlations across different informants. | Weak correlations with theoretically distinct traits like Conduct Problems. |

| Conduct Problems | Strong correlations across different informants. | Moderate correlations with Peer Problems and Hyperactivity, suggesting somewhat poor discriminant validity between these specific subscales. |

| Peer Problems | Strong correlations across different informants. | Moderate correlations with Conduct Problems and Emotional Symptoms, suggesting somewhat poor discriminant validity. |

The Researcher's Toolkit: Essential Reagents & Materials

The following table details key solutions and materials required for conducting validity research in cognitive assessment.

Table 3: Essential Research Reagents and Materials for Validity Studies

| Item/Reagent | Function/Description | Example Use in Validity Research |

|---|---|---|

| Validated Cognitive Test Batteries | Established measures serving as the "gold standard" for comparison. | Used as criterion measures to evaluate the convergent validity of a new, experimental cognitive test [29] [11]. |

| Statistical Software Packages | Software for conducting complex statistical analyses (e.g., R, SPSS, Mplus). | Essential for calculating correlation matrices, performing exploratory and confirmatory factor analysis, and modeling MTMM data [29] [30]. |

| Digital Assessment Platforms | Online or computer-based systems for administering cognitive tests. | Enable precise measurement (e.g., reaction time), standardized administration, and efficient data collection for large-scale validity studies [31] [32]. |

| Multitrait-Multimethod (MTMM) Framework | A research design protocol, not a physical reagent. | Provides a structured methodological framework for designing studies that can simultaneously evaluate convergent and discriminant validity [27] [30]. |

| Normative Data Sets | Population reference data for standardized test scores. | Critical for interpreting scores and ensuring that validity findings are generalizable across different populations and cultural contexts [11]. |

Implications for Cognitive Research and Drug Development

The rigorous application of convergent and discriminant validity principles has profound implications, particularly in the field of drug development for cognitive disorders.

- Evaluating Cognitive Performance Outcomes (Cog-PerfOs): Regulatory agencies require robust evidence that Cog-PerfOs used in clinical trials are valid. A test must demonstrate it is sensitive to the specific cognitive domain targeted by the drug (e.g., episodic memory) and not unduly influenced by other domains (e.g., language comprehension) [11]. Poor discriminant validity can obscure a drug's true efficacy.

- The Rise of Digital Assessments: Remote, unsupervised digital cognitive tests are increasingly used for early detection of conditions like Alzheimer's disease [32]. Establishing their convergent validity against traditional in-clinic assessments and discriminant validity from measures of mood or motor function is a critical step in their validation [31] [32].

- Cultural and Linguistic Adaptation: When cognitive tests are translated or adapted for multinational trials, it is vital to re-establish validity. An item might inadvertently measure reading comprehension in one culture while measuring memory in another, severely compromising both convergent and discriminant validity [11].

Convergent and discriminant validity are not merely abstract statistical concepts; they are practical, essential tools for ensuring the integrity of scientific measurement. In cognitive research and drug development, where decisions impact health outcomes and therapeutic advancements, a thorough understanding of this "validity duo" is non-negotiable. By employing the methodological protocols, statistical techniques, and critical frameworks outlined in this guide, researchers can build a compelling evidential basis for their measures, ensuring that they accurately capture the constructs they are intended to measure and nothing more.

In the realms of cognitive aging and behavioral nutrition, the precision of measurement constructs fundamentally dictates the validity of research findings and subsequent clinical applications. Discriminant validity serves as a critical psychometric property, ensuring that measurement tools are truly assessing distinct theoretical constructs rather than overlapping or unrelated concepts [1]. This principle is akin to verifying that a scoop designed for flour does not inadvertently measure salt, thereby guaranteeing that each tool captures only the specific "ingredient" or trait it is intended to measure [1]. The necessity for such clarity becomes paramount when distinguishing between closely related conditions such as cognitive frailty and food addiction, where blurred lines between constructs can lead to misdiagnosis, flawed research conclusions, and ineffective interventions [33] [16].

This guide provides a systematic comparison of key cognitive and behavioral constructs, emphasizing the empirical evidence supporting their discriminant validity. It is structured to aid researchers, scientists, and drug development professionals in navigating the complexities of construct measurement, which is essential for developing targeted therapies and precise diagnostic tools. The subsequent sections will dissect the constructs of cognitive frailty and food addiction, evaluate their measurement approaches, and visualize their defining characteristics and assessment methodologies.

Deconstructing Cognitive Frailty: Definition and Diagnostic Challenges

Cognitive frailty (CF) represents a complex clinical condition characterized by the co-occurrence of physical frailty and cognitive impairment, in the absence of a diagnosed dementia [33] [34]. The operational definition established by the International Academy on Nutrition and Aging (IANA) and the International Association of Gerontology and Geriatrics (IAGG) specifies the presence of both physical frailty (as per Fried's phenotype, e.g., unintentional weight loss, exhaustion, weakness) and mild cognitive impairment (MCI), with a Clinical Dementia Rating of 0.5 [33]. This condition is clinically significant due to its strong association with an elevated risk of dementia, functional disability, reduced quality of life, and mortality [34].

Despite this formal definition, a notable lack of consensus persists among clinicians. A recent survey of European geriatricians revealed that only one in four respondents identified the IANA-IAGG definition as the correct description of cognitive frailty [33]. Nearly two-thirds of those who reported using the term in their clinical work did not select the official definition, indicating a significant disconnect between formal criteria and clinical understanding. This variance in perception underscores a substantial discriminant validity challenge; many clinicians conflate cognitive frailty with broader cognitive vulnerabilities, such as delirium, or with dementia itself, rather than recognizing it as a distinct entity defined by the specific confluence of physical and cognitive decline [33].

Table 1: Key Constructs and Differential Diagnosis in Cognitive Frailty

| Construct | Core Definition | Exclusion Criteria for Differential Diagnosis |

|---|---|---|

| Cognitive Frailty | Co-existence of physical frailty and Mild Cognitive Impairment (MCI) [33] [34]. | Exclusion of Alzheimer's disease or other dementias [33]. |

| Physical Frailty | Phenotype including unintentional weight loss, exhaustion, weakness, slow walking speed, and low physical activity [34]. | Not applicable. |

| Mild Cognitive Impairment (MCI) | Measurable cognitive deficits with preservation of independence in instrumental activities of daily living [33]. | Intact functional abilities; not severe enough to impair daily life [34]. |

| Motoric Cognitive Risk (MCR) Syndrome | Subjective cognitive complaints combined with slow gait [34]. | Does not require the full spectrum of physical frailty. |

| Social Frailty | Declining social resources and social networks critical for basic human needs [34]. | A distinct, though often related, vulnerability domain. |

The following diagram illustrates the convergent relationship between two core domains that define cognitive frailty, distinguishing it from other related conditions.

Food Addiction: A Contentious Construct and its Measurement

The concept of "food addiction" is a highly debated subject within nutritional science and psychology. It is proposed as a unique diagnostic construct characterized by compulsive consumption of certain foods, particularly those that are highly processed, despite negative consequences [35] [36]. Proponents argue that highly processed (HP) foods, with their unnaturally high concentrations of refined carbohydrates and fats, can trigger behavioral and neurobiological responses similar to those observed in substance use disorders [36]. The core evidence for this construct rests on observed parallels, including diminished control over consumption, strong cravings, continued use despite adverse outcomes, and repeated unsuccessful attempts to quit or reduce intake [35] [36].

The primary tool for assessing food addiction in humans is the Yale Food Addiction Scale (YFAS), which operationalizes the construct by adapting the diagnostic criteria for substance use disorders from the DSM-5 to the context of highly palatable foods [35]. A critical aspect of establishing the discriminant validity of food addiction is demonstrating that it is not merely a synonym for obesity or binge eating disorder (BED). Empirical evidence confirms this distinction: only about 24.9% of individuals classified as overweight or obese meet the clinical threshold for food addiction on the YFAS, while 11.1% of individuals in the healthy-weight range also report clinically significant symptoms [35]. Similarly, although there is comorbidity, only approximately 56.8% of individuals with BED meet the criteria for food addiction, indicating that the two constructs are related but not synonymous [35].

Table 2: Discriminant Validity of Food Addiction vs. Related Conditions

| Condition | Prevalence of Food Addiction (YFAS) | Key Differentiating Feature |

|---|---|---|

| Food Addiction | Defined by YFAS criteria (e.g., impaired control, craving, continued use despite consequences) [35]. | Central role of specific food substances (highly processed); not all cases involve overeating to the point of obesity [36]. |

| Obesity | ~24.9% of overweight/obese individuals [35]. | A metabolic condition characterized by excess body fat; can exist without addictive eating patterns. |

| Binge Eating Disorder (BED) | ~56.8% of individuals with BED [35]. | Defined by discrete episodes of excessive food intake; food addiction focuses on addictive-like behaviors surrounding food. |

| Healthy-Weight Population | ~11.1% of individuals [35]. | Demonstrates that addictive eating patterns can occur independently of body weight. |

Experimental Protocols and Assessment Methodologies

Assessing Cognitive Frailty: The Geriatrician Survey

Protocol Objective: To investigate European geriatricians' understanding and agreement with the formal IANA-IAGG definition of cognitive frailty [33].

Methodology:

- Design: An online, cross-sectional survey distributed across Europe through professional geriatric medicine groups.

- Participants: 440 eligible geriatricians or senior trainees from 30 European countries.

- Measures: Respondents were presented with a list of 17 possible definitions and asked to select the one they believed best matched the term 'cognitive frailty' in academic literature. Subsequently, the IANA-IAGG definition was shared, and respondents rated their agreement on a 0-10 scale.

- Analysis: Descriptive statistics were used to report the frequency of definition selection. Content analysis was performed on open-text responses explaining agreement levels.

Key Findings: This study highlighted a fundamental discriminant validity issue at the conceptual level. The most frequent response (26.8%) was the correct IANA-IAGG definition (MCI + physical frailty). However, a further 19.6% selected a much broader definition ("current or previous delirium OR MCI OR dementia"), failing to discriminate cognitive frailty from other cognitive vulnerabilities and omitting the essential physical frailty component [33].

Establishing Food Addiction: The Systematic Review Protocol

Protocol Objective: To evaluate the empirical evidence for "food addiction" as a valid construct in humans and animals by assessing its alignment with established characteristics of addiction [35].

Methodology:

- Design: A systematic review conducted according to PRISMA guidelines.

- Data Sources: Comprehensive searches in PubMed and PsycINFO databases using keywords related to food addiction, binge eating, and compulsive overeating.

- Study Selection: Inclusion criteria encompassed quantitative, peer-reviewed studies in English. A total of 52 studies (35 articles) were included for qualitative assessment.

- Validation Framework: Each study was assessed for evidence supporting predefined addiction criteria: brain reward dysfunction, preoccupation, risky use, impaired control, tolerance/withdrawal, social impairment, chronicity, and relapse.

Key Findings: The review found support for all addiction criteria in relation to highly processed foods. The most substantial evidence was for brain reward dysfunction (supported by 21 studies) and impaired control (supported by 12 studies), while "risky use" was supported by the fewest studies (n=1) [35]. This structured approach helps discriminate food addiction from simple overeating by anchoring it to a recognized, multi-faceted diagnostic framework.

The Scientist's Toolkit: Essential Research Reagents and Measures

Table 3: Key Assessment Tools and Reagents for Cognitive and Behavioral Constructs

| Tool / Reagent | Construct Measured | Function and Application in Research |

|---|---|---|

| Fried's Phenotype Criteria | Physical Frailty [33] [34]. | Operationalizes physical frailty via five components (e.g., weight loss, exhaustion). A prerequisite for diagnosing cognitive frailty. |

| Yale Food Addiction Scale (YFAS) | Food Addiction [35]. | The primary self-report measure applying modified DSM substance use criteria to eating behaviors, crucial for establishing the construct's prevalence and discriminant validity. |

| Clinical Dementia Rating (CDR) | Dementia Severity [33]. | A structured interview used to stage dementia; a CDR of 0.5 is used in the IANA-IAGG criteria to exclude frank dementia in cognitive frailty diagnosis. |

| ImPACT Verbal Memory Composite | Cognitive Function (Verbal Memory) [37]. | An example of a tool where discriminant validity has been questioned; its score was highly correlated with other cognitive composites, limiting its specificity. |

| Controlled Feeding Diets | Metabolic Response to Food Processing [38]. | Used in interventions to isolate the effect of food processing from nutrient content. Provides experimental evidence for mechanisms underlying addictive potential of foods. |

The journey from cognitive frailty to food addiction underscores a universal imperative in cognitive and behavioral research: the steadfast commitment to discriminant validity. For cognitive frailty, the challenge lies in achieving consensus on its operational definition and distinguishing it from a spectrum of pre-dementia and frailty syndromes [33] [34]. For food addiction, the endeavor is to conclusively demonstrate that it is a unique entity separable from, though potentially comorbid with, conditions like obesity and binge eating disorder [35] [36]. The experimental protocols and tools detailed in this guide provide a foundation for this essential work. Ultimately, the fidelity of our scientific constructs dictates the efficacy of our interventions. Continued refinement of these definitions and the tools used to measure them is not merely an academic exercise, but a fundamental prerequisite for advancing targeted treatments and improving patient outcomes in neurology, geriatrics, and psychopharmacology.

How to Test for It: Methodological Frameworks and Analytical Techniques

In the assessment of cognitive terminology measures, establishing construct validity is a fundamental prerequisite for ensuring that research findings are meaningful and accurate. Within this framework, discriminant validity provides critical evidence that a test designed to measure a specific cognitive construct (e.g., working memory) is indeed distinct and does not unduly correlate with tests designed to measure different constructs (e.g., processing speed) [39]. The failure to demonstrate discriminant validity brings into question whether a tool measures the intended theoretical concept or something else entirely, potentially leading to flawed conclusions in basic research or clinical trials [40]. This guide objectively compares two primary statistical methods used to establish this distinctiveness: traditional correlation analysis and the more specialized Fornell-Larcker Criterion.

The core principle of discriminant validity is that measures of theoretically different constructs should not be highly correlated [27]. For researchers and professionals in drug development, this is particularly crucial when employing cognitive batteries as endpoints in clinical trials. Accurate measurement of distinct cognitive domains allows for the precise identification of a compound's effects, ensuring that an observed improvement in, for instance, executive function is not merely an artifact of a change in verbal ability [41].

Methodological Comparison

The following table provides a direct comparison of the two cornerstone methods for assessing discriminant validity.

Table 1: Comparison of Correlation Analysis and the Fornell-Larcker Criterion

| Feature | Correlation Analysis | Fornell-Larcker Criterion |

|---|---|---|

| Core Principle | Examines bivariate correlation coefficients between measures of different constructs [39]. | Compares the square root of a construct's Average Variance Extracted (AVE) to its correlations with other constructs [42]. |

| Primary Purpose | To show that measures of unrelated constructs have low correlations. | To show that a construct shares more variance with its own indicators than with other constructs [42] [40]. |

| Key Statistic | Pearson's correlation coefficient (\(r\)) [39]. | Square root of Average Variance Extracted (\(\sqrt{AVE}\)) and latent variable correlations [42]. |

| Interpretation of Validity | Low correlations (e.g., \(r < 0.85\), or below a stricter cutoff) suggest constructs are distinct [27] [40]. | \(\sqrt{AVE}\) for a construct should be greater than its correlation with any other construct [42] [43]. |

| Key Strength | Simple to compute, intuitive to understand, and a good initial check. | A more rigorous, variance-based metric that is integral to Structural Equation Modeling (SEM) [42]. |

| Key Limitation | Does not account for measurement error; a rigid cutoff (e.g., ( r < 0.85 )) may be inelastic for constructs of varying bandwidths [40]. | Less intuitive; requires the calculation of AVE, making it specific to variance-based SEM [42]. |

Experimental Protocols

Protocol for Discriminant Validity via Correlation Analysis

Correlation analysis offers a straightforward, initial method for evaluating discriminant validity.

- Step 1: Define Constructs and Select Measures. Clearly articulate the target constructs (e.g., "episodic memory") and comparison constructs (e.g., "executive function") [27]. Select reliable and valid measures for each. In cognitive research, this might involve choosing specific tests from a battery, such as the NIH Toolbox, and their gold-standard analogues [41].

- Step 2: Administer Measures and Collect Data. Administer all selected measures to a relevant sample. The sample should have sufficient variability on the constructs of interest to avoid restriction of range, which can attenuate correlations [27].

- Step 3: Calculate Correlation Coefficients. Compute a correlation matrix containing Pearson's correlation coefficients (\(r\)) between all pairs of constructs [39] [27].

- Step 4: Interpret Correlations. Examine the correlations between measures of theoretically distinct constructs. Good discriminant validity is supported by low correlations. While field-specific conventions apply, correlations below 0.85 are often used as a rule-of-thumb to indicate distinctiveness, though some suggest a more conservative threshold of 0.70 [40] [44].
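The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed implementation: the construct names, the eight made-up participant scores, and the helper names `pearson_r` and `flag_high_correlations` are all assumptions introduced here, and the 0.85 cutoff is simply the rule of thumb mentioned in Step 4.

```python
import math
from itertools import combinations


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def flag_high_correlations(scores, threshold=0.85):
    """Return (construct_a, construct_b, r) for pairs with |r| >= threshold."""
    flagged = []
    for a, b in combinations(scores, 2):
        r = pearson_r(scores[a], scores[b])
        if abs(r) >= threshold:
            flagged.append((a, b, round(r, 3)))
    return flagged


# Hypothetical composite scores for eight participants (illustrative only).
scores = {
    "episodic_memory":    [12, 15, 9, 20, 14, 17, 11, 18],
    "executive_function": [28, 35, 30, 33, 25, 27, 29, 31],
    "processing_speed":   [27, 36, 31, 34, 24, 28, 30, 30],
}

# Pairs listed here would need closer scrutiny before being treated as distinct.
flagged = flag_high_correlations(scores, threshold=0.85)
print(flagged)
```

With these invented scores, only the executive function and processing speed pair exceeds the cutoff. Passing `threshold=0.70` applies the more conservative convention instead.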

Protocol for Discriminant Validity via the Fornell-Larcker Criterion

The Fornell-Larcker Criterion provides a more robust assessment within the context of Structural Equation Modeling (SEM).

- Step 1: Compute Construct Averages and Correlations. Calculate the average scores for each construct and then determine the Pearson correlations between the different constructs [42] [43].

- Step 2: Form the Latent Variable Correlation Matrix. Create a matrix that displays all the correlations between the various constructs [42].

- Step 3: Calculate Average Variance Extracted (AVE). For each construct, calculate the AVE, which is also used as an indicator of convergent validity. It represents the average share of indicator variance that is explained by the construct rather than attributable to measurement error [42]. Formally, it is the mean of the squared standardized loadings of the indicators on their construct.

- Step 4: Compute the Square Root of AVE. For each construct, calculate the square root of its AVE (\(\sqrt{AVE}\)) [42] [43].

- Step 5: Update the Correlation Matrix. Substitute the diagonal entries in the latent variable correlation matrix (which are typically 1, representing a construct's correlation with itself) with the square roots of their respective AVE values [42] [43].

- Step 6: Validate Discriminant Validity. Compare the values in the updated matrix. For discriminant validity to be established, the \(\sqrt{AVE}\) for each construct (on the diagonal) must be greater than the construct's correlations with all other constructs (the off-diagonal values in its row and column) [42] [45].
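Steps 3 to 6 can be summarized compactly. The notation is an assumption introduced here for illustration: \(\lambda_{ij}\) is the standardized loading of indicator \(i\) on construct \(j\), \(k_j\) is the number of indicators of construct \(j\), and \(\phi_{jm}\) is the correlation between constructs \(j\) and \(m\).

\[
\mathrm{AVE}_j = \frac{1}{k_j}\sum_{i=1}^{k_j}\lambda_{ij}^{2},
\qquad
\sqrt{\mathrm{AVE}_j} > \lvert \phi_{jm} \rvert \quad \text{for all } m \neq j .
\]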

Table 2: Example Fornell-Larcker Matrix for Cognitive Measures

| Construct | 1. Vocabulary | 2. Reading | 3. Episodic Memory | 4. Executive Function |
|---|---|---|---|---|
| 1. Vocabulary | **0.899** | | | |
| 2. Reading | 0.815 | **0.884** | | |
| 3. Episodic Memory | 0.779 | 0.802 | **0.893** | |
| 4. Executive Function | 0.795 | 0.745 | 0.782 | **0.868** |

Note: Diagonal elements (in bold) are \(\sqrt{AVE}\). Discriminant validity is established for all constructs because every diagonal value is greater than the correlations in its row and column [42].
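The comparison in Table 2 can be checked programmatically. The sketch below uses the values from the table; the function names `ave` and `fornell_larcker` are hypothetical helpers written for this illustration and do not correspond to any particular SEM package.

```python
def ave(loadings):
    """Average Variance Extracted: mean of squared standardized loadings."""
    return sum(l ** 2 for l in loadings) / len(loadings)


def fornell_larcker(sqrt_ave, correlations):
    """Map each construct to True if its sqrt(AVE) exceeds all its correlations.

    sqrt_ave: {construct: square root of AVE}
    correlations: {(construct_a, construct_b): latent correlation}
    """
    result = {}
    for name, root in sqrt_ave.items():
        related = [abs(r) for pair, r in correlations.items() if name in pair]
        result[name] = all(root > r for r in related)
    return result


# Diagonal of Table 2.
sqrt_ave = {
    "Vocabulary": 0.899,
    "Reading": 0.884,
    "Episodic Memory": 0.893,
    "Executive Function": 0.868,
}

# Off-diagonal entries of Table 2.
correlations = {
    ("Vocabulary", "Reading"): 0.815,
    ("Vocabulary", "Episodic Memory"): 0.779,
    ("Vocabulary", "Executive Function"): 0.795,
    ("Reading", "Episodic Memory"): 0.802,
    ("Reading", "Executive Function"): 0.745,
    ("Episodic Memory", "Executive Function"): 0.782,
}

verdict = fornell_larcker(sqrt_ave, correlations)
print(verdict)
```

For the Table 2 values, every construct passes. When raw standardized loadings are available instead of a precomputed \(\sqrt{AVE}\), `math.sqrt(ave(loadings))` would supply the diagonal entry.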

Workflow and Logical Pathway for Validation

The following diagram illustrates the logical decision pathway for establishing discriminant validity using the two cornerstone methods.

The Scientist's Toolkit: Essential Research Reagents

In the context of statistical validation for cognitive research, "research reagents" refer to the essential analytical tools and software required to perform the analyses. The following table details these key resources.

Table 3: Essential Research Reagent Solutions for Statistical Validation

| Research Reagent | Function / Application |
|---|---|
| Statistical Software (e.g., R, SPSS, Stata) | Provides the computational environment to calculate correlation matrices, perform factor analysis, and conduct Structural Equation Modeling (SEM), which is necessary for implementing the Fornell-Larcker Criterion [43] [44]. |
| SEM/PLS Software (e.g., lavaan (R), SmartPLS) | Specialized SEM software, covariance-based in the case of lavaan and variance-based (PLS) in the case of SmartPLS, which reports or supports the computation of key metrics like Average Variance Extracted (AVE) and construct correlations, streamlining the Fornell-Larcker validation process [42] [46]. |
| Cognitive Test Batteries (e.g., NIHTB-CHB) | Standardized, multidimensional instruments that provide the manifest variables (test scores) for the latent constructs (e.g., executive function, episodic memory). Their validated structure is a prerequisite for discriminant validity analysis [41]. |
| Gold Standard Reference Tests | Well-established tests used as validation criteria against which new or related cognitive measures are compared. They help anchor the constructs in the analysis, as seen in the validation of the NIH Toolbox [41]. |
| Pre-Validated Survey Platforms (e.g., Qualtrics) | Tools that facilitate the collection of robust data on multiple constructs and often include built-in analytical modules to assist with initial validity checks [46]. |

This guide provides an objective comparison of how different Structural Equation Modeling (SEM) approaches perform in assessing the validity of cognitive and psychological measures, with a specific focus on establishing discriminant validity.

SEM Approaches for Validity Assessment: A Comparative Analysis

Table 1: Comparison of SEM Methodologies for Validity Assessment

| SEM Methodology | Key Application in Validity Assessment | Empirical Performance Findings | Primary Advantage | Key Limitation |
|---|---|---|---|---|
| Traditional Confirmatory Factor Analysis (CFA) [47] | Tests pre-specified factor structure where items load only on their designated construct. | Often demonstrates poor model fit for complex instruments due to disallowing cross-loadings, potentially overstating factor distinctness [47]. | Enforces simple structure, conceptually straightforward for testing hypotheses about scale structure. | Rigid assumption of zero cross-loadings can produce biased parameters and poor fit, threatening validity evidence [47]. |
| Exploratory Structural Equation Modeling (ESEM) [47] | Allows items to cross-load on multiple factors, providing a more realistic test of discriminant validity. | Superior model fit (CFI = .982, SRMR = .013, RMSEA = .04) compared to CFA for the Cognitive Emotion Regulation Questionnaire, revealing factor overlap [47]. | More accurate estimation of factor correlations, offering a stricter test of whether constructs are truly distinct [47]. | Results can be less straightforward to interpret than CFA due to cross-loadings. |
| Bayesian SEM (BSEM) [48] | Incorporates prior knowledge and can model all minor cross-loadings and residual correlations. | Provided superior insight into WISC-V cognitive structure beyond traditional methods, achieving a better-fitting model without post-hoc modifications [48]. | Reduces the risk of non-replicable findings by avoiding capitalization on chance from post-hoc specification searches [48]. | Requires careful specification of priors and more complex computational steps. |
| Measurement Invariance Analysis [20] | Tests if a measure's factor structure is equivalent across groups (e.g., countries, time). | Found 31.85% noninvariant factor loadings and 54.81% noninvariant item intercepts for a Global Cognitive Performance measure across 28 countries [20]. | Essential for validating that cross-group comparisons are meaningful and not biased by measurement artifacts. | Failure to establish invariance, as in the SHARE study, prevents valid group comparisons [20]. |

Experimental Protocols for SEM Validity Assessment

Protocol: Establishing Discriminant Validity via ESEM

This protocol is ideal for initially validating a scale or re-evaluating an existing one where constructs might be conceptually overlapping [47].

- Step 1: Model Specification – Specify the hypothesized factor model, defining which items are intended to measure which latent constructs.

- Step 2: Model Estimation – Estimate the ESEM model, typically using Geomin rotation, which allows items to have cross-loadings on all factors.

- Step 3: Model Fit Evaluation – Assess global fit using indices: CFI ≥ 0.95, SRMR ≤ 0.08, and RMSEA ≤ 0.06 indicate good fit [47].

- Step 4: Examination of Factor Loadings – Review pattern of factor loadings. Strong discriminant validity is supported when items have strong loadings on their target factor and very weak cross-loadings (e.g., < |0.20| ) on non-target factors [47].

- Step 5: Analysis of Factor Correlations – Examine the correlations between latent factors. Very high correlations (e.g., > |0.85|) suggest poor discriminant validity, indicating the constructs may not be empirically distinct.
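Once an ESEM model has been estimated in dedicated software, the decision rules in Steps 3 to 5 are simple threshold checks. The sketch below assumes the fit indices, loadings, and factor correlations have already been exported. The function names are hypothetical, the fit values are those reported for the ESEM model in the comparison table above, and the loadings and factor correlations are invented for illustration.

```python
def fit_is_good(cfi, srmr, rmsea):
    """Step 3: global fit cutoffs (CFI >= 0.95, SRMR <= 0.08, RMSEA <= 0.06)."""
    return cfi >= 0.95 and srmr <= 0.08 and rmsea <= 0.06


def cross_loading_problems(loadings, targets, max_cross=0.20):
    """Step 4: list (item, factor, loading) where a non-target |loading| >= max_cross."""
    problems = []
    for item, row in loadings.items():
        for factor, value in row.items():
            if factor != targets[item] and abs(value) >= max_cross:
                problems.append((item, factor, value))
    return problems


def overlapping_factors(factor_corr, max_corr=0.85):
    """Step 5: list factor pairs whose |correlation| exceeds max_corr."""
    return [pair for pair, r in factor_corr.items() if abs(r) > max_corr]


# Hypothetical standardized loadings: {item: {factor: loading}}.
loadings = {
    "item1": {"rumination": 0.72, "reappraisal": 0.05},
    "item2": {"rumination": 0.68, "reappraisal": -0.11},
    "item3": {"rumination": 0.55, "reappraisal": 0.31},
    "item4": {"rumination": 0.08, "reappraisal": 0.77},
}
targets = {
    "item1": "rumination",
    "item2": "rumination",
    "item3": "rumination",
    "item4": "reappraisal",
}
# Hypothetical latent factor correlations.
factor_corr = {
    ("rumination", "reappraisal"): -0.22,
    ("rumination", "catastrophizing"): 0.88,
}

print(fit_is_good(cfi=0.982, srmr=0.013, rmsea=0.04))
print(cross_loading_problems(loadings, targets))
print(overlapping_factors(factor_corr))
```

Keeping the three checks as separate functions mirrors the protocol: a model can fit well globally and still show a problematic cross-loading or a factor pair that is too highly correlated to be treated as distinct.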

Protocol: Testing for Measurement Invariance

This protocol is critical before comparing scale scores across different groups, such as in cross-cultural clinical trials [20].

- Step 1: Configural Invariance – Test the same factor structure (same items loading on same factors) in all groups. This is the baseline model and must demonstrate acceptable fit.

- Step 2: Metric Invariance – Constrain factor loadings to be equal across groups and compare this model to the configural model. A negligible decrease in CFI (ΔCFI < 0.01) suggests factor loadings are equivalent, meaning the items relate to the construct in the same way in each group.

- Step 3: Scalar Invariance – Constrain item intercepts to be equal across groups and compare to the metric model. Meeting this stricter level of invariance allows for comparison of latent means between groups.

- Step 4: Handling Non-Invariance – If invariance is not achieved (as in [20]), use methods like the Alignment Approach to identify the source of non-invariance or refrain from making direct group comparisons.
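The sequential logic of Steps 1 to 3 can be expressed as a small decision function. This is a sketch under stated assumptions: the CFI values are invented, the function name is hypothetical, and the minimum CFI used to judge the configural model acceptable (0.95) is borrowed from the ESEM cutoffs above rather than taken from the invariance protocol itself.

```python
def highest_invariance_level(cfi, max_drop=0.01, min_configural_cfi=0.95):
    """Return the strictest invariance level supported by the CFI sequence.

    cfi: {"configural": ..., "metric": ..., "scalar": ...}
    A level is accepted when CFI drops by less than max_drop relative to
    the previous, less constrained model.
    """
    if cfi["configural"] < min_configural_cfi:
        return "none"  # baseline model does not fit; stop here
    level = "configural"
    for previous, current in (("configural", "metric"), ("metric", "scalar")):
        if cfi[previous] - cfi[current] >= max_drop:
            break  # the added equality constraints worsen fit too much
        level = current
    return level


# Hypothetical CFI values from a multi-group analysis.
example = {"configural": 0.962, "metric": 0.958, "scalar": 0.931}
print(highest_invariance_level(example))
```

In this invented example the loadings can be treated as equivalent but the intercepts cannot, so latent means should not be compared directly and Step 4 applies.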

Protocol: Innovative Psychometric Designs with BSEM

BSEM can be used to explore complex model structures that are difficult to specify with traditional methods [48].

- Step 1: Priors Specification – Specify informative, small-variance priors for all possible minor cross-loadings and residual correlations, centering them on zero.

- Step 2: Model Estimation – Run the BSEM analysis using Markov Chain Monte Carlo (MCMC) estimation.

- Step 3: Convergence Diagnostics – Check that the MCMC chains have converged using statistics like the Potential Scale Reduction Factor (PSRF ≈ 1.0).

- Step 4: Posterior Predictive Checking – Evaluate the model's fit to the data using the posterior predictive p-value (PPP).

- Step 5: Interpretation – Examine the posterior distributions of parameters. A well-fitting BSEM model with all cross-loadings specified can provide strong evidence for a measure's structural validity without resorting to problematic post-hoc model adjustments [48].
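Steps 3 and 4 rely on two diagnostics that are easy to compute from MCMC output. The sketch below is a simplified illustration, assuming the draws for a single parameter are available as equal-length lists (one per chain) and that discrepancy values for observed and replicated data have been saved at each iteration. The function names are hypothetical, and BSEM software typically reports both statistics directly.

```python
import math
from statistics import mean, variance


def psrf(chains):
    """Potential Scale Reduction Factor for one parameter.

    chains: list of equal-length lists of posterior draws, one per chain.
    Values close to 1.0 suggest the chains have converged.
    """
    n = len(chains[0])
    chain_means = [mean(c) for c in chains]
    within = mean([variance(c) for c in chains])   # average within-chain variance
    between = n * variance(chain_means)            # between-chain variance
    pooled = (n - 1) / n * within + between / n    # pooled variance estimate
    return math.sqrt(pooled / within)


def posterior_predictive_p(observed, replicated):
    """Share of iterations where the replicated discrepancy >= the observed one."""
    exceed = sum(rep >= obs for obs, rep in zip(observed, replicated))
    return exceed / len(observed)


# Hypothetical draws of one cross-loading from two chains.
mixed = [[0.02, -0.01, 0.03, 0.00], [0.01, 0.02, -0.02, 0.01]]
stuck = [[0.0, 1.0, 0.0, 1.0], [10.0, 11.0, 10.0, 11.0]]
print(psrf(mixed), psrf(stuck))

# Hypothetical discrepancy values across four iterations.
print(posterior_predictive_p([5.1, 4.8, 6.0, 5.5], [5.4, 4.1, 6.3, 5.0]))
```

A PSRF far above 1.0, as for the `stuck` chains, indicates that the chains are sampling different regions and estimation should run longer. A PPP near 0.5 is usually read as good fit, while values close to 0 indicate misfit.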

Visualizing SEM Workflows for Validity Assessment

Figure: SEM Validity Analysis Workflow

Figure: Discriminant Validity Assessment Logic

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Software and Statistical Tools for SEM Validity Analysis

| Tool / Resource | Function in Validity Assessment | Application Example |
|---|---|---|
| Mplus Software [49] | A flexible statistical modeling program widely used for running complex SEM, ESEM, and BSEM analyses. | Used to examine the mediating role of cognitive schemas between parenting styles and suicidal ideation [49]. |
| JASP Software [50] | An open-source software with a user-friendly interface for conducting CFA and other statistical analyses. | Used for Confirmatory Factor Analysis (CFA) in a study on cognitive achievement [50]. |
| Alignment Optimization [20] | A method within SEM for testing approximate measurement invariance when full invariance does not hold. | Applied to evaluate non-invariance of a Global Cognitive Performance measure across 28 European countries [20]. |
| Heterotrait-Monotrait Ratio (HTMT) [1] | A modern criterion for assessing discriminant validity, expressed as the ratio of between-construct item correlations to within-construct item correlations. | A value below 0.85 or 0.90 indicates two constructs are distinct, providing strong evidence for discriminant validity [1]. |
| COSMIN Risk of Bias Checklist [18] | A standardized tool for evaluating the methodological quality of studies on measurement properties. | Used in systematic reviews to assess the quality of validation studies for self-report mentalising measures [18]. |
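Because the HTMT entry in the table is described only verbally, a small worked example may help. The sketch below assumes the commonly used form of the ratio: the mean correlation between items of two different constructs, divided by the geometric mean of the average within-construct item correlations. The item names and correlations are invented, and `htmt` is a hypothetical helper rather than a function from any specific package.

```python
from itertools import combinations
from statistics import mean


def htmt(item_corr, items_a, items_b):
    """Heterotrait-monotrait ratio for two constructs.

    item_corr: {frozenset({item_i, item_j}): correlation}
    items_a, items_b: item names belonging to each construct (>= 2 each).
    """
    def r(i, j):
        return item_corr[frozenset((i, j))]

    hetero = mean([r(i, j) for i in items_a for j in items_b])
    mono_a = mean([r(i, j) for i, j in combinations(items_a, 2)])
    mono_b = mean([r(i, j) for i, j in combinations(items_b, 2)])
    return hetero / (mono_a * mono_b) ** 0.5


# Hypothetical item correlations: two working memory items, two speed items.
item_corr = {
    frozenset(("wm1", "wm2")): 0.70,   # within working memory
    frozenset(("ps1", "ps2")): 0.60,   # within processing speed
    frozenset(("wm1", "ps1")): 0.30,   # between constructs
    frozenset(("wm1", "ps2")): 0.30,
    frozenset(("wm2", "ps1")): 0.30,
    frozenset(("wm2", "ps2")): 0.30,
}

value = htmt(item_corr, ["wm1", "wm2"], ["ps1", "ps2"])
print(round(value, 3))
```

In this invented case the ratio is well below the 0.85 threshold listed in the table, which would support treating the two constructs as distinct.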

Article Contents

- Introduction to the MTMM Framework

- Core Components and Interpretation

- Experimental Protocol & Analysis

- Case Study: Validity of a Cognitive Test Battery

- Comparative Analytical Approaches

- Research Reagent Solutions