Beyond the Lab: Enhancing Ecological Validity in Virtual Reality Executive Function Testing for Clinical Research and Drug Development


Abstract

This article explores the critical role of ecological validity in the assessment of executive functions (EF) using virtual reality (VR) for biomedical research. Traditional neuropsychological tests, while robust, are limited by their poor generalizability to real-world functioning. VR technology offers a paradigm shift by creating immersive, controlled simulations of daily life tasks, thereby enhancing the verisimilitude and veridicality of cognitive assessments. We examine the foundational concepts of ecological validity, detail methodological approaches for developing and implementing VR-based EF tests, address key challenges and optimization strategies, and review the growing body of validation evidence. For researchers and drug development professionals, this synthesis highlights VR's potential to generate more sensitive, meaningful, and predictive cognitive endpoints in clinical trials, ultimately bridging the gap between laboratory findings and real-world patient outcomes.

The Ecological Validity Gap: Why Traditional Executive Function Tests Fail in Real-World Prediction

Core Concept FAQ

What is ecological validity in neuropsychological assessment? Ecological validity is a measure of how well test performance predicts behaviors in real-world settings. It refers to the relationship between phenomena in the real world and their manifestation in experimental settings [1]. In neuropsychology, it specifically concerns understanding the relationship between assessment results and performance of everyday tasks [2].

What is the difference between verisimilitude and veridicality? Verisimilitude and veridicality are the two main methods for establishing ecological validity in assessments. The distinction is crucial for research design [1].

- Verisimilitude is the degree to which tasks performed during testing resemble those performed in daily life. It concerns the surface resemblance, or face validity, of the test.

- Veridicality is the degree to which test scores statistically correlate with measures of real-world functioning. It focuses on the predictive power of the test scores.
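
In practice, veridicality is an empirical quantity that can be estimated from paired data. The following is a minimal sketch, assuming each participant has one VR test score and one rating of everyday functioning. The variable names and values are hypothetical and are not drawn from any cited study.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mx = sum(x) / len(x)
    my = sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("a constant sequence has no defined correlation")
    return sxy / sqrt(sxx * syy)

# Hypothetical paired observations, one pair per participant.
vr_scores = [52, 61, 47, 70, 66, 58, 43, 75]
daily_function = [3.1, 3.8, 2.9, 4.4, 3.9, 3.2, 2.5, 4.6]

r = pearson_r(vr_scores, daily_function)
print(f"veridicality estimate (Pearson r) = {r:.2f}")
```

A high coefficient here would support veridicality only for the specific outcome measure used, which is why the choice of real-world criterion matters.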

Why are these concepts particularly important for Virtual Reality (VR) executive function research? Traditional neuropsychological tests often bear little resemblance to real-world cognitive challenges, and performance on these tasks accounts for only a small proportion of variance in real-world functioning [3]. VR offers a promising tool to bridge this gap by creating immersive, interactive environments that simulate real-life situations [4]. Research into VR-based assessments highlights their potential for superior ecological validity [5] [3] [4].

What are the common limitations of each approach?

- Veridicality: A key limitation is that the chosen outcome measure used for comparison may not accurately represent the client’s true everyday functioning [1].

- Verisimilitude: Limitations can include the significant cost of creating new, life-like tests and a reluctance from clinicians to adopt these novel tools into established practice [1].

Troubleshooting Guide: Common Experimental Issues

| Problem & Symptoms | Potential Causes | Recommended Solutions |

|---|---|---|

| Low Ecological Validity: Test performance does not predict real-world functional outcomes. | Using abstract stimuli; Highly controlled, artificial test environment; Behavioral responses not analogous to daily life [1] [3]. | Adopt a verisimilitude approach: design tasks that mimic real-world activities (e.g., virtual shopping, cooking) [2] [4]. Ensure elicited behaviors are natural (e.g., using a steering wheel vs. a mouse) [1]. |

| Poor Prediction of Specific Functional Outcomes (e.g., return to work): Test scores correlate with other metrics but not the target outcome. | The test may lack veridicality for that specific outcome; The chosen real-world measure may be inappropriate [1]. | Conduct studies to correlate test scores with specific, validated functional measures (e.g., employment status). Use statistical methods like regression analysis to establish predictive validity [5]. |

| Participant Discomfort in VR Testing: Reports of nausea, dizziness, or eye strain during assessment. | Technical issues like low frame rates; a disconnect between visual and vestibular perception [6]. | Maintain a consistent high frame rate (e.g., 90 FPS). Implement comfort settings (e.g., teleportation movement, comfort vignettes) [6]. |

| Lack of Clinical Adoption of a novel ecologically valid test. | High cost of new test development; Clinician reluctance to change from traditional, familiar measures [1]. | Provide strong feasibility, acceptability, and validity data [3] [4]. Publish normative data to enable clinical use [4]. Use cross-platform development frameworks to reduce long-term costs [6]. |
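
The frame-rate recommendation in the table can be checked against recorded data. The sketch below assumes that per-frame render times (in milliseconds) have been exported from the VR application; how such a log is obtained depends on the engine and is not specified in the sources. The function names and sample values are hypothetical.

```python
def frame_budget_ms(target_fps):
    """Time available to render one frame at the target rate, in ms."""
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return 1000.0 / target_fps

def over_budget_share(frame_times_ms, target_fps=90.0):
    """Fraction of frames whose render time exceeded the frame budget."""
    if not frame_times_ms:
        raise ValueError("no frame times recorded")
    budget = frame_budget_ms(target_fps)
    over = sum(1 for t in frame_times_ms if t > budget)
    return over / len(frame_times_ms)

# Hypothetical log of per-frame render times in milliseconds.
log = [10.8, 11.0, 10.9, 14.2, 11.1, 10.7, 22.5, 11.0, 10.9, 11.0]
print(f"budget at 90 FPS: {frame_budget_ms(90):.2f} ms")
print(f"share of frames over budget: {over_budget_share(log):.0%}")
```

Reporting the share of over-budget frames per session gives reviewers a way to judge whether cybersickness reports coincide with rendering problems.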

Experimental Protocols for VR-Based Assessment

Protocol 1: Investigating Verisimilitude with a Novel VR Task

This protocol is based on the development and validation of the Nesplora Ice Cream Test, a VR tool designed to assess executive functions in an ecologically valid way [4].

- Objective: To establish normative data and validate a VR-based executive function test that simulates a real-world task.

- Materials:

- VR headset and controllers.

- Nesplora Ice Cream Test software or similar custom VR environment (e.g., a virtual shop).

- Data recording system integrated with the VR platform.

- Methodology:

- Participant Recruitment: Recruit a representative sample based on age and gender from the target population. Exclusion criteria typically include neurological pathology or conditions limiting VR use [4].

- Testing Environment: Conduct the assessment in a quiet room with sufficient space for safe VR use.

- Task Administration:

- Participants are immersed in a VR scenario (e.g., running an ice cream shop).

- They must perform tasks requiring planning (e.g., prioritizing orders), working memory (e.g., remembering recipes), and cognitive flexibility (e.g., adapting to changing customer demands) [4].

- The test is administered in a single session by a trained evaluator.

- Data Collection: Collect performance metrics automatically via the software, such as accuracy, reaction times, errors, and efficiency scores on the planning, learning, and flexibility factors [4].

- Analysis:

- Perform cluster analysis to define age groups for different cognitive factors.

- Conduct confirmatory factor analysis to validate the test's theoretical structure (e.g., planning, learning, flexibility) [4].

- Generate descriptive normative data stratified by age and gender.
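
The final analysis step, normative data stratified by age and gender, can be sketched as follows. This is an illustration under stated assumptions: the age bands are fixed placeholders (the protocol derives age groups from cluster analysis), and the record fields and scores are hypothetical.

```python
from collections import defaultdict
from statistics import mean, stdev

def age_band(age, bands=((18, 39), (40, 59), (60, 89))):
    """Map an age to a (low, high) band; these bands are placeholders."""
    for low, high in bands:
        if low <= age <= high:
            return (low, high)
    raise ValueError(f"age {age} is outside all bands")

def normative_table(records):
    """Group scores by (age band, gender); return n, mean and SD per cell."""
    cells = defaultdict(list)
    for rec in records:
        cells[(age_band(rec["age"]), rec["gender"])].append(rec["score"])
    table = {}
    for key, scores in cells.items():
        sd = stdev(scores) if len(scores) > 1 else float("nan")
        table[key] = {"n": len(scores), "mean": mean(scores), "sd": sd}
    return table

def z_score(score, cell):
    """Standardize one score against the matching normative cell."""
    return (score - cell["mean"]) / cell["sd"]

# Hypothetical planning-factor scores.
records = [
    {"age": 25, "gender": "F", "score": 80},
    {"age": 31, "gender": "F", "score": 84},
    {"age": 38, "gender": "F", "score": 88},
    {"age": 45, "gender": "M", "score": 70},
    {"age": 52, "gender": "M", "score": 76},
]
table = normative_table(records)
cell = table[((18, 39), "F")]
print(cell, z_score(88, cell))
```

A real normative study needs far larger cells than shown here; the sketch only illustrates the stratify-then-standardize logic.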

Protocol 2: Establishing Veridicality for a VR Test

This protocol is modeled on research that investigated the predictive ability of VR tests for return to work (RTW) in individuals with mild Traumatic Brain Injury (mTBI) [5].

- Objective: To determine the statistical correlation between VR test scores and a concrete real-world outcome.

- Materials:

- VR headset and controllers.

- Standardized neuropsychological battery (e.g., Ruff 2 & 7 test).

- Psychological questionnaires.

- Two novel VR tests (VRTs) designed to assess attention and executive functions [5].

- Methodology:

- Participant Recruitment: Recruit a clinical sample (e.g., patients in the post-acute recovery period of mTBI) and a control group if applicable.

- Assessment:

- Conduct an intake interview.

- Administer a battery of traditional neuropsychological tests.

- Administer the novel VR tests.

- Outcome Measure: Establish a clear, objective real-world outcome metric. In this example, it was employment status (Return to Work vs. No Return to Work) at a specific follow-up point [5].

- Analysis:

- Use discriminant analysis to see if the tests (VR and traditional) can significantly predict group membership (e.g., RTW status).

- Perform regression analysis to identify which specific test variables (e.g., a trial from a VRT, a score from a traditional test) are predictive of the outcome [5].

- Report metrics such as predictive accuracy, sensitivity, and specificity.
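
The reporting metrics named in the last step follow directly from a cross-tabulation of observed and predicted outcomes. A minimal sketch, assuming a binary outcome labeled "RTW" or "No RTW" and predictions already produced by a discriminant or regression model (the labels and data below are hypothetical):

```python
def classification_metrics(actual, predicted, positive="RTW"):
    """Accuracy, sensitivity and specificity for a binary outcome."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("need two non-empty sequences of equal length")
    tp = fn = tn = fp = 0
    for a, p in zip(actual, predicted):
        if a == positive:
            if p == positive:
                tp += 1  # returned to work, predicted to return
            else:
                fn += 1  # returned to work, predicted not to return
        else:
            if p == positive:
                fp += 1  # did not return, predicted to return
            else:
                tn += 1  # did not return, predicted not to return
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("both outcome classes must be present")
    return {
        "accuracy": (tp + tn) / len(actual),
        "sensitivity": tp / (tp + fn),
        "specificity": tn / (tn + fp),
    }

# Hypothetical follow-up data for ten participants.
actual = ["RTW"] * 6 + ["No RTW"] * 4
predicted = ["RTW"] * 5 + ["No RTW"] + ["No RTW"] * 3 + ["RTW"]
metrics = classification_metrics(actual, predicted)
print(metrics)
```

Metrics computed on the same sample used to fit the model tend to be optimistic, so cross-validation or a hold-out sample is advisable where sample size allows.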

Research Reagent Solutions: Essential Materials for VR Ecological Validity Research

| Item / Solution | Function in Research |

|---|---|

| VR Development Platform (e.g., Unity XR, Unreal Engine) | Provides the core software environment for creating and running custom, ecologically valid VR assessment scenarios [6]. |

| Cross-Platform Framework (e.g., OpenXR) | Manages hardware fragmentation by providing a unified API, ensuring the experiment runs across different VR headsets [6]. |

| Spatial Audio Engine (e.g., Steam Audio, Oculus Audio SDK) | Creates immersive and realistic soundscapes, which is crucial for simulating real-world environments and directing attention [6]. |

| Neuropsychological Test Battery (Standardized) | Serves as a benchmark for establishing convergent and discriminant validity of the novel VR tool [5] [4]. |

| Functional Outcome Measures (e.g., employment status, daily living questionnaires) | Provides the criterion measure against which the veridicality of the VR test is validated [5] [1]. |

| Color Contrast Checker Tool (e.g., WebAIM) | Ensures that any text or graphical elements in the VR interface meet accessibility standards (WCAG), guaranteeing readability for all participants [7]. |

| Data Analysis Software (e.g., R, SPSS, Python) | Used for statistical analyses, including factor analysis, regression, and normative data generation, to validate the test's psychometric properties [4]. |
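
The contrast check performed by tools such as the WebAIM checker can also be scripted. The sketch below implements the WCAG 2.x contrast-ratio formula for 8-bit sRGB colors. Note that WCAG was written for web content, so applying its 4.5:1 threshold for normal-size text to in-headset interfaces is an assumption; perceived contrast in an HMD also depends on the optics and display.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) color with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(fg, bg):
    """WCAG contrast ratio between two colors, from 1.0 up to 21.0."""
    l1, l2 = relative_luminance(fg), relative_luminance(bg)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)

def passes_aa_normal_text(fg, bg):
    """True if the pair meets the 4.5:1 AA threshold for normal-size text."""
    return contrast_ratio(fg, bg) >= 4.5

# Example: light grey instruction text on a dark panel (hypothetical colors).
text_color, panel_color = (230, 230, 230), (30, 30, 30)
print(round(contrast_ratio(text_color, panel_color), 2),
      passes_aa_normal_text(text_color, panel_color))
```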

Conceptual and Experimental Workflow Diagrams

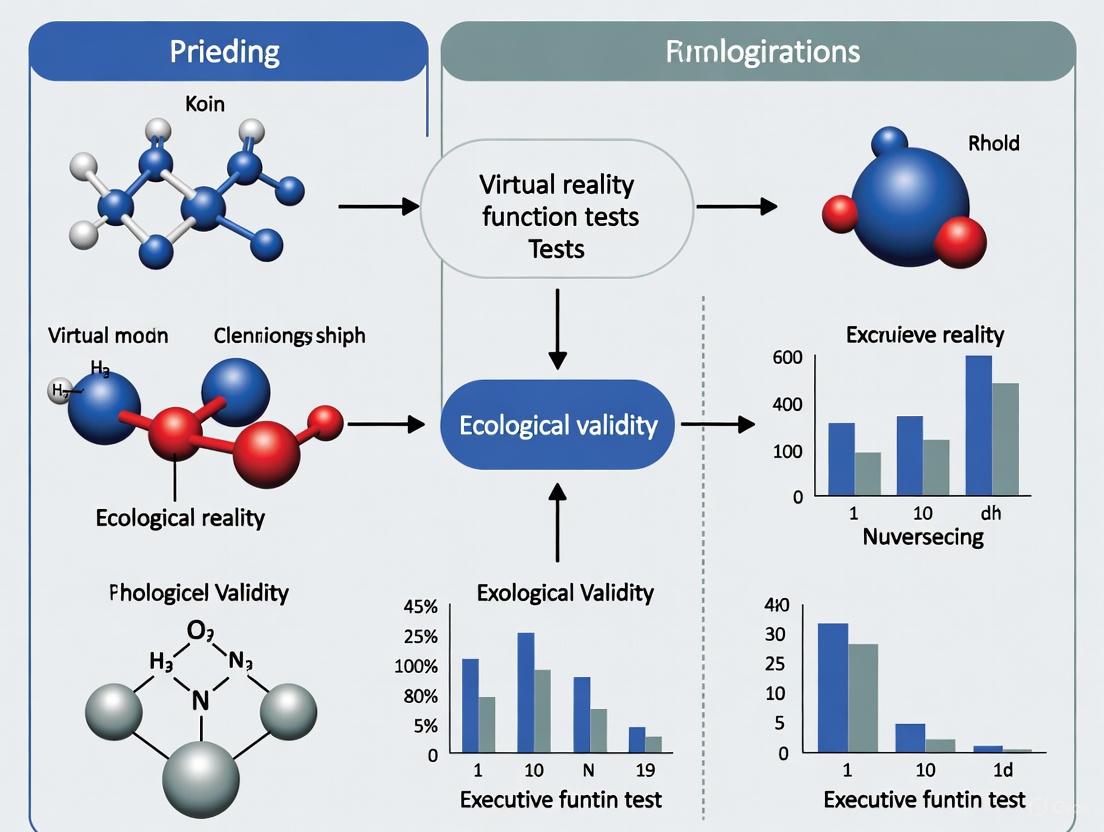

Diagram 1: Relationship between core concepts and research approaches.

Diagram 2: High-level workflow for developing a VR assessment tool.

The Limitations of Traditional Paper-and-Pencil and Computerized EF Tests

FAQs and Troubleshooting Guides

FAQ 1: What is the core limitation of traditional Executive Function tests regarding real-world prediction?

Answer: Traditional EF tests suffer from a significant lack of ecological validity, meaning an individual's performance in the controlled testing environment does not accurately predict their functioning in everyday, real-world situations [8] [9]. The abstract, context-free nature of these tasks fails to capture the complex, dynamic demands of daily life.

Replicated evidence indicates that traditional EF tests account for only 18% to 20% of the variance in a person's everyday executive abilities [9]. This gap is largely because real-world decision-making and planning are influenced by numerous internal and external factors—such as emotion, distraction, and multi-sensory input—that traditional tests deliberately exclude [9].
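
To relate the variance figure to the more familiar correlation coefficient, the short calculation below converts a proportion of variance explained into the implied correlation magnitude. It treats the test battery as a single composite predictor, which is a simplifying assumption rather than a description of the cited analyses.

```python
from math import sqrt

def r_from_variance_explained(r_squared):
    """Correlation magnitude implied by a proportion of variance explained."""
    if not 0.0 <= r_squared <= 1.0:
        raise ValueError("proportion must lie in [0, 1]")
    return sqrt(r_squared)

for share in (0.18, 0.20):
    r = r_from_variance_explained(share)
    print(f"{share:.0%} of variance explained -> |r| = {r:.2f}; "
          f"{1 - share:.0%} left unexplained")
```

Under that assumption, 18% to 20% of variance corresponds to a correlation of roughly 0.42 to 0.45, leaving about four fifths of the variation in everyday executive abilities unaccounted for.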

FAQ 2: Why do traditional EF tests and EF rating scales often yield different results for the same individual?

Answer: EF performance tests and informant rating scales (like the BRIEF) show only weak-to-modest correlations, suggesting they measure different aspects of functioning and cannot be used interchangeably [8].

The table below summarizes their key differences and divergent validities:

| Feature | Executive Function Performance Tests | Executive Function Rating Scales |

|---|---|---|

| Testing Environment | Controlled lab setting [8] | Natural, everyday settings ("in the wild") [8] |

| What is Measured | Cognitive capacity (optimal performance) [8] | Typical behavior and goal-directed success in real life [8] [9] |

| Primary Limitation | Poor ecological validity and generalizability [8] [9] | May measure behavioral outcomes rather than pure cognitive constructs [8] |

| Correlation with Academic Achievement | Demonstrates superior predictive validity for academic test performance [8] | Better at predicting teacher ratings of academic performance [8] |

FAQ 3: What is the "task impurity problem," and how does it affect traditional EF assessment?

Answer: The task impurity problem is a major methodological confound in traditional EF testing. It means that a score on any EF task reflects not only the target executive function but also systematic variance from other executive functions, variance from non-EF cognitive processes (e.g., language, motor skills), and random error variance [9].

This makes it difficult to isolate and measure a specific EF (like working memory or inhibition) purely, as performance is contaminated by other cognitive demands of the task.

FAQ 4: How can researchers mitigate these limitations and improve ecological validity?

Answer: Emerging methodologies use Immersive Virtual Reality (VR) to create controlled yet ecologically valid assessment environments.

Experimental Protocol for Developing an Immersive VR EF Assessment [9]:

- Define the EF Construct: Clearly specify the core EF (e.g., planning, cognitive flexibility) and higher-order function (e.g., problem-solving) to be assessed.

- Design the Virtual Scenario: Create a realistic virtual environment (e.g., a virtual supermarket, kitchen, or city) that elicits the target EF behaviors through naturalistic tasks like shopping or cooking [10] [9].

- Embed Performance Metrics: Program the system to log objective, quantifiable data (e.g., time to complete task, number of errors, efficiency of route planning, accuracy).

- Validate Against Gold-Standard Tools: Administer both the new VR paradigm and established traditional EF tests to a participant sample. Conduct correlation analyses to evaluate convergent validity [9].

- Assess User Experience:

- Monitor Cybersickness: Use standardized questionnaires to measure and control for symptoms like dizziness, which can impair performance and threaten validity [9].

- Measure Engagement: Evaluate user immersion and engagement, as heightened engagement may lead to a more reliable assessment of an individual's best effort [9].
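
The "Embed Performance Metrics" step can be illustrated with a small event log. This is a sketch only: the class, event kinds, and accuracy definition (correct actions divided by all attempts) are hypothetical choices, not the logging scheme of any cited VR tool.

```python
from dataclasses import dataclass, field

@dataclass
class TaskLog:
    """Event log for one participant's run through a virtual errand task."""
    participant_id: str
    events: list = field(default_factory=list)

    def record(self, t_seconds, kind, detail=""):
        """Append a timestamped event: 'start', 'action', 'error' or 'end'."""
        self.events.append({"t": t_seconds, "kind": kind, "detail": detail})

    def summary(self):
        """Derive completion time, error count and accuracy from the log.

        Assumes the log contains one 'start' and one 'end' event.
        """
        start = next(e["t"] for e in self.events if e["kind"] == "start")
        end = next(e["t"] for e in self.events if e["kind"] == "end")
        correct = sum(1 for e in self.events if e["kind"] == "action")
        errors = sum(1 for e in self.events if e["kind"] == "error")
        attempts = correct + errors
        return {
            "completion_time_s": end - start,
            "errors": errors,
            "accuracy": correct / attempts if attempts else None,
        }

# Hypothetical session in a virtual supermarket.
log = TaskLog("P001")
log.record(0.0, "start")
log.record(5.0, "action", "picked up milk")
log.record(9.0, "error", "picked up item not on list")
log.record(14.0, "action", "picked up bread")
log.record(20.0, "action", "paid at checkout")
log.record(31.5, "end")
result = log.summary()
print(result)
```

Storing raw timestamped events rather than only summary scores keeps later analyses open, for example route efficiency or error typing.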

FAQ 5: What technological integrations can enhance next-generation EF assessment?

Answer: Integrating Brain-Computer Interface (BCI) technology with VR presents a powerful future direction.

- EEG during EF Tasks: Electroencephalography (EEG) can capture neural correlates of cognition in real-time. Specific EEG frequency bands, such as theta rhythm, have been identified as potential biomarkers for enhancing and monitoring EF [10].

- BCI-VR for Rehabilitation: Combined BCI-VR systems are being explored for EF training. These systems can use real-time neural data to provide more effective feedback and promote brain function recovery, creating a closed-loop assessment and rehabilitation tool [10].

Research Reagent Solutions: Essential Materials for VR EF Research

| Item Name | Function/Explanation |

|---|---|

| Head-Mounted Display (HMD) | Provides immersive visual and auditory experience, creating a sense of presence in the virtual environment crucial for ecological validity [9]. |

| EEG Amplifier & Cap | Captures electrophysiological brain activity (EEG signals) during task performance, allowing for the identification of neural biomarkers associated with EF [10]. |

| Virtual MET (Multiple Errands Test) | A VR adaptation of a classic real-world assessment. It requires participants to run errands in a virtual town, providing a controlled yet ecologically valid measure of planning and problem-solving [9]. |

| Cybersickness Questionnaire | A standardized self-report tool (e.g., SSQ) critical for monitoring adverse effects like dizziness that can confound cognitive performance data [9]. |

| Cognitive Classification Algorithms | Machine learning models (e.g., Convolutional Neural Networks) that analyze complex data (like EEG signals) to classify cognitive states or identify signs of neurological disorders [10]. |

Methodological Workflow for Advanced EF Assessment

The following diagram illustrates the integrated workflow for developing and validating an ecologically valid EF assessment system.

Technical Support Center: FAQs & Troubleshooting Guides

This technical support center is designed for researchers conducting studies on the ecological validity of virtual reality (VR) executive function tests. The guides below address common methodological and technical challenges.

Frequently Asked Questions (FAQs)

1. How can we improve the ecological validity of our VR-based executive function assessments? Ecological validity comprises both representativeness (how well the test mirrors real-world demands) and generalizability (how well test performance predicts daily functioning) [11]. To enhance it, move beyond abstract tasks and use VR to simulate daily life tasks. For example, implement paradigms such as the virtual Multiple Errands Test (MET), which requires participants to run errands in a simulated environment and thereby incorporates complex, real-world cognitive demands [11]. In addition, embed the cognitive processes being assessed (e.g., planning, problem-solving) within meaningful activities, and correlate your VR task outcomes with standard measures of daily living [12].

2. What are the core executive function subcomponents we should be isolating in our VR tasks? Based on established neuropsychological models, the three core, separable executive subcomponents are Inhibition, Shifting (also called task-switching or cognitive flexibility), and Updating (of working memory) [13]. Your VR tasks should be designed to isolate and measure these specific components. For instance, a task might require participants to suppress a prepotent response (inhibition), flexibly switch between different task rules (shifting), or continuously monitor and update information in working memory (updating) [13].

3. We are concerned about cybersickness affecting our data. How should we monitor and report this? Cybersickness is a significant threat to data validity because its severity can be negatively associated with cognitive task performance (e.g., slower reaction times, reduced accuracy) [11]. Assess it proactively with standardized tools: administer the Pediatric Simulator Sickness Questionnaire (Peds-SSQ) for younger populations, or a comparable simulator sickness questionnaire for adults, immediately after the VR session [12]. Report cybersickness metrics systematically so that readers can interpret the performance data.

4. How do we validate a novel VR executive function test against traditional methods? A standard validation strategy involves administering your novel VR task alongside a battery of well-established, traditional neuropsychological tests that measure similar constructs (e.g., Stroop Test for inhibition, Trail Making Test for shifting, Digit Span for working memory) [12] [11]. You should conduct correlation analyses between performance scores on the VR tasks and the traditional measures. Reporting both convergent validity (correlation with similar constructs) and discriminant validity (lack of correlation with unrelated constructs) is essential for establishing the new tool's psychometric properties [11].

5. What technical specifications are required for a lab setting up VR-based cognitive assessment? A functional VR assessment lab requires specific hardware and software. The following table details the essential technical components based on commercially available systems [14].

Table 1: Essential Research Reagent Solutions for VR Executive Function Testing

| Item | Function / Purpose | Examples / Specifications |

|---|---|---|

| VR Head-Mounted Display (HMD) | Provides the immersive visual and auditory experience; critical for creating a sense of presence. | Meta Quest 2, 3, 3S, or Pro (64GB+ capacity) [14]. |

| Computer/Tablet | Runs the assessment platform's administrative and data management interface. | Windows 10 (64-bit) or later; macOS High Sierra 10.13.6 or later; Android 8.0+ tablet; iPad with iOS 15.1+ [14]. |

| VR Test Software | The validated neuropsychological assessment tool that presents the cognitive tasks. | Nesplora Suite, SmartAction-VR, or other specialized VR neuropsychological batteries [14] [12]. |

| Wired, Over-Ear Headphones | Deliver high-quality, synchronized audio and isolate the participant from external noise. | Must connect via cable to the VR device; Bluetooth headphones are not recommended due to audio latency [14]. |

| Stable Wi-Fi Network | Essential for software updates, data synchronization, and running web-based platforms. | Minimum speed of 50 Mbps; recommended speed over 100 Mbps [14]. |

Troubleshooting Guides

Issue 1: Discrepancy Between VR Test Performance and Informant-Reported Daily Functioning

- Problem: A research participant performs poorly on a VR test of executive functioning, but their informant (e.g., family member) reports no significant difficulties in daily activities.

- Background: Different assessment methods (performance-based vs. informant-report) can capture different aspects of functioning. Neuropsychological measures of executive functioning may explain significant variance in performance-based and informant-report measures, but the relationships can be complex [13]. Furthermore, "old-old" adults (75+) may show clear difficulties on performance-based measures without self- or informant-reported problems [13].

- Solution:

- Use Multi-Method Assessment: Do not rely on a single data source. Incorporate performance-based measures (like VR), self-reports, and informant-reports to build a comprehensive picture [13].

- Analyze Subcomponents: Examine which specific executive subcomponent (e.g., shifting, updating) is impaired in your VR task. Research shows that, for example, updating may be more predictive of performance-based measures, while shifting (task-switching) is important for both questionnaire and performance-based measures [13]. This granularity can help explain the discrepancy.

- Review Task Design: Ensure your VR task has high ecological validity and demands the same cognitive skills required for the real-world activities the informant is reporting on.

Issue 2: Low Participant Engagement or High Attrition in Longitudinal VR Studies

- Problem: Participants find repetitive traditional cognitive tasks boring, leading to poor engagement and potential drop-out in studies requiring multiple testing sessions.

- Background: VR's immersive nature has the potential to enhance participant engagement more effectively than traditional pencil-and-paper or computerized tasks, potentially improving task performance and data reliability [11].

- Solution:

- Implement Gamification: Design VR tasks as "serious games" with embedded game-like elements (e.g., scoring, levels, narrative context). This has been shown to be more engaging than nongamified tasks [11].

- Ensure Immersion: Use high-quality, immersive HMDs to create a strong sense of presence, which captures attention and can lead to more reliable performance metrics [11].

- Minimize Cybersickness: Actively work to reduce cybersickness through smooth graphics, stable frame rates, and comfortable navigation, as its symptoms can deter participants from repeat sessions [11].

Issue 3: Suspected Invalid Test Scores Due to Poor Effort or Malingering

- Problem: A participant's test performance is unexpectedly poor, and you suspect suboptimal effort or symptom exaggeration.

- Background: Computerized neurocognitive test batteries, including VR systems, often include embedded validity indicators to help identify invalid profiles [15].

- Solution:

- Activate Embedded Validity Indicators: Ensure your assessment platform's validity checks are enabled. These often cross-check performance across tests for irregularities in effort [15].

- Review Specific Validity Constructs: Check the data against predefined criteria. For example, a test battery might flag a profile as invalid if the sum of correct hits and passes on memory tests falls below a specific threshold, or if reaction time patterns are implausible [15].

- Post-Test Interview: If the validity indicator suggests an issue, conduct a structured interview with the participant about their testing experience (understanding of instructions, effort, sleep, etc.) [15].
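
The kind of rule described under "Review Specific Validity Constructs" can be sketched as a simple flagging function. All thresholds below are placeholders chosen for illustration; they are not the criteria of any actual test battery and would need to be replaced by the values documented for the platform in use.

```python
def validity_flags(correct_hits, correct_passes, reaction_times_ms,
                   min_hits_plus_passes=30,   # placeholder threshold
                   min_plausible_rt_ms=150,   # placeholder threshold
                   max_fast_share=0.10):      # placeholder threshold
    """Return a list of reasons a profile might be invalid (empty if none)."""
    flags = []
    # Rule 1: sum of correct hits and correct passes on memory tests.
    if correct_hits + correct_passes < min_hits_plus_passes:
        flags.append("memory_sum_below_threshold")
    # Rule 2: too many responses faster than a plausible reaction time.
    if reaction_times_ms:
        fast = sum(1 for rt in reaction_times_ms if rt < min_plausible_rt_ms)
        if fast / len(reaction_times_ms) > max_fast_share:
            flags.append("implausibly_fast_responses")
    return flags

# Hypothetical profiles.
print(validity_flags(20, 5, [400, 450, 500]))
print(validity_flags(25, 10, [90, 100, 400, 420]))
print(validity_flags(25, 10, [400, 420]))
```

A flag should prompt the post-test interview described above rather than automatic exclusion, since fatigue or misunderstood instructions can produce the same pattern.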

Experimental Protocols for Key Cited Experiments

Protocol 1: SmartAction-VR for Assessing Executive Functioning in ADHD

Objective: To explore the efficacy of a VR task based on the multi-errand paradigm in providing insights into the executive functioning of children and adolescents with ADHD in their everyday activities [12].

Table 2: SmartAction-VR Experimental Protocol Summary

| Protocol Component | Description |

|---|---|

| Study Design | Cross-sectional study [12]. |

| Participants | 76 children and adolescents (Age: 9-17 years; 40 with ADHD, 36 neurotypical) [12]. |

| Inclusion Criteria | Clinical diagnosis of ADHD (ICD-10 F90.0) for the ADHD group; age 9-17 for all [12]. |

| Exclusion Criteria | Neurological disorders (e.g., epilepsy, cerebral palsy), severe mental illness, moderate-severe autism spectrum disorder, IQ < 80 [12]. |

| Instruments & Measures | Guardian-Report: Waisman ADL Scale (W-ADL), EPYFEI questionnaire. Self-Report: Pediatric Simulator Sickness Questionnaire (Peds-SSQ). Cognitive Tests: Digit Span, Stroop Test, NEPSY-II Subtest, Trail Making Test, Zoo Map Test. VR Task: SmartAction-VR [12]. |

| Procedure | 1. Session is divided into two parts: traditional cognitive tests and the SmartAction-VR task. 2. Administer traditional cognitive tests and questionnaires. 3. Administer the SmartAction-VR task in which participants perform simulated daily life errands. 4. Administer the Peds-SSQ after the VR session [12]. |

| Primary Outcomes | Accuracy, total errors, commissions, new actions, forgetting actions, and perseverations within the SmartAction-VR environment [12]. |

Protocol 2: Validating a Novel VR EF Task Against Traditional Measures

Objective: To establish the ecological and construct validity of a novel immersive VR executive function task for use in an adult population [11].

Table 3: VR Task Validation Protocol Summary

| Protocol Component | Description |

|---|---|

| Study Design | Cross-sectional validation study [11]. |

| Participants | Adult population (specific sample size depends on power analysis); including both clinical and healthy control groups is recommended [11]. |

| Traditional "Gold-Standard" Measures | Inhibition: Stroop Test, DKEFS Color-Word Interference Test. Shifting/Cognitive Flexibility: Trail Making Test (TMT) Part B, Shifting Attention Test (SAT). Updating/Working Memory: Digit Span, n-back tasks [13] [15] [11]. |

| Functional Outcome Measures | Performance-Based: Multiple Errands Test (MET) or its virtual equivalent. Informant-Report: Questionnaires on Instrumental Activities of Daily Living (IADLs) [13]. |

| Procedure | 1. Administer the battery of traditional neuropsychological EF tests. 2. In a separate session (or counterbalanced order), administer the novel VR EF task. 3. Administer a cybersickness questionnaire immediately after the VR task. 4. Collect functional outcome measures (performance-based and/or informant-report) [11]. |

| Validation Analysis | Convergent Validity: Calculate correlations between scores on the VR task and traditional tests measuring the same EF subcomponent. Ecological Validity: Calculate correlations between VR task performance and functional outcome measures [11]. |
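
The validation analysis in Table 3 reduces to computing correlations between the VR task and two kinds of criterion. The sketch below uses Spearman's rank correlation, which is a choice made here for illustration (it tolerates skewed or ordinal scores); the source specifies only "correlations". All data and variable names are hypothetical.

```python
from math import sqrt

def ranks(values):
    """Average ranks (1-based); tied values share their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = avg
        i = j + 1
    return result

def spearman_rho(x, y):
    """Spearman correlation: Pearson correlation of the rank vectors."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    rx, ry = ranks(x), ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sxx = sum((a - mx) ** 2 for a in rx)
    syy = sum((b - my) ** 2 for b in ry)
    if sxx == 0 or syy == 0:
        raise ValueError("a constant sequence has no defined correlation")
    return sxy / sqrt(sxx * syy)

# Hypothetical data; higher values mean worse performance on all three.
vr_errors = [2, 5, 3, 8, 1, 6, 4, 7]
tmt_b_seconds = [55, 78, 70, 110, 50, 85, 62, 95]  # traditional shifting test
iadl_difficulty = [1, 3, 2, 5, 1, 4, 2, 4]          # informant-rated IADLs

convergent = spearman_rho(vr_errors, tmt_b_seconds)
ecological = spearman_rho(vr_errors, iadl_difficulty)
print(f"convergent rho = {convergent:.2f}, ecological rho = {ecological:.2f}")
```

When several VR metrics are correlated with several criteria, correction for multiple comparisons should be planned in advance.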

Experimental Workflow and Logical Relationships

The following diagram illustrates the key stages and decision points in a robust research workflow for developing and validating a VR-based executive function test.

Technical Support Center: FAQs & Troubleshooting

Frequently Asked Questions (FAQs)

Q1: What is the relationship between cognitive load, presence, and learning outcomes in IVR? Research shows that Immersive Virtual Reality (IVR) groups often demonstrate higher levels of cognitive load but can experience lower learning outcomes and self-efficacy scores compared to control groups using only practical training. Interestingly, higher self-reported presence does not automatically result in increased cognitive load. The key is ensuring cognitive and haptic feedback are congruent to foster learning. Directly pairing IVR with hands-on training may induce mental demand and frustration, so the instructional sequence requires careful planning [16].

Q2: How can we improve the ecological validity of VR-based cognitive assessments? Ecological validity is enhanced by using VR to create complex, context-rich scenarios that mirror real-life situations. The literature indicates that VR test measures resembling real-life activities have good ecological validity. Key strategies include [5] [17] [4]:

- Developing scenarios that simulate everyday challenges (e.g., preparing a meal or running a virtual shop).

- Ensuring tasks require adaptive and context-sensitive decision-making.

- Moving beyond assessing isolated cognitive functions to measuring integrated cognitive processes as they are used in daily life.

Q3: Is sense of presence a direct result of technological immersion? No. While technological immersion is a factor, presence is primarily a psychological phenomenon. It is shaped by [18]:

- Content and Narrative: A compelling storyline and clear goals strongly affect the sensation of "being there."

- User Characteristics: Individual traits like age, personality, and previous knowledge influence the experience.

- Intentional Structures: The user's goals and expectations in the virtual environment.

A sophisticated VR system does not guarantee a strong sense of presence; these psychological and social factors are often more critical.

Q4: What are the common technical issues when deploying VR in research settings and their solutions? The table below summarizes common issues and their fixes, crucial for maintaining experimental integrity.

Table: Common VR Technical Issues and Troubleshooting Guide

| Issue Category | Specific Problem | Recommended Solution |

|---|---|---|

| Display | Blurry or unfocused display | Adjust the lenses laterally; clean them with a microfiber cloth [19]. |

| Tracking | Controllers not tracking | Replace batteries; re-pair controllers via the device application [19]. |

| Tracking | Tracking lost warning | Ensure a well-lit area (without direct sunlight); avoid reflective surfaces [19]. |

| Connectivity | Headset won't update | Check Wi-Fi stability; reboot headset; clear storage space if full [19]. |

| Connectivity | Firewall/Network blocks | Whitelist specific hostnames/ports on your firewall for the VR platform [20]. |

| Software | App crashes or freezes | Restart the app; reboot the headset; reinstall the app as a last resort [19]. |

| Device Management | Multi-site management | Use the platform's central portal (e.g., ClassVR Portal) for centralized oversight across locations [20]. |

Q5: What key factors should we consider when designing a multi-day VR training study? A multi-day field study highlighted several critical considerations [16]:

- Cognitive Load Monitoring: Cognitive load provides valuable insights into the instructional framework and should be measured throughout the training, not just as a final outcome.

- Self-Efficacy and Cybersickness: Correlations exist between cognitive load, self-efficacy, and cybersickness. These variables should be tracked as they can interact and influence the learning process.

- Timing of IVR Implementation: The study found that the moment of implementing IVR (before or after hands-on training) did not affect outcomes, but the IVR groups consistently showed different results than the practical-only group.

Experimental Protocols & Methodologies

Protocol 1: Validating a Virtual Reality Action Test (VRAT)

This protocol outlines the methodology for validating a virtual version of a naturalistic action test, assessing cognitive abilities in an ecologically valid context [17].

- Objective: To contribute to the validation of a virtual version of the Naturalistic Action Test (NAT) and to evaluate the role of sense of presence as a moderator between cognitive abilities and task performance.

- Design: Cross-over research design.

- Participants: Healthy adults tested in both virtual and real conditions.

- Procedure:

- Informed Consent: Obtained from all participants, specifying risks related to VR and motion sickness.

- Training Session: A 5-minute training session with the VR system and real objects is conducted before each condition.

- Virtual Task: Participants perform a task (e.g., preparing breakfast) in the immersive virtual environment using a Head-Mounted Display (HMD). Interaction is achieved by pressing a trigger button when a virtual hand reaches an object.

- Real Task: The same task is performed with physical objects in a real-world setting.

- Cognitive Assessment: A battery of traditional cognitive tests is administered to assess cognitive functions.

- Measures:

- Performance scores from the VRAT and the real-world task.

- Scores on cognitive tests (e.g., memory, processing speed).

- Self-reported measures of the sense of presence.

- Analysis: Statistical correlations between performances in virtual/real tasks and cognitive tests. Structural equation modeling (SEM) is used to test if the sense of presence moderates the relationship between cognitive abilities and virtual task performance.
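As a first look before fitting the SEM described above, the moderation question can be explored with a simple subgroup comparison. The sketch below is a crude stand-in and not the protocol's SEM analysis: it splits participants at the median presence score and correlates cognitive scores with VR task scores in each half. The function names and all data values are hypothetical.

```python
from math import sqrt
from statistics import mean, median

def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def correlation_by_presence(cognition, vr_score, presence):
    """Correlate cognition with VR score in low- and high-presence subgroups."""
    cut = median(presence)
    low = [(c, v) for c, v, p in zip(cognition, vr_score, presence) if p <= cut]
    high = [(c, v) for c, v, p in zip(cognition, vr_score, presence) if p > cut]
    return pearson_r(*zip(*low)), pearson_r(*zip(*high))

# Hypothetical scores for eight participants (illustration only).
cognition = [10, 12, 14, 16, 18, 20, 22, 24]
vr_score = [5, 7, 6, 8, 10, 12, 14, 16]
presence = [1, 2, 3, 4, 5, 6, 7, 8]

r_low, r_high = correlation_by_presence(cognition, vr_score, presence)
print(f"r (low presence) = {r_low:.2f}, r (high presence) = {r_high:.2f}")
```

A clearly stronger correlation in one subgroup is only a hint of moderation. A median split discards information, so the formal test should still use an interaction term or the SEM named in the protocol.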

Protocol 2: Establishing Normative Data for a VR Executive Function Test

This protocol describes the methodology for a normative study of the Nesplora Ice Cream test, a VR-based assessment for executive functions in adults [4].

- Objective: To establish normative data for a VR tool assessing executive functions (planning, learning, and flexibility) in a healthy adult population.

- Participants:

- Sample Size: 419 participants (51% female).

- Age Range: 17 to 80 years.

- Recruitment: Across multiple testing sites.

- Inclusion Criteria: Spanish proficiency, no diagnosed neurological, psychiatric, or neurodevelopmental conditions, and no sensory alterations limiting VR use.

- Materials: The Nesplora Ice Cream test, a VR tool administered by trained evaluators.

- Procedure:

- Recruitment & Consent: Participants are recruited and provide informed consent (parental consent for 17-year-olds).

- Test Administration: Trained evaluators administer the Nesplora Ice Cream test in a controlled setting.

- Data Analysis:

- Cluster Analysis: Used to define age groups for different executive function factors.

- Factor Analysis: Confirmatory factor analysis to validate the test's theoretical structure (planning, learning, flexibility).

- Normative Data Generation: Descriptive normative data are provided based on age and gender, including measures of validity, reliability, and internal consistency.
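To illustrate how descriptive norms of this kind are typically applied, the sketch below converts a raw score into a z-score and an approximate percentile within an age band. It is a minimal example under stated assumptions: the age bands, the scores, and the function names are hypothetical, and the percentile assumes roughly normal scores within each band, which the source does not state.

```python
from statistics import NormalDist, mean, stdev

def build_norms(scores_by_group):
    """Return {group: (mean, sd)} from raw scores of a normative sample."""
    return {group: (mean(s), stdev(s)) for group, s in scores_by_group.items()}

def standardize(raw_score, group, norms):
    """Return (z, percentile) of a raw score relative to its normative group."""
    m, sd = norms[group]
    z = (raw_score - m) / sd
    # Percentile under a normal approximation (an assumption of this sketch).
    percentile = NormalDist().cdf(z) * 100
    return z, percentile

# Hypothetical planning scores per age band (not the published norms).
norms = build_norms({
    "17-39": [40, 50, 60],
    "40-80": [30, 40, 50],
})

z, pct = standardize(60, "17-39", norms)
print(f"z = {z:.2f}, percentile = {pct:.1f}")
```

In a real normative study the bands would come from the cluster analysis described above, and empirical percentiles may be preferable when score distributions are skewed.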

Research Reagent Solutions: Essential Materials for VR Cognitive Research

Table: Essential Materials for VR EF Research

| Item Name | Category | Function & Application in Research |

|---|---|---|

| Head-Mounted Display (HMD) | Hardware | Provides the immersive visual and auditory experience. The core device for delivering the virtual environment to the participant (e.g., Meta Quest, HTC VIVE, ClassVR headsets) [17] [20]. |

| VR Controllers / Hand-Tracking | Hardware | Enables participants to interact with the virtual environment, essential for assessing goal-directed behavior and motor execution in tasks like the VRAT [17]. |

| VR Cognitive Assessment Software | Software | Pre-validated tests (e.g., Nesplora Ice Cream Test, VRAT) used to measure specific cognitive domains like executive functions, planning, and learning in an ecologically valid context [17] [4]. |

| ClassVR Administration Portal | Software/Management | A centralized platform for managing VR headsets, deploying content, caching playlists, and monitoring device status across a research lab or multiple sites [20]. |

| Presence Questionnaire | Psychometric Tool | A standardized self-report measure to quantify the participant's subjective sense of "being there" in the virtual environment, a critical moderator variable [17] [18]. |

| Cognitive Load Scale | Psychometric Tool | A rating scale used to measure the mental demand imposed on a participant by the VR task, helping to optimize instructional design [16]. |

| Mobile Device Management (MDM) | Software/Management | Software like ArborXR or ManageXR to efficiently deploy, manage, and secure VR training content and applications across a fleet of headsets in an enterprise/research setting [21]. |

Table: Key Quantitative Findings from VR Cognitive Load and Presence Studies

| Study Focus | Key Metric | Finding | Context |

|---|---|---|---|

| IVR & Cognitive Load [16] | Learning Outcomes | Lower in IVR groups vs. CTRL (practical-only) | In a multi-day molecular biology skills training. |

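| IVR & Cognitive Load [16] | Self-Efficacy | Lower in IVR groups vs. CTRL | In a multi-day molecular biology skills training. |

| IVR & Cognitive Load [16] | Cognitive Load | Higher in IVR groups vs. CTRL | In a multi-day molecular biology skills training. |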

| Nesplora Ice Cream Test [4] | Normative Sample Size | N = 419 | Participants aged 17-80 for normative data. |

| Nesplora Ice Cream Test [4] | Executive Function Factors | 3 factors extracted: Planning, Learning, Flexibility | Supported by confirmatory factor analysis. |

| Nesplora Ice Cream Test [4] | Gender Differences | No significant effects found | In the adult normative sample. |

| VR Classroom Experiment [22] | Presence | Significantly higher in VR group vs. iPad group | In a comparative classroom experiment. |

| VR Classroom Experiment [22] | Demographic Effects | No detectable effects of age and gender on presence | Participant's previous VR experience was a significant factor. |

Conceptual Workflow Diagrams

Diagram 1: Theoretical model linking VR immersion to ecological validity.

Diagram 2: Workflow for a typical VR cognitive validation study.

Building Better Assessments: Methodological Frameworks for VR-Based Executive Function Tests

Executive functioning (EF) is critical for daily activities, and its impairment is a transdiagnostic factor in numerous mental disorders [23]. Traditional neuropsychological assessments, while robust, are frequently criticized for their lack of ecological validity—the functional and predictive relationship between test performance and real-world behavior [23]. This limitation arises because traditional tests often isolate single cognitive processes in abstract, controlled environments that fail to capture the dynamic, multi-faceted nature of daily cognitive challenges [23] [24].

Virtual Reality (VR) offers a transformative solution by enabling the creation of immersive, customizable environments that closely mimic real-world scenarios. This enhances verisimilitude (the degree to which a test mirrors real-life demands) and provides researchers with unparalleled experimental control [23] [25]. Paradigms like the Virtual Multiple Errands Test (VMET) exemplify this approach, translating a real-world task (running errands in a shopping mall) into a VR format that is both logistically feasible and standardized [23] [26]. This technical support center provides guidance on implementing these ecologically valid VR paradigms effectively.

Core Concepts & Theoretical Foundation

Defining Ecological Validity in the VR Context

In clinical neuropsychology, ecological validity comprises two principal components [23]:

- Representativeness (Verisimilitude): The degree to which a neuropsychological test mirrors the cognitive demands of daily living activities.

- Generalizability (Veridicality): The extent to which test performance predicts an individual's functioning in their daily environment.

The VR Advantage: Beyond Traditional Testing

VR paradigms address key limitations of traditional methods [23] [24]:

- Enhanced Engagement: Immersive environments capture increased attention, potentially leading to more reliable and accurate performance measurements [23].

- Multimodal Data Capture: VR enables the integration of kinematic data (e.g., movement trajectories, response times) with cognitive performance metrics, offering a richer understanding of cognitive-motor interactions [26].

- Standardized Control: Unlike real-world tasks, VR allows for the precise control of environmental variables, ensuring that all participants experience identical conditions [24].

The following workflow outlines the key stages for researchers to transition from a theoretical concept to a validated VR-based assessment:

The Researcher's Toolkit: Essential Components for a VR Lab

Setting up a VR lab for ecologically valid research requires careful consideration of hardware, software, and physical space.

Hardware & Space Configuration

Key considerations for your physical setup include [25]:

- Visual Displays: Choose between head-mounted displays (HMDs) for individual immersion or projection-based systems (e.g., CAVEs) for group viewing and shared experiences.

- Motion Tracking: Systems must capture the participant's movements with high precision. Camera-based systems require a clear line-of-sight and freedom from infrared light interference.

- Input Devices: Standard VR controllers, haptic gloves, or biofeedback sensors can be used depending on the research question.

- Rendering Computers: High-performance computers are necessary for seamless, low-latency visual rendering to minimize cybersickness.

- Physical Space: The area must be safe, open, and free of obstacles. Consider cable management systems, lighting control, and the potential need for a separate room for the experimenter and data acquisition equipment.

Software for VR Environment Generation

Selecting the right software is critical for efficient development [25]:

- Vizard (WorldViz): A comprehensive VR software tool specifically designed for researchers, often including experiment generation plugins (e.g., SightLab VR Pro) that enable the creation of studies with little to no coding.

- Unity & Unreal Engine: Powerful, general-purpose game engines with extensive asset stores and community support, suitable for building highly customized VR environments.

Troubleshooting Common Technical and Methodological Issues

Q1: My participants are experiencing cybersickness (dizziness, nausea), which threatens data validity. What can I do? A: Cybersickness is a common challenge. Mitigation strategies include [23]:

- Technical Optimization: Ensure a high and stable frame rate (e.g., 90Hz) and minimize rendering latency. A well-configured rendering computer is essential.

- Session Management: Keep initial exposure sessions short and allow for adequate breaks. Gradually increase exposure time as participants acclimatize.

- Environmental Design: Avoid artificial camera movements or accelerations that are not generated by the participant's own head movements. Provide a stable visual reference point in the peripheral visual field if possible.

- Monitoring: Use standardized tools like the Pediatric Simulator Sickness Questionnaire (Peds-SSQ) or its adult equivalents to quantitatively monitor symptoms [27].
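The frame-rate recommendation above can be checked from logged frame timestamps. The sketch below assumes your VR runtime or engine can export one timestamp per rendered frame (in seconds). The function name, the 1.5x tolerance, and the sample data are illustrative choices, not values from the cited sources.

```python
def frame_stability(timestamps_s, target_hz=90.0, tolerance=1.5):
    """Summarize frame pacing from per-frame timestamps given in seconds."""
    budget = 1.0 / target_hz  # time available per frame at the target rate
    deltas = [b - a for a, b in zip(timestamps_s, timestamps_s[1:])]
    # Count frames that took noticeably longer than the per-frame budget.
    slow = sum(1 for d in deltas if d > tolerance * budget)
    mean_fps = len(deltas) / (timestamps_s[-1] - timestamps_s[0])
    return {"mean_fps": mean_fps, "slow_frame_fraction": slow / len(deltas)}

# Hypothetical log: nine frames on schedule, then one frame three times too long.
timestamps = [i / 90 for i in range(10)]
timestamps.append(timestamps[-1] + 3 / 90)

report = frame_stability(timestamps)
print(report)
```

Logging this summary per session makes it possible to exclude or flag sessions where rendering problems, rather than the task itself, may have contributed to symptoms.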

Q2: The VR task does not correlate well with traditional paper-and-pencil measures of executive function. Is this a failure? A: Not necessarily. VR tasks aim to capture more complex, real-world behaviors that traditional tests may not adequately reflect. The validation strategy should be multi-faceted [23] [24]:

- Focus on Ecological Validity: Correlate VR task performance with measures of daily functioning or caregiver reports (e.g., the Waisman Activities of Daily Living Scale - W-ADL) [27]. This is a stronger indicator of success for a verisimilitude-focused paradigm.

- Use a Multimodal Approach: Validate against other ecologically relevant measures or real-world performance, not just traditional tests.

- Ensure Construct Validity: Clearly define the EF constructs (inhibition, cognitive flexibility, working memory, planning) your VR task is designed to assess and ensure the task mechanics align with these constructs [23].

Q3: Participant movement is causing tracking loss or occlusion. How can I improve tracking reliability? A: Tracking issues can disrupt immersion and data integrity [19] [25]:

- Environmental Calibration: Ensure the play area is well-lit (for systems that use visible light) but devoid of direct sunlight. Cover or remove reflective surfaces like mirrors and glass.

- Sensor Placement: For camera-based systems, position multiple cameras to maximize coverage of the play area and minimize occlusions. Ensure they have a clear line-of-sight to the participant and controllers at all times.

- Recalibration: Reboot the system and recalibrate the tracking sensors and guardian boundary system. Set up a new play area boundary if the problem persists [19].

Q4: How can I ensure my VR paradigm is psychometrically sound? A: Systematically evaluate your paradigm using an extended framework like VR-Check, which goes beyond traditional validity and reliability [24]. The table below summarizes a quantitative comparison of key psychometric properties from a validation study of VR-based Trail Making Tests:

Table: Psychometric Properties of VR Color Trails Test (VR-CTT) Adaptations [26]

| Evaluation Dimension | DOME-CTT (Large-Scale VR) | HMD-CTT (Head-Mounted Display) | Original Pencil-and-Paper CTT |

|---|---|---|---|

| Construct Validity (Correlation with original CTT) | Trails A: 0.58; Trails B: 0.71 | Trails A: 0.62; Trails B: 0.69 | Gold Standard |

| Test-Retest Reliability (Intraclass Correlation) | Trails A: 0.60-0.75; Trails B: 0.59-0.89 | Trails A: 0.60-0.75; Trails B: 0.59-0.89 | Trails A: 0.75-0.85; Trails B: 0.77-0.80 |

| Discriminant Validity (Area Under Curve, age groups) | Trails A: 0.70-0.92; Trails B: 0.71-0.92 | Trails A: 0.70-0.92; Trails B: 0.71-0.92 | Trails A: 0.73-0.95; Trails B: 0.77-0.95 |
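For readers who want to compute a test-retest intraclass correlation on their own data, the sketch below implements one common form, ICC(2,1) (two-way random effects, absolute agreement, single measurement). The source does not state which ICC form the validation study used, so this choice is an assumption, and the completion times are hypothetical.

```python
def icc_2_1(ratings):
    """ICC(2,1) for a table with one row per participant, one column per session."""
    n, k = len(ratings), len(ratings[0])
    grand = sum(sum(row) for row in ratings) / (n * k)
    row_means = [sum(row) / k for row in ratings]
    col_means = [sum(row[j] for row in ratings) / n for j in range(k)]

    # Two-way ANOVA sums of squares: participants, sessions, residual.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in ratings for x in row)
    ss_err = ss_total - ss_rows - ss_cols

    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_err / ((n - 1) * (k - 1))
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)

# Hypothetical completion times (seconds) for five participants, two sessions.
sessions = [
    [42.0, 45.0],
    [55.0, 53.0],
    [61.0, 66.0],
    [38.0, 40.0],
    [70.0, 68.0],
]
print(f"ICC(2,1) = {icc_2_1(sessions):.2f}")
```

Because ICC(2,1) penalizes systematic shifts between sessions (for example, practice effects), it tends to be more conservative than consistency-based forms.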

Experimental Protocols & Validation Methods

Implementing a Virtual Multi-Errand Paradigm

The Multi-Errand Test (MET) is a classic measure of executive function in daily life. Its virtual adaptation (VMET) involves participants completing a list of errands (e.g., buying specific items, obtaining information) in a simulated environment like a virtual town or mall, while adhering to specific rules (e.g., cannot enter the same shop twice consecutively). Performance is quantified by metrics such as [23] [27]:

- Accuracy: Number of tasks successfully completed.

- Total Errors: Sum of all errors made.

- Commission Errors: Performing actions that were not required.

- Omission Errors: Forgetting to perform required actions.

- Rule Breaks: Number of times predefined rules are violated.

- Task Completion Time: Total time taken to complete the errands.
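The metrics listed above can be derived from a timestamped event log. The sketch below is one possible scoring routine, not the scoring scheme of any published VMET. The log format, the action names, and the decision to count rule breaks toward total errors are all assumptions made for illustration.

```python
def score_errands(log, required_actions):
    """Score a log of (time_s, kind, value) events; kind is 'enter' or 'action'."""
    actions = [value for _, kind, value in log if kind == "action"]
    visits = [value for _, kind, value in log if kind == "enter"]
    performed = set(actions)

    omissions = required_actions - performed
    commissions = [a for a in actions if a not in required_actions]
    # Example rule from the text: the same shop may not be entered twice in a row.
    rule_breaks = sum(1 for a, b in zip(visits, visits[1:]) if a == b)

    return {
        "accuracy": len(required_actions & performed),
        "commission_errors": len(commissions),
        "omission_errors": len(omissions),
        "rule_breaks": rule_breaks,
        # Assumption: total errors include rule breaks.
        "total_errors": len(commissions) + len(omissions) + rule_breaks,
        "completion_time_s": log[-1][0] - log[0][0] if log else 0.0,
    }

# Hypothetical session log (illustration only).
log = [
    (0.0, "enter", "bakery"),
    (12.5, "action", "buy_bread"),
    (30.0, "enter", "bakery"),
    (41.0, "action", "buy_candy"),
    (65.0, "enter", "post_office"),
    (80.0, "action", "ask_opening_hours"),
]
required = {"buy_bread", "ask_opening_hours", "buy_stamps"}

scores = score_errands(log, required)
print(scores)
```

Keeping the raw log and computing scores afterwards lets the scoring rules be revised without re-running participants.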

Validating with a Clinical Population: The SmartAction-VR Example

A recent study validated a VR-based task (SmartAction-VR) for assessing EF in children and adolescents with ADHD. The protocol and key findings are summarized below [27]:

Table: Key Findings from SmartAction-VR Validation Study (ADHD vs. Neurotypical Group) [27]

| Performance Metric | Result (ADHD vs. Neurotypical) | Statistical Significance |

|---|---|---|

| Accuracy | Lower in ADHD group | U = 406, p = 0.010 |

| Total Errors | Higher in ADHD group | U = 292, p = 0.001 |

| Commission Errors | More in ADHD group | U = 417, p = 0.003 |

| Forgetting Actions (Omissions) | More in ADHD group | U = 406, p = 0.010 |

| Correlation with Daily Independence | More forgotten actions linked to lower independence in daily life | r = -0.281, p = 0.024 |
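The U values in the table come from Mann-Whitney tests, which compare two groups without assuming normality. As a rough sketch of what the statistic measures, the code below counts pairwise comparisons between groups and rescales U to a 0-1 effect size (the proportion of cross-group pairs in which the first group scores higher). The data are hypothetical, and the source does not state which group's U is reported or the exact sample size per test.

```python
def mann_whitney_u(group_a, group_b):
    """U for group_a: pairs where a > b count 1, ties count 0.5."""
    u = 0.0
    for a in group_a:
        for b in group_b:
            if a > b:
                u += 1.0
            elif a == b:
                u += 0.5
    return u

def proportion_of_pairs(u, n_a, n_b):
    """Rescale U to the share of cross-group pairs favoring group_a."""
    return u / (n_a * n_b)

# Hypothetical accuracy scores (illustration only).
adhd = [1, 2, 3]
neurotypical = [2, 3, 4]

u = mann_whitney_u(adhd, neurotypical)
effect = proportion_of_pairs(u, len(adhd), len(neurotypical))
print(u, round(effect, 3))
```

As a purely arithmetic illustration, if a U of 406 were based on all 40 and 36 participants, the rescaled value would be 406 / (40 × 36) ≈ 0.28, meaning the group with the lower ranks scored higher in roughly 28% of cross-group pairs.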

Experimental Protocol Overview [27]:

- Participants: Cross-sectional study with 40 ADHD and 36 neurotypical participants (ages 9-17).

- Instruments:

- SmartAction-VR: The primary VR task simulating daily life activities.

- Traditional Cognitive Tests: Digit Span, Stroop Test, Trail Making Test (TMT), Zoo Map Test.

- Functional Measures: Waisman Activities of Daily Living Scale (W-ADL), completed by caregivers.

- Cybersickness Measure: Pediatric Simulator Sickness Questionnaire (Peds-SSQ).

- Procedure: A single session divided into administration of traditional cognitive tests followed by the SmartAction-VR task.

- Validation: VR performance metrics were correlated with both traditional test scores and caregiver reports of daily functioning, successfully establishing the tool's ecological and construct validity.

The logical relationships and outcomes from this validation study can be visualized as follows:

Essential Research Reagents & Materials

Table: Key "Research Reagent" Solutions for VR Experimental Setup [25] [27] [26]

| Item Category | Specific Examples | Function in Research |

|---|---|---|

| VR Software Platform | Vizard (WorldViz), Unity Engine, Unreal Engine | Core environment for building, rendering, and running the 3D virtual world and task logic. |

| Experiment Plugin | SightLab VR Pro (for Vizard) | Enables rapid generation of standardized VR experiments with minimal coding, often including templates for common tasks. |

| Neuropsychological Tests | SmartAction-VR, Virtual MET, VR-CTT (DOME/HMD) | The specific task paradigm designed to assess executive functions with high ecological validity. |

| Validation Instruments | Waisman-ADL Scale, EPYFEI Questionnaire | Questionnaires and scales used to correlate VR task performance with real-world functional outcomes. |

| Adverse Effects Monitor | Pediatric Simulator Sickness Questionnaire (Peds-SSQ), Cybersickness Surveys | Standardized tools to quantify and monitor symptoms of cybersickness in participants, ensuring data quality and participant safety. |

| Motion Tracking System | Vicon, OptiTrack, HMD-integrated (Inside-Out) Tracking | Captures high-fidelity kinematic data (e.g., hand trajectories, movement speed) which can be analyzed to enrich cognitive performance metrics. |

Key Concepts and Definitions

What is Ecological Validity and Why Does it Matter for My Research?

Ecological Validity refers to the extent to which findings from laboratory experiments can be generalized to real-world situations [28]. In the context of VR executive function research, it assesses whether a participant's performance and responses in a virtual environment accurately reflect what would occur in a real-world setting [29].

This concept is primarily broken down into two approaches:

- Verisimilitude: The similarity between the task demands of the test and the demands imposed in the everyday environment. It asks: "Does the experimental setting resemble the real world?" [30].

- Veridicality: The degree to which test results are empirically related to measures of real-world functioning. It asks: "Do the laboratory results predict real-world functioning?" [30].

For executive function tests, high ecological validity means that a patient's performance on a VR-based test can reliably predict their capabilities in daily activities, making it a crucial consideration when choosing VR technology for research or clinical assessment [31].

Troubleshooting Guides

Tracking and Boundary Issues

Problem: The virtual floor is misaligned or the play area is off-center. This manifests as a virtual floor that appears at knee level or boundary markers that do not correspond to the user's actual physical position [32] [33].

| Solution Step | Description | Applicable System |

|---|---|---|

| Run Room/Boundary Setup | Re-run the system's official room setup or boundary calibration. Ensure your tracing creates a simple box, leaving a buffer zone from real-world objects [33]. | HMD, Room-Scale |

| Clear Environment Data | If the virtual world appears tilted, clear the cached environment data from the system settings to force a fresh calibration [33]. | HMD |

| Delete Previous Configurations | For persistent issues, manually delete previous boundary and chaperone data (e.g., \Steam\config\lighthouse\) after creating a backup. This forces the system to treat the setup as entirely new [32]. | Room-Scale (e.g., HTC Vive) |

| Use Quick Calibrate | Some systems (e.g., SteamVR) offer a "Quick Calibrate" option in the developer settings. Place the HMD on the floor at the center of your play area and run this function [32]. | Room-Scale |

Problem: The system suffers from poor controller or headset tracking. This can cause stuttering, "lost bounds" errors, or frozen displays [33].

| Solution Step | Description | Applicable System |

|---|---|---|

| Ensure Proper Lighting | Tracking cameras need adequate, consistent light. Avoid darkness and direct sunlight, which can cause overexposure [33]. | HMD (Inside-Out Tracking) |

| Check for Reflective Surfaces | Cover mirrors or large glass panels that can confuse the system's cameras by reflecting infrared dots or controllers [33]. | HMD (Inside-Out Tracking) |

| Update GPU Drivers | A stuttering image can indicate a GPU problem. Download and install the latest drivers from NVIDIA or AMD [33]. | HMD, Room-Scale |

| Re-pair Controllers | If controllers are not detected, put them in pairing mode via the system's Bluetooth settings and ensure they have fresh batteries [33]. | HMD |

Hardware and Display Problems

Problem: The display in the headset is blurry or shows a black border. A blurry image often relates to incorrect lens configuration, while black borders ("foveated rendering") indicate insufficient computing power [33].

| Solution Step | Description | Applicable System |

|---|---|---|

| Adjust IPD Manually | Measure your Interpupillary Distance (IPD) and manually enter the value (in mm) in the headset display settings for a clearer image [33]. | HMD |

| Reduce Visual Quality Settings | If you see black borders, lower the detail settings within the specific application or the global VR settings to reduce the rendering load on your GPU [33]. | HMD, Room-Scale |

Problem: The VR experience induces simulator sickness or balance issues. Users may feel dizzy, nauseated, or unsteady, which can confound physiological data collection [34].

| Solution Step | Description | Applicable System |

|---|---|---|

| Ensure High & Stable Frame Rate | Maintain a consistent, high frame rate (e.g., 90 FPS) by lowering graphical settings. Stuttering is a major trigger for sickness [33]. | HMD, Room-Scale |

| Shorten Initial Exposure | For new participants, start with short sessions (5-10 minutes) in stable environments to build tolerance [34]. | HMD, Room-Scale |

| Enable Virtual Nose or Reticle | Adding a fixed visual reference point in the virtual field of view can reduce perceived vection and discomfort. | HMD |

Experimental Protocols for Ecological Validity

Protocol: Validating a VR-Based Continuous Performance Test (CPT)

This protocol is adapted from a feasibility study that developed an HMD-based CPT, "Pay Attention!", to assess attention with high ecological validity [31].

Objective: To establish the validity and normative profile of a VR-based CPT for assessing attention in environments that simulate real-life challenges.

Methodology Details:

- VR Tool: HMD with a custom CPT program [31].

- Scenario Design: Create four distinct, familiar real-life virtual scenarios (e.g., a room, library, outdoors, and café) to enhance verisimilitude [31].

- Difficulty Levels: Implement four distinct difficulty levels within each scenario. Vary the level of distraction, complexity of target/non-target stimuli, and inter-stimulus intervals to avoid ceiling/floor effects and increase task sensitivity [31].

- Deployment & Data Collection:

- Provide participants with a VR device and instruct them to perform 1-2 blocks of the test per day at home.

- Aim for a total of 12 blocks over a two-week period. This multi-session approach accounts for intra-individual variability (IIV) and collects data in a more naturalistic setting [31].

- Measures:

- Primary: Commission Errors (CE), Omission Errors (OE), Reaction Time Variability (RTV) [31].

- Supplementary: Pre- and post-study psychological assessments and electroencephalograms (EEG) to correlate behavioral and physiological data [31].

- Usability: Collect post-study usability measures to ensure participant compliance and feasibility [31].
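The primary measures above can be computed per block from trial-level data and then summarized across the repeated home sessions. The sketch below assumes a hypothetical trial format and defines reaction time variability as the standard deviation of reaction times on correct target responses. The source does not specify the exact RTV definition, so treat that as an assumption.

```python
from statistics import mean, stdev

def cpt_block_metrics(trials):
    """trials: list of (is_target, responded, rt_ms), with rt_ms None if no response."""
    omission = sum(1 for is_t, resp, _ in trials if is_t and not resp)
    commission = sum(1 for is_t, resp, _ in trials if not is_t and resp)
    hit_rts = [rt for is_t, resp, rt in trials if is_t and resp and rt is not None]
    # RTV as the SD of hit reaction times (assumed definition).
    rtv = stdev(hit_rts) if len(hit_rts) > 1 else float("nan")
    return {"omission_errors": omission, "commission_errors": commission, "rtv_ms": rtv}

def across_block_summary(values):
    """Mean and SD of one metric across blocks, as a simple index of IIV."""
    return {"mean": mean(values), "sd": stdev(values)}

# Hypothetical block of five trials (illustration only).
block = [
    (True, True, 400),
    (True, True, 500),
    (True, False, None),
    (False, True, 350),
    (False, False, None),
]
metrics = cpt_block_metrics(block)
print(metrics)

# Hypothetical commission-error counts from three blocks on different days.
iiv = across_block_summary([1, 3, 2])
print(iiv)
```

Summarizing each metric across the 12 planned blocks gives both a typical level and a spread per participant, which is the motivation for the multi-session design.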

Protocol: Comparing Audio-Visual Perception Across VR Setups

This protocol is based on research that directly compared in-situ, room-scale VR, and HMD experiments to assess their ecological validity for audio-visual environment research [29].

Objective: To quantify and compare the ecological validity of room-scale VR and HMDs for perceptual, psychological, and physiological measurements.

Methodology Details:

- Experimental Design: A 2 (Site: Garden, Indoor) x 3 (Condition: In-situ, Room-Scale VR, HMD) within-subjects design [29].

- Procedure: Expose all participants to the same two real-world sites (e.g., a garden and an indoor space). Subsequently, have them experience high-fidelity virtual recreations of these sites in both a room-scale VR system (e.g., a cylindrical immersive room) and an HMD [29].

- Data Collection: Administer questionnaires and collect physiological data in all three conditions.

- Perception & Verisimilitude: Use questionnaires to rate audio quality, video quality, immersion, and realism [29].

- Psychological Restoration: Utilize standardized scales like the Perceived Restorativeness Scale (PRS) or Restorative Outcome Scale (ROS) [29].

- Physiological Measures: Record Heart Rate (HR) and Electroencephalogram (EEG) to compare physiological responses across environments [29].

- Analysis: Statistically compare the data obtained from the two VR setups against the in-situ (real-world) baseline to determine veridicality.
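One simple way to frame the comparison against the in-situ baseline is a paired difference per participant, since every participant experiences all conditions. The sketch below computes the mean difference, a paired t statistic, and a standardized effect size for one measure. The heart-rate values are hypothetical, and the cited study may have used different statistical models.

```python
from math import sqrt
from statistics import mean, stdev

def paired_comparison(in_situ, virtual):
    """Compare a within-subject measure between the real site and a VR setup."""
    diffs = [v - r for r, v in zip(in_situ, virtual)]
    mean_diff = mean(diffs)
    sd_diff = stdev(diffs)
    return {
        "mean_diff": mean_diff,
        "t": mean_diff / (sd_diff / sqrt(len(diffs))),  # df = n - 1
        "d_z": mean_diff / sd_diff,  # standardized paired effect size
    }

# Hypothetical heart rates (bpm) for four participants (illustration only).
hr_in_situ = [70, 72, 68, 75]
hr_hmd = [72, 73, 71, 76]

result = paired_comparison(hr_in_situ, hr_hmd)
print(result)
```

A small, non-significant difference is not proof of equivalence. If the goal is to claim that a VR setup replicates in-situ responses, an equivalence test with a pre-specified margin is a more appropriate follow-up.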

Frequently Asked Questions (FAQs)

Q1: From an ecological validity standpoint, when should I choose an HMD over a Room-Scale system? The choice involves a trade-off between immersion/control and accessibility/versatility. The table below summarizes key considerations to guide your decision.

| Factor | Head-Mounted Display (HMD) | Room-Scale VR (e.g., CAVE) |

|---|---|---|

| Immersion & Presence | Perceived as more immersive [29]. Blocks external visuals completely. | Slightly lower immersion score, but less restrictive for group viewing [29]. |

| Ecological Validity for Perception | Ecologically valid for audio-visual perceptive parameters [29]. | Also ecologically valid for audio-visual perceptive parameters [29]. |

| Psychological & Physiological Validity | May not perfectly replicate in-situ results for psychological restoration; care needed with EEG time-domain features [29]. | May be slightly more accurate than HMDs for psychological restoration and some EEG metrics [29]. |

| Spatial Requirements & Flexibility | Lower. Can be used in smaller, cleared spaces. Ideal for home-based studies [31]. | High. Requires a dedicated, instrumented room with projectors and tracking systems. |

| Participant Mobility & Safety | Enables full 360° turning. Higher risk of simulator sickness and requires careful safety protocols for movement [34]. | Participants see their real environment; lower sickness risk. Often allows for more natural, unencumbered walking. |

| Cost & Accessibility | Lower cost, consumer-grade hardware. Highly suitable for multi-session, home-based testing [31]. | High cost, specialized equipment. Typically confined to a lab setting. |

Q2: How can I mitigate the risk of simulator sickness in HMDs to protect data integrity? Simulator sickness can be a significant confound in research data. To minimize it:

- Technical Setup: Ensure a high, stable frame rate and minimize latency. These are the most critical factors [33].

- Session Design: Begin with short exposures (5-10 minutes) in visually stable environments and gradually increase duration and complexity as participants acclimatize [34].

- Content Design: Incorporate a stationary visual reference (e.g., a virtual cockpit or nose) to reduce vection-induced dizziness.

- Participant Screening: Screen participants for a history of high susceptibility to motion sickness and consider this as a covariate in your analysis.

Q3: My VR experiment lacks ecological validity. What factors should I adjust? Consider optimizing these three experimental factors, which have been shown to significantly impact ecological validity [30]:

- Auralization: Use high-quality spatial audio (e.g., Ambisonics) instead of monaural sound, and calibrate the playback level: in one study, reproducing the sound 8 dB below the real-world level optimized ecological validity [30].

- Visualization: While 3D video can have high verisimilitude, 3D modeling is also a valid approach, especially when paired with high-quality audio [30].

- Human-Computer Interaction (HCI): Implement "virtual walking" if possible. Allowing participants to move naturally in the virtual space, rather than using teleportation, has great potential to significantly enhance ecological validity [30].
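To make the level adjustment under Auralization concrete: a change in decibels maps to a linear amplitude factor of 10^(dB/20). The sketch below applies that conversion to audio samples. It assumes the adjustment is applied as a digital gain on the signal amplitude, which is an assumption of this example and not a detail given in the cited study.

```python
def db_to_gain(level_change_db):
    """Convert a level change in dB to a linear amplitude gain factor."""
    return 10 ** (level_change_db / 20)

def apply_gain(samples, level_change_db):
    """Scale audio samples by the gain corresponding to a dB change."""
    gain = db_to_gain(level_change_db)
    return [s * gain for s in samples]

gain = db_to_gain(-8.0)
print(round(gain, 3))  # 0.398

# Hypothetical normalized samples (illustration only).
quieter = apply_gain([1.0, -0.5, 0.25], -8.0)
print([round(s, 3) for s in quieter])
```

In practice, the playback chain should still be calibrated with a sound level meter at the listening position, since headphone and loudspeaker sensitivity vary between devices.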

The Researcher's Toolkit

| Tool / Reagent | Function in VR Research | Specification / Note |

|---|---|---|

| Standalone HMD (e.g., Oculus Quest) | Portable VR delivery for home-based or lab studies. | Essential for multi-session, ecological studies outside the lab [31]. |

| EEG Headset | Records brain activity to measure cognitive load and restoration. | Check compatibility with HMDs. HMDs may influence EEG time-domain features [29]. |

| HR Monitor | Measures heart rate as a physiological indicator of stress or relaxation. | A common metric to compare real-world and virtual physiological responses [29]. |

| Ambisonic Microphone | Records spatial audio for high-fidelity soundscape reproduction. | Critical for creating ecologically valid auditory environments [30]. |

| VR-CPT Software | Administers Continuous Performance Tests within immersive environments. | Should include multiple real-world scenarios and adjustable difficulty levels [31]. |

Technical Support Center

Troubleshooting Guides

Display and Tracking Issues

Q: The VR display is flickering or shows a black screen, disrupting the cognitive assessment.

- A: This can interfere with task presentation and participant engagement. Restart the headset by holding down the power button for 10 seconds. Ensure the headset is plugged into a charger for at least 30 minutes beforehand to rule out a low battery [19].

Q: The headset tracking is lost, or the guardian boundary warning keeps appearing during a task.

- A: This breaks immersion and can invalidate task performance data. Ensure the testing environment is well-lit without direct sunlight and avoid reflective surfaces that can interfere with tracking. Reboot the headset and set up a new guardian boundary in a space free of obstructions [19].

Q: The game or menu appears off in the distance and is not positioned correctly in front of the user.

- A: A misaligned view can affect how a participant interacts with the VR environment. Press the menu button on the right controller and select 'Reset View' to recenter the scene [35].

Controller and Input Problems

Q: The VR controllers are not tracking or connecting properly, preventing input.

- A: A failure in input detection directly compromises data collection for response-based tasks. Remove and reinsert the batteries, or replace them if they are low. If the issue persists, re-pair the controllers via the companion app on a phone (e.g., Oculus app under Settings > Devices) [19].

Q: The controller's battery cover is difficult to remove for battery replacement.

- A: Hold the Touch controller with the side that has no button facing up. Place your thumb towards the top and firmly slide the cover down. The cover is magnetized and may require a change in the angle of pressure [35].

System and Application Errors

Q: The headset won't update its software, potentially missing critical bug fixes.

- A: Check the Wi-Fi connection for stability and ensure the headset has sufficient storage space for the update. Rebooting the headset can sometimes resolve update issues [19].

Q: A specific VR application or task crashes or freezes during an experiment.

- A: First, close the application and reopen it. If the problem continues, reboot the headset to clear temporary glitches. As a last resort, uninstall and reinstall the application [19].

Q: There is no sound, or the audio is distorted during the VR experience.

- A: Auditory feedback is often crucial for cognitive tasks. Check the volume levels on both the headset and within the application's settings. Reboot the headset if necessary. Disconnect any connected Bluetooth audio devices, as they can sometimes interfere with sound quality [19].

Participant Comfort and Safety

Q: A participant is experiencing motion sickness during the VR task.

- A: For new users, limit VR playtime to roughly 30 minutes at a time. Many tasks can be configured for seated play, which can reduce motion sickness. Instruct participants to stop playing immediately if they feel unwell [35]. Administer the Pediatric Simulator Sickness Questionnaire (Peds-SSQ) or a similar tool post-session to quantitatively assess tolerability, tracking symptoms like eye strain, head discomfort, fatigue, and nausea [27].

Frequently Asked Questions (FAQs) for Researchers

Q: How can VR improve the ecological validity of executive function assessments compared to traditional tools? A: Traditional neuropsychological tests are often administered in isolation in clinical settings, which can limit their ability to predict real-world functioning [27]. VR creates immersive, context-rich environments that simulate complex, daily life tasks (e.g., cooking or shopping within a virtual mall) [36] [27]. This enhances ecological validity by placing cognitive demands on participants in a way that closely mirrors real life, thereby providing more meaningful data on functional independence [27] [37].

Q: What is the evidence for VR-based training improving specific executive functions in clinical populations? A: Emerging research demonstrates the efficacy of targeted VR interventions. The table below summarizes key findings from recent studies:

| Clinical Population | Executive Function | VR Intervention Impact | Study Details |
|---|---|---|---|
| Mild Cognitive Impairment (MCI) [36] | Working Memory | Significant improvement in visual and verbal working memory [36]. | Methodology: 40 participants with MCI were randomized to VR-based cognitive rehabilitation or a control group. Assessments used tools like the Digit Span and Symbol Span subtests at baseline, post-training, and 3-month follow-up [36]. |
| Substance Use Disorders (SUD) [38] | Global Executive Functioning & Memory | Statistically significant improvements in overall executive functioning and global memory were found after a 6-week VR training program (VRainSUD-VR) [38]. | Methodology: A non-randomized controlled study assigned 47 patients to VR training + Treatment as Usual (TAU) or TAU alone. Cognitive outcomes were assessed pre- and post-intervention [38]. |
| Mild Cognitive Impairment (MCI) [36] | Cognitive Flexibility | Did not exhibit significant improvement in the studied cohort, highlighting the component-specific effects of VR training [36]. | Methodology: Cognitive flexibility was measured using the Wisconsin Card Sorting Test (WCST-64). The VR intervention focused on real-life cognitive tasks, but transfer to this specific component was not observed [36]. |

Q: How can we design VR tasks that effectively target the core components of executive function? A: Effective mapping requires deliberate task design that isolates and challenges specific cognitive processes:

- Inhibition: Design tasks that require the suppression of a dominant or prepotent response. For example, a task where a participant must only collect specific target objects that appear on a conveyor belt while resisting the impulse to collect non-target distractors.

- Working Memory: Create scenarios that require the temporary holding and manipulation of information. A virtual shopping task where participants must remember and compare prices of different items, or a navigation task that requires remembering a sequence of instructions, effectively engages working memory [36] [27].

- Cognitive Flexibility: Develop task-switching paradigms. A participant might be required to sort objects by color, and then the rule changes unexpectedly, requiring them to switch to sorting by shape. This directly engages mental flexibility, measured by tools like the Wisconsin Card Sorting Test (WCST) [36].
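The color-to-shape switching example above can be scored in a way that separates perseverative errors (continuing to sort by the old rule) from other errors. The following is a minimal sketch under stated assumptions, not a published scoring procedure: the `Trial` fields, the bin numbering, and the simplified definition of a perseverative error are all hypothetical, and clinical WCST scoring follows its own manual.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    rule: str        # active sorting rule for this trial: "color" or "shape"
    color_bin: int   # bin matching the object's color (hypothetical layout)
    shape_bin: int   # bin matching the object's shape (hypothetical layout)
    chosen_bin: int  # bin the participant actually selected


def _target(trial, rule):
    """Return the correct bin for a trial under the given rule."""
    return trial.color_bin if rule == "color" else trial.shape_bin


def score_switch_task(trials):
    """Count correct responses, perseverative errors, and other errors.

    Simplifying assumption: an error is 'perseverative' when the chosen bin
    is the one that would have been correct under the most recently
    abandoned rule.
    """
    counts = {"correct": 0, "perseverative": 0, "other_errors": 0}
    previous_rule = None
    for i, trial in enumerate(trials):
        # Remember the old rule whenever the active rule changes.
        if i > 0 and trials[i - 1].rule != trial.rule:
            previous_rule = trials[i - 1].rule
        if trial.chosen_bin == _target(trial, trial.rule):
            counts["correct"] += 1
        elif previous_rule and trial.chosen_bin == _target(trial, previous_rule):
            counts["perseverative"] += 1
        else:
            counts["other_errors"] += 1
    return counts


example_trials = [
    Trial("color", 0, 1, 0),  # correct under the color rule
    Trial("color", 1, 2, 1),  # correct under the color rule
    Trial("shape", 0, 2, 0),  # rule switched, participant still sorts by color
    Trial("shape", 1, 0, 0),  # correct under the shape rule
    Trial("shape", 2, 1, 3),  # error unrelated to the old rule
]
result = score_switch_task(example_trials)
```

Keeping the two error types apart matters because a high perseverative count points at set-shifting difficulty, whereas scattered errors may reflect inattention or interface problems.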

Q: What are the key methodological considerations for implementing a VR-based assessment? A: A standardized protocol is crucial for reliability. The following workflow outlines a robust experimental procedure for a VR-based assessment study:

Q: What are some essential "Research Reagent Solutions" or key materials for a VR cognitive neuroscience lab? A: Beyond the VR hardware, a well-equipped lab requires a suite of software and assessment tools:

| Item / Tool | Function / Explanation |
|---|---|
| Unity or Unreal Engine | Primary game engines for building and customizing 3D virtual environments and programming task logic [39]. |
| Meta XR Interaction SDK | A software development kit that provides pre-built components for handling core VR interactions (e.g., grabbing, pointing, UI raycasting), speeding up development [39]. |
| Horizon OS UI Set (for Meta) | A library of pre-built, production-ready UI components (buttons, sliders) that ensure a consistent and native look and feel for Quest applications [39]. |
| Wisconsin Card Sorting Test (WCST) | A classic neuropsychological test used to assess cognitive flexibility and set-shifting; often used as a gold-standard measure for validation [36]. |
| Digit Span Test | A standardized subtest (from WAIS/WISC) used to assess auditory-verbal working memory capacity and is commonly used in pre/post-intervention assessments [36] [27]. |
| Waisman Activities of Daily Living (W-ADL) Scale | A caregiver-reported questionnaire that assesses independence in daily living activities, providing a measure of ecological/functional outcome [27]. |
| SmartAction-VR-like Platform | A VR-based assessment platform utilizing the "multi-errand paradigm" to evaluate executive functioning in simulated daily life tasks, enhancing ecological validity [27] [37]. |

Q: How should user interface (UI) elements be designed in VR to avoid confounding experimental results? A: Poor UI design can introduce extraneous cognitive load. Adhere to these principles for clean, consistent, and low-fatigue interfaces:

- Positioning: Place UI elements roughly 0.5–1.5 meters from the user, at eye level or slightly below, to prevent neck strain [39].

- Legibility: Ensure text and interactive targets are large enough. A minimum contrast ratio of 4.5:1 for text-to-background is recommended for readability [40].

- Color and Comfort: Avoid overly saturated colors and extreme contrast (e.g., pure black/white) to reduce eye strain. Use moderate contrast with subtle gradients [40].

- Interaction: Leverage real-world metaphors (e.g., pressing virtual buttons) and support multiple input methods (e.g., controller ray-casting, direct hand tracking) for intuitiveness [39].
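The 4.5:1 legibility threshold above can be checked programmatically before a task is deployed. The sketch below assumes the figure refers to the WCAG 2.x contrast-ratio definition (relative luminance of sRGB colors); the source does not state which definition [40] uses, and the function names are illustrative.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) tuple with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1.0 (identical) to 21.0."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def meets_minimum(foreground, background, minimum=4.5):
    """True when the text/background pair reaches the minimum ratio."""
    return contrast_ratio(foreground, background) >= minimum


# Example: mid-grey instruction text on a white panel.
grey_on_white_ok = meets_minimum((118, 118, 118), (255, 255, 255))
```

Note that pure black on pure white yields the maximum ratio of 21:1, which satisfies the legibility threshold but conflicts with the comfort guidance on avoiding extreme contrast, so a check like this is best used as a lower bound only.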

The pursuit of ecological validity—the degree to which test performance predicts real-world functioning—is reshaping the assessment of executive functions (EFs) in virtual reality (VR) research. Traditional paper-and-pencil neuropsychological assessments, while useful, lack similarity to real-world tasks and fail to simulate the complexity of daily activities, resulting in low ecological validity and limited generalizability [41]. VR technology addresses this limitation by allowing subjects to engage in immersive virtual environments that replicate real-world challenges, enabling researchers to capture rich, objective data on naturalistic behavior [41] [42].

A 2024 meta-analysis confirmed significant correlations between VR-based assessments and traditional measures across EF subcomponents including cognitive flexibility, attention, and inhibition, supporting VR as a valid alternative to traditional methods [41]. This technical support center provides methodologies and troubleshooting guidance for researchers aiming to implement robust, ecologically valid VR paradigms that move beyond simple accuracy metrics to encompass comprehensive behavioral and error analysis.

Technical Support Center: Troubleshooting Guides and FAQs

FAQ: Experimental Design and Data Collection

Q1: How can we ensure our VR task has sufficient ecological validity for executive function assessment?

Ecological validity is enhanced by designing tasks that simulate daily life activities rather than abstract cognitive tests. The multi-errand paradigm, implemented in tasks like SmartAction-VR, requires participants to complete familiar tasks in a virtual environment (e.g., a virtual kitchen or home scenario) that mimic real-world cognitive demands [41] [12]. Key strategies include:

- Meaningful Task Scenarios: Utilize virtual environments that replicate real-life activities such as shopping, cooking, or planning routes [41] [42].

- Contextual Complexity: Incorporate multiple simultaneous cognitive demands (planning, inhibition, working memory) as they naturally occur in daily life [12].

- Natural Interaction: Enable movement recognition and natural interactions within the VR environment to enhance immersion [41].

Q2: What behavioral metrics beyond task accuracy should we capture?

While accuracy remains important, comprehensive EF assessment requires multiple behavioral dimensions captured automatically by VR systems:

| Metric Category | Specific Measures | Cognitive Component Assessed |
|---|---|---|
| Error Analysis | Commission errors, omission errors, perseverative errors, rule violations | Inhibitory control, cognitive flexibility [12] |
| Temporal Metrics | Reaction time, hesitation periods, task completion time | Processing speed, decision-making [43] |
| Behavioral Patterns | Path efficiency, sequence of actions, task repetitions | Planning, problem-solving [43] |
| Novel Actions | Introduction of unprompted actions, rule-breaking behaviors | Behavioral monitoring, cognitive control [12] |
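Two of the measures in the table, commission/omission errors and path efficiency, can be derived from a simple event log. The sketch below is illustrative only: the log format is hypothetical, and path efficiency is defined here as straight-line distance divided by distance travelled, which is one common convention and ignores walls or obstacles that would make the true optimal route longer.

```python
import math


def error_counts(trials):
    """Count commission and omission errors.

    trials: list of (is_target, responded) boolean pairs.
    Commission = responded to a non-target; omission = missed a target.
    """
    commission = sum(1 for is_target, responded in trials
                     if responded and not is_target)
    omission = sum(1 for is_target, responded in trials
                   if is_target and not responded)
    return commission, omission


def path_length(points):
    """Total distance along a sequence of (x, y) or (x, y, z) positions."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


def path_efficiency(points):
    """Straight-line distance / travelled distance (1.0 = direct route).

    Returns None when the participant did not move, since the ratio
    is undefined in that case.
    """
    travelled = path_length(points)
    if travelled == 0:
        return None
    return math.dist(points[0], points[-1]) / travelled


log = [(True, True), (False, True), (True, False), (False, False)]
commission, omission = error_counts(log)
efficiency = path_efficiency([(0.0, 0.0), (3.0, 0.0), (3.0, 4.0)])
```

In the example route the participant walks 7 units to cover a 5-unit displacement, so efficiency is 5/7; lower values indicate more detours or backtracking.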

Q3: Our participants experience simulator sickness during testing. How can we mitigate this?

Simulator sickness can be minimized through both technical adjustments and protocol design:

- Technical Optimization: Ensure high frame rates (≥90Hz), minimal latency, and stable head tracking to reduce sensory conflict [44].

- Session Management: Implement shorter testing sessions with breaks, gradually increasing exposure duration across sessions [12].

- Participant Screening: Use the Pediatric Simulator Sickness Questionnaire (Peds-SSQ) or similar tools to identify susceptible individuals and monitor symptoms throughout testing [12].

- Environmental Design: Avoid rapid camera movements and provide stable visual reference points in the virtual environment.
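The frame-rate recommendation translates into a per-frame time budget: at 90 Hz each frame must be rendered in about 11.1 ms. A minimal sketch for auditing logged frame times against that budget follows; it assumes the application can export per-frame durations in milliseconds, and the function names are illustrative.

```python
def frame_budget_ms(target_hz=90.0):
    """Time available per frame, in milliseconds, at the target refresh rate."""
    return 1000.0 / target_hz


def summarize_frames(frame_times_ms, target_hz=90.0):
    """Summarize logged frame durations against the refresh-rate budget."""
    if not frame_times_ms:
        raise ValueError("frame_times_ms must contain at least one value")
    budget = frame_budget_ms(target_hz)
    over_budget = sum(1 for t in frame_times_ms if t > budget)
    mean_ms = sum(frame_times_ms) / len(frame_times_ms)
    return {
        "budget_ms": budget,
        "mean_fps": 1000.0 / mean_ms,
        "over_budget_fraction": over_budget / len(frame_times_ms),
    }


summary = summarize_frames([11.0, 11.0, 12.0, 10.5])
```

Reporting the fraction of over-budget frames alongside the mean is useful because brief stutters can provoke discomfort even when the average frame rate looks acceptable.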

FAQ: Technical Implementation and Data Analysis

Q4: What equipment and software specifications are recommended for VR-based cognitive assessment?

The Researcher's Toolkit below outlines essential components. For behavioral analysis, standard VR hardware (headsets and controllers) can capture most required metrics without additional sensors [43]. However, for comprehensive psychophysiological assessment, minimal supplemental sensors such as Galvanic Skin Response (GSR) can provide valuable convergent data without significantly increasing complexity [43].

Q5: How can we implement real-time behavioral analysis in our VR experiments?

The Sensor-Assisted Unity Architecture provides a framework for real-time analysis with minimal hardware [43]. Implementation steps include:

- Define Behavioral Triggers: Program VR environments to introduce controlled stressors (flashing alarms, countdown timers) at precise moments [43].

- Extract Behavioral Features: Capture natural reactions (task failure, hesitation, hand tremors) via standard VR controllers and headset tracking [43].

- Implement Decision Algorithm: Develop logic that analyzes behavioral patterns and triggers supplemental sensor data collection when needed [43].

- Ensure Low Latency: Optimize the processing pipeline to achieve sub-120 ms latency for real-time feedback [43].
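The decision step and latency check above can be prototyped outside the game engine before being ported to Unity. The following Python sketch is a hypothetical illustration, not the architecture described in [43]: the feature names, the thresholds, and the "two of three signs" rule are all assumptions chosen for clarity.

```python
import time
from dataclasses import dataclass

LATENCY_BUDGET_S = 0.120  # sub-120 ms target from the steps above


@dataclass
class BehaviorSample:
    hesitation_s: float        # pause before acting, in seconds
    task_failed: bool          # whether the current sub-task was failed
    tremor_amplitude_m: float  # controller jitter amplitude, in meters


def should_collect_sensor_data(sample,
                               hesitation_threshold_s=2.0,
                               tremor_threshold_m=0.01):
    """Trigger supplemental sensing when at least two stress signs co-occur.

    Thresholds are placeholders and would need calibration per study.
    """
    signs = [
        sample.task_failed,
        sample.hesitation_s > hesitation_threshold_s,
        sample.tremor_amplitude_m > tremor_threshold_m,
    ]
    return sum(signs) >= 2


def timed_decision(sample):
    """Run the decision and report whether it met the latency budget."""
    start = time.perf_counter()
    decision = should_collect_sensor_data(sample)
    elapsed_s = time.perf_counter() - start
    return decision, elapsed_s, elapsed_s < LATENCY_BUDGET_S


calm = BehaviorSample(hesitation_s=0.4, task_failed=False, tremor_amplitude_m=0.002)
stressed = BehaviorSample(hesitation_s=3.1, task_failed=True, tremor_amplitude_m=0.004)
calm_decision, _, _ = timed_decision(calm)
stressed_decision, elapsed_s, within_budget = timed_decision(stressed)
```

Timing only the decision function understates end-to-end latency; in practice the measurement would need to span sensor capture through to the feedback shown to the participant.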

Q6: How do we address tracking and technical issues during experiments?

Common technical issues and solutions include:

- Tracking Loss: Ensure adequate lighting (without direct sunlight), remove reflective surfaces, and recalibrate tracking systems [44].

- Controller Connectivity: Remove and reinsert batteries, re-pair controllers via the VR platform's application [44].

- Display Issues: For blurry displays, adjust lens spacing and clean lenses with microfiber cloth; for flickering, restart the headset [44].

- Boundary Problems: Reset guardian systems in adequate lighting with clear physical space boundaries [44].

Experimental Protocols and Methodologies

Protocol 1: Implementing the SmartAction-VR Paradigm for EF Assessment