Closed-Loop Neurotechnology for Memory Triggering: Mechanisms, Clinical Applications, and Future Directions

Abstract

This article synthesizes current research and development in closed-loop interfaces for memory triggering, a cutting-edge frontier in neuromodulation. It explores the foundational principles of bidirectional brain-computer interfaces (BCIs) that can both read neural signals and write stimulation back to the brain in real time. For researchers and drug development professionals, the content details the methodological advances in adaptive deep brain stimulation (aDBS), responsive neurostimulation (RNS), and targeted memory reactivation (TMR) during sleep. It critically addresses the significant technical challenges, such as neural signal instability and data processing demands, alongside the paramount ethical considerations of privacy, agency, and consent. Finally, the article evaluates validation metrics and comparative performance of different system architectures, providing a comprehensive overview for scientists and clinicians working at the intersection of neurotechnology and cognitive enhancement.

The Science of Memory and Closed-Loop Circuits: Core Principles and Neural Mechanisms

The evolution of neuromodulation technologies has marked a significant transition from open-loop to closed-loop systems, representing a paradigm shift in how we interact with the human brain. This progression culminates in the development of bidirectional Brain-Computer Interfaces (BCIs), which enable direct communication between the brain and external devices [1]. Within memory triggering research, these advanced systems offer unprecedented opportunities for investigating and potentially restoring mnemonic function by creating adaptive, real-time circuits for neural monitoring and stimulation.

Traditional open-loop systems deliver stimulation according to pre-programmed parameters, without accounting for the brain's dynamic physiological state. In contrast, closed-loop systems, also termed adaptive or responsive technologies, continuously monitor physiological inputs, process these data through sophisticated algorithms, and dynamically adjust outputs in real time to achieve desired outcomes [2]. This capacity for adaptation enables not only precise control and enhanced efficacy but also personalized treatment tailored to a patient's momentary physiological state.
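The contrast can be made concrete with a minimal sketch. It assumes a precomputed biomarker trace (for example, band power) sampled at discrete steps, a fixed detection threshold, and a refractory period between stimulations. The functions `open_loop_schedule` and `closed_loop_schedule` are hypothetical illustrations, not the control logic of any specific device.

```python
import numpy as np


def open_loop_schedule(n_samples: int, period: int) -> np.ndarray:
    """Open-loop: stimulate every `period` samples, ignoring the recorded signal."""
    stim = np.zeros(n_samples, dtype=bool)
    stim[::period] = True
    return stim


def closed_loop_schedule(biomarker, threshold: float, refractory: int) -> np.ndarray:
    """Closed-loop (responsive): stimulate when the biomarker exceeds `threshold`,
    then ignore further crossings for `refractory` samples."""
    biomarker = np.asarray(biomarker, dtype=float)
    stim = np.zeros(biomarker.size, dtype=bool)
    next_allowed = 0
    for i, value in enumerate(biomarker):
        if i >= next_allowed and value > threshold:
            stim[i] = True
            next_allowed = i + refractory
    return stim


if __name__ == "__main__":
    trace = np.array([0, 0, 5, 5, 0, 0, 0, 6, 0, 0], dtype=float)
    print(np.flatnonzero(open_loop_schedule(trace.size, period=4)))        # [0 4 8]
    print(np.flatnonzero(closed_loop_schedule(trace, 1.0, refractory=3)))  # [2 7]
```

This sketch shows event-triggered (responsive) stimulation. An adaptive scheme would instead scale stimulation amplitude continuously with the biomarker rather than switching it on and off.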

Bidirectional BCIs represent the most advanced embodiment of closed-loop principles, establishing a direct conduit for two-way communication between the brain and external hardware [1]. These systems can interpret brain signals in real time, converting them into commands to control external devices, while simultaneously translating external stimuli into signals the brain can perceive [1]. This bidirectional flow creates an interactive dialogue between neural tissue and machine, offering transformative potential for memory research and therapeutic applications.

Comparative Analysis: Open-Loop vs. Closed-Loop Systems

Table 1: Fundamental characteristics of open-loop and closed-loop neuromodulation systems

| Feature | Open-Loop Systems | Closed-Loop Systems |
|---|---|---|
| Responsiveness | Static, pre-programmed stimulation | Dynamic, responsive to real-time neural activity |
| Feedback Mechanism | No feedback from neural signals | Continuous feedback from recorded neural biomarkers |
| Adaptation Capability | Fixed parameters regardless of brain state | Automatically adjusts parameters based on neural state |
| Personalization Level | Limited, based on initial programming | High, continuously tailored to individual patient physiology |
| Theoretical Foundation | Linear stimulation paradigm | Cybernetic, interactive control paradigm |
| Key Applications | Traditional deep brain stimulation for movement disorders | Adaptive DBS, responsive neurostimulation for epilepsy, cognitive research |
| Data Processing | Minimal real-time processing required | Advanced signal processing and machine learning algorithms |

The distinction between these systems has profound implications for memory research. While open-loop approaches apply stimulation without regard to underlying brain states, closed-loop systems can detect specific neural patterns associated with memory encoding, retrieval, or failure, and deliver precisely timed interventions to modulate these processes [3]. This capability for state-dependent stimulation makes bidirectional BCIs particularly suited for investigating the dynamic nature of human memory.

Technical Specifications and Signal Processing Pathways

Table 2: Neural signal acquisition modalities for bidirectional BCIs

| Modality | Type | Spatial Resolution | Temporal Resolution | Primary Applications in Memory Research |
|---|---|---|---|---|
| Electroencephalography (EEG) | Non-invasive | Low (cm) | High (ms) | Network-level memory processes, sleep-dependent memory consolidation |
| Electrocorticography (ECoG) | Semi-invasive | High (mm) | High (ms) | Cortical memory representations, seizure focus localization in memory circuits |
| Stereoelectroencephalography (SEEG) | Invasive | Very High (sub-mm) | High (ms) | Deep brain structures (hippocampus, amygdala) in memory formation |
| Local Field Potentials (LFP) | Invasive | Very High (sub-mm) | High (ms) | Subcortical memory circuits, biomarker identification for adaptive DBS |
| Functional MRI (fMRI) | Non-invasive | High (mm) | Low (s) | Anatomical localization of memory networks, hemodynamic correlates |
| Magnetoencephalography (MEG) | Non-invasive | Medium (cm) | High (ms) | Source-localized oscillatory dynamics in memory tasks |

The operational pathway of a closed-loop BCI involves a precisely orchestrated sequence of stages: (1) brain signal acquisition, (2) preprocessing, (3) feature extraction, (4) feature classification, (5) device control, and (6) feedback delivery [1]. Central to this process is the real-time interpretation of brain signals and their conversion into commands that external devices can execute, while simultaneously delivering sensory feedback to the user [1].

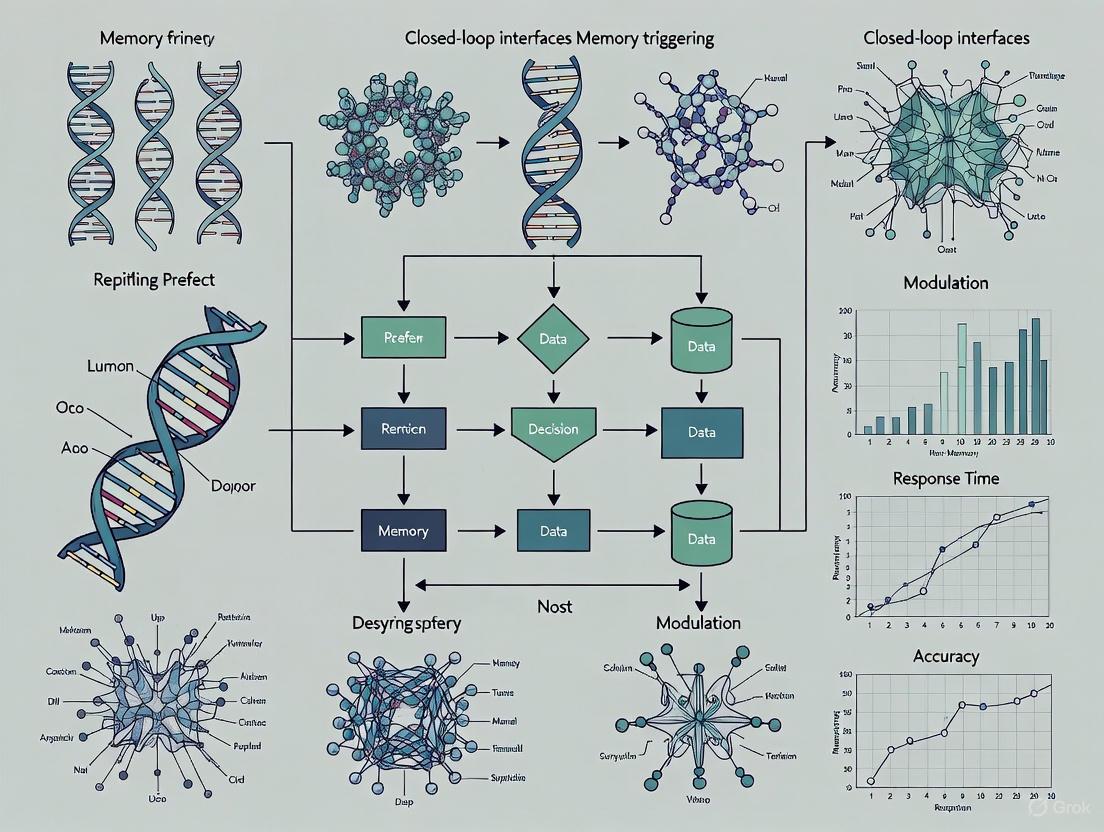

Diagram 1: Closed-loop BCI pathway for memory research
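The six stages listed above can be chained as small functions in a toy loop. Every specific choice here is an assumption made for illustration: a 256 Hz single-channel signal, 1 s windows, a causal 1-40 Hz Butterworth band-pass, relative theta (4-8 Hz) power as the feature, and a fixed 0.5 threshold standing in for a trained classifier.

```python
import numpy as np
from scipy.signal import butter, sosfilt, welch

FS = 256  # assumed sampling rate (Hz)
SOS = butter(4, [1.0, 40.0], btype="bandpass", fs=FS, output="sos")


def acquire(signal, start, n):
    """(1) Acquisition: return the next buffered window of samples."""
    return np.asarray(signal[start:start + n], dtype=float)


def preprocess(window):
    """(2) Preprocessing: remove the DC offset, then causal 1-40 Hz band-pass
    (filter state is reset every window in this simplified sketch)."""
    return sosfilt(SOS, window - window.mean())


def extract_features(window):
    """(3) Feature extraction: theta (4-8 Hz) power relative to 1-40 Hz power."""
    f, pxx = welch(window, fs=FS, nperseg=min(len(window), 128))
    theta = pxx[(f >= 4) & (f <= 8)].sum()
    broadband = pxx[(f >= 1) & (f <= 40)].sum()
    return theta / broadband


def classify(rel_theta, threshold=0.5):
    """(4) Classification: a fixed threshold stands in for a trained decoder."""
    return bool(rel_theta > threshold)


def control(in_target_state):
    """(5) Device control: translate the decision into a stimulation command."""
    return {"stimulate": in_target_state}


def run_loop(signal, n=FS):
    """Run stages 1-5 window by window; (6) feedback is reduced to a decision log."""
    log = []
    for start in range(0, len(signal) - n + 1, n):
        window = preprocess(acquire(signal, start, n))
        command = control(classify(extract_features(window)))
        log.append(command["stimulate"])
    return log


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    t = np.arange(4 * FS) / FS
    sig = rng.normal(0.0, 1.0, t.size)
    sig[2 * FS:] += 5.0 * np.sin(2 * np.pi * 6.0 * t[2 * FS:])  # theta appears after 2 s
    print(run_loop(sig))
```

In a deployed system, the filter state would be carried across windows (for example through the `zi` argument of `sosfilt`) instead of being reset. The classifier would also be trained on labeled data rather than set by hand.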

Experimental Protocols for Memory Triggering Research

Protocol: Hippocampal Theta-Gamma Coupling Detection and Modulation

Objective: To detect and modulate theta-gamma cross-frequency coupling in the hippocampus, a biomarker associated with successful memory encoding.

Materials and Equipment:

- Intracranial recording and stimulation system (e.g., NeuroPace RNS or Medtronic Activa PC+S)

- Depth electrodes with 8-16 contacts positioned in the hippocampal formation

- Real-time signal processing unit capable of spectral analysis

- Bipolar stimulation electrodes

Procedure:

- Baseline Recording Phase: Record continuous hippocampal activity for 30 minutes during rest and memory encoding tasks (word-pair learning) to establish individual theta-gamma coupling profiles.

- Feature Identification: Calculate power spectral density in the theta (4-8 Hz) and gamma (30-100 Hz) bands. Quantify phase-amplitude coupling, in which theta phase modulates gamma amplitude, with a coupling metric such as the modulation index (see Analysis Parameters) or a circular-linear correlation between theta phase and gamma amplitude.

- Threshold Determination: Set individualized thresholds for significant theta-gamma coupling events based on baseline recordings (typically 2-3 standard deviations above mean coupling strength).

- Closed-Loop Stimulation Protocol: When theta-gamma coupling exceeds threshold during memory encoding attempts, deliver brief (100 ms) biphasic electrical stimulation (0.5-2.0 mA) to the hippocampal formation within 50 ms of detection.

- Control Condition: Employ a sham stimulation condition where detection occurs but stimulation is withheld.

- Memory Assessment: Administer recognition memory tests 24 hours post-encoding to assess retention.

Analysis Parameters:

- Signal processing: Bandpass filtering, Hilbert transform for instantaneous phase/amplitude calculation

- Coupling metric: Modulation index for phase-amplitude coupling

- Stimulation parameters: 130 Hz frequency, 100 μs pulse width, 0.5-2.0 mA amplitude
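The coupling metric and the individualized threshold can be sketched as follows, under several assumptions:

- The metric is the Kullback-Leibler-based modulation index. Theta phase is split into 18 bins, the mean gamma envelope is computed per bin, and the index is the normalized divergence of that distribution from uniform.
- Baseline data are filtered offline with zero-phase Butterworth filters.
- k = 2.5 is taken as the midpoint of the 2-3 SD range in the procedure.

The bin count, filter order, and function names are also assumptions.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt, hilbert


def bandpass(x, fs, lo, hi, order=4):
    """Zero-phase Butterworth band-pass (acceptable for offline baseline data)."""
    sos = butter(order, [lo, hi], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, x)


def modulation_index(x, fs, phase_band=(4.0, 8.0), amp_band=(30.0, 100.0), n_bins=18):
    """KL-divergence-based phase-amplitude modulation index (0 = no coupling)."""
    phase = np.angle(hilbert(bandpass(x, fs, *phase_band)))  # theta phase, radians
    amp = np.abs(hilbert(bandpass(x, fs, *amp_band)))         # gamma envelope
    edges = np.linspace(-np.pi, np.pi, n_bins + 1)
    bins = np.clip(np.digitize(phase, edges) - 1, 0, n_bins - 1)
    mean_amp = np.array([amp[bins == k].mean() for k in range(n_bins)])
    p = mean_amp / mean_amp.sum()                             # amplitude distribution over phase
    entropy = -np.sum(p * np.log(p))
    return (np.log(n_bins) - entropy) / np.log(n_bins)


def coupling_threshold(baseline_mi, k=2.5):
    """Individualized threshold: baseline mean plus k sample standard deviations."""
    baseline_mi = np.asarray(baseline_mi, dtype=float)
    return baseline_mi.mean() + k * baseline_mi.std(ddof=1)


if __name__ == "__main__":
    fs = 1000
    t = np.arange(0, 20, 1 / fs)
    theta = np.sin(2 * np.pi * 6 * t)
    gamma = np.sin(2 * np.pi * 60 * t)
    coupled = theta + 0.5 * (1 + 0.8 * np.cos(2 * np.pi * 6 * t)) * gamma
    print(modulation_index(coupled, fs), modulation_index(theta + 0.5 * gamma, fs))
```

Applied to sliding windows of the 30-minute baseline, `modulation_index` yields the distribution passed to `coupling_threshold`. During the closed-loop phase the metric would have to be computed causally, so an online version would differ from this offline sketch.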

Protocol: Prefrontal-Hippocampal Network Synchronization

Objective: To enhance functional connectivity between prefrontal cortex and hippocampus during memory retrieval using phase-locked stimulation.

Materials and Equipment:

- Dual-site recording capability (prefrontal and hippocampal electrodes)

- Real-time phase estimation algorithms

- Phase-locked stimulation circuitry

Procedure:

- Connectivity Mapping: During a spatial navigation task, identify characteristic phase synchrony patterns between prefrontal and hippocampal regions using coherence analysis in the theta band (4-8 Hz).

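- Real-Time Phase Detection: Estimate the instantaneous phase of prefrontal theta oscillations with minimal lag using a causal method that relies only on past samples (e.g., autoregressive forward prediction of the band-passed signal followed by a Hilbert transform). Zero-phase filtering with a forward-backward IIR filter is suitable only for offline analysis, because its backward pass requires samples that have not yet been recorded.

- Stimulation Timing: Deliver hippocampal stimulation (5-pulse trains at 100 Hz) on the rising phase approaching the peak of prefrontal theta oscillations, as defined by the trigger window under Parameters.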

- Control Conditions: Include stimulation at random phases and stimulation at the trough of theta oscillations.

- Behavioral Measures: Assess spatial memory performance and retrieval accuracy following stimulation.

Parameters:

- Phase estimation: Hilbert transform or wavelet-based approaches

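- Stimulation trigger: Prefrontal theta phase between 45° and 90° (ascending phase approaching the peak, with 0° at the ascending zero crossing and 90° at the peak; in the Hilbert-transform convention, where 0° marks the peak, this window is -45° to 0°)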

- Stimulation intensity: 0.5-1.5 mA, adjusted to avoid after-discharges
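Causal phase estimation and the trigger window can be sketched with autoregressive (AR) forward prediction. The steps are:

1. Band-pass the most recent buffer.
2. Discard the filter's edge-distorted ends.
3. Fit an AR model with Burg's method, chosen here because it always yields a stable model.
4. Forecast across the gap to the present sample and slightly beyond.
5. Read the Hilbert phase at the present sample.

The 4-8 Hz band comes from the protocol. The filter order, AR order of 10, 0.25 s edge, and function names are assumptions.

```python
import numpy as np
from scipy.signal import butter, filtfilt, hilbert


def fit_ar_burg(x, order):
    """Burg's method. Returns c with x[t] ~ sum_k c[k] * x[t-1-k]; the model is
    stable because every reflection coefficient has magnitude <= 1."""
    f = np.asarray(x, dtype=float).copy()  # forward prediction errors
    b = f.copy()                            # backward prediction errors
    c = np.zeros(0)
    for _ in range(order):
        ff, bb = f[1:], b[:-1]              # pair f[n] with b[n-1]
        g = 2.0 * np.dot(ff, bb) / (np.dot(ff, ff) + np.dot(bb, bb))
        c = np.concatenate([c - g * c[::-1], [g]])  # Levinson-style coefficient update
        f, b = ff - g * bb, bb - g * ff
    return c


def ar_forecast(x, c, n_ahead):
    """Extend x by n_ahead samples, feeding each prediction back into the model."""
    p = len(c)
    history = list(x[-p:])
    out = []
    for _ in range(n_ahead):
        nxt = float(np.dot(c, history[::-1][:p]))  # most recent sample first
        history.append(nxt)
        out.append(nxt)
    return np.array(out)


def phase_now(window, fs, band=(4.0, 8.0), order=10, edge_s=0.25):
    """Theta phase (radians, Hilbert convention: 0 = peak) at the last recorded
    sample. filtfilt only sees samples already in the buffer; its distorted ends
    are discarded and the gap to the present is bridged by an AR forecast."""
    b, a = butter(2, band, btype="bandpass", fs=fs)
    filtered = filtfilt(b, a, window)
    edge = int(round(edge_s * fs))
    core = filtered[edge:-edge]
    c = fit_ar_burg(core, order)
    extended = np.concatenate([core, ar_forecast(core, c, 2 * edge)])
    present = len(window) - 1 - edge  # index of the last recorded sample in `extended`
    return float(np.angle(hilbert(extended))[present])


def in_trigger_window(phase_rad, lo_deg=45.0, hi_deg=90.0):
    """Check the 45-90 degree window, stated in the sine convention (0 = rising
    zero crossing, 90 = peak); the Hilbert phase is shifted by +90 degrees first."""
    sine_deg = (np.degrees(phase_rad) + 90.0) % 360.0
    return bool(lo_deg <= sine_deg <= hi_deg)
```

Because the returned phase follows the Hilbert convention (0 = peak), `in_trigger_window` shifts it by 90° before comparing against the 45°-90° window.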

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential materials and analytical tools for closed-loop BCI research

| Tool/Category | Specific Examples | Research Function | Application in Memory Studies |
|---|---|---|---|
| Data Acquisition Systems | NeuroPace RNS, Medtronic Activa PC+S, Blackrock NeuroPort | Record neural signals and deliver stimulation | Continuous monitoring of hippocampal and cortical activity during memory tasks |
| Signal Processing Software | BCI2000, OpenViBE, FieldTrip, EEGLAB | Preprocessing, feature extraction, classification | Real-time detection of memory-related oscillatory patterns (theta, gamma) |
| Machine Learning Libraries | scikit-learn, TensorFlow, PyTorch | Development of decoding algorithms | Classification of neural states associated with successful memory encoding/retrieval |
| Neural Data Standards | Brain Imaging Data Structure (BIDS) [4] [5] | Standardized organization of neuroimaging data | Facilitates data sharing and reproducibility in memory research collaborations |
| Stimulation Parameters | Biphasic pulses (100-200 μs), 0.5-3.5 mA, 100-150 Hz | Controlled neural modulation | Precise intervention in memory circuits with minimized risk of tissue damage |
| Behavioral Paradigms | Verbal recall tasks, spatial navigation, associative learning | Assessment of memory function | Quantification of closed-loop intervention efficacy on memory performance |
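Whether a parameter set in the Stimulation Parameters row stays within safe limits depends on two quantities. The first is charge per phase, Q = I × pulse width. The second is charge density, D, which is Q divided by the contact's surface area.

The calculator below makes two assumptions:

- A hypothetical 0.06 cm² contact area.
- The Shannon model, log10(D) = k - log10(Q), as the safety criterion. This is one commonly cited empirical criterion for macroelectrodes; k = 1.85 is often quoted as the damage boundary, with lower values used as conservative margins.

The function names are illustrative.

```python
import math


def charge_per_phase_uc(current_ma, pulse_width_us):
    """Q = I * t. mA x us = nC, so divide by 1000 to get microcoulombs."""
    return current_ma * pulse_width_us / 1000.0


def charge_density_uc_cm2(charge_uc, contact_area_cm2):
    """Charge density per phase (uC/cm^2) for a given geometric contact area."""
    return charge_uc / contact_area_cm2


def shannon_k(charge_uc, density_uc_cm2):
    """Shannon model: log10(D) = k - log10(Q), so k = log10(D) + log10(Q)."""
    return math.log10(density_uc_cm2) + math.log10(charge_uc)


if __name__ == "__main__":
    area = 0.06  # hypothetical contact area (cm^2); substitute the real electrode's value
    for ma, us in [(0.5, 100), (2.0, 100), (3.5, 200)]:
        q = charge_per_phase_uc(ma, us)
        d = charge_density_uc_cm2(q, area)
        print(f"{ma} mA, {us} us: Q={q:.3f} uC, D={d:.2f} uC/cm^2, k={shannon_k(q, d):.2f}")
```

With the assumed area, the upper corner of the table's range (3.5 mA, 200 μs) gives Q = 0.7 μC and D ≈ 11.7 μC/cm² per phase, so k ≈ 0.91. A smaller contact raises D and k, so the actual electrode geometry must be substituted.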

Implementation Framework and Ethical Considerations

The implementation of closed-loop BCIs for memory research requires careful consideration of both technical and ethical dimensions. From a technical perspective, personalized BCI approaches are essential, as individual differences in neuroanatomy, memory strategies, and neural signal characteristics significantly impact system efficacy [6]. This personalization encompasses customized paradigm design, individualized signal processing approaches, and tailored feedback mechanisms.

Diagram 2: BCI implementation workflow for memory research

Ethical considerations present significant challenges in closed-loop BCI research. Analyses of current clinical studies indicate that explicit ethical assessment remains rare, with ethical issues typically addressed only implicitly through technical or procedural discussions rather than structured analysis [2]. Key ethical dimensions include:

- Beneficence and Risk-Benefit Analysis: Ensuring that potential memory enhancements justify the risks of invasive procedures, particularly in vulnerable populations [2].

- Autonomy and Identity: Addressing concerns about how neural modulation might impact personal identity, authentic memory formation, and agency [2].

- Privacy and Data Security: Implementing robust protections for sensitive neural data that could reveal private thoughts, memories, or predispositions [2].

- Equitable Access: Considering justice implications in the development and potential deployment of memory modulation technologies [2].

The Brain Imaging Data Structure (BIDS) standard provides an essential framework for organizing and sharing neural data, promoting reproducibility and collaboration in memory research [4] [5]. Adherence to such community standards facilitates the aggregation of datasets necessary for developing robust closed-loop algorithms capable of generalizing across diverse populations.
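BIDS encodes metadata in directory and file names as key-value entities. The sketch below builds such paths for an iEEG session and rests on these assumptions:

- Hypothetical labels: `sub-01`, `ses-01`, and task `wordpairs`.
- European Data Format (`.edf`) as the recording format, one of the formats the iEEG extension accepts.

In practice, a tool such as MNE-BIDS can generate and validate these paths.

```python
from pathlib import PurePosixPath


def bids_ieeg_path(subject, session, task, run, suffix="ieeg", extension=".edf"):
    """Build a BIDS-style path for an iEEG recording or one of its task/run sidecars.

    Entities appear in BIDS order (sub, ses, task, run) before the suffix.
    """
    stem = f"sub-{subject}_ses-{session}_task-{task}_run-{run:02d}_{suffix}{extension}"
    return PurePosixPath(f"sub-{subject}", f"ses-{session}", "ieeg", stem)


if __name__ == "__main__":
    # "wordpairs" is a hypothetical task label
    print(bids_ieeg_path("01", "01", "wordpairs", 1))
    print(bids_ieeg_path("01", "01", "wordpairs", 1, suffix="channels", extension=".tsv"))
    print(bids_ieeg_path("01", "01", "wordpairs", 1, suffix="events", extension=".tsv"))
```

The channels and events sidecars carry the same task and run entities as the recording they describe. Session-level files such as `electrodes.tsv` do not include task or run entities.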

Closed-loop bidirectional BCIs represent a transformative approach in memory research, enabling unprecedented real-time investigation and modulation of neural circuits underlying mnemonic processes. The transition from open-loop to closed-loop systems marks a fundamental shift from static stimulation to dynamic, adaptive interaction with the nervous system.

Future developments in this field will likely focus on enhancing the personalization of decoding algorithms, improving long-term biocompatibility of implanted devices, and addressing the ethical implications of increasingly sophisticated neural interfaces [6] [3] [2]. As these technologies evolve, they offer the potential not only to advance our fundamental understanding of human memory but also to develop novel therapeutic approaches for memory disorders resulting from neurological conditions, brain injury, or age-related cognitive decline.

The integration of artificial intelligence with bidirectional BCIs promises to further refine the precision of memory circuit modulation, potentially enabling systems that can learn and adapt to individual neural patterns over extended periods. This convergence of neuroscience, engineering, and computational analytics positions closed-loop interfaces as powerful tools for unraveling the complexities of human memory.

The formation of an enduring memory is a complex process that unfolds over time and involves a dynamic conversation between different brain regions. The hippocampal-cortical dialog is a fundamental neurobiological process through which memories, initially encoded in the hippocampus, become gradually strengthened and integrated into the neocortex for long-term storage. This process is particularly active during offline periods, such as sleep, and is crucial for the formation of stable, long-term memories [7] [8].

Understanding this dialog is not merely an academic pursuit; it provides a critical foundation for developing novel cognitive therapies and closed-loop interfaces for memory modulation. These systems aim to interact directly with the brain's natural memory processes, potentially offering new ways to combat memory impairments associated with neurological disorders and aging [9] [10] [11].

Core Neuroanatomy and Functional Divisions

The hippocampal formation and neocortex perform complementary functions in memory processing. The hippocampus, with its unique circuitry, is specialized for the rapid encoding of new information, while the neocortex supports the slow learning of structured knowledge [8].

Hippocampal Subregional Specialization

The hippocampus itself is not a uniform structure; its subregions contribute differently to memory processing:

- CA3 Region: Acts as an autoassociative memory network. It is critical for rapidly forming a unified, configural representation of a context from multimodal sensory inputs. Reversible inactivation of CA3 disrupts the acquisition of contextual fear memory [12].

- CA1 Region: Serves as a major output structure and is involved in the consolidation process of contextual memory. CA1 inactivation also impairs acquisition but appears to more clearly disrupt the consolidation process [12].

- Ventral Hippocampus (vCA1): Projects to cortical areas like the infralimbic cortex (IL) and is critical for the consolidation of social memories. Its interactions with the IL are necessary for stabilizing memories of newly familiarized conspecifics [13].

Neocortical Partners

The neocortex is not a passive recipient of information from the hippocampus. Specific regions are engaged during consolidation and retrieval:

- Infralimbic Cortex (IL): Stores consolidated social memories in a generalized form. IL neurons projecting to the nucleus accumbens shell (IL→NAcSh) are activated by familiar conspecifics and are necessary for the retrieval of social memory, but not for its initial encoding [13].

- Medial Prefrontal Cortex (mPFC): Is involved in the retrieval of consolidated memories and is a key cortical site of pathological hippocampal-cortical interactions in epilepsy [10].

- A Common Cortical Network: Neuroimaging studies reveal that various memory types—including general semantic, personal semantic, and episodic memory—engage a shared, widespread bilateral fronto-parietal network, with differential activation levels based on memory specificity [14].

Table 1: Key Brain Regions in the Hippocampal-Cortical Dialog

| Brain Region | Primary Function in Memory | Key Specializations |
|---|---|---|
| Hippocampal CA3 | Rapid acquisition of contextual memory | Autoassociative network; configural representation |
| Hippocampal CA1 | Memory consolidation and output | Critical for consolidation processes |
| Ventral Hippocampus | Social memory consolidation | Projects to cortical regions (e.g., IL) |
| Infralimbic Cortex | Storage of consolidated social memory | Encodes social familiarity; necessary for retrieval |
| Medial Prefrontal Cortex | Memory retrieval & integration | Part of a common cortical network for declarative memory |

Quantitative Data on Hippocampal Subregion Functions

Studies using reversible inactivation provide quantitative insights into the specific roles of hippocampal subregions. The following data, derived from a study on contextual fear conditioning in mice, illustrate the time-dependent and region-specific effects of disrupting hippocampal function [12].

Table 2: Effects of Reversible Inactivation on Contextual Fear Memory [12]

| Experimental Manipulation | Target Region | Timing of Intervention | Effect on Locomotor Activity | Effect on Contextual Freezing (%) | Key Interpretation |
|---|---|---|---|---|---|
| Lidocaine Infusion | CA3 | 10 min pre-conditioning (acquisition) | No significant effect | Significant impairment (p=0.009) | CA3 is necessary for rapid contextual encoding. |
| Lidocaine Infusion | CA1 | 10 min pre-conditioning (acquisition) | No significant effect | Significant impairment (p=0.006) | CA1 is also involved in the acquisition phase. |
| Lidocaine Infusion | CA3 or CA1 | Pre-retention test (retrieval) | Not reported | No significant effect | Neither region is required for retrieval in a recognition memory task. |
| Lidocaine Infusion | CA3 | 15 min pre-conditioning | Not reported | No significant effect | Confirms the reversibility of lidocaine inactivation. |

The Role of Sleep and Offline Reactivation

The dialogue between the hippocampus and neocortex intensifies during sleep, making this period critical for memory consolidation. The brain autonomously replays recent experiences, facilitating the transfer and integration of information [7].

Complementary Learning Systems and Sleep Stages

The Complementary Learning Systems (CLS) theory provides a framework for understanding this process, positing that the hippocampus and neocortex are two interacting systems with complementary strengths and weaknesses [7]. Computational models demonstrate how alternating sleep stages support this:

- Non-Rapid Eye Movement (NREM) Sleep: Characterized by tightly coupled dynamics between the hippocampus and neocortex. Key oscillatory events—hippocampal sharp-wave ripples, thalamocortical spindles, and neocortical slow oscillations—become temporally coupled. This "triple coupling" is thought to facilitate the transfer of information from the hippocampus to the neocortex [7] [11].

- Rapid Eye Movement (REM) Sleep: Features a lower degree of coupling between the hippocampus and neocortex. This stage may allow the neocortex to more freely explore and integrate new information within its existing knowledge networks, preventing the new data from overwriting remote memories [7].

Bi-Directional Interactions and Replay

The dialog is not a one-way street. Evidence supports a bi-directional interaction during offline periods [8]:

- Cortical Priming: Waking neural patterns originating in the cortex can trigger reactivations.

- Hippocampal Replay: These cortical activations trigger time-compressed sequential replays in the hippocampus.

- Cortical Consolidation: The hippocampal replays, in turn, drive the strengthening and consolidation of the memory traces in the cortex.

This cycle is crucial for consolidating sequential experiences and is influenced by the salience (e.g., recency, emotionality) of the memories [8].

Diagram 1: Hippocampal-cortical dialog across brain states.

Application Notes & Protocols for Closed-Loop Interfaces

The principles of the hippocampal-cortical dialog can be leveraged to develop closed-loop interfaces designed to modulate memory processes. These systems monitor neural activity and deliver stimuli at optimal moments to enhance memory consolidation.

Protocol: Closed-Loop Targeted Memory Reactivation (CL-TMR)

This protocol uses sensory cues during sleep to strengthen specific memories [11].

- Objective: To enhance the consolidation of a spatial navigation memory using auditory cues delivered during NREM sleep.

- Workflow:

- Encoding Session (Day 1, Awake): Participants learn a virtual reality (VR) spatial navigation task. Unique auditory cues are paired with crossing specific district borders.

- Baseline Retrieval Test (Day 1, Awake): Navigation efficiency is tested on a set of routes.

- Nap Session (Day 1, Sleep): Participants take a monitored nap. Electroencephalography (EEG) is used to detect NREM sleep stages in real time.

- Closed-Loop Stimulation: An automated algorithm detects the transition from a down-state to an up-state of the cortical slow oscillation. At this precise moment, the auditory cues associated with the learned routes are delivered.

- Post-Nap Retrieval Test (Day 1, Awake): Navigation efficiency is re-tested to measure improvement.

- Key Outcome: CL-TMR during naps leads to significant improvements in navigation efficiency and is associated with increased power in the fast (12–15 Hz) sleep spindle band [11].
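The down-to-up-state detection step can be sketched as a small state machine, under these assumptions:

- EEG is first restricted to the slow-oscillation band by the causal filter helper included below.
- The down-state amplitude threshold is -80 µV, a value used in some closed-loop slow-oscillation studies and treated here as adjustable.
- The cue is delivered at the first upward zero crossing after a qualifying down-state.

Published systems often predict the up-state peak with a fixed delay instead, so this is a simplified variant. The function names are hypothetical.

```python
import numpy as np
from scipy.signal import butter, sosfilt


def causal_so_filter(eeg_uv, fs, band=(0.5, 2.0)):
    """Causal band-pass to the slow-oscillation range (usable sample by sample)."""
    sos = butter(2, band, btype="bandpass", fs=fs, output="sos")
    return sosfilt(sos, eeg_uv)


def down_to_up_triggers(so_uv, fs, down_thresh_uv=-80.0, refractory_s=1.0):
    """Return sample indices at which an auditory cue would be delivered.

    A cue is armed once the signal dips below `down_thresh_uv` (a down-state) and
    fires at the next upward zero crossing (the transition into the up-state).
    """
    triggers, armed, last = [], False, -np.inf
    for i in range(1, len(so_uv)):
        if so_uv[i] < down_thresh_uv:
            armed = True
        elif armed and so_uv[i - 1] < 0.0 <= so_uv[i]:
            if (i - last) / fs >= refractory_s:
                triggers.append(i)
                last = i
            armed = False
    return np.array(triggers, dtype=int)


if __name__ == "__main__":
    fs = 100
    t = np.arange(0, 10, 1 / fs)
    so = -100.0 * np.cos(2 * np.pi * 0.8 * t)  # synthetic 0.8 Hz slow oscillation (µV)
    print(down_to_up_triggers(so, fs) / fs)    # cue times in seconds
```

On real data, `causal_so_filter` would be applied first, and its phase lag shifts the detected crossing. That lag is one reason published systems calibrate a delivery delay.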

Protocol: Disrupting Pathological Hippocampal-Cortical Coupling in Epilepsy

This protocol demonstrates a therapeutic application by targeting maladaptive interictal dynamics [10].

- Objective: To prevent the spread of epileptic networks and associated memory deficits by eliminating pathological hippocampal-cortical coupling.

- Experimental Model: Hippocampal kindling in freely moving rats.

- Workflow:

- Baseline Recording: Neural activity is recorded from the hippocampus and medial prefrontal cortex (mPFC) during NREM sleep.

- Kindling Protocol: A progressive epilepsy model is induced via daily hippocampal stimulation.

- Pathological Coupling Detection: The system detects the occurrence of a hippocampal interictal epileptiform discharge (IED).

- Closed-Loop Intervention: Upon detection, the system delivers a brief, spatially targeted electrical stimulation to the mPFC. This stimulation is timed to disrupt the IED-induced spindle coupling.

- Assessment: Long-term spatial memory is evaluated using behavioral tests (e.g., water maze).

- Key Outcome: This closed-loop stimulation prevents the emergence of independent cortical IED foci and ameliorates long-term spatial memory deficits, demonstrating that normalizing interictal dynamics can treat cognitive comorbidities [10].
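The IED detection step can be illustrated with a deliberately simple amplitude detector, under these assumptions:

- The hippocampal LFP has already been filtered.
- A candidate IED is flagged when the absolute signal first exceeds k times a robust noise estimate based on the median absolute deviation.
- A 200 ms refractory period prevents one discharge from triggering twice.

Practical IED detectors usually add morphology, line-length, or duration criteria. The function and its defaults are hypothetical.

```python
import numpy as np


def detect_ieds(lfp, fs, k=5.0, refractory_s=0.2):
    """Return sample indices of candidate IED onsets (upward threshold crossings)."""
    lfp = np.asarray(lfp, dtype=float)
    centered = lfp - np.median(lfp)
    sigma = np.median(np.abs(centered)) / 0.6745   # MAD-based noise estimate
    above = np.abs(centered) > k * sigma
    onsets = np.flatnonzero(above[1:] & ~above[:-1]) + 1
    kept, last = [], -np.inf
    for i in onsets:
        if (i - last) / fs >= refractory_s:        # suppress re-triggers within one event
            kept.append(i)
            last = i
    return np.array(kept, dtype=int)


if __name__ == "__main__":
    fs = 1000
    rng = np.random.default_rng(1)
    lfp = rng.normal(0.0, 1.0, 5 * fs)
    spike = np.concatenate([np.linspace(0, 20, 11), np.linspace(20, 0, 11)[1:]])
    for onset in (1000, 2500, 4000):
        lfp[onset:onset + spike.size] += spike
    onsets = detect_ieds(lfp, fs, k=6.0)
    stim_times_s = onsets / fs + 0.01  # hypothetical 10 ms detection-to-mPFC-stimulation latency
    print(stim_times_s)
```

In the protocol, each detection would trigger the brief mPFC stimulation. The latency added above is only a placeholder.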

Table 3: The Scientist's Toolkit - Key Research Reagents & Solutions

| Reagent / Tool | Category | Primary Function in Research | Example Application |
|---|---|---|---|
| Lidocaine | Pharmacological Agent | Reversible neural inactivation via sodium channel blockade. | Temporarily inactivating CA1/CA3 to probe stage-specific hippocampal function [12]. |
| Halorhodopsin (NpHR) | Optogenetic Inhibitor | Light-sensitive chloride pump that silences neural activity. | Inhibiting IL→NAcSh neuron activity during social memory retrieval [13]. |
| GCaMP6f | Genetically-Encoded Calcium Indicator | Fluorescent sensor for imaging neural activity (Ca²⁺ influx). | Monitoring calcium transients in IL→NAcSh neurons during social familiarization tasks [13]. |
| Closed-Loop EEG System | Neuromodulation Device | Real-time sleep stage detection and stimulus delivery. | Providing auditory TMR cues at down-to-up-state transitions in NREM sleep [11]. |
| hM4Di DREADD | Chemogenetic Inhibitor | Designer receptor exclusively activated by designer drugs (e.g., CNO) to suppress neural activity. | Chemogenetically inactivating specific neuronal populations during offline consolidation periods [13]. |

The dialogue between the hippocampus and neocortex is a cornerstone of memory formation. Evidence from lesion, neuroimaging, and computational modeling studies supports the view that this interaction is a dynamic, bi-directional process essential for transforming labile hippocampal traces into stable cortical memories, with sleep playing an orchestrating role. The emergence of closed-loop interfaces represents a transformative application of this knowledge, allowing researchers to move from observation to targeted intervention. By precisely interacting with the brain's own rhythms and states, these protocols offer powerful tools not only to enhance our fundamental understanding of memory but also to develop novel therapies for memory disorders.

Sleep provides a unique neurobiological state that facilitates the consolidation of memories. During non-rapid eye movement (NREM) sleep, the brain generates a precise temporal coordination of neural oscillations that drive synaptic and systems consolidation processes. The hierarchical coupling of slow oscillations (SOs), sleep spindles, and sharp-wave ripples (SWRs) forms a fundamental mechanism through which the sleeping brain strengthens and reorganizes memory traces without conscious effort or external stimulation [15]. This triad of oscillations enables a sophisticated hippocampal-cortical dialogue that transforms labile hippocampal memories into stable cortical representations [16].

The significance of these oscillatory events extends beyond basic memory research into clinical and therapeutic applications. Closed-loop interface systems are being developed to monitor and modulate these oscillations to enhance memory function, offering potential interventions for memory disorders including Alzheimer's disease and related dementias [9]. Understanding the precise temporal dynamics, physiological mechanisms, and functional consequences of SO-spindle-ripple coupling provides the foundation for targeted memory modulation strategies in both research and clinical settings.

Quantitative Signatures of Sleep Oscillations

The electrophysiological properties of SOs, spindles, and ripples exhibit distinct quantitative signatures that can be measured and manipulated in experimental settings. The table below summarizes the key characteristics of each oscillation type based on human intracranial and scalp recordings.

Table 1: Quantitative Signatures of Key Sleep Oscillations in Humans

| Oscillation Type | Frequency Range | Cortical Origin/Modulation | Primary Physiological Role | Amplitude/Characteristics |
|---|---|---|---|---|
| Slow Oscillations (SOs) | <1 Hz | Prefrontal cortex, neocortical networks | Coordinating temporal framework for spindle-ripple coupling; toggling between depolarized up-states and hyperpolarized down-states [15] | High-amplitude (<1 Hz) fluctuations; smaller amplitudes with bipolar re-referencing [15] |
| Slow Spindles | 9-12.5 Hz | Predominantly frontal regions | Occur during transition to SO down-state; potentially related to cortical cross-linking of information [16] | Waxing-and-waning morphology |
| Fast Spindles | 12.5-16 Hz | Centro-parietal regions | Nesting in SO up-states; facilitating hippocampal-cortical information transfer; coinciding with hippocampal SWRs [16] | Waxing-and-waning morphology |
| Sharp-Wave Ripples (SWRs) | 80-120 Hz (human hippocampus) | Hippocampal and extrahippocampal medial temporal lobe areas [15] | Facilitating local synaptic plasticity through coordinated neuronal firing; information transfer between brain regions [15] | Transient high-frequency bursts |

The temporal coupling between these oscillations follows a precise hierarchy. Research using intracranial electroencephalography (iEEG) combined with multiunit activity recordings demonstrates that SO up-states provide the temporal framework for spindle occurrence, which in turn creates optimal time windows for ripple generation [15]. The sequential coupling leads to a stepwise increase in neuronal firing rates, short-latency cross-correlations among local neuronal assemblies, and enhanced cross-regional interactions within the medial temporal lobe [15].

Table 2: Temporal Coupling Dynamics Between Sleep Oscillations

| Coupling Type | Temporal Relationship | Functional Consequences | Measurement Approach |
|---|---|---|---|
| SO-Spindle Coupling | Spindle onsets increase in earlier phases of SO up-states (average -451 ms relative to SO down-state) [15] | Increased probability of ripple occurrence; enhanced cross-regional communication | Event-locked spectral analysis; phase-amplitude coupling metrics |
| Spindle-Ripple Coupling | Ripples tend to occur during waxing spindle phase (before spindle center); most begin after spindle onset and end before spindle offset [15] | Significant increase in neuronal firing rates; optimal conditions for spike-timing-dependent plasticity | Cross-correlation analysis between spindle and ripple events |
| SO-Ripple Coupling | Ripple onsets cluster in SO up-states (average -241 ms relative to SO down-state) [15] | Active silencing during SO down-states (firing rates below baseline); coordinated reactivation during up-states | Phase-locked event histograms; firing rate modulation analysis |
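Latencies such as the -241 ms ripple-onset average in the table come from event-locked measurements. The computation can be sketched under these assumptions:

- Event times are given in seconds.
- Each event is referenced to its nearest SO down-state trough within ±1.5 s.
- Latencies are histogrammed in 100 ms bins.

The window, bin width, and function names are assumptions.

```python
import numpy as np


def event_latencies(event_times, anchor_times, window_s=1.5):
    """Latency (s) of each event relative to its nearest anchor (e.g. SO down-state
    trough); events farther than window_s from every anchor are discarded."""
    anchors = np.sort(np.asarray(anchor_times, dtype=float))
    lat = []
    for t in np.asarray(event_times, dtype=float):
        d = t - anchors[np.argmin(np.abs(anchors - t))]
        if abs(d) <= window_s:
            lat.append(d)
    return np.array(lat)


def peri_event_histogram(latencies, window_s=1.5, bin_s=0.1):
    """Counts of latencies in fixed bins spanning [-window_s, window_s]."""
    edges = np.linspace(-window_s, window_s, int(round(2 * window_s / bin_s)) + 1)
    counts, _ = np.histogram(latencies, bins=edges)
    return counts, edges


if __name__ == "__main__":
    so_troughs = [10.0, 20.0, 30.0]
    ripple_onsets = [9.76, 19.76, 29.76, 25.0]  # the last one is far from any trough
    lat = event_latencies(ripple_onsets, so_troughs)
    print(lat.mean(), peri_event_histogram(lat)[0])
```

The same functions apply to spindle onsets. Converting latency to SO phase would additionally require each oscillation's period.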

Experimental Protocols for Oscillation Detection and Analysis

Intracranial EEG Recording and Multiunit Activity Protocol

This protocol outlines the methodology for simultaneous recording of sleep oscillations and neuronal firing activity in human participants, adapted from invasive recording studies in epilepsy patients [15].

Equipment Setup:

- Depth electrodes implanted bilaterally targeting anterior/posterior hippocampus, amygdala, entorhinal cortex, and parahippocampal cortex

- Additional microwires protruding ~4 mm from electrode tips for multiunit activity (MUA) recording

- Macro contacts for field potential acquisition (bipolar re-referencing)

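- Amplifiers with high input impedance and appropriate filtering (e.g., 0.1-300 Hz for local field potentials, 300-6000 Hz for MUA)

- Sampling rate ≥2000 Hz for field potentials, which adequately captures ripple oscillations; the 300-6000 Hz MUA band requires microwire sampling above 12 kHz (twice its 6000 Hz upper edge)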

Data Acquisition Parameters:

- Record during natural nocturnal sleep with simultaneous video monitoring

- Focus on NREM sleep stages (N2 and N3) with minimum 20-minute stable epochs

- Session range: 1-4 sessions per participant with consistent electrode placement

- Pool data across medial temporal lobe contacts (approximately 10 per participant) and corresponding microwires (approximately 8 per contact)

Oscillation Detection Algorithms:

Table 3: Detection Parameters for Sleep Oscillations

| Oscillation | Detection Method | Key Parameters | Exclusion Criteria |

|---|---|---|---|

| Slow Oscillations | Band-pass filtering (<1 Hz), zero-crossing detection, amplitude thresholding | Duration: 0.5-2 seconds; peak-to-peak amplitude >2 SD from background | Artifacts from movement, epileptiform activity |

| Sleep Spindles | Root mean square (RMS) power in 12-16 Hz band, duration and amplitude criteria | Duration: 0.5-3 seconds; amplitude >2 SD above baseline | EMG artifacts, movement contamination |

| Sharp-Wave Ripples | Band-pass filtering (80-120 Hz), Hilbert transform, amplitude thresholding | Duration: 50-200 ms; amplitude >3 SD above baseline | Epileptiform spikes, electrical artifacts |
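The band-pass, envelope, threshold, and duration logic shared by the rows of Table 3 can be sketched as a single function. This is a minimal offline sketch under stated assumptions: it uses a Hilbert envelope for every oscillation (the spindle row specifies RMS power instead), defines "baseline" as the mean and SD of the envelope over the supplied signal, and omits the artifact and epileptiform exclusion step. The function name `detect_events` is hypothetical.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt, hilbert


def detect_events(x, fs, band, n_sd, min_dur, max_dur):
    """Return (start, stop) sample indices of band-limited events.

    x: 1-D signal; fs: sampling rate (Hz); band: (low, high) in Hz;
    n_sd: threshold in SDs above the envelope mean (assumed baseline);
    min_dur, max_dur: accepted event duration range in seconds.
    """
    # Zero-phase band-pass: acceptable here because detection is offline.
    sos = butter(4, band, btype="bandpass", fs=fs, output="sos")
    filtered = sosfiltfilt(sos, x)
    # Instantaneous amplitude from the analytic signal, then z-scored.
    envelope = np.abs(hilbert(filtered))
    z = (envelope - envelope.mean()) / envelope.std()
    # Rising/falling edges of supra-threshold runs (stop index is exclusive).
    padded = np.concatenate(([False], z > n_sd, [False]))
    edges = np.flatnonzero(np.diff(padded.astype(int)))
    starts, stops = edges[0::2], edges[1::2]
    durations = (stops - starts) / fs
    keep = (durations >= min_dur) & (durations <= max_dur)
    return list(zip(starts[keep], stops[keep]))
```

For ripples, following the table, a call would look like `detect_events(x, fs=2000, band=(80, 120), n_sd=3, min_dur=0.05, max_dur=0.2)`; in practice `x` would be restricted to artifact-free N2/N3 data before computing the baseline statistics.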

Cross-Correlation Analysis Protocol for Neuronal Co-firing

This protocol assesses short-latency co-firing patterns during oscillatory events, critical for understanding spike-timing-dependent plasticity mechanisms [15].

Procedure:

- Extract spike times from multiunit activity recordings during detected SO, spindle, and ripple events

- Calculate cross-correlograms (CCGs) in ±50 ms windows centered on event maxima

- Include only pairwise combinations of microwires with minimum firing rate of 1 Hz across all NREM sleep

- Apply two-step correction:

  - Subtract "shift predictor" CCGs (cross-correlation of wire 1 firing during event n with wire 2 firing during event n+1)

  - Subtract CCGs derived from matched non-event surrogates (also shift-predictor corrected)

- Normalize resulting CCGs by baseline firing rates

- Quantify peak co-firing probability within ±25 ms window
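The two-step correction above can be sketched in a few lines of NumPy. This is an illustrative sketch, not the cited study's code: spike and event times are assumed to be in seconds, the 5 ms bin size is an assumption, and the final rate normalization is left out because the exact normalization used in [15] is not specified here (counts are simply averaged per event). All function names are hypothetical.

```python
import numpy as np


def event_ccg(spk1, spk2, centers, half_win=0.05, max_lag=0.05, bin_size=0.005, shift=0):
    """Cross-correlogram of spikes within +/-half_win of each event center.

    shift=1 pairs wire-1 spikes from event n with wire-2 spikes from event n+1
    (the shift predictor); spike times are taken relative to each event's own center.
    Returns bin edges and counts averaged per event pair.
    """
    edges = np.arange(-max_lag, max_lag + bin_size / 2, bin_size)
    counts = np.zeros(edges.size - 1)
    n_pairs = len(centers) - shift
    for n in range(n_pairs):
        c1, c2 = centers[n], centers[n + shift]
        a = spk1[np.abs(spk1 - c1) <= half_win] - c1
        b = spk2[np.abs(spk2 - c2) <= half_win] - c2
        lags = (b[None, :] - a[:, None]).ravel()  # all wire-2 minus wire-1 lags
        counts += np.histogram(lags, bins=edges)[0]
    return edges, counts / max(n_pairs, 1)


def corrected_ccg(spk1, spk2, event_centers, surrogate_centers, **kw):
    """Step 1: subtract the shift predictor; step 2: subtract the corrected surrogate CCG."""
    edges, raw = event_ccg(spk1, spk2, event_centers, **kw)
    _, shift_pred = event_ccg(spk1, spk2, event_centers, shift=1, **kw)
    _, sur_raw = event_ccg(spk1, spk2, surrogate_centers, **kw)
    _, sur_shift = event_ccg(spk1, spk2, surrogate_centers, shift=1, **kw)
    return edges, (raw - shift_pred) - (sur_raw - sur_shift)


def peak_cofiring(edges, ccg, window=0.025):
    """Peak of the corrected CCG within +/-window seconds of zero lag."""
    centers = (edges[:-1] + edges[1:]) / 2
    return ccg[np.abs(centers) <= window].max()
```

The sign of the lag at the peak (wire 2 relative to wire 1) is what the temporal-asymmetry analysis below would inspect.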

Statistical Analysis:

- Compare co-firing probabilities across oscillation types using repeated-measures ANOVA

- Assess temporal asymmetry in co-firing peaks to determine directional influences

- Correlate co-firing metrics with behavioral memory performance measures

Signaling Pathways and Experimental Workflows

Sleep Oscillation Coupling and Memory Consolidation Pathway

Diagram 1: Hierarchical Coupling of Sleep Oscillations

Closed-Loop Interface Experimental Workflow

Diagram 2: Closed-Loop BCI System for Memory Modulation

Research Reagent Solutions and Essential Materials

Table 4: Essential Research Tools for Sleep Oscillation and Memory Research

| Category | Specific Items/Techniques | Research Application | Key Considerations |

|---|---|---|---|

| Electrophysiology Platforms | Intracranial EEG with microwires; High-density scalp EEG (64-256 channels); Multiunit activity recording systems | Simultaneous field potential and neuronal firing measurement; Human and animal model studies | Microwire protrusion (~4 mm) for optimal unit isolation; Sampling rates ≥2000 Hz for ripple detection [15] |

| Oscillation Detection Software | Custom MATLAB/Python toolboxes; Commercial sleep scoring software (e.g., Somnolyzer); Open-source packages (e.g., FieldTrip) | Automated detection of SOs, spindles, ripples; Cross-frequency coupling analysis | Validation against manual scoring; Adaptation to specific recording modalities (scalp vs. intracranial) |

| Neuromodulation Devices | Transcranial alternating current stimulation (tACS); Transcranial magnetic stimulation (TMS); Deep brain stimulation (DBS) systems | Closed-loop modulation of sleep oscillations; Causal interrogation of oscillation-function relationships | Precision timing relative to oscillation phases; Safety protocols for sleep stimulation |

| Molecular Biology Reagents | Antibodies for immediate-early genes (c-Fos, Arc); Synaptic plasticity markers (PSD-95, GluR1); In situ hybridization kits | Mapping neuronal activation patterns; Assessing synaptic changes following oscillation manipulation | Tissue collection timepoints relative to sleep manipulations; Specificity for activated cell populations |

| Behavioral Testing Apparatus | Virtual water maze environments; Object location/recognition tasks; Associative memory paradigms | Assessment of spatial, episodic, and declarative memory; Linking oscillation metrics to behavior | Counterbalancing of test versions; Sensitive measures of memory precision |

| Computational Modeling Tools | Spiking neural network models; Phase-amplitude coupling algorithms; Signal processing toolboxes | Theoretical testing of oscillation mechanisms; Developing detection algorithms | Biological plausibility of model parameters; Integration of multi-scale data |

Application Notes for Closed-Loop Interfaces

Protocol for Closed-Loop Modulation of SO-Spindle Coupling

System Configuration:

- Real-time EEG processing platform with low-latency signal analysis (<100 ms delay)

- Stimulation interface capable of time-locked transcranial alternating current stimulation (tACS)

- Custom algorithms for SO phase detection and spindle identification

- Safety monitoring for prolonged sleep stimulation sessions
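The SO phase detection requirement can be illustrated with a streaming trough detector that schedules a stimulus onset a fixed delay after each detected trough, aiming at the following up-state. This is a sketch under loudly stated assumptions: the 0.5-2 Hz band, the -40 µV threshold, the 0.5 s trough-to-up-state delay, and the 1 s refractory period are hypothetical values, and the class name `SOUpStateTrigger` is invented for illustration. Because the filter is causal, its phase lag at the SO frequency would have to be measured and compensated on real hardware, in addition to the platform's own latency budget (<100 ms).

```python
import numpy as np
from scipy.signal import butter, sosfilt


class SOUpStateTrigger:
    """Streaming SO detector: trough below threshold -> schedule an up-state stimulus."""

    def __init__(self, fs, threshold_uv=-40.0, delay_s=0.5, refractory_s=1.0, band=(0.5, 2.0)):
        self.sos = butter(2, band, btype="bandpass", fs=fs, output="sos")
        self.zi = np.zeros((self.sos.shape[0], 2))  # causal filter state carried across chunks
        self.threshold = threshold_uv
        self.delay = int(round(delay_s * fs))
        self.refractory = int(round(refractory_s * fs))
        self.n = 0                      # absolute index of the next input sample
        self.prev = 0.0
        self.armed = True               # re-armed once the signal leaves the trough region
        self.last_trigger = -self.refractory
        self.pending = []               # scheduled stimulus onsets (sample indices)

    def process(self, chunk):
        """Causally filter one chunk; return stimulus onsets that fall inside it."""
        y, self.zi = sosfilt(self.sos, chunk, zi=self.zi)
        due = []
        for v in y:
            if v >= self.threshold:
                self.armed = True
            elif self.armed and v > self.prev:
                # First rising sample below threshold: the SO trough has just passed.
                self.armed = False
                if self.n - self.last_trigger >= self.refractory:
                    self.pending.append(self.n + self.delay)
                    self.last_trigger = self.n
            while self.pending and self.pending[0] <= self.n:
                due.append(self.pending.pop(0))
            self.prev = float(v)
            self.n += 1
        return due
```

Feeding amplifier chunks to `process()` yields the sample indices at which a spindle-frequency burst (or an acoustic click) would be released; the stimulator interface itself is outside the scope of this sketch.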

Stimulation Parameters:

- SO-frequency tACS (0.75 Hz) applied during deep NREM sleep

- Spindle-frequency bursts (12-15 Hz) timed to SO up-states

- Stimulation intensity below arousal threshold (typically 0.5-1.5 mA)

- Bipolar montage targeting fronto-central regions

Validation Metrics:

- Pre-post changes in endogenous SO-spindle coupling strength

- Enhancement of overnight memory retention (% change from baseline)

- Absence of sleep architecture disruption or awakenings
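One way to quantify "SO-spindle coupling strength" for the pre-post comparison is a normalized mean vector length (MVL) of spindle-band amplitude over SO phase. Note that this is a continuous phase-amplitude coupling measure swapped in for illustration; many studies instead compute SO phase at detected spindle peaks. The SO band (0.5-1.25 Hz), spindle band (12-16 Hz), and filter order are assumptions.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt, hilbert


def _bandpass(x, fs, lo, hi):
    sos = butter(3, (lo, hi), btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, x)  # zero-phase, so SO phase is not shifted


def so_spindle_coupling(x, fs, so_band=(0.5, 1.25), sp_band=(12.0, 16.0)):
    """Normalized mean vector length of spindle amplitude over SO phase.

    Returns (strength in [0, 1], preferred SO phase in radians).
    """
    phase = np.angle(hilbert(_bandpass(x, fs, *so_band)))
    amp = np.abs(hilbert(_bandpass(x, fs, *sp_band)))
    vec = np.mean(amp * np.exp(1j * phase))
    return np.abs(vec) / np.mean(amp), np.angle(vec)
```

The pre-post metric would then be, for example, `so_spindle_coupling(post, fs)[0] - so_spindle_coupling(pre, fs)[0]`, computed on matched NREM epochs from each night.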

Pharmacological Intervention Protocol Targeting Oscillation Coupling

Compound Selection Criteria:

- Agents with known modulatory effects on sleep oscillations (e.g., benzodiazepines, z-drugs, NMDA antagonists)

- Dose-response characterization for oscillation-specific effects

- Consideration of receptor specificity and pharmacokinetic profiles

Administration Protocol:

- Pre-sleep administration timed to peak plasma concentrations during early NREM sleep

- Placebo-controlled, crossover design with counterbalanced conditions

- Polysomnographic monitoring with high-density EEG

- Memory testing pre-sleep and post-sleep

Outcome Measures:

- Quantitative changes in SO, spindle, and ripple characteristics

- Alterations in cross-frequency coupling metrics

- Correlation between oscillation changes and memory performance effects

The development of closed-loop interfaces that can detect and modulate these physiological targets in real time represents a promising frontier for cognitive neuroscience and therapeutic interventions. As research advances, the precise temporal coordination of SOs, spindles, and ripples offers compelling targets for enhancing memory function and counteracting memory decline in neurological disorders [9].

Closed-loop Brain-Computer Interfaces (BCIs) represent a transformative class of neurotechnology that enables direct, bidirectional communication between the brain and an external computing system [9]. Unlike open-loop systems, which either only record neural activity or deliver stimulation on a fixed schedule regardless of ongoing brain state, closed-loop architectures both decode neural signals and deliver feedback through neural stimulation in real time, creating an adaptive circuit for intervention [17]. In memory triggering research, these systems can detect neural states associated with memory encoding or retrieval and respond with immediate, precisely timed neuromodulation to influence cognitive outcomes [17] [18]. Their ability to intervene at specific neurophysiological moments (for instance, by rescuing poor memory encoding states) makes them powerful tools for basic scientific investigation and for potential therapeutic applications in conditions such as Alzheimer's disease and related dementias [9] [19]. This document details the standard components, experimental protocols, and reagent solutions essential for implementing such systems in memory research.

Standardized Component Architecture

A typical closed-loop BCI system operates through five sequential stages, each performing a distinct computational function. The system's core architecture is visualized below, illustrating the data flow and key processes at each stage.

Figure 1: Closed-Loop BCI System Architecture and Data Flow. The diagram illustrates the five core stages of signal processing and the closed-loop feedback pathway that enables real-time intervention.
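The five-stage data flow can be made concrete with a skeleton in which each stage is one function. Everything here is a placeholder under stated assumptions: `acquire()` returns synthetic noise instead of amplifier data, the 500 Hz sampling rate, 1 Hz high-pass, theta-power feature, and logistic weights are hypothetical, and the causal filter restarts on every window (its state is not carried over) for brevity.

```python
import numpy as np
from scipy.signal import butter, sosfilt, welch

FS = 500  # Hz; assumed sampling rate for this sketch


def acquire(rng, n_channels=4, seconds=1.0):
    """Stage 1 (stand-in): one window of synthetic multichannel data."""
    return rng.standard_normal((n_channels, int(FS * seconds)))


def preprocess(x):
    """Stage 2: causal 1 Hz high-pass, then common-average re-reference."""
    sos = butter(2, 1.0, btype="highpass", fs=FS, output="sos")
    y = sosfilt(sos, x, axis=-1)
    return y - y.mean(axis=0, keepdims=True)


def extract(x):
    """Stage 3: log theta (4-8 Hz) power per channel via Welch's method."""
    freqs, psd = welch(x, fs=FS, nperseg=FS // 2, axis=-1)
    band = (freqs >= 4) & (freqs <= 8)
    return np.log(psd[:, band].mean(axis=-1))


def classify(features, weights, bias):
    """Stage 4: logistic read-out of P(successful encoding)."""
    return float(1.0 / (1.0 + np.exp(-(features @ weights + bias))))


def feedback(prob, threshold=0.5):
    """Stage 5: request stimulation when predicted encoding success is low."""
    return prob < threshold


def run_once(rng, weights, bias):
    """One pass: acquire -> preprocess -> extract -> classify -> feedback."""
    prob = classify(extract(preprocess(acquire(rng))), weights, bias)
    return prob, feedback(prob)
```

In a deployed system, `acquire()` would read from the amplifier buffer and a `True` from `feedback()` would be routed to the stimulator, closing the loop.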

Component 1: Signal Acquisition

The signal acquisition stage forms the physical interface with the neural system, responsible for capturing electrophysiological or hemodynamic activity with appropriate spatial and temporal resolution [20] [21]. The choice of acquisition modality represents a critical trade-off between signal quality, invasiveness, and practical applicability, which is particularly important in memory studies that require precise localization of hippocampal and cortical interactions [19] [18].

Table 1: Neural Signal Acquisition Modalities for Memory Research

| Modality | Spatial Resolution | Temporal Resolution | Invasiveness | Key Applications in Memory Research |

|---|---|---|---|---|

| Electroencephalography (EEG) | Low (cm) | High (ms) | Non-invasive | Monitoring sleep rhythms (SO, spindles) for memory consolidation [18] |

| Electrocorticography (ECoG) | Medium (mm) | High (ms) | Invasive (subdural) | Mapping cortical memory networks with higher fidelity than EEG [19] |

| Intracranial EEG (iEEG; depth/sEEG) | High (mm; μm with microwires) | High (ms) | Invasive (intraparenchymal) | Detecting hippocampal ripples and precise neural firing patterns [17] |

| Functional Near-Infrared Spectroscopy (fNIRS) | Low (cm) | Low (s) | Non-invasive | Monitoring hemodynamic changes in prefrontal cortex during memory tasks [20] |

| Magnetoencephalography (MEG) | Medium (mm) | High (ms) | Non-invasive | Localizing synchronous neural activity during memory retrieval [21] |

Component 2: Preprocessing & Signal Enhancement

The preprocessing stage addresses the fundamental challenge of low signal-to-noise ratio (SNR) inherent in neural signals, particularly in non-invasive recordings [9] [20]. This stage applies computational techniques to isolate neural signals of interest from various biological and environmental artifacts, which is essential for subsequent feature extraction and classification stages [20] [21].

Table 2: Standard Preprocessing Techniques for Neural Signals

| Technique | Method Principle | Primary Application | Advantages | Limitations |

|---|---|---|---|---|

| Temporal Filtering | Selectively passes signals in specific frequency bands | Removing slow drifts (high-pass) and line noise (notch/band-stop) | Computationally efficient, preserves temporal structure | May eliminate physiologically relevant signals [20] |

| Independent Component Analysis (ICA) | Blind source separation into statistically independent components | Removing ocular, cardiac, and muscle artifacts | Effective for separating mixed sources without reference signals | Often requires manual component inspection; sensitive to the amount of data [20] |

| Wavelet Transform | Time-frequency decomposition using wavelet functions | Non-stationary signal denoising and artifact removal | Captures transient features in both time and frequency domains | Complex implementation, basis function selection critical [20] |

| Canonical Correlation Analysis (CCA) | Maximizes correlation between multivariate signal sets | Removing EMG and other correlated artifacts | Multivariate approach effective for structured noise | Assumes linear relationships between variables [20] |
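The temporal-filtering row can be illustrated with a high-pass filter for slow drift followed by notch filters at the line frequency and its harmonics. This is a minimal offline sketch: the 0.5 Hz cutoff, quality factor of 30, and 60 Hz line frequency (50 Hz in many countries) are assumptions, and zero-phase filtering (`filtfilt`) is only appropriate for offline analysis, not for a real-time loop.

```python
import numpy as np
from scipy.signal import butter, iirnotch, sosfiltfilt, filtfilt


def clean_channel(x, fs, hp_cutoff=0.5, line_freq=60.0, n_harmonics=2, q=30.0):
    """Offline zero-phase high-pass for slow drift plus notch filters for line noise."""
    sos = butter(4, hp_cutoff, btype="highpass", fs=fs, output="sos")
    y = sosfiltfilt(sos, x)
    for k in range(1, n_harmonics + 1):
        # Narrow band-stop centered on the k-th harmonic of the mains frequency.
        b, a = iirnotch(k * line_freq, q, fs=fs)
        y = filtfilt(b, a, y)
    return y
```

A notch removes a narrow band around the mains frequency while leaving neighboring gamma activity largely intact, which a low-pass filter below 60 Hz could not do.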

Component 3: Feature Extraction

Feature extraction transforms preprocessed neural signals into discriminative numerical representations that characterize cognitive states relevant to memory processes [9] [21]. This dimensionality reduction step identifies informative patterns while reducing computational complexity for subsequent classification [22].

For memory research, particularly informative features include:

- Spectral Power Features: Band-limited power in frequency bands crucial for memory (theta: 4-8 Hz, gamma: 30-100+ Hz) [17]

- Cross-Frequency Coupling: Phase-amplitude coupling between low and high frequencies (e.g., theta-gamma coupling) [18]

- Functional Connectivity: Statistical dependencies between neural signals recorded from different brain regions [19]

- Event-Related Potentials/Synchronization: Time-locked responses to specific stimuli or cognitive events [22]

High-frequency activity (HFA, 70-200 Hz) has been identified as a particularly reliable feature predicting memory success, as it reflects localized neural ensemble firing critical for memory encoding processes [17].
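Spectral power features of this kind are typically computed per channel and per band from each encoding epoch. The sketch below uses Welch's method with 0.5 s segments; the band edges follow the text above, while the segment length and the log10 transform are assumptions. HFA up to 200 Hz requires a sampling rate above 400 Hz.

```python
import numpy as np
from scipy.signal import welch

BANDS = {"theta": (4, 8), "gamma": (30, 100), "hfa": (70, 200)}


def band_power_features(epoch, fs, bands=BANDS):
    """Log10 mean Welch power per channel and band.

    epoch: (n_channels, n_samples). Returns (n_channels, n_bands).
    """
    nperseg = min(epoch.shape[-1], int(fs // 2))  # 0.5 s segments -> 2 Hz resolution
    freqs, psd = welch(epoch, fs=fs, nperseg=nperseg, axis=-1)
    feats = []
    for lo, hi in bands.values():
        mask = (freqs >= lo) & (freqs <= hi)
        feats.append(np.log10(psd[:, mask].mean(axis=-1)))
    return np.stack(feats, axis=1)
```

Flattening the returned array gives one feature vector per encoding event, which is the input the classifiers in the next stage expect.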

Component 4: Classification & Translation

The classification stage translates extracted features into meaningful cognitive state predictions or control commands using machine learning algorithms [9] [23]. For memory triggering applications, this typically involves binary or multiclass classification to distinguish between neural states associated with successful versus unsuccessful memory encoding or retrieval [17].

Table 3: Classification Algorithms for Memory State Decoding

| Algorithm | Model Type | Key Advantages | Limitations | Reported Performance in Memory Studies |

|---|---|---|---|---|

| Support Vector Machine (SVM) | Linear/Non-linear | Effective in high-dimensional spaces, robust to overfitting | Limited performance on very large datasets | AUC = 0.61 for recall probability prediction [17] |

| Convolutional Neural Network (CNN) | Deep Learning | Automatic feature learning, spatial pattern recognition | Computationally intensive, requires large datasets | Improved signal classification and feature extraction [9] |

| Long Short-Term Memory (LSTM) | Deep Learning (Recurrent) | Models temporal dependencies in sequential data | Complex training, potential vanishing gradients | Enhanced prediction of memory states over time [19] |

| Linear Discriminant Analysis (LDA) | Linear | Simple, fast, works well with limited data | Assumes normal distribution and equal variances | Widely used in motor-related BCI applications [19] |

Modern approaches increasingly use transfer learning to address the challenge of high variability in neural signals between individuals and across sessions, enhancing system adaptability while reducing required calibration time [9].

Component 5: Feedback & Stimulation

The feedback component completes the closed loop by delivering precisely timed neuromodulation based on classified neural states [17] [18]. For memory research, this typically involves electrical, magnetic, or acoustic stimulation triggered when the system detects neural patterns associated with suboptimal memory function.

The timing, location, and parameters of stimulation are critical determinants of efficacy. A seminal study demonstrated that closed-loop stimulation of the lateral temporal cortex during periods of poor memory encoding (as classified by the system) successfully rescued memory function, increasing recall probability by approximately 15% [17]. Similarly, phase-locked acoustic stimulation during specific phases of slow oscillations in sleep has been shown to enhance memory consolidation in animal models [18].

Experimental Protocol: Closed-Loop Memory Intervention

This section provides a detailed methodology for implementing a closed-loop BCI system to enhance memory encoding, based on validated experimental approaches [17] [18].

System Setup and Calibration Phase

Objective: To train a subject-specific classifier that predicts memory encoding success from neural features.

Materials:

- Intracranial EEG (iEEG) or high-density EEG recording system

- Stimulation system (electrical or acoustic, depending on design)

- Computing system running real-time processing software (e.g., BCI2000, OpenViBE)

- Delayed free recall task presentation software

Procedure:

- Record-Only Sessions: Conduct at least three sessions of a delayed free recall memory task without any stimulation.

  - Present words (typically 20-40 per list) for encoding, each for 2-3 seconds.

  - Record neural activity throughout encoding, distractor, and recall periods.

  - Collect behavioral data (successfully recalled words) for ground truth labeling.

- Feature Labeling: Label each word's encoding period as "successful" or "unsuccessful" based on whether the word is subsequently recalled.

- Classifier Training: Train a penalized logistic regression classifier or SVM to discriminate neural patterns during successful versus unsuccessful encoding.

  - Use 5-fold cross-validation to assess classifier performance.

  - Aim for a minimum AUC of 0.60 for reliable prediction [17].

- Model Validation: Validate classifier generalization on held-out data from the same subject.
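The classifier-training step can be sketched with scikit-learn. This assumes features have already been extracted per word (rows of `X`) with recall labels `y`; the regularization strength `C=1.0` and the standardization step are assumptions, and `train_encoding_classifier` is a hypothetical helper name. `LogisticRegression` applies an L2 penalty by default.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def train_encoding_classifier(X, y, C=1.0, seed=0):
    """Penalized (default L2) logistic regression; returns (fitted model, mean 5-fold AUC).

    X: (n_words, n_features) encoding-period features; y: 1 = later recalled.
    """
    model = make_pipeline(StandardScaler(), LogisticRegression(C=C, max_iter=1000))
    cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=seed)
    auc = cross_val_score(model, X, y, cv=cv, scoring="roc_auc").mean()
    model.fit(X, y)  # refit on all record-only data for closed-loop use
    return model, auc
```

A session would proceed to closed-loop use only if the returned AUC meets the 0.60 criterion. When several record-only sessions exist, grouping folds by session (e.g., with scikit-learn's `GroupKFold`) gives a stricter estimate of generalization to new sessions than shuffled folds.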

Closed-Loop Intervention Phase

Objective: To use the trained classifier for real-time detection of poor encoding states and trigger corrective stimulation.

Procedure:

- Real-Time Probability Estimation: During subsequent memory task sessions, apply the trained classifier to neural data recorded during word encoding to generate a continuous estimate of the probability of subsequent recall.

- Stimulation Triggering: When the predicted recall probability falls below a set threshold (e.g., 0.5) during encoding, deliver a brief stimulation train (e.g., 500 ms at 0.5-2.0 mA) to the target region, such as the lateral temporal cortex [17].

- Control Condition: Interleave "NoStim" lists in which the classifier runs but no stimulation is delivered, to control for behavioral effects of stimulation.

- Performance Assessment: Compare recall rates for stimulated items versus matched non-stimulated items using generalized linear mixed-effects models to account for within-subject variability.
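The triggering rule and the stimulated-versus-matched comparison can be sketched as follows. Here the matched controls are assumed to be items on NoStim lists whose predicted recall probability also fell below threshold (items that would have been stimulated); the function names and the `"stim"`/`"nostim"` labels are hypothetical. The sketch only computes descriptive recall rates, not the generalized linear mixed-effects model the protocol prescribes.

```python
import numpy as np


def stim_decision(prob, list_type, threshold=0.5):
    """Stimulate only on stimulation lists when predicted recall is below threshold."""
    return list_type == "stim" and prob < threshold


def matched_recall_rates(prob, list_type, recalled, threshold=0.5):
    """Recall of stimulated items vs. NoStim items the classifier would have flagged."""
    prob, list_type, recalled = map(np.asarray, (prob, list_type, recalled))
    low = prob < threshold
    stim = low & (list_type == "stim")
    control = low & (list_type == "nostim")
    return recalled[stim].mean(), recalled[control].mean()
```

For example, with predicted probabilities `[0.3, 0.4, 0.7, 0.2, 0.45, 0.8]`, list types `["stim"] * 3 + ["nostim"] * 3`, and recall outcomes `[1, 0, 1, 0, 0, 1]`, the stimulated items recall at 0.5 and the matched controls at 0.0.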

The experimental workflow for this protocol is illustrated below, showing both calibration and intervention phases.

Figure 2: Experimental Protocol for Closed-Loop Memory Intervention. The workflow shows the sequential calibration and intervention phases, highlighting the transition from model training to real-time application.

The Scientist's Toolkit: Research Reagent Solutions

Implementing a closed-loop BCI system for memory research requires specialized hardware, software, and analytical tools. The following table details essential research reagents and their applications.

Table 4: Essential Research Reagents for Closed-Loop Memory BCI Systems

| Category | Specific Solution | Function | Example Applications | Key Considerations |

|---|---|---|---|---|

| Recording Hardware | Intracranial EEG (iEEG) systems | High-resolution neural signal acquisition from the cortical surface or deep brain structures | Mapping memory networks in epilepsy patients [17] | Surgical implantation required; highest signal quality |

| | High-density EEG (64-256 channels) | Non-invasive scalp recording of electrical activity | Sleep monitoring, memory encoding studies [20] | Lower spatial resolution but clinically accessible |

| Stimulation Devices | Bipolar cortical stimulator | Delivering targeted electrical stimulation to specific regions | Rescuing poor memory encoding states [17] | Current parameters critical (0.5-2.0 mA, 500 ms) |

| | Transcranial Direct Current Stimulation (tDCS) | Non-invasive neuromodulation via weak electrical currents | Enhancing memory consolidation during sleep [18] | Less focal than invasive approaches |

| Software Platforms | BCI2000, OpenViBE | General-purpose BCI software platforms | Real-time signal processing and stimulus presentation [21] | Support multiple acquisition systems and paradigms |

| | LFADS (Latent Factor Analysis via Dynamical Systems) | Neural population dynamics modeling | Stabilizing decoding over long periods [23] | Handles neural non-stationarities |

| Analytical Tools | Custom MATLAB/Python scripts | Feature extraction and machine learning | Spectral analysis, classifier implementation [20] | Flexible but requires programming expertise |

| | FieldTrip, MNE-Python | Open-source EEG/MEG analysis toolboxes | Preprocessing, connectivity analysis [21] | Community-supported, extensive documentation |

The standardized five-component architecture of closed-loop BCIs—encompassing signal acquisition, preprocessing, feature extraction, classification, and feedback—provides a powerful framework for memory triggering research. By implementing the detailed experimental protocols and utilizing the appropriate research reagents outlined in this document, researchers can develop robust systems capable of detecting specific memory-related neural states and delivering precisely timed interventions to modulate cognitive function. Future advancements in neural signal processing, particularly through deep learning and stabilization algorithms like NoMAD [23], alongside the development of more biocompatible interfaces [19], promise to enhance the stability, performance, and clinical applicability of these systems for treating memory disorders.

The development of effective closed-loop interfaces for memory triggering hinges on precise monitoring of the neural correlates of memory processes. Electrophysiological recording techniques provide the millisecond temporal resolution necessary to track the rapid neural dynamics that underpin memory encoding, consolidation, and retrieval. Among these techniques, practitioners must choose between invasive intracranial EEG (iEEG) methods, such as electrocorticography (ECoG) and stereotactic EEG (sEEG), which offer high-fidelity signals recorded directly from the brain, and non-invasive scalp EEG, which provides a more accessible but attenuated measure of cortical activity. Understanding the capabilities, limitations, and appropriate application contexts of each modality is fundamental to designing interventions, particularly those aiming to modulate memory processes in real time. This document provides a structured comparison of these modalities and details experimental protocols for their use in memory research focused on closed-loop applications.

Intracranial EEG (iEEG) is an umbrella term that includes both ECoG (using subdural grid or strip electrodes) and stereotactic EEG (sEEG; using depth electrodes). These methods record electrical activity directly from the cortical surface or from deep brain structures, offering exceptional spatial and temporal resolution [24]. In contrast, scalp EEG records brain activity from electrodes on the scalp, providing a blurred summary of large-scale neural populations but remaining entirely non-invasive [25] [26]. The selection of a monitoring approach involves critical trade-offs between signal quality, spatial specificity, clinical risk, and accessibility, which this application note will explore in detail.

Technology Comparison and Selection Guidelines

The choice between invasive and non-invasive monitoring approaches requires a careful consideration of technical specifications and practical constraints. The following tables summarize the key characteristics of each modality to guide researchers in selecting the appropriate technology for their specific memory monitoring and intervention goals.

Table 1: Technical and Performance Specifications for EEG Modalities in Memory Research

| Feature | Scalp EEG (Non-Invasive) | iEEG/ECoG (Invasive) |

|---|---|---|

| Spatial Resolution | Limited (centimeter-scale); suffers from volume conduction [25] | High (millimeter-scale); direct neural recording [24] |

| Temporal Resolution | Excellent (millisecond range) [25] | Excellent (millisecond range) [24] |

| High-Frequency Signal Capture | Limited; signals attenuated, confounded by muscle artifact [26] [27] | Excellent; can reliably record gamma (>60 Hz) and high-frequency oscillations [27] [28] |

| Typical Coverage | Whole cortex (lateral and medial areas inferred) | Focal; determined by clinical need, often temporal/frontal lobes [24] |

| Key Memory Signal: Theta (3-8 Hz) | Detects power decreases during successful encoding [26] [27] | Detects both power increases (e.g., frontal) and decreases (e.g., broad cortical) [26] [27] |

| Key Memory Signal: Gamma (30-100+ Hz) | Can detect power increases, though with lower signal-to-noise ratio [26] [27] | Robust power increases strongly linked to successful memory encoding [27] [28] |

Table 2: Practical Considerations and Clinical Utility for EEG Modalities

| Consideration | Scalp EEG (Non-Invasive) | iEEG/ECoG (Invasive) |

|---|---|---|

| Invasiveness & Risk | Non-invasive; minimal risk | Invasive surgery carries risk of bleeding, infection [24] |

| Participant Population | Healthy volunteers and patients | Almost exclusively epilepsy patients [24] [26] |

| Data Accessibility | Highly accessible; suitable for large-N studies | Limited accessibility; few specialized centers [24] |

| Recording Environment | Controlled lab setting | Clinical hospital setting; suboptimal for cognitive testing [24] [28] |

| Chronic Ambulatory Potential | High with portable systems | Limited to short-term (days-weeks) except for chronic implants like RNS System [28] |

| Pathology Confound | Not applicable in healthy controls | Findings may be influenced by epileptic pathology and medications [24] |

| Best Suited for Closed-Loop Use | Proof-of-concept studies in healthy populations; biomarker identification | Focal, high-fidelity intervention; validation of neural signatures |

The following workflow diagram illustrates the decision-making process for selecting the appropriate electrophysiological modality based on research objectives and practical constraints.

Experimental Protocols for Memory Monitoring

Protocol 1: Associative Memory Task with Invasive Hippocampal Recording

This protocol is designed to capture the neural correlates of successful memory encoding, specifically targeting hippocampal gamma oscillations, using invasive recordings in either a traditional surgical iEEG or chronic ambulatory iEEG setting [28].

1. Objective: To quantify changes in hippocampal oscillatory power (particularly gamma band) that predict successful formation of associative memories.

2. Materials:

- Stimuli: A bank of 240+ color images of faces with neutral expression (e.g., from the Chicago Face Database). Each face is randomly paired with a single-word, emotionally neutral profession [28].

- Recording System: For surgical iEEG: Standard clinical intracranial recording system. For chronic iEEG: RNS System (NeuroPace, Inc.) with Research Accessories (RAs) for task synchronization [28].

- Environment: Surgical iEEG: Hospital room. Chronic iEEG: Quiet lab or ambulatory setting.

3. Procedure:

- Encoding Phase: Participants are shown a series of face-profession pairs. Each stimulus is presented for 5000 ms with a 1000 ms inter-stimulus interval. Participants are instructed to read the profession aloud to ensure attention and to make a mental association [28].

- Distraction Task: A brief (e.g., 2-minute) arithmetic task is administered immediately after the encoding block to prevent rehearsal.

- Cued Recall Phase: Faces from the encoding phase are presented in a randomized order without the profession. Participants are given a limited time to verbally recall the associated profession. Responses are audio-recorded for offline scoring.

- Task Calibration: The number of stimuli per block (e.g., 2-20) should be adjusted based on individual patient performance in a practice set to maximize statistical power and avoid floor/ceiling effects [28].

4. Data Analysis:

- Epoch Segmentation: Extract iEEG epochs time-locked to the onset of each face-profession stimulus during encoding.

- Trial Sorting: Separate encoding epochs into two groups based on subsequent memory performance: "Remembered" (correctly recalled in cued recall) and "Forgotten" (incorrectly recalled or missed).

- Spectral Analysis: Compute time-frequency representations for each epoch. Focus on the gamma band (e.g., 60-100 Hz) and theta band (3-8 Hz).

- Statistical Comparison: Compare spectral power between "Remembered" and "Forgotten" trials across the patient cohort. Successful encoding is typically associated with sustained increases in hippocampal gamma power approximately 1.3-1.6 seconds post-stimulus onset [28].
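For a single hippocampal contact, the remembered-versus-forgotten gamma comparison in the 1.3-1.6 s window can be sketched with a trial-label permutation test. The band (60-100 Hz) and window follow the protocol; the Hilbert-based power estimate, the log transform, the number of permutations, and the function name `gamma_sme` are assumptions, and the cohort-level statistics described above would aggregate these per-contact effects across patients.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt, hilbert


def gamma_sme(epochs, remembered, fs, t0=0.0, band=(60, 100), win=(1.3, 1.6),
              n_perm=1000, seed=0):
    """Remembered-minus-forgotten gamma power in a post-stimulus window.

    epochs: (n_trials, n_samples), first sample at time t0 (s) relative to onset.
    Returns (observed difference in mean log power, two-sided permutation p).
    """
    sos = butter(4, band, btype="bandpass", fs=fs, output="sos")
    power = np.abs(hilbert(sosfiltfilt(sos, epochs, axis=-1), axis=-1)) ** 2
    times = t0 + np.arange(epochs.shape[-1]) / fs
    in_win = (times >= win[0]) & (times <= win[1])
    trial_pow = np.log(power[:, in_win].mean(axis=-1))  # one value per trial
    labels = np.asarray(remembered, dtype=bool)
    obs = trial_pow[labels].mean() - trial_pow[~labels].mean()
    rng = np.random.default_rng(seed)
    null = np.empty(n_perm)
    for i in range(n_perm):
        perm = rng.permutation(labels)  # shuffle remembered/forgotten labels
        null[i] = trial_pow[perm].mean() - trial_pow[~perm].mean()
    p = (np.sum(np.abs(null) >= abs(obs)) + 1) / (n_perm + 1)
    return obs, p
```

Epochs should extend well beyond the analysis window (here, for example, from -0.5 s to 2.0 s) so that filter edge effects do not contaminate the 1.3-1.6 s estimate.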

Protocol 2: Subsequent Memory Effect with Scalp EEG

This protocol adapts the subsequent memory paradigm for non-invasive scalp EEG, allowing for the investigation of cortical spectral correlates of memory formation in healthy participants or larger patient cohorts [26] [27].

1. Objective: To identify scalp-measured oscillatory correlates (theta and gamma) of successful memory encoding using a free-recall paradigm.

2. Materials:

- Stimuli: A large pool of common nouns (e.g., >1000 words). Words should be concrete, high-frequency nouns.

- Recording System: High-density scalp EEG system (e.g., 64+ channels). Geodesic Sensor Nets are suitable for uniform coverage [26].

- Environment: Electrically shielded, sound-attenuated room.

3. Procedure:

- Encoding Phase: Participants are presented with a series of words (e.g., 15-20 words per list). Each word is displayed for 1600-3000 ms, followed by a jittered inter-stimulus interval of 800-1200 ms. Participants may perform an incidental encoding task (e.g., animacy judgment) or simply try to remember the words [26] [27].

- Distraction Phase: Following the final word of the list, participants engage in a distractor task for ~20-30 seconds (e.g., solving sequential arithmetic problems) to minimize recency effects [26].

- Free Recall Phase: Participants are given 45-75 seconds to verbally recall as many words from the list as possible, in any order. Vocalizations are recorded and later scored manually.

4. Data Analysis:

- Preprocessing: Standard EEG preprocessing including filtering, bad channel removal, re-referencing (e.g., to linked mastoids), and Independent Component Analysis (ICA) for artifact removal (e.g., eye blinks, muscle activity).

- Epoching & Sorting: Segment data from -1000 ms to +2000 ms around each word onset during encoding. Sort trials into "Later Remembered" and "Later Forgotten" based on free recall performance.

- Spectral Decomposition: Calculate event-related spectral perturbation (ERSP) for each channel and trial. Focus on theta (3-8 Hz) and gamma (e.g., 30-100 Hz) bands.

- Statistics: Use non-parametric cluster-based permutation tests to compare spectral power between conditions across time, frequency, and channels, correcting for multiple comparisons. Expect to find gamma power increases and theta power decreases for remembered items over frontal and parietal scalp regions [26] [27].
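The logic of a cluster-based permutation test can be sketched for one channel and one frequency band over time. FieldTrip and MNE-Python provide full implementations across channels, time, and frequency; this simplified version is only meant to show the steps: a parametric t threshold for forming clusters, cluster mass as the summed t values, and a max-cluster-mass null distribution built by shuffling trial labels. The function name, `alpha`, and `n_perm` defaults are assumptions.

```python
import numpy as np
from scipy.stats import ttest_ind, t as t_dist


def cluster_perm_test_1d(a, b, alpha=0.05, n_perm=500, seed=0):
    """Cluster-mass permutation test over time for two groups of trials.

    a, b: (n_trials, n_times) band power for later-remembered / later-forgotten items.
    Returns a list of (start, stop, mass, p) for each observed cluster.
    """
    data = np.vstack([a, b])
    n_a = a.shape[0]
    thresh = t_dist.ppf(1 - alpha / 2, data.shape[0] - 2)  # cluster-forming threshold

    def clusters(tvals):
        out = []
        for sign in (1, -1):  # positive and negative clusters separately
            mask = np.concatenate(([False], sign * tvals > thresh, [False]))
            edges = np.flatnonzero(np.diff(mask.astype(int)))
            for s, e in zip(edges[0::2], edges[1::2]):
                out.append((s, e, tvals[s:e].sum()))
        return out

    def tstat(x):
        return ttest_ind(x[:n_a], x[n_a:], axis=0).statistic

    observed = clusters(tstat(data))
    rng = np.random.default_rng(seed)
    null_max = np.zeros(n_perm)
    for i in range(n_perm):
        shuffled = data[rng.permutation(data.shape[0])]
        masses = [abs(m) for _, _, m in clusters(tstat(shuffled))]
        null_max[i] = max(masses, default=0.0)
    return [(s, e, m, (np.sum(null_max >= abs(m)) + 1) / (n_perm + 1))
            for s, e, m in observed]
```

Because each cluster is compared against the distribution of the largest cluster under label shuffling, the family-wise error rate is controlled across all time points tested.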

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of memory monitoring experiments requires a suite of reliable tools and resources. The following table catalogs key solutions for researchers in this field.

Table 3: Essential Research Reagents and Materials for EEG Memory Research

| Item / Solution | Function / Application | Example / Specification |

|---|---|---|

| High-Density EEG System | Non-invasive recording of scalp potentials with high spatial sampling. | 64-128 channel systems (e.g., EGI Geodesic systems, BrainAmp) [26] [29] |

| Intracranial Amplifiers | Recording of iEEG/ECoG signals in clinical settings. | Bio-Logic, Nicolet, Nihon Kohden systems (sampling rates: 256-2000 Hz) [26] |

| Chronic Ambulatory iEEG | Long-term, ambulatory intracranial monitoring for cognitive tasks. | RNS System (NeuroPace, Inc.) with Research Accessories for task synchronization [28] |

| Stimulus Presentation Software | Precise, time-locked presentation of experimental paradigms. | MATLAB with Psychophysics Toolbox, Presentation, E-Prime |

| Standardized Stimulus Sets | Consistent, validated visual or verbal stimuli for memory tasks. | Chicago Face Database [28], Penn Word Pools [26] |

| Quantitative Analysis Toolboxes | Open-source software for EEG preprocessing and feature extraction. | EEGLAB [25], FieldTrip, MNE-Python |

| Machine Learning Libraries | For building classifiers to decode memory states from neural data. | Scikit-learn (for standard ML), TensorFlow/PyTorch (for deep learning) [30] |

Signaling Pathways and Neural Workflows in Memory Encoding

The neural processes underlying successful memory encoding involve coordinated activity across specific frequency bands and brain regions. The following diagram illustrates the primary signaling pathways and their functional roles, synthesizing findings from both invasive and non-invasive studies.

Pathway Interpretation: The diagram depicts the established neurophysiological sequence leading to successful memory encoding. Sensory input triggers nearly simultaneous theta power decreases in broad neocortical regions and gamma power increases in local cortical circuits [26] [27]. The cortical theta decrease is thought to reflect a release from inhibition, enabling active information processing. This state, in turn, facilitates the crucial subsequent event: a sustained gamma power increase in the hippocampus around 1.3 to 1.6 seconds post-stimulus onset [28]. This sustained hippocampal gamma rhythm is a robust predictor of successful associative binding and is therefore a prime target for closed-loop memory intervention systems. The ultimate outcome of this coordinated cross-frequency interaction is the creation of a durable memory trace that can be accurately recalled later.
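The sketch below makes the closed-loop use of this signature concrete. It converts hippocampal gamma power into a per-trial feature and a stimulation decision. Trials whose window power falls well below a reference set, meaning they are predicted to be poorly encoded, are flagged as candidates for stimulation.

Every detail of the decision rule is an illustrative assumption:

- the 30-100 Hz band;
- log power z-scored against reference trials;
- the `z_thresh=-1.0` cutoff;
- the hypothetical names `gamma_window_power` and `should_stimulate`.

The filtering is zero-phase and therefore non-causal, so this is an offline prototype. A real-time system would need a causal filter applied to a buffer that ends at the 1.6 s mark. The sampling rate must also exceed 200 Hz so that the 100 Hz band edge lies below Nyquist.

```python
import numpy as np
from scipy.signal import butter, hilbert, sosfiltfilt


def gamma_window_power(trial, sfreq, band=(30.0, 100.0), window=(1.3, 1.6), t0=0.0):
    """Mean gamma-band envelope power in a post-stimulus window.

    trial: 1-D hippocampal signal whose first sample is at time t0 (s) relative to onset.
    Zero-phase filtering (sosfiltfilt) uses future samples: offline prototype only.
    """
    sos = butter(4, band, btype="bandpass", fs=sfreq, output="sos")
    envelope_power = np.abs(hilbert(sosfiltfilt(sos, trial))) ** 2
    times = t0 + np.arange(len(trial)) / sfreq
    in_window = (times >= window[0]) & (times <= window[1])
    return float(envelope_power[in_window].mean())


def should_stimulate(power, reference_powers, z_thresh=-1.0):
    """Hypothetical rule: stimulate when log gamma power is unusually low vs. reference trials."""
    ref = np.log(np.asarray(reference_powers, dtype=float))
    z = (np.log(power) - ref.mean()) / ref.std(ddof=1)
    return bool(z < z_thresh)
```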

The integration of invasive and non-invasive EEG methodologies provides a complementary toolkit for deconstructing the neural dynamics of human memory. Invasive iEEG/ECoG offers unmatched signal quality for validating specific neural signatures and developing high-precision interventions, particularly within medial temporal lobe structures. Scalp EEG, while spatially blurred, provides an accessible and powerful means to study cortical dynamics and translate findings to broader populations. The experimental protocols and analytical frameworks outlined here provide a foundation for research aimed at monitoring and modulating memory function.

The future of closed-loop interfaces for memory triggering lies in the intelligent fusion of these approaches. Promising directions include using scalp EEG to identify candidate participants or general brain states, followed by targeted invasive recording and stimulation. Furthermore, machine learning for real-time decoding of memory states from both iEEG and scalp EEG signals is a rapidly advancing frontier that could substantially improve the precision and efficacy of interventions [30]. As chronic, ambulatory iEEG systems become more integrated into research, they will enable longitudinal studies of memory function in real-world contexts, moving the field closer to viable therapeutic applications for memory disorders.

Engineering Memory Intervention: System Design, Stimulation Modalities, and Clinical Translation

The manipulation of memory processes is a central goal in neuroscience, with applications ranging from treating neurodegenerative diseases to enhancing cognitive function. This document provides application notes and detailed experimental protocols for three non-invasive stimulation modalities within the framework of closed-loop interfaces: transcranial Direct Current Stimulation (tDCS), Transcranial Magnetic Stimulation (TMS), and Targeted Memory Reactivation (TMR) via acoustic cues. A closed-loop system monitors neural activity in real time and delivers precisely timed stimulation to alter brain states, an approach that has been reported to improve outcomes. For instance, one closed-loop system produced a 40% improvement in new vocabulary learning compared with sham stimulation [31]. These technologies offer powerful tools for researchers investigating the mechanisms of memory encoding, consolidation, and retrieval.

The following tables consolidate key efficacy data and stimulation parameters from recent research to facilitate comparison and protocol design.

Table 1: Summary of Cognitive Efficacy from Clinical Studies

| Modality | Condition | Cognitive Outcome | Effect Size / Result | Citation |
|---|---|---|---|---|
| rTMS (on DLPFC) | Alzheimer's & Parkinson's | General Cognition (MoCA) | MD: 2.13, 95% CI [0.75, 3.52], p < 0.001 | [32] |
| rTMS (on DLPFC) | Alzheimer's & Parkinson's | General Cognition (MMSE) | MD: 1.16, 95% CI [0.91, 1.41], p = 0.0075 | [32] |
| rTMS | Depression | Working Memory & Attention | Significant improvement vs. HD-tDCS & antidepressants | [33] |
| HD-tDCS | Depression | Working Memory & Attention | Significant improvement vs. rTMS & antidepressants | [33] |
| tDCS + WMT | Schizophrenia | Working Memory (Training) | Significant improvement, gains partially sustained at 3-month follow-up | [34] |
| Acoustic TMR | Healthy Adults | Declarative Memory Consolidation | ~35% improvement in retention of cued information | [35] [31] |

Table 2: Typical Stimulation Parameters for Electric, Magnetic, and Acoustic Modalities

| Parameter | tDCS / HD-tDCS | rTMS | Acoustic TMR |
|---|---|---|---|
| Primary Target / Timing | Right DLPFC (e.g., F4) [34] | Dorsolateral Prefrontal Cortex (DLPFC) [32] | Delivered during Slow-Wave Sleep [35] [31] |
| Intensity | 2 mA [34] | Variable (device-dependent) | Low, non-arousing volume |
| Duration/Sessions | 10 sessions, 25 min/session [34] | Protocol-dependent (e.g., 10-30 sessions) | Cues delivered during SWS (e.g., at slow-oscillation peaks) |
| Key Mechanism | Modulates neuronal resting membrane potentials [34] | Alters cortical excitability & network connectivity [32] | Reactivates and strengthens memories [35] |
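The following worked dose example makes three assumptions:

- the 2 mA intensity and 25-minute duration from Table 2;
- the 35 cm² anode specified in the tDCS protocol below;
- the 15-second ramps can be ignored.

Under these assumptions, the anodal current density J and the charge density Q delivered per session are:

$$
J = \frac{I}{A} = \frac{2\ \mathrm{mA}}{35\ \mathrm{cm}^2} \approx 0.057\ \mathrm{mA/cm^2},
\qquad
Q = \frac{I\,t}{A} = \frac{2\ \mathrm{mA} \times 1500\ \mathrm{s}}{35\ \mathrm{cm}^2} \approx 85.7\ \mathrm{mC/cm^2}
$$

Current density and charge density are commonly reported quantities when comparing tDCS montages that use electrodes of different sizes.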

Experimental Protocols

tDCS-Augmented Working Memory Training

This protocol is adapted from a double-blind, sham-controlled RCT for individuals with schizophrenia, demonstrating efficacy in enhancing working memory [34].

- Objective: To assess the effects of anodal tDCS combined with adaptive working memory training (aWMT) on working memory performance and transfer to untrained cognitive domains.

- Subjects: Individuals meeting diagnostic criteria (e.g., for schizophrenia), right-handed, and stable on medication. Exclude individuals with a history of epilepsy, metallic head implants, or substance abuse.

- Materials: NeuroConn DC-Stimulator Plus (or equivalent), 35 cm² anode electrode, conductive paste, cathode electrode, computer with PsychoPy/Presentation software, cognitive assessment battery (e.g., BACS, spatial n-back).

- Procedure:

  - Baseline Assessment: Conduct clinical (PANSS, CDSS) and cognitive (BACS, spatial n-back) assessments.

  - Stimulation Setup:

    - Place the anode electrode over the right DLPFC (site F4 according to the 10-20 EEG system).

    - Place the cathode electrode on the left deltoid muscle.

    - Set stimulation to 2 mA with a 15-second ramp-up/down and a 25-minute total duration.

    - Use the device's integrated sham mode for the control group.

  - Concurrent Training & Stimulation:

    - Initiate stimulation 60 seconds before the aWMT task begins.

    - Participants complete a 22-minute adaptive spatial n-back task.

    - The task difficulty (n-back level) adapts to participant accuracy. Accuracy above 65% increases the level; accuracy of 40% or below decreases it.

    - Repeat for 10 sessions over two consecutive weeks.

  - Post-Testing & Follow-up: Re-administer cognitive and clinical assessments at post-training (3-4 days), one month, and three months.

- Outcome Measures: Primary: mean n-back level during training and d' (sensitivity index) on the spatial n-back transfer task. Secondary: changes in BACS composite score and clinical scales.
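The adaptive rule and the primary outcome measures can be sketched in Python. This sketch rests on three assumptions:

- Accuracy is evaluated per training block, because the protocol above does not state the update interval.
- The level never drops below 1-back.
- d' uses a log-linear correction (adding 0.5 to each count) so that perfect hit or false-alarm rates do not yield infinite z-scores. The original trial may have used a different correction.

The function names are hypothetical.

```python
from statistics import NormalDist


def next_n_level(current_n, block_accuracy, up=0.65, down=0.40, min_n=1):
    """Protocol rule: >65% correct raises the n-back level, <=40% lowers it (floor: min_n)."""
    if block_accuracy > up:
        return current_n + 1
    if block_accuracy <= down:
        return max(min_n, current_n - 1)
    return current_n


def d_prime(hits, misses, false_alarms, correct_rejections):
    """Sensitivity index d' = z(hit rate) - z(false-alarm rate).

    Log-linear correction (+0.5 per cell, an assumption) keeps rates away from 0 and 1,
    where the inverse normal CDF is infinite.
    """
    hit_rate = (hits + 0.5) / (hits + misses + 1)
    fa_rate = (false_alarms + 0.5) / (false_alarms + correct_rejections + 1)
    z = NormalDist().inv_cdf
    return z(hit_rate) - z(fa_rate)


# Usage: track the level across four training blocks, then take its mean.
levels, n = [], 1
for acc in [0.80, 0.70, 0.50, 0.30]:
    n = next_n_level(n, acc)
    levels.append(n)
mean_level = sum(levels) / len(levels)  # levels == [2, 3, 3, 2], mean_level == 2.5
```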

Acoustic Targeted Memory Reactivation (TMR) for Memory Consolidation

This protocol details the use of auditory cues during sleep to enhance declarative memory consolidation, based on studies showing ~35% improvement in retention [35] [31].

- Objective: To determine if presenting acoustic cues associated with learned material during slow-wave sleep enhances the consolidation of those memories.

- Subjects: Healthy adults, normal hearing, no sleep disorders.

- Materials: Sound-attenuated sleep lab or controlled environment, polysomnography (PSG) or consumer-grade EEG headband for sleep staging, computer with audio playback software, learning material (e.g., word-image pairs).

- Procedure:

  - Learning Phase (Evening):

    - Participants learn to associate auditory cues (e.g., unique sounds or spoken words) with specific information (e.g., images or vocabulary words).

    - Ensure high initial encoding performance (>75% correct recall).

  - Cueing Phase (During Sleep):

    - Monitor sleep online using PSG/EEG.

    - During periods of stable Slow-Wave Sleep (SWS/N3), deliver the auditory cues associated with a randomly selected subset of the learned material.

    - Play cues softly to avoid arousal.

    - The uncued material serves as a within-subject control.

  - Recall Test (Morning):

    - Upon awakening, test participants on all learned material (both cued and uncued) without prior warning.

    - Randomize the test order.


- Outcome Measures: The difference in recall accuracy between the cued and uncued memory items. A positive effect is indicated by significantly better recall for the cued items.
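Random assignment of cued items and the primary outcome can be expressed as a small Python sketch. It assumes a 50/50 split between cued and uncued items and scores recall as simple proportion correct. Many studies instead compare pre-sleep to post-sleep change for each item set. The helper names are hypothetical.

```python
import random


def assign_cued_items(items, fraction=0.5, seed=None):
    """Randomly split learned items into cued and uncued (within-subject control) sets."""
    rng = random.Random(seed)
    shuffled = list(items)
    rng.shuffle(shuffled)
    k = round(len(shuffled) * fraction)
    return set(shuffled[:k]), set(shuffled[k:])


def cueing_effect(recalled, cued, uncued):
    """Primary outcome: recall accuracy for cued items minus accuracy for uncued items."""
    cued_acc = sum(item in recalled for item in cued) / len(cued)
    uncued_acc = sum(item in recalled for item in uncued) / len(uncued)
    return cued_acc - uncued_acc


# Usage with 20 hypothetical word-image pairs.
pairs = [f"pair_{i:02d}" for i in range(20)]
cued, uncued = assign_cued_items(pairs, fraction=0.5, seed=42)
# ... cueing night, then the morning recall test yields a set of recalled items ...
# effect = cueing_effect(recalled_set, cued, uncued)  # > 0 favors cued items
```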

Diagram: Closed-Loop System for Acoustic TMR

The following diagram illustrates the workflow of a closed-loop system for acoustic Targeted Memory Reactivation.
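The closed-loop control logic can be sketched as a small state machine. This is a hypothetical illustration that assumes the following:

- An online scorer emits one stage label (for example `"N3"`) and one arousal flag per scoring epoch.
- Cueing starts only after a run of consecutive N3 epochs. Two epochs is an arbitrary choice here.
- Any arousal or stage change resets the run.
- One cue per epoch is played, cycling through a repeating list.

Real systems typically cue every few seconds rather than once per epoch, so this sketch is coarser than any real implementation.

```python
from itertools import cycle


class TMRController:
    """Hypothetical closed-loop cue scheduler fed by an online sleep scorer."""

    def __init__(self, cues, stable_epochs_required=2):
        self._cues = cycle(cues)        # replay the cue set in a fixed loop
        self.stable_epochs_required = stable_epochs_required
        self._n3_run = 0                # consecutive N3 epochs without arousal
        self.delivered = []

    def step(self, stage, arousal=False):
        """Process one scored epoch; return the cue to play, or None."""
        if arousal or stage != "N3":
            self._n3_run = 0            # left SWS or aroused: stop cueing
            return None
        self._n3_run += 1
        if self._n3_run < self.stable_epochs_required:
            return None                 # wait until N3 is stable
        cue = next(self._cues)
        self.delivered.append(cue)
        return cue


# Usage: simulated stream of (stage, arousal) pairs from an online scorer.
stream = [("N2", False), ("N3", False), ("N3", False), ("N3", False),
          ("N3", True), ("N1", False), ("N3", False), ("N3", False)]
controller = TMRController(cues=["a", "b", "c"])
played = [controller.step(stage, arousal) for stage, arousal in stream]
# played == [None, None, "a", "b", None, None, None, "c"]
```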

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Equipment for Memory Triggering Research

| Item | Function / Application | Example Use Case |
|---|---|---|
| DC-Stimulator Plus | Delivers precise low-current tDCS. | tDCS-augmented cognitive training studies [34]. |
| MagVenture or NeuroStar TMS | Provides repetitive magnetic pulses for non-invasive brain stimulation. | Investigating rTMS effects on cognitive networks in patients with neurodegenerative disease [32]. |
| fNIRS System | Monitors prefrontal cortical hemodynamics in real time during cognitive tasks. | Assessing brain function changes post-stimulation in depression [33]. |
| Polysomnography (PSG) System | Gold standard for monitoring sleep stages (EEG, EOG, EMG). | Identifying Slow-Wave Sleep for precise TMR cue delivery [35]. |
| Consumer EEG Headband | Ambulatory sleep monitoring for at-home TMR studies. | Targeted memory reactivation in ecologically valid settings [31]. |
| PsychoPy Software | Open-source package for designing and running cognitive tasks. | Implementing adaptive n-back training or memory encoding tasks [34]. |
| Conductive Electrode Paste | Ensures good electrode-skin contact and reduces impedance for tDCS electrodes. | Standard setup for all tDCS and HD-tDCS protocols [34]. |
| Cognitive Assessment Battery (e.g., BACS, MoCA) | Standardized tools to measure changes in specific cognitive domains. | Quantifying primary outcomes in clinical trials [32] [34]. |

Closed-Loop Targeted Memory Reactivation (CL-TMR) is an advanced non-invasive neuromodulation technique that enhances memory consolidation during sleep. By delivering sensory cues timed to specific phases of slow oscillations (SOs) in non-rapid eye movement (NREM) sleep, CL-TMR promotes the reactivation and strengthening of recently formed memory traces [11] [36]. This protocol details the application of CL-TMR for enhancing spatial navigation in virtual reality environments and declarative memory for word pairs, summarizing quantitative outcomes and providing a complete methodological framework for replication and adaptation in research settings.
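The phase-targeting idea can be illustrated offline with a band-pass filter and the Hilbert transform. This is a sketch under stated assumptions, not the method of the cited studies. The following values and names are illustrative:

- the 0.5-1.25 Hz slow-oscillation band;
- the 40 µV envelope threshold;
- the 2 s refractory period;
- the hypothetical helper `so_phase_targets`.

With the Hilbert convention used here, phase 0 corresponds to the positive (up-state) peak and ±π to the negative (down-state) trough. Because `sosfiltfilt` and `hilbert` use future samples, a real-time CL-TMR system must estimate phase causally instead. One way to do this is to detect a negative half-wave and cue after a fixed delay.

```python
import numpy as np
from scipy.signal import butter, hilbert, sosfiltfilt


def so_phase_targets(eeg, sfreq, target_phase=0.0, band=(0.5, 1.25),
                     min_amplitude=40.0, refractory_s=2.0):
    """Offline sketch: sample indices where the SO-band phase crosses target_phase (rad).

    eeg is assumed to be in microvolts; min_amplitude gates out low-amplitude activity.
    """
    sos = butter(2, band, btype="bandpass", fs=sfreq, output="sos")
    analytic = hilbert(sosfiltfilt(sos, eeg))
    phase, envelope = np.angle(analytic), np.abs(analytic)
    d = np.angle(np.exp(1j * (phase - target_phase)))  # wrapped distance to target phase
    crossing = (d[:-1] < 0) & (d[1:] >= 0) & (np.diff(d) < np.pi)
    targets, last = [], -np.inf
    for idx in np.flatnonzero(crossing) + 1:
        # Keep crossings on large SOs and enforce a refractory gap between cues.
        if envelope[idx] >= min_amplitude and (idx - last) / sfreq >= refractory_s:
            targets.append(idx)
            last = idx
    return np.array(targets, dtype=int)
```

Passing `target_phase=np.pi` instead targets down-states, which corresponds to the Up vs. Down contrast in the tables below.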

The following tables consolidate key quantitative findings from recent CL-TMR studies, highlighting performance improvements and electrophysiological correlates across different memory domains.

Table 1: Behavioral Performance Outcomes in Spatial Navigation and Motor Sequence Tasks

| Study Reference | Sample Size (N) | Task Type | Key Performance Metric | CL-TMR Group Result | Control Group Result |
|---|---|---|---|---|---|
| Frontiers in Human Neuroscience (2018) [11] [36] | 37 (17 CL-TMR) | Virtual Reality Spatial Navigation | Navigation Efficiency Improvement | Significant Improvement Post-Sleep | Not Reported |
| Nature Communications (2025) [37] | 28 | Motor Sequence Task | Offline Change in Performance Speed (Up vs. Down) | Up: Significant Improvement vs. Down | Not-Reactivated: Significant Improvement vs. Down |

Table 2: Behavioral Performance Outcomes in Declarative Memory Tasks

| Study Reference | Sample Size (N) | Task Type | Memory Accuracy Change (Cued) | Memory Accuracy Change (Uncued) |
|---|---|---|---|---|
| Journal of Sleep Research (2025) [38] | 24 | Word-Pseudoword Association | +8.6% | -4.6% |
| npj Science of Learning (2025) - Personalized TMR [39] | 36 (12 per group) | Word-Pair Recall (Challenging Items) | Personalized: Significant reduction in memory decay vs. TMR & Control | TMR & Control: No significant improvement |

Table 3: Electrophysiological Correlates of Successful CL-TMR

| Study Reference | SO Amplitude | Spindle/Sigma Power | Key Correlates of Memory Benefit |
|---|---|---|---|
| Frontiers in Human Neuroscience (2018) [11] [36] | Not Reported | Increase in fast (12–15 Hz) spindle band spectral power | Improvement in navigation efficiency accompanied by spindle power increase |
| Nature Communications (2025) [37] | Significantly higher for Up-stimulated vs. Down-stimulated SOs | Significantly greater peak-nested sigma power for Up-stimulated SOs | Up-state cueing enhanced SO amplitude and sigma power |
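The peak-nested sigma measure in Table 3 can be approximated as the mean sigma-band power in a window centered on each stimulated SO peak. That value is then compared between Up-stimulated and Down-stimulated events. This is a minimal sketch that assumes:

- a 12-15 Hz band, matching the fast-spindle range above;
- a ±0.5 s window;
- precomputed SO peak sample indices.

The published analysis may define the window and band differently, and `nested_sigma_power` is a hypothetical helper.

```python
import numpy as np
from scipy.signal import butter, hilbert, sosfiltfilt


def nested_sigma_power(eeg, sfreq, peak_indices, band=(12.0, 15.0), half_window_s=0.5):
    """Mean sigma-band envelope power in a window centered on each SO peak (sample index)."""
    sos = butter(4, band, btype="bandpass", fs=sfreq, output="sos")
    sigma_power = np.abs(hilbert(sosfiltfilt(sos, eeg))) ** 2
    half = int(round(half_window_s * sfreq))
    return np.array([sigma_power[max(0, i - half): i + half + 1].mean() for i in peak_indices])


# Usage (hypothetical index arrays taken from the stimulation log):
# up = nested_sigma_power(eeg, sfreq, up_stimulated_peaks)
# down = nested_sigma_power(eeg, sfreq, down_stimulated_peaks)
# A larger up.mean() than down.mean() mirrors the Up- vs. Down-state contrast above.
```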