Cognitive Categorization in Clinical Research: Foundational Models, Methodological Applications, and Best Practices for Drug Development

This article provides a comprehensive framework for applying cognitive categorization principles in clinical research and drug development.

Abstract

This article provides a comprehensive framework for applying cognitive categorization principles in clinical research and drug development. It explores foundational theories from cognitive science, details their methodological application in trial design and data analysis, addresses common troubleshooting scenarios, and outlines validation strategies. Tailored for researchers, scientists, and drug development professionals, the content synthesizes current research and regulatory expectations to offer actionable best practices for enhancing precision, reliability, and communication in biomedical research.

The Cognitive Science of Categorization: Core Theories and Principles for Researchers

Core Concepts and Theoretical Frameworks

What is cognitive categorization?

Cognitive categorization is a fundamental type of cognition that involves sorting and distinguishing between different aspects of conscious experience—such as objects, events, or ideas—based on their shared traits, features, similarities, or other universal criteria [1]. It is the process of conceptual differentiation that allows humans to organize things, objects, and ideas, thereby simplifying their understanding of the world [1].

What are the primary theories explaining how we form categories?

Several key theories have been proposed to explain the mental processes behind categorization [1]:

- Classical Theory: This view posits that categories can be defined by a list of necessary and sufficient features that all members must possess. Categories have clear, definite boundaries, and all members have equal status within the category [1].

- Prototype Theory: Developed by Eleanor Rosch, this theory suggests that categorization is based on comparing items to a prototypical member—a central tendency or average representation of the category. Members are considered part of the category based on their family resemblance to this prototype [1].

- Exemplar Theory: This theory proposes that we categorize new items by comparing them to all stored memory representations of previous category members (exemplars). The similarity to these known exemplars determines category membership [1].

What are the different levels of a categorical taxonomy?

Categories are often organized into a hierarchy with three distinct levels of abstraction [1]:

- Superordinate Level: The highest, most inclusive level (e.g., "Furniture").

- Basic Level: The middle level that is cognitively most efficient; it is the level most often used in everyday speech and learned first by children (e.g., "Chair") [1].

- Subordinate Level: The lowest, most specific level (e.g., "Armchair").

Experimental Protocols & Methodologies

Detailed Protocol: Classification vs. Inference Learning Paradigm

This protocol is designed to investigate how different learning regimes affect category representation in participants of different ages [2].

- Objective: To examine whether category representation changes during development and how it is influenced by the method of learning (classification vs. inference).

- Background: Studies suggest that adults form different mental representations of the same categories depending on whether they learn by classifying items into labels or by inferring a missing feature of an item [2].

- Materials:

  - A set of novel visual stimuli (e.g., simple shapes or fictional creatures) that can vary along several probabilistic features (e.g., color, shape, pattern) and one deterministic feature that perfectly predicts category membership.

  - Computer software to present stimuli and record responses.

- Procedure:

  - Participant Groups: Recruit participants from different age groups (e.g., 4-year-olds, 6-year-olds, and adults) [2].

  - Training Phase:

    - Classification Training Group: On each trial, present a stimulus and ask the participant to predict its category label (e.g., "Is this a 'Zap' or a 'Boz'?"). Provide feedback.

    - Inference Training Group: On each trial, present a stimulus with its category label but with one feature missing. Ask the participant to predict the missing feature (e.g., "This is a 'Zap.' What is its tail shape?"). Provide feedback.

  - Test Phase: After training, test all participants on their categorization performance and their memory for the specific training items.

- Key Variables & Analysis:

  - Dependent Variables: Accuracy and reaction time during the test phase.

  - Analysis: Compare performance between age groups and learning regimes. Examine whether participants relied more on the single deterministic feature (suggesting a rule-based representation) or on multiple probabilistic features (suggesting a similarity-based representation) [2].

Detailed Protocol: Investigating the Temporal Dynamics of Label Effects

This protocol uses a priming paradigm combined with neural measures to dissect when and how linguistic labels influence categorization [3].

- Objective: To determine whether linguistic labels affect early sensory encoding or later post-sensory decision-making during categorization.

- Background: A key debate is whether labels act as mere perceptual features or as supervisory signals that guide categorical decisions. These accounts make different predictions about the timing of label effects in the brain [3].

- Materials:

  - Visual or auditory categorization task stimuli.

  - Electroencephalogram (EEG) equipment.

  - Priming stimuli (congruent labels, incongruent labels, and a baseline like pseudowords).

- Procedure:

  - Priming Paradigm: Each trial consists of:

    - A prime (e.g., the spoken word "Dog" or a control pseudoword) presented briefly.

    - A target stimulus (e.g., a picture of a dog or a cat) that participants must categorize as quickly and accurately as possible.

  - Experimental Conditions:

    - Congruent prime: The label matches the target category.

    - Incongruent prime: The label mismatches the target category.

    - Control prime: A non-meaningful pseudoword.

  - Data Collection: Record behavioral responses (accuracy, reaction time) and simultaneous EEG data.

- Key Variables & Analysis:

  - Behavioral Analysis: Compare reaction times and accuracy between congruent, incongruent, and control trials.

  - Computational Modeling: Use Hierarchical Drift-Diffusion Modeling (HDDM) to isolate effects on the rate of evidence accumulation ("drift rate"), response caution ("boundary"), and non-decision processes [3].

  - EEG Analysis: Use decoding techniques to analyze early (sensory) and late (post-sensory) neural components to pinpoint when label information influences brain activity [3].
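To make the three drift-diffusion parameters concrete, the following minimal sketch computes the accuracy and mean reaction time that a simple, unbiased diffusion process implies for a given drift rate, boundary separation, and non-decision time. It uses the standard closed-form expressions for a process that starts midway between the boundaries; it is not HDDM, which estimates these parameters hierarchically with Bayesian methods. The function name `ddm_predictions` and all parameter values are assumptions for illustration, and mapping prime condition onto drift rate is a hypothetical choice, not a result from [3].

```python
import math


def ddm_predictions(drift, boundary, non_decision, noise=1.0):
    """Closed-form accuracy and mean RT for an unbiased two-boundary diffusion.

    Assumes the start point is midway between the boundaries and that the
    drift rate is non-zero.
    """
    if drift == 0:
        raise ValueError("drift must be non-zero for these closed-form expressions")
    k = drift * boundary / noise**2
    # Probability of reaching the correct (upper) boundary first.
    accuracy = 1.0 / (1.0 + math.exp(-k))
    # Mean time spent accumulating evidence, excluding non-decision time.
    mean_decision_time = (boundary / (2.0 * drift)) * math.tanh(k / 2.0)
    return accuracy, non_decision + mean_decision_time


# Hypothetical drift rates for the three prime conditions.
hypothetical_drift = {"congruent": 1.5, "control": 1.0, "incongruent": 0.5}

for condition, drift in hypothetical_drift.items():
    acc, rt = ddm_predictions(drift, boundary=1.0, non_decision=0.3)
    print(f"{condition:12s} accuracy={acc:.3f} mean_rt={rt:.3f}s")
```

Holding the boundary and non-decision time fixed while varying only the drift rate shows how a change in evidence accumulation alone shifts both accuracy and reaction time, which is the kind of pattern the model comparison is meant to separate from changes in response caution.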

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential Materials and Reagents for Categorization Research

| Item Name | Function/Application in Research |

|---|---|

| Novel Visual Stimulus Sets | Used in category learning experiments to ensure participants have no prior associations. Allows control over specific features (shape, color) to test theoretical predictions [2]. |

| Eye-Tracking Apparatus | Measures where and for how long participants look during categorization tasks. Used to study attentional allocation, such as learned inattention to non-diagnostic features [2]. |

| Electroencephalogram (EEG) | Records electrical brain activity with high temporal resolution. Critical for determining the timing of cognitive processes (e.g., sensory vs. post-sensory) involved in categorization [3]. |

| Drift-Diffusion Modeling (DDM) Software | A computational modeling tool that decomposes decision-making into underlying cognitive processes (drift rate, boundary separation, non-decision time). Used to test mechanistic accounts of label effects [3]. |

Troubleshooting Guides and FAQs

FAQ: Our study failed to find a developmental difference in categorization strategies between children and adults. What could have gone wrong?

- Potential Issue 1: Inadequate Task Design.

- Solution: Ensure the task is appropriately complex for the youngest participants. Young children (under 6) often have difficulty focusing on a single relevant dimension. A task that relies heavily on selective attention may be too difficult for them, masking true developmental differences. Consider simplifying the stimuli or using a non-verbal response method [2].

- Potential Issue 2: Insufficient Power or Training.

- Solution: Children may require more training trials than adults to reach a stable level of learning. Ensure that all participants have achieved a predefined learning criterion before moving to the test phase. Also, verify that your sample size is large enough to detect the effect you are studying [2].

FAQ: We are observing high error rates in our inference learning condition across all age groups. How can we improve the protocol?

- Solution: Inference learning requires participants to map features within a category, which can be more demanding than simple classification. Make the category structure very clear during initial instructions. You can also include several practice trials with more explicit feedback to help participants understand the goal of predicting a missing feature, rather than just a label [2].

FAQ: Our EEG data is noisy, and we are having difficulty isolating the components related to label processing. What steps should we take?

- Potential Issue 1: Poor Experimental Control.

- Solution: Re-examine your priming paradigm. The stimulus onset asynchrony (SOA), the interval between prime onset and target onset, is critical. If it is too long, participants' attention may wander; if it is too short, sensory processing of the prime may not be complete. Furthermore, ensure your baseline condition (e.g., pseudowords) is well matched to your label condition in auditory complexity and length [3].

- Potential Issue 2: Inadequate Preprocessing.

- Solution: Implement a rigorous EEG preprocessing pipeline. This should include filtering to remove line noise and muscle artifacts, Independent Component Analysis (ICA) to remove blinks and eye movements, and careful manual inspection to reject epochs with residual artifacts.

Data Presentation and Visualization

Table 2: Summary of Key Findings from Developmental Categorization Studies

| Study Focus | Age Group | Key Behavioral Finding | Interpretation / Implication |

|---|---|---|---|

| Learning Regime Effects [2] | 4-year-olds | Relied on multiple probabilistic features in both classification and inference training. | Young children default to similarity-based representations, attending diffusely to many features. |

| Learning Regime Effects [2] | 6-year-olds & Adults | Relied on a single deterministic feature in classification, but not in inference training. | Older children and adults can form rule-based representations, but this is dependent on task demands. |

| Role of Selective Attention [2] | Adults (Classification) | Exhibit "learned inattention," struggling to attend to a previously ignored but now relevant dimension. | Classification learning promotes highly selective, optimized attention, which can hinder flexibility. |

| Role of Selective Attention [2] | Adults (Inference) | Do not exhibit the same degree of "learned inattention." | Inference learning encourages attention to multiple features and their interrelations, promoting flexibility. |

| Temporal Dynamics of Labels [3] | Adults | Congruent labels speed up responses; incongruent labels slow them down. EEG shows effects on late, not early, components. | Labels influence the post-sensory decision stage (supporting the "label-as-marker" account), not early sensory encoding. |

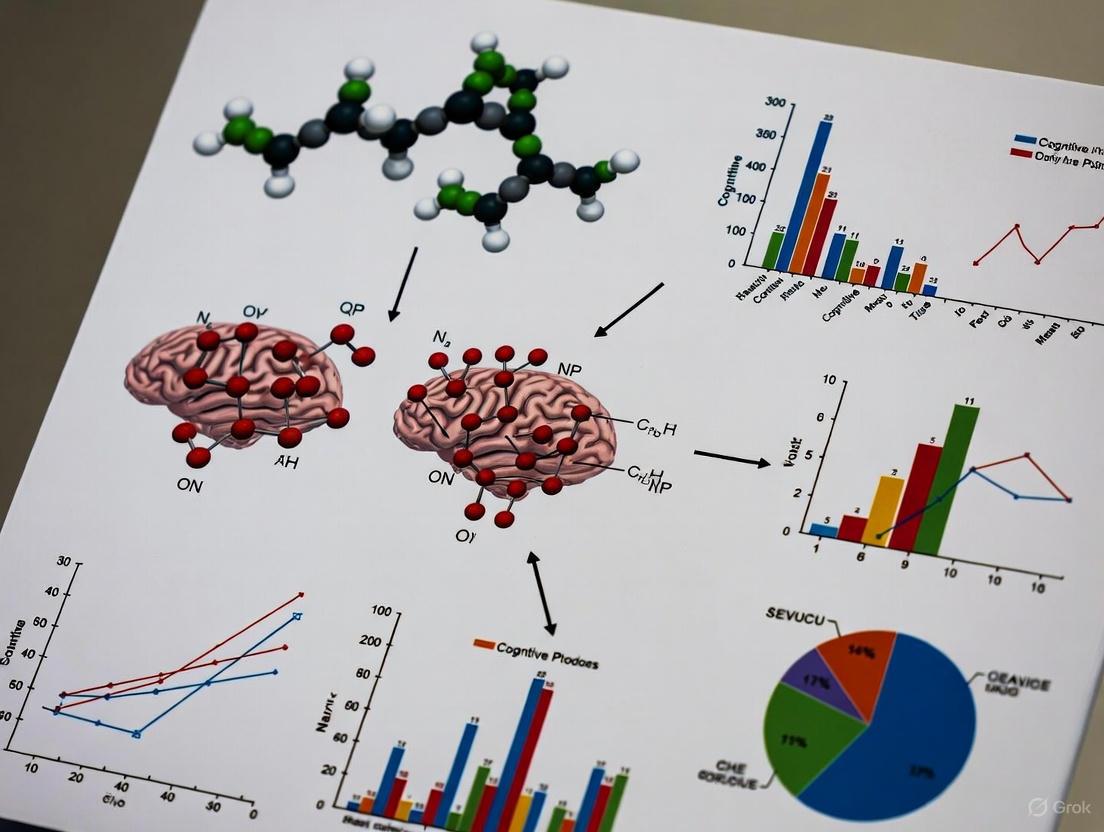

Label Influence on Categorization Pathway

Priming Experiment Workflow

Troubleshooting Guides

Poor Assay Performance in Feature-Based Screening

Problem: My high-throughput screening assay shows no assay window or only a very weak response, making it impossible to categorize compounds effectively.

Solution: This is often an instrument setup issue.

- Confirm Filter Configuration: For TR-FRET assays, ensure the exact recommended emission filters are installed. Using incorrect filters is the most common reason for assay failure. The excitation filter has less impact on the assay window than emission filters [4].

- Verify Reagent Preparation: Differences in EC50/IC50 values between labs often trace back to differences in 1 mM stock solution preparations [4].

- Test Reader Setup: Before running your full experiment, test your microplate reader's TR-FRET setup using already purchased reagents. Refer to Terbium (Tb) Assay and Europium (Eu) Assay Application Notes for proper plate reader setup procedures [4].

- Calculate Z'-Factor: Assay window alone isn't sufficient to determine robustness. Calculate the Z'-factor, which incorporates both the assay window and data variability. Assays with Z'-factor > 0.5 are considered suitable for screening [4].
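The Z'-factor mentioned above can be computed directly from control wells. The sketch below uses the standard definition, Z' = 1 - 3(SD_pos + SD_neg) / |mean_pos - mean_neg|; the function name `z_prime` and the example readings are assumptions for illustration only.

```python
import statistics


def z_prime(positive_controls, negative_controls):
    """Z'-factor from raw control readings (sample standard deviations)."""
    mean_pos = statistics.mean(positive_controls)
    mean_neg = statistics.mean(negative_controls)
    sd_pos = statistics.stdev(positive_controls)
    sd_neg = statistics.stdev(negative_controls)
    window = abs(mean_pos - mean_neg)
    if window == 0:
        raise ValueError("control means are identical; there is no assay window")
    return 1.0 - 3.0 * (sd_pos + sd_neg) / window


# Hypothetical emission-ratio readings from control wells.
positive = [2.10, 2.05, 2.15, 2.08, 2.12]
negative = [0.52, 0.50, 0.55, 0.49, 0.53]

score = z_prime(positive, negative)
print(f"Z' = {score:.2f}; suitable for screening: {score > 0.5}")
```

Because the standard deviations enter the numerator, a wide assay window with noisy controls can still score below the 0.5 threshold, which is why the window alone is not a sufficient check.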

Inconsistent Clinical Categorization Across Research Sites

Problem: Multiple research sites applying the same clinical criteria categorize the same patients differently, compromising data integrity.

Solution: This typically stems from inadequate criterion specification in your rule-based system.

- Implement Formal Consensus Methods: Use structured approaches like the Delphi method or RAND/UCLA Appropriateness Method to define clearer, more precise criteria. These methods systematically organize expert judgments to supplement available evidence [5].

- Enhance Feature Definitions: In classical categorization, categories are defined by necessary and sufficient features. Ensure your clinical features are explicitly defined with clear boundaries [1] [6].

- Standardize Data Collection: Provide all sites with up-to-date literature reviews and systematic reviews to establish a common knowledge baseline, which significantly influences consistent decision-making [5].

- Conduct Pilot Testing: Before full implementation, test criteria on sample cases across sites to identify interpretation differences and refine feature definitions [5].

Failure to Distinguish Between Highly Similar Clinical Subtypes

Problem: My categorization model cannot reliably differentiate between clinically similar conditions that share many features.

Solution: This problem relates to inadequate weighting of distinctive versus shared features.

- Analyze Feature Statistics: Conduct analysis to identify which features are distinctive (true of few concepts) versus shared (true of many concepts). Concepts with more distinctive features facilitate basic-level identification [7].

- Weight Distinctive Features More Heavily: In your rule-based model, increase the weighting of features that distinguish between similar categories. Neuropsychological evidence shows that damage to distinctive feature processing specifically impairs differentiation between highly similar concepts [7].

- Consider Feature Correlations: Examine how features co-occur. Strongly correlated features speed activation in on-line comprehension tasks and may improve categorization accuracy [7].

- Implement Criterion Learning: For rule-based systems, ensure proper criterion learning on the selected dimension. The HICL model demonstrates that criterion learning is a separate cognitive operation from rule selection that significantly affects categorization performance [8].
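As a starting point for the feature-statistics analysis, the sketch below computes distinctiveness as 1/n, where n is the number of concepts in which a feature occurs, and then averages it per concept. The function names and the toy subtype-to-feature mapping are hypothetical; a real analysis would use features drawn from your own criteria.

```python
from collections import Counter


def feature_distinctiveness(concept_features):
    """Return 1/n per feature, where n is the number of concepts that have it."""
    counts = Counter(
        feature
        for features in concept_features.values()
        for feature in set(features)
    )
    return {feature: 1.0 / n for feature, n in counts.items()}


def mean_distinctiveness(concept_features):
    """Average distinctiveness of each concept's features."""
    scores = feature_distinctiveness(concept_features)
    result = {}
    for concept, features in concept_features.items():
        unique = set(features)
        if not unique:
            raise ValueError(f"concept {concept!r} has no features")
        result[concept] = sum(scores[f] for f in unique) / len(unique)
    return result


# Hypothetical subtypes and features, for illustration only.
subtypes = {
    "subtype_a": {"fatigue", "joint_pain", "rash"},
    "subtype_b": {"fatigue", "joint_pain", "stiffness"},
    "subtype_c": {"fatigue", "dry_eyes"},
}

for feature, score in sorted(feature_distinctiveness(subtypes).items()):
    print(f"{feature:12s} distinctiveness={score:.2f}")
print(mean_distinctiveness(subtypes))
```

Features with a score of 1.0 occur in a single concept and are candidates for heavier weighting; features shared by every concept score lowest and carry little information for separating similar subtypes.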

Frequently Asked Questions

Q: What is the fundamental difference between classical and prototype categorization approaches?

A: The classical theory defines categories by necessary and sufficient features that all members must possess, with clear boundaries between categories [1] [6]. In contrast, prototype theory suggests we categorize by similarity to an ideal prototype, with members sharing a "family resemblance" rather than common invariant features [1] [6]. For clinical applications, classical approaches work better for well-defined biological categories, while prototype approaches may better capture syndromes with variable presentation.

Q: How can I determine whether to use a rule-based versus similarity-based approach for my clinical categorization system?

A: The choice depends on your specific clinical domain and application requirements. Rule-based models using explicit condition-action pairs are particularly effective for complex decision-making scenarios and when transparency is important [9]. They allow for easy modification as new evidence emerges and can identify both successful and erroneous reasoning processes [9]. Similarity-based approaches (prototype or exemplar) may perform better for pattern recognition tasks where explicit rules are difficult to define [1].

Q: Why do my categorization models perform well in validation but poorly in real-world clinical application?

A: This common issue often stems from poor data quality or contextual factors:

- Ensure Mutually Exclusive Categories: Verify that your categorical variables don't allow cases to fit multiple categories simultaneously [10].

- Address Missing Data Effectively: Use multiple imputation, regression-based predictions, or machine learning algorithms to handle missing categorical data rather than simple deletion [10].

- Validate Across Diverse Populations: Ensure your feature set generalizes across different patient demographics and clinical settings [5].

- Consider Task Demands: Research shows that conceptual processing is task-dependent - the same conceptual system can emphasize distinctive or shared features based on the categorization goal [7].

Q: What are the most common pitfalls when developing diagnostic criteria using consensus methods?

A: Based on formal consensus research, key pitfalls include:

- Inadequate Expert Selection: Panels with fewer than 6 members are less reliable, while adding members beyond 12 yields minimal improvement. Include multidisciplinary experts from diverse geographical areas for more robust criteria [5].

- Poor Evidence Integration: Strictly consensus-based guidelines score lower on quality measures compared to evidence-based approaches. Always supplement expert opinion with systematic literature reviews [5].

- Insufficient Iteration: Single-round consensus methods perform worse than structured multi-round approaches like Delphi that allow experts to refine opinions based on group feedback [5].

- Ignoring Implementation Context: Criteria that work in specialist centers may fail in community settings due to different diagnostic approaches based on geographical area or available resources [5].

Experimental Protocols

Formal Consensus Development for Diagnostic Criteria

Purpose: To develop reliable diagnostic/classification criteria through structured group consensus when sufficient research evidence is unavailable [5].

Methodology (Delphi Technique):

- Problem Definition: Define the specific diagnostic categorization problem and purpose explicitly [5].

- Expert Panel Recruitment: Identify 10-30+ multidisciplinary experts from various geographic areas. Include clinicians, researchers, and potentially patients affected by the condition [5].

- Literature Review: Conduct systematic review and provide participants with relevant original publications to establish evidence baseline [5].

- Round 1: Distribute open-ended questions to elicit opinions on potential diagnostic features. Analyze responses to generate structured statements [5].

- Round 2: Circulate focused questionnaire with statements from Round 1. Participants rate agreement/disagreement. Provide feedback on Round 1 responses [5].

- Round 3: Share Round 2 results with individual participants' previous ratings. Experts reconsider and re-rate statements [5].

- Consensus Definition: Pre-specify consensus threshold (typically 80% agreement). Finalize criteria based on agreed-upon features [5].
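The consensus check in the final step can be sketched as follows. This is a minimal illustration that assumes ratings on a 5-point scale where 4 or 5 counts as agreement; the scale, the cutoff, the function name `consensus_summary`, and the example statements are all assumptions, and only the 80% threshold comes from the text above.

```python
def consensus_summary(ratings_by_statement, agree_cutoff=4, threshold=0.80):
    """Return (share agreeing, consensus reached) for each statement."""
    summary = {}
    for statement, ratings in ratings_by_statement.items():
        if not ratings:
            raise ValueError(f"no ratings recorded for {statement!r}")
        share = sum(r >= agree_cutoff for r in ratings) / len(ratings)
        summary[statement] = (share, share >= threshold)
    return summary


# Hypothetical Round 3 ratings from a five-member panel.
round_three = {
    "Feature X is required for diagnosis": [5, 4, 4, 5, 2],
    "Feature Y is required for diagnosis": [3, 4, 2, 5, 1],
}

for statement, (share, reached) in consensus_summary(round_three).items():
    print(f"{statement}: {share:.0%} agreement, consensus={reached}")
```

Fixing `agree_cutoff` and `threshold` before Round 1 keeps the consensus definition pre-specified rather than tuned to the ratings.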

Rule-Based Categorization Learning Experiment

Purpose: To study how humans learn and apply rule-based categorization, particularly criterion learning on a selected perceptual dimension [8].

Methodology:

- Stimulus Design: Create stimuli varying on multiple dimensions (e.g., line length, orientation, color).

- Rule Selection: Instruct participants to categorize based on one specific dimension (e.g., line length).

- Criterion Learning: Participants learn categorization criteria through feedback (e.g., "short" vs. "long" lines).

- Intra-Dimensional Shift (Experiment 1): Change the criterion on the same dimension (e.g., different length threshold) while irrelevant dimensions also change [8].

- Extra-Dimensional Shift (Experiment 2): Change the relevant dimension entirely (e.g., from length to orientation) and measure criterion learning difficulty [8].

- Data Collection: Record response times, accuracy, and learning curves across trials.

- Analysis: Use mixed-effects models incorporating participant, session, stimulus-related, and feature statistic variables [8].

Quantitative Data Analysis

Table 1: Statistical Tests for Categorical Data Analysis in Clinical Research

| Test Name | Use Case | Data Type | Sample Size | Key Advantage |

|---|---|---|---|---|

| Chi-Square Test | Assessing associations between categorical variables | Nominal or Ordinal | Large samples | Identifies patterns in data; good for preliminary research [10] |

| Fisher's Exact Test | Analyzing 2x2 tables with small sample sizes | Nominal or Ordinal | Small samples | Provides exact p-values when expected frequencies are low [10] |

| McNemar Test | Comparing paired proportions | Nominal | Dependent samples | Appropriate for pre-post study designs [10] |

| Cochran's Q Test | Comparing three or more matched proportions | Nominal | Multiple related samples | Extension of McNemar test for multiple time points [10] |

| Logistic Regression | Predicting categorical outcomes based on multiple predictors | Nominal or Ordinal | Medium to large samples | Handles multiple predictors; provides odds ratios [10] |
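As one worked example from the table, the sketch below computes a two-sided exact McNemar p-value for a pre-post design using only the discordant pair counts. It uses the exact binomial form rather than the chi-square approximation; the function name `mcnemar_exact` and the counts are assumptions for illustration.

```python
from math import comb


def mcnemar_exact(b, c):
    """Two-sided exact McNemar p-value.

    b: pairs that changed from category 1 to category 2
    c: pairs that changed from category 2 to category 1
    Concordant pairs do not enter the test.
    """
    n = b + c
    if n == 0:
        return 1.0
    k = min(b, c)
    # Binomial(n, 0.5) probability of a split at least as uneven as observed.
    tail = sum(comb(n, i) for i in range(k + 1)) / 2**n
    return min(1.0, 2.0 * tail)


# Hypothetical study: 1 patient worsened and 9 improved after treatment.
p_value = mcnemar_exact(1, 9)
print(f"exact McNemar p = {p_value:.4f}")
```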

Table 2: Feature Statistics Influencing Categorization Performance

| Feature Statistic | Definition | Impact on Basic-Level Naming | Impact on Domain Decisions | Clinical Application |

|---|---|---|---|---|

| Feature Distinctiveness | Inverse of the number of concepts in which a feature occurs (1/n) | Facilitates faster naming [7] | Minimal positive impact [7] | Critical for differential diagnosis between similar conditions |

| Shared Features | Features occurring in many concepts in a category | Minimal positive impact [7] | Facilitates faster domain decisions [7] | Useful for determining general disease category |

| Feature Correlational Strength | Degree to which features co-occur across concepts | Strongly correlated distinctive features speed naming [7] | Strongly correlated shared features speed domain decisions [7] | Helps identify syndrome patterns where features cluster |

| Task Demands | Cognitive requirements of specific categorization task | Determines whether distinctive or shared features are emphasized [7] | Determines whether distinctive or shared features are emphasized [7] | Different clinical tasks (screening vs. differential) require different approaches |

Research Reagent Solutions

Table 3: Essential Research Reagents for Categorization Studies

| Reagent/Resource | Function | Application Example | Considerations |

|---|---|---|---|

| LanthaScreen TR-FRET Reagents | Time-resolved fluorescence resonance energy transfer detection | Kinase activity assays; compound screening [4] | Requires specific emission filters; uses Terbium (Tb) or Europium (Eu) donors |

| Z'-LYTE Assay Kit | Fluorescent kinase assay using differential peptide cleavage | Measuring compound inhibition; phosphorylation studies [4] | Development reagent concentration critical; 10-fold ratio difference expected between controls |

| OneHotEncoder (scikit-learn) | Converts categorical variables to a binary indicator matrix | Preparing nominal clinical data for machine learning [10] [11] | Avoids implying an order among categories; adds one column per category |

| LabelEncoder (scikit-learn) | Converts category labels to integer codes | Encoding categorical outcome (target) labels [10] | Designed for target labels rather than input features; codes follow the sorted order of the labels, so they may not match a clinical ordering and can introduce false ordinal relationships if used for nominal features |

| FineBI Business Intelligence Tool | Self-service data visualization and analysis | Exploring categorical data patterns; creating dashboards [10] | Over 60 chart types; supports collaborative analysis |
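The encoding choice in the table can be illustrated with a short scikit-learn sketch. It one-hot encodes a nominal variable and, for an ordinal variable, uses `OrdinalEncoder` with an explicitly stated category order; `OrdinalEncoder` is swapped in here as an alternative to the `LabelEncoder` row because it lets the order be declared. The column contents are hypothetical.

```python
import numpy as np
from sklearn.preprocessing import OneHotEncoder, OrdinalEncoder

# Hypothetical clinical columns: a nominal site code and an ordinal severity grade.
site = np.array([["site_a"], ["site_b"], ["site_c"], ["site_a"]])
severity = np.array([["mild"], ["severe"], ["moderate"], ["mild"]])

# Nominal data: one indicator column per category, no implied order.
one_hot = OneHotEncoder(handle_unknown="ignore")
site_encoded = one_hot.fit_transform(site).toarray()

# Ordinal data: state the clinical order explicitly instead of relying on
# alphabetical sorting of the labels.
ordinal = OrdinalEncoder(categories=[["mild", "moderate", "severe"]])
severity_encoded = ordinal.fit_transform(severity)

print(one_hot.categories_)
print(site_encoded)
print(severity_encoded.ravel())
```

Declaring the category order matters here: sorted alphabetically, "mild", "moderate", "severe" happen to line up, but grades such as "low", "medium", "high" would not.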

Core Concepts & Diagnostic Tools

What are the fundamental differences between prototype and exemplar representations in category learning?

Prototype and exemplar theories offer competing explanations for how individuals form and use mental categories.

- Prototype Theory: This posits that categories are represented by a central tendency or prototype. This prototype is an abstract summary that contains the most common features of all category members. Categorization of a new item is based on its similarity to this single prototype [12].

- Exemplar Theory: This proposes that category learning relies on memorized representations of individual exemplars. Instead of comparing a new item to an abstract prototype, individuals categorize it based on its collective similarity to all stored examples of each category [12].

Researchers can distinguish which strategy a participant is using through carefully designed diagnostic stimuli. In the classic 5/4 and novel 5/5 task structures, two specific stimuli, A1 and A2, are used for this purpose. The theories make opposite predictions about which stimulus will be categorized more accurately, allowing you to diagnose the underlying cognitive strategy [12].

Table: Comparing Prototype and Exemplar Theories

| Aspect | Prototype Theory | Exemplar Theory |

|---|---|---|

| Core Representation | Single, abstract prototype (central tendency) | Multiple, stored individual exemplars |

| Categorization Process | Compare item to prototype | Compare item to all stored exemplars |

| Memory Demand | Lower (one representation per category) | Higher (many representations per category) |

| Prediction for A1 (1110) | High accuracy (3 features match A-prototype) | Lower accuracy (similar to some B exemplars) |

| Prediction for A2 (1010) | Lower accuracy (2 features match A-prototype) | High accuracy (similar to other A exemplars) |

How do I know if my experiment is biased toward prototype or exemplar strategies?

The design of your category structure significantly influences which strategy participants adopt. A key factor is category coherence.

- High Coherence → Prototype Strategy: When members of a category are all relatively similar to each other and to a central prototype, the prototype becomes a more efficient representation. Studies show that increasing category coherence promotes a shift toward prototype use [12].

- Low Coherence → Exemplar Strategy: When category members are more dissimilar from one another, no single prototype is a good summary. In these cases, an exemplar strategy, which relies on the specific instances, is more effective [12].

The 5/5 category learning task was specifically developed to create a strong, coherent category structure that makes the prototype more salient and thus encourages prototype-based learning [12].

Experimental Protocols & Setup

What is a validated experimental protocol for studying prototype and exemplar strategies?

The following methodology, adapted from recent research, provides a robust framework for investigating these categorization strategies [12].

1. Task Selection: The 5/5 Categorization Task

This task is an optimized version of the well-known 5/4 task. It uses two categories (A and B) composed of stimuli varying along four binary-valued dimensions. The key improvement is the addition of a fifth stimulus in Category B, which eliminates an ambiguity in the Category B prototype and increases the diagnostic strength of all dimensions [12].

Table: 5/5 Category Structure with Diagnostic Stimuli

| Category | Stimulus | Dimension 1 | Dimension 2 | Dimension 3 | Dimension 4 |

|---|---|---|---|---|---|

| A | A0 (Prototype) | 1 | 1 | 1 | 1 |

| A | A1 (Diagnostic) | 1 | 1 | 1 | 0 |

| A | A2 (Diagnostic) | 1 | 0 | 1 | 0 |

| A | A3 | 1 | 1 | 0 | 1 |

| A | A4 | 1 | 0 | 1 | 1 |

| A | A5 | 0 | 1 | 1 | 1 |

| B | B0 (Prototype) | 0 | 0 | 0 | 0 |

| B | B1 | 0 | 0 | 0 | 1 |

| B | B2 | 0 | 0 | 1 | 0 |

| B | B3 | 0 | 1 | 0 | 0 |

| B | B4 | 1 | 0 | 0 | 0 |

| B | B5 | 1 | 0 | 0 | 1 |

2. Stimuli and Presentation

- Stimulus Type: Use schematic, easy-to-distinguish stimuli like "robot" figures. Each of the four binary dimensions can be mapped to a distinct physical feature (e.g., antenna shape, ear type, eye shape, base form) [12].

- Procedure: In each trial, present a single stimulus on screen. The participant presses a key (e.g., 'F' for Category A, 'J' for Category B) to categorize it. After the response, provide immediate corrective feedback (e.g., "Right" or "Wrong") [12].

- Design: Present all training stimuli multiple times in a random order across several blocks to track learning over time.

3. Data Analysis and Computational Modeling

- Diagnostic Stimuli Analysis: Compare accuracy rates for the critical A1 and A2 stimuli. A significant advantage for A1 suggests a prototype strategy, while an advantage for A2 suggests an exemplar strategy [12].

- Computational Modeling: Fit participant responses to formal models to quantitatively identify their strategy.
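To show what fitting formal models involves, the sketch below computes the Category A response probability under a prototype model and under an exemplar model, using the 5/5 structure from the table above. Several things are assumptions rather than details from [12]: similarity is taken as an exponential decay of attention-weighted city-block distance, the prototypes A0 and B0 are not treated as stored exemplars, and the attention weights and sensitivity are fixed by hand instead of being estimated. Because both models' predictions depend on those parameters, the direction of any A1 versus A2 difference should not be read off this untuned example.

```python
import math

CATEGORY_A = {
    "A1": (1, 1, 1, 0), "A2": (1, 0, 1, 0), "A3": (1, 1, 0, 1),
    "A4": (1, 0, 1, 1), "A5": (0, 1, 1, 1),
}
CATEGORY_B = {
    "B1": (0, 0, 0, 1), "B2": (0, 0, 1, 0), "B3": (0, 1, 0, 0),
    "B4": (1, 0, 0, 0), "B5": (1, 0, 0, 1),
}
PROTOTYPE_A = (1, 1, 1, 1)
PROTOTYPE_B = (0, 0, 0, 0)


def similarity(x, y, weights, sensitivity):
    """Exponential decay of attention-weighted city-block distance."""
    distance = sum(w * abs(a - b) for w, a, b in zip(weights, x, y))
    return math.exp(-sensitivity * distance)


def prototype_prob_a(item, weights, sensitivity):
    """P(respond A) from similarity to each category's single prototype."""
    s_a = similarity(item, PROTOTYPE_A, weights, sensitivity)
    s_b = similarity(item, PROTOTYPE_B, weights, sensitivity)
    return s_a / (s_a + s_b)


def exemplar_prob_a(item, weights, sensitivity):
    """P(respond A) from summed similarity to all stored exemplars."""
    s_a = sum(similarity(item, e, weights, sensitivity) for e in CATEGORY_A.values())
    s_b = sum(similarity(item, e, weights, sensitivity) for e in CATEGORY_B.values())
    return s_a / (s_a + s_b)


# Hypothetical, hand-set parameters; a real analysis would estimate them.
weights = (0.25, 0.25, 0.25, 0.25)
sensitivity = 4.0

for name in ("A1", "A2"):
    item = CATEGORY_A[name]
    print(
        name,
        f"prototype={prototype_prob_a(item, weights, sensitivity):.3f}",
        f"exemplar={exemplar_prob_a(item, weights, sensitivity):.3f}",
    )
```

In a full analysis, the weights and sensitivity would be chosen per participant to maximize the likelihood of the observed responses, and the two fitted models would then be compared.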

Troubleshooting & Data Interpretation

My participants are not learning the categories. What could be wrong?

Problem: Low overall accuracy.

- Check Stimulus Discriminability: Ensure the physical features representing each dimension are highly distinct and easy to tell apart. Avoid using overly similar shapes or colors.

- Verify Feedback Clarity: Ensure the feedback ("Right"/"Wrong") is displayed clearly and for a sufficient duration.

- Review Task Instructions: Confirm that instructions clearly explain the goal is to learn the categories through trial and error.

Problem: No clear strategy emerges from the diagnostic stimuli or modeling.

- Check Category Coherence: Your category structure might be too difficult or not coherent enough. The 5/5 structure is recommended for its strong prototype [12].

- Analyze Learning Over Time: Strategy use can shift. A participant might start with exemplars and transition to a prototype. Fit your models to data from later blocks once learning has stabilized, or analyze blocks separately [12].

- Individual Differences: Accept that some participants may not show a strong preference for either strategy. A subgroup of learners often simultaneously forms both representation types, leading to mixed results [13].

The computational models fit my data equally well. How should I proceed?

This is a common and expected outcome: both models are flexible enough that each can often mimic the other's predictions.

- Focus on Diagnostic Stimuli: The A1 vs. A2 comparison provides a model-free measure of strategy that is less susceptible to overfitting. Let this be your primary diagnostic tool [12].

- Use Bayesian Analysis: Consider using Bayesian model comparison methods, which can provide more robust evidence for one model over another by penalizing model complexity.

- Embrace Coexistence: Your results may genuinely reflect that participants are using a mixture of both strategies. The brain can form prototype and exemplar representations simultaneously in different neural areas [13].
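One lightweight way to penalize complexity when comparing the two models is the Bayesian Information Criterion (BIC), which can also be turned into a rough Bayes factor. This is an approximation swapped in for illustration, not a full Bayesian model comparison, and the log-likelihoods and parameter counts below are hypothetical.

```python
import math


def bic(log_likelihood, n_params, n_obs):
    """Bayesian Information Criterion; lower values indicate a better trade-off."""
    return n_params * math.log(n_obs) - 2.0 * log_likelihood


def approx_bayes_factor(bic_a, bic_b):
    """Approximate Bayes factor in favor of model A over model B."""
    return math.exp((bic_b - bic_a) / 2.0)


# Hypothetical fits for one participant over 200 trials.
bic_exemplar = bic(log_likelihood=-96.0, n_params=5, n_obs=200)
bic_prototype = bic(log_likelihood=-97.5, n_params=5, n_obs=200)

bf = approx_bayes_factor(bic_exemplar, bic_prototype)
print(f"BIC exemplar={bic_exemplar:.1f}, prototype={bic_prototype:.1f}, BF~{bf:.2f}")
```

A Bayes factor close to 1 indicates that the data do not discriminate between the models, which is consistent with the mixed-strategy interpretation above.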

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Materials for Prototype-Exemplar Experiments

| Item Name | Function / Description | Example from Literature |
|---|---|---|
| 5/5 Stimulus Set | A set of 10 stimuli constructed from a 4-feature, binary-dimensional space. Serves as the core input for the categorization task. | "Robot" figures with varying antennae, ears, eyes, and bases [12]. |
| Diagnostic Stimuli (A1, A2) | Critical test items used to dissociate prototype-based from exemplar-based categorization performance. | In the 5/5 structure, A1 (1110) and A2 (1010) are the key diagnostic pair [12]. |
| Generalized Context Model (GCM) | A computational model that formalizes the exemplar theory. Used to fit response data and quantify evidence for an exemplar strategy. | The model calculates categorization probability based on summed similarity to all stored exemplars [12]. |
| Multiplicative Prototype Model (MPM) | A computational model that formalizes the prototype theory. Used to fit response data and quantify evidence for a prototype strategy. | The model calculates categorization probability based on similarity to a single category prototype [12]. |
| fMRI Paradigm | A functional imaging protocol to localize neural correlates of prototype and exemplar representations. | Used to identify prototype representations in visual/parietal areas and exemplar representations in visual areas/hippocampus [13]. |
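To make the contrast between the two model families concrete, the following minimal sketch computes exemplar-style (summed similarity) and prototype-style (single prototype) predictions for the diagnostic items A1 (1110) and A2 (1010). The training items, prototypes, attention weights, and sensitivity value are assumptions chosen for illustration; they are not the stimulus set or fitted parameters from [12], and the functions are simplified stand-ins for the GCM and MPM.

```python
import math

def similarity(x, y, weights, c):
    """Exponential-decay similarity over a weighted city-block distance."""
    distance = sum(w * abs(a - b) for w, a, b in zip(weights, x, y))
    return math.exp(-c * distance)

def exemplar_prob_a(item, exemplars_a, exemplars_b, weights, c):
    """Exemplar account: summed similarity to every stored exemplar."""
    sim_a = sum(similarity(item, e, weights, c) for e in exemplars_a)
    sim_b = sum(similarity(item, e, weights, c) for e in exemplars_b)
    return sim_a / (sim_a + sim_b)

def prototype_prob_a(item, proto_a, proto_b, weights, c):
    """Prototype account: similarity to a single prototype per category."""
    sim_a = similarity(item, proto_a, weights, c)
    sim_b = similarity(item, proto_b, weights, c)
    return sim_a / (sim_a + sim_b)

# Illustrative training structure (an assumption, not the set used in [12]).
CATEGORY_A = [(1, 1, 1, 0), (1, 0, 1, 0), (1, 0, 1, 1), (1, 1, 0, 1), (0, 1, 1, 1)]
CATEGORY_B = [(1, 1, 0, 0), (0, 1, 1, 0), (0, 0, 0, 1), (0, 0, 0, 0)]
PROTO_A, PROTO_B = (1, 1, 1, 1), (0, 0, 0, 0)
WEIGHTS, SENSITIVITY = (0.25, 0.25, 0.25, 0.25), 4.0

items = {"A1": (1, 1, 1, 0), "A2": (1, 0, 1, 0)}
results = {
    "exemplar": {k: exemplar_prob_a(v, CATEGORY_A, CATEGORY_B, WEIGHTS, SENSITIVITY)
                 for k, v in items.items()},
    "prototype": {k: prototype_prob_a(v, PROTO_A, PROTO_B, WEIGHTS, SENSITIVITY)
                  for k, v in items.items()},
}
print(results)
```

Under these assumptions the prototype account favors A1 (it is closer to the Category A prototype), while the exemplar account favors A2 (it has more close neighbors among the stored Category A items). That opposite ordering is what makes the pair diagnostic.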

Frequently Asked Questions (FAQs)

FAQ 1: What is a hybrid model in the context of clinical decision-making?

A hybrid model combines knowledge-based approaches (using pre-defined rules and expert knowledge, like IF-THEN statements) with non-knowledge-based approaches (using artificial intelligence (AI) and machine learning (ML) to learn patterns from data) [14]. This synergy leverages existing process knowledge and information from collected data to create more robust and reliable decision-support tools [15].

FAQ 2: My model is producing inconsistent category boundaries for ambiguous cases. What could be the cause?

Inconsistent category boundaries can stem from drift in choice bias during the learning process. Research on behavioral strategies shows that variability in an individual's stimulus-independent choice bias during training correlates with variability in their final category boundary for ambiguous stimuli [16]. To address this:

- Track bias over time: Use statistical models, like a Generalized Linear Model (GLM), to isolate and monitor the choice bias throughout the learning phase.

- Analyze strategy clusters: Cluster learning trajectories using a Dynamic Time-Warping (DTW) distance to identify whether they are "stationary" or "drifting," as these patterns significantly impact the stability of the learned boundary [16].
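One simple way to isolate a stimulus-independent bias is a logistic GLM in which the intercept captures the preference that remains when the stimulus is uninformative. The sketch below fits such a model per session with plain gradient ascent and summarizes drift as the spread of the intercepts. The session data, the two-parameter model, and the fitting settings are assumptions for illustration, not the GLM specification used in [16].

```python
import math
import statistics

def fit_choice_bias(stimuli, choices, lr=0.5, n_iter=5000):
    """Fit P(choice=1) = sigmoid(bias + slope * stimulus) by gradient ascent."""
    bias, slope = 0.0, 0.0
    n = len(stimuli)
    for _ in range(n_iter):
        grad_bias = grad_slope = 0.0
        for x, y in zip(stimuli, choices):
            p = 1.0 / (1.0 + math.exp(-(bias + slope * x)))
            grad_bias += y - p
            grad_slope += (y - p) * x
        bias += lr * grad_bias / n
        slope += lr * grad_slope / n
    return bias, slope

def make_session(right_counts, trials_per_level=10):
    """Expand per-stimulus counts of 'right' choices into trial-level data."""
    stimuli, choices = [], []
    for x, k in right_counts.items():
        stimuli += [x] * trials_per_level
        choices += [1] * k + [0] * (trials_per_level - k)
    return stimuli, choices

# Hypothetical sessions: the first leans toward 'right', the second is symmetric.
sessions = [make_session({-1: 3, 0: 7, 1: 9}), make_session({-1: 2, 0: 5, 1: 8})]
biases = [fit_choice_bias(s, c)[0] for s, c in sessions]
bias_variability = statistics.pstdev(biases)
print(biases, bias_variability)
```

In practice a dedicated statistics package would replace the hand-written optimizer; the point here is only that the intercept, tracked across sessions, gives a per-session bias estimate whose variability can then be related to boundary stability.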

FAQ 3: How can I improve my hybrid model's performance when clinical data is limited?

Biopharmaceutical and clinical settings are often data-limited due to the resource intensity of experiments [15]. A hybrid modeling paradigm is particularly advantageous here.

- Use a serial architecture: Model fragments of the knowledge-based system with data-driven models. This uses machine learning to fill specific gaps in your theoretical understanding [15].

- Incorporate reinforcement learning: Frame the decision process as a learning task. Models with parameters for learning rate, initial bias, and a choice-history parameter can capture how decisions are updated based on previous choices, which can inform long-term learning even with sparse data [16].
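The three parameters named above can be sketched as a small value-learning agent. This is a deliberately reduced illustration: stimulus dependence is omitted, and the class name, update rule, and parameter values are assumptions rather than the model fitted in [16].

```python
import math
from dataclasses import dataclass, field

@dataclass
class ChoiceHistoryLearner:
    learning_rate: float = 0.1   # speed of value updating
    initial_bias: float = 0.0    # stimulus-independent preference for "right"
    history_weight: float = 0.0  # > 0 favors repeating the previous choice
    q: dict = field(default_factory=lambda: {"left": 0.0, "right": 0.0})
    previous_choice: int = 0     # +1 right, -1 left, 0 before the first trial

    def p_right(self) -> float:
        """Probability of choosing 'right' on the next trial."""
        logit = (self.q["right"] - self.q["left"]
                 + self.initial_bias
                 + self.history_weight * self.previous_choice)
        return 1.0 / (1.0 + math.exp(-logit))

    def update(self, choice: str, reward: float) -> None:
        """Delta-rule update of the chosen action's value, then store the choice."""
        self.q[choice] += self.learning_rate * (reward - self.q[choice])
        self.previous_choice = 1 if choice == "right" else -1

learner = ChoiceHistoryLearner(learning_rate=0.5, history_weight=1.0)
p_before = learner.p_right()
learner.update("right", reward=1.0)
p_after = learner.p_right()
print(p_before, p_after)
```

A positive `history_weight` produces perseveration: after one rewarded "right" choice, the probability of repeating it rises both because the value increased and because of the history term.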

FAQ 4: What is a common pitfall when implementing a CDSS with hybrid components?

A major risk is alert fatigue from poorly implemented decision support, such as drug-drug interaction (DDI) alerts. Studies show high variability in how alerts are displayed (passive vs. active/disruptive) and a high level of irrelevant alerts, which can cause clinicians to ignore critical warnings [14].

- Mitigation Strategy: Follow curated, high-priority lists for alerts (e.g., from the US Office of the National Coordinator for Health Information Technology) and ensure alerts are targeted, relevant, and integrated seamlessly into the clinical workflow [14].

Experimental Protocols & Data

Table 1: Quantifying Learning Trajectories and Category Boundaries

Table summarizing key quantitative findings from mouse auditory categorization studies, illustrating the relationship between learning strategy and outcome [16].

| Metric | Average Value (±SEM) | Correlation with Boundary Variability (ρ) | p-value | Interpretation |
|---|---|---|---|---|
| Trials to Learning Criterion | 6844 ± 673 (N=19) | - | - | Task acquisition is a long-term process. |
| Initial Accuracy Asymmetry | 3.2% ± 30.3% (N=19) | - | 0.803 | No consistent initial category preference across subjects. |
| GLM Choice Bias Variability | 22.9% ± 11.1% of sessions (N=19) | 0.67 | 0.002 | Drift in choice bias predicts boundary instability. |
| Psychometric Slope Variability | - | 0.44 | 0.07 | Choice bias drift is not strongly linked to slope changes. |

Protocol 1: Auditory Categorization Task for Strategy Analysis

This protocol is used to study how individual learning strategies inform the categorization of ambiguous stimuli [16].

- Subjects: Mice (or other model organisms).

- Apparatus: A two-alternative forced-choice (2AFC) setup with a response wheel.

- Training Stimuli: Use extreme examples from two categories (e.g., low-frequency tones: 6–10 kHz; high-frequency tones: 17–28 kHz).

- Testing Stimuli: After reaching a performance threshold (e.g., 75% accuracy), introduce novel, ambiguous stimuli in an intermediate range (e.g., 10–17 kHz). These trials are not rewarded.

- Data Collection: Record all choices and response times over several weeks of training.

- Analysis:

- Isolate Choice Bias: Fit a Generalized Linear Model (GLM) to extract the stimulus-independent component of decision-making.

- Cluster Trajectories: Apply Dynamic Time-Warping (DTW) clustering to group individuals based on their choice bias drift over time.

- Correlate with Outcome: Correlate the variability in the GLM choice bias at the end of training with the variability of the psychometric category boundary across testing sessions.
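The trajectory-comparison step can be illustrated with a textbook dynamic-programming implementation of the DTW distance. The bias trajectories below are hypothetical, and the choice of clustering routine applied to the resulting distances is left open; this is a sketch of the distance computation, not the pipeline from [16].

```python
def dtw_distance(a, b):
    """Classic O(len(a) * len(b)) DTW with an absolute-difference step cost."""
    inf = float("inf")
    n, m = len(a), len(b)
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(cost[i - 1][j],      # repeat b[j-1]
                                    cost[i][j - 1],      # repeat a[i-1]
                                    cost[i - 1][j - 1])  # advance both
    return cost[n][m]

# Hypothetical per-session choice-bias trajectories for three subjects.
stationary = [0.0, 0.0, 0.0, 0.0, 0.0, 0.0]
drift_early = [0.0, 0.5, 1.0, 1.0, 1.0, 1.0]
drift_late = [0.0, 0.0, 0.0, 0.5, 1.0, 1.0]

same_shape = dtw_distance(drift_early, drift_late)
different_shape = dtw_distance(stationary, drift_early)
print(same_shape, different_shape)
```

The two drifting trajectories differ only in timing, so warping aligns them at zero cost, while the stationary trajectory stays far from both. A matrix of such pairwise distances can then be passed to a clustering method of choice.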

Table 2: Key Features of Knowledge-Based and Non-Knowledge-Based CDSS

Comparison of the two primary components integrated within a clinical decision support hybrid model [14].

| Feature | Knowledge-Based CDSS | Non-Knowledge-Based CDSS |
|---|---|---|
| Core Logic | Pre-programmed IF-THEN rules | AI, Machine Learning, Statistical Pattern Recognition |
| Basis | Literature-based, practice-based, patient-directed evidence | Learned from historical and real-time data |
| Explainability | High (Transparent logic) | Low ("Black box" nature) |
| Data Dependency | Lower (Relies on curated knowledge) | High (Requires large, high-quality datasets) |
| Common Use Cases | Drug-drug interaction alerts, clinical guideline adherence | Predictive risk stratification, complex pattern recognition |

Protocol 2: Framework for Developing a Hybrid Model for Biopharmaceutical Processes

A step-by-step guide for building a hybrid model, adaptable for various clinical and research applications [15].

- Define Model Purpose: Clearly specify the clinical or process question (e.g., "optimize drug-target interaction prediction").

- Leverage Existing Knowledge: Formalize available process knowledge or clinical guidelines into a knowledge-based framework (e.g., system differential-algebraic equations).

- Strategic Data Collection: Collect data strategically to cover the design space, acknowledging resource constraints. Pre-process data (e.g., text normalization, tokenization, lemmatization for textual data).

- Feature Extraction: Use techniques like N-Grams and Cosine Similarity to assess semantic proximity and extract meaningful features from complex data [17].

- Model Architecture Selection:

- Serial Architecture: Use a data-based model (e.g., a Random Forest or Logistic Regression) to model a specific, poorly understood fragment of the knowledge-based model. The output of the data-based component becomes an input for the knowledge-based equations.

- Parallel Architecture: Run knowledge-based and data-driven models simultaneously. Aggregate their predictions (e.g., via weighted average) to produce the final output.

- Implementation & Validation: Implement the model using appropriate programming environments (e.g., Python). Validate model performance against a hold-out test set and, where possible, through experimental or clinical confirmation.
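The difference between the serial and parallel architectures can be sketched in a few lines. Everything below is hypothetical: the growth equation, the linear "fitted" rate function, and the weighting are placeholders standing in for a real mechanistic model and a trained data-driven model.

```python
def data_driven_rate(temperature_c: float) -> float:
    """Stand-in for a fitted ML model (hypothetical linear fit)."""
    return 0.01 * temperature_c

def knowledge_based_step(biomass: float, rate: float, dt: float) -> float:
    """Mechanistic growth equation dX/dt = rate * X, one explicit Euler step."""
    return biomass + dt * rate * biomass

def serial_hybrid(biomass: float, temperature_c: float, dt: float) -> float:
    """Serial: the data-driven output becomes an input to the mechanistic equation."""
    rate = data_driven_rate(temperature_c)
    return knowledge_based_step(biomass, rate, dt)

def parallel_hybrid(pred_knowledge: float, pred_data: float,
                    weight_knowledge: float = 0.5) -> float:
    """Parallel: both models predict the same quantity; combine by weighted average."""
    return weight_knowledge * pred_knowledge + (1.0 - weight_knowledge) * pred_data

serial_prediction = serial_hybrid(biomass=1.0, temperature_c=30.0, dt=1.0)
parallel_prediction = parallel_hybrid(2.0, 4.0, weight_knowledge=0.25)
print(serial_prediction, parallel_prediction)
```

In the serial form the data-driven part replaces only the poorly understood fragment (here, the rate), so the mechanistic structure still constrains the output. In the parallel form the weight controls how much trust is placed in each model and would normally be tuned on validation data.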

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Categorization and Decision-Making Experiments

A list of key resources used in the featured experiments and their functions.

| Item | Function / Description | Example Use Case |
|---|---|---|
| Two-Alternative Forced Choice (2AFC) Setup | A behavioral apparatus where subjects must choose between two alternatives to report their decision. | Auditory or visual categorization tasks in model organisms [16]. |
| Generalized Linear Model (GLM) | A statistical model used to isolate and quantify the stimulus-independent components of decision-making, such as choice bias. | Analyzing behavioral data to track drift in category preference over time [16]. |
| Dynamic Time-Warping (DTW) Clustering | An algorithm that measures similarity between temporal sequences that may vary in speed, used to cluster learning trajectories. | Identifying subgroups of subjects ("Stationary" vs. "Drifting") based on their learning strategy [16]. |
| Reinforcement Learning Model | A computational framework that models how an agent learns to make decisions by maximizing cumulative reward. | Probing how choice-history and reward outcomes drive learning in categorization tasks [16]. |
| Cosine Similarity & N-Grams | Feature extraction techniques used in natural language processing to quantify semantic similarity between text passages. | Evaluating textual relevance and identifying drug-target interactions in drug discovery [17]. |
| Ant Colony Optimization (ACO) | An optimization algorithm used for feature selection, mimicking the behavior of ants seeking paths to food. | Optimizing the feature set for predictive models in drug discovery pipelines [17]. |
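As a minimal illustration of the N-gram and cosine-similarity entry above, the sketch below compares two short passages by their word bigrams. The example sentences are invented, and bigram overlap is only a surface-level proxy for semantic similarity; the approach in [17] may use different tokenization and weighting.

```python
import math
from collections import Counter

def word_ngrams(text: str, n: int = 2) -> Counter:
    """Count word n-grams after lowercasing and whitespace tokenization."""
    tokens = text.lower().split()
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))

def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

# Invented example passages.
first = word_ngrams("kinase inhibitor binds target")
second = word_ngrams("kinase inhibitor blocks target")
score = cosine_similarity(first, second)
print(score)
```

The two passages share one of three bigrams each, so the score reflects partial overlap. Character n-grams or TF-IDF weighting are common variations when exact word matches are too strict.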

Model Architecture Diagrams

[Diagrams not reproduced: Hybrid Model Structures; Decision Workflow Analysis]

FAQs: Core Concepts and Definitions

FAQ 1.1: What is the relationship between cognitive categorization and defining patient populations?

Cognitive categorization is a fundamental cognitive process involving the grouping of objects, concepts, or events based on shared characteristics to simplify understanding [1]. Applying this to healthcare, a patient population is a collection of individuals grouped by specific health conditions, demographics, or geographic features [18]. The relationship is foundational: the cognitive frameworks we use to categorize the world (e.g., classical, prototype theories) directly inform the methodologies for creating coherent and clinically useful patient groups. Effective patient segmentation uses categorization principles to divide a population into distinct groups with similar healthcare needs, characteristics, or behaviors, enabling tailored care delivery [19] [20].

FAQ 1.2: What are the primary limitations of current Patient Classification Systems (PCS)?

Current Patient Classification Systems often exhibit several key limitations [21]:

- Nursing-Centric Focus: They frequently fail to capture the contributions of interdisciplinary teams (e.g., physiotherapy, occupational therapy), which is critical in settings like rehabilitation.

- Inadequate Capture of Complexity: They systematically omit time-intensive, crucial services such as team-based care planning, patient coordination, and family education.

- Focus on Service Utilization: Many systems are designed primarily to predict service use and costs, rather than being grounded in patient-centered needs and clinical priorities [19]. This can lead to inaccurate workload assessments and inefficient resource allocation [21].

FAQ 1.3: How can a better understanding of categorization improve patient segmentation?

Moving beyond simplistic stratification requires insights from cognitive science and other disciplines [19]:

- From Cognitive Science: Adopting a "prototype" or "exemplar" approach can help form segments around patients with similar healthcare needs, rhythms of needs, and priorities, rather than just single diagnoses.

- From Marketing: Incorporating patient preferences and behaviors, not just clinical risks, can help design services that people are willing to engage with.

- From Operations Management: Applying "process thinking" ensures the segmentation logic aligns with efficient care pathways, minimizing waits and streamlining resources.

Troubleshooting Guides

Issue: Patient Segments Are Not Clinically Meaningful

Problem: The defined patient segments do not resonate with clinicians, fail to predict patient needs accurately, or are too broad to inform care model design.

Solution: Implement a segmentation logic that integrates multiple data types and is guided by clinical expertise.

Experimental Protocol: Developing a Clinically Meaningful Segmentation Framework

- Objective: To develop and validate a patient segmentation system that accurately reflects clinical complexity and patient needs for a rehabilitation hospital setting [21].

- Methodology:

- Stage 1: Systematic Scoping Review. Conduct a review to identify key components of Patient Classification Systems (PCS) from existing literature. Use frameworks like Arksey and O'Malley's and report via PRISMA-ScR guidelines [21].

- Stage 2: Structured Expert Panel. Convene a multidisciplinary panel including clinicians, administrators, and patients. Employ a modified Delphi technique to build consensus on a preliminary PCS framework, integrating evidence from Stage 1 with frontline clinical experience [21].

- Stage 3: Pilot Validation.

- Pilot Implementation: Apply the preliminary PCS in a live rehabilitation setting.

- Inter-rater Reliability: Assess using Cohen's Kappa to ensure different clinicians classify the same patient consistently.

- Criterion Validity: Test the PCS against established clinical tools like the Functional Independence Measure (FIM) or Barthel Index to ensure it measures what it intends to measure [21].

- Expected Outcome: A validated, context-specific PCS that enhances workload measurement accuracy and promotes equitable resource distribution.

Issue: Segmentation Fails to Inform Efficient Service Delivery

Problem: Segmentation identifies patient groups but does not lead to improved care workflows or resource allocation.

Solution: Shift from a segmentation based solely on patient risks to one that matches patient needs with a "production logic" for service delivery [19].

Experimental Protocol: Designing Service Lines Based on Patient Segments

- Objective: To redesign care workflows and resource allocation based on distinct patient segments to improve efficiency and outcomes [19].

- Methodology:

- Step 1: Define Segments by Production Logic. Adopt a segmentation model that groups patients based on the type of medical knowledge and care logic required. Example segments include [19]:

- Healthy persons

- Persons with incidental needs

- Persons with chronic conditions

- Persons with multiple health problems (often elderly)

- Persons needing precise elective interventions

- Step 2: Map Care Pathways. For each segment, diagram the ideal patient journey, specifying the required resources, key decision points, and responsible team members. The diagram below illustrates a generalized workflow for patient categorization and service allocation.

- Step 3: Implement and Monitor. Create separate service lines or "fast tracks" for each major segment (e.g., a dedicated clinic for chronic condition management). Monitor key metrics such as waiting times, patient outcomes, complications, and staff satisfaction [19].


- Expected Outcome: Streamlined patient flows, reduced waiting times, more efficient use of resources, and improved clinical outcomes.

[Diagram not reproduced: Patient Categorization and Care Pathway Workflow]

Data Presentation

Quantitative Data on Patient Segmentation

Table 1: Comparison of Patient Segmentation Approaches and Outcomes

| Segmentation Approach | Key Segmentation Variables | Number of Segments | Reported Outcomes | Key Limitations |
|---|---|---|---|---|
| Needs/Risk-Based (Traditional) [19] | Condition/diagnosis, age, service utilization, costs, frailty [19] | 4-20 segments typical (some systems have up to 269) [19] | Targets high-risk patients; Aims to reduce ED visits & hospital admissions [19] | Does not inherently inform service design; Often misses patient priorities [19] |
| Production Logic-Based [19] | Medical knowledge needed, patient's ability to self-manage, type of care required (e.g., elective, chronic) [19] | 7 segments proposed [19] | Improved medical outcomes, higher service quality, fewer complications, better resource efficiency [19] | Less focus on demographic or socioeconomic risk factors |
| Patient-Centered (e.g., CMS) [19] | Health prospects and patient priorities [19] | 8 segments proposed [19] | Aims for care that is safe, timely, effective, efficient, equitable, and patient-centered [19] | Requires deep understanding of patient goals beyond clinical data |
| High-Need, High-Cost Focus [20] | Multiple chronic conditions (3+), functional status, healthcare spending | Varies | Targets group with avg. spending >$21,000/year (4x avg. adult) to decrease costs [20] | Focusing on cost alone overlooks differing personal needs and characteristics [20] |

Table 2: Essential Research Reagent Solutions for Categorization Research

| Research Reagent / Tool | Function / Role in Research |
|---|---|
| Electronic Health Record (EHR) Data [20] | Primary data source for patient characteristics, diagnoses, service utilization, and costs used in data-driven segmentation. |
| 3M Clinical Risk Groups (CRGs) [20] | A population classification system that uses diagnosis, procedure, pharmaceutical, and functional status data to segment patients into 272 groups for risk analysis. |
| Johns Hopkins Adjusted Clinical Groups (ACGs) [20] | Offers a patient segmentation tool (Patient Need Groups - PNGs) that groups individuals based on specific health needs, characteristics, and behaviors. |
| Geographic Information Systems (GIS) [20] | Software that maps patient location data with community-level data on behaviors and health spending to create geographic health profiles. |
| Functional Independence Measure (FIM) [21] | A validated clinical tool used to assess patient disability and functional status, often used to establish the criterion validity of a new Patient Classification System. |

Advanced Analytical Protocols

Protocol: Validating a Novel Patient Classification System

This protocol details the rigorous validation process for a new Patient Classification System (PCS) as outlined in contemporary research [21].

Objective: To ensure a newly developed PCS is reliable, valid, and applicable for use in a specific healthcare setting (e.g., rehabilitation).

Methodology:

Pilot Implementation:

- Apply the preliminary PCS framework to a representative sample of patients in the target setting.

- Ensure multiple, independent raters (e.g., nurses, therapists) use the system to classify the same patients.

Reliability Testing:

- Metric: Inter-rater reliability using Cohen's Kappa (κ).

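- Procedure: Calculate Kappa to measure the level of agreement between raters beyond what would be expected by chance. Cohen's Kappa is defined for a pair of raters; with more than two raters, report pairwise values or use a multi-rater generalization such as Fleiss' Kappa. A high Kappa value indicates the classification criteria are clear and objective, leading to consistent application.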
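For two raters, Cohen's Kappa compares observed agreement with the agreement expected from each rater's category frequencies. The sketch below is a minimal implementation; the ratings and category labels are hypothetical and do not come from [21].

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's Kappa for two raters classifying the same patients."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must classify the same non-empty patient list.")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the two raters' marginal proportions, per category.
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1.0 - expected)

# Hypothetical care-need classifications for ten patients.
nurse = ["low", "low", "high", "high", "low", "high", "low", "high", "low", "high"]
therapist = ["low", "low", "high", "high", "low", "high", "high", "low", "low", "high"]
kappa = cohens_kappa(nurse, therapist)
print(round(kappa, 3))
```

In this example the raters agree on 8 of 10 patients, and chance agreement is 0.5, giving a Kappa of 0.6. Thresholds for what counts as acceptable agreement should be fixed in the validation plan before data collection.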

Validity Testing:

- Type: Criterion Validity.

- Procedure: Statistically compare the classifications or scores generated by the new PCS against those from established, gold-standard clinical assessment tools.

- Tools: The Functional Independence Measure (FIM) and the Barthel Index are examples of tools used to validate a PCS in a rehabilitation context [21]. A strong correlation provides evidence that the PCS is measuring the underlying construct of patient care needs accurately.
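Because classification levels are ordinal, a rank correlation is one reasonable way to quantify agreement with a reference instrument. The sketch below computes Spearman's rho with average ranks for ties. The PCS levels and FIM totals are hypothetical, and the choice of Spearman's rho is an assumption; [21] may specify a different statistic.

```python
import math

def average_ranks(values):
    """Rank values from 1..n, giving tied values their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0.0 or sy == 0.0:
        raise ValueError("Correlation is undefined for a constant sequence.")
    return cov / (sx * sy)

def spearman_rho(x, y):
    """Spearman's rho: Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(x), average_ranks(y))

# Hypothetical data: PCS care-need level (higher = more need) and FIM total
# (higher = more independent), so a strong negative correlation is expected.
pcs_level = [1, 2, 2, 3, 4, 4]
fim_total = [110, 95, 90, 70, 40, 35]
rho = spearman_rho(pcs_level, fim_total)
print(round(rho, 3))
```

The sign matters when interpreting the result: a PCS that scores need and an instrument that scores independence should correlate negatively if the PCS is valid.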

Significance: This validation protocol is critical for ensuring that the PCS does not just create categories, but that these categories are applied consistently (reliably) and reflect the true complexity of patient needs (validity), thereby ensuring trustworthy data for staffing and resource allocation [21].

Implementing Categorization Frameworks in Clinical Trial Design and Analysis

Frequently Asked Questions

Q1: What is the role of categorization in clinical trial design?

Categorization is a fundamental cognitive process used to structure key components of a trial, such as eligibility criteria and endpoints. By applying systematic categorization, researchers can minimize ambiguity, reduce bias, and ensure that the trial measures what it intends to. This creates a more robust and interpretable framework for screening participants and assessing outcomes [16] [22].

Q2: How can machine learning improve the classification of eligibility criteria?

Machine learning can automatically classify free-text eligibility criteria into structured semantic categories. This process uses natural language processing (NLP) to identify and tag terms with concepts from medical knowledge systems like the Unified Medical Language System (UMLS). One ensemble method that integrates multiple pre-trained models (BERT, RoBERTa, XLNet, etc.) achieved a high classification performance with an F1-score of 0.8169 [23] [24]. This automation enhances the consistency and efficiency of criteria review and patient pre-screening.

Q3: What is the difference between a clinical endpoint and a surrogate endpoint?

A clinical endpoint directly measures how a patient feels, functions, or survives (e.g., overall survival). A surrogate endpoint is an indirect measure (e.g., a biomarker like blood pressure) that is used to predict clinical benefit. Surrogate endpoints can accelerate trials, but they must be validated to ensure they reliably predict the true clinical outcome of interest [25] [26].

Q4: Why is endpoint adjudication necessary?

An independent Endpoint Adjudication Committee (also called a Clinical Events Committee) classifies clinical outcomes in a trial in a blinded and standardized manner. This process significantly reduces variability in event reporting across different trial sites and investigators, strengthening the overall quality and credibility of the trial data [22].

Q5: What is a common pitfall when defining eligibility categories?

A common pitfall is using task-dependent or manually defined categories that do not generalize. This can lead to inconsistency. A best practice is to use a semi-automated approach, like hierarchical clustering based on a shared semantic feature representation (e.g., UMLS semantic types), to induce standardized, generalizable categories from a large corpus of existing criteria [23].

Troubleshooting Guides

Problem: Inconsistent Application of Eligibility Criteria

Issue: Different researchers or trial sites interpret the same eligibility criterion differently, leading to an inconsistent study population.

Solution:

- Structured Categorization: Implement a pre-defined, standardized categorization system for criteria. Use the UMLS to annotate criteria with unambiguous semantic types [23].

- Automated Pre-Screening: Develop or utilize an automated classifier to map patient data to these structured criteria categories, reducing subjective interpretation [24].

- Centralized Review: For complex trials, consider a central committee to review eligibility decisions for borderline cases, similar to endpoint adjudication [22].

Problem: High Variability in Endpoint Assessment

Issue: Reported clinical endpoints (e.g., "disease progression") are subjective and vary between clinical investigators.

Solution:

- Blinded Adjudication: Establish an independent Clinical Endpoint Adjudication Committee. This committee, composed of experts blinded to treatment assignment, applies pre-defined, objective definitions to classify all potential endpoint events [22].

- Precise Definitions: In the study protocol, define endpoints with maximum objectivity. For example, instead of "disease progression," specify "≥20% increase in the sum of diameters of target lesions relative to the smallest sum on study, with an absolute increase of at least 5 mm, as per RECIST 1.1 criteria" [25].

Problem: Choosing an Inappropriate Primary Endpoint

Issue: The selected primary endpoint does not directly answer the main research question or is not acceptable to regulatory bodies.

Solution:

- Align with Objective: Ensure the primary endpoint is a direct measure of the trial's primary objective. For a survival benefit, Overall Survival (OS) is the gold standard [25].

- Validate Surrogates: If using a surrogate endpoint like Progression-Free Survival (PFS), ensure its use is justified by prior evidence showing a strong correlation with the ultimate clinical benefit (e.g., OS) in the specific disease and treatment context [26].

- Consult Guidelines: Refer to FDA guidelines and approved biomarker lists to select endpoints that are recognized as valid in your therapeutic area [26].

Experimental Protocols & Data

Protocol 1: Inducing Semantic Categories for Eligibility Criteria

This methodology describes a semi-automated process for creating a standardized taxonomy from free-text eligibility criteria [23].

- Semantic Annotation: Use a semantic annotator to parse a large corpus of eligibility criteria and identify all UMLS-recognizable terms.

- Ambiguity Resolution: Apply semantic preference rules to resolve ambiguity, selecting the most specific UMLS semantic type for each term.

- Feature Representation: Transform each criterion into a feature vector where the value for each semantic type is its normalized frequency within the criterion.

- Hierarchical Clustering: Apply a Hierarchical Agglomerative Clustering (HAC) algorithm to the feature matrix. Use the Pearson correlation coefficient to assess similarity between criteria and iteratively merge the most similar clusters.

- Category Induction: Analyze the resulting cluster tree (dendrogram) to induce a final set of semantic categories.
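The feature-representation and clustering steps can be sketched end to end on a toy corpus. The annotations below are hypothetical, average linkage is an assumption (the linkage criterion is not stated above), and a production pipeline would normally use an established clustering library rather than this hand-written loop.

```python
import math
from collections import Counter

def to_vector(semantic_types, vocabulary):
    """Normalized frequency of each semantic type within one criterion."""
    counts = Counter(semantic_types)
    total = sum(counts.values())
    return [counts[t] / total for t in vocabulary]

def pearson(x, y):
    """Pearson correlation; returns 0.0 if either vector is constant."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0

def cluster_similarity(c1, c2, vectors):
    """Average linkage: mean pairwise Pearson correlation between two clusters."""
    sims = [pearson(vectors[i], vectors[j]) for i in c1 for j in c2]
    return sum(sims) / len(sims)

def hac(vectors, n_clusters):
    """Bottom-up clustering: repeatedly merge the two most similar clusters."""
    clusters = [[i] for i in range(len(vectors))]
    while len(clusters) > n_clusters:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                sim = cluster_similarity(clusters[a], clusters[b], vectors)
                if best is None or sim > best[0]:
                    best = (sim, a, b)
        _, a, b = best
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]
    return clusters

vocabulary = ["Disease or Syndrome", "Pharmacologic Substance",
              "Laboratory Procedure", "Age Group"]
# Hypothetical semantic-type annotations for four eligibility criteria.
annotated = [
    ["Disease or Syndrome", "Disease or Syndrome", "Pharmacologic Substance"],
    ["Disease or Syndrome", "Pharmacologic Substance"],
    ["Laboratory Procedure", "Laboratory Procedure", "Age Group"],
    ["Laboratory Procedure", "Age Group"],
]
vectors = [to_vector(types, vocabulary) for types in annotated]
clusters = hac(vectors, n_clusters=2)
print(clusters)
```

Stopping at a fixed number of clusters is a simplification; cutting the dendrogram at a similarity threshold, followed by manual review of the resulting groups, is closer to the semi-automated induction described above.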

Table 1: Classification Performance of Different Machine Learning Models on Eligibility Criteria Text

| Classifier Name | Precision | Recall | F1-Score | Notes |
|---|---|---|---|---|
| Ensemble Model (BERT, etc.) [24] | 0.8229 | 0.8216 | 0.8169 | |
| J48 [23] | Not reported | Not reported | Not reported | Best performance |
| Bayesian Network [23] | Not reported | Not reported | Not reported | Best learning efficiency |
| Naïve Bayesian [23] | Not reported | Not reported | Not reported | |
| Nearest Neighbor (NNge) [23] | Not reported | Not reported | Not reported | |

Protocol 2: Endpoint Adjudication Workflow

This protocol outlines the steps for an independent committee to classify clinical endpoints [22].

- Charter Development: Before the trial begins, draft a charter detailing the adjudication process, committee composition, and precise, objective definitions for all endpoints of interest.

- Event Identification: The committee receives potential endpoint events from the trial's clinical investigators.

- Blinded Review: Committee physicians, blinded to the participant's treatment assignment and investigator's assessment, independently review the source documentation (e.g., medical records, lab reports, imaging).

- Initial Classification: Each reviewer classifies the event according to the pre-defined criteria in the charter.

- Consensus Building: If the initial classifications disagree, the reviewers meet to discuss the case and reach a consensus. If consensus cannot be reached, a third reviewer or the full committee makes the final determination.
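The classification and escalation logic in the last two steps can be expressed as a small decision function. The function name, arguments, and status labels are hypothetical; an actual charter would define the escalation path and documentation requirements in more detail.

```python
from typing import Optional, Tuple

def adjudicate(review_1: str, review_2: str,
               consensus: Optional[str] = None,
               escalation: Optional[str] = None) -> Tuple[Optional[str], str]:
    """Return (final classification, how it was reached) for one potential event."""
    if review_1 == review_2:
        return review_1, "independent agreement"
    if consensus is not None:
        return consensus, "consensus meeting"
    if escalation is not None:
        return escalation, "third reviewer or full committee"
    return None, "pending"

# Hypothetical event classifications.
agreed = adjudicate("myocardial infarction", "myocardial infarction")
resolved = adjudicate("myocardial infarction", "not an event", consensus="not an event")
escalated = adjudicate("stroke", "not an event", escalation="stroke")
open_case = adjudicate("stroke", "not an event")
print(agreed, resolved, escalated, open_case)
```

Recording how each final classification was reached, not just the classification itself, makes it possible to audit disagreement rates across reviewers and event types.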

Table 2: Common Clinical Endpoints in Oncology and Their Definitions [25]

| Endpoint | Abbreviation | Definition |
|---|---|---|
| Overall Survival | OS | The time from randomization until death from any cause. |
| Progression-Free Survival | PFS | The time from randomization until the first evidence of disease progression or death. |
| Time to Progression | TTP | The time from randomization until the first evidence of disease progression (deaths are censored). |
| Disease-Free Survival | DFS | The time from randomization until evidence of disease recurrence (used in adjuvant settings). |
| Event-Free Survival | EFS | The time from randomization until any predefined event (e.g., progression, treatment discontinuation, death). |
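The practical difference between OS, PFS, and TTP lies in which dates count as events and which are censored. The sketch below derives all three from the same participant record; the record structure and dates are hypothetical, and real statistical analysis plans specify additional censoring rules (for example, for missed assessments) that are not modeled here.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional, Tuple

@dataclass
class Participant:
    randomized: date
    progression: Optional[date]  # first documented progression, if any
    death: Optional[date]        # death from any cause, if any
    last_followup: date

def overall_survival(p: Participant) -> Tuple[int, bool]:
    """(days, event observed): the event is death from any cause."""
    end = p.death or p.last_followup
    return (end - p.randomized).days, p.death is not None

def progression_free_survival(p: Participant) -> Tuple[int, bool]:
    """(days, event observed): the event is progression or death, whichever is first."""
    events = [d for d in (p.progression, p.death) if d is not None]
    if events:
        return (min(events) - p.randomized).days, True
    return (p.last_followup - p.randomized).days, False

def time_to_progression(p: Participant) -> Tuple[int, bool]:
    """(days, event observed): only progression counts; deaths are censored."""
    if p.progression is not None:
        return (p.progression - p.randomized).days, True
    end = p.death or p.last_followup
    return (end - p.randomized).days, False

# Hypothetical participant who died without documented progression.
participant = Participant(randomized=date(2024, 1, 1), progression=None,
                          death=date(2024, 4, 10), last_followup=date(2024, 4, 10))
os_result = overall_survival(participant)
pfs_result = progression_free_survival(participant)
ttp_result = time_to_progression(participant)
print(os_result, pfs_result, ttp_result)
```

For this participant the death counts as an event for OS and PFS but is censored for TTP, which is exactly the distinction drawn in the table.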

Workflow Visualization

[Diagrams not reproduced: Eligibility Criteria Classification; Endpoint Adjudication Process]

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Resources for Categorization in Trial Design

| Tool / Resource | Function / Explanation | Example / Source |
|---|---|---|
| Unified Medical Language System (UMLS) | A comprehensive knowledge base that provides a standardized set of semantic types and concepts for representing biomedical meaning, essential for creating a common feature space for text analysis [23]. | U.S. National Library of Medicine |
| Pre-trained NLP Models (BERT, RoBERTa) | Deep learning models pre-trained on large text corpora that can be fine-tuned to perform specific classification tasks, such as categorizing eligibility criteria text with high accuracy [24]. | Hugging Face Transformers, Google AI |
| Hierarchical Agglomerative Clustering (HAC) | A "bottom-up" clustering algorithm used to induce a taxonomy or category structure from a set of data points without pre-defined labels, ideal for discovering inherent groups in eligibility criteria [23]. | Scikit-learn, SciPy |
| Clinical Endpoint Adjudication Charter | A formal document that pre-defines the objective criteria and standard operating procedures for an independent committee to classify clinical events, ensuring consistency and reducing bias [22]. | Internal Study Document |
| Cognitive Diagnostic Models (CDMs) | Psychometric models that provide fine-grained diagnostic information about the specific knowledge structures and cognitive processes required to answer test items; can be adapted to analyze cognitive demands of trial protocols [27]. | Research Software (e.g., R packages like CDM) |

Leveraging Categorization for Knowledge Organization and Inductive Reasoning

Troubleshooting Guide & FAQs

This technical support center addresses common experimental challenges in cognitive and pharmaceutical categorization research, providing evidence-based solutions grounded in current literature.

FAQ 1: My animal subjects are exhibiting high variability in learned category boundaries. What could be the cause?

- Issue: High inter-subject variability in the consistency of category boundaries for ambiguous stimuli.

- Investigation & Solution: This is a recognized phenomenon in individual learning trajectories. Research on auditory categorization in mice shows that variability in an animal's stimulus-independent choice bias during the final stages of training is correlated with instability in the learned category boundary.

- Action: Quantify the drift in choice bias during learning using a Generalized Linear Model (GLM). Studies found that subjects with greater variability in their GLM choice bias during training subsequently showed less stable category boundaries (Spearman's ρ = 0.67, p = 0.002) [16].

- Further Analysis: Consider that this drift may be driven by individual-specific strategies, such as a tendency for perseveration (repeating choices). Implementing a reinforcement learning model that includes a choice-history parameter can help quantify this effect [16].

FAQ 2: My computational model fits the categorization data well but fails to account for old-new recognition memory. Which model family should I use?

- Issue: An inability of a cognitive model to unify explanations for both categorization and recognition memory.

- Investigation & Solution: This is a classic challenge in formal modeling. Research using high-dimensional, real-world stimuli (e.g., rock images) has tested prototype, exemplar, and clustering models.

- Finding: The Generalized Context Model (GCM), an exemplar-based model, has been shown to provide a reasonable first-order account of both classification and old-new recognition data where other models fail. In its standard form, the GCM computes the probability of classifying item i into Category J as the summed similarity of i to all stored exemplars of J, relative to its summed similarity to the stored exemplars of all categories [28].

- Refinement: If the standard GCM fails to capture variability in hit rates for old items, an extended hybrid-similarity version that includes a boost for matching distinctive features can significantly improve performance [28].
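
To make the summed-similarity idea concrete, here is a simplified sketch of a GCM-style computation. It assumes an exponential-decay similarity over Euclidean distance with a single sensitivity parameter `c`, and it omits the attention weights, response biases, and the hybrid-similarity extension mentioned above. The function names and the toy coordinates are hypothetical.

```python
import math


def similarity(x, y, c=1.0):
    """Exponential-decay similarity over Euclidean distance in the stimulus space."""
    return math.exp(-c * math.dist(x, y))


def gcm_classify(item, exemplars, c=1.0):
    """Return {category: P(category | item)} from summed similarity.

    exemplars: dict mapping category label -> list of stored coordinate tuples.
    """
    summed = {
        cat: sum(similarity(item, e, c) for e in members)
        for cat, members in exemplars.items()
    }
    total = sum(summed.values())
    return {cat: s / total for cat, s in summed.items()}


def gcm_familiarity(item, exemplars, c=1.0):
    """Summed similarity to every stored exemplar; higher values favour an "old" response."""
    return sum(
        similarity(item, e, c)
        for members in exemplars.values()
        for e in members
    )


# Toy two-dimensional stimulus space (illustrative coordinates only).
stored = {"A": [(0.0, 0.0), (0.0, 1.0)], "B": [(5.0, 5.0), (5.0, 6.0)]}
print(gcm_classify((0.0, 0.5), stored))
print(gcm_familiarity((0.0, 0.0), stored), gcm_familiarity((10.0, 10.0), stored))
```

The same stored exemplars drive both outputs: the classification probability is a ratio of summed similarities, while the recognition signal is their total. That shared representation is what lets an exemplar model address both tasks.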

FAQ 3: How can I effectively organize drug information for a computational knowledge base to support reasoning?

- Issue: Difficulty in structuring pharmaceutical terminology for use in decision-support tools and automated reasoning.

- Investigation & Solution: Relying on a single, flat classification system is insufficient. Analysis of drug classification systems (e.g., NDF-RT, MeSH) recommends using a multi-axial, orthogonal categorical model.

- Recommended Categories: Structure your terminology around distinct, non-overlapping categories such as [29]:

- Chemical Structure

- Mechanism of Action (Cellular/Sub-cellular)

- Physiological Effect (Organ/System-level)

- Therapeutic Intent

- Benefit: This approach allows a single drug to be correctly classified from multiple perspectives (e.g., as a "piperazine," an "antifungal," and a "systemic drug"), enabling more flexible and powerful reasoning for tasks like allergy checking or treatment analysis [29].
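
One way such a multi-axial structure could be represented is sketched below. The axis names mirror the four categories listed above, and the class labels "piperazine" and "antifungal" come from the example in the text. Everything else (the `DrugConcept` type, the drug names, the other labels, and the `allergy_conflicts` helper) is hypothetical and does not reflect the actual NDF-RT or MeSH schema.

```python
from dataclasses import dataclass, field

# The four orthogonal axes named in the text.
AXES = (
    "chemical_structure",
    "mechanism_of_action",
    "physiological_effect",
    "therapeutic_intent",
)


@dataclass
class DrugConcept:
    name: str
    # Maps an axis name to the set of class labels the drug carries on that axis.
    classes: dict = field(default_factory=dict)

    def has_class(self, axis, label):
        if axis not in AXES:
            raise ValueError(f"unknown axis: {axis}")
        return label in self.classes.get(axis, set())


def drugs_in_class(drugs, axis, label):
    """Names of all drugs carrying `label` on the given axis."""
    return [d.name for d in drugs if d.has_class(axis, label)]


def allergy_conflicts(drugs, allergen_structure_class):
    """Drugs sharing a chemical-structure class with a known allergen."""
    return drugs_in_class(drugs, "chemical_structure", allergen_structure_class)


# Hypothetical entries for illustration only.
formulary = [
    DrugConcept("drug_a", {
        "chemical_structure": {"piperazine"},
        "therapeutic_intent": {"antifungal"},
    }),
    DrugConcept("drug_b", {
        "chemical_structure": {"example_structure_class"},
        "therapeutic_intent": {"antifungal"},
    }),
]
print(drugs_in_class(formulary, "therapeutic_intent", "antifungal"))
print(allergy_conflicts(formulary, "piperazine"))
```

Because each axis is stored independently, a therapeutic query and an allergy check can return different subsets of the same formulary without one classification overriding the other.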

Detailed Experimental Protocols

Protocol 1: Quantifying Individual Learning Strategies in Categorization

This protocol is adapted from studies investigating the relationship between learning trajectories and category boundary formation in mice [16].

1. Objective: To extract and model individual-specific strategies (like choice bias and perseveration) during category learning and correlate them with the stability of the learned category boundary.

2. Materials:

- Subjects (e.g., animal or human)

- Apparatus for a Two-Alternative Forced Choice (2AFC) task.

- Stimuli: Two distinct categories based on extreme examples of a sensory continuum (e.g., low 6-10 kHz vs. high 17-28 kHz tones).

- Testing Stimuli: Novel, ambiguous stimuli from the intermediate range of the continuum (e.g., 10-17 kHz tones).

3. Methodology:

- Training Phase:

- Train subjects to categorize stimuli from the two extreme categories until a proficiency threshold is reached (e.g., 75% accuracy).

- Record all choices and reaction times.

- Testing Phase:

- Intermix the ambiguous test stimuli (e.g., on 20% of trials) without providing feedback/rewards.

- Continue for multiple sessions to assess boundary stability.

- Data Analysis:

- Isolate Choice Bias: Use a Generalized Linear Model (GLM) to extract the stimulus-independent component of decision-making, the "GLM choice bias," across learning.

- Cluster Learning Trajectories: Apply Dynamic Time-Warping (DTW) clustering to the choice bias trajectories to identify common patterns (e.g., "stationary" vs. "drifting" biases).

- Model Perseveration: Fit a reinforcement learning "choice-history" model with a learning rate (α), overall bias (b), initial bias (Q₀), and a choice-history parameter (β) to quantify the tendency to repeat past choices.

- Correlate with Outcome: Calculate the variability of the category boundary across testing sessions. Correlate this with the variability of the GLM choice bias observed during the final training sessions.

Protocol 2: Testing Formal Cognitive Models on Real-World Categorization and Recognition

This protocol is based on research that evaluated cognitive models using a real-world, high-dimensional domain [28].

1. Objective: To compare the ability of prototype, exemplar, and clustering models to account for both classification and old-new recognition memory of complex stimuli.

2. Materials:

- Participants.

- Stimuli: A large set of images from real-world categories (e.g., 540 images of igneous, metamorphic, and sedimentary rocks).

- Computer-based experiment software (e.g., jsPsych [28]).

3. Methodology:

- Learning Phase: Participants classify a large set of training instances into the target categories with feedback.

- Test Phase: Participants complete two tasks:

- Classification: Categorize both old (training) and novel transfer items.

- Old-New Recognition: Judge whether each item in the test phase was presented during training ("old") or is new.

- Model Fitting:

- Derive a psychological stimulus space, often using multidimensional scaling (MDS) on similarity judgments or existing feature data.

- Fit the following models to the individual-trial data:

- Prototype Model: Assumes categorization is based on distance to the central tendency of each category.

- Exemplar Model (GCM): Assumes categorization is based on the summed similarity to all stored exemplars in each category.

- Clustering Model: Assumes categories are represented by multiple clusters or subgroups.

- Model Evaluation: Assess models based on their ability to simultaneously account for patterns in both classification accuracy and recognition hit/false-alarm rates.
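
As a hedged illustration of the model-fitting step, the sketch below implements a bare-bones prototype model and a trial-level log-likelihood that could be used to compare it with other models. It assumes coordinates from an MDS-style stimulus space, the category centroid as the prototype, and exponential-decay similarity with one sensitivity parameter `c`. Parameter estimation and the clustering model are omitted, and all names and data are hypothetical.

```python
import math


def prototype(members):
    """Central tendency (coordinate-wise mean) of a category's training items."""
    dims = len(members[0])
    return tuple(sum(m[d] for m in members) / len(members) for d in range(dims))


def prototype_classify(item, training, c=1.0):
    """Return {category: P(category | item)} from similarity to each prototype.

    training: dict mapping category label -> list of coordinate tuples.
    """
    sims = {
        cat: math.exp(-c * math.dist(item, prototype(members)))
        for cat, members in training.items()
    }
    total = sum(sims.values())
    return {cat: s / total for cat, s in sims.items()}


def log_likelihood(predict, trials):
    """Sum of log P(observed response) over individual trials.

    predict: function mapping an item to {category: probability}.
    trials: list of (item, observed_category) pairs.
    """
    return sum(math.log(predict(item)[cat]) for item, cat in trials)


# Toy training set and responses (illustrative only).
training = {"A": [(0.0, 0.0), (2.0, 0.0)], "B": [(10.0, 0.0), (12.0, 0.0)]}
trials = [((1.0, 0.0), "A"), ((11.0, 0.0), "B"), ((5.0, 0.0), "A")]
print(log_likelihood(lambda item: prototype_classify(item, training), trials))
```

Any model that exposes the same `predict` interface can be scored with `log_likelihood` on the same trials, which gives a common basis for comparison before penalizing for the number of free parameters.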

Signaling Pathways and Workflow Visualizations

Diagram 1: Categorical Reasoning in Medical Diagnosis

This diagram visualizes the dual-process theory of clinical reasoning as applied in a pharmaceutical context [30].

Diagram 2: Multi-Axis Drug Categorization Model

This diagram illustrates the orthogonal axes for organizing pharmaceutical terminology as per the NDF-RT reference model [29].

Diagram 3: Exemplar Model of Categorization & Recognition

This workflow depicts the process of the Generalized Context Model (GCM) for handling both categorization and recognition tasks [28].

Research Reagent Solutions

The following table details key resources used in the featured cognitive categorization experiments.

| Research Reagent / Material | Function in Experiment |
|---|---|
| Two-Alternative Forced Choice (2AFC) Apparatus | Behavioral setup for training subjects (e.g., mice) to associate sensory stimuli with specific category responses, often involving a wheel-turn or nose-poke response [16]. |
| Auditory Stimulus Sets (Extreme & Ambiguous) | Used to define categories and probe boundaries. Typically includes two non-overlapping sets of stimuli from the extremes of a continuum (e.g., 6-10 kHz and 17-28 kHz tones) and a set of intermediate, ambiguous stimuli (e.g., 10-17 kHz) for testing [16]. |
| GABA-A Receptor Agonist (e.g., Muscimol) | Pharmacological agent for reversible inactivation of specific brain regions (e.g., Auditory Cortex) to establish their causal role in the categorization task [16]. |
| Real-World Category Stimuli (e.g., Rock Images) | High-dimensional, ecologically valid stimuli used to test the generalizability of cognitive models beyond simple lab stimuli. A published set includes 540 images across categories like igneous, metamorphic, and sedimentary [28]. |
| Multidimensional Scaling (MDS) Software | Analytical tool for deriving a psychological feature space from similarity judgments, which serves as the input for formal cognitive models like the GCM [28]. |
| Cognitive Diagnostic Models (CDMs) | Statistical psychometric models (e.g., G-DINA) used to analyze the cognitive processes and attributes (e.g., levels of Bloom's Taxonomy) measured by test items [27]. |

Troubleshooting Guides

Guide 1: Resolving Biomarker Validation and Qualification Issues

Problem: Inconsistent biomarker results are affecting trial participant stratification.

| Problem Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Insufficient Analytical Validation | 1. Check assay performance characteristics (sensitivity, specificity). 2. Review precision data across multiple runs and operators. [31] | Establish a fit-for-purpose validation, prioritizing precision and accuracy before optimizing for sensitivity. [32] [31] |
| Unclear Context of Use (COU) | 1. Review the biomarker's stated COU document. 2. Confirm the measured parameter aligns with the trial's specific eligibility question (e.g., diagnostic vs. predictive). [33] | Formally define the COU. A biomarker qualified for one COU (e.g., monitoring) cannot be assumed valid for another (e.g., diagnostic). [33] |
| Variable Pre-Analytical Handling | 1. Audit sample collection, processing, and storage protocols. 2. Check for inconsistencies in sample matrix (e.g., plasma vs. serum). [33] [31] | Implement harmonized, standardized sample processing workflows across all trial sites to minimize pre-analytical variability. [31] |

Problem: Integrating novel multi-component biomarkers into established trial frameworks.

| Problem Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| High-Dimensional Data Complexity | 1. Evaluate the integration method for different data types (e.g., radiomic, genomic, clinical). 2. Assess if the model is biased towards the largest "omic" dataset. [34] | For smaller cohorts, use a multiomic graph approach that combines constituent graphs from each data type rather than simple data concatenation. [34] |
| Lack of Standardized Cutoffs | 1. Review the evidence for the chosen threshold (e.g., for a continuous biomarker). 2. Check if the threshold is brand-agnostic and performance-based. [35] [36] | Adopt a performance-based approach. For example, use thresholds like ≥90% sensitivity and ≥75% specificity for triaging, as recommended in clinical guidelines. [35] [36] |
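
The performance-based check in the last row can be sketched as a small calculation. The thresholds (at least 90% sensitivity and at least 75% specificity) are those quoted in the table. The rule that values at or above the cutoff count as positive, the function names, and the toy data are assumptions made for illustration.

```python
def sensitivity_specificity(values, labels, cutoff):
    """Sensitivity and specificity of the rule `value >= cutoff` means positive.

    values: continuous biomarker measurements.
    labels: reference-standard status (1 = condition present, 0 = absent).
    """
    tp = sum(1 for v, y in zip(values, labels) if y == 1 and v >= cutoff)
    fn = sum(1 for v, y in zip(values, labels) if y == 1 and v < cutoff)
    tn = sum(1 for v, y in zip(values, labels) if y == 0 and v < cutoff)
    fp = sum(1 for v, y in zip(values, labels) if y == 0 and v >= cutoff)
    return tp / (tp + fn), tn / (tn + fp)


def meets_triage_thresholds(sensitivity, specificity, min_sens=0.90, min_spec=0.75):
    """True when both performance targets quoted in the table are met."""
    return sensitivity >= min_sens and specificity >= min_spec


# Toy cohort: five reference-positive and four reference-negative samples.
values = [0.9, 0.8, 0.7, 0.6, 0.2, 0.1, 0.3, 0.4, 0.7]
labels = [1, 1, 1, 1, 1, 0, 0, 0, 0]
sens, spec = sensitivity_specificity(values, labels, cutoff=0.5)
print(sens, spec, meets_triage_thresholds(sens, spec))
```

Because the check depends only on measured performance against a reference standard, the same function applies to any assay or vendor, which is the point of a brand-agnostic threshold.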

Guide 2: Addressing Biomarker-Based Eligibility Criteria Challenges

Problem: Low patient accrual due to overly restrictive biomarker-driven eligibility.

| Problem Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Overly Stringent Biomarker Thresholds | 1. Compare eligibility criteria with real-world patient biomarker values. 2. Determine if thresholds are based on clinical necessity or arbitrary standards. [37] | Simplify and harmonize criteria. Justify the exclusion of patient subgroups (e.g., those with ECOG Performance Status 2) based on available safety/efficacy data. [37] |
| Inflexible Biomarker Testing Modalities | 1. Analyze screen failure rates due to tissue sample unavailability. 2. Review if blood-based biomarkers are an acceptable alternative. [37] | Encourage flexibility in biologic material source (e.g., allow peripheral blood instead of archival tissue) where scientifically feasible. [37] |

Frequently Asked Questions (FAQs)

FAQ 1: What is the critical difference between a prognostic and a predictive biomarker?

- Prognostic Biomarkers provide information on the likely course of the disease (e.g., recurrence, progression) in an untreated individual. They indicate the intrinsic aggressiveness of the disease. [38]

- Predictive Biomarkers identify individuals who are more or less likely to respond to a specific therapeutic intervention. They inform treatment selection. [39] [38] For example, in NSCLC, EGFR mutation status is a predictive biomarker for response to EGFR inhibitors like gefitinib. [38]

FAQ 2: What is the difference between biomarker validation and qualification?

- Analytical Validation is the process of establishing that the performance characteristics of an assay (e.g., its sensitivity, specificity, and precision) are acceptable for its intended use. It answers: "Does the test measure the biomarker accurately and reliably?" [32]

- Biomarker Qualification is a formal regulatory process through which a biomarker is evaluated for a specific Context of Use (COU). It answers: "Can we rely on the biomarker interpretation to support drug development and regulatory decisions in the stated COU?" [33] [32]

FAQ 3: Our team discovered a novel biomarker. What is the regulatory pathway for its qualification?

The FDA's Biomarker Qualification Program involves a collaborative, three-stage submission process: [33]

- Stage 1: Letter of Intent (LOI) – Submit initial information on the biomarker, the unmet drug development need, and the proposed Context of Use.

- Stage 2: Qualification Plan (QP) – If the LOI is accepted, submit a detailed proposal for biomarker development to address knowledge gaps.

- Stage 3: Full Qualification Package (FQP) – If the QP is accepted, submit a comprehensive compilation of supporting evidence for the FDA's final qualification decision. [33]

FAQ 4: What are the minimum performance characteristics for a blood-based biomarker to be used in a specialized clinical setting?