Cognitive Interviewing in Research: Advanced Techniques for Validating Patient-Reported Outcomes and Survey Instruments


Abstract

This comprehensive guide explores cognitive interviewing methodology for researchers and drug development professionals seeking to improve the validity and reliability of clinical outcome assessments, patient-reported outcomes, and research surveys. Moving from foundational principles to advanced applications, the article details how to design and conduct cognitive interviews, analyze qualitative data, and troubleshoot common problems. It provides evidence-based strategies for optimizing questionnaire design in clinical trials and global health research, reducing measurement error, and ensuring regulatory alignment through rigorous pre-testing methods.

The Science Behind Cognitive Interviewing: Building Valid Research Instruments from the Ground Up

Cognitive interviewing is a qualitative research method used to evaluate survey questions and other stimuli by examining respondents' underlying thought processes [1] [2]. In clinical and scientific settings, this methodology has evolved beyond simple question testing to become an essential tool for ensuring the validity and reliability of patient-reported outcomes, clinical assessments, and research instruments [3]. The core premise involves administering draft survey questions while collecting additional verbal and non-verbal data to understand how individuals interpret, process, and formulate responses to these questions [4] [2]. For drug development professionals and researchers, this method provides critical insight into response error and whether a question truly measures what it intends to measure, thereby directly impacting the quality of scientific data [1].

Contemporary applications in clinical outcome assessment (COA) demonstrate this evolution, where cognitive interviewing is now a recommended best practice to improve the psychometric properties of instruments during development and validation [3]. By identifying problems respondents have in understanding and answering draft questionnaire items, researchers can revise items to improve comprehension and response accuracy before fielding studies, ultimately strengthening the scientific rigor of data collection in clinical trials and health research.

Core Principles and Methodological Framework

Key Elements of the Cognitive Interview

A cognitive interview study is characterized by several defining features. Researchers select participants based on specific desired qualities or experiences (purposive sampling) rather than through random selection, as the primary goal is problem identification rather than estimation or causal inference [1]. Study samples are typically small, often ranging from 20 to 50 respondents, though some protocols suggest starting with as few as 8-15 interviews per round of testing [4] [1]. The methodology employs specialized techniques to collect and analyze qualitative data—information, ideas, and observations that cannot be adequately represented numerically [1].

The interview itself consists of four key elements working in tandem [2]:

- Administration of the survey question in a format mirroring the intended final context (e.g., read aloud for interviewer-administered surveys).

- Participant observation, where the interviewer notes non-verbal cues like hesitation or puzzled expressions.

- The think-aloud technique, which encourages participants to verbalize their thoughts as they answer.

- Interviewer probing, using semi-structured questions to delve deeper into the respondent's cognitive process.

Probing: The Heart of the Methodology

Probing techniques form the investigative core of cognitive interviewing, designed to illuminate the respondent's mental processes. There are three primary approaches to administering these probes, each with distinct advantages and limitations [4]:

- Concurrent Probing: Asking probes immediately after a question is answered. This captures real-time reactions but interrupts the natural questionnaire flow and may condition participants to overthink.

- Retrospective Probing: Asking probes after completing a questionnaire section or the entire survey. This provides a more authentic respondent experience without interruption, but it relies on memory, which may fade.

- Think-Aloud Probing: Participants continuously verbalize their thoughts as they answer. This offers direct insight into comprehension and decision-making, but it can feel unnatural and burdensome for participants, and the resulting data can be difficult to interpret.

Beyond their timing, probes can be categorized by their design and purpose. Scripted probes are predetermined and focus on testing specific hypotheses about potential respondent challenges. In contrast, spontaneous probes are developed in real-time based on active listening and observation of non-verbal cues [4].

Table 1: Cognitive Probing Strategies and Their Applications

| Probe Type | Primary Function | Example Questions | Research Context |
|---|---|---|---|
| Comprehension | Understand question interpretation | "What does the term '__' mean to you?" [4] | Testing medical terminology in patient questionnaires. |
| Recall | Explore memory retrieval | "How did you remember that event?" [1] | Evaluating questions about symptom frequency. |
| Judgment | Assess decision certainty | "How certain are you of your answer?" [4] [1] | Gauging confidence in self-reported health status. |
| Response | Examine answer selection | "How did you pick an answer?" [1] | Testing scales for quality of life or pain levels. |
| General/Spontaneous | Clarify observed difficulties | "You paused; what were you thinking?" [4] | Addressing unanticipated issues in question understanding. |

Application Notes: Protocols for Research Methodology

Experimental Workflow for Cognitive Interviewing

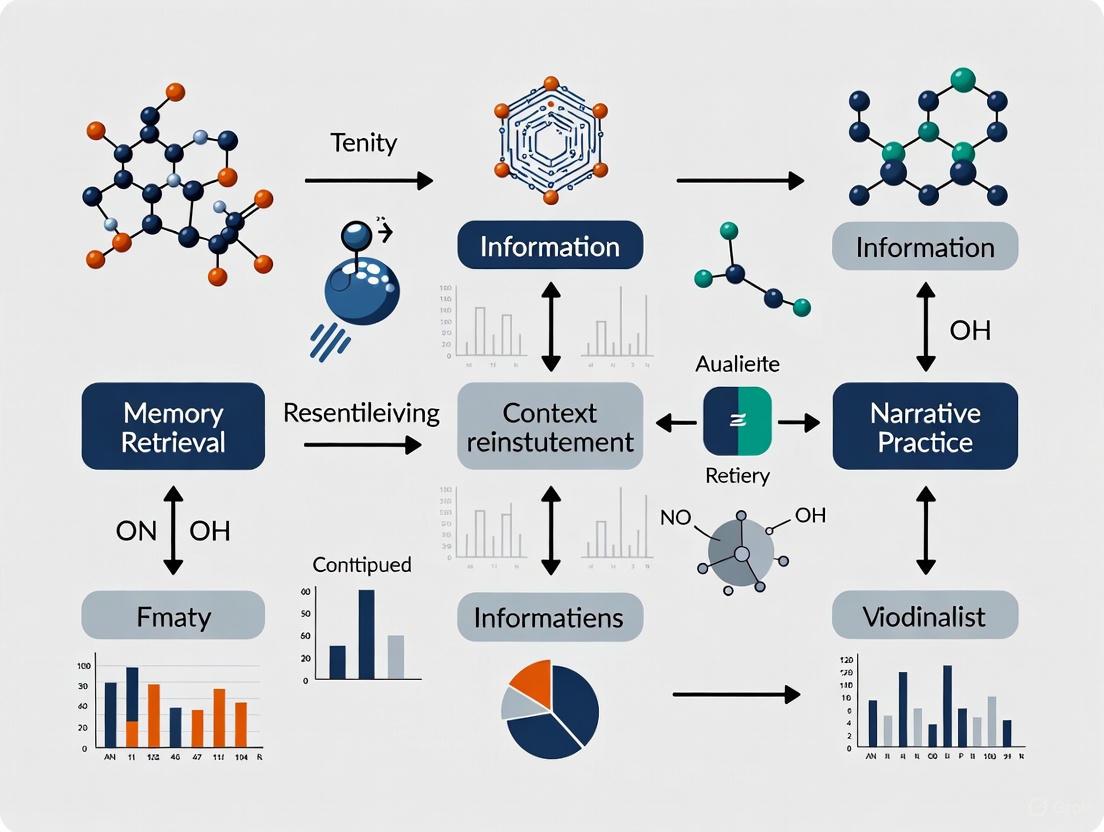

The following diagram visualizes the standard workflow for planning, conducting, and analyzing a cognitive interview study, illustrating the iterative nature of the process.

Detailed Methodological Protocol

Phase 1: Pre-Interview Planning and Design

- Objective Definition: Clearly state the purpose of testing, focusing on specific questions, concepts, or potential problem areas. This often involves testing new questions, borrowed questions, revisions to existing items, or translations [4] [3].

- Protocol Development: Create a structured interview guide containing the draft survey questions and scripted probes. These probes should target specific cognitive stages (comprehension, recall, judgment, response) and be based on pre-defined hypotheses about how questions might fail [4] [3].

- Participant Recruitment: Employ purposive sampling to recruit 8-15 participants per round of testing who represent the target population for the survey (e.g., patients with a specific condition for a clinical outcome assessment) [4] [1]. For heterogeneous populations, multiple testing rounds with different subgroups may be necessary.

- Interviewer Training: Train researchers in active listening techniques, the principles of questionnaire design, and how to administer probes without biasing the participant. Interviewers must avoid providing clarifications to questions unless specified by the protocol [4] [2].

Phase 2: Interview Execution

- Administration: Present the survey questions to the participant in a format as close as possible to the final mode (e.g., read aloud, on a screen) [2].

- Observation and Data Collection: Carefully observe and note non-verbal cues (e.g., hesitation, confusion). Based on the chosen approach, employ concurrent, retrospective, or think-aloud techniques to gather data on the participant's thought process [4] [2].

- Probing: Utilize both the pre-defined scripted probes and spontaneous probes that arise from active listening. The interviewer's role is to facilitate, not lead, the participant's explanation [4].

Phase 3: Analysis and Reporting

Analysis is a systematic, multi-stage process that often occurs concurrently with data collection [1]. The goal is to identify patterns and themes related to question performance problems.

- Level 1 - Conducting Interviews: The initial stage of analysis begins with the first interview, as early findings can inform subsequent sessions.

- Level 2 - Summarizing Interview Notes: Review and summarize notes and recordings from each individual interview.

- Level 3 - Comparing Across Respondents: Compare summaries to identify common problems and thematic patterns in how different respondents interpret and answer the same question.

- Level 4 - Comparing Across Groups: If applicable, compare findings across different participant subgroups (e.g., by age, education, disease severity).

- Level 5 - Drawing Conclusions: Synthesize findings to make specific, evidence-based recommendations for question revision, retention, or deletion [1].

This process is often iterative, with revised questions undergoing further rounds of testing to verify that the identified issues have been resolved [4].
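Levels 3 and 4 of this framework are essentially tallies over the per-interview summaries, so they can be sketched in a few lines of Python. The record layout, the subgroup labels, and the problem notes below are illustrative assumptions, not part of the cited framework; real analyses rest on the analyst's qualitative judgment, which a count can only support.

```python
from collections import defaultdict


def level3_by_item(summaries):
    """Level 3: for each item, count distinct respondents with any noted problem."""
    affected = defaultdict(set)
    for s in summaries:
        if s["problem"]:
            affected[s["item"]].add(s["participant"])
    return {item: len(people) for item, people in affected.items()}


def level4_by_group(summaries):
    """Level 4: for each (item, group), return
    (respondents with a problem, respondents interviewed)."""
    interviewed = defaultdict(set)
    affected = defaultdict(set)
    for s in summaries:
        key = (s["item"], s["group"])
        interviewed[key].add(s["participant"])
        if s["problem"]:
            affected[key].add(s["participant"])
    return {k: (len(affected[k]), len(interviewed[k])) for k in interviewed}


# Hypothetical Level 2 summaries: one record per participant per item.
# "problem" is None when the interviewer noted no difficulty.
summaries = [
    {"participant": "P1", "group": "lower_literacy", "item": "Q1", "problem": "term unclear"},
    {"participant": "P2", "group": "lower_literacy", "item": "Q1", "problem": "term unclear"},
    {"participant": "P3", "group": "higher_literacy", "item": "Q1", "problem": None},
    {"participant": "P4", "group": "higher_literacy", "item": "Q1", "problem": None},
    {"participant": "P1", "group": "lower_literacy", "item": "Q2", "problem": None},
    {"participant": "P3", "group": "higher_literacy", "item": "Q2", "problem": "recall period ambiguous"},
]

by_item = level3_by_item(summaries)
by_group = level4_by_group(summaries)
print(by_item)
print(by_group)
```

In this invented data, the problem with Q1 is confined to one subgroup, which is the kind of pattern Level 4 is meant to surface before conclusions are drawn at Level 5.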

The Researcher's Toolkit: Essential Materials and Reagents

Table 2: Key Research Reagent Solutions for Cognitive Interviewing

| Item | Function & Application | Specification Notes |
|---|---|---|
| Interview Protocol | Serves as the experimental script, ensuring consistency across interviews. Contains draft questions and pre-planned probes. | Should be flexible enough to allow for spontaneous probing. Must be approved as part of the study design [3] [2]. |
| Participant Recruitment Matrix | Defines the purposive sample required to adequately test the survey instrument. | Targets individuals who "share characteristics of interest to the survey" [4]. For clinical tools, this means patients with the relevant condition. |
| Trained Interviewers | The human instrument responsible for data collection. They administer questions, observe behavior, and ask probes. | Requires training in active listening, questionnaire design principles, and unbiased probing techniques [4] [3]. |
| Data Recording Equipment | Captures the full interaction (audio/audio-visual) for accurate analysis and review. | Essential for retrospective analysis and verifying interpretations. Requires participant consent [2]. |
| Coding Framework | A structured system for categorizing and analyzing qualitative data from interview transcripts and notes. | Often developed using a grounded theory approach, where codes and themes emerge directly from the data [1]. |
| Analysis Log | A document for tracking the analytic process through the five levels of analysis, from individual interviews to final conclusions. | Provides an audit trail, enhancing the transparency and rigor of the methodology [1]. |

Cognitive interviewing represents a sophisticated methodology that extends far beyond superficial question testing. By providing a systematic framework for investigating the cognitive mechanisms underlying survey response, it allows researchers in drug development and clinical science to preemptively identify and mitigate sources of response error. The rigorous application of this method—through careful planning, skilled interviewing, and structured analysis—directly enhances the validity of clinical outcome assessments and other critical research instruments [3].

Integrating cognitive interviewing into the research methodology toolkit ensures that the data collected in subsequent quantitative studies is built upon a foundation of validated and well-understood questions. This is not merely a pre-testing step, but a fundamental practice for ensuring that the voices of patients and research participants are accurately measured, interpreted, and understood, thereby strengthening the overall integrity of scientific inquiry.

In the rigorous fields of clinical research and drug development, the validity of data hinges on a deceptively simple premise: that research participants interpret and respond to assessment questions exactly as researchers intend. Cognitive interviewing serves as a critical methodological bridge to ensure this premise holds true, directly addressing the gap between researcher intent and participant interpretation that can compromise data integrity [5]. This qualitative technique is systematically employed to evaluate and refine clinical outcome assessments (COAs), patient-reported outcomes (PROs), and other data collection instruments by identifying problems respondents have in understanding and answering draft questionnaire items [3] [6].

Without cognitive interviewing, surveys risk significant measurement error by including questions that respondents find incomprehensible, cannot accurately answer, or interpret in unintended ways [5]. This is particularly crucial in global drug trials where surveys may involve translation or are developed by researchers who differ significantly from the patient population in terms of socio-demographic characteristics, worldview, or cultural background [5]. The technique has evolved from cognitive psychology and survey research in the 1980s to become a recommended best practice in COA development and validation, now widely employed by government agencies and research institutions to ensure the reliability of collected data [5] [4].

Core Principles and Theoretical Framework

Cognitive interviewing examines the four key mental processes respondents use when answering survey questions: comprehension of the question, information retrieval from memory, judgment and evaluation of the retrieved information, and response selection [1] [2]. By probing these cognitive stages, researchers can identify precisely where participants struggle with questionnaire items and make targeted revisions to improve measurement accuracy.

The methodology is particularly valuable for revealing "hidden" problems that researchers may not anticipate, including issues with word choice, syntax, sequencing, sensitivity, response options, and resonance with local worldviews and realities [5]. For example, in developing a questionnaire assessing parental understanding of preterm birth concepts, cognitive interviews revealed that multiple participants interpreted the phrase "at risk" as indicating a certain outcome rather than a potential outcome, leading to a revision that included a concrete comparison group for clarity [6].

Table 1: Cognitive Processes Assessed in Cognitive Interviews

| Cognitive Process | Description | Example Probe Questions |
|---|---|---|
| Comprehension | How participants interpret the question and specific terms | "What does the term [X] mean to you?" "Can you rephrase the question in your own words?" |
| Information Retrieval | How participants recall or access needed information | "How did you remember that?" "Was that difficult to recall?" |
| Judgment | How participants evaluate and weigh retrieved information | "How sure are you about that?" "What factors did you consider?" |
| Response Selection | How participants map their answer to provided options | "How did you pick an answer?" "Were the response options clear?" |

Applications in Clinical Research and Drug Development

Cognitive interviewing provides particular value in pharmaceutical research and healthcare studies where precise measurement is critical. The method is extensively used in the development and validation of Clinical Outcome Assessments (COAs), which are essential endpoints in clinical trials [3]. These include patient-reported outcomes (PROs), observer-reported outcomes (ObsROs), and clinician-reported outcomes (ClinROs) that measure how patients feel or function in relation to their health condition and treatment.

In practice, cognitive interviews help ensure that COA instruments accurately capture the patient experience without measurement distortion. For instance, when testing a questionnaire on respectful maternity care in rural India, researchers discovered that hypothetical questions and Likert scales were interpreted in unexpected ways [5]. Some participants answered "no" to whether they would return to the same facility for a future delivery not because of dissatisfaction with care, but because they had no intention of having more children. Others avoided engaging with Likert scales entirely, responding in dichotomous terms despite various visual aids, revealing a fundamental mismatch between the response format and participants' cognitive frameworks [5].

The methodology is equally crucial when adapting existing instruments for new populations or translating them between languages, helping researchers identify culturally specific interpretations that might otherwise go unnoticed [5]. This application is vital for multinational clinical trials where measurement invariance across diverse patient populations is essential for valid cross-cultural comparisons.

Experimental Protocol: Implementing Cognitive Interviews

Protocol Development and Planning

The cognitive interview process begins with careful protocol development that defines the scope, objectives, and methodology. Researchers must first determine which questionnaire items require testing, typically focusing on new questions, borrowed questions, revisions to existing items, questions asked of different populations, or translated items [4]. The protocol should specify whether concurrent, retrospective, or think-aloud probing will be used, and include both scripted and spontaneous probes [4] [6].

An essential component is developing a comprehensive interview guide containing the questionnaire items to be tested followed by probing questions [6]. Scripted probes for each item ensure standardization across interviews, while allowing flexibility for spontaneous follow-up questions based on participant responses. The research team should carefully design and sequence the interview guide to ensure that probes on earlier items do not contaminate participant interpretation of later items [6].
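One way to picture such a guide is as a small data structure that pairs each draft item with its scripted probes, tagged by cognitive stage. The following is a minimal sketch only: the class names, the sample item, and the check for uncovered stages are assumptions made for illustration, not a format prescribed by the cited sources.

```python
from dataclasses import dataclass, field

# The four cognitive stages named in the text.
STAGES = ("comprehension", "recall", "judgment", "response")


@dataclass
class Probe:
    stage: str  # one of STAGES
    text: str   # wording read to the participant

    def __post_init__(self):
        if self.stage not in STAGES:
            raise ValueError(f"unknown stage: {self.stage}")


@dataclass
class GuideItem:
    item_id: str   # hypothetical identifier, e.g. "Q1"
    question: str  # draft questionnaire wording
    probes: list = field(default_factory=list)

    def stages_covered(self):
        """Return the set of stages with at least one scripted probe."""
        return {p.stage for p in self.probes}

    def stages_missing(self):
        """Return stages with no scripted probe, in canonical order."""
        covered = self.stages_covered()
        return [s for s in STAGES if s not in covered]


# Hypothetical item; the question wording is invented for illustration.
q1 = GuideItem(
    item_id="Q1",
    question="In the past 7 days, how often did pain limit your daily activities?",
    probes=[
        Probe("comprehension", "What does 'daily activities' mean to you?"),
        Probe("recall", "How did you remember that?"),
        Probe("response", "How did you pick an answer?"),
    ],
)

print(q1.stages_missing())  # ['judgment']
```

A missing stage is not necessarily an error, since a team may deliberately leave a stage to spontaneous probing, but listing the gaps makes that choice explicit.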

Participant Sampling and Recruitment

Cognitive interviewing employs purposive sampling rather than random sampling, with participants selected because they share key characteristics with the target survey population [1] [4]. For clinical research, this typically means patients with the relevant health condition, caregivers, or healthcare providers depending on the instrument's intended respondent.

Sample sizes are generally small, typically 8 to 15 interviews per round of testing, with multiple rounds often conducted as items are revised [4] [6]. Research suggests that as few as four interviews may be sufficient to identify problematic questions, but best practices recommend aiming for each item to be reviewed by at least five participants [6]. To ensure diverse perspectives, researchers should intentionally recruit participants with varying demographic characteristics, health literacy levels, and clinical experiences relevant to the measurement concept [6].
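When a long instrument is split so that not every participant sees every item, the five-reviews-per-item target is easy to miss. The sketch below checks coverage for one round; the participant and item identifiers and the split itself are hypothetical, and the threshold of five is taken from the recommendation above.

```python
from collections import Counter

MIN_REVIEWS = 5  # "at least five participants" per item, per the text


def under_reviewed(assignments, all_items, minimum=MIN_REVIEWS):
    """Return {item_id: count} for items seen by fewer than `minimum` participants.

    assignments: dict mapping participant id -> iterable of item ids
                 tested in that participant's interview.
    """
    counts = Counter()
    for items in assignments.values():
        counts.update(set(items))  # count each participant once per item
    return {i: counts[i] for i in all_items if counts[i] < minimum}


# Hypothetical round of 8 interviews with the instrument split across sessions.
all_items = ["Q1", "Q2", "Q3"]
assignments = {
    "P1": ["Q1", "Q2"], "P2": ["Q1", "Q2"], "P3": ["Q1", "Q3"],
    "P4": ["Q1", "Q2"], "P5": ["Q1", "Q3"], "P6": ["Q2", "Q3"],
    "P7": ["Q2", "Q3"], "P8": ["Q1", "Q2"],
}

gaps = under_reviewed(assignments, all_items)
print(gaps)  # {'Q3': 4}
```

Here Q3 falls one review short, so the team would assign it to at least one more participant before closing the round.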

Data Collection Procedures

Cognitive interviews are typically conducted one-on-one in a quiet, comfortable setting, either in person or remotely [6] [2]. Each session generally lasts 30-90 minutes, with participants first providing informed consent and then completing the draft questionnaire while verbalizing their thought processes [6]. The interviewer closely observes non-verbal cues such as hesitation, confusion, or uncertainty and takes detailed notes throughout the process [2].

Two primary approaches are used for collecting verbal data: the think-aloud method, where participants continuously verbalize their thoughts as they answer questions, and probing, where the interviewer asks specific follow-up questions [2]. Probing can be concurrent (immediately after each question) or retrospective (after a section or the entire questionnaire is completed) [4]. Each approach offers distinct advantages: concurrent probing captures real-time reactions but may disrupt normal question flow, while retrospective probing provides a more natural survey experience but risks participants forgetting their initial thought processes [4].
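The difference between concurrent and retrospective probing comes down to where the probes sit in the session order. As a rough sketch under assumed data shapes (the dictionary keys, item wording, and function name are all hypothetical), the two orderings can be generated from the same guide:

```python
def build_sequence(items, mode):
    """Return the ordered (kind, item_id, text) steps for one session.

    items: list of dicts with keys "id", "question", "probes".
    mode:  "concurrent" asks probes right after each question;
           "retrospective" defers all probes until every question is answered.
    """
    if mode not in ("concurrent", "retrospective"):
        raise ValueError(f"unsupported mode: {mode}")

    steps = []
    deferred = []
    for item in items:
        steps.append(("question", item["id"], item["question"]))
        probe_steps = [("probe", item["id"], p) for p in item["probes"]]
        if mode == "concurrent":
            steps.extend(probe_steps)     # interrupt the flow after each item
        else:
            deferred.extend(probe_steps)  # hold until the questionnaire ends
    return steps + deferred


# Hypothetical two-item guide; question wording is invented.
items = [
    {"id": "Q1", "question": "How would you rate your health today?",
     "probes": ["What does the term 'health' mean to you?"]},
    {"id": "Q2", "question": "How many days were you in pain last week?",
     "probes": ["How did you pick an answer?"]},
]

concurrent = build_sequence(items, "concurrent")
retrospective = build_sequence(items, "retrospective")
for kind, item_id, text in retrospective:
    print(kind, item_id, text)
```

The retrospective ordering leaves the questionnaire uninterrupted, which is why it preserves the natural survey experience at the cost of asking participants to recall their earlier reasoning.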

Analysis and Interpretation

Analysis of cognitive interview data typically involves the five levels of analysis framework: conducting interviews, summarizing interview notes, comparing across respondents, comparing across groups, and drawing conclusions about question performance [1]. The research team meets regularly throughout data collection to review findings and identify both "dominant trends" (problems that emerge repeatedly) and "discoveries" (significant issues that may appear in only one interview but still threaten validity) [6].
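The distinction between dominant trends and discoveries can be sketched as a simple grouping over coded observations. The source gives no numeric cutoff for "repeatedly", so the threshold of two participants below is an assumption, as are the field names and problem codes; the `critical` flag stands in for the team's judgment that an issue threatens validity.

```python
from collections import defaultdict


def classify_findings(observations, trend_threshold=2):
    """Group coded observations into dominant trends and discoveries.

    observations: list of dicts with keys
        "participant", "item", "problem", "critical" (bool, analyst judgment).
    trend_threshold: assumed minimum number of distinct participants for a
        problem to count as recurring; not specified by the source.
    """
    seen = defaultdict(set)       # (item, problem) -> participants reporting it
    critical = defaultdict(bool)  # (item, problem) -> flagged in any interview
    for obs in observations:
        key = (obs["item"], obs["problem"])
        seen[key].add(obs["participant"])
        critical[key] = critical[key] or obs["critical"]

    trends, discoveries = [], []
    for key in sorted(seen):
        n = len(seen[key])
        if n >= trend_threshold:
            trends.append((key, n))
        elif critical[key]:
            discoveries.append((key, n))
    return trends, discoveries


# Hypothetical coded observations from one round of interviews.
observations = [
    {"participant": "P1", "item": "Q1", "problem": "at_risk_read_as_certain", "critical": False},
    {"participant": "P2", "item": "Q1", "problem": "at_risk_read_as_certain", "critical": False},
    {"participant": "P3", "item": "Q1", "problem": "at_risk_read_as_certain", "critical": False},
    {"participant": "P4", "item": "Q2", "problem": "true_false_format_confusing", "critical": True},
    {"participant": "P2", "item": "Q3", "problem": "minor_wording_preference", "critical": False},
]

trends, discoveries = classify_findings(observations)
print(trends)
print(discoveries)
```

Single-interview issues that are not flagged as critical fall into neither list here; whether to track them separately is a design choice for the research team.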

A reparative approach is used where the team collectively decides how to improve flawed items to reduce response error [6]. This involves carefully inspecting participant interpretations against the intended construct and making revisions to improve alignment. Substantially revised items are typically tested in additional interview rounds to ensure the revisions effectively address the identified issues without introducing new problems [6].

Table 2: Common Questionnaire Issues Identified Through Cognitive Interviews

| Issue Category | Description | Example from Research |
|---|---|---|
| Word Choice | Unfamiliar terms or unintended alternative meanings | Formal Hindi words unfamiliar to rural women in translated surveys [5] |
| Syntax | Overly complex or lengthy sentence structures | Questions with multiple clauses caused respondents to lose track of core question [5] |
| Response Options | Incomprehensible or insufficient response formats | Likert scales with >3 options incomprehensible to rural Indian women [5] |
| Temporal Framing | Confusion about time references | "Past year" interpreted as calendar year vs. past 12 months [4] |
| Question Format | Unfamiliar or confusing question structures | True/false format confusing; preference for yes/no questions [6] |
| Cultural Resonance | Concepts lacking relevance to local worldviews | "Being involved in decisions about your health care" less relevant in certain cultural contexts [5] |

Essential Research Reagent Solutions

The effective implementation of cognitive interviewing requires specific methodological "reagents" – the structured tools and approaches that facilitate the collection of valid data about question performance. These components form the essential toolkit for researchers employing this technique.

Table 3: Essential Cognitive Interviewing Research Reagents

| Research Reagent | Function | Application Notes |
|---|---|---|
| Structured Interview Guide | Provides standardized protocol for administering questions and probes | Includes both scripted probes for standardization and flexibility for spontaneous follow-ups [6] |
| Trained Interviewers | Conduct sessions using active listening and appropriate probing techniques | Require training in both questionnaire design principles and cognitive interview techniques [4] [6] |
| Purposive Sampling Framework | Ensures participants represent target population characteristics | Recruit participants with diversity in demographics, health literacy, and clinical experience [1] [6] |
| Data Collection Template | Systematically captures participant responses and interviewer observations | Spreadsheet organized by item with columns for comprehension, recall, judgment, and response issues [6] |
| Analysis Framework | Provides structured approach for identifying and categorizing question problems | Five levels of analysis: interviews, summaries, cross-respondent comparison, cross-group comparison, conclusions [1] |
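The data collection template described in the table can be generated rather than built by hand. The following sketch writes a blank CSV organized by item, with one row per participant and one column per cognitive stage; the column names, the extra `observations` column, and the identifiers are assumptions for illustration rather than a layout specified in [6].

```python
import csv
import io

STAGE_COLUMNS = ["comprehension", "recall", "judgment", "response"]


def make_template(participant_ids, item_ids):
    """Return CSV text with one blank note row per item-participant pair."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["item", "participant"] + STAGE_COLUMNS + ["observations"])
    for item in item_ids:  # grouped by item, as the table describes
        for pid in participant_ids:
            writer.writerow([item, pid] + [""] * (len(STAGE_COLUMNS) + 1))
    return buffer.getvalue()


# Hypothetical identifiers for two participants and two draft items.
template = make_template(["P1", "P2"], ["Q1", "Q2"])
print(template)
```

Grouping rows by item rather than by participant keeps every note on a given question adjacent, which suits the cross-respondent comparison step of the analysis.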

Advanced Probing Techniques and Approaches

Probing represents the methodological core of cognitive interviewing, with several distinct approaches available to researchers. Scripted probes are designed in advance to test specific hypotheses about potential question problems and typically address comprehension, recall, judgment, and response processes [1] [6]. Common scripted probes include: "How easy or hard was the question to answer?", "What does the term _ mean to you?", "How did you decide on your answer?", and "How certain are you of your answer?" [4].

In contrast, spontaneous probes emerge from active listening and observation during the interview, allowing investigators to explore unexpected participant difficulties [4]. Effective spontaneous probes include: "What was going through your mind as you tried to answer the question?", "You took a little while to answer that question. What were you thinking about?", and "You seem somewhat unsure about your answer. Can you tell me why?" [4]. The skilled integration of both scripted and spontaneous probing enables comprehensive evaluation of question performance while maintaining methodological rigor.

The selection of probing approach should align with study objectives and questionnaire development stage. Concurrent probing is particularly valuable early in questionnaire development when detailed feedback on each item is needed, while retrospective probing may be more appropriate later in development to assess the natural survey flow experience [4]. The think-aloud approach offers the advantage of minimizing interviewer bias but can feel unnatural for participants and produce data that is more challenging to interpret [4].

Cognitive interviewing provides an indispensable methodological bridge in clinical research and drug development, offering unique insights into respondent thought processes that quantitative methods alone cannot capture. The technique's principal strength lies in its ability to reveal "hidden" problems with questionnaire items before they compromise data quality in larger studies [2]. By identifying issues with comprehension, recall, judgment, and response selection, cognitive interviews enhance construct validity and content validity of measurement instruments [6] [2].

However, researchers must acknowledge the methodology's limitations. Cognitive testing cannot indicate the size or extent of problems in the broader population, guarantee that all problems have been identified, or definitively confirm that identified problems would manifest in real survey conditions [2]. Additionally, while cognitive interviews can improve question design, they cannot guarantee that revised versions perform better than originals without subsequent validation [2].

When implemented systematically using the protocols and approaches outlined in this article, cognitive interviewing represents a powerful tool for strengthening the validity and reliability of clinical outcome assessments, patient-reported outcomes, and other essential measurement instruments in pharmaceutical research and healthcare studies. The method provides the critical link between researcher intent and participant interpretation that underpins meaningful measurement in patient-centered research.

Application Notes: Cognitive Processes in Survey Response

Cognitive interviewing is a qualitative research method used to evaluate survey questions by examining the mental processes respondents use to answer them [2]. This technique explores how individuals comprehend questions, retrieve relevant information, make judgments about their answers, and formulate responses [1]. In clinical outcome assessment (COA) measure development, cognitive interviewing has become a standard method for improving the reliability and validity of instruments by identifying problems respondents have with understanding and answering draft questionnaire items [3].

The core cognitive processes under investigation represent a sequential model of question answering [1]:

- Comprehension: The respondent interprets the question and determines what is being asked

- Recall: The respondent searches memory for relevant information

- Judgment: The respondent evaluates and synthesizes recalled information

- Response: The respondent maps their judgment to the available response options

Understanding these processes allows researchers to identify sources of response error and refine questions to ensure they measure what is intended, ultimately improving data quality in research studies and clinical trials [2] [6].

Table 1: Cognitive Probe Classification by Cognitive Process

| Cognitive Process | Probe Type | Example Probes | Primary Function |
|---|---|---|---|
| Comprehension | Meaning-Based | "What does the term [X] mean to you in this question?" "Can you rephrase this question in your own words?" | Assesses interpretation of question intent, key terms, and instructions [1] [6]. |
| Recall | Memory-Based | "How did you remember that information?" "Was that easy or difficult to remember?" | Evaluates retrieval strategies, recall burden, and accuracy of memory [1]. |
| Judgment | Confidence-Based | "How sure are you about that answer?" "Did you have to guess or estimate?" | Reveals estimation processes, judgment formation, and confidence level [1] [6]. |
| Response | Mapping-Based | "How did you pick your answer from the options given?" "Was there an answer you wanted to give that wasn't listed?" | Identifies issues with response options, social desirability, and answer mapping [1] [6]. |

Table 2: Cognitive Interview Study Characteristics and Outcomes

| Characteristic | Specification | Rationale |
|---|---|---|
| Sample Size | 9–50 participants per study [1] [6] | Enables identification of dominant problems without seeking statistical representativeness [2]. |
| Sampling Method | Purposive sampling [1] | Ensures inclusion of participants with relevant experiences and diverse characteristics [6]. |
| Interview Duration | ~60 minutes [6] | Balances comprehensive coverage with participant fatigue. |
| Primary Output | Qualitative findings on question performance [1] | Provides detailed evidence for question revision to improve validity [3]. |
| Common Outcomes | Identification of problematic items, revision recommendations, evidence of content validity [3] | Directly informs instrument development and supports regulatory submissions for COAs [3]. |

Experimental Protocols

Protocol 1: Cognitive Interviewing for Questionnaire Pretesting

Purpose: To identify problems respondents experience with survey questions and inform revisions that improve data quality [2].

Materials:

- Draft questionnaire or COA instrument

- Cognitive interview guide with scripted probes

- Consent forms

- Recording equipment (optional)

- Note-taking template

Procedure:

- Participant Recruitment: Recruit 9–50 participants using purposive sampling to ensure diversity in characteristics relevant to the study (e.g., education, health literacy, clinical status) [1] [6].

- Interview Setup: Conduct interviews in a quiet, private setting. Obtain informed consent, emphasizing that the goal is to test the questions, not evaluate the participant [6].

- Think-Aloud Training: Demonstrate the think-aloud technique by modeling how to verbalize thoughts while answering a sample question [2].

- Question Administration: Present questions in the same format planned for the final survey (e.g., read aloud for interviewer-administered surveys) [2].

- Probing: Use scripted probes targeting specific cognitive processes (see Table 1: Cognitive Probe Classification by Cognitive Process). Probes can be administered concurrently (after each question) or retrospectively (after completing the questionnaire) [6].

- Data Collection: Document participant responses, verbalizations, nonverbal behaviors, and probe responses in detailed notes [2].

- Data Analysis: Analyze data using an iterative approach, identifying recurring problems ("dominant trends") and unique but critical issues ("discoveries") [6].

- Question Revision: Revise problematic questions based on analysis findings and retest revised questions in subsequent interviews if necessary [6].

Figure 1: Cognitive interview workflow showing the sequential process from participant recruitment to question finalization.
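
The analysis step in Protocol 1 separates problems that recur across participants from one-off but critical ones. The sketch below shows one way that tally could be done once notes have been reduced to simple records. The record layout, the `min_participants` threshold, and the function name are illustrative assumptions, not part of the cited method.

```python
from collections import defaultdict

def summarize_findings(observations, min_participants=2):
    """Split coded observations into dominant trends and discoveries.

    observations: iterable of (participant_id, item_id, problem, is_critical).
    A problem seen in at least `min_participants` distinct participants is
    treated as a dominant trend; a rarer problem that an analyst flagged as
    critical is treated as a discovery. The threshold is a hypothetical choice.
    """
    who = defaultdict(set)   # (item, problem) -> participants reporting it
    critical = set()         # (item, problem) pairs flagged as critical
    for participant_id, item_id, problem, is_critical in observations:
        key = (item_id, problem)
        who[key].add(participant_id)
        if is_critical:
            critical.add(key)

    trends = sorted(k for k, p in who.items() if len(p) >= min_participants)
    discoveries = sorted(
        k for k, p in who.items() if len(p) < min_participants and k in critical
    )
    return trends, discoveries

notes = [
    ("P01", "Q3", "unclear term: 'moderate activity'", False),
    ("P02", "Q3", "unclear term: 'moderate activity'", False),
    ("P02", "Q7", "recall period too long", False),
    ("P03", "Q5", "response options exclude wheelchair users", True),
]
trends, discoveries = summarize_findings(notes)
```

In this toy data the Q3 wording problem is a trend, the Q5 problem is a discovery, and the single non-critical Q7 note is left for analyst judgment. A count like this supports the qualitative reading of the notes but does not replace it.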

Protocol 2: Cognitive Interviewing for Clinical Outcome Assessment (COA) Validation

Purpose: To provide evidence for the content validity of COA measures by demonstrating that items are understood as intended by the target population [3].

Materials:

- Draft COA instrument

- Interview guide with disease-specific probes

- IRB-approved protocol

- Digital recorder and transcription service

- Qualitative data analysis software (optional)

Procedure:

- Team Training: Ensure all interviewers understand the intent of each questionnaire item and follow standardized probing techniques [6].

- Participant Selection: Recruit patients who represent the target population for the COA, including variations in disease severity, demographics, and comorbidities [3].

- Interview Conduct: Use a combination of think-aloud and targeted probing to explore comprehension, recall, judgment, and response processes for each item [2] [1].

- Data Management: Record interviews and compile detailed notes in a structured format organized by questionnaire item [6].

- Analysis Framework: Analyze data using a systematic approach (e.g., the Five Levels of Analysis):

  - Level 1: Conducting interviews

  - Level 2: Summarizing interview notes

  - Level 3: Comparing across respondents

  - Level 4: Comparing across subgroups

  - Level 5: Drawing conclusions about question performance [1]

- Problem Classification: Categorize identified issues by type (e.g., comprehension difficulty, recall challenge, response option misfit) [6].

- Documentation: Prepare a comprehensive report detailing methods, findings, and revisions made to support content validity claims for regulatory submissions [3].
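
Levels 3 and 4 of the analysis framework above (comparing across respondents, then across subgroups) can be supported by a simple cross-tabulation of item-level summaries. The following Python sketch assumes each participant's notes have already been summarized into the set of items where a problem was observed. The data layout and the subgroup labels are hypothetical.

```python
from collections import Counter, defaultdict

def compare_across(summaries, subgroup_of):
    """Count, per item, how many respondents had a problem (Level 3)
    and break the same counts down by subgroup (Level 4).

    summaries: dict participant_id -> set of item_ids with a noted problem.
    subgroup_of: dict participant_id -> subgroup label (hypothetical field).
    """
    overall = Counter()
    by_subgroup = defaultdict(Counter)
    for participant_id, items in summaries.items():
        for item_id in items:
            overall[item_id] += 1
            by_subgroup[subgroup_of[participant_id]][item_id] += 1
    return overall, by_subgroup

summaries = {"P01": {"Q2", "Q4"}, "P02": {"Q4"}, "P03": set(), "P04": {"Q4"}}
subgroup_of = {"P01": "severe", "P02": "severe", "P03": "mild", "P04": "mild"}
overall, by_subgroup = compare_across(summaries, subgroup_of)
```

Here Q4 is a problem in both subgroups, while Q2 appears only among participants with severe disease. That pattern would prompt a closer look at the underlying notes before drawing Level 5 conclusions.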

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Materials for Cognitive Interviewing Studies

| Item | Function | Application Notes |

|---|---|---|

| Semi-Structured Interview Guide | Provides framework for consistent administration of survey questions and probes [2]. | Should include scripted introductory text, survey questions, and standardized probes for key items [6]. |

| Targeted Cognitive Probes | Questions designed to elicit specific information about cognitive processes [1]. | Should be tailored to investigate comprehension, recall, judgment, and response formulation for each survey item [1]. |

| Participant Recruitment Screener | Ensures selection of participants with characteristics relevant to the research questions [1]. | Should use purposive sampling to include diverse perspectives and experiences [6]. |

| Note-Taking Template | Standardized format for documenting participant responses and observations [6]. | Should organize notes by questionnaire item and capture verbalizations, nonverbal behaviors, and probe responses [2]. |

| Analysis Codebook | Framework for categorizing and interpreting qualitative findings [1]. | Can use grounded theory approaches with codes developed based on the data [1]. |

| Question Problem Classification System | Taxonomy for categorizing identified question problems [6]. | Common categories include: lexical (word meaning), temporal (timeframe), logical, and knowledge problems [6]. |
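
As an illustration of how the note-taking template and the problem classification system in Table 3 might be represented for analysis, the sketch below defines a per-item note record in Python. The field names and the `ItemNote` and `ProblemType` classes are assumptions made for this example. Only the four category names come from the table.

```python
from dataclasses import dataclass, field
from enum import Enum

class ProblemType(Enum):
    """Problem categories named in Table 3."""
    LEXICAL = "word meaning"
    TEMPORAL = "timeframe"
    LOGICAL = "logical"
    KNOWLEDGE = "knowledge"

@dataclass
class ItemNote:
    """One row of a note-taking template, organized by questionnaire item."""
    item_id: str
    answer: str
    verbalizations: list = field(default_factory=list)
    nonverbal: list = field(default_factory=list)
    probe_responses: dict = field(default_factory=dict)  # probe text -> reply
    problems: list = field(default_factory=list)         # ProblemType values

note = ItemNote(item_id="Q4", answer="Sometimes")
note.verbalizations.append("Not sure if 'past week' includes today.")
note.nonverbal.append("long pause before answering")
note.problems.append(ProblemType.TEMPORAL)
```

Keeping one record per participant and item makes it straightforward to line up notes by item when comparing across interviews.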

Figure 2: Cognitive response model showing sequential processes and potential problems at each stage.

In modern clinical research, capturing the patient's perspective has moved from a supplementary activity to a fundamental component of treatment evaluation. Clinical Outcome Assessments (COAs) are measurements that describe or reflect how a patient feels, functions, or survives. Patient-Reported Outcomes (PROs) are a critical subset of COAs: reports about a patient's health condition that come directly from the patient, without interpretation by a clinician or anyone else [7]. Regulatory agencies, including the U.S. Food and Drug Administration (FDA) and the European Medicines Agency (EMA), now actively endorse the integration of patient perspectives into clinical trials. The FDA's Patient-Focused Drug Development (PFDD) initiative, codified by the 21st Century Cures Act, underscores the requirement to include patient experience data in clinical research [8] [7]. This emphasis is driven by the recognition that some treatment effects are known only to the patient and that patients provide a unique perspective on treatment effectiveness, encompassing functioning, quality of life, and the burden of side effects [7].

The Role of Cognitive Interviewing in COA and PRO Development

Cognitive interviewing is a qualitative technique used to improve and refine questionnaire items during the development and validation of COA and PRO instruments [3]. Its primary aim is to improve the reliability and validity of these instruments by identifying problems respondents have in understanding and answering draft questionnaire items. The insights gained are then used to revise items to improve comprehension and response accuracy [3]. This process is indispensable for ensuring that the measures truly resonate with patients' experiences and capture concepts that are relevant to them.

Key Cognitive Interview Techniques

Practitioners employ several key techniques during cognitive interviews to elicit detailed feedback from participants [8]:

- Think-Aloud Protocols: Participants are asked to verbalize their thought process aloud as they answer questionnaire items. This provides researchers with a direct window into the respondent's cognitive journey, revealing how they interpret questions and arrive at an answer.

- Verbal Probing: The interviewer asks follow-up questions to have participants elaborate or clarify their responses. This nuanced approach helps uncover underlying perceptions, strong emotions, or points of confusion that might otherwise remain hidden.

- Paraphrasing: Interviewers ask participants to rephrase trial details or questionnaire items in their own words. This exercise reveals the participant's level of understanding and can highlight specific wording that needs clearer communication.

Best Practices for Conducting Cognitive Interviews

A structured approach is critical for obtaining high-quality, actionable data from cognitive interviews. Key procedural steps and considerations are summarized in the table below.

Table 1: Best Practices for Conducting Cognitive Interviews in COA Development

| Element | Rationale & Underpinning Methodology | Recommended Procedural Steps |

|---|---|---|

| Interview Guide Development | Provides a consistent framework for data collection while allowing flexibility for probing. | Develop a semi-structured guide with core questions and optional probes based on the COA items being tested [3]. |

| Interviewer Training & Neutrality | Neutral third-party interviewers foster trust, leading to more open and genuine insights untainted by internal trial preconceptions [8]. | Recruit and train interviewers who are detached from the investigational site staff. Emphasize neutral probing and active listening skills [3] [8]. |

| Dual-Phase Interviewing | Captures evolving participant perceptions and experiences at critical junctures in the research process [8]. | Conduct baseline interviews to understand initial perceptions and validate trial materials. Conduct post-trial dialogues to capture the full experience and the treatment's impact [8]. |

| Analysis & Reporting | Translates raw, qualitative dialogues into structured, actionable insights for the research team. | Use a blend of deductive coding (sorting data into pre-defined categories) and inductive coding (allowing themes to emerge from the data) to create a hierarchical coding frame [8]. |

Advanced Applications: The Modular Approach to PROs in Clinical Trials

A significant advancement in the application of PROs is the modular approach. This approach involves the purposeful selection and independent assessment of specific patient-relevant and clinically relevant domains from multi-domain patient-reported outcome measures (PROMs), which are then scored and interpreted separately [9]. This strategy addresses the challenge that existing PROMs may "overreach or fall short" in measuring the domains most relevant to a specific study's context, population, and treatments.

Applications of the Modular Approach

Researchers can implement the modular approach in several ways, depending on the trial's needs [9]:

- Approach A: Study-Specific Conceptual Framework: Identify key concepts relevant to the patient population and treatment. After reviewing candidate PROMs, if no single suitable instrument is found, create a framework by combining dedicated PROMs and/or relevant domains from other instruments.

- Approach B: Using a Full-Length PROM, Removing Irrelevant Domains: A disease-specific PROM may be selected, but domains that are less relevant to the specific study context (e.g., a symptom only present in advanced disease for an early-stage trial) are removed to reduce respondent burden.

- Approach C: Using a Full-Length PROM, Substituting Key Domains: When a domain in the selected PROM corresponds to a primary or key secondary outcome (e.g., fatigue) but is not measured in sufficient depth, that domain can be replaced with a more comprehensive, dedicated PROM for the concept.

The workflow for selecting and implementing a modular approach is outlined in the diagram below.
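
Approaches B and C above amount to simple operations on a PROM's domain structure: dropping domains, or swapping one domain's items for those of a dedicated instrument. The Python sketch below illustrates this under the assumption that an instrument can be represented as a mapping from domain name to item identifiers. The instrument, domain names, and function names are all hypothetical.

```python
def remove_domains(prom, irrelevant):
    """Approach B: keep a full-length PROM but drop less relevant domains."""
    return {d: items for d, items in prom.items() if d not in irrelevant}

def substitute_domain(prom, domain, dedicated_items):
    """Approach C: replace one domain with a dedicated instrument's items."""
    if domain not in prom:
        raise KeyError(f"{domain!r} is not a domain of this PROM")
    updated = dict(prom)              # leave the original mapping untouched
    updated[domain] = list(dedicated_items)
    return updated

# Hypothetical instrument: domain name -> item identifiers.
full_prom = {
    "pain": ["p1", "p2"],
    "fatigue": ["f1"],
    "late_stage_symptom": ["s1", "s2", "s3"],
}
trimmed = remove_domains(full_prom, {"late_stage_symptom"})
modular = substitute_domain(trimmed, "fatigue", ["fx1", "fx2", "fx3", "fx4"])
```

The sketch covers only the bookkeeping. Whether a trimmed or substituted instrument retains its psychometric properties is an empirical question that the code cannot answer.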

Comparative Analysis of the Modular Approach

The decision to adopt a modular approach involves weighing specific advantages and challenges. The following table summarizes the key arguments for and against its use.

Table 2: The Case For and Against the Modular Approach for PROMs in Clinical Trials

| Aspect | Case For the Modular Approach | Case Against the Modular Approach |

|---|---|---|

| Scientific Rigor | Promotes rigorous justification for domain selection via a conceptual framework, avoiding unplanned "trawling" for effects [9]. | Requires greater time and effort to select domains. Risks missing unexpected effects captured by full-length PROMs [9]. |

| Respondent Burden | Focuses measurement on clinically relevant domains, reducing burden by removing less relevant items [9]. | Demands high certainty that excluded domains are truly irrelevant to the study context [9]. |

| Psychometric Properties | Selected domains often retain established psychometric properties if sourced from PROMs validated in the target population [9]. | Item order effects may impact performance when domains are administered separately from the full-length PROM [9]. |

| Flexibility vs. Comparability | Enables flexibility to substitute less informative domains and append missing ones, improving sensitivity to change [9]. | Limits comparability with other studies that used full-length PROMs. Acceptability by HTA agencies may be unclear [9]. |

Experimental Protocol for a Cognitive Interview Study in COA Validation

This protocol provides a detailed methodology for conducting cognitive interviews to validate a new or adapted Clinical Outcome Assessment.

Objectives and Preparation

- Primary Objective: To identify and characterize problems related to comprehension, retrieval, judgment, and response selection that participants may encounter with draft COA items.

- Materials: The protocol requires several key materials, as detailed in the table below.

Table 3: Research Reagent Solutions for Cognitive Interviewing

| Item/Category | Function & Application in Protocol |

|---|---|

| Semi-Structured Interview Guide | Ensures consistent data collection across participants while allowing flexibility for spontaneous probing. Contains core questions about item intent, understanding, and response option selection. |

| Draft COA Instrument | The version of the questionnaire (e.g., on paper, tablet, or screen-share) that is being evaluated and refined. |

| Audio/Video Recording Equipment | Used to capture the full interview for accurate transcription and analysis, with participant consent. |

| Informed Consent Documents | Explains the study purpose, procedures, risks, benefits, and confidentiality to participants, ensuring ethical conduct. |

| Demographic Questionnaire | Collects basic information (e.g., age, gender, disease history) to describe the interview sample. |

Participant Recruitment and Sample Size

- Recruitment: Participants should be recruited from the target population for whom the COA is intended, reflecting the diversity of the eventual users in terms of disease severity, age, education, and cultural background.

- Sample Size: Cognitive interview studies typically use a sample size determined by saturation, the point at which new interviews yield no new substantive insights. While fixed sample sizes can be used, iterative recruitment until saturation is achieved is considered a best practice [3]. Sample sizes often range from 10 to 30 participants.
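
Iterative recruitment until saturation needs an explicit stopping rule. The sketch below tracks, interview by interview, whether any previously unseen problem code appears. It assumes saturation is operationalized as a fixed number of consecutive interviews with no new codes. That rule, the `quiet_run` parameter, and the code labels are illustrative assumptions rather than a standard from the cited literature.

```python
def interviews_until_saturation(codes_per_interview, quiet_run=3):
    """Return the 1-based interview number at which saturation is reached,
    or None if it is not reached.

    Saturation is operationalized here (an assumption) as `quiet_run`
    consecutive interviews that add no previously unseen problem code.
    """
    seen = set()
    quiet = 0
    for n, codes in enumerate(codes_per_interview, start=1):
        new_codes = set(codes) - seen
        seen |= new_codes
        quiet = 0 if new_codes else quiet + 1
        if quiet >= quiet_run:
            return n
    return None

rounds = [
    {"Q3:lexical", "Q7:temporal"},  # interview 1
    {"Q3:lexical", "Q5:response"},  # interview 2 adds Q5:response
    {"Q7:temporal"},                # interview 3: nothing new
    {"Q9:knowledge"},               # interview 4: a new code resets the run
    {"Q3:lexical"},                 # interview 5
    set(),                          # interview 6
    {"Q5:response"},                # interview 7: third quiet interview in a row
]
stopped_at = interviews_until_saturation(rounds)
```

With these example rounds the rule is satisfied at the seventh interview. A stricter or looser `quiet_run` changes the stopping point, so the chosen value should be stated in the study report.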

Step-by-Step Procedural Workflow

- Obtain Informed Consent: The interviewer explains the study process, confirms voluntary participation, and obtains written informed consent. The purpose of the cognitive interview is clarified without revealing the specific problems being investigated to avoid biasing responses.

- Conduct the Interview:

  - The interviewer presents the draft COA instrument.

  - The participant is instructed to use the "think-aloud" technique as they complete the questionnaire.

  - The interviewer uses verbal probing (e.g., "Can you tell me more about what you were thinking when you answered that?" or "What does the term 'social activities' mean to you in this question?") to explore comprehension and thought processes.

  - The interviewer may also use paraphrasing (e.g., "Could you repeat that question in your own words?").

  - All sessions are audio-recorded with permission for subsequent analysis.

- Debrief: After completing the COA, a short debriefing session is held to gather overall impressions and any final comments.

Data Analysis and Reporting

- Transcription: Audio recordings are transcribed verbatim.

- Coding: Transcripts are analyzed using a systematic coding approach.

  - Deductive Coding: Responses are sorted into pre-defined categories based on the interview questions (e.g., "problems with comprehension," "issues with recall").

  - Inductive Coding: Researchers remain open to new, emergent themes that were not anticipated in the pre-defined categories, so that unexpected aspects of participants' experiences are captured [8].

- Theme Development: Codes are synthesized into broader themes that describe the types and severity of problems identified with the COA items.

- Report Writing: A final report summarizes the findings, provides specific examples of problematic items, and offers evidence-based recommendations for item revision. The report should detail the methodology, sample characteristics, and the analytic process [3].
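
The combination of deductive and inductive coding described above can be pictured as filing each coded transcript segment either under a pre-defined category or under an emergent code for later review. The Python sketch below is a minimal illustration. The coding frame, the code labels, and the example quotes are invented for the example.

```python
from collections import defaultdict

CODING_FRAME = {  # pre-defined (deductive) categories; labels are illustrative
    "comprehension": "problems with comprehension",
    "recall": "issues with recall",
    "judgment": "difficulty forming a judgment",
    "response": "response option misfit",
}

def sort_segments(coded_segments, frame=CODING_FRAME):
    """Group transcript segments by code.

    Codes found in `frame` are filed deductively; any other code is kept
    as an emergent (inductive) candidate for the team to review.
    """
    deductive = defaultdict(list)
    emergent = defaultdict(list)
    for code, segment in coded_segments:
        target = deductive if code in frame else emergent
        target[code].append(segment)
    return dict(deductive), dict(emergent)

segments = [
    ("comprehension", "I wasn't sure what 'social activities' covers."),
    ("recall", "Four weeks is a long time to think back."),
    ("caregiver_answers", "My wife usually fills these in for me."),
]
deductive, emergent = sort_segments(segments)
```

Emergent codes that recur can then be promoted into the coding frame during theme development.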

The integration of Patient-Reported Outcomes and other Clinical Outcome Assessments is fundamental to a modern, patient-centric clinical research paradigm. The rigorous development and validation of these instruments through cognitive interviewing is a critical step that ensures they are understood as intended and accurately capture the patient experience. Furthermore, the modular approach to PRO implementation offers a flexible and scientifically rigorous strategy to tailor outcome assessment to the specific context of a clinical trial, thereby enhancing data quality and relevance. Together, these methodologies ensure that the patient's voice is not merely heard but is effectively integrated into the evaluation of new treatments, ultimately leading to therapies that better address the needs of those living with the disease.

Cognitive interviewing is a qualitative research method used to evaluate and improve survey questions and other research instruments by understanding how respondents interpret, process, and formulate answers to them [4] [2]. This methodology serves as a critical tool for identifying and reducing measurement error—the discrepancy between a respondent's true value and the value collected in research—thereby ensuring the data validity essential for robust scientific conclusions [4] [3]. In fields like clinical outcome assessment (COA) and drug development, where instruments directly measure patient-reported outcomes, the application of cognitive interviewing is paramount for developing reliable and valid measures that can accurately capture treatment benefits and harms [3].

Core Principles of Cognitive Interviewing

The fundamental goal of a cognitive interview is to evaluate the four key mental processes respondents undergo when answering a question [4] [2]:

- Comprehension: How the respondent interprets the question and its key terms.

- Retrieval: How the respondent recalls the necessary information from memory.

- Judgment: How the respondent evaluates and integrates the retrieved information to form an answer.

- Response: How the respondent maps their judgment to the available response options.

During the interview, participants are asked to complete a survey or set of questions while the researcher observes and uses various techniques to gain insight into these internal processes [4]. This method is a cost-effective pretesting activity typically conducted after initial questionnaire drafting and before full survey launch [4].

Application Notes: Methodologies and Protocols

Key Probing Methodologies

Probing is the core activity of a cognitive interview, and the approach can be tailored to the research context [4] [3]. The following table summarizes the primary probing methodologies.

Table 1: Key Probing Techniques in Cognitive Interviewing

| Technique | Description | Best Use Cases | Advantages | Disadvantages |

|---|---|---|---|---|

| Concurrent Probing [4] | Probes are asked immediately after the participant answers the survey question. | Early stages of questionnaire design; testing specific, complex questions. | Captures real-time reactions and thought processes. | Interrupts normal questionnaire flow; may condition participants to overthink. |

| Retrospective Probing [4] | Probes are asked after a section or the entire survey is completed. | When the questionnaire is in a near-final form; to assess overall flow and experience. | Provides a more authentic respondent experience without interruption. | Respondents may forget their initial thought processes. |

| Think-Aloud Protocol [4] [2] | Participants are asked to verbalize their thoughts continuously as they answer the question. | Gaining unfiltered insight into comprehension and decision-making. | Avoids potential bias introduced by interviewer probes; requires minimal interviewer training. | Can feel unnatural and burdensome for participants; results can be difficult to interpret. |
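
The practical difference between concurrent and retrospective probing is where the probes fall in the session. The Python sketch below builds the ordered list of interviewer steps for either mode. The function name and the step tuples are hypothetical, and think-aloud is not modeled because it runs alongside answering rather than as separate steps.

```python
def build_session(questions, probes, mode="concurrent"):
    """Return the ordered steps of an interview session.

    questions: list of question ids in administration order.
    probes: dict question id -> list of scripted probes.
    mode: "concurrent" asks probes right after each question;
          "retrospective" asks them after the whole questionnaire.
    """
    if mode not in ("concurrent", "retrospective"):
        raise ValueError(f"unknown mode: {mode}")
    steps, deferred = [], []
    for q in questions:
        steps.append(("ask", q))
        q_probes = [("probe", q, p) for p in probes.get(q, [])]
        (steps if mode == "concurrent" else deferred).extend(q_probes)
    return steps + deferred

questions = ["Q1", "Q2"]
probes = {
    "Q1": ["What does the term 'fatigue' mean to you?"],
    "Q2": ["How did you decide on your answer?"],
}
concurrent = build_session(questions, probes, mode="concurrent")
retrospective = build_session(questions, probes, mode="retrospective")
```

In the concurrent sequence each probe directly follows its question. In the retrospective sequence both questions are asked first, which preserves questionnaire flow at the cost of a longer gap before probing.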

Experimental Protocol: Conducting a Cognitive Interview

The following workflow outlines the standard procedure for conducting a cognitive interview, synthesizing best practices from the literature [4] [2] [3].

Figure 1: Workflow for conducting and analyzing cognitive interviews. The process is often iterative, requiring multiple rounds of testing and revision.

Pre-Interview Protocol

- Define Objectives and Select Questions: Focus the interview on new questions, borrowed questions, revised items, or questions administered to a new population or mode [4].

- Develop Interview Guide and Scripted Probes: Create a protocol with predetermined probes to test hypotheses about potential question challenges. Example scripted probes include [4]:

  - "What does the term '_' mean to you?"

  - "How did you decide on your answer?"

  - "How easy or hard was it to find a response choice that fit for you?"

  - "Were any response options missing?"

- Recruit and Train Interviewers: Cognitive interviewers should be trained in active listening techniques and have a grounding in questionnaire design principles to know what to listen for [4]. Best practices recommend training participants on the think-aloud technique if it will be used [2].

- Recruit Participants: Select 8-15 participants per round of testing who share characteristics with the target survey population [4]. Multiple rounds of testing are common, with revisions made between rounds.
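
When an interview guide covers many items, scripted probes like the examples above can be generated from templates so that wording stays consistent across items and interviewers. The Python sketch below is one possible arrangement. The pairing of each probe with a cognitive stage, the `key_terms` input, and the example terms are assumptions for illustration.

```python
PROBE_TEMPLATES = {
    # Stage labels are an illustrative mapping, not taken from the source.
    "comprehension": "What does the term '{term}' mean to you?",
    "judgment": "How did you decide on your answer?",
    "response": "How easy or hard was it to find a response choice that fit for you?",
}

def scripted_probes(key_terms, has_response_options=True):
    """Build the scripted probes for one survey item.

    One comprehension probe is generated per key term; the judgment probe
    is always included; the response probe only applies to closed items.
    """
    probes = [PROBE_TEMPLATES["comprehension"].format(term=t) for t in key_terms]
    probes.append(PROBE_TEMPLATES["judgment"])
    if has_response_options:
        probes.append(PROBE_TEMPLATES["response"])
    return probes

guide = {
    "Q1": scripted_probes(["fatigue", "past 7 days"]),
    "Q2": scripted_probes([], has_response_options=False),
}
```

Generated probes are a starting point. Interviewers would still add spontaneous probes based on what they observe in the session.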

In-Interview Protocol

- Administer the Survey Question: Present the question in a format as close as possible to the final survey context (e.g., read aloud for interviewer-administered surveys) [2].

- Participant Observation: Actively observe and note non-verbal cues such as hesitation, puzzled expressions, or long pauses [2].

- Apply Probing Technique: Execute the chosen probing method (Concurrent, Retrospective, or Think-Aloud) as per the interview guide [4].

- Spontaneous Probing: Based on observations, ask unscripted follow-up questions. Examples include [4]:

  - "You took a little while to answer that question. What were you thinking about?"

  - "You seem somewhat unsure about your answer. Can you tell me why?"

  - "What was going through your mind as you tried to answer the question?"

Post-Interview Protocol

- Data Analysis: Analyze the qualitative data from all interviews to identify recurring themes, patterns, and specific problems with questions. This involves categorizing issues related to comprehension, retrieval, judgment, and response.

- Instrument Revision: Revise the survey questions based on the identified issues to improve clarity and reduce measurement error.

- Iterative Testing: Conduct further rounds of cognitive testing if substantial revisions were made, to ensure the changes resolved the issues without introducing new ones [4].

The Scientist's Toolkit: Essential Research Reagents

The following table details the key "materials" and their functions required for conducting cognitive interviews.

Table 2: Essential Reagents for Cognitive Interview Research

| Item | Function/Application |

|---|---|

| Interview Guide & Protocol | The master document containing the survey questions and scripted probes; ensures consistency across interviews [4] [3]. |

| Trained Cognitive Interviewers | Researchers skilled in active listening, neutral probing, and questionnaire design principles to effectively elicit and identify cognitive processes [4]. |

| Recruited Target Population | Participants who represent the future survey respondents, essential for ensuring ecological validity and identifying population-specific issues [4] [10]. |

| Audio/Video Recording Equipment | To capture the full interview for accurate transcription and analysis, allowing the researcher to focus on the interaction rather than just note-taking. |

| Data Analysis Framework | A systematic method (often thematic analysis) for synthesizing qualitative data from multiple interviews to identify and categorize question performance issues [3]. |

Special Considerations and Advanced Applications

Challenges in Specific Populations

Applying cognitive interviews in diverse global contexts or with specific sub-populations requires adapting standard protocols. Research with older adults in Low- and Middle-Income Countries (LMICs) highlights key challenges [10]:

Table 3: Challenges and Mitigation Strategies in Specific Populations

| Challenge Category | Specific Challenges | Recommended Mitigations |

|---|---|---|

| Population-Specific [10] | Diglossia (difference between official and spoken language), low "survey literacy", mistrust of institutions, reluctance to disclose sensitive information. | Conduct interviews in the respondent's everyday language; carefully build rapport and explain the purpose of the research; ensure cultural adaptation of the process. |

| Ageing-Specific [10] | Hearing/visual impairments, cognitive fatigue, decline in recall and working memory, word-finding difficulties, slower information processing. | Schedule shorter interviews; ensure a quiet environment; use clear, slow speech; be patient and allow more time for responses; monitor for fatigue. |

Applications Beyond Survey Pretesting

While traditionally used for testing survey questions, the cognitive interview method can be applied to a wider range of research materials, including [2]:

- Informed Consent and Permission Forms: Testing understanding of data linkage permissions.

- Patient Information Leaflets: Evaluating the clarity of instructions and medical information.

- User Testing of Digital Interfaces: Combining with usability testing for digital health tools and electronic Clinical Outcome Assessments (eCOAs).

Cognitive interviewing is a powerful, cost-effective methodology that provides an empirical basis for improving research instruments. By systematically investigating how respondents comprehend, process, and answer questions, researchers can directly address the root causes of measurement error. The rigorous application of the protocols and methodologies outlined—from selecting the appropriate probing technique to adapting to unique population needs—is fundamental to ensuring the validity of data collected in clinical, public health, and social science research. This, in turn, strengthens the evidence base derived from this data, supporting more reliable scientific conclusions and better-informed decision-making in drug development and beyond.

Conducting Effective Cognitive Interviews: Step-by-Step Protocols for Research Applications

Cognitive interview techniques are tools for investigating the mental processes individuals employ when interacting with information. They improve the validity and reliability of data collection instruments, particularly in fields like drug development where precise measurement is critical. This article details two core methodologies: the Think-Aloud Protocol and Respondent Debriefing. The Think-Aloud Protocol captures concurrent verbalizations of a participant's thoughts during a task, providing a window into real-time cognitive processing [11] [12]. In contrast, Respondent Debriefing is a retrospective procedure conducted after data collection, aimed at gathering feedback on the participant's experience or addressing any deception used in the study [13]. Both are qualitative methods used to enhance research quality, but their applications, theoretical underpinnings, and implementation protocols differ significantly. This article provides a structured comparison and outlines detailed application notes and experimental protocols for researchers and scientists.

Comparative Analysis: Think-Aloud Protocols vs. Respondent Debriefing

The following table summarizes the core characteristics of these two methodologies, highlighting their distinct roles in the research process.

Table 1: Comparative Analysis of Think-Aloud Protocols and Respondent Debriefing

| Feature | Think-Aloud Protocol | Respondent Debriefing |

|---|---|---|

| Primary Objective | To understand real-time cognitive processes, decision-making, and usability issues during a task [14] [15]. | To gather post-hoc feedback on the survey/task experience or to ethically manage deception [13]. |

| Theoretical Basis | Rooted in cognitive psychology; aims to access working memory contents without altering the thought sequence [11]. | Grounded in ethical research principles and experiential learning theory (e.g., Kolb's cycle) [16]. |

| Timing of Execution | Concurrent with the task performance [11]. | Retrospective, after the task or survey is complete [13]. |

| Data Type Collected | Qualitative data on problem-solving strategies, expectations, frustrations, and comprehension difficulties [14] [12]. | Qualitative feedback on question interpretation, task difficulty, emotional impact, and overall procedure [13] [16]. |

| Role of Researcher | Neutral observer who may provide neutral prompts to continue verbalization [14] [12]. | Facilitator who guides a structured reflection, often using a pre-defined script [13] [16]. |

| Key Applications | Usability testing of interfaces (e.g., clinical trial software), prototype testing, understanding problem-solving in complex tasks [14] [15]. | Pre-testing survey questions, validating translated instruments, ethical closure after studies involving deception [5] [13] [17]. |

| Cognitive Stage Addressed | Comprehension, memory retrieval, judgment, and response formulation as they occur [17]. | Retrospective reconstruction of comprehension, judgment, and the subjective experience of the task [13]. |

Application Notes and Methodological Protocols

Think-Aloud Protocol: Application and Workflow

The Think-Aloud Protocol is a qualitative research method where participants continuously verbalize their thoughts, feelings, and intentions while interacting with a product, prototype, or system [15]. This method is invaluable for uncovering usability issues and understanding the user's cognitive framework.

Experimental Protocol for a Think-Aloud Study

- Objective: To identify usability issues and comprehension barriers in a new electronic data capture (EDC) system for clinical trial data.

- Materials Needed: Refer to Table 3: Research Reagent Solutions.

- Procedure:

  - Preparation: Develop a set of core tasks that represent critical user journeys (e.g., "Enter a new patient's baseline vitals," "Generate an adverse event report"). Prepare a quiet testing environment [15].

  - Participant Briefing: Welcome the participant and explain that the purpose is to test the system, not their abilities. Introduce the think-aloud technique: "I'm going to ask you to think aloud as you work. This means please say everything you are thinking, looking at, trying to do, and feeling. It's very important that you keep talking continuously" [12].

  - Demonstration: Model the technique with a simple, analogous example. For instance, demonstrate thinking aloud while finding a function on a smartphone [12]. Allow the participant a short practice session.

  - Task Execution: Present the first task. The facilitator should observe and take notes. Use neutral prompts to encourage verbalization if the participant falls silent, such as "What are you thinking right now?" or "Remember to keep talking, please." Avoid explaining or leading the participant [14] [15].

  - Data Collection: Record the session (audio and screen). Document observations, including participant quotes, task success/failure, time-on-task, and non-verbal cues of confusion or frustration [12].

  - Post-Task Debrief (Optional): After all tasks, a short debriefing can be conducted to clarify observed behaviors or gather overall impressions [15].
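
The quantitative observations listed under Data Collection (task success/failure and time-on-task) can be summarized per task alongside the qualitative notes. The Python sketch below assumes each observed attempt is logged as a small record. The record layout and the task names are hypothetical.

```python
from statistics import median

def task_summary(sessions):
    """Summarize task success rate and median time-on-task per task.

    sessions: list of dicts with keys "task", "success" (bool), and
    "seconds" (time-on-task); the layout is a hypothetical logging format.
    """
    by_task = {}
    for s in sessions:
        by_task.setdefault(s["task"], []).append(s)
    return {
        task: {
            "n": len(rows),
            "success_rate": sum(r["success"] for r in rows) / len(rows),
            "median_seconds": median(r["seconds"] for r in rows),
        }
        for task, rows in by_task.items()
    }

sessions = [
    {"task": "enter_vitals", "success": True, "seconds": 95},
    {"task": "enter_vitals", "success": False, "seconds": 210},
    {"task": "enter_vitals", "success": True, "seconds": 120},
    {"task": "ae_report", "success": True, "seconds": 300},
]
summary = task_summary(sessions)
```

Because thinking aloud can slow participants down, time-on-task figures from these sessions are best read as relative comparisons between tasks rather than as estimates of real-world performance.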

The workflow for this protocol is linear and focused on real-time data capture, as illustrated below.

Respondent Debriefing: Application and Workflow

Respondent Debriefing is a structured conversation after a survey or task to gather feedback on the respondent's experience, their interpretation of questions, and the overall process [13]. In global health and drug development, it is crucial for validating cross-cultural survey instruments and ensuring questions are interpreted as intended [5].

Experimental Protocol for a Respondent Debriefing

- Objective: To assess the clarity, cultural relevance, and appropriateness of a patient-reported outcome (PRO) survey on medication side effects.

- Materials Needed: Refer to Table 3: Research Reagent Solutions.

- Procedure:

- Preparation: Develop a semi-structured debriefing guide with open-ended probes focused on key survey sections (e.g., "What did you think this question about 'fatigue' meant?", "Were the response options clear for the question about pain frequency?") [5] [17].

- Survey Completion: The participant completes the full survey under normal conditions.

- Initiating the Debrief: Thank the participant. Restate the general objective of the research. Explain that you now want to hear their feedback on the questions themselves [13].

- Structured Debriefing: Follow the debriefing guide. Effective models include the "What? So What? Now What?" model [16]:

  - What? (Description): "Can you describe in your own words what that question was asking?" [16].

  - So What? (Interpretation): "Why do you think that information is important for the researchers to know?" or "How did you decide on your answer?" [16].

  - Now What? (Application): "Based on your experience, how could this question be made clearer for other patients?" [16].

- Data Recording: Meticulously document all participant responses. Audio recording is highly recommended for accuracy.

- Closure: Provide a final thank you, offer information on how to receive study results, and provide referral resources if the topic was sensitive (e.g., mental health support) [13].

The debriefing process is a structured cycle of reflection, analysis, and planning, as shown in the following workflow.

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of these methodologies requires specific tools and materials. The following table lists essential "research reagent solutions" for conducting rigorous cognitive interviews.

Table 3: Research Reagent Solutions for Cognitive Interviewing

| Item | Function/Application Note |
|---|---|
| Semi-Structured Interview Protocol | A guide with predefined tasks (for think-aloud) or probes (for debriefing) ensures consistency across participants while allowing flexibility to explore emergent issues [17] [2]. |
| High-Fidelity Audio/Video Recorder | Essential for capturing verbal data and non-verbal cues. Video is critical for think-aloud tests involving interface interaction. Ensures accurate data for later analysis [11]. |
| Informed Consent Documents | Clearly explains the study purpose, procedures, confidentiality, and the participant's right to withdraw. For think-aloud, must explicitly mention the requirement to verbalize thoughts [13]. |
| Pilot-Tested Tasks or Survey Instruments | The stimulus material must be robust. Pilot testing helps refine tasks and debriefing questions to ensure they elicit the intended cognitive processes or feedback [15]. |
| Dedicated Transcription Service/Software | Converts audio recordings into text for in-depth qualitative analysis. Accuracy is paramount for reliable coding and interpretation [11]. |
| Participant Incentives | Financial or other compensation acknowledges the participant's time and contribution, aiding in recruitment and reflecting the value of their expertise [15]. |
| Neutral Prompt Script | A list of standardized, non-leading phrases (e.g., "Keep talking, please," "What are you thinking?") for researchers to use during think-aloud sessions to minimize bias [12]. |

Both Think-Aloud Protocols and Respondent Debriefing are powerful, yet distinct, methodologies within the cognitive interviewing toolkit. The Think-Aloud Protocol is unparalleled for capturing the process of interaction and cognition in real time, making it ideal for usability engineering and understanding problem-solving pathways. Conversely, Respondent Debriefing is optimized for retrospective investigation into the interpretation and experience of a survey or task, proving essential for instrument validation and ethical research practice. For researchers in drug development and scientific fields, the strategic selection and rigorous application of these protocols are fundamental to developing valid, reliable, and user-friendly data collection instruments, ultimately strengthening the integrity of research outcomes.

Within the rigorous framework of cognitive interviewing for research methodology, the development of probe questions is a critical determinant of data quality. Cognitive interviewing is a qualitative method that explores individuals' thought processes as they answer survey questions, providing invaluable insight into question validity and potential response errors [1] [18]. This methodology is particularly crucial in fields like drug development and healthcare research, where precise measurement is paramount. Probe development sits at the heart of this process, with two primary approaches emerging: scripted (planned) and spontaneous (emergent) probing. The strategic selection between these approaches directly influences the reliability, validity, and depth of the cognitive data obtained, ultimately affecting the quality of the final survey instrument.

Theoretical Framework: The Cognitive Response Process

Cognitive interviewing is fundamentally based on models of the survey response process. The most widely cited framework, Tourangeau's four-stage model, posits that respondents must:

- Comprehend the question and its key terms.

- Retrieve relevant information from memory.

- Make a Judgment based on the retrieved information.

- Select a Response that matches the available options [18].

Probes are designed to investigate and illuminate these internal cognitive stages. The strategic choice between scripted and spontaneous probing determines how a researcher interrogates each of these stages, balancing consistency against flexibility to uncover potential problems with survey questions, such as misinterpretation, recall difficulties, and sensitivity issues [19] [1].

Scripted (Planned) Probing

Scripted probing involves preparing a standardized set of probe questions before the cognitive interviews begin and then asking those same probes of every participant. This approach is systematic, with probes developed during the protocol design phase to target specific, pre-identified aspects of the test questions.

Development Methodology

Developing scripted probes requires a meticulous process:

- Define Measurement Objectives: Before writing a single probe, the researcher must clarify the exact intent of the survey question and what constitutes a successful answer. This involves deconstructing the question to identify ambiguous terms, complex concepts, and specific recall periods [20]. For example, for a question about "visits to a doctor," the protocol must define whether this includes visits for dependents or only appointments with a physician, excluding other healthcare staff [20].

- Draft Proactive Probes: Based on the measurement objectives, the researcher drafts probes that proactively investigate potential points of failure in the response process. These are often aligned with the four-stage cognitive model [18].

- Incorporate Standard Probes: A set of general, versatile probes can be included in every protocol to capture broad reactions (e.g., "How did you come up with your answer?" or "What does the term mean to you in this question?") [1].

Table 1: Typology of Scripted Probes Based on Cognitive Stages

| Cognitive Stage | Probe Objective | Example Probes |
|---|---|---|
| Comprehension | Assess understanding of question wording and intent. | "In your own words, what is this question asking?" "What does the term 'formal educational program' mean to you?" [18] |
| Retrieval | Understand the recall process and memory strategies. | "How did you remember how many times you did that?" "Was that easy or difficult to recall?" [1] |
| Judgment | Evaluate the decision-making and estimation process. | "How sure are you of that answer?" "Did you have to guess or estimate?" |
| Response | Check the mapping of the answer to the response options. | "How did you pick your answer from the list?" "Was your answer a close fit to the options available?" [2] |
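
One way to keep scripted probes organized is to store them by cognitive stage and assemble a per-question probe list from the stages each question is expected to stress. The sketch below assumes this layout for illustration; the probe wording comes from Table 1, while `Stage`, `PROBE_BANK`, `build_protocol`, and the question IDs are hypothetical names.

```python
from enum import Enum


class Stage(Enum):
    """The four stages of the survey response process described above."""
    COMPREHENSION = "comprehension"
    RETRIEVAL = "retrieval"
    JUDGMENT = "judgment"
    RESPONSE = "response"


# Probe wording taken from Table 1; the dictionary layout is illustrative.
PROBE_BANK: dict[Stage, list[str]] = {
    Stage.COMPREHENSION: ["In your own words, what is this question asking?"],
    Stage.RETRIEVAL: [
        "How did you remember how many times you did that?",
        "Was that easy or difficult to recall?",
    ],
    Stage.JUDGMENT: [
        "How sure are you of that answer?",
        "Did you have to guess or estimate?",
    ],
    Stage.RESPONSE: [
        "How did you pick your answer from the list?",
        "Was your answer a close fit to the options available?",
    ],
}


def build_protocol(stages_by_question: dict[str, list[Stage]]) -> dict[str, list[str]]:
    """Return the scripted probes for each survey question, in stage order."""
    return {
        question_id: [probe for stage in stages for probe in PROBE_BANK[stage]]
        for question_id, stages in stages_by_question.items()
    }


# Hypothetical question IDs and stage assignments.
protocol = build_protocol({
    "Q1_doctor_visits": [Stage.COMPREHENSION, Stage.RETRIEVAL],
    "Q2_pain_frequency": [Stage.RESPONSE],
})
for question_id, probes in protocol.items():
    print(question_id, probes)
```

Question-specific probes (for example, ones that name a particular term) would still need to be written by hand and appended to the generated list.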

Application Protocol

- Implementation: The interviewer administers the survey question, allows the participant to provide an answer, and then asks the pre-scripted probe questions. This can be done concurrently (immediately after the question) or retrospectively (after the entire survey is completed) [18].

- Interviewer Role: The interviewer's role is primarily to administer the probes as written, ensuring consistency across participants. They must read the probes neutrally to avoid biasing the participant.

- Data Analysis: Data from scripted probing is typically easier to code and analyze across interviews because the same questions are asked of all participants, allowing for direct comparison and aggregation of findings [18].
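
Because every participant answers the same scripted probes, coded findings can be tallied directly across interviews. The following sketch shows one possible aggregation; the tuple layout, the problem codes, and the sample records are assumptions made for illustration, not data from any study.

```python
from collections import defaultdict

# Each record: (participant_id, question_id, problem_code).
# Codes and records are hypothetical examples.
coded_findings = [
    ("P01", "Q1", "comprehension"),
    ("P02", "Q1", "comprehension"),
    ("P02", "Q1", "comprehension"),  # same participant, same issue noted twice
    ("P03", "Q1", "recall"),
    ("P01", "Q2", "response_mapping"),
]


def tally_problems(findings):
    """Count distinct participants showing each problem code, per question."""
    participants = defaultdict(set)
    for participant_id, question_id, code in findings:
        participants[(question_id, code)].add(participant_id)

    table = defaultdict(dict)
    for (question_id, code), ids in participants.items():
        table[question_id][code] = len(ids)
    return dict(table)


tally = tally_problems(coded_findings)
print(tally)
```

Counting distinct participants rather than raw mentions avoids overstating a problem that one talkative participant raised several times.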

Spontaneous (Emergent) Probing

Spontaneous probing relies on the interviewer's skill to generate unplanned follow-up questions in real time based on the participant's unique verbal responses and non-verbal cues during the interview.

Development Methodology

Unlike scripted probing, spontaneous probing is not developed in advance through a drafting process. Its "development" is continuous and occurs during the interview. However, preparing for it involves:

- Training Interviewer Competence: Interviewers are trained to listen actively and identify "cues" that warrant further exploration. These cues include participant hesitation, expressions of confusion, contradictory statements, surprising answers, or non-verbal signs like furrowed brows or long pauses [2] [18].

- Developing a Reactive Mindset: Interviewers learn to formulate probes on the fly to diagnose the root cause of the observed cue. This requires a deep understanding of the survey response process and the specific measurement objectives of each question.

Table 2: Cues and Corresponding Spontaneous Probe Examples

| Observed Cue | Potential Issue | Example Spontaneous Probe |
|---|---|---|
| Participant hesitates before answering. | Comprehension difficulty or recall struggle. | "It seemed like you paused there. What was going through your mind?" |
| Participant asks for clarification. | Unfamiliar or ambiguous terminology. | "You asked what 'X' means. How were you interpreting it?" |
| Participant gives an inconsistent answer (e.g., contradicts earlier statement). | Judgment or recall error; question sensitivity. | "Earlier you mentioned Y, but now you've said Z. Could you help me understand the difference?" |
| Participant expresses frustration or uncertainty. | Response task is overly complex or burdensome. | "You seem unsure. What part of answering that was challenging?" |
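
For interviewer training, the cue-to-probe pairings in Table 2 can be kept as a small lookup that fills in the participant's own words. This is only a sketch of such a training aid: the cue keys, the `{term}`, `{earlier}`, and `{later}` placeholders (standing in for X, Y, and Z), and the `suggest_probe` helper are hypothetical, and a real interviewer would adapt the wording in the moment.

```python
# Cue keys are hypothetical labels; issue and probe wording follow Table 2.
CUE_GUIDE = {
    "hesitation": (
        "Comprehension difficulty or recall struggle",
        "It seemed like you paused there. What was going through your mind?",
    ),
    "clarification_request": (
        "Unfamiliar or ambiguous terminology",
        "You asked what '{term}' means. How were you interpreting it?",
    ),
    "inconsistency": (
        "Judgment or recall error; question sensitivity",
        "Earlier you mentioned {earlier}, but now you've said {later}. "
        "Could you help me understand the difference?",
    ),
    "frustration": (
        "Response task is overly complex or burdensome",
        "You seem unsure. What part of answering that was challenging?",
    ),
}

# General-purpose probe used when a cue is not in the guide.
FALLBACK_PROBE = "How did you come up with your answer?"


def suggest_probe(cue: str, **details: str) -> str:
    """Return a starting-point probe for an observed cue."""
    if cue not in CUE_GUIDE:
        return FALLBACK_PROBE
    _issue, template = CUE_GUIDE[cue]
    return template.format(**details)


print(suggest_probe("clarification_request", term="fatigue"))
print(suggest_probe("hesitation"))
```

The lookup is a prompt for the interviewer's judgment, not a replacement for it: spontaneous probing depends on adapting to what the participant actually says.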

Application Protocol

- Implementation: The interviewer administers the survey question and then engages in a semi-structured conversation, using the participant's behavior as a guide for when and what to probe. This is inherently a concurrent technique [18].

- Interviewer Role: The interviewer takes on a more active and adaptive role, functioning as a diagnostic investigator rather than a standardized administrator. They must balance thoroughness with the need to avoid leading the participant or creating reactivity effects [18].

- Data Analysis: Analyzing data from spontaneous probing can be more complex, as the data across participants is less uniform. Analysis focuses on identifying themes and unique problems that emerged organically from the interviews, often using qualitative coding techniques based on grounded theory [1] [18].

Integrated Strategic Approach and Experimental Protocol

For most applied research, a hybrid approach that strategically combines scripted and spontaneous probing is recommended. This leverages the strengths of both methods to ensure comprehensive coverage while remaining responsive to individual participant experiences.

Workflow for a Combined Probing Strategy

The following diagram illustrates a protocol for integrating both probing approaches within a single cognitive interview session.

Detailed Experimental Protocol for Hybrid Probing

Aim: To evaluate and refine survey questions for a healthcare study on patient adherence to medication.

Materials: Survey questionnaire, interview protocol with scripted probes, audio recorder, notetaking template.

Participant Recruitment: 20-30 participants purposively sampled from the target population (e.g., patients with a specific chronic condition) to ensure a range of experiences [1].

Procedure:

- Preparation: The research team develops the interview protocol, defining measurement objectives for each survey question and drafting 2-3 key scripted probes for each. Interviewers are trained on both the scripted probes and techniques for identifying cues and formulating spontaneous probes [20].

- Interview Session:

  - The interviewer administers the survey question exactly as written.

  - The participant is asked to "think aloud" as they formulate their answer [2] [18].

  - The interviewer observes, noting any cues (hesitation, confusion, etc.).

  - If a cue is observed: The interviewer first asks a spontaneous probe tailored to the cue (e.g., "I noticed you sighed. Could you tell me why?").

  - After the spontaneous probe (or if no cue is observed): The interviewer asks the pre-scripted probes from the protocol.

  - The interviewer documents the participant's answers to all probes and their own observations in real time.

- Analysis: Following the interview, the team analyzes the data using a multi-level approach:

  - Level 1: Reviewing notes from a single interview.

  - Level 2: Summarizing findings for each test question.

  - Level 3: Comparing results across all respondents to identify common patterns and unique issues.

  - Level 4: Drawing conclusions about question performance and making recommendations for revision [1].
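
The probe ordering in the interview-session steps (spontaneous probe first when a cue is observed, then the scripted probes) can be expressed as a short planning function. This is a minimal sketch under that reading of the protocol; `Probe` and `plan_probes` are hypothetical names. Tagging each probe with its kind also lets the analysis keep scripted and spontaneous data apart.

```python
from typing import NamedTuple, Optional


class Probe(NamedTuple):
    kind: str  # "spontaneous" or "scripted"
    text: str


def plan_probes(scripted: list[str], cue_probe: Optional[str] = None) -> list[Probe]:
    """Order the probes for one survey question in a hybrid interview.

    If the interviewer observed a cue, the spontaneous probe comes first;
    the pre-scripted probes always follow.
    """
    plan: list[Probe] = []
    if cue_probe is not None:
        plan.append(Probe("spontaneous", cue_probe))
    plan.extend(Probe("scripted", text) for text in scripted)
    return plan


scripted = [
    "In your own words, what is this question asking?",
    "How did you come up with your answer?",
]
with_cue = plan_probes(scripted, cue_probe="I noticed you sighed. Could you tell me why?")
without_cue = plan_probes(scripted)

for probe in with_cue:
    print(f"[{probe.kind}] {probe.text}")
```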

The Researcher's Toolkit

Table 3: Essential Reagents and Resources for Cognitive Interviewing

| Item/Resource | Function in Probe Development and Testing |

|---|---|

| Interview Protocol | The master document that contains the survey questions and the pre-scripted probes. It serves as the experimental blueprint, ensuring consistency and alignment with measurement objectives [20]. |