Cognitive Load Theory in Research Design: A Practical Framework for Enhancing Scientific Rigor and Efficiency

Abstract

This article provides a comprehensive framework for applying Cognitive Load Theory (CLT) to biomedical and clinical research design. It addresses researchers, scientists, and drug development professionals, guiding them from foundational principles to advanced application. The content covers strategies to minimize extraneous cognitive load, optimize intrinsic load for complex protocols, and implement validation techniques that ensure data integrity and reproducibility. By systematically managing cognitive demands, research teams can reduce errors, improve decision-making, and accelerate the translation of scientific discoveries.

Why Mental Bandwidth Matters: The Foundation of Cognitive Load in Research

Cognitive Load Theory (CLT) is an established framework in educational psychology, based on the understanding that an individual's working memory—the conscious part of our memory where we temporarily store and process new information—has a limited capacity [1] [2]. When this capacity is exceeded during a learning task or a complex activity, it leads to cognitive overload, which impairs learning, performance, and the ability to encode information into long-term memory [3] [4]. For researchers and scientists, managing cognitive load is crucial for designing robust experiments, accurately analyzing data, and avoiding errors that can arise from an overwhelmed cognitive system.

CLT categorizes the mental effort required for a task into three distinct types [3] [4]:

- Intrinsic Cognitive Load: This is the inherent mental effort required by the task itself, determined by its complexity and the number of interacting elements that must be understood simultaneously. It is also influenced by the learner's prior knowledge.

- Extraneous Cognitive Load: This is the mental effort imposed by the way information or the task is presented. Poorly designed materials, disorganized instructions, or a suboptimal workflow add extraneous load, which is detrimental to learning and performance.

- Germane Cognitive Load: This refers to the mental effort devoted to processing new information, creating meaningful schemas, and transferring knowledge into long-term memory. Effective learning maximizes germane load.

Frequently Asked Questions (FAQs)

Q1: Why is cognitive load a critical consideration for researchers and scientists? Research tasks often involve complex procedures, simultaneous data monitoring, and high-stakes decision-making. High cognitive load can consume the limited working memory resources needed for these activities, leading to oversights, procedural errors, and flawed data interpretation [4]. Managing cognitive load is essential for maintaining precision and reliability in scientific work.

Q2: I often feel overwhelmed when running experiments with multiple parallel steps. What type of cognitive load am I experiencing? You are likely experiencing a high intrinsic cognitive load due to the natural complexity and high "element interactivity" of your task [1]. Furthermore, if your lab protocols, data sheets, or equipment interfaces are poorly organized, they could be adding significant extraneous cognitive load, pushing your working memory toward overload [3].

Q3: How can I measure the cognitive load of my research participants or myself? Mental workload can be measured using subjective tools like the NASA Task Load Index (NASA-TLX), which provides a multidimensional rating of perceived workload [4]. Researchers also use physiological measures and performance-based assessments to obtain objective data on cognitive load [5] [4].
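
As a minimal sketch of how NASA-TLX ratings are commonly summarized, the snippet below computes the unweighted "Raw TLX" score, which is the mean of the six subscale ratings on a 0-100 scale. The function name, dictionary keys, and example ratings are illustrative assumptions, not part of any official scoring tool, and the weighted variant based on pairwise comparisons is not shown.

```python
from statistics import mean

# The six standard NASA-TLX subscales; each is rated from 0 to 100.
# The key names are an assumption chosen for this sketch.
TLX_SUBSCALES = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "performance",
    "effort",
    "frustration",
)


def raw_tlx(ratings: dict) -> float:
    """Return the unweighted Raw TLX score (mean of the six subscales)."""
    missing = [name for name in TLX_SUBSCALES if name not in ratings]
    if missing:
        raise ValueError(f"missing subscale ratings: {missing}")
    for name in TLX_SUBSCALES:
        if not 0 <= ratings[name] <= 100:
            raise ValueError(f"{name} must be between 0 and 100")
    return mean(ratings[name] for name in TLX_SUBSCALES)


# Made-up ratings from one technician after a multi-step assay.
example_ratings = {
    "mental_demand": 80,
    "physical_demand": 20,
    "temporal_demand": 70,
    "performance": 40,
    "effort": 75,
    "frustration": 60,
}
print(raw_tlx(example_ratings))
```

Collecting the same six ratings before and after a protocol redesign gives a simple, if subjective, way to check whether perceived workload actually dropped.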

Q4: Does collaboration help reduce cognitive load in research? It can, but the effectiveness depends on the task. One study found that for tasks with high complexity (high element interactivity), individual learning with integrated information formats was more effective. However, collaborative learning in dyads was more beneficial when the necessary information was presented in a dispersed format, as the partners could help reintegrate it [1].

Troubleshooting Guides

Problem 1: High Error Rates in Complex Experimental Protocols

Potential Cause: Excessive intrinsic load from high element interactivity, combined with extraneous load from a poorly structured protocol document.

Solutions:

- Chunk Information: Break down the protocol into smaller, logically grouped steps. Our working memory can hold approximately 3 to 4 units of information at a time, so presenting information in "chunks" makes it more manageable [2].

- Utilize Worked Examples: When training new team members or learning a new technique, study worked examples before engaging in problem-solving. This has been shown to improve retention and reduce cognitive load [1].

- Leverage Schemas: Encourage the development of mental models or "schemas" for common procedures. A well-developed schema acts as a single, automated unit in working memory, freeing up capacity [6].
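
The chunking advice above can be sketched in a few lines. This is a deliberately crude illustration: it splits a flat step list into fixed-size groups of at most four, whereas a real protocol should be grouped at logical boundaries. The step names and the `chunk_steps` helper are hypothetical.

```python
def chunk_steps(steps, max_chunk=4):
    """Split a flat list of protocol steps into groups of at most max_chunk."""
    if max_chunk < 1:
        raise ValueError("max_chunk must be at least 1")
    return [steps[i:i + max_chunk] for i in range(0, len(steps), max_chunk)]


# Hypothetical ten-step protocol, for illustration only.
protocol = [
    "Thaw samples", "Label tubes", "Prepare buffer", "Aliquot buffer",
    "Add reagent A", "Incubate", "Add reagent B", "Centrifuge",
    "Transfer supernatant", "Record volumes",
]

for number, chunk in enumerate(chunk_steps(protocol), start=1):
    print(f"Stage {number}: " + "; ".join(chunk))
```

Printing each group under its own stage heading is one way to turn a long numbered list into a document that can be read one chunk at a time.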

Problem 2: Difficulty in Accurately Recording Data During Fast-Paced Experiments

Potential Cause: Extraneous overload caused by split-attention, where you must constantly look between the experimental apparatus and a separate data sheet.

Solutions:

- Apply the Spatial Contiguity Principle: Integrate information sources. For example, place the data recording sheet directly next to the relevant equipment or use a tablet with a digital form that is always visible [1]. A study on learning knot-tying found that an integrated format was specifically beneficial for reducing intrinsic load in procedural tasks [1].

- Automate and Outsource: Use technology to reduce mental burden. Automate data logging with sensors where possible, and set reminders for procedural steps on a digital calendar to free up working memory for critical decision-making [2].

Problem 3: Mental Fatigue and Reduced Performance During Long Research Sessions

Potential Cause: Depletion of working memory resources without adequate recovery, sometimes described as a failure of "working memory recovery" [1].

Solutions:

- Schedule Regular Breaks: Cognitive fatigue directly impairs working memory. Taking short, scheduled breaks during long tasks can help maintain performance levels [2].

- Manage the Environment: Reduce environmental distractions (e.g., noise, clutter) to minimize extraneous load unrelated to the research task [3].

- Consider Recovery Strategies: While one study on exposure to nature imagery did not show significant effects, the concept of replenishing cognitive resources remains important. Structured rest and mindfulness interventions are areas of ongoing research [1].

Experimental Protocols & Data

Key Experimental Paradigm: Dual-Task and Working Memory Capacity

This protocol is used to assess the demands a task places on the working memory buffer [5].

Methodology:

- Primary Task: Participants perform a classic working memory task, such as a change detection task. They are briefly shown an array of colored squares and must remember them over a short delay.

- Secondary Task: A simple, non-automated discrimination task is interposed during the delay period of the primary task. For example, participants see a 'C' or a mirror-image 'C' and must make a quick discriminative response.

- Measurement: Researchers measure neural correlates like the Contralateral Delay Activity (CDA), an ERP component that reflects the active maintenance of information in working memory. They also measure accuracy on both the primary and secondary tasks.

Expected Outcome: The interposed task causes a massive disruption in the CDA, indicating that the simple task requires the same active working memory processes as the primary memory task. However, change detection performance may only be slightly impaired, suggesting the brain can use "activity-silent" neural mechanisms to retain information when active maintenance is disrupted [5].
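
The behavioral side of this paradigm can be summarized with a short calculation. The sketch below assumes a single-probe change detection task and uses Cowan's K, a common capacity estimate defined as set size times (hit rate minus false alarm rate). The source does not say which metric the cited study used, and all rates below are invented for illustration. The CDA itself is not modeled here.

```python
def cowan_k(set_size: int, hit_rate: float, false_alarm_rate: float) -> float:
    """Cowan's K for single-probe change detection: K = N * (H - FA)."""
    return set_size * (hit_rate - false_alarm_rate)


def dual_task_cost(single: float, dual: float) -> float:
    """Proportional drop in a score when the secondary task is added."""
    if single == 0:
        raise ValueError("single-task score must be non-zero")
    return (single - dual) / single


# Illustrative (made-up) rates for a set size of four colored squares.
k_single = cowan_k(set_size=4, hit_rate=0.90, false_alarm_rate=0.10)
k_dual = cowan_k(set_size=4, hit_rate=0.85, false_alarm_rate=0.15)

print(f"K without interposed task: {k_single:.2f}")
print(f"K with interposed task:    {k_dual:.2f}")
print(f"Dual-task cost:            {dual_task_cost(k_single, k_dual):.1%}")
```

A small proportional cost in K alongside a large drop in CDA amplitude would match the pattern described in the expected outcome.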

Quantitative Findings in Cognitive Load Research

Table 1: Summary of Key Experimental Findings from Cognitive Load Research

| Study Focus | Experimental Design | Key Finding | Implication for Research Design |
|---|---|---|---|
| Split-Attention Effect [1] | Comparing integrated vs. separated source materials. | Integrating related information (text & diagrams) is more effective than presenting them separately. | Integrated protocols and data displays reduce extraneous load and improve efficiency. |
| Redundancy Effect [1] | Presenting information in multiple modalities (e.g., spoken & written text). | Codal redundancy (same code, different modality) can impair learning, while modal redundancy (different codes, same modality) can help. | Avoid presenting identical information in multiple channels; complementary information is more effective. |
| Blocked vs. Mixed Practice [1] | Studying one domain per session vs. alternating domains. | Alternating between subjects (e.g., math & language) in a session was more efficient for materials with surface similarities. | Structuring research training with varied practice can enhance learning efficiency. |
| Worked Examples [1] | Comparing studying worked examples vs. solving problems. | Using worked examples improved retention and reduced cognitive load, especially for students with a strong mastery orientation. | Use worked examples for initial training on complex data analysis or lab techniques. |

Research Reagent Solutions: The Cognitive Toolkit

Table 2: Essential "Reagents" for Managing Cognitive Load in Research

| Tool or Strategy | Function | Example Application in Research |
|---|---|---|
| Chunking [2] | Groups information into smaller, meaningful units to bypass working memory limits. | Breaking a long chemical synthesis protocol into a series of 3-4 step chunks. |
| Mnemonics & Acronyms [2] | Creates associations to make abstract information easier to recall. | Creating an acronym for the order of steps in a complex assay. |
| Concept Mapping [2] | Visually organizes information to show relationships and reduce extraneous load. | Mapping out the hypothesized relationships between variables before starting data analysis. |
| Automation & Outsourcing [2] | Uses external tools to handle tasks, freeing up working memory. | Using electronic lab notebooks with template fields and automated reminder alerts. |
| Visualization [2] | Creates mental or physical images to aid in processing and recalling information. | Mentally rehearsing a surgical or delicate procedure before performing it. |

Workflow for Cognitive Load Optimization

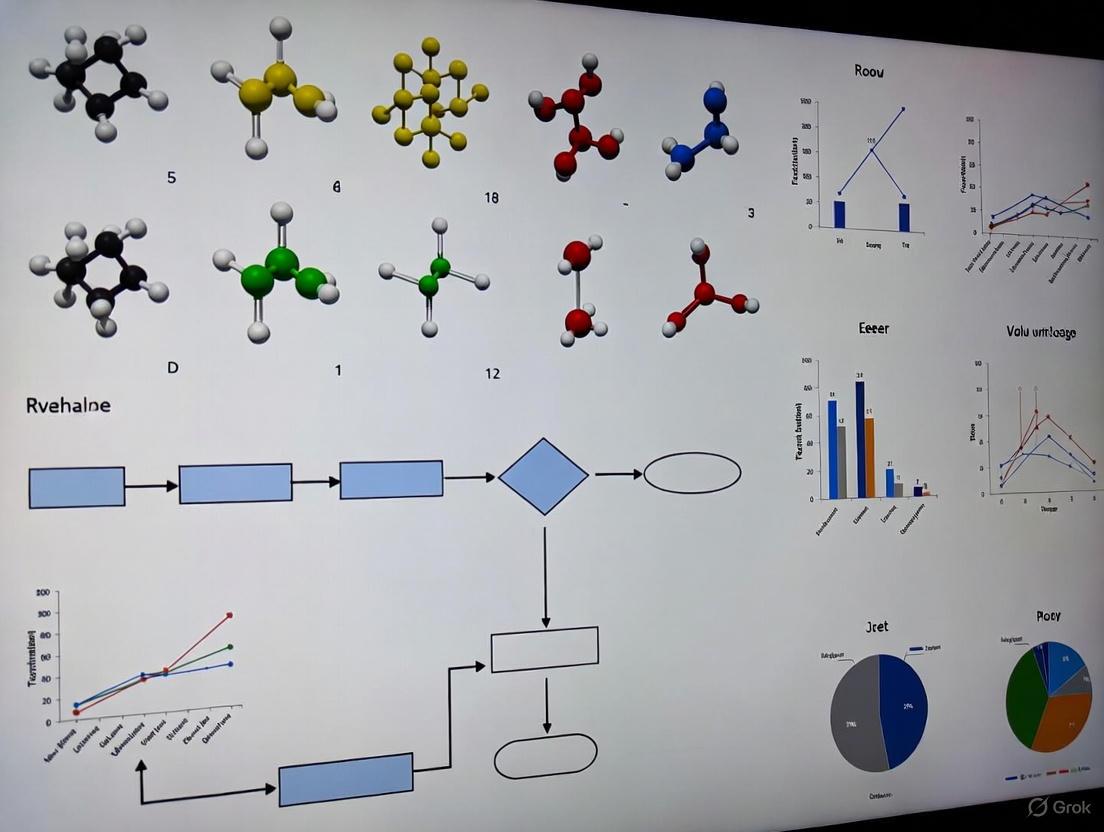

The diagram below outlines a systematic workflow for diagnosing and addressing cognitive load issues in research design.

Cognitive Load Optimization Workflow

FAQs: Understanding Cognitive Load in Research

What are the three types of cognitive load in Cognitive Load Theory?

Cognitive Load Theory (CLT) states that an individual's working memory is limited and is impacted by three types of loads [7] [8]:

- Intrinsic Cognitive Load: This is the inherent mental effort associated with the complexity of a specific task or topic. It is determined by the number of interactive elements that must be processed simultaneously in working memory [9] [10] [8]. For example, solving a complex differential equation has a higher intrinsic load than solving a basic arithmetic problem.

- Extraneous Cognitive Load: This is the unnecessary cognitive effort required to process information that is not essential to the learning or task itself. It is generated by the way information is presented and is under the control of the instructional or experimental designer [11] [12] [8]. Poorly designed materials, distractions, and confusing layouts contribute to extraneous load.

- Germane Cognitive Load: This refers to the mental resources devoted to processing information, creating meaningful schemas, and transferring knowledge into long-term memory [9] [13] [8]. It is the effort invested in actually learning and understanding the material.

Why should researchers in drug development care about Cognitive Load Theory?

Cognitive load is highly relevant to assessment and rater-based evaluation, which is common in experimental research [14]. In tasks like Objective Structured Clinical Examinations (OSCEs) or data analysis:

- Assessments with high intrinsic and extraneous load can interfere with an assessor's attention and working memory, potentially resulting in poorer quality and less reliable assessments [14].

- Reducing these loads ensures that cognitive resources are dedicated to accurate observation and decision-making, thereby increasing the validity of research outcomes [14].

How can I identify if my experimental protocol has a high extraneous load?

High extraneous load often manifests as:

- Split-Attention Effect: When users must split their attention between multiple, separated sources of information (e.g., a graph and its legend on a different page) [8].

- Unnecessary Interactivity: Requiring users to process irrelevant information or navigate a poorly structured interface to find critical data [12] [15].

- Lack of Clarity: Ambiguous labels, inconsistent terminology, or the use of unexplained jargon forces users to expend mental effort on interpretation rather than the core task [16].

What is the relationship between the three loads?

The three loads are additive and compete for limited working memory resources [8]. The goal of effective design is to manage intrinsic load, minimize extraneous load, and optimize germane load [9] [8]. If the intrinsic load of a task is high and the extraneous load is also high, it can lead to cognitive overload, impairing learning and performance. Reducing extraneous load frees up cognitive capacity that can be redirected toward germane load, facilitating better schema construction and understanding [9].
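
One schematic way to read the additivity claim is as a capacity constraint. The symbols below are introduced here purely for illustration and are not measured quantities: $C$ stands for available working memory capacity, and overload is avoided only while the summed loads stay within it.

$$
L_{\text{intrinsic}} + L_{\text{extraneous}} + L_{\text{germane}} \le C
$$

For a given task and researcher, $L_{\text{intrinsic}}$ is roughly fixed, so each unit of $L_{\text{extraneous}}$ removed by better design is capacity that becomes available for $L_{\text{germane}}$.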

Troubleshooting Guides: Mitigating Cognitive Load in Research Design

Problem: High Intrinsic Load Overwhelms Researchers

Symptoms: Difficulty in understanding complex experimental workflows, inability to connect procedural steps, errors in executing multi-stage protocols.

Solutions:

- Chunk Information: Break down complex procedures into smaller, manageable sub-steps or "subschemas" that can be taught and practiced in isolation before being combined [7] [10] [8].

- Use Worked Examples: Provide detailed examples of completed analyses or experimental setups. This allows researchers to study the solution before attempting to generate their own, reducing unnecessary problem-solving load [8].

- Scaffold Learning: For highly complex tasks, provide temporary supports such as checklists, templates, or simplified models. These can be gradually removed as the researcher's expertise increases [10].

Problem: High Extraneous Load Impedes Data Interpretation

Symptoms: Difficulty extracting key findings from data visualizations, time wasted on formatting data instead of analyzing it, confusion caused by inconsistent labeling in lab software.

Solutions:

- Optimize Data Visualizations:

  - Eliminate Clutter: Remove non-data ink, such as heavy gridlines, background images, or excessive colors, that take attention away from the data [11].

  - Use Appropriate Chart Types: Ensure the visualization fits the message (e.g., use a bar chart to compare quantities, a line chart to show trends) [11].

  - Annotate Directly: Include clear titles, labels, and annotations directly on the chart to provide context and eliminate the need to search for information elsewhere [11].

- Simplify Forms and Interfaces:

  - Apply the "Structure, Clarity, Transparency, Support" Framework: Organize related fields, use plain language, mark required fields clearly, and provide supportive guidance [16].

  - Leverage Common Design Patterns: Use familiar UI elements to reduce the learning curve for new software or tools [15].

  - Minimize Choices: Reduce decision paralysis by presenting only the most relevant options at any given time [15].

Problem: Low Germane Load Limits Schema Development

Symptoms: Inability to apply learned procedures to new but related problems, difficulty in troubleshooting experiments, superficial understanding of underlying principles.

Solutions:

- Promote Schema Construction: Design training and protocols that encourage researchers to see patterns and relationships. Use concept maps or flowcharts to illustrate how different pieces of information connect [13].

- Foster Cognitive Absorption: Create well-designed materials that are free from extraneous load, allowing researchers to fully engage with the intrinsic content. This deep engagement is conducive to germane processing [9].

- Encourage Self-Explanation: Prompt researchers to explain the steps of a protocol or the reasoning behind an analysis in their own words, which strengthens schema formation [9].

The following table summarizes the key characteristics of the three cognitive loads.

| Load Type | Definition | Source / Control | Key Mitigation Strategies |
|---|---|---|---|
| Intrinsic Load | The inherent mental effort demanded by the complexity of the task or material [10] [8]. | The inherent nature of the subject matter; fixed for a given task and learner's prior knowledge [10]. | Break tasks into smaller chunks ("chunking") [10]. Use progressive disclosure [16]. Provide worked examples [8]. |
| Extraneous Load | The unnecessary cognitive effort imposed by the way information is presented [11] [12]. | The design of instructional materials, interfaces, and the learning environment; fully controllable by the designer [11] [8]. | Simplify visuals & eliminate clutter [11] [15]. Use clear, concise language [16]. Follow common design patterns [15]. |
| Germane Load | The mental effort devoted to processing information, creating schemas, and transferring learning to long-term memory [9] [13]. | The learner's cognitive resources and strategies; can be influenced by instructional design [9] [8]. | Use varied examples to illustrate principles. Encourage self-explanation. Provide opportunities for guided practice [9]. |

Visualizing Cognitive Load Management

The following diagram illustrates the relationship between the different cognitive loads and the overarching goal of managing them in experimental design.

The Scientist's Toolkit: Essential Reagents for Cognitive Load Research

| Research Reagent / Tool | Function / Explanation |
|---|---|
| Subjective Rating Scales (e.g., NASA-TLX) | Multidimensional scales that allow participants to self-report perceived mental effort, frustration, and task demand. They provide a direct, though subjective, measure of cognitive load [9]. |
| Eye-Tracking Systems | Provide objective data such as pupil dilation (a reliable indicator of cognitive load), number of fixations, and gaze paths. This helps identify which elements of an interface demand the most visual and cognitive attention [9]. |
| Task-Evoked Pupillary Response | The measurement of pupil diameter changes during a task. It is a sensitive and reliable physiological measure of cognitive load directly related to working memory activity [8]. |
| Performance-Based Measures | Metrics like task completion time, error rates, and accuracy on retention or transfer tests. These provide objective data on the behavioral outcomes of cognitive load [9]. |
| Dual-Task Paradigm | An experimental protocol where participants perform a primary task and a secondary, concurrent task. Performance on the secondary task is used to infer the cognitive load imposed by the primary task. |

Cognitive strain refers to the excessive demand on our limited mental processing power, which can severely impact performance in research settings. When the cognitive load required to operate equipment, follow protocols, and interpret data exceeds a researcher's capacity, it leads to slower information processing, missed critical details, and ultimately, abandonment of tasks or erroneous conclusions [17]. This section provides practical methodologies to identify, troubleshoot, and mitigate the effects of cognitive strain, thereby safeguarding the integrity of your research.

Troubleshooting Guide: FAQs on Cognitive Strain & Research Errors

FAQ 1: Our team keeps overlooking anomalous data points in high-throughput screening. Could this be a cognitive bias? This is a classic manifestation of Confirmation Bias, where there is an unconscious tendency to favor information that confirms pre-existing beliefs or hypotheses and to disregard contradictory data [18]. This bias often originates from the brain's fast, intuitive thinking processes (Type 1), which dominate to save mental effort but are prone to systematic errors [19].

- Diagnostic Protocol: To confirm this bias, conduct a blinded re-analysis of a random sample of your raw data. Compare the findings between the original analysis and the blinded review. A significant discrepancy in the identification of anomalies indicates likely bias.

- Mitigation Strategy: Implement a structured "Devil's Advocate" workflow. Assign a team member, on a rotating basis, the specific task of challenging the dominant interpretation by actively seeking disconfirming evidence. Furthermore, utilize data visualization tools that automatically flag outliers based on pre-set, objective statistical thresholds, removing sole reliance on human observation.
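
A minimal sketch of the "pre-set, objective statistical threshold" idea: flag readings whose modified z-score, computed from the median and the median absolute deviation (MAD), exceeds a cutoff fixed before anyone looks at the data. The 3.5 cutoff and the 0.6745 scaling constant follow a widely used convention for this score. The function name and the well readings are assumptions for illustration, and a real screening pipeline would likely need plate-level normalization first.

```python
from statistics import median


def flag_outliers(values, threshold=3.5):
    """Return indices of values whose modified z-score exceeds threshold.

    Modified z-score = 0.6745 * (x - median) / MAD, which is less distorted
    by the outliers themselves than a mean/standard-deviation z-score.
    """
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        # More than half the values are identical, so the score is undefined.
        # Nothing is flagged here; such data needs a separate check.
        return []
    return [
        i for i, v in enumerate(values)
        if abs(0.6745 * (v - med) / mad) > threshold
    ]


# Made-up normalized signal from eight wells; the last one is anomalous.
readings = [0.98, 1.02, 1.01, 0.99, 1.00, 1.03, 0.97, 2.50]
print(flag_outliers(readings))
```

Because the threshold is set in advance and applied by code, the decision about what counts as anomalous no longer depends on what the analyst expects to see.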

FAQ 2: After a long shift, our technicians make more errors in sample preparation. How is fatigue related to cognitive errors? Fatigue, sleep deprivation, and cognitive overload are well-documented high-risk situations that predispose decision-makers to biases [19]. These states deplete the mental resources required for the slower, more reliable analytical thinking (Type 2 processes), causing an over-reliance on error-prone intuitive judgments [19].

- Diagnostic Protocol: Correlate error logs from your Laboratory Information Management System (LIMS) with time-on-task. Track metrics like procedure completion time and incident reports. A statistically significant increase in errors after a specific duration of work provides quantitative evidence of fatigue-induced impairment.

- Mitigation Strategy: Enforce mandatory break schedules following the principles of "cognitive ergonomics." Redesign complex protocols to include checkpoints and verifications. For critical, repetitive tasks, employ task-rotation schedules to prevent monotony and the resulting "inattentional blindness."
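
The diagnostic step above can be prototyped before involving a statistician. The sketch below assumes error logs have been exported from the LIMS as simple (hour into shift, error flag) records, which is a hypothetical format. It computes the error rate per hour and a Pearson correlation between hour and rate. It does not compute a p-value, so it indicates a trend rather than statistical significance.

```python
from collections import defaultdict
from math import sqrt


def error_rate_by_hour(records):
    """records: iterable of (hour_into_shift, had_error) pairs."""
    totals = defaultdict(int)
    errors = defaultdict(int)
    for hour, had_error in records:
        totals[hour] += 1
        errors[hour] += int(had_error)
    return {hour: errors[hour] / totals[hour] for hour in sorted(totals)}


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for constant data")
    return cov / (spread_x * spread_y)


# Made-up log: ten procedures per hour, with errors rising late in the shift.
errors_per_hour = {1: 0, 2: 1, 3: 1, 4: 3}
log = [
    (hour, i < n_errors)
    for hour, n_errors in errors_per_hour.items()
    for i in range(10)
]

rates = error_rate_by_hour(log)
r = pearson_r(list(rates.keys()), list(rates.values()))
print(rates)
print(f"correlation between hour and error rate: {r:.2f}")
```

With real data, many more shifts and a proper significance test would be needed before changing break schedules on the strength of the trend.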

FAQ 3: Why do our researchers sometimes stick with an initial hypothesis even when evidence suggests otherwise? This is frequently a combination of the Anchoring Bias and Conservatism Bias. The Anchoring Bias is the tendency to rely too heavily on the first piece of information encountered (the initial hypothesis) [18]. The Conservatism Bias is the tendency to insufficiently revise one's belief when presented with new evidence [18].

- Diagnostic Protocol: In team meetings, anonymously poll members on their confidence in a hypothesis before and after presenting new, contradictory data. If confidence levels do not shift appropriately with the new evidence, these biases are likely at play.

- Mitigation Strategy: Adopt a "pre-mortem" analysis technique. Before finalizing a conclusion, the research team proactively assumes the hypothesis is false and brainstorms all possible reasons why. This formalizes the consideration of alternative outcomes and weakens the anchor of the initial idea.

FAQ 4: How can the physical design of a lab or software interface reduce cognitive load? Poor design creates Extraneous Cognitive Load—mental processing that does not help users understand the task or content [17] [20]. Cluttered interfaces, inconsistent labeling, and poorly organized workflows force researchers to expend valuable mental resources on simply operating the system rather than on the scientific problem [17].

- Diagnostic Protocol: Conduct usability tests where researchers are observed completing core tasks (e.g., data entry, instrument calibration). Note any hesitation, confusion, or unnecessary steps. High error rates or long completion times indicate high extraneous load.

- Mitigation Strategy: Apply user experience (UX) principles:

  - Avoid Visual Clutter: Remove redundant links, irrelevant images, and meaningless design flourishes [17].

  - Build on Existing Mental Models: Use labels and layouts consistent with other software and labs to reduce the learning curve [17].

  - Offload Tasks: Use automation, smart defaults, and data dashboards to minimize the need for manual calculation and memory recall [17].

Experimental Protocols for Studying Cognitive Strain

Protocol: Simulated Diagnostic Error Task

- Objective: To quantify the effect of cognitive load on the application of the Base Rate Fallacy.

- Background: The Base Rate Fallacy is the tendency to ignore general statistical information (base rates) and focus on specific case information, even when the base rate is more important [18].

- Materials:

  - Computer-based testing platform.

  - Dual-task paradigm software (e.g., auditory n-back task for load induction).

  - A series of diagnostic vignettes containing both base rate statistics and individuating information.

- Methodology:

  - Group Assignment: Randomly assign participants to a high cognitive load or low cognitive load group.

  - Load Induction: The high-load group performs the diagnostic task while simultaneously engaged in a secondary task (e.g., remembering a sequence of tones). The low-load group performs only the diagnostic task.

  - Task: Participants review each vignette and provide a probability judgment for a specific outcome.

  - Data Analysis: Compare the proportion of base rate neglect between the two groups. The dependent variable is the rate of incorrect diagnoses that favor specific, but less probable, information over general statistics.

- Expected Outcome: The high cognitive load group will demonstrate a significantly higher rate of base rate neglect, confirming that strain increases reliance on heuristic, error-prone thinking [18] [19].
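
Two calculations sit behind this protocol, sketched below under stated assumptions. First, Bayes' theorem gives the normatively correct answer for a vignette, which is what "neglecting the base rate" is measured against. Second, a two-proportion z-test is one reasonable way to compare neglect rates between groups, although the protocol does not name a specific test. All numbers are invented for illustration.

```python
from math import erfc, sqrt


def posterior(base_rate, sensitivity, false_positive_rate):
    """P(condition | positive finding) by Bayes' theorem."""
    true_pos = base_rate * sensitivity
    false_pos = (1 - base_rate) * false_positive_rate
    return true_pos / (true_pos + false_pos)


def two_proportion_z(x1, n1, x2, n2):
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    p1, p2 = x1 / n1, x2 / n2
    pooled = (x1 + x2) / (n1 + n2)
    se = sqrt(pooled * (1 - pooled) * (1 / n1 + 1 / n2))
    if se == 0:
        raise ValueError("test is undefined when the pooled proportion is 0 or 1")
    z = (p1 - p2) / se
    return z, erfc(abs(z) / sqrt(2))


# Vignette: 1% prevalence, 90% sensitivity, 5% false-positive rate.
# The intuitive answer is often near 90%; the correct one is far lower.
print(f"correct posterior: {posterior(0.01, 0.90, 0.05):.3f}")

# Made-up results: responses showing base rate neglect out of 50 per group.
z, p = two_proportion_z(x1=30, n1=50, x2=18, n2=50)
print(f"high load vs. low load: z = {z:.2f}, p = {p:.4f}")
```

In a real study, the scoring rule for what counts as neglect (for example, a judgment within some margin of the sensitivity rather than the posterior) should be fixed before data collection.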

Protocol: Eye-Tracking Analysis of Data Review Interfaces

- Objective: To identify visual clutter and split-attention effects in data visualization software that contribute to extraneous cognitive load.

- Background: Split-attention effects occur when users must integrate multiple, separated sources of information, which heavily loads working memory [20].

- Materials:

  - Eye-tracking apparatus.

  - Different versions of a data dashboard: one optimized for clarity and one with a cluttered, default design.

  - A set of specific questions requiring data synthesis to answer.

- Methodology:

  - Participants are assigned to interact with either the optimized or cluttered dashboard.

  - While they answer the questions, their gaze paths and fixation durations are recorded.

- Metrics:

  - Time to complete tasks.

  - Accuracy of answers.

  - Number of visual transitions between disparate screen elements.

  - Fixation duration on critical data points.

- Expected Outcome: The cluttered interface will show longer task completion times, more visual transitions, and lower accuracy, directly linking poor design to higher cognitive strain and error rates [17] [20].
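
Two of the metrics above, visual transitions and fixation duration, are straightforward to derive once fixations have been mapped to areas of interest (AOIs). The sketch assumes the eye tracker's export has already been reduced to an ordered list of (AOI label, duration in ms) pairs. That format, the AOI names, and the durations are hypothetical.

```python
def count_transitions(fixations):
    """Count how often gaze moves from one AOI to a different AOI."""
    labels = [aoi for aoi, _ in fixations]
    return sum(1 for prev, cur in zip(labels, labels[1:]) if prev != cur)


def total_dwell_ms(fixations, aoi):
    """Sum of fixation durations (ms) that landed on the given AOI."""
    return sum(duration for label, duration in fixations if label == aoi)


# Made-up fixation sequence for one participant answering one question.
fixations = [
    ("chart", 300),
    ("legend", 150),
    ("chart", 250),
    ("chart", 200),
    ("table", 400),
    ("legend", 120),
]

print("transitions:", count_transitions(fixations))
print("dwell on chart (ms):", total_dwell_ms(fixations, "chart"))
```

A high transition count between a chart and a separate legend is the kind of pattern that would point to a split-attention problem in the cluttered dashboard.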

Visualization: The Cognitive Strain-Error Pathway

The following diagram illustrates the logical relationship between high cognitive load, the dominance of intuitive thinking, and the resulting research errors and biases.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key conceptual "reagents" and tools for diagnosing and mitigating cognitive strain in research environments.

| Research Reagent / Tool | Function & Explanation |
|---|---|
| Dual-Process Theory (DPT) Framework [19] | A conceptual model for understanding the two modes of decision-making: fast, intuitive (Type 1) and slow, analytical (Type 2). It is fundamental for diagnosing the origin of cognitive biases. |
| Cognitive Load Assessment (NASA-TLX) | A validated subjective workload assessment tool that helps quantify the mental demand, frustration, and effort required by a specific research task or tool. |
| Blinded Analysis Protocol | An experimental technique where the analyst is kept unaware of the group assignments or hypothesis to prevent Confirmation Bias from influencing data interpretation. |
| Pre-mortem Analysis [19] | A proactive debiasing strategy where a team assumes a future project has failed and works backward to determine what could cause the failure, mitigating Optimism Bias and Anchoring. |
| Automated Data Sanity Checks | Scripts or software functions that automatically flag statistical outliers or data points that fall outside pre-defined physiological or technical ranges, offloading a memory-intensive task from researchers [17]. |
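
As a minimal sketch of the last row, a range check can be a small function run on every record at entry time. The field names and limits below are hypothetical placeholders. Real limits should come from the assay or instrument specification.

```python
# Hypothetical acceptable ranges: (lower bound, upper bound), inclusive.
ACCEPTED_RANGES = {
    "ph": (0.0, 14.0),
    "storage_temp_c": (2.0, 8.0),
}


def check_record(record, ranges=ACCEPTED_RANGES):
    """Return a list of human-readable problems found in one data record."""
    problems = []
    for field, (low, high) in ranges.items():
        value = record.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not low <= value <= high:
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    return problems


print(check_record({"ph": 7.2, "storage_temp_c": 4.0}))
print(check_record({"ph": 15.1}))
```

Returning a list of messages, rather than raising on the first failure, lets the entry form show every problem at once so the researcher does not have to hold earlier warnings in mind.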

Cognitive Biases in Research: Quick Reference

The table below describes specific cognitive biases relevant to research settings and their typical impact, providing a quick reference for recognizing them.

| Cognitive Bias | Description | Common Impact in Research |
|---|---|---|
| Confirmation Bias [18] | The tendency to search for, interpret, and recall information in a way that confirms one's pre-existing beliefs. | Leads to selective data collection, over-weighting of confirming evidence, and dismissal of anomalous results. |
| Anchoring Bias [18] | The tendency to rely too heavily on the first piece of information encountered (the "anchor") when making decisions. | Causes initial hypotheses or early data points to disproportionately influence all subsequent analysis and conclusions. |
| Base Rate Neglect [18] | The tendency to ignore general statistical information (base rates) and focus on specific case information. | Results in flawed risk assessment and misinterpretation of experimental results by ignoring prior probability. |
| Planning Fallacy [18] | The tendency to underestimate the time, costs, and risks of future actions and to overestimate the benefits. | Pervasive in project planning, leading to unrealistic timelines, budget overruns, and rushed, error-prone work. |

Collaborative science, by its nature, involves complex problem-solving and decision-making tasks that impose significant cognitive load—the mental effort required to process information in working memory. When research teams tackle multifaceted problems, the cognitive demands can quickly exceed individual capacity, leading to cognitive overload, which impairs decision-making, reduces cooperation, and increases error rates [21] [22]. Cognitive Load Theory provides a framework for understanding these limitations, traditionally focusing on individual learning but increasingly applied to collaborative contexts [23].

In collaborative research, cognitive load extends beyond individual thinking to include the transactive activities inherent to teamwork: communication, coordination, and the development of shared understanding [23]. This collective dimension introduces both challenges and opportunities—while coordination demands additional cognitive resources, well-structured collaboration can create a collective working memory effect, distributing cognitive demands across team members [23]. Understanding and managing these dynamics is crucial for maintaining research quality, especially in high-stakes fields like drug development where cognitive overload can have significant consequences.

Frequently Asked Questions (FAQs)

Q1: What are the primary types of cognitive load that affect research teams?

Cognitive Load Theory distinguishes three main types that impact collaborative science [24]:

- Intrinsic cognitive load: The inherent difficulty of the research task itself, determined by its complexity and the number of interacting elements researchers must consider simultaneously.

- Extraneous cognitive load: The mental effort imposed by how information and tasks are presented to the team, including inefficient workflows, poorly designed tools, or suboptimal communication channels.

- Germane cognitive load: The cognitive resources dedicated to constructing schemas and developing long-term understanding—essentially, the effort of learning and innovation.

Q2: How does high cognitive load negatively impact collaborative decision-making?

High cognitive load detrimentally affects both individual and collective decision-making in several ways [21] [22]:

- It accelerates the breakdown of cooperation in team settings, even when punishment mechanisms for non-cooperation exist.

- It increases the likelihood of antisocial punishment—penalizing cooperative team members rather than free riders—by depleting cognitive resources needed for deliberative decision-making.

- It reduces the capacity for complex reasoning and systematic evaluation of alternatives during critical decision phases.

- It impairs the team's ability to reach consensus and build commitment to joint decisions.

Q3: What strategies can research teams employ to manage cognitive load effectively?

Research teams can implement several evidence-based strategies [25]:

- Amplify critical content: Identify and focus on essential knowledge, skills, and outcomes; remove extraneous information that creates unnecessary complexity.

- Provide scaffolding and cognitive aids: Implement checklists, flowcharts, guiding questions, worked examples, and concept maps to support working memory during complex tasks.

- Increase opportunities for collaboration: Distribute memory demands across team members, allowing for deeper processing and more meaningful learning than individual work alone.

- Communicate concisely: Use plain language and carefully chosen words in instructions and documentation to reduce interpretation effort.

Q4: How can we measure and assess cognitive load in research teams?

Both subjective and objective assessment tools are available [24]:

- NASA-TLX: A widely used subjective instrument that measures six domains: mental demand, physical demand, temporal demand, performance, effort, and frustration.

- Heart Rate Variability (HRV): An objective physiological measure that can provide real-time data on cognitive strain during research tasks.

- Customized assessment tools: Domain-specific instruments adapted for particular research contexts, such as the REBOA-adapted NASA-TLX developed for complex medical procedures.

Troubleshooting Common Cognitive Load Issues

Problem: Research Team Experiencing Communication Breakdowns

Symptoms: Repeated misunderstandings, missed information, conflicting interpretations of data, team members working at cross-purposes.

Solutions:

- Implement structured communication protocols with clear documentation standards [26].

- Use central collaboration platforms like OSF with comprehensive wikis to maintain shared context and project history [26].

- Establish regular check-ins with predefined agendas to ensure alignment and address confusion early.

- Apply plain language principles to all documentation, avoiding unnecessary technical jargon [16].

Problem: Declining Research Quality Under Tight Deadlines

Symptoms: Increased errors in data collection or analysis, rushed decisions with inadequate justification, failure to consider alternative hypotheses.

Solutions:

- Implement workload visualization tools to identify team members at capacity and redistribute tasks accordingly [27] [28].

- Introduce deliberate reflection points in the research process where teams must stop and elaborate on findings in their own words [25].

- Use progressive disclosure in complex tasks—presenting only immediately relevant information to prevent overwhelm [16].

- Establish clear prioritization frameworks so that cognitive resources go to the most critical research components first [28].

Problem: Inefficient Collaborative Problem-Solving Sessions

Symptoms: Meetings that fail to reach decisions, circular discussions, difficulty integrating diverse perspectives, participant frustration.

Solutions:

- Implement structured facilitation techniques like ThinkLets—reusable patterns of collaboration with detailed scripts for group activities [21].

- Clearly separate divergence (brainstorming alternatives), convergence (synthesizing information), and decision-making phases to manage cognitive demands [21].

- Use visual collaboration tools to externalize thinking and reduce working memory load.

- Assign specific cognitive roles (e.g., "devil's advocate," "synthesizer") to distribute cognitive functions across team members.

Cognitive Load Assessment Methodologies

Table 1: Cognitive Load Assessment Tools for Research Teams

| Tool Name | Type | Key Metrics | Best Use Cases | Implementation Considerations |

|---|---|---|---|---|

| NASA-TLX | Subjective | Mental, physical, temporal demands; performance, effort, frustration | Post-task assessment; comparing different workflow designs | Can be adapted for specific research contexts; most effective when administered immediately after tasks |

| Heart Rate Variability (HRV) | Objective | Physiological indicator of cognitive strain | Real-time monitoring during critical research procedures | Requires specialized equipment; may be intrusive in some research settings |

| Cognitive Load Scale | Subjective | Perceived mental effort on a single scale | Quick assessment of task difficulty; comparing multiple conditions | Less granular than NASA-TLX but faster to administer |

| Eye-Tracking | Objective | Pupil dilation, blink rate, fixation patterns | Interface evaluation; procedure optimization | Specialized equipment required; data analysis can be complex |

| Think-Aloud Protocols | Qualitative | Verbalized thought processes | Understanding cognitive processes during problem-solving | May alter natural cognitive processes; requires careful analysis |

Experimental Protocol: Assessing Cognitive Load Using NASA-TLX

Purpose: To quantify subjective cognitive load experienced by research team members during specific collaborative tasks.

Materials:

- NASA-TLX questionnaire (either paper-based or digital format)

- Timer or scheduling system

- Standardized task instructions

Procedure:

- Pre-Task Briefing: Explain the assessment purpose and NASA-TLX rating procedure to participants.

- Task Performance: Research team completes the target collaborative activity under normal conditions.

- Immediate Assessment: Administer NASA-TLX within 5-10 minutes of task completion.

- Rating Process: For each of the six subscales, participants mark their perceived demand level on a 0-100 scale.

- Weighting (Optional): Participants compare subscales in pairwise fashion to determine relative importance.

- Data Collection: Collect completed forms for analysis.

Analysis:

- Calculate weighted or unweighted NASA-TLX score (range 0-100)

- Compare scores across different tasks, team compositions, or workflow designs

- Identify specific demand dimensions causing highest load for targeted interventions
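The scoring step above can be sketched in a few lines of Python. This is a minimal illustration, assuming the conventional NASA-TLX scheme in which each subscale's weight is the number of the 15 pairwise comparisons it wins; the function names, the example ratings, and the importance ranking are all hypothetical and not taken from any cited study.

```python
from itertools import combinations

SUBSCALES = ("mental", "physical", "temporal", "performance", "effort", "frustration")


def raw_tlx(ratings):
    """Unweighted score: the plain mean of the six 0-100 subscale ratings."""
    return sum(ratings[s] for s in SUBSCALES) / len(SUBSCALES)


def weighted_tlx(ratings, winners):
    """Weighted score using the optional pairwise-comparison step.

    `winners` maps each of the 15 subscale pairs (as a frozenset) to the
    subscale the participant judged more important for the task.
    """
    pairs = [frozenset(p) for p in combinations(SUBSCALES, 2)]
    if set(winners) != set(pairs):
        raise ValueError("expected exactly one winner for each of the 15 pairs")
    weights = dict.fromkeys(SUBSCALES, 0)
    for pair, winner in winners.items():
        if winner not in pair:
            raise ValueError(f"{winner!r} is not part of pair {sorted(pair)}")
        weights[winner] += 1  # weight = number of comparisons won (0 to 5)
    return sum(ratings[s] * weights[s] for s in SUBSCALES) / len(pairs)


# Hypothetical ratings from one team member (illustrative values only).
example_ratings = {"mental": 80, "physical": 10, "temporal": 60,
                   "performance": 40, "effort": 70, "frustration": 40}
# Hypothetical importance ranking used to fill in the 15 pairwise choices.
ranking = ["mental", "effort", "temporal", "frustration", "performance", "physical"]
example_winners = {frozenset(p): min(p, key=ranking.index)
                   for p in combinations(SUBSCALES, 2)}

print(f"raw score: {raw_tlx(example_ratings):.1f}")
print(f"weighted score: {weighted_tlx(example_ratings, example_winners):.1f}")
```

Both scores stay on the 0-100 scale, so they can be compared across tasks or workflow designs. The per-subscale ratings remain available for spotting which demand dimension drives the load.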

Research Reagent Solutions: Essential Tools for Managing Cognitive Load

Table 2: Key Resources for Cognitive Load Management in Research Teams

| Tool Category | Specific Examples | Primary Function | Implementation Tips |

|---|---|---|---|

| Collaboration Platforms | Open Science Framework (OSF), Birdview PSA | Centralize project materials, manage contributors, integrate storage | Use granular permissions for different team members; maximize wiki functionality for documentation [26] |

| Workload Management Tools | Float, Asana, Microsoft Project | Visualize team capacity, balance workloads, track deadlines | Input all project activities (including non-research tasks) for accurate capacity planning [28] |

| Cognitive Aids | Checklists, flow charts, worked examples, templates | Reduce working memory demands during complex procedures | Develop domain-specific aids through iterative testing with team members [25] |

| Structured Collaboration Methods | ThinkLets, facilitation techniques, problem-structuring methods | Guide group cognitive processes during team problem-solving | Train multiple team members in facilitation techniques; match methods to specific collaboration goals [21] |

| Communication Standards | Plain language guidelines, structured reporting formats | Reduce interpretation effort and miscommunication | Establish team-specific conventions for documentation and data sharing [16] |

Workflow Diagram: Cognitive Load Management in Collaborative Research

Cognitive Load Management Workflow for Research Teams

Effective management of cognitive load in collaborative science requires both theoretical understanding and practical implementation. By recognizing the finite nature of working memory resources and the additional demands imposed by collaboration, research teams can implement strategies that distribute cognitive demands effectively, reduce extraneous load, and optimize germane load for learning and innovation. The tools and methods presented in this technical support guide provide a foundation for creating research environments that support both rigorous science and sustainable collaborative practices.

Identifying Cognitive Bottlenecks in Common Research Workflows (e.g., Protocol Development, Data Analysis)

Frequently Asked Questions (FAQs)

What is a cognitive bottleneck in the context of experimental research?

A cognitive bottleneck describes a stage in a mental operation where processing capacity is limited, forcing certain processes to happen one at a time (serially) rather than simultaneously (in parallel). In research, this often manifests as the "decision-making" or "response selection" stage, where you must interpret data and choose the next action. This central process cannot easily be multitasked and is a major contributor to delays and variability in task completion times [29].

Why is troubleshooting a particularly challenging cognitive task?

Troubleshooting is challenging because it requires a researcher to hold a complex experimental setup in working memory while simultaneously identifying potential problems, formulating hypotheses about the cause, and designing diagnostic tests. This high cognitive load can overwhelm working memory, especially when the researcher is also dealing with the stress of a failed experiment [30] [25].

How can I reduce cognitive load when designing a new experimental protocol?

You can reduce cognitive load by applying principles like structure, clarity, and support [16]. This includes:

- Grouping related steps in your protocol to minimize context switching.

- Using plain language and avoiding ambiguous terms.

- Providing scaffolds and cognitive aids, such as checklists, flowcharts, or worked examples from similar protocols [25]. These supports offload information from your working memory, freeing up resources for critical thinking.

What are some common mundane sources of error I should check first when an experiment fails?

Before investigating complex hypotheses, rule out simple, mundane issues. Common examples include [30] [31]:

- Reagent issues: Expired reagents, improper storage conditions, or miscalculated concentrations.

- Equipment issues: Uncalibrated instruments, incorrect settings (e.g., temperature of a water bath), or general malfunctions.

- Sample integrity: Degraded or contaminated samples.

- User-generated errors: Minor technique deviations, such as inconsistent aspiration during wash steps.

Are there structured methods for troubleshooting?

Yes, a systematic approach can significantly improve troubleshooting efficiency. One common method involves these steps [31]:

- Identify the problem without assuming the cause.

- List all possible explanations (e.g., each reagent, piece of equipment, or step in the protocol).

- Collect data by checking controls, storage conditions, and your procedure notes.

- Eliminate unlikely explanations based on the data.

- Check with experimentation by designing targeted tests for the remaining possibilities.

- Identify the root cause and implement a fix.

Troubleshooting Guides

Guide 1: Troubleshooting a Failed PCR

This guide applies a structured methodology to a common laboratory problem.

Step 1: Identify the Problem

- The problem is a lack of PCR product on an agarose gel, while the DNA ladder is visible, confirming the electrophoresis system is functional [31].

Step 2: List All Possible Explanations

- Consider every component in your reaction: Taq DNA Polymerase, MgCl2, Buffer, dNTPs, primers, and the DNA template.

- Also consider equipment (thermocycler) and procedural errors [31].

Step 3: Collect Data & Eliminate Explanations

- Equipment: Confirm the thermocycler block temperature is calibrated.

- Controls: Check whether your positive control (with a known-good template) worked. If it failed, the issue is with your core reagents or master mix. If it worked, the issue is likely with your specific sample or primers.

- Reagents: Confirm the PCR kit is within its expiration date and was stored correctly [31].

Step 4: Check with Experimentation

- If the problem is isolated to your sample, run these diagnostic tests:

- Test DNA template quality: Run the DNA sample on a gel to check for degradation.

- Measure DNA template concentration: Confirm an adequate amount was used [31].

Step 5: Identify the Cause

- Based on the experiments, you might find the cause is degraded DNA or a concentration that is too low. The solution is to prepare a new, high-quality DNA sample [31].
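The elimination logic in Steps 3 to 5 can be written down as a small decision function, which is one way to keep the branches out of working memory. This is only a sketch: the function name and argument names are hypothetical, and the branch for a missing ladder is an added assumption (the guide only states that a visible ladder confirms the electrophoresis system works).

```python
def triage_failed_pcr(ladder_visible, positive_control_worked,
                      template_intact=None, template_conc_adequate=None):
    """Return a short list of suspects for a PCR that shows no product band.

    Arguments left as None mean "not checked yet".
    """
    if not ladder_visible:
        # Assumption: without a visible ladder the gel itself cannot be trusted.
        return ["electrophoresis system"]
    if not positive_control_worked:
        # A failed known-good template points at the shared components.
        return ["core reagents or master mix"]

    # The positive control worked, so the problem is specific to this reaction.
    suspects = []
    if template_intact is False:
        suspects.append("degraded DNA template")
    elif template_intact is None:
        suspects.append("DNA template quality (not yet checked on a gel)")
    if template_conc_adequate is False:
        suspects.append("DNA template concentration too low")
    elif template_conc_adequate is None:
        suspects.append("DNA template concentration (not yet measured)")
    # Primers stay on the list until a template problem is confirmed.
    if template_intact is not False and template_conc_adequate is not False:
        suspects.append("primers")
    return suspects


# Example: controls are fine, nothing about the template has been checked yet.
print(triage_failed_pcr(ladder_visible=True, positive_control_worked=True))
```

Calling the function again after each diagnostic test narrows the list, mirroring the "collect data, then eliminate" sequence of the guide.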

Guide 2: Troubleshooting High Variability in a Cell Viability Assay

This guide is based on a real troubleshooting scenario from an educational exercise [30].

Problem: An MTT cell viability assay is producing results with very high error bars and higher-than-expected values [30].

Structured Investigation:

- Review Controls: Were appropriate positive and negative controls included to define the expected range of results? [30]

- Analyze the Protocol: Break down the protocol into its discrete stages (cell culture, treatment, assay incubation, wash steps, signal measurement) and analyze each for potential variability.

- Hypothesize: In the example scenario, the cell line was dual adherent/non-adherent. The group hypothesized that the wash steps were aspirating a variable number of cells, leading to high variance [30].

- Propose a Diagnostic Experiment: To test the hypothesis, propose an experiment that modifies the suspected problematic step while controlling for others. For example, carefully standardize the aspiration technique during washes, ensure the pipette tip is placed on the well wall, and slowly aspirate while slightly tilting the plate. This experiment should be run with both a negative control and the test compound [30].

- Identify the Cause: If the variability decreases with the modified, careful technique, user-generated error during washing is confirmed as the source of the variability. The solution is to re-train on and standardize this specific technique [30].

Quantitative Data on Cognitive Processing Stages

The table below summarizes data from a study that parsed a cognitive task (number comparison) into distinct stages. It shows how different manipulations affect the mean response time and variability (interquartile range) of each stage, highlighting the decision stage as a key bottleneck [29].

Table 1: Effects of Task Manipulations on Processing Stages

| Manipulation | Target Stage | Effect on Mean Response Time (RT) | Effect on IQR (Variability) |

|---|---|---|---|

| Numerical Distance | Decision (Central) | Significant Increase [29] | Significant Increase [29] |

| Notation (Digits vs. Words) | Perception | Significant Increase [29] | No Significant Effect [29] |

| Response Complexity | Motor Response | Significant Increase [29] | No Significant Effect [29] |

Key Insight: Only manipulations affecting the central decision stage significantly increased the variability (IQR) of response times. This suggests the decision stage is not only a serial bottleneck but also the primary source of noise and unpredictability in the cognitive workflow [29].
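To apply the same two summary statistics to your own timing data (for example, time per decision step in a protocol), a minimal sketch follows. The response times below are hypothetical placeholder values chosen only to show the calculation; they are not data from the cited study, and `summarize_rt` is an illustrative helper name.

```python
from statistics import mean, quantiles


def summarize_rt(rts_ms):
    """Return the mean and interquartile range of a list of response times."""
    q1, _median, q3 = quantiles(rts_ms, n=4)  # the three quartile cut points
    return {"mean": mean(rts_ms), "iqr": q3 - q1}


# Hypothetical response times in milliseconds (illustrative only).
easy_decision_ms = [500, 510, 520, 530, 540, 550, 560, 570]
hard_decision_ms = [520, 560, 600, 640, 700, 760, 820, 900]

easy = summarize_rt(easy_decision_ms)
hard = summarize_rt(hard_decision_ms)
print(f"easy: mean={easy['mean']:.0f} ms, IQR={easy['iqr']:.0f} ms")
print(f"hard: mean={hard['mean']:.0f} ms, IQR={hard['iqr']:.0f} ms")
```

Reporting the IQR alongside the mean separates "this step is slow" from "this step is unpredictable", which is the distinction the table draws between the decision stage and the perceptual and motor stages.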

Workflow Diagrams for Cognitive Processes

Diagram 1: Three-Stage Cognitive Model with Bottleneck

This diagram visualizes the established three-stage model of cognitive processing, which can be applied to research tasks like analyzing data or executing a protocol. It shows how a central bottleneck forces serial processing when two tasks overlap [29].

Diagram 2: Systematic Troubleshooting Workflow

This diagram outlines a logical, step-by-step workflow for diagnosing experimental failures, designed to reduce cognitive load by providing a clear structure [31].

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents for Common Molecular Biology Experiments

| Reagent | Function in Experiment |

|---|---|

| Taq DNA Polymerase | The enzyme that synthesizes new DNA strands during a Polymerase Chain Reaction (PCR) by adding nucleotides to a growing DNA chain [31]. |

| Competent Cells | Specially prepared bacterial cells (e.g., E. coli strains like DH5α) that can readily take up foreign plasmid DNA, a critical step in cloning and plasmid propagation [31]. |

| Selection Antibiotic | An antibiotic (e.g., Ampicillin, Kanamycin) added to growth media to select for only those bacteria that have successfully incorporated a plasmid containing the corresponding resistance gene [31]. |

| MTT Reagent | A yellow tetrazole that is reduced to purple formazan in the mitochondria of living cells; used in colorimetric assays to measure cell viability and proliferation [30]. |

| His-Tag | A string of 6-10 histidine residues attached to a recombinant protein of interest. It allows for highly specific purification of the protein using affinity chromatography with nickel-nitrilotriacetic acid (Ni-NTA) resin [31]. |

Designing for the Mind: Practical Strategies to Reduce Cognitive Load in Research Protocols

Core Principles for Reducing Cognitive Load

Managing cognitive load is critical in research design. By applying structured approaches to information presentation, you can minimize mental effort, reduce errors, and improve experimental reproducibility. The following table summarizes the foundational principles for structuring complex protocols.

Table 1: Core Principles for Reducing Cognitive Load in Research Protocols

| Principle | Description | Primary Benefit |

|---|---|---|

| Chunking [32] | Breaking content into smaller, manageable units with related items grouped together. | Enhances information processing and recall by organizing related parameters or steps. |

| Progressive Disclosure [33] [34] | Revealing information or functionality gradually, only when needed or requested. | Prevents overwhelm by showing only essential information first, deferring advanced options. |

| Clear Visual Hierarchy [32] [16] | Using size, weight, spacing, and contrast to guide attention to the most important elements. | Clarifies relationships between protocol components and establishes logical flow. |

| Logical Structure [16] | Organizing content in a predictable, logical sequence that aligns with the user's mental model. | Creates a clear path to completion, minimizing context switching and confusion. |

Practical Implementation: A Technical Guide

Chunking Information Effectively

- Group Related Parameters: Cluster all related settings or reagents together. For instance, in a PCR protocol, group "Thermocycler Conditions" separately from "Reaction Mix Components" [32] [16].

- Use Descriptive Headings: Each chunk should have a clear, descriptive heading that acts as a signpost for the content within, helping researchers quickly locate the section they need [16].

- Implement Visual Grouping: Use spacing, subtle borders, or background shading to visually reinforce which elements belong together, applying the Gestalt principle of common region [16].

Applying Progressive Disclosure

- Default to Core Protocol: Present the standard, most frequently used protocol steps and parameters by default.

- Provide Access to Advanced Options: Make expert-level options, troubleshooting parameters, or rarely modified settings available through expandable sections, tabs, or links labeled "Advanced Settings," "Alternative Methods," or "Troubleshooting Parameters" [33] [34].

- Contextual Triggers: Dynamically reveal relevant information based on user input. For example, selecting a specific antibody from a list could automatically reveal its recommended dilution and buffer formulations [34].
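For teams that keep protocols in electronic form, chunking and progressive disclosure can be modeled directly in the data structure. The sketch below is one possible layout, not a prescribed format: the class names, the `render` function, and the example step text are hypothetical. It shows core steps by default, hides advanced options until requested, and always shows safety notes.

```python
from dataclasses import dataclass, field


@dataclass
class ProtocolStep:
    text: str
    safety_note: str = ""                          # always displayed
    advanced: list = field(default_factory=list)   # displayed only on request


@dataclass
class ProtocolChunk:
    heading: str                                   # descriptive signpost for the chunk
    steps: list


def render(chunks, show_advanced=False):
    """Render chunks as text, deferring advanced options unless requested."""
    lines = []
    for chunk in chunks:
        lines.append(chunk.heading)
        for number, step in enumerate(chunk.steps, start=1):
            lines.append(f"  {number}. {step.text}")
            if step.safety_note:
                lines.append(f"     SAFETY: {step.safety_note}")
            if step.advanced and show_advanced:
                lines.extend(f"     [advanced] {item}" for item in step.advanced)
            elif step.advanced:
                lines.append(f"     (+{len(step.advanced)} advanced option(s) hidden)")
    return "\n".join(lines)


# Illustrative content only; replace with your validated protocol text.
protocol = [
    ProtocolChunk("Reaction Mix Components", [
        ProtocolStep("Combine buffer, dNTPs, primers, template, and polymerase.",
                     safety_note="Follow your lab's handling rules for all reagents.",
                     advanced=["Adjust MgCl2 concentration if amplification is weak."]),
    ]),
    ProtocolChunk("Thermocycler Conditions", [
        ProtocolStep("Run the standard cycling program.",
                     advanced=["Use a gradient to optimize annealing temperature."]),
    ]),
]

print(render(protocol))
```

Keeping the safety note outside the `advanced` list enforces in the structure itself that safety-critical information is never hidden behind an expandable section.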

Visual Workflows for Protocol Design

The following diagram illustrates the logical workflow for applying these principles when structuring a complex experimental protocol.

Diagram 1: Protocol Structuring Workflow

This workflow ensures that researchers first encounter a manageable, linear path through the core protocol, with options to dive deeper into complexity only as needed.

Research Reagent Solutions

Proper organization of reagent information is crucial for experimental efficiency and reproducibility. The table below outlines a structured approach to presenting reagent details.

Table 2: Research Reagent Solutions - Organization Framework

| Category | Function | Key Information to Include |

|---|---|---|

| Core Reaction Components | Essential elements for the primary reaction (e.g., enzymes, substrates, buffers). | Concentration, volume, source/Catalog #, storage conditions. |

| Detection Reagents | Substances used to visualize or quantify results (e.g., antibodies, dyes, probes). | Dilution factor, incubation time/temp, compatibility notes. |

| Cell Culture Supplements | Additives for maintaining or differentiating cell lines (e.g., growth factors, serum). | Final concentration, sterile handling instructions, stability. |

| Buffers & Solutions | Liquid media that maintain specific pH or ionic conditions. | pH, molarity, preparation instructions, shelf life. |

Frequently Asked Questions

Q1: How can I prevent "over-chunking," where the protocol becomes fragmented and hard to follow?

A: Maintain a logical, sequential flow even within chunks. Use a clear numbering system and ensure that dependencies between chunks are explicitly stated. Each chunk should represent a distinct phase or module of the experiment. Test your structure with junior researchers to identify points of confusion where the flow feels interrupted [16].

Q2: What is the biggest risk when using progressive disclosure in a research protocol?

A: The primary risk is reducing discoverability. If critical troubleshooting steps or safety warnings are hidden in an "Advanced" section, users might miss them. To mitigate this, use clear, intuitive signifiers for hidden content (like icons or bold labels) and ensure that safety-critical information is always immediately visible, regardless of the user's expertise level [33] [34].

Q3: Our research team has mixed expertise. How do we design a protocol that works for both novices and experts?

A: Implement a multi-layered approach. The top layer presents the simplest, most robust path—the "gold standard" protocol. Use progressive disclosure to give experts access to advanced customization, alternative methods, and parameter justification. For novices, embed contextual help like tooltips that explain the "why" behind certain steps, which can be turned off by experts [33] [34].

Q4: Can these principles be applied effectively in a paper-based lab notebook or protocol document?

A: Yes. Use clear typographic hierarchy (headings, subheadings) and white space to create chunks. Employ numbered lists for sequential steps and bullet points for reagent lists. For progressive disclosure, you can use appendices for detailed calculations, validation data, and alternative methods, with clear cross-references from the main protocol [32] [16].

Leveraging Worked Examples and Templates for Common Experimental Designs

The design and execution of complex experiments are fundamental to progress in drug development and scientific research. However, the significant cognitive demands of this process can impede efficiency and innovation. Cognitive load theory provides a framework for understanding these challenges, positing that an individual's working memory is limited in both capacity and duration [3]. When this capacity is exceeded by the intrinsic complexity of a task or by extraneous, poorly presented information, learning and performance suffer [17] [3].

This technical support center is designed to mitigate these challenges by applying the worked-example effect, a key principle of cognitive load theory. A "worked example" is a step-by-step demonstration of how to perform a task or solve a problem, which provides an expert mental model for novices [35]. Presenting information in this format reduces extraneous cognitive load by scaffolding the initial learning process, freeing up mental resources for deeper understanding and application [35] [3]. The following guides and FAQs provide these structured resources to help you navigate common experimental designs more effectively.

Worked Example: A Screening Design for a Pharmaceutical Process

This section provides a worked example of a fractional factorial design used to screen critical process parameters.

Objective and Background

A researcher aims to identify which input factors significantly influence the percent yield of pellets produced via an extrusion-spheronization process. The goal is to minimize experiments while maximizing information on the main effects of five factors [36].

Experimental Protocol and Design

Step 1: Define the Objective and Experimental Domain

Based on prior knowledge, five factors were selected for investigation, each tested at a lower and upper level [36].

Step 2: Select the Experimental Design

A fractional factorial design (a 2^(5-2) design) was chosen. This design consists of 8 unique experimental runs, which is one-fourth of a full factorial design (32 runs). It is a resolution III design, primarily used to estimate main effects while assuming two-factor interactions are negligible [36].
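A design of this kind can be generated programmatically rather than assembled by hand. The sketch below builds a full factorial in three factors and derives the remaining two from generators. The generators D = AB and E = AC are not stated in the text; they are assumed here because they reproduce the factor levels listed in the experimental plan for this study. The dictionary keys and function names are illustrative.

```python
import random
from itertools import product

# Low/high settings as they appear in the experimental plan for this study.
LEVELS = {
    "binder_pct": (1.0, 1.5),
    "granulation_water_pct": (30, 40),
    "granulation_time_min": (3, 5),
    "spheronization_speed_rpm": (500, 900),
    "spheronization_time_min": (4, 8),
}


def fractional_factorial_2_5_2():
    """Return the 8 coded runs (-1/+1) of a 2^(5-2) design in standard order."""
    runs = []
    # product() varies its last element fastest, so unpack as (c, b, a)
    # to get the usual standard order in which factor A alternates first.
    for c, b, a in product((-1, 1), repeat=3):
        d = a * b  # assumed generator D = AB
        e = a * c  # assumed generator E = AC
        runs.append((a, b, c, d, e))
    return runs


def decode(run):
    """Translate a coded run into actual factor settings."""
    return tuple(levels[(x + 1) // 2] for x, levels in zip(run, LEVELS.values()))


coded = fractional_factorial_2_5_2()
design = [decode(run) for run in coded]

# Randomize the execution order. The seed is arbitrary and only makes the
# shuffle repeatable for this illustration.
rng = random.Random(0)
execution_order = rng.sample(range(1, len(design) + 1), k=len(design))

for std_order, settings in enumerate(design, start=1):
    print(std_order, settings)
print("execution order (standard run numbers):", execution_order)
```

Because D and E are products of other columns, their main effects are aliased with the AB and AC interactions. This is why a resolution III design relies on the assumption that two-factor interactions are negligible.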

Step 3: Randomize and Execute Runs

The experimental runs are performed in a randomized order to avoid systematic bias. The standard run order is used for analysis [36]. The design and results are shown in the table below.

Table 1: Experimental Plan and Results for the Extrusion-Spheronization Study [36]

| Actual Run Order | Standard Run Order | Binder (%) | Granulation Water (%) | Granulation Time (min) | Spheronization Speed (RPM) | Spheronization Time (min) | Yield (%) |

|---|---|---|---|---|---|---|---|

| 1 | 7 | 1.0 | 40 | 5 | 500 | 4 | 79.2 |

| 2 | 4 | 1.5 | 40 | 3 | 900 | 4 | 78.4 |

| 3 | 5 | 1.0 | 30 | 5 | 900 | 4 | 63.4 |

| 4 | 2 | 1.5 | 30 | 3 | 500 | 4 | 81.3 |

| 5 | 3 | 1.0 | 40 | 3 | 500 | 8 | 72.3 |

| 6 | 1 | 1.0 | 30 | 3 | 900 | 8 | 52.4 |

| 7 | 8 | 1.5 | 40 | 5 | 900 | 8 | 72.6 |

| 8 | 6 | 1.5 | 30 | 5 | 500 | 8 | 74.8 |

Step 4: Perform Statistical Analysis

Statistical analysis of the data identifies the magnitude and significance of each factor's effect on the yield. The percentage contribution of each factor to the total variation can be used to determine significance [36].

Table 2: Statistical Analysis of Factor Effects on Pellet Yield [36]

| Source of Variation | Sum of Squares (SS) | Percentage Contribution (%) | Interpretation |

|---|---|---|---|

| A: Binder | 198.005 | 30.68% | Significant |

| B: Granulation Water | 117.045 | 18.14% | Significant |

| C: Granulation Time | 3.92 | 0.61% | Not Significant |

| D: Spheronization Speed | 208.08 | 32.24% | Significant |

| E: Spheronization Time | 114.005 | 17.66% | Significant |

| Error | 4.325 | 0.67% | - |

| Total | 645.38 | 100.00% | - |
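The sums of squares and percentage contributions in this table can be recomputed from the eight yields in the experimental plan. The sketch below uses the standard formula for a balanced two-level design, SS = (contrast)^2 / N, where the contrast is the sum of yields at the high level minus the sum at the low level. It takes the error term as the variation not explained by the five main effects. Variable and function names are illustrative.

```python
# Each row: (binder %, water %, gran. time min, speed RPM, spher. time min, yield %)
RUNS = [
    (1.0, 40, 5, 500, 4, 79.2),
    (1.5, 40, 3, 900, 4, 78.4),
    (1.0, 30, 5, 900, 4, 63.4),
    (1.5, 30, 3, 500, 4, 81.3),
    (1.0, 40, 3, 500, 8, 72.3),
    (1.0, 30, 3, 900, 8, 52.4),
    (1.5, 40, 5, 900, 8, 72.6),
    (1.5, 30, 5, 500, 8, 74.8),
]
FACTORS = ["A: Binder", "B: Granulation Water", "C: Granulation Time",
           "D: Spheronization Speed", "E: Spheronization Time"]


def sums_of_squares(runs):
    """Main-effect, error, and total sums of squares for a two-level design."""
    n = len(runs)
    yields = [r[-1] for r in runs]
    grand_mean = sum(yields) / n
    ss = {}
    for i, name in enumerate(FACTORS):
        high = max(r[i] for r in runs)
        # Contrast = (sum of yields at high level) - (sum at low level).
        contrast = sum(r[-1] if r[i] == high else -r[-1] for r in runs)
        ss[name] = contrast ** 2 / n
    ss["Total"] = sum((y - grand_mean) ** 2 for y in yields)
    # Whatever the five main effects do not explain is treated as error.
    ss["Error"] = ss["Total"] - sum(ss[name] for name in FACTORS)
    return ss


ss = sums_of_squares(RUNS)
for name in FACTORS + ["Error", "Total"]:
    share = 100 * ss[name] / ss["Total"]
    print(f"{name:<26} SS = {ss[name]:8.3f}  ({share:6.2f}%)")
```

With 8 runs and 5 main effects, only 2 degrees of freedom remain for the error term, so it is a coarse noise estimate. Replicated runs give a more reliable basis for formal significance tests.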

Workflow Diagram

The following diagram visualizes the high-level workflow for this screening design, from objective setting to conclusion.

Frequently Asked Questions (FAQs) and Troubleshooting

Q1: Why should I use a designed experiment instead of changing "One Factor at a Time" (OFAT)?

A: The OFAT approach is inefficient and, critically, it cannot detect interactions between factors. Design of Experiments (DOE) takes all input variables into account simultaneously, systematically, and efficiently. This allows you to understand not just the effect of each single variable, but also how variables interact with each other, providing a more complete and accurate model of your process [36]. This structured approach also reduces extraneous cognitive load by providing a clear, optimized path for investigation, unlike the ad-hoc and often confusing OFAT method.

Q2: I am new to DOE. What type of design should I start with for screening important factors?

A: For initial screening when you have many factors (e.g., 5 or more), a Fractional Factorial Design or a Plackett-Burman Design is recommended. These designs use a minimal number of experimental runs to identify the "vital few" factors from the "trivial many." They are efficient and prevent the cognitive overload that would come from attempting a prohibitively large full factorial design at this early stage [36].

Q3: My experimental results show a lot of noise. How can I be sure the effects I see are real?

A: Incorporating replication (repeating experimental runs) in your design is key. Replication allows you to estimate the inherent variability (noise) in your system. With this estimate, you can perform statistical significance tests (e.g., ANOVA) to distinguish between the signal (the real effect of a factor) and the background noise. This moves your decision-making from guesswork to a statistically sound foundation.

Q4: How does a worked example reduce cognitive load for me as a researcher?

A: Worked examples act as a scaffold for skill acquisition. By studying a step-by-step solution, you are not burdened by the extraneous cognitive load of simultaneously figuring out the methodological approach and executing it. This frees up your working memory resources to focus on understanding the underlying principles and logic of the experimental design, leading to deeper and more effective learning [35] [3]. As your expertise grows, worked examples become less helpful and can even get in the way, a phenomenon known as the expertise reversal effect [35]. At that point, you can rely less on them.

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Pharmaceutical Process Development

| Item | Function / Explanation |

|---|---|

| Active Pharmaceutical Ingredient (API) | The biologically active component of the drug product. Its physical and chemical properties (e.g., particle size, solubility) are critical factors in formulation development. |

| Binders (e.g., PVP, HPMC) | Polymers used to promote powder adhesion and cohesion during granulation, essential for forming granules with the desired mechanical strength. The percentage used is a key process variable [36]. |

| Granulation Liquid (e.g., Water, Ethanol) | The solvent used to facilitate the formation of granules. The volume and type of liquid significantly impact granule density, size, and porosity [36]. |

| Spheronizer | Equipment used to round extruded material into spherical pellets. The speed and time of operation are critical process parameters affecting pellet size and uniformity [36]. |

| Extruder | Equipment used to force the wetted powder mass through a die to form cylindrical strands, a precursor to spheronization. |

| Excipients (e.g., Fillers, Disintegrants) | Inactive ingredients that constitute the bulk of the dosage form. They are critical for achieving target product profile attributes like stability, dissolution, and manufacturability. |

Visualizing a Full Experimental Workflow

For a more complex optimization design following a successful screening, the workflow expands to model interactions and find optimal settings.

Frequently Asked Questions (FAQs)

FAQ 1: What is the most significant usability mistake in form design and how can we avoid it?

A common critical error is overloading users with too many choices and unnecessary questions, which directly increases cognitive load and leads to errors or form abandonment [37]. This can be avoided by implementing a "lean" design philosophy.

- Solution: Collect only the data that is essential to support your study objectives and protocol [38]. Eliminate duplication and minimize free-text fields, using pre-defined options or multiple-choice questions whenever possible [39] [38]. This approach reduces the mental effort required to interpret questions and formulate responses.

FAQ 2: How can we make long forms less intimidating for clinical staff?

Long forms can be made manageable by applying structural principles that create a clear path to completion.

- Solution: Group related fields into logical sections with clear, descriptive headings [16] [40]. This allows users to focus on one information category at a time. Additionally, using a single-column layout provides a clear, vertical path that is easy to follow, unlike multi-column layouts which can cause confusion about the sequence of fields [16]. For very long forms, consider a multi-page design with a progress indicator to show users how much they have completed and how much remains [16].

FAQ 3: Our site personnel often misinterpret questions. How can we improve clarity?

Ambiguity in questions forces users to spend mental energy on interpretation, increasing cognitive load and the risk of inaccurate data [16].

- Solution: Use plain language that is immediately understandable, avoiding technical jargon and internal company terminology [16]. Provide clear context and examples for fields that require specific input. For instance, instead of just asking for a "Reference ID," instruct users to enter the "16-digit code found on the top-right of your receipt" [16]. Providing a CRF completion manual to site personnel also promotes accurate data entry [40].

FAQ 4: What are the concrete benefits of electronic CRFs (eCRFs) over paper?

The transition from paper to electronic CRFs (eCRFs) offers significant advantages in data quality and efficiency, primarily by reducing opportunities for error.

- Solution: eCRFs facilitate real-time data entry and oversight, with built-in edit checks that catch discrepancies immediately [41] [40]. Quantitative data demonstrates their superiority:

| Metric | Paper CRFs | Electronic CRFs (eCRFs) | Source |
|---|---|---|---|
| Average Completion Time | ~10.54 minutes | ~8.29 minutes | [41] |
| Data Entry Error Rate | ~5% | Reduced to near 0% | [41] |
| Data Transcription | Manual transfer, error-prone | Automated, reduces errors | [39] [40] |
| Query Resolution | Slower, manual processes | Instant, online management | [39] [40] |
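As a quick arithmetic check on the completion-time row, the short sketch below converts the two reported averages into an absolute and a relative saving. The variable names are illustrative; the two figures are the ones cited from [41].

```python
# Average completion times per form, in minutes, as reported in the table [41].
paper_minutes = 10.54
ecrf_minutes = 8.29

# Absolute saving per form, and the saving relative to the paper baseline.
absolute_saving = paper_minutes - ecrf_minutes
relative_saving_pct = 100 * absolute_saving / paper_minutes

print(f"Saved per form: {absolute_saving:.2f} min "
      f"({relative_saving_pct:.1f}% of the paper completion time)")
```

On these figures the saving is a little over two minutes per form, or roughly a fifth of the paper completion time. How that scales across a study depends on the number of forms and visits, which the source does not report.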

FAQ 5: How can form design itself help prevent data entry errors?

Proactive form design can guide users toward correct entries and prevent common mistakes.

- Solution: Implement field validation and set clear boundaries for data entry [39]. This includes specifying units of measurement (e.g., "kg" or "lbs"), the number of decimal places, and standardized date formats (e.g., DD/MM/YYYY) directly on the form [40]. For electronic forms, use mandatory settings for critical fields, but always provide options for "not available" or "not done" to accommodate real-world scenarios where data is missing [39].
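The checks described above can be sketched in a few lines of Python. This is a minimal illustration, not the validation API of any particular EDC system: the `NumericField` class, the `validate_numeric` and `validate_date` helpers, the reason codes, and the 20 to 300 kg range are all assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime
from decimal import Decimal, InvalidOperation
from typing import List, Optional

# Hypothetical reason codes that allow a mandatory field to be saved without a value.
MISSING_REASONS = {"NOT_AVAILABLE", "NOT_DONE"}


@dataclass
class NumericField:
    """Illustrative spec for one numeric CRF field (not a real EDC API)."""
    name: str
    unit: str          # displayed beside the input, e.g. "kg"
    decimals: int      # maximum number of decimal places accepted
    minimum: Decimal
    maximum: Decimal
    mandatory: bool = True


def validate_numeric(spec: NumericField, raw: str,
                     missing_reason: Optional[str] = None) -> List[str]:
    """Return a list of problems; an empty list means the entry is accepted."""
    raw = raw.strip()
    if not raw:
        # A blank mandatory field is acceptable only with an explicit reason.
        if not spec.mandatory or missing_reason in MISSING_REASONS:
            return []
        return [f"{spec.name}: enter a value or select 'not available' / 'not done'."]
    try:
        value = Decimal(raw)
    except InvalidOperation:
        return [f"{spec.name}: enter a number in {spec.unit}."]
    if not value.is_finite():  # rejects "NaN" and "Infinity"
        return [f"{spec.name}: enter a number in {spec.unit}."]
    problems = []
    if -value.as_tuple().exponent > spec.decimals:
        problems.append(f"{spec.name}: use at most {spec.decimals} decimal place(s).")
    if not spec.minimum <= value <= spec.maximum:
        problems.append(f"{spec.name}: expected {spec.minimum} to {spec.maximum} {spec.unit}.")
    return problems


def validate_date(raw: str, fmt: str = "%d/%m/%Y") -> List[str]:
    """Check a date against one fixed format (DD/MM/YYYY by default)."""
    try:
        datetime.strptime(raw.strip(), fmt)
    except ValueError:
        return ["Date: use the format DD/MM/YYYY."]
    return []


# Example field; the plausible range is an assumption for illustration only.
weight = NumericField("Weight", "kg", 1, Decimal("20"), Decimal("300"))
print(validate_numeric(weight, "72.55"))
print(validate_numeric(weight, "", missing_reason="NOT_DONE"))
```

Returning messages rather than raising exceptions lets the form show every problem next to the field at once, so the user does not have to discover errors one submission at a time.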

Troubleshooting Guides

Problem: High form abandonment or frequent incomplete submissions.

- Potential Cause: Excessive cognitive load caused by a disorganized structure, unclear requirements, or a form that appears too long [16] [37].

- Solution:

  - Restructure the Form: Apply the principles of Structure and Transparency. Group related fields and use descriptive section headings. Ensure all required fields are clearly marked, and communicate any prerequisites (e.g., "please have your subject's medical history available") before the user begins [16].

  - Implement Progressive Disclosure: Break down complex forms into multiple pages or use "wizard" patterns that dynamically display relevant fields based on prior answers. This presents users with only what's needed at each step [16].
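Progressive disclosure can be expressed as a visibility rule attached to each field. The sketch below is one possible way to model it, under the assumption of a hypothetical adverse-event page; the field names and the `visible_fields` helper are invented for illustration and do not come from any specific eCRF product.

```python
from typing import Callable, Dict, List, NamedTuple

Answers = Dict[str, str]


class Field(NamedTuple):
    """One form field plus the rule that decides when it is shown (illustrative)."""
    name: str
    show_if: Callable[[Answers], bool]


def always(_: Answers) -> bool:
    return True


def had_event(a: Answers) -> bool:
    return a.get("any_adverse_event") == "Yes"


# Hypothetical adverse-event page: follow-up fields appear only when relevant.
AE_PAGE: List[Field] = [
    Field("any_adverse_event", always),
    Field("ae_term", had_event),
    Field("ae_severity", had_event),
    Field("ae_serious", had_event),
    Field("hospitalization_date",
          lambda a: had_event(a) and a.get("ae_serious") == "Yes"),
]


def visible_fields(page: List[Field], answers: Answers) -> List[str]:
    """Return the names of the fields to display for the answers given so far."""
    return [f.name for f in page if f.show_if(answers)]


print(visible_fields(AE_PAGE, {}))
print(visible_fields(AE_PAGE, {"any_adverse_event": "Yes", "ae_serious": "Yes"}))
```

Because the hospitalization rule re-checks the parent answer, changing "any adverse event" back to "No" hides every dependent field, even if a stale "serious" answer is still stored.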

Problem: High rate of data queries and inconsistencies in responses.

- Potential Cause: Lack of clarity and support within the form, leading to ambiguous interpretations [16] [40].

- Solution:

  - Eliminate Ambiguity: Use positive wording and avoid double-barreled questions that ask about two things at once [16]. Replace free-text fields with controlled terminology and pre-coded answer sets (e.g., Mild/Moderate/Severe) wherever possible [38] [40].

  - Provide In-Form Guidance: Use help text, tooltips, and clear examples directly within the form interface. A CRF completion guideline document is also recommended to ensure all site personnel follow the same standards [38].
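A pre-coded answer set can be modeled as an enumeration, so that only the listed options are ever stored. The sketch below is a minimal illustration: the numeric codes and the small synonym table are assumptions for the example and are not taken from a published codelist or dictionary.

```python
from enum import Enum
from typing import Optional


class Severity(Enum):
    """Pre-coded answer set; the numeric codes are illustrative."""
    MILD = 1
    MODERATE = 2
    SEVERE = 3


# Hypothetical synonym table mapping legacy free-text entries to one preferred term.
PREFERRED_TERMS = {
    "heart attack": "Myocardial infarction",
    "mi": "Myocardial infarction",
    "myocardial infarction": "Myocardial infarction",
}


def parse_severity(label: str) -> Severity:
    """Accept only labels from the answer set; anything else raises ValueError."""
    try:
        return Severity[label.strip().upper()]
    except KeyError:
        allowed = ", ".join(s.name.title() for s in Severity)
        raise ValueError(f"Severity must be one of: {allowed}") from None


def to_preferred_term(free_text: str) -> Optional[str]:
    """Return the preferred term, or None so the entry can go to manual coding."""
    normalized = " ".join(free_text.lower().split())
    return PREFERRED_TERMS.get(normalized)


print(parse_severity(" moderate "))
print(to_preferred_term("Heart  Attack"))
```

Unmapped free text returns `None` instead of a guess, which keeps ambiguous entries visible for a human coder rather than silently misclassifying them.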

Problem: Slow data entry times and user frustration.

- Potential Cause: Inefficient layout and physical effort required, which contributes to mental fatigue [16] [42].

- Solution:

  - Optimize Layout and Flow: Use a single-column layout and ensure a logical tab order. Place easy, familiar questions first to build user momentum [16].

  - Automate and Simplify: Use features like auto-population to fill in repetitive data (e.g., subject ID). In eCRFs, the system can automatically generate protocol ID and site code on all pages, eliminating manual duplication [39] [40].
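Auto-population amounts to entering the shared identifiers once and copying them into every page header. The following sketch shows the idea with hypothetical `StudyContext` and `Page` classes and made-up identifier values; a real EDC system would handle this internally.

```python
from dataclasses import asdict, dataclass, field
from typing import Dict, List


@dataclass(frozen=True)
class StudyContext:
    """Identifiers entered once per subject (names and values are illustrative)."""
    protocol_id: str
    site_code: str
    subject_id: str


@dataclass
class Page:
    title: str
    header: Dict[str, str] = field(default_factory=dict)
    answers: Dict[str, str] = field(default_factory=dict)


def build_casebook(context: StudyContext, page_titles: List[str]) -> List[Page]:
    """Create every page with its header already filled in from the shared context."""
    # asdict() returns a fresh dict on each call, so pages never share a header object.
    return [Page(title=t, header=asdict(context)) for t in page_titles]


ctx = StudyContext(protocol_id="PROT-001", site_code="S12", subject_id="S12-0042")
for page in build_casebook(ctx, ["Demographics", "Vital Signs", "Adverse Events"]):
    print(page.title, page.header)
```

Making the context immutable (`frozen=True`) reflects the intent that these identifiers are set once and never retyped by site staff.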

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources for designing and implementing effective data collection forms.

| Item | Function |
|---|---|
| Standardized CRF Templates | A library of pre-designed, protocol-driven form modules (e.g., for demographics, vital signs, adverse events) ensures consistency across studies, accelerates study build times, and facilitates data comparison and reuse [38] [40]. |
| Electronic Data Capture (EDC) System | A software platform for creating and managing eCRFs. It provides functionalities like built-in edit checks, real-time discrepancy management, automated audit trails, and remote access for researchers, significantly enhancing data integrity and trial efficiency [41] [39]. |
| Controlled Terminologies (e.g., NCI Thesaurus, SNOMED CT) | Standardized sets of terms and codes for clinical concepts. Using these in answer sets ensures data is collected consistently (e.g., using "Myocardial infarction" instead of various spellings of "heart attack"), which simplifies data analysis and mapping to standards like SDTM [38] [43]. |
| CRF Completion Guideline Document | A companion document that provides detailed instructions for site personnel on how to complete each field of the CRF. This promotes accurate and consistent data entry across different sites and users, reducing query rates [38] [40]. |
| CDISC Standards (CDASH, SDTM) | Foundational standards for clinical research data. The Clinical Data Acquisition Standards Harmonization (CDASH) model provides recommendations for structuring data collection fields, while the Study Data Tabulation Model (SDTM) defines a standardized format for submitting data to regulators [43]. |

Experimental Protocol: Applying Cognitive Load Principles to CRF Design

Objective: To systematically (re)design a Case Report Form (CRF) that minimizes cognitive load for clinical site personnel, thereby improving data quality, completeness, and entry efficiency.

Methodology:

Protocol Analysis & Objective Alignment:

- Begin by thoroughly reviewing the study protocol to identify all primary and secondary endpoints, safety assessments, and schedule of activities.

- Create a master list of essential data points required to meet these objectives. Crucially, eliminate any data points that are "nice to have" but not strictly necessary [38].
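One way to make the "essential versus nice to have" decision explicit is to tag each candidate data point with the protocol objectives it supports and drop anything with no tag. The sketch below assumes a hypothetical candidate list; the data point names and objective labels are invented for illustration.

```python
from typing import Dict, List, Tuple

# Hypothetical candidates, each tagged with the protocol objectives it supports.
CANDIDATES: Dict[str, List[str]] = {
    "systolic_bp": ["primary_endpoint"],
    "adverse_event_term": ["safety"],
    "visit_date": ["schedule_of_activities"],
    "preferred_exercise": [],  # "nice to have": supports no stated objective
}


def split_essential(candidates: Dict[str, List[str]]) -> Tuple[List[str], List[str]]:
    """Return (kept, dropped): data points with and without a supporting objective."""
    kept = [name for name, objectives in candidates.items() if objectives]
    dropped = [name for name, objectives in candidates.items() if not objectives]
    return kept, dropped


kept, dropped = split_essential(CANDIDATES)
print("Collect:", kept)
print("Remove from CRF:", dropped)
```

Keeping the dropped list, rather than silently discarding it, gives the study team a record to review before the form is finalized.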

Information Structuring & Grouping:

- Organize the identified data points into logical categories (e.g., Demographics, Medical History, Vital Signs, Efficacy Endpoints, Adverse Events).

- Within each category, order questions to mirror the clinical workflow and sequence of source documents. Start with simple, familiar questions to build momentum [16].

Form Layout & Field Design:

- Implement a single-column layout for all form sections to create a clear, linear path for completion [16].

- Design fields to minimize user effort and decision-making:

  - Use pre-coded answer sets (radio buttons, checkboxes) instead of free text [39] [38].

  - Specify units of measurement and data formats (e.g., DD/MMM/YYYY) explicitly [40].

  - Set sensible field limits (e.g., character limits, numeric ranges) [39].

  - Use mandatory field indicators and mark optional fields clearly [16].

Guidance & Support Integration:

- Embed clear, concise instructions and examples directly within the form interface.

- For eCRFs, implement hover-over tooltips to provide additional context for complex fields without cluttering the main view [38].

Iterative Usability Testing:

- Prototype the new design and test it with a small group of actual clinical research coordinators (end-users).

- Observe where they hesitate, make errors, or express confusion.

- Collect feedback and use it to refine the form in an iterative cycle before full-scale deployment [41].

The diagram below visualizes this workflow and its grounding in cognitive load theory.

Diagram: CRF Design Workflow and Cognitive Load Reduction Principles

Frequently Asked Questions (FAQs)

Q1: Why should I provide a text version of a complex flowchart?

A: A text version makes the information accessible to users of assistive technologies and can often simplify the content. For complex flowcharts with extensive branching, a nested list or a structured text description can be more effective than a purely visual representation [44]. Providing both visual and text versions caters to different user preferences and needs [44].

Q2: How do I ensure users can distinguish between elements in my diagram?

A: Use a color palette where the colors are visually equidistant. This makes it easier for users to tell colors apart in the chart and cross-reference them with the key. Using a range of hues (e.g., yellow, orange, blue) is often more effective than different shades of the same hue [45].

Q3: What is the minimum color contrast required for text in diagrams?

A: To meet enhanced accessibility standards (WCAG Level AAA), the contrast ratio between text and its background should be at least 7:1 for standard text, and at least 4.5:1 for large-scale text (approximately 18pt or 14pt bold) [46]. This ensures readability for users with low vision.

Q4: My diagram is visually cluttered. How can I simplify it?

A: Consider breaking one complex diagram into multiple, simpler diagrams. Before designing, determine how many layers need to be presented and decide if your readers need to understand the overall structure or the detail of each level [44].

Q5: How should I write alternative text (alt text) for a flowchart?

A: For a complete flowchart, provide a single alt text that describes the chart's purpose and relationships. Think about how you would explain the chart over the phone. For example: "Flow Chart of X. Text details found in the following section" [44].
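The nested-list text version and the short alt text can both be generated from the same description of the flowchart. The sketch below assumes the chart is stored as a simple adjacency list without cycles; the screening example, `to_nested_list`, and `alt_text` are hypothetical names used only for illustration.

```python
from typing import Dict, List

# Hypothetical flowchart stored as an adjacency list: each step maps to its next steps.
FLOW: Dict[str, List[str]] = {
    "Screening visit": ["Eligible?"],
    "Eligible?": ["Yes: randomize", "No: record screen failure"],
    "Yes: randomize": ["Baseline assessments"],
    "No: record screen failure": [],
    "Baseline assessments": [],
}


def to_nested_list(flow: Dict[str, List[str]], start: str, depth: int = 0) -> List[str]:
    """Depth-first walk that emits one indented bullet per step (assumes no cycles)."""
    lines = [f"{'  ' * depth}- {start}"]
    for next_step in flow.get(start, []):
        lines.extend(to_nested_list(flow, next_step, depth + 1))
    return lines


def alt_text(title: str) -> str:
    """Short alt text that points readers to the full text version."""
    return f"Flow chart of {title}. Text details found in the following section."


print(alt_text("subject screening"))
print("\n".join(to_nested_list(FLOW, "Screening visit")))
```

Generating both outputs from one data structure keeps the text version in step with the diagram whenever the workflow changes. A flowchart with loops would need cycle detection before this walk is safe.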

Troubleshooting Guides

Problem 1: Poor Color Contrast in Diagram Elements

Symptoms: Users report that text is difficult to read or that they cannot distinguish between different colored elements.

| Diagnosis Step | Action |
|---|---|
| Check Contrast | Use a color contrast analyzer to verify ratios. |
| Test Color Palette | Ensure your palette has visually equidistant colors [45]. |
| Review Color Usage | Avoid using color as the sole means of conveying information; supplement with shapes or patterns [44]. |

Resolution:

- For text within nodes, explicitly set the fontcolor to have high contrast against the node's fillcolor. The provided color palette includes high-contrast pairs like #202124 on #FBBC05 [46].

- For connecting lines (edges) and symbols, ensure their colors stand out clearly against the background color of the canvas.

- Refer to the Color Contrast Standards Table below for specific compliance targets.
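If a contrast analyzer tool is not at hand, the ratio can be computed directly. The sketch below follows the relative-luminance and contrast-ratio definitions published in WCAG 2.x, using the 0.03928 linearization threshold from that text; the function names are illustrative, and the AAA thresholds are the 7:1 and 4.5:1 values quoted in this article.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio, from 1 (identical colors) up to 21 (black on white)."""
    l1, l2 = relative_luminance(foreground), relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def meets_aaa(foreground: str, background: str, large_text: bool = False) -> bool:
    """Check against the AAA thresholds: 7:1 for standard text, 4.5:1 for large text."""
    return contrast_ratio(foreground, background) >= (4.5 if large_text else 7.0)


# The font/fill pair mentioned in the resolution steps above.
print(round(contrast_ratio("#202124", "#FBBC05"), 2))
print(meets_aaa("#202124", "#FBBC05"))
```

The ratio is symmetric, so it does not matter which color is passed as foreground; the function always divides the lighter luminance by the darker one.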

Problem 2: Inaccessible or Unnavigable Complex Diagrams

Symptoms: The diagram is difficult to understand for users relying on assistive technologies, or keyboard users cannot interact with it.

| Diagnosis Step | Action |
|---|---|
| Check Reading Order | If using multiple image elements, see if they are read in a logical sequence. |
| Test Keyboard Nav. | Try to select and interact with all elements using only the keyboard [47]. |
| Evaluate Complexity | Determine if the diagram has too many branching paths or layers [44]. |

Resolution:

- Simplify the Visual: Break down overly complex diagrams into multiple, simpler ones focused on specific sub-processes [44].