Cognitive Terminology in Cross-Journal Analysis: Trends, Applications, and Validation in Biomedical Research

Abstract

This comprehensive analysis examines cognitive terminology usage across diverse scientific journals, tracing its evolution from theoretical foundations to practical applications in biomedical and clinical research. The article explores the historical rise of cognitive terminology in comparative psychology, its critical role in drug development and safety assessment, and methodological challenges in ensuring valid measurement across different research contexts. By comparing terminology applications across disciplinary boundaries, we provide researchers, scientists, and drug development professionals with frameworks for optimizing cognitive assessment selection, addressing ecological validity concerns, and implementing robust validation strategies. The synthesis offers practical guidance for enhancing cognitive terminology precision in clinical trials, behavioral intervention development, and cross-disciplinary research collaboration.

The Cognitive Revolution: Tracing Terminology Shifts Across Scientific Disciplines

Historical Analysis of Cognitive Creep in Scientific Literature

Cognitive creep refers to the progressive increase in the use of mentalist or cognitive terminology in scientific domains that were traditionally behaviorally oriented. This phenomenon represents a noteworthy shift in scientific discourse, particularly within comparative psychology, where behaviorist approaches once dominated methodological frameworks. The term "cognitive creep" was operationalized in a landmark study of terminology shifts in comparative psychology journals, which examined 8,572 article titles containing more than 100,000 words published between 1940 and 2010 [1]. This analysis demonstrated a systematic increase in cognitive word usage that coincided with a relative decrease in behavioral terminology, highlighting a fundamental transition in how researchers conceptualize and describe psychological processes in animal subjects.

This linguistic shift carries significant implications for how research questions are framed, how findings are interpreted, and ultimately, how mental processes in non-human species are understood. The historical tension between behaviorist and cognitivist approaches forms the essential context for understanding cognitive creep. Behaviorism, as defined by the Stanford Encyclopedia of Philosophy, maintains three core tenets: psychology is the study of behavior (not mind), external environmental causes should be used to predict behavior, and mentalist terminology has no place in research and theory [1]. In contrast, cognitivism explicitly employs terms referencing internal mental states, processes, and representations. The increasing prevalence of cognitive terminology in comparative psychology titles suggests a paradigm shift toward cognitivist approaches in a field that behaviorists once claimed as predominantly their domain.

Comparative Analysis of Terminology Shifts Across Journals

Methodology for Tracking Terminology Changes

The primary methodology for quantifying cognitive creep involves systematic content analysis of journal article titles across extended time periods. The foundational study in this area employed two complementary approaches: computerized word searches for specific terminology categories and emotional connotation analysis using the Dictionary of Affect in Language (DAL) [1]. The research design incorporated longitudinal analysis of three major comparative psychology journals: Journal of Comparative Psychology (JCP, 1940-2010, 71 volume-years), International Journal of Comparative Psychology (IJCP, 2000-2010, 11 volume-years), and Journal of Experimental Psychology: Animal Behavior Processes (JEP, 1975-2010, 36 volume-years) [1].

Table 1: Operational Definitions for Cognitive Terminology Analysis

| Category | Definition | Examples |
|---|---|---|
| Cognitive Words | Words referring to mental processes, emotions, or brain/mind processes | memory, metacognition, affect, awareness, concept formation, executive function [1] |
| Behavioral Words | All words including the root "behav" | behavior, behavioural, behaviors [1] |
| Cognitive Phrases | Specific multi-word terms with cognitive implications | cognitive maps, decision making, information processing, problem solving, spatial learning [1] |
| Emotional Connotations | DAL ratings based on participant evaluations of words | Pleasantness, Activation, Concreteness scales [1] |

The identification of cognitive terminology was explicitly operationalized through a predefined word list that included both specific words (e.g., "memory," "emotion," "cognition," "attention," "concept") and phrases (e.g., "cognitive maps," "decision making," "information processing") [1]. This methodological rigor ensures replicability and objectivity in tracking terminology changes across publications and over time. The DAL provided an additional quantitative measure by scoring words on three dimensions: Pleasantness, Activation, and Concreteness, offering insights into the emotional qualities associated with shifting terminology patterns [1].
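The matching rules described above can be sketched in a few lines of Python. This is a minimal illustration, not the program used in the cited study: the word and phrase lists are small subsets taken from the examples in the text, and the function name `count_terms`, the tokenizer, and the decision to count each phrase occurrence as one cognitive match are all assumptions.

```python
import re

# Illustrative subsets only; the study's full predefined lists are not reproduced here.
COGNITIVE_WORDS = {"memory", "emotion", "cognition", "attention", "concept"}
COGNITIVE_PHRASES = ["cognitive maps", "decision making", "information processing"]

# Any word containing the root "behav" (behavior, behavioural, behaviors, ...).
BEHAV_ROOT = re.compile(r"\w*behav\w*")
# Lowercase alphabetic tokens, keeping hyphenated compounds together.
TOKEN = re.compile(r"[a-z]+(?:-[a-z]+)*")


def count_terms(title: str) -> dict:
    """Count words, cognitive matches, and behavioral matches in one title."""
    text = title.lower()
    tokens = TOKEN.findall(text)
    # Treat "decision-making" and "decision making" alike when matching phrases.
    normalized = " ".join(tokens).replace("-", " ")
    cognitive = sum(1 for tok in tokens if tok in COGNITIVE_WORDS)
    cognitive += sum(
        len(re.findall(rf"\b{re.escape(phrase)}\b", normalized))
        for phrase in COGNITIVE_PHRASES
    )
    behavioral = len(BEHAV_ROOT.findall(text))
    return {"words": len(tokens), "cognitive": cognitive, "behavioral": behavioral}


# Hypothetical title, used only to exercise the function.
print(count_terms("Spatial memory and decision making in rats"))
# → {'words': 7, 'cognitive': 2, 'behavioral': 0}
```

Keeping the lists as plain data makes the operational definition easy to audit and to swap for a different terminology set.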

Quantitative Evidence of Terminology Shift

The analysis revealed a clear and consistent increase in cognitive terminology across all three journals, with particularly notable shifts occurring in the latter half of the 20th century. The ratio of cognitive to behavioral words rose from 0.33 in 1946-1955 to 1.00 in 2001-2010 [1]. This three-fold increase shows that cognitive terminology, once used about one-third as often as behavioral terminology, reached parity with it in the comparative psychology literature.

Table 2: Cognitive vs. Behavioral Terminology in Psychology Literature (1940-2010)

| Time Period | Cognitive Words (per 10,000) | Behavioral Words (per 10,000) | Cognitive:Behavioral Ratio |
|---|---|---|---|
| 1946-1955 | 2 | 7 | 0.33 [1] |
| 1979-1988 | 22 | 43 | 0.51 [1] |
| 2001-2010 | 12 | 12 | 1.00 [1] |

The data show that both cognitive and behavioral terminology increased from the 1940s-1950s to the 1970s-1980s. Between 1979-1988 and 2001-2010, the rates in Table 2 fell for both categories, but behavioral terms declined far more steeply (from 43 to 12 per 10,000) than cognitive terms (from 22 to 12 per 10,000), resulting in an equal ratio in the 2001-2010 period. This represents a fundamental shift in the dominant paradigm within the field, as cognitive explanations gained prominence alongside or in place of behavioral ones.
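The ratio column can be recomputed directly from the two rate columns of Table 2. The short sketch below does so; the function name is an assumption. Note that the rounded rates for 1946-1955 give roughly 0.29 rather than the reported 0.33, which would be expected if the reported ratio was computed from unrounded frequencies (an assumption, since the source frequencies are not shown here).

```python
# Rates per 10,000, copied from Table 2: period -> (cognitive, behavioral).
TABLE_2 = {
    "1946-1955": (2, 7),
    "1979-1988": (22, 43),
    "2001-2010": (12, 12),
}


def cognitive_behavioral_ratio(cognitive_rate: float, behavioral_rate: float) -> float:
    """Ratio of cognitive to behavioral word rates for one period."""
    if behavioral_rate == 0:
        raise ValueError("ratio is undefined when no behavioral words occur")
    return cognitive_rate / behavioral_rate


for period, (cog, beh) in TABLE_2.items():
    print(f"{period}: {cognitive_behavioral_ratio(cog, beh):.2f}")
# → 1946-1955: 0.29
# → 1979-1988: 0.51
# → 2001-2010: 1.00
```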

Beyond simple frequency counts, the research also identified stylistic differences between journals. The Journal of Comparative Psychology showed an increased use of words rated as pleasant and concrete across years, while the Journal of Experimental Psychology: Animal Behavior Processes employed more emotionally unpleasant and concrete words [1]. These distinctions suggest that despite the overall trend toward cognitive terminology, different research traditions maintained distinctive linguistic styles that reflect their methodological and theoretical orientations.

Experimental Protocols for Terminology Analysis

Data Collection and Processing Workflow

The methodology for documenting cognitive creep requires systematic data collection and processing protocols. The foundational study in this area employed a structured workflow beginning with title acquisition from journal databases, followed by computational analysis using specialized linguistic tools [1]. The basic unit of analysis was the volume-year, with each volume-year scored on multiple variables including relative frequency of cognitive terms, behavioral words, and references to specific animal types (vertebrates vs. invertebrates) [1].

The experimental protocol can be summarized as follows: First, titles are downloaded from comprehensive academic databases and organized by journal and publication year. Second, a computer program processes the titles to identify matches with predefined terminology lists (cognitive words, behavioral words, and animal categories). Third, the Dictionary of Affect in Language is employed to score the emotional connotations of title words across three dimensions: Pleasantness, Activation, and Concreteness [1]. Finally, statistical analysis is conducted to identify trends over time and differences between journals.
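The aggregation step of this workflow, scoring each volume-year on relative term frequency, might look like the sketch below. It assumes titles are already available as `(journal, year, title)` tuples; the tiny word list, the punctuation stripping, and the function name `rates_by_volume_year` are illustrative assumptions rather than details from the study.

```python
from collections import defaultdict

COGNITIVE = {"memory", "attention", "cognition"}  # illustrative subset only


def rates_by_volume_year(records):
    """Aggregate titles into per-10,000-word rates for each (journal, year)."""
    totals = defaultdict(lambda: {"words": 0, "cognitive": 0, "behavioral": 0})
    for journal, year, title in records:
        bucket = totals[(journal, year)]
        for raw in title.lower().split():
            word = raw.strip(".,:;()?!\"'")
            if not word:
                continue
            bucket["words"] += 1
            if word in COGNITIVE:
                bucket["cognitive"] += 1
            if "behav" in word:
                bucket["behavioral"] += 1
    return {
        key: {
            "cognitive_per_10k": 10_000 * counts["cognitive"] / counts["words"],
            "behavioral_per_10k": 10_000 * counts["behavioral"] / counts["words"],
        }
        for key, counts in sorted(totals.items())
        if counts["words"]
    }


# Hypothetical titles, used only to show the output shape.
sample = [
    ("JCP", 1950, "Maze behavior in rats"),
    ("JCP", 1950, "Memory in pigeons"),
    ("JEP", 2005, "Attention and memory"),
]
for key, rates in rates_by_volume_year(sample).items():
    print(key, {name: round(value, 1) for name, value in rates.items()})
```

Normalizing by total words per volume-year, rather than by title count, keeps the rates comparable across years in which journals published very different numbers of articles.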

A critical aspect of this methodology is addressing the challenge of linguistic evolution, where words may change meaning or connotation over time. The DAL provides a stable framework for evaluation because its standardized ratings are held constant across the analysis period [1]. This helps ensure that observed changes reflect shifts in word choice rather than changes in the scoring standard, although fixed ratings cannot by themselves capture changes in a word's connotation over the decades. The matching rate for title words in the DAL was approximately 69%, which is lower than the 90% normative rate for everyday English because of the specialized vocabulary in scientific titles [1]. This limitation is mitigated through the complementary use of direct word counts for specific cognitive and behavioral terminology.
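The matching rate and the three dimension scores can be illustrated with a toy lexicon. The ratings below are placeholders invented for the example, not real DAL values, and the function name `score_words` is an assumption; the sketch only shows how unmatched specialized vocabulary lowers the matching rate while the means are computed over matched words.

```python
# Placeholder ratings, NOT real DAL values:
# word -> (pleasantness, activation, concreteness)
TOY_LEXICON = {
    "memory": (2.0, 1.5, 1.8),
    "learning": (2.2, 2.0, 1.6),
    "rats": (1.2, 2.1, 3.0),
}


def score_words(words, lexicon):
    """Return (matching rate, mean ratings over matched words or None)."""
    matched = [lexicon[w] for w in words if w in lexicon]
    rate = len(matched) / len(words) if words else 0.0
    if not matched:
        return rate, None
    means = tuple(sum(dimension) / len(matched) for dimension in zip(*matched))
    return rate, means


# "in" and "hippocampal" are missing from the toy lexicon, so half the words match.
rate, means = score_words(["memory", "in", "rats", "hippocampal"], TOY_LEXICON)
print(f"matching rate: {rate:.0%}")
print("mean (pleasantness, activation, concreteness):", means)
```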

Time Series Analysis in Psychological Research

While the original cognitive creep study employed comparative analysis across discrete time periods, contemporary research could enhance this methodology through formal time series analysis. Time series analysis examines time-ordered observations where intervals between observations remain constant, allowing researchers to identify patterns of change over extended periods [2]. This approach has become increasingly relevant in psychological research as technological advances have facilitated the collection of longitudinal data across many time points.

Time series data is characterized by several key components that must be accounted for in analysis: trend (systematic change in level of a series), seasonality (regular periodic fluctuations), cycles (long-term oscillations), and irregular variation (random noise) [2]. In the context of terminology analysis, the trend component would represent the cognitive creep phenomenon itself, while other components might reflect shorter-term fluctuations in terminology use. For robust analysis, time series should contain at least 20 observations, with many models requiring 50 or more observations for accurate estimation [2]. The 71 volume-years analyzed in the Journal of Comparative Psychology provide sufficient data points for meaningful time series modeling.
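As a minimal sketch of isolating the trend component, the code below fits an ordinary least squares line to a yearly series and returns the detrended residuals. The series is synthetic (a perfectly linear rise over 71 points, chosen to mirror the number of JCP volume-years), the 20-observation threshold comes from the guideline cited above, and the function names are assumptions.

```python
MIN_OBSERVATIONS = 20  # lower bound suggested in the text [2]


def linear_trend(values):
    """Least-squares slope and intercept against the time index 0..n-1."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    t_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxy = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(values))
    sxx = sum((t - t_mean) ** 2 for t in range(n))
    slope = sxy / sxx
    return slope, y_mean - slope * t_mean


def detrend(values):
    """Residuals left after removing the fitted linear trend."""
    slope, intercept = linear_trend(values)
    return [y - (intercept + slope * t) for t, y in enumerate(values)]


# Synthetic series: 71 yearly values rising by 0.5 per year from a base of 2.
series = [2 + 0.5 * t for t in range(71)]
slope, intercept = linear_trend(series)
print(len(series) >= MIN_OBSERVATIONS, round(slope, 3), round(intercept, 3))
# → True 0.5 2.0
```

With real data the residuals from `detrend` would carry the seasonal, cyclical, and irregular components, which is where shorter-term fluctuations in terminology use would show up.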

Advanced time series approaches such as ARIMA (Autoregressive Integrated Moving Average) models could potentially forecast future terminology trends based on historical patterns [2]. Additionally, intervention analysis could identify whether specific historical events (such as influential publications or theoretical developments) accelerated the adoption of cognitive terminology. These methodological refinements would build upon the foundational work documenting cognitive creep while providing more sophisticated analytical tools for understanding the dynamics of scientific discourse change.
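To make the ARIMA idea concrete, the sketch below hand-rolls an ARIMA(1,1,0)-style model with drift: the series is differenced once, an AR(1) coefficient is estimated on the mean-centred differences by conditional least squares, and forecasts are rebuilt by cumulating predicted differences. This is a simplified illustration under those assumptions; a real analysis would normally rely on a dedicated statistical package, and the function names and sample series are hypothetical.

```python
def fit_ar1_on_differences(series):
    """Estimate drift (mean difference) and the AR(1) coefficient of the differences."""
    if len(series) < 3:
        raise ValueError("need at least three observations")
    diffs = [b - a for a, b in zip(series, series[1:])]
    drift = sum(diffs) / len(diffs)
    centred = [d - drift for d in diffs]
    numerator = sum(x * y for x, y in zip(centred, centred[1:]))
    denominator = sum(x * x for x in centred[:-1])
    phi = numerator / denominator if denominator else 0.0
    return drift, phi


def forecast(series, steps):
    """Forecast future levels by cumulating predicted first differences."""
    drift, phi = fit_ar1_on_differences(series)
    last_diff = series[-1] - series[-2]
    level = series[-1]
    predictions = []
    for _ in range(steps):
        last_diff = drift + phi * (last_diff - drift)
        level += last_diff
        predictions.append(level)
    return predictions


# Synthetic example: a series rising by exactly 1 per period keeps rising by 1.
print(forecast(list(range(10)), steps=2))
# → [10.0, 11.0]
```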

Research Tools for Terminology Analysis

Table 3: Essential Research Tools for Scientific Terminology Analysis

| Tool Name | Type | Function | Application in Cognitive Creep Research |
|---|---|---|---|
| Dictionary of Affect in Language (DAL) | Linguistic Database | Provides ratings of emotional connotations for words [1] | Quantifies emotional qualities (Pleasantness, Activation, Concreteness) of journal titles |
| Automated Text Analysis Software | Computational Tool | Processes large volumes of text for specific terminology patterns [1] | Identifies and counts cognitive and behavioral terminology in journal databases |
| Time Series Analysis Packages | Statistical Software | Models longitudinal patterns in sequential data [2] | Analyzes terminology trends over multi-decade periods and forecasts future patterns |
| Journal Database APIs | Data Source | Provides structured access to publication metadata and titles [1] | Collects comprehensive title sets from multiple journals across specified time periods |
| Terminology Classification Framework | Coding System | Operationalizes categories of cognitive and behavioral terms [1] | Ensures consistent identification and classification of target terminology across the dataset |

The tools outlined in Table 3 represent the essential methodological toolkit for conducting rigorous analysis of terminology shifts in scientific literature. The Dictionary of Affect in Language deserves particular emphasis because it provides an operational method for evaluating the emotional connotations of words based on participant ratings [1]. For behaviorists, this approach aligns with their emphasis on operational definitions and measurable behaviors, as the "pleasantness" or "concreteness" of a word is defined by the rating behaviors of research participants rather than by abstract interpretation [1].

Contemporary extensions of this research could incorporate additional tools such as natural language processing algorithms for more sophisticated semantic analysis, or bibliometric software for tracking co-citation patterns alongside terminology shifts. The integration of these tools would enable more comprehensive analysis of how cognitive terminology permeates different subfields and research networks within the broader scientific ecosystem.

Cross-Journal Comparison of Terminology Patterns

The comparative analysis across three journals revealed both consistent trends and distinctive patterns in terminology usage. All journals showed evidence of increasing cognitive terminology, but with variations in magnitude and timing. The Journal of Comparative Psychology demonstrated the most pronounced shift toward cognitive terminology, along with an increasing use of words rated as pleasant and concrete across years [1]. This pattern suggests a movement toward more positively framed and operationally definable cognitive constructs in this particular journal.

In contrast, the Journal of Experimental Psychology: Animal Behavior Processes maintained a greater emphasis on words rated as emotionally unpleasant and concrete [1]. This distinction potentially reflects the different methodological traditions and theoretical commitments of researchers publishing in these venues. The persistence of such stylistic differences despite the overall trend toward cognitive terminology indicates that journal-specific cultures continue to influence how cognitive concepts are framed and discussed.

Table 4: Journal-Specific Terminology Patterns (1940-2010)

| Journal | Time Span | Volume-Years | Key Terminology Patterns | Emotional Connotation Trends |
|---|---|---|---|---|
| Journal of Comparative Psychology | 1940-2010 | 71 | Strong increase in cognitive terminology [1] | Increased use of pleasant and concrete words [1] |
| International Journal of Comparative Psychology | 2000-2010 | 11 | Cognitive terminology prevalent [1] | Limited data due to shorter publication history [1] |
| Journal of Experimental Psychology: Animal Behavior Processes | 1975-2010 | 36 | Moderate increase in cognitive terminology [1] | Greater use of unpleasant and concrete words [1] |

The cross-journal comparison reveals that cognitive creep represents a broad paradigm shift affecting multiple research traditions within comparative psychology, while simultaneously being filtered through the distinctive cultures and editorial preferences of specific publication venues. This nuanced understanding helps contextualize the cognitive terminology shift as neither uniform nor monolithic, but as a complex interaction between broader disciplinary trends and journal-specific communities of practice.

Implications and Future Research Directions

The documented phenomenon of cognitive creep carries significant implications for how scientific knowledge is constructed and communicated in comparative psychology and related fields. The shift toward cognitive terminology represents more than merely changing fashion in word choice; it reflects fundamental changes in how researchers conceptualize their subject matter, formulate research questions, and interpret findings. This linguistic transition enables new types of investigations and explanations while potentially constraining others.

From a behaviorist perspective, the increased use of cognitive terminology presents several problems, including lack of operationalization and lack of portability [1]. Behaviorists argue that mentalist terms often fail to be clearly defined in terms of measurable operations, making scientific verification difficult. They further contend that such terminology may not be portable across different species without imposing human-centric conceptual frameworks on non-human cognition. These concerns highlight the ongoing tension between behaviorist and cognitivist approaches despite the documented terminology shift.

Future research could extend this analysis in several productive directions. First, expanding the journal set to include cognitive psychology journals would provide a valuable comparison baseline. Second, analyzing abstracts and full texts rather than only titles would offer a more comprehensive understanding of terminology patterns. Third, examining co-citation networks alongside terminology shifts could reveal how cognitive creep correlates with changing reference patterns and theoretical influences. Finally, applying similar methodology to adjacent fields such as neuroscience or behavioral ecology would determine whether cognitive creep represents a broader transdisciplinary phenomenon rather than one confined to comparative psychology.

The scientific study of scientific discourse itself represents a promising meta-disciplinary approach that can enhance our understanding of how knowledge evolves across theoretical paradigms. The methodological framework presented here provides a replicable approach for documenting and analyzing such linguistic shifts, contributing to both the history and the sociology of scientific knowledge.

The evolution of psychological science has been marked by fundamental shifts in how researchers conceptualize, measure, and explain human learning and behavior. The transition from behaviorism to cognitivism represents one of the most significant paradigm shifts in the history of psychology, bringing with it profound changes in research terminology, methodology, and underlying philosophical assumptions [3]. This shift moved the field's focus from observable behaviors to internal mental processes, requiring new vocabularies to describe phenomena that could not be directly observed [4]. Understanding this terminological evolution is crucial for contemporary researchers conducting cross-journal comparisons of cognitive terminology usage, as it reveals how the same fundamental phenomena have been conceptualized through radically different theoretical lenses across historical periods and research traditions.

Behaviorism emerged in the early 1900s as a systematic approach to understanding human and animal behavior, defined by its emphasis on observable phenomena and rejection of introspection as a valid scientific method [5]. In contrast, cognitivism emerged as a reaction to behaviorism's limitations, particularly its failure to account for internal mental processes like reasoning, decision-making, and memory [6]. This paradigm shift was spearheaded by developments in computer science and artificial intelligence, which provided new metaphors for understanding human cognition [4]. The resulting transformation in research terminology reflects deeper changes in how psychologists conceptualize their subject matter, with important implications for how research questions are framed, studies are designed, and findings are interpreted across different scientific communities.

Theoretical Foundations: Core Principles and Terminology

Behaviorist Framework

Behaviorism fundamentally views psychology as the science of observable behavior, focusing exclusively on measurable responses to environmental stimuli [5]. The behaviorist paradigm operates on the principle that learning occurs through the formation of associations between stimuli and responses, with reinforcement and punishment serving as primary mechanisms for strengthening or weakening these associations over time [7]. From this perspective, the mind is treated as a "black box" whose internal workings cannot be objectively studied or measured [6]. Research within this tradition consequently emphasizes external observations of behavior under controlled conditions, with minimal inference about internal mental states.

Key terminology within behaviorism includes:

- Stimulus: Any environmental event that elicits a response from an organism

- Response: An organism's observable reaction to a stimulus

- Reinforcement: Any consequence that strengthens the behavior it follows

- Punishment: Any consequence that weakens the behavior it follows

- Operant Conditioning: Learning process through which behaviors are modified by their consequences

- Classical Conditioning: Learning process through which neutral stimuli become associated with biologically significant stimuli

The philosophical underpinnings of behaviorism reflect a mechanistic worldview in which behavior is governed by a finite set of physical laws, similar to other natural phenomena [4]. This perspective treats complex human behaviors as reducible to simpler stimulus-response units that can be objectively studied under laboratory conditions.

Cognitive Framework

Cognitivism represents a fundamental departure from behaviorism by emphasizing internal mental processes as legitimate objects of scientific study [6]. The cognitive perspective views humans as active processors of information who perceive, interpret, store, and retrieve information through complex mental operations [7]. Rather than treating the mind as a black box, cognitive researchers seek to understand the structures and processes that mediate between environmental inputs and behavioral outputs, using the computer as a guiding metaphor for human cognition [4].

Central terminology within cognitivism includes:

- Information Processing: The flow of information through the human cognitive system

- Schema: Mental frameworks that help organize and interpret information

- Metacognition: Awareness and understanding of one's own thought processes

- Memory Encoding: The process of converting information into a form that can be stored in memory

- Retrieval: The process of accessing stored information from memory

- Attention: The cognitive process of selectively concentrating on specific aspects of the environment

The cognitive revolution of the mid-20th century established internal mental states as valid explanations for observable behavior, fundamentally reshaping research priorities and methodologies in psychology [5]. This paradigm shift enabled researchers to investigate complex phenomena such as reasoning, problem-solving, and language acquisition that had proven difficult to explain within purely behaviorist frameworks.

Comparative Analysis: Terminological Shifts Across Paradigms

Table 5: Core Conceptual Terminology Across Research Paradigms

| Conceptual Domain | Behaviorist Terminology | Cognitive Terminology | Nature of Shift |
|---|---|---|---|
| Learning Mechanism | Stimulus-Response Associations | Information Processing | From connection formation to active processing |
| Knowledge Representation | Behavioral Repertoires | Schemas, Mental Models | From observable behaviors to internal structures |
| Memory | Habit Strength | Encoding, Storage, Retrieval | From connection strength to active processes |
| Research Focus | Observable Behavior | Mental Processes | From external to internal phenomena |
| Explanatory Framework | Environmental Determinism | Interactive Processing | From mechanistic to computational models |

Table 6: Methodological Terminology Across Research Paradigms

| Methodological Aspect | Behaviorist Approach | Cognitive Approach | Practical Implications |
|---|---|---|---|
| Key Research Methods | Controlled Observation, Behavior Modification | Protocol Analysis, Reaction Time Studies | Shift from purely external to inferential measures |
| Data Collection | Direct Behavior Measurement | Performance Indicators, Self-Report | Expansion of valid evidence sources |
| Experimental Design | ABA Designs, Single-Subject Studies | Laboratory Experiments, Neuroimaging | Increased methodological diversity |
| Measurement Focus | Response Rate, Response Latency | Processing Speed, Accuracy Rates | From simple metrics to complex performance measures |
| Subject Population | Animals (Rats, Pigeons) | Human Participants | Changed model organisms for research |

The terminological shift from behaviorism to cognitivism extends beyond mere vocabulary changes to reflect fundamentally different conceptualizations of learning and knowledge acquisition [3]. Where behaviorism defines learning as "the mastery of behaviors" through development of habitual actions, cognitivism conceptualizes learning as "the processing of information by the mind" through observation, categorization, storage, and retrieval processes [8]. This represents a movement from understanding learning as behavioral change to understanding it as conceptual reorganization within the learner's cognitive structures.

The philosophical commitments underlying these terminological differences are substantial. Behaviorism employs mechanism as its fundamental metaphor, viewing behavior as governed by physical laws in a deterministic system [4]. Cognitivism retains mechanism but extends it to mental operations through the information processing metaphor, which conceptualizes human cognition as analogous to computer operations [4]. This shift enables researchers to address phenomena that proved problematic for behaviorist accounts, including language acquisition, reasoning errors, and the construction of meaning [4].

Experimental Protocols and Research Methodologies

Behaviorist Research Protocols

Behaviorist research methodologies emphasize experimental control and quantifiable measurements of observable behaviors under specified environmental conditions [9]. A typical behaviorist experiment involves manipulating antecedent stimuli and consequent reinforcements to determine their functional relationships with target behaviors.

Operant Conditioning Protocol (Skinner Box Experiment):

- Apparatus: Operant chamber (Skinner box) containing a lever, food dispenser, stimulus lights, and grid floor for possible electrical stimulation

- Subject: Laboratory rat or pigeon with controlled feeding schedule to maintain 80-85% free-feeding body weight

- Habituation Phase: Subject acclimates to chamber with no programmed consequences for lever pressing

- Magazine Training: Subject learns to approach food magazine when auditory stimulus signals food delivery

- Shaping Phase: Experimenter reinforces successive approximations of target behavior (lever press) using manual or automated delivery of food pellets

- Acquisition Phase: Subject learns stimulus-response-consequence contingency under specified reinforcement schedule (e.g., continuous reinforcement)

- Data Collection: Cumulative recorder tracks response rate, pattern, and temporal distribution

- Experimental Manipulation: Systematic variation of reinforcement schedules (fixed ratio, variable ratio, fixed interval, variable interval) or introduction of discriminative stimuli

- Control Procedures: Counterbalancing, baseline measurements, and reversal designs to demonstrate experimental control

This protocol generates quantitative behavioral data including response rates, inter-response times, and resistance to extinction, providing the empirical foundation for behaviorist principles of learning [5].
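The behavioral measures named in this protocol can be derived from a list of lever-press timestamps. The sketch below computes response rate and inter-response times for one session and shows which responses would be reinforced under a fixed-ratio schedule; the function names, the timestamp format, and the sample values are assumptions made for illustration.

```python
def summarize_session(press_times_s, session_length_s):
    """Response count, rate per minute, and mean inter-response time (IRT).

    press_times_s: lever-press timestamps in seconds, in ascending order.
    """
    irts = [later - earlier for earlier, later in zip(press_times_s, press_times_s[1:])]
    return {
        "responses": len(press_times_s),
        "rate_per_min": 60 * len(press_times_s) / session_length_s,
        "mean_irt_s": sum(irts) / len(irts) if irts else None,
    }


def fixed_ratio_reinforced(n_responses, ratio):
    """1-based indices of the responses that earn a pellet under an FR schedule."""
    return [i for i in range(1, n_responses + 1) if i % ratio == 0]


# Hypothetical one-minute session with four presses.
print(summarize_session([1.0, 3.0, 6.0, 10.0], session_length_s=60.0))
# → {'responses': 4, 'rate_per_min': 4.0, 'mean_irt_s': 3.0}
print(fixed_ratio_reinforced(10, ratio=5))
# → [5, 10]
```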

Cognitive Research Protocols

Cognitive research methodologies employ inferential approaches to study internal mental processes that cannot be directly observed [6]. These protocols typically measure performance on carefully designed tasks that reveal underlying cognitive operations through patterns of accuracy, reaction time, or neural activity.

Memory Encoding and Retrieval Protocol:

- Participants: Human subjects (typically 20-50 participants per condition) with normal or corrected-to-normal vision

- Apparatus: Computerized experiment with precise stimulus timing and response recording capabilities

- Stimulus Materials: Carefully controlled word lists, images, or other materials matched for relevant psycholinguistic properties

- Encoding Phase: Participants engage with materials under specified processing conditions (e.g., shallow vs. deep processing tasks)

- Retention Interval: Fixed delay period ranging from seconds (short-term memory) to days (long-term memory)

- Retrieval Phase: Participants complete recognition or recall tests under controlled conditions

- Data Collection: Reaction time measurement, accuracy scores, confidence ratings, and sometimes neuroimaging data (fMRI, EEG)

- Experimental Manipulation: Variation of encoding strategies, interference conditions, or retrieval cues

- Control Procedures: Counterbalancing of stimulus materials, randomization of presentation order, and manipulation checks

This protocol yields data on information processing efficiency including accuracy rates, response latencies, and error patterns that support inferences about underlying cognitive structures and processes [8].
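A small sketch of the corresponding data reduction step is shown below: trial records are grouped by encoding condition, and accuracy and mean reaction time are computed for each. The record layout, the condition labels, and the choice to average reaction times over correct trials only are assumptions for the example, not details taken from the protocol.

```python
from collections import defaultdict
from statistics import mean


def summarize_trials(trials):
    """Accuracy and mean correct-trial RT per condition.

    trials: iterable of (condition, correct, rt_ms) tuples.
    """
    by_condition = defaultdict(list)
    for condition, correct, rt_ms in trials:
        by_condition[condition].append((bool(correct), rt_ms))
    summary = {}
    for condition, rows in by_condition.items():
        correct_rts = [rt for ok, rt in rows if ok]
        summary[condition] = {
            "accuracy": sum(ok for ok, _ in rows) / len(rows),
            "mean_correct_rt_ms": mean(correct_rts) if correct_rts else None,
        }
    return summary


# Hypothetical trials from a shallow vs. deep processing manipulation.
trials = [
    ("deep", True, 600),
    ("deep", True, 700),
    ("shallow", True, 800),
    ("shallow", False, 900),
]
for condition, stats in summarize_trials(trials).items():
    print(condition, stats)
```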

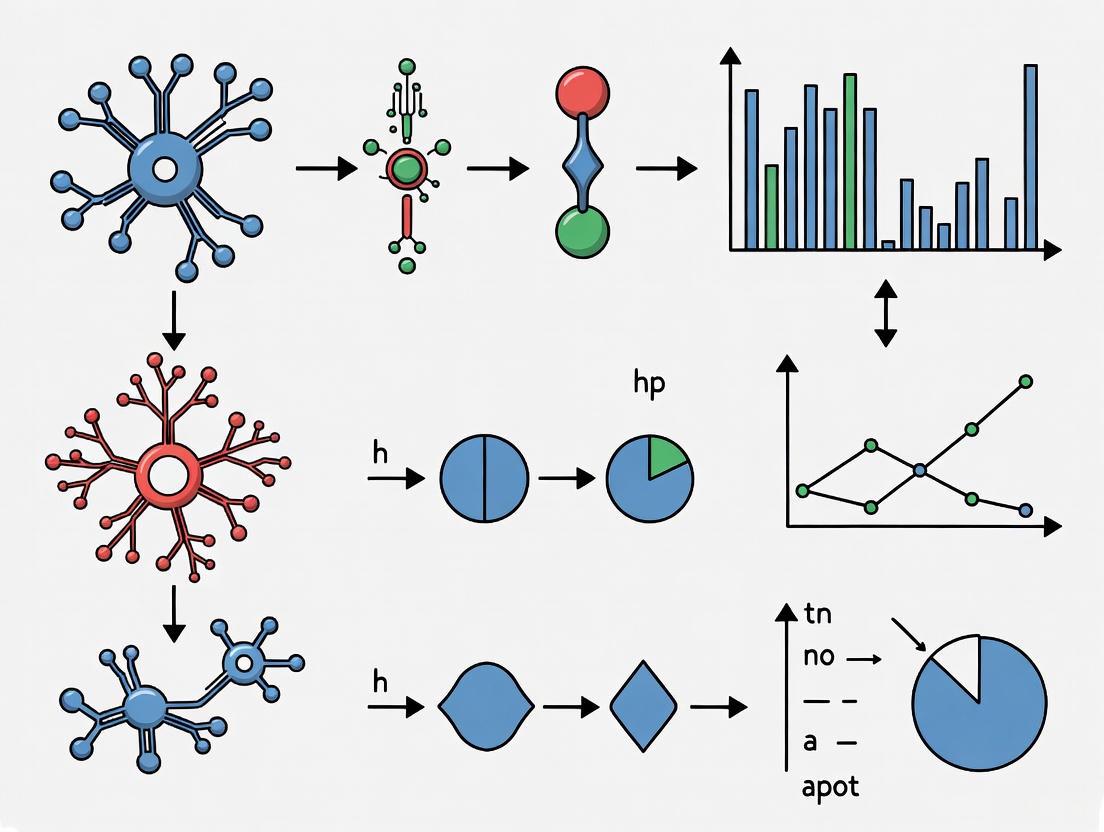

Visualization of Paradigm Relationships and Experimental Flow

Essential Research Reagents and Methodological Tools

Table 7: Key Research Tools and Their Applications Across Paradigms

| Research Tool Category | Specific Examples | Behaviorist Applications | Cognitive Applications | Functional Purpose |
|---|---|---|---|---|
| Experimental Apparatus | Operant Chambers, Eye Trackers | Controlled behavior measurement | Monitoring attention and processing | Environment standardization |
| Stimulus Presentation | Tachistoscopes, Computer Displays | Visual/auditory stimulus delivery | Precise timing of experimental trials | Input control and standardization |
| Response Measurement | Lever Press Sensors, Response Pads | Counting behavioral responses | Recording reaction times and accuracy | Output measurement |
| Data Analysis | Cumulative Recorders, Statistical Software | Tracking response patterns over time | Analyzing performance differences | Data organization and interpretation |
| Physiological Monitoring | Skin Conductance Equipment, fMRI | Measuring arousal during conditioning | Localizing neural activity during tasks | Correlating physical with psychological |

The methodological requirements of behaviorist versus cognitive research necessitate different specialized tools and measurement approaches [9]. Behaviorist research relies heavily on apparatus that enables precise control of environmental contingencies and automated measurement of observable responses, such as operant chambers equipped with stimulus lights, response levers, and reinforcement delivery mechanisms [5]. These tools facilitate the quantitative analysis of behavior through measures like response rates, inter-response times, and resistance to extinction.

Cognitive research employs tools designed to infer internal mental processes from performance measures [6]. These include reaction time measurement systems, eye-tracking equipment, and neuroimaging technologies that provide indirect indicators of cognitive operations. The shift from behaviorism to cognitivism has consequently driven development of increasingly sophisticated research technologies capable of tracking the temporal dynamics and neural correlates of information processing.

Implications for Contemporary Research and Terminology Usage

The paradigm shift from behaviorism to cognitivism continues to influence contemporary research practices, particularly in how investigators operationalize variables, design studies, and interpret findings [3]. Cross-journal comparisons reveal persistent differences in terminological conventions across research traditions: behaviorally oriented publications favor language describing observable measures, while cognitive publications employ terminology referencing inferred mental constructs [10]. This terminological divergence reflects deeper epistemological differences about the nature of psychological phenomena and how they should be studied.

For researchers conducting literature reviews or meta-analyses across psychological subfields, awareness of these terminological shifts is essential for accurate interpretation of findings across historical periods and theoretical traditions [11]. The same phenomenon (e.g., "learning") may be operationalized radically differently in behaviorist versus cognitive research, requiring careful attention to methodological details rather than superficial similarities in vocabulary. Contemporary integrationist approaches, such as cognitive-behavioral therapy, represent attempts to synthesize terminology and methodologies across these historically distinct paradigms [5].

Future research on cognitive terminology usage would benefit from computational linguistic analysis of published literature across decades to quantitatively track the rise of cognitive terminology and decline of behaviorist vocabulary. Such analysis could reveal subtle patterns in how paradigm shifts manifest in scientific communication and how quickly new terminological conventions are adopted across different psychological subfields and research communities.

The study of cognitive phenomena represents a frontier where multiple disciplines converge, each bringing distinct theoretical frameworks, methodologies, and terminological conventions. This cross-disciplinary exploration between psychology and linguistics has created a rich, albeit complex, intellectual landscape characterized by diverse approaches to understanding mind, language, and behavior. The integration of these fields has evolved significantly over decades, moving from parallel disciplinary investigations to increasingly integrated frameworks that recognize the inseparable relationship between language structure and cognitive processes [12]. This guide provides a systematic comparison of research approaches across this interdisciplinary spectrum, examining how cognitive terminology and methodologies vary across research traditions and outlets, with specific implications for research design and interpretation in applied fields including drug development.

The foundational relationship between linguistics and cognitive psychology was fundamentally reshaped by Noam Chomsky's work, which proposed that language structure reveals innate cognitive architectures rather than merely learned behavior [12]. On this view, the rules of language are too complex to be acquired solely through imitation and reinforcement and must instead rest on a genetically specified capacity, which positions language as a window into fundamental cognitive structures [12]. Despite this intertwined history, tensions remain between disciplinary perspectives on language acquisition and processing, with psychologists often emphasizing learning strategies and social interactions while linguists focus on underlying structural principles [12]. This methodological and conceptual diversity presents both challenges and opportunities for researchers operating across these domains.

Comparative Analysis of Research Approaches and Terminological Usage

Disciplinary Distribution in Cognitive Science Research

Research forums dedicated specifically to cognitive science reveal distinctive patterns of disciplinary participation. Analysis of the Cognitive Science Society's activities provides insight into how different disciplines contribute to this interdisciplinary field.

Table 1: Departmental Affiliations of First Authors in Cognitive Science Journal

| Time Period | Psychology | Computer Science | Linguistics | Philosophy | Neuroscience | Cognitive Science | Other |

|---|---|---|---|---|---|---|---|

| 1977-1981 | 33% | 29% | 6% | 11% | 3% | 0% | 18% |

| 1984-1988 | 36% | 29% | 4% | 7% | 7% | 0% | 17% |

| 1991-1995 | 31% | 26% | 5% | 9% | 4% | 8% | 17% |

Source: Adapted from Schunn, Crowley, & Okada (1998) analysis of Cognitive Science journal [13]

The data reveal that psychology and computer science have consistently dominated publications in the journal Cognitive Science, together accounting for approximately 57-65% of first authors across the periods studied (62% in 1977-1981, 65% in 1984-1988, and 57% in 1991-1995) [13]. The emergence of dedicated cognitive science departments as an affiliation category (reaching 8% by 1991-1995) indicates the institutionalization of cognitive science as a distinct discipline rather than merely an interdisciplinary collaboration [13].

Terminological Patterns in Comparative Psychology Research

Analysis of terminology usage in specialized psychology journals reveals significant shifts in theoretical orientations over time, as reflected in the language used in article titles.

Table 2: Cognitive Terminology in Comparative Psychology Journal Titles (1940-2010)

| Journal | Time Period | Cognitive Word Frequency | Behavioral Word Frequency | Cognitive-Behavioral Ratio | Title Length (Words) |

|---|---|---|---|---|---|

| JCP | 1940-2010 | 0.0105 | 0.0119 | 0.88 | 13.40 |

| JCP | 1940-1960 | 0.0021 | 0.0153 | 0.14 | 9.87 |

| JCP | 2000-2010 | 0.0176 | 0.0089 | 1.98 | 15.24 |

| JEP | 1975-2010 | 0.0098 | 0.0145 | 0.68 | 13.52 |

Source: Adapted from Whissell (2013) analysis of 8,572 article titles [14]

The data demonstrate a substantial increase in cognitive terminology usage over time, with the cognitive-behavioral ratio in Journal of Comparative Psychology (JCP) titles increasing from 0.14 in the early period (1940-1960) to 1.98 in the contemporary period (2000-2010), indicating that cognitive terms eventually surpassed behavioral terminology in frequency [14]. This "cognitive creep" represents a significant shift in theoretical orientation within comparative psychology, moving from predominantly behaviorist approaches to increasingly cognitive frameworks [14].
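The ratio column in Table 2 can be reproduced directly from the two frequency columns. The short Python sketch below does this with the values copied from the table; the dictionary layout and function name are illustrative choices, not part of the original analysis.

```python
# Frequencies copied from Table 2: (cognitive, behavioral) per journal and period.
table2 = {
    ("JCP", "1940-2010"): (0.0105, 0.0119),
    ("JCP", "1940-1960"): (0.0021, 0.0153),
    ("JCP", "2000-2010"): (0.0176, 0.0089),
    ("JEP", "1975-2010"): (0.0098, 0.0145),
}


def cognitive_behavioral_ratio(cognitive: float, behavioral: float) -> float:
    """Cognitive word frequency divided by behavioral word frequency (2 decimals)."""
    return round(cognitive / behavioral, 2)


ratios = {key: cognitive_behavioral_ratio(*freqs) for key, freqs in table2.items()}

for (journal, period), ratio in ratios.items():
    # A ratio above 1 means cognitive words outnumber behavioral words.
    print(f"{journal} {period}: {ratio:.2f}")
```

A ratio below 1 indicates that behavioral words are more frequent than cognitive words in titles, and a ratio above 1 indicates the reverse.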

Experimental Protocols for Cross-Journal Terminology Analysis

Methodology for Terminological Analysis

The analytical approach for comparing cognitive terminology usage across journals and disciplines involves systematic content analysis of published research. The following protocol outlines the key methodological steps:

Data Collection Protocol:

- Journal Selection: Identify target journals representing distinct disciplinary orientations (e.g., Cognitive Science, Journal of Comparative Psychology, Trends in Cognitive Sciences)

- Time Frame Stratification: Select balanced samples across multiple time periods to enable historical comparison

- Content Sampling: Extract article titles, abstracts, and keywords as primary units of analysis

- Metadata Collection: Gather departmental affiliations, research methodologies, and citation patterns

Terminological Coding Framework:

- Cognitive Lexicon Identification: Create a standardized dictionary of cognitive terms (e.g., "memory," "cognition," "metacognition," "representation")

- Behavioral Lexicon Identification: Create a parallel dictionary of behavioral terms (e.g., "behavior," "learning," "response," "reinforcement")

- Emotional Connotation Assessment: Apply the Dictionary of Affect in Language (DAL) to evaluate emotional connotations of terminology

- Inter-rater Reliability: Establish coding protocols with >90% inter-rater agreement

This methodology enables quantitative comparison of terminological preferences across disciplines and time periods, revealing shifts in theoretical orientations and research priorities [14].
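The inter-rater criterion in the coding framework can be checked with a few lines of Python. The sketch below computes simple percent agreement between two coders. It also computes Cohen's kappa as a chance-corrected complement, which is an addition here rather than something the protocol specifies. The category labels and the ten example codes are hypothetical.

```python
from collections import Counter


def percent_agreement(rater_a, rater_b):
    """Share of items to which both raters assigned the same code."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must code the same non-empty set of items.")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """Agreement corrected for the agreement expected by chance."""
    n = len(rater_a)
    observed = percent_agreement(rater_a, rater_b)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    categories = set(counts_a) | set(counts_b)
    expected = sum(counts_a[c] * counts_b[c] for c in categories) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used a single identical category throughout
    return (observed - expected) / (1 - expected)


# Hypothetical codes assigned by two raters to ten title words.
coder_1 = ["cog", "cog", "beh", "beh", "cog", "none", "beh", "cog", "none", "beh"]
coder_2 = ["cog", "cog", "beh", "beh", "cog", "none", "beh", "beh", "none", "beh"]

agreement = percent_agreement(coder_1, coder_2)
kappa = cohens_kappa(coder_1, coder_2)
meets_threshold = agreement > 0.90  # the protocol asks for >90% agreement

print(f"agreement={agreement:.2f}, kappa={kappa:.3f}, meets threshold={meets_threshold}")
```

In this example the coders agree on nine of ten items, which is exactly 90% and therefore does not satisfy a strict ">90%" criterion; the coding rules would need refinement before full-scale coding.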

Experimental Workflow for Cross-Disciplinary Comparison

The following diagram illustrates the systematic workflow for conducting cross-journal comparisons of cognitive terminology usage:

Figure: Systematic Workflow for Terminology Analysis (diagram not reproduced)

Research Reagent Solutions: Methodological Toolkit

Table 3: Essential Methodological Tools for Cross-Disciplinary Terminology Research

| Research Tool | Primary Function | Application Example | Considerations |

|---|---|---|---|

| Dictionary of Affect in Language (DAL) | Quantifies emotional connotations of terminology | Scoring pleasantness, activation, and imagery values of title words [14] | 69% matching rate for scientific titles vs. 90% for everyday language |

| Cognitive Terminology Lexicon | Standardized set of mentalist/cognitive terms | Identifying cognitive terminology in text corpora [14] | Must be periodically updated to reflect evolving terminology |

| Behavioral Terminology Lexicon | Standardized set of behaviorist terms | Tracking behaviorist terminology usage patterns [14] | Enables direct comparison with cognitive terminology frequency |

| Departmental Affiliation Classification | Categorizes institutional backgrounds | Analyzing disciplinary representation in publications [13] | Reveals dominance patterns (e.g., psychology, computer science) |

| Citation Analysis Framework | Maps knowledge flows across disciplines | Identifying cross-disciplinary citation patterns [13] | Reveals influence relationships between fields |

These methodological tools enable systematic comparison of terminological patterns and theoretical orientations across disciplines and time periods. The DAL instrument is particularly valuable for operationalizing the emotional and conceptual connotations of terminology, providing a behaviorally-anchored approach to analyzing linguistic patterns [14].
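The matching rate listed for the DAL in Table 3 is the share of title words that appear in the dictionary. The Python sketch below illustrates how such a rate and mean dimension scores might be computed for a single title. The three-entry dictionary and its numeric values are placeholders invented for illustration and are not actual DAL ratings; the tokenization rule is also an assumption.

```python
import re

# Placeholder entries only: these numbers are NOT real DAL ratings.
toy_dictionary = {
    "memory": {"pleasantness": 2.0, "activation": 1.5, "imagery": 2.0},
    "in": {"pleasantness": 2.0, "activation": 1.5, "imagery": 1.0},
    "the": {"pleasantness": 2.0, "activation": 1.5, "imagery": 1.0},
}

DIMENSIONS = ("pleasantness", "activation", "imagery")


def tokenize(title):
    """Lowercase the title and keep alphabetic word tokens (assumed rule)."""
    return re.findall(r"[a-z]+", title.lower())


def score_title(title, dictionary):
    """Return (matching rate, mean score per dimension over matched words)."""
    words = tokenize(title)
    matched = [w for w in words if w in dictionary]
    matching_rate = len(matched) / len(words) if words else 0.0
    means = {}
    if matched:
        for dim in DIMENSIONS:
            means[dim] = sum(dictionary[w][dim] for w in matched) / len(matched)
    return matching_rate, means


rate, means = score_title("Spatial memory in the rat", toy_dictionary)
print(f"matching rate: {rate:.0%}")
print(means)
```

Words missing from the dictionary (here "spatial" and "rat") lower the matching rate, which is why specialized scientific titles tend to match less often than everyday language.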

Cognitive Linguistics and Cross-Linguistic Diversity

The intersection of cognitive psychology and linguistics reveals substantial variation in how language structures shape cognitive processes across different linguistic traditions. Cross-linguistic differences refer to variations in language structure, sound systems, and grammatical features that influence how individuals perceive and produce speech [15]. These differences manifest in multiple domains:

- Phonological Variation: Native speakers of one language may struggle to perceive phonetic distinctions that don't exist in their native language, affecting second language acquisition [15]

- Grammatical Structure: Languages with rich morphological systems may foster different cognitive processing strategies than those with simpler structures [15]

- Tonal Processing: Speakers of tonal languages (e.g., Mandarin) often demonstrate enhanced pitch perception compared to speakers of non-tonal languages [15]

These cross-linguistic differences have implications for cognitive processes beyond language itself, including memory, attention, and categorization. Bilingual individuals often exhibit unique patterns of speech production and cognitive processing that reflect the specific phonetic rules and structures of their dominant language [15]. This diversity presents both challenges and opportunities for cognitive researchers working across linguistic traditions.

Implications for Drug Development Research

The cross-disciplinary perspectives on cognitive diversity have significant implications for drug development professionals, particularly in domains like Alzheimer's disease research where cognitive assessment is paramount. Understanding terminological and conceptual differences across disciplines enhances research in several key areas:

Clinical Trial Design:

- Cognitive assessment instruments must account for linguistic and cultural variations in cognitive performance

- Cross-disciplinary approaches integrate neuroscientific, psychological, and linguistic perspectives on cognitive outcomes

- Biomarker development benefits from integrated perspectives on cognitive function [16]

Therapeutic Target Identification:

- The Alzheimer's drug development pipeline reflects diverse therapeutic approaches, including biological disease-targeted therapies (30%), small molecule disease-targeted therapies (43%), cognitive enhancement approaches (14%), and neuropsychiatric symptom treatments (11%) [16]

- Cross-disciplinary perspectives enable more comprehensive targeting of cognitive symptoms across multiple domains

Assessment Methodologies:

- Cognitive terminology standardization improves consistency in outcome measurement across trials

- Integrated assessment strategies incorporate insights from cognitive psychology, linguistics, and neuroscience

- Cultural and linguistic adaptation of cognitive instruments enhances validity across diverse populations

The growing emphasis on cross-disciplinary collaboration in cognitive sciences creates opportunities for more sophisticated approaches to cognitive assessment in clinical trials, potentially enhancing both the sensitivity of outcome measures and the ecological validity of cognitive assessments [17].

The cross-disciplinary study of cognitive phenomena reveals both substantial diversity in approaches and terminology, and increasing integration across traditional disciplinary boundaries. The comparative analysis presented in this guide demonstrates systematic patterns in how different research traditions conceptualize and investigate cognitive processes, with significant implications for research design, measurement, and interpretation across multiple applied contexts including pharmaceutical development.

For drug development professionals, awareness of these cross-disciplinary perspectives enables more sophisticated approaches to cognitive assessment, particularly in conditions like Alzheimer's disease where cognitive outcomes are primary endpoints. The continuing evolution of cognitive terminology and methodologies across disciplines suggests that maintaining cross-disciplinary literacy will remain essential for cutting-edge research in cognitive assessment and intervention.

In scientific research, particularly in psychology and neuroscience, the term "cognition" encompasses a broad range of mental processes, including memory, attention, decision-making, and problem-solving. This conceptual breadth presents a significant challenge: without precise definitions, communication among researchers becomes ambiguous, and findings become difficult to replicate or compare. The lack of standardized operational definitions for cognitive terminology has been identified as a persistent problem in comparative psychology and related fields, where the same term may be defined and measured differently across studies [1] [18].

This guide provides a systematic comparison of approaches to defining cognitive terminology, with a specific focus on cross-journal analysis of definitional practices. By examining how key cognitive constructs are conceptualized and operationalized across different research contexts, we aim to establish a framework for enhancing methodological rigor and facilitating direct comparison of research findings across studies and disciplines—a crucial concern for researchers, scientists, and drug development professionals who rely on precise cognitive assessments in their work.

Conceptual vs. Operational Definitions: Foundational Principles

Distinguishing Conceptual and Operational Definitions

In psychological research, a conceptual definition articulates what exactly is to be measured or observed in a study, explaining what a word or term means within the specific research context. In contrast, an operational definition specifies exactly how to capture (identify, create, measure, or assess) the variable in question [19]. These definition types serve complementary but distinct roles in the research process.

For example, in a study of stress in students, a conceptual definition would describe what is meant by "stress" theoretically, while an operational definition would describe how "stress" would be quantitatively measured—such as through scores on the Perceived Stress Scale (PSS), heart rate, or blood pressure measurements [19]. The conceptual definition establishes theoretical meaning, while the operational definition enables empirical measurement.

The Critical Role of Operational Definitions in Quantitative Research

Operational definitions are fundamental to quantitative research, which involves collecting and analyzing numerical data to find patterns, test relationships, and generalize results [20]. They serve as the crucial bridge between abstract theoretical constructs and concrete, measurable observations [21].

Key functions of operational definitions in research include:

- Enhancing reliability: Ensuring consistent measurement across time and observers

- Facilitating replication: Allowing other researchers to reproduce methods and verify findings

- Supporting validity: Helping ensure that measurements actually capture the intended construct

- Enabling quantification: Transforming abstract concepts into measurable variables suitable for statistical analysis [21]

Without precise operational definitions, research risks becoming unreplicable, invalid, or scientifically meaningless—particularly when studying complex cognitive phenomena that cannot be directly observed.

Cross-Journal Analysis of Cognitive Terminology Usage

Historical Trends in Cognitive Terminology

Research examining the employment of cognitive or mentalist words in the titles of articles from three comparative psychology journals reveals significant trends in terminology usage. A comprehensive analysis of 8,572 titles containing over 100,000 words from the Journal of Comparative Psychology, International Journal of Comparative Psychology, and Journal of Experimental Psychology: Animal Behavior Processes demonstrated a notable increase in cognitive terminology usage from 1940 to 2010 [1] [18].

Table 1: Cognitive Terminology Usage in Comparative Psychology Journals (1940-2010)

| Journal | Time Period | Cognitive Terminology Trend | Behavioral Terminology Trend | Primary Focus |

|---|---|---|---|---|

| Journal of Comparative Psychology | 1940-2010 | Significant increase | Relative decrease | Progressively cognitivist approach |

| International Journal of Comparative Psychology | 2000-2010 | High usage | Lower comparative usage | Cognitive processes in animals |

| Journal of Experimental Psychology: Animal Behavior Processes | 1975-2010 | Moderate increase | Steady or slight decrease | Balance of behavioral and cognitive approaches |

This "cognitive creep"—the progressive increase in cognitive terminology—was especially notable when compared to the use of behavioral words, highlighting a shift toward more cognitivist approaches in comparative research [1]. This trend reflects the broader evolution of psychology from strict behaviorist perspectives to frameworks that incorporate internal mental processes.

Problems in Current Usage of Cognitive Terminology

The increased use of cognitive terminology has not been without challenges. Two significant problems identified in the literature include:

- Lack of operationalization: Many studies use cognitive terms without providing clear operational definitions, making it difficult to compare findings across studies or replicate results [1] [18].

- Lack of portability: Definitions and measurement approaches developed in one research context often do not transfer effectively to other contexts, populations, or species [1].

These challenges are particularly acute in comparative psychology and drug development research, where precise cognitive assessment is essential for evaluating interventions or making cross-species comparisons.

Operationalization Frameworks for Key Cognitive Constructs

A Process Model for Complex Thinking

Recent research has proposed conceptual models with operational definitions for higher-order cognitive processes. One such model operationalizes "complex thinking" through three interrelated cognitive processes: critical thinking, creative thinking, and metacognition [22].

Table 2: Operational Definitions of Complex Thinking Components

| Cognitive Process | Conceptual Definition | Operational Definition Examples | Measurement Approaches |

|---|---|---|---|

| Critical Thinking | Ability to analyze, evaluate, and reconstruct thinking processes | Monitoring and control of inferences through reasoning | Standardized critical thinking tests, analysis of argument quality |

| Creative Thinking | Capacity for innovative, divergent thought and problem-solving | Performance on divergent thinking tasks, novel solution generation | Alternative Uses Test, Torrance Tests of Creative Thinking |

| Metacognition | Awareness and regulation of one's own thinking processes | Self-report on cognitive strategies, accuracy of learning predictions | Metacognitive Awareness Inventory, think-aloud protocols |

The interdependence and complementarity of these three cognitive processes enable complex thinking, which is characterized by its multidimensional, self-aware, and self-correcting nature [22]. Research suggests that metacognition plays a particularly crucial role in complex thinking, serving as a "metacompetence" that regulates other cognitive processes.

Methodology for Developing Operational Definitions

Creating effective operational definitions requires a systematic approach. The following workflow outlines the process from conceptualization to measurement:

Figure 1. Workflow for developing operational definitions of cognitive terminology.

The process begins with identifying the abstract construct of interest, then proceeds through literature review to understand how the construct has been previously defined and measured. Researchers then identify observable indicators of the construct, select appropriate measurement methods, define specific measurement criteria, and pilot test the definition before final implementation [21].

Key steps in creating effective operational definitions include:

- Identify the concept or construct: Clearly define the psychological construct to be measured, reviewing relevant theory and literature [21].

- Determine how the construct will be observed: Identify behaviors, physiological responses, or self-report metrics that represent the construct [21].

- Select a specific measurement method: Choose from behavioral observations, psychometric tools, physiological measures, or performance-based tasks [21].

- Define the criteria for measurement: Articulate precise criteria for what will be counted or measured, including units, time frames, and context [21].

- Pilot test the definition: Conduct preliminary testing to identify ambiguities and refine measurement criteria before full implementation [21].
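One way to make these steps concrete is to record each operational definition as a structured object and check it for gaps before data collection. The Python sketch below is a hypothetical illustration: the class name, field names, and the completeness check are assumptions introduced here, and the example values for "stress" are illustrative only.

```python
from dataclasses import dataclass, field


@dataclass
class OperationalDefinition:
    """Hypothetical record mirroring the five steps described above."""
    construct: str
    conceptual_definition: str
    indicators: list
    measurement_method: str
    criteria: dict = field(default_factory=dict)  # expects unit, time_frame, context
    pilot_tested: bool = False

    def missing_elements(self):
        """List the elements that are still undefined."""
        missing = []
        if not self.conceptual_definition.strip():
            missing.append("conceptual definition")
        if not self.indicators:
            missing.append("observable indicators")
        if not self.measurement_method.strip():
            missing.append("measurement method")
        for key in ("unit", "time_frame", "context"):
            if not self.criteria.get(key):
                missing.append(f"criterion: {key}")
        if not self.pilot_tested:
            missing.append("pilot test")
        return missing


# Illustrative values only; not taken from any cited study.
stress_definition = OperationalDefinition(
    construct="stress",
    conceptual_definition="Perceived psychological strain in students",
    indicators=["Perceived Stress Scale (PSS) score", "heart rate"],
    measurement_method="psychometric tool",
    criteria={"unit": "PSS total score", "time_frame": "single session"},
)

print(stress_definition.missing_elements())
# prints ['criterion: context', 'pilot test']
```

A definition is ready for full implementation only when `missing_elements()` returns an empty list, which makes the pilot-testing step an explicit gate rather than an afterthought.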

Experimental Protocols for Cognitive Terminology Research

Cross-Journal Terminology Analysis Protocol

The research on cognitive terminology usage in comparative psychology journals employed a systematic protocol that can be adapted for cross-journal comparisons in other domains:

Data Collection:

- Article titles were downloaded from databases for three comparative psychology journals spanning 1940-2010

- The basic unit of analysis was the volume or year, with 8,572 titles containing approximately 115,000 words analyzed [1]

Term Identification:

- Cognitive words were defined as those referring to mental processes, emotions, or presumed brain/mind processes

- A predefined list of cognitive terms was used, including: affect, attention, awareness, categorization, cognition, concept, emotion, memory, mind, motivation, perception, and reasoning [1]

- Words with the root "behav" were separately identified for comparison

Analysis Approach:

- The Dictionary of Affect in Language (DAL) was employed to score emotional connotations of title words

- Relative frequencies of cognitive versus behavioral terminology were calculated by volume-year

- Statistical analyses tracked changes in terminology usage patterns over time [1]

This protocol provides a template for analyzing terminology patterns across journals, time periods, or research domains.
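The counting step of this protocol can be sketched in Python as follows. The cognitive term list and the "behav" root come from the protocol above; everything else is an assumption made for illustration, including the tokenization rule, the exact-match treatment of cognitive terms (so "memories" would not count), and the four invented example titles.

```python
import re
from collections import defaultdict

# Term list and root as given in the protocol description.
COGNITIVE_TERMS = {
    "affect", "attention", "awareness", "categorization", "cognition", "concept",
    "emotion", "memory", "mind", "motivation", "perception", "reasoning",
}
BEHAVIORAL_ROOT = "behav"


def tokenize(title):
    """Lowercase the title and keep alphabetic word tokens (assumed rule)."""
    return re.findall(r"[a-z]+", title.lower())


def frequencies_by_year(records):
    """Relative frequency of cognitive and behavioral words per year.

    `records` is an iterable of (year, title) pairs.
    """
    totals = defaultdict(lambda: {"words": 0, "cognitive": 0, "behavioral": 0})
    for year, title in records:
        counts = totals[year]
        for word in tokenize(title):
            counts["words"] += 1
            if word in COGNITIVE_TERMS:
                counts["cognitive"] += 1
            if BEHAVIORAL_ROOT in word:
                counts["behavioral"] += 1
    return {
        year: {
            "cognitive": c["cognitive"] / c["words"],
            "behavioral": c["behavioral"] / c["words"],
        }
        for year, c in sorted(totals.items())
        if c["words"]
    }


# Invented example titles, not drawn from the analyzed journals.
sample_titles = [
    (1950, "Maze behavior in the rat"),
    (1950, "Reinforcement and response rate"),
    (2005, "Memory and attention in pigeons"),
    (2005, "Social behavior of chimpanzees"),
]

freqs = frequencies_by_year(sample_titles)
for year, values in freqs.items():
    print(year, values)
```

Grouping by year mirrors the volume-year unit of analysis; the same function could group by journal or decade by changing the key supplied in each record.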

Language Performance as a Longevity Predictor Protocol

A landmark study demonstrating precise operationalization of cognitive constructs examined language performance as a predictor of longevity:

Study Design:

- Researchers analyzed data from the Berlin Aging Study (BASE), which tracked 516 older adults (70-105 years at enrollment) for up to 18 years [23]

Operational Definitions:

- Language performance: Measured by tasks like naming as many animals as possible within 90 seconds

- Perceptual speed: Operationalized through visual processing tasks

- Verbal knowledge: Measured through vocabulary assessments

- Episodic memory: Assessed through recall of specific events or information [23]

Statistical Analysis:

- Researchers employed a joint multivariate longitudinal survival model

- This advanced approach evaluated both current cognitive abilities and their change over time while predicting mortality risk [23]

The study found that language performance—operationalized as verbal fluency—was the strongest predictor of longevity among cognitive measures, demonstrating the importance of precise operational definitions in identifying clinically meaningful relationships [23].
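To show what an operational definition of verbal fluency looks like in practice, the Python sketch below scores a category fluency trial as the number of distinct valid items produced within the time limit. The scoring rules (ignoring repetitions, out-of-category words, and late responses) are common conventions assumed here, not details reported for the Berlin Aging Study, and the response data are invented.

```python
def verbal_fluency_score(responses, valid_items, time_limit_s=90.0):
    """Count distinct valid items named within the time limit.

    `responses` is a list of (timestamp in seconds, spoken word) pairs.
    """
    seen = set()
    for timestamp_s, word in responses:
        normalized = word.strip().lower()
        within_time = 0 <= timestamp_s <= time_limit_s
        if within_time and normalized in valid_items:
            seen.add(normalized)  # a set ignores repetitions automatically
    return len(seen)


# Invented trial: one repetition, one non-animal, one late response.
animal_list = {"dog", "cat", "horse", "zebra"}
trial = [
    (2.0, "dog"),
    (5.5, "Cat"),
    (9.0, "dog"),
    (30.0, "table"),
    (88.0, "horse"),
    (95.0, "zebra"),
]

score = verbal_fluency_score(trial, animal_list)
print(score)  # prints 3
```

Writing the rules down this explicitly is what allows two laboratories to obtain the same score from the same recording.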

Research Reagent Solutions for Cognitive Terminology Studies

Table 3: Essential Methodological Tools for Cognitive Terminology Research

| Research Tool | Primary Function | Application Context | Key Features |

|---|---|---|---|

| Dictionary of Affect in Language (DAL) | Evaluates emotional connotations of words | Analysis of textual materials, journal titles | Provides ratings on Pleasantness, Activation, and Imagery (concreteness) dimensions [1] |

| Standardized Cognitive Batteries | Assess specific cognitive abilities | Longitudinal studies, clinical trials | Provide normative data, validated measures of constructs like memory, attention [23] |

| Joint Multivariate Longitudinal Survival Models | Statistical analysis of cognitive-longevity relationships | Studies linking cognitive performance to health outcomes | Analyzes both ability levels and change over time while predicting events [23] |

| Computational Linguistic Analysis | Automated analysis of terminology patterns | Large-scale text analysis, cross-journal comparisons | Enables processing of large text corpora (>100,000 words) [1] |

These "research reagents" provide essential methodological infrastructure for conducting rigorous studies of cognitive terminology usage and its relationship to other variables of interest.

Visualization Approaches for Cognitive Terminology Relationships

Effective visualization of relationships between cognitive concepts is essential for communicating research findings. The following diagram represents the conceptual structure of complex thinking as identified in recent research:

Figure 2. Conceptual structure of complex thinking and its cognitive components.

This visualization illustrates how complex thinking emerges from the integration of critical, creative, and metacognitive processes, which in turn encompass more specific thinking styles [22]. The relationships between these components highlight the multidimensional nature of complex cognition.

When creating such visualizations, it is essential to follow data visualization best practices:

- Use appropriate color palettes: Select colors that provide sufficient contrast and are accessible to colorblind readers [24]

- Limit unnecessary color usage: Employ color strategically to emphasize key relationships rather than decoratively [24]

- Maintain consistency: Use consistent color schemes across related visualizations to facilitate interpretation [24]

This comparison guide has examined current approaches to defining cognitive terminology, with particular emphasis on cross-journal analysis of definitional practices. The evidence reveals both progress and challenges in the field: while cognitive terminology has become increasingly prevalent in psychological research, inconsistency in operational definitions continues to impede comparability across studies.

The most effective approaches to defining cognitive terminology incorporate clear conceptual definitions grounded in theoretical frameworks, coupled with precise operational definitions that specify measurable indicators. The development of standardized protocols for operationalizing cognitive constructs—such as the complex thinking model outlined in this guide—represents a promising direction for enhancing methodological rigor in psychological research, neuroscience, and drug development.

For researchers and professionals working with cognitive terminology, we recommend: (1) explicitly articulating both conceptual and operational definitions in research reports; (2) adopting established measurement approaches when available; and (3) contributing to the development of standardized definitions that can facilitate comparison across studies and research domains. Such practices will advance the field toward more cumulative, replicable, and applicable knowledge about cognitive processes and their assessment.

The comparative study of cognitive terminology and processes in humans and animals is a cornerstone of modern neuroscience and psychology. This field seeks to understand the evolutionary origins of human cognition by investigating analogous processes in other species, while also recognizing the unique aspects of human cognitive abilities. Research in this domain employs a diverse methodological toolkit, ranging from observational studies in natural settings to controlled laboratory experiments and advanced neuroimaging techniques. The fundamental premise underlying this comparative approach is that despite significant behavioral and neurological differences, humans and animals share basic cognitive building blocks rooted in our shared evolutionary history. This article provides a systematic comparison of how cognitive research is conducted across human and animal models, examining the terminology, methodologies, and conceptual frameworks that both unite and distinguish these research traditions. By synthesizing findings from recent studies across multiple species, we aim to illuminate the shared and unique aspects of cognitive processing across the phylogenetic scale and provide researchers with a practical guide to navigating this complex interdisciplinary landscape.

Comparative Analysis of Cognitive Terminology and Conceptual Frameworks

Table 1: Cognitive Terminology Application Across Species

| Cognitive Term | Human Research Application | Animal Research Application | Cross-Species Validation |
|---|---|---|---|
| Learning | Explicit & implicit learning systems; educational contexts; cognitive development | Associative learning (classical/operant conditioning); skill acquisition (e.g., nut-cracking in chimpanzees) | Quantitative models applicable to both humans and animals [25] |
| Memory | Episodic, semantic, working memory; neuropsychological assessments | Spatial memory; procedural memory; cache retrieval in food-storing species | Brain imaging reveals similar hippocampal involvement in humans and animals [26] |
| Problem-Solving | Executive function tests; innovation; technological development | Tool use (e.g., chimpanzee nut-cracking techniques); obstacle bypass; puzzle boxes | Observational paradigms adapted for cross-species comparison [27] |
| Emotional Attachment | Parent-child bonding; romantic attachment; social networks | Human-pet bonding (e.g., dog-owner relationships); intra-species social bonds | Public perception correlates cognitive abilities with bonding capacity [28] |
| Cognitive Decline | Alzheimer's disease; age-related memory impairment; dementia | Age-related skill loss (e.g., tool use proficiency in elderly chimpanzees) | Similar patterns of age-related decline observed in wild chimpanzees [27] |
| Suffering Capacity | Pain scales; psychological distress measures; quality of life indices | Behavioral indicators of distress; physiological stress markers; approach-avoidance | Public perception recognizes suffering across species, weighted toward mammals [28] |

Table 2: Methodological Approaches in Cognitive Research

| Research Method | Human Applications | Animal Applications | Comparative Advantages |
|---|---|---|---|
| Quantitative Behavioral Analysis | Standardized psychological tests; rating scales; performance metrics | Owner questionnaires; trained observer coding; automated behavior tracking | Enables direct statistical comparison; operationalizes abstract concepts [20] [29] |
| Genetic Analysis | Genome-wide association studies (GWAS) for behavioral traits | Breed-specific genetic mapping; selective breeding studies | Identifies conserved genetic mechanisms (e.g., golden retriever-human gene sharing) [30] |
| Neuroimaging | fMRI, PET, MRI for brain activity mapping | Comparative neuroanatomy; functional imaging in trained animals | Reveals neural correlates of cognitive processes across species [26] |
| Longitudinal Observation | Lifespan development studies; cognitive aging research | Wild population monitoring (e.g., Bossou chimpanzee community) | Tracks cognitive changes across lifespan in naturalistic settings [27] |
| Experimental Manipulation | Controlled laboratory tasks; intervention studies | Lesion studies; pharmacological interventions; environmental manipulations | Establishes causal relationships but raises ethical concerns [31] |

Key Experimental Protocols and Methodologies

Genomic Correlates of Behavioral Traits

Protocol Title: Genome-Wide Association Study (GWAS) for Cross-Species Behavioral Traits

Objective: To identify shared genetic variants underlying similar behavioral traits in humans and golden retrievers.

Methodology Details: Researchers analyzed the complete genetic code of 1,300 golden retrievers participating in the Golden Retriever Lifetime Study, comparing genetic markers with behavioral assessments obtained through detailed owner questionnaires covering 73 specific behaviors. These behaviors were grouped into 14 reliable categories including trainability, stranger-directed fear, dog-directed aggression, and non-social fear. The team employed strict statistical thresholds to identify genetic loci significantly associated with each behavioral trait, then compared these findings with known human genetic associations using databases from human psychological genetics studies.

Key Findings: The study identified twelve genes with significant behavioral associations in both species. Notably, the PTPN1 gene, associated with aggression toward other dogs in golden retrievers, is also associated with intelligence and depression in humans. Another gene variant linked to fearfulness in dogs is associated in humans with both the tendency to ruminate after embarrassing events and educational attainment. The ROMO1 gene, associated with trainability in dogs, is linked to intelligence and emotional sensitivity in humans [30].
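The cross-species comparison step described in this protocol can be illustrated with a minimal Python sketch. It is not the study's pipeline: the threshold of 5e-8 is the conventional genome-wide significance level in human GWAS and is assumed here because the source only mentions "strict statistical thresholds", the function names are hypothetical, the p-values are invented for illustration, and gene symbols are assumed to be already mapped to orthologs across species.

```python
from typing import Dict, Set

# Conventional genome-wide significance level in human GWAS. The source only
# says "strict statistical thresholds", so this value is an assumption.
GENOME_WIDE_ALPHA = 5e-8


def significant_genes(p_values: Dict[str, float],
                      alpha: float = GENOME_WIDE_ALPHA) -> Set[str]:
    """Return the genes whose association p-value is strictly below alpha."""
    return {gene for gene, p in p_values.items() if p < alpha}


def shared_genes(dog: Dict[str, float], human: Dict[str, float],
                 alpha: float = GENOME_WIDE_ALPHA) -> Set[str]:
    """Return genes that pass the threshold in both species."""
    return significant_genes(dog, alpha) & significant_genes(human, alpha)


# Invented p-values for illustration only; these are not results from [30].
dog_trainability = {"ROMO1": 1e-9, "GENE_A": 3e-4, "GENE_B": 2e-8}
human_intelligence = {"ROMO1": 4e-10, "GENE_A": 1e-9, "GENE_C": 6e-9}

print(sorted(shared_genes(dog_trainability, human_intelligence)))
# prints ['ROMO1']
```

Intersecting the two thresholded sets keeps only genes that reach significance independently in each species, which is stricter than pooling the evidence and is one reason such overlaps tend to be small.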

Age-Related Cognitive Decline in Natural Settings

Protocol Title: Longitudinal Observation of Tool Use Proficiency in Aging Wild Chimpanzees

Objective: To document patterns of age-related cognitive decline in wild chimpanzees through systematic observation of tool-use behaviors.

Methodology Details: Researchers analyzed decades of video footage from the Bossou chimpanzee community in Guinea, West Africa, where scientists have maintained an "outdoor laboratory" since 1988. This site features a clearing stocked with stones and nuts so that chimpanzee tool use can be observed. The study focused on nut-cracking, a culturally transmitted skill requiring complex sequence learning, fine motor coordination, and causal understanding. Researchers coded videos for proficiency metrics including hammer stone selection accuracy, nut alignment precision, strike efficiency, and success rate. Individuals of known age were tracked across their lifespan to compare performance at different life stages.

Key Findings: Researchers observed significant age-related declines in tool-use proficiency among elderly chimpanzees, including increased confusion with previously mastered tasks, frequent tool changes, misalignment of nuts, and longer processing times. These patterns resemble human age-related cognitive decline and may point to evolutionary roots for conditions like Alzheimer's disease that date back at least to our last common ancestor with chimpanzees, approximately 6-8 million years ago [27].
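As a rough illustration of how coded video bouts could be turned into the proficiency metrics named in this protocol, the Python sketch below aggregates success rate, strikes per cracked nut, and tool changes per bout for younger and older individuals. The `Bout` record, the 40-year cutoff, and all numbers are hypothetical and are not taken from the Bossou dataset [27].

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Bout:
    """One coded nut-cracking bout (hypothetical coding scheme)."""
    age_years: int
    nuts_attempted: int
    nuts_cracked: int
    strikes: int
    tool_changes: int


def summarize(bouts: List[Bout]) -> Dict[str, float]:
    """Aggregate proficiency metrics over a list of bouts."""
    attempted = sum(b.nuts_attempted for b in bouts)
    cracked = sum(b.nuts_cracked for b in bouts)
    strikes = sum(b.strikes for b in bouts)
    changes = sum(b.tool_changes for b in bouts)
    nan = float("nan")  # returned when a ratio is undefined
    return {
        "success_rate": cracked / attempted if attempted else nan,
        "strikes_per_cracked_nut": strikes / cracked if cracked else nan,
        "tool_changes_per_bout": changes / len(bouts) if bouts else nan,
    }


def by_age_group(bouts: List[Bout],
                 old_age_cutoff: int = 40) -> Dict[str, Dict[str, float]]:
    """Split bouts at an assumed age cutoff and summarize each group."""
    groups: Dict[str, List[Bout]] = {"younger": [], "older": []}
    for b in bouts:
        key = "older" if b.age_years >= old_age_cutoff else "younger"
        groups[key].append(b)
    return {name: summarize(group) for name, group in groups.items()}


# Invented example data, for illustration only.
bouts = [
    Bout(age_years=25, nuts_attempted=10, nuts_cracked=9, strikes=27, tool_changes=0),
    Bout(age_years=30, nuts_attempted=8, nuts_cracked=7, strikes=21, tool_changes=1),
    Bout(age_years=50, nuts_attempted=10, nuts_cracked=5, strikes=40, tool_changes=3),
    Bout(age_years=52, nuts_attempted=6, nuts_cracked=3, strikes=24, tool_changes=2),
]

for group, metrics in by_age_group(bouts).items():
    print(group, metrics)
```

Pooling counts before dividing, rather than averaging per-bout ratios, keeps short bouts from dominating the group estimate. A longitudinal analysis would additionally compare each individual with its own earlier performance.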

Public Perception of Cognitive Abilities Across Species

Protocol Title: Quantitative Assessment of Public Perception of Animal Cognition

Objective: To measure how the general public perceives cognitive abilities, emotional capacity, and susceptibility to suffering across different pet species.

Methodology Details: Researchers employed survey methodology with quantitative rating scales to assess public perception of cognitive capabilities across different animal classes (mammals, birds, reptiles, etc.). Participants rated various species on dimensions including problem-solving ability, memory, communication skills, emotional attachment to owners, and capacity to experience suffering. Statistical analysis examined patterns in these perceptions and correlations between perceived cognitive ability and other attributes.

Key Findings: Public perception of cognitive capabilities follows a phylogenetic scale, with mammals receiving the highest scores followed by birds, reptiles, amphibians, and fish. Strong positive correlations emerged between perceived cognitive ability and believed capacity for both suffering and emotional attachment. This perception pattern has potential welfare implications, as species judged as less cognitively capable may receive less sophisticated care [28].
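The two analyses reported here, ranking animal classes by perceived cognition and correlating perceived cognition with perceived suffering, can be sketched in a few lines of Python. The mean ratings below are invented on an assumed 1-7 scale and the helper name `pearson` is hypothetical. The study [28] presumably worked with participant-level responses rather than five class means, so this shows only the shape of the calculation.

```python
import math
from typing import Sequence


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two samples of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


# Invented mean ratings per animal class (assumed 1-7 scale), illustration only.
classes = ["mammals", "birds", "reptiles", "amphibians", "fish"]
perceived_cognition = [6.1, 5.2, 3.4, 2.9, 2.5]
perceived_suffering = [6.4, 5.5, 4.0, 3.6, 3.3]

# Rank classes from highest to lowest perceived cognition.
ranked = [name for _, name in sorted(zip(perceived_cognition, classes), reverse=True)]
r = pearson(perceived_cognition, perceived_suffering)

print(ranked)
print(round(r, 3))
```

With ordinal rating scales, a rank-based coefficient such as Spearman's would be a reasonable alternative to Pearson's r.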

Visualizing Comparative Cognitive Research Approaches

[Figure: Cognitive Perception Phylogenetic Scale]

[Figure: Cross-Species Genetic Behavioral Links]

The Researcher's Toolkit: Essential Materials and Methods

Table 3: Research Reagent Solutions for Comparative Cognitive Studies

| Research Tool | Application in Human Research | Application in Animal Research | Functional Purpose |
|---|---|---|---|
| Owner Questionnaires | Self-report psychological inventories; informant reports of functioning | Standardized owner assessments of pet behavior (e.g., Golden Retriever Lifetime Study) | Quantifies behavioral traits and emotional tendencies in natural context [30] |
| fMRI/MRI Systems | 3T-7T scanners for human brain mapping; functional connectivity studies | Comparative neuroanatomy; trained animal imaging; Iseult 11.7T for detailed resolution | Non-invasive brain structure and function analysis; cross-species comparisons [26] |
| Genetic Sequencing Platforms | Human genome-wide association studies; psychiatric genetics | Canine genome mapping; breed-specific behavioral genetics | Identifies conserved genetic mechanisms of behavior and cognition [30] |
| Video Recording Systems | Controlled experimental documentation; naturalistic observation | Wild behavior monitoring (e.g., chimpanzee tool use); laboratory behavior coding | Permanent record for detailed behavioral analysis and reliability coding [27] |
| Standardized Cognitive Tests | IQ tests; memory batteries; executive function tests | Species-appropriate problem-solving tasks; learning assays | Operationalizes cognitive constructs for quantitative comparison [25] [29] |
| Digital Behavior Coding Software | Human movement analysis; facial expression coding | Animal behavior ethograms; automated pattern recognition | Objective quantification of behavioral sequences and patterns [20] |

Discussion: Integrating Comparative Approaches

The comparative study of cognitive terminology across human and animal research reveals both deep conservation and notable specialization in cognitive processes. Quantitative approaches have been particularly valuable in enabling direct comparisons across species, though they must be carefully adapted to account for species-specific characteristics and ecological contexts. The genetic findings pointing to shared behavioral foundations between humans and golden retrievers [30] are consistent with conserved neurobiological mechanisms underlying cognition and emotion across mammals, although evidence from a single breed cannot establish this on its own.

Methodologically, the field continues to balance controlled laboratory studies with naturalistic observation, each offering complementary strengths. Laboratory studies enable precise variable control and manipulation, while naturalistic observations preserve ecological validity and reveal cognitive abilities in evolutionarily relevant contexts. The documented patterns of age-related cognitive decline in wild chimpanzees [27], for instance, provide unique insights that would be impossible to obtain in artificial laboratory settings alone.

Future research directions should include more sophisticated cross-species cognitive test batteries, improved genetic tools for mapping behavioral traits, and advanced neuroimaging techniques that can be applied across multiple species. Additionally, researchers must continue to address the ethical considerations inherent in comparative cognitive research, particularly those concerning animal welfare and the interpretation of findings in ways that respect both the similarities and the differences between human and animal minds [28] [31].

Measuring Cognition: Methodological Frameworks and Clinical Applications

Cognitive Performance Outcomes (Cog-PerfOs) in Drug Development

In the landscape of neurology and psychiatry drug development, Cognitive Performance Outcomes (Cog-PerfOs) serve as essential tools for quantifying the efficacy of therapeutic interventions targeting cognitive symptomatology. These measurements of mental performance, completed through answering questions or performing tasks, constitute primary or key secondary endpoints in clinical trials for conditions where cognitive impairment represents core disease pathology [32]. The rigorous validation and appropriate implementation of Cog-PerfOs have become increasingly critical as the drug development pipeline expands, particularly for Alzheimer's disease (AD) and related dementias. As of 2025, the AD drug development pipeline alone hosts 182 clinical trials investigating 138 novel drugs, with biological and small molecule disease-targeted therapies comprising 30% and 43% of the pipeline respectively [16]. Within this context, Cog-PerfOs provide the necessary metrics to determine whether these investigational therapies effectively address the cognitive manifestations that profoundly impact patients' daily functioning and quality of life.

The emerging recognition of cognitive dysfunction as a therapeutic target across numerous neurological and psychiatric conditions has intensified the focus on refining Cog-PerfO methodologies. Despite their central role in clinical research, significant challenges persist in demonstrating Cog-PerfO validity, including establishing content validity, ecological validity, and ensuring appropriate application across multinational contexts [32]. Simultaneously, advances in our understanding of cognitive assessment have revealed the limitations of relying exclusively on traditional cognitive screening tools, with growing evidence supporting the integration of functional cognitive assessments that better reflect real-world performance [33]. This comparative guide examines the current state of Cog-PerfOs in drug development, providing researchers with a structured analysis of assessment methodologies, validation frameworks, and emerging approaches that collectively shape the evaluation of cognitive therapeutics.

Current Drug Development Pipeline and Cognitive Assessment Landscape

Alzheimer's Disease Drug Development Pipeline Analysis

The 2025 Alzheimer's disease drug development pipeline demonstrates substantial growth and diversification, reflecting intensified efforts to address the mounting global burden of cognitive disorders. According to an assessment of the ClinicalTrials.gov registry, the current pipeline includes 138 drugs being evaluated across 182 clinical trials, an increase in both trials and drugs compared with the 2024 pipeline [16]. This expansion coincides with the emergence of real-world evidence for newly available anti-amyloid therapies: studies presented at the 2025 Alzheimer's Association International Conference (AAIC) reported on the effectiveness of, and patient satisfaction with, lecanemab and donanemab in clinical practice settings [34] [35].

Table 1: 2025 Alzheimer's Disease Drug Development Pipeline Composition

| Therapeutic Category | Percentage of Pipeline | Representative Mechanisms/Targets |
|---|---|---|
| Biological Disease-Targeted Therapies (DTTs) | 30% | Monoclonal antibodies (amyloid, tau), vaccines, antisense oligonucleotides |
| Small Molecule DTTs | 43% | Synaptic plasticity, neuroprotection, inflammation, oxidative stress |
| Cognitive Enhancement | 14% | Neurotransmitter modulation |
| Neuropsychiatric Symptoms | 11% | Agitation, psychosis, apathy |
| Repurposed Agents | 33% (overlaps with the categories above) | Drugs approved for other indications |