Cognitive Word Identification Protocols: From Digital Biomarkers to Clinical Applications in Neurodegenerative Disease Research

Abstract

This article synthesizes current methodologies and applications of cognitive word identification protocols, a critical tool for assessing cognitive health in neurological and psychiatric research. We explore the foundational theories linking language production to cognitive domains such as memory and executive function, and detail standardized protocols ranging from picture description to structured recall tasks. The article critically evaluates common pitfalls in cognitive assessment, including limited ecological validity and the need for cultural adaptation, and discusses how to troubleshoot them. Furthermore, we examine validation frameworks, including performance against standardized neuropsychological batteries and the emergence of AI-driven speech analysis as a sensitive digital biomarker. Written for researchers and drug development professionals, this review highlights how these protocols are being applied to early detection, monitoring, and therapeutic evaluation in conditions such as Alzheimer's disease, mild cognitive impairment, and post-stroke cognitive impairment.

The Cognitive-Linguistic Nexus: Theoretical Foundations of Word Identification in Brain Health

Language production is a complex cognitive feat that relies on the coordination of core cognitive domains. A substantial body of evidence indicates that executive functions (EF), working memory, and attention are indispensable for the learning, processing, and production of language [1] [2]. These domain-general cognitive control mechanisms enable the planning, monitoring, and updating of linguistic information in real time. This article applies this foundational relationship to experimental protocols, providing a framework for research on cognitive word identification and language analysis. The following sections synthesize key quantitative findings, provide detailed methodological procedures, and visualize the underlying cognitive architecture supporting language production.

Key Quantitative Findings: Cognitive-Language Correlations

Research consistently demonstrates significant correlations between specific cognitive functions and various metrics of language production. The table below summarizes key quantitative relationships established in recent studies.

Table 1: Documented Correlations Between Cognitive Functions and Language Production Metrics

| Cognitive Domain | Specific Measure | Language Production Metric | Correlation/Effect Strength | Population | Source |
|---|---|---|---|---|---|
| Working Memory | Verbal Working Memory | Grammatical Accuracy | Systematically stronger relationship | 5-6 year olds | [3] |
| Working Memory | Verbal & Visual Working Memory | Story Macrostructure (Semantic completeness, adequacy) | Significant relationship | 5-6 year olds | [3] |
| Inhibition | Inhibition Ability | Receptive Vocabulary Knowledge | Significant association | 3-4 year olds | [2] |
| Cognitive Flexibility | Task Shifting | Poetry Discourse Comprehension | Significantly better performance in high-CF students | 1st Graders with ADHD | [4] |
| Working Memory | Updating/Monitoring | Sentence Comprehension & Production | Underlying mechanism | Literature Review | [1] |

Experimental Protocols for Assessing Cognitive-Language Links

This section provides detailed methodologies for key experiments that probe the interface between core cognitive domains and language production.

Protocol: Narrative Production Task with Working Memory Assessment

This protocol is designed to evaluate the relationship between working memory capacity and narrative discourse, assessing both macrostructural and microstructural elements of language [3].

1. Objective: To investigate the respective contributions of verbal and visual working memory to the quality of oral narratives in school-aged children.

2. Participants: Typically developing children (e.g., 5-6 years old). Sample sizes of approximately 250 or more are recommended for robust correlational analysis.

3. Materials and Equipment:

- Working Memory Assessments:

- Verbal WM Task: A standardized task requiring the manipulation and recall of verbal information (e.g., non-word repetition, backward digit span).

- Visual-Spatial WM Task: A standardized task requiring the manipulation and recall of visual sequences (e.g., Corsi Blocks task).

- Narrative Elicitation Stimuli:

- A series of pictures depicting a coherent, age-appropriate story.

- A single, complex picture that can inspire a story creation.

- A short, pre-recorded narrative for a story retelling task.

- Recording Equipment: High-quality audio recorder for subsequent transcription and analysis.

4. Procedure:

- Cognitive Assessment: In a quiet room, administer the verbal and visual working memory tasks in a counterbalanced order.

- Narrative Elicitation: Administer three narrative tasks in a fixed or counterbalanced order:

- Story Creation (Picture Series): Present the picture series and instruct the participant: "Look at these pictures. They tell a story. Please tell me the story."

- Story Creation (Single Picture): Present the single picture and instruct the participant: "Look at this picture. Please make up a story about what is happening."

- Story Retelling: Play the pre-recorded narrative once, then instruct the participant: "Now, please tell me the story you just heard, as close to the original as you can."

- Data Recording: Audio-record all narrative productions for later verbatim transcription.

5. Data Analysis:

- Narrative Transcription: Transcribe audio recordings verbatim.

- Macrostructure Coding: Code narratives for:

- Semantic Completeness: Presence of all main story elements.

- Narrative Structure: Adherence to a "goal-attempt-outcome" schema [3].

- Story Programming: Overall coherence and logical sequence of the narrative.

- Microstructure Coding: Code narratives for:

- Grammatical Accuracy: Number of grammatical errors per T-unit.

- Verbal Productivity: Total number of words, syntagmas, and sentences.

- Statistical Analysis: Conduct multiple regression analyses with verbal and visual WM scores as predictors and macrostructural/microstructural scores as outcome variables.
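The regression step in the analysis above can be sketched in a few lines. This is a minimal illustration, assuming one outcome score per child and two working memory predictors; the function name `fit_ols` and the toy scores are hypothetical and are not taken from [3]. A real analysis would normally use a statistics package that also reports standard errors and model fit.

```python
def fit_ols(predictors, outcome):
    """Ordinary least squares via the normal equations (X'X) b = X'y.

    predictors: list of tuples, e.g. (verbal_wm, visual_wm) per child
    outcome:    list of scores, e.g. a macrostructure score per child
    Returns [intercept, b_1, b_2, ...] in predictor order.
    """
    X = [[1.0, *row] for row in predictors]  # prepend intercept column
    k = len(X[0])
    # Augmented matrix [X'X | X'y]
    A = [
        [sum(x[i] * x[j] for x in X) for j in range(k)]
        + [sum(x[i] * y for x, y in zip(X, outcome))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("predictors are collinear; model is not identified")
        A[col], A[pivot] = A[pivot], A[col]
        p = A[col][col]
        A[col] = [v / p for v in A[col]]
        for r in range(k):
            if r != col:
                f = A[r][col]
                A[r] = [a - f * b for a, b in zip(A[r], A[col])]
    return [A[i][k] for i in range(k)]


# Made-up toy scores (not study data): outcome built as 1 + 2*verbal + 0.5*visual
wm_scores = [(3, 4), (5, 2), (4, 6), (6, 5), (2, 3), (7, 7)]
macro_scores = [1 + 2 * verbal + 0.5 * visual for verbal, visual in wm_scores]
intercept, b_verbal, b_visual = fit_ols(wm_scores, macro_scores)
```

Because the toy outcome is constructed without noise, the fitted coefficients simply recover the generating weights; with real data, the relative size of `b_verbal` and `b_visual` (on standardized scores) is what speaks to the respective contributions of the two working memory components.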

Protocol: Eye-Tracking and Executive Function in Sentence Processing

This protocol uses eye-tracking to investigate how executive functions, particularly inhibition, support real-time sentence processing and vocabulary learning in young children [2].

1. Objective: To test the hypothesis that sentence processing abilities (specifically, maintaining multiple referents) mediate the relationship between EF and receptive vocabulary knowledge.

2. Participants: Young children (e.g., 3-4 years old), assessed longitudinally.

3. Materials and Equipment:

- EF Assessments:

- Inhibition: A developmentally sensitive task (e.g., Grass/Snow task, Day/Night Stroop).

- Working Memory: A task requiring storage and manipulation (e.g., Backward Digit Span).

- Cognitive Flexibility: A task requiring shifting between mental sets (e.g., Dimensional Change Card Sort).

- Language Assessment: A standardized test of receptive vocabulary (e.g., Peabody Picture Vocabulary Test).

- Eye-Tracking Setup: An eye-tracker integrated with a display screen.

- Stimuli for Sentence Processing Task: Sets of four images and corresponding spoken sentences, as developed by Borovsky et al. (2012) [2]. Example: Images of treasure (target), a ship (agent-related foil), bones (action-related foil), and a cat (unrelated foil), paired with the sentence "The pirate hides the treasure."

4. Procedure:

- Baseline Testing: Administer EF and receptive vocabulary tests at baseline.

- Eye-Tracking Calibration: Calibrate the eye-tracker for each participant.

- Sentence Processing Task:

- On each trial, four images are displayed on the screen.

- Participants listen to a sentence that describes a relationship between the images.

- The key measurement period is the anticipatory window—the time after the verb ("hides") is heard but before the onset of the final noun ("treasure").

- Data Collection: Eye movements are tracked and recorded at a high sampling rate (e.g., 500-1000 Hz).

5. Data Analysis:

- EF Factor Analysis: Perform a confirmatory factor analysis to determine the structure of EF (unitary vs. separable components).

- Eye-Tracking Metrics: Calculate the proportion of anticipatory looks to the target image during the critical time window.

- Mediation Analysis: Use structural equation modeling to test whether the ability to maintain multiple referents (as measured by anticipatory looks) mediates the relationship between EF scores (especially inhibition) and receptive vocabulary scores.
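As a rough sketch of the eye-tracking metric described above, the snippet below computes the proportion of anticipatory looks to the target for a single trial. The sample format (timestamp plus area-of-interest label), the label names, and the choice to divide by samples that fall on any image are assumptions made for illustration; they are not specified in [2]. The mediation model itself is not shown.

```python
def anticipatory_target_proportion(samples, verb_offset_ms, noun_onset_ms):
    """Proportion of on-image gaze samples that fall on the target
    during the anticipatory window [verb_offset_ms, noun_onset_ms).

    samples: list of (timestamp_ms, aoi), where aoi is one of
             "target", "agent_foil", "action_foil", "unrelated",
             or None when gaze is off all four images.
    Returns None if no sample in the window lands on an image.
    """
    window = [aoi for t, aoi in samples if verb_offset_ms <= t < noun_onset_ms]
    on_image = [aoi for aoi in window if aoi is not None]
    if not on_image:
        return None
    return on_image.count("target") / len(on_image)


# Hypothetical trial sampled at 500 Hz (one sample every 2 ms)
trial_samples = []
for t in range(0, 1000, 2):
    if t < 520:
        aoi = None            # gaze not yet on any image
    elif t < 700:
        aoi = "agent_foil"    # e.g. the ship
    else:
        aoi = "target"        # e.g. the treasure
    trial_samples.append((t, aoi))

proportion = anticipatory_target_proportion(
    trial_samples, verb_offset_ms=500, noun_onset_ms=900
)
```

Per-trial proportions would then typically be averaged within each child before entering the structural equation model.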

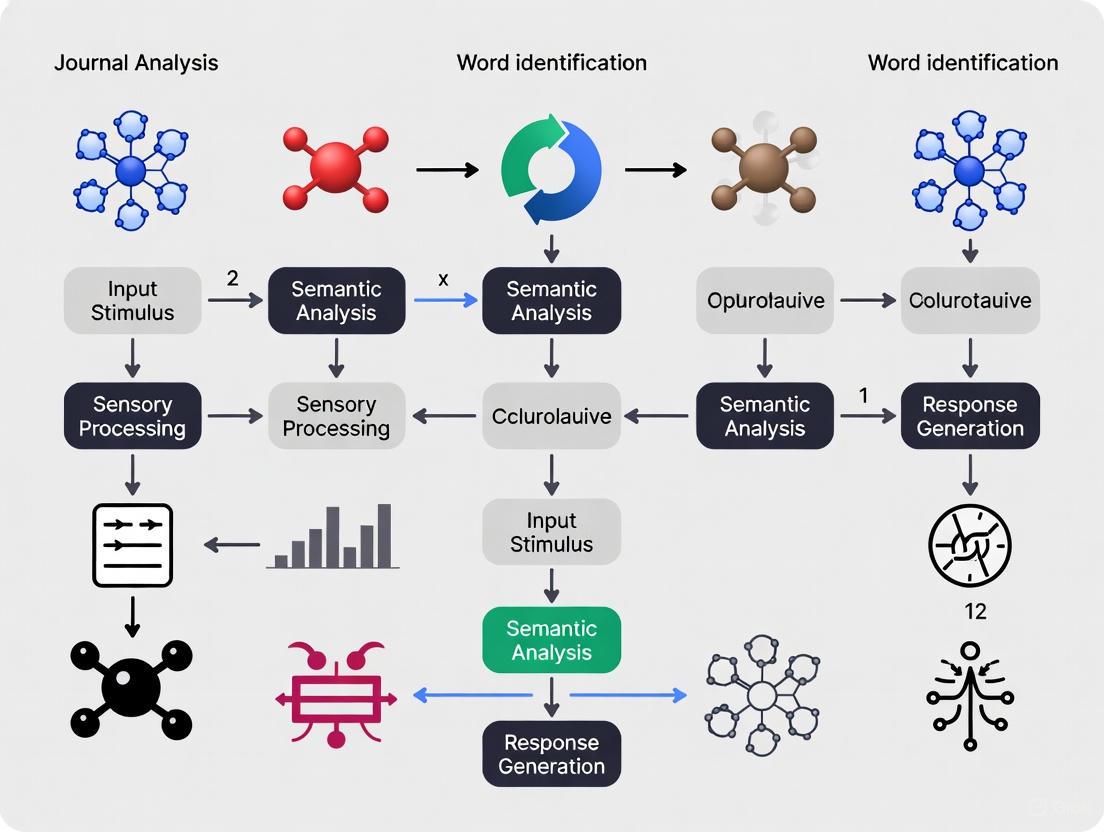

Visualization of the Cognitive Architecture of Language Production

The following diagram, generated using Graphviz DOT language, illustrates the proposed relationships and pathways between core cognitive domains and language production processes.

Diagram: Cognitive-Language Processing Pathway

The Scientist's Toolkit: Research Reagent Solutions

The following table outlines key materials and their functions for conducting research in cognitive word identification and language production.

Table 2: Essential Research Materials and Tools for Cognitive-Language Protocols

| Research Tool / Material | Primary Function in Protocol | Exemplars / Specifications |
|---|---|---|
| Standardized EF Tasks | To provide validated, developmentally sensitive measures of specific executive functions (Inhibition, WM, Cognitive Flexibility). | Grass/Snow Task (Inhibition); Backward Digit Span (WM); Dimensional Change Card Sort (Cognitive Flexibility) [2]. |
| Language Assessments | To quantify language proficiency, vocabulary, and narrative skills as outcome variables. | Peabody Picture Vocabulary Test (Receptive Vocab); standardized narrative assessment rubrics [3] [2]. |
| Eye-Tracker | To capture real-time, implicit measures of cognitive processing during language comprehension tasks with high temporal resolution. | EyeLink or Tobii systems; used to measure anticipatory looks in visual world paradigms [2]. |
| Stimulus Presentation Software | To ensure precise control over the timing and presentation of auditory and visual stimuli during experiments. | E-Prime, PsychoPy, or Presentation. |
| High-Fidelity Audio Recorder | To capture high-quality speech samples for subsequent verbatim transcription and linguistic analysis. | Portable digital recorders (e.g., Zoom H1n). |
| Cognitive Stimulation Software | To administer computerized, adaptive training programs targeting specific cognitive functions (e.g., working memory) and measure transfer effects to language. | Commercially available platforms like CogniFit or custom-designed tasks [1]. |

Understanding word recognition dynamics is a fundamental pursuit in cognitive psychology and neuroscience, with significant implications for diagnosing reading difficulties and developing cognitive interventions. This document frames the analysis of error patterns and reaction times (RTs) within Lexical Decision Tasks (LDTs) as a core cognitive word identification protocol. The LDT, in which participants classify letter strings as words or pseudowords, serves as a paradigmatic case for investigating the architecture of the reading system [5]. Traditionally, research has focused on mean accuracy and RTs for correct responses, treating them as separate indicators of performance. However, recent work indicates that the interplay between accuracy and speed, specifically the distribution of errors across the RT spectrum, provides a more nuanced marker of reading efficiency and its development [6] [7]. This application note details the protocols for implementing LDTs and analyzing error dynamics, giving researchers methodologies to identify objective cognitive markers relevant to broader research on neurocognitive health and performance.

Theoretical Background and Significance

Visual word recognition is a cornerstone of reading, a process where visual input is mapped onto lexical (word-based), semantic (meaning-based), and phonological (sound-based) representations [5]. A dominant theoretical framework posits that during reading, letters in the visual field activate multiple candidate word nodes in parallel [5]. The cognitive system must then resolve this competition to achieve accurate recognition. The LDT is a primary tool for probing this process, as it requires the participant to access lexical knowledge to make a binary decision.

A critical theoretical shift has moved the focus from static performance measures to the dynamics of how responses unfold over time. The analysis of error dynamics—specifically, whether errors are committed more quickly or slowly than correct responses—offers a window into the underlying cognitive mechanisms [6] [7]. Recent studies hypothesize that error dynamics can serve as an objective marker of reading efficiency and developmental progress [6]. For instance, a shift from slow word errors to fast pseudoword errors is correlated with improving reading skills in children, suggesting a refinement in the ability to inhibit automatic lexical processes when necessary [6]. This protocol focuses on capturing and analyzing these dynamics, providing a more sensitive measure than traditional analyses.

Experimental Protocols for Lexical Decision Tasks

Core Lexical Decision Protocol

This section outlines a standardized protocol for administering a lexical decision task suitable for analyzing error dynamics and RTs.

- Objective: To measure the speed and accuracy of lexical access by having participants classify visual letter strings as words or pseudowords.

- Participants: Recommendations vary by study focus. For developmental studies, sample sizes of ~50+ spanning different grade levels (e.g., Grade 1 and 2) are used [6]. Adult studies with a cognitive focus may use ~36 participants after screening [7].

- Stimulus Design:

- Word Stimuli: Select a set of words (e.g., 500 monosyllabic and bisyllabic words) from a standardized lexical database (e.g., LEXIQUE for French [7]). Control for key variables known to affect word recognition, such as word frequency, word length (e.g., 5-6 letters), and orthographic neighborhood (the number of words that can be formed by changing one letter).

- Pseudoword Stimuli: Create a matched set of pseudowords (e.g., 500 items) by replacing letters in the real words (e.g., the French word "achat" becomes "achou") [7]. Ensure pseudowords are pronounceable and obey the phonotactic rules of the language. Matching words and pseudowords on orthographic and phonological neighborhood, as well as letter and bigram frequency, is critical [7].

- Procedure:

- Participants are seated in front of a computer monitor in a quiet environment.

- Each trial begins with a fixation cross at the center of the screen (e.g., for 500 ms).

- A letter string (word or pseudoword) is presented until a response is given, or for a predetermined duration (e.g., 3000 ms).

- Participants are instructed to press one key (e.g., 'F') if the string is a word and another key (e.g., 'J') if it is not a word, as quickly and accurately as possible.

- The inter-trial interval (displaying a blank screen or fixation cross) is typically jittered (e.g., 500-1500 ms) to prevent rhythmic responding.
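The trial structure in the procedure above can be sketched as a randomized schedule with a jittered inter-trial interval. This is only an outline of the timing logic, assuming the example values given in the text (500 ms fixation, 3000 ms response deadline, 500-1500 ms jitter, 'F'/'J' keys); the function names are hypothetical, and actual stimulus display and key capture would be handled by presentation software. Apart from "achat" and "achou", the strings are made up and have not been checked against a lexicon.

```python
import random


def build_trials(words, pseudowords, seed=0, fixation_ms=500,
                 timeout_ms=3000, iti_range_ms=(500, 1500)):
    """Return a shuffled trial list with per-trial jittered ITIs."""
    rng = random.Random(seed)  # seeded so a schedule can be reproduced
    trials = [{"stimulus": w, "is_word": True, "correct_key": "f"} for w in words]
    trials += [{"stimulus": p, "is_word": False, "correct_key": "j"} for p in pseudowords]
    rng.shuffle(trials)
    for trial in trials:
        trial["fixation_ms"] = fixation_ms
        trial["timeout_ms"] = timeout_ms
        trial["iti_ms"] = rng.randint(*iti_range_ms)  # inclusive bounds
    return trials


def score_response(trial, key, rt_ms):
    """Score one response; key=None stands for a timeout and counts as an error."""
    return {
        "stimulus": trial["stimulus"],
        "is_word": trial["is_word"],
        "rt_ms": rt_ms,
        "correct": key == trial["correct_key"],
    }


trials = build_trials(["achat", "table", "livre"], ["achou", "tabre", "livou"])
first_scored = score_response(trials[0], trials[0]["correct_key"], rt_ms=640)
timed_out = score_response(trials[1], None, rt_ms=None)
```

Keeping error trials and their RTs in the scored output, rather than discarding them, is what makes the later error-dynamics analysis possible.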

Protocol for fMRI Lexical Decision Experiments

For neuroimaging studies, the protocol is adapted for the scanner environment. The following is based on a detailed experiment protocol [8].

- Objective: To identify brain regions involved in visual word recognition and relate neural activity to behavioral performance (RT and errors).

- Participants: Screen for MRI safety. A sample of ~17 healthy adults is typical [8].

- Stimulus Design:

- Procedure:

- Localizer Block: Images are presented rapidly (e.g., 0.8 s each) in a block design. Participants perform a one-back task to ensure attention [8].

- Event-Related Block: Stimuli are presented individually (e.g., for 300 ms) with a long, jittered inter-stimulus interval (e.g., 3.7 s) to separate the hemodynamic responses. Participants perform the standard lexical decision task [8].

- fMRI Acquisition Parameters (Example):

- Scanner: 3T Siemens Skyra.

- Coil: 32-channel head coil.

- Functional Sequence: T2*-weighted gradient-echo-planar imaging.

- Parameters: TR = 2 s, TE = 28 ms, flip angle = 79°, voxel size = 3×3×3 mm³, 33 slices [8].

- fMRI Data Preprocessing:

- Process data using standard software (e.g., SPM12).

- Steps include realignment, slice-time correction, co-registration with structural images, segmentation, normalization to a standard template (e.g., MNI), and smoothing [8].

- Denoising (e.g., with GLMdenoise) is recommended to improve the signal-to-noise ratio [8].

Data Analysis and Quantification

Analyzing Error Dynamics

Moving beyond mean RTs and accuracy is crucial for capturing the full dynamics of word recognition. Two complementary methodologies are recommended.

- Reaction Time Comparison for Correct vs. Error Trials: Calculate the mean RT for correct responses and error responses separately for words and pseudowords. A key finding is that pseudoword errors are often faster than correct pseudoword responses, whereas word errors can be slower than correct word responses [6]. This pattern suggests different cognitive origins for the two error types.

- Conditional Accuracy Functions (CAFs): This is a more powerful technique for visualizing error dynamics. The procedure is as follows [6] [7]:

- For each participant and condition (words/pseudowords), rank-order all trials from fastest to slowest RT.

- Divide the RT distribution into a manageable number of bins (e.g., 5-7 bins), each containing an equal number of trials.

- For each bin, calculate the accuracy rate (percentage of correct responses).

- Plot the accuracy rate as a function of the mean RT for each bin.

The shape of the CAF reveals the nature of errors:

- Fast Errors: A decrease in accuracy for the fastest RT bins. This is typically observed for pseudowords and is interpreted as a failure to inhibit an automatic "word" response driven by lexical activation [7].

- Slow Errors: A decrease in accuracy for the slowest RT bins. This can occur for both words and pseudowords and may be due to lapses in attention, response uncertainty, or a time-pressure-induced guess [7].

- Importantly, CAF patterns correlate with independent measures of reading skill: fewer slow word errors and more fast pseudoword errors are associated with better reading, which supports error dynamics as a marker of reading efficiency [6].
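The four CAF steps listed above (rank-order, bin, compute accuracy, pair with mean RT) can be sketched as follows. This is a minimal version for one participant and one condition; the function name and the toy trials are hypothetical, and the near-equal split used when the trial count is not divisible by the number of bins is an assumption rather than a rule from [6] or [7].

```python
def conditional_accuracy_function(trials, n_bins=5):
    """Compute a CAF for one participant and one condition.

    trials: list of (rt_ms, correct) pairs, correct being True/False
    Returns a list of (mean_rt_ms, accuracy) tuples, fastest bin first.
    """
    if len(trials) < n_bins:
        raise ValueError("need at least one trial per bin")
    ordered = sorted(trials, key=lambda trial: trial[0])  # fastest to slowest
    n = len(ordered)
    caf = []
    for b in range(n_bins):
        # Integer boundaries give bins of (near-)equal size
        chunk = ordered[b * n // n_bins:(b + 1) * n // n_bins]
        mean_rt = sum(rt for rt, _ in chunk) / len(chunk)
        accuracy = sum(1 for _, ok in chunk if ok) / len(chunk)
        caf.append((mean_rt, accuracy))
    return caf


# Made-up pseudoword trials showing a "fast error" profile
pseudoword_trials = [
    (700, True), (400, False), (950, True), (520, False), (600, True),
    (420, False), (900, True), (500, True), (720, True), (640, True),
]
caf = conditional_accuracy_function(pseudoword_trials, n_bins=5)
```

In this toy profile accuracy is lowest in the fastest bin and rises to ceiling in slower bins, which is the shape the text associates with fast pseudoword errors. With only two trials per bin the estimates are far too coarse for real use; the example exists only to show the mechanics.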

The table below summarizes key quantitative findings from recent studies on error dynamics in LDTs.

Table 1: Summary of Quantitative Findings in Error Dynamics Research

| Study Component | Key Quantitative Finding | Interpretation |
|---|---|---|
| Participant Sample [6] | 56 French-speaking children (22 Grade 1, 34 Grade 2) | Typical sample size for a developmental study. |
| Participant Sample [7] | 36 native French speakers (after screening; ~29 women); Mean age = 20.61 | Typical sample size for a young adult behavioral study. |
| Stimuli Count [7] | 500 words + 500 pseudowords | A large number of non-repeated items is recommended for robust CAF analysis. |
| CAF Bin Observations [7] | 100 observations per bin (5 bins total) | Exceeds recommendations for stable CAF estimation. |
| Error RT Pattern (Words) | Errors are slower than correct responses [6]. | Suggests hesitation or difficulty in lexical retrieval. |
| Error RT Pattern (Pseudowords) | Errors are faster than correct responses [6]. | Suggests impulsive responding due to failed inhibition of automatic lexical activation. |
| Correlation with Reading | Fewer slow word errors & more fast pseudoword errors → better reading skills [6]. | Error dynamics shift as reading expertise develops. |

Visualization of Experimental Workflow and Analysis

To enhance the clarity and reproducibility of the protocols, the following diagrams illustrate the core workflows.

Lexical Decision Task & CAF Analysis Workflow

The diagram below outlines the sequential process from experimental setup to the analysis of error dynamics.

Interpreting Conditional Accuracy Functions

This diagram maps the different CAF profiles to their theoretical interpretations, providing an analytical guide.

The Scientist's Toolkit: Research Reagents & Materials

The following table details essential materials and tools required for implementing the described LDT protocols.

Table 2: Essential Research Materials and Reagents for Lexical Decision Studies

| Item Name | Specification / Example | Primary Function in Research |
|---|---|---|
| Lexical Database | LEXIQUE 3 (French) [7]; SUBTLEX | Provides normative linguistic data (word frequency, neighborhood) for stimulus selection and matching. |
| Stimulus Presentation Software | PsychoPy, E-Prime, Presentation | Precisely controls the timing and display of stimuli and records behavioral responses (RT & accuracy). |
| Pseudo-letter Font | BACS stimulus set [9] | Provides well-designed, non-letter foils for alphabetic decision tasks, forcing full letter identification. |
| Standardized Reading Assessment | French "L'Alouette" test [7], TOWRE | Provides an independent, standardized measure of reading skill for correlation with experimental measures. |
| Non-verbal IQ Test | Raven's Progressive Matrices [7] | Used as a screening or control measure to rule out general cognitive factors as the source of reading deficits. |
| fMRI Scanner | 3T Siemens Skyra with 32-channel head coil [8] | Acquires high-resolution structural and functional brain images during task performance. |
| Neuroimaging Data Analysis Suite | SPM12 [8], FSL, AFNI | Preprocesses and statistically analyzes fMRI data, including GLM modeling and ROI definition. |
| Dissimilarity Analysis Tool | RSA Toolbox for MATLAB [8] | Quantifies neural representational patterns (e.g., using cross-validated Mahalanobis distance) in fMRI data. |

Application to Reading Research and Drug Development

The detailed protocols for analyzing error dynamics in LDTs have direct applications in clinical research and drug development.

For researchers studying neurodevelopmental disorders like dyslexia, these protocols offer a more sensitive behavioral endpoint than standard reading tests. The finding that a specific pattern of error dynamics (e.g., persistent slow word errors) correlates with poor reading skills provides a quantifiable target for intervention [6] [7]. A therapeutic aim could be to normalize this pattern.

For professionals in drug development, particularly for cognitive enhancers or therapeutics for neurological conditions, these protocols can serve as a key tool in a cognitive assessment battery. A drug candidate aiming to improve cognitive control or processing speed could be evaluated by its ability to specifically reduce fast errors (indicating improved inhibition) or slow errors (indicating reduced attentional lapses) in a standardized LDT. The fMRI-compatible protocol [8] further allows for the identification of neural correlates of any behavioral changes induced by a drug, strengthening the evidence for target engagement and functional impact.

The accurate interpretation of cognitive and behavioral assessments is paramount in both clinical and research settings, particularly in fields like drug development where quantifying cognitive change is critical. Hierarchical models of intelligence provide a powerful, structured framework for this interpretation. These models posit that cognitive abilities are organized in layers, from a broad general intelligence at the top to specific, narrow skills at the base [10]. This theoretical structure moves beyond a single composite score, enabling a more nuanced profile of an individual's cognitive strengths and weaknesses. For researchers and scientists, especially those developing and evaluating cognitive-focused pharmaceuticals, this granularity is indispensable. It allows for the identification of which specific cognitive domains (e.g., memory, processing speed, executive function) are impacted by an intervention, providing a robust mechanism for tracking efficacy and characterizing a drug's cognitive signature.

Theoretical Foundation: The Hierarchical Structure of Cognition

The most comprehensive and widely adopted hierarchical model in modern psychometrics is the Cattell-Horn-Carroll (CHC) theory. This model integrates decades of research into a three-stratum pyramid that systematically categorizes cognitive abilities [10].

- Stratum III: General Intelligence (g). This apex of the hierarchy represents a person's overall, general cognitive ability. It influences performance across a wide range of mental tasks and is often described informally as overall mental processing power.

- Stratum II: Broad Cognitive Abilities. This middle layer consists of several distinct but correlated broad abilities. Key domains include:

- Fluid Intelligence (Gf): The ability to solve novel problems, reason, and identify patterns.

- Crystallized Intelligence (Gc): The breadth and depth of acquired knowledge, skills, and experience.

- Visual-Spatial Processing (Gv): The capacity to perceive, analyze, and manipulate visual information.

- Processing Speed (Gs): The speed at which automatic cognitive tasks are performed, especially under pressure to maintain focused attention.

- Stratum I: Narrow Cognitive Abilities. These are highly specific skills that sit beneath each broad ability. For example, under Gc, narrow abilities would include vocabulary knowledge, reading comprehension, and spelling accuracy [10].

This hierarchical organization explains why an individual might excel in verbal reasoning but struggle with spatial tasks, or have strong acquired knowledge but slower mental processing. For cognitive research and drug development, this model provides a validated map for deconstructing global cognitive outcomes into their constituent parts.
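One way to make this decomposition concrete is to represent the Stratum II abilities as a lookup from broad ability to subtests and to summarize a participant's standardized scores per domain. The sketch below is illustrative only: the subtest labels, the equal-weight averaging, and the unweighted composite standing in for g are all simplifying assumptions. In psychometric practice, g and the broad abilities are estimated with factor-analytic models, not simple means.

```python
# Hypothetical mapping from broad CHC abilities to example subtests
CHC_BROAD = {
    "Gf": {"name": "Fluid Intelligence", "subtests": ["matrix_reasoning", "number_series"]},
    "Gc": {"name": "Crystallized Intelligence", "subtests": ["vocabulary"]},
    "Gv": {"name": "Visual-Spatial Processing", "subtests": ["block_design"]},
    "Gs": {"name": "Processing Speed", "subtests": ["symbol_search"]},
}


def broad_ability_profile(z_scores):
    """Average available subtest z-scores within each broad ability."""
    profile = {}
    for code, info in CHC_BROAD.items():
        available = [z_scores[s] for s in info["subtests"] if s in z_scores]
        if available:  # skip domains with no administered subtest
            profile[code] = sum(available) / len(available)
    return profile


def general_composite(profile):
    """Crude stand-in for g: unweighted mean of the broad-ability scores."""
    return sum(profile.values()) / len(profile)


# Made-up z-scores for one participant
participant = {
    "matrix_reasoning": -0.5,
    "number_series": 0.5,
    "vocabulary": 1.0,
    "block_design": -1.0,
    "symbol_search": -2.0,
}
profile = broad_ability_profile(participant)
composite = general_composite(profile)
```

Here the composite of -0.5 hides a profile with relatively strong crystallized knowledge and markedly slow processing speed, which is the kind of domain-level pattern a single global score would obscure.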

Application Notes: Quantitative Data and Research Reagents

The following tables synthesize key quantitative data and methodological tools essential for applying hierarchical models in research protocols.

Table 1: Core Cognitive Domains in the CHC Hierarchical Model: Definitions and Assessment Examples [10]

| Domain (Code) | Definition | Example Assessment Tasks |
|---|---|---|
| General Intelligence (g) | Overall mental processing power and reasoning capacity influencing all cognitive tasks. | Composite scores from full-scale IQ batteries. |
| Fluid Intelligence (Gf) | Ability to solve novel problems, logic puzzles, and recognize patterns. | Matrix reasoning, novel concept learning, number series. |
| Crystallized Intelligence (Gc) | Depth and breadth of acquired knowledge and verbal comprehension. | Vocabulary tests, general knowledge questions, verbal analogies. |
| Visual-Spatial Processing (Gv) | Ability to perceive, manipulate, and reason with visual patterns and spatial orientation. | Mental rotation tasks, block design, map reading. |
| Processing Speed (Gs) | Speed of performing automatic cognitive tasks, particularly under time pressure. | Symbol search, rapid naming tasks, simple visual scanning. |
| Working Memory (Gwm) | Ability to hold and manipulate information in mind over short periods. | Digit span backwards, mental arithmetic, following complex instructions. |

Table 2: Research Reagent Solutions for Cognitive Assessment Protocols

| Reagent / Tool | Primary Function in Protocol | Application Context |
|---|---|---|
| Standardized Neuropsychological Battery (e.g., SNSB) | Provides a comprehensive, multi-domain assessment of cognitive function based on hierarchical models [11]. | Gold-standard evaluation in clinical trials to detect domain-specific cognitive change (e.g., attention, memory, executive function). |
| Global Cognitive Screener (e.g., MoCA) | A brief, sensitive tool for initial screening and tracking of global cognitive status [12]. | Rapid assessment in community pharmacy settings or as a first-pass evaluation in large-scale studies. |
| Speech Audiometry (Word Recognition Tests) | Quantifies functional hearing (Speech Discrimination Score), a critical covariate in cognitive testing [11]. | Controlling for auditory confounds in cognitive studies; investigating the hearing-cognition relationship. |
| Fast Periodic Visual Stimulation-EEG (FPVS-EEG) | Tracks the emergence of neural representations for novel learned information (e.g., words) with high temporal precision [13]. | Objective, neural-level measurement of learning efficacy and lexical integration in experimental cognitive protocols. |
| CAIDE Dementia Risk Score | Validated tool for calculating an individual's risk of developing dementia based on lifestyle, age, and comorbidities [12]. | Stratifying participants in longitudinal studies or cognitive prevention trials. |

Experimental Protocols

Protocol for Pharmacist-Led Cognitive Screening and Risk Factor Assessment

This protocol, adapted from a 2025 study, outlines a method for early identification of cognitive impairment in accessible community settings [12].

Objective: To systematically identify patients at risk for cognitive impairment (CI) through cognitive screening and evaluate associated risk factors within a pharmaceutical care framework.

Materials:

- Short-form Montreal Cognitive Assessment (s-MoCA).

- Data collection form (socio-demographics, lifestyle, comorbidities, medication history).

- CAIDE Dementia Risk Score calculator.

- Anticholinergic Burden (ACB) Scale calculator.

Methodology:

- Participant Recruitment: Recruit adults aged 50 and over from community pharmacies. Exclude individuals with pre-existing diagnoses of CI/dementia or serious mental illness.

- Informed Consent: Obtain written informed consent.

- Cognitive Screening: Administer the s-MoCA test in a quiet, distraction-free area of the pharmacy.

- Risk Factor Assessment:

- Data Collection: Record socio-demographics, lifestyle habits, and medical history.

- Medication Review: Analyze chronic pharmacotherapy to identify "at-risk medications" (e.g., anticholinergics, sedatives) using the ACB scale.

- Dementia Risk Calculation: Compute the CAIDE score based on collected variables.

- Referral and Follow-up: Participants with scores suggesting CI on the s-MoCA are referred to a physician for a comprehensive diagnostic workup. Pharmacists provide counseling on modifiable risk factors (e.g., medication safety, cardiovascular health).
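The decision logic of the screening workflow above might be outlined as below. Everything numeric here is a placeholder: the s-MoCA cut-off is passed in as a parameter because the source does not state one, the per-drug burden values belong to fictional drugs, and the burden flag of 3 is an assumption to be replaced by whatever threshold a study protocol specifies. The sketch also assumes that lower s-MoCA scores indicate poorer performance. CAIDE scoring is not reproduced.

```python
def total_acb(medications, acb_scores):
    """Sum per-drug anticholinergic burden; drugs missing from the lookup count as 0."""
    return sum(acb_scores.get(drug, 0) for drug in medications)


def screening_outcome(smoca_score, smoca_cutoff, medications, acb_scores, acb_flag=3):
    """Combine the cognitive screen and medication review into next steps.

    smoca_cutoff and acb_flag are study-defined thresholds (assumptions here).
    """
    acb = total_acb(medications, acb_scores)
    return {
        "refer_to_physician": smoca_score < smoca_cutoff,
        "acb_total": acb,
        "counsel_on_medications": acb >= acb_flag,
    }


# Placeholder lookup with fictional drug names and burden values
example_acb_scores = {"drug_a": 3, "drug_b": 1, "drug_c": 0}

outcome = screening_outcome(
    smoca_score=9,
    smoca_cutoff=12,  # hypothetical cut-off, not from the source
    medications=["drug_a", "drug_b", "drug_x"],
    acb_scores=example_acb_scores,
)
```

Treating unknown drugs as zero burden keeps the function simple but can understate risk; a production tool would more likely surface unmatched medications for manual review.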

Protocol for Assessing Cognitive Function in Hearing Loss

This protocol details a cross-sectional study design to investigate the association between hearing loss and cognitive status using standardized batteries [11].

Objective: To determine the association between speech discrimination ability and cognitive function in older adults with hearing loss.

Materials:

- Sound-attenuated booth and calibrated audiometric equipment.

- Standardized word lists for Speech Recognition Threshold (SRT) and Speech Discrimination Score (SDS).

- Korean Mini-Mental State Examination (K-MMSE).

- Seoul Neuropsychological Screening Battery (SNSB-II).

Methodology:

- Participant Selection: Recruit adults aged ≥60 with sensorineural hearing loss. Exclude those with central nervous system diseases or congenital ear malformations.

- Audiometric Measurement:

- Conduct pure-tone audiometry to establish hearing thresholds.

- Perform speech audiometry: Determine the SRT and SDS using live voice presentation of standardized word lists, with contralateral masking if needed.

- Cognitive Assessment:

- Administer the K-MMSE to evaluate global cognitive function.

- Administer the SNSB-II to assess five key domains: attention, language, visuospatial function, memory, and executive function.

- Data Analysis:

- Classify hearing loss severity based on WHO SRT criteria.

- Classify cognitive status as normal, Mild Cognitive Impairment (MCI), or dementia based on K-MMSE and SNSB-II scores.

- Use multivariable logistic regression to analyze the association between hearing loss (SDS, WHO grade) and cognitive impairment, controlling for age, sex, and education.

Visualization of Hierarchical Models and Workflows

The following diagrams, generated with Graphviz, illustrate the core concepts and protocols described in this article.

CHC Model Hierarchy

This diagram visualizes the three-stratum structure of the Cattell-Horn-Carroll (CHC) theory of intelligence.

Cognitive Screening Protocol

This diagram outlines the step-by-step workflow for the pharmacist-led cognitive screening and risk assessment protocol.

Word Learning Neural Pathways

This diagram illustrates the logical relationships and neural pathways involved in novel visual word learning, as investigated using the FPVS-EEG method.

Quantitative Performance of Speech Biomarkers in Cognitive Assessment

The utility of speech as a digital biomarker is demonstrated by its performance in distinguishing cognitive status across multiple studies and conditions, including Alzheimer's Disease (AD), Mild Cognitive Impairment (MCI), and Post-Stroke Cognitive Impairment (PSCI).

Table 1: Diagnostic Performance of Speech Biomarkers in Cognitive Impairment

| Cognitive Condition | Speech Features Utilized | Classification Performance | Sample Size & Population |

|---|---|---|---|

| General CI / Dementia [14] | Acoustic & paralinguistic from automated transcription | AUROC: 0.90 (model with transcription features) | 146 participants (Framingham Heart Study) |

| MCI [15] | Combined acoustic & psycholinguistic from interviews | F1-score: 0.73-0.86; Sensitivity: up to 0.90 | 71 older, community-dwelling adults (Mean age: 83.3) |

| Alzheimer's Disease (AD) [16] | Percentage of Silence Duration (PSD) combined with serum biomarkers (GFAP, p-Tau217) and APOE | CI Diagnosis AUC: 0.928; Aβ Status AUC: 0.845 | 1223 participants (238 AD, 461 aMCI, 524 CU) |

| Post-Stroke CI (PSCI) [17] | Linguistic & acoustic features from picture description | Target: ≥75% accuracy (MoCA-defined impairment) | 30 stroke survivors (Singapore cohort) |

Table 2: Key Acoustic and Linguistic Features and Their Cognitive Correlates

| Feature Category | Specific Features | Cognitive Correlates & Interpretation |

|---|---|---|

| Temporal / Acoustic [16] | Percentage of Silence Duration (PSD) | Increased pauses indicate word-finding difficulty, impaired information retrieval, and cognitive load. |

| Acoustic [15] | Breathing patterns, nonverbal vocalizations (e.g., giggles) | May reflect reduced respiratory control or changes in affective prosody related to neurological decline. |

| Psycholinguistic [15] | Vocabulary richness, quantity of speech, speech fragmentation | Reduced richness and output, along with more pauses and filler words, indicate executive dysfunction and impoverished semantic content. |

| Linguistic [17] | Information content, semantic coherence, syntactic complexity | Decline in coherence and complexity reflects impairments in executive function, working memory, and verbal fluency. |

Experimental Protocols for Speech-Based Cognitive Assessment

Standardized protocols are critical for ensuring the reliability and validity of speech-based digital biomarkers. The following methodologies are adapted from recent clinical studies.

Protocol A: Picture Description Task for AD & MCI Screening

This protocol is widely used, including in the large-cohort study reported in [16].

- Task: Participants are shown the "Cookie-Theft" picture from the Boston Diagnostic Aphasia Examination and instructed to describe everything they see happening in the picture for 60 seconds [16].

- Recording Setup:

- Environment: Quiet room with ambient noise limited to <45 dB.

- Software: Audio recording software (e.g., Cool Edit Pro).

- Parameters: Sampling frequency of 16,000 Hz, creating a 16-bit mono recording [16].

- Feature Extraction:

- Primary Variable: Calculate the Percentage of Silence Duration (PSD), defined as (Total silent pause duration / Total speech duration) * 100% [16].

- Analysis Tool: Automated speech recognition (ASR) software or manual annotation of silence segments.
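The PSD formula above can be sketched in a few lines once silence segments are available, whether from manual annotation or an automated detector. The segment format, function name, and optional minimum-pause filter below are assumptions for illustration. The source does not specify whether "total speech duration" means the whole recording or only voiced time, so the caller passes whichever denominator the study protocol defines.

```python
from typing import List, Tuple

Segment = Tuple[float, float]  # (start_seconds, end_seconds) of one silent pause


def percentage_silence_duration(
    silences: List[Segment],
    total_speech_duration_s: float,
    min_pause_s: float = 0.0,
) -> float:
    """PSD = (total silent pause duration / total speech duration) * 100."""
    if total_speech_duration_s <= 0:
        raise ValueError("total_speech_duration_s must be positive")
    silent_total = 0.0
    for start, end in silences:
        length = end - start
        # Skip malformed segments and pauses shorter than the assumed minimum.
        if length > 0 and length >= min_pause_s:
            silent_total += length
    return silent_total / total_speech_duration_s * 100.0


# Three annotated pauses within a 50-second sample.
pauses = [(2.0, 3.5), (10.0, 12.0), (30.0, 31.5)]
print(round(percentage_silence_duration(pauses, 50.0), 1))
```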

Protocol B: Semi-Structured Interview for Longitudinal Monitoring

This protocol, suitable for conditions like PSCI and general aging studies, involves more naturalistic speech [17] [15].

- Task:

- Semi-Structured Conversation: A trained interviewer conducts a qualitative interview using open-ended prompts (e.g., "Tell me about your interests.") to elicit spontaneous speech [15].

- Standardized Prompt: Alternatively, a specific prompt like "Describe your favorite holiday" can be used for consistency across a cohort [17].

- Recording Setup:

- Use a high-quality digital voice recorder in a consistent, quiet setting.

- Data Processing & Feature Extraction:

- Automated Transcription: Process audio using ASR systems (e.g., DeepSpeech engine). For multilingual populations, fine-tune acoustic models on local speech patterns (e.g., Singlish) [17].

- Feature Extraction:

Protocol C: Integrated Protocol for Post-Stroke Cognitive Impairment (PSCI)

This comprehensive protocol, described in [17], combines multiple tasks for a detailed assessment.

- Study Design: Prospective longitudinal cohort with assessments at baseline (within 6 weeks of stroke), 3, 6, and 12 months.

- Visit Structure:

- Cognitive Assessment: Administer the Montreal Cognitive Assessment (MoCA).

- Speech Protocol (25-35 minutes):

- Picture Description Task: As described in Protocol A.

- Semi-Structured Conversation: As described in Protocol B.

- Data Analysis:

- Correlation Analysis: Spearman's correlation between speech features and MoCA scores.

- Machine Learning: Train classification (e.g., Logistic Regression, XGBoost) and regression models to predict cognitive status and scores from speech features [17] [16].

- Longitudinal Modeling: Use Linear Mixed-Effects models to characterize trajectories of speech features and cognitive scores over time.
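The correlation step above can be illustrated with a dependency-free Spearman implementation: rank both variables (averaging ranks for ties) and compute Pearson's correlation on the ranks. The feature values and MoCA scores below are invented for illustration and are not study data.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Assign 1-based ranks, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant variable")
    return sxy / (sxx * syy) ** 0.5


def spearman_rho(x: Sequence[float], y: Sequence[float]) -> float:
    """Spearman's rho as the Pearson correlation of the rank-transformed data."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("inputs must have equal length of at least 2")
    return pearson(average_ranks(x), average_ranks(y))


# Invented example: higher silence percentage paired with lower MoCA score.
psd_values = [10.0, 20.0, 30.0, 40.0, 50.0]
moca_scores = [29, 27, 25, 20, 15]
print(spearman_rho(psd_values, moca_scores))
```

In practice a statistics package would also supply a p-value; this sketch covers only the coefficient.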

Visualization of Workflows and Biomarker Relationships

The following diagrams, generated with Graphviz, illustrate the core experimental workflow and the relationship between speech biomarkers and underlying pathology.

Speech Biomarker Analysis Workflow

Speech Biomarker Correlation with Pathology

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents, Tools, and Software for Speech Biomarker Research

| Item Name / Category | Specific Examples / Specifications | Primary Function in Research |

|---|---|---|

| Standardized Cognitive Tests | Montreal Cognitive Assessment (MoCA), Hopkins Verbal Learning Test (HVLT), Trail Making Test (TMT) [17] [15] | Provides ground truth labels for cognitive status to validate and train speech-based classification models. |

| Speech Elicitation Tasks | "Cookie-Theft" Picture Description, Semi-Structured Interview Prompts [17] [16] | Standardized methods to elicit spontaneous speech samples for consistent feature extraction across participants. |

| Automated Speech Recognition (ASR) | DeepSpeech engine, ASR for CI v1.3, Fine-tuning with local speech corpora (e.g., National Speech Corpus) [17] [16] | Automatically transcribes audio recordings to text, enabling large-scale analysis and extraction of linguistic features. |

| Acoustic Analysis Toolkits | Software for extracting ~6,000+ vocal features (energy, pitch, prosody, spectral, voice quality) [18] | Quantifies non-linguistic, sound-based characteristics of speech that correlate with cognitive motor control and affect. |

| Natural Language Processing (NLP) Libraries | Linguistic feature extraction pipelines (syntax, semantics, lexicon) [17] [14] | Analyzes transcribed text to quantify linguistic properties like syntactic complexity, idea density, and vocabulary richness. |

| Machine Learning Frameworks | Logistic Regression, XGBoost, Linear Mixed-Effects Models [17] [16] [14] | Develops predictive models that combine multiple speech features to classify cognitive status and track longitudinal change. |

| Biomarker Assay Kits | Serum GFAP, NFL, p-Tau217 via Single Molecular Immunity Detection (SMID) [16] | Provides pathological validation by correlating speech digital biomarkers with established blood-based biomarkers of Alzheimer's disease. |

From Lab to Clinic: Implementing Standardized Word Identification Protocols

Standardized elicitation tasks are methodical procedures designed to evoke specific, measurable cognitive, linguistic, or behavioral responses. In cognitive assessment, these tasks are fundamental for evaluating functions such as memory, executive function, and language processing. The core purpose is to obtain reliable and valid data that can be used to identify cognitive impairment (CI), monitor disease progression, or assess the cognitive safety and efficacy of pharmaceutical interventions [12] [19]. The move towards standardized protocols is driven by the need for reproducibility and the ability to integrate data across multi-laboratory studies and clinical trials [20].

Framed within research on cognitive word identification protocols, these elicitation tasks serve as the foundational layer. They generate the raw verbal and behavioral data from which patterns of word retrieval, semantic organization, and narrative coherence can be quantitatively and qualitatively analyzed. This is critical for research that seeks to deconstruct and understand the architecture of cognitive-linguistic processes.

The table below summarizes three core elicitation tasks, their primary cognitive targets, and their significance in research and clinical applications.

Table 1: Key Standardized Elicitation Tasks in Cognitive Assessment

| Elicitation Task | Primary Cognitive Domains Assessed | Research/Clinical Utility | Common Output Metrics |

|---|---|---|---|

| Story Recall | Episodic memory, Working memory, Executive function, Verbal ability [21] | Identifying memory impairments (e.g., in MCI, Alzheimer's), evaluating efficacy of cognitive enhancers [12] [21] | Recall accuracy, Thematic gist retention, Intrusions, Temporal sequence accuracy |

| Picture Description | Executive function, Semantic memory, Visual processing, Linguistic organization [22] | Assessing semantic fluency, conceptual integration, and expressive language; useful in aphasia and dementia studies [22] | Information units conveyed, Lexical diversity, Syntactic complexity, Narrative coherence |

| Semi-Structured Conversation | Social cognition, Pragmatic language, Cognitive flexibility, Self-referential memory [22] | Evaluating functional communication, psychological well-being, and identity; used in reminiscence therapy and social interaction studies [22] | Turn-taking dynamics, Use of autobiographical details, Emotional valence, Topic maintenance |

Experimental Protocols

Protocol 1: Story Recall Task

Aim and Rationale

To objectively assess episodic verbal memory and executive function by measuring the immediate and delayed recall of a structured narrative. This task is a cornerstone for identifying impairments in memory encoding, storage, and retrieval [21].

Materials and Reagents

- Standardized Narrative: A pre-recorded, semantically coherent story of 8-12 sentences, presented auditorily. The story should contain a clear theme, specific characters, and a logical sequence of events.

- Audio Recording and Playback Equipment: A device capable of delivering high-fidelity, consistent auditory stimuli.

- Digital Audio Recorder: To capture the participant's verbal responses for subsequent analysis.

- Scoring Rubric: A standardized worksheet that breaks the story down into predetermined "information units" (e.g., subjects, verbs, objects, key details) for quantitative scoring.

Step-by-Step Procedure

- Preparation: Set up the testing environment to be quiet and free from distractions. Ensure the audio recorder is functioning.

- Participant Instruction: Tell the participant: "You are going to hear a short story. Please listen carefully, as I will ask you to tell me the story back straight after it finishes, with as much detail as you can remember."

- Stimulus Presentation: Play the standardized narrative once.

- Immediate Recall: Immediately after the story ends, prompt the participant: "Please now tell me everything you can remember from that story." Begin audio recording. Do not interrupt the participant during their recall. Allow up to 2 minutes for this phase.

- Distractor Phase (for Delayed Recall): Engage the participant in a non-verbal, neutral activity (e.g., a simple motor task) for a standardized delay period, typically 20-30 minutes.

- Delayed Recall: After the distractor period, prompt the participant again without forewarning: "Earlier, I played a story for you. Can you tell me everything you remember from that story now?" Record their response.

- Data Recording: Transcribe the audio recordings verbatim. Score the transcripts against the scoring rubric to generate quantitative measures for both immediate and delayed recall.
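The scoring step above can be sketched as matching a transcript against a rubric of information units. The rubric, story content, and transcripts below are hypothetical, and the single-word matching is a deliberate simplification: real rubrics typically need trained raters or more careful handling of paraphrase.

```python
import re
from typing import Dict, List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and keep alphabetic word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def score_recall(transcript: str, rubric: Dict[str, List[str]]) -> Dict[str, bool]:
    """Mark each information unit as recalled if any accepted word appears."""
    tokens = set(tokenize(transcript))
    return {unit: any(alt in tokens for alt in alts) for unit, alts in rubric.items()}


def units_recalled(scores: Dict[str, bool]) -> int:
    return sum(scores.values())


# Hypothetical rubric: information unit -> accepted single-word variants.
rubric = {
    "character": ["anna"],
    "action": ["lost", "dropped"],
    "object": ["wallet", "purse"],
    "place": ["market"],
}

immediate = units_recalled(score_recall("Anna lost her purse at the market.", rubric))
delayed = units_recalled(score_recall("A woman lost something.", rubric))

# Retention ratio: share of immediately recalled units still present after the delay.
retention = delayed / immediate if immediate else 0.0
print(immediate, delayed, retention)
```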

Protocol 2: Picture Description Task

Aim and Rationale

To evaluate semantic memory, executive function for conceptual integration, and expressive language by analyzing the narrative produced in response to a complex visual scene [22].

Materials and Reagents

- Standardized Visual Stimulus: A detailed, scene-based image such as the "Cookie Theft" picture from the Boston Diagnostic Aphasia Examination or a similar normative image.

- High-Resolution Display: A monitor or tablet for presenting the image.

- Digital Audio Recorder: To capture the participant's description.

- Scoring Framework: A protocol for analyzing linguistic output, focusing on metrics like content units, syntactic complexity, and discourse organization.

Step-by-Step Procedure

- Preparation: Load the image on the display device, keeping it hidden until the instruction has been given.

- Participant Instruction: Tell the participant: "I am going to show you a picture. Please describe everything you see happening in the picture, as if you were explaining it to someone who cannot see it."

- Stimulus Presentation: Present the picture.

- Elicitation and Recording: Allow the participant to speak for a pre-set duration (e.g., 1-2 minutes). Record their entire description. If the participant stops prematurely, use a standardized prompt such as, "Is there anything else you can see?"

- Data Processing: Transcribe the audio recording. Analyze the transcript using the predefined scoring framework to quantify linguistic and cognitive performance.
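One of the output metrics for this task, lexical diversity, can be approximated from a transcript with a type-token ratio (TTR). The sketch below also includes a moving-average variant, which is less sensitive to sample length. The tokenizer, window size, and example sentence are illustrative assumptions rather than part of any cited scoring framework.

```python
import re
from typing import List


def tokenize(text: str) -> List[str]:
    """Lowercase the text and keep alphabetic word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def type_token_ratio(tokens: List[str]) -> float:
    """Unique word types divided by total word tokens."""
    if not tokens:
        raise ValueError("no tokens to analyze")
    return len(set(tokens)) / len(tokens)


def moving_average_ttr(tokens: List[str], window: int = 50) -> float:
    """Mean TTR over all sliding windows; falls back to plain TTR for short samples."""
    if len(tokens) < window:
        return type_token_ratio(tokens)
    ratios = [
        type_token_ratio(tokens[i:i + window])
        for i in range(len(tokens) - window + 1)
    ]
    return sum(ratios) / len(ratios)


description = "The boy takes the cookie"
tokens = tokenize(description)
print(type_token_ratio(tokens), moving_average_ttr(tokens, window=3))
```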

Protocol 3: Semi-Structured Conversation

Aim and Rationale

To assess pragmatic language use, social cognition, and the ability to retrieve and structure autobiographical memories in a dynamic, ecologically valid context [22]. This method is particularly valuable for understanding the integrative and social functions of reminiscence [22].

Materials and Reagents

- Conversation Guide: A list of open-ended questions and topics (e.g., "Tell me about your first job," "Describe a memorable holiday").

- Elicitation Cues (Optional): Personal photos provided by the participant, known to be powerful triggers for autobiographical memory and storytelling [22].

- Digital Audio/Video Recorder: To capture the full interaction.

Step-by-Step Procedure

- Rapport Building: Begin with a brief, neutral conversation to make the participant feel comfortable.

- Initiating the Conversation: Use a broad, open-ended question from the guide to start the main conversation (e.g., "Could you tell me about some of your favorite memories from when you were younger?").

- Active Elicitation: Employ techniques such as:

- Open Questions: Ask questions that cannot be answered with "yes" or "no" [23].

- Focusing the Conversation: Gently guide the conversation from broad topics towards areas of interest for the assessment [23].

- Listening and Prompting: Actively listen and use verbal and non-verbal cues to encourage elaboration without leading the narrative.

- Recording and Termination: Record the entire session. Conclude the conversation after a predetermined time (e.g., 10-15 minutes) or when natural closure is reached.

- Data Analysis: Transcribe the recording. Analyze the transcript for conversational patterns, narrative structure, emotional content, and the use of specific cognitive-linguistic features.
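Simple turn-taking measures can be derived from a speaker-labelled transcript. The sketch below assumes a list of `(speaker, utterance)` pairs with hypothetical labels `"I"` for the interviewer and `"P"` for the participant. The three measures shown are examples, not a validated metric set.

```python
from typing import Dict, List, Tuple

Turn = Tuple[str, str]  # (speaker label, utterance text)


def turn_statistics(turns: List[Turn], participant: str = "P") -> Dict[str, float]:
    """Summarize how much of the conversation the participant carries."""
    if not turns:
        raise ValueError("transcript is empty")
    word_counts = [(speaker, len(text.split())) for speaker, text in turns]
    participant_words = [n for speaker, n in word_counts if speaker == participant]
    total_words = sum(n for _, n in word_counts)
    if not participant_words or total_words == 0:
        raise ValueError("no participant speech found")
    return {
        "participant_turns": float(len(participant_words)),
        "mean_words_per_participant_turn": sum(participant_words) / len(participant_words),
        "participant_word_share": sum(participant_words) / total_words,
    }


conversation = [
    ("I", "Tell me about your first job"),
    ("P", "I worked in a bakery near the harbour"),
    ("I", "What was that like"),
    ("P", "Busy but fun"),
]
print(turn_statistics(conversation))
```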

Visualization of Workflows

The diagram below outlines the generalized, high-level workflow for conducting a standardized elicitation task, from preparation to data interpretation.

Cognitive-Linguistic Constructs in Word Identification

This diagram illustrates the logical relationships between the core elicitation tasks and the specific cognitive-linguistic constructs they target, which are central to word identification protocols.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and tools essential for implementing standardized elicitation tasks in a rigorous research or clinical trial setting.

Table 2: Essential Research Reagents and Materials for Elicitation Tasks

| Item Name | Type/Format | Primary Function in Elicitation Tasks |

|---|---|---|

| Standardized Narrative Sets | Pre-recorded audio files with matched scoring rubrics | Serves as a consistent stimulus for Story Recall tasks, enabling reliable within- and between-subject comparisons over time. |

| Normed Visual Stimuli (e.g., CPDT) | High-resolution digital images or physical cards | Provides a validated, complex scene for the Picture Description task, allowing for standardized scoring of semantic content and narrative organization. |

| High-Fidelity Audio Recorder | Digital recording device | Captures participant responses verbatim, creating a permanent record for accurate transcription and subsequent linguistic analysis. |

| Cognitive Assessment Software (e.g., CDR system) | Computerized test battery [21] | Automates the presentation of stimuli and recording of responses for some tasks, ensuring precise timing and reducing administrator-induced variability. |

| Photo Elicitation Sets | Curated sets of generic or personal photographs [22] | Acts as a potent cue for autobiographical memory and narrative generation during Semi-Structured Conversation, facilitating the assessment of self-referential memory. |

| Data Transcription Protocol | Standardized operating procedure (SOP) document | Ensures consistency and accuracy in converting audio recordings to text for analysis, a critical step for data integrity. |

The intersection of post-stroke cognitive impairment (PSCI) and Alzheimer's disease (AD) represents a critical frontier in dementia research. Evidence establishes stroke as a potent, independent risk factor for all-cause dementia, with meta-analyses reporting a pooled hazard ratio of 1.69 for prevalent stroke and a pooled risk ratio of 2.18 for incident stroke [24]. The relationship also runs in the other direction: AD patients show a significantly increased incidence of intracerebral hemorrhage (3.4/1000 person-years) compared to non-AD controls, whereas their incidence of ischemic stroke is similar to that of controls [25]. This elevated cerebrovascular risk in AD complicates diagnostic and therapeutic approaches [25].

The clinical challenge is substantial: PSCI affects up to 75% of stroke survivors, creating an urgent need for detection and intervention protocols that address the complex interplay between vascular and neurodegenerative pathology [26]. This article details advanced methodological frameworks for identifying and intervening in these overlapping conditions, providing researchers with structured protocols for investigation.

Quantitative Landscape: Risk Relationships and Incidence Metrics

Table 1: Quantified Risk Relationships Between Stroke and Dementia

| Risk Relationship | Quantified Effect Size | Population Studied | Source Type |

|---|---|---|---|

| Prevalent Stroke → All-Cause Dementia | Pooled HR: 1.69 (95% CI: 1.49–1.92) | 1.9 million participants across 36 studies | Meta-analysis [24] |

| Incident Stroke → All-Cause Dementia | Pooled RR: 2.18 (95% CI: 1.90–2.50) | 1.3 million participants across 12 studies | Meta-analysis [24] |

| Stroke Patients with Seizures → Dementia | OR: 2.08 (95% CI: 1.95–2.21) | 128,341 hospitalized stroke patients | Analysis of Nationwide Inpatient Sample [27] |

| AD Patients → Intracerebral Hemorrhage | IRR: 1.67 (95% CI: 1.43–1.96) | 61,824 AD patients across 29 studies | Meta-analysis [25] |

| AD Patients → Ischemic Stroke | IRR: Not significant (similar to controls) | 61,824 AD patients across 29 studies | Meta-analysis [25] |
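When effect sizes like those in the table are reused, for example in a power calculation or secondary pooling, the standard error on the log scale is usually needed. Under the common assumption that the 95% CI was built symmetrically on the log scale, it can be recovered as (ln(upper) - ln(lower)) / (2 × 1.96). The sketch below applies this to the pooled HR for prevalent stroke and checks the symmetry assumption. The function names and tolerance are illustrative.

```python
import math


def log_se_from_ci(lower: float, upper: float, z: float = 1.96) -> float:
    """Standard error of ln(ratio), assuming a CI symmetric on the log scale."""
    if not (0 < lower < upper):
        raise ValueError("require 0 < lower < upper")
    return (math.log(upper) - math.log(lower)) / (2 * z)


def ci_is_log_symmetric(estimate: float, lower: float, upper: float, tol: float = 0.02) -> bool:
    """Check that ln(estimate) sits near the midpoint of the log-scale interval."""
    midpoint = (math.log(lower) + math.log(upper)) / 2
    return abs(math.log(estimate) - midpoint) <= tol


# Prevalent stroke -> all-cause dementia: HR 1.69 (95% CI 1.49-1.92).
se = log_se_from_ci(1.49, 1.92)
print(round(se, 4), ci_is_log_symmetric(1.69, 1.49, 1.92))
```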

Table 2: Intervention Trial Parameters and Methodological Approaches

| Intervention Type | Study Parameters | Population Characteristics | Primary Outcomes |

|---|---|---|---|

| Pharmacological (Maraviroc) | Phase-II RCT; 150 or 600 mg/day vs. placebo for 12 months [28] | Recent subcortical stroke (1-24 months); mild PSCI; MoCA ≤26; white matter lesions [28] | Cognitive score changes; drug-related adverse events; MRI measures; inflammatory markers [28] |

| Cognitive Rehabilitation | 10 therapy sessions over 3 months + 4 maintenance sessions over 6 months vs. TAU [29] | Early-stage Alzheimer's, vascular, or mixed dementia [29] | Goal performance; quality of life; mood; cognition; carer stress levels [29] |

| Multidomain Lifestyle + Pharmacological | 2-7 lifestyle domains combined with pharmacological components; ≥6 months duration [30] | Cognitively normal at-risk, SCD, MCI, or prodromal AD [30] | Cognitive or dementia-related measures [30] |

| Digital Cognitive Assessment (IC3) | 22 short tasks; 60-70 minutes; self-administered via digital platform [31] | Stroke survivors with mild-moderate cognitive impairment [31] | Domain-general and domain-specific cognitive deficits [31] |

Advanced Detection Protocols for Post-Stroke Cognitive Impairment

Speech Analysis and Digital Biomarker Detection

Novel speech analysis protocols offer promising approaches for PSCI detection through automated analysis of linguistic and acoustic features. The following workflow visualizes a standardized protocol for acquiring and analyzing speech samples to identify cognitive impairment biomarkers:

Figure 1: Workflow for speech-based digital biomarker detection in PSCI.

This protocol employs a prospective longitudinal design with four assessment timepoints: baseline (within 6 weeks of stroke onset), 3-, 6-, and 12-months post-stroke [26]. At each visit, participants complete the Montreal Cognitive Assessment (MoCA) and standardized speech tasks including picture description and semi-structured conversation. The methodological approach includes:

- Automated Speech Recognition: Uses the DeepSpeech ASR engine with transfer learning, fine-tuned on Singaporean English (Singlish) to account for unique phonological features, prosodic patterns, and lexical variations [26].

- Linguistic Feature Extraction: Comprehensive analysis including information content, coherence, word retrieval, semantic processing, and syntactic complexity, calibrated against Singaporean English grammatical structures [26].

- Acoustic Feature Analysis: Prosodic and emotion-based features that may reflect frontal-subcortical pathway disruptions [26].

- Machine Learning Modeling: Development of classification and regression models to predict MoCA-defined cognitive impairment, with correlation analysis between speech features and cognitive scores [26].

This approach addresses multilingual contexts by incorporating Singapore-specific linguistic resources and accounting for code-switching patterns, with language background variables integrated as covariates in statistical analyses [26].

Comprehensive Digital Cognitive Phenotyping

The Imperial Comprehensive Cognitive Assessment in Cerebrovascular Disease (IC3) provides a digital framework for comprehensive cognitive assessment specifically designed for stroke populations. This protocol encompasses:

- Domain Coverage: 22 short tasks assessing attention, executive function, language, memory, calculation, praxis, and motor ability, completed in 60-70 minutes via web browser [31].

- Technical Implementation: Built on the Cognitron platform for remote neuropsychological testing, enabling standardized administration without clinician presence [31].

- Validation Approach: Comparison against established clinical tools and normative samples matched for age, gender, and education [31].

- Multimodal Integration: Designed to interface with neuroimaging (structural and functional MRI) and blood biomarkers (Alzheimer's disease and neurodegeneration markers) for comprehensive biomarker development [31].

The digital nature of IC3 affords scalability in cognitive monitoring while providing more detailed response metrics (accuracy, reaction time, trial-by-trial variability) than traditional pen-and-paper tests [31].
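The response metrics named above (accuracy, reaction time, trial-by-trial variability) can be derived from raw trial records as follows. This is a generic sketch, not the IC3 or Cognitron scoring code: the trial format is assumed, and restricting reaction-time statistics to correct trials is a common convention adopted here as an assumption.

```python
from statistics import mean, stdev
from typing import Dict, List, Tuple

Trial = Tuple[bool, float]  # (response correct?, reaction time in milliseconds)


def summarize_trials(trials: List[Trial]) -> Dict[str, float]:
    """Compute accuracy plus reaction-time mean, SD, and coefficient of variation."""
    if not trials:
        raise ValueError("no trials recorded")
    correct_rts = [rt for ok, rt in trials if ok]
    summary = {"accuracy": len(correct_rts) / len(trials)}
    # Variability needs at least two correct trials to be defined.
    if len(correct_rts) >= 2:
        summary["mean_rt_ms"] = mean(correct_rts)
        summary["rt_sd_ms"] = stdev(correct_rts)
        summary["rt_cv"] = summary["rt_sd_ms"] / summary["mean_rt_ms"]
    return summary


example_trials = [(True, 500.0), (True, 700.0), (False, 900.0), (True, 600.0)]
print(summarize_trials(example_trials))
```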

Therapeutic Intervention Protocols

Pharmacological Targeting of Post-Stroke Cognitive Impairment

The MARCH trial protocol evaluates Maraviroc, a CCR5 antagonist, for preventing PSCI progression through a hypothesized dual mechanism of enhanced synaptic plasticity and neuroinflammatory modulation [28]. The experimental design includes:

- Study Population: Patients aged 50-86 with recent (1-24 months) subcortical stroke, mild cognitive impairment (MoCA ≤26), and evidence of white matter lesions and small vessel disease on neuroimaging [28].

- Randomization and Dosing: 2:2:1 randomization to low-dose Maraviroc (150 mg/day), high-dose Maraviroc (600 mg/day), or placebo for 12 months, with dose escalation over the initial 2 weeks [28].

- Outcome Measures: Primary outcomes include cognitive score changes and drug-related adverse events; secondary outcomes encompass functional and affective scores, MRI-derived measures, inflammatory markers, carotid atherosclerosis, and cerebrospinal fluid biomarkers [28].

- Statistical Power: Sample size of 150 participants (60 in each treatment group, 30 in placebo) provides 80% power to detect differences between treatment and placebo groups [28].

The rationale for CCR5 targeting stems from observations that carriers of the CCR5-Δ32 loss-of-function mutation showed significantly better cognitive and functional outcomes two years post-stroke [28].
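A 2:2:1 allocation such as the one described above can be generated with permuted blocks of five. The source does not state which randomization method the trial uses, so the permuted-block scheme, arm labels, and seed below are assumptions chosen only to show how the ratio yields the 60/60/30 split.

```python
import random
from collections import Counter
from typing import List

# One block of five encodes the 2:2:1 ratio (arm labels are illustrative).
BLOCK = ["maraviroc_150mg"] * 2 + ["maraviroc_600mg"] * 2 + ["placebo"]


def permuted_block_allocation(n_participants: int, seed: int) -> List[str]:
    """Allocate participants by shuffling successive blocks of five."""
    rng = random.Random(seed)  # seeded so the schedule is reproducible
    allocation: List[str] = []
    while len(allocation) < n_participants:
        block = BLOCK[:]
        rng.shuffle(block)
        allocation.extend(block)
    return allocation[:n_participants]


schedule = permuted_block_allocation(150, seed=2024)
print(Counter(schedule))
```

Because 150 is a multiple of the block size, every arm reaches its exact target; with other sample sizes the final block would be truncated and the ratio only approximate.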

Cognitive Rehabilitation Framework

Goal-oriented cognitive rehabilitation represents an evidence-based non-pharmacological approach applicable to both PSCI and early-stage dementia populations. The protocol involves:

- Goal Setting: Collaborative identification of personally meaningful rehabilitation goals using structured interviews (e.g., Canadian Occupational Performance Measure or Bangor Goal-Setting Interview) [29].

- Intervention Approaches: Combination of restorative techniques (building on retained abilities through spaced retrieval, errorless learning) and compensatory strategies (aids, adaptations, environmental modifications) [29].

- Delivery Structure: 10 therapy sessions over 3 months followed by 4 maintenance sessions over 6 months, typically implemented in real-world settings rather than clinical environments to enhance generalization [29].

- Outcome Measurement: Client perceptions of change in goal performance and satisfaction, supplemented by independent ratings from professionals or caregivers [29].

Randomized controlled trials demonstrate that this approach produces meaningful benefits in everyday functioning for people with early-stage Alzheimer's disease, vascular dementia, or mixed dementia [29] [32].

Multidomain Combination Interventions

Emerging protocols combine multidomain lifestyle interventions with pharmacological approaches to target multiple dementia risk factors simultaneously. Systematic reviews identify 12 randomized controlled trials incorporating:

- Lifestyle Domains: 2-7 domains including physical exercise, cognitive training, dietary guidance, social activities, sleep hygiene, cardiovascular/metabolic risk management, and psychoeducation or stress management [30].

- Pharmacological Components: Omega-3, Tramiprosate, vitamin D, BBH-1001, epigallocatechin gallate, Souvenaid, and metformin [30].

- Target Populations: Cognitively normal at-risk individuals, those with subjective cognitive decline, mild cognitive impairment, or prodromal AD [30].

- Precision Medicine Approaches: Some trials enrich study populations with APOE-ε4 carriers to target interventions to those at highest genetic risk [30].

These combination approaches represent a frontier in dementia prevention, requiring sophisticated trial methodologies to address their multifaceted nature [30].

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for PSCI and Alzheimer's Investigation

| Reagent/Instrument | Application Context | Specific Function | Example Implementation |

|---|---|---|---|

| DeepSpeech ASR Engine | Speech biomarker studies | Automated transcription of speech samples with Singaporean English adaptation | Fine-tuned acoustic models for Singaporean English phonological features [26] |

| Montreal Cognitive Assessment (MoCA) | Cognitive screening | Brief cognitive assessment measuring multiple domains including executive function | Primary outcome measure in PSCI trials; cutoff ≤26 for MCI [26] [28] |

| Maraviroc | Pharmacological intervention | CCR5 antagonist with potential neuroprotective and plasticity-enhancing effects | 150 mg/day or 600 mg/day dosing in MARCH trial [28] |

| IC3 Digital Assessment | Cognitive phenotyping | Comprehensive digital assessment of domain-general and domain-specific deficits | 22-task battery implemented via Cognitron platform [31] |

| Cognitron Platform | Digital cognitive testing | State-of-the-art platform for remote neuropsychological testing | Host for IC3 assessment; enables large-scale population studies [31] |

| Canadian Occupational Performance Measure | Rehabilitation research | Structured interview for eliciting and rating individual goals | Client-centered outcome measure in cognitive rehabilitation trials [29] |

| 3T MRI with advanced sequences | Neuroimaging biomarkers | Assessment of cerebrovascular disease load, lesion topology, brain networks | Structural and functional MRI in multimodal biomarker studies [28] [31] |

| Blood biomarker panels (NFL, GFAP, p-tau) | Molecular biomarkers | Quantification of neuroaxonal injury, astrocytic activation, Alzheimer's pathology | Longitudinal tracking alongside cognitive assessments [31] |

Integrated Methodological Framework and Future Directions

The investigation of PSCI and its intersection with Alzheimer's pathology requires integrated methodological frameworks that combine multiple assessment modalities. The following diagram illustrates the relationships between assessment protocols, intervention approaches, and underlying pathological mechanisms in these complex populations:

Figure 2: Integrated framework for PSCI and Alzheimer's investigation.

Future methodological developments should focus on:

- Precision Medicine Approaches: Better stratification of patient subgroups based on biomarker profiles, including neuroimaging, blood biomarkers, and genetic factors [31] [30].

- Advanced Digital Methodologies: Refinement of speech analysis, digital cognitive assessment, and remote monitoring technologies to enable frequent, naturalistic assessment [26] [31].

- Multimodal Data Integration: Sophisticated analytical approaches for integrating data from cognitive assessments, neuroimaging, blood biomarkers, and clinical outcomes to identify novel biomarkers of recovery and treatment response [31].

- Hybrid Intervention Designs: Combination of pharmacological and non-pharmacological approaches tailored to individual patient characteristics and disease stages [30].

These protocols provide a foundation for advancing our understanding of the complex relationship between cerebrovascular disease and Alzheimer's pathology, enabling more precise detection and intervention strategies for these challenging conditions.

Automated analysis pipelines that integrate Automatic Speech Recognition (ASR) and Natural Language Processing (NLP) are transforming data extraction capabilities across research domains. These technologies enable the conversion of unstructured spoken language into structured, analyzable data, facilitating deeper insights at unprecedented scales. In the specific context of cognitive word identification protocols for journal analysis research, this integration provides a methodological framework for processing vast quantities of audio-derived text, mimicking and scaling the cognitive processes involved in skilled reading and word recognition [9] [33]. ASR acts as the perceptual front-end, transcribing acoustic signals into textual representations, while NLP serves as the cognitive back-end, extracting meaning, relationships, and features from the transcribed text [34].

The core value of these pipelines lies in their ability to transform ephemeral spoken communication—such as interviews, focus groups, conference presentations, and patient interactions—into a permanent, searchable, and quantifiable knowledge base [33]. This is particularly relevant for drug development and scientific research, where critical insights often emerge verbally in collaborative settings and are subsequently documented in written journals. By applying structured analysis to this textual data, researchers can identify patterns, trends, and evidence-based conclusions that would be impractical to uncover through manual analysis alone. The following sections detail the components, protocols, and applications of these pipelines for robust feature extraction.

Key Components of an Integrated ASR-NLP Pipeline

Automatic Speech Recognition (ASR) Systems

ASR, or speech-to-text, is the technology that converts spoken language into written text. Modern ASR systems are built on deep learning and neural networks, creating a complex pipeline to process raw audio data [34].

- Audio Preprocessing and Feature Extraction: The initial stage involves cleaning and normalizing the raw audio signal to reduce noise and enhance clarity. The audio waveform is then transformed into a sequence of numerical features, such as Mel-frequency cepstral coefficients (MFCCs) or spectrograms, which represent the speech content in a form suitable for machine learning models [34]. Recent research focuses on neural front-ends that can be trained directly with the acoustic model, though they require robust regularization like audio perturbation and STFT-domain masking to prevent overfitting [35].

- Acoustic Modeling: This component maps the extracted audio features to phonemes—the smallest units of sound in a language. Deep neural networks (e.g., CNNs, RNNs, transformers) have largely replaced older models like Hidden Markov Models (HMMs), as they can learn complex relationships directly from vast amounts of labeled speech data [34].

- Language Modeling and Decoding: The language model predicts the most likely sequence of words based on context, using statistical or neural approaches (e.g., RNNs, transformers). The decoder then combines the outputs of the acoustic and language models to generate the final transcription, using algorithms like beam search to find the most probable word sequence [34]. Contemporary systems often use end-to-end models, such as transformers with Connectionist Temporal Classification (CTC), which learn to map audio directly to text, simplifying the pipeline [34].
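
To make the CTC decoding step concrete, the following is a minimal sketch of greedy (best-path) decoding, a simpler stand-in for the beam search mentioned above. The per-frame probabilities, the toy alphabet, and the `_` blank symbol are illustrative assumptions, not output from any real acoustic model.

```python
from itertools import groupby

BLANK = "_"  # hypothetical blank symbol; real systems reserve a token index for it


def ctc_greedy_decode(frame_probs, alphabet):
    """Pick the most likely symbol per frame, merge repeats, then drop blanks."""
    # Best-path step: index of the highest probability in each frame
    best_path = [alphabet[max(range(len(p)), key=p.__getitem__)] for p in frame_probs]
    # Collapse runs of identical symbols into one occurrence
    collapsed = [symbol for symbol, _ in groupby(best_path)]
    # Blanks only separate genuine repeats, so they are removed last
    return "".join(s for s in collapsed if s != BLANK)


alphabet = [BLANK, "c", "a", "t"]
frame_probs = [
    [0.10, 0.70, 0.10, 0.10],  # c
    [0.10, 0.60, 0.20, 0.10],  # c (repeat, merged)
    [0.80, 0.10, 0.05, 0.05],  # blank
    [0.10, 0.10, 0.70, 0.10],  # a
    [0.10, 0.10, 0.10, 0.70],  # t
    [0.10, 0.10, 0.10, 0.70],  # t (repeat, merged)
]
print(ctc_greedy_decode(frame_probs, alphabet))  # prints "cat"
```

Greedy decoding ignores the language model entirely; beam search keeps several candidate sequences alive so that language-model scores can influence the final transcript.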

Natural Language Processing (NLP) for Feature Extraction

Once audio is transcribed to text, NLP techniques are applied to extract meaningful information. This process involves moving from raw text to structured data and insights.

- Text Preprocessing: This foundational step cleans and standardizes the text. It typically includes tokenization (splitting text into words or sub-word units), lemmatization (reducing words to their base form), and removing stop words (common but low-information words).

- Feature Extraction and Analysis: This is the core of the NLP stage, where algorithms identify and quantify specific features within the text. Techniques include:

  - Named Entity Recognition (NER): Identifying and classifying key entities such as person names, organizations, locations, dates, and, crucially for scientific research, drug names, protein targets, and diseases [33].

  - Relation Extraction: Determining the relationships between identified entities, for example, extracting drug-drug interactions or gene-disease associations from the literature.

  - Sentiment and Tone Analysis: Gauging the subjective content, such as the confidence level in a research conclusion or the sentiment expressed in patient feedback.

  - Topic Modeling: Uncovering latent thematic structures across a large corpus of documents (e.g., research journals), allowing researchers to track the evolution of scientific topics over time.
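
The preprocessing and entity-identification steps above can be sketched with the standard library alone. The stop-word list, the gazetteer entries, and the sample sentence are hypothetical and deliberately tiny. Lemmatization is omitted, and production pipelines would typically rely on a trained NER model rather than dictionary lookup.

```python
import re

# Hypothetical, illustration-only resources
STOP_WORDS = {"the", "a", "an", "of", "and", "was", "with", "in", "to"}
GAZETTEER = {"maraviroc": "Drug", "ccr5": "Target", "stroke": "Disease"}


def preprocess(text):
    """Lowercase, tokenize on alphanumeric runs, and drop stop words."""
    tokens = re.findall(r"[a-z0-9]+", text.lower())
    return [t for t in tokens if t not in STOP_WORDS]


def tag_entities(tokens):
    """Return (token, label) pairs for tokens found in the gazetteer."""
    return [(t, GAZETTEER[t]) for t in tokens if t in GAZETTEER]


transcript = "The patient was treated with Maraviroc, a CCR5 antagonist, after stroke."
tokens = preprocess(transcript)
print(tokens)
print(tag_entities(tokens))
```

Dictionary lookup is transparent and easy to audit, but it misses misspellings and ASR errors in drug names, which is one reason validation against manual annotation matters.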

Table 1: Evaluation Metrics for ASR-NLP Pipeline Components

| Pipeline Stage | Key Metric | Description | Interpretation |

|---|---|---|---|

| ASR | Word Error Rate (WER) | (Substitutions + Deletions + Insertions) / Total Words in Reference Transcript × 100% [34] | Lower WER indicates higher transcription accuracy. A WER of roughly 5% is often cited as approaching human-level performance. |

| NLP (Entity Extraction) | F1-Score | Harmonic mean of precision and recall: 2 × (Precision × Recall) / (Precision + Recall) | Balances the correctness of extracted entities (precision) with the completeness of extraction (recall). |

| NLP (Topic Modeling) | Coherence Score | Measures the semantic similarity between high-scoring words within a topic. | A higher score indicates that the topic is more human-interpretable and meaningful. |
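
The coherence score in Table 1 can be computed in several ways. The sketch below uses one widely used formulation, a UMass-style score based on how often a topic's top words co-occur in the same documents. The function name, the toy corpus, and the word lists are assumptions for illustration; the sketch also assumes every top word appears in at least one document.

```python
import math
from itertools import combinations


def umass_coherence(top_words, documents):
    """Sum of log((D(w_i, w_j) + 1) / D(w_i)) over ordered pairs of top words."""
    doc_sets = [set(doc) for doc in documents]

    def doc_freq(*words):
        # Number of documents containing all of the given words
        return sum(all(w in d for w in words) for d in doc_sets)

    score = 0.0
    # combinations() preserves list order, so `earlier` is the higher-ranked word
    for earlier, later in combinations(top_words, 2):
        score += math.log((doc_freq(earlier, later) + 1) / doc_freq(earlier))
    return score


documents = [
    ["memory", "recall", "dementia"],
    ["memory", "recall"],
    ["stroke", "lesion"],
    ["stroke", "lesion", "mri"],
]
print(umass_coherence(["memory", "recall"], documents))  # words that co-occur
print(umass_coherence(["memory", "lesion"], documents))  # words that never co-occur
```

Topics whose top words rarely share a document receive lower scores, which matches the interpretation given in the table.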

Experimental Protocols for Pipeline Validation

Protocol 1: Benchmarking ASR System Performance

Objective: To evaluate and select an ASR system based on its transcription accuracy and robustness in a research context.

Materials:

- A curated set of audio recordings (e.g., simulated research interviews, conference talks) representative of the target domain.

- Human-generated, ground-truth transcripts for the audio set.

- Access to candidate ASR systems (e.g., Google Cloud Speech-to-Text, OpenAI Whisper, NVIDIA NeMo).

Methodology:

- Audio Preparation: Standardize all audio files to a consistent format (e.g., 16 kHz sampling rate, mono channel). For a comprehensive test, include samples with varying acoustic challenges, such as different speakers, background noise levels, and use of domain-specific terminology.

- Transcription: Process each audio file through the candidate ASR systems to generate machine transcripts.

- Accuracy Calculation: For each ASR output, compute the Word Error Rate (WER) by aligning the machine transcript with the human-generated ground truth and counting the substitutions, deletions, and insertions required to match them [34].

- Analysis: Compare the WER across systems and acoustic conditions. The system with the lowest overall WER and most consistent performance across challenging conditions should be selected for the production pipeline.
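
The accuracy calculation in this protocol can be sketched as a word-level edit distance. This is a minimal illustration that assumes whitespace tokenization and simple lowercasing; the function name and the example sentences are hypothetical, and real benchmarks usually apply more thorough text normalization (punctuation, numerals) before scoring.

```python
def word_error_rate(reference, hypothesis):
    """WER = (substitutions + deletions + insertions) / words in the reference."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")
    # prev[j] holds the edit distance between the reference prefix seen so far and hyp[:j]
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # substitution, or match when cost is 0
            ))
        prev = curr
    return prev[-1] / len(ref)


reference = "the patient recalled five words"
hypothesis = "the patient recall five new words"
print(f"{word_error_rate(reference, hypothesis):.0%}")  # prints "40%"
```

Here one substitution ("recalled" to "recall") and one insertion ("new") against a five-word reference give a WER of 40%. Because insertions count as errors, WER can exceed 100% on very poor transcripts.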

Protocol 2: Validating NLP Feature Extraction against Manual Annotation

Objective: To ascertain the precision and recall of the NLP feature extraction module, using human annotation as the gold standard.

Materials:

- A corpus of text documents (e.g., transcribed interviews, journal article abstracts).

- A predefined schema of entities and relationships to be extracted (e.g., [Drug], [Target], [Effect]).

- Annotation software (e.g., BRAT, Prodigy).

Methodology:

- Gold Standard Creation: Have domain experts (e.g., drug development professionals) manually annotate the text corpus according to the predefined schema. This creates the ground truth against which the NLP system will be judged.

- Automated Extraction: Run the same text corpus through the NLP pipeline to automatically extract the entities and relationships.

- Performance Calculation: Compare the automated output to the gold standard annotations. Calculate precision (what percentage of extracted entities are correct), recall (what percentage of all gold-standard entities were extracted), and the F1-score (the harmonic mean of precision and recall).

- Iteration: Use the results to refine the NLP models, focusing on the entity types or relationships with low performance scores, and re-validate until satisfactory performance is achieved.
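
The performance calculation in this protocol reduces to set comparisons once annotations are represented as (text, label) pairs. The sketch below assumes exact-match scoring, and the gold and predicted annotations are invented for illustration. Partial-span matching, which some evaluations allow, is not covered.

```python
def precision_recall_f1(gold, predicted):
    """Exact-match precision, recall, and F1 for two collections of annotations."""
    gold, predicted = set(gold), set(predicted)
    true_positives = len(gold & predicted)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(gold) if gold else 0.0
    denominator = precision + recall
    f1 = 2 * precision * recall / denominator if denominator else 0.0
    return precision, recall, f1


# Hypothetical annotations following the [Drug], [Target], [Effect] schema
gold = {
    ("maraviroc", "Drug"),
    ("CCR5", "Target"),
    ("neuroprotection", "Effect"),
    ("plasticity", "Effect"),
}
predicted = {
    ("maraviroc", "Drug"),
    ("CCR5", "Target"),
    ("MoCA", "Drug"),  # false positive: an assessment mislabeled as a drug
}

precision, recall, f1 = precision_recall_f1(gold, predicted)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

Reporting these three numbers per entity type, rather than only in aggregate, makes it easier to see which parts of the schema need refinement in the iteration step.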

Visualization of the ASR-NLP Pipeline Workflow

The following diagram illustrates the sequential stages and feedback loops in a robust automated analysis pipeline.

ASR-NLP Analysis Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for Building an ASR-NLP Analysis Pipeline

| Tool Name | Type | Primary Function | Application Note |

|---|---|---|---|

| OpenAI Whisper [33] | Open-Source ASR Model | Transcribes audio across dozens of languages with robust performance in challenging acoustic conditions. | Ideal for researchers needing high-quality, offline transcription without reliance on cloud APIs. Requires technical setup. |

| NVIDIA NeMo [34] | Open-Source ASR Toolkit | A modular toolkit for building, training, and fine-tuning state-of-the-art ASR models. | Best for teams requiring custom acoustic models adapted to specific domain vocabulary (e.g., medical terminology). |

| spaCy | Open-Source NLP Library | Provides industrial-strength, fast NLP features including tokenization, NER, and dependency parsing. | Excellent for prototyping and deploying production-grade NLP pipelines for feature extraction from transcripts. |

| V7 Go [33] | Commercial Platform | An enterprise platform that combines ASR transcription with downstream AI agents for analysis and workflow automation. | Suitable for organizations seeking an all-in-one solution to turn conversations into structured, actionable knowledge without building a custom pipeline. |

| Google Cloud Speech-to-Text [34] | Cloud ASR API | Provides real-time, multilingual transcription via a powerful, managed API. | Offers high accuracy and ease of integration for projects where cloud processing is acceptable and scalability is key. |

Application in Drug Development and Journal Analysis

The application of ASR-NLP pipelines is particularly transformative in drug development and the analysis of scientific literature. These pipelines can process diverse audio and text sources to accelerate research and development.

- Accelerating Literature Review: Researchers can transcribe and analyze thousands of hours of conference presentations, webinar recordings, and interview podcasts. NLP models can then extract key findings, drug efficacy results, and adverse event mentions, creating a structured database from otherwise unstructured media. This supports systematic reviews and meta-analyses at a scale previously unattainable [36].

- Enhancing Clinical Trials and Pharmacovigilance: Patient interviews and clinician notes during trials are rich sources of qualitative data on drug efficacy and side effects. An ASR-NLP pipeline can transcribe these interactions and automatically extract mentions of specific symptoms, patient-reported outcomes, and quality-of-life measures, providing real-time, quantitative insights for safety monitoring and trial optimization [33] [36].

- Drug Repurposing: By analyzing vast corpora of scientific journals and clinical notes, NLP can identify novel connections between existing drugs and diseases. For instance, BenevolentAI used AI to identify baricitinib, a rheumatoid arthritis drug, as a candidate for repurposing against COVID-19 [36]. An integrated pipeline that also processes scientific discourse (e.g., conference Q&A sessions) could potentially surface such insights sooner.

The integration of ASR and NLP creates a powerful tool for knowledge discovery. By automating the conversion of spoken language into structured data, these pipelines allow researchers to leverage the full spectrum of scientific communication, ultimately accelerating the pace of discovery and development in fields like medicine and pharmacology.

Navigating Methodological Challenges: Ensuring Validity and Reliability