Convergent Validity in Cognitive Assessment: A Research and Drug Development Framework

Abstract

This article provides a comprehensive examination of convergent validity for researchers and drug development professionals working with cognitive assessment tools. It covers the foundational role of convergent validity within the broader construct validity framework, detailing established and emerging methodological approaches for its evaluation, including correlation coefficients, factor analysis, and structural equation modeling. The content addresses common challenges and optimization strategies, particularly for novel and digital tools, and presents a comparative analysis of validation evidence across traditional, experimental, and computerized instruments. By synthesizing theoretical principles with practical applications, this resource aims to enhance the rigor of cognitive assessment in clinical trials, biomarker development, and therapeutic innovation for neurological and psychiatric disorders.

Defining the Bedrock: What is Convergent Validity and Why is it Fundamental?

In the scientific fields of clinical neuropsychology and psychometrics, convergent validity is not a standalone concept but a fundamental component of construct validity. It provides critical evidence that a measurement tool accurately captures the theoretical construct it is intended to measure by showing strong relationships with other measures of the same or similar constructs [1] [2] [3]. For researchers and professionals developing and evaluating cognitive assessment tools, demonstrating robust convergent validity is a cornerstone for establishing a test's credibility and clinical utility.

The table below summarizes the core conceptual relationships that define convergent validity and its counterpart, discriminant validity.

| Validity Type | Core Question | Evidence Demonstrated By | Ideal Statistical Outcome |
|---|---|---|---|
| Convergent Validity | Do two measures that theoretically should be related, actually relate? | A high positive correlation between scores from different tests measuring the same/similar construct [1] [2]. | Moderate to high positive correlation (e.g., Pearson's r > 0.50) [2]. |
| Discriminant Validity | Do two measures that theoretically should not be related, actually remain unrelated? | A low or non-significant correlation between scores from tests measuring different constructs [1] [4]. | Low or non-significant correlation [1]. |

The Researcher's Toolkit: Establishing Convergent Validity

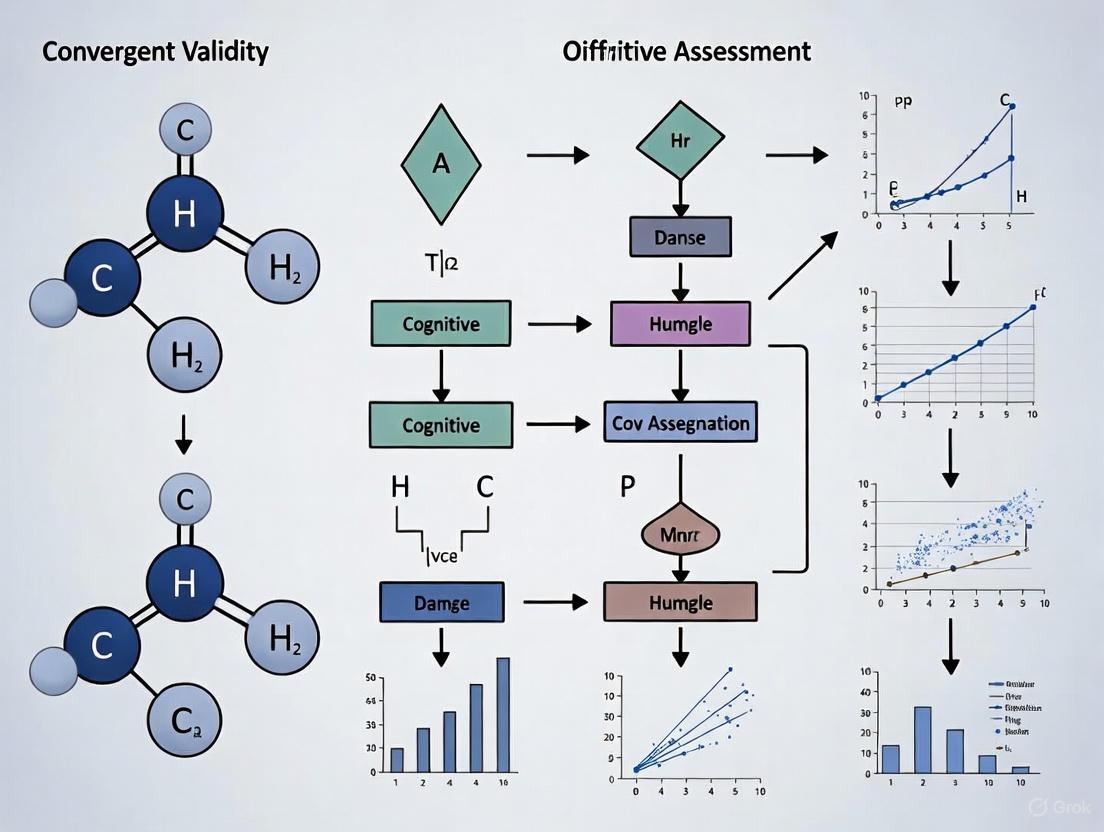

Establishing convergent validity requires a methodological approach, leveraging specific statistical techniques and research designs. The following workflow and table detail the essential components for building this evidence.

Diagram 1: Convergent validity assessment workflow.

| Tool or Method | Primary Function | Application Example |
|---|---|---|
| Correlation Coefficients (Pearson's r, Spearman's ρ) | Quantifies the strength and direction of the linear relationship between scores from two measures [2]. | Used to show that a new digital memory test's scores strongly correlate (r > 0.6) with a well-validated, traditional memory test [2]. |
| Factor Analysis (EFA/CFA) | Identifies underlying constructs (factors) that explain the pattern of correlations among multiple variables [2]. | Used to demonstrate that a new 10-item cognitive screener and a longer established battery both load highly (e.g., >0.5) onto the same "global cognition" factor [5]. |
| Multitrait-Multimethod Matrix (MTMM) | A comprehensive matrix of correlations that assesses both convergent and discriminant validity simultaneously by examining different traits measured by different methods [2]. | Used to validate a new questionnaire for depression by showing it correlates highly with other depression measures (convergent) but less so with measures of anxiety (discriminant) [2]. |
| Established "Gold Standard" Tests | Serves as a validated benchmark against which a new or alternative test is compared [6]. | A new, brief digital drawing test (RoCA) is validated against the extensive paper-based Addenbrooke's Cognitive Examination (ACE-3) [6]. |

Comparative Data in Cognitive Assessment

The principles of convergent validity are actively applied in the development and validation of modern cognitive assessment tools, from short-form paper tests to digital solutions. The table below compares several contemporary tools and the evidence supporting them.

| Assessment Tool | Format & Purpose | Convergent Validity Evidence |
|---|---|---|
| NUCOG10 [5] | 10-item short form of a paper-based cognitive screener for dementia. | The short form maintained "high convergent validity" with the original, full-length NUCOG assessment, demonstrating a strong relationship between the two versions [5]. |
| Rapid Online Cognitive Assessment (RoCA) [6] | Remote, self-administered digital drawing battery for cognitive screening. | Classified patients similarly to gold-standard paper tests (ACE-3, MoCA) with an Area Under the Curve (AUC) of 0.81, indicating good classification accuracy relative to established measures [6]. |
| Brief International Cognitive Assessment for MS (BICAMS) [7] | Brief paper-and-pencil battery for cognitive impairment in Multiple Sclerosis. | The individual tests (e.g., Symbol Digit Modalities Test) show good "known-groups validity," a form of construct validity evidence, and the battery is consistently associated with real-world outcomes like employment status [7]. |
| Computerized Neuropsychological Assessment Devices (CNADs) [7] | Digital platforms for cognitive testing (e.g., NeuroTrax, CBB). | Studies show strong psychometric properties. For instance, the global score from the NeuroTrax battery effectively differentiates healthy individuals from those with MS, supporting its validity for measuring the intended construct [7]. |

Experimental Protocols for Validation

For researchers aiming to replicate or design validation studies, the following protocols offer a detailed methodology.

Protocol 1: Validating a Short-Form Cognitive Tool (e.g., NUCOG10)

This protocol outlines the process for creating and validating an abbreviated version of a longer assessment [5].

Participant Recruitment and Sampling:

- Recruit a participant cohort that includes both healthy controls and individuals with the target condition (e.g., dementia). A study validating a dementia tool recruited 132 healthy controls and 191 individuals with dementia [5].

- Randomly split the cohort into a "training" set (e.g., ~70%) for development and a "testing" set (e.g., ~30%) for validation [5].

Item Selection and Short-Form Development:

- Administer the full, original test to all participants.

- Use Receiver Operating Characteristic (ROC) analysis to compute the predictive power of each individual test item. Rank items by their Area Under the Curve (AUC) values.

- Select the top-performing items to create short-form versions of predetermined length (e.g., 5, 10, and 15 items), ensuring items from each cognitive domain are retained [5].

Validation and Comparison:

- Administer the new short-form to the validation cohort.

- Calculate key psychometric properties, including sensitivity and specificity, to determine how well the short-form distinguishes between groups (e.g., healthy vs. dementia).

- Establish the convergent validity by statistically comparing scores from the short-form to scores from the original, long-form test, demonstrating a strong correlation between them [5].
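
The item-selection step of this protocol can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure used in [5]: the function names (`auc`, `split_cohort`, `rank_items`), the three item names, and all scores are hypothetical, and the AUC is computed with the pairwise (Mann-Whitney) formulation, treating controls as the higher-scoring group. The requirement that each cognitive domain stays represented is left out for brevity.

```python
import random

def auc(higher_group, lower_group):
    """Probability that a random member of higher_group outscores a random
    member of lower_group (ties count one half). Equals the ROC AUC."""
    wins = 0.0
    for a in higher_group:
        for b in lower_group:
            if a > b:
                wins += 1.0
            elif a == b:
                wins += 0.5
    return wins / (len(higher_group) * len(lower_group))

def split_cohort(ids, train_fraction=0.7, seed=0):
    """Shuffle participant ids and split them into training and testing sets."""
    shuffled = list(ids)
    random.Random(seed).shuffle(shuffled)
    cut = round(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

def rank_items(item_scores, has_condition, train_ids):
    """Rank items by how well they separate controls from cases,
    using training-set participants only."""
    ranking = []
    for item, scores in item_scores.items():
        controls = [scores[i] for i in train_ids if not has_condition[i]]
        cases = [scores[i] for i in train_ids if has_condition[i]]
        ranking.append((auc(controls, cases), item))
    return sorted(ranking, reverse=True)

# Hypothetical data: 10 participants (ids 0-4 controls, 5-9 cases), 3 items.
has_condition = {i: i >= 5 for i in range(10)}
item_scores = {
    "recall":      dict(enumerate([3, 3, 3, 3, 3, 0, 1, 0, 1, 0])),
    "orientation": dict(enumerate([2, 2, 1, 2, 2, 1, 1, 2, 1, 1])),
    "naming":      dict(enumerate([2, 2, 2, 2, 2, 2, 2, 2, 2, 2])),
}

train_ids, test_ids = split_cohort(range(10))
ranking = rank_items(item_scores, has_condition, train_ids)
short_form = [item for _, item in ranking[:2]]  # keep the two best items
print(ranking)
print("short form:", short_form)
```

Ranking on the training set alone keeps the testing set untouched, so the sensitivity, specificity, and convergent validity of the resulting short form are estimated on participants who played no part in choosing its items.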

Protocol 2: Validating a Novel Digital Assessment (e.g., RoCA)

This protocol focuses on validating a digital tool against established, non-digital standards [6].

Study Design and Participant Enrollment:

- Conduct an open-label study, enrolling participants from clinical settings (e.g., neurology clinics). A typical study might enroll dozens of patients with a wide age range [6].

- Apply strict inclusion/exclusion criteria (e.g., English fluency, no acute psychiatric disorder, no delirium) to create a well-defined sample [6].

Concurrent Administration of Tests:

- In a controlled environment, each participant completes both the novel digital assessment and the established "gold standard" test.

- The digital test (e.g., RoCA) should be self-administered via a touchscreen device without assistance from the examiner, so that the results reflect how the tool performs when used independently.

- The established tests (e.g., ACE-3 or MoCA) are administered and scored by trained experts according to their standard guidelines [6].

Statistical Analysis and Classification Accuracy:

- The primary analysis involves comparing the classification of cognitive impairment from the digital tool against the classification from the gold-standard test.

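- Generate a Receiver Operating Characteristic (ROC) curve and calculate the Area Under the Curve (AUC). An AUC of 0.81, for example, indicates that the digital tool discriminates well between participants whom the gold-standard test classifies as impaired and unimpaired [6].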

- Report the tool's sensitivity (ability to correctly identify those with impairment) and specificity (ability to correctly identify those without impairment) at the optimal score cut-off [6].
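
The cut-off analysis in this protocol can be illustrated as follows. The sketch makes two assumptions that the protocol does not state: lower digital scores indicate impairment, and the "optimal" cut-off is the one that maximizes Youden's J (sensitivity + specificity − 1), which is one common criterion among several. The scores and gold-standard labels are hypothetical.

```python
def sens_spec(scores, impaired, cutoff):
    """Treat score <= cutoff as a positive screen; return (sensitivity, specificity)
    against the gold-standard labels in `impaired`."""
    tp = sum(1 for s, y in zip(scores, impaired) if y and s <= cutoff)
    fn = sum(1 for s, y in zip(scores, impaired) if y and s > cutoff)
    tn = sum(1 for s, y in zip(scores, impaired) if not y and s > cutoff)
    fp = sum(1 for s, y in zip(scores, impaired) if not y and s <= cutoff)
    return tp / (tp + fn), tn / (tn + fp)

def best_cutoff(scores, impaired):
    """Scan every observed score as a candidate cut-off and keep the one with
    the largest Youden's J. Returns (J, cutoff, sensitivity, specificity)."""
    best = None
    for cutoff in sorted(set(scores)):
        sensitivity, specificity = sens_spec(scores, impaired, cutoff)
        j = sensitivity + specificity - 1
        if best is None or j > best[0]:
            best = (j, cutoff, sensitivity, specificity)
    return best

# Hypothetical digital-test scores and gold-standard classifications.
scores   = [28, 22, 24, 18, 30, 20, 27, 26, 29, 15, 31]
impaired = [False, True, False, True, False, True,
            False, True, False, True, False]

j, cutoff, sensitivity, specificity = best_cutoff(scores, impaired)
print(f"cut-off <= {cutoff}: sensitivity = {sensitivity:.2f}, "
      f"specificity = {specificity:.2f}, J = {j:.2f}")
```

A cut-off chosen and evaluated on the same sample tends to look better than it will in new participants, so the reported sensitivity and specificity are best confirmed in an independent cohort.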

A Framework of Validity Evidence

The following diagram illustrates how convergent validity fits within the broader construct validity framework, working alongside other types of evidence to support the meaningfulness of test scores.

Diagram 2: A hierarchy of validity evidence.

Convergent Validity's Role in the Construct Validity Framework

In the scientific evaluation of any assessment tool, particularly in cognitive research, construct validity is paramount. It answers a fundamental question: does this instrument truly measure the theoretical concept, or "construct," it claims to measure? Constructs such as intelligence, sustained attention, or cognitive impairment cannot be measured directly but must be inferred from observable indicators [8]. Establishing construct validity is therefore a critical, multi-faceted process that provides confidence in the meaning of a test's scores.

Within this framework, convergent validity functions as a crucial pillar of evidence. It is defined as the degree to which two different measures that are theoretically supposed to be related are, in fact, empirically related [9] [2]. A high correlation between scores on a new test and scores on an established test of the same construct provides strong evidence that the new tool is effectively capturing the intended concept. Discriminant validity (sometimes called divergent validity) is the complementary pillar: it demonstrates that the test does not correlate strongly with measures of theoretically distinct constructs [10] [2]. Together, convergent and discriminant validity form the core of a modern argument for construct validity, showing both where a test's scores should align with other measures and where they should not [9] [8].

The following diagram illustrates this foundational relationship within the construct validity framework.

Experimental Protocols for Establishing Convergent Validity

Establishing convergent validity requires a formal validation strategy. The following workflow outlines the standard methodological sequence, from hypothesis formulation to statistical evaluation.

The process begins with a clear theoretical foundation, positing that two measures assess the same or highly similar constructs [9]. Researchers must then select an appropriate validation measure, often an established "gold standard" instrument with proven validity [8]. The subsequent statistical analysis typically involves calculating correlation coefficients. Pearson's r is used for continuous, normally distributed data, while Spearman's ρ is suitable for ordinal data or when normality assumptions are not met [2]. A correlation coefficient generally above 0.5 is considered evidence of convergent validity, though the exact threshold can vary by field [2]. For more complex analyses, researchers may use Factor Analysis to see if items from different tests load onto the same underlying factor, or employ a Multitrait-Multimethod Matrix (MTMM) to assess convergent and discriminant validity simultaneously [9] [2].
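
The two correlation coefficients named above can be computed without any external library, as the following sketch shows. The function names and the eight pairs of scores are hypothetical and chosen only for illustration; Spearman's ρ is obtained here as Pearson's r applied to average ranks, and the 0.5 threshold is the rule of thumb quoted in the text rather than a universal standard.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson product-moment correlation for two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def average_ranks(values):
    """Ranks starting at 1; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks

def spearman_rho(x, y):
    """Spearman's rho: Pearson's r computed on average ranks."""
    return pearson_r(average_ranks(x), average_ranks(y))

# Hypothetical scores for eight participants (illustrative only).
new_test    = [12, 15, 9, 20, 17, 11, 14, 18]
established = [22, 26, 18, 29, 27, 20, 23, 28]

r = pearson_r(new_test, established)
rho = spearman_rho(new_test, established)
print(f"Pearson r = {r:.2f}, Spearman rho = {rho:.2f}")
print("meets the r > 0.5 rule of thumb:", r > 0.5)
```

In practice a statistics package would also report a p-value or confidence interval; the point of the sketch is only to make the arithmetic behind the two coefficients explicit.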

Convergent Validity in Cognitive Assessment Tool Research

The application of convergent validity is vividly illustrated in the development and validation of contemporary cognitive assessment tools, including novel digital health technologies. The following case studies demonstrate its role across diverse methodologies.

Case Study: Validating a Short-Form Cognitive Screening Tool

A 2025 study by Li et al. aimed to develop and validate abbreviated versions of the Neuropsychiatry Unit Cognitive Assessment Tool (NUCOG) [5]. The research team created 5-item, 10-item, and 15-item short-form versions and assessed their psychometric properties. A key validation step was establishing the convergent validity of these new short forms by comparing their scores with the original, full-length NUCOG. The study concluded that all short-form versions demonstrated "high convergent validity," with the 10-item version (NUCOG10) providing an ideal balance of breadth and brevity while maintaining sensitivity and specificity comparable to the original [5]. This use of convergent validity allows clinicians to trust that the shorter tool measures the same core cognitive constructs as the longer, established assessment.

Case Study: Validating a Smartphone-Based Cognitive Application

In the realm of digital health, Min et al. (2025) sought to validate "Brain OK," a smartphone-based application for assessing cognitive function in elderly individuals [11]. The experimental protocol involved administering both the Brain OK test and the Montreal Cognitive Assessment (MoCA), a well-validated paper-and-pencil cognitive screening tool, to 88 participants aged over 60. To assess convergent validity, the researchers conducted a statistical analysis of the correlation between the total scores of the two tests. They reported a highly significant positive association, with a correlation coefficient of 0.904, providing strong evidence that the smartphone application measures a construct highly similar to that measured by the traditional MoCA [11].
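
A point estimate such as r = 0.904 is easier to interpret alongside its sampling uncertainty. The sketch below applies the standard Fisher z-transformation to the two figures reported above (r = 0.904, n = 88). The resulting interval is an illustration computed here, not a result reported in [11], and it assumes a Pearson correlation on approximately bivariate-normal scores; `fisher_ci` is a hypothetical helper name.

```python
from math import atanh, sqrt, tanh

def fisher_ci(r, n, z_crit=1.96):
    """Approximate confidence interval for a Pearson correlation using the
    Fisher z-transformation (z_crit = 1.96 gives a 95% interval)."""
    if n <= 3:
        raise ValueError("n must be greater than 3")
    z = atanh(r)                  # transform r to the z scale
    se = 1 / sqrt(n - 3)          # standard error on the z scale
    return tanh(z - z_crit * se), tanh(z + z_crit * se)

lower, upper = fisher_ci(0.904, 88)
print(f"95% CI for r = 0.904 with n = 88: [{lower:.3f}, {upper:.3f}]")
```

When the lower bound of the interval stays well above the conventional 0.5 threshold, the convergent validity claim does not hinge on the particular sample drawn.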

Case Study: An AI-Enhanced Digit Vigilance Test

Pushing the boundaries further, a 2025 study developed an Artificial Intelligence-based Computerized Digit Vigilance Test (AI-CDVT) to measure sustained attention in older adults [12]. This tool integrated traditional performance metrics (reaction time, accuracy) with AI-derived behavioral features (eye blink rate, head movement, gaze) from video recordings. The experimental protocol for establishing its convergent validity involved correlating the new AI-CDVT score with several established neuropsychological tests, including the MoCA, the Stroop Color Word Test (SCW), and the Color Trails Test (CTT). The resulting Pearson correlation coefficients were -0.42 with MoCA, -0.31 with SCW, and 0.46-0.61 with the CTT, demonstrating low-to-moderate relationships with related but distinct constructs and a stronger correlation with a test of sustained attention (CTT). This pattern supports the tool's convergent validity for measuring attention [12].

Quantitative Comparison of Cognitive Tool Validation Studies

The table below synthesizes the key metrics and outcomes from the featured case studies, allowing for a direct comparison of their validation approaches and results.

Table 1: Comparative Data from Cognitive Assessment Tool Validation Studies

| Assessment Tool | Validation Criterion | Correlation Coefficient / Key Metric | Study Outcome |
|---|---|---|---|
| NUCOG10 (Short-form) | Original NUCOG | High convergent validity reported (specific coefficient not provided) | Sensitivity: 0.98, Specificity: 0.95 for dementia detection [5] |
| Brain OK (Smartphone App) | Montreal Cognitive Assessment (MoCA) | Pearson's r = 0.904 (p < 0.001) | AUC: 0.941; Sensitivity: 0.958, Specificity: 0.925 [11] |
| AI-CDVT (AI-Based Test) | Color Trails Test (CTT) | Pearson's r = 0.46 to 0.61 | Test-Retest Reliability (ICC): 0.78 [12] |

The Scientist's Toolkit: Essential Reagents for Validation Research

Beyond specific tests, conducting robust validation studies requires a suite of methodological "reagents." The following table details these essential components and their functions in establishing instrument validity.

Table 2: Key Methodological Components for Validation Research

| Research Component | Function in Validation | Exemplars from Literature |
|---|---|---|
| Criterion Measure ("Gold Standard") | Serves as the established benchmark against which the new tool's scores are correlated [8]. | Montreal Cognitive Assessment (MoCA) [11] [12], Original NUCOG [5], Color Trails Test [12]. |
| Statistical Correlation Analysis | Quantifies the strength and direction of the relationship between the new tool and the criterion measure [2]. | Pearson's correlation coefficient [11] [2], Spearman's rank correlation [2]. |
| Reliability Assessment | Establishes the consistency and stability of the new tool's scores, a prerequisite for validity. | Intraclass Correlation Coefficient (ICC) for test-retest reliability [12], Cronbach's alpha for internal consistency [13]. |
| Divergent Validity Test | Provides evidence for construct validity by demonstrating a lack of correlation with measures of dissimilar constructs [10] [2]. | Correlating an IT skills test with an IQ test [10], or a depression scale with an intelligence test [14]. |
| Multitrait-Multimethod Matrix (MTMM) | A comprehensive framework for evaluating convergent and discriminant validity simultaneously by assessing multiple traits with multiple methods [9]. | Campbell and Fiske's original framework for assessing construct validity [9]. |

Convergent validity is not merely a statistical exercise; it is a fundamental component of the construct validity argument, providing evidence that a tool measures its intended theoretical construct. As the validation of short-form screening tools, smartphone applications, and AI-enhanced tests shows, establishing a strong correlation with established measures is a key step in building scientific confidence in any new assessment instrument. For researchers in cognitive science and drug development, a rigorous validation protocol that integrates convergent with discriminant evidence is indispensable. It ensures that the tools used to gauge cognitive outcomes, whether in clinical trials or basic research, are fit for purpose, which lends credibility and interpretability to the data they generate.

In the development and evaluation of cognitive assessment tools, establishing construct validity is paramount to ensure that a test accurately measures the theoretical construct it claims to measure. This process rests on two fundamental pillars: convergent validity and discriminant validity [10] [15]. Convergent validity is the degree to which two different measures that are designed to assess the same construct agree with each other, demonstrated by a strong positive correlation [10] [9]. Discriminant validity (also called divergent validity) is the degree to which a measure does not correlate strongly with measures of theoretically distinct, unrelated constructs [16].

For researchers and drug development professionals, these concepts are not merely academic; they are critical for validating that a cognitive assessment, whether traditional or a novel digital tool, is a precise and specific instrument. A test must simultaneously converge with measures of the same ability and diverge from measures of different abilities to have strong overall construct validity [10] [15].

Conceptual Foundations and Analytical Frameworks

Defining the Core Concepts

The following table summarizes the key characteristics of convergent and discriminant validity:

Table 1: Core Characteristics of Convergent and Discriminant Validity

| Feature | Convergent Validity | Discriminant Validity |
|---|---|---|
| Primary Question | Does this test correlate with other tests that measure the same construct? | Does this test not correlate with tests that measure different constructs? |
| Purpose | To provide evidence that the test is capturing the intended construct [16]. | To demonstrate the uniqueness of the construct, showing it is distinct from others [16]. |
| Expected Correlation | Strong positive correlation [10]. | Weak or near-zero correlation [16]. |
| Analogical Goal | "Finding your friends" – aligning with similar measures. | "Avoiding strangers" – distinguishing from dissimilar measures [15]. |

The Multitrait-Multimethod Matrix (MTMM)

A robust method for evaluating both types of validity simultaneously is the Multitrait-Multimethod Matrix (MTMM), introduced by Campbell and Fiske (1959) [9]. This framework involves measuring multiple traits (e.g., working memory, inhibitory control) using multiple methods (e.g., self-report, performance-based tasks, neuroimaging). The resulting correlation matrix allows researchers to inspect:

- Convergent Validity: High correlations between different methods measuring the same trait (monotrait-heteromethod correlations).

- Discriminant Validity: Low correlations between different traits measured by the same method (heterotrait-monomethod correlations) and between different traits measured by different methods (heterotrait-heteromethod correlations) [17] [9].
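
The three kinds of correlation listed above can be separated mechanically once every measure is labelled with its trait and its method. The sketch below is a minimal illustration: the function name `classify_mtmm`, the two traits, the two methods, and all six correlation values are hypothetical, and comparing group means is a simplification of the pairwise comparisons a full MTMM analysis would make.

```python
from statistics import mean

def classify_mtmm(correlations):
    """Sort pairwise correlations into the three MTMM categories.
    `correlations` maps ((trait_a, method_a), (trait_b, method_b)) -> r."""
    groups = {
        "monotrait-heteromethod": [],    # convergent evidence
        "heterotrait-monomethod": [],    # discriminant evidence (shared method)
        "heterotrait-heteromethod": [],  # discriminant evidence (nothing shared)
    }
    for ((trait_a, method_a), (trait_b, method_b)), r in correlations.items():
        same_trait = trait_a == trait_b
        same_method = method_a == method_b
        if same_trait and not same_method:
            groups["monotrait-heteromethod"].append(r)
        elif not same_trait and same_method:
            groups["heterotrait-monomethod"].append(r)
        elif not same_trait and not same_method:
            groups["heterotrait-heteromethod"].append(r)
        # Same trait and same method would be a reliability estimate; skipped.
    return groups

# Hypothetical correlations: two traits, each measured by two methods.
WM_TASK, WM_SELF = ("working memory", "task"), ("working memory", "self-report")
IC_TASK, IC_SELF = ("inhibitory control", "task"), ("inhibitory control", "self-report")
correlations = {
    (WM_TASK, WM_SELF): 0.62,
    (IC_TASK, IC_SELF): 0.55,
    (WM_TASK, IC_TASK): 0.30,
    (WM_SELF, IC_SELF): 0.35,
    (WM_TASK, IC_SELF): 0.15,
    (WM_SELF, IC_TASK): 0.12,
}

summary = {name: mean(values) for name, values in classify_mtmm(correlations).items()}
for name, value in summary.items():
    print(f"{name}: mean r = {value:.3f}")
```

In this made-up matrix the monotrait-heteromethod correlations are the largest, which is the pattern expected when both convergent and discriminant validity hold. Heterotrait-monomethod values that approach the convergent ones would instead point to shared method variance.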

Experimental Evidence from Cognitive Neuroscience

Insights from a Large-Scale Cognitive Test Battery

A seminal study by the Consortium for Neuropsychiatric Phenomics (CNP) provides a concrete example of how these validity concepts are applied in practice. The study administered 23 traditional and experimental cognitive tests to a large sample of community volunteers (n=1,059) and patients with psychiatric diagnoses (n=137) to examine convergent validity through factor analysis [18] [19].

Table 2: Selected Experimental Cognitive Tests and Their Validity Evidence from the CNP Study

| Cognitive Domain | Example Experimental Test | Key Finding on Convergent Validity |
|---|---|---|
| Working Memory | Spatial and Verbal Capacity Tasks; Spatial and Verbal Maintenance and Manipulation Tasks | Convergent validity was generally supported; tests factored together with traditional working memory measures [18]. |
| Memory | Remember–Know; Scene Recognition | Convergent validity was supported; tests factored together with traditional memory measures [18]. |
| Inhibitory Control | Stop-Signal Task (SST); Balloon Analogue Risk Task (BART); Delay Discounting Task | Several measures showed weak relationships with all other tests, indicating poor convergent validity for some experimental inhibitory control tasks [18]. |

Experimental Protocol & Methodology:

- Test Battery Administration: Participants completed a comprehensive battery including traditional tests (e.g., subtests from the Wechsler Adult Intelligence Scale and Memory Scale, California Verbal Learning Test-II) and experimental tests designed to measure response inhibition, working memory, and memory [18].

- Data Analysis: Researchers conducted an Exploratory Factor Analysis (EFA) on one randomly selected half of the community sample to identify the underlying factor structure without predefined restrictions.

- Validation: A subsequent Multigroup Confirmatory Factor Analysis (MGCFA) was performed on the second half of the sample to confirm the identified factor structure and test its invariance across community volunteers and patient groups [18].

Interpretation: The emergence of a stable three-factor structure (verbal/working memory, inhibitory control, and memory) supported the convergent validity of most tests of working memory and memory. However, the failure of several inhibitory control tasks to correlate strongly with each other or with traditional measures suggests they may be tapping into more specific, non-overlapping cognitive processes, highlighting the complexity of measuring the "inhibitory control" construct [18].
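
The idea that tests of one construct "factor together" while an unrelated test stands apart can be made concrete with a toy calculation. The sketch below is not the EFA or MGCFA used in the CNP study: as a simpler stand-in it extracts the first principal component of a hypothetical 4 × 4 correlation matrix by power iteration and reports each test's loading on it. The matrix values, test labels, and function name are assumptions made for illustration.

```python
from math import sqrt

def first_component_loadings(corr, iterations=200):
    """Loadings of each variable on the first principal component of a
    correlation matrix, found by power iteration (a PCA stand-in, not EFA)."""
    n = len(corr)
    v = [1.0 / sqrt(n)] * n
    for _ in range(iterations):
        w = [sum(corr[i][j] * v[j] for j in range(n)) for i in range(n)]
        norm = sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    # Rayleigh quotient gives the eigenvalue for the converged unit vector.
    eigenvalue = sum(
        v[i] * sum(corr[i][j] * v[j] for j in range(n)) for i in range(n)
    )
    return [sqrt(eigenvalue) * x for x in v]

# Hypothetical correlations: three memory tests that inter-correlate at 0.6
# and one experimental inhibitory-control task that correlates 0.1 with each.
tests = ["memory A", "memory B", "memory C", "inhibition task"]
corr = [
    [1.0, 0.6, 0.6, 0.1],
    [0.6, 1.0, 0.6, 0.1],
    [0.6, 0.6, 1.0, 0.1],
    [0.1, 0.1, 0.1, 1.0],
]

loadings = first_component_loadings(corr)
for name, loading in zip(tests, loadings):
    print(f"{name}: loading = {loading:.2f}")
```

The three memory tests load strongly on the shared component and the inhibition task does not, mirroring in miniature the pattern described in the interpretation above. A real analysis would estimate several factors, apply a rotation, and confirm the structure in a held-out half of the sample.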

Validity in Applied Contexts: The Case of the SDQ

The Strengths and Difficulties Questionnaire (SDQ), a brief behavioral screening measure, offers another clear case study. An examination of its factor structure and validity used the MTMM approach, incorporating peer evaluations alongside parent and teacher ratings. The study concluded that the SDQ has good convergent validity but relatively poor discriminant validity [17].

This means that while different raters (e.g., parents and teachers) tended to agree on a child's traits (supporting convergence), the five subscales of the SDQ (Emotional Symptoms, Conduct Problems, Hyperactivity, Peer Problems, and Prosocial Behavior) did not differentiate from each other as clearly as theory would predict. For instance, a parent might rate a child similarly on items from theoretically distinct subscales, suggesting the measure's constructs are not fully independent [17].

The Research Toolkit: Essential Reagents for Validity Analysis

For scientists designing validation studies for cognitive assessments, the following "research reagents" and methodologies are essential.

Table 3: Essential Reagents and Methodologies for Validity Studies

| Tool / Methodology | Function in Validity Analysis | Example Application |
|---|---|---|
| Correlational Analysis | To quantify the strength and direction of the relationship between two measures. The foundational statistic for establishing convergent and discriminant validity [15]. | Calculating the Pearson correlation between scores on a new French vocabulary test and an established vocabulary test to demonstrate convergent validity [10]. |
| Factor Analysis (EFA/CFA) | To identify the latent construct(s) underlying a set of measured variables. EFA explores the structure, while CFA tests a pre-specified structure [18]. | Used in the CNP study to determine if experimental tests of working memory loaded onto the same latent factor as traditional working memory tests [18]. |
| Multitrait-Multimethod Matrix (MTMM) | A comprehensive framework for organizing and interpreting correlations to assess convergent and discriminant validity simultaneously while accounting for method-specific variance [17] [9]. | Used in the SDQ study to show that while different raters converged (good convergent validity), the traits themselves were not well differentiated (poor discriminant validity) [17]. |
| Traditional Neuropsychological Battery | Serves as a "criterion standard" set of measures with established validity against which new or experimental tests can be validated [18]. | In the CNP study, subtests from the Wechsler scales and Delis-Kaplan Executive Function System were used as benchmarks for specific cognitive domains [18]. |

Visualizing the Validity Workflow and Outcomes

The following diagram illustrates the logical relationship between the core concepts of construct validity and the analytical process for establishing them.

Emerging Frontiers: Digital Cognitive Assessments

The principles of convergent and discriminant validity are now being applied to a new generation of tools: remote and unsupervised digital cognitive assessments. These tools offer advantages in scalability, measurement precision (e.g., reaction time), and ecological validity [20]. The validation protocol for these tools mirrors that of traditional tests but with added considerations.

Experimental Protocol for Digital Tools:

- Define Target Constructs: Clearly articulate the cognitive domain (e.g., episodic memory, processing speed) the digital task is intended to measure.

- Select Criterion Measures: Choose established in-person neuropsychological tests as benchmarks for establishing convergent validity [20].

- Administer in Parallel: Participants complete both the digital assessment and the traditional criterion measures.

- Analyze Correlations: Calculate correlations between the digital metric (e.g., a novel learning curve score from an episodic memory task) and the scores from the traditional tests. Strong correlations support the digital tool's convergent validity [20].

- Assess Divergence: Correlate the digital metric with tests of dissimilar constructs to provide evidence for discriminant validity. For instance, a digital working memory task should not correlate strongly with a measure of personality.

Convergent and discriminant validity are two sides of the same coin, forming an indivisible partnership in the scientific pursuit of valid measurement [10] [15]. A cognitive test with strong convergent validity but weak discriminant validity may be measuring a general, non-specific factor rather than the precise construct of interest. Conversely, a test with strong discriminant validity but no convergent validity has no anchor in established theory or measurement.

For researchers and drug developers validating cognitive tools for use in clinical trials or diagnostic applications, a rigorous demonstration of both is non-negotiable. It is the foundation upon which reliable data, meaningful results, and ultimately, sound scientific conclusions are built.

In cognitive science and clinical research, the gap between theoretical constructs and practical assessment tools presents a significant methodological challenge. Theoretical cognitive constructs—such as memory, executive function, and processing speed—are abstract concepts that researchers aim to measure through concrete tasks and instruments. Convergent validity, the degree to which two measures of constructs that theoretically should be related are in fact related, serves as a critical bridge between theory and practice. Establishing strong convergent validity demonstrates that an assessment tool truly captures the intended theoretical construct, thereby justifying inferences made from test scores to underlying cognitive abilities. This guide provides a structured comparison of methodological approaches for linking cognitive constructs to practical assessment, with a specific focus on establishing convergent validity in cognitive assessment tools relevant to pharmaceutical development and clinical research.

The process of validation is particularly crucial in drug development, where objective, sensitive, and reliable cognitive endpoints are needed to determine treatment efficacy. In this context, automated text analysis and natural language processing (NLP) methods have emerged as transformative tools. Researchers can now analyze vast scientific literatures to create joint representations of tasks and constructs, identifying how theoretical concepts are grounded in specific assessment methodologies across the ever-expanding body of research [21].

Theoretical Foundations: Cognitive Constructs and Their Measurement

Defining Cognitive Constructs

Cognitive constructs are hypothetical, non-observable variables that psychologists invoke to explain and predict behavior in a systematic way. These constructs form the theoretical backbone of cognitive assessment:

- Theoretical Nature: Constructs like "working memory" or "cognitive control" are not directly observable but are inferred from performance on standardized tasks [21]. They represent specialized mental processes that contribute to overall cognitive functioning.

- Construct Representation: The process by which abstract constructs are translated into concrete, measurable tasks. This involves developing assessment items that require the specific cognitive ability for successful performance.

- Construct-Irrelevant Variance: The extent to which test scores are influenced by factors unrelated to the target construct, threatening validity.

The Validation Framework: Convergent Validity

Convergent validity forms part of the broader construct validity framework, which examines whether a test measures the intended theoretical construct. Key aspects include:

- Multi-Trait Multi-Method Matrix (MTMM): A systematic approach for examining convergent and discriminant validity by administering multiple measures of different constructs to the same group of individuals.

- Correlational Analysis: Convergent validity is typically demonstrated by moderate to strong correlations between different measures purporting to assess the same construct; commonly cited minimum thresholds range from r = 0.4 to r = 0.6.

- Cross-Method Convergence: Evidence that a construct manifests similarly across different assessment modalities (e.g., computerized tests, paper-and-pencil tests, ecological momentary assessment).
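The MTMM logic can be illustrated with a small simulation. The sketch below is a minimal example under stated assumptions: the two traits ("memory", "speed"), the two methods ("paper", "digital"), the noise level, and the sample size are all hypothetical, and no shared method variance is simulated. It shows only the core expectation that same-trait, different-method correlations should exceed different-trait correlations.

```python
import random
from math import sqrt

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

rng = random.Random(42)  # fixed seed so the simulation is reproducible
n = 500

# Hypothetical latent traits, simulated as independent standard normals.
memory = [rng.gauss(0, 1) for _ in range(n)]
speed = [rng.gauss(0, 1) for _ in range(n)]

def measure(trait, noise_sd=0.6):
    """Observed score = latent trait + independent measurement noise."""
    return [t + rng.gauss(0, noise_sd) for t in trait]

scores = {
    ("memory", "paper"): measure(memory),
    ("memory", "digital"): measure(memory),
    ("speed", "paper"): measure(speed),
    ("speed", "digital"): measure(speed),
}

# Convergent: same trait, different method. Discriminant: different traits.
convergent = pearson(scores[("memory", "paper")], scores[("memory", "digital")])
discriminant = pearson(scores[("memory", "paper")], scores[("speed", "paper")])

print(f"same trait, different method: r = {convergent:.2f}")
print(f"different trait, same method: r = {discriminant:.2f}")
```

In real data, measures that share a method often correlate even when they target different traits, so a full MTMM analysis also inspects the same-method, different-trait cells.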

Comparative Analysis of Cognitive Assessment Methodologies

Established Cognitive Assessment Tools: A Quantitative Comparison

The following table summarizes key cognitive assessment tools and their methodological approaches to measuring theoretical constructs:

Table 1: Comparison of Cognitive Assessment Tools and Their Construct Measurement Approaches

| Assessment Tool | Primary Cognitive Constructs Measured | Administration Time | Validation Approach | Convergent Validity Evidence |

|---|---|---|---|---|

| NUCOG | Attention, Memory, Executive Function, Visuospatial, Language | 20-25 minutes | Correlation with gold-standard measures, diagnostic group comparisons | Strong correlations with MMSE (r=0.70-0.85) and similar dementia screening tools |

| NUCOG10 (Short-form) | Attention, Memory, Executive Function, Visuospatial, Language | ~10 minutes | ROC analysis, comparison to full NUCOG, diagnostic accuracy | High correlation with full NUCOG (r=0.95), similar sensitivity (0.98) and specificity (0.95) for dementia detection [5] |

| Experimental Cognitive Battery | Cognitive Control, Task Switching, Inhibitory Control | Variable (typically 30-60 minutes) | Joint task-construct graph embedding, computational modeling | Construct grounding via document embedding of 385,705 scientific abstracts [21] |

Methodological Approaches to Establishing Convergent Validity

Different methodological approaches offer distinct advantages for establishing convergent validity in cognitive assessment:

Table 2: Methodological Approaches for Establishing Convergent Validity in Cognitive Assessment

| Methodological Approach | Key Features | Data Analysis Techniques | Application Context |

|---|---|---|---|

| Traditional Psychometric | Correlational studies, factor analysis, diagnostic accuracy metrics | ROC curves, AUC values, sensitivity/specificity calculations [5] | Clinical tool development, validation of brief assessments against comprehensive batteries |

| Computational Literature Analysis | Natural language processing, document embedding, graph theory | Transformer-based language models, constrained random walks in task-construct graphs [21] | Cognitive theory development, identifying gaps in construct measurement, generating novel task batteries |

| Social Cognitive Theory Framework | Focus on self-efficacy, observational learning, behavioral capability | Randomized controlled trials, pre-post intervention designs [22] | Health behavior interventions, self-management programs, lifestyle modification studies |

Experimental Protocols for Validation Studies

Protocol 1: Short-Form Cognitive Assessment Validation

Objective: To develop and validate an abbreviated version of an existing cognitive assessment tool while maintaining strong psychometric properties and construct representation [5].

Methodology:

- Participant Recruitment: Recruit two distinct cohorts—healthy controls (n=132, 41%) and clinical populations with known cognitive deficits (e.g., dementia, n=191, 59%) [5].

- Data Collection: Administer the full assessment battery (e.g., 24-item NUCOG) to all participants.

- Item Selection:

- Compute Receiver Operating Characteristic (ROC) curves for each assessment item.

- Rank items according to Area Under the Curve (AUC) values.

- Select top-performing items to create short-form versions (5-item, 10-item, 15-item).

- Validation Procedure:

- Randomize participants into training (70%) and testing (30%) cohorts.

- Validate short-form versions against full assessment in testing cohort.

- Establish optimal cut-off scores using ROC analysis.

- Statistical Analysis:

- Calculate sensitivity, specificity, positive and negative predictive values.

- Assess convergent validity between short-form and full version scores.

- Evaluate internal consistency and test-retest reliability.

This protocol yielded the NUCOG10, which demonstrated comparable psychometric properties to the full assessment with a significantly reduced administration time (approximately 10 minutes), while maintaining high sensitivity (0.98) and specificity (0.95) for dementia detection at a cut-off score of 42/54 [5].
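The item-selection step of this protocol can be sketched in a few lines. The example below is illustrative only: the item names, group separations, and group sizes are hypothetical simulated values, not NUCOG data, and the AUC is computed directly as the proportion of control-case pairs in which the control scores higher.

```python
import random

def auc(case_scores, control_scores):
    """AUC as the probability that a randomly chosen control outscores a
    randomly chosen case on the item (ties count as half)."""
    wins = 0.0
    for c in control_scores:
        for d in case_scores:
            if c > d:
                wins += 1.0
            elif c == d:
                wins += 0.5
    return wins / (len(case_scores) * len(control_scores))

rng = random.Random(0)  # fixed seed for reproducibility

# Hypothetical items: a larger separation means better group discrimination.
separation = {"item_a": 2.0, "item_b": 0.2, "item_c": 1.0, "item_d": 0.0}
controls = {k: [rng.gauss(s, 1) for _ in range(200)] for k, s in separation.items()}
cases = {k: [rng.gauss(0, 1) for _ in range(200)] for k in separation}

# Rank items by AUC and keep the top performers for the short form.
item_auc = {k: auc(cases[k], controls[k]) for k in separation}
ranked = sorted(item_auc, key=item_auc.get, reverse=True)
short_form = ranked[:2]

for name in ranked:
    print(f"{name}: AUC = {item_auc[name]:.2f}")
print("short form items:", short_form)
```

To follow the protocol's training and testing split, the ranking would be computed on the training cohort only and the resulting short form evaluated on the held-out testing cohort.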

Protocol 2: Computational Literature Analysis for Construct Validation

Objective: To create a joint representation of cognitive tasks and theoretical constructs through automated analysis of scientific literature, enabling identification of relationships and knowledge gaps [21].

Methodology:

- Corpus Development:

- Collect 385,705 scientific abstracts focusing on cognitive control research.

- Include diverse methodologies, theoretical perspectives, and task paradigms.

- Text Processing:

- Map abstracts into an embedding space using transformer-based language models.

- Identify and extract key constructs and methodological elements.

- Graph Construction:

- Create a task-construct graph embedding that grounds constructs on specific tasks.

- Implement constrained random walks to explore nuanced construct meanings.

- Analysis Procedures:

- Query the graph to generate task batteries targeting specific constructs.

- Identify under-researched connections between constructs and measurement approaches.

- Visualize the semantic space of cognitive control research.
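As a rough illustration of the graph-query idea, the sketch below builds a toy task-construct graph and runs a random walk restricted to a chosen set of constructs. Everything here is an assumption made for illustration: the edges are hypothetical, the function name `constrained_walk` is invented, and the constraint used (limiting which constructs the walk may visit) is only one plausible reading of "constrained", not the procedure reported in [21].

```python
import random

# Hypothetical bipartite graph: constructs link to tasks said to measure them.
graph = {
    "inhibitory control": ["Stroop", "Go/No-Go", "Stop-Signal"],
    "task switching": ["Trail Making B", "Stroop"],
    "working memory": ["N-back", "Digit Span", "Trail Making B"],
    "Stroop": ["inhibitory control", "task switching"],
    "Go/No-Go": ["inhibitory control"],
    "Stop-Signal": ["inhibitory control"],
    "Trail Making B": ["task switching", "working memory"],
    "N-back": ["working memory"],
    "Digit Span": ["working memory"],
}
constructs = {"inhibitory control", "task switching", "working memory"}

def constrained_walk(start, steps, rng, allowed_constructs):
    """Random walk that may only pass through constructs in allowed_constructs."""
    path = [start]
    node = start
    for _ in range(steps):
        options = [nb for nb in graph[node]
                   if nb not in constructs or nb in allowed_constructs]
        if not options:
            break
        node = rng.choice(options)
        path.append(node)
    return path

rng = random.Random(1)  # fixed seed for reproducibility
walk = constrained_walk("inhibitory control", 6, rng,
                        allowed_constructs={"inhibitory control", "task switching"})

# Tasks reached by the walk form a candidate battery for the allowed constructs.
battery = sorted({node for node in walk if node not in constructs})
print(" -> ".join(walk))
print("candidate battery:", battery)
```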

This computational approach addresses limitations of traditional literature reviews by human experts, which struggle to track the ever-growing literature and may introduce biases, redundancies, and confusion [21].

Visualization Framework for Cognitive Construct Mapping

Figure: Cognitive Construct Validation Workflow. This diagram illustrates the iterative process of establishing construct validity, from theoretical definition through computational literature analysis to statistical validation.

Figure: Multi-Method Construct Validation Model. This diagram visualizes the multi-trait multi-method approach to establishing convergent validity through correlation between different measurement methods of the same theoretical construct.

Table 3: Essential Research Reagents and Resources for Cognitive Construct Validation

| Resource Category | Specific Tools/Platforms | Primary Function in Construct Validation |

|---|---|---|

| Statistical Analysis Software | R Programming, Python (Pandas, NumPy, SciPy), SPSS, Microsoft Excel | Advanced statistical computing, data visualization, psychometric analysis, and correlation calculations for validity studies [23] |

| Computational Literature Analysis | Transformer-based Language Models, Graph Embedding Algorithms | Creating joint representations of tasks and constructs from scientific literature, identifying research gaps, generating novel hypotheses [21] |

| Psychometric Assessment Tools | NUCOG, NUCOG10, Custom Task Batteries | Direct measurement of cognitive constructs, providing quantitative data for validation studies [5] |

| Data Visualization Platforms | ChartExpo, Ninja Tables, Custom Visualization Scripts | Creating comparison charts, quantitative data visualization, and clear presentation of validity evidence [24] [23] |

| Experimental Design Platforms | PsychoPy, E-Prime, jsPsych | Developing and administering computerized cognitive tasks with precise timing and data collection |

The pathway from theoretical constructs to validated assessment tools requires methodical application of convergent validity principles. As demonstrated by the development of abbreviated instruments like the NUCOG10 and computational approaches to literature analysis, the field continues to evolve toward more efficient, precise, and theoretically grounded assessment methodologies [21] [5]. For researchers in pharmaceutical development and clinical trials, these advances enable more sensitive detection of treatment effects and clearer connections between intervention mechanisms and cognitive outcomes. The continuing refinement of cognitive assessment tools through rigorous validation protocols ensures that our practical measurements remain firmly tethered to the theoretical constructs they purport to measure, ultimately advancing both basic science and clinical application.

The Researcher's Toolkit: Methods for Establishing Convergent Validity

In scientific research, particularly in the development and validation of cognitive assessment tools, establishing convergent validity is a critical step. This process provides evidence that a new measurement instrument measures the same underlying construct as an established, gold-standard tool. Correlation analysis serves as a foundational statistical method for this purpose, quantifying the strength and direction of the relationship between measurements obtained from different methods. Among the various correlation coefficients, Pearson's r and Spearman's ρ are the most widely used metrics for assessing convergent validity in methodological studies. These coefficients give researchers a quantitative first check on whether two methods order individuals similarly; because correlation measures association rather than agreement, deciding whether two methods can be used interchangeably without affecting research conclusions or clinical decisions also requires agreement analyses [25] [26].

Within the specific context of cognitive assessment research—where new digital tools, telephone-based assessments, and innovative methodologies are continually being developed—selecting the appropriate correlation coefficient is not merely a statistical formality but a fundamental methodological decision. The choice between Pearson and Spearman correlations directly impacts the validity of conclusions regarding a new tool's performance relative to established standards. This guide provides an objective comparison of these two foundational metrics, supported by experimental data and protocols from contemporary research in cognitive assessment.

Theoretical Foundations: Pearson's r vs. Spearman's ρ

Pearson's Product-Moment Correlation (r)

Pearson's r is a parametric statistic that measures the strength of a linear relationship between two continuous variables. It calculates the degree to which a change in one variable is associated with a proportional change in another variable, assuming the relationship can be approximated by a straight line. The coefficient ranges from -1 (perfect negative linear relationship) to +1 (perfect positive linear relationship), with 0 indicating no linear relationship [27] [28].

The formula for calculating Pearson's r is:

$$r_{xy} = \frac{\sum_{i=1}^{n}(x_i - \bar{x})(y_i - \bar{y})}{\sqrt{\sum_{i=1}^{n}(x_i - \bar{x})^2 \sum_{i=1}^{n}(y_i - \bar{y})^2}}$$

Where:

- $r_{xy}$ = Pearson correlation coefficient between x and y

- $n$ = number of observations

- $x_i$ = value of x for ith observation

- $y_i$ = value of y for ith observation

- $\bar{x}$ = mean of x variable

- $\bar{y}$ = mean of y variable [28]
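The formula translates directly into code. The following is a minimal sketch using only the Python standard library; the function name `pearson_r` and the example scores are hypothetical. In practice a library routine such as `scipy.stats.pearsonr` would normally be used, since it also returns a p-value.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation computed term by term from the definition."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    # Numerator: sum of cross-products of deviations from the means.
    numerator = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    # Denominator: square root of the product of the two sums of squares.
    ss_x = sum((xi - x_bar) ** 2 for xi in x)
    ss_y = sum((yi - y_bar) ** 2 for yi in y)
    return numerator / sqrt(ss_x * ss_y)

# Hypothetical scores from a new tool and a reference test.
new_tool = [12, 15, 11, 18, 20, 14]
reference = [22, 27, 21, 30, 35, 25]
print(f"r = {pearson_r(new_tool, reference):.3f}")
```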

Spearman's Rank Correlation (ρ)

Spearman's ρ is a non-parametric statistic that measures the strength of a monotonic relationship between two variables, whether linear or non-linear. A monotonic relationship exists when the variables tend to move in the same relative direction (both increasing or both decreasing), but not necessarily at a constant rate. Instead of using raw data values, Spearman's ρ operates on rank-ordered data, making it less sensitive to outliers and non-normal distributions [27] [28].

The formula for calculating Spearman's ρ is:

$$\rho = 1 - \frac{6\sum d_i^2}{n(n^2-1)}$$

Where:

- $\rho$ = Spearman rank correlation

- $d_i$ = the difference between the two ranks of observation i (its rank on x minus its rank on y)

- $n$ = number of observations [27]
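A minimal sketch of this formula follows, using only the standard library; the function names are hypothetical. Note one assumption made explicit in the code: the shortcut formula is exact only when there are no tied values, so the function rejects ties. With ties, the usual approach is to compute Pearson's r on average ranks, which is what routines such as `scipy.stats.spearmanr` do.

```python
def ranks(values):
    """Rank from 1 (smallest) to n; assumes no tied values."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        result[idx] = rank
    return result

def spearman_rho(x, y):
    """Spearman's rho via the rank-difference shortcut formula (no ties)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    if len(set(x)) != len(x) or len(set(y)) != len(y):
        raise ValueError("shortcut formula requires data without ties")
    n = len(x)
    d_squared = sum((rx - ry) ** 2 for rx, ry in zip(ranks(x), ranks(y)))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))

# A monotonic but non-linear relationship still yields rho = 1.
x = [1, 2, 3, 4, 5]
y = [v ** 2 for v in x]
print(spearman_rho(x, y))
```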

Direct Comparison: Key Characteristics and Applications

Table 1: Comprehensive Comparison of Pearson's r and Spearman's ρ

| Aspect | Pearson Correlation Coefficient | Spearman Correlation Coefficient |

|---|---|---|

| Purpose | Measures linear relationships | Measures monotonic relationships |

| Assumptions | Variables normally distributed, linear relationship, homoscedasticity | Variables have monotonic relationship, no strict distributional assumptions |

| Calculation Basis | Based on covariance and standard deviations of raw data | Based on ranked data and rank order |

| Data Types | Appropriate for interval and ratio data | Appropriate for ordinal, interval, and ratio data |

| Sensitivity to Outliers | Sensitive to outliers | Less sensitive to outliers |

| Interpretation | Strength and direction of linear relationship | Strength and direction of monotonic relationship |

| Effect Size Guidelines | Small: 0.10-0.29, Medium: 0.30-0.49, Large: ≥0.50 | Small: 0.10-0.29, Medium: 0.30-0.49, Large: ≥0.50 |

| Sample Size Efficiency | More efficient with larger sample sizes and normal data | Works well with smaller samples and doesn't require normality |

The fundamental distinction lies in the type of relationship each coefficient detects: Pearson's r specifically assesses linear relationships, while Spearman's ρ detects the broader category of monotonic relationships (where variables move in the same direction, but not necessarily at a constant rate). This difference has profound implications for method comparison studies in cognitive assessment, where the relationship between a new instrument and an established gold standard may not be strictly linear, particularly across the full range of cognitive abilities [27] [29].

Experimental Protocols for Cognitive Assessment Validation

Protocol 1: Validation of Telephone Cognitive Testing for Community-Dwelling Older Adults

A 2025 study developed and validated the Telephone Cognitive Testing for Community-dwelling Older Adults (TCTCOA), a culturally tailored assessment tool for Chinese elderly populations. The experimental protocol exemplifies the application of correlation analysis in establishing convergent validity for cognitive assessment tools [30].

Research Objective: To develop and validate a telephone-based multi-domain cognitive assessment tool tailored for healthy, community-dwelling older adults in China, with particular attention to cultural and educational considerations [30].

Participant Recruitment:

- Sample: 112 community-dwelling older adults aged 60 and above

- Recruitment source: Beijing, China (August-September 2023)

- Exclusion criteria: History of neurological or psychiatric disorders, hearing impairments

- Ethical approval: Obtained from the Ethics Committee of the Institute of Psychology, Chinese Academy of Sciences (IPCAS)

- Informed consent: Obtained from all participants [30]

Cognitive Domains Assessed:

- Episodic memory: Assessed using an adapted verbal paired associates subtest from Wechsler Memory Scale-Revised (WMS-R)

- Working memory: Assessed using backward digit span test from Wechsler Adult Intelligence Scale-Revised (WAIS-R)

- Processing speed: Assessed using backward counting task from the Brief Test of Adult Cognition by Telephone (BTACT)

- Executive function: Assessed using category fluency task (animal naming)

- Abstract reasoning and concept formation: Assessed using verbal clock test [30]

Experimental Procedure:

- 68 participants completed TCTCOA via both telephone and face-to-face modalities

- Montreal Cognitive Assessment (MoCA) administered for validation

- Testing conducted with counterbalanced design to control for order effects

- All assessments administered by trained researchers following standardized protocols [30]

Statistical Analysis for Convergent Validity:

- Pearson's correlations between telephone and face-to-face modalities

- Pearson's correlations between TCTCOA and MoCA scores

- Structural validity assessed through factor analysis

- Assessment of ceiling and floor effects [30]

Key Findings:

- Strong correlation between telephone and face-to-face modalities (r = 0.72)

- Moderate correlations with MoCA, supporting convergent validity

- No ceiling or floor effects observed

- Composite scores followed normal distribution

- Factor analysis supported structural validity, identifying general cognitive ability and efficiency as core components [30]
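A point estimate such as r = 0.72 is easier to interpret alongside a confidence interval. The sketch below applies the standard Fisher z-transformation, assuming (the summary above does not state this) that the correlation was computed on all 68 participants who completed both modalities; the function name `fisher_ci` is hypothetical.

```python
from math import atanh, tanh, sqrt
from statistics import NormalDist

def fisher_ci(r, n, level=0.95):
    """Approximate confidence interval for a Pearson correlation
    using the Fisher z-transformation."""
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for 95%
    z = atanh(r)                # transform r to the z scale
    se = 1 / sqrt(n - 3)        # standard error on the z scale
    return tanh(z - z_crit * se), tanh(z + z_crit * se)

# Assumed inputs: r = 0.72 from the key findings, n = 68 (an assumption).
low, high = fisher_ci(0.72, 68)
print(f"95% CI: {low:.2f} to {high:.2f}")
```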

Protocol 2: Development of a Digital Memory and Learning Test for Elderly Individuals

A 2025 study developed a Digital Memory and Learning Test (DMLT) based on Rey's Auditory Verbal Learning Test (RAVLT) principles, incorporating electroencephalographic (EEG) recording during assessment [31].

Research Objective: To develop a digital memory and learning test system based on RAVLT principles that allows concurrent evaluation of cerebral electroencephalographic activity while maintaining accessibility [31].

Participant Characteristics:

- Sample: 18 elderly individuals (age 60-92 years)

- Recruitment: Geriatrics outpatient clinic at a University Center in Brazil

- Eligibility: Literate, no diagnosis of moderate or advanced dementia

- Randomization: Participants randomly divided into two subgroups (7 and 11 participants) [31]

Experimental Design:

- Phase I: Subgroup I (n=7) completed DMLT with EEG recording; Subgroup II (n=11) completed traditional RAVLT

- Phase II (14-day interval): Subgroup I completed traditional RAVLT; Subgroup II completed DMLT with EEG recording

- Counterbalanced design to control for order effects and practice effects [31]

DMLT Procedure:

- Word repetition phases: A1, A2, A3, A4, A5, B, A6, and Recognition

- Phases A1-A5: Participants listened to 15 words and recalled using computer system

- Phase B: Interference trial with different word group

- Phase A6: Recall of original word group after interference

- Recognition phase: Identification of original words from larger group

- EEG recording throughout DMLT administration [31]

Validation Methodology:

- Comparison of performance scores between DMLT and traditional RAVLT

- Correlation analysis between test modalities

- EEG power band analysis (Delta, Theta, Alpha, Beta, Gamma)

- Participant satisfaction assessment using Net Promoter Score [31]

Key Findings:

- Performance on digital test and RAVLT comparable with no significant differences

- EEG activity patterns correlated with test performance

- High participant acceptance of digital format

- Successful demonstration of convergent validity between digital and traditional formats [31]

Sample Size Considerations for Correlation Studies

Table 2: Sample Size Requirements for Correlation Analyses Based on 95% Confidence Interval Width

| Target Correlation | CI Width | Pearson | Spearman | Kendall |

|---|---|---|---|---|

| 0.1 | 0.2 | 378 | 379 | 168 |

| 0.2 | 0.2 | 355 | 362 | 158 |

| 0.3 | 0.2 | 320 | 334 | 143 |

| 0.4 | 0.2 | 273 | 295 | 122 |

| 0.5 | 0.2 | 219 | 246 | 99 |

| 0.6 | 0.2 | 161 | 189 | 73 |

| 0.7 | 0.2 | 109 | 134 | 51 |

| 0.8 | 0.2 | 65 | 84 | 32 |

| 0.9 | 0.2 | 30 | 42 | 17 |

Sample size planning is a critical consideration in method comparison studies employing correlation analysis. Required sample sizes increase when investigating smaller effect sizes (target correlations) and when seeking greater precision (narrower confidence interval widths). Based on empirical calculations, a minimum sample size of 149 is typically adequate for performing both parametric and non-parametric correlation analyses to detect at least moderate correlation strength (r ≥ 0.3) with acceptable confidence interval width [32]. For Pearson and Spearman coefficients, this figure corresponds to an interval wider than the 0.2 width tabulated above, where a target correlation of 0.3 requires 320 and 334 participants respectively.

Spearman's rank correlation generally requires slightly larger sample sizes than Pearson's correlation across most effect sizes when controlling for confidence interval precision. This has important implications for research planning in cognitive assessment validation, where researchers must balance practical constraints with methodological rigor [32].
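The relationship between target correlation, interval width, and sample size can be explored with a short search. The sketch below covers Pearson's r only and uses the Fisher z approximation; it is not the procedure used to build Table 2, whose values come from [32], so its results need not reproduce the tabulated numbers, particularly at high correlations. The function names are hypothetical.

```python
from math import atanh, tanh, sqrt
from statistics import NormalDist

def fisher_ci_width(r, n, level=0.95):
    """Width of the Fisher z confidence interval for a Pearson correlation."""
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)
    half = z_crit / sqrt(n - 3)
    centre = atanh(r)
    return tanh(centre + half) - tanh(centre - half)

def n_for_ci_width(r, target_width, level=0.95, n_max=100_000):
    """Smallest n whose Fisher interval is no wider than target_width."""
    for n in range(4, n_max + 1):
        if fisher_ci_width(r, n, level) <= target_width:
            return n
    raise ValueError("target width not reached within n_max")

# Smaller target correlations need larger samples for the same precision.
for r in (0.3, 0.5, 0.7):
    print(f"r = {r}: n = {n_for_ci_width(r, 0.2)}")
```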

Decision Framework for Coefficient Selection

The selection between Pearson's r and Spearman's ρ should be guided by both theoretical considerations and data characteristics. Pearson's r is most appropriate when: (1) both variables are continuous and normally distributed, (2) the relationship between variables is linear, and (3) there are no significant outliers influencing the relationship [27] [28].

Spearman's ρ is more appropriate when: (1) variables are measured on an ordinal scale, (2) data violate normality assumptions, (3) the relationship is monotonic but not necessarily linear, or (4) significant outliers are present that may unduly influence the correlation coefficient [27] [29].

In practice, many researchers in cognitive assessment validation calculate both coefficients. When both coefficients yield similar results, it strengthens confidence in the findings. When they differ substantially, this discrepancy provides valuable information about the nature of the relationship between measurements [29].
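Computing both coefficients side by side is straightforward. The sketch below is one possible way to do it with the standard library; the data are hypothetical, and the 0.1 tolerance for flagging a discrepancy is an arbitrary illustrative choice, not a published threshold. Spearman's ρ is computed here as Pearson's r on average ranks, which handles tied scores.

```python
from math import sqrt

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def average_ranks(values):
    """Ranks starting at 1; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman's rho as Pearson's r on average ranks."""
    return pearson(average_ranks(x), average_ranks(y))

def compare_coefficients(x, y, tolerance=0.1):
    """Report both coefficients and flag a gap larger than tolerance."""
    r, rho = pearson(x, y), spearman(x, y)
    return {"pearson": r, "spearman": rho, "discrepant": abs(r - rho) > tolerance}

# Hypothetical scores: perfectly monotonic, but one extreme reference value.
new_tool = [1, 2, 3, 4, 5, 6, 7, 8]
reference = [1, 2, 3, 4, 5, 6, 7, 40]
result = compare_coefficients(new_tool, reference)
print(result)
```

Here the single extreme value lowers Pearson's r while leaving Spearman's ρ untouched, which is the kind of discrepancy that signals an outlier or a non-linear relationship.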

Essential Research Reagents and Materials

Table 3: Essential Research Materials for Cognitive Assessment Validation Studies

| Material/Instrument | Function/Purpose | Example from Literature |

|---|---|---|

| Reference Standard Test | Provides criterion measure for convergent validity; serves as gold standard comparison | Rey's Auditory Verbal Learning Test (RAVLT), Montreal Cognitive Assessment (MoCA) [30] [31] |

| Experimental Test Instrument | New assessment tool requiring validation against reference standard | Telephone Cognitive Testing (TCTCOA), Digital Memory and Learning Test (DMLT) [30] [31] |

| Electroencephalography (EEG) | Records neurophysiological activity during cognitive testing; provides objective brain function measures | 8-channel OpenBCI Cyton Biosensing Board [31] |

| Speech Recognition System | Converts verbal responses to digital text for automated scoring | p5.js library with p5.Speech extension [31] |

| Statistical Software | Performs correlation analysis, calculates confidence intervals, determines sample requirements | PASS 2022, R Statistical Software [27] [32] |

Methodological Considerations and Limitations

Common Misapplications in Correlation Analysis

Method comparison studies frequently misapply statistical techniques, potentially compromising validity conclusions. Two common errors include:

Misuse of Correlation Coefficients: Correlation coefficients measure association, not agreement. A high correlation does not necessarily indicate that two methods agree or can be used interchangeably. As demonstrated in method comparison literature, two methods can show perfect correlation (r = 1.00) while having substantial systematic differences that make them non-interchangeable [25].

Inappropriate Use of t-tests: Neither independent nor paired t-tests adequately assess method comparability. Independent t-tests only detect differences in average values between methods, while paired t-tests may detect statistically significant but clinically meaningless differences with large samples, or fail to detect meaningful differences with small samples [25].

Addressing Limitations in Correlation Analysis

Directionality Problem: Correlation alone cannot determine which variable influences the other. In cognitive assessment validation, this means correlation cannot establish whether the new instrument or the gold standard is the "true" measure of the construct [26].

Third Variable Problem: Unmeasured confounding variables may influence both measurement methods, creating spurious correlations. In cognitive testing, factors such as participant fatigue, educational background, or cultural factors may influence performance on both tests independently [26].

Complementary Analytical Approaches: To address these limitations, researchers should supplement correlation analysis with additional statistical approaches:

- Bland-Altman plots to visualize agreement between methods and identify systematic biases

- Regression analysis to predict relationships between variables and identify proportional bias

- Factor analysis to establish structural validity across assessment methods [25]
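The difference between association and agreement can be shown with a few lines of code. The sketch below uses hypothetical scores in which the new method reads exactly 3 points higher than the reference: the correlation is perfect, yet the Bland-Altman bias reveals the systematic offset. The 1.96 multiplier gives the conventional 95% limits of agreement.

```python
from math import sqrt
from statistics import mean, stdev

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)

def bland_altman(a, b):
    """Return the mean difference (bias) and 95% limits of agreement."""
    diffs = [x - y for x, y in zip(a, b)]
    bias = mean(diffs)
    sd = stdev(diffs)
    return bias, bias - 1.96 * sd, bias + 1.96 * sd

# Hypothetical data: the new method is offset by a constant 3 points.
reference = [20, 22, 25, 27, 30]
new_method = [score + 3 for score in reference]

r = pearson(new_method, reference)
bias, lower, upper = bland_altman(new_method, reference)
print(f"r = {r:.2f}, bias = {bias:.1f}, limits = ({lower:.1f}, {upper:.1f})")
```

Because the offset is constant in this toy example, the limits of agreement collapse onto the bias; real data would show scatter around it.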

Pearson's r and Spearman's ρ serve as foundational metrics for establishing convergent validity in cognitive assessment research, each with distinct applications and assumptions. Pearson's r is optimal for detecting linear relationships with normally distributed continuous data, while Spearman's ρ is more appropriate for monotonic relationships with ordinal data or when distributional assumptions are violated.

The validation of contemporary cognitive assessment tools—from telephone-based assessments to digital memory tests—demonstrates the rigorous application of these correlation metrics in establishing methodological validity. By following structured experimental protocols, selecting appropriate sample sizes, and implementing comprehensive analytical plans, researchers can robustly evaluate new assessment methodologies against established standards.

Future developments in cognitive assessment will continue to rely on these foundational correlation metrics while potentially incorporating more sophisticated statistical approaches that address the limitations of correlation analysis alone. The ongoing integration of neurophysiological measures with behavioral assessment underscores the continuing relevance of appropriate correlation methodology in advancing cognitive science and clinical practice.

Exploratory and Confirmatory Factor Analysis (EFA/CFA)

Exploratory Factor Analysis (EFA) and Confirmatory Factor Analysis (CFA) are two prominent multivariate techniques rooted in the common factor model, both designed to model relationships among observed variables through a smaller number of unobserved latent constructs [33]. In cognitive assessment research, these methods are indispensable for evaluating the convergent validity of assessment tools—the degree to which tests that theoretically measure the same cognitive construct actually correlate with one another [18]. The fundamental distinction lies in their application: EFA serves as a data-driven, theory-generating approach that explores underlying structures without pre-specified constraints, whereas CFA provides a theory-driven, hypothesis-testing framework that evaluates pre-defined structural models [33]. This comparative analysis examines their methodological applications, performance characteristics, and implementation protocols within cognitive research contexts, with particular emphasis on their utility for establishing robust measurement instruments in clinical and pharmaceutical development settings.

Conceptual Foundations and Key Distinctions

The Common Factor Model Framework

Both EFA and CFA originate from the common factor model, which expresses observed variables as linear combinations of common factors plus unique variance [33]. The model is represented as:

$$y = \Lambda\eta + \varepsilon$$

Where:

- y = matrix of observed indicator variables

- η = matrix of common latent factors

- Λ = matrix of factor loadings relating indicators to factors

- ε = matrix of unique random errors associated with observed indicators

The critical distinction between EFA and CFA emerges in the treatment of the factor loading matrix (Λ). EFA freely estimates all elements of this matrix, allowing all variables to load on all factors, while CFA constrains specific loadings to zero according to an a priori hypothesized model [33]. This fundamental difference in parameter estimation reflects their divergent purposes: exploration versus confirmation.
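A short derivation may make the link to convergent validity concrete. Assuming, as is standard for the common factor model, that the unique errors are uncorrelated with the factors, and writing Ψ for the factor covariance matrix and Θ for the error covariance matrix (both symbols are introduced here for illustration and do not appear above), the model-implied covariance of the indicators is:

$$\Sigma = \Lambda \Psi \Lambda^{\top} + \Theta$$

For two standardized indicators $y_i$ and $y_j$ that load only on the same unit-variance factor, with uncorrelated errors, this reduces to:

$$\operatorname{corr}(y_i, y_j) = \lambda_i \lambda_j$$

For example, loadings of 0.8 and 0.7 imply a correlation of 0.56 between the two tests, which is why high loadings on a shared factor are read as evidence of convergent validity.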

Comparative Methodological Characteristics

Table 1: Fundamental Differences Between EFA and CFA

| Characteristic | Exploratory Factor Analysis (EFA) | Confirmatory Factor Analysis (CFA) |

|---|---|---|

| Primary Objective | Identify underlying factor structure; hypothesis generation | Test pre-specified factor structure; hypothesis confirmation |

| Theoretical Basis | Data-driven with minimal prior assumptions | Strong theoretical foundation required |

| Parameter Constraints | No constraints on factor loadings; all freely estimated | Specific cross-loadings constrained to zero |

| Factor Rotations | Requires rotation for interpretability (e.g., varimax, oblimin) | Typically no rotation needed |

| Model Specification | No prior specification of factor relationships | Precise specification of factor relationships required |

| Statistical Testing | Limited inferential capability | Comprehensive goodness-of-fit testing available |

| Implementation Software | Conventional statistics software (SPSS, SAS) | Specialized SEM software (AMOS, Mplus, lavaan) |

Methodological Protocols and Experimental Applications

EFA Implementation Protocol for Cognitive Assessment

Step 1: Data Preparation and Suitability

- Assess data suitability using Kaiser-Meyer-Olkin (KMO) measure of sampling adequacy (values >0.6 acceptable, >0.8 preferable)

- Conduct Bartlett's test of sphericity (significant p-value indicates sufficient correlations)

- Address missing data using appropriate methods (e.g., Full Information Maximum Likelihood) [34]
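As an illustration of the suitability checks, the sketch below computes the Bartlett test statistic from a correlation matrix using the standard formula χ² = −[(n − 1) − (2p + 5)/6] · ln|R| with p(p − 1)/2 degrees of freedom. The correlation matrix and the sample size are hypothetical, and the determinant is computed by hand to keep the example free of third-party dependencies. The p-value is not computed here; with SciPy available it could be obtained from `scipy.stats.chi2.sf(chi2, df)`.

```python
from math import log

def determinant(matrix):
    """Determinant via Gaussian elimination with partial pivoting."""
    m = [row[:] for row in matrix]
    size = len(m)
    det = 1.0
    for col in range(size):
        pivot = max(range(col, size), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            return 0.0
        if pivot != col:
            m[col], m[pivot] = m[pivot], m[col]
            det = -det  # a row swap flips the sign of the determinant
        det *= m[col][col]
        for row in range(col + 1, size):
            factor = m[row][col] / m[col][col]
            for k in range(col, size):
                m[row][k] -= factor * m[col][k]
    return det

def bartlett_sphericity(corr, n):
    """Bartlett's test statistic and degrees of freedom for a p x p
    correlation matrix estimated from n observations."""
    p = len(corr)
    chi2 = -((n - 1) - (2 * p + 5) / 6) * log(determinant(corr))
    df = p * (p - 1) // 2
    return chi2, df

# Hypothetical correlations among three cognitive tests, n = 112.
corr = [
    [1.0, 0.6, 0.5],
    [0.6, 1.0, 0.4],
    [0.5, 0.4, 1.0],
]
chi2, df = bartlett_sphericity(corr, n=112)
print(f"chi-square = {chi2:.2f}, df = {df}")
```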

Step 2: Factor Extraction

- Select extraction method (Maximum Likelihood preferred for normality; Robust ML or Weighted Least Squares for non-normal data) [34]

- Determine the number of factors using multiple criteria rather than a single rule (e.g., parallel analysis and the minimum average partial test)

Step 3: Factor Rotation and Interpretation

- Choose rotation method based on expected factor correlations:

- Orthogonal (varimax) for uncorrelated factors

- Oblique (oblimin, promax) for correlated factors [33]

- Interpret factors based on pattern of loadings (typically >|0.3| or >|0.4|)

- Label factors according to theoretical meaning of high-loading items

CFA Implementation Protocol for Cognitive Validation

Step 1: Model Specification

- Define measurement model based on strong theory or prior EFA results

- Specify which observed variables load on which latent constructs

- Identify model by setting scale for latent variables (e.g., fixing first loading to 1 or constraining factor variance to 1)

Step 2: Parameter Estimation

- Select estimation method based on data characteristics:

- Maximum Likelihood (ML) for continuous, normal data

- Robust Maximum Likelihood (MLR) for minor non-normality

- Weighted Least Squares Mean and Variance adjusted (WLSMV) for categorical data [34]

Step 3: Model Evaluation

- Assess global model fit using multiple indices:

- χ² test (p > 0.05 indicates good fit, but sensitive to sample size)

- CFI (Comparative Fit Index): > 0.90 for acceptable fit, > 0.95 for excellent fit

- TLI (Tucker-Lewis Index): > 0.90 for acceptable fit, > 0.95 for excellent fit

- RMSEA (Root Mean Square Error of Approximation): < 0.08 for acceptable fit, < 0.06 for excellent fit

- SRMR (Standardized Root Mean Square Residual) < 0.08 [34]

- Evaluate local fit through examination of factor loadings (standardized values > 0.5 preferable) and modification indices
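Three of these indices can be computed directly from chi-square values. The sketch below uses the commonly cited formulas for RMSEA, CFI, and TLI; the function name and all input values are hypothetical, and some software uses N rather than N − 1 in the RMSEA denominator, so small differences from package output are possible. The baseline model is the independence model, in which all indicators are assumed uncorrelated.

```python
from math import sqrt

def fit_indices(chi2_model, df_model, chi2_base, df_base, n):
    """RMSEA, CFI and TLI from model and baseline chi-square statistics."""
    # RMSEA: excess misfit per degree of freedom, scaled by sample size.
    rmsea = sqrt(max(chi2_model - df_model, 0.0) / (df_model * (n - 1)))
    # CFI: improvement in noncentrality relative to the baseline model.
    d_model = max(chi2_model - df_model, 0.0)
    d_base = max(chi2_base - df_base, d_model)
    cfi = 1.0 - d_model / d_base if d_base > 0 else 1.0
    # TLI: compares chi-square/df ratios, penalising model complexity.
    ratio_base = chi2_base / df_base
    ratio_model = chi2_model / df_model
    tli = (ratio_base - ratio_model) / (ratio_base - 1.0)
    return {"rmsea": rmsea, "cfi": cfi, "tli": tli}

# Hypothetical values for a three-factor model fitted to 530 participants.
indices = fit_indices(chi2_model=85.0, df_model=51,
                      chi2_base=900.0, df_base=66, n=530)
for name, value in indices.items():
    print(f"{name.upper()}: {value:.3f}")
```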

Step 4: Model Modification

- Consider theoretically justified model respectifications based on modification indices

- Avoid capitalizing on chance through sequential modifications without theoretical justification

Experimental Evidence from Cognitive Assessment Research

A comprehensive investigation of convergent validity in the Consortium for Neuropsychiatric Phenomics (CNP) study exemplifies the sequential application of EFA and CFA [18]. Researchers administered 23 traditional and experimental cognitive tests to 1,059 community volunteers and 137 patients with psychiatric diagnoses to examine whether tests mapped onto expected latent variables.

Experimental Protocol:

- Sample Splitting: Randomly divided community sample into two halves (n₁=529, n₂=530)

- EFA Phase: Conducted exploratory factor analysis on first subsample without pre-specified constraints

- CFA Phase: Tested identified factor structure via multigroup confirmatory factor analysis in second subsample and patient groups

- Measurement Invariance Testing: Evaluated whether factor structure remained equivalent across community and clinical populations
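The sample-splitting step of this protocol can be illustrated with a short sketch. It assumes participants are identified by integer IDs and uses a fixed random seed for reproducibility. The function name, the seed, and the ID scheme are hypothetical and are not taken from the CNP study.

```python
import random


def split_half(participant_ids, seed=0):
    """Randomly divide participants into an EFA half and a CFA half."""
    shuffled = list(participant_ids)
    random.Random(seed).shuffle(shuffled)  # seeded so the split is reproducible
    midpoint = len(shuffled) // 2
    return shuffled[:midpoint], shuffled[midpoint:]


# 1,059 community volunteers, labeled 1..1059 for illustration
efa_half, cfa_half = split_half(range(1, 1060), seed=2024)
print(len(efa_half), len(cfa_half))
```

Keeping the two halves disjoint is what allows the CFA to serve as an independent test of the structure found in the EFA.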

Key Findings:

- EFA revealed a three-factor structure broadly corresponding to verbal/working memory, inhibitory control, and memory domains

- Several experimental measures of inhibitory control demonstrated weak relationships with all other tests, questioning their convergent validity

- Multigroup CFA (MGCFA) supported the factor structure's stability across populations, establishing measurement invariance

- The sequential EFA-CFA approach provided robust evidence for the convergent validity of most working memory and memory tests, while raising concerns about specific inhibitory control measures [18]

Performance Comparison and Methodological Evidence

Accuracy in Factor Recovery

Simulation studies directly comparing EFA and CFA performance in cognitive test-like data reveal critical nuances for methodological selection [35]. Research examining factor extraction methods for data conforming to intelligence test parameters (varying factor loadings, factor correlations, tests per factor, and sample sizes) produced the pattern summarized in Table 2.

Table 2: Performance Comparison in Factor Recovery Accuracy

| Method | Conditions of Accurate Performance | Conditions of Poor Performance | Overall Accuracy |
|---|---|---|---|
| EFA with Parallel Analysis based on principal components analysis (PA-PCA) | High factor loadings (>0.7), low factor correlations | Few tests per factor, high factor correlations | Frequent underfactoring [35] |
| EFA with Minimum Average Partial (MAP) | Large number of indicators per factor | Few tests per factor, high factor correlations | Frequent underfactoring [35] |
| EFA with Parallel Analysis based on principal axis factoring (PA-PAF) | Various conditions, particularly with categorical data | Small sample sizes | Most accurate EFA method [35] |
| Confirmatory Factor Analysis | Most conditions, particularly with theory-guided specification | Severely misspecified models | Highest overall accuracy [35] |
| Fit Index Difference Values | Categorical indicators, low factor loadings | Very simple structures | Outperforms parallel analysis in specific conditions [36] |

Notably, commonly recommended "gold standard" EFA methods like Parallel Analysis based on principal components analysis (PA-PCA) and Minimum Average Partial (MAP) frequently underfactor with cognitive test data—recovering fewer factors than actually exist in the simulated data—particularly when there are few tests per factor and high correlations between factors [35]. This finding has substantial implications for cognitive test interpretation, as underfactoring may lead researchers to conclude tests measure fewer cognitive abilities than they actually do.
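To make the PA-PCA retention rule concrete, the sketch below compares the eigenvalues of an observed correlation matrix with the 95th percentile of eigenvalues from random normal data of the same size, and retains factors until an observed eigenvalue fails to exceed its reference value. It is a minimal standard-library illustration, not the procedure used in [35]: the function names, the Jacobi eigenvalue routine, and the simulated two-factor data are all assumptions made for this example, and a numerical library would normally replace the hand-written linear algebra.

```python
import math
import random


def correlation_matrix(rows):
    """Pearson correlation matrix for a list of observation rows."""
    n, p = len(rows), len(rows[0])
    z = []
    for j in range(p):
        col = [row[j] for row in rows]
        mean = sum(col) / n
        sd = math.sqrt(sum((v - mean) ** 2 for v in col) / n)
        z.append([(v - mean) / sd for v in col])
    return [[sum(a * b for a, b in zip(z[i], z[j])) / n for j in range(p)]
            for i in range(p)]


def eigenvalues(sym, sweeps=50):
    """Eigenvalues of a symmetric matrix via cyclic Jacobi rotations, largest first."""
    a = [row[:] for row in sym]
    n = len(a)
    for _ in range(sweeps):
        off = sum(a[i][j] ** 2 for i in range(n) for j in range(n) if i != j)
        if off < 1e-18:
            break
        for p in range(n - 1):
            for q in range(p + 1, n):
                if a[p][q] == 0.0:
                    continue
                diff = a[q][q] - a[p][p]
                theta = math.pi / 4 if diff == 0 else 0.5 * math.atan(2 * a[p][q] / diff)
                c, s = math.cos(theta), math.sin(theta)
                for k in range(n):  # rotate columns p and q
                    akp, akq = a[k][p], a[k][q]
                    a[k][p], a[k][q] = c * akp - s * akq, s * akp + c * akq
                for k in range(n):  # rotate rows p and q
                    apk, aqk = a[p][k], a[q][k]
                    a[p][k], a[q][k] = c * apk - s * aqk, s * apk + c * aqk
    return sorted((a[i][i] for i in range(n)), reverse=True)


def parallel_analysis(rows, n_sims=100, percentile=0.95, seed=0):
    """Number of factors whose observed eigenvalue exceeds the random-data reference."""
    rng = random.Random(seed)
    n, p = len(rows), len(rows[0])
    observed = eigenvalues(correlation_matrix(rows))
    simulated = []
    for _ in range(n_sims):
        noise = [[rng.gauss(0, 1) for _ in range(p)] for _ in range(n)]
        simulated.append(eigenvalues(correlation_matrix(noise)))
    retained = 0
    for k in range(p):
        reference = sorted(sim[k] for sim in simulated)[int(percentile * (n_sims - 1))]
        if observed[k] <= reference:
            break
        retained += 1
    return retained


# Simulated data: two uncorrelated factors, three tests each, loadings of 0.8
rng = random.Random(42)
data = []
for _ in range(300):
    f1, f2 = rng.gauss(0, 1), rng.gauss(0, 1)
    data.append([0.8 * f + 0.6 * rng.gauss(0, 1) for f in (f1, f1, f1, f2, f2, f2)])

n_factors = parallel_analysis(data)
print("Factors retained:", n_factors)
```

The simulated data here are the favorable case (high loadings, uncorrelated factors). The underfactoring described above arises when factors are highly correlated or have few indicators, because the later observed eigenvalues then fall below the random-data reference values.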

Comparative Analysis of Strengths and Limitations

Table 3: Comprehensive Strengths and Limitations of EFA and CFA

| Aspect | Exploratory Factor Analysis | Confirmatory Factor Analysis |
|---|---|---|
| Primary Strengths | Flexibility for novel instruments [33]; no strong theoretical requirements [33]; identifies unexpected relationships; simpler model modification | Theory testing capability [33]; comprehensive fit statistics [33]; measurement invariance testing [33]; direct model comparisons [33] |
| Key Limitations | Subjectivity in factor retention [33]; rotation method arbitrariness [33]; limited inferential capability [33]; cannot test specific hypotheses [33] | Requires strong theoretical foundation [33]; model misspecification sensitivity [34]; challenging fit assessment [33]; need for specialized software [33] |
| Optimal Application Context | Early scale development [33]; instruments with limited validation [33]; unexplored cognitive domains | Established theoretical frameworks [33]; cross-validation studies [18]; measurement invariance testing [33] |
| Sample Size Requirements | Minimum 5-10 observations per variable [34]; larger samples for stability | Typically >200 cases [34]; larger samples for complex models |

Integrated Approaches and Advanced Methodological Considerations

The Confirmatory-Exploratory Continuum

Recent methodological advancements recognize that EFA and CFA exist along a continuum rather than as dichotomous choices [37]. Hybrid approaches that blend confirmatory and exploratory elements have demonstrated superior performance in slightly misspecified models where traditional CFA proves overly rigid:

- Exploratory Structural Equation Modeling (ESEM): Integrates EFA within the SEM framework, allowing cross-loadings while maintaining CFA's ability to model structural relationships

- Bayesian Structural Equation Modeling (BSEM): Incorporates approximate zero priors for small cross-loadings, balancing flexibility with parsimony

- ECFA/BCFA Procedures: After fitting an unrestrictive model (EFA or BSEM), these methods identify and retain only relevant loadings to provide parsimonious CFA solutions [37]

Simulation studies demonstrate that EFA typically provides the most accurate parameter estimates, although rotation procedure selection is critical—Geomin rotation performs well with correlated factors, while target rotation excels with simpler structures [37].

Cognitive Assessment Applications and Convergent Validity Challenges

Research examining exploratory behavior measurement highlights the critical importance of robust factor analytic approaches for establishing convergent validity [38]. A comprehensive assessment of multiple behavioral measures and self-report scales of exploration found:

- Limited Convergent Validity: Most behavioral measures lacked sufficient convergent validity with one another or with self-reports

- Task-Specific Variance: Psychometric modeling could not identify a good-fitting model with an assumed general exploration tendency, suggesting measures capture task-specific behaviors

- Temporal Stability Despite Specificity: Measures demonstrated stability across one-month timespans despite lacking cross-task generalizability [38]

These findings underscore the necessity of rigorous factor analytic approaches in cognitive assessment research, as assumptions about construct unity often prove problematic without empirical verification.

Essential Research Reagents and Computational Tools

Table 4: Essential Methodological Resources for Factor Analysis

| Resource Category | Specific Tools | Primary Function | Implementation Considerations |
|---|---|---|---|
| Statistical Software | Mplus, R (lavaan, psych, GPArotation), SAS (PROC FACTOR, CALIS), SPSS, Stata | Model estimation, fit statistics, rotation | CFA requires specialized SEM software; EFA available in conventional packages [33] |
| Factor Retention Decision Aids | Parallel Analysis (PA-PAF preferred), Fit Index Difference Values, MAP, Empirical Kaiser Criterion | Determining number of factors to retain | Use multiple methods; PA-PAF outperforms PA-PCA for cognitive data [35] |
| Fit Assessment Indices | χ² test, CFI, TLI, RMSEA, SRMR, WRMR | Evaluating model fit in CFA | Always report multiple indices; no single index sufficient [34] |
| Data Screening Tools | KMO, Bartlett's test, normality tests, outlier detection | Assessing data suitability | Essential preliminary step for both approaches [34] |
| Handling Non-normal Data | Robust Maximum Likelihood (MLR), Weighted Least Squares (WLSMV) | Estimation with non-normal or categorical data | Critical for valid results with real-world data [34] |

The comparative evidence demonstrates that EFA and CFA serve complementary but distinct roles in establishing the convergent validity of cognitive assessment tools. EFA provides essential flexibility during initial instrument development and when exploring novel cognitive domains, while CFA offers rigorous hypothesis testing for established theoretical frameworks. The most robust validation strategies employ sequential approaches—using EFA for initial structure identification followed by CFA confirmation on independent samples [18].

Cognitive assessment researchers must recognize that methodological choices significantly impact substantive conclusions about cognitive architecture. Underfactoring tendencies of popular EFA methods [35] and the measurement specificity observed in comprehensive validity assessments [38] highlight the necessity of methodologically sophisticated approaches. Future research should continue developing hybrid techniques along the confirmatory-exploratory continuum [37] while maintaining rigorous methodological standards that ensure the validity of cognitive assessment instruments used in basic research and pharmaceutical development.

The Multitrait-Multimethod Matrix (MTMM) is a formal methodology for examining the construct validity of a set of measures, developed by Campbell and Fiske in 1959 [39]. It provides a rigorous framework for simultaneously assessing convergent validity (the degree to which different measures of the same trait agree) and discriminant validity (the degree to which measures of different traits are distinct) [40] [39]. For researchers developing cognitive assessment tools, the MTMM is an essential tool for providing robust evidence that an instrument accurately measures the intended psychological construct and not something else.

The Core Components of an MTMM Matrix

An MTMM matrix is a specific arrangement of correlations that allows researchers to evaluate the influence of both traits (the constructs being measured) and methods (how they are measured) [39]. The matrix is organized by grouping measures according to their method of assessment.

The following diagram illustrates the logical relationships between the core concepts of the MTMM framework and how they are used to evaluate construct validity.

Within this structure, the matrix contains several key blocks of correlations, each serving a specific purpose in validation [39]:

- Reliability Diagonal (Monotrait-Monomethod): These are the reliability estimates for each measure, positioned on the diagonal of the matrix. They indicate how consistently a method measures a single trait.

- Validity Diagonals (Monotrait-Heteromethod): These correlations are between different methods measuring the same trait. High correlations in these diagonals provide evidence for convergent validity.

- Heterotrait-Monomethod Triangles: These are the correlations among different traits measured by the same method. High correlations suggest that the method itself is influencing the scores, creating a "methods factor."

- Heterotrait-Heteromethod Triangles: These are correlations between different traits measured by different methods. These correlations should be the lowest in the matrix, providing strong evidence for discriminant validity.
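The four blocks can be expressed as a simple classification rule on whether two measures share a trait, a method, both, or neither. The sketch below assumes each measure is labeled by a (trait, method) pair. The trait and method names and all correlation values are invented for illustration only.

```python
def mtmm_block(measure_a, measure_b):
    """Name the MTMM block for a pair of (trait, method) measures."""
    same_trait = measure_a[0] == measure_b[0]
    same_method = measure_a[1] == measure_b[1]
    if same_trait and same_method:
        return "reliability diagonal"
    if same_trait:
        return "validity diagonal"
    if same_method:
        return "heterotrait-monomethod"
    return "heterotrait-heteromethod"


def block_means(correlations):
    """Average the correlations that fall into each MTMM block."""
    groups = {}
    for (a, b), r in correlations.items():
        groups.setdefault(mtmm_block(a, b), []).append(r)
    return {block: sum(values) / len(values) for block, values in groups.items()}


# Hypothetical correlations: working memory (WM) and processing speed (PS),
# each measured by a computerized and a pen-and-paper test
correlations = {
    (("WM", "computer"), ("PS", "computer")): 0.35,
    (("WM", "computer"), ("WM", "paper")): 0.62,
    (("WM", "computer"), ("PS", "paper")): 0.18,
    (("PS", "computer"), ("WM", "paper")): 0.21,
    (("PS", "computer"), ("PS", "paper")): 0.58,
    (("WM", "paper"), ("PS", "paper")): 0.30,
}

means = block_means(correlations)
for block, mean in means.items():
    print(f"{block}: {mean:.3f}")
```

With these made-up values the validity diagonal has the highest mean and the heterotrait-heteromethod block the lowest, which is the ordering expected when convergent and discriminant validity both hold.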

Experimental Protocols and Data Interpretation

A well-designed MTMM study requires careful planning. The following workflow outlines the key steps for implementing the MTMM framework in cognitive assessment research, from study design to the interpretation of results.

Detailed Methodology

The foundational steps for conducting an MTMM study are as follows:

- Define Traits and Methods: Select at least two distinct but theoretically related traits (e.g., working memory, processing speed) and at least two different methods for measuring them (e.g., computerized test, pen-and-paper test, rater observation) [40] [39]. The methods should be "truly different" to effectively tease apart trait variance from method variance [40].

- Select and Administer Measures: Choose specific instruments for each trait-method combination. In an ideal, fully-crossed design, each trait is measured by every method [39]. Administer all measures to participants, typically in a counterbalanced order to control for sequence effects.

- Construct the Matrix and Analyze: Calculate the correlations between all measures and arrange them into the MTMM matrix, replacing the main diagonal with reliability estimates (e.g., Cronbach's alpha) [39]. Analysis can be performed using Campbell and Fiske's original interpretive guidelines or more modern statistical techniques like Confirmatory Factor Analysis (CFA) [41] [40].
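The matrix construction in the last step can be sketched as follows. The example assumes scores are stored per (trait, method) measure, computes Pearson correlations between every pair of measures, groups the rows by method, and places externally supplied reliability estimates on the main diagonal. The scores, the reliability values, and the function names are hypothetical, and six participants are far too few for a real study.

```python
import math


def pearson(x, y):
    """Pearson correlation between two equal-length score lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def build_mtmm(scores, reliabilities):
    """Return measure labels (grouped by method) and the MTMM matrix."""
    # Sort by method first, then trait, so same-method measures sit together
    labels = sorted(scores, key=lambda label: (label[1], label[0]))
    matrix = [
        [reliabilities[a] if a == b else pearson(scores[a], scores[b])
         for b in labels]
        for a in labels
    ]
    return labels, matrix


# Hypothetical scores for six participants on four trait-method combinations
scores = {
    ("WM", "computer"): [5, 7, 6, 9, 8, 4],
    ("WM", "paper"): [6, 7, 5, 9, 9, 5],
    ("PS", "computer"): [30, 28, 35, 33, 40, 31],
    ("PS", "paper"): [29, 30, 33, 35, 38, 30],
}
# Hypothetical reliability estimates (e.g., Cronbach's alpha) for the diagonal
reliabilities = {
    ("WM", "computer"): 0.88,
    ("WM", "paper"): 0.85,
    ("PS", "computer"): 0.90,
    ("PS", "paper"): 0.87,
}

labels, matrix = build_mtmm(scores, reliabilities)
for label, row in zip(labels, matrix):
    print(label, [round(value, 2) for value in row])
```

Grouping by method keeps each monomethod block contiguous, which makes the validity diagonals and heterotrait triangles easier to read off the printed matrix.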

Interpretation Guidelines

Campbell and Fiske proposed specific principles for interpreting the MTMM matrix [39]. The table below summarizes the key interpretive rules and their implications for construct validity.

| Principle | Interpretive Focus | Criterion | Implication for Construct Validity |

|---|---|---|---|

| Significant Validity Diagonals | Convergent Validity | Correlations in the validity diagonals should be significantly different from zero and sufficiently large. | Supports the premise that different methods are measuring the same underlying trait. |

| Validity > Heterotrait-Heteromethod | Discriminant Validity | A validity coefficient should be higher than all correlations in the heterotrait-heteromethod triangles that share neither trait nor method. | Evidence that traits are related but distinct constructs. |

| Validity > Heterotrait-Monomethod | Method Factor Influence | A validity coefficient should be higher than all correlations in the heterotrait-monomethod triangles. | Suggests that the trait relationship is stronger than any bias introduced by using a common method. |