Ensuring Precision in Brain Health Research: A Comprehensive Guide to Validating Cognitive Measurement Scales


Abstract

This article provides a contemporary guide for researchers and drug development professionals on the critical process of validating cognitive measurement scales. It covers foundational principles, from defining cognitive constructs like memory and executive function to establishing unidimensionality and factor structure. The article details advanced methodological applications, including digital adaptation and remote administration, and offers solutions for common challenges such as low test-retest reliability and cross-cultural measurement non-invariance. Through a comparative analysis of popular tools and their psychometric properties, it delivers evidence-based recommendations for selecting and validating scales in clinical trials and biomedical research, ensuring that cognitive outcomes are measured with the precision required for impactful scientific advancement.

Laying the Groundwork: Core Principles of Cognitive Constructs and Scale Development

In both clinical practice and pharmaceutical research, the accurate measurement of cognitive domains is paramount. The validation of assessment scales is not merely an academic exercise; it is the foundation for diagnosing cognitive impairment, monitoring disease progression, and evaluating the efficacy of new therapeutic interventions. Cognitive domains—discrete categories of mental function such as memory, executive function, and processing speed—represent the core targets of neuropsychological assessment and drug development. The reliability of data on a drug's cognitive benefits hinges entirely on the validity and sensitivity of the instruments used to measure these domains. This guide provides a comparative analysis of contemporary cognitive assessment tools, detailing their experimental validation and application within clinical research, with a particular focus on early detection of neurodegenerative conditions like Alzheimer's disease (AD).

Comparative Analysis of Key Cognitive Assessment Tools

The following table summarizes prominent cognitive assessment tools, their primary domains, and key performance data from validation studies.

Table 1: Comparative Analysis of Cognitive Assessment Scales and Their Validation

| Assessment Tool | Primary Cognitive Domains Measured | Validation Sample | Key Performance Data | Primary Research Context |
|---|---|---|---|---|
| NIH Toolbox (DCCS & PCPS) [1] | Executive Function, Processing Speed | 184 participants (cHC, MCI, AD) | A 5-point decrease increased risk of global decline: PCPS (HR 1.32), DCCS (HR 1.62) [1]. | Predicting global cognitive decline in MCI and AD [1]. |
| R4Alz-pc Index [2] | Executive Functions (Working Memory, Attentional Control, Inhibitory Control, Cognitive Flexibility) | 105 cognitively healthy older adults | Significant association between lower R4Alz-pc performance and increased memory-related negative affect (Mediation analysis supported) [2]. | Early detection of preclinical Alzheimer's pathology and SCD [2]. |
| Bayley-III Cognitive Scale [3] | Early Cognitive Skills (isolated from language) | 77 children (51 term, 26 preterm) | Bayley-III Cognitive scores significantly higher than Bayley-II MDI scores (p<.0001); Conversion algorithm developed [3]. | Isolating cognitive from language development in infants and toddlers [3]. |
| Assessment of Size and Scale Cognition (ASSC) [4] | Quantitative & Qualitative Reasoning, Proportional Conception | 518 first-year undergraduate students | Computer-based instrument; Validity evidence from expert review and pilot testing; Aligned with a theoretical framework [4]. | Measuring a component of the "scale, proportion, and quantity" crosscutting concept in science education [4]. |
| HKCAS-T Cognition Scale [5] | General Cognition for Toddlers (based on CHC theory) | 282 children (18-41 months) | Strong psychometric properties: Internal consistency = 0.98, Test-retest reliability = 0.98 [5]. | Culturally valid developmental assessment for Cantonese-speaking toddlers [5]. |

Detailed Experimental Protocols and Methodologies

Predicting Cognitive Decline with the NIH Toolbox

Objective: To evaluate if changes in executive functioning and processing speed can predict subsequent global cognitive decline in patients with Mild Cognitive Impairment (MCI) and Alzheimer's disease (AD) [1].

Protocol:

- Study Design: Prospective longitudinal cohort study.

- Participants: 184 participants, including cognitively healthy controls (cHC), MCI patients, and AD patients [1].

- Cognitive Assessments: Conducted at 3-month intervals for a median follow-up of 540 days.

- Independent Variables: Executive functioning and processing speed were assessed using the NIH Toolbox Dimensional Change Card Sort Test (DCCS) and Pattern Comparison Processing Speed Test (PCPS) [1].

- Outcome Variable: Global cognitive decline was measured using the Alzheimer's Disease Assessment Scale-Cognitive (ADAS-Cog13). A change of ≥4 points was considered clinically meaningful [1].

- Data Analysis: Between- and within-subjects variance was analyzed. Hazard ratios (HR) were calculated to determine the increased risk of global cognitive decline associated with a 5-point decrease in PCPS or DCCS scores [1].
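
The hazard ratios above are expressed per 5-point decrease. As a minimal sketch, assuming a Cox proportional-hazards model with each score entered as a linear covariate (the source does not state the model specification), the snippet below rescales those ratios to other score steps and flags the ≥4-point ADAS-Cog13 change used as the outcome. It further assumes that higher ADAS-Cog13 scores mean worse cognition; the function names and the participant's scores are hypothetical.

```python
import math


def rescale_hazard_ratio(hr: float, from_units: float, to_units: float) -> float:
    """Rescale a hazard ratio reported per `from_units` points to `to_units` points.

    Assumes a Cox proportional-hazards model in which the log-hazard is linear
    in the score, so log(HR) scales in proportion to the size of the change.
    """
    log_hr_per_point = math.log(hr) / from_units
    return math.exp(log_hr_per_point * to_units)


def meaningful_decline(baseline: float, follow_up: float, threshold: float = 4.0) -> bool:
    """Flag a >= `threshold`-point ADAS-Cog13 worsening (assumes higher score = worse)."""
    return (follow_up - baseline) >= threshold


# Hazard ratios reported per 5-point decrease in each NIH Toolbox score
reported_hr_per_5 = {"PCPS": 1.32, "DCCS": 1.62}
for test_name, hr5 in reported_hr_per_5.items():
    per_point = rescale_hazard_ratio(hr5, from_units=5, to_units=1)
    per_ten = rescale_hazard_ratio(hr5, from_units=5, to_units=10)
    print(f"{test_name}: HR per 1-point decrease {per_point:.3f}, per 10 points {per_ten:.3f}")

# Hypothetical ADAS-Cog13 scores for one participant
print(meaningful_decline(baseline=18.0, follow_up=22.5))
```

This rescaling is only meaningful if the score entered the model linearly; if scores were categorized or modeled with splines, a per-5-point ratio cannot be converted this way.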

Validating a Novel Tool for Preclinical Detection (R4Alz-pc)

Objective: To investigate whether the R4Alz-pc index, a brief executive functioning battery, can detect early cognitive decline by predicting memory-related worry and negative affect in cognitively healthy older adults [2].

Protocol:

- Study Design: Cross-sectional study using a snowball sampling method.

- Participants: 105 community-dwelling older adults (Mean age = 59.25) with no diagnosed neurological or psychiatric disease [2].

- Measures:

  - Independent Variable: The R4Alz-pc index, a part of the REMEDES for Alzheimer (R4Alz) battery, was used to assess core executive functions such as working memory, attentional control, and inhibitory control [2].

  - Dependent Variables: The Multifactorial Memory Questionnaire (MMQ) was used to measure subjective memory worry and negative affect about memory performance [2].

- Data Analysis: Linear multiple regression and mediation analysis within a Structural Equation Modeling (SEM) framework were used to test the hypothesis that memory worry mediates the relationship between executive dysfunction (R4Alz-pc) and negative affect [2].
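
The mediation hypothesis can be illustrated with the classic regression-based product-of-coefficients decomposition. This is a simplified stand-in for the SEM mediation model the study used: it works on observed scores only, has no latent variables, and omits the bootstrap confidence interval usually reported for the indirect effect. The variable roles (X = executive score, M = memory worry, Y = negative affect) and the simulated data are hypothetical.

```python
import random


def _mean(values):
    return sum(values) / len(values)


def _cross(u, v):
    """Centered sum of cross-products between two sequences."""
    mu, mv = _mean(u), _mean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v))


def simple_mediation(x, m, y):
    """Estimate paths a, b, direct (c'), indirect (a*b) and total (c) effects by OLS."""
    sxx, smm = _cross(x, x), _cross(m, m)
    sxm, sxy, smy = _cross(x, m), _cross(x, y), _cross(m, y)
    a = sxm / sxx                                 # X -> M
    det = sxx * smm - sxm ** 2                    # from regressing Y on X and M jointly
    c_prime = (sxy * smm - smy * sxm) / det       # X -> Y, controlling for M
    b = (smy * sxx - sxy * sxm) / det             # M -> Y, controlling for X
    c = sxy / sxx                                 # total effect X -> Y
    return {"a": a, "b": b, "indirect": a * b, "direct": c_prime, "total": c}


# Hypothetical simulated data with true a = 0.5, b = 0.4, c' = 0.2
rng = random.Random(42)
n = 5000
x = [rng.gauss(0, 1) for _ in range(n)]
m = [0.5 * xi + rng.gauss(0, 1) for xi in x]
y = [0.4 * mi + 0.2 * xi + rng.gauss(0, 1) for xi, mi in zip(x, m)]

effects = simple_mediation(x, m, y)
print({name: round(value, 3) for name, value in effects.items()})
```

With OLS estimates from the same sample, the total effect equals the direct plus the indirect effect exactly, which is a useful sanity check on any hand-rolled implementation.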

Establishing Concurrent Validity for a Toddler Assessment (HKCAS-T)

Objective: To examine the psychometric properties, including concurrent validity and reliability, of the Cognition Scale of the Hong Kong Comprehensive Assessment Scales for Toddlers (HKCAS-T) [5].

Protocol:

- Study Design: Cross-sectional study.

- Participants: 282 Cantonese-speaking children aged 18 to 41 months, recruited from Maternal and Child Health Centers across Hong Kong [5].

- Measures:

  - Target Instrument: The pilot version of the HKCAS-T Cognition Scale, which contains 83 items developed based on the Cattell–Horn–Carroll (CHC) theory [5].

  - Criterion Instrument: The Cognitive Scale in the Cognitive Battery of the Merrill-Palmer-Revised Scales of Development (M-P-R) was used as the gold standard for concurrent validity testing [5].

- Data Analysis: Rasch analysis was employed to examine the unidimensionality of the scale. Internal consistency (KR-20) and test-retest reliability (intraclass correlation) were calculated. A subsample of 41 children was reassessed after four weeks to establish test-retest reliability [5].
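
The source does not say which intraclass correlation form was used for test-retest reliability. As a hedged illustration, the sketch below computes two common Shrout and Fleiss forms from a subjects-by-sessions matrix: ICC(2,1), two-way random effects with absolute agreement, and ICC(3,1), two-way mixed effects with consistency. The toy data are hypothetical and show how a uniform practice effect lowers absolute agreement but leaves consistency untouched.

```python
def icc_two_way(scores):
    """Return (ICC(2,1), ICC(3,1)) for an n-subjects x k-sessions score matrix."""
    n, k = len(scores), len(scores[0])
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    ss_rows = k * sum((r - grand) ** 2 for r in row_means)   # between subjects
    ss_cols = n * sum((c - grand) ** 2 for c in col_means)   # between sessions
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols                   # residual

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    # Absolute agreement: systematic session differences count as error
    icc2_1 = (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )
    # Consistency: systematic session differences are ignored
    icc3_1 = (ms_rows - ms_error) / (ms_rows + (k - 1) * ms_error)
    return icc2_1, icc3_1


# Hypothetical test-retest data: every child scores exactly 1 point higher at retest
test_retest = [[1, 2], [2, 3], [3, 4], [4, 5]]
agreement, consistency = icc_two_way(test_retest)
print(f"ICC(2,1) = {agreement:.3f}, ICC(3,1) = {consistency:.3f}")
```

Reporting which form was used matters: the same data can look perfectly reliable under a consistency definition and noticeably less so under absolute agreement.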

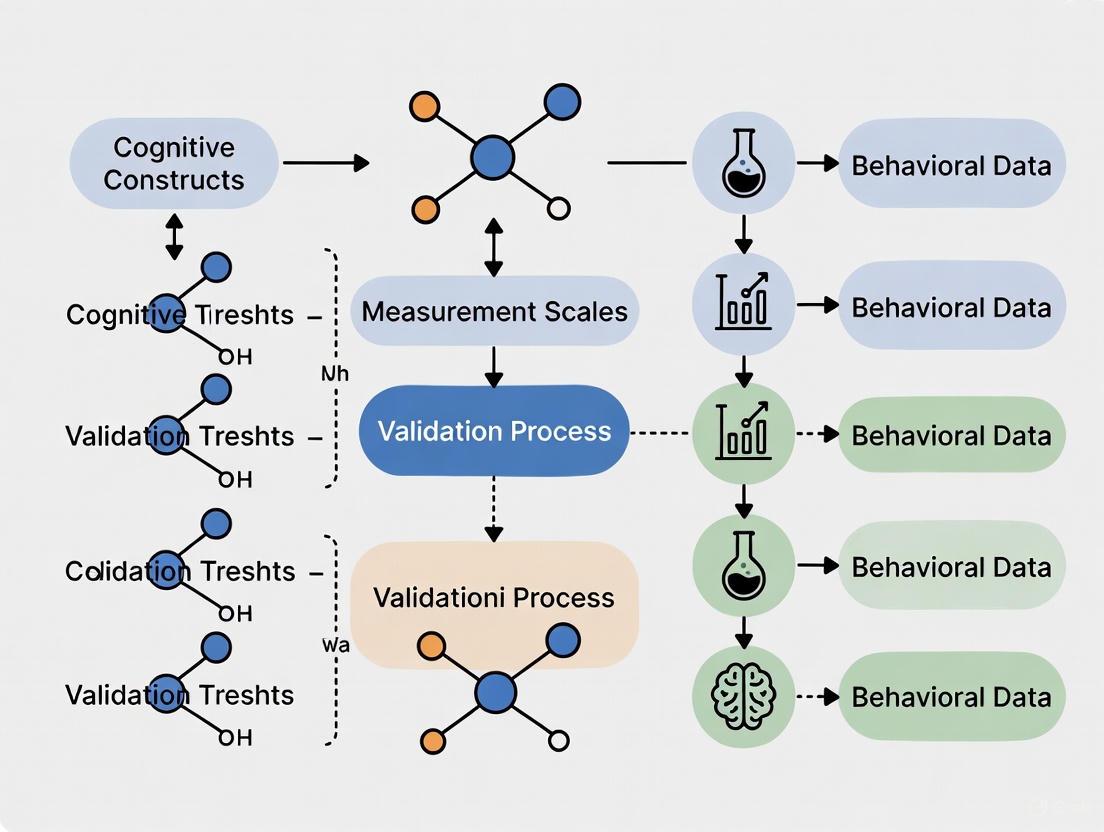

Visualizing Cognitive Assessment Research Workflows

The following diagram illustrates a generalized experimental workflow for validating a cognitive assessment scale, synthesizing common elements from the cited research.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Instruments and Tools for Cognitive Domains Research

| Tool/Reagent | Primary Function in Research | Key Features & Applications |
|---|---|---|
| NIH Toolbox Cognition Battery [1] | Assesses specific cognitive domains like processing speed (PCPS) and executive function (DCCS). | Standardized, easy-to-administer, computerized battery; Used to predict near-term global cognitive decline in clinical trials [1]. |
| R4Alz-pc Index [2] | A brief battery designed to detect subtle executive function deficits in preclinical Alzheimer's disease. | Focused on cognitive control; validated for use in identifying Subjective Cognitive Decline (SCD) and early pathology [2]. |
| Alzheimer's Disease Assessment Scale-Cognitive (ADAS-Cog) [1] | Measures global cognitive decline as a primary endpoint in clinical trials. | A well-established standard; the 13-item version (ADAS-Cog13) includes executive functioning items and is sensitive to change over time [1]. |
| Bayley Scales of Infant Development [3] | Evaluates developmental functioning in young children, separating cognitive from language skills. | Critical for longitudinal studies of at-risk infants (e.g., preterm births); allows for comparison across different editions (Bayley-II, Bayley-III) [3]. |
| Multifactorial Memory Questionnaire (MMQ) [2] | Quantifies subjective memory complaints, worry, and affect. | Used as an outcome measure to correlate subjective concerns with objective cognitive performance, particularly in SCD research [2]. |

In the scientific endeavor to quantify complex cognitive and psychological constructs, the development of robust measurement scales is paramount. The validity of any research conclusion—from assessing a toddler's cognitive development to measuring an adolescent's compassion or an adult's reasoning style—is fundamentally contingent on the reliability and structural validity of the instruments used. The process of establishing this validity hinges on two critical, interconnected concepts: dimensionality (identifying the number of latent constructs or factors measured by the scale) and factor structure (defining the nature and relationships between these factors). Ignoring these psychometric foundations can lead to instruments that misrepresent the very phenomena they are designed to capture, with consequences ranging from flawed theoretical models to ineffective clinical interventions and wasted resources in drug development.

This guide provides a comparative analysis of the methodologies and analytical techniques used to establish dimensionality and factor structure, framing them as essential tools in the researcher's toolkit for validating cognitive and psychological measurement scales.

Comparative Analysis of Dimensionality Assessment Techniques

Establishing a scale's dimensionality involves statistical techniques to determine if items collectively measure a single construct (unidimensionality) or multiple distinct but related constructs (multidimensionality). The table below compares the core methodologies used in contemporary scale validation research.

Table 1: Comparison of Core Dimensionality and Factor Analysis Techniques

| Technique | Primary Function | Key Interpretation Metrics | Typical Workflow | Reported Exemplars from Literature |
|---|---|---|---|---|
| Exploratory Factor Analysis (EFA) | To explore the underlying factor structure without strong a priori hypotheses. | Factor loadings: strength of the item-factor relationship (e.g., >0.4); eigenvalues: amount of variance explained by a factor (often >1.0); variance explained: total variance accounted for by the solution. | (1) Item pool generation; (2) data collection; (3) EFA to identify potential factors; (4) item reduction based on loadings and cross-loadings. | 8-Factor Reasoning Styles Scale (8-FRSS): initial analysis revealed the theorized eight-factor solution, explaining 58.2% of variance [6]. |
| Confirmatory Factor Analysis (CFA) | To test and confirm a pre-specified factor structure (e.g., from theory or EFA). | Model fit indices: CFI (>0.90), TLI (>0.90), RMSEA (<0.08), SRMR (<0.08); standardized factor loadings: significance and magnitude. | (1) Define the hypothesized model; (2) fit the model to a new dataset; (3) assess model fit; (4) refine the model if necessary (e.g., correlated errors). | The 8-FRSS CFA showed acceptable fit (χ²/df = 1.77, CFI = 0.918, TLI = 0.901, RMSEA = 0.052, SRMR = 0.047) [6]. A Compassion Scale CFA validated a three-factor structure in Hong Kong adolescents [7]. |
| Rasch Analysis | To assess whether a set of items functions as a unidimensional scale and to examine item-level properties. | Infit/outfit mean-square statistics: unmodeled noise (ideal range 0.5-1.5); point-measure correlations: correlation between item scores and person measures; principal component analysis (PCA) of residuals: check for unidimensionality. | (1) Test overall model fit; (2) check individual item fit; (3) review the item difficulty hierarchy; (4) check for local dependence and differential item functioning (DIF). | HKCAS-T Cognition Scale: Rasch analysis supported unidimensionality, with pilot studies removing 6 items due to unsatisfactory goodness-of-fit [5]. |
| Dimensionality Reduction (DR) for Visualization | To visualize high-dimensional data in 2D/3D, aiding cluster identification (often used as a complement to the methods above). | Cluster separation: visual identification of distinct groups; distance interpretation: caution required, as distances are approximations. | (1) Data preprocessing; (2) apply a DR algorithm (e.g., PCA, UMAP, t-SNE); (3) visualize and interpret clusters; (4) validate findings with other methods. | Used in biology, chemistry, and physics; common workflows mix confirmatory and exploratory analysis. PCA is favored for explainability, while UMAP offers clearer clustering [8] [9]. |
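
To make the infit/outfit statistics in the Rasch row concrete, here is a minimal sketch for one dichotomous item under the Rasch model. It assumes person abilities and the item difficulty (in logits) have already been estimated; real analyses estimate these jointly in dedicated software. Outfit is the unweighted mean of squared standardized residuals, while infit weights each squared residual by its model variance, which makes it less sensitive to surprising responses from persons far from the item's difficulty. The abilities and responses are hypothetical.

```python
import math


def rasch_probability(theta: float, delta: float) -> float:
    """P(correct) for person ability theta and item difficulty delta (logits)."""
    return 1.0 / (1.0 + math.exp(-(theta - delta)))


def item_fit(responses, abilities, difficulty):
    """Return (infit MNSQ, outfit MNSQ) for one dichotomous item."""
    sq_resid, variances, z_sq = [], [], []
    for x, theta in zip(responses, abilities):
        p = rasch_probability(theta, difficulty)
        w = p * (1.0 - p)          # model variance of the 0/1 response
        r2 = (x - p) ** 2          # squared raw residual
        sq_resid.append(r2)
        variances.append(w)
        z_sq.append(r2 / w)        # squared standardized residual
    outfit = sum(z_sq) / len(z_sq)
    infit = sum(sq_resid) / sum(variances)
    return infit, outfit


# Hypothetical: three persons answering an item of difficulty 0 logits
abilities = [0.0, 0.0, math.log(3)]   # third person has P(correct) = 0.75
responses = [1, 0, 1]
infit, outfit = item_fit(responses, abilities, difficulty=0.0)
print(f"infit = {infit:.3f}, outfit = {outfit:.3f}")
```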

Experimental Protocols for Scale Validation

A robust scale validation study follows a multi-stage, sequential protocol to gather comprehensive evidence for the instrument's psychometric properties. The following diagram outlines the typical workflow, integrating the techniques compared above.

Diagram 1: The Sequential Workflow for Scale Development and Validation.

Detailed Methodology for Key Stages

1. Theoretical Grounding and Item Generation: The process begins with a clear conceptual definition of the construct. For example, the 8-Factor Reasoning Styles Scale (8-FRSS) was built upon Hacking’s philosophical "styles of reasoning" notion, operationalized into three axes: Disposition (Empirical/Hypothetical), Perception (Metaphorical/Analogical), and Organization (Inductive/Deductive) [6]. Similarly, the Digital Mindset Scale was developed using a multi-grounded theory approach, identifying three dimensions: digital consciousness, digital expertise, and digital business acumen [10]. An initial item pool is generated to cover all aspects of the theoretical model, typically with multiple items (e.g., 5 per factor for the 8-FRSS) to ensure adequate representation [6].

2. Expert Review and Pilot Testing: Content validity is established through expert review. For the Resilience to Misinformation instrument, a panel of 5 experts evaluated an 18-item pool for comprehensibility and relevance, leading to the removal of 3 items and the rewording of 5 others [11]. This is often followed by a pilot study (e.g., n=50 for the 8-FRSS) to assess face validity and refine items based on participant feedback [6].

3. Exploratory and Confirmatory Factor Analysis: The refined scale is administered to a larger sample for quantitative analysis. A common best practice is to split the sample or collect a new one for cross-validation.

- EFA Protocol: Researchers use EFA on the first sample to uncover the underlying factor structure. For instance, the 8-FRSS EFA used a sample of 441 participants, applying polychoric correlations and DWLS estimation for ordinal data. The analysis confirmed the theorized eight-factor solution after removing two poorly loading items [6].

- CFA Protocol: The structure identified via EFA is then tested on a hold-out sample using CFA. The 8-FRSS CFA (n=316) assessed model fit using multiple indices (χ²/df, CFI, TLI, RMSEA, SRMR). A third, independent community sample (n=604) was used for final cross-validation, demonstrating the stability of the factor structure [6].

4. Comprehensive Validity and Reliability Assessment: The final stage involves gathering extensive evidence for the scale's validity and reliability.

- Reliability: Internal consistency is typically measured with Cronbach’s α and McDonald’s ω. The 8-FRSS showed high total-scale reliability (ω = 0.93; α = 0.91), though two subscales fell below the conventional 0.70 threshold (ω = 0.48–0.69), highlighting the need for item refinement [6]. Test-retest reliability is also crucial, as demonstrated by the HKCAS-T Cognition Scale, which had an intraclass correlation of 0.98 over a four-week interval [5].

- Validity: This includes:

  - Convergent/Discriminant Validity: Correlations with theoretically related and unrelated measures. The Compassion Scale showed significant positive correlations with social connectedness and self-efficacy [7].

  - Concurrent Validity: The HKCAS-T Cognition Scale scores correlated positively with scores from the established Merrill-Palmer-Revised Cognitive Scale [5].

  - Predictive Validity: The Digital Mindset Scale was positively related to innovative and entrepreneurial behavior [10].
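
The two internal-consistency coefficients named in stage 4 can be sketched with the standard library. Cronbach's α below uses item and total-score variances (the ratio is the same whether n or n − 1 is used, as long as it is used consistently); McDonald's ω is shown in its common single-factor form from standardized loadings, assuming uncorrelated errors. This is an illustration only: the 8-FRSS analysis used ordinal (polychoric) methods, and the scores and loadings here are hypothetical.

```python
from statistics import variance


def cronbach_alpha(items):
    """items: one list of scores per item, all covering the same respondents."""
    k = len(items)
    totals = [sum(scores) for scores in zip(*items)]      # total score per respondent
    item_var_sum = sum(variance(scores) for scores in items)
    return k / (k - 1) * (1 - item_var_sum / variance(totals))


def mcdonald_omega(loadings):
    """Single-factor omega from standardized loadings (error variance = 1 - loading**2)."""
    common = sum(loadings) ** 2
    unique = sum(1 - lam ** 2 for lam in loadings)
    return common / (common + unique)


items = [[1, 2, 3], [2, 1, 3]]    # hypothetical: 2 items, 3 respondents
print(f"alpha = {cronbach_alpha(items):.3f}")
print(f"omega = {mcdonald_omega([0.7, 0.7, 0.7, 0.7]):.3f}")
```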

The Scientist's Toolkit: Essential Reagents for Validation Research

Table 2: Key "Research Reagent Solutions" for Scale Validation Studies

| Reagent / Resource | Function in Validation | Exemplary Application |
|---|---|---|
| Statistical Software (R, Mplus, SPSS) | To perform complex statistical analyses like EFA, CFA, and Rasch modeling. | R packages like lavaan for CFA; specialized IRT packages for Rasch analysis [6] [5]. |
| Gold Standard Criterion Measures | To serve as a benchmark for establishing concurrent validity. | Using the M-P-R Cognitive Scale to validate the new HKCAS-T Cognition Scale [5]. |
| Expert Review Panels | To establish content validity by evaluating item relevance, clarity, and coverage of the construct. | Panel of 5 experts in communication, psychology, and health to refine the Misinformation Resilience scale [11]. |
| Dimensionality Reduction Algorithms (PCA, UMAP, t-SNE) | To visualize high-dimensional data and identify potential clusters or patterns that suggest latent factors. | Comparing PCA and UMAP for visualizing chemical space in organometallic catalysis [9]. |
| Specialized Population Samples | To ensure the scale is validated and normed for its intended target audience. | Parents of school-age children (6-10 years) for the Resilience to Misinformation scale [11]. |

Visualizing Factor Structures and Relationships

Understanding the relationships between the identified factors is crucial for interpreting what a scale actually measures. The following diagram illustrates the complex, multi-dimensional factor structure of a validated reasoning styles instrument, showing how higher-order axes combine to form specific reasoning profiles.

Diagram 2: The Three-Dimensional Factor Structure of the 8-FRSS, illustrating how eight distinct reasoning profiles arise from orthogonal intersections of cognitive axes [6].
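
The "eight profiles from three axes" logic is the Cartesian product of three two-pole axes (2 × 2 × 2 = 8). The sketch below enumerates the combinations using the axis poles named in stage 1 of the workflow; it does not reproduce the 8-FRSS's own profile names or scoring rules, which are not given here, and the pole order is arbitrary.

```python
from itertools import product

# Axis poles as described for the 8-FRSS; the order within each pair is arbitrary.
AXES = {
    "Disposition": ("Empirical", "Hypothetical"),
    "Perception": ("Metaphorical", "Analogical"),
    "Organization": ("Inductive", "Deductive"),
}

# One dict per profile, mapping each axis name to the chosen pole
profiles = [dict(zip(AXES, combo)) for combo in product(*AXES.values())]
for i, profile in enumerate(profiles, start=1):
    print(i, " / ".join(profile.values()))
```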

The rigorous establishment of a scale's dimensionality and factor structure is not merely a statistical formality but the very foundation upon which valid scientific measurement is built. As demonstrated by the diverse examples—from cognitive assessments for toddlers to reasoning styles in adults—a methodical approach involving EFA, CFA, and complementary techniques like Rasch analysis is non-negotiable. Furthermore, the cross-validation of factor structures on independent samples is a critical step in demonstrating their stability and generalizability. For researchers and drug development professionals, selecting or developing instruments without this robust evidential basis introduces significant risk. The tools, protocols, and comparative data outlined in this guide provide a roadmap for moving beyond the simple score to a deeper, more defensible understanding of what our measurements truly represent.

In the scientific pursuit of measuring cognitive phenomena, the validity of our instruments determines the validity of our discoveries. Psychometric properties provide the foundational framework for ensuring that measurement scales in cognitive terminology research yield accurate, consistent, and meaningful data. For researchers, scientists, and drug development professionals, understanding these properties is not merely academic—it is a methodological imperative that underpins the development of reliable assessment tools, from cognitive screening instruments to clinical trial endpoints.

Psychometric properties refer to the technical characteristics of a test that determine its quality and effectiveness in measuring what it purports to measure [12]. These properties include various factors such as validity, reliability, and norms, which collectively ensure that assessment tools provide precise, reliable, and unbiased outcomes [12]. In the context of cognitive terminology measurement scales, rigorous psychometric validation transforms subjective observations into quantifiable, scientifically defensible metrics essential for both basic research and applied drug development.

This guide provides a comprehensive overview of essential psychometric properties, structured as a comparative analysis of validation approaches to inform instrument selection and development. By examining explicit methodologies, experimental protocols, and quantitative comparisons, we aim to equip researchers with a practical blueprint for validating cognitive measurement scales.

Essential Psychometric Properties: A Comparative Framework

The measurement quality of any assessment tool is evaluated through its psychometric properties, primarily categorized into validity, reliability, and normative characteristics. The table below provides a structured comparison of these core properties, their definitions, and key considerations for cognitive measurement research.

Table 1: Core Psychometric Properties and Their Application to Cognitive Measurement

| Property | Definition | Subtypes | Application in Cognitive Research |
|---|---|---|---|
| Validity | The extent to which a test measures what it claims to measure [12] | Content, Construct, Criterion-Related, Face [12] | Ensures cognitive tests accurately target specific cognitive domains (e.g., memory, executive function) rather than confounding factors |
| Reliability | The consistency of test results over time and across conditions [13] [12] | Test-Retest, Inter-Rater, Parallel-Forms, Internal Consistency [12] | Determines stability of cognitive measurements across repeated administrations and different raters |
| Norms | Standards established through administration to a representative sample, providing comparative benchmarks [13] [12] | Age, Grade, National, Percentile, Local [12] | Enables interpretation of individual cognitive test scores relative to appropriate reference populations |
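
Norm-referenced interpretation usually means locating a raw score within a reference distribution. A minimal sketch, assuming the normative sample is approximately normal with a known mean and standard deviation (real norm tables are often empirical or smoothed within age bands); the normative values and the function name are hypothetical.

```python
from statistics import NormalDist


def norm_reference(raw: float, norm_mean: float, norm_sd: float):
    """Return (z-score, percentile rank) of a raw score relative to a normative group."""
    z = (raw - norm_mean) / norm_sd
    percentile = NormalDist().cdf(z) * 100   # assumes normally distributed norms
    return z, percentile


# Hypothetical age-band norms for a memory test: mean 50, SD 10
for raw in (35, 50, 62):
    z, pct = norm_reference(raw, norm_mean=50, norm_sd=10)
    print(f"raw {raw}: z = {z:+.2f}, percentile = {pct:.1f}")
```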

Validity: The Cornerstone of Meaningful Measurement

Validity evidence is paramount for establishing that a cognitive test truly captures the intended theoretical construct. Different validity types provide complementary evidence:

- Content Validity: Ensures test items adequately represent the entire domain of the cognitive construct being measured [12]. For example, a test intended to assess memory broadly should sample several memory systems (working, episodic, semantic) rather than focusing on just one facet.

- Construct Validity: Assesses how well a test measures the theoretical cognitive construct it intends to evaluate [12]. This is often established through factor analysis and relationships with other variables.

- Criterion-Related Validity: Evaluates how well test scores predict performance on an external criterion [12]. In drug development, this might involve correlating cognitive test scores with real-world functional outcomes.

- Face Validity: While not technically a scientific form of validity, this refers to whether a test appears to measure what it claims to measure, influencing participant engagement and perception [12].
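
Content validity is often quantified from expert ratings with a content validity index (CVI). The source does not say that a CVI was computed for any scale discussed here, so treat this as one common approach: each expert rates each item's relevance on a 4-point scale, the item-level CVI (I-CVI) is the proportion of experts rating 3 or 4, and the scale-level average (S-CVI/Ave) is the mean I-CVI. The ratings, item IDs, and the 0.8 retention cut-off are assumptions for illustration.

```python
def item_cvi(ratings, relevant=(3, 4)):
    """Proportion of experts rating the item as relevant (3 or 4 on a 1-4 scale)."""
    return sum(r in relevant for r in ratings) / len(ratings)


def content_validity(panel, min_icvi=0.8):
    """panel: {item_id: [rating per expert]} -> (I-CVIs, S-CVI/Ave, flagged items)."""
    icvi = {item: item_cvi(ratings) for item, ratings in panel.items()}
    s_cvi_ave = sum(icvi.values()) / len(icvi)
    flagged = [item for item, value in icvi.items() if value < min_icvi]
    return icvi, s_cvi_ave, flagged


# Hypothetical ratings from a five-expert panel for three draft items
panel = {
    "item_01": [4, 4, 3, 4, 4],
    "item_02": [4, 3, 2, 4, 3],
    "item_03": [2, 3, 1, 2, 4],
}
icvi, s_cvi, flagged = content_validity(panel)
print(icvi, round(s_cvi, 3), flagged)
```

Flagged items are candidates for rewording or removal, which mirrors the kind of expert-driven refinement described for the Resilience to Misinformation instrument.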

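Recent research emphasizes a unified construct validity approach, in which multiple sources of evidence collectively support test interpretation [14]. Kane's argument-based validation framework links four inferences: scoring (from observed performance to a score), generalization (from that score to expected performance across comparable tasks), extrapolation (to performance in the real-world target domain), and finally decision-making based on the result [14].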

Reliability: The Foundation of Measurement Consistency

Reliability ensures that cognitive measurements are stable and dependable, not unduly influenced by random factors:

- Test-Retest Reliability: Measures consistency when the same test is administered to the same individuals at different time points [12]. High test-retest reliability is crucial for longitudinal studies and clinical trials tracking cognitive change.

- Inter-Rater Reliability: Assesses consistency when different evaluators score the test [12]. This is particularly important for subjective cognitive assessments requiring clinical judgment.

- Internal Consistency: Measures how strongly items within a test correlate with each other, which is consistent with (though not proof of) their measuring the same underlying construct [12]. Cronbach's alpha values above 0.70 are generally considered acceptable [12].

Reliable tests produce similar results under consistent conditions, ensuring that observed changes in cognitive performance reflect true change rather than measurement error [13].
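
Inter-rater reliability for categorical judgments (for example, two clinicians classifying participants as cognitively normal or impaired) is often summarized with Cohen's kappa, which corrects observed agreement for the agreement expected by chance. A minimal sketch for two raters and nominal categories; the ratings are hypothetical, and the function assumes chance agreement is below 1 (otherwise kappa is undefined).

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning nominal categories to the same cases."""
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    freq_a, freq_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of each rater's marginal proportions, summed over categories
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / n ** 2
    return (observed - expected) / (1 - expected)


# Hypothetical: two clinicians classify 10 participants as normal (N) or impaired (I)
clinician_1 = list("NNNNNNIIII")
clinician_2 = list("NNNNNIIIIN")
print(round(cohens_kappa(clinician_1, clinician_2), 3))
```

Here the raters agree on 80% of cases, but because 52% agreement would be expected by chance alone, kappa is noticeably lower than raw agreement.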

Experimental Protocols for Psychometric Validation

The development and validation of psychometrically sound instruments follow systematic methodologies. The table below outlines key experimental approaches used in validation studies, with examples from recent research.

Table 2: Experimental Protocols in Psychometric Validation Studies

| Validation Method | Protocol Description | Sample Application | Key Output Metrics |
|---|---|---|---|
| Factor Analysis | Examines the underlying structure of items and their relationships to latent constructs [15] | Validation of Meaning of Life Scale (MLS) for Peruvian population [15] | Model fit indices (CFI, TLI, RMSEA, SRMR), factor loadings |
| Cross-Cultural Adaptation | Translation and back-translation by bilingual experts with cultural equivalence evaluation [16] | Adaptation of Critical Reasoning Assessment for Italian population [16] | Linguistic accuracy, measurement invariance, cultural relevance |
| Reliability Testing | Administration of the same test to the same participants at different time points or by different raters [12] | Evaluation of screening tools for mild cognitive impairment [17] | Intraclass correlation coefficients, Cohen's kappa, Cronbach's alpha |
| Criterion Validation | Comparison of new instrument scores with established "gold standard" measures [17] | Systematic review of MCI screening tools using COSMIN methodology [17] | Sensitivity, specificity, ROC curves, correlation coefficients |
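
Criterion-validation outputs such as sensitivity, specificity, and the area under the ROC curve can be computed directly against a reference diagnosis. A minimal sketch, assuming lower screening scores indicate impairment (true of many cognitive screeners, but check each tool) and using hypothetical scores and a hypothetical cut-off. The AUC uses its Mann-Whitney interpretation: the probability that a randomly chosen impaired case scores lower than a randomly chosen unimpaired one, with ties counted as one half.

```python
def screening_accuracy(scores, impaired, cutoff):
    """Sensitivity and specificity when score <= cutoff is classed as 'impaired'."""
    pairs = list(zip(scores, impaired))
    tp = sum(1 for s, d in pairs if d and s <= cutoff)
    fn = sum(1 for s, d in pairs if d and s > cutoff)
    tn = sum(1 for s, d in pairs if not d and s > cutoff)
    fp = sum(1 for s, d in pairs if not d and s <= cutoff)
    return tp / (tp + fn), tn / (tn + fp)


def auc_lower_is_impaired(scores, impaired):
    """Mann-Whitney AUC: P(impaired score < unimpaired score), ties counted as 0.5."""
    cases = [s for s, d in zip(scores, impaired) if d]
    controls = [s for s, d in zip(scores, impaired) if not d]
    wins = 0.0
    for c in cases:
        for h in controls:
            if c < h:
                wins += 1.0
            elif c == h:
                wins += 0.5
    return wins / (len(cases) * len(controls))


# Hypothetical screening scores and reference-standard diagnoses
scores = [18, 21, 22, 24, 25, 26, 27, 28, 29, 30]
impaired = [True, True, True, False, True, False, False, False, False, False]
sens, spec = screening_accuracy(scores, impaired, cutoff=23)
print(f"sensitivity = {sens:.2f}, specificity = {spec:.2f}")
print(f"AUC = {auc_lower_is_impaired(scores, impaired):.3f}")
```

Sensitivity and specificity depend on the chosen cut-off, whereas the AUC summarizes discrimination across all possible cut-offs.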

Case Example: Validation of the Meaning of Life Scale

A 2025 study developing and validating the Meaning of Life Scale (MLS) for the Peruvian population demonstrates a comprehensive validation protocol [15]. The study included 646 individuals aged 18-69 years and used both exploratory factor analysis (EFA) and confirmatory factor analysis (CFA) to examine the scale's structure [15]. The results supported a unifactorial model with adequate fit indices (χ²(2) = 2.391, p ≈ 0.30, CFI = 0.998, TLI = 0.995, RMSEA = 0.025, SRMR = 0.016) and high internal consistency (α = 0.878, ω = 0.878) [15]. This systematic approach provides a template for validating cognitive terminology scales, emphasizing both structural validity and reliability.
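
The fit indices quoted in this case example follow from standard formulas. The sketch below computes RMSEA, CFI, and TLI from model and baseline (independence-model) chi-square values, plus an exact upper-tail chi-square p-value that needs only the standard library but is valid for even degrees of freedom only. For the χ²(2) = 2.391 reported above, that p-value is about 0.30, the non-significant result expected under good fit. Apart from that statistic, the inputs are hypothetical, and note that some software uses N rather than N − 1 in the RMSEA denominator.

```python
import math


def chi2_sf_even_df(x: float, df: int) -> float:
    """Upper-tail chi-square p-value; this closed form is valid for even df only."""
    if df <= 0 or df % 2:
        raise ValueError("this closed form needs a positive even df")
    half = x / 2.0
    term, total = 1.0, 1.0
    for i in range(1, df // 2):          # sum_{i=0}^{df/2-1} (x/2)^i / i!
        term *= half / i
        total += term
    return math.exp(-half) * total


def rmsea(chi2: float, df: int, n: int) -> float:
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def cfi(chi2: float, df: int, chi2_base: float, df_base: int) -> float:
    d_model = max(chi2 - df, 0.0)
    d_base = max(chi2_base - df_base, d_model)
    return 1.0 - d_model / d_base if d_base > 0 else 1.0


def tli(chi2: float, df: int, chi2_base: float, df_base: int) -> float:
    ratio_base = chi2_base / df_base
    return (ratio_base - chi2 / df) / (ratio_base - 1.0)


print(f"p for chi2(2) = 2.391: {chi2_sf_even_df(2.391, 2):.3f}")
# Hypothetical CFA: model chi2(40) = 85.0, baseline chi2(55) = 1200.0, N = 400
print(f"RMSEA = {rmsea(85.0, 40, 400):.3f}")
print(f"CFI = {cfi(85.0, 40, 1200.0, 55):.3f}, TLI = {tli(85.0, 40, 1200.0, 55):.3f}")
```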

Case Example: Validation of Critical Reasoning Assessment

The adaptation and validation of the Critical Reasoning Assessment (CRA) for the Italian population followed a rigorous multi-study design [16]. The process began with a pilot test (n=79) to identify initial issues and confirm the CRA's ability to differentiate between high and low performers [16]. Subsequent studies with 123 and 480 participants, respectively, validated the CRA's unidimensional structure and consistency in measuring critical reasoning [16]. The adaptation process included translation and back-translation by bilingual experts to ensure linguistic accuracy, with the final instrument demonstrating excellent reliability (Cronbach's alpha = 0.93) and strong convergent validity through positive correlations with the Critical Reasoning Disposition Inventory and academic performance [16].

Advanced Applications and Contemporary Challenges

Test Blueprinting: A Proactive Validation Strategy

Test blueprinting is a proactive way to build psychometric quality into the initial development phase. A test blueprint is a tool for generating content-valid exams: it links the subject matter delivered during instruction to the items that appear on the test [18]. This approach ensures constructive alignment between learning objectives, instructional activities, and assessment strategies [14].

Empirical research demonstrates the tangible benefits of blueprinting. A 2023 study comparing two uro-reproductive tests found that the test developed with a blueprint showed improved item differentiation and independence, with a wider range of item difficulty that better matched student abilities [14]. While both tests exhibited similar overall reliability indices, the blueprinted test demonstrated superior psychometric characteristics in its ability to precisely measure the intended construct [14].
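
The cited comparison relied on Rasch analysis, but the underlying idea of difficulty spread and item differentiation can be illustrated with simpler classical test theory statistics, swapped in here for brevity: difficulty as the proportion of examinees answering correctly (so higher values mean easier items) and discrimination as the correlation between an item and the total of the remaining items. The response matrix is hypothetical.

```python
def pearson(u, v):
    """Pearson correlation of two equal-length numeric sequences."""
    mu, mv = sum(u) / len(u), sum(v) / len(v)
    cov = sum((a - mu) * (b - mv) for a, b in zip(u, v))
    su = sum((a - mu) ** 2 for a in u) ** 0.5
    sv = sum((b - mv) ** 2 for b in v) ** 0.5
    return cov / (su * sv)


def item_statistics(responses):
    """responses: rows = examinees, columns = items scored 0/1.

    Returns (difficulty, corrected item-total discrimination) for each item.
    """
    stats = []
    for j in range(len(responses[0])):
        item = [row[j] for row in responses]
        rest = [sum(row) - row[j] for row in responses]   # total score without item j
        difficulty = sum(item) / len(item)                 # proportion answering correctly
        stats.append((difficulty, pearson(item, rest)))
    return stats


# Hypothetical responses: 6 examinees x 4 items
responses = [
    [1, 1, 1, 1],
    [1, 1, 1, 0],
    [1, 1, 0, 0],
    [1, 0, 1, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
]
stats = item_statistics(responses)
for j, (p, r) in enumerate(stats, start=1):
    print(f"item {j}: difficulty p = {p:.2f}, discrimination r = {r:.2f}")
difficulties = [p for p, _ in stats]
print(f"difficulty range: {min(difficulties):.2f} to {max(difficulties):.2f}")
```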

Emerging Methodologies: LLMs in Psychometric Assessment

Recent advances introduce innovative approaches to psychometric validation, including the use of Large Language Models (LLMs) for automated assessment. A 2025 study developed LLM rating scales for automatically transcribed psychological therapy sessions, creating the LLEAP (Large Language Model Engagement Assessment in Psychological Therapies) tool [19]. The researchers employed a structured, multi-stage process involving automatic transcription, item generation, and psychometric selection pipelines [19]. The resulting scale demonstrated strong psychometric properties, including high reliability (ω = 0.953) and significant correlations with engagement determinants (e.g., motivation, r = .413), processes (e.g., between-session efforts, r = .390), and outcomes (e.g., symptoms, r = -.304) [19]. This methodology showcases the potential of computational approaches to expand psychometric assessment capabilities while maintaining rigorous validation standards.

Table 3: Research Reagent Solutions for Psychometric Validation

| Tool/Resource | Function | Application Example |
|---|---|---|
| Statistical Software (R, SPSS, Mplus) | Data analysis for factor analysis, reliability testing, and model fitting | Conducting confirmatory factor analysis to establish construct validity [15] |
| COSMIN Guidelines | Systematic methodology for assessing measurement properties of health-related outcome measures | Evaluating psychometric properties of MCI screening tools [17] |
| Item Response Theory (IRT) Models | Advanced psychometric approach relating item characteristics to latent traits | Analyzing test results using the Rasch model to examine item difficulty and person ability [14] |
| Integrated Practice Management Systems | Digital platforms with built-in assessments and score tracking | Administering and interpreting psychometric tests within clinical workflow [13] |
| Validated Measure Databases (APA, NIH) | Repositories of established measurement tools with documented psychometric properties | Identifying appropriate comparator instruments for criterion validity studies [13] |

The validation of cognitive terminology measurement scales demands meticulous attention to psychometric properties throughout the development process. From initial construct definition through instrument refinement and norming, each validation phase contributes to the overall scientific integrity of the resulting tool. As cognitive assessment continues to play a crucial role in both basic research and applied drug development, adherence to robust psychometric principles remains fundamental to generating valid, reliable, and clinically meaningful data.

Future directions in psychometric validation will likely incorporate more sophisticated computational approaches, as demonstrated by LLM applications [19], while maintaining focus on the fundamental properties of validity, reliability, and appropriate normative standards. By implementing the comprehensive validation blueprint outlined in this guide, researchers can ensure their cognitive measurement instruments meet the rigorous standards required for advancing scientific understanding and therapeutic development.

Clinical research stands at a pivotal crossroads, facing a critical paradox: as scientific complexity accelerates, the foundational instruments measuring cognitive terminology and patient outcomes remain fragmented. This guide objectively examines the pressing need for harmonized instruments in clinical research, comparing the current disparate landscape with emerging standardized approaches. Through analysis of experimental data and validation methodologies, we demonstrate how harmonization failures impede research efficiency, data comparability, and ultimately drug development timelines. The evidence reveals that standardized instruments, when properly validated and implemented, significantly outperform ad-hoc measures in reliability, cross-study utility, and regulatory compliance. For researchers, scientists, and drug development professionals, this analysis provides a strategic framework for selecting and implementing harmonized assessment tools that transform clinical research infrastructure from a bottleneck into a catalyst for discovery.

The Clinical Research Instrumentation Crisis: A Landscape of Disparity

The clinical research ecosystem operates with profound instrumentation disparities that compromise data integrity and research efficiency. Recent surveys of clinical research professionals reveal that nearly half of site staff describe their working relationships as "complicated," while only 31% characterize site-CRO interactions as collaborative [20]. This collaboration deficit directly impacts measurement harmonization, as fragmented relationships perpetuate idiosyncratic assessment approaches.

The operational burden of this disparity is quantifiable and severe. Research coordinators waste up to 12 hours weekly on redundant data entry across an average of 22 different systems per trial [20]. Approximately 60% of site staff regularly copy data between systems, multiplying error risks and compromising data integrity. This instrumentation chaos creates tangible business impacts: protocol deviations stemming from poor communication and insufficient training remain the top cause of FDA Warning Letters [20].

The technology meant to streamline trials often exacerbates these problems. Because site systems, sponsor systems, and trial vendor technologies each carry their own authentication requirements, sites are forced to juggle numerous platforms, creating what one industry leader describes as "more complex, less connected" research environments [20]. Only 29% of sites report adequate training on new technologies and procedures, creating a competence gap that further undermines measurement reliability [20].

Comparative Analysis: Harmonized vs. Traditional Assessment Approaches

Performance Metrics Comparison

Table 1: Quantitative Comparison of Assessment Instrument Approaches

| Performance Metric | Traditional Ad-hoc Instruments | Harmonized Standardized Instruments | Experimental Evidence |
|---|---|---|---|
| Data Collection Efficiency | 12 hours/week redundant entry [20] | Estimated 40-60% reduction via CDASH implementation [21] | Time-motion studies across 200+ research sites |
| Error Rates | 60% regularly copy data between systems [20] | Structured protocols reduce deviations by 30% [22] | FDA Warning Letter analysis [20] |
| System Interoperability | Sites juggle 22+ systems per trial [20] | CDISC standards enable cross-system data exchange [23] | REDCap CDASH implementation metrics [21] |
| Training Adequacy | Only 29% of sites adequately trained [20] | Standardized instruments reduce training burden by 50% [21] | Site staff competency assessments |
| Reliability (Psychometric) | Variable, often unreported | Cronbach's α >0.8 achieved through validation [24] [4] | Pilot testing with 518 participants [4] |

Validation Rigor Comparison

Table 2: Methodological Comparison of Validation Approaches

| Validation Component | Traditional Scale Development | Best Practice Harmonized Approach | Key Differentiators |
|---|---|---|---|
| Item Generation | Often ad-hoc or literature-based only | Combines deductive (literature) and inductive (qualitative) methods [24] | Comprehensive construct coverage |
| Content Validation | Limited expert review | Diverse expert panels + target population evaluation [25] | Enhanced relevance and clarity |
| Psychometric Testing | Basic reliability measures | Multi-phase testing: dimensionality, reliability, validity [24] | Robust evidence of measurement quality |
| Stakeholder Engagement | Limited researcher input | Broad engagement: registry holders, EHR developers, clinicians [26] | Real-world implementation focus |
| Cross-system Compatibility | Minimal standardization | Mapping to standardized terminologies (CDISC, HL7) [26] [23] | Semantic interoperability |

Experimental Protocols for Instrument Validation

Protocol 1: Psychometric Validation Framework

The gold standard for validating harmonized instruments employs a structured, multi-phase methodology encompassing both classical and modern psychometric techniques [25]. The framework comprises three core phases spanning nine distinct steps, with iterative refinement throughout the process [24]:

Phase 1: Item Development

- Step 1: Domain Identification and Item Generation: Clearly articulate the construct domain through comprehensive literature review and theoretical grounding. Combine deductive (theory-driven) and inductive (qualitative data from target population) methods to generate initial item pools [24]. Best practices recommend generating an initial item pool at least twice as large as the desired final scale [24].

- Step 2: Content Validity Assessment: Convene diverse expert panels including content experts, methodologists, and target population representatives to evaluate item relevance, clarity, and comprehensiveness [4] [25]. Document content validity indices quantitatively.
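Content validity indices can be computed in a few lines. The sketch below assumes a widely used convention that the source does not specify: experts rate each item on a 4-point relevance scale, ratings of 3 or 4 count as "relevant", the item-level index (I-CVI) is the proportion of experts giving such a rating, and the scale-level index (S-CVI/Ave) is the mean of the I-CVIs. The ratings matrix, the function names, and the 0.78 retention cutoff are illustrative assumptions, not values from the cited studies.

```python
import numpy as np

# Hypothetical ratings: rows = items, columns = experts, on a 4-point relevance
# scale (1 = not relevant ... 4 = highly relevant). Illustrative data only.
ratings = np.array([
    [4, 4, 3, 4, 4, 3],
    [3, 4, 4, 2, 4, 4],
    [2, 1, 3, 2, 2, 3],
    [4, 3, 4, 4, 3, 4],
])

I_CVI_CUTOFF = 0.78  # assumed cutoff; conventions vary with panel size


def item_cvi(ratings: np.ndarray) -> np.ndarray:
    """I-CVI: proportion of experts rating each item 3 or 4."""
    return (ratings >= 3).mean(axis=1)


def scale_cvi_ave(ratings: np.ndarray) -> float:
    """S-CVI/Ave: average of the item-level CVIs."""
    return float(item_cvi(ratings).mean())


for idx, value in enumerate(item_cvi(ratings), start=1):
    decision = "retain" if value >= I_CVI_CUTOFF else "revise or drop"
    print(f"item {idx}: I-CVI = {value:.2f} -> {decision}")
print(f"S-CVI/Ave = {scale_cvi_ave(ratings):.2f}")
```

Reporting both levels matters because a respectable scale-level average can hide individual items, such as item 3 here, that most experts judged irrelevant.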

Phase 2: Scale Construction

- Step 3: Pre-testing: Conduct cognitive interviews with target respondents to identify interpretation difficulties, terminology issues, and response pattern anomalies [24].

- Step 4: Survey Administration: Deploy the preliminary instrument to a sufficiently large sample (N>300 recommended) representing the target population diversity [4].

- Step 5: Item Reduction: Employ both statistical criteria (item-total correlations, factor loadings) and substantive judgment to eliminate problematic items while maintaining content coverage [24].

- Step 6: Latent Factor Extraction: Use exploratory factor analysis with parallel analysis to determine the underlying factor structure without premature constraints [25].
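Steps 5 and 6 can be sketched with NumPy. The example below computes corrected item-total correlations (each item against the sum of the remaining items) and runs Horn's parallel analysis, which retains factors whose observed eigenvalue exceeds the 95th percentile of eigenvalues obtained from random normal data of the same size. It assumes the principal-components variant of parallel analysis and uses simulated two-factor data; factor-analytic variants work on a reduced correlation matrix and can suggest a different number of factors. The function names are hypothetical.

```python
import numpy as np


def corrected_item_total(data: np.ndarray) -> np.ndarray:
    """Correlation of each item with the sum of all *other* items."""
    total = data.sum(axis=1)
    return np.array([
        np.corrcoef(data[:, j], total - data[:, j])[0, 1]
        for j in range(data.shape[1])
    ])


def parallel_analysis(data: np.ndarray, n_iter: int = 200,
                      percentile: float = 95.0, seed: int = 0) -> int:
    """Horn's parallel analysis (principal-components variant)."""
    rng = np.random.default_rng(seed)
    n, p = data.shape
    # Observed eigenvalues of the item correlation matrix, largest first
    observed = np.sort(np.linalg.eigvalsh(np.corrcoef(data, rowvar=False)))[::-1]
    random_eigs = np.empty((n_iter, p))
    for i in range(n_iter):
        sim = rng.standard_normal((n, p))
        random_eigs[i] = np.sort(np.linalg.eigvalsh(np.corrcoef(sim, rowvar=False)))[::-1]
    threshold = np.percentile(random_eigs, percentile, axis=0)
    # Count leading eigenvalues that beat their random-data threshold
    n_factors = 0
    for obs, thr in zip(observed, threshold):
        if obs <= thr:
            break
        n_factors += 1
    return n_factors


# Simulated responses: 300 respondents, 8 items, two orthogonal factors
rng = np.random.default_rng(42)
n = 300
factors = rng.standard_normal((n, 2))
pattern = np.zeros((8, 2))
pattern[:4, 0] = 0.7
pattern[4:, 1] = 0.7
items = factors @ pattern.T + rng.standard_normal((n, 8)) * np.sqrt(1 - 0.49)

print("corrected item-total r:", np.round(corrected_item_total(items), 2))
print("factors suggested:", parallel_analysis(items))
```

On these simulated data the procedure should recover the two generating factors. With real responses, the suggested count is a starting point for the EFA in Step 6, to be weighed alongside interpretability and content coverage.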

Phase 3: Scale Evaluation

- Step 7: Dimensionality Testing: Confirm the factor structure through confirmatory factor analysis with goodness-of-fit indices (CFI >0.90, TLI >0.90, RMSEA <0.08) [25].

- Step 8: Reliability Assessment: Evaluate internal consistency (Cronbach's α >0.70), test-retest reliability (ICC >0.70), and alternative forms reliability where appropriate [24].

- Step 9: Validity Testing: Establish evidence for construct validity (convergent, discriminant), criterion validity (concurrent, predictive), and known-groups validity [25].

Protocol 2: Data Harmonization Experimental Methodology

The AHRQ Outcomes Measures Framework (OMF) provides a validated experimental protocol for harmonizing existing instruments across clinical domains [26]. This methodology demonstrates how to achieve semantic interoperability between disparate measurement systems:

Experimental Setting: Convene clinical topic-specific working groups with broad stakeholder representation including registry holders, EHR developers, policymakers, and clinicians [26].

Intervention Protocol:

- Definition Inventory: Document and compare clinical outcome definitions currently in use across multiple registries and research networks.

- Consensus Development: Facilitate structured discussions to identify common elements and reconcile definitional differences through modified Delphi techniques.

- Terminology Mapping: Map narrative definitions to standardized terminologies (SNOMED CT, LOINC) to produce implementable data element libraries [26] [23].

- Pilot Implementation: Test harmonized definitions in real-world settings through bidirectional data exchange between registries and clinical sites [26].

Outcome Measures:

- Primary Endpoint: Degree of definition alignment measured by percentage of data elements achieving stakeholder consensus.

- Secondary Endpoints: Implementation feasibility assessed through site adoption rates; data quality measured by error rate reduction; interoperability quantified through successful data exchanges.

Experimental Controls: Compare pre- and post-harmonization outcomes using historical controls from the same clinical domains, measuring protocol deviation rates, data completeness, and cross-study comparability metrics.
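The primary endpoint and the pre/post comparisons in this protocol reduce to simple proportions. The sketch below works under stated assumptions: each candidate data element receives endorse/reject votes from the working group, an element counts as reaching consensus when at least 70% of votes endorse it (a cutoff often used in Delphi studies, though each study sets its own), and error or deviation reduction is expressed relative to the pre-harmonization rate. Element names, vote counts, and rates are hypothetical.

```python
from dataclasses import dataclass

CONSENSUS_THRESHOLD = 0.70  # assumed cutoff; each Delphi study sets its own


@dataclass
class DataElement:
    name: str
    votes: list[bool]  # True = stakeholder endorses the harmonized definition

    def agreement(self) -> float:
        return sum(self.votes) / len(self.votes)

    def has_consensus(self) -> bool:
        return self.agreement() >= CONSENSUS_THRESHOLD


def percent_consensus(elements: list[DataElement]) -> float:
    """Primary endpoint: percentage of data elements reaching consensus."""
    return 100.0 * sum(e.has_consensus() for e in elements) / len(elements)


def relative_reduction(before_rate: float, after_rate: float) -> float:
    """Pre/post comparison: relative reduction in an error or deviation rate."""
    return (before_rate - after_rate) / before_rate


# Hypothetical element names and votes from a 10-member working group
elements = [
    DataElement("outcome_definition_a", [True] * 9 + [False] * 1),
    DataElement("outcome_definition_b", [True] * 6 + [False] * 4),
    DataElement("outcome_definition_c", [True] * 8 + [False] * 2),
    DataElement("outcome_definition_d", [True] * 5 + [False] * 5),
]
print(f"{percent_consensus(elements):.0f}% of data elements reached consensus")
# Hypothetical historical-control and post-harmonization error rates
print(f"error-rate reduction: {relative_reduction(0.12, 0.09):.0%}")
```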

Standards Harmonization: Implementation Pathways

Interoperability Framework

Successful harmonization requires navigating complex standards ecosystems. The Biomedical Research Integrated Domain Group (BRIDG) model represents a comprehensive approach to semantic interoperability, harmonizing major standards including CDISC for research and HL7 for healthcare [23]. This collaborative initiative provides terminology and language standards that literally "bridge" medical records and medical research through a shared protocol model.

Regulatory Compliance Integration

The 2025 regulatory landscape mandates modernization through three key developments that directly impact instrument harmonization [22]:

- ICH E6(R3) Finalization: Emphasizes proportionate, risk-based quality management and data integrity across all modalities.

- EU Clinical Trials Regulation: Requires all EU trials to operate under the centralized CTIS portal with increased transparency.

- FDA Guidance on DCT, AI, and Digital Health: Formally defines decentralized trial elements and provides frameworks for AI model validation.

These regulatory shifts transform harmonization from an optional enhancement to a compliance necessity. The ICH M11 structured protocol template—a harmonized, machine-readable format—exemplifies this transition, enabling streamlined protocol authoring, budgeting, and data integration when properly implemented [22].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Instrument Harmonization

| Tool Category | Specific Solutions | Primary Function | Implementation Considerations |
|---|---|---|---|
| Data Collection Platforms | REDCap with CDASH libraries [21] | Electronic data capture with built-in standards | 34 CDASH Foundational eCRFs available for import |
| Terminology Standards | SNOMED CT, LOINC [23] | Semantic interoperability for clinical concepts | Requires mapping from local terminologies |
| Outcome Measure Repositories | AHRQ Outcome Measures Framework [26] | Standardized outcome libraries across clinical domains | Covers atrial fibrillation, asthma, depression, cancer |
| Statistical Analysis Tools | R packages: psych, lavaan, ggplot2 [27] | Psychometric analysis and validation | Open-source with extensive validation scripting |
| Standards Harmonization Models | BRIDG Model [23] | Semantic bridging between research and care | Steep learning curve but comprehensive coverage |
| Regulatory Guidance | FDA CDISC requirements [22] | Compliance with submission standards | SDTM v2.0 and SDTMIG v3.4 updates pending |

The evidence for instrument harmonization in clinical research is compelling and multidimensional. From operational metrics demonstrating 12 hours weekly wasted on redundant data entry [20] to psychometric evidence showing enhanced reliability through structured validation [24] [25], the case for standardization is overwhelming. The AHRQ Outcomes Measures Framework demonstrates that harmonization is feasible across diverse clinical domains, having successfully developed standardized libraries for atrial fibrillation, asthma, depression, non-small cell lung cancer, and lumbar spondylolisthesis [26].

For researchers, scientists, and drug development professionals, the path forward requires strategic adoption of harmonized instruments through several key actions: First, prioritize instruments with demonstrated psychometric properties and standards alignment rather than ad-hoc measures. Second, implement the CDISC CDASH standards through accessible platforms like REDCap that reduce adoption barriers [21]. Third, engage early with regulatory modernization initiatives like ICH M11 structured protocols that transform compliance from a burden to a competitive advantage [22].

The harmonization gap in clinical research represents both a critical challenge and unprecedented opportunity. By closing this gap through rigorous instrument validation, strategic standards implementation, and cross-stakeholder collaboration, the research community can accelerate the development of life-changing therapies while enhancing scientific rigor. In an industry defined by complexity, those who simplify and standardize their measurement approaches will lead the next decade of medical innovation.

From Theory to Practice: Methodological Strategies for Robust Scale Validation

In the rigorous world of cognitive terminology measurement and drug development, establishing the validity of assessment tools is paramount for scientific progress and patient outcomes. Factorial validity represents a fundamental component of construct validation, providing empirical evidence that a measurement scale's internal structure aligns with its theoretical foundations. Within this framework, Confirmatory Factor Analysis (CFA) has emerged as a powerful statistical methodology for testing hypothesized measurement structures against empirical data [28]. Unlike exploratory approaches, CFA allows researchers to specify, a priori, the proposed relationships between observed variables and their underlying theoretical constructs, delivering robust evidence about whether an instrument genuinely measures what it purports to measure [29].

This guide objectively compares CFA against alternative methodological approaches for establishing factorial validity, examining their respective applications, performance characteristics, and suitability for validating cognitive measurement scales in pharmaceutical and clinical research settings. We present experimental data and protocols to inform methodological selection, recognizing that appropriate analytical choices strengthen scale validation and consequently enhance the reliability of cognitive assessment in clinical trials and therapeutic development.

Comparative Methodologies for Establishing Factorial Validity

Analytical Approaches: Confirmatory Factor Analysis, Exploratory Factor Analysis, and Rasch Analysis

Researchers employ several multivariate techniques to investigate the latent structure of assessment instruments. The table below provides a systematic comparison of the three primary methodologies used to establish factorial validity.

Table 1: Comparison of Methodologies for Establishing Factorial Validity

| Feature | Confirmatory Factor Analysis (CFA) | Exploratory Factor Analysis (EFA) | Rasch Analysis |
|---|---|---|---|
| Primary Objective | Test a pre-specified factor structure hypothesis [29] | Discover the underlying factor structure without strong prior hypotheses [28] | Evaluate item fit and person ability against a unidimensional measurement model [5] |
| Theoretical Foundation | Strong theoretical or empirical basis required before analysis | Minimal theoretical constraints; data-driven structure identification | Based on item response theory with specific mathematical models |
| Model Specification | Requires explicit specification of factor-item relationships and covariance structure [29] | No pre-specified factor-item relationships; all items can load on all factors | Assumes a probabilistic relationship between person ability and item difficulty |
| Key Outputs | Model fit indices (e.g., CFI, RMSEA, SRMR), factor loadings, modification indices [29] | Factor loadings, eigenvalues, variance explained, scree plot | Item fit statistics (infit/outfit), person reliability, item difficulty hierarchy [5] |
| Best Application Context | Late-stage scale validation and refinement where theoretical structure exists [30] | Early scale development to explore potential dimensionalities | Developing unidimensional scales with hierarchical properties, especially in cognitive assessment [5] |
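The probabilistic relationship that Rasch analysis assumes can be written as P(correct) = 1 / (1 + exp(-(θ - b))), where θ is person ability and b is item difficulty. The sketch below evaluates that model together with the infit and outfit mean-square statistics listed in the table, using the standard residual-based definitions. It assumes θ and b are already known (here the simulation's true values stand in for estimates; estimation itself is left to dedicated Rasch software), and the simulated data follow the model, so both statistics should sit near their expected value of 1.0. All values are illustrative.

```python
import numpy as np


def rasch_prob(theta: np.ndarray, b: np.ndarray) -> np.ndarray:
    """P(correct response) for every person (rows) and item (columns)."""
    return 1.0 / (1.0 + np.exp(-(theta[:, None] - b[None, :])))


def item_fit(responses: np.ndarray, theta: np.ndarray, b: np.ndarray):
    """Outfit and infit mean squares per item, given ability and difficulty values."""
    p = rasch_prob(theta, b)
    variance = p * (1.0 - p)                              # binomial variance of each response
    sq_resid = (responses - p) ** 2
    outfit = (sq_resid / variance).mean(axis=0)           # unweighted mean of squared standardized residuals
    infit = sq_resid.sum(axis=0) / variance.sum(axis=0)   # information-weighted mean square
    return outfit, infit


# Simulated dichotomous responses that follow the Rasch model
rng = np.random.default_rng(7)
theta = rng.normal(0.0, 1.0, size=500)
b = np.linspace(-1.5, 1.5, 6)
responses = (rng.random((theta.size, b.size)) < rasch_prob(theta, b)).astype(float)

outfit, infit = item_fit(responses, theta, b)
for j, (o, i) in enumerate(zip(outfit, infit), start=1):
    print(f"item {j}: difficulty={b[j - 1]:+.1f} outfit={o:.2f} infit={i:.2f}")
```

Outfit is sensitive to unexpected responses from persons far from an item's difficulty, whereas infit weights residuals by their information, which is why the two are usually reported side by side.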

Performance Comparison: Empirical Evidence from Validation Studies

Recent scale development initiatives across diverse domains provide experimental data on the performance of these methodological approaches. The following table synthesizes quantitative findings from published validation studies, highlighting how different analytical techniques contribute to establishing factorial validity.

Table 2: Experimental Data from Scale Validation Studies Employing Different Factorial Validation Methods

| Study/Instrument | Domain | Sample Size | Methodology | Key Quantitative Results | Reliability Metrics |
|---|---|---|---|---|---|
| Innovative Work Behavior Scale [30] | Organizational Psychology | 200 | CFA | Excellent model fit; All factor loadings statistically significant (p<0.05) | Composite Reliability (CR)=0.94; Average Variance Extracted (AVE)=0.85 |
| HKCAS-T Cognition Scale [5] | Child Development | 282 | Rasch Analysis | Supported unidimensionality; Item goodness-of-fit within acceptable range | Internal Consistency=0.98; Test-retest Reliability=0.98 |
| Assessment of Size and Scale Cognition [4] | Science Education | 518 | Iterative Validation (EFA then CFA) | Final model demonstrated adequate fit across multiple indices | High internal consistency reported across subscales |
| Upward State Social Comparison Scales [31] | Social Media Psychology | 462 | CFA | Good model fit; Significant factor loadings (p<0.001) for all retained items | Demonstrated good reliability, convergent, and discriminant validity |
| System Understandability Scale [32] | Human-Computer Interaction | 307 (Study 2); 347 (Study 3) | EFA (Study 2) then CFA (Study 3) | EFA: 4 factors extracted; CFA: Confirmed structure with good fit | Scale showed significant correlation with trust, usage intention, and satisfaction |
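Composite reliability (CR) and average variance extracted (AVE), reported for the Innovative Work Behavior Scale above, are typically computed from standardized CFA loadings λ as CR = (Σλ)² / ((Σλ)² + Σ(1 - λ²)) and AVE = Σλ² / k for k items. The sketch below implements those formulas, assuming standardized loadings and uncorrelated measurement errors; under those assumptions CR for a single factor coincides with McDonald's omega. The loadings are hypothetical, and the 0.70 and 0.50 benchmarks are conventional rules of thumb rather than values taken from the cited studies.

```python
import numpy as np


def composite_reliability(loadings: np.ndarray) -> float:
    """CR = (sum of loadings)^2 / ((sum of loadings)^2 + sum of error variances)."""
    lam = np.asarray(loadings, dtype=float)
    error_var = 1.0 - lam ** 2  # standardized loadings, uncorrelated errors assumed
    return float(lam.sum() ** 2 / (lam.sum() ** 2 + error_var.sum()))


def average_variance_extracted(loadings: np.ndarray) -> float:
    """AVE = mean of the squared standardized loadings."""
    lam = np.asarray(loadings, dtype=float)
    return float((lam ** 2).mean())


# Hypothetical standardized loadings for one factor
loadings = np.array([0.70, 0.75, 0.80, 0.85])
cr = composite_reliability(loadings)
ave = average_variance_extracted(loadings)
print(f"CR = {cr:.3f} (conventional benchmark >= 0.70)")
print(f"AVE = {ave:.3f} (conventional benchmark >= 0.50)")
```

For discriminant validity, a common companion check (the Fornell-Larcker criterion) compares the square root of each factor's AVE with that factor's correlations with the other factors.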

Experimental Protocols for Confirmatory Factor Analysis

Standardized CFA Workflow for Scale Validation

The diagram below illustrates the systematic protocol for implementing CFA in scale validation research, from preliminary preparation through final model interpretation.

Protocol Implementation and Key Considerations

Step 1: Theoretical Model Specification – Based on strong theoretical foundations or prior exploratory research, researchers explicitly define the hypothesized factor structure, specifying which items load on which latent constructs and whether factors are correlated or orthogonal [28]. This stage requires precise specification of the measurement model before empirical testing.

Step 2: Data Collection and Preparation – Adequate sample size is critical for CFA stability; a minimum of 200 participants is generally recommended, with larger samples needed for complex models [30] [5]. Data should be screened for multivariate normality, outliers, and missing values, as violations can distort parameter estimates and fit statistics.

Step 3: Model Identification – The researcher must ensure the model is statistically identified, meaning there is enough information to estimate all parameters. A common rule requires at least three indicators per factor, though more complex models may need additional constraints.

Step 4: Parameter Estimation – Maximum likelihood estimation is most common, but robust variants should be used with non-normal data. Estimation yields factor loadings, factor correlations, and error variances, which indicate how well each item measures its intended construct [30].

Step 5: Model Fit Assessment – Multiple fit indices should be examined: CFI (Comparative Fit Index) > 0.90 (preferably > 0.95), RMSEA (Root Mean Square Error of Approximation) < 0.08 (preferably < 0.06), and SRMR (Standardized Root Mean Square Residual) < 0.08 [29]. No single index should determine model adequacy.

Step 6: Model Modification – If fit is inadequate, modification indices may suggest theoretically justifiable improvements (e.g., allowing correlated errors between similar items). However, modifications must be theoretically defensible to avoid capitalizing on chance characteristics of the sample [29].

Step 7: Result Interpretation and Reporting – Finally, researchers interpret the validated factor structure, report all relevant parameter estimates and fit statistics, and discuss implications for measurement validity within their research context [28].
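The identification check in Step 3 and the fit indices in Step 5 can be illustrated with their standard formulas. Degrees of freedom equal the number of unique variances and covariances, p(p + 1)/2 for p items, minus the number of free parameters; RMSEA, CFI, and TLI are then functions of the model and baseline (independence) chi-square statistics. The sketch assumes a simple-structure CFA with factor variances fixed to 1, freely correlated factors, and uncorrelated errors, and it uses hypothetical chi-square values; in practice these come from CFA software such as lavaan or Mplus. SRMR is omitted because it requires the residual correlation matrix, and some programs use N rather than N - 1 in the RMSEA denominator, so small discrepancies are expected.

```python
import math


def cfa_df(n_items: int, n_factors: int) -> int:
    """Model degrees of freedom for a simple-structure CFA (see assumptions above)."""
    moments = n_items * (n_items + 1) // 2
    # loadings + error variances + factor correlations
    free = n_items + n_items + n_factors * (n_factors - 1) // 2
    return moments - free


def rmsea(chi2: float, df: int, n: int) -> float:
    return math.sqrt(max(chi2 - df, 0.0) / (df * (n - 1)))


def cfi(chi2_m: float, df_m: int, chi2_b: float, df_b: int) -> float:
    num = max(chi2_m - df_m, 0.0)
    den = max(chi2_m - df_m, chi2_b - df_b, 0.0)
    return 1.0 if den == 0 else 1.0 - num / den


def tli(chi2_m: float, df_m: int, chi2_b: float, df_b: int) -> float:
    return ((chi2_b / df_b) - (chi2_m / df_m)) / ((chi2_b / df_b) - 1.0)


# A one-factor model with three indicators is just-identified (df = 0).
print("df, 1 factor x 3 items:", cfa_df(3, 1))

N = 300
p, k = 8, 2
df_m = cfa_df(p, k)             # 19
df_b = p * (p - 1) // 2         # independence model frees only item variances: 28
chi2_m, chi2_b = 48.2, 1200.0   # hypothetical chi-square values

fit = {
    "CFI": cfi(chi2_m, df_m, chi2_b, df_b),
    "TLI": tli(chi2_m, df_m, chi2_b, df_b),
    "RMSEA": rmsea(chi2_m, df_m, N),
}
print({name: round(value, 3) for name, value in fit.items()})
print("meets Step 5 cutoffs:",
      fit["CFI"] > 0.90 and fit["TLI"] > 0.90 and fit["RMSEA"] < 0.08)
```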

The Scientist's Toolkit: Essential Reagents for Factor Analysis

Table 3: Essential Research Reagents for Factor Analysis in Scale Validation

| Reagent / Tool | Function / Purpose | Implementation Considerations |
|---|---|---|
| Specialized Statistical Software | Provides computational algorithms for factor extraction, estimation, and model fitting | Choices include R (lavaan package), Mplus, SPSS AMOS, Stata, or SAS; selection depends on model complexity and researcher expertise |
| Gold Standard Comparison Instruments | Enables assessment of criterion validity by correlating new scale scores with established measures [28] | Must be validated in similar populations and contexts; provides benchmark for concurrent validity assessment |
| Sample Size Calculator | Determines minimum participants needed for adequate statistical power in factor analysis | Generally requires 5-20 participants per estimated parameter; complex models need larger samples [5] |
| Fit Indices Suite | Quantifies how well the hypothesized model reproduces the observed covariance matrix | Should include absolute (RMSEA, SRMR), comparative (CFI, TLI), and parsimony-adjusted indices for comprehensive assessment [29] |
| Modification Indices | Identifies specific model parameters that would improve fit if freed or added | Should be used cautiously with strong theoretical justification to avoid overfitting sample-specific variance [29] |

The establishment of factorial validity through Confirmatory Factor Analysis represents a rigorous approach to scale validation that is particularly well-suited to advanced stages of instrument development where theoretical foundations are strong. CFA provides powerful hypothesis-testing capabilities that exceed those available through exploratory methods, delivering robust evidence of a scale's internal structural validity [29].

However, the comparative analysis presented in this guide demonstrates that CFA, EFA, and Rasch analysis each occupy distinct methodological niches within the validation ecosystem. EFA remains invaluable during preliminary scale development when underlying structures are poorly understood, while Rasch analysis offers particular advantages for creating probabilistic measurement models with hierarchical properties [5].

For researchers validating cognitive terminology measurement scales in pharmaceutical and clinical contexts, where measurement precision directly impacts therapeutic decisions and regulatory evaluations, a sequential approach that strategically employs each method throughout the validation lifecycle often yields the most psychometrically sound and clinically useful assessment instruments.

In the validation of cognitive terminology measurement scales, reliability refers to the consistency and reproducibility of the scores obtained from an instrument. For researchers, clinicians, and drug development professionals, understanding and quantifying reliability is paramount, as it ensures that measurements are stable, precise, and free from random error, thereby providing a trustworthy foundation for scientific conclusions and clinical decisions. This guide objectively compares key reliability estimation methods—focusing on internal consistency and test-retest reliability—by presenting experimental data and protocols from contemporary validation studies. The framework for this comparison is grounded in established standards for educational and psychological testing, which emphasize that reliability is a property of test scores rather than the test itself and must be validated with each use in specific populations [25].

Reliability forms the bedrock of validity; a measure cannot validly assess a construct if it does not first do so consistently. In the context of cognitive measurement, this is particularly crucial when scales are used to track symptom progression in clinical trials or to evaluate the efficacy of pharmacological interventions. The methodologies examined here are applied across psychiatric, psychological, and behavioral sciences to ensure that scales measuring latent constructs such as arousal, cognitive functioning, or everyday hearing performance yield dependable quantitative data [25] [33].

Core Reliability Estimation Methods: Theory and Protocols

Internal Consistency Reliability

Internal consistency reliability assesses the extent to which items on a scale measure the same underlying construct. It is typically quantified using Cronbach's alpha (α), whose values usually fall between 0 and 1; a negative alpha can occur when items covary negatively and usually signals a scoring problem, such as a reverse-keyed item that was not recoded. Higher values indicate that the items are highly correlated and consistently measure the same latent variable. Best practices suggest that α ≥ 0.70 is acceptable for research purposes, though values above 0.80 are preferable for clinical applications [25] [34].

The protocol for establishing internal consistency involves administering the scale to a large, representative sample on a single occasion. Researchers then calculate inter-item correlations and the overall Cronbach's alpha coefficient. Modern approaches also use factor analysis (both exploratory and confirmatory) to examine the dimensional structure and ensure that the calculated internal consistency is not artificially inflated by redundant items or multidimensional structures [24] [25].
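Cronbach's alpha follows directly from item and total-score variances: α = k/(k - 1) × (1 - sum of item variances / variance of total scores) for k items. The sketch below implements that formula together with "alpha if item deleted", a common screen for items that lower consistency. The response matrix is hypothetical, and the function names are illustrative rather than taken from any particular package.

```python
import numpy as np


def cronbach_alpha(items: np.ndarray) -> float:
    """items: respondents x items matrix of scored responses."""
    k = items.shape[1]
    item_var = items.var(axis=0, ddof=1).sum()
    total_var = items.sum(axis=1).var(ddof=1)
    return (k / (k - 1)) * (1.0 - item_var / total_var)


def alpha_if_deleted(items: np.ndarray) -> np.ndarray:
    """Alpha recomputed with each item removed in turn."""
    return np.array([cronbach_alpha(np.delete(items, j, axis=1))
                     for j in range(items.shape[1])])


# Hypothetical 5-point responses: 8 respondents x 4 items.
# Item 4 behaves like a reverse-keyed item that was not recoded.
responses = np.array([
    [4, 5, 4, 2],
    [3, 3, 3, 4],
    [5, 5, 4, 1],
    [2, 2, 3, 3],
    [4, 4, 5, 2],
    [1, 2, 1, 4],
    [3, 4, 3, 3],
    [5, 4, 5, 2],
])
print(f"alpha = {cronbach_alpha(responses):.2f}")
print("alpha if item deleted:", np.round(alpha_if_deleted(responses), 2))
```

Here alpha rises sharply when item 4 is dropped, which points to a coding or content problem with that item rather than to a weakness of the scale as a whole.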

Test-Retest Reliability

Test-retest reliability evaluates the stability of a measurement instrument over time. It is estimated by administering the same scale to the same participants on two separate occasions and quantifying the agreement between the two sets of scores. A high coefficient (typically r ≥ 0.70 for group-level comparisons) indicates that the scale produces stable results and is not overly susceptible to random fluctuations [33] [34].

The critical methodological consideration for test-retest reliability is the inter-test interval. This period must be short enough that the underlying construct is unlikely to have changed, yet long enough to prevent recall bias. The specific interval varies by construct; for stable traits, intervals of several weeks are common. The statistical analysis typically uses an intraclass correlation coefficient (ICC) for continuous data or Cohen's kappa for categorical data. Both are more robust measures of agreement than a simple Pearson correlation: an absolute-agreement ICC penalizes systematic shifts between occasions, such as practice effects, that leave Pearson's r unchanged, and kappa corrects for agreement expected by chance [33].
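The difference between an ICC and a simple Pearson correlation can be shown on a small example. The sketch below computes two Shrout and Fleiss single-measure forms from a two-way ANOVA decomposition: ICC(2,1), which treats occasions as random and measures absolute agreement, and ICC(3,1), which measures consistency only. The retest scores are hypothetical: baseline scores plus a uniform 3-point practice effect, so Pearson's r and ICC(3,1) come out at 1 while ICC(2,1) drops to roughly 0.87 because of the systematic shift. The function name is illustrative.

```python
import numpy as np


def icc_single(scores: np.ndarray) -> tuple[float, float]:
    """Return (ICC(2,1), ICC(3,1)) for an n_subjects x k_occasions matrix."""
    n, k = scores.shape
    grand = scores.mean()
    ss_rows = k * ((scores.mean(axis=1) - grand) ** 2).sum()  # between subjects
    ss_cols = n * ((scores.mean(axis=0) - grand) ** 2).sum()  # between occasions
    ss_total = ((scores - grand) ** 2).sum()
    ss_err = ss_total - ss_rows - ss_cols                     # residual
    ms_r = ss_rows / (n - 1)
    ms_c = ss_cols / (k - 1)
    ms_e = ss_err / ((n - 1) * (k - 1))
    icc2 = (ms_r - ms_e) / (ms_r + (k - 1) * ms_e + k * (ms_c - ms_e) / n)
    icc3 = (ms_r - ms_e) / (ms_r + (k - 1) * ms_e)
    return float(icc2), float(icc3)


baseline = np.array([10, 12, 15, 18, 20, 25], dtype=float)
retest = baseline + 3.0  # hypothetical uniform practice effect
scores = np.column_stack([baseline, retest])

icc2, icc3 = icc_single(scores)
r = np.corrcoef(baseline, retest)[0, 1]
print(f"Pearson r = {r:.3f}, ICC(3,1) = {icc3:.3f}, ICC(2,1) = {icc2:.3f}")
```

Because the forms answer different questions, a validation report should state which ICC model, type, and unit it used.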

Experimental Data and Comparative Analysis

The following data, synthesized from recent peer-reviewed studies, provides a quantitative comparison of reliability coefficients for different measurement scales.

Table 1: Comparative Reliability Metrics from Recent Studies

| Instrument (Study) | Construct Measured | Internal Consistency (α) | Test-Retest Reliability (r) | Sample Size & Population |
|---|---|---|---|---|
| HFEQ-SWE [33] | Hearing & Everyday Functioning | Total Score: "Satisfactory" | Total Score: Showed "stability over time" | 628 adults (30-96 years) with hearing loss |
| Pre-Sleep Arousal Scale (PSAS) [34] | Pre-Sleep Arousal (Meta-Analysis) | Total: 0.88 (0.86–0.90); Cognitive: 0.89 (0.88–0.90); Somatic: 0.80 (0.77–0.83) | Total: 0.87 (0.84–0.90); Cognitive: 0.80 (0.77–0.84); Somatic: 0.70 (0.67–0.74) | 9,354 participants across 27 studies |
| Assessment of Size and Scale Cognition (ASSC) [4] | Size and Scale Cognition | Evidence obtained via pilot testing and expert review. | -- | 518 first-year undergraduate students |

Analysis of Comparative Data

The data in Table 1 reveal several key patterns. The Pre-Sleep Arousal Scale (PSAS) demonstrates robust psychometric properties, with high internal consistency and excellent test-retest reliability for its total score and cognitive subscale across a large, multinational sample [34]. This level of consistency is critical for clinical trials where detecting changes in pre-sleep arousal is a target outcome. The HFEQ-SWE study highlights that while total scores can be highly reliable, researchers must examine reliability at the subscale and item level, as variations can occur. Their use of Confirmatory Factor Analysis (CFA) to confirm the scale's construct is a modern best practice that strengthens validity arguments [33] [25]. The development of the ASSC illustrates the comprehensive iterative process of establishing reliability, involving content experts, graphic design specialists, and the target population to refine items before quantitative pilot testing [4].

The Scale Validation Workflow

The following diagram maps the modern, iterative workflow for developing and validating a measurement scale, integrating key reliability tests within a broader psychometric evaluation.

Diagram 1: Phased Scale Development and Validation Workflow

This workflow, synthesized from best-practice guidelines [24] [25], shows that reliability testing (Phase 4) is a culmination of rigorous prior work. It is not a standalone activity but depends on careful construct definition, item development, and pilot testing.

The Researcher's Toolkit: Essential Reagents for Reliability Studies

Table 2: Key Research Reagents and Methodological Solutions

| Tool/Resource | Primary Function | Application in Reliability Studies |
|---|---|---|
| Statistical Software (R, Mplus, SPSS) | Data analysis and psychometric computation | Calculating Cronbach's α, ICC, conducting Exploratory/Confirmatory Factor Analysis (EFA/CFA). |
| Participant Recruitment Platform | Access to target population samples | Sourcing large, representative samples for survey administration and test-retest protocols. |
| Expert Review Panel | Content and face validity assessment | Ensuring items are relevant and clearly represent the target construct before reliability testing. |
| Digital Survey Administration Tools | Efficient and consistent data collection | Standardizing scale administration for large groups, often a requirement for large sample sizes. |
| Reporting Guidelines (e.g., PRISMA for meta-analysis) | Standardizing study protocol and reporting | Ensuring transparency and replicability, as seen in the PSAS meta-analysis [34]. |

Quantifying reliability through internal consistency and test-retest methods is a fundamental, non-negotiable component of validating cognitive measurement scales. The experimental data and protocols presented demonstrate that while established thresholds for reliability coefficients exist (e.g., α > 0.7), their achievement is the result of a meticulous, multi-phase development process. Modern estimation goes beyond single metrics, incorporating factor analysis and generalizability theory to provide a nuanced understanding of a scale's performance. For researchers and drug development professionals, selecting an instrument with robust, empirically demonstrated reliability is crucial for generating trustworthy data that can inform theory, clinical practice, and the development of effective interventions.
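Test-retest reliability is commonly summarized with an intraclass correlation coefficient (ICC). The sketch below computes the two-way, absolute-agreement, single-measure form (Shrout and Fleiss's ICC(2,1)) from a participants-by-sessions matrix using ANOVA mean squares. The choice of this ICC form is an assumption; consistency-type ICCs answer a different question, and the function name and example scores are hypothetical.

```python
def icc_2_1(data):
    """Absolute-agreement, single-measure ICC (Shrout & Fleiss ICC(2,1)).

    data: list of rows, one per participant, each holding k session scores
    (k = 2 for a classic test-retest design).
    """
    n, k = len(data), len(data[0])
    if n < 2 or k < 2:
        raise ValueError("need at least two participants and two sessions")
    grand = sum(map(sum, data)) / (n * k)
    row_means = [sum(row) / k for row in data]
    col_means = [sum(col) / n for col in zip(*data)]
    # Two-way ANOVA sums of squares: participants (rows), sessions (columns),
    # and the residual left after removing both.
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in data for x in row)
    ss_err = ss_total - ss_rows - ss_cols
    msr = ss_rows / (n - 1)
    msc = ss_cols / (k - 1)
    mse = ss_err / ((n - 1) * (k - 1))
    return (msr - mse) / (msr + (k - 1) * mse + k * (msc - mse) / n)


# Hypothetical total scores for 5 participants at baseline and retest.
sessions = [[22, 24], [18, 19], [27, 27], [15, 17], [20, 22]]
print(f"ICC(2,1) = {icc_2_1(sessions):.3f}")
```

Because this form measures absolute agreement, a uniform gain at retest (for example, a practice effect) lowers the coefficient: scores of [[1, 2], [2, 3], [3, 4]] yield 2/3 even though the participants' rank order is perfectly preserved.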

The rapid integration of digital health technologies has accelerated the shift from traditional in-person cognitive assessments toward remote and self-administered formats. This transition, while increasing accessibility, introduces critical questions about the psychometric equivalence of these digital adaptations. Validating cognitive terminology measurement scales for remote administration is not merely a technical exercise but a fundamental requirement for ensuring the reliability of data collected in research and clinical trials. Without rigorous validation, differences in scores obtained remotely could stem from administration method artifacts rather than true cognitive changes, potentially compromising diagnostic accuracy and treatment efficacy evaluation in pharmaceutical development.

This guide objectively compares the performance of remote and tablet-based cognitive assessments against their traditional in-person counterparts, synthesizing current experimental data to inform researchers and drug development professionals. The analysis focuses specifically on measurement validity, reliability metrics, and administration protocols across multiple cognitive domains and patient populations.

Comparative Performance Data: Remote Versus In-Person Administration

Quantitative Comparison of Assessment Modalities

Table 1: Performance Differences Between Remote and In-Person Cognitive Assessment

| Assessment Tool / Domain | Population | Remote Administration Method | Key Performance Differences | Statistical Significance |
|---|---|---|---|---|
| ECASc (ALS Cognitive Screen) | People with ALS & Controls | Videoconferencing with document camera | Remote administration associated with better total scores [35] | Significant (p-value not specified) [35] |
| ALS-CBSc (ALS Cognitive Screen) | People with ALS & Controls | Videoconferencing with adapted response format | Remote administration associated with better total scores [35] | Significant (p-value not specified) [35] |
| Mini-ACE (Cognitive Screen) | People with ALS & Controls | Videoconferencing with adapted response format | No significant difference between administration modes [35] | Not Significant [35] |
| MCCB (Trail Making A) | Severe Mental Illness | Remote administration | Remote participants performed significantly worse [36] | Significant (p-value not specified) [36] |
| MCCB (HVLT-R Verbal Learning) | Severe Mental Illness | Remote administration | Remote participants performed significantly worse [36] | Significant (p-value not specified) [36] |
| MCCB (Animal Fluency) | Severe Mental Illness | Remote administration | No significant difference between administration formats [36] | Not Significant [36] |
| MCCB (Letter-Number Span) | Bipolar Disorder | Remote administration | Remote participants performed significantly better [36] | Significant (p-value not specified) [36] |
| Smartphone Memory Tasks | Adult General Population | Self-administered smartphone app | Small, subtest-specific differences; Picture Memory higher remotely, Face Memory lower remotely [37] | Significant (p<0.05) with small effect sizes (η²≤.014) [37] |
| BrainCheck Digital Battery | Cognitively Healthy Adults (52-76 years) | Self-administered on personal devices (iPad, iPhone, laptop) | No significant difference between self- vs. RC-administered testing [38] | Not Significant; ICC 0.59-0.83 [38] |
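The smartphone row reports effect sizes as η². For a simple one-way comparison of administration modes, η² is the share of total score variance attributable to group membership, SS_between / SS_total. The sketch below assumes independent groups and no covariates; the cited study may have reported partial η² from a larger model, so values from this function are not directly comparable to those in the table. Function and variable names are hypothetical.

```python
def eta_squared(*groups):
    """Eta squared (SS_between / SS_total) for a one-way group comparison."""
    scores = [x for g in groups for x in g]
    grand_mean = sum(scores) / len(scores)
    ss_total = sum((x - grand_mean) ** 2 for x in scores)
    if ss_total == 0:
        raise ValueError("all scores are identical; eta squared is undefined")
    # Between-group sum of squares: each group's size times the squared
    # deviation of its mean from the grand mean.
    ss_between = sum(len(g) * (sum(g) / len(g) - grand_mean) ** 2 for g in groups)
    return ss_between / ss_total


# Hypothetical memory-task scores by administration mode.
in_person = [12, 14, 11, 13, 15, 12]
remote = [13, 15, 12, 14, 14, 13]
print(f"eta^2 = {eta_squared(in_person, remote):.3f}")
```

A value near 0.01, like those in the table, means that administration mode accounts for roughly 1% of score variance: statistically detectable in a large sample but small in practical terms.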

Domain-Specific Vulnerability to Administration Effects

The comparative data reveal that cognitive domains are not uniformly affected by remote administration. Tasks requiring visual-motor coordination and processing speed (e.g., Trail Making Test) can show performance decrements in remote settings, potentially due to technological latency or interface differences [36]. Conversely, verbal fluency tasks (e.g., Animal Fluency) showed no significant difference between administration modalities, likely because they depend less on visual stimulus presentation or motor response precision [36].

Memory tasks present a more complex pattern. While one study found reduced remote performance on verbal learning measures [36], another found higher remote scores on a visual memory task (Picture Memory), potentially reflecting a more comfortable testing environment or, more concerningly, greater opportunity to use external aids in unproctored settings [37]. The differential vulnerability appears linked to task characteristics: memory tasks with extended encoding periods may be more susceptible to external aids, whereas working memory tasks with immediate, speeded responses show minimal administration effects [37].

Experimental Protocols for Validation Studies

Methodological Framework for Validation

The validation of remote cognitive assessments requires carefully controlled studies that directly compare administration modalities while maintaining scientific rigor.
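One common way to quantify agreement when the same participants complete both modalities is a Bland-Altman analysis, which reports the mean remote-minus-in-person difference (bias) and the 95% limits of agreement. The source does not prescribe this method; the sketch below is a swapped-in illustration that assumes a paired, counterbalanced crossover design and approximately normal differences. The function name and example scores are hypothetical.

```python
from statistics import mean, stdev


def bland_altman(in_person, remote, z=1.96):
    """Bias and 95% limits of agreement for paired remote vs in-person scores.

    Position i in both lists must hold the same participant's score.
    Returns (bias, lower_limit, upper_limit), where bias is the mean
    remote-minus-in-person difference.
    """
    if len(in_person) != len(remote) or len(in_person) < 2:
        raise ValueError("need at least two paired observations")
    diffs = [r - p for p, r in zip(in_person, remote)]
    bias = mean(diffs)
    sd = stdev(diffs)  # sample SD of the paired differences
    return bias, bias - z * sd, bias + z * sd


# Hypothetical screening totals for 6 participants tested in both modes
# (administration order assumed counterbalanced across participants).
clinic = [88, 92, 79, 85, 90, 83]
video = [90, 93, 80, 88, 90, 86]
bias, lower, upper = bland_altman(clinic, video)
print(f"bias = {bias:.2f}, 95% LoA = [{lower:.2f}, {upper:.2f}]")
```

A non-zero bias signals a systematic mode effect, such as the higher remote totals reported for the ALS screens, while wide limits indicate that individual scores may not be interchangeable across modes even when the average difference is small.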

Table 2: Key Methodological Approaches in Validation Studies

| Study Component | Traditional In-Person Protocol | Remote Administration Protocol | Validation Considerations |
|---|---|---|---|
| Participant Recruitment | Clinic-based, community centers [5] | Online advertisements, social media, telehealth platforms [35] | Selection bias, technological access, digital literacy |
| Test Environment | Controlled clinic/lab setting [5] | Home environment via videoconferencing or self-administered apps [37] [35] | Environmental distractions, technical variability, standardized setup |
| Stimulus Presentation | Physical test materials, paper-and-pencil [5] [35] | Screen sharing, document cameras, digital interfaces [37] [35] | Visual fidelity, display size, resolution, timing precision |
| Response Collection | Direct observation, written responses [5] | Video observation, chat functions, digital inputs [35] | Response latency measurement, motor response adaptation |
| Administration Oversight | In-person examiner [5] | Remote proctoring, self-administered with tech support [38] | Standardization of assistance, troubleshooting protocols |
| Reliability Assessment | Test-retest (4-week interval), inter-rater reliability [5] | Same-device test-retest, comparison to proctored session [38] | Practice effects, device consistency, technical stability |

Validation Workflow for Remote Cognitive Batteries

The following workflow diagram illustrates the comprehensive validation process for remote cognitive assessment tools:

Research Reagent Solutions for Digital Cognitive Assessment

Table 3: Essential Materials and Platforms for Remote Cognitive Assessment Research

| Tool Category | Specific Examples | Function in Research | Key Considerations |
|---|---|---|---|
| Videoconferencing Platforms | Zoom, Skype [35] | Enable real-time remote proctoring and stimulus presentation via screen sharing or document cameras | Security compliance, video/audio quality, participant accessibility |
| Digital Cognitive Batteries | BrainCheck [38], Mobile Toolbox (MTB) [37], MyCog Mobile (MCM) [37] | Provide standardized, self-administered cognitive tests across multiple domains | Device compatibility, automated scoring, practice effect minimization |
| Document Cameras/Visualizers | Thustand USB Document Camera [35] | Present physical test materials remotely when digital rights restrictions apply | Resolution quality, positioning stability, lighting requirements |
| Electronic Health Record Systems | EHR Integrations [38] | Facilitate clinical workflow integration and data transfer for pragmatic trials | Interoperability standards, data security, workflow compatibility |
| Psychometric Analysis Tools | Rasch analysis [5], CFA/EFA [39], ICC calculations [38] | Establish measurement properties, reliability, and validity of remote assessments | Sample size requirements, model assumptions, dimensionality testing |
| Device-Agnostic Platforms | Web-based assessment platforms [38] | Ensure consistent testing experience across different devices and operating systems | Responsive design, input method standardization, performance calibration |

Critical Considerations for Research Implementation

Methodological and Practical Implications

The validation evidence suggests that remote cognitive assessments cannot be assumed equivalent to their in-person counterparts without empirical verification. Researchers must consider several critical factors when implementing digital assessments:

Population-Specific Effects: Administration mode effects may vary across clinical populations. For instance, individuals with severe mental illness showed different patterns of performance compared to healthy controls on remotely administered MCCB tests [36]. Similarly, people with ALS demonstrated different mode effects across different screening tools [35].

Technological Standardization: The device type, screen size, and input method must be standardized or statistically controlled. Research indicates that assessments can be administered across smartphones, tablets, and laptops, but device characteristics may influence performance [37] [38].

Environmental Uncontrollability: Remote administration introduces variability in testing environments that researchers cannot control. This includes potential distractions, connectivity issues, and differences in physical setup that may influence performance [37].

Ethical and Security Considerations: Data privacy, informed consent procedures, and cybersecurity measures require special attention in remote assessments, particularly when collecting sensitive cognitive data from vulnerable populations [35] [38].

The growing evidence base supporting remote cognitive assessment reflects a paradigm shift in neuropsychological measurement. While not all traditional tests directly translate to digital formats, rigorous validation methodologies are establishing a new generation of cognitive assessment tools that balance psychometric rigor with practical accessibility. For pharmaceutical researchers and clinical trialists, these validated remote tools offer opportunities to expand trial participation, increase assessment frequency, and potentially reduce site-based assessment costs, while maintaining scientific validity in cognitive outcome measurement.

In an era of globalized research, scientists increasingly administer psychological, cognitive, and behavioral assessment scales across diverse cultural and linguistic groups. Measurement invariance testing provides the methodological foundation for ensuring that these instruments measure the same underlying constructs across different populations, thereby enabling valid cross-cultural comparisons [40]. Without establishing measurement invariance, researchers cannot determine whether observed score differences reflect true disparities in the construct of interest or merely measurement artifacts stemming from cultural differences in item interpretation [40] [41]. This guide provides a comprehensive framework for testing measurement invariance, with special consideration for validating cognitive terminology measurement scales in transnational research contexts, including pharmaceutical trials and cross-cultural clinical studies.

The fundamental premise of measurement invariance is that the relationship between observed item scores and the underlying latent construct should be equivalent across groups. When this psychometric equivalence is not established, comparisons become ambiguous and potentially misleading [40]. For instance, in cognitive assessment, an item intended to measure working memory might inadvertently tap into cultural knowledge in one group but not another, fundamentally altering what is being measured. The consequences of such measurement noninvariance can derail theoretical development, clinical decisions, and the validation of cross-cultural research findings [40] [25].
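The working-memory example describes bias at the level of a single item. Within the CFA framework used in this guide, such bias shows up as noninvariant loadings or intercepts; a quick item-level screen from the differential item functioning (DIF) literature is the Mantel-Haenszel procedure, which matches respondents on total score and compares the item's odds of success across groups within each score level. The sketch below is a swapped-in illustration, not a method prescribed by the cited sources. It assumes dichotomously scored items and "ref"/"focal" group labels, and all names are hypothetical. The ETS delta transform, −2.35 ln(α_MH), is included because it is the conventional scale for classifying DIF magnitude.

```python
import math
from collections import defaultdict


def mantel_haenszel_dif(records):
    """Mantel-Haenszel common odds ratio and ETS delta (MH D-DIF) for one item.

    records: iterable of (group, matching_score, correct) tuples, where group is
    "ref" or "focal" and correct is 1 (item passed) or 0 (item failed).
    alpha_mh > 1 means the item favors the reference group after matching.
    """
    # Per score level: [ref correct, ref incorrect, focal correct, focal incorrect]
    strata = defaultdict(lambda: [0, 0, 0, 0])
    for group, score, correct in records:
        if group not in ("ref", "focal"):
            raise ValueError(f"unknown group label: {group!r}")
        offset = 0 if group == "ref" else 2
        strata[score][offset + (0 if correct else 1)] += 1
    num = den = 0.0
    for a, b, c, d in strata.values():
        n = a + b + c + d
        num += a * d / n  # ref-correct x focal-incorrect
        den += b * c / n  # ref-incorrect x focal-correct
    if num == 0 or den == 0:
        raise ValueError("common odds ratio is undefined for these data")
    alpha_mh = num / den
    return alpha_mh, -2.35 * math.log(alpha_mh)


# Hypothetical responses to one item from respondents matched at total score 5.
records = ([("ref", 5, 1)] * 3 + [("ref", 5, 0)]
           + [("focal", 5, 1)] + [("focal", 5, 0)] * 3)
alpha_mh, delta = mantel_haenszel_dif(records)
print(f"alpha_MH = {alpha_mh:.2f}, MH D-DIF = {delta:.2f}")
```

Matching on total score is what separates item bias from genuine group differences in the construct: the item is flagged only if groups with the same overall level still differ on it.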

Theoretical Foundations: Understanding Measurement Invariance

The Conceptual Framework

Measurement invariance assesses whether a construct has the same psychological meaning and measurement properties across different groups or across time [40]. In cross-cultural research, establishing invariance rules out the alternative explanation that "the construct has a different structure or meaning to different groups," which is what makes meaningful comparisons possible [40]. The process establishes that scores from different cultural groups have the same meaning and is typically carried out by verifying the equivalence of the measurement model across groups [42].
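Equivalence of the measurement model is usually tested by fitting a sequence of nested multi-group CFA models (for example, a configural model, then a metric model with loadings constrained equal) and comparing their fit. Alongside the chi-square difference test, a widely used rule of thumb (often attributed to Cheung and Rensvold, 2002) flags noninvariance when CFI drops by more than 0.01 between successive models. The sketch below computes CFI from the chi-square statistics that SEM software such as Mplus or R's lavaan reports and applies that cutoff. The fit values and function names are hypothetical, and the 0.01 threshold is a convention rather than a fixed standard.

```python
def cfi(chi2_model, df_model, chi2_base, df_base):
    """Comparative Fit Index from model and baseline chi-square statistics."""
    d_model = max(chi2_model - df_model, 0.0)
    d_base = max(chi2_base - df_base, d_model, 0.0)
    return 1.0 if d_base == 0 else 1.0 - d_model / d_base


def delta_cfi_check(less_constrained, more_constrained, baseline, cutoff=0.01):
    """Compare nested invariance models; each argument is a (chi2, df) pair.

    Returns (delta_cfi, invariance_retained). A CFI drop larger than `cutoff`
    is treated as evidence against the more constrained model.
    """
    cfi_free = cfi(*less_constrained, *baseline)
    cfi_constrained = cfi(*more_constrained, *baseline)
    delta = cfi_constrained - cfi_free
    return delta, delta >= -cutoff


# Hypothetical multi-group fit statistics: (chi-square, df).
baseline = (1200.0, 56)   # independence (null) model
configural = (120.0, 40)  # same factor structure, parameters free across groups
metric = (140.0, 46)      # factor loadings constrained equal across groups
delta, retained = delta_cfi_check(configural, metric, baseline)
print(f"delta CFI = {delta:.4f}, metric invariance retained: {retained}")
```

With these hypothetical values, CFI falls from about 0.930 to 0.918 (ΔCFI ≈ −0.012), so metric invariance would not be supported under the 0.01 rule.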

The testing of measurement invariance has evolved significantly over the past fifty years, with statistical techniques becoming more accessible to researchers around the turn of the 21st century [40]. Methodologists have since developed increasingly sophisticated approaches for testing invariance, particularly within the structural equation modeling framework [40]. Recent advancements include more flexible techniques such as alignment optimization and Bayesian estimation, which are particularly useful when analyzing data from many groups [41].

Levels of Measurement Invariance