Ensuring Reliability in Cognitive Terminology Classification: Methods and Applications in Biomedical Research

Abstract

This article provides a comprehensive framework for establishing reliability in cognitive terminology classification systems, a critical component for valid assessment in biomedical and clinical research. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of reliability testing, details methodological approaches for application, addresses common challenges and optimization strategies, and presents rigorous validation and comparative analysis techniques. By synthesizing current methodologies with practical applications, this guide supports the development of robust, reliable cognitive classifications essential for advancing research in neurodegenerative diseases, clinical trials, and cognitive neuroscience.

The Critical Role of Reliability in Cognitive Classification for Research Validity

In the rigorous field of cognitive terminology classification research, the pursuit of reliable measurement is foundational to producing valid and reproducible findings. Reliability refers to the consistency of a measurement method—whether a test, survey, or observational rating—in producing stable results across different occasions, raters, or instrument items [1] [2]. For researchers and drug development professionals, establishing reliability is a critical prerequisite for ensuring that observed outcomes in clinical trials or cognitive screening tools genuinely reflect the construct under investigation, rather than random error or methodological artifact. This guide provides a comparative analysis of the three core reliability types—test-retest, internal consistency, and inter-rater agreement—detailing their experimental protocols, key metrics, and applications in cognitive research.

Core Concepts of Reliability

The following table defines and compares the three primary forms of reliability, outlining their core focus and typical applications.

Table 1: Core Types of Reliability in Research

| Type of Reliability | Core Question | What It Measures | Typical Application Context |

|---|---|---|---|

| Test-Retest [1] [2] | Will the measure yield a consistent result for the same subject over time? | The stability of a test or instrument across time. | Measuring stable traits like IQ [1] or color blindness [1]. |

| Internal Consistency [1] [3] | Do all the items within a single measurement instrument consistently measure the same construct? | The interrelatedness of items within a single test or questionnaire. | A multi-item customer satisfaction survey [1] or a personality scale [4]. |

| Inter-Rater Reliability [1] [5] | Do different raters or observers consistently assess the same phenomenon? | The degree of agreement between two or more independent raters. | Observational studies, such as researchers coding classroom behavior [1] or clinicians assessing wound healing [1]. |

Quantitative Comparison of Reliability Metrics

The evaluation of each reliability type involves specific statistical measures and acceptance thresholds, as summarized below.

Table 2: Quantitative Metrics and Benchmarks for Reliability

| Reliability Type | Common Statistical Measures | Interpretation & Benchmark for Good Reliability | Example Value |

|---|---|---|---|

| Test-Retest [6] [2] | Pearson's Correlation Coefficient (r) | A correlation of ≥ 0.80 is generally considered to indicate good reliability [6] [2]. | An IQ test administered twice might yield r = 0.85, indicating good stability [6]. |

| Internal Consistency [3] [2] [4] | Cronbach's Alpha (α), Split-Half Correlation | A Cronbach's Alpha value of ≥ 0.70 is often considered acceptable, though values closer to 1.0 indicate stronger consistency [3] [4]. | A well-designed empathy scale should have a Cronbach's Alpha above 0.70 [4]. |

| Inter-Rater Reliability [5] [2] | Cohen's Kappa (κ), Percent Agreement, Pearson's r (for continuous data) | Kappa values: >0.8 = strong, 0.6-0.8 = substantial. Percent agreement should be high (e.g., >85-90%) [5]. | Two researchers observing the same patient interactions might achieve 86% agreement [5]. |

Experimental Protocols for Assessing Reliability

Test-Retest Reliability Protocol

This protocol assesses the temporal stability of a measurement instrument.

- Initial Administration: The test or instrument is administered to a group of participants.

- Time Interval: A predetermined time interval is allowed to pass. This interval is critical and depends on the construct; it should be short enough that the trait is not expected to change meaningfully, but long enough to prevent recall from the first test from influencing the second (e.g., two weeks to one month for a stable trait like intelligence) [1] [5].

- Second Administration: The identical test is administered to the same group of participants under the same conditions.

- Statistical Analysis: Calculate the correlation (typically Pearson's r) between the scores from the two time points. A correlation of ≥ 0.80 generally indicates good test-retest reliability [6] [2].

Key Considerations:

- Practice Effects: Participants may perform better the second time simply due to familiarity with the test. To mitigate this, researchers can use different but equivalent versions of the test for the two administrations [6].

- Condition Consistency: The testing environment (e.g., time of day, lighting, instructions) must be as identical as possible to minimize the influence of external factors [1] [6].
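The statistical step of this protocol can be sketched in a few lines. The example below is a minimal illustration, not a validated analysis pipeline: the `pearson_r` helper and the two score lists are hypothetical, and the 0.80 cut-off is the benchmark quoted above.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equal-length score lists."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least 2 scores")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)

# Hypothetical scores for six participants tested on two occasions.
time1 = [98, 105, 110, 120, 92, 101]
time2 = [100, 103, 112, 118, 95, 99]

r = pearson_r(time1, time2)
meets_benchmark = r >= 0.80  # benchmark for good test-retest reliability
print(f"test-retest r = {r:.2f}; meets 0.80 benchmark: {meets_benchmark}")
```

In practice the same calculation would be run on the full sample, and a confidence interval around r is worth reporting when the sample is small.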

Internal Consistency Reliability Protocol

This protocol evaluates whether all items in a test consistently measure the same underlying construct, without the need for repeated administration.

- Single Administration: The test or questionnaire, which contains multiple items designed to measure the same construct, is administered to a group of participants on one occasion.

- Statistical Analysis: Choose one of the following primary methods:

  - Cronbach's Alpha: This is the most common method. It can be interpreted as the average of the split-half reliability estimates obtained from every possible way of dividing the items, rather than the average of the raw half-test correlations. The resulting coefficient (α) indicates the degree to which all items hang together [5] [2] [4].

  - Split-Half Reliability: The test items are split into two halves (e.g., at random, or by odd-numbered vs. even-numbered items). A total score is computed for each half for every participant, and the correlation between these two sets of scores is calculated. Because each half is only half as long as the full test, this correlation is then adjusted using the Spearman-Brown prophecy formula to estimate the reliability of the full test [3] [5].

Key Considerations:

- Homogeneity of Construct: This method is only appropriate for unidimensional tests that aim to measure a single, unified trait [1] [3].

- Item Wording: Care must be taken to ensure all questions are based on the same theory and are clearly phrased to be understood consistently by all participants [1].
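Both internal-consistency statistics described above can be computed directly from a participants-by-items score matrix. The sketch below assumes complete data (no missing responses) and uses a small hypothetical data set. Cronbach's α is computed as k/(k-1) × (1 − Σ item variances / variance of total scores), and the split-half estimate uses an odd/even split followed by the Spearman-Brown correction 2r/(1+r).

```python
from math import sqrt
from statistics import variance  # sample variance (n - 1 denominator)

def pearson_r(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))

def cronbach_alpha(rows):
    """rows: one list of item scores per participant."""
    k = len(rows[0])
    item_var_sum = sum(variance(col) for col in zip(*rows))
    total_var = variance([sum(r) for r in rows])
    return k / (k - 1) * (1 - item_var_sum / total_var)

def split_half_reliability(rows):
    """Odd/even split, stepped up to full test length with Spearman-Brown."""
    odd_half = [sum(r[0::2]) for r in rows]   # items 1, 3, ...
    even_half = [sum(r[1::2]) for r in rows]  # items 2, 4, ...
    r_half = pearson_r(odd_half, even_half)
    return 2 * r_half / (1 + r_half)

# Hypothetical responses: 5 participants x 4 items on a 1-5 scale.
responses = [
    [4, 5, 4, 5],
    [2, 3, 2, 2],
    [3, 3, 4, 3],
    [5, 5, 5, 4],
    [1, 2, 1, 2],
]

alpha = cronbach_alpha(responses)
split_half = split_half_reliability(responses)
print(f"Cronbach's alpha = {alpha:.2f}; split-half (Spearman-Brown) = {split_half:.2f}")
```

A sample this small is for illustration only; real estimates need far more participants to be stable.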

Inter-Rater Reliability Protocol

This protocol assesses the consistency of judgments between different observers or raters.

- Rater Training: All raters are trained using the same explicit criteria, definitions, and scoring rules for the observations or ratings they will be making. This is a critical calibration step [1] [5].

- Independent Observation: Raters independently observe the same event or score the same material (e.g., video recordings, written responses, patient behaviors).

- Data Collection: Each rater records their scores or categorizations without consulting the other raters.

- Statistical Analysis: The choice of statistic depends on the data type:

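  - Categorical Data: Use Cohen's Kappa (κ) or calculate simple percent agreement. Kappa is preferred because it corrects for the agreement expected by chance [5] [2].

  - Continuous Data: Use Pearson's Correlation Coefficient (r) or an Intraclass Correlation Coefficient (ICC) to measure the consistency of quantitative ratings [5]. Pearson's r captures only whether raters rank cases similarly; an absolute-agreement ICC also penalizes a rater who scores systematically higher or lower than the others.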

Key Considerations:

- Clear Operational Definitions: The variables and criteria for rating must be objectively and meticulously defined to minimize subjective interpretation [1].

- Ongoing Calibration: In long-term studies, periodic "recalibration" meetings should be held to discuss ratings and maintain consistency among raters over time [5].
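For two raters assigning categorical labels, percent agreement and Cohen's κ can be computed as follows. This is a minimal sketch with hypothetical ratings: κ = (p_o − p_e) / (1 − p_e), where p_o is the observed agreement and p_e the agreement expected by chance from each rater's label frequencies.

```python
from collections import Counter

def percent_agreement(rater_a, rater_b):
    return sum(a == b for a, b in zip(rater_a, rater_b)) / len(rater_a)

def cohens_kappa(rater_a, rater_b):
    n = len(rater_a)
    p_o = percent_agreement(rater_a, rater_b)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of the two raters' marginal proportions per label.
    labels = set(rater_a) | set(rater_b)
    p_e = sum(counts_a[c] * counts_b[c] for c in labels) / n ** 2
    if p_e == 1:
        raise ValueError("kappa is undefined when both raters use a single label")
    return (p_o - p_e) / (1 - p_e)

# Hypothetical classifications of 10 cases by two independent raters.
rater_a = ["impaired", "impaired", "normal", "normal", "impaired",
           "normal", "normal", "impaired", "normal", "normal"]
rater_b = ["impaired", "normal", "normal", "normal", "impaired",
           "normal", "impaired", "impaired", "normal", "normal"]

agreement = percent_agreement(rater_a, rater_b)
kappa = cohens_kappa(rater_a, rater_b)
print(f"percent agreement = {agreement:.0%}; Cohen's kappa = {kappa:.2f}")
```

The gap between the two numbers in this example shows why κ is preferred: a large share of the raw agreement is what two raters with these label frequencies would reach by chance.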

Essential Research Reagent Solutions for Reliability Testing

The following table outlines key methodological components essential for designing and executing reliability studies in cognitive and clinical research.

Table 3: Key Reagents and Methodological Tools for Reliability Research

| Research 'Reagent' | Function in Reliability Testing | Exemplar Uses |

|---|---|---|

| Standardized Cognitive Batteries | Provides a validated, multi-item instrument ideal for assessing internal consistency and test-retest reliability. | The Quick Mild Cognitive Impairment (Qmci) screen [7] or the Mayer-Salovey-Caruso Emotional Intelligence Test (MSCEIT) [4]. |

| Digital Cognitive Tasks | Enables precise, automated measurement of cognitive constructs like processing speed, minimizing inter-rater variability. | A computerized Digit Symbol Substitution Task (e.g., Speeded Matching) used to detect cognitive impairment [7]. |

| Structured Behavioral Coding Schemes | Provides the explicit operational definitions and criteria required to establish high inter-rater reliability in observational studies. | A rating scale with clear criteria for assessing wound healing stages [1] or classroom behavior [1]. |

| Speech-Language Analysis Pipeline | A tool for extracting objective, quantifiable features (acoustic, linguistic) from speech samples, enhancing reliability. | Used in automated cognitive screening tools to analyze connected speaking tasks for indicators of cognitive impairment [7]. |

| Statistical Analysis Software (with specific libraries) | The computational engine for calculating key reliability statistics (Cronbach's α, Cohen's κ, Pearson's r, ICC). | Software like R or SPSS running psychometric packages to compute internal consistency for a new scale [4]. |

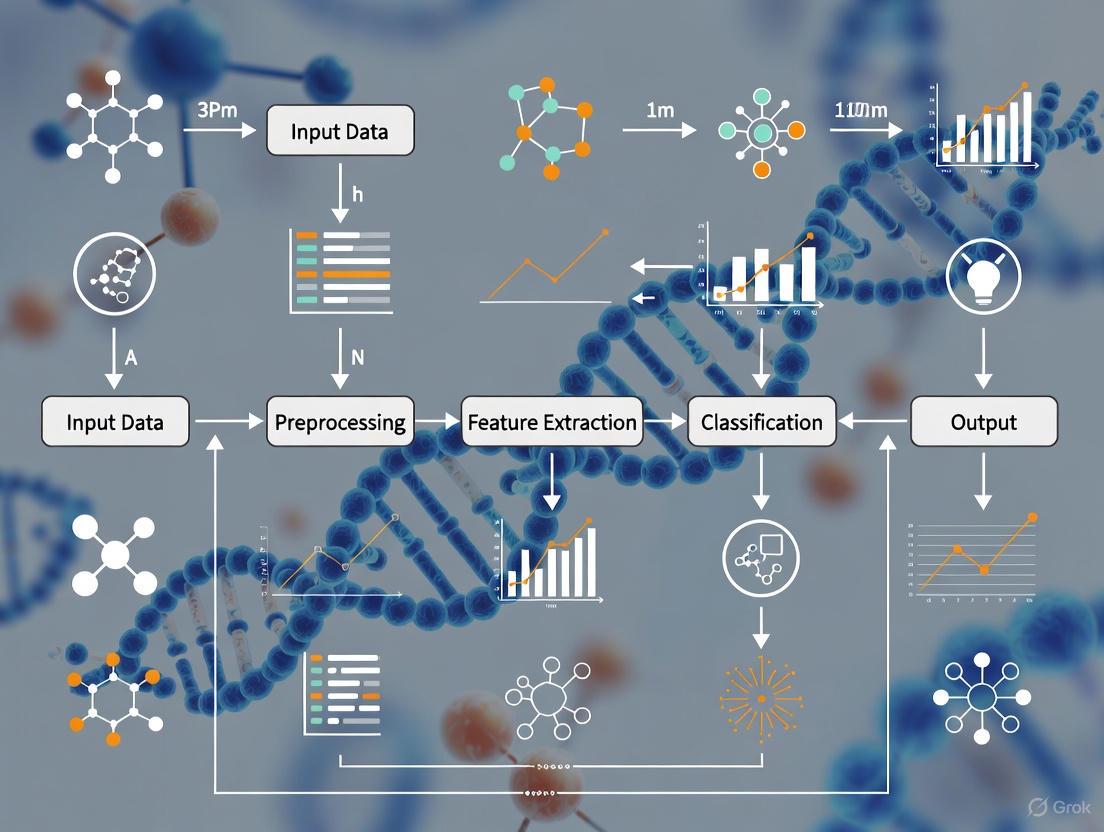

Visualizing the Reliability Testing Workflow

The following diagram illustrates the logical workflow for selecting and implementing the appropriate reliability assessment method in a research context.

For researchers and drug development professionals, a meticulous application of reliability testing is non-negotiable. Test-retest, internal consistency, and inter-rater agreement are not interchangeable concepts but complementary pillars of rigorous methodology. The choice of which to prioritize depends fundamentally on the nature of the measurement tool and the research question at hand. By adhering to the detailed experimental protocols, utilizing the appropriate statistical benchmarks, and leveraging modern research "reagents" like standardized digital tasks and automated analysis pipelines, scientists can ensure that their cognitive terminology classification research is built upon a foundation of consistent, reproducible, and therefore trustworthy measurement. This commitment to reliability is what ultimately allows for valid conclusions about the efficacy of new therapeutics and the accurate detection of cognitive states.

The precise classification of cognitive terminology is a cornerstone of both clinical neurological practice and modern therapeutic development. In clinical settings, reliable cognitive assessment enables accurate diagnosis and monitoring of conditions ranging from mild cognitive impairment to dementia. Within drug development, these classifications form the basis of clinical trial endpoints that determine treatment efficacy and regulatory approval. The reliability and validity of the methods used to classify and measure cognitive constructs are therefore paramount. This guide provides a comparative analysis of current methodologies, experimental protocols, and assessment tools used in cognitive terminology research, with a specific focus on their psychometric properties and applicability across neuropsychological and clinical trial contexts. The growing shift towards early intervention in diseases like Alzheimer's has further intensified the need for sensitive and meaningful endpoints that can detect subtle cognitive changes [8].

Comparative Analysis of Cognitive Assessment Modalities

Cognitive assessment tools vary significantly in their administration time, cognitive domains targeted, and psychometric properties. The table below provides a structured comparison of key assessment modalities used in both clinical practice and research.

Table 1: Comparison of Cognitive Assessment and Classification Modalities

| Modality / Tool | Primary Cognitive Domains Assessed | Administration Time | Reliability Considerations | Key Strengths | Key Limitations |

|---|---|---|---|---|---|

| NIH Toolbox Fluid Cognition Battery [9] | Episodic Memory, Working Memory, Attention, Cognitive Flexibility, Processing Speed | ~20-30 minutes | High internal consistency; Age-corrected standard scores | Comprehensive, multi-domain, computerized administration | Longer administration time; Primarily for in-person use |

| Trail Making Test B (TMTB) [9] | Executive Function, Visual Attention, Task Switching | <5 minutes | Sensitive to practice effects (test-retest) | Quick, widely used, low burden | Single domain focus; Can be influenced by motor speed |

| Clock Drawing Task (CLOX) [9] | Executive Function, Visuospatial Ability | ~5 minutes | Inter-rater reliability requires rater training [2] | Quick, low cost, sensitive to posterior cortical impairment | Subjective scoring requires trained raters |

| SA-BiLSTM Text Classification [10] | Semantic Content, Conceptual Relationships in Collaboration | Automated processing | High classification accuracy in experimental settings | Automated, scalable for large text datasets | Domain-specific (online knowledge collaboration) |

| One-Class Classification with Motor Tasks [11] | Global Cognitive Status via Gait, Fingertapping, Dual-tasks | Variable | High sensitivity (87.5%) for MCI detection [11] | Objective, uses motor-cognitive integration | Requires specialized equipment and analysis |

Experimental Protocols in Cognitive Classification Research

Protocol for Validation of Brief Cognitive Screens

Objective: To compare the performance and potential impairment classification of brief cognitive screening tools (TMTB, CLOX) against a comprehensive, multi-domain assessment (NIH Toolbox Fluid Cognition Battery) in a specific clinical population [9].

- Population: A population-based cohort of individuals with validated Systemic Lupus Erythematosus (SLE). Participants had a mean age of 46 years, were 92% female, and 82% Black [9].

- Methods:

  - Trail Making Test B (TMTB): Participants were instructed to connect numbers and letters in alternating order as quickly as possible. The time to complete the test was recorded. Potential impairment was defined as an age- and education-corrected T-score <35 (>1.5 SD longer than the normative time) [9].

  - Clock Drawing (CLOX): Participants were asked to draw a clock showing 1:45 (CLOX1) and then copy a pre-drawn clock (CLOX2). Clocks were scored on a 0-15 scale. Potential impairment was defined as CLOX1 <10 or CLOX2 <12 [9].

  - NIH Toolbox Fluid Cognition Battery: This was administered in-person and includes five individual tests measuring episodic memory, working memory, attention, processing speed, and cognitive flexibility. An age-corrected standard score was generated, with potential impairment defined as a score <77.5 [9].

- Analysis: Researchers calculated the proportion of participants identified as potentially impaired by each test and examined the intercorrelation between the different measures.
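The cut-offs in this protocol translate into a simple rule-based classifier. The sketch below applies the thresholds stated above (T-score <35, CLOX1 <10 or CLOX2 <12, Fluid Cognition score <77.5) to hypothetical participant records; the field names and data are illustrative assumptions, not the study's data or code.

```python
def flag_impairment(tmtb_t, clox1, clox2, nih_fluid):
    """Return a potential-impairment flag per instrument, using the cut-offs above."""
    return {
        "TMTB": tmtb_t < 35,
        "CLOX": clox1 < 10 or clox2 < 12,
        "NIH_Toolbox": nih_fluid < 77.5,
    }

# Hypothetical participants (scores are invented for illustration).
participants = [
    {"tmtb_t": 42, "clox1": 12, "clox2": 14, "nih_fluid": 95.0},
    {"tmtb_t": 30, "clox1": 9,  "clox2": 13, "nih_fluid": 74.0},
    {"tmtb_t": 38, "clox1": 11, "clox2": 11, "nih_fluid": 80.0},
    {"tmtb_t": 33, "clox1": 13, "clox2": 14, "nih_fluid": 85.0},
]

flags = [flag_impairment(**p) for p in participants]

# Proportion of the sample flagged as potentially impaired by each test.
proportions = {
    test: sum(f[test] for f in flags) / len(flags)
    for test in ("TMTB", "CLOX", "NIH_Toolbox")
}
print(proportions)
```

Comparing the per-test flags case by case, rather than only the overall proportions, shows whether the brief screens and the full battery identify the same individuals.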

Protocol for Hybrid Deep Learning Model in Text Classification

Objective: To develop and validate a hybrid deep learning model (SA-BiLSTM) for the fine-grained classification of cognitive-difference texts in online knowledge collaboration platforms [10].

- Dataset: The model was evaluated using a systematic experiment with a Baidu Encyclopedia dataset, which contains complex cognitive-difference texts generated through collaborative editing processes [10].

- Model Architecture:

  - Bidirectional Long Short-Term Memory (BiLSTM): This component captures bidirectional contextual information and long-range dependencies in the text sequences [10].

  - Self-Attention (SA) Mechanism: This component is integrated to assign different weights to words in a sequence, allowing the model to focus on the most informative features for classification and effectively mitigate semantic ambiguity [10].

- Experimental Design: The study included three evaluation aspects:

  - Architectural Ablation Studies: Comparing variant structures to isolate the contribution of each model component.

  - Comparative Analysis: Benchmarking the SA-BiLSTM model against mainstream baseline models, including FastText, TextCNN, RNN, BERT, and RoBERTa.

  - Generalization and Robustness Evaluation: Assessing the model's performance across different conditions and its domain adaptation capabilities [10].
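To make the role of the attention component concrete, the sketch below shows how self-attention assigns a weight to every token relative to every other token. It is a deliberately simplified illustration, not the SA-BiLSTM implementation from [10]: it uses plain scaled dot-product attention with no learned projections, and the `hidden_states` values are invented stand-ins for BiLSTM outputs.

```python
from math import exp, sqrt

def softmax(values):
    peak = max(values)  # subtract the maximum for numerical stability
    exps = [exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(hidden):
    """Scaled dot-product self-attention over a list of hidden-state vectors."""
    dim = len(hidden[0])
    weights = []
    for query in hidden:
        scores = [sum(q * k for q, k in zip(query, key)) / sqrt(dim) for key in hidden]
        weights.append(softmax(scores))
    # Each output vector is a weighted average of all hidden states.
    context = [
        [sum(w * h[j] for w, h in zip(row, hidden)) for j in range(dim)]
        for row in weights
    ]
    return weights, context

# Hypothetical hidden states for a 3-token sequence (4 dimensions each).
hidden_states = [
    [0.1, 0.9, 0.0, 0.2],
    [0.8, 0.1, 0.3, 0.0],
    [0.2, 0.8, 0.1, 0.1],
]

weights, context = self_attention(hidden_states)
for row in weights:
    print([round(w, 3) for w in row])
```

Tokens with similar hidden states (here the first and third) attend to each other more strongly than to the dissimilar second token. A trained model learns query, key, and value projections so that these weights reflect what is useful for the classification task.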

Visualizing Cognitive Assessment Workflows and Methodologies

Cognitive Screening and Impairment Classification Workflow

The following diagram illustrates the logical pathway for classifying cognitive impairment using a multi-test approach, as implemented in validation studies.

SA-BiLSTM Model Architecture for Cognitive Text Classification

This diagram outlines the workflow of the SA-BiLSTM hybrid model used for classifying cognitive differences in textual data.

The Researcher's Toolkit: Essential Reagents and Materials

Successful cognitive terminology classification research relies on a suite of validated tools and methodologies. The table below details key resources for constructing a robust research pipeline.

Table 2: Essential Research Reagents and Solutions for Cognitive Classification Studies

| Tool / Solution | Primary Function | Example Use Case | Psychometric Consideration |

|---|---|---|---|

| NIH Toolbox Fluid Cognition Battery [9] | Multi-domain computerized assessment of fluid cognitive abilities. | Primary outcome measure in clinical trials or longitudinal studies. | Provides age-corrected standard scores; good internal consistency. |

| Traditional Pen-and-Paper Tests (TMT, CLOX) [9] | Brief, in-clinic screening for specific cognitive deficits. | Rapid screening in geriatric or specialized clinics. | Test-retest reliability can be affected by practice effects; inter-rater reliability for CLOX requires training [2]. |

| Structured Interview Guides (VABS-3) [12] | Assess adaptive behavior and day-to-day functioning. | Evaluating real-world impact of cognitive deficits in developmental disorders. | Provides standard scores across communication, daily living, and socialization domains. |

| Pre-trained Language Models (BERT, RoBERTa) [10] | Baseline models for automated text analysis and classification. | Benchmarking performance of new cognitive text classification algorithms. | Performance must be validated on domain-specific datasets. |

| Custom Deep Learning Architectures (SA-BiLSTM) [10] | Fine-grained semantic classification of textual data. | Identifying cognitive differences in online collaboration or clinical transcripts. | Requires large, annotated datasets for training; model reliability is key. |

| One-Class Classification Algorithms [11] | Detecting deviation from a "normal" pattern in motor-cognitive data. | Early screening for mild cognitive impairment using gait or motor tasks. | High sensitivity is crucial for screening; requires control of confounds (e.g., age). |

The selection of cognitive assessment and classification tools is a critical decision that directly influences the validity of research findings and clinical conclusions. As evidenced by the comparative data, there is an inherent trade-off between the brevity and ease of administration of tools like the TMTB and CLOX and the comprehensive depth provided by batteries like the NIH Toolbox. The emergence of advanced analytical methods, including hybrid deep learning models and one-class machine learning classifiers, offers promising avenues for enhancing the objectivity, scalability, and sensitivity of cognitive terminology classification. Ultimately, the choice of tool must be guided by the specific research question, the target population, and a thorough understanding of each instrument's psychometric properties, particularly its reliability and validity for the intended purpose. Ensuring that these tools are not only reliable but also demonstrate clinical meaningfulness remains the central challenge and goal for researchers and clinicians alike [8].

The Impact of Unreliable Classification on Drug Development and Research Outcomes

In the high-stakes field of drug development, unreliable classification in cognitive terminology and disease subtyping represents a critical vulnerability that can compromise research validity, derail clinical trials, and ultimately prevent effective therapies from reaching patients. The consistency and accuracy with which researchers classify diseases, measure outcomes, and categorize patient populations fundamentally underpins every phase of the drug development pipeline. When classification systems lack reliability, the resulting data variability introduces noise that obscures true treatment effects, leading to costly trial failures and misguided resource allocation. This guide examines how unreliable classification impacts research outcomes through direct comparisons of reliable versus unreliable methodologies, provides experimental protocols for assessing classification reliability, and offers visualization tools to understand these critical relationships within the context of cognitive terminology research.

Table 1: Impact of Classification Reliability on Key Drug Development Metrics

| Development Phase | High-Reliability Classification Outcome | Low-Reliability Classification Outcome | Quantifiable Impact |

|---|---|---|---|

| Target Identification | Accurate patient stratification and biomarker selection | Heterogeneous patient populations diluting signal | 30% reduction in measurable treatment effect [13] |

| Phase 2 Trials | Clear go/no-go decisions based on efficacy | Inconclusive results requiring larger trials | 28-month phase extension [14] |

| Phase 3 Trials | Precise outcome measurement confirming efficacy | Failed endpoints due to measurement variability | $5.7B cost per approved drug [14] |

| Regulatory Submission | Streamlined review with validated endpoints | Requests for additional analyses or trials | 18-month review extension [14] |

| Clinical Implementation | Consistent treatment application across providers | Variable patient response and safety profiles | 33% repurposed agents in pipeline [13] |

Comparative Analysis: Reliable vs Unreliable Classification Systems

Quantitative Framework for Assessing Classification Reliability

The reliability of any classification system or measurement tool in research must be empirically validated using standardized psychometric testing. Reliability refers to the consistency of results when the measurement is reapplied under the same conditions, and it is typically assessed through three key metrics: internal consistency, test-retest reliability, and inter-rater reliability [15]. Each of these metrics provides crucial information about different aspects of classification consistency that directly impact data quality in drug development research.

Table 2: Reliability Assessment Metrics and Interpretation Guidelines

| Reliability Type | Statistical Measure | Interpretation Thresholds | Implication for Drug Development |

|---|---|---|---|

| Internal Consistency | Cronbach's Alpha (α) | <0.50: Unacceptable; 0.51-0.60: Poor; 0.61-0.70: Questionable; 0.71-0.80: Acceptable; 0.81-0.90: Good; 0.91-0.95: Excellent | Ensures measurement tools consistently capture the same underlying construct across trial sites |

| Test-Retest Reliability | Intraclass Correlation Coefficient (ICC) | <0.50: Poor; 0.50-0.75: Moderate; 0.76-0.90: Good; >0.90: Excellent | Determines stability of patient classification over time in longitudinal trials |

| Inter-Rater Reliability | Cohen's Kappa (κ) | 0-0.20: None; 0.21-0.39: Minimal; 0.40-0.59: Weak; 0.60-0.79: Moderate; 0.80-0.90: Strong; >0.90: Almost Perfect | Ensures consistent patient recruitment and outcome assessment across multiple trial sites |
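When many coefficients have to be screened, the bands in Table 2 can be encoded as a lookup. The sketch below is one possible encoding under explicit assumptions: coefficients are rounded to two decimals before lookup, an α of exactly 0.50 and negative values are assigned to the lowest band, and values the table does not cover (such as α above 0.95) are reported as outside the tabulated range.

```python
NEG_INF = float("-inf")

# (lower bound, upper bound, label), both bounds inclusive after rounding to 2 decimals.
BANDS = {
    "alpha": [
        (NEG_INF, 0.50, "Unacceptable"),  # assumption: 0.50 itself falls in this band
        (0.51, 0.60, "Poor"),
        (0.61, 0.70, "Questionable"),
        (0.71, 0.80, "Acceptable"),
        (0.81, 0.90, "Good"),
        (0.91, 0.95, "Excellent"),
    ],
    "icc": [
        (NEG_INF, 0.49, "Poor"),
        (0.50, 0.75, "Moderate"),
        (0.76, 0.90, "Good"),
        (0.91, 1.00, "Excellent"),
    ],
    "kappa": [
        (NEG_INF, 0.20, "None"),  # assumption: negative kappa is treated as no agreement
        (0.21, 0.39, "Minimal"),
        (0.40, 0.59, "Weak"),
        (0.60, 0.79, "Moderate"),
        (0.80, 0.90, "Strong"),
        (0.91, 1.00, "Almost Perfect"),
    ],
}

def interpret(metric, value):
    """Map a reliability coefficient to its Table 2 label."""
    rounded = round(value, 2)
    for low, high, label in BANDS[metric]:
        if low <= rounded <= high:
            return label
    return "outside tabulated range"

print(interpret("alpha", 0.72))   # Acceptable
print(interpret("icc", 0.56))     # Moderate
print(interpret("kappa", 0.58))   # Weak
```

Labels like these are conventions rather than hard rules, so the coefficient itself (ideally with a confidence interval) should always be reported alongside the label.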

Case Study: Impact on Alzheimer's Disease Drug Development

The Alzheimer's disease drug development pipeline illustrates the tangible consequences of classification reliability, with the 2025 pipeline hosting 182 trials assessing 138 drugs [13]. Biological disease-targeted therapies comprise 30% of the pipeline, while small molecule disease-targeted therapies account for 43%. The high attrition rate in neurological drug development can be partially attributed to challenges in patient classification and outcome measurement. Biomarkers play an increasingly important role in addressing classification reliability, serving as primary outcomes in 27% of active trials to provide more objective measures of disease progression and treatment response [13].

The comparison between traditional clinical classification and biomarker-enhanced classification reveals significant differences in trial efficiency. Repurposed agents, which represent 33% of the current pipeline, often benefit from established classification systems, potentially reducing reliability-related risks [13]. This demonstrates how improved classification reliability can optimize resource allocation and risk management in pharmaceutical development.

Experimental Protocols for Assessing Classification Reliability

Protocol 1: Internal Consistency Testing

Objective: To evaluate whether all items within a classification instrument measure the same underlying construct consistently.

Methodology:

- Administer the complete classification instrument to a representative sample of the target population (minimum n=100 for preliminary validation)

- Calculate Cronbach's alpha coefficient using statistical software (e.g., IBM SPSS Statistics: Analyze → Scale → Reliability Analysis)

- Compute inter-item correlations to identify potentially problematic items

- Conduct item-total statistics to assess whether any items weaken the overall consistency

Interpretation: Follow the thresholds in Table 2. Values below 0.70 indicate questionable consistency that may require instrument modification. For dichotomous items, use the KR-20 variant of Cronbach's alpha [15].

Application in Drug Development: This protocol should be applied to all patient-reported outcome measures, clinician rating scales, and diagnostic criteria before implementation in clinical trials to ensure consistent measurement across international sites.
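The item-level checks in this protocol (item-total statistics and their effect on overall consistency) can be sketched as follows. The example computes, for each item, the corrected item-total correlation (the item against the sum of the remaining items) and Cronbach's α if that item were deleted. The data are hypothetical and constructed so that the fourth item behaves inconsistently; the helper names are illustrative.

```python
from math import sqrt
from statistics import variance  # sample variance (n - 1 denominator)

def pearson_r(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))

def cronbach_alpha(rows):
    k = len(rows[0])
    item_var_sum = sum(variance(col) for col in zip(*rows))
    return k / (k - 1) * (1 - item_var_sum / variance([sum(r) for r in rows]))

def item_diagnostics(rows):
    """Corrected item-total correlation and alpha-if-deleted for every item."""
    report = []
    for j in range(len(rows[0])):
        item = [r[j] for r in rows]
        rest = [r[:j] + r[j + 1:] for r in rows]  # all items except item j
        report.append({
            "item": j + 1,
            "item_total_r": pearson_r(item, [sum(r) for r in rest]),
            "alpha_if_deleted": cronbach_alpha(rest),
        })
    return report

# Hypothetical responses: 6 participants x 4 items; item 4 is deliberately noisy.
responses = [
    [5, 4, 5, 2],
    [4, 4, 4, 5],
    [2, 1, 2, 4],
    [3, 3, 3, 1],
    [1, 2, 1, 3],
    [4, 5, 4, 2],
]

alpha_full = cronbach_alpha(responses)
diagnostics = item_diagnostics(responses)
print(f"alpha (all items) = {alpha_full:.2f}")
for row in diagnostics:
    print(f"item {row['item']}: item-total r = {row['item_total_r']:.2f}, "
          f"alpha if deleted = {row['alpha_if_deleted']:.2f}")
```

An item whose removal raises α noticeably, or whose item-total correlation is near zero or negative, is a candidate for rewording or removal. That decision should also weigh content coverage, not the statistic alone.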

Protocol 2: Test-Retest Reliability Assessment

Objective: To determine the stability of classification outcomes over time.

Methodology:

- Administer the classification instrument to the same participants at two time points

- Determine the appropriate interval based on the stability of the construct being measured (e.g., shorter intervals for dynamic constructs, longer for stable traits)

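- Use the Intraclass Correlation Coefficient (ICC) rather than Pearson's correlation for continuous variables, because an absolute-agreement ICC penalizes systematic differences between administrations (e.g., a uniform practice-related gain) that Pearson's r ignores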

- Implement a two-way mixed-effects model, absolute-agreement type for most classification scenarios

Interpretation: Refer to ICC thresholds in Table 2. Poor test-retest reliability (<0.50) indicates the classification is too unstable for predictive applications or longitudinal trials [15].

Application in Drug Development: Essential for validating diagnostic stability in prevention trials and ensuring patient classifications remain consistent throughout long-term trials.
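The difference between Pearson's r and an absolute-agreement ICC is easiest to see with a constructed example. The sketch below computes a single-measurement, absolute-agreement ICC from two-way ANOVA mean squares, ICC = (MSR − MSE) / (MSR + (k − 1)·MSE + (k/n)·(MSC − MSE)), where MSR, MSC, and MSE are the mean squares for participants, sessions, and error. The data are hypothetical: every participant scores exactly 5 points higher at the second session.

```python
from math import sqrt

def pearson_r(x, y):
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y))

def icc_absolute_agreement(scores):
    """scores: one row per participant, one column per session (or rater)."""
    n, k = len(scores), len(scores[0])
    grand = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(col) / n for col in zip(*scores)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)
    ss_total = sum((x - grand) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols
    msr = ss_rows / (n - 1)                 # between-participant mean square
    msc = ss_cols / (k - 1)                 # between-session mean square
    mse = ss_error / ((n - 1) * (k - 1))    # residual mean square
    return (msr - mse) / (msr + (k - 1) * mse + (k / n) * (msc - mse))

# Hypothetical scores: a uniform 5-point gain at the second session.
session1 = [20, 22, 25, 27, 30]
session2 = [s + 5 for s in session1]
scores = [list(pair) for pair in zip(session1, session2)]

r = pearson_r(session1, session2)
icc = icc_absolute_agreement(scores)
print(f"Pearson r = {r:.2f}; absolute-agreement ICC = {icc:.2f}")
```

Pearson's r is perfect here because the rank order and spacing of scores are unchanged, while the ICC drops substantially because the scores themselves do not agree. For real analyses, an established statistics package that also reports confidence intervals is preferable to a hand-rolled function.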

Protocol 3: Inter-Rater Reliability Evaluation

Objective: To assess consistency of classification across different raters, clinicians, or sites.

Methodology:

- Have at least two independent raters apply the classification system to the same cases (minimum n=30 cases)

- For categorical classifications, calculate Cohen's kappa (IBM SPSS: Analyze → Descriptive Statistics → Crosstabs → Statistics → Kappa)

- For continuous measures, use ICC with a two-way random-effects model

- Ensure raters are blinded to each other's assessments and clinical information that might bias classification

Interpretation: Use kappa thresholds from Table 2. Values below 0.60 indicate weak or worse agreement and call for additional rater training or protocol refinement [15].

Application in Drug Development: Critical for multicenter trials where consistent patient enrollment and outcome assessment across sites is essential for trial validity.

Visualization: Impact Pathway of Unreliable Classification

Figure 1: Impact Pathway of Classification Reliability on Drug Development. This diagram visualizes how unreliable classification propagates through the drug development process, ultimately leading to trial failures and delayed therapies, while reliability solutions create a countervailing positive pathway.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Tools for Reliability Testing in Cognitive Research

| Tool Category | Specific Solution | Function in Reliability Assessment | Application Context |

|---|---|---|---|

| Statistical Analysis Software | IBM SPSS Statistics | Calculates reliability coefficients (Cronbach's α, ICC, Cohen's κ) | All reliability testing protocols [15] |

| Biomarker Assay Kits | Plasma Phospho-Tau/Amyloid-β | Provides objective biological classification complementing cognitive measures | Alzheimer's disease trials [13] |

| Standardized Cognitive Batteries | NIH Toolbox | Offers normed, validated cognitive measures with established reliability | Multicenter trial harmonization |

| Digital Assessment Platforms | Electronic Clinical Outcome Assessment (eCOA) | Standardizes administration and reduces rater-dependent variability | All clinical trial phases |

| Rater Training Modules | Centralized Rater Certification Programs | Ensures consistent application of classification criteria across sites | Multicenter trials requiring high inter-rater reliability |

The impact of unreliable classification on drug development outcomes is both profound and measurable, contributing to the $5.7 billion cost per approved therapy in Alzheimer's disease and other complex disorders [14]. The comparative analysis presented in this guide demonstrates that reliability is not merely a methodological concern but a fundamental determinant of research success. By implementing rigorous reliability testing protocols, employing standardized assessment tools, and leveraging objective biomarkers, researchers can significantly reduce classification-related variability that currently undermines many development programs. As the field moves toward more targeted therapies and personalized medicine approaches, the reliability of our classification systems will increasingly determine the efficiency with which we can deliver effective treatments to patients. The 2025 goal for preventing or effectively treating Alzheimer's disease [14] remains achievable only if we address these fundamental measurement reliability challenges that currently impede our progress.

Within neurocognitive research, the precise measurement of cognitive constructs such as memory, executive function, and visuospatial abilities is fundamental to tracking disease progression in neurodegenerative disorders. The reliability and validity of assessment tools directly impact the classification of cognitive status, therapeutic monitoring, and clinical trial outcomes. This guide provides a comparative analysis of cognitive domains across major neurodegenerative conditions, detailing experimental protocols, key biomarkers, and data-driven insights into domain-specific decline patterns to inform diagnostic and therapeutic development.

Comparative Domain Impairment Across Neurodegenerative Conditions

Table 1: Cognitive Domain Impairment Profiles Across Neurodegenerative Conditions

| Disease Condition | Memory | Executive Function | Visuospatial Abilities | Primary Assessment Tools | Temporal Progression Pattern |

|---|---|---|---|---|---|

| Huntington's Disease (HD) | Visual memory emerges as early marker [16] [17] | Significant decline, particularly in verbal fluency and processing speed [16] [18] | Early and sensitive domain for disease progression [16] [17] | SOPT, UHDRS cognitive battery, Symbol Digit Modalities Test [19] [18] | Visual deficits precede motor onset; executive decline progresses steadily [16] [17] |

| Alzheimer's Disease (AD) | Episodic memory impairment central to diagnosis [20] | Executive dysfunction present, especially in later stages [21] | Relative preservation compared to memory deficits [20] | ADAS-Cog, Delayed Word Recall tests [20] | Memory impairment dominates early stage; other domains decline later [20] |

| Mild Cognitive Impairment (MCI) | Amnestic subtype shows prominent memory loss [22] | Non-amnestic subtype shows executive predominance [22] [21] | Variable impairment across subtypes [22] | MES, MoCA, Comprehensive neuropsychological batteries [22] [18] | Domain-specific progression predicts conversion to different dementia types [22] |

| Subjective Cognitive Decline (SCD) | Subtle subjective complaints without objective impairment [23] | Metacognitive abnormalities may precede objective deficits [23] | Generally preserved on standardized tests [23] | Self-report questionnaires, Advanced neuroimaging [23] | Potential precursor to MCI/AD; biomarker changes precede cognitive test abnormalities [23] |

Table 2: Quantitative Progression Metrics Across Disease Stages

| Cognitive Domain | Pre-manifest HD Annual Decline | Early Manifest HD Annual Decline | MCI to AD Progression Markers | Statistical Effect Sizes (HD vs. Controls) |

|---|---|---|---|---|

| Visual Memory | Significant worsening compared to healthy controls [16] [17] | Continued significant decline [16] [17] | Delayed Word Recall: top predictive feature [20] | Not explicitly quantified in available sources |

| Executive Function | Subtle changes in reduced-penetrance (RP) carriers [16] [17] | Significant decline in verbal fluency [16] | Executive items gain prominence later in progression [20] | Largest effect size (d=2.8) in manifest HD [18] |

| Processing Speed | Impaired in RP carriers [16] [17] | Moderate to severe decline [16] [18] | Symbol Digit Modalities test sensitive to change [18] | Moderate to large effect sizes across studies [18] |

| Global Cognition | Minimal changes [18] | Masked by practice effects in some domains [18] | ADAS-Cog13: +2 to +3 points indicates clinically meaningful decline [20] | Combined effect size: d=2.6 [18] |

Experimental Protocols for Cognitive Assessment

Comprehensive Neuropsychological Batteries

Comprehensive assessment typically involves 19 or more neuropsychological tests spanning multiple cognitive domains, administered over multiple sessions to reduce fatigue effects [18]. The protocol includes:

- Executive function assessment: Letter, action, and category fluency tests; Trail Making Test (Part B); Stroop-interference test

- Working memory and attention: Digits forward, backward and sequencing tests; Symbol Digit Modalities Test; Ruff 2 & 7 Cancellation Test – Accuracy

- Learning and memory: Short California Verbal Learning Test-II; Brief Visuospatial Memory Test-Revised

- Processing speed: Stroop-word reading, Stroop-colour naming, Trail Making Test (Part A); Ruff 2 & 7 Cancellation Test – Speed

- Language assessment: Brief Boston Naming Test; Indiana University Token Test

- Visuospatial function: Judgement of Line Orientation test (Form H); Rey Complex Figure Copying test [18]

Standardized administration requires trained raters blind to diagnostic status, with raw scores converted to z-scores using test-specific norms for cross-test comparison [18]. Longitudinal assessments must account for practice effects, particularly in healthy controls, which can mask true decline in patient populations [18].
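The z-score conversion described above can be sketched as follows. This is a minimal illustration, not the procedure used in [18]: the normative means and standard deviations, the test keys, and the sign-flipping rule for timed tests are all hypothetical placeholders that would normally come from each test's published norms.

```python
from statistics import mean

# Hypothetical normative values (mean, SD); real values come from each test's manual.
NORMS = {
    "sdmt_correct": (50.0, 10.0),
    "trail_making_b_seconds": (75.0, 25.0),
}

# Timed tests where a larger raw score means worse performance (assumed convention).
HIGHER_IS_WORSE = {"trail_making_b_seconds"}


def to_z(test_name, raw_score):
    """Convert a raw score to a z-score so that higher always means better."""
    norm_mean, norm_sd = NORMS[test_name]
    z = (raw_score - norm_mean) / norm_sd
    return -z if test_name in HIGHER_IS_WORSE else z


def composite_z(raw_scores):
    """Average the z-scores of several tests into one domain composite."""
    return mean(to_z(name, raw) for name, raw in raw_scores.items())


scores = {"sdmt_correct": 40, "trail_making_b_seconds": 100}
print(composite_z(scores))
```

Putting every test on a common scale with a common direction is what allows scores from different instruments to be compared or averaged within a domain.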

The Self-Ordered Pointing Task (SOPT) Protocol

The SOPT measures working memory and executive function using abstract designs [19]. The standardized protocol involves:

- Stimuli: Abstract designs arranged in arrays of increasing difficulty (4 to 12 items)

- Administration: Participants point to one design per card in each array, with the rule of not pointing to the same design twice

- Testing procedure: Multiple trials with different stimulus arrangements

- Primary outcome: Total errors (test-retest reliability ICC = .82) [19]

- Secondary measures: Span score (reliability = .58), perseverative errors (reliability = .12)

- Considerations: Small practice effects observed (Cohen's d = .34) upon retesting [19]

The SOPT demonstrates correlations with working memory, verbal learning, visuospatial ability, and specific executive functions like strategy utilization and planning, but not with cognitive flexibility or interference control [19].
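A practice effect such as the one reported for the SOPT is usually expressed as a standardized mean difference between sessions. The sketch below uses one common variant of Cohen's d (difference in session means divided by the pooled standard deviation); the source does not state which variant [19] used, and the error counts are invented for illustration.

```python
from math import sqrt
from statistics import mean, stdev


def cohens_d(baseline, retest):
    """Standardized mean difference using the pooled SD of the two sessions.

    Positive values mean the baseline mean is higher than the retest mean,
    which for an error count corresponds to improvement at retest.
    """
    pooled_sd = sqrt((stdev(baseline) ** 2 + stdev(retest) ** 2) / 2)
    return (mean(baseline) - mean(retest)) / pooled_sd


# Hypothetical total-error counts for the same participants at two sessions.
baseline_errors = [10, 12, 14]
retest_errors = [8, 10, 12]
print(cohens_d(baseline_errors, retest_errors))
```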

Memory and Executive Screening (MES) Protocol

The MES is a brief cognitive test (approximately 7 minutes) developed for mild cognitive impairment screening [22]. The protocol includes:

- Memory component (MES-5R): A sentence containing ten main points is presented for three immediate recall trials and then recalled freely after a delay on two further trials; the sum of the five recall scores reflects immediate memory, delayed memory, and learning ability

- Executive component (MES-EX): Four subtests - category fluency test, sequential movement tasks, conflicting instructions task, and Go/No-go task

- Scoring: Total possible score is 100 (50 each for MES-5R and MES-EX)

- Classification accuracy: AUC of 0.89 for amnestic MCI-single domain (sensitivity=0.795, specificity=0.828) with cut-off <75; AUC of 0.95 for amnestic MCI-multiple domain (sensitivity=0.87, specificity=0.91) with cut-off <72 [22]

The MES is not related to education level and does not require reading or writing, reducing cultural bias [22].
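The scoring and cut-off logic above can be sketched in a few lines. The function names and the toy data are hypothetical; only the 50 + 50 point structure and the reported cut-offs (<75 and <72) come from the text, and the sensitivity and specificity helper simply shows how such figures are derived from screening results compared with a reference diagnosis.

```python
def mes_total(mes_5r, mes_ex):
    """Sum the memory (MES-5R) and executive (MES-EX) subscores, each 0 to 50."""
    if not (0 <= mes_5r <= 50 and 0 <= mes_ex <= 50):
        raise ValueError("Each MES subscore must lie between 0 and 50.")
    return mes_5r + mes_ex


def screen_positive(total, cutoff=75):
    """Flag a participant whose total falls below the chosen cut-off."""
    return total < cutoff


def sensitivity_specificity(totals, has_mci, cutoff=75):
    """Compare screening flags with reference diagnoses (True = MCI)."""
    tp = fn = tn = fp = 0
    for total, diagnosed in zip(totals, has_mci):
        flagged = screen_positive(total, cutoff)
        if diagnosed and flagged:
            tp += 1
        elif diagnosed:
            fn += 1
        elif flagged:
            fp += 1
        else:
            tn += 1
    return tp / (tp + fn), tn / (tn + fp)


# Invented totals and diagnoses, for illustration only.
print(sensitivity_specificity([60, 80, 70, 90], [True, True, False, False]))
```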

Visualization of Cognitive Assessment Workflows

Figure 1: Comprehensive Cognitive Assessment Workflow for Longitudinal Studies

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Cognitive Assessment Tools and Their Research Applications

| Assessment Tool | Cognitive Constructs Measured | Administration Time | Reliability/Validity Evidence | Optimal Research Use Cases |

|---|---|---|---|---|

| Self-Ordered Pointing Task (SOPT) | Working memory, executive function, strategy utilization | 15-20 minutes | Test-retest reliability: ICC = .82 for total errors; correlates with working memory and visuospatial measures [19] | HD pre-manifest phase assessment; working memory-specific studies |

| Memory and Executive Screening (MES) | Instant/delayed memory, learning ability, executive function | ~7 minutes | AUC=0.89-0.95 for aMCI; not related to education level [22] | Large-scale screening; low-education populations; brief assessment protocols |

| UHDRS Cognitive Battery | Executive function (verbal fluency, processing speed, attention) | 15-20 minutes | Strong correlation with comprehensive batteries (effect size d=2.4 in HD) [18] | HD clinical trials; monitoring disease progression longitudinally |

| ADAS-Cog13 | Multiple domains including memory, language, praxis | 30-45 minutes | Sensitive to early AD progression; +2 to +3 points indicates clinically meaningful decline [20] | Alzheimer's clinical trials; MCI progression studies |

| Symbol Digit Modalities Test | Processing speed, visual attention, executive function | 5 minutes | Part of UHDRS; sensitive to change in HD over 12 months [18] | Processing speed assessment across multiple neurodegenerative conditions |

Domain-Specific Progression Patterns and Biomarker Correlations

Visual Cognition as an Early Marker in Huntington's Disease

Recent research demonstrates that visual cognition represents a particularly sensitive domain for early detection in Huntington's disease. A 2025 longitudinal study examining 181 participants across the HD spectrum found that visual memory and attention significantly declined in pre-manifest individuals compared to healthy controls over just 12 months [16] [17]. Those with reduced penetrance alleles (36-39 CAG repeats) exhibited changes in visual attention and processing speed despite preserved motor function, suggesting these measures may detect disease progression before traditional motor signs emerge [16] [17].

The neurobiological basis for early visual cognitive decline involves corticostriatal circuit disruption, which is a hallmark of HD pathology [16] [17]. The correlation between retinal changes (measured via optical coherence tomography) and cognitive status further supports the value of visual processing measures as potential biomarkers [16] [17].

Memory and Executive Function Progression Patterns

Distinct progression patterns emerge across neurodegenerative conditions:

Alzheimer's continuum: Delayed Word Recall emerges as the top cognitive marker in early stages, while Orientation gains prominence later, reflecting a shift toward executive and attentional decline as disease progresses [20].

Huntington's disease: Executive function shows the largest effect size (d=2.8) when comparing HD patients to controls, with significantly greater impairment than language domains (d=1.5) [18].

Mild Behavioral Impairment (MBI): Individuals with MBI exhibit diminished cognitive performance, particularly in memory and executive functions, with these domain-specific measures showing stronger associations than global cognitive assessments [21].

Emerging Digital Biomarkers and Machine Learning Approaches

Novel assessment approaches are expanding cognitive measurement precision:

Digital speech biomarkers: Studies analyzing spontaneous speech have identified distinct patterns across neurodegenerative conditions. MCI due to Alzheimer's disease shows reduced use of function words, Parkinson's disease MCI presents with shorter sentences and longer pauses, and MCI with Lewy bodies exhibits greater lexical repetition [24].

Machine learning feature importance: Permutation Feature Importance (PFI) analysis of ADAS-Cog13 data reveals how cognitive feature significance shifts with disease progression, enabling optimized test selection for different disease stages [20].

Multimodal deep learning: Models incorporating neuroimaging and cognitive data can predict cognitive scores (ADAS-Cog13, MMSE) and diagnostic status with higher accuracy than traditional approaches, supporting their use in clinical trial enrichment and individual progression forecasting [20].

The reliable classification of cognitive constructs requires domain-specific understanding of progression patterns across neurodegenerative conditions. Visual cognition emerges as a particularly sensitive early marker in Huntington's disease, while memory deficits remain central to Alzheimer's progression. Executive function measures show strong utility across multiple conditions. The evolving landscape of cognitive assessment increasingly incorporates brief, validated tools like the MES alongside digital biomarkers and machine learning approaches to enhance precision and scalability. Researchers should select assessment batteries that align with both the specific disease trajectory and stage of progression, while accounting for practice effects in longitudinal designs. As biomarker research advances, the integration of cognitive measures with neuroimaging and fluid biomarkers will further refine our understanding of domain-specific decline patterns across neurodegenerative conditions.

In cognitive science and neuropsychology, operational definitions serve as the essential bridge between theoretical constructs and empirical measurement. An operational definition specifies the exact procedures, tasks, or instruments used to measure an abstract cognitive concept, thereby transforming vague terminology into quantifiable variables [25]. For research aimed at classifying cognitive phenomena, the construct validity of these operational definitions—the degree to which they truly measure the intended theoretical construct—becomes the foundational standard upon which reliable classification depends [26] [27]. Without precise operational definitions that demonstrate strong construct validity, research on cognitive terminology classification lacks the rigor necessary for meaningful comparison, replication, and application in critical fields such as drug development.

The necessity of this precision is particularly evident in clinical and pharmaceutical contexts. For instance, when developing cognitive-enhancing medications, researchers must determine whether an intervention improves "working memory" or "executive function." These constructs must be defined through specific, measurable tasks whose validity has been empirically established [26]. This guide examines how major cognitive assessment approaches establish this critical link between terminology and measurement, comparing their methodological frameworks, validation evidence, and suitability for research applications.

Theoretical Framework: From Constructs to Operationalization

The Process of Operationalizing Cognitive Constructs

Cognitive constructs such as "working memory," "processing speed," and "cognitive bias" are abstract variables that cannot be directly observed but are inferred from observable behavior through systematic measurement procedures [25] [27]. The process of operationalization involves defining these constructs in terms of specific measurement operations, thus creating the concrete variables studied in research [28].

The development of a valid operational definition follows a systematic process:

- Identify the Concept: Clearly define the abstract cognitive construct (e.g., "attention") based on theoretical frameworks and existing literature [25].

- Determine Observable Indicators: Identify measurable behaviors, physiological responses, or performance metrics that manifest the construct (e.g., reaction time, accuracy on specific tasks) [25].

- Select Measurement Method: Choose appropriate assessment tools (e.g., computerized tests, behavioral observations, physiological monitoring) that match the construct and research context [25].

- Define Measurement Criteria: Precisely specify units of measurement, time frames, and administration contexts to ensure consistency [25].

Establishing Construct Validity

Construct validity is not a single statistic but an accumulated body of evidence demonstrating that a measurement tool adequately represents the intended construct [27]. Key forms of validity evidence include:

Table: Types of Validity Evidence in Cognitive Assessment

| Validity Type | Definition | Application Example |

|---|---|---|

| Construct Validity | Overall evidence that a test measures the intended theoretical construct | Demonstrating a memory test actually measures memory rather than attention or processing speed [27] |

| Content Validity | Degree to which test content represents the target construct's domain | Ensuring an executive function battery covers all theoretically relevant subdomains (inhibition, shifting, updating) [27] |

| Criterion Validity | Extent to which test scores correlate with established "gold standard" measures | Comparing a new brief cognitive screen against comprehensive neuropsychological assessment [27] |

| Ecological Validity | Ability to predict real-world functioning from test performance | Correlating laboratory-based memory tests with everyday forgetfulness [29] |

Comparative Analysis of Cognitive Assessment Approaches

Traditional Neuropsychological Assessment

Traditional pen-and-paper neuropsychological tests represent some of the most established operational definitions in cognitive science. These measures have extensive normative data and well-documented psychometric properties [29].

Operational Definitions Examples:

- Working Memory: "Score on Digit Span Backward subtest of the Wechsler Adult Intelligence Scale, measured as the longest sequence of numbers correctly recalled in reverse order."

- Processing Speed: "Number of items correctly completed on the Digit Symbol Substitution Test within 90 seconds."

- Verbal Fluency: "Number of unique animals named in 60 seconds on the Animal Fluency Test."

Strengths and Limitations: Traditional assessments provide standardized administration and extensive normative data but face limitations including practice effects (reduced sensitivity upon repeated administration), lengthy administration times requiring trained professionals, and questionable ecological validity (limited ability to predict real-world functioning) [29]. One study noted that traditional tests "may not effectively capture how cognitive functioning translates to everyday life situations which involve distractions, multitasking demands, and emotional pressures" [29].

Digital and Computerized Cognitive Assessment

Digital platforms represent an evolution in operational definitions, maintaining core cognitive constructs while transforming their measurement through technology.

Oxford Cognitive Testing Portal (OCTAL): OCTAL is a remote, browser-based platform providing performance metrics across multiple cognitive domains including memory, attention, visuospatial, and executive functions [30]. Its operational definitions include:

- Episodic Memory: "Accuracy on a continuous visual recognition task using novel abstract patterns, measured as the discrimination index (d')."

- Working Memory: "Performance on a spatial n-back task, quantified as the percentage of correct responses across varying load conditions (1-back, 2-back)."

- Attention: "Reaction time variability on a simple reaction time task, calculated as the coefficient of variation (standard deviation/mean RT)."
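Two of the metrics named in these definitions have standard formulas that are easy to make concrete. The sketch below is not OCTAL's implementation: it computes d' as the difference between the z-transformed hit rate and false-alarm rate, with a log-linear correction (an assumed convention) to avoid rates of exactly 0 or 1, and the coefficient of variation as the sample standard deviation divided by the mean reaction time.

```python
from statistics import NormalDist, mean, stdev


def d_prime(hits, n_old, false_alarms, n_new):
    """Discrimination index: z(hit rate) minus z(false-alarm rate)."""
    # Log-linear correction keeps both rates strictly between 0 and 1.
    hit_rate = (hits + 0.5) / (n_old + 1)
    fa_rate = (false_alarms + 0.5) / (n_new + 1)
    z = NormalDist().inv_cdf
    return z(hit_rate) - z(fa_rate)


def rt_coefficient_of_variation(reaction_times_ms):
    """Reaction time variability: standard deviation divided by mean RT."""
    return stdev(reaction_times_ms) / mean(reaction_times_ms)


# Invented counts and reaction times, for illustration only.
print(d_prime(hits=35, n_old=40, false_alarms=5, n_new=40))
print(rt_coefficient_of_variation([200, 300, 400]))
```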

In validation studies (N=1,749), OCTAL demonstrated strong psychometric properties, with test-retest reliability scores of ICC ≥ 0.79 and excellent diagnostic accuracy in distinguishing Alzheimer's disease dementia from subjective cognitive decline (AUC = 0.98 for a 20-minute subset) [30]. The platform showed equivalent performance across English- and Chinese-speaking populations, supporting its cross-cultural applicability [30].

Computerized Adaptive Testing: Some digital platforms employ adaptive algorithms that adjust task difficulty based on individual performance, creating more precise operational definitions that minimize ceiling and floor effects [29].

Technology-Enhanced Ecological Assessment

Emerging technologies aim to develop operational definitions with greater ecological validity by measuring cognition in real-world contexts or realistic simulations.

Virtual Reality (VR) Assessments: VR platforms create immersive environments that operationalize cognitive constructs through complex, life-like tasks. For example:

- Executive Function: "Performance on a virtual supermarket shopping task, measured by efficiency of route planning, adherence to a budget, and accuracy in following a shopping list."

- Prospective Memory: "Accuracy in remembering to perform specific actions at predetermined times while navigating a virtual office environment."

While promising, VR assessments face challenges including "technological and psychometric limitations, underdeveloped theoretical frameworks, and ethical considerations" [29].

Ecological Momentary Assessment (EMA): EMA operationalizes cognitive constructs through repeated sampling in natural environments:

- Working Memory: "Performance on a brief n-back task administered via smartphone at random intervals throughout the day."

- Attention: "Accuracy on a simple arithmetic task completed in response to push notifications in real-world settings."

EMA enhances ecological validity but faces implementation challenges including "high participant burden and missing data" [29].

Digital Phenotyping: This approach uses passive data collection from smartphones and wearables to create novel operational definitions:

- Processing Speed: "Typing speed variability on smartphone keyboard during daily use."

- Sleep-Related Cognitive Impairment: "Circadian rhythm disruption measured by accelerometer data, quantified as interdaily stability and intradaily variability."

Digital phenotyping faces "significant ethical and logistical challenges, including privacy and informed consent concerns, as well as challenges in data interpretation" [29].

Machine Learning and Wearable Device Integration

Advanced analytics are creating new operational definitions derived from behavioral and physiological patterns.

Wearable Device Monitoring: A study with over 2,400 older adults used wearable device data to predict cognitive performance [31]. The operational definitions included:

- Processing Speed/Working Memory/Attention: "Poor performance defined as scores in the bottom quartile on the Digit Symbol Substitution Test (DSST)."

- Activity Variability: "Standard deviation of total activity counts across waking hours, derived from accelerometer data."

- Sleep Efficiency Variability: "Day-to-day variation in the percentage of time in bed spent asleep."
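The three definitions above translate directly into short computations. The sketch below is illustrative rather than the pipeline used in [31]: the function names and data are hypothetical, the quartile boundary uses Python's inclusive interpolation method, and treating scores equal to the boundary as "poor" is an assumption.

```python
from statistics import quantiles, stdev


def bottom_quartile_flags(dsst_scores):
    """Label scores at or below the first quartile as poor performance."""
    q1 = quantiles(dsst_scores, n=4, method="inclusive")[0]
    return [score <= q1 for score in dsst_scores]


def activity_variability(waking_hour_counts):
    """Standard deviation of activity counts across waking hours."""
    return stdev(waking_hour_counts)


def sleep_efficiency_variability(minutes_asleep, minutes_in_bed):
    """Day-to-day SD of the percentage of time in bed spent asleep."""
    efficiencies = [100 * a / b for a, b in zip(minutes_asleep, minutes_in_bed)]
    return stdev(efficiencies)


# Invented values, for illustration only.
print(bottom_quartile_flags([1, 2, 3, 4, 5, 6, 7, 8]))
print(sleep_efficiency_variability([400, 450, 500], [500, 500, 500]))
```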

Machine learning models (CatBoost, XGBoost, Random Forest) showed the strongest predictive power for processing speed, working memory, and attention (median AUCs ≥ 0.82), compared with immediate/delayed recall (median AUCs ≥ 0.72) and categorical verbal fluency (median AUC ≥ 0.68) [31].

Table: Performance Comparison of Cognitive Assessment Modalities

| Assessment Approach | Reliability (Test-Retest) | Ecological Validity | Administration Burden | Best Application Context |

|---|---|---|---|---|

| Traditional Pen-and-Paper | Variable; high for established tests | Low to Moderate | High (trained administrator, 60-90 mins) | Gold-standard diagnosis, comprehensive assessment |

| Computerized Flat Batteries | Moderate to High (e.g., ICC ≥ 0.79 for OCTAL) [30] | Low to Moderate | Low to Moderate (20-30 mins, self-administered possible) | Large-scale screening, repeated assessment |

| Virtual Reality | Emerging evidence | High | High (specialized equipment, 30-45 mins) | Rehabilitation planning, functional capacity assessment |

| Ecological Momentary Assessment | Moderate | High | High (frequent interruptions, participant burden) | Real-world cognitive fluctuation, treatment response |

| Wearable-Based Prediction | High for activity/sleep metrics | High | Low (passive monitoring) | Long-term monitoring, early detection of decline |

Experimental Protocols and Validation Methodologies

Validation Framework for Operational Definitions

Establishing the validity of operational definitions requires rigorous experimental protocols. The following diagram illustrates a comprehensive validation workflow for cognitive assessment tools:

Psychometric Evaluation Protocol

The reliability and validity of operational definitions must be empirically demonstrated. A study on cognitive interpretation bias measures exemplifies this process [32]:

Sample: 94 young adults completed four interpretation bias measures across two sessions separated by one week.

Measures Included:

- Probe Scenario Task: Assessing implicit automatic processes through reaction times to resolve ambiguous social scenarios.

- Recognition Task: Combining automatic and reflective components through similarity ratings of scenario interpretations.

- Scrambled Sentences Task: Measuring interpretive biases through sentence construction under cognitive load.

- Interpretation and Judgmental Bias Questionnaire: Explicit reflective measure of interpretation likelihood.

Validation Analyses:

- Internal Consistency: Degree of interrelatedness among measure items.

- Test-Retest Reliability: Temporal stability measured via intraclass correlation coefficients (ICCs).

- Convergent Validity: Correlations between different measures of the same construct.

- Concurrent Validity: Correlations with social anxiety symptoms.

Results showed varying psychometric properties across measures, with the Scrambled Sentences Task and Interpretation and Judgmental Bias Questionnaire demonstrating good reliability and validity, while the Probe Scenario Task showed poor psychometric properties [32]. This highlights how operational definitions of the same theoretical construct can vary significantly in measurement quality.
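To make the test-retest analysis concrete, the following sketch computes one common form of the intraclass correlation, ICC(2,1) (two-way random effects, absolute agreement, single measurement), from a participants-by-sessions score table. The source does not specify which ICC form [32] used, so the choice here is an assumption, and the demonstration data are invented.

```python
def icc_2_1(scores):
    """ICC(2,1) from a list of rows, one per participant, each with k session scores."""
    n = len(scores)
    k = len(scores[0])
    grand_mean = sum(sum(row) for row in scores) / (n * k)
    row_means = [sum(row) / k for row in scores]
    col_means = [sum(row[j] for row in scores) / n for j in range(k)]

    # Two-way ANOVA sums of squares: participants, sessions, and residual.
    ss_rows = k * sum((m - grand_mean) ** 2 for m in row_means)
    ss_cols = n * sum((m - grand_mean) ** 2 for m in col_means)
    ss_total = sum((x - grand_mean) ** 2 for row in scores for x in row)
    ss_error = ss_total - ss_rows - ss_cols

    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))

    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Invented session-1 and session-2 scores for five participants.
two_sessions = [[12, 13], [18, 17], [9, 11], [15, 15], [20, 19]]
print(round(icc_2_1(two_sessions), 3))
```

Because this form penalizes systematic shifts between sessions as well as random error, a consistent practice effect lowers it even when participants keep their rank order.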

Cross-Cultural Validation Protocol

The OCTAL platform demonstrated a rigorous protocol for establishing cross-cultural validity [30]:

Study Design: Four validation studies with N=1,749 participants across different populations.

Methodology:

- Task Equivalence: Testing performance equivalence between English- and Chinese-speaking younger adults.

- Lifespan Sensitivity: Mapping domain-specific aging trajectories from mid- to late-adulthood.

- Clinical Validation: Assessing diagnostic accuracy in a memory-clinic cohort (N=194).

- Reliability Testing: Establishing test-retest reliability (ICC ≥ 0.79; N=118).

Outcome Measures:

- Diagnostic Accuracy: Area Under the Curve (AUC) values for distinguishing Alzheimer's disease from subjective cognitive decline.

- Domain-Specific Trajectories: Age-related performance decline patterns across cognitive domains.

- Cross-Cultural Equivalence: Statistical equivalence of task performance across language groups.

This multi-study approach provides a comprehensive validation framework that establishes both the reliability and validity of the operational definitions employed.

Essential Research Reagents and Materials

Cognitive assessment requires specific "research reagents" - standardized tools and protocols that enable consistent measurement across studies and laboratories.

Table: Essential Research Reagents for Cognitive Terminology Operationalization

| Tool Category | Specific Examples | Primary Research Function | Key Psychometric Properties |

|---|---|---|---|

| Gold-Standard Neuropsychological Tests | Digit Symbol Substitution Test (DSST), CERAD Word-Learning Test, Animal Fluency Test | Provide criterion variables for validation studies; establish diagnostic accuracy | Extensive normative data; well-established validity for specific cognitive domains [31] |

| Computerized Assessment Platforms | OCTAL, CANTAB, CNS Vital Signs | Enable standardized administration across sites; facilitate precise reaction time measurement | High test-retest reliability (e.g., ICC ≥ 0.79 for OCTAL) [30] |

| Cognitive Bias Measures | Scrambled Sentences Task, Interpretation and Judgmental Bias Questionnaire | Quantify implicit cognitive processes relevant to psychopathology | Variable psychometric properties (e.g., α = .79 for SST) [32] |

| Ecological Momentary Assessment Platforms | Smartphone-based EMA apps, wearable sensors | Capture real-world cognitive functioning in natural environments | Enhanced ecological validity; potential participant burden [29] |

| Virtual Reality Environments | Virtual supermarket shopping task, virtual office prospective memory task | Assess complex cognitive functions in simulated real-world contexts | High face validity; technological and psychometric limitations [29] |

| Wearable Activity Monitors | ActiGraph, Fitbit, Apple Watch | Provide objective measures of activity, sleep, and circadian rhythms | Strong validity for physical activity metrics; emerging evidence for cognitive correlations [31] |

Data Integration and Interpretation Framework

The relationship between operational definitions, theoretical constructs, and validation evidence can be visualized as an integrated framework:

The rigorous operationalization of cognitive terminology represents a fundamental requirement for advancing both basic research and applied drug development. As this comparison demonstrates, assessment approaches vary significantly in their reliability, validity, practical feasibility, and ecological relevance. Traditional neuropsychological tests provide well-established operational definitions with extensive normative data but face limitations in ecological validity and practicality for repeated assessment. Digital platforms like OCTAL offer promising alternatives with strong psychometric properties and cross-cultural applicability [30]. Emerging technologies including VR, EMA, and wearable-based monitoring create new opportunities for ecologically valid assessment but require further validation and careful attention to ethical considerations [29].

For researchers and drug development professionals, selection of assessment approaches must balance methodological rigor with practical constraints. The optimal operational definitions depend on specific research goals: traditional measures for diagnostic accuracy studies, digital platforms for large-scale clinical trials, and technology-enhanced approaches for understanding real-world functional impact. Across all contexts, explicit attention to construct validity remains paramount—without demonstrating that our measurements truly capture intended cognitive constructs, the foundation of cognitive terminology classification research remains uncertain. Future directions should include development of standardized operational definition frameworks, increased attention to cross-cultural validation, and integration of multiple assessment modalities to capture the multifaceted nature of cognitive constructs.

Methodological Approaches for Implementing Reliable Cognitive Classification Systems

In cognitive terminology classification research and drug development, the reliability of measurement tools and diagnostic classifications is paramount. Reliability refers to the consistency and reproducibility of results, forming the foundation upon which valid scientific conclusions are built. Two statistical coefficients have emerged as fundamental for quantifying different types of reliability: Cronbach's alpha (α) for internal consistency and Cohen's kappa (κ) for inter-rater agreement. While both are reliability coefficients, they serve distinct purposes and are applied in different research contexts. Cronbach's alpha functions as a measure of how closely related a set of items are as a group, essentially evaluating whether items in a test or questionnaire consistently measure the same underlying construct [33] [34]. Conversely, Cohen's kappa measures the agreement between two raters who independently classify items into categorical outcomes, while accounting for the possibility of agreement occurring by chance [35] [36].

The distinction between these measures is critical for researchers designing studies in cognitive assessment and clinical trial endpoints. Selecting the inappropriate coefficient can lead to misleading conclusions about the reliability of measurements, potentially compromising research validity and subsequent clinical decisions. This guide provides a comprehensive comparison of these essential statistical tools, enabling researchers to make informed methodological choices aligned with their specific research objectives in cognitive terminology classification and pharmaceutical development.

Fundamental Concepts and Direct Comparison

Core Definitions and Applications

Cronbach's Alpha is a coefficient of internal consistency that quantifies how closely related a set of items are as a group [33]. It is most commonly employed in the development and validation of multi-item scales, questionnaires, and assessment instruments where researchers need to ensure that all items consistently measure the same underlying construct [34]. For example, in cognitive research, Cronbach's alpha would be used to evaluate whether all items on a cognitive assessment battery reliably measure a specific cognitive domain like executive function or memory. The coefficient is calculated as a function of the number of test items and the average inter-correlation among these items, with values typically ranging from 0 to 1 [33] [34]; negative values can occur when items covary negatively on average, and usually signal a scoring or item-keying problem.
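As a rough illustration of how the number of items and their average inter-relatedness combine, the sketch below computes alpha in its covariance form, N·c̄ / (v̄ + (N − 1)·c̄), where N is the number of items, c̄ the mean inter-item covariance, and v̄ the mean item variance. The function names and the response data are hypothetical.

```python
from itertools import combinations
from statistics import mean, variance


def covariance(x, y):
    """Sample covariance between two items scored by the same respondents."""
    mean_x, mean_y = mean(x), mean(y)
    return sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / (len(x) - 1)


def cronbach_alpha(items):
    """Alpha from a list of items, each a list of respondent scores."""
    n_items = len(items)
    mean_variance = mean(variance(item) for item in items)
    mean_covariance = mean(covariance(a, b) for a, b in combinations(items, 2))
    return (n_items * mean_covariance) / (
        mean_variance + (n_items - 1) * mean_covariance
    )


# Invented scores: two items answered by four respondents.
print(cronbach_alpha([[1, 2, 3, 4], [2, 1, 4, 3]]))
```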

Cohen's Kappa is a statistic designed to measure inter-rater reliability for qualitative categorical items [35] [36]. It assesses the degree of agreement between two raters who independently classify items into mutually exclusive categories, while incorporating a correction for chance agreement [35]. This measure is particularly valuable in healthcare research and cognitive classification where subjective judgments are required, such as when clinicians independently diagnose cognitive impairment or classify disease stages [35] [37]. Unlike simple percent agreement calculations, Cohen's kappa accounts for the probability of raters agreeing by chance alone, providing a more robust assessment of true agreement [35] [36]. The coefficient ranges from -1 (complete disagreement) to +1 (perfect agreement), with 0 indicating agreement equivalent to chance [38].

Comparative Analysis

Table 1: Key Differences Between Cronbach's Alpha and Cohen's Kappa

| Feature | Cronbach's Alpha | Cohen's Kappa |
|---|---|---|
| Type of Reliability | Internal consistency [38] [15] | Inter-rater reliability [35] [38] |
| Data Type | Ordinal/Interval (e.g., Likert scales) [38] [34] | Categorical (Nominal) [38] [36] |
| Purpose | Assess consistency of items within a test/scale [38] [34] | Assess agreement between independent raters [35] [38] |
| Number of Raters | Not applicable (assesses items, not raters) | Typically two raters [38] [36] |
| Range of Values | 0 to 1 [38] [34] | -1 to 1 [38] [36] |
| Chance Correction | No | Yes [35] [36] |
| Common Applications | Survey instruments, psychological tests, assessment scales [38] [34] | Medical diagnosis, content analysis, quality control [35] [38] |

Cronbach's Alpha: Deep Dive

Mathematical Foundation and Calculation

The mathematical foundation of Cronbach's alpha is derived from the ratio of the shared covariance between items to the total variance in the measurement. The standard formula for Cronbach's alpha is:

$$ \alpha = \frac{N \bar{c}}{\bar{v} + (N-1) \bar{c}}$$

Where:

- N = number of items in the scale

- $\bar{c}$ = average inter-item covariance among the items

- $\bar{v}$ = average variance of the items [33]

This formula demonstrates two critical properties of Cronbach's alpha: its sensitivity to both the number of items and the average inter-item correlation. As the number of items increases, Cronbach's alpha tends to increase, holding inter-item correlations constant. Similarly, as the average inter-item correlation increases, Cronbach's alpha increases as well, holding the number of items constant [33]. This relationship highlights the importance of both scale length and item homogeneity when designing reliable measurement instruments.
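Both properties can be checked numerically with the standardized form of alpha, which follows from the formula above when every item has unit variance (so $\bar{v} = 1$ and $\bar{c}$ equals the mean inter-item correlation). The sketch below is illustrative only: the function name and the example values are assumptions, not taken from the cited sources.

```python
def standardized_alpha(n_items: int, mean_r: float) -> float:
    """Alpha from the formula above with v-bar = 1 and c-bar = mean_r."""
    if n_items < 2:
        raise ValueError("alpha needs at least two items")
    return (n_items * mean_r) / (1.0 + (n_items - 1) * mean_r)


# Hold the mean inter-item correlation at 0.30 and lengthen the scale.
for n in (5, 10, 20):
    print(n, round(standardized_alpha(n, 0.30), 3))

# Hold the scale at 10 items and raise the mean inter-item correlation.
for r in (0.10, 0.30, 0.50):
    print(r, round(standardized_alpha(10, r), 3))
```

With a mean correlation of 0.30, doubling the scale from 5 to 10 items moves alpha from roughly 0.68 to roughly 0.81 without any change in item quality, which is why scale length should be reported alongside the coefficient.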

For researchers implementing this calculation, statistical software packages such as SPSS provide automated procedures for computing Cronbach's alpha. In SPSS this is done with the RELIABILITY procedure, which generates the alpha coefficient along with additional statistics that help evaluate the scale's properties [33].
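Outside of SPSS, the same coefficient can be computed directly from the covariance form given above. The following is a minimal sketch using NumPy; the function name `cronbach_alpha` and the small data set are hypothetical and serve only to show the arithmetic.

```python
import numpy as np


def cronbach_alpha(scores) -> float:
    """Cronbach's alpha for a respondents-by-items score matrix."""
    x = np.asarray(scores, dtype=float)
    if x.ndim != 2 or x.shape[1] < 2:
        raise ValueError("expected a 2-D array with at least two item columns")
    n_items = x.shape[1]
    cov = np.cov(x, rowvar=False, ddof=1)   # item-by-item covariance matrix
    v_bar = np.trace(cov) / n_items         # average item variance
    # Average of the off-diagonal entries (inter-item covariances).
    c_bar = (cov.sum() - np.trace(cov)) / (n_items * (n_items - 1))
    return (n_items * c_bar) / (v_bar + (n_items - 1) * c_bar)


# Hypothetical data: 6 respondents answering 4 Likert-type items.
demo = np.array([
    [4, 5, 4, 5],
    [2, 2, 3, 2],
    [3, 3, 3, 4],
    [5, 4, 5, 5],
    [1, 2, 1, 2],
    [3, 4, 3, 3],
])
print(round(cronbach_alpha(demo), 3))
```

Rows are respondents and columns are items; missing responses would need to be handled before calling the function, which this sketch does not attempt.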

Interpretation Guidelines and Thresholds

Table 2: Interpretation Thresholds for Cronbach's Alpha

| Alpha Value | Interpretation | Acceptability in Research |
|---|---|---|
| < 0.50 | Unacceptable | Poor reliability; substantial revision required [15] |
| 0.51 - 0.60 | Poor | Minimal acceptability; may require item revision [15] |
| 0.61 - 0.70 | Questionable | Acceptable for exploratory research [15] |
| 0.71 - 0.80 | Acceptable | Good for basic research [33] [15] |
| 0.81 - 0.90 | Good | Strong reliability for applied settings [15] |
| 0.91 - 0.95 | Excellent | Possibly indicates item redundancy [15] |
| > 0.95 | Potentially problematic | Suggests redundant items; scale review recommended [34] |

While a common benchmark for acceptability in social science research is 0.70 [33], context-specific considerations may justify different thresholds. For high-stakes clinical assessments or cognitive classification instruments, more stringent thresholds (typically >0.80) are often required [15]. Conversely, for exploratory research or instruments with very few items, slightly lower values may be temporarily acceptable while the instrument is under development.

It is crucial to recognize that extremely high alpha values (>0.95) may indicate problematic item redundancy, where multiple items are essentially asking the same question in slightly different ways [15] [34]. This reduces the breadth of the construct being measured and compromises content validity despite high reliability.

Experimental Protocols and Methodological Considerations

Scale Development and Validation Protocol:

- Item Generation: Develop a comprehensive set of items theoretically linked to the construct of interest, ensuring content validity through expert review and pilot testing [34].

- Data Collection: Administer the preliminary scale to a sufficiently large sample (typically N>100 for stable estimates) representing the target population [34].

- Initial Analysis: Compute Cronbach's alpha for the entire scale and examine inter-item correlations to identify poorly performing items [33] [34].

- Item Analysis: Use the "alpha if item deleted" statistic to identify items whose removal would substantially improve the overall alpha [34].

- Dimensionality Assessment: Conduct factor analysis (exploratory or confirmatory) to verify unidimensionality, as high alpha alone does not guarantee a single underlying construct [33].

- Final Scale Composition: Retain items that contribute positively to internal consistency while maintaining content coverage.
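The item analysis step of this protocol can be sketched as follows. The code recomputes alpha with each item left out in turn, using the variance form of alpha (algebraically equivalent to the covariance form). The function names and the data are hypothetical; the fifth item is deliberately constructed as a reverse-keyed item that was never recoded.

```python
import numpy as np


def cronbach_alpha(x: np.ndarray) -> float:
    """Alpha as k/(k-1) * (1 - sum of item variances / variance of total score)."""
    k = x.shape[1]
    item_var_sum = x.var(axis=0, ddof=1).sum()
    total_var = x.sum(axis=1).var(ddof=1)
    return (k / (k - 1)) * (1.0 - item_var_sum / total_var)


def alpha_if_item_deleted(scores) -> list[float]:
    """Alpha of the remaining scale after dropping each item in turn."""
    x = np.asarray(scores, dtype=float)
    if x.ndim != 2 or x.shape[1] < 3:
        raise ValueError("need at least three items so two remain after deletion")
    return [cronbach_alpha(np.delete(x, j, axis=1)) for j in range(x.shape[1])]


# Hypothetical 5-item scale; the last column equals 6 minus the first column.
items = np.array([
    [4, 5, 4, 5, 2],
    [2, 2, 3, 2, 4],
    [3, 3, 3, 4, 3],
    [5, 4, 5, 5, 1],
    [1, 2, 1, 2, 5],
    [3, 4, 3, 3, 3],
])
print("full scale:", round(cronbach_alpha(items.astype(float)), 3))
for j, a in enumerate(alpha_if_item_deleted(items), start=1):
    print(f"without item {j}: {a:.3f}")
```

In this constructed example, removing the unrecoded fifth item raises alpha sharply. In real data a jump like this is a prompt to inspect the item's wording and scoring, not an automatic reason to delete it, since content coverage also matters.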

Methodological Considerations:

- Cronbach's alpha is sensitive to the number of items in the scale, with longer scales naturally producing higher alpha values [33] [15].

- The measure assumes essentially tau-equivalent items, meaning all items are equally related to the underlying construct [34].

- Alpha can be artificially inflated by including redundant items that measure the same narrow aspect of a broader construct [15] [34].

- For scales with dichotomous items, the Kuder-Richardson formula 20 (KR-20) is the appropriate equivalent [15].
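For the dichotomous case mentioned in the last point, KR-20 can be sketched as below. It replaces each item variance with $p_j(1 - p_j)$, where $p_j$ is the proportion of respondents scoring 1 on item $j$. The function name and data are hypothetical, and the sketch assumes population variances (`ddof=0`) throughout so that the item and total-score terms are on the same footing.

```python
import numpy as np


def kr20(responses) -> float:
    """Kuder-Richardson formula 20 for a respondents-by-items 0/1 matrix."""
    x = np.asarray(responses, dtype=float)
    if x.ndim != 2 or x.shape[1] < 2:
        raise ValueError("expected a 2-D array with at least two item columns")
    if not np.isin(x, (0.0, 1.0)).all():
        raise ValueError("KR-20 expects items scored 0 or 1")
    k = x.shape[1]
    p = x.mean(axis=0)                       # proportion scoring 1 per item
    pq_sum = (p * (1.0 - p)).sum()           # sum of item variances p*q
    total_var = x.sum(axis=1).var(ddof=0)    # variance of total scores
    return (k / (k - 1)) * (1.0 - pq_sum / total_var)


# Hypothetical data: 6 respondents, 4 pass/fail items.
binary_demo = [
    [1, 1, 1, 0],
    [1, 0, 1, 1],
    [0, 0, 0, 0],
    [1, 1, 1, 1],
    [0, 0, 1, 0],
    [1, 1, 0, 1],
]
print(round(kr20(binary_demo), 3))
```

On 0/1 data this gives the same value as Cronbach's alpha, because the ratio of summed item variances to total-score variance does not depend on whether sample or population variances are used, provided the choice is applied consistently.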

Cohen's Kappa: Deep Dive

Mathematical Foundation and Calculation

Cohen's kappa operates on a different mathematical principle than Cronbach's alpha, focusing on observed versus chance-corrected agreement between raters. The formula for Cohen's kappa is:

$$ \kappa = \frac{p_o - p_e}{1 - p_e}$$

Where:

- $p_o$ = relative observed agreement among raters (proportion of cases where raters agree)

- $p_e$ = hypothetical probability of chance agreement (calculated based on the marginal distributions of rater responses) [36]

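The calculation of chance agreement ($p_e$) is derived by multiplying the marginal probabilities for each category and summing these products across all categories. For a 2×2 confusion matrix (binary classification), the cell counts can be laid out as:

| | Rater 2: Positive | Rater 2: Negative | Row total |
|---|---|---|---|
| Rater 1: Positive | a | b | a + b |
| Rater 1: Negative | c | d | c + d |
| Column total | a + c | b + d | N |

Where: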

- $p_o = (a + d) / N$ (with N = a + b + c + d)

- $p_e = [(a+b)(a+c) + (c+d)(b+d)] / N^2$ [36]

This chance correction is what distinguishes kappa from simple percent agreement and makes it a more robust measure of true consensus between raters.
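As a worked illustration, the two definitions above translate directly into code, using the cell labels a, b, c, and d, where a and d count the cases on which the raters agree. The function name and the counts are hypothetical.

```python
def cohens_kappa_2x2(a: int, b: int, c: int, d: int) -> float:
    """Cohen's kappa from the four cell counts of a 2x2 agreement table."""
    n = a + b + c + d
    if n == 0:
        raise ValueError("table is empty")
    p_o = (a + d) / n                                        # observed agreement
    p_e = ((a + b) * (a + c) + (c + d) * (b + d)) / n ** 2   # chance agreement
    if p_e == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_o - p_e) / (1.0 - p_e)


# Hypothetical counts: the raters agree on 35 of 50 cases.
kappa = cohens_kappa_2x2(a=20, b=5, c=10, d=15)
print(round(kappa, 3))
```

For these counts, $p_o = 35/50 = 0.70$ and $p_e = (25 \cdot 30 + 25 \cdot 20)/2500 = 0.50$, so $\kappa = 0.20/0.50 = 0.40$. The 70% raw agreement shrinks considerably once chance is removed.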

Interpretation Guidelines and Thresholds

Table 3: Interpretation Thresholds for Cohen's Kappa

| Kappa Value | Interpretation | Acceptability in Research |
|---|---|---|
| < 0 | No agreement | Worse than chance agreement [15] [36] |
| 0.01 - 0.20 | None to Slight | Generally unacceptable [15] [36] |
| 0.21 - 0.39 | Minimal | Questionable reliability [15] |
| 0.40 - 0.59 | Weak | Minimal acceptability [15] |
| 0.60 - 0.79 | Moderate | Acceptable for most research [15] |
| 0.80 - 0.90 | Strong | Good agreement [15] |
| > 0.90 | Almost Perfect | Excellent agreement [15] |

Interpretation of kappa values requires consideration of contextual factors beyond these general guidelines. The magnitude of kappa is influenced by the prevalence of the finding (whether the categories are equally probable) and bias (differences in marginal distributions between raters) [36]. For example, when a condition is very rare or very common, kappa values tend to be lower even with good agreement. Similarly, when raters have systematically different thresholds for assigning categories, kappa values can be affected.
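The prevalence effect can be made concrete with two hypothetical 2×2 tables that have identical percent agreement but very different marginal distributions. The counts below are invented for illustration, and the helper function is a minimal sketch rather than a reference implementation.

```python
def agreement_stats(a: int, b: int, c: int, d: int) -> tuple[float, float]:
    """Return (percent agreement, Cohen's kappa) for a 2x2 table of counts."""
    n = a + b + c + d
    p_o = (a + d) / n
    p_e = ((a + b) * (a + c) + (c + d) * (b + d)) / n ** 2
    return p_o, (p_o - p_e) / (1.0 - p_e)


# Balanced prevalence: about half of the 100 cases are rated positive.
balanced = agreement_stats(a=45, b=5, c=5, d=45)

# Rare condition: only 7 of 100 cases are rated positive by each rater.
rare = agreement_stats(a=2, b=5, c=5, d=88)

for label, (p_o, kappa) in (("balanced", balanced), ("rare", rare)):
    print(f"{label}: agreement = {p_o:.2f}, kappa = {kappa:.2f}")
```

Both tables show 90% raw agreement, yet kappa is 0.80 in the balanced case and about 0.23 in the rare case, because chance agreement rises to roughly 0.87 when nearly every case falls in one category. Reporting percent agreement and the marginal totals alongside kappa lets readers see this.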

In healthcare research, some experts have questioned whether the traditional interpretation thresholds proposed by Landis and Koch (1977) are sufficiently stringent, particularly for clinical diagnoses where misclassification can have serious consequences [35]. For high-stakes diagnostic classifications, values below 0.60 are often considered inadequate.

Experimental Protocols and Methodological Considerations

Inter-Rater Reliability Study Protocol:

- Rater Training: Standardized training sessions for all raters using explicit criteria for classification, with practice cases not included in the formal reliability assessment [35].

- Study Design: Independent rating of the same cases by multiple raters blinded to each other's assessments and clinical information that might bias ratings [35].

- Case Selection: Selection of a representative sample of cases that reflects the spectrum of conditions encountered in practice, including borderline cases [35].

- Data Collection: Structured data collection using standardized forms that operationalize all classification categories [35].

- Statistical Analysis: Calculation of both percent agreement and Cohen's kappa to provide complementary information about reliability [35].

- Disagreement Resolution: Analysis of cases with disagreement to identify systematic differences in interpretation and refine classification criteria.

Methodological Considerations:

- Kappa is appropriate for nominal (categorical) data without inherent ordering [38] [36].

- For ordinal data, where the categories have a meaningful order (e.g., severity ratings), weighted kappa is more appropriate because it gives partial credit for near agreements [15].

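- When there are more than two raters, Fleiss' kappa is the commonly used multi-rater counterpart to Cohen's kappa [35]. Strictly, it generalizes Scott's pi rather than Cohen's kappa, so the two need not coincide when applied to exactly two raters.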

- Kappa values can be misleading when category prevalence is very high or low, as the chance agreement probability increases in these situations [36].

- Confidence intervals should be reported for kappa coefficients to indicate the precision of the estimate [36].
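The partial-credit idea behind weighted kappa can be sketched as follows. Disagreements are penalized in proportion to the distance between the chosen categories (linear weights) or its square (quadratic weights). The function name, the weighting options, and the 3×3 table of severity ratings are assumptions made for illustration.

```python
import numpy as np


def weighted_kappa(table, weights: str = "linear") -> float:
    """Weighted kappa from a k-by-k table of counts (rows: rater 1, columns: rater 2)."""
    counts = np.asarray(table, dtype=float)
    if counts.ndim != 2 or counts.shape[0] != counts.shape[1] or counts.shape[0] < 2:
        raise ValueError("expected a square table with at least two categories")
    k = counts.shape[0]
    observed = counts / counts.sum()
    # Expected proportions under independence of the two raters' marginals.
    expected = np.outer(observed.sum(axis=1), observed.sum(axis=0))
    idx = np.arange(k)
    distance = np.abs(idx[:, None] - idx[None, :]) / (k - 1)  # 0 on the diagonal
    if weights == "quadratic":
        distance = distance ** 2
    elif weights != "linear":
        raise ValueError("weights must be 'linear' or 'quadratic'")
    return 1.0 - (distance * observed).sum() / (distance * expected).sum()


# Hypothetical severity ratings (mild, moderate, severe) from two raters.
severity = [
    [20, 5, 0],
    [4, 15, 6],
    [1, 5, 19],
]
print(round(weighted_kappa(severity, "linear"), 3))
print(round(weighted_kappa(severity, "quadratic"), 3))
```

With only two categories the distance matrix has a single off-diagonal weight of 1, so the function reduces to unweighted Cohen's kappa. A confidence interval is not computed here; a bootstrap over cases is one common way to obtain one.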

Decision Framework for Researchers

Figure 1: Decision Framework for Selecting Appropriate Reliability Statistics
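A selection rule of this kind can be sketched in code. The version below is assembled only from Table 1 and the methodological considerations in the preceding sections. It is an assumption about the selection logic, not a transcription of Figure 1, and the function name and argument values are hypothetical.

```python
def choose_reliability_statistic(target: str, data_type: str, n_raters: int = 2) -> str:
    """Suggest a coefficient.

    target:    "items" (consistency of items within a scale) or
               "raters" (agreement between independent raters)
    data_type: "dichotomous", "nominal", "ordinal", or "interval"
    """
    if target == "items":
        if data_type == "dichotomous":
            return "KR-20"
        if data_type in ("ordinal", "interval"):
            return "Cronbach's alpha"
        raise ValueError("item consistency for nominal data is not covered here")
    if target == "raters":
        if n_raters < 2:
            raise ValueError("agreement needs at least two raters")
        if data_type == "interval":
            raise ValueError("rater agreement on interval data is not covered here")
        if n_raters > 2:
            return "Fleiss' kappa"
        if data_type == "ordinal":
            return "Weighted kappa"
        return "Cohen's kappa"
    raise ValueError("target must be 'items' or 'raters'")


print(choose_reliability_statistic("items", "ordinal"))
print(choose_reliability_statistic("raters", "nominal"))
print(choose_reliability_statistic("raters", "ordinal"))
print(choose_reliability_statistic("raters", "nominal", n_raters=4))
```

Cases the text does not address raise an error instead of guessing, so the function never recommends a statistic that this guide has not discussed.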

Application in Cognitive Terminology Classification Research

In cognitive terminology classification research, both reliability measures play complementary but distinct roles. Cronbach's alpha is essential for validating cognitive assessment batteries where multiple items or tests purportedly measure the same cognitive domain [37]. For example, when developing a memory assessment battery that includes multiple subtests for verbal recall, visual memory, and recognition memory, Cronbach's alpha would indicate whether these subtests consistently measure the broader "memory" construct.

Cohen's kappa finds critical application in ensuring consistent diagnostic classification of cognitive impairment across clinicians or research diagnosticians [37] [39]. Studies examining cognitive impairment classification in multiple sclerosis have demonstrated how different classification criteria yield varying prevalence rates, highlighting the importance of establishing reliable diagnostic procedures through kappa statistics [37] [39]. The selection of specific cut-offs (e.g., 1.5 SD vs. 2 SD below normative means) significantly impacts classification reliability, with kappa providing a standardized metric to compare agreement across different diagnostic approaches [37].
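The effect of the cut-off choice can be illustrated with a small sketch that classifies the same set of z-scores under a 1.5 SD rule and a 2 SD rule, then computes kappa between the two resulting classifications. The z-scores are invented, the function names are hypothetical, and treating a score at or below the cut-off as impaired is an assumption of this sketch.

```python
def classify(z_scores: list[float], cutoff_sd: float) -> list[bool]:
    """Flag a case as impaired when its z-score is at or below -cutoff_sd."""
    return [z <= -cutoff_sd for z in z_scores]


def cohens_kappa(r1: list, r2: list) -> float:
    """Cohen's kappa for two equally long lists of category labels."""
    if len(r1) != len(r2) or not r1:
        raise ValueError("ratings must be non-empty and of equal length")
    n = len(r1)
    p_o = sum(x == y for x, y in zip(r1, r2)) / n
    categories = set(r1) | set(r2)
    p_e = sum((r1.count(c) / n) * (r2.count(c) / n) for c in categories)
    return (p_o - p_e) / (1.0 - p_e)


# Hypothetical z-scores for 20 patients on a single cognitive test.
z = [-2.6, -2.1, -1.9, -1.7, -1.6, -1.2, -0.8, -0.5, -0.3, 0.0,
     0.2, 0.4, 0.7, 1.0, 1.3, -2.3, -1.8, -0.9, 0.1, 0.6]

impaired_15 = classify(z, 1.5)
impaired_20 = classify(z, 2.0)

print("prevalence at 1.5 SD:", sum(impaired_15) / len(z))
print("prevalence at 2.0 SD:", sum(impaired_20) / len(z))
print("kappa between criteria:", round(cohens_kappa(impaired_15, impaired_20), 3))
```

In this invented sample the stricter rule lowers the estimated prevalence from 35% to 15%, and agreement between the two criteria is only about 0.49 even though every case flagged at 2 SD is also flagged at 1.5 SD.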

Essential Research Reagent Solutions

Table 4: Essential Methodological Components for Reliability Studies

| Research Component | Function in Reliability Assessment | Examples/Standards |
|---|---|---|
| Standardized Assessment Instruments | Provides structured framework for consistent data collection | BRB-N (Brief Repeatable Battery of Neuropsychological Tests) [39] |
| Rater Training Protocols | Ensures consistent application of classification criteria | Standardized training sessions with practice cases [35] |
| Statistical Software Packages | Computes reliability coefficients with appropriate methods | SPSS, R packages (psych, irr) [33] [15] |
| Classification Criteria Manuals | Operationalizes diagnostic decisions with explicit rules | Explicit cut-offs (e.g., 1.5 SD below normative mean) [37] [39] |
| Data Collection Platforms | Standardizes data capture across sites/raters | Electronic data capture systems, standardized forms [35] |

Comparative Analysis and Research Implications

Limitations and Complementary Use