From Encoding to Long-Term Storage: Decoding the Neural Mechanisms of Memory Construction and Consolidation

Abstract

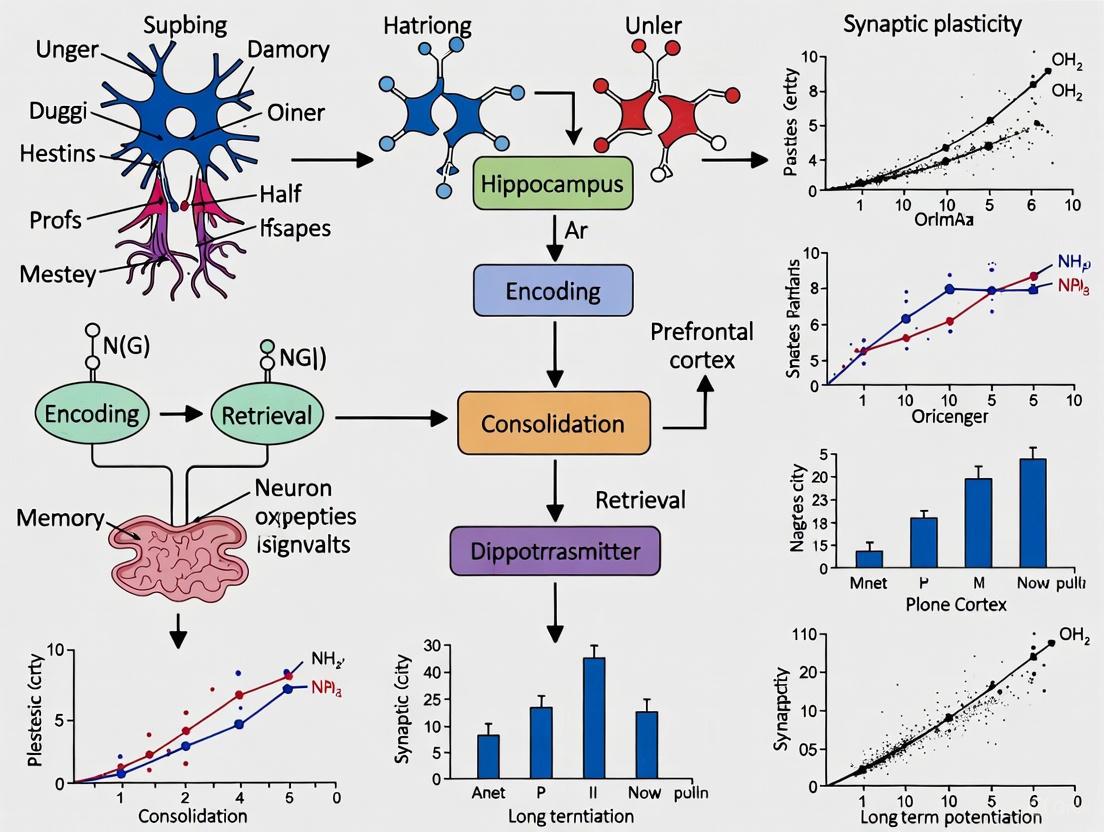

This article synthesizes current research on the neural mechanisms underlying memory construction and systems consolidation, processes critical for transforming labile experiences into stable long-term memories. We explore the foundational dialogue between the hippocampus and neocortex, highlighting the role of hippocampal replay, sharp-wave ripples, and sleep oscillations in guiding memory reorganization. Methodological advances, including large-scale neural ensemble recording and generative computational models, are detailed. The review further examines how consolidation failures manifest in neurological disorders and discusses strategies to optimize this process. Finally, we contrast leading theoretical frameworks and validate mechanistic insights through evidence from pathological conditions, offering a comprehensive perspective for researchers and drug development professionals aiming to translate these discoveries into novel therapeutic interventions.

Blueprint of a Memory: Hippocampal-Neocortical Dialogue and Systems Consolidation

Defining Memory Construction and Systems Consolidation

Memory formation is not a passive recording process but an active, dynamic construction. Episodic memories are (re)constructed mental representations that share neural substrates with imagination and combine unique sensory features with schema-based predictions [1]. This constructive process enables remarkable adaptability but also introduces characteristic distortions that increase as memories consolidate over time. The neurobiological mechanisms underlying memory construction and systems consolidation represent a fundamental research focus in neuroscience, with significant implications for understanding both normal cognitive functioning and memory-related psychopathologies.

The medial temporal lobe system, particularly the hippocampus, plays an indispensable role in initial memory formation, while long-term storage depends on widely distributed neocortical networks [2]. This transition from hippocampal-dependent to neocortical-dependent memory represents the core of systems consolidation—a process that unfolds over timescales ranging from hours to years [3]. Understanding these mechanisms provides critical insights for therapeutic interventions targeting memory disorders.

Core Mechanisms and Theoretical Frameworks

Computational Models of Memory Construction

Recent computational frameworks propose that memory construction relies on generative models trained by hippocampal replay processes. According to this view, the hippocampus rapidly encodes events using autoassociative networks, then trains generative models (implemented as variational autoencoders) in neocortical regions to recreate sensory experiences from latent variable representations [1]. This mechanism efficiently combines limited hippocampal storage for novel information with neocortical schemas for predictable elements.
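The autoassociative step described above can be illustrated with a small sketch. This is a classical Hopfield network with Hebbian weights, used here as a simpler stand-in for the modern Hopfield networks mentioned later in this article; the pattern sizes, noise level, and function names (`store_patterns`, `recall`) are illustrative assumptions, not values from the cited model [1].

```python
import numpy as np

def store_patterns(patterns: np.ndarray) -> np.ndarray:
    """Build a Hebbian weight matrix from rows of +1/-1 patterns."""
    n_units = patterns.shape[1]
    weights = patterns.T @ patterns / n_units
    np.fill_diagonal(weights, 0.0)  # no self-connections
    return weights

def recall(weights: np.ndarray, cue: np.ndarray, n_steps: int = 10) -> np.ndarray:
    """Iterate synchronous updates from a partial or noisy cue."""
    state = cue.copy()
    for _ in range(n_steps):
        new_state = np.where(weights @ state >= 0, 1, -1)
        if np.array_equal(new_state, state):
            break  # reached a fixed point
        state = new_state
    return state

rng = np.random.default_rng(0)
n_units = 200
episodes = rng.choice([-1, 1], size=(3, n_units))  # three hypothetical "events"
weights = store_patterns(episodes)

# Corrupt 15% of the first episode to mimic a partial retrieval cue.
cue = episodes[0].copy()
flipped = rng.choice(n_units, size=30, replace=False)
cue[flipped] *= -1

recovered = recall(weights, cue)
overlap = float(recovered @ episodes[0]) / n_units  # 1.0 means perfect recall
print(f"overlap with stored episode: {overlap:.2f}")
```

With only a few stored patterns, the network should complete the corrupted cue back to the stored episode, which is the pattern-completion property that makes an autoassociative store useful for rapid one-shot encoding.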

Table 1: Key Features of Memory Construction and Consolidation

| Feature | Pre-Consolidation | Post-Consolidation |
|---|---|---|
| Primary Neural Substrate | Hippocampus and medial temporal lobe | Distributed neocortical networks |
| Representation Format | Detailed, context-rich, sensory-like | Abstracted, gist-based, semantic-like |
| Vulnerability to Interference | High | Low (except during reconsolidation) |
| Dependence on Hippocampus | Critical | Diminished or unnecessary |
| Susceptibility to Schema-Based Distortion | Lower | Higher |

The process involves hippocampal "replay" during rest periods, where patterns of neural activity reactivate recently encoded memories [1]. This replay trains generative networks in entorhinal, medial prefrontal, and anterolateral temporal cortices to reconstruct experiences, supporting both memory recall and imagination [1]. As consolidation progresses, these generative networks become increasingly capable of reconstructing events without hippocampal contribution, though detailed episodic recollection remains hippocampus-dependent [1].

Systems Consolidation: From Temporary Storage to Permanent Memory

Systems consolidation refers to the process whereby memories initially dependent on the hippocampus are gradually reorganized over time, with the hippocampus becoming less important for storage and retrieval as more permanent memories develop in distributed neocortical regions [2]. This process does not involve literal "transfer" of memories from hippocampus to neocortex, but rather gradual changes in neocortical networks that establish stable long-term memory by increasing complexity, distribution, and connectivity among multiple cortical regions [2].

Evidence for systems consolidation comes primarily from studies of retrograde amnesia, which demonstrate that damage to the hippocampus impairs memories formed in the recent past while typically sparing memories formed in the more remote past [2]. The duration of this gradient varies significantly, from 1-3 years for semantic memories to potentially decades for detailed autobiographical memories [2].

Table 2: Timescales of Memory Consolidation Processes

| Process | Typical Duration | Key Biological Mechanisms |
|---|---|---|
| Synaptic Consolidation | Minutes to hours | Protein synthesis, post-translational modifications, long-term potentiation |
| Cellular/Molecular Consolidation | Several hours to days | Gene expression, CREB-C/EBP pathway activation, structural changes |
| Systems Consolidation | Weeks to years | Network reorganization, hippocampal-neocortical dialogue, neural replay |

Competing Theoretical Perspectives

The standard model of systems consolidation proposes gradual neocortical independence from hippocampal control [2]. In contrast, multiple trace theory (later elaborated as the transformation hypothesis) maintains that detailed episodic memories remain permanently dependent on the hippocampus, while semantic (gist-based) memories can become hippocampus-independent [2]. This theoretical division explains why patients with hippocampal damage may retain remote semantic knowledge while losing detailed autobiographical memories from the same period.

Molecular Mechanisms of Memory Consolidation

CREB-C/EBP Pathway and Gene Expression

Long-term memory formation requires de novo gene expression and protein synthesis, which distinguishes it from short-term memory, which relies on existing networks and post-translational modifications [3]. The CREB-C/EBP molecular pathway represents an evolutionarily conserved mechanism for long-term plasticity and memory formation across species from invertebrates to mammals [3].

Research using rat inhibitory avoidance tasks has demonstrated that in the dorsal hippocampus, glucocorticoid receptors control rapid learning-dependent increases in CREB phosphorylation and expression of immediate early genes such as Arc, alongside increases in synaptic phospho-CaMKIIα, phospho-synapsin-I, and AMPA receptor subunit GluA1 expression [3]. These molecular changes constitute the fundamental substrate of cellular consolidation.

Stress Hormones and Memory Modulation

Emotionally charged or salient events are typically better remembered than neutral experiences, an effect mediated by stress hormones including noradrenaline and glucocorticoids [3]. The relationship between stress level and memory retention follows an inverted U-shaped curve, in which moderate stress enhances memory while extreme or chronic stress impairs it [3].

Glucocorticoid receptors regulate multiple intracellular signaling pathways necessary for memory consolidation, including those activated by CREB, MAPK, CaMKII, and BDNF [3]. Research has identified BDNF-dependent signaling as a key downstream effector of glucocorticoid receptor activation during memory consolidation, with BDNF administration rescuing both molecular impairments and amnesia caused by glucocorticoid receptor inhibition [3].

Experimental Approaches and Methodologies

Behavioral Paradigms for Studying Memory Consolidation

Key Experimental Protocols

Table 3: Experimental Protocols for Studying Memory Consolidation

| Method | Protocol Details | Applications | Key Outcome Measures |
|---|---|---|---|
| Inhibitory Avoidance (IA) | Single-trial learning where animal receives foot shock in specific context; tests contextual fear memory [3] | Molecular mechanisms of consolidation; stress-memory interactions | Latency to re-enter shock context; molecular changes in dorsal hippocampus |
| Lesion Studies | Selective hippocampal damage at different time points after learning [2] | Systems consolidation timelines; hippocampal dependence | Retrograde amnesia gradient; remote vs. recent memory preservation |
| Pharmacological Interventions | Protein synthesis inhibitors (anisomycin); receptor antagonists; BDNF administration [3] | Molecular pathways; necessary mechanisms | Rescue or impairment of long-term memory with specific molecular manipulations |
| Neuroimaging (fMRI) | Recall of recent vs. remote autobiographical memories [2] | Neural correlates of systems consolidation in humans | Hippocampal vs. neocortical activation patterns across time |
| Genetic Manipulations | CREB knockout; optogenetic silencing of specific pathways [3] | Causal molecular mechanisms; circuit-specific contributions | Time-limited memory impairments; pathway-specific deficits |

The inhibitory avoidance task has proven particularly valuable for memory consolidation research because its single-trial learning nature allows researchers to identify rapid molecular changes occurring after encoding and track their progression over time [3]. This paradigm has been instrumental in elucidating the role of dorsal hippocampus in contextual association formation and the specific molecular cascades required for consolidation.

The Scientist's Toolkit: Research Reagents and Methodologies

Table 4: Essential Research Reagents for Memory Consolidation Studies

| Reagent/Resource | Function/Application | Example Use in Memory Research |
|---|---|---|
| Protein Synthesis Inhibitors (e.g., Anisomycin) | Block de novo protein synthesis | Testing necessity of protein synthesis for long-term memory formation [3] |
| CREB Modulators | Activate or inhibit CREB pathway | Establishing causal role of CREB in long-term memory consolidation [3] |
| BDNF and TrkB Modulators | Manipulate BDNF signaling pathway | Rescuing memory deficits from glucocorticoid receptor inhibition [3] |
| Glucocorticoid Receptor Agonists/Antagonists | Modulate stress hormone signaling | Investigating stress-memory interactions and inverted U-shape relationship [3] |
| Optogenetic Tools | Precise temporal control of specific neural populations | Determining necessity and sufficiency of specific circuits during consolidation windows [2] |
| Activity Markers (e.g., c-Fos, Arc) | Identify recently activated neurons | Mapping neural ensembles engaged during memory encoding and consolidation [3] |
| Modern Hopfield Networks | Computational modeling of autoassociative memory | Simulating hippocampal memory binding and replay processes [1] |
| Variational Autoencoders | Implementing generative models | Modeling neocortical schema formation and memory reconstruction [1] |

Clinical Implications and Future Directions

Understanding memory construction and consolidation mechanisms has profound implications for treating memory-related disorders. The discovery of reconsolidation—where retrieved memories temporarily return to a labile state before restabilizing—has opened novel therapeutic avenues [3]. Potential applications include weakening maladaptive memories in post-traumatic stress disorder, strengthening fragile memories in age-related cognitive decline, and developing cognitive enhancers that target specific consolidation mechanisms.

The neurobiological framework outlined in this review continues to evolve through integration of computational modeling with molecular neuroscience. Future research will likely focus on linking specific molecular pathways to systems-level consolidation processes, developing temporally precise interventions for memory disorders, and exploiting the constructive nature of memory to enhance adaptive cognitive functioning while minimizing distortion.

The Hippocampus as a Temporary Scaffold for New Memories

The hippocampus is widely recognized as a critical brain structure for memory formation. However, contemporary research has moved beyond this simple characterization to reveal its specific role as a temporary scaffold that supports the initial construction and stabilization of new memories before their redistribution to neocortical long-term storage. This scaffolding function represents a core component of the brain's memory systems, enabling rapid learning while maintaining cognitive stability. Understanding the precise neural mechanisms through which the hippocampus provides this initial support framework is essential for unraveling the pathophysiology of memory disorders and developing targeted therapeutic interventions.

This whitepaper synthesizes recent advances from multiple domains of neuroscience research—including electrophysiology, computational modeling, and molecular biology—to present a comprehensive mechanistic account of hippocampal scaffolding. We examine the specific circuit-level operations, neural coding strategies, and system-level interactions that collectively enable the hippocampus to serve as a temporary structural support for nascent memory traces. The framework presented here has significant implications for drug development targeting memory-related disorders including Alzheimer's disease, schizophrenia, and post-traumatic stress disorder.

Core Mechanism: Complementary Learning Systems

The hippocampus operates within the Complementary Learning Systems (CLS) framework, in which it specializes in the rapid encoding of novel experiences while the neocortex gradually extracts statistical regularities across multiple experiences [1]. As a temporary scaffold, the hippocampus initially binds distributed cortical elements into coherent memory traces, then progressively supports the strengthening of direct cortical-cortical connections through controlled reactivation.

Computational Foundations

Recent generative models of memory provide a computational basis for understanding hippocampal scaffolding. These models propose that memory consolidation occurs when hippocampal replay trains generative networks (variational autoencoders) in the neocortex to reconstruct sensory experiences from latent variable representations [1]. In this framework:

- The hippocampus serves as an autoassociative "teacher" network that initially stores specific episodes

- Neocortical regions function as generative "student" networks that gradually learn to reconstruct experiences

- Hippocampal replay during offline states drives the transfer of information from the teacher to student networks

- As consolidation proceeds, the generative network becomes increasingly capable of reconstructing experiences without hippocampal support

This process optimizes the use of limited hippocampal storage for new and unusual information while delegating predictable elements to neocortical schemas [1]. The scaffolding function is therefore most critical during the initial period when the neocortical generative models cannot yet accurately reconstruct a novel experience.
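The teacher-student arrangement listed above can be sketched in a few lines. The example below is a deliberately simplified illustration: a linear autoencoder stands in for the variational autoencoder of the cited model [1], the "hippocampal store" is just an array of episodes, and every size, learning rate, and variable name is an assumption chosen for readability.

```python
import numpy as np

rng = np.random.default_rng(1)

# Hypothetical "experiences": 50-dimensional vectors that share a
# 3-dimensional latent structure (the schema) plus a little noise.
n_episodes, n_features, n_latent = 40, 50, 3
schema = rng.normal(size=(n_latent, n_features))
episodes = rng.normal(size=(n_episodes, n_latent)) @ schema
episodes += 0.05 * rng.normal(size=episodes.shape)

# "Teacher": the hippocampal store holds the episodes verbatim.
hippocampal_store = episodes

# "Student": a linear autoencoder standing in for the neocortical
# generative network (a VAE in the cited model).
encoder = 0.01 * rng.normal(size=(n_features, n_latent))
decoder = 0.01 * rng.normal(size=(n_latent, n_features))

def reconstruction_error(x: np.ndarray) -> float:
    """Mean squared error of the student's reconstruction of x."""
    return float(np.mean((x @ encoder @ decoder - x) ** 2))

error_before = reconstruction_error(episodes)

learning_rate = 0.001
for _ in range(3000):  # each iteration plays the role of one replay event
    batch = hippocampal_store[rng.choice(n_episodes, size=8)]
    latent = batch @ encoder
    residual = latent @ decoder - batch
    # Gradients of 0.5 * squared error, averaged over the replayed batch.
    grad_decoder = latent.T @ residual / len(batch)
    grad_encoder = batch.T @ (residual @ decoder.T) / len(batch)
    decoder -= learning_rate * grad_decoder
    encoder -= learning_rate * grad_encoder

error_after = reconstruction_error(episodes)
print(f"reconstruction error: {error_before:.3f} -> {error_after:.3f}")
```

The student never sees the original experiences directly, only samples replayed from the store. As its reconstruction error falls, it can regenerate the episodes on its own, which corresponds to the point at which the scaffold is no longer needed for these memories.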

Neural Signaling Pathways Supporting Scaffolding

The hippocampal scaffolding mechanism relies on precisely coordinated signaling between specific neural populations. Research has identified two parallel long-range projections from the lateral entorhinal cortex (LEC) to the CA3 region that conjointly stabilize memory representations [4].

Dual-Component Input System

The stabilization of hippocampal place maps during learning depends on the integrated action of two distinct input pathways from the lateral entorhinal cortex to CA3:

- LEC Glutamatergic (LECGLU) projections drive excitation in CA3 but also substantial feedforward inhibition that fine-tunes neuronal firing

- LEC GABAergic (LECGABA) projections suppress local inhibition to disinhibit CA3 activity with compartment- and pathway-specificity

This dual-input system creates a synergistic effect: LECGLU provides the excitatory drive, while LECGABA selectively boosts the somatic output evoked by integrated LECGLU and CA3 recurrent inputs [4]. The coordinated action of these pathways supports the formation and maintenance of CA3 place cells across contexts and over time, enabling the hippocampus to maintain stable memory scaffolds during ongoing learning.
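The logic of the two pathways can be made concrete with a toy rate model. This is only a sketch of the qualitative idea (excitation, feedforward inhibition, and disinhibition); the function `ca3_output`, its gain parameters, and the threshold-linear form are assumptions for illustration and are not taken from the cited study [4].

```python
def ca3_output(lec_glu: float, lec_gaba: float, recurrent: float = 0.0,
               ff_inhibition_gain: float = 0.8,
               disinhibition_gain: float = 1.0) -> float:
    """Toy CA3 firing rate given LEC glutamatergic and GABAergic drive."""
    excitation = lec_glu + recurrent
    # Feedforward inhibition recruited by the glutamatergic input.
    inhibition = ff_inhibition_gain * lec_glu
    # LEC GABAergic input suppresses local interneurons, scaling inhibition down.
    inhibition *= max(0.0, 1.0 - disinhibition_gain * lec_gaba)
    # Threshold-linear output: rates cannot be negative.
    return max(0.0, excitation - inhibition)

glu_only = ca3_output(lec_glu=1.0, lec_gaba=0.0)   # excitation mostly cancelled
gaba_only = ca3_output(lec_glu=0.0, lec_gaba=1.0)  # nothing to disinhibit
both = ca3_output(lec_glu=1.0, lec_gaba=1.0)       # full excitatory drive passes
print(glu_only, gaba_only, both)
```

In this sketch neither input alone produces strong output, while the two together do, which is one simple way to read the synergy described above.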

[Diagram not reproduced: coordinated LECGLU and LECGABA signaling pathways from the lateral entorhinal cortex to CA3.]

Dynamic Representation Updating

Beyond stable signaling, the hippocampus demonstrates remarkable flexibility in updating its representations as animals learn. In virtual navigation tasks, hippocampal neurons initially show consistent patterns when animals encounter novel environments. As learning progresses, these representations undergo decorrelation, with distinct neuronal populations coming to represent different reward states or task conditions [5]. This dynamic reorganization enables the hippocampal scaffold to adapt to behavioral relevance rather than simply mapping physical space.

This representational flexibility aligns with emerging evidence that hippocampal cells function less as pure "place cells" and more as "state cells" that encode an animal's situation within a broader cognitive context [5]. The scaffolding function therefore involves both stability to preserve memory integrity and flexibility to incorporate new learning.

Quantitative Evidence for Hippocampal Scaffolding

Biomechanical Vulnerability and Protection

Research on closed head injury in rat models provides quantitative evidence supporting the scaffolding concept by demonstrating how hippocampal vulnerability changes with developmental factors. A recent study established quadratic regression models describing the effects of impact strength and body weight on cell loss across hippocampal subregions [6].

Table 1: Quantitative Relationship Between Impact Strength, Body Weight, and Hippocampal Cell Loss

| Hippocampal Region | Impact Strength Effect | Body Weight Effect | Relationship Type |
|---|---|---|---|
| CA1 | Increased cell loss | Reduced cell loss | Quadratic, non-linear |
| CA3 | Increased cell loss | Reduced cell loss | Quadratic, non-linear |
| Dentate Gyrus (DG) | Increased cell loss | Reduced cell loss | Quadratic, non-linear |

The study found that increasing impact strength resulted in a higher proportion of cell loss, whereas increasing body weight was associated with a reduction in cell loss [6]. This protective effect of maturation parallels the progressive reduction in hippocampal dependency as memories consolidate, suggesting that the scaffold becomes more resilient as brain systems develop.

Behavioral correlates aligned with these pathological findings: higher impact strength prolonged the time required for rats to locate the hidden platform in the Morris water maze, while higher body weight shortened the platform-finding time under the same impact strength [6]. These behavioral measures demonstrate the functional consequences of compromised hippocampal scaffolding.
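A quadratic model of this kind can be sketched as a second-order response surface in two predictors. The example below fits one by ordinary least squares on synthetic data. The data, the coefficients in `true_coeffs`, and the normalized units are all invented for illustration, and ordinary least squares is used as a plain stand-in, since the exact fitting procedure of the cited study [6] is not reproduced here.

```python
import numpy as np

def quadratic_design(impact, weight) -> np.ndarray:
    """Design matrix with columns 1, x1, x2, x1^2, x2^2, x1*x2."""
    impact = np.asarray(impact, dtype=float)
    weight = np.asarray(weight, dtype=float)
    return np.column_stack([
        np.ones_like(impact), impact, weight,
        impact ** 2, weight ** 2, impact * weight,
    ])

rng = np.random.default_rng(2)
impact = rng.uniform(0.0, 1.0, size=60)  # normalized impact strength (hypothetical)
weight = rng.uniform(0.0, 1.0, size=60)  # normalized body weight (hypothetical)

# Invented coefficients: loss rises with impact and falls with weight.
true_coeffs = np.array([0.10, 0.50, -0.20, 0.15, 0.05, -0.10])
cell_loss = quadratic_design(impact, weight) @ true_coeffs
cell_loss += 0.01 * rng.normal(size=60)  # synthetic measurement noise

# Ordinary least-squares fit of the quadratic surface.
coeffs, *_ = np.linalg.lstsq(quadratic_design(impact, weight), cell_loss, rcond=None)

# Predicted proportion of cell loss for a strong impact on a light animal.
predicted = float((quadratic_design([0.8], [0.3]) @ coeffs)[0])
print(np.round(coeffs, 2), round(predicted, 3))
```

Fitting one such surface per subregion (CA1, CA3, DG) would yield the kind of region-specific relationships summarized in the table above, with the sign of the linear terms capturing the opposing effects of impact strength and body weight.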

Microstructural Changes in Aging and Alzheimer's Disease

Quantitative MRI (qMRI) studies provide additional evidence for the hippocampal scaffolding concept by revealing microstructural changes that precede macroscopic atrophy in aging and Alzheimer's disease (AD). These techniques allow in vivo mapping of tissue composition changes including demyelination, iron deposition, and alterations in water content [7].

Table 2: Hippocampal Microstructural Markers in Aging and Early Alzheimer's Disease

| qMRI Parameter | Sensitivity | Aging Change | AD Change | Implied Tissue Pathology |
|---|---|---|---|---|
| R1 | Macromolecular environment, myelin, iron | Decrease | Decrease | Demyelination, iron deposition |
| MTsat | Macromolecular concentration, myelin | Decrease | Decrease | Demyelination |
| R2* | Iron content, magnetic susceptibility | Increase | Increase | Iron accumulation |
| PD | Water content | Variable | Increase | Edema, inflammation |

Studies show these microstructural alterations follow specific spatial patterns within the hippocampus, with distal regions (subiculum, CA1) showing greater vulnerability than proximal regions (dentate gyrus) in both aging and early AD [7]. This differential vulnerability aligns with the scaffolding concept, as these regions participate in distinct circuits supporting memory formation and consolidation.

Experimental Protocols for Investigating Hippocampal Scaffolding

Closed Head Injury Model for Quantitative Assessment

The established protocol for investigating hippocampal injury mechanisms involves controlled head impact in rodent models [6]:

Animal Preparation:

- Use male Sprague-Dawley rats categorized by weight: light-weight (203.1 ± 8.2 g, 8 weeks), medium-weight (301.2 ± 8.8 g, 10 weeks), and heavy-weight (405.5 ± 12.3 g, 12 weeks)

- Anesthetize via mask inhalation of isoflurane (2% initial, increasing to 4.0-5.0% over 3-5 minutes)

- Affix a steel helmet (2 mm thick, 10 mm diameter) to the pre-scraped area at the midline between Bregma and Lambda

- Secure a mold with acceleration/angular velocity sensors approximately 5 mm from the helmet front edge

Impact Procedure:

- Position anesthetized rats in an acrylic box with sponge support, head placed on a 3 cm wide elastic support mesh

- Align the impact head fully with the helmet at a distance of 50 mm

- Initiate impact using the BIM-IV head impact machine, simultaneously activating the high-speed camera (1000 fps) and sensor data acquisition (10 kHz sampling)

- Continue isoflurane delivery until impact, then discontinue and monitor until mobility returns

Assessment Methods:

- Morris Water Maze testing at 24 hours post-injury with 5 days of behavioral training

- Hematoxylin-eosin staining of hippocampus for cell loss quantification

- Quadratic orthogonal regression modeling to relate impact strength, body weight, and hippocampal damage

Multi-Electrode Array Recording of Network Activity

For in vitro investigation of hippocampal network function, a detailed protocol exists for recording and analyzing neuronal network activity [8]:

Slice Preparation:

- Prepare acute mouse hippocampal slices using standard procedures

- Maintain slices in oxygenated artificial cerebrospinal fluid

Activity Induction and Recording:

- Induce neuronal network bursting activity using appropriate pharmacological agents or electrical stimulation

- Record bursting activity with multi-electrode arrays (MEAs)

- Maintain appropriate temperature and oxygenation throughout recordings

Data Analysis:

- Analyze network activity using MATLAB burst detection algorithms

- Quantify burst frequency, duration, amplitude, and spatial propagation patterns

- Apply statistical analyses to compare experimental conditions

This protocol enables investigation of fundamental network properties relevant to the scaffolding function, including synchronous activity, excitatory-inhibitory balance, and plasticity mechanisms.
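The burst-detection step can be illustrated with a minimal inter-spike-interval (ISI) threshold detector. The cited protocol [8] uses MATLAB; the sketch below is written in Python, and the function name `detect_bursts`, the 100 ms ISI threshold, and the three-spike minimum are illustrative assumptions rather than the protocol's actual parameters.

```python
import numpy as np

def detect_bursts(spike_times, max_isi: float = 0.1, min_spikes: int = 3):
    """Return (start_s, end_s, n_spikes) for each run of closely spaced spikes."""
    spike_times = np.sort(np.asarray(spike_times, dtype=float))
    bursts = []
    if spike_times.size == 0:
        return bursts
    start = 0  # index of the first spike in the current run
    for i in range(1, spike_times.size + 1):
        # A run ends at the last spike or when the next ISI exceeds the threshold.
        run_ends = (i == spike_times.size
                    or spike_times[i] - spike_times[i - 1] > max_isi)
        if run_ends:
            n_spikes = i - start
            if n_spikes >= min_spikes:
                bursts.append((float(spike_times[start]),
                               float(spike_times[i - 1]), n_spikes))
            start = i
    return bursts

# Hypothetical spike times (seconds) from a single electrode.
spikes = [0.10, 0.12, 0.15, 0.17, 1.00, 2.00, 2.03, 2.05, 2.50]
bursts = detect_bursts(spikes)
print(bursts)  # [(0.1, 0.17, 4), (2.0, 2.05, 3)]

recording_duration = 3.0  # seconds, assumed for this example
burst_rate = len(bursts) / recording_duration
mean_duration = float(np.mean([end - begin for begin, end, _ in bursts]))
```

Running the same detector on every electrode and comparing burst onset times across electrodes is one straightforward way to obtain the frequency, duration, and spatial propagation measures listed above.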

Table 3: Research Reagent Solutions for Hippocampal Scaffolding Investigations

| Research Tool | Specific Example | Function/Application |
|---|---|---|
| Head Impact Device | BIM-IV Animal Impact Machine [6] | Delivers controlled mechanical loads to study injury mechanisms |
| Behavioral Assessment | Morris Water Maze [6] | Evaluates spatial memory function dependent on hippocampal integrity |
| Neural Activity Recording | Multi-Electrode Arrays (MEAs) [8] | Records network-level activity in hippocampal slices |
| Computational Framework | Variational Autoencoders (VAEs) [1] | Models generative memory processes and consolidation |
| Genetic Risk Model | APOE4 Allele Carriers [7] | Studies genetic susceptibility to hippocampal dysfunction in AD |
| Quantitative MRI | Multiparametric Mapping (R1, MTsat, R2*, PD) [7] | Characterizes hippocampal microstructure in vivo |
| Neural Modulation | Optogenetic Control of LECGLU/LECGABA Pathways [4] | Manipulates specific input pathways to study scaffolding mechanisms |

[Diagram not reproduced: Hippocampal Scaffolding in Memory Consolidation, showing the complete process by which the hippocampus serves as a temporary scaffold during memory consolidation.]

Clinical Implications and Therapeutic Opportunities

Understanding the hippocampus as a temporary scaffold provides crucial insights for developing interventions for memory disorders. Three key clinical conditions illustrate the consequences when scaffolding mechanisms fail:

Alzheimer's Disease

In AD, hippocampal scaffolding functions are compromised through multiple mechanisms. Aberrant neural reactivation during sharp-wave ripples (SWRs) appears early in disease progression [9]. Quantitative MRI reveals microstructural changes in hippocampal tissue composition—including demyelination and iron deposition—that precede macroscopic atrophy [7]. These alterations disrupt the precise coordination required for effective memory stabilization and consolidation.

Schizophrenia

Schizophrenia involves disrupted hippocampal-prefrontal connectivity and abnormal SWRs [9]. The impaired neural replay prevents proper memory stabilization, contributing to the fragmentation of thought and memory observed in this condition. Recent work suggests these abnormalities may stem from disrupted excitatory-inhibitory balance within hippocampal circuits [4].

Post-Traumatic Stress Disorder

PTSD may involve over-stabilization of traumatic memories within the hippocampal scaffold, preventing proper contextualization and integration [4]. The hyper-stability of these maladaptive memory traces leads to their intrusive retrieval and impaired extinction learning.

Therapeutic Development

Drug development targeting memory disorders should consider the dual temporal aspects of hippocampal scaffolding: initial stabilization and subsequent transfer. Compounds that enhance the precision of neural reactivation during SWRs may improve consolidation, while interventions that modulate the excitatory-inhibitory balance in hippocampal-entorhinal circuits could optimize scaffolding efficiency. The quantitative measures and experimental protocols outlined in this whitepaper provide essential tools for evaluating such therapeutic approaches.

The Role of the Neocortex as the Long-Term Storage Site

Long-term memory storage is a cornerstone of adaptive behavior, and the neocortex is widely recognized as its ultimate repository. This process, known as systems consolidation, involves a dynamic reorganization of brain networks where memories, initially dependent on the hippocampus, gradually stabilize within distributed neocortical regions, becoming independent of the medial temporal lobe [2]. The traditional view posits a dual-system model, with the hippocampus supporting fast learning of episodic details, while the neocortex serves as a slow-learning platform for semantic knowledge and remote memories [10] [2]. This framework explains the time-limited role of the hippocampus; damage impairs recent but typically spares remote memories, indicating a shift in the memory's physiological substrate over time [2]. This review synthesizes contemporary evidence elucidating the neocortex's role, highlighting specific laminar architectures, cell types, and large-scale circuits that enable the formation and permanence of our oldest and most defining memories [11].

Key Neuroanatomical Substrates and Mechanisms

Neocortical Layer 1: A Locus for Associative Memory

Layer 1 of the neocortex is emerging as a critical site for long-term memory formation and storage. This layer is almost devoid of cell bodies but is the target of "an almost infinite number of long-range terminal nerve fibers," as observed by Ramón y Cajal [10]. These fibers convey feedback information from higher cortical areas and higher-order thalamic nuclei onto the distal apical dendrites of pyramidal neurons in layers 2/3 and 5 [10].

- Integration of Feed-Forward and Feedback Inputs: Pyramidal neurons associate simultaneous input to the basal dendrites (carrying feature-related, feed-forward information) with input to the apical tuft dendrites in Layer 1 (carrying contextual, feedback information). This integration occurs via the generation of explosive dendritic calcium spikes that dominate the neuron's output [10].

- Convergence of Memory-Related Signals: Multiple memory structures from outside the neocortex, including the amygdala (for fear memory), higher-order thalamus (for learning), and top-down neocortical inputs, converge on Layer 1. This suggests Layer 1 serves as a nexus where different memory-related criteria—such as novelty, emotional significance, or deviation from expectation—gate the stabilization of long-term associations [10].

- Inhibitory Circuitry and Plasticity: Local Layer 1 interneurons shape and modulate long-range contextual inputs. These inhibitory circuits are plastic and undergo experience-dependent changes, potentially controlling dendritic calcium spikes and modulating the selection and activation of engram cortical neurons [10].

The hypothesis that semantic memory is the long-term association of different contexts with particular features in neocortical Layer 1 provides a powerful, testable model for the cortical embodiment of knowledge [10].

Prefrontal and Temporal Cortices in Remote Memory Storage

Compelling evidence from rodent studies implicates specific neocortical regions in storing remote memories. The anterior cingulate cortex (AC) and prelimbic cortex (areas of the prefrontal cortex), along with the temporal cortex, show robust increases in activity specifically following remote memory retrieval [11]. Importantly, damage to or inactivation of these areas produces selective remote memory deficits, confirming their necessity [11]. A key discovery is a direct monosynaptic prefrontal-hippocampal projection, termed the AC–CA pathway, which originates in the anterior cingulate and projects to the CA1 and CA3 regions of the hippocampus [12]. This top-down pathway is causally involved in contextual memory retrieval; its activation induces recall, while its inhibition impairs retrieval, without affecting memory encoding [12].

Engram Cells in the Sensory Neocortex

The sensory neocortex is not merely a passive receiver of information but an active substrate for long-term memory storage. In the auditory cortex (AuC), a sparse population of neurons in layer 2/3 emerges as a physiological candidate for the engram. These cells, termed Holistic Bursting (HB) cells, transition from quiescence to a bursting mode through associative learning [13]. They invariantly express holistic information of learned composite sounds, responding with a burst of spikes to a specific learned chord but not to its individual component tones [13]. The same sparse HB cells embody the behavioral relevance of the learned sounds across the entire learning process, which pinpoints them as single-cell engram candidates for long-term memory storage in the sensory neocortex [13].

Table 1: Key Cell Types in Long-Term Memory Storage

| Cell Type / Population | Brain Region | Defining Characteristics | Proposed Role in Memory |
|---|---|---|---|
| Holistic Bursting (HB) Cells [13] | Auditory Cortex, Layer 2/3 | Emerge during learning; fire bursts to a learned composite sound but not to its isolated components. | Single-cell embodiment of a long-term sensory memory engram. |
| Sustained Place Cells [14] | Hippocampal CA1 | Maintain stable place fields across multiple days; over-represent salient task locations (e.g., rewards). | Form a stable, expanding memory representation of the environment and learned tasks. |
| Transient Place Cells [14] | Hippocampal CA1 | Place fields are unstable, appearing for ≤2 days. | Rapid, plastic encoding of immediate experience; unstable component of the network. |
| Layer 1-Targeting Pyramidal Neurons [10] | Neocortex, Layers 2/3 & 5 | Integrate feed-forward (basal) and contextual (apical tuft) inputs via dendritic calcium spikes. | Association of features with context; putative substrate for semantic memory. |

Quantitative Evidence from Recent Studies

Formation of a Stable Hippocampal-Cortical Representation

Longitudinal tracking of hippocampal CA1 place cells (PCs) in mice reveals how a stable memory representation forms with experience. As mice learned a task over 7 days, the population of PCs evolved. A subset of PCs, termed sustained PCs, progressively increased their stability, eventually dominating the representation [14]. These sustained PCs were not randomly distributed; they disproportionately encoded learned, task-relevant information.

Table 2: Quantitative Evolution of Hippocampal Place Cell Populations During Learning

| Parameter | Trend Over 7 Days of Learning | Functional Implication |

|---|---|---|

| Population of Sustained PCs [14] | Increased nearly threefold after 5 days. | Expansion of a stable memory representation. |

| Spatial Density at Salient Regions [14] | Significantly higher in sustained PCs than in transient PCs. | Stable cells preferentially encode learned, behaviorally relevant locations (rewards, cues). |

| Reward Condition Discriminability (CDI/PDI) [14] | Sustained PCs: CDI=0.172; Transient PCs: CDI=0.089. | Stable cells are more discriminative, reflecting refined task knowledge. |

| PC Onset Latency in Session [14] | Sustained PCs became active earlier in the session than transient PCs after day 1. | Stable cells are retrieved more rapidly upon task engagement, indicating efficient memory recall. |

Physiological Signature of a Cortical Engram

In the auditory cortex, Holistic Bursting (HB) cells show distinct physiological properties that satisfy key engram criteria. On the last day of training, the probability of a bursting response evoked by the learned chord in HB cells was almost 100%, compared to a spontaneous bursting rate of only 0.025 Hz [13]. Furthermore, the number of spikes in a learned chord-evoked burst was significantly greater than the arithmetic sum of spikes evoked by the four constituent tones presented individually, confirming their non-linear, holistic response property [13]. This specific, high-probability response underscores their role as a persistent cellular substrate for a specific memory [13].
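The supralinearity criterion described above reduces to a simple comparison between the chord-evoked spike count and the summed tone-evoked counts. The following is a minimal sketch under stated assumptions, not the analysis code of [13]: the function name `holistic_excess` and all spike counts are hypothetical, and a real analysis would add a statistical test across trials.

```python
from statistics import mean

def holistic_excess(chord_trials, tone_trials):
    """Mean chord-evoked spike count minus the arithmetic sum of the
    mean spike counts evoked by each constituent tone presented alone.

    A positive value indicates a supralinear (holistic) response.
    """
    chord_mean = mean(chord_trials)
    linear_sum = sum(mean(trials) for trials in tone_trials)
    return chord_mean - linear_sum

# Hypothetical spike counts per trial (illustrative values, not data from [13]).
chord = [6, 7, 5]                                  # learned chord
tones = [[1, 0, 1], [0, 1, 0], [1, 1, 0], [0, 0, 1]]  # four constituent tones
excess = holistic_excess(chord, tones)             # positive: burst exceeds linear sum
```

A value near zero or below would indicate that the chord response is no larger than a linear combination of the tone responses, which would not satisfy the holistic criterion.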

Detailed Experimental Protocols

Protocol 1: Longitudinal Imaging of Hippocampal Memory Ensembles

This protocol is used to track the formation of stable memory representations in the hippocampus [14].

- Animal Preparation: Transgenic mice expressing the calcium indicator GCaMP6f are surgically implanted with a cranial window over the hippocampus and a head-fixation plate.

- Behavioral Task: Head-fixed mice run on a linear treadmill enriched with tactile features. They are trained over 7 days to associate two alternating reward locations with specific visual cues.

- Data Acquisition: Using a two-photon microscope, Ca2+ activity from a single population of hippocampal CA1 pyramidal neurons is recorded daily as the mouse performs the task. Behavioral data (running, licking) are recorded simultaneously.

- Image Processing & Cell Registration: Motion correction and segmentation algorithms are applied to identify active neurons. The same neurons are tracked across all 7 days using automated registration with manual curation.

- Place Cell Identification: Neurons are classified as place cells (PCs) based on significant spatial tuning of their Ca2+ activity. A place field is defined as a contiguous region where activity exceeds 50% of the maximum firing rate.

- Stability Analysis: PCs are classified as "sustained" (active for >2 consecutive days with a stable place field location) or "transient" (active for ≤2 days). Population vectors are compared across days to assess representational stability.

- Information Content Analysis: Spatial density profiles around salient regions (reward, cue) are computed. A discrimination index (at cellular and population levels) is calculated to quantify how well neuronal activity distinguishes between the two reward conditions.
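The place-field and stability criteria in the protocol above can be sketched in a few lines. This is an illustrative sketch, not the pipeline of [14]: the function names are hypothetical, the tuning curve is treated as a non-circular track, the check for a stable place-field location is omitted, and a cell with place fields on more than two non-consecutive days is labeled transient here as a simplifying assumption.

```python
def place_field(tuning_curve, frac=0.5):
    """Return (first_bin, last_bin) of the contiguous region around the
    peak where activity exceeds `frac` of the maximum."""
    peak = max(range(len(tuning_curve)), key=tuning_curve.__getitem__)
    thresh = frac * tuning_curve[peak]
    lo = hi = peak
    while lo > 0 and tuning_curve[lo - 1] > thresh:
        lo -= 1
    while hi < len(tuning_curve) - 1 and tuning_curve[hi + 1] > thresh:
        hi += 1
    return lo, hi

def longest_run(flags):
    """Length of the longest run of consecutive truthy values."""
    best = run = 0
    for f in flags:
        run = run + 1 if f else 0
        best = max(best, run)
    return best

def classify_place_cell(is_pc_by_day):
    """'sustained' if the cell is a place cell on >2 consecutive days,
    'transient' if it is a place cell on some days but never >2 in a row.
    (Assumption: place-field location stability is not checked here.)"""
    if longest_run(is_pc_by_day) > 2:
        return "sustained"
    if any(is_pc_by_day):
        return "transient"
    return "not a place cell"

# Hypothetical 7-bin tuning curve and 7-day place-cell flags.
field = place_field([0, 1, 4, 10, 6, 2, 0])
label = classify_place_cell([1, 1, 1, 0, 0, 1, 0])
```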

Protocol 2: Identifying Cortical Engram Cells with Electrophysiology

This protocol combines chronic imaging with targeted patching to identify and physiologically characterize memory-holding neurons in the auditory cortex [13].

- Chronic Window Implantation: Mice are implanted with a cranial window over the auditory cortex to allow repeated optical access.

- Associative Learning: Head-fixed mice are trained in an auditory associative task over 6 days. Two novel composite chords are paired with water delivery from left or right spouts. Behavioral performance (correct lick direction) is tracked daily.

- Day-by-Day Calcium Imaging: Throughout training, two-photon Ca2+ imaging of L2/3 neurons in the auditory cortex is performed in response to the presentation of the training chords.

- Targeted Loose-Patch Recording: On the final training day, the cranial window glass is removed. Using the chronic imaging data as a guide, targeted loose-patch recordings are performed on identified neurons. This allows for high-temporal-resolution measurement of action potentials simultaneously with Ca2+ imaging, enabling a calibration between the two signals.

- Physiological Characterization:

  - Sound Evoked Responses: The neuron's response to the trained chords and their individual constituent tones (never presented during learning) is recorded.

  - Spontaneous Activity: Spontaneous firing is recorded in the absence of sound to establish a baseline.

  - Burst Analysis: Responses are analyzed for burst events. A neuron is classified as a Holistic Bursting (HB) cell if it responds to a trained chord with a burst (Δf/f ≥ 0.8 in imaging, corresponding to multiple spikes in electrophysiology) but shows a significantly weaker response to the isolated tones.

Visualization of Mechanisms and Workflows

Neocortical Layer 1 Memory Hypothesis

Systems Consolidation and Cortical Storage

The Scientist's Toolkit: Key Research Reagents & Models

Table 3: Essential Reagents and Models for Investigating Cortical Memory Storage

| Tool / Reagent | Function in Research | Example Use Case |

|---|---|---|

| GCaMP6f/m (Genetically Encoded Ca2+ Indicator) [14] [13] | Reports neuronal activity via fluorescence changes upon calcium influx, enabling population imaging. | Longitudinal tracking of place cell activity in hippocampal CA1 [14] or sound-evoked responses in auditory cortex [13]. |

| Channelrhodopsin-2 (ChR2) / Halorhodopsin (eNpHR3.0) [12] | Allows precise activation (ChR2) or inhibition (eNpHR3.0) of specific neuronal populations with light (optogenetics). | Testing causal role of AC–CA pathway in memory retrieval [12]. |

| Retrograde Tracers (e.g., RV-tdT, CAV) [12] | Labels neurons that project to a specific injection site, revealing anatomical connectivity. | Identifying direct top-down projections from anterior cingulate cortex to hippocampus [12]. |

| Head-Fixed Behavioral Setups & Virtual Reality [14] [12] | Provides precise control of sensory stimuli and behavioral monitoring during neural recording/manipulation. | Training mice on linear treadmills with tactile features [14] or in virtual environments for memory tasks [12]. |

| Targeted Loose-Patch Electrophysiology [13] | Enables high-fidelity recording of action potentials from single neurons pre-identified by imaging. | Physiological characterization of Holistic Bursting cells in auditory cortex [13]. |

| Transgenic Mouse Lines | Drives expression of tools (e.g., GCaMP, opsins) in specific cell types or brain regions. | Ensuring robust and specific expression of indicators or actuators in cortical or hippocampal pyramidal neurons. |

Within the complex neural architecture of memory, the hippocampus acts as a central "teacher" network, guiding the process of memory construction and consolidation. This instructional role is primarily executed through neural replay—the rapid, sequential reactivation of neuronal firing patterns representing past experiences or potential future events during offline states like rest and sleep [15]. This process transforms transient experiences into stable, long-term memories distributed across the neocortex. Contemporary research reveals that replay is not a mere recapitulation of the past but a dynamic, selective mechanism biased by reward-prediction errors (RPE) and behavioral relevance [16] [17]. It facilitates the extraction of generalized knowledge and adaptive value structures, making it a cornerstone of flexible behavior. Understanding the mechanisms of hippocampal replay provides a critical framework for deciphering the neural basis of memory and developing novel therapeutic interventions for memory-related disorders.

The standard theory of systems consolidation posits that memories are initially encoded in the hippocampus and gradually transferred to the neocortex for long-term storage [16]. Neural replay is the hypothesized vehicle for this transfer. The hippocampus, by "replaying" compressed sequences of neural activity, effectively "teaches" the neocortex, guiding the reorganization and strengthening of cortical connections to form stable memory traces [15] [18].

This teaching function is highly selective. Not all experiences are replayed with equal strength; the hippocampus prioritizes information based on its adaptive significance. A growing body of evidence suggests that the key selection criterion is not reward itself, but the reward-prediction error (RPE)—the discrepancy between expected and actual outcomes, which is a fundamental teaching signal in reinforcement learning [17]. Furthermore, the content of replay can be fragmented or recombined, suggesting its role extends beyond simple memory strengthening to include inference, planning, and the construction of cognitive maps [16] [15]. This positions the hippocampal teacher not just as a simple recorder, but as an active, predictive simulator that extracts and reinforces valuable strategies from limited experiences.

Mechanistic Insights: How the Hippocampus Teaches

Structural and Circuit Foundations of Replay

Recent high-resolution studies have illuminated the nanoscale structural changes that underpin memory traces (engrams) and, by extension, the substrate for replay. Key findings include:

- Multi-synaptic Boutons: Neurons within a hippocampal memory engram undergo significant structural reorganization, forming atypical connections called multi-synaptic boutons. In these structures, the axon of one neuron contacts multiple post-synaptic neurons, potentially enabling flexible information routing and the cellular flexibility required for memory allocation and replay [19].

- Intracellular Reorganization: Engram neurons reorganize intracellular structures that provide energy and support communication and plasticity at neuronal connections. They also exhibit enhanced interactions with astrocytes, suggesting glial cells play a crucial role in supporting the replay process [19].

- Stabilizing Cortical Inputs: Long-range inputs from the lateral entorhinal cortex to the hippocampal CA3 region are critical for stabilizing memory representations. A combination of excitatory (glutamatergic) and inhibitory (GABAergic) inputs fine-tunes CA3 circuit activity through a balance of excitation and disinhibition, creating stable "templates" that can be reliably reactivated during replay [20].

The Bias: What Gets Replayed and Why

The hippocampus does not replay experiences at random. The selection is governed by sophisticated algorithms that optimize learning:

- Reward-Prediction Error (RPE) Bias: Neural activity associated with experiences that result in high RPE is preferentially replayed. This has been demonstrated in experiments where replay was best explained by a model prioritizing RPE, rather than one prioritizing reward outcome alone [17]. This RPE-biased replay is observed in the reactivation of cell pairs in the ventral striatum following unexpected rewards.

- Reward-Prediction Signals: During post-task rest, the most strongly reactivated cell pairs between the hippocampus and ventral striatum show preferential firing when approaching a reward location, indicating the replay of reward-prediction signals, not just reward consumption [17].

- Fragmentation in Large Environments: In naturalistic, large-scale environments, replay does not consist of complete trajectories. Instead, it is highly fragmented, depicting short, behaviorally relevant chunks (e.g., landings, conspecific interactions) covering only about 6% of the environment [15]. This "chunking" may be a fundamental mechanism for efficient hippocampal-neocortical communication and memory management.
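The distinction between reward and reward-prediction error can be made concrete with a small worked example. The sketch below assumes a unit reward and an animal whose expectation for each option equals its true reward probability; the probabilities (0.75, 0.50, 0.25) and all names are illustrative.

```python
def reward_prediction_error(reward, expected_value):
    """RPE = actual outcome minus expected outcome."""
    return reward - expected_value

# Illustrative reward probabilities; with a unit reward, the expected
# value of each option equals its reward probability (assumption).
reward_prob = {"high": 0.75, "mid": 0.50, "low": 0.25}

rpe_if_rewarded = {k: reward_prediction_error(1.0, p) for k, p in reward_prob.items()}
rpe_if_omitted = {k: reward_prediction_error(0.0, p) for k, p in reward_prob.items()}
```

Under these assumptions the same reward produces the largest positive RPE on the low-probability option (0.75) and the smallest on the high-probability option (0.25), so a reward-biased policy and an RPE-biased policy prioritize different experiences.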

Table 1: Key Features of Hippocampal Replay

| Feature | Description | Functional Significance |

|---|---|---|

| Temporal Compression | Replayed sequences occur orders of magnitude faster than the original experience [15]. | Enables rapid "training" of cortical networks; efficient information transfer. |

| Fragmentation & Chunking | In large environments, replays are short, covering only ~6% of the space [15]. | May reflect network constraints and facilitate memory "chunking" for cortical storage. |

| RPE Bias | Experiences with high reward-prediction errors are replayed more frequently [17]. | Prioritizes learning from surprising, informative outcomes; a core reinforcement learning principle. |

| Contextualization | Neural representation of an action differentiates based on its sequence position, primarily during rest [21]. | Supports skill learning by binding individual actions into a coherent, contextualized sequence. |

Experimental Approaches and Protocols

The study of neural replay relies on a suite of advanced technologies, from invasive electrophysiology to non-invasive human neuroimaging.

Protocol 1: Electrophysiology in Rodent Reinforcement Learning

This protocol is designed to dissociate reward from reward-prediction error to identify the true bias of replay [17].

- 1. Animal Model & Surgery: Adult male rats are implanted with chronic drivable tetrodes or silicon probes targeting the dorsal CA1 region of the hippocampus and the ventral striatum.

- 2. Behavioral Task: Rats are trained on a three-armed maze foraging task. Each arm is assigned a different, stable reward probability (e.g., High: 75%, Mid: 50%, Low: 25%). This design ensures that receiving a reward on the low-probability arm generates a high positive RPE.

- 3. Data Acquisition: During task performance and subsequent post-task rest/sleep periods, neural ensemble activity is recorded. Behavior (arm entries, reward delivery) is simultaneously tracked.

- 4. Replay & Model Analysis:

  - Putative replay events are identified during sharp-wave ripples (SWRs).

  - A state-space decoder is used to reconstruct the spatial trajectory represented by the neural activity during SWRs.

  - Reinforcement learning models (e.g., Q-learning, Dyna-Q) with different replay policies (random, reward-biased, RPE-biased) are fitted to the behavioral data. Model comparison reveals which replay policy best predicts the animal's learning.
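The decoding step can be illustrated with a memoryless Bayesian decoder, a deliberately simpler stand-in for the state-space decoder used in [17], which additionally includes a model of how position evolves over time. The sketch assumes Poisson spiking, independent cells, and a uniform prior over position bins; all names and values are hypothetical.

```python
import math

def decode_position(spike_counts, tuning_curves, window_s):
    """Posterior over position bins given spike counts in one time window.

    tuning_curves[i][x] is the mean firing rate (Hz) of cell i in bin x.
    Assumes Poisson spiking, independent cells, and a uniform prior.
    """
    n_bins = len(tuning_curves[0])
    log_post = []
    for x in range(n_bins):
        lp = 0.0
        for n, curve in zip(spike_counts, tuning_curves):
            rate = max(curve[x], 1e-6)  # avoid log(0)
            lp += n * math.log(rate) - window_s * rate
        log_post.append(lp)
    top = max(log_post)  # subtract the max for numerical stability
    weights = [math.exp(lp - top) for lp in log_post]
    total = sum(weights)
    return [w / total for w in weights]

def decode_trajectory(count_windows, tuning_curves, window_s):
    """Most likely position bin for each successive window of an event."""
    path = []
    for counts in count_windows:
        post = decode_position(counts, tuning_curves, window_s)
        path.append(post.index(max(post)))
    return path

# Three hypothetical place cells, each tuned to one of three bins.
curves = [[20.0, 1.0, 1.0], [1.0, 20.0, 1.0], [1.0, 1.0, 20.0]]
path = decode_trajectory([[3, 0, 0], [0, 3, 0], [0, 0, 3]], curves, 0.02)
```

Applied to successive short windows inside a candidate SWR, the sequence of decoded bins gives a rough estimate of the replayed trajectory.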

Protocol 2: Human fMRI for Cortical Replay During Implicit Learning

This protocol investigates the role of cortical replay in implicit statistical learning in humans [22].

- 1. Participants & Task: Human participants undergo fMRI while performing an incidental statistical learning task. They view sequences of images that follow probabilistic transitions determined by a ring-like graph structure, unaware of the underlying sequence.

- 2. Paradigm: The task includes brief (10-second) pauses ("interval trials"). The sequential structure changes halfway through the experiment without warning, allowing observation of dynamic learning.

- 3. fMRI Data Acquisition & Analysis:

  - Multivoxel Pattern Analysis (MVPA) is used to detect replay. The neural activity patterns during the task are used as templates.

  - During the 10-second pauses, the fMRI data are scanned for sequential reactivation of these task-related patterns, specifically looking for backward sequential replay in visual cortical areas.

  - The strength of replay is then correlated with behavioral measures of implicit learning (e.g., response times modeled with a Successor Representation framework) and with post-task tests of explicit awareness.
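For readers unfamiliar with the Successor Representation (SR) mentioned above, the sketch below shows the standard temporal-difference update for learning an SR matrix from a sequence of observed states. It is not the model fitted in [22]: the learning rate, discount factor, and the deterministic six-state ring used as input are all assumptions chosen for illustration.

```python
def learn_successor_representation(states, n_states, alpha=0.1, gamma=0.5):
    """TD learning of the SR matrix M, where M[s][j] estimates the
    discounted expected number of future visits to j starting from s."""
    M = [[0.0] * n_states for _ in range(n_states)]
    for s, s_next in zip(states, states[1:]):
        for j in range(n_states):
            # Target: immediate occupancy of j plus discounted successor row.
            target = (1.0 if j == s else 0.0) + gamma * M[s_next][j]
            M[s][j] += alpha * (target - M[s][j])
    return M

# Hypothetical input: repeated laps around a deterministic 6-state ring.
n = 6
walk = [t % n for t in range(3000)]
M = learn_successor_representation(walk, n)
```

After learning, states that follow soon after the current state receive larger SR values than distant ones, which is the kind of graded expectation that can be related to response times.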

The workflow for investigating replay in both animal and human studies can be summarized as follows:

Computational Framework: Reinforcement Learning and the Dyna Architecture

The hippocampus's teaching function is powerfully conceptualized through the lens of computational reinforcement learning, specifically the Dyna architecture [16].

In this framework, the CA3 region acts as a generative model, producing diverse, simulated experiences—akin to a "simulator." The CA1 region, which contains robust value representations, evaluates these simulations. Experiences or simulated trajectories that lead to high reward valuations are preferentially reinforced and replayed. This process closely parallels the Dyna-Q algorithm, where an agent performs offline simulations (replay) to supplement slow, trial-and-error learning, dramatically accelerating the learning process [16] [17].

From this perspective, memory consolidation is not merely the strengthening of incidental memories. It is an active process of deriving optimal strategies through offline simulation, where the hippocampus (the "teacher") uses replay to update and optimize the value functions in downstream regions like the striatum and neocortex (the "students").
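The idea of supplementing online learning with offline replay under different replay policies can be sketched with a toy agent. This is a simplified, Dyna-style illustration rather than the Dyna-Q models of [16] or [17]: it replays stored experiences from a short memory buffer instead of sampling from a learned world model, and the task (a three-option bandit), buffer size, and all parameter values are assumptions.

```python
import random

def td_update(q, arm, reward, alpha):
    """One value update; returns the reward-prediction error."""
    rpe = reward - q[arm]
    q[arm] += alpha * rpe
    return rpe

def pick_replay(memory, policy, rng):
    """Choose one stored (arm, reward, rpe) experience to replay."""
    if policy == "random":
        return rng.choice(memory)
    if policy == "reward":
        return max(memory, key=lambda m: m[1])       # largest reward
    if policy == "rpe":
        return max(memory, key=lambda m: abs(m[2]))  # largest |RPE|
    raise ValueError(f"unknown policy: {policy}")

def run_agent(policy, reward_prob=(0.75, 0.50, 0.25), n_trials=300,
              n_replays=3, alpha=0.1, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [0.0] * len(reward_prob)
    memory = []
    for _ in range(n_trials):
        # Online step: epsilon-greedy choice, then learn from the outcome.
        if rng.random() < epsilon:
            arm = rng.randrange(len(q))
        else:
            arm = max(range(len(q)), key=q.__getitem__)
        reward = 1.0 if rng.random() < reward_prob[arm] else 0.0
        rpe = td_update(q, arm, reward, alpha)
        memory = (memory + [(arm, reward, rpe)])[-20:]  # keep recent experiences
        # Offline step: replay stored experiences chosen by the policy.
        for _ in range(n_replays):
            r_arm, r_reward, _ = pick_replay(memory, policy, rng)
            td_update(q, r_arm, r_reward, alpha)
    return q

q_rpe = run_agent("rpe")
q_random = run_agent("random")
```

In a model-comparison analysis, agents of this kind with different replay policies would be fitted to the observed choices and compared on how well each predicts behavior.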

Table 2: Quantitative Findings from Key Replay Studies

| Study Model | Key Finding | Quantitative Result | Citation |

|---|---|---|---|

| Bat Hippocampus (200 m tunnel) | Replays are fragmented, not continuous. | Individual replays cover only ~6% of the 200 m environment. | [15] |

| Rat Hippocampus-Striatum | Replay is biased by reward-prediction error, not reward. | RPE-biased replay policies provided the best fit to behavioral data, unlike random or reward-only policies. | [17] |

| Human Visual Cortex (fMRI) | Replay occurs during brief, on-task pauses and supports implicit learning. | Replay detected during 10-second pauses; strength correlated with successor representation learning, not explicit knowledge. | [22] |

| Human Motor Learning (MEG) | Action representations contextualize during rest. | Representational differentiation during rest in early learning (trials 1-11) correlated with skill gains. | [21] |

Table 3: Key Research Reagent Solutions for Neural Replay Studies

| Reagent / Tool | Function in Replay Research | Example Use Case |

|---|---|---|

| Genetically Encoded Calcium Indicators (e.g., GCaMP) | Enables visualization of neuronal population activity in real-time via optical imaging. | Monitoring engram neuron activity during learning and replay in transgenic mice. [19] |

| Tetrodes / Silicon Probes | High-density electrophysiology for recording single-unit and ensemble neural activity from multiple brain regions simultaneously. | Recording from hippocampal CA1 and ventral striatal neurons concurrently in behaving rats. [17] |

| State-Space Decoder | Computational algorithm to decode the spatial position or behavioral content from neural population activity. | Reconstructing the trajectory of a replay event from the sequential firing of place cells. [17] [15] |

| Dyna-Q Reinforcement Learning Model | A computational framework that incorporates simulated experience (replay) to accelerate value learning. | Modeling the role of hippocampal-striatal replay in offline value updates and behavioral optimization. [16] [17] |

| Multivoxel Pattern Analysis (MVPA) | An fMRI analysis technique to detect stimulus-specific neural representations based on distributed activity patterns. | Identifying the spontaneous reactivation of task-related patterns in the hippocampus or cortex during post-encoding rest. [22] [18] |

Integrated Discussion and Future Directions

The evidence consolidated here firmly establishes the hippocampus as a "teacher" network that uses neural replay to instruct the broader brain. This teaching is not a passive broadcast but a highly curated, value-driven process. The core mechanism involves the biased selection of salient experiences—primarily those associated with high RPE—their compressed and often fragmented sequential reactivation, and their use in updating internal models for future behavior [17] [15].

The discovery of fragmented replay in large, naturalistic environments challenges the classical view of memory reactivation and suggests the hippocampus may communicate with the neocortex in "chunks" rather than complete episodes, a concept with profound implications for understanding real-world memory [15]. Furthermore, the observation that representational changes during brief rest periods correlate with rapid, early skill gains in humans points to a role for replay in micro-consolidation, a process relevant to neurorehabilitation and brain-computer interface applications [21].

The following diagram synthesizes the core "teacher" circuit and its functional interactions:

Future research must focus on several key areas:

- Molecular Composition: Determining the molecular composition of structures like multi-synaptic boutons and their precise role in replay and plasticity [19].

- Causal Manipulation: Moving beyond correlation to direct causal manipulation of specific replay content in both animal models and humans to solidify its functional role.

- Translational Applications: Exploring how modulating replay dynamics could ameliorate memory deficits in neurodegenerative diseases like Alzheimer's or in neuropsychiatric disorders where memory precision fails [20] [18].

By decoding the algorithms of the hippocampal teacher, we open new frontiers in understanding memory construction and developing powerful therapeutic strategies.

Sharp-Wave Ripples (SWRs) as a Key Signature of Reactivation

Sharp-wave ripples (SWRs) are high-frequency (roughly 80-250 Hz, depending on species and detection criteria), short-duration neuronal events originating primarily in the hippocampus that serve as a fundamental mechanism for memory reactivation. These transient oscillatory patterns facilitate the replay of experience-based neural activity in compressed temporal format, supporting critical cognitive functions including memory consolidation, retrieval, planning, and decision-making. This technical review synthesizes current understanding of SWR physiology, detection methodologies, and functional significance across behavioral states, providing researchers with comprehensive experimental frameworks and analytical tools for investigating hippocampal reactivation dynamics. Emerging evidence positions SWRs as a crucial biomarker for cognitive function with significant implications for therapeutic development in neurodegenerative and neuropsychiatric disorders.

Sharp-wave ripples (SWRs) represent the most synchronous population pattern in the mammalian brain, characterized by transient high-frequency oscillations (110-200 Hz in rodents; 80-250 Hz across species) that occur during offline brain states including slow-wave sleep and consummatory behaviors [23]. These events are initiated in the CA3 region of the hippocampus through excitatory recurrent connections and manifest in CA1 as sharp waves in stratum radiatum coupled with fast ripple oscillations in stratum pyramidale [24] [23]. The remarkable synchrony of SWRs generates powerful excitatory output that affects widespread cortical areas and subcortical nuclei, enabling coordinated reactivation of neuronal assemblies that represent experience-based information [23].

The spike content of SWRs is temporally and spatially coordinated by interneuron consortia to replay fragments of waking neuronal sequences in a temporally compressed format [23]. This replay mechanism constitutes a fundamental neural process for memory stabilization and systems consolidation, with selective disruption experiments demonstrating causal links between SWR integrity and memory performance [24] [25]. Beyond their retrospective role in consolidating past experiences, SWRs contribute to prospective functions including planning, decision-making, and creative thought by facilitating the recombination of stored information into novel sequences [24] [23]. The critical importance of SWRs is further highlighted by their pathological alteration in neurological and psychiatric conditions, including epilepsy, schizophrenia, and Alzheimer's disease, where their conversion to "p-ripples" serves as a marker of diseased tissue [23].

Physiological Mechanisms and Functional Roles

Core Physiological Properties

SWRs exhibit distinct electrophysiological characteristics that can be quantified through local field potential (LFP) recordings. The following table summarizes key biophysical parameters of hippocampal SWRs across species:

Table 1: Electrophysiological Characteristics of Sharp-Wave Ripples

| Parameter | Rodents | Humans | Recording Location |

|---|---|---|---|

| Frequency Range | 110-200 Hz [23] | 80-250 Hz [26] | CA1 Stratum Pyramidale |

| Duration | 50-100 ms [23] | 20-200 ms [27] | CA1 Stratum Pyramidale |

| Sharp Wave Duration | 40-100 ms [23] | Not Specified | CA1 Stratum Radiatum |

| Dominant State | SWS, Immobility [23] | SWS, Rest [27] | Hippocampal LFP |

| Inter-Event Interval | Irregular, 0.5-2 sec [23] | Variable with circadian rhythm [27] | Hippocampal LFP |

SWRs are not uniform events but rather distribute along a continuum in low-dimensional space, conveying information about layer-specific synaptic inputs [26]. Topological analysis of SWR waveforms has revealed an intrinsic dimension of four, suggesting that most waveform variability can be captured in a reduced parameter space [26]. This continuum reflects variations in features including frequency, amplitude, spectral entropy, and slope, which are influenced by cognitive demands such as novelty and learning [26].

Behavioral State Dependence

SWR occurrence is strongly modulated by behavioral state, following a clear dichotomy between preparatory and consummatory behaviors:

- During active exploration and preparatory behaviors: Hippocampal networks are dominated by theta oscillations (6-10 Hz), with SWR occurrence largely suppressed [23] [28].

- During consummatory behaviors (eating, drinking, immobility) and slow-wave sleep: Theta oscillations are replaced by irregularly occurring SWRs as the dominant hippocampal pattern [23].

Recent research in head-fixed rodents has revealed that even minor, localized movements (such as whisking or body adjustments) decrease SWR occurrence, demonstrating that hippocampal ripple generation is highly sensitive to motor engagement irrespective of reward timing [28]. This movement-induced suppression of ripples persists during both sleep-like states and quiet wakefulness, suggesting that while large-scale brain states modulate the overall likelihood of SWR generation, local motor-related influences exert a state-independent inhibitory effect [28].

Functional Contributions to Memory Processes

SWRs support multiple memory-related functions through distinct temporal patterns of neural reactivation:

Table 2: Functional Roles of SWRs in Memory Processes

| Function | Proposed Mechanism | Key Evidence |

|---|---|---|

| Memory Consolidation | Reactivation and strengthening of experience-based neural patterns during offline states [25] | SWR disruption impairs consolidation of novel environments [25] |

| Memory Retrieval | Immediate access to stored representations for decision-making [24] | Awake SWRs reactivate past experiences during behavioral choice points [29] |

| Planning & Decision-Making | Prospective replay of potential future paths [24] | Preferential replay of paths toward goals [24] |

| Systems Consolidation | Coordinated hippocampal-cortical reactivation during sleep [24] [29] | Hippocampal-prefrontal synchronization during SWRs [29] |

| Imagination & Construction | Recombination of stored information into novel sequences [24] [23] | SWR rates correlate with self-generated thoughts in humans [27] |

An emerging hypothesis suggests that single SWRs may support multiple cognitive functions simultaneously, mediating both the immediate use of remembered information for decision-making and the gradual process of memory consolidation [24]. This integrative view reconciles previous distinctions between "retrospective" and "prospective" replay by proposing that SWRs retrieve stored representations that can be utilized immediately by downstream circuits while simultaneously initiating consolidation processes [24].

Experimental Detection and Methodologies

Standardized SWR Detection Protocol

Comprehensive electrophysiological recording and analysis techniques have been established for SWR detection across species. The following workflow outlines a standardized approach for SWR identification and validation:

Diagram 1: SWR Detection Workflow

Electrophysiological Recording

- Implantation: Chronic implantation of multi-tetrode drives or silicon probes in hippocampal CA1 region targeting stratum pyramidale [29] [28].

- Position Verification: Histological confirmation of electrode placement through DiI labeling and post-hoc tissue examination [28].

- Signal Acquisition: Extracellular recordings of local field potentials (LFPs) and single-unit activity are acquired, typically sampled at 20 kHz [28]. Reference and ground electrodes are implanted above the cerebellum [28].

Signal Processing and SWR Detection

- Preprocessing: LFP signals are downsampled to 1 kHz and appropriate referencing is applied [28].

- Ripple-Band Filtering: Bandpass filtering in species-specific frequency ranges (70-180 Hz for humans [27]; 150-250 Hz for rodents [28]).

- Envelope Extraction: Application of Hilbert transform to obtain signal envelope [28].

- Threshold Detection: Identification of candidate events exceeding 4 standard deviations from mean envelope amplitude [28]. Exclusion of events exceeding 9 standard deviations to remove artifacts [28].

- Duration Filtering: Retention of events with durations between 20-200 ms [27]. Concatenation of peaks less than 30 ms apart [27].

- Artifact Rejection: Removal of periods associated with epileptic activity or movement artifacts through visual inspection and automated algorithms [27].
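The thresholding, merging, and duration steps above can be sketched as follows. The sketch starts from a ripple-band envelope that is assumed to have been computed already (for example, by band-pass filtering the LFP and taking the magnitude of its Hilbert transform), and it combines the criteria quoted from [27] and [28] into one hypothetical function. Treating "concatenation of peaks less than 30 ms apart" as merging supra-threshold segments separated by gaps under 30 ms is an interpretation, not a detail confirmed by the sources.

```python
from statistics import mean, pstdev

def detect_ripples(envelope, fs=1000.0, low_sd=4.0, high_sd=9.0,
                   min_dur_ms=20.0, max_dur_ms=200.0, merge_gap_ms=30.0):
    """Return (start, stop) sample indices of candidate ripple events.

    envelope: ripple-band amplitude envelope sampled at fs (Hz).
    """
    mu, sd = mean(envelope), pstdev(envelope)
    low, high = mu + low_sd * sd, mu + high_sd * sd

    # 1. Supra-threshold segments (envelope above mean + low_sd * SD).
    segments, start = [], None
    for i, v in enumerate(envelope):
        if v > low and start is None:
            start = i
        elif v <= low and start is not None:
            segments.append([start, i])
            start = None
    if start is not None:
        segments.append([start, len(envelope)])

    # 2. Merge segments separated by less than merge_gap_ms.
    gap = merge_gap_ms * fs / 1000.0
    merged = []
    for seg in segments:
        if merged and seg[0] - merged[-1][1] < gap:
            merged[-1][1] = seg[1]
        else:
            merged.append(seg)

    # 3. Keep events within the duration window and below the
    #    artifact ceiling (mean + high_sd * SD).
    events = []
    for s, e in merged:
        dur_ms = (e - s) * 1000.0 / fs
        if min_dur_ms <= dur_ms <= max_dur_ms and max(envelope[s:e]) <= high:
            events.append((s, e))
    return events

# Synthetic envelope: flat baseline, one 50 ms event, one 5 ms blip.
env = [1.0] * 2000
env[500:550] = [3.0] * 50
env[1000:1005] = [3.0] * 5
events = detect_ripples(env)
```

In this synthetic example the 50 ms event is retained and the 5 ms blip is rejected by the duration criterion; real recordings would still require the artifact-rejection step described above.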

Advanced Analytical Approaches

Recent methodological advances have enabled more sophisticated characterization of SWR properties:

- Topological Waveform Analysis: Projection of SWR waveforms into high-dimensional space followed by dimensionality reduction techniques (UMAP, Isomap) to visualize continuum of SWR features [26].

- Cross-Structural Coordination: Analysis of coordinated activity between hippocampus and prefrontal cortex during SWR events to assess systems-level consolidation [29].

- Circadian Rhythm Analysis: Long-term monitoring of SWR rates across sleep-wake cycles to characterize natural fluctuations [27].

State-Dependent Variations in SWR Characteristics

Awake vs. Sleep SWRs

Significant functional and physiological distinctions exist between SWRs occurring during wakefulness and sleep states:

Table 3: Comparison of Awake and Sleep SWR Properties

| Characteristic | Awake SWRs | Sleep SWRs |
|---|---|---|
| Prefrontal Modulation | Stronger CA1-PFC synchronization [29] | Differing patterns of excitation/inhibition [29] |
| Spatial Reactivation | More structured, higher-fidelity representations [29] | Less structured, broader integration [29] |
| Behavioral Correlation | Enhanced during initial learning [29] | Prevalent during post-task consolidation [29] |
| Network Coordination | Absence of coordination with delta/spindles [29] | Coordinated with cortical spindles (12-18 Hz) and delta (1-4 Hz) [29] |
| Functional Role | Memory storage, retrieval, planning [29] | Integration across experiences, consolidation [29] |

Awake reactivation represents a higher-fidelity representation of behavioral experiences and is enhanced during early learning, suggesting a primary role in initial memory formation and memory-guided behavior [29]. In contrast, sleep reactivation appears better suited to support integration of memories across experiences during systems consolidation [29].
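The network-coordination contrast in Table 3 can be quantified in several ways. One simple option, sketched here under the assumption that spindles have already been detected as (start, stop) intervals in seconds, is the fraction of SWRs that fall inside a spindle. The function name and the toy values are hypothetical.

```python
import numpy as np

def fraction_coupled(swr_times_s, spindle_intervals_s):
    """Fraction of SWR times that fall inside any (start, stop) spindle interval."""
    swr_times_s = np.asarray(swr_times_s, dtype=float)
    if swr_times_s.size == 0:
        return float("nan")
    inside = np.zeros(swr_times_s.size, dtype=bool)
    for start, stop in spindle_intervals_s:
        inside |= (swr_times_s >= start) & (swr_times_s <= stop)
    return float(inside.mean())

# Toy example: two of four SWRs coincide with a spindle.
coupling = fraction_coupled([1.2, 3.5, 7.1, 9.9], [(1.0, 2.0), (7.0, 7.5)])
```

A value obtained this way is only interpretable against a chance level, for example from the same calculation after shuffling SWR times within the recording.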

Novelty and Learning Effects

SWR characteristics are dynamically modulated by cognitive demands:

- Novelty Exposure: SWR waveforms show distinct segregation during wakefulness and sleep following exposure to novel environments [26].

- Learning Progression: Awake CA1-prefrontal reactivation is most prominently enhanced during initial learning in novel environments, suggesting a key role in early memory trace formation [29].

- Experience Dependence: The reinstatement of assembly patterns representing a novel environment correlates with their offline reactivation and is impaired by SWR disruption, while familiar environment representations remain more stable [25].

Table 4: Essential Reagents and Resources for SWR Research

| Resource | Specification | Experimental Function |
|---|---|---|
| Multi-channel Probes | 32-channel silicon probes with tetrode configurations [28] | High-density recording of hippocampal LFPs and single units |
| Head-Fixation System | Head-plates (e.g., CFR-2, Narishige) with stereotaxic alignment [28] | Behavioral stabilization during neural recording |
| Signal Processing | Hilbert transform for envelope extraction [28] | Ripple oscillation detection and characterization |
| Surgical Materials | Teflon-coated silver wires (125 μm diameter) [28] | Reference and ground electrode implantation |
| Histological Tracers | Fluorescent dyes (e.g., DiI) [28] | Post-hoc verification of electrode placement |
| Behavioral Apparatus | Elevated tracks (linear, W-track) with reward delivery [29] | Spatial learning paradigms with controlled navigation |

Analytical Tools and Software Approaches

- Topological Data Analysis: Persistent homology and dimensionality reduction techniques (UMAP) for waveform characterization [26].

- Cross-Structural Correlation: Analysis of hippocampal-prefrontal coordination during SWR events [29].

- Circadian Rhythm Analysis: Long-term SWR rate monitoring across sleep-wake cycles [27].
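Hippocampal-prefrontal coordination is often examined by aligning a prefrontal signal to SWR times. The sketch below shows a generic event-triggered average; it is not the specific analysis of [29], and the function name, window length, and toy signal are assumptions made for illustration.

```python
import numpy as np

def swr_triggered_average(signal, event_idx, half_window):
    """Average a signal in windows of +/- half_window samples around each event.

    Events too close to either end of the recording to yield a complete
    window are skipped.
    """
    signal = np.asarray(signal, dtype=float)
    segments = [signal[i - half_window:i + half_window + 1]
                for i in event_idx
                if i - half_window >= 0 and i + half_window < signal.size]
    if not segments:
        raise ValueError("no events with a complete window")
    return np.mean(segments, axis=0)

# Toy prefrontal trace with a unit deflection at two SWR sample indices.
pfc = np.zeros(100)
pfc[[20, 50]] = 1.0
avg = swr_triggered_average(pfc, event_idx=[1, 20, 50], half_window=2)
```

The same alignment applies to prefrontal firing rates or LFP power; only the input trace changes.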

Pathological Alterations and Therapeutic Applications

SWRs as Biomarkers in Disease

Alterations in SWR characteristics serve as sensitive indicators of pathological states:

- Epilepsy: Conversion of physiological SWRs to "p-ripples" (pathological ripples) marks epileptogenic tissue [23].

- Alzheimer's Disease: Impaired neural synchronization and SWR generation contribute to memory deficits [30].

- Schizophrenia: Disrupted SWR activity observed in rodent models [23].

The biomarker potential of SWRs can be assessed within structured validation frameworks such as the FDA's Biomarker Qualification Program, which establishes contexts of use (COU) for biomarkers in drug development [31]. Under fit-for-purpose validation, the level of evidence required depends on the intended use; analytical validation assesses performance characteristics including accuracy, precision, and sensitivity [31].
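For an SWR detector, one concrete piece of analytical validation is agreement with expert-annotated events. The sketch below computes sensitivity and positive predictive value by matching each detected event to at most one annotated event within a tolerance. It is an illustrative assumption about how such a check might be set up, not a procedure prescribed by [31]. Note that "precision" in the analytical-validation sense usually refers to repeatability, which this example does not measure.

```python
def score_detector(detected_s, annotated_s, tol_s=0.05):
    """Match detected to annotated event times (seconds) within tol_s, one-to-one."""
    unmatched = list(annotated_s)
    true_pos = 0
    for t in detected_s:
        candidates = [a for a in unmatched if abs(a - t) <= tol_s]
        if candidates:
            # Pair with the closest still-unmatched annotation.
            unmatched.remove(min(candidates, key=lambda a: abs(a - t)))
            true_pos += 1
    false_pos = len(detected_s) - true_pos
    false_neg = len(unmatched)
    return {
        "sensitivity": true_pos / (true_pos + false_neg) if annotated_s else float("nan"),
        "ppv": true_pos / (true_pos + false_pos) if detected_s else float("nan"),
    }

# Toy example: two of three annotated events are found, plus two false alarms.
scores = score_detector([1.00, 2.52, 4.00, 7.30], [1.02, 2.50, 5.00])
```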

Therapeutic Strategies Targeting SWRs

Emerging approaches aim to modulate SWR activity for therapeutic benefit:

- Artificial SWR Induction: Noninvasive high-frequency visual stimulation has been proposed as a means of inducing artificial ripples to support memory function in neurodegenerative diseases [30].

- Closed-Loop Disruption: Optogenetic suppression of SWRs during specific time windows to assess causal roles in memory processes [25].

- Neuromodulatory Approaches: Leveraging neurotransmitter systems that influence SWR generation (e.g., acetylcholine, norepinephrine) to modulate memory consolidation [24].
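The trigger logic behind closed-loop disruption can be illustrated with a simple online rule: fire when the ripple-band envelope crosses a threshold, then wait out a refractory period before firing again. This is a schematic sketch only. Real systems run on dedicated hardware with causal filters and tight latency budgets, and the function name, threshold, and refractory length here are hypothetical.

```python
def closed_loop_triggers(envelope, threshold, refractory_n):
    """Return sample indices at which a stimulation pulse would be triggered.

    Samples are processed strictly in order, as they would arrive online, and
    a trigger is suppressed until more than refractory_n samples have elapsed
    since the previous one.
    """
    triggers = []
    last = None
    for i, value in enumerate(envelope):
        if value > threshold and (last is None or i - last > refractory_n):
            triggers.append(i)
            last = i
    return triggers

# Toy envelope with two supra-threshold episodes.
pulses = closed_loop_triggers([0, 0, 5, 5, 5, 0, 0, 0, 5, 0], threshold=3, refractory_n=3)
```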

The following diagram illustrates potential therapeutic strategies targeting SWR dysfunction:

Diagram 2: SWR-Targeted Therapeutic Approaches

Sharp-wave ripples represent a fundamental mechanism for memory reactivation in the hippocampal formation, serving as a key signature of neural processes underlying memory construction and consolidation. The comprehensive characterization of SWR physiology, state-dependent dynamics, and functional roles provides researchers with robust frameworks for investigating cognitive processes and their pathological alterations. Future research directions include establishing SWRs as validated biomarkers for cognitive function in neurodegenerative diseases, developing noninvasive modulation strategies for therapeutic intervention, and elucidating the precise mechanisms by which discrete SWR events support multiple cognitive functions simultaneously. The continued refinement of detection methodologies and analytical approaches will further enhance our understanding of these critical neural events and their translational applications.

This whitepaper synthesizes contemporary research on the neural mechanisms underlying the transformation of episodic memories into semantic knowledge. Drawing from intracranial electroencephalographic (iEEG) recordings, functional magnetic resonance imaging (fMRI), and computational modeling, we examine how detailed personal experiences evolve into generalized, context-free facts through systems consolidation. The process involves dynamic interactions between hippocampal and neocortical networks, with generative model training via hippocampal replay facilitating the extraction of statistical regularities from repeated experiences. We present quantitative data from key experiments, detailed methodological protocols, and essential research tools that collectively illuminate the semantization process. This framework provides critical insights for researchers and drug development professionals targeting memory disorders, offering a foundation for therapeutic interventions that modulate memory transformation pathways.

Human long-term memory is historically divided into two functionally distinct systems: episodic memory, which supports the recollection of personally experienced events in their spatiotemporal context, and semantic memory, which constitutes our conceptual knowledge about the world, detached from specific learning episodes [32] [33]. Neuropsychological evidence strongly supports this dissociation: damage to the hippocampal formation selectively impairs episodic memory, whereas injury to the anterior temporal lobe results in semantic memory deficits [32]. Despite these clear distinctions, these memory systems continuously interact, with episodic experiences gradually transforming into semantic knowledge through processes that remain incompletely understood.

The transformation hypothesis posits that semantic memory develops from the accumulated residue of multiple, related episodic experiences through a decontextualization process known as semantization [34]. This review integrates recent neuroscientific evidence to elucidate the neural mechanisms governing this transformation, focusing on systems consolidation, neural replay, and computational principles that enable the extraction of statistical regularities from discrete experiences. Understanding these mechanisms is paramount for developing interventions for memory disorders that disrupt the normal episodic-to-semantic transformation.

Neural Mechanisms of Memory Transformation

Systems Consolidation: Theoretical Frameworks

The process by which memories are stabilized and reorganized over time is known as systems consolidation. Several theoretical frameworks attempt to explain the neural reorganization that underpins the qualitative shift from episodic to semantic memory:

- Standard Model: Proposes that memories initially dependent on the hippocampus gradually become independent of it and are stored solely in the neocortex over time [35].

- Multiple Trace Theory: Argues that the hippocampus remains necessary for the retrieval of detailed episodic memories, regardless of their age, while semantic (gist-like) memories can become hippocampal-independent [35].

- Trace Transformation Theory: Suggests that memories undergo qualitative changes within both hippocampus and cortex, with episodic details transforming into gist-like representations over time [36].

Recent experimental evidence increasingly supports transformation-based models, indicating that memories are not simply transferred from hippocampus to cortex but are fundamentally transformed in their representational format.

The Generative Model of Memory Construction and Consolidation

A recent computational framework proposes that consolidation constitutes the training of generative models in the neocortex by hippocampal replay signals [1]. In this model:

- The hippocampus rapidly encodes episodic experiences using an autoassociative network.

- During offline periods (e.g., sleep), hippocampal replay trains generative networks (implemented as variational autoencoders) in entorhinal, medial prefrontal, and anterolateral temporal cortices.

- These trained generative networks learn to recreate sensory experiences from latent variable representations, supporting both semantic knowledge and the reconstruction of episodic details.

This framework explains key memory phenomena: why similar neural substrates support recollection and imagination, how semantic memory emerges from episodic experiences, and why consolidated memories show schema-based distortions. The model efficiently combines hippocampal storage of novel information with neocortical extraction of statistical regularities, optimizing the use of limited hippocampal capacity [1].
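The hippocampal component of this account, rapid storage in an autoassociative network followed by pattern completion, can be illustrated with a classical Hopfield network. The sketch below is a toy example under stated assumptions: the Hebbian outer-product rule, the synchronous update, the 64-unit size, and the orthogonal binary "episodes" are all illustrative choices, and the neocortical variational autoencoder is not implemented.

```python
import numpy as np

def train_hopfield(patterns):
    """Hebbian outer-product weights for +/-1 patterns, without self-connections."""
    patterns = np.asarray(patterns, dtype=float)
    n_units = patterns.shape[1]
    weights = patterns.T @ patterns / n_units
    np.fill_diagonal(weights, 0.0)
    return weights

def recall(weights, cue, n_steps=5):
    """Update all units synchronously until the state stops changing."""
    state = np.asarray(cue, dtype=float).copy()
    for _ in range(n_steps):
        new_state = np.where(weights @ state >= 0, 1.0, -1.0)
        if np.array_equal(new_state, state):
            break
        state = new_state
    return state

# Three mutually orthogonal +/-1 "episodes" over 64 units (toy data).
idx = np.arange(64)
episodes = np.array([np.where((idx >> k) & 1, -1.0, 1.0) for k in range(3)])
weights = train_hopfield(episodes)

# Degrade the first episode by flipping 8 of its 64 units, then complete it.
cue = episodes[0].copy()
cue[:8] *= -1
completed = recall(weights, cue)
```

In the generative account, patterns completed in this way during replay would serve as training examples for the neocortical network, which over many replays comes to capture the regularities shared across episodes.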

Table 1: Key Characteristics of Memory Transformation Theories

| Theory | Primary Mechanism | Role of Hippocampus in Remote Memory | Predicted Memory Transformation |
|---|---|---|---|
| Standard Model | Inter-regional reorganization | Becomes unnecessary | Detailed to gist-like; context loss |
| Multiple Trace Theory | Multiple trace creation | Necessary for detailed recollection | Preservation of detailed memories with hippocampal engagement |
| Trace Transformation Theory | Intra-regional reorganization | Transforms representation | Detailed to gist-like within hippocampus |
| Generative Model | Neocortical model training | Trains neocortical models; may become less necessary | Construction from latent variables; schema-based distortions |

Neurogenesis-Dependent Transformation of Hippocampal Engrams

Recent research using engram-tagging techniques in mice provides direct evidence for intra-regional transformation within the hippocampus. Contextual fear memories were shown to lose resolution over time, with mice exhibiting conditioned freezing in both the training context and a novel context at remote delays [36]. Crucially, hippocampal engrams were initially high-resolution but lost precision over time, while cortical engrams were consistently low-resolution. This time-dependent transformation of hippocampal engrams depended on adult hippocampal neurogenesis: eliminating neurogenesis arrested engrams in a recent-like, high-resolution state [36]. These findings indicate that neurogenesis plays a critical role in the semantization process by promoting the loss of contextual specificity.
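Loss of contextual resolution of this kind is commonly summarized with a discrimination index that compares freezing in the training context (A) with freezing in a novel context (B). The formula below is one widely used form and is not necessarily the exact metric reported in [36]. The freezing percentages are hypothetical values chosen only to show the arithmetic.

```python
def discrimination_index(freezing_a, freezing_b):
    """(A - B) / (A + B): 1 means freezing only in A, 0 means no discrimination."""
    total = freezing_a + freezing_b
    if total == 0:
        raise ValueError("no freezing in either context; index undefined")
    return (freezing_a - freezing_b) / total

# Hypothetical freezing percentages at recent and remote delays.
recent = discrimination_index(60.0, 20.0)   # freezing mostly in context A
remote = discrimination_index(40.0, 40.0)   # similar freezing in A and B
```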

Experimental Evidence and Quantitative Findings

Neural Correlates of Semantic Organization in Episodic Memory

Intracranial EEG (iEEG) studies with neurosurgical patients (N=69) have revealed how semantic structure influences episodic encoding and retrieval. Participants studied and recalled both categorized and unrelated word lists while neural activity was recorded [32]. Multivariate classifiers applied to these recordings successfully predicted:

- Encoding success (classifier AUC > 0.7)

- Retrieval success

- Temporal and categorical clustering during recall

Crucial findings emerged when assessing how these classifiers generalized across list types. Specific retrieval processes – rather than encoding processes – were identified as primary drivers of categorical organization in episodic recall [32]. This suggests that the interaction between semantic and episodic systems is particularly mediated by control processes during memory search and recovery.
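Classifier performance in such studies is summarized by the area under the ROC curve (AUC), which equals the probability that a randomly chosen positive trial (for example, a later-recalled word) receives a higher classifier score than a randomly chosen negative trial. A minimal sketch of that calculation follows; the function name and the toy labels and scores are illustrative and unrelated to the data in [32].

```python
import numpy as np

def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Tied scores count as half a correct pair.
    """
    labels = np.asarray(labels, dtype=bool)
    scores = np.asarray(scores, dtype=float)
    pos, neg = scores[labels], scores[~labels]
    if pos.size == 0 or neg.size == 0:
        raise ValueError("need at least one positive and one negative trial")
    diff = pos[:, None] - neg[None, :]
    return ((diff > 0).sum() + 0.5 * (diff == 0).sum()) / (pos.size * neg.size)

# Toy example: three of the four positive-negative pairs are ordered correctly.
auc = roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1])
```

An AUC of 0.5 corresponds to chance, so a reported AUC above 0.7 means the classifier ranks more than 70% of such pairs correctly.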

Table 2: Quantitative Findings from Key Memory Transformation Studies

| Study Paradigm | Participant/Sample Type | Key Metrics | Primary Findings |
|---|---|---|---|
| iEEG during categorized free recall [32] | 69 neurosurgical patients | Classifier performance (AUC) for predicting recall success and organization | Retrieval processes, not encoding, primarily predicted categorical clustering (AUC > 0.7) |
| Engram resolution tracking [36] | Mice (contextual fear conditioning) | Freezing discrimination ratio (context A vs. B) | Recent memory: High discrimination (freezingA >> freezingB); Remote memory: Low discrimination (freezingA ≈ freezingB) |