Generative Models of Episodic Memory: From Neural Mechanisms to Clinical Translation in Neurodegenerative Disease

Abstract

This article synthesizes contemporary interdisciplinary research on generative models of episodic memory, a paradigm that views memory not as a literal replay but as an active, constructive process. We explore the foundational shift from storage-based to generative memory frameworks, detailing computational models such as modern Hopfield networks and variational autoencoders trained by hippocampal replay, and their role in consolidation. For researchers and drug development professionals, the review covers methodological advances in AI, identifies key challenges such as memory distortion and model capacity limits, and evaluates validation through behavioral parallels and artificial agents. Finally, we discuss the implications of these models for understanding and treating memory disorders like Alzheimer's disease and delirium, where generative processes may break down.

The Constructive Brain: Foundations of Generative Episodic Memory

For decades, the dominant paradigm in memory research conceptualized episodic memory—the memory of autobiographical events—as a storage and retrieval system, often likened to a video recorder or computer file system that faithfully records and replays experiences. This framework is now undergoing a fundamental transformation. The emerging paradigm reconceptualizes episodic memory as a dynamic, constructive process in which past experiences are actively reconstructed rather than passively replayed [1]. This shift from a storage-based to a construction-based framework represents one of the most significant developments in modern cognitive neuroscience, with far-reaching implications for understanding memory's vulnerabilities to distortion, its neural underpinnings, and its fundamental relationship to imagination and future thinking.

This constructive view aligns with the generative model of memory construction and consolidation, which posits that hippocampal replay trains generative models to recreate sensory experiences from latent variable representations [2]. Rather than storing literal copies of experiences, the brain learns statistical regularities or "schemas" that enable it to reconstruct past events, simulate future scenarios, and support semantic memory extraction. This generative framework explains key features of memory that were problematic for storage-based models: why memories become more abstract and gist-based over time, how imagination shares neural substrates with recollection, and why memory distortions follow predictable patterns based on existing knowledge structures [2] [1].

Theoretical Foundations of Constructive Memory

Historical Context and Conceptual Evolution

The constructive view of memory has historical roots in Bartlett's pioneering work from the 1930s, which emphasized how remembering involves reconstructing experiences using schemas—active organizations of past reactions and experiences [1]. Bartlett rejected the notion of literal recall, arguing instead that "condensation, elaboration and invention are common features of ordinary remembering" [1]. Modern cognitive neuroscience has built upon this foundation, demonstrating that constructive processes are fundamental to episodic memory rather than representing occasional errors or imperfections.

The contemporary constructive episodic memory framework proposes that constituent features of a memory are distributed widely across different brain regions, with no single location containing a literal trace or engram of a specific experience [1]. Retrieval consequently involves a process of pattern completion, in which the rememberer pieces together distributed features that comprise a particular past experience. This system is inherently prone to certain types of errors but provides the flexibility needed to adapt past experiences to novel situations [1].

The Generative Model of Memory Construction and Consolidation

The generative model of memory provides a comprehensive computational framework for understanding constructive memory. This model proposes that:

- Hippocampal replay of patterns of neural activity during rest trains generative models to recreate sensory experiences from latent variable representations [2]

- Consolidation corresponds to the training of generative networks that gradually learn to reconstruct memories by capturing the statistical structure of experienced events

- Memory recall after consolidation is a generative process mediated by schemas representing common structure across events

- The brain uses a combination of conceptual and sensory features in episodic memory, with familiar components encoded as concepts and novel components stored in greater sensory detail [2]

This generative framework explains how the memory system optimizes the use of limited hippocampal storage for new and unusual information while efficiently representing predictable elements through neocortical schemas [2].
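As a toy illustration of this efficient-encoding principle, the sketch below treats a schema as a vector of expected feature values and stores in a hypothetical hippocampal trace only those features whose prediction error exceeds a threshold `tau`; everything else is left for the schema to fill in at recall. The functions `split_by_prediction_error` and `reconstruct`, the threshold, and the numbers are illustrative assumptions, not part of the model in [2].

```python
from typing import Dict, List, Tuple


def split_by_prediction_error(
    event: List[float], schema: List[float], tau: float
) -> Tuple[Dict[int, float], List[int]]:
    """Return (hippocampal_store, schema_filled_indices).

    Hypothetical rule: features whose absolute prediction error exceeds
    `tau` are stored verbatim; the rest are left to the schema.
    """
    store: Dict[int, float] = {}
    predicted: List[int] = []
    for i, (x, s) in enumerate(zip(event, schema)):
        if abs(x - s) > tau:
            store[i] = x          # surprising: keep the sensory detail
        else:
            predicted.append(i)   # predictable: reconstruct from the schema
    return store, predicted


def reconstruct(store: Dict[int, float], schema: List[float]) -> List[float]:
    """Recall: stored details override the schema's predictions."""
    return [store.get(i, s) for i, s in enumerate(schema)]


# Toy schema (expected feature values) and an event with one unexpected feature.
schema = [1.0, 0.0, 0.5, 0.5]
event = [0.9, 0.0, 0.5, 3.0]
store, predicted = split_by_prediction_error(event, schema, tau=0.5)
print(store)                       # {3: 3.0}
print(reconstruct(store, schema))  # [1.0, 0.0, 0.5, 3.0]
```

Feature 0 is experienced as 0.9 but recalled as the schema's 1.0: the small, schema-consistent deviation falls below `tau` and is lost, a miniature version of the gist-based distortion the framework predicts.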

Table 1: Key Principles of Generative Memory Models

| Principle | Description | Computational Implementation |
|---|---|---|
| Schema-Based Reconstruction | Memories are reconstructed using learned statistical regularities from multiple experiences | Variational Autoencoders (VAEs) learning to reconstruct inputs from compressed latent variables [2] |
| Complementary Learning Systems | Rapid hippocampal encoding complements gradual neocortical learning of statistical structure | Teacher-student learning with hippocampal replay training generative neocortical networks [2] |
| Efficient Encoding | Unpredictable aspects stored in detail; predictable aspects reconstructed from schemas | Reconstruction error (prediction error) determines encoding precision and hippocampal engagement [2] |
| Multi-scale Representation | Memories bind coarse-grained conceptual and fine-grained sensory representations | Hierarchical latent variable models capturing different levels of abstraction [2] |

Neural Mechanisms and Computational Architecture

Neural Substrates of Constructive Memory

The generative model of memory construction identifies specific neural structures and their functional roles in constructive processes. The hippocampal formation (HF) serves as an autoassociative network that rapidly encodes events and supports their initial retrieval [2]. During consolidation, hippocampal replay activates these memories, training generative networks in neocortical regions including the entorhinal cortex, medial prefrontal cortex (mPFC), and anterolateral temporal cortices [2]. These generative networks can eventually reconstruct experiences without hippocampal support, which explains why older memories become resistant to hippocampal damage (temporally graded retrograde amnesia), a hallmark of systems consolidation [2].

Neuropsychological evidence strongly supports this architecture. Patients with damage to the hippocampal formation show deficits not only in remembering the past but also in imagination, episodic future thinking, dreaming, and daydreaming [2]. This pattern suggests a common constructive mechanism underpinning both memory and imagination, consistent with neuroimaging evidence showing considerable overlap in neural activation when people remember past experiences and imagine future scenarios [1].

Computational Implementation

The generative model is implemented computationally using modern machine learning approaches. Modern Hopfield networks model hippocampal autoassociative encoding, where feature units activated by an event are bound together by a memory unit [2]. Variational autoencoders (VAEs) implement the generative networks that learn to reconstruct sensory experience from latent variables [2]. The training process employs teacher-student learning, where outputs from the hippocampal autoassociative network train the generative network during memory replay [2].

This architecture provides mechanisms for several key features of memory: it explains why initial encoding requires the hippocampus but becomes independent over time; how semantic memory emerges from episodic experiences; why similar circuits support recall and imagination; and how consolidation extracts statistical regularities to support relational inference [2].
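The autoassociative step can be sketched with the softmax retrieval rule used in continuous modern Hopfield networks, in which each stored event acts as one memory unit and a partial cue is completed toward the most similar stored event. This is a minimal pure-Python sketch; the inverse temperature `beta`, the number of update `steps`, the function name `mhn_retrieve`, and the ±1 toy events are assumptions rather than settings from [2].

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: Vector) -> Vector:
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def mhn_retrieve(memories: List[Vector], cue: Vector,
                 beta: float = 8.0, steps: int = 3) -> Vector:
    """Pattern completion with the continuous modern Hopfield update
    state <- X^T softmax(beta * X state), where the rows of X are stored events.

    Each stored row plays the role of one memory unit binding an event's features.
    """
    state = list(cue)
    for _ in range(steps):
        sims = [sum(m_j * s_j for m_j, s_j in zip(m, state)) for m in memories]
        weights = softmax([beta * s for s in sims])
        state = [sum(w * m[j] for w, m in zip(weights, memories))
                 for j in range(len(state))]
    return state


# Three hypothetical events over six binary-valued feature units.
events = [
    [1.0, 1.0, -1.0, -1.0, 1.0, -1.0],
    [-1.0, 1.0, 1.0, -1.0, -1.0, 1.0],
    [1.0, -1.0, 1.0, 1.0, -1.0, -1.0],
]
# Partial cue: the first three features of event 0; the rest are unknown (0).
cue = [1.0, 1.0, -1.0, 0.0, 0.0, 0.0]
completed = mhn_retrieve(events, cue)
print([round(x, 2) for x in completed])  # [1.0, 1.0, -1.0, -1.0, 1.0, -1.0]
```

Raising `beta` makes retrieval more winner-take-all; lowering it blends stored events, which is one way such models can produce averaged, gist-like recall.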

Experimental Evidence and Methodological Approaches

Key Experimental Paradigms

Research supporting the constructive paradigm employs diverse methodological approaches. Neuropsychological studies of patients with amnesia and dementia reveal dissociations between different memory components, showing that false recognition—rather than always indicating memory failure—can sometimes reflect the operation of adaptive constructive processes [1]. For instance, some amnesic patients show reduced false recognition of related lure words, suggesting their impairment affects the constructive processes that normally support gist-based memory [1].

Functional neuroimaging studies demonstrate substantial overlap in neural networks activated during past recollection and future imagination, particularly in hippocampal and prefrontal regions [1]. This supports the constructive episodic simulation hypothesis, which proposes that simulating future events requires flexibly extracting and recombining elements of past experiences [1].

Longitudinal cognitive neuroscience studies examine how measures like episodic memory performance moderate the relationship between brain atrophy and cognitive decline. These studies show that episodic memory has strong construct validity as a measure of cognitive reserve, weakening the impact of gray matter change on cognitive decline, whereas education strengthens this relationship [3].
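To make "moderation" concrete, one generic way to express it is an interaction model, written below with hypothetical symbols: change in cognition $\Delta\mathrm{Cog}_i$, gray-matter change $\Delta\mathrm{GM}_i$, and episodic memory score $\mathrm{EM}_i$ for participant $i$. This is an illustrative assumption, not necessarily the specification used in [3].

```latex
\begin{aligned}
\Delta\mathrm{Cog}_i &= \beta_0 + \beta_1\,\Delta\mathrm{GM}_i + \beta_2\,\mathrm{EM}_i
  + \beta_3\,(\Delta\mathrm{GM}_i \times \mathrm{EM}_i) + \varepsilon_i \\
\frac{\partial\,\Delta\mathrm{Cog}_i}{\partial\,\Delta\mathrm{GM}_i} &= \beta_1 + \beta_3\,\mathrm{EM}_i
\end{aligned}
```

Under this form, a reserve-like moderator is one for which $\beta_3$ has the opposite sign to $\beta_1$, so the conditional slope of cognitive change on gray-matter change shrinks toward zero as $\mathrm{EM}_i$ increases; a moderator that strengthens the relationship, as reported for education, has $\beta_3$ of the same sign as $\beta_1$.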

Table 2: Experimental Methods in Constructive Memory Research

| Method Type | Key Measures | Insights Gained |
|---|---|---|
| Neuropsychological Assessment | False recognition patterns in amnesia, dementia; imagination deficits in hippocampal damage | Constructive processes depend on hippocampal-prefrontal network; memory and imagination share neural substrates [1] |
| Functional Neuroimaging (fMRI) | Neural overlap during past recall and future imagination; hippocampal replay during rest | Common neural circuitry for memory and imagination; reactivation patterns support consolidation [2] [1] |
| Longitudinal Cognitive Aging Studies | Episodic memory as moderator between brain atrophy and cognitive decline | Episodic memory measures cognitive reserve better than education; weakens impact of brain atrophy [3] |
| Computational Modeling | Variational autoencoders; modern Hopfield networks; teacher-student learning | Mechanistic accounts of consolidation as training generative models; schema-based distortion patterns [2] |

Quantitative Findings in Constructive Memory Research

Table 3: Key Quantitative Findings in Constructive Memory Research

| Phenomenon | Quantitative Measure | Interpretation |
|---|---|---|
| Cognitive Reserve Capacity | Episodic memory weakens impact of gray matter change on cognitive decline (p<0.05) [3] | Strong construct validity for episodic memory as cognitive reserve measure |
| Imagination-Recall Neural Overlap | Significant cross-region correlation (r > 0.75) in hippocampal and prefrontal activation during recall and imagination [1] | Supports constructive episodic simulation hypothesis |
| Consolidation Timeline | Gradual transition from hippocampal to neocortical dependence over weeks to months | Standard model of systems consolidation [2] |
| Boundary Extension in Memory | 10-20% of participants systematically remember seeing beyond the boundaries of presented images [2] | Schema-based reconstruction fills in predictable spatial information |

Table 4: Key Research Reagent Solutions for Constructive Memory Studies

| Resource | Function/Application | Example Use |
|---|---|---|
| Spanish and English Neuropsychological Assessment Scales (SENAS) | Validated cognitive measures across racial, ethnic, and linguistic groups [3] | Longitudinal cognitive trajectory measurement in diverse aging populations |
| Structural Causal Modeling (SCM) Frameworks | Causal inference and counterfactual analysis in neural representations [4] | Disentangling causal relationships in multi-modal MRI data for tumor segmentation |
| Variational Autoencoders (VAEs) | Generative modeling of memory reconstruction processes [2] | Computational modeling of schema-based memory distortions and consolidation |
| Modern Hopfield Networks (MHNs) | Autoassociative memory for rapid episodic encoding [2] | Modeling hippocampal pattern separation and completion mechanisms |
| BraTS Multi-modal MRI Dataset | Standardized neuroimaging benchmark with T1, T2, FLAIR, T1CE modalities [4] | Evaluating segmentation algorithms and causal modeling in heterogeneous data |

Experimental Protocols for Constructive Memory Research

Protocol 1: Assessing Constructive Episodic Simulation

This protocol examines the overlap between memory and imagination, testing the constructive episodic simulation hypothesis [1].

- Participant Selection: Recruit healthy adults, patients with hippocampal damage, and patients with prefrontal lesions

- Stimulus Development: Create cue words or phrases that prompt past recall and future imagination (e.g., "birthday party")

- Task Procedure:

  - Present cues in randomized order across two conditions: past and future

  - For the past condition: "Think of a specific past event related to this cue"

  - For the future condition: "Imagine a specific future event related to this cue"

  - Allow 20 seconds for mental construction, then collect a detailed verbal description

- Data Collection:

  - Record detailed phenomenological ratings (emotionality, vividness, sensory details)

  - Collect fMRI data during the construction phase

  - Administer post-scan memory tests for generated events

- Analysis:

  - Compare neural activation patterns using whole-brain fMRI analysis

  - Calculate similarity scores between past and future networks

  - Correlate phenomenological measures with neural activity

This protocol typically reveals significant overlap in hippocampal and prefrontal activation during past and future tasks, supporting the constructive episodic simulation hypothesis [1].
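The similarity-score step can be made concrete as a pattern correlation. The sketch assumes each participant contributes one activation estimate per region of interest (ROI) for each condition, correlates the past and future vectors, and averages participants' correlations in Fisher-z space. The ROI order, the values, and the function names are hypothetical; whole-brain or multivoxel pipelines would differ in detail.

```python
import math
from typing import List


def pearson_r(x: List[float], y: List[float]) -> float:
    """Pearson correlation between two equal-length activation vectors."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def group_similarity(rs: List[float]) -> float:
    """Average per-participant r values in Fisher-z space, then back-transform."""
    zs = [math.atanh(r) for r in rs]
    return math.tanh(sum(zs) / len(zs))


# Hypothetical ROI-wise estimates (e.g., GLM betas) for one participant, in the
# order: left hippocampus, right hippocampus, mPFC, PCC, left IPL, V1.
past = [0.8, 0.7, 1.1, 1.2, 0.9, 0.1]
future = [0.9, 0.6, 1.2, 1.0, 1.0, 0.2]
r = pearson_r(past, future)
print(f"past-future pattern similarity r = {r:.2f}")
```

Averaging in Fisher-z space rather than averaging raw r values reduces the bias that arises because the sampling distribution of r is skewed near ±1.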

Protocol 2: Computational Modeling of Memory Consolidation

This protocol implements the generative model of memory construction and consolidation using teacher-student learning [2].

- Model Architecture:

  - Implement teacher network: Modern Hopfield Network (MHN) with 1000+ memory units

  - Implement student network: Variational Autoencoder (VAE) with encoder-decoder structure

  - Configure latent space dimensions (typically 50-100 units) for compressed representations

- Training Stimuli:

  - Curate a dataset of natural images or synthetic patterns representing "experiences"

  - Include both novel patterns and schema-consistent variations

- Training Procedure:

  - Phase 1: Encode patterns in the teacher (hippocampal) network

  - Phase 2: Reactivate memories through random sampling from the teacher network

  - Phase 3: Use reactivated patterns to train the student (generative) network

  - Repeat cycles (1000+ iterations) to simulate consolidation

- Testing Protocol:

  - Test reconstruction accuracy from both teacher and student networks

  - Measure schema-based distortions (e.g., boundary extension)

  - Evaluate generalization to novel but schema-consistent patterns

- Analysis Metrics:

  - Quantitative: Reconstruction error, pattern completion accuracy

  - Qualitative: Visualization of reconstructed patterns and distortions

This protocol demonstrates how hippocampal replay can train generative networks to reconstruct experiences, with schema-based distortions emerging as a natural consequence [2].
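A compressed, runnable version of this protocol can be sketched under heavy simplifying assumptions: the teacher is a single-step softmax (modern Hopfield) retrieval over stored events, replay is seeded with random noise cues, and a linear autoencoder with one latent unit stands in for the VAE student (no sampling and no KL term). The feature dimensionality, learning rate, number of replays, and all class and variable names are illustrative and far smaller than the 1000+ memory units and 50-100 latent units listed above.

```python
import math
import random
from typing import List

Vector = List[float]
D = 6  # toy feature dimensionality (illustrative assumption)


def softmax(z: Vector) -> Vector:
    m = max(z)  # subtract the max for numerical stability
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def mse(a: Vector, b: Vector) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


class Teacher:
    """Hippocampal stand-in: modern Hopfield store, one memory unit per event."""

    def __init__(self, beta: float = 20.0) -> None:
        self.memories: List[Vector] = []
        self.beta = beta

    def encode(self, x: Vector) -> None:
        self.memories.append(list(x))  # Phase 1: one-shot storage

    def replay(self, rng: random.Random) -> Vector:
        # Phase 2: a random-noise cue settles onto (a blend of) stored events.
        cue = [rng.gauss(0.0, 1.0) for _ in range(D)]
        sims = [sum(m_j * c_j for m_j, c_j in zip(m, cue)) for m in self.memories]
        w = softmax([self.beta * s for s in sims])
        return [sum(w_i * m[j] for w_i, m in zip(w, self.memories)) for j in range(D)]


class Student:
    """Neocortical stand-in: linear autoencoder, 1 latent unit (NOT a full VAE)."""

    def __init__(self, rng: random.Random, lr: float = 0.02) -> None:
        self.enc = [rng.gauss(0.0, 0.1) for _ in range(D)]
        self.dec = [rng.gauss(0.0, 0.1) for _ in range(D)]
        self.lr = lr

    def reconstruct(self, x: Vector) -> Vector:
        z = sum(e * v for e, v in zip(self.enc, x))  # latent code
        return [d * z for d in self.dec]

    def train_step(self, x: Vector) -> None:
        # Phase 3: one SGD step on L = 0.5 * ||x_hat - x||^2.
        z = sum(e * v for e, v in zip(self.enc, x))
        err = [d * z - v for d, v in zip(self.dec, x)]  # dL/dx_hat
        dz = sum(r * d for r, d in zip(err, self.dec))  # dL/dz
        self.dec = [d - self.lr * r * z for d, r in zip(self.dec, err)]
        self.enc = [e - self.lr * dz * v for e, v in zip(self.enc, x)]


rng = random.Random(0)
s3 = 1.0 / math.sqrt(3.0)
schema = [s3, s3, s3, 0.0, 0.0, 0.0]  # structure shared by all experiences
oddity = [0.0, 0.0, 0.0, s3, s3, s3]  # a direction never experienced

teacher = Teacher()
for _ in range(20):  # encode 20 schema-consistent "experiences"
    a = rng.uniform(0.5, 1.5)
    teacher.encode([a * u + rng.gauss(0.0, 0.05) for u in schema])

student = Student(rng)
for _ in range(3000):  # replay cycles simulate consolidation
    student.train_step(teacher.replay(rng))

novel = list(schema)                                     # new, schema-consistent
unusual = [u + 0.8 * o for u, o in zip(schema, oddity)]  # schema plus odd detail
err_novel = mse(student.reconstruct(novel), novel)
err_unusual = mse(student.reconstruct(unusual), unusual)
print(f"novel: {err_novel:.4f}  unusual: {err_unusual:.4f}")
```

Because the student only ever sees replayed, schema-consistent events, it reconstructs a new schema-consistent event almost perfectly but drops the unusual component of an atypical event, returning something close to the schema alone.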

Implications and Future Directions

The paradigm shift from storage to construction in episodic memory theory has profound implications for both basic neuroscience and clinical applications. In cognitive neuroscience, it provides a unified framework for understanding memory, imagination, and future thinking, suggesting these capacities rely on common constructive processes [1]. For clinical applications, it offers new approaches to memory disorders, suggesting that interventions might target constructive processes rather than focusing solely on retention.

In neuropsychology, the constructive framework explains why memory distortions follow predictable patterns rather than representing random failures [1]. This insight is particularly relevant for understanding conditions like Alzheimer's disease, where constructive processes may become disrupted in specific ways. The finding that episodic memory serves as a better proxy for cognitive reserve than education [3] has direct implications for assessing dementia risk and designing cognitive interventions.

Future research directions include developing more sophisticated generative models that better capture the neural implementation of constructive processes, investigating how different types of schemas influence construction, and exploring how constructive processes change across development and in various clinical populations. The integration of causal inference approaches [4] with generative memory models represents a particularly promising avenue for disentangling the complex relationships between brain structure, cognitive function, and memory expression.

The constructive paradigm also bridges basic memory research with artificial intelligence development. Recent work on memory-augmented artificial agents [5] demonstrates how principles from human memory construction can inform the design of more efficient and robust AI systems. Conversely, advances in AI generative models provide new conceptual tools and computational frameworks for understanding human memory, creating a productive synergy between neuroscience and artificial intelligence.

The formation and persistence of memory are fundamental to human cognition, processes critically dependent on a dynamic interplay between the hippocampus and the neocortex. This hippocampo-neocortical dialogue facilitates the initial encoding, gradual consolidation, and eventual reconstruction of lived experiences. Contemporary neuroscience frameworks increasingly conceptualize this interaction through the lens of generative models, which posit that memory recall is an active, reconstructive process rather than the passive retrieval of a perfect recording. This section provides a technical guide to the core components of this dialogue, framing the established neurobiological evidence within the context of generative models of episodic memory. It further details key experimental methodologies and reagents, offering researchers a toolkit for investigating these mechanisms and exploring their implications for therapeutic intervention.

Core Theoretical Framework: From Systems Consolidation to Generative Models

The canonical view of memory, embodied by the Complementary Learning Systems (CLS) theory, posits that the hippocampus serves as a fast-learning system for encoding episodic details, which are then gradually transferred to the neocortex for long-term, stable storage via a process called systems consolidation [2] [6]. This neocortical consolidation is thought to be mediated by the repeated reactivation or "replay" of hippocampal memory traces during offline states like sleep, which slowly trains neocortical networks [7] [8].

Modern computational perspectives have refined this view using generative models, such as Variational Autoencoders (VAEs). In this framework, the hippocampus acts as a rapid, autoassociative memory system that encodes a specific experience. Subsequent hippocampal replay of this experience then serves as a "teacher" to train a "student" generative model in the neocortex [2]. This generative model learns the underlying statistical structure, or "schema," of the events it is trained on. Once trained, the neocortical generative model can reconstruct the sensory experience of an event from a high-level latent representation. This process is highly efficient: predictable, schema-congruent aspects of an event can be reconstructed by the neocortex from the outset, while novel or unpredictable details are initially reliant on the hippocampal trace [2]. This explains why, as consolidation progresses, memories become more semanticized and prone to gist-based distortions, as they are increasingly reconstructed by the neocortical generative network based on its learned priors [2] [6].

Table 5: Key Theoretical Models of Hippocampal-Neocortical Interaction

| Model Name | Core Mechanism | Prediction on Hippocampal Role | Associated Computational Framework |
|---|---|---|---|
| Standard Systems Consolidation [2] | Gradual transfer of memory trace from hippocampus to neocortex. | Temporary role; remote memories become hippocampus-independent. | Complementary Learning Systems (CLS) |
| Multiple Trace Theory [6] | Hippocampus is engaged during retrieval to reactivate detailed episodic traces. | Permanent role for detailed, vivid episodic recall. | N/A |
| Generative Model of Consolidation [2] | Hippocampal replay trains a neocortical generative model (e.g., VAE). | Role diminishes as neocortical model learns to reconstruct the event. | Variational Autoencoder (VAE) / Teacher-Student Learning |
| Predictive Coding Model [9] | Memory replay is a generative process involving iterative message passing to minimize prediction error. | Encodes and replays prediction error for neocortical updating. | Predictive Coding Network |

Neural Mechanisms and Functional Anatomy

The hippocampo-neocortical dialogue is supported by a specific neuroanatomical architecture and rhythmic neural activity.

Anatomical and Functional Specialization

The hippocampus is not a uniform structure. There is functional specialization along its longitudinal axis: the anterior hippocampus (in humans) is more strongly connected to affective and schema-related areas like the amygdala and medial prefrontal cortex (mPFC), processing global context and emotion. In contrast, the posterior hippocampus connects more with posterior perceptual regions, supporting detailed spatial and contextual representations [6]. This is complemented by content-specific processing in the medial temporal lobe, where the perirhinal cortex processes object information and the parahippocampal cortex processes scene information, all funneling into the hippocampus for integration [6].

Functional connectivity studies reveal that while the anterior and posterior hippocampus maintain distinct but stable connectivity profiles with the neocortex during both rest and task states, this baseline is superposed by task-specific changes. Notably, during memory retrieval, there is a significant upregulation of hippocampal connectivity with a "recollection network" including the mPFC, inferior parietal, and parahippocampal cortices [10].

The Role of Sleep and Neural Oscillations

The dialogue is profoundly active during sleep, where a coordinated interplay of neural oscillations drives consolidation. The core mechanism involves a neocortical-hippocampal-neocortical reactivation loop initiated by the neocortex [8]. The process can be broken down as follows:

- The cortical Slow Oscillation (SO; <1 Hz) orchestrates the process, creating alternating windows of neuronal excitability (up-states) and inhibition (down-states).

- Thalamocortical Sleep Spindles (12-16 Hz) are nested in the SO up-states.

- Spindles, in turn, group and modulate the occurrence of hippocampal Sharp-Wave Ripples (SW-Rs; 80-200 Hz), which are associated with the reactivation of memory traces.

- This coupling creates a window for effective communication, where spindle power concurrently increases in both the neocortex and hippocampus time-locked to SW-Rs, significantly enhancing functional connectivity between the two structures [8].

Table 6: Key Neural Oscillations in Sleep-Dependent Memory Consolidation

| Oscillation | Location | Frequency | Primary Function in Consolidation |
|---|---|---|---|
| Slow Oscillation (SO) | Neocortex | <1 Hz | Provides a global temporal framework; organizes spindle and ripple events. |
| Sleep Spindle | Thalamocortical | 12-16 Hz | Gates synaptic plasticity; mediates hippocampal-neocortical coupling during ripples. |
| Sharp-Wave Ripple (SW-R) | Hippocampus | 80-200 Hz | Tags hippocampal memory traces for reactivation and redistribution. |

The following diagram illustrates this coordinated mechanism during sleep:

Figure 1: Sleep-Dependent Memory Consolidation Mechanism. Neocortical slow oscillations trigger thalamocortical spindles, which group hippocampal sharp-wave ripples to mediate memory reactivation and consolidation.

Experimental Protocols for Investigating the Dialogue

Protocol: Assessing Hippocampal-Neocortical Connectivity with fMRI

This protocol identifies network interactions during memory encoding and retrieval [10].

- Participant Preparation: Recruit a large cohort (e.g., N > 200) to ensure statistical power. Obtain informed consent.

- Task Design: Administer different episodic memory tasks (e.g., item-context association encoding) during functional MRI (fMRI) scanning. Tasks must have distinct, separable encoding and retrieval phases.

- Data Acquisition: Collect high-resolution T1-weighted anatomical scans and T2*-weighted echo-planar imaging (EPI) sequences for BOLD fMRI during task performance and a resting-state period.

- Preprocessing: Perform standard preprocessing steps: slice-time correction, realignment, co-registration to anatomical scan, normalization to standard space (e.g., MNI), and smoothing.

- Hippocampal Subregion Definition: Segment the hippocampus using an automated tool (e.g., FreeSurfer) and divide it into anterior and posterior segments (commonly at the level of the uncal apex) to define the anterior and posterior hippocampal seeds.

- Functional Connectivity Analysis: Use a psychophysiological interaction (PPI) or correlational PPI (cPPI) analysis. Model the seed region's timecourse and its interaction with the task condition (encoding vs. retrieval) to identify voxels in the neocortex where connectivity with the hippocampus is modulated by the memory process.

- Conjunctive Analysis: To isolate patterns specific to core memory processes, perform a conjunctive analysis across multiple different memory tasks.
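The core of a PPI model can be sketched as an ordinary regression with three regressors of interest: the seed timecourse, the task (psychological) regressor, and their product. This is the simple form of PPI applied directly to BOLD signals; many packages instead deconvolve the seed signal to estimated neural activity before forming the product, and generalized PPI uses one interaction term per condition. The block structure, simulated data, and the function names `ppi_design` and `ols` are hypothetical.

```python
import random
from typing import List


def ppi_design(seed: List[float], psych: List[float]) -> List[List[float]]:
    """Design rows [intercept, seed, psych, PPI]; the PPI term is the product
    of the mean-centred seed timecourse and the mean-centred task regressor."""
    ms = sum(seed) / len(seed)
    mp = sum(psych) / len(psych)
    return [[1.0, s, p, (s - ms) * (p - mp)] for s, p in zip(seed, psych)]


def ols(X: List[List[float]], y: List[float]) -> List[float]:
    """Least squares via the normal equations, solved by Gauss-Jordan elimination."""
    k = len(X[0])
    # Augmented matrix [X^T X | X^T y]
    A = [[sum(row[i] * row[j] for row in X) for j in range(k)]
         + [sum(row[i] * yi for row, yi in zip(X, y))] for i in range(k)]
    for c in range(k):
        piv = max(range(c, k), key=lambda i: abs(A[i][c]))  # partial pivoting
        A[c], A[piv] = A[piv], A[c]
        for r in range(k):
            if r != c:
                f = A[r][c] / A[c][c]
                A[r] = [a - f * b for a, b in zip(A[r], A[c])]
    return [A[i][k] / A[i][i] for i in range(k)]


# Synthetic example: alternating 20-volume blocks, retrieval (+1) vs encoding (-1).
rng = random.Random(42)
n = 200
psych = [1.0 if (t // 20) % 2 else -1.0 for t in range(n)]
seed = [rng.gauss(0.0, 1.0) for _ in range(n)]
X = ppi_design(seed, psych)
# Hypothetical target voxel whose coupling with the seed rises during retrieval.
voxel = [0.2 * row[1] + 0.6 * row[3] + rng.gauss(0.0, 0.1) for row in X]
b0, b_seed, b_task, b_ppi = ols(X, voxel)
print(f"PPI beta = {b_ppi:.2f}")  # should be near the simulated 0.6
```

A voxel with a reliably non-zero PPI coefficient is one whose coupling with the hippocampal seed differs between encoding and retrieval, over and above the main effects of the seed and of the task.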

Protocol: Quantifying Sleep-Dependent Consolidation with iEEG/EEG

This protocol tests the role of sleep oscillations in the hippocampo-neocortical dialogue [8].

- Participant Preparation: Patients with intracranial EEG (iEEG) electrodes implanted in the hippocampus and neocortex (e.g., for epilepsy monitoring) are ideal. Alternatively, use high-density scalp EEG with a hippocampal source reconstruction model.

- Memory Encoding: Participants learn a declarative memory task (e.g., paired-associates) before sleep.

- Sleep Recording: Conduct whole-night polysomnography, including iEEG/EEG, EOG, and EMG for sleep staging.

- Event Detection:

  - Ripples: Detect hippocampal SW-Rs by band-pass filtering the hippocampal signal (80-200 Hz), calculating the root mean square (RMS) amplitude, and using an amplitude threshold (e.g., >3 SD above mean).

  - Spindles: Detect neocortical (e.g., at Cz) and hippocampal spindles by filtering (12-16 Hz) and using an automatic detection algorithm (e.g., based on amplitude and duration).

  - Slow Oscillations: Detect SOs by filtering the neocortical signal (0.5-1.5 Hz) and identifying negative peaks.

- Analysis of Coupling:

  - Time-Frequency Representations (TFRs): Lock TFRs to the peak of hippocampal ripples and compare power to control (ripple-free) events.

  - Coherence Analysis: Calculate spectral coherence between neocortical and hippocampal signals within the spindle frequency band (12-16 Hz) around ripple events.

  - Nested Analysis: Assess the temporal coincidence of ripples and spindles with SO up-states.

- Memory Testing: Assess memory performance after sleep and correlate recall success with the measures of neural coupling (e.g., ripple-spindle coherence).
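The ripple-detection and nesting steps can be sketched as follows, assuming the hippocampal trace has already been band-pass filtered to 80-200 Hz; the filtering itself is omitted and would normally use a zero-phase filter from a signal-processing library. The synthetic trace (unit noise plus three 100 Hz bursts) is only a stand-in for a filtered recording, and the 21-sample RMS window, 20 ms minimum duration, spindle intervals, and function names are hypothetical values chosen for illustration.

```python
import math
import random
from typing import List, Optional, Tuple

Interval = Tuple[int, int]  # [start, end) in samples


def rms_envelope(x: List[float], half: int) -> List[float]:
    """Centred moving RMS over 2*half+1 samples (shorter at the edges).
    `x` is assumed to be already band-pass filtered to the ripple band."""
    out = []
    for i in range(len(x)):
        seg = x[max(0, i - half): i + half + 1]
        out.append(math.sqrt(sum(v * v for v in seg) / len(seg)))
    return out


def detect_events(env: List[float], n_sd: float, min_len: int) -> List[Interval]:
    """Runs where env exceeds mean + n_sd * SD for at least `min_len` samples."""
    mu = sum(env) / len(env)
    sd = math.sqrt(sum((v - mu) ** 2 for v in env) / len(env))
    thr = mu + n_sd * sd
    events: List[Interval] = []
    start: Optional[int] = None
    for i, v in enumerate(env + [-math.inf]):  # sentinel closes a trailing run
        if v > thr and start is None:
            start = i
        elif v <= thr and start is not None:
            if i - start >= min_len:
                events.append((start, i))
            start = None
    return events


def fraction_nested(inner: List[Interval], outer: List[Interval]) -> float:
    """Share of `inner` events whose midpoint lies inside any `outer` event."""
    hits = sum(any(s <= (a + b) // 2 < e for s, e in outer) for a, b in inner)
    return hits / len(inner) if inner else 0.0


# Synthetic 3 s trace at 1 kHz: unit noise plus three 40 ms, 100 Hz bursts.
fs = 1000
rng = random.Random(1)
signal = [rng.gauss(0.0, 1.0) for _ in range(3 * fs)]
for onset in (500, 1200, 2800):
    for k in range(40):
        signal[onset + k] += 8.0 * math.sin(2 * math.pi * 100 * k / fs)

ripples = detect_events(rms_envelope(signal, half=10), n_sd=3.0, min_len=20)
spindles = [(400, 1400), (2000, 2600)]  # hypothetical spindle intervals (samples)
print(ripples, fraction_nested(ripples, spindles))
```

The same `fraction_nested` helper can score the coincidence of ripples or spindles with SO up-states by passing up-state intervals as `outer`.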

The Scientist's Toolkit: Research Reagent Solutions

Table 7: Essential Reagents and Resources for Memory Research

| Resource / Reagent | Function in Experimental Research | Technical Specification / Example |
|---|---|---|
| Intracranial EEG (iEEG) | Provides direct, high-fidelity recording of hippocampal ripples and neocortical oscillations in humans. | Depth electrodes implanted in hippocampus; subdural grids on neocortex. |
| Functional MRI (fMRI) | Measures hippocampal-neocortical connectivity (e.g., with PPI analysis) during memory tasks. | 3T or 7T scanner; BOLD contrast; event-related design. |
| Variational Autoencoder (VAE) | Computational model simulating the neocortical generative network trained by hippocampal replay. | Architecture includes encoder, latent space, and decoder; trained on sensory input. |
| Modern Hopfield Network (MHN) | Computational model simulating the hippocampal autoassociative memory for rapid episodic encoding. | Network that binds features of an event into a memory unit [2]. |
| Mooney Images | Visual stimuli used to induce and study insight and memory formation in fMRI paradigms [11]. | High-contrast, two-tone images of real-world objects that are difficult to recognize. |

Emerging Insights and Future Directions

Generative models provide a unified account of various cognitive phenomena. The same neocortical generative network trained by hippocampal replay supports not only memory recall but also imagination and episodic future thinking by sampling from latent variables to construct novel scenarios [2]. Furthermore, the predictive coding framework models this interaction as a process of minimizing prediction error, where the hippocampus encodes mismatches (novelty) and relays them to the neocortex for model updating [9].
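The prediction-error idea can be illustrated with a minimal inference loop in the style of classic linear predictive coding: error units compute the mismatch between the input and the top-down prediction, and the latent estimate is nudged to reduce it. The linear generative weights `W`, the unit Gaussian prior, the step size, and the function names are assumptions for illustration and not the network described in [9].

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]  # D x K generative weights (latent -> input)


def infer_latent(W: Matrix, x: Vector, lr: float = 0.05, steps: int = 200) -> Vector:
    """Predictive-coding inference for x ~ N(W z, I), z ~ N(0, I).

    Error units carry eps = x - W z; the latent descends the energy
    0.5*||x - W z||^2 + 0.5*||z||^2 via z <- z + lr * (W^T eps - z).
    """
    K = len(W[0])
    z = [0.0] * K
    for _ in range(steps):
        pred = [sum(W[d][k] * z[k] for k in range(K)) for d in range(len(x))]
        eps = [xd - pd for xd, pd in zip(x, pred)]
        z = [z[k] + lr * (sum(W[d][k] * eps[d] for d in range(len(x))) - z[k])
             for k in range(K)]
    return z


def prediction_error(W: Matrix, x: Vector, z: Vector) -> float:
    """Squared residual ||x - W z||^2 left after inference (a novelty signal)."""
    K = len(z)
    return sum((x[d] - sum(W[d][k] * z[k] for k in range(K))) ** 2
               for d in range(len(x)))


W = [[1.0], [2.0], [2.0]]      # one learned latent cause (hypothetical)
familiar = [1.0, 2.0, 2.0]     # well explained by W
surprising = [2.0, -1.0, 0.0]  # orthogonal to W: cannot be explained
z_fam = infer_latent(W, familiar)
z_sur = infer_latent(W, surprising)
print(round(z_fam[0], 3))  # 0.9, the MAP value 9 / (9 + 1)
print(prediction_error(W, familiar, z_fam) < prediction_error(W, surprising, z_sur))  # True
```

Inference converges to the MAP estimate `(W^T W + I)^{-1} W^T x`; the large residual left for the `surprising` input is the kind of mismatch signal that, in this framework, would be passed on for model updating.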

Recent research also highlights factors that enhance memory encoding by engaging this dialogue. For instance, insight during problem-solving—characterized by representational change in the cortex and coupled activity in the hippocampus and amygdala—predicts stronger subsequent memory, suggesting it optimally triggers the mechanisms of the hippocampo-neocortical dialogue [11].

The outlined mechanisms and experimental approaches provide a foundation for exploring novel therapeutic targets. Compounds or stimulation techniques designed to selectively enhance the coupling between sleep spindles and hippocampal ripples, for instance, could offer promising pathways for treating memory-related disorders by directly modulating the core engine of systems consolidation.

Within the field of memory research, the notion that remembering is an active, reconstructive process is paramount. This perspective posits that memory recall is not a simple playback of stored information but rather a construction that integrates traces of past events with general knowledge, expectations, and beliefs [12]. Central to this process are schemas, which are mental frameworks that organize and store abstract knowledge about the world, objects, and events [13]. These schemas profoundly influence how memories are encoded, consolidated, and retrieved, often leading to efficient memory function but also to characteristic distortions [2] [13].

This section frames the role of schemas within the context of contemporary generative models of episodic memory construction. These computational models propose that the brain, specifically the neocortex, learns a generative model of the world: a system that captures the statistical regularities or "schemas" of experiences [2]. This generative model can then be used to reconstruct past experiences, imagine future events, or support semantic knowledge. The hippocampus is thought to act as an autoassociative network that rapidly encodes specific episodes, which then train the neocortical generative model through processes like replay, a mechanism underlying systems consolidation [2]. From this viewpoint, schema-based memory distortions are not mere errors but are inherent features of a memory system that optimally combines detailed sensory information with efficient, schema-based predictions.

Theoretical Framework: Generative Models of Memory

The generative model of memory construction and consolidation provides a comprehensive computational framework for understanding how schemas and episodic details are integrated [2]. In this model, the hippocampal formation rapidly encodes an event, binding its various features into an autoassociative memory trace. Crucially, this trace is not the final storage of the memory. Instead, through hippocampal replay—the reactivation of neural activity patterns during rest—the neocortex is trained.

The neocortex, encompassing regions like the entorhinal cortex, medial prefrontal cortex (mPFC), and anterolateral temporal cortices, is conceived as implementing a generative model, often computationally instantiated as a variational autoencoder (VAE) [2]. This model gradually learns the probability distributions, or schemas, that underlie the events it is trained on. During memory recall, particularly after consolidation has occurred, the neocortical generative model is activated to (re)construct the sensory experience from its latent variable representations. The generative model thus supports not only the recall of 'facts' (semantic memory) but also the reconstruction of experiences (episodic memory) [2].
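To make the VAE formulation concrete, the sketch below computes the quantities a VAE optimizes: an encoder that maps an input to the mean and log-variance of a latent Gaussian, a reparameterized latent sample, a decoder reconstruction, and the negative evidence lower bound (reconstruction error plus the KL divergence to a standard-normal prior). It is a minimal NumPy illustration with untrained linear layers and arbitrary sizes; the class `TinyLinearVAE` is hypothetical, no training loop is shown, and it is not the architecture used in [2].

```python
import numpy as np


def kl_to_standard_normal(mu, logvar):
    """KL(N(mu, diag(exp(logvar))) || N(0, I)), summed over latent dimensions."""
    return 0.5 * np.sum(np.exp(logvar) + mu**2 - 1.0 - logvar, axis=-1)


class TinyLinearVAE:
    """Untrained linear-Gaussian VAE, used only to show the computations."""

    def __init__(self, n_input, n_latent, rng):
        self.W_mu = rng.normal(scale=0.1, size=(n_input, n_latent))
        self.W_logvar = rng.normal(scale=0.1, size=(n_input, n_latent))
        self.W_dec = rng.normal(scale=0.1, size=(n_latent, n_input))

    def encode(self, x):
        # Encoder: maps an experience to the parameters of q(z | x).
        return x @ self.W_mu, x @ self.W_logvar

    def decode(self, z):
        # Decoder (generative model): maps latent variables back to "sensory" space.
        return z @ self.W_dec

    def negative_elbo(self, x, rng):
        mu, logvar = self.encode(x)
        # Reparameterization: z = mu + sigma * eps with eps ~ N(0, I).
        z = mu + np.exp(0.5 * logvar) * rng.standard_normal(mu.shape)
        x_hat = self.decode(z)
        # Negative Gaussian log-likelihood (unit variance), constant dropped.
        reconstruction = 0.5 * np.sum((x - x_hat) ** 2, axis=-1)
        return float(np.mean(reconstruction + kl_to_standard_normal(mu, logvar)))


rng = np.random.default_rng(0)
vae = TinyLinearVAE(n_input=20, n_latent=3, rng=rng)
experiences = rng.normal(size=(8, 20))                # 8 hypothetical events
loss = vae.negative_elbo(experiences, rng)            # quantity training would minimize
imagined = vae.decode(rng.standard_normal((1, 3)))    # sample from the prior and decode
```

Training would adjust the weights to minimize `loss` over many replayed events. The last line shows how the same decoder can produce a novel pattern from a prior sample, the operation the framework associates with imagination.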

This framework explains several key memory phenomena:

- Semanticization: As consolidation proceeds, the memory becomes more reliant on the neocortical generative model, making it more abstract and conceptual, and less dependent on the hippocampal trace [2].

- Imagination and Future Thinking: The same generative network used for reconstruction can be sampled to construct novel, plausible scenes, explaining the shared neural substrates for memory and imagination [2].

- Schema-Based Distortions: If the generative model's schema is strong, it may "fill in" predictable but unexperienced details during reconstruction, leading to errors like boundary extension or false memories [2]. The model posits that unpredictable aspects of an experience need to be stored in hippocampal detail, while fully predicted aspects do not, optimizing the use of limited hippocampal storage [2].
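The storage principle in the last point can be sketched as a rule that sends only poorly predicted features to the hippocampal trace and lets the schema fill in the rest at recall. This is a hypothetical toy: the feature vectors, the absolute-error measure, the fixed threshold, and the helper names `encode_episode` and `recall_episode` are assumptions chosen for illustration, not parameters from [2].

```python
import numpy as np


def encode_episode(observed, predicted, threshold):
    """Store only the features the schema failed to predict (hypothetical rule)."""
    error = np.abs(observed - predicted)          # per-feature prediction error
    mask = error > threshold
    return {"indices": np.flatnonzero(mask), "values": observed[mask]}


def recall_episode(trace, predicted):
    """Reconstruct: schema prediction everywhere, overwritten by stored details."""
    reconstruction = predicted.copy()
    reconstruction[trace["indices"]] = trace["values"]
    return reconstruction


schema_prediction = np.array([1.0, 0.0, 0.5, 0.5, 0.0])
observed_event = np.array([1.0, 0.1, 0.5, 2.0, 0.0])    # feature 3 is surprising

trace = encode_episode(observed_event, schema_prediction, threshold=0.3)
recalled = recall_episode(trace, schema_prediction)
```

Feature 3, which the schema failed to predict, is stored and recalled accurately, whereas the small deviation in feature 1 falls below threshold and is recalled as the schema's prediction: a miniature schema-consistent distortion produced by an economical storage rule.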

Neural Substrates of Schema-Based Memory

The generative model aligns with neurobiological evidence suggesting a division of labor between different brain regions. The hippocampus is critical for the initial encoding and detailed recollection of individual episodes [14]. In contrast, schema knowledge is thought to be supported by neocortical regions, particularly the medial prefrontal cortex (mPFC) [14]. Some models propose a complementary relationship between these systems, while others suggest a competitive or inhibitory one, where engagement of cortical schema representations can suppress hippocampal activity [14].

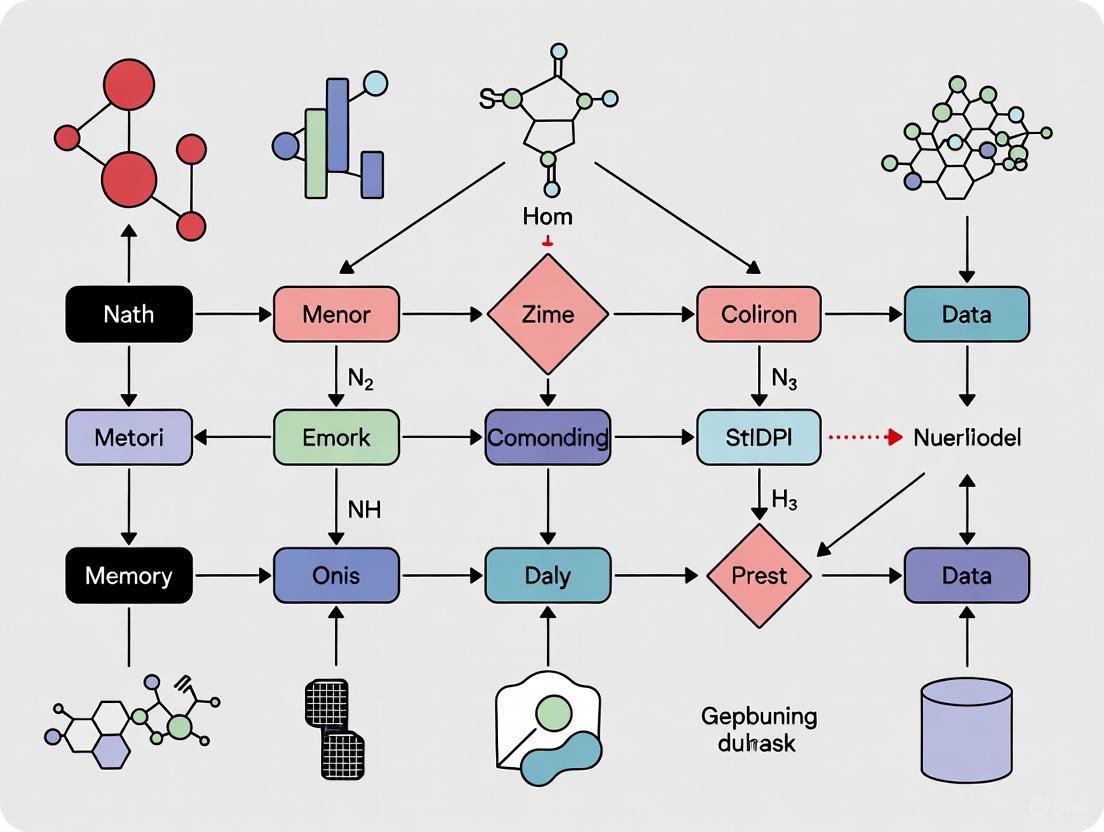

Diagram 1: Generative Model of Memory Construction. This figure illustrates the proposed flow of information in the generative model of memory. During encoding, the hippocampus binds features of an event. Through replay, it trains the neocortical generative model (schema). During recall, the neocortex performs a schema-based reconstruction, which can be supplemented by detailed information from the hippocampus.

Experimental Evidence: Schema Influences on Memory

Empirical research has robustly demonstrated how schemas shape memory. The following table summarizes key experimental paradigms and their findings regarding schema effects.

Table 1: Key Experimental Paradigms on Schema and Memory

| Experimental Paradigm | Key Finding | Implication for Memory Reconstruction |
|---|---|---|
| Bartlett's "War of the Ghosts" [13] [12] | Participants recalling a foreign folk tale omitted unfamiliar elements and altered details to fit their own cultural schemas. | Recall is a reconstructive process guided by schematic knowledge, not a reproductive one. |
| Carmichael, Hogan, & Walter (1932) [13] | Participants' drawings of ambiguous figures were biased toward the verbal label provided (e.g., "barbell" vs. "eyeglasses"). | Verbal labels can be incorporated into the memory representation, biasing its later reconstruction. |
| Object-Scene Search Task [14] | Memory for an object's location was more accurate when it was in a schema-congruent location; this effect was eliminated for recollected scenes. | Episodic memory strength modulates schema use; strong recollection can override schema bias. |

Detailed Methodology: Object-Scene Search Task

A recent line of research provides a detailed methodology for examining how episodic memory strength modulates the use of schema knowledge [14].

Participants

- In the initial online study, 133 undergraduate participants were included after pre-experimental attention checks (150 were originally recruited, with exclusions for technical issues or not following instructions) [14].

- A replication study was conducted with a separate sample of 59 participants [14].

Materials and Stimuli

- Scenes: Participants viewed a series of scenes, each containing a target object.

- Schema Congruency Manipulation: The critical manipulation was the location of the target object within the scene.

  - Schema-Congruent: The target object was placed in a semantically expected location (e.g., a toothbrush next to a sink).

  - Schema-Incongruent: The target object was placed in an unusual location (e.g., a toothbrush next to a bathtub) [14].

Procedure

- Study Phase: Participants searched for and clicked on the target object in each scene.

- Test Phase: Participants were shown a mixture of old (studied) and new scenes, now without the target object. Their tasks were:

  - Spatial Recall: Indicate the precise location the target object had occupied during the study phase.

  - Recognition Memory Judgment: Provide a confidence-based recognition judgment for the scene itself. This scale was designed to index different types and strengths of memory:

    - Recollection: Confident recognition with the ability to remember specific details about the study event.

    - Familiarity Strength: A scale of recognition confidence without specific recollection.

    - Unconscious Memory: Assessed by comparing performance on studied scenes that participants were highly confident were "new" (high-confidence misses) versus truly new scenes [14].

Quantitative Results

The following table summarizes the core quantitative findings from the experiment, demonstrating the interaction between memory strength and schema bias.

Table 2: Influence of Memory Strength on Schema Bias in Spatial Recall [14]

| Memory Strength / Type | Effect of Schema Congruency on Spatial Recall Accuracy | Interpretation |
|---|---|---|
| New Scenes (Baseline) | Strongest schema-congruency effect | Performance relies entirely on prior schema in the absence of episodic memory. |
| Unconscious Memory | Schema-congruency effect present, but reduced compared to new scenes | A weak memory trace can begin to moderate reliance on schema. |
| Familiarity Strength | Schema-congruency effect decreased further as familiarity strength increased | Increasing memory strength progressively reduces schema bias. |
| Recollection | Schema-congruency effect was eliminated entirely | Strong, detailed episodic memory can completely override schematic biases. |

A further key finding was that when participants recollected an incongruent scene but could not correctly remember the target location, their guesses were still biased away from the schema-congruent regions. This suggests that recollection can suppress detrimental schema bias even when precise spatial information is not available [14].
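The analysis behind Table 2 amounts to sorting trials by memory state and, within each state, comparing spatial-recall accuracy for congruent versus incongruent object locations. The sketch below assumes a hypothetical response coding ("R" for recollection, "new-sure" for a high-confidence "new" response, integers for familiarity strength), a hypothetical trial format, and, for new scenes, accuracy scored against the location assigned to that scene in the design. The actual scale and accuracy criterion in [14] may differ, and the example trials are invented.

```python
from collections import defaultdict


def memory_state(is_old, response):
    """Map a recognition response onto the memory categories of Table 2.

    Hypothetical coding (not the scale used in [14]): "R" = recollection,
    "new-sure" = high-confidence "new", integers 1-4 = familiarity strength.
    """
    if not is_old:
        return "new scene"
    if response == "R":
        return "recollection"
    if response == "new-sure":
        return "unconscious memory"
    return f"familiarity {response}"


def congruency_effects(trials):
    """Spatial-recall accuracy for congruent minus incongruent trials, per memory state."""
    outcomes = defaultdict(lambda: {True: [], False: []})
    for trial in trials:
        state = memory_state(trial["old"], trial["response"])
        outcomes[state][trial["congruent"]].append(trial["correct"])
    effects = {}
    for state, by_condition in outcomes.items():
        if by_condition[True] and by_condition[False]:   # need both conditions
            accuracy = {c: sum(v) / len(v) for c, v in by_condition.items()}
            effects[state] = accuracy[True] - accuracy[False]
    return effects


# Invented example trials: location recall is scored as correct/incorrect.
trials = [
    {"old": False, "response": "new-sure", "congruent": True, "correct": True},
    {"old": False, "response": "new-sure", "congruent": False, "correct": False},
    {"old": True, "response": "R", "congruent": True, "correct": True},
    {"old": True, "response": "R", "congruent": False, "correct": True},
]
effects = congruency_effects(trials)
```

A positive value indicates a schema-congruency advantage; in this toy data the effect is present for new scenes and absent for recollected ones, mirroring the first and last rows of Table 2.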

The Scientist's Toolkit: Research Reagents and Materials

The following table details key components and their functions in the described research on schemas and memory, particularly drawing from the experimental paradigm outlined above [14].

Table 3: Essential Materials for Schema-Memory Interaction Research

| Item / Concept | Function in Research |
|---|---|
| Scene Stimuli Set | A standardized set of images depicting common environments (e.g., kitchens, offices, bathrooms) used to evoke consistent semantic schemas across participants. |
| Schema-Congruent & Incongruent Object Locations | The experimental manipulation where target objects are placed in either typical or atypical locations within scenes to create congruent and incongruent trials. |
| Confidence-Based Recognition Scale | A psychometric tool that allows for the dissociation of different memory states (recollection, familiarity, unconscious memory) based on participant confidence and subjective experience. |
| Eye-Tracking Apparatus | Used in related studies to measure gaze patterns, providing an implicit measure of how attention is driven by semantic information versus memory in scenes [14]. |
| fMRI/MRI | Neuro-imaging technology used to identify distributed brain activation during encoding and retrieval, particularly in the medial temporal lobe and prefrontal cortex [13] [14]. |

Taken together, the evidence indicates that schemas, acting as priors for reconstruction, are fundamental to how memory operates. The generative model of memory provides a computational framework that explains not only why schemas cause distortions but also how they contribute to memory efficiency, semanticization, and imagination. Empirical evidence, such as the finding that recollection eliminates schema-congruency biases, demonstrates a dynamic interplay between episodic and semantic systems. Rather than a faithful recording, memory is a skilled reconstruction that blends the raw materials of the past with the blueprints of prior knowledge to build our remembered reality.

The Complementary Learning Systems (CLS) theory provides a foundational framework for understanding how the brain supports learning and memory. This theory posits that the brain operates two distinct but interacting learning systems: a rapid, episodic memory system in the hippocampus, and a slower, semantic memory system in the neocortex. Within the broader context of generative models of episodic memory construction research, this framework has been substantially extended and formalized to explain not only memory consolidation but also imagination, future thinking, and systematic memory distortions. Modern computational implementations have refined the original CLS framework using generative artificial intelligence approaches, particularly variational autoencoders (VAEs) and modern Hopfield networks, to create more unified accounts of memory construction, consolidation, and retrieval. These advances bridge theoretical neuroscience with practical applications, including novel approaches to drug discovery and molecular design, by providing principled models of how experience is transformed into structured knowledge.

Core Computational Frameworks and Their Neural Correlates

The Complementary Learning Systems (CLS) Framework

The standard CLS framework proposes that experiences are rapidly encoded in the hippocampus through pattern separation mechanisms, enabling distinct representations of similar episodes without interference. Through hippocampal replay during rest, these episodic representations gradually train distributed neocortical networks to extract statistical regularities across experiences, forming semantic knowledge that supports generalization. This framework explains key neuropsychological observations, including the temporal gradient of retrograde amnesia following hippocampal damage, where recent memories are impaired while remote memories are preserved [2]. The hippocampal system employs sparse, pattern-separated codes to minimize interference during rapid encoding, while the neocortical system employs overlapping, distributed representations to extract commonalities and support flexible generalization [15].
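The contrast between sparse, pattern-separated hippocampal codes and overlapping cortical codes can be illustrated with a classic toy model of pattern separation: a random expansion followed by k-winners-take-all sparsification. In this sketch (a simplification with arbitrary sizes and a hypothetical `sparse_code` helper, not a model taken from [2] or [15]), two similar input patterns yield sparse codes that overlap less than the inputs themselves, which is what limits interference between similar episodes.

```python
import numpy as np


def sparse_code(x, projection, k):
    """Random expansion followed by k-winners-take-all (binary output)."""
    activity = projection @ x
    code = np.zeros_like(activity)
    code[np.argsort(activity)[-k:]] = 1.0   # only the k most active units fire
    return code


def cosine(a, b):
    return float(a @ b / (np.linalg.norm(a) * np.linalg.norm(b)))


rng = np.random.default_rng(1)
n_input, n_hidden, k = 100, 2000, 100            # 5% of expanded units active
projection = rng.standard_normal((n_hidden, n_input))

episode_a = rng.standard_normal(n_input)
# A similar episode: mostly shared structure plus some new detail.
episode_b = 0.9 * episode_a + np.sqrt(1 - 0.9**2) * rng.standard_normal(n_input)

code_a = sparse_code(episode_a, projection, k)
code_b = sparse_code(episode_b, projection, k)
input_similarity = cosine(episode_a, episode_b)
code_similarity = cosine(code_a, code_b)         # fraction of shared active units
```

The dense inputs remain highly similar, while the sparse codes share a noticeably smaller fraction of active units; raising the sparsity (smaller `k`) pushes the codes further apart.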

Generative Models of Memory Construction and Consolidation

Recent advances have formalized memory consolidation as the training of generative models through hippocampal replay. In this framework, the hippocampus acts as an autoassociative network that initially encodes events, then trains generative networks (implemented as VAEs) in sensory and association cortices to recreate sensory experiences from latent variable representations [2]. This approach explains how unique sensory and predictable conceptual elements of memories are stored and reconstructed by efficiently combining both hippocampal and neocortical systems. The generative model perspective provides mechanisms for semantic memory formation, imagination, episodic future thinking, relational inference, and schema-based distortions including boundary extension. During perception, the generative model provides ongoing estimates of novelty through reconstruction error (prediction error), determining which aspects of an event require detailed hippocampal encoding versus which can be efficiently handled by existing cortical schemas [2].

GENESIS: A Unified Framework for Episodic-Semantic Integration

The Generative Episodic-Semantic Integration System (GENESIS) model addresses limitations of standard CLS theory by formalizing memory as the interaction between two limited-capacity generative systems: a Cortical-VAE supporting semantic learning and generalization, and a Hippocampal-VAE supporting episodic encoding and retrieval within a retrieval-augmented generation architecture [16]. This framework implements bidirectional interactions between semantic and episodic systems, explaining how cortical representations influence episodic encoding from the outset, and how semantic knowledge introduces systematic distortions during episodic recall. GENESIS reproduces a wide range of behavioral phenomena, including generalization in semantic memory, recognition and serial recall effects, gist-based distortions in episodic memory, and constructive episodic simulation. The model's architecture reflects the insight that episodic encoding inherently depends on pre-existing cortical representations, with the hippocampus receiving highly processed inputs from the entorhinal cortex [16].
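The retrieval step of a retrieval-augmented architecture can be sketched as a key-value store queried by cosine similarity, the matching scheme listed for RAG in Table 3 below. This is a hypothetical toy, not the GENESIS implementation: the class `EpisodicStore`, the three-dimensional keys (standing in for cortical latent codes of each episode), and the string values are all illustrative assumptions.

```python
import numpy as np


class EpisodicStore:
    """Toy key-value episodic memory with cosine-similarity retrieval (hypothetical)."""

    def __init__(self):
        self.keys, self.values = [], []

    def write(self, key, value):
        # One-shot storage: each episode is written once, with no gradient training.
        self.keys.append(np.asarray(key, dtype=float))
        self.values.append(value)

    def retrieve(self, query, top_k=1):
        keys = np.stack(self.keys)
        query = np.asarray(query, dtype=float)
        sims = keys @ query / (np.linalg.norm(keys, axis=1) * np.linalg.norm(query))
        best = np.argsort(sims)[::-1][:top_k]
        return [(self.values[i], float(sims[i])) for i in best]


store = EpisodicStore()
# Keys stand in for cortical (latent) codes of each episode; values for its content.
store.write([1.0, 0.0, 0.2], "breakfast in the kitchen")
store.write([0.0, 1.0, 0.1], "meeting in the office")
retrieved = store.retrieve([0.9, 0.1, 0.3])   # cue resembling the first episode
```

In a retrieval-augmented design, the retrieved content would then condition the generative decoder during recall; that generation step is omitted here.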

Table 1: Key Computational Frameworks in Memory Research

| Framework | Core Components | Neural Correlates | Key Innovations |
|---|---|---|---|
| Standard CLS | Hippocampal rapid encoding, cortical slow learning | Hippocampus (pattern separation), Neocortex (statistical learning) | Separation of learning timescales, replay-based consolidation [2] |
| Generative Memory Model | Hippocampal autoassociative network, Cortical VAEs | Entorhinal cortex (latent variables), Sensory cortices (reconstruction) | Memory as generative process, explains construction and distortion [2] |
| GENESIS | Cortical-VAE, Hippocampal-VAE, RAG architecture | Medial temporal lobe, Association cortices | Bidirectional episodic-semantic interaction, capacity limits [16] |
| MEM-α | Reinforcement learning memory management | Not specified (computational model) | Learned memory construction via reinforcement learning [17] |

Quantitative Comparisons and Empirical Validation

Behavioral Phenomena Accounted For by Different Frameworks

Each framework varies in its ability to explain key behavioral phenomena in human memory. The standard CLS theory successfully accounts for the initial rapid encoding of memories and their gradual consolidation, the temporal gradient of retrograde amnesia, and the extraction of statistical regularities from experiences. The generative model extension additionally explains vivid episodic recollection as a constructive process, systematic schema-based distortions during recall, imagination and future thinking, and the efficient use of hippocampal storage for novel information [2]. GENESIS further accounts for semantic intrusions during episodic recall, generalization to novel combinations of learned elements, recency and serial-order effects in free recall, and the constructive recombination of episodes during simulation [16].

Neurophysiological Evidence and Constraints

Recent single-unit recordings from the human hippocampus provide direct evidence for sparse coding of episodic memories, a key prediction of computational models. Remembered items that elicited increased firing during encoding were associated with sparse, pattern-separated neural codes at retrieval, specifically in the hippocampus [15]. This sparse coding scheme supports the storage of individual episodic memories with minimal interference, consistent with computational principles underlying CLS and related frameworks. Quantitative analysis of normalized spike count distributions reveals increased positive skewness for target items compared to foils specifically in the hippocampus, indicating the presence of a small proportion of strongly responsive neurons that support sparse representations of individual memories [15].

Table 2: Empirical Support for Key Framework Predictions

| Framework Prediction | Experimental Paradigm | Key Findings | Neural Evidence |
|---|---|---|---|
| Sparse hippocampal coding | Single-unit recordings during recognition memory | Item-specific responses in small neuron subset | Increased distribution skewness for targets in hippocampus [15] |
| Schema-based reconstruction | Memory distortion tasks | Boundary extension, gist-based errors | Cortical generative models prioritize schema-consistent features [2] |
| Rapid hippocampal encoding | Single-trial learning tasks | Immediate memory formation | Pattern separation in hippocampal networks [15] |
| Cortical statistical learning | Associative inference tasks | Generalization to novel combinations | Neocortical representations capture feature covariances [16] |

Experimental Protocols for Investigating Memory Systems

Assessing Sparse Coding in Hippocampal Networks

To investigate sparse coding of episodic memories, researchers employ single-unit recording techniques in patients with medically intractable epilepsy undergoing intracranial monitoring. The experimental protocol involves:

Stimulus Presentation: Participants view a series of unique images (targets) during the encoding phase, followed by a recognition memory test where these targets are intermixed with novel images (foils).

Neural Recording: Extracellular action potentials are recorded from microwires implanted in the hippocampus and amygdala, with single units isolated using standardized spike sorting algorithms.

Data Analysis: Normalized spike counts are calculated for each neuron in response to each item during retrieval. The distributions of these spike counts for targets versus foils are compared using quantile-quantile plots and measures of skewness.

Statistical Testing: Bootstrap tests (e.g., B = 10,000 iterations) evaluate whether the target distribution shows significantly greater positive skewness than the foil distribution specifically in the hippocampus, indicating sparse coding [15].

This methodology has confirmed that only a small fraction of hippocampal neurons respond strongly to specific old items, with this sparse signal emerging specifically during retrieval of successfully remembered items.
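The skewness comparison and bootstrap test can be sketched as follows. This is one possible implementation under stated assumptions: skewness is the biased Fisher-Pearson estimator, the null distribution is built by resampling the pooled target and foil values with replacement, and the "normalized spike counts" are simulated with a small fraction of strongly responsive neuron-item pairs. The resampling scheme used in [15] may differ, and the function names are hypothetical.

```python
import numpy as np


def skewness(x):
    """Sample skewness (Fisher-Pearson, biased estimator)."""
    x = np.asarray(x, dtype=float)
    d = x - x.mean()
    return float(np.mean(d**3) / np.mean(d**2) ** 1.5)


def bootstrap_skew_difference(targets, foils, n_boot=10_000, seed=0):
    """One-sided bootstrap p-value for skew(targets) > skew(foils).

    Under the null hypothesis both groups come from one distribution, so the
    pooled values are resampled with replacement and split into groups of the
    original sizes (an assumed scheme).
    """
    rng = np.random.default_rng(seed)
    observed = skewness(targets) - skewness(foils)
    pooled = np.concatenate([targets, foils])
    n_t = len(targets)
    null = np.empty(n_boot)
    for b in range(n_boot):
        sample = rng.choice(pooled, size=len(pooled), replace=True)
        null[b] = skewness(sample[:n_t]) - skewness(sample[n_t:])
    p_value = (np.sum(null >= observed) + 1) / (n_boot + 1)
    return observed, p_value


# Simulated normalized spike counts: foils are Gaussian; a few target
# responses are strongly elevated, as a sparse code would predict.
rng = np.random.default_rng(42)
foils = rng.standard_normal(2000)
targets = rng.standard_normal(2000)
targets[:60] += 4.0
observed_difference, p_value = bootstrap_skew_difference(targets, foils, n_boot=2000)
```

A quantile-quantile plot of `targets` against `foils` would show the same effect visually: the two distributions agree over most quantiles and diverge only in the upper tail.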

Evaluating Generative Model Predictions

To test predictions of generative models of memory, researchers employ a combination of behavioral and neuroimaging approaches:

Stimulus Design: Create sets of visual scenes with systematic manipulation of predictable (schema-consistent) and unpredictable (schema-violating) elements.

Behavioral Testing: Participants complete surprise memory tests that assess both accurate recollection and specific types of distortions (e.g., boundary extension, gist-based intrusions).

Computational Modeling: Implement VAEs trained on similar stimuli to generate predictions about which features will be accurately recalled versus systematically distorted.

Model Comparison: Compare behavioral error patterns with predictions from different models (e.g., simple storage versus generative reconstruction).

This approach has demonstrated that memory errors are not random but systematically reflect the priors embedded in generative models, consistent with the framework that recall involves constructive processes rather than veridical retrieval [2].
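The model-comparison step can be illustrated with a deliberately simple case: one-dimensional recall (e.g., remembered position), Gaussian noise, and simulated data. A "storage" model predicts errors centered on the studied value, while a "reconstruction" model lets recall be pulled toward a schema mean by a fitted weight `w`; the two are compared with AIC. This is a toy under those assumptions, with hypothetical function names, not the analysis reported in [2].

```python
import numpy as np


def max_gaussian_loglik(residuals):
    """Log-likelihood of residuals under N(0, sigma^2) at the MLE of sigma^2."""
    n = residuals.size
    sigma2 = np.mean(residuals**2)
    return -0.5 * n * (np.log(2 * np.pi * sigma2) + 1.0)


def compare_models(true_values, recalled, schema_mean):
    """Storage model: recalled = true + noise.
    Reconstruction model: recalled = true + w * (schema_mean - true) + noise."""
    pull = schema_mean - true_values
    w = np.sum((recalled - true_values) * pull) / np.sum(pull**2)   # least-squares weight
    ll_storage = max_gaussian_loglik(recalled - true_values)
    ll_reconstruction = max_gaussian_loglik(recalled - (true_values + w * pull))
    # AIC: storage fits 1 parameter (sigma), reconstruction fits 2 (sigma, w).
    return {
        "w": float(w),
        "aic_storage": 2 * 1 - 2 * ll_storage,
        "aic_reconstruction": 2 * 2 - 2 * ll_reconstruction,
    }


# Simulated recall of studied positions, pulled 40% toward a schema mean of 0.3.
rng = np.random.default_rng(3)
true_values = rng.uniform(0.0, 1.0, size=200)
recalled = true_values + 0.4 * (0.3 - true_values) + rng.normal(0.0, 0.05, size=200)
result = compare_models(true_values, recalled, schema_mean=0.3)
```

A lower AIC for the reconstruction model, with `w` near the simulated pull, is the signature of systematic, prior-driven error rather than random noise.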

Visualization of Framework Architectures

Standard CLS Framework Architecture


Generative Memory Model Architecture


Table 3: Essential Computational Tools for Memory Research

| Research Tool | Type/Platform | Function in Research | Example Implementation |
|---|---|---|---|
| Variational Autoencoder (VAE) | Neural network architecture | Implements cortical & hippocampal generative models; learns latent representations of experiences [2] [16] | PyTorch/TensorFlow with custom encoder-decoder architectures |
| Modern Hopfield Network | Autoassociative memory | Models hippocampal pattern completion and separation; enables rapid episodic storage [2] | Continuous modern Hopfield implementation with energy-based retrieval |
| Retrieval-Augmented Generation (RAG) | Memory architecture | Provides episodic memory store with key-value pairing and similarity-based retrieval [16] | Custom implementation with cosine similarity matching |
| BoltzGen | Generative AI model | Protein binder design; demonstrates principles of generative construction in biological domains [18] | Structure prediction and generation for novel protein binders |
| Active Learning Framework | Optimization method | Guides molecular generation in drug discovery; parallels memory system exploration [19] | Nested cycles with chemoinformatic and molecular modeling oracles |

Implications for Drug Discovery and Molecular Design

The principles underlying complementary learning systems and generative memory models have informed recent advances in AI-driven drug discovery. Generative models for molecular design, such as BoltzGen, mirror the constructive processes of memory systems by generating novel protein binders for challenging biological targets [18]. These systems employ architectures that share conceptual similarities with hippocampal-cortical interactions, particularly in their ability to rapidly acquire specific instances (hippocampal analogy) while learning generalizable rules of molecular interactions (cortical analogy).

The integration of variational autoencoders with active learning frameworks in drug discovery parallels the efficient memory storage principles observed in neural systems [19]. In these implementations, VAEs learn compressed representations of molecular structures, while active learning cycles strategically guide exploration of chemical space, minimizing resource-intensive synthesis and testing. This is analogous to how hippocampal replay strategically trains cortical networks while minimizing interference. Reported results include generated molecules with experimental validation for challenging targets such as CDK2 and KRAS, including novel scaffolds distinct from previously known inhibitors [19].

The convergence between generative models of memory and generative AI in drug discovery highlights the cross-fertilization of ideas between neuroscience and computational chemistry. Principles of efficient representation, strategic exploration, and constructive generation are proving fundamental to both understanding biological intelligence and creating artificial intelligence systems with practical applications in medicine.

Episodic memories are not static records but are dynamically (re)constructed, sharing neural substrates with imagination and future thinking [2]. Memory consolidation is central to this generative framework: it transforms labile hippocampal traces into stable cortical representations that support both semantic knowledge and the vivid reconstruction of past experiences. This section examines the neurobiological mechanisms underlying this process, focusing on hippocampal replay (the spontaneous reactivation of neural activity patterns during offline states) and its role in training cortical generative models for memory construction and consolidation. Contemporary research indicates that memory content is constructed during recall rather than merely retrieved, positioning generative models as a fundamental principle of episodic memory function [20] [21].

Core Neurobiological Mechanisms

Hippocampal Replay: Patterns and Oscillatory Context

Hippocampal replay occurs during specific brain oscillations that create optimal windows for memory reactivation. During rest and sleep, replay events are tightly coupled with:

- Sharp-wave ripples (SWRs): Brief, irregularly occurring hippocampal events in which a sharp wave (a strong deflection of the local field potential driven by excitatory input from CA3) coincides with high-frequency ripple oscillations (>150 Hz) and bursts of population firing [22]. These events provide privileged windows for memory reactivation.

- Slow oscillations: Cortical rhythms (<1 Hz) where periods of activity (Up states) alternate with quiet periods (Down states) [22]. The coordination between cortical slow oscillations and hippocampal ripples facilitates the cortico-hippocampal dialogue necessary for systems consolidation.

During these replay events, place cells that were active during waking experience fire in temporally compressed sequences that recapitulate past trajectories or anticipate future paths [23] [24]. This sequential activation is thought to be driven by less specific sequential activation in CA3, which in turn drives selected sub-groups of CA1 pyramidal cells [22].

The Cortical-Hippocampal Circuit in Memory Consolidation

The standard model of systems consolidation proposes that memories are initially stored in the hippocampus during wakefulness and progressively "transferred" to cortical networks during sleep [22]. A more recent generative perspective suggests that hippocampal replay trains cortical generative models to (re)create sensory experiences from latent variable representations [2].

Key anatomical components include:

- Hippocampal CA3: Operates as a single attractor or autoassociation network enabling rapid, one-trial associations between any spatial location and an object or reward, providing completion of the whole memory during recall from any part [25].

- Dentate gyrus: Performs pattern separation through competitive learning, producing sparse representations that decorrelate the patterns passed on to CA3 and thereby keep memories distinct [25].

- Entorhinal cortex: Provides latent variable representations such as grid cells that encode the structural regularities of experiences [2] [26].

- Medial prefrontal cortex (mPFC): Compresses stimuli to minimal representations that form schemas or priors for memory reconstruction [2].

Table 1: Quantitative Characteristics of Hippocampal Replay Events

| Parameter | Typical Values | Measurement Context |
|---|---|---|
| Ripple Frequency | >150 Hz | Hippocampal LFP during SWRs [22] |
| Temporal Compression | 10-20x behavioral time | Sequence replay during rest/sleep [24] |
| Velocity Threshold | <5 cm/s | Detection of candidate replay events [23] |
| Significance Threshold | >95th percentile of shuffle distribution | Statistical threshold for replay detection [23] |
| Multi-unit Activity | Peak z-score >3 | Detection of population burst events [23] |
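The velocity and multi-unit thresholds in Table 1 can be combined into a simple candidate-event detector. This is a minimal sketch under stated assumptions: activity is binned, event boundaries are taken as runs where the z-scored multi-unit activity (MUA) stays above its mean while the animal is slower than 5 cm/s, and the simulated data are invented. Published pipelines, including [23], differ in detail, and `candidate_events` is a hypothetical name.

```python
import numpy as np


def candidate_events(mua, speed, z_peak=3.0, speed_max=5.0):
    """Return half-open (start, end) bin ranges of candidate replay events.

    A bin is eligible when z-scored MUA is above its mean and speed is below
    `speed_max` (cm/s); a run of eligible bins is kept if its peak z-score
    exceeds `z_peak`. The boundary rule is an assumption for illustration.
    """
    z = (mua - mua.mean()) / mua.std()
    eligible = (z > 0) & (speed < speed_max)
    events, start = [], None
    for i, on in enumerate(np.append(eligible, False)):   # trailing False closes a final run
        if on and start is None:
            start = i
        elif not on and start is not None:
            if z[start:i].max() > z_peak:
                events.append((start, i))
            start = None
    return events


rng = np.random.default_rng(0)
mua = rng.poisson(5, size=1000).astype(float)   # simulated multi-unit counts per bin
mua[500:510] += 40                              # a hypothetical population burst
speed = np.full(1000, 2.0)                      # animal mostly still (cm/s)
speed[100:200] = 20.0                           # a running epoch, excluded by the speed rule
events = candidate_events(mua, speed)
```

Candidate events found this way would then be decoded and scored for sequential structure before any are accepted as replay.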

The Generative Model of Memory Construction

Theoretical Framework

The generative model conceptualizes consolidated memory as a network trained to capture the statistical structure of stored events by learning to reproduce them [2]. In this framework:

- The hippocampus serves as an autoassociative "teacher" network that rapidly encodes events through one-trial learning.

- Cortical generative networks (variational autoencoders) function as the "student" that gradually learns to reconstruct memories by capturing the statistical regularities ("schemas") across experiences.

- Hippocampal replay provides the training signal that allows the transfer of information from the hippocampal teacher to the cortical student networks.

This process explains key memory phenomena including the gradual abstraction of memories (semanticization), schema-based distortions, and the ability to imagine future events based on past experiences [2].
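The teacher-student idea can be sketched in a few lines, with two simplifications stated up front: the teacher is reduced to a verbatim store of episodes (in [2] it is a modern Hopfield network), and the student is a linear autoencoder rather than a VAE. The episodes share a hypothetical low-dimensional structure that plays the role of a schema, and the student is trained only on randomly replayed episodes, never on direct experience.

```python
import numpy as np

rng = np.random.default_rng(0)

# Teacher: a verbatim hippocampal store, filled in one shot. The episodes share
# hypothetical low-dimensional structure (the "schema" the student should learn).
structure = rng.standard_normal((3, 20))
episodes = rng.standard_normal((50, 3)) @ structure

# Student: a linear autoencoder standing in for the cortical generative network.
W_enc = rng.normal(scale=0.1, size=(20, 3))
W_dec = rng.normal(scale=0.1, size=(3, 20))
learning_rate = 0.002


def student_error(x):
    """Mean squared reconstruction error of the student on episodes x."""
    return float(np.mean((x @ W_enc @ W_dec - x) ** 2))


history = [student_error(episodes)]
for step in range(2000):
    replayed = episodes[rng.integers(len(episodes), size=10)]   # random replay batch
    hidden = replayed @ W_enc
    residual = hidden @ W_dec - replayed
    # Gradients of 0.5 * squared reconstruction error, averaged over the batch.
    grad_dec = hidden.T @ residual / len(replayed)
    grad_enc = replayed.T @ (residual @ W_dec.T) / len(replayed)
    W_dec -= learning_rate * grad_dec
    W_enc -= learning_rate * grad_enc
    history.append(student_error(episodes))
```

The falling values in `history` show the student gradually taking over the reconstruction of episodes that were stored once by the teacher, a toy analogue of the shift in memory dependence that consolidation describes.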

Compositional Memory and Zero-Shot Generalization

Recent research has revealed that hippocampal representations function compositionally, binding reusable building blocks (primitives) from cortical areas to construct memories of specific experiences [26]. These building blocks include:

- Spatial representations (grid and place cells)

- Vector representations (border-vector, object-vector, and reward-vector cells)

This compositional structure enables zero-shot generalization – the ability to behave adaptively in novel environments without new learning. When encountering a new configuration of familiar elements, the hippocampus can immediately compose an appropriate state space by binding the relevant vector representations to spatial locations [26].

Diagram 1: Compositional Memory Model. Cortical building blocks are composed into hippocampal representations through binding, enabled by replay, supporting generalized behavior.
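Zero-shot reuse of a vector representation can be illustrated with a hypothetical object-vector cell whose firing field sits at a fixed displacement from an object. Because the field is defined relative to the object, moving the object into a new configuration relocates the field immediately, with no new learning. The grid arena, Gaussian field shape, preferred offset, and helper names are assumptions for illustration, not the reinforcement-learning framework of [26].

```python
import numpy as np


def object_vector_map(object_xy, preferred_offset, grid_size=10, width=1.0):
    """Firing map of a hypothetical object-vector cell on a grid_size x grid_size arena.

    The cell fires at locations displaced by `preferred_offset` from the object,
    so its field moves with the object without any new learning.
    """
    ys, xs = np.mgrid[0:grid_size, 0:grid_size]
    target = np.asarray(object_xy) + np.asarray(preferred_offset)
    d2 = (xs - target[0]) ** 2 + (ys - target[1]) ** 2
    return np.exp(-d2 / (2 * width**2))


def peak_location(rate_map):
    """(x, y) grid coordinates of the firing-field peak."""
    y, x = np.unravel_index(np.argmax(rate_map), rate_map.shape)
    return int(x), int(y)


offset = (2, 0)                                # e.g., "reward two steps east of the object"
familiar = object_vector_map((3, 3), offset)   # object in a familiar position
novel = object_vector_map((6, 1), offset)      # object moved: a new configuration
```

Binding this reusable code to spatial locations in the new configuration immediately identifies the relevant place (here, the peak of `novel`), which is the sense in which compositional representations support zero-shot generalization.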

Experimental Evidence and Methodologies

Replay Detection and Quantification

Detecting and quantifying hippocampal replay presents significant methodological challenges due to the absence of ground truth [23]. Current approaches include:

Sequence-Based Detection Methods:

- Weighted correlation: Quantifies linear correlation in time and position weighted by decoded posterior probabilities without assumptions about temporal rigidity [23].

- Linear fitting: Finds the linear path with maximum summed decoded probability, assuming constant trajectory slope [23].

- Rank-order correlation: Uses Spearman's correlation of spike times relative to place field locations [23].

Statistical Validation: Replay events are statistically validated through comparison with shuffled distributions (spatial or temporal permutations), with significant events typically exceeding the 95th percentile of shuffle-derived scores [23]. A novel framework evaluates replay detection performance using track discriminability in two-track paradigms, providing a cross-checking mechanism despite the lack of ground truth [23].
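The weighted-correlation score and its shuffle-based validation can be sketched as follows. The sketch assumes a decoded posterior with one probability distribution over position bins per time bin, and uses a time-bin shuffle, one of the permutation schemes mentioned above; [23] compares several scoring methods and shuffles, and the function names and simulated trajectory here are hypothetical.

```python
import numpy as np


def weighted_correlation(posterior):
    """Correlation between time and decoded position, weighted by the posterior.

    posterior: array (n_positions, n_time_bins); each column sums to 1.
    """
    n_pos, n_time = posterior.shape
    pos, t = np.mgrid[0:n_pos, 0:n_time]
    w = posterior / posterior.sum()
    mean_t, mean_p = np.sum(w * t), np.sum(w * pos)
    cov = np.sum(w * (t - mean_t) * (pos - mean_p))
    var_t = np.sum(w * (t - mean_t) ** 2)
    var_p = np.sum(w * (pos - mean_p) ** 2)
    return float(cov / np.sqrt(var_t * var_p))


def shuffle_test(posterior, n_shuffles=1000, seed=0):
    """Compare |weighted correlation| with a time-bin shuffle distribution."""
    rng = np.random.default_rng(seed)
    score = abs(weighted_correlation(posterior))
    null = [abs(weighted_correlation(posterior[:, rng.permutation(posterior.shape[1])]))
            for _ in range(n_shuffles)]
    return score, bool(score > np.percentile(null, 95))


# Simulated candidate event: decoded position advances two bins per time bin.
n_pos, n_time = 20, 10
positions = np.arange(n_pos)[:, None]
centers = 2 * np.arange(n_time)[None, :]
posterior = np.exp(-0.5 * ((positions - centers) / 1.5) ** 2)
posterior /= posterior.sum(axis=0, keepdims=True)
score, significant = shuffle_test(posterior)
```

Shuffling time bins preserves each bin's decoded content but destroys its order, so only events with genuine sequential structure should exceed the 95th percentile.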

Table 2: Experimental Protocols for Studying Replay and Consolidation

| Methodology | Key Features | Applications |
|---|---|---|
| Dual-Track Paradigm | Animals run on two novel linear tracks; replay detected during PRE/RUN/POST sessions [23] | Quantifying track-specific replay and discriminability |
| Ex Vivo Cortical Cultures | Organotypic slices trained with dual-optical stimulation (ChR2/ChrimsonR) for 24 h [27] | Studying prediction learning and spontaneous replay in isolated circuits |
| Teacher-Student Framework | Modern Hopfield network as teacher training cortical variational autoencoder [2] | Modeling systems consolidation as generative model training |
| Compositional State Space | RL framework with reusable building blocks (vector cells) [26] | Testing zero-shot generalization in novel environments |

Ex Vivo Evidence for Cortical Prediction and Replay

Recent ex vivo studies using cortical organotypic cultures have demonstrated that local cortical microcircuits can autonomously learn temporal patterns and spontaneously replay them, independent of hippocampal input [27].

Experimental Protocol:

- Sparse subpopulations of cortical pyramidal neurons were transduced with either Channelrhodopsin2 (ChR2) or ChrimsonR (Chrim) using Cre- and FLP-dependent expression.

- Training consisted of 24-hour presentation of temporal patterns using dual-optical stimulation (red light as CS, blue light as US) with either short (10 ms) or long (370 ms) inter-stimulus intervals.

- Whole-cell recordings assessed learned temporal predictions and spontaneous replay.

Findings: After 24 hours of training, cortical circuits exhibited:

- Timed prediction responses to conditioned stimulus alone, aligned with training interval.

- Spontaneous replay of learned temporal patterns during ongoing activity.

- Asymmetric connectivity between distinct neuronal ensembles, with temporally ordered activation, as the mechanistic basis of these effects [27].
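The proposed mechanism, asymmetric connectivity producing ordered activation, can be illustrated with a deliberately simplified toy model. The sketch below is not the model or analysis from [27]. It treats each ensemble as a single binary threshold unit, and the chain layout, weights, and threshold are hypothetical. It shows why asymmetry matters: when forward weights are stronger than backward weights, activating one ensemble recruits only its successors, so activity replays the trained order in one direction.

```python
def chain_weights(n, forward, backward):
    """Weights between n ensembles arranged in a chain; indexed weights[post][pre]."""
    weights = [[0.0] * n for _ in range(n)]
    for i in range(n - 1):
        weights[i + 1][i] = forward   # ensemble i drives ensemble i+1
        weights[i][i + 1] = backward  # ensemble i+1 drives ensemble i
    return weights


def activation_order(weights, start, threshold=0.5, max_steps=20):
    """Discrete-time threshold units; each ensemble fires at most once (refractory)."""
    n = len(weights)
    fired = [start]
    active = {start}
    for _ in range(max_steps):
        # Total input each ensemble receives from the currently active ensembles.
        drive = [sum(weights[post][pre] for pre in active) for post in range(n)]
        newly_active = sorted(post for post in range(n)
                              if drive[post] >= threshold and post not in fired)
        if not newly_active:
            break
        fired.extend(newly_active)
        active = set(newly_active)
    return fired


asymmetric = chain_weights(4, forward=0.8, backward=0.2)
symmetric = chain_weights(4, forward=0.8, backward=0.8)
print(activation_order(asymmetric, start=0))  # [0, 1, 2, 3]
print(activation_order(asymmetric, start=2))  # [2, 3]
print(activation_order(symmetric, start=2))   # [2, 1, 3, 0]
```

With symmetric weights the same cue spreads in both directions, so the temporal order of the training sequence is lost.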

Diagram 2: Ex Vivo Cortical Learning Protocol. Dual-optical stimulation trains cortical circuits to learn temporal patterns, resulting in prediction and replay capabilities.

Research Tools and Reagents

Table 3: Essential Research Reagents and Solutions

| Reagent/Technique | Function/Application | Key Features |
|---|---|---|
| Channelrhodopsin2 (ChR2) | Optogenetic activation of neural populations using blue light [27] | Fast kinetics, sensitivity to blue light (~470 nm) |
| ChrimsonR | Optogenetic activation using red light [27] | Red-shifted excitation (~590 nm), enables dual-optical approaches |
| Cre/FLP-Dependent Expression | Sparse, non-overlapping opsin expression in distinct neuronal subpopulations [27] | Enables differential stimulation of neural ensembles |
| Variational Autoencoders (VAEs) | Implementation of cortical generative models in computational modeling [2] | Learns latent variable representations for memory reconstruction |
| Modern Hopfield Networks | Autoassociative teacher network for rapid hippocampal encoding [2] | High memory capacity, one-trial learning of episodic events |
| Naïve Bayesian Decoder | Decoding spatial position from neural activity during replay events [23] | Reconstructs virtual trajectories from population activity |
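The naïve Bayesian decoder listed in Table 3 can be sketched as follows. This minimal version assumes independent Poisson spiking given position and a uniform spatial prior, which is the standard formulation of this decoder. The tuning curves, the 20 ms bin, and the function name are hypothetical, and [23] may use different bin sizes or priors.

```python
import math


def decode_position(spike_counts, tuning_curves, bin_duration):
    """Posterior over position bins for one time bin (uniform spatial prior).

    spike_counts[i]    : spikes emitted by cell i in this time bin
    tuning_curves[i][x]: mean firing rate (Hz) of cell i in position bin x
    bin_duration       : time-bin length in seconds
    """
    n_pos = len(tuning_curves[0])
    log_post = []
    for x in range(n_pos):
        log_p = 0.0
        for count, curve in zip(spike_counts, tuning_curves):
            expected = max(curve[x] * bin_duration, 1e-12)  # guard against log(0)
            # Poisson log-likelihood; the log(count!) term is constant in x and dropped.
            log_p += count * math.log(expected) - expected
        log_post.append(log_p)
    peak = max(log_post)  # subtract the maximum before exponentiating, for stability
    weights = [math.exp(v - peak) for v in log_post]
    total = sum(weights)
    return [w / total for w in weights]


tuning = [[20.0, 5.0, 1.0, 1.0],   # cell 0 prefers the start of the track
          [1.0, 1.0, 5.0, 20.0]]   # cell 1 prefers the end of the track
posterior = decode_position([4, 0], tuning, bin_duration=0.02)
```

Applying the function to successive time bins of a candidate event yields the time-by-position posterior matrix from which virtual trajectories are reconstructed and scored.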

Implications for Memory Research and Therapeutics

The generative model of hippocampal-cortical interaction provides a unified framework explaining diverse memory phenomena:

- Memory construction: Recall involves generative reconstruction rather than veridical retrieval, explaining why memories are susceptible to schema-based distortions [2].

- Systems consolidation: Gradual transfer of memory dependence from hippocampus to cortex occurs as cortical generative networks learn to reconstruct experiences with minimal error [2].

- Imagination and future thinking: The same generative mechanisms support construction of novel scenarios, explaining why hippocampal damage impairs both memory and imagination [2] [26].

- Zero-shot generalization: Compositional hippocampal representations enable adaptive behavior in novel environments without additional learning [26].

For therapeutic development, this framework suggests novel targets for memory disorders. Compounds that enhance hippocampal replay or facilitate cortical generative learning might improve memory consolidation, while understanding the precise mechanisms of compositional binding could inform treatments for conditions like Alzheimer's disease where relational memory is specifically impaired.

Computational Architectures and Clinical Applications of Generative Memory Models

Our understanding of episodic memory is undergoing a paradigmatic shift from a static recording system to a dynamic, constructive process. This new framework posits that memory recall involves an active reconstruction of past experiences rather than the mere retrieval of fixed neural traces [2]. Within this theoretical context, Variational Autoencoders (VAEs) have emerged as powerful computational models that capture the essential interactions between hippocampal and cortical systems during memory formation, consolidation, and retrieval. These deep generative models provide a mathematical framework for understanding how the brain can reconstruct sensory experiences from latent representations, mirroring the proposed neural mechanisms of episodic memory construction [2] [28].

The neurobiological foundation of this approach rests on the well-established division of labor between the hippocampus, which rapidly encodes unique experiences, and cortical regions, which gradually extract statistical regularities across experiences [2] [16]. VAEs naturally model this complementary relationship through their encoder-decoder architecture, where the encoder compresses sensory input into efficient latent representations (hippocampal-like function), and the decoder reconstructs experiences from these representations (cortical-like function) [28]. This section provides a technical guide to implementing VAEs as models of cortical-hippocampal interaction, detailing architectural specifications, training methodologies, experimental protocols, and research tools for advancing generative models of episodic memory.

Theoretical Foundation: From Biology to Computation

Neural Correlates of Memory Construction

The hippocampal formation plays a central role in both memory encoding and retrieval, with recent evidence suggesting it functions as a generative system rather than a passive storage device. Neuroimaging studies reveal that similar neural circuits are activated during episodic recall, imagination, and future thinking, indicating a common generative mechanism for constructing mental experiences [2]. This constructive process involves the cooperative interaction between hippocampal and cortical systems, with the hippocampus binding distinctive features of an experience and cortical regions providing schematic knowledge that guides reconstruction [2].

Critical to the temporal dimension of memory are hippocampal time cells, neurons that fire sequentially during temporally structured experiences and work alongside place cells to encode the spatiotemporal context of episodes [29]. These temporal codes are essential for reconstructing coherent episodic sequences rather than fragmented snapshots. Systems consolidation gradually transforms memories from hippocampus-dependent detailed traces into cortically based schematic representations, a transition that increases resilience to hippocampal damage while introducing schema-based distortions [2].

VAE as a Computational Model of Memory

Variational Autoencoders implement a computational framework that closely aligns with the brain's memory systems. In this analogy, the encoder network corresponds to the hippocampal inference process that compresses sensory input into efficient latent codes, while the decoder network mirrors cortical generative processes that reconstruct experiences from these codes [28]. The latent space of the VAE represents the compressed memory representation that captures the essential features of experiences while discarding predictable elements [2].

The VAE objective function can be read as an implementation of the memory-efficiency principle observed in biological systems, balancing accurate reconstruction against representational efficiency [2]. This balance is formalized through the evidence lower bound (ELBO), which consists of two terms: (1) a reconstruction term that rewards faithful recreation of input experiences, and (2) a Kullback-Leibler (KL) divergence term that pulls the encoder's approximate posterior over latent codes toward a prior distribution (typically a standard Gaussian) [28]. Maximizing the ELBO thus captures the brain's need to maintain fidelity to past experiences while organizing memories efficiently within existing knowledge structures.
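The two ELBO terms, together with the reparameterized sampling step used in the architecture, can be made concrete with a small sketch. It assumes a diagonal Gaussian approximate posterior parameterized by a mean and a log-variance, a standard normal prior, and squared error as the reconstruction term (equivalent to a fixed-variance Gaussian likelihood up to scale and an additive constant). These are common choices, not necessarily those of [28]. Plain Python lists stand in for tensors, so no gradients are computed here, and the encoder outputs are hypothetical values.

```python
import math
import random


def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, 1); randomness is isolated in eps."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    """Closed-form KL divergence between N(mu, diag(exp(log_var))) and N(0, I)."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var))


def negative_elbo(x, x_hat, mu, log_var, beta=1.0):
    """Loss to minimise: reconstruction term + beta * KL term.

    beta = 1 matches the standard ELBO, up to the scale of the reconstruction term.
    """
    reconstruction = sum((a - b) ** 2 for a, b in zip(x, x_hat))  # squared error
    return reconstruction + beta * kl_to_standard_normal(mu, log_var)


rng = random.Random(0)
mu, log_var = [0.3, -1.2], [-0.5, 0.1]  # hypothetical encoder outputs for one input
z = reparameterize(mu, log_var, rng)     # one latent sample passed to the decoder
```

In a deep-learning framework the same functions operate on tensors, and the reparameterization is what lets gradients flow from the loss back through `mu` and `log_var` into the encoder.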

Architectural Implementation

Core VAE Architecture for Memory Modeling

Implementing a biologically plausible cortical-hippocampal model requires a specialized VAE architecture that captures the hierarchical and multi-scale nature of memory processing. The base architecture should include:

Sensory Encoder: A 5-layer convolutional network that processes raw sensory input (images, sounds) into increasingly abstract feature representations. Each layer should implement a stride of 2 for progressive dimensionality reduction, mirroring the cortical processing hierarchy [28].

Bottleneck Layer: A dense layer that maps convolutional features to the parameters of the latent distribution (μ and σ), representing the compressed memory trace formed through hippocampal indexing [2].

Stochastic Sampling: A reparameterization operation that generates latent samples z from the inferred distribution, enabling the probabilistic nature of memory recall and construction [28].

Generative Decoder: A 5-layer transposed convolutional network that reconstructs sensory experiences from latent samples, implementing the cortical generation process that occurs during memory recall [28].
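The dimensionality reduction produced by five stride-2 layers can be checked with the standard convolution output-size formulas. The kernel size of 4 and padding of 1 are assumptions, since the text specifies only five layers with stride 2. With these values each encoder layer halves the spatial size of the 128×128 inputs described under Data Preparation, and each transposed-convolution layer in the decoder doubles it back.

```python
def conv_output_size(size, kernel=4, stride=2, padding=1):
    """Spatial size after a 2-D convolution (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def transposed_conv_output_size(size, kernel=4, stride=2, padding=1):
    """Spatial size after a 2-D transposed convolution (dilation 1, no output padding)."""
    return (size - 1) * stride - 2 * padding + kernel


def layer_sizes(size, layer_fn, n_layers=5):
    """Spatial size before the first layer and after each of n_layers layers."""
    sizes = [size]
    for _ in range(n_layers):
        sizes.append(layer_fn(sizes[-1]))
    return sizes


print(layer_sizes(128, conv_output_size))           # [128, 64, 32, 16, 8, 4]
print(layer_sizes(4, transposed_conv_output_size))  # [4, 8, 16, 32, 64, 128]
```

The dense bottleneck layer therefore receives a 4×4 feature map per channel; the number of channels is a further design choice not fixed by the text.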

The model should be trained using the Adam optimizer with a learning rate of 10⁻⁴, with training data consisting of diverse natural images to ensure robust latent representations [28].

Advanced Architectures for Specific Memory Phenomena

Dual-System Architecture (GENESIS Model)

For modeling the interaction between semantic and episodic memory systems, the GENESIS framework implements a dual-VAE architecture with separate but interacting components [16]:

Cortical-VAE: Models gradual semantic learning through a capacity-limited encoder-decoder pair that extracts statistical regularities across experiences. This system specializes in generalization and conceptual knowledge.

Hippocampal-VAE: Supports rapid episodic encoding within a retrieval-augmented generation (RAG) architecture, storing specific experiences as key-value pairs with temporal context.

Table 1: GENESIS Model Components and Functions

| Component | Architecture | Function | Biological Correlate |
|---|---|---|---|
| Cortical-VAE Encoder | CNN with capacity limitation | Extracts item-specific latent embeddings | Perirhinal/entorhinal cortex |
| Cortical-VAE Decoder | Transposed CNN | Reconstructs perceptual representations | Sensory cortex |
| Hippocampal-VAE | RAG with key-value storage | Forms episodic traces with temporal context | Hippocampal formation |
| Temporal Embedding | Positional encoding | Captures sequential order during experiences | Hippocampal time cells |
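The key-value storage and temporal embedding in Table 1 can be illustrated with a minimal episodic store. This is a hypothetical sketch, not the GENESIS implementation [16]. Keys concatenate an item embedding with a Transformer-style sinusoidal positional encoding of the time step, and retrieval is exact nearest-neighbour lookup by cosine similarity. Class, method, and episode names are illustrative.

```python
import math


def positional_encoding(step, dim=8):
    """Transformer-style sinusoidal encoding of a time step (dim must be even)."""
    encoding = []
    for i in range(0, dim, 2):
        freq = 1.0 / (10000 ** (i / dim))
        encoding.extend([math.sin(step * freq), math.cos(step * freq)])
    return encoding


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class EpisodicStore:
    """Hypothetical key-value episodic memory: key = item embedding + temporal context."""

    def __init__(self, time_dim=8):
        self.time_dim = time_dim
        self.keys = []
        self.values = []

    def _key(self, embedding, step):
        return list(embedding) + positional_encoding(step, self.time_dim)

    def write(self, embedding, step, episode):
        """One-shot storage: a single write is enough to retrieve the episode later."""
        self.keys.append(self._key(embedding, step))
        self.values.append(episode)

    def read(self, embedding, step):
        """Return the stored episode whose key best matches the query, and its score."""
        query = self._key(embedding, step)
        scores = [cosine_similarity(query, key) for key in self.keys]
        best = max(range(len(scores)), key=scores.__getitem__)
        return self.values[best], scores[best]


store = EpisodicStore()
store.write([1.0, 0.0, 0.0], step=0, episode="coffee at the station")
store.write([1.0, 0.0, 0.0], step=5, episode="coffee at the office")
store.write([0.0, 1.0, 0.0], step=5, episode="team meeting")
```

Because the temporal code is part of the key, the same item encountered at step 0 and at step 5 is stored as two separate episodes: querying with step 5 returns the office episode, and querying with step 0 returns the station episode.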

Temporal Codebook Architecture

For capturing temporal dynamics in episodic memory, the Spiking VQ-VAE with temporal codebook incorporates hippocampal time cell mechanisms through spiking neural networks [29]:

Spike Encoder: Converts static inputs into temporal spike trains using direct coding, representing the transformation of sensory inputs into neural activation patterns.

Temporal Codebook: Implements a discrete latent representation that triggers different time cell populations based on similarity measures, emulating the sequential firing of hippocampal time cells during experience.

Spike Decoder: Converts temporal patterns back into static representations for experience reconstruction, modeling the cortical integration of temporally structured information.
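Two of these ingredients, direct coding and discrete codebook lookup, can be sketched without a spiking simulator. The sketch below is a non-spiking simplification and not the model from [29]. It assigns each time step's latent vector to its nearest codebook entry by squared Euclidean distance, the usual VQ-VAE rule, and treats each code index as a stand-in for one hypothetical time-cell population. The codebook and latents are made-up values.

```python
def direct_coding(x, time_steps):
    """Direct coding: present the same analog input at every simulation time step."""
    return [list(x) for _ in range(time_steps)]


def nearest_code(z, codebook):
    """Index of the codebook vector closest to z (squared Euclidean distance)."""
    distances = [sum((a - b) ** 2 for a, b in zip(z, code)) for code in codebook]
    return min(range(len(codebook)), key=distances.__getitem__)


def quantize_sequence(latents, codebook):
    """Quantize each time step separately, yielding a sequence of code indices.

    In this sketch each index stands in for one (hypothetical) time-cell population.
    """
    indices = [nearest_code(z, codebook) for z in latents]
    return indices, [codebook[i] for i in indices]


codebook = [[1.0, 0.0], [0.0, 1.0], [0.7, 0.7]]
latents = [[0.9, 0.1], [0.6, 0.8], [0.1, 0.95]]  # hypothetical per-step encoder outputs
indices, quantized = quantize_sequence(latents, codebook)
print(indices)  # [0, 2, 1]
```

In a trained VQ-VAE the codebook vectors are learned jointly with the encoder and decoder; here they are fixed to keep the lookup visible.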

Diagram 1: Core VAE Architecture for Cortical-Hippocampal Interaction

Experimental Protocols and Methodologies

Model Training and Evaluation Framework

Establishing robust experimental protocols is essential for validating VAE models of memory. The following standardized protocol ensures reproducible evaluation of model performance:

Data Preparation:

- Utilize large, diverse natural image datasets (e.g., the ImageNet ILSVRC2012 training set of roughly 1.28 million images) [28]

- Resize all images to 128×128×3 resolution with pixel values normalized to [0,1]

- Apply data augmentation including random horizontal flipping

- Organize data into batches of 200 samples for mini-batch training
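The data-preparation steps map onto a few small functions. The sketch uses nested lists of 8-bit RGB pixels so that it runs with the standard library alone, and it omits resizing to 128×128. In a PyTorch pipeline, `torchvision.transforms.Resize`, `RandomHorizontalFlip`, and `ToTensor` (which scales 8-bit images to [0, 1]) perform the same steps. The function names here are hypothetical.

```python
import random


def to_unit_range(image):
    """Scale 8-bit pixel values (0-255) to floats in [0, 1]; image is rows of RGB pixels."""
    return [[[channel / 255.0 for channel in pixel] for pixel in row] for row in image]


def random_horizontal_flip(image, rng, p=0.5):
    """Mirror the image left-right with probability p."""
    return [row[::-1] for row in image] if rng.random() < p else image


def minibatches(samples, batch_size=200, rng=None):
    """Yield mini-batches of at most batch_size samples, optionally in shuffled order."""
    order = list(range(len(samples)))
    if rng is not None:
        rng.shuffle(order)
    for start in range(0, len(order), batch_size):
        yield [samples[i] for i in order[start:start + batch_size]]
```

Shuffling the sample order each epoch keeps consecutive mini-batches from repeating the same composition.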

Training Procedure:

- Initialize model weights using He initialization

- Optimize using Adam optimizer with learning rate of 10⁻⁴

- Train for minimum of 100 epochs with early stopping based on validation loss

- Compute the ELBO loss with the KL weight β set to 1.0 (the standard ELBO), optionally annealing β during training
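The requirement to train for at least 100 epochs while stopping early on validation loss can be expressed as a single rule checked after each epoch. The patience of 10 epochs and the `min_delta` threshold are hypothetical defaults; the text does not specify them.

```python
def should_stop(val_losses, min_epochs=100, patience=10, min_delta=0.0):
    """Early-stopping rule evaluated after each epoch.

    Stops only after min_epochs, and only if none of the last `patience` epochs
    improved on the best earlier validation loss by more than min_delta.
    """
    if len(val_losses) < min_epochs or len(val_losses) <= patience:
        return False
    best_before = min(val_losses[:-patience])
    best_recent = min(val_losses[-patience:])
    return best_recent >= best_before - min_delta
```

In a training loop, the validation loss is appended to `val_losses` after every epoch and training ends the first time `should_stop` returns `True`; the checkpoint with the lowest validation loss is usually the one kept.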

Evaluation Metrics:

- Reconstruction Accuracy: Mean squared error between original and reconstructed images

- Latent Organization: KL divergence between the approximate posterior over latent codes and the Gaussian prior

- Generalization Capability: Performance on held-out test datasets

- Memory Capacity: Number of distinct episodes that can be stored and retrieved

Specific Experimental Paradigms

Episodic Memory Reconstruction Protocol

This protocol evaluates the model's ability to encode and reconstruct specific episodes after single exposure, mimicking one-shot learning in biological systems [30]:

- Encoding Phase: Present each novel image (e.g., from the Fashion-MNIST dataset) only once

- Consolidation Phase: Allow for internal replay mechanisms to strengthen memory traces

- Retrieval Phase: Query the model with partial cues and evaluate completeness of reconstruction

- Interference Testing: Present interpolated material to assess memory robustness
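The retrieval phase with partial cues can be illustrated with a modern Hopfield network of the kind Table 3 lists as the hippocampal teacher. The sketch uses the continuous modern Hopfield update, in which the state is replaced by a softmax-weighted average of the stored patterns. It is not the implementation from [2], and the inverse temperature `beta`, the number of update steps, and the toy patterns are hypothetical. Missing cue entries are set to 0 so that they contribute nothing to the similarity scores.

```python
import math


def hopfield_retrieve(patterns, cue, beta=8.0, steps=3):
    """Continuous modern Hopfield update, xi <- X softmax(beta * X^T xi).

    patterns: stored episodes (one list per episode); cue: partial query with
    unknown entries set to 0.
    """
    state = list(cue)
    for _ in range(steps):
        scores = [beta * sum(p * s for p, s in zip(pattern, state)) for pattern in patterns]
        peak = max(scores)  # subtract the maximum for a numerically stable softmax
        weights = [math.exp(score - peak) for score in scores]
        total = sum(weights)
        attention = [w / total for w in weights]
        state = [sum(a * pattern[d] for a, pattern in zip(attention, patterns))
                 for d in range(len(state))]
    return state


stored = [[1, 1, 1, 1, -1, -1, -1, -1],
          [1, -1, 1, -1, 1, -1, 1, -1],
          [1, 1, -1, -1, 1, 1, -1, -1]]
partial_cue = [1, -1, 1, -1, 0, 0, 0, 0]  # first half of episode 1; second half missing
completed = hopfield_retrieve(stored, partial_cue)
```

Because the toy patterns are orthogonal, half of one episode is enough to single it out, and the completed state matches the full episode almost exactly. Larger `beta` makes retrieval sharper; smaller `beta` makes the output blend similar memories.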

Table 2: Episodic Memory Performance on Fashion MNIST

| Model Architecture | Units in C-System | Reconstruction Accuracy | Temporal Stability |
|---|---|---|---|
| Basic VAE | 10,000 | 85.2% | 72.1% |
| Enhanced VAE | 20,000 | 89.7% | 81.5% |
| Dual-System VAE | 40,000 | 92.3% | 88.9% |

Semantic Distortion and Generalization Protocol

This paradigm tests the model's tendency to incorporate schematic knowledge during reconstruction, leading to semantic distortions that increase with consolidation [2]:

- Schema Learning: Pre-train model on category-typical examples to establish semantic knowledge

- Atypical Encoding: Present category-atypical examples (e.g., unusual objects in typical scenes)

- Delayed Testing: Evaluate reconstruction after varying retention intervals