Interpreting the Black Box: Challenges, Methods, and Best Practices for Machine Learning in Biomedicine

Abstract

The adoption of complex machine learning (ML) and deep learning (DL) models in biomedical research and drug development is hampered by their 'black box' nature, where internal decision-making processes are opaque. This creates critical challenges in trust, validation, and clinical deployment. This article provides a comprehensive analysis for researchers and drug development professionals, exploring the fundamental causes of interpretability issues, reviewing state-of-the-art Explainable AI (XAI) methodologies, and presenting practical troubleshooting strategies. It further offers a comparative evaluation of validation frameworks to guide the selection and auditing of ML models, emphasizing the balance between predictive power and transparency for high-stakes biomedical applications.

The Black Box Problem: Understanding Opacity in Machine Learning Models

Black Box AI refers to artificial intelligence systems whose internal decision-making processes are opaque and difficult for humans to understand, even for the developers who build them [1]. In scientific research, particularly in drug development, this opacity poses significant challenges for validating results, identifying biases, and reproducing findings. These models accept inputs and generate outputs, but the reasoning behind their predictions remains hidden within complex algorithms [2].

The core issue stems from the extreme complexity of modern machine learning architectures, especially deep learning models with millions or billions of parameters that interact in non-linear ways [1]. As one analysis of developer discussions noted, "addressing questions related to XAI poses greater difficulty compared to other machine-learning questions" [3]. For researchers requiring rigorous validation of their methods, this creates a critical trust barrier.

Frequently Asked Questions (FAQs)

What exactly makes an AI model a "black box"? Black box models, particularly deep neural networks, contain numerous "hidden layers" between input and output layers. While developers understand the general data flow, the specific reasoning behind individual decisions remains mysterious because users cannot inspect how the model processes information through these hidden layers [1].

Why can't we use simpler, more interpretable models for all research applications? There's an inherent trade-off between accuracy and explainability. In many research domains, including drug discovery, black box models consistently deliver superior predictive performance for complex tasks like molecular property prediction or protein folding. As noted in the literature, "there are some things that only an advanced AI model can do" [1].

Which XAI tools are most commonly associated with interpretation challenges in research settings? According to analysis of technical discussions, SHAP, ELI5, AIF360, LIME, and DALEX are among the tools most frequently associated with interpretation challenges. SHAP leads in usage frequency (67.2% of iterations), while DALEX, LIME, and AIF360 present the most significant interpretation difficulties [3].

What types of questions do researchers typically ask about interpreting black box models? Analysis reveals that "how" questions dominate (particularly for visualization and data management), while "what" questions are more common for understanding XAI concepts and model analysis. "Why" questions appear frequently in troubleshooting contexts, reflecting researchers' need to understand underlying AI issues [3].

Troubleshooting Common Black Box Interpretation Issues

Issue 1: SHAP Implementation and Runtime Errors

Problem: Researchers encounter implementation errors when applying SHAP (SHapley Additive exPlanations) to complex neural network models for feature importance analysis.

Solution Methodology:

- Environment Verification: Confirm compatible versions of SHAP, NumPy, and your ML framework (TensorFlow/PyTorch)

- Model Wrapping: Ensure proper wrapping of custom models using SHAP's KernelExplainer for non-standard architectures

- Background Data Selection: Use representative background datasets (typically 50-100 instances) that accurately reflect your training distribution

- Gradient Handling: For deep learning models, verify gradient computation through backpropagation chains

Expected Outcome: Successful generation of feature importance values explaining individual predictions, enabling identification of key molecular descriptors or biological features driving model decisions.
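
For the environment verification step, a minimal sketch using only the Python standard library might look like the following. The package list is an assumption (adjust it to your own stack), and the script only reports what is installed; which version combinations are actually compatible still has to be checked against each library's release notes.

```python
from importlib import metadata


def report_versions(packages=("shap", "numpy", "tensorflow", "torch")):
    """Return {package name: installed version, or None if not installed}."""
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            # The package is absent from the active environment.
            versions[name] = None
    return versions


if __name__ == "__main__":
    for name, version in report_versions().items():
        print(f"{name:12s} {version or 'not installed'}")
```

Recording this output alongside each analysis run makes implementation errors easier to reproduce later.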

Issue 2: Visualization Challenges with Model Interpretations

Problem: Generated explanation outputs (partial dependence plots, feature importance charts) lack sufficient clarity for scientific publication or stakeholder communication.

Solution Methodology:

- Color Palette Optimization: Implement scientifically appropriate color schemes with sufficient perceptual distance between categories

- Context-Rich Annotations: Incorporate domain-specific reference points (e.g., known active compounds, established biological thresholds)

- Hierarchical Explanation: Structure visual explanations from global model behavior to local instance-level predictions

- Accessibility Compliance: Ensure visualizations meet scientific publication standards for color contrast and interpretability

Expected Outcome: Publication-ready visual explanations that clearly communicate how input features (molecular properties, genomic markers) influence model predictions to diverse audiences including non-technical stakeholders.

Issue 3: Model Misconfiguration and Usage Errors

Problem: Models produce unexplained performance degradation or erratic behavior when deployed with new data distributions.

Solution Methodology:

- Distribution Shift Detection: Implement statistical tests (Kolmogorov-Smirnov, population stability index) to compare training vs. deployment data distributions

- Prediction Confidence Monitoring: Track confidence scores across deployments to identify regions of high uncertainty

- Adversarial Testing: Systematically probe model decision boundaries with slightly perturbed inputs

- Explanation Consistency: Verify that similar inputs receive both similar predictions and similar explanations

Expected Outcome: Stable, reliable model deployment with documented limitations and appropriate uncertainty quantification for research validation.
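
The distribution shift checks above can be sketched in plain Python. This is an illustrative implementation under stated assumptions: equal-width bins taken from the training range for the population stability index (PSI), a small floor `eps` to avoid division by zero, and synthetic Gaussian data standing in for a real feature. In practice a tested library routine such as `scipy.stats.ks_2samp` would normally be preferred for the Kolmogorov-Smirnov test.

```python
import math
import random
from bisect import bisect_right


def ks_statistic(a, b):
    """Two-sample KS statistic: largest gap between the two empirical CDFs."""
    a, b = sorted(a), sorted(b)
    gap = 0.0
    for x in a + b:
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        gap = max(gap, abs(cdf_a - cdf_b))
    return gap


def psi(expected, actual, bins=10, eps=1e-6):
    """Population stability index with equal-width bins over the expected range."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0

    def fractions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values
        return [max(c / len(values), eps) for c in counts]

    e, a = fractions(expected), fractions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic stand-ins for one feature at training and deployment time.
rng = random.Random(0)
train = [rng.gauss(0.0, 1.0) for _ in range(2000)]
deploy_same = [rng.gauss(0.0, 1.0) for _ in range(2000)]
deploy_shifted = [rng.gauss(1.0, 1.0) for _ in range(2000)]

for label, sample in [("same", deploy_same), ("shifted", deploy_shifted)]:
    print(label, round(ks_statistic(train, sample), 3), round(psi(train, sample), 3))
```

Both statistics should be clearly larger for the shifted sample than for the unshifted one; the thresholds used to trigger an alert are a project-level decision.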

Quantitative Analysis of XAI Tools and Challenges

Table 1: Popularity and Difficulty Metrics for XAI Topics Among Researchers

| Topic Category | Percentage of Discussions | Primary Question Types | Difficulty (Unanswered Questions) |
|---|---|---|---|
| Tools Troubleshooting | 38.14% | How, Why | High |
| Feature Interpretation | 20.22% | How, What | Medium |
| Visualization | 14.31% | How (67.5%) | Medium |
| Model Analysis | 13.81% | What (23%) | High |
| Concepts & Applications | 7.11% | What (45%) | Low |
| Data Management | 6.41% | How (66.67%) | Medium |

Table 2: XAI Tool Usage Patterns and Challenge Areas

| XAI Tool | Usage Frequency | Primary Challenge Areas | Typical Resolution Time |
|---|---|---|---|
| SHAP | 67.2% | Implementation errors, visualization | Medium |
| ELI5 | 15.8% | Troubleshooting, model barriers | Low |
| AIF360 | 7.3% | Troubleshooting, fairness metrics | High |
| Yellowbrick | 5.1% | Visualization, plot customization | Low |
| LIME | 3.9% | Model barriers, instability | High |
| DALEX | 0.7% | Model barriers, compatibility | High |

Experimental Protocols for Black Box Interpretation

Protocol 1: Model Interpretation via SHAP Analysis

Purpose: To explain individual predictions from black box models by quantifying each feature's contribution.

Materials:

- Trained predictive model (any architecture)

- Preprocessed test dataset (50-500 instances)

- SHAP library (v0.4.0+)

- Visualization tools (Matplotlib, Plotly)

Methodology:

- Initialize appropriate SHAP explainer for model type:

  - TreeExplainer for tree-based models

  - DeepExplainer for neural networks

  - KernelExplainer for model-agnostic cases

- Compute SHAP values for test instances

- Generate visualization plots:

  - Force plots for individual predictions

  - Summary plots for global feature importance

  - Dependence plots for feature relationships

- Interpret results in domain context:

  - Map features to biological concepts

  - Identify critical decision thresholds

  - Document counterintuitive relationships

Validation: Compare explanations with domain knowledge and positive controls.
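
To make concrete what a SHAP value quantifies, the sketch below computes exact Shapley values by brute force for a tiny model. It does not use the SHAP library's API; it illustrates the quantity that SHAP explainers estimate. The toy model and its three features are hypothetical, and "removing" a feature by substituting a baseline value is one common assumption among several. The cost grows exponentially with the number of features, which is why practical explainers approximate.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, x, baseline):
    """Exact Shapley values for one instance.

    Features outside a coalition are replaced by their baseline value.
    """
    n = len(x)

    def value(coalition):
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(mixed)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Weight of a coalition of this size in the Shapley average.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi


def toy_model(features):
    # Hypothetical model: two additive terms plus one interaction.
    a, b, c = features
    return 2.0 * a + 3.0 * b + a * c


x = [1.0, 2.0, 4.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)
print(phi)
print(sum(phi), toy_model(x) - toy_model(baseline))
```

The contributions sum to the difference between the prediction and the baseline prediction, and the interaction term is split equally between the two features involved. This additivity is a useful sanity check when validating explanations.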

Protocol 2: Model-Agnostic Interpretation with LIME

Purpose: To create locally faithful explanations around individual predictions regardless of model architecture.

Materials:

- Black box model with prediction function

- Local interpretable model (linear, decision tree)

- LIME library (v0.2+)

- Data discretization tools

Methodology:

- Define explanation neighborhood around instance of interest

- Sample perturbed instances from training distribution

- Obtain black box predictions for perturbed samples

- Fit interpretable model to weighted predictions

- Validate local fidelity through neighborhood prediction accuracy

Interpretation: Identify primary decision drivers within local prediction context and assess explanation stability across similar instances.
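
The methodology above can be sketched with NumPy alone. This is a simplified illustration of the local-surrogate idea, not the LIME library's API: Gaussian perturbations, an exponential distance kernel, and a weighted linear fit are assumptions chosen for brevity, and `black_box` is a hypothetical stand-in for a trained model's prediction function.

```python
import numpy as np


def local_surrogate(predict, instance, scale, n_samples=500, kernel_width=1.0, seed=0):
    """Fit a weighted linear model around one instance.

    Returns (intercept, slopes, weighted R^2 as a local fidelity score).
    """
    rng = np.random.default_rng(seed)
    instance = np.asarray(instance, dtype=float)

    # 1. Sample perturbed instances in a neighborhood of the instance.
    samples = instance + rng.normal(0.0, scale, size=(n_samples, instance.size))

    # 2. Query the black box for the perturbed samples.
    targets = predict(samples)

    # 3. Weight samples by proximity (exponential kernel on scaled distance).
    distances = np.linalg.norm((samples - instance) / scale, axis=1)
    weights = np.exp(-(distances ** 2) / kernel_width ** 2)

    # 4. Weighted least squares: scale rows by the square root of the weights.
    design = np.hstack([np.ones((n_samples, 1)), samples - instance])
    root_w = np.sqrt(weights)
    coef, *_ = np.linalg.lstsq(design * root_w[:, None], targets * root_w, rcond=None)

    # 5. Local fidelity: weighted R^2 of the surrogate on the neighborhood.
    fitted = design @ coef
    mean_t = np.average(targets, weights=weights)
    ss_res = np.sum(weights * (targets - fitted) ** 2)
    ss_tot = np.sum(weights * (targets - mean_t) ** 2)
    return coef[0], coef[1:], 1.0 - ss_res / ss_tot


def black_box(X):
    # Hypothetical non-linear model over two features.
    return np.sin(X[:, 0]) + X[:, 1] ** 2


intercept, slopes, fidelity = local_surrogate(black_box, [0.0, 1.0], scale=0.1)
print(intercept, slopes, fidelity)
```

Near the point (0, 1) the slopes should be close to the local gradient of the toy function, roughly 1 and 2. Re-running with different seeds or nearby instances gives a quick view of explanation stability.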

Research Reagent Solutions for XAI Experiments

Table 3: Essential Tools for Black Box Interpretation Research

| Tool/Category | Primary Function | Research Application |
|---|---|---|
| SHAP (SHapley Additive exPlanations) | Quantifies feature contribution to predictions | Identifying critical molecular descriptors in drug discovery |
| LIME (Local Interpretable Model-agnostic Explanations) | Creates local explanations around predictions | Understanding individual compound classification decisions |
| ELI5 (Explain Like I'm 5) | Debugging and visualizing ML models | Debugging feature importance in QSAR models |
| AIF360 (AI Fairness 360) | Detecting and mitigating model bias | Ensuring fairness in patient selection algorithms |
| Yellowbrick | Visual analysis and diagnostic tools | Creating publication-ready model explanation figures |
| DALEX | Model-agnostic exploration and explanation | Comparing multiple drug response prediction models |
| Attention Mechanisms | Visualizing feature importance in neural networks | Interpreting focus areas in protein sequence analysis |
| Partial Dependence Plots | Visualizing feature marginal effects | Understanding dose-response relationships in compound screening |

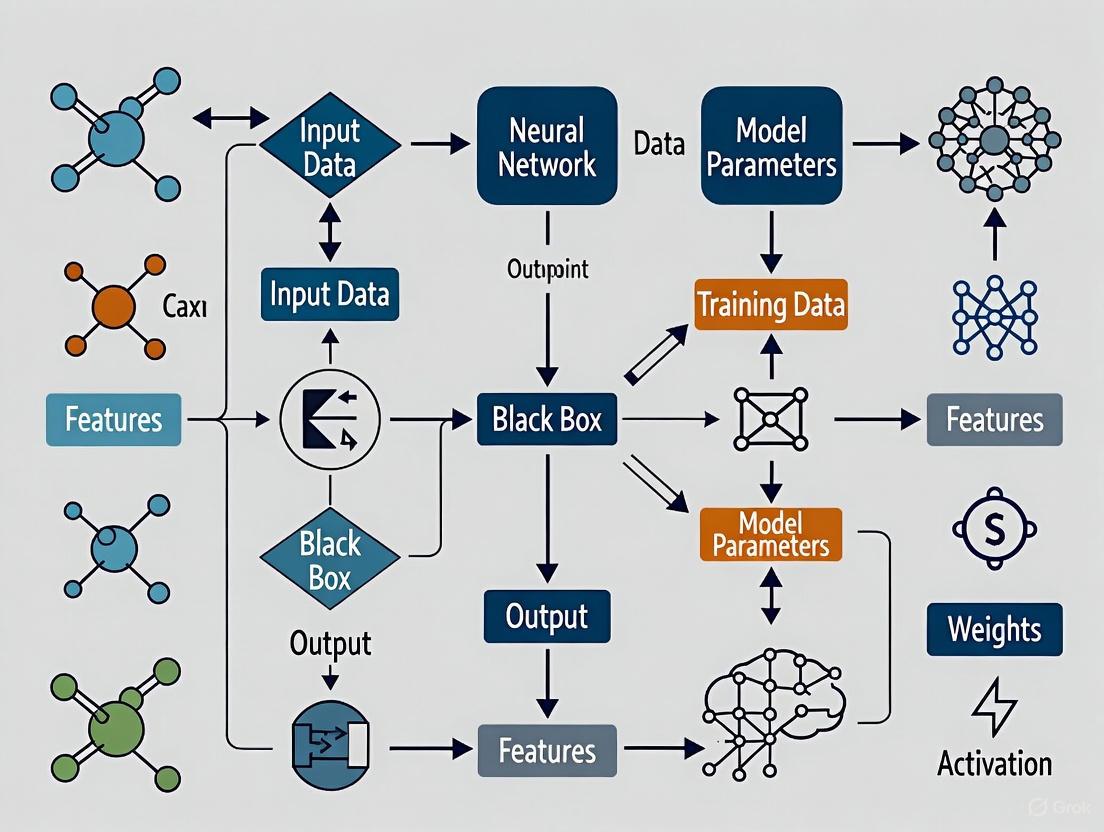

Visualizing Black Box Interpretation Workflows

Diagram 1: XAI Troubleshooting Methodology

Diagram 2: Model Interpretation Process Flow

Addressing black box AI challenges requires systematic approaches combining technical solutions with domain expertise. The methodologies presented here provide researchers with practical frameworks for interpreting complex models while maintaining scientific rigor. As the field evolves, integrating explanation capabilities directly into model development pipelines will be essential for building trust in AI-driven scientific discoveries, particularly in high-stakes domains like drug development where understanding failure modes is as crucial as celebrating successes.

FAQs: Navigating Accuracy vs. Interpretability in Drug Discovery

FAQ 1: Why is there a fundamental trade-off between model accuracy and interpretability?

The trade-off arises because the most accurate models, such as deep neural networks, are often the most complex. They build a hierarchical, internal representation of data that is incredibly effective for prediction but difficult for human experts to decipher. This is known as the "black box" problem [4]. In contrast, simpler models like linear regression or decision trees are more interpretable because their decision-making logic is transparent, but this often comes at the cost of lower predictive performance, especially on complex datasets like those in drug discovery involving molecular properties or protein interactions [4] [5].

FAQ 2: What are the specific risks of using black-box models in pharmaceutical research?

Using black-box models in drug development carries several critical risks:

- Unexplained Failures: An inability to understand why a model fails can stall research and make debugging nearly impossible [4] [5].

- Safety and Efficacy Concerns: In high-stakes fields like healthcare, a wrong prediction without a clear explanation can lead to serious consequences. Regulatory bodies are increasingly demanding transparency to evaluate a drug's safety and effectiveness [6] [7].

- Hidden Biases: Opaque models can inadvertently learn and perpetuate biases present in the training data, such as favoring certain molecular structures for non-scientific reasons. This can compromise the fairness and generalizability of your research [4] [5].

FAQ 3: My complex model is highly accurate on validation data. Why do I still need to explain its predictions?

High validation accuracy does not guarantee that a model has learned the correct underlying structure of the problem. The model might be:

- Learning Spurious Correlations: It might be relying on artifacts in the data that do not represent true cause-and-effect relationships in biology or chemistry.

- Non-Robust: Its performance may degrade significantly when faced with real-world, out-of-sample data [4].

Explainability techniques act as a crucial sanity check. They help verify that the model's decisions are based on scientifically plausible features, thereby building trust and ensuring that the model will perform reliably in practice [7] [5].

FAQ 4: What methodologies can help interpret complex models without sacrificing too much accuracy?

You can adopt several strategies to bridge the interpretability gap:

- Use Explainable AI (XAI) Techniques: Apply model-agnostic methods like SHAP and LIME to explain individual predictions from any black-box model [5].

- Employ Hybrid Models: Consider using systems that combine interpretable models with black-box components, allowing for complex data handling while still providing explanations through more transparent subcomponents [6].

- Leverage Surrogate Models: Train a simple, interpretable model to approximate the predictions of the complex black-box model. The global surrogate model can then be studied to gain insights into the overall behavior of the complex system [8].

- Prioritize Interpretable Architectures: When possible, use models like fairness-aware decision trees, which can enforce transparency and fairness constraints directly during the model-building process [9].
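
As an illustration of the surrogate-model strategy, the sketch below fits the simplest possible interpretable surrogate, a single-split decision rule, to the outputs of a black-box function and reports how faithfully it reproduces them. The black-box function and the grid of inputs are hypothetical; a real analysis would use the trained model's predictions on held-out data and usually a richer surrogate.

```python
def fit_stump_surrogate(rows, outputs):
    """Find the single-split rule that best reproduces the black-box outputs."""
    best = None
    for j in range(len(rows[0])):
        # Candidate thresholds: every observed value except the largest.
        for threshold in sorted({r[j] for r in rows})[:-1]:
            left = [y for r, y in zip(rows, outputs) if r[j] <= threshold]
            right = [y for r, y in zip(rows, outputs) if r[j] > threshold]
            left_mean = sum(left) / len(left)
            right_mean = sum(right) / len(right)
            sse = sum((y - left_mean) ** 2 for y in left)
            sse += sum((y - right_mean) ** 2 for y in right)
            if best is None or sse < best["sse"]:
                best = {"feature": j, "threshold": threshold,
                        "left": left_mean, "right": right_mean, "sse": sse}
    return best


def fidelity_r2(surrogate, outputs):
    """R^2 of the surrogate measured against the black-box outputs."""
    mean_y = sum(outputs) / len(outputs)
    sst = sum((y - mean_y) ** 2 for y in outputs)
    return 1.0 - surrogate["sse"] / sst


def black_box(row):
    # Hypothetical model dominated by a threshold on the second feature.
    return 0.9 * (row[1] > 0.5) + 0.1 * row[0]


grid = [0.0, 0.25, 0.5, 0.75, 1.0]
rows = [(a, b) for a in grid for b in grid]
outputs = [black_box(r) for r in rows]

surrogate = fit_stump_surrogate(rows, outputs)
print(surrogate["feature"], surrogate["threshold"], round(fidelity_r2(surrogate, outputs), 4))
```

The fidelity score matters as much as the rule itself: a surrogate that reproduces the black box poorly explains little about it.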

Troubleshooting Guides

Problem: Model Performance is High, But Interpretability is Low

Symptoms: Your deep learning model for predicting drug-target interactions shows a high Area Under the Curve (AUC) but provides no insight into which molecular features drove the decision.

Solution: Implement post-hoc explanation frameworks to illuminate the model's decision-making process.

Step 1: Apply Local Explanation Tools. Use LIME to analyze individual predictions. LIME creates a local, interpretable model around a single prediction by slightly perturbing the input data and observing changes in the output [5].

Step 2: Apply Global Explanation Tools. Use SHAP to get a consistent, global view of feature importance. SHAP assigns each feature an importance value for a particular prediction based on cooperative game theory [5].

Step 3: Visualize Explanations. Create force plots or summary plots provided by SHAP to communicate which features are most important overall and how they push the model's output for specific instances [10] [5].

Experimental Protocol: Using SHAP for a Compound Activity Prediction Model

- Train Your Model: Train your black-box model (e.g., a Graph Neural Network) on your compound activity dataset.

- Initialize the SHAP Explainer: Choose an appropriate explainer. For tree-based models, use TreeExplainer; for neural networks and other models, KernelExplainer is a good starting point.

- Calculate SHAP Values: Compute SHAP values for a representative sample of your test set. This quantifies the marginal contribution of each feature to the prediction for every sample.

- Generate and Analyze Visualizations:

- Create a SHAP summary plot to see global feature importance.

- Select specific compounds of interest and generate SHAP force plots to visualize the contribution of features to that single prediction.

- Validate Scientifically: Correlate the top features identified by SHAP with known biochemical principles or existing literature to ensure the model is learning valid relationships [7] [5].
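
Once per-sample SHAP values are available, global importance is commonly summarized as the mean absolute SHAP value per feature. The sketch below shows that aggregation on a small matrix; the numbers and the descriptor names are made up for illustration and are not results from any model.

```python
def rank_features(shap_matrix, feature_names):
    """Rank features by mean absolute SHAP value across samples."""
    n_rows = len(shap_matrix)
    scores = {
        name: sum(abs(row[j]) for row in shap_matrix) / n_rows
        for j, name in enumerate(feature_names)
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Illustrative values only: rows are compounds, columns are descriptors.
feature_names = ["logP", "molecular_weight", "h_bond_donors"]
shap_matrix = [
    [0.5, -0.1, 0.0],
    [-0.3, 0.2, 0.1],
    [0.4, -0.3, -0.1],
]

ranking = rank_features(shap_matrix, feature_names)
for name, score in ranking:
    print(f"{name:18s} {score:.3f}")
```

Taking absolute values before averaging matters: a feature that pushes some predictions up and others down would otherwise appear unimportant.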

Problem: Ensuring Regulatory Compliance for Model Interpretability

Symptoms: Your model is part of a regulatory submission, and you need to demonstrate its transparency and adherence to guidelines like those in the EU's AI Act [6].

Solution: Integrate explainability as a core requirement throughout the machine learning lifecycle.

Step 1: Adopt a "White-Box" by Design Mindset. Wherever possible, favor interpretable models. For example, use decision trees with fairness constraints built directly into their construction algorithm, which enforces fairness without needing post-processing [9].

Step 2: Use Decomposition Methods. Implement methods like Maximum Interpretation Decomposition (MID). This approach, available in the R package midr, functionally decomposes a black-box model into a low-order additive representation, creating a global surrogate model that is inherently more interpretable [8].

Step 3: Comprehensive Documentation. Maintain clear documentation of the model's design, data sources, all preprocessing steps, and the explanation techniques used. This creates an audit trail that is essential for regulatory review [6] [11].

Experimental Protocol: Building a Transparent Model with MID

- Develop the Black-Box Model: Train your high-accuracy, complex model on the pharmaceutical dataset.

- Perform Functional Decomposition: Use the midr package to apply Maximum Interpretation Decomposition. This step derives a low-order additive representation of your black-box model by minimizing the squared error between the original model and the surrogate.

- Analyze the Surrogate: The output of MID is an interpretable surrogate model. Study its components to understand the global behavior of your original system.

- Report and Submit: Include the results of the MID analysis, the surrogate model's performance, and its interpretations in your regulatory documentation to demonstrate a thorough understanding of the model's mechanics [8].

Quantitative Data in Drug Discovery XAI

Table 1: Global Research Output in Explainable AI for Drug Discovery (Data up to June 2024) [7]

| Country | Total Publications (TP) | Percentage of 573 Publications | Total Citations (TC) | TC/TP (Average Citations per Paper) |
|---|---|---|---|---|
| China | 212 | 37.00% | 2,949 | 13.91 |
| USA | 145 | 25.31% | 2,920 | 20.14 |
| Germany | 48 | 8.38% | 1,491 | 31.06 |
| UK | 42 | 7.33% | 680 | 16.19 |
| South Korea | 31 | 5.41% | 334 | 10.77 |
| India | 27 | 4.71% | 219 | 8.11 |
| Japan | 24 | 4.19% | 295 | 12.29 |
| Canada | 20 | 3.49% | 291 | 14.55 |
| Switzerland | 19 | 3.32% | 645 | 33.95 |
| Thailand | 19 | 3.32% | 508 | 26.74 |

Table 2: Performance Comparison of Advanced ML Paradigms in Drug Discovery [12]

| Machine Learning Paradigm | Key Application in Drug Discovery | Reported Benefit / Advantage |
|---|---|---|
| Deep Learning (CNNs, RNNs, Transformers) | Prediction of molecular properties, protein structures, ligand-target interactions. | Accelerates lead compound identification and optimization. |
| Federated Learning | Secure, multi-institutional collaboration for biomarker discovery and drug synergy prediction. | Integrates diverse datasets without compromising data privacy. |
| Transfer Learning & Few-Shot Learning | Molecular property prediction and toxicity profiling with limited data. | Leverages pre-trained models to overcome data scarcity, reducing development timelines and costs. |

Visual Workflows for XAI Protocols

XAI Technique Selection Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software Tools for Explainable AI Research

| Tool / Solution | Function / Purpose | Key Characteristic |
|---|---|---|
| SHAP (SHapley Additive exPlanations) | Explains the output of any ML model by calculating the marginal contribution of each feature to the prediction. | Model-agnostic; provides both local and global explanations. |
| LIME (Local Interpretable Model-agnostic Explanations) | Approximates a black-box model locally around a specific prediction with an interpretable model (e.g., linear regression). | Model-agnostic; designed for local, instance-level explanations. |
| midr R Package | Implements Maximum Interpretation Decomposition (MID) to create a global, low-order additive surrogate model from a black-box. | Provides a functional decomposition for building interpretable global surrogates. |
| TensorFlow Playground | Visual, interactive web application for understanding how neural networks learn. | Educational tool for building intuition about deep learning parameters. |
| Pecan AI | Low-code platform that automates data preparation and model building, incorporating visualization for insight. | Streamlines the ML workflow, making initial exploration and troubleshooting faster. |

Troubleshooting Guides

Troubleshooting Guide 1: Unexplained Performance Discrepancies in Subgroups

Problem: Your model performs well overall but shows significantly different accuracy for different demographic subgroups.

Symptoms:

- Lower precision or recall for specific racial, gender, or age groups

- Disproportionate false positive/negative rates across populations

- Model recommendations contradict clinical intuition for certain patient subgroups

Diagnostic Steps:

- Disaggregate Evaluation Metrics: Calculate performance metrics (accuracy, precision, recall, F1-score, AUROC) separately for each protected subgroup (e.g., by race, gender, age). Compare against majority group performance [13] [14].

- Analyze Training Data Representation: Audit your training dataset for representation bias. Check if minority subgroups constitute a sufficient sample size relative to the complexity of your model to avoid underestimation bias [13].

- Check for Flawed Proxies: Identify if your model relies on proxy variables correlated with protected attributes. A common example is using healthcare costs as a proxy for health needs, which can systematically underestimate illness in Black patients due to historical underutilization of care [15] [16].

- Implement Explainability Techniques: Use tools like SHAP (SHapley Additive exPlanations) or LIT (Learning Interpretability Tool) to generate local explanations for incorrect predictions on underrepresented groups. This can reveal if the model is latching onto spurious correlations or "demographic shortcuts" [17] [18].
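
The first diagnostic step, disaggregating metrics by subgroup, can be sketched in plain Python as follows. The labels, predictions, and group assignments are toy values chosen for illustration, and the group names "A" and "B" are placeholders.

```python
from collections import defaultdict


def subgroup_report(y_true, y_pred, groups):
    """Compute confusion-matrix rates separately for each subgroup."""
    counts = defaultdict(lambda: {"tp": 0, "fp": 0, "tn": 0, "fn": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        key = ("t" if truth == pred else "f") + ("p" if pred == 1 else "n")
        counts[group][key] += 1

    def ratio(num, den):
        return num / den if den else float("nan")

    report = {}
    for group, c in counts.items():
        report[group] = {
            "n": sum(c.values()),
            "precision": ratio(c["tp"], c["tp"] + c["fp"]),
            "recall": ratio(c["tp"], c["tp"] + c["fn"]),
            "false_positive_rate": ratio(c["fp"], c["fp"] + c["tn"]),
            "false_negative_rate": ratio(c["fn"], c["fn"] + c["tp"]),
        }
    return report


# Toy data: the model is perfect on group A and noticeably worse on group B.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

report = subgroup_report(y_true, y_pred, groups)
for group, metrics in report.items():
    print(group, metrics)
```

Overall accuracy here is 75%, which hides the fact that every error falls on group B. Reporting the subgroup sample size `n` alongside each rate helps flag metrics that rest on too few examples.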

Solutions:

- Apply Bias Mitigation Techniques: Use in-processing techniques like adversarial debiasing to incentivize the model to learn balanced predictions, or post-processing techniques to calibrate decision thresholds for different subgroups [14].

- Gather More Representative Data: Prioritize data collection from underrepresented subgroups to create a balanced dataset. If real data is scarce, consider ethical use of synthetic data generation (e.g., GANs) to supplement these groups [15].

- Use Interpretable Models: For high-stakes scenarios, consider replacing black-box models with inherently interpretable models (e.g., logistic regression, decision lists) to enable direct verification of model reasoning and ensure it aligns with clinical or domain knowledge [19].

Troubleshooting Guide 2: Black Box Model Rejection by End-Users

Problem: Clinicians or hiring managers distrust the model's recommendations and develop workarounds, reducing the tool's operational value [16].

Symptoms:

- End-users request overrides or bypass the system

- Low adoption rates despite high technical accuracy

- Users report that the model's output "doesn't make sense" for specific cases

Diagnostic Steps:

- Assess Explanation Fidelity: If using post-hoc explanations, verify their fidelity. Inaccurate or low-fidelity explanations severely limit trust, as they do not faithfully represent what the original model computes [19].

- Conduct User Interviews and Workflow Analysis: Identify the specific points of friction. Understand what information end-users need to build trust, which often goes beyond simple feature importance scores [17].

- Audit for Contextual Misalignment: Check if the model's decision-making process violates known domain constraints or common sense (e.g., recommending a treatment that is contraindicated for a specific subgroup) [19].

Solutions:

- Provide Faithful, Local Explanations: Implement explanation methods that are accurate for individual predictions. For a hiring model, this could mean highlighting the key skills and experiences that led to a candidate's score, ensuring the explanation matches the model's actual logic [17] [19].

- Incorporate Human-in-the-Loop (HITL) Design: Integrate controlled human oversight points. Allow experts to override model recommendations and use these overrides as feedback for continuous learning and model improvement [13] [20] [21].

- Demonstrate Consistency: Use the LIT tool to show users that the model behaves consistently when irrelevant features (like textual style or verb tense in clinical notes) are changed, building confidence in its robustness [18].

Frequently Asked Questions (FAQs)

Q1: What are the most common root causes of bias in machine learning models for healthcare and hiring?

The root causes can be categorized into three main areas [13] [15] [16]:

- Data Bias: Models are trained on historical data that reflects existing societal inequities (historic bias), or data that underrepresents certain populations (representation bias). For example, medical data historically focused on white male patients, leading to models that are less accurate for women and ethnic minorities [16].

- Algorithmic & Design Bias: The model optimization objective may not include fairness constraints, or it may use proxy variables that are correlated with protected attributes (measurement bias). A classic example is an algorithm that uses "healthcare cost" as a proxy for "health need," systematically underestimating the needs of Black patients [15] [16].

- Human & Deployment Bias: Lack of diversity in development teams can lead to blind spots. Furthermore, deployment bias occurs when a model is used in a context or population that is significantly different from its training environment [15].

Q2: Our model is a proprietary black-box system. How can we assess its fairness?

Even with a black-box model, you can perform rigorous fairness audits [20] [21]:

- Outcome Testing (Disparate Impact Analysis): This is a primary method required by new regulations. You analyze the model's input-output behavior by comparing selection rates, false positive rates, and false negative rates across different demographic groups. A significant discrepancy indicates potential bias [20] [21].

- Benchmarking with Diverse Datasets: Test the model on carefully curated benchmark datasets that are representative of the diverse populations you serve. Performance gaps on these benchmarks reveal subgroup-specific weaknesses [14].

- Use Explainable AI (XAI) Techniques: Apply model-agnostic explanation tools like SHAP or LIT. These can provide local explanations for individual predictions and aggregate these to gain global insights into which features the model is using, helping to identify reliance on problematic proxies [17] [18].

Q3: Are there any legal or compliance risks associated with using biased AI models in hiring?

Yes, the legal and compliance landscape is rapidly evolving and poses significant risks [20] [21]:

- Liability under Anti-Discrimination Laws: Tools used for hiring are considered "high-risk" under new regulations like the EU AI Act and the Colorado AI Act. Companies face liability under existing laws like Title VII of the Civil Rights Act and the Age Discrimination in Employment Act (ADEA). The ongoing Mobley v. Workday class-action lawsuit, which alleges discrimination by an AI hiring platform, is a key case to watch [20] [21].

- Mandatory Audits and Transparency: States like California, New York, and Illinois are implementing laws that require mandatory bias audits of AI hiring tools and transparency to candidates about their use [20].

- Best Practices: To mitigate risk, employers should conduct continuous bias audits, retain human oversight to override AI recommendations, and stay updated on changing regulatory standards [20] [21].

The following tables summarize quantitative findings and methodologies from key real-world case studies of algorithmic bias.

Table 1: Documented Case Studies of Algorithmic Bias in Healthcare

| Case Study / Source | AI Application Domain | Quantitative Finding / Nature of Bias | Disadvantaged Group(s) | Primary Bias Type |
|---|---|---|---|---|
| Obermeyer et al., Science [15] [16] | Healthcare Resource Allocation | Used past healthcare costs as a proxy for health needs, systematically underestimating illness severity. | Black Patients | Measurement Bias / Flawed Proxy |
| Kiyasseh et al., npj Digital Medicine [14] | Surgical Skill Assessment (SAIS) | AI showed "underskilling" (downgrading performance) and "overskilling" (upgrading performance) at different rates for different surgeon sub-cohorts. | Specific Surgeon Sub-cohorts | Evaluation Bias |
| London School of Economics (LSE) [16] | LLM for Patient Note Summarization | For identical clinical notes, used less severe language (e.g., "independent") for female patients vs. male patients ("complex," "unable"). | Women | Representation Bias |
| MIT Research [16] | Medical Imaging (Chest X-rays) | Models that best predicted patient race showed the largest "fairness gaps" (diagnostic inaccuracies). | Women, Black Patients | Demographic Shortcut / Aggregation Bias |

Table 2: Documented Case Studies of Algorithmic Bias in Hiring

| Case Study / Source | Context | Key Finding / Allegation | Disadvantaged Group(s) | Primary Bias Type |
|---|---|---|---|---|
| Mobley v. Workday [20] [21] | AI-Powered Resume Screening | Class-action lawsuit alleging systematic discrimination in screening for 100+ jobs based on race, age, and disability. | African American, Older Applicants, Applicants with Disabilities | Disparate Impact |
| University of Washington (2025) [20] | Human-AI Collaboration in Hiring | Recruiters who used biased AI tools demonstrated hiring preferences that matched the AI's skewed recommendations. | Candidates of different races and genders | Automation Bias |

Experimental Protocols for Bias Detection

Protocol 1: Disparate Impact Analysis for Hiring Algorithms

Objective: To quantitatively assess if an AI hiring tool has a significantly different selection rate for protected subgroups.

Materials: Historical applicant data (resumes, applications), protected attribute data (for testing only), model predictions (scores/classifications).

Methodology:

- Data Preparation: Anonymize applicant data. Ensure protected attributes (race, gender, age) are stored separately and only used for this audit.

- Model Inference: Run the candidate screening model on the historical dataset to obtain a prediction (e.g., "select" or "reject") for each applicant.

- Calculate Selection Rates: For each protected subgroup (e.g., Group A and Group B), calculate the selection rate (number of selected candidates / total candidates in that subgroup).

- Compute Disparate Impact Ratio: Divide the selection rate of the group under review (Group B) by the selection rate of the reference group (Group A), conventionally the group with the highest selection rate. A ratio below 0.8 (the "80% rule", also called the four-fifths rule) is commonly treated as preliminary evidence of adverse impact [20] [21].

- Statistical Testing: Perform a chi-squared test to determine if the observed differences in selection rates are statistically significant.
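The selection-rate, ratio, and chi-squared steps of Protocol 1 can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a validated audit tool: the function names and the two applicant groups are hypothetical, the test is a plain Pearson chi-squared on a 2x2 table without continuity correction, and the p-value uses the closed form for one degree of freedom.

```python
import math

def selection_rate(decisions):
    """Fraction of a subgroup that the model selected (1 = select, 0 = reject)."""
    return sum(decisions) / len(decisions)

def disparate_impact_ratio(group_b, group_a):
    """Selection rate of group B divided by that of reference group A."""
    return selection_rate(group_b) / selection_rate(group_a)

def chi_squared_2x2(group_a, group_b):
    """Pearson chi-squared test on the 2x2 table (group x selected/rejected).

    Returns (statistic, p_value). Assumes every row and column total is
    non-zero; no continuity correction is applied.
    """
    observed = [
        [sum(group_a), len(group_a) - sum(group_a)],
        [sum(group_b), len(group_b) - sum(group_b)],
    ]
    total = len(group_a) + len(group_b)
    row_totals = [sum(row) for row in observed]
    col_totals = [observed[0][j] + observed[1][j] for j in range(2)]
    stat = 0.0
    for i in range(2):
        for j in range(2):
            expected = row_totals[i] * col_totals[j] / total
            stat += (observed[i][j] - expected) ** 2 / expected
    # Survival function of a chi-squared variable with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value

# Hypothetical audit data: 100 applicants per group.
group_a = [1] * 60 + [0] * 40  # 60% selected
group_b = [1] * 40 + [0] * 60  # 40% selected

ratio = disparate_impact_ratio(group_b, group_a)
stat, p_value = chi_squared_2x2(group_a, group_b)
print(f"ratio={ratio:.3f}, chi2={stat:.2f}, p={p_value:.4f}")
```

In this made-up example the ratio falls below 0.8 and the difference is statistically significant, so both checks in the protocol would flag the tool for further review.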

Protocol 2: Diagnostic Fairness Audit for Clinical AI Models

Objective: To evaluate the diagnostic performance of a clinical AI model (e.g., for skin cancer or disease prediction) across different racial and ethnic groups.

Materials: A curated benchmark dataset with expert-verified diagnostic labels and demographic metadata. Model prediction outputs (e.g., probability of disease).

Methodology:

- Stratified Evaluation: Split the test dataset by racial/ethnic groups (e.g., White, Black, Asian, Hispanic).

- Calculate Group-Wise Metrics: For each subgroup, calculate key diagnostic performance metrics: Area Under the ROC Curve (AUROC), sensitivity (recall), specificity, and positive predictive value (precision) [14] [16].

- Identify Performance Gaps: Compare the metrics across subgroups. A clinically significant drop in AUROC or sensitivity for a particular group indicates that the model is less accurate for that population, which could lead to underdiagnosis [14] [16].

- Error Analysis: Manually review false negative cases from the disadvantaged group. Use XAI tools (e.g., salience maps for imaging models) to understand what features the model used to make an incorrect call, checking for reliance on non-clinical artifacts [18] [16].
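As a rough sketch of the stratified evaluation and group-wise metric steps in Protocol 2, the snippet below computes AUROC, sensitivity, specificity, and positive predictive value per subgroup using only the standard library. The record layout `(group, true_label, predicted_probability)`, the 0.5 decision threshold, and the toy records are assumptions made for illustration; a real audit would also need confidence intervals and adequate subgroup sample sizes.

```python
from collections import defaultdict

def auroc(labels, scores):
    """Probability that a random positive outscores a random negative (ties = 0.5)."""
    pos = [s for t, s in zip(labels, scores) if t == 1]
    neg = [s for t, s in zip(labels, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def safe_div(numerator, denominator):
    """Return None instead of raising when a metric is undefined."""
    return numerator / denominator if denominator else None

def groupwise_metrics(records, threshold=0.5):
    """records: iterable of (group, true_label, predicted_probability)."""
    by_group = defaultdict(list)
    for group, label, score in records:
        by_group[group].append((label, score))

    report = {}
    for group, rows in by_group.items():
        labels = [label for label, _ in rows]
        scores = [score for _, score in rows]
        preds = [1 if s >= threshold else 0 for s in scores]
        tp = sum(1 for t, p in zip(labels, preds) if t == 1 and p == 1)
        fn = sum(1 for t, p in zip(labels, preds) if t == 1 and p == 0)
        tn = sum(1 for t, p in zip(labels, preds) if t == 0 and p == 0)
        fp = sum(1 for t, p in zip(labels, preds) if t == 0 and p == 1)
        report[group] = {
            "auroc": auroc(labels, scores),
            "sensitivity": safe_div(tp, tp + fn),
            "specificity": safe_div(tn, tn + fp),
            "ppv": safe_div(tp, tp + fp),
        }
    return report

# Hypothetical test-set records for two subgroups.
records = [
    ("A", 1, 0.9), ("A", 1, 0.8), ("A", 0, 0.3), ("A", 0, 0.2),
    ("B", 1, 0.9), ("B", 1, 0.4), ("B", 0, 0.6), ("B", 0, 0.1),
]
report = groupwise_metrics(records)
for group, metrics in report.items():
    print(group, metrics)
```

Comparing the per-group dictionaries side by side is the "identify performance gaps" step; here the toy model is clearly weaker on group B.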

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Interpreting and Auditing Black-Box Models

| Tool / Technique | Type | Primary Function | Key Application in Bias Research |

|---|---|---|---|

| SHAP (SHapley Additive exPlanations) [17] [22] | Explainable AI (XAI) Library | Assigns each feature an importance value for a single prediction, based on cooperative game theory. | Provides local and global interpretability, identifying which features (e.g., specific words in a resume, pixels in an X-ray) most influenced a model's decision. |

| LIT (Learning Interpretability Tool) [18] | Interactive Visualization Platform | A visual, interactive tool for NLP and other models, supporting salience maps, metrics, and counterfactual generation. | Allows researchers to probe model behavior by asking "what if" questions, testing sensitivity to perturbations, and comparing model performance across data slices. |

| TWIX (Task-weighted Importance eXplanation) [14] | Bias Mitigation Add-on | An in-processing method that teaches a model to predict the importance of input segments (e.g., video frames) for its decision. | Used in surgical AI to mitigate bias by forcing the model to focus on clinically relevant features rather than spurious correlations, improving fairness. |

| Fairness Audit Framework [20] [21] | Regulatory & Methodology Toolkit | A set of procedures and metrics for measuring disparate impact and other fairness criteria, as mandated by law. | Enables compliance with emerging regulations (e.g., NYC Local Law 144, Colorado AI Act) by providing a standardized way to test for hiring bias. |

| Inherently Interpretable Models (e.g., Sparse Linear Models, Decision Lists) [19] | Modeling Approach | Models designed to be transparent and understandable by their very structure, without needing post-hoc explanations. | Provides a high-fidelity understanding of model reasoning, crucial for high-stakes domains where explanation reliability is paramount. Avoids the pitfalls of unfaithful explanations. |

Experimental and Mitigation Workflow Diagrams

Bias Detection and Mitigation Workflow

AI Hiring Risk and Mitigation Framework

Technical Support Center: Troubleshooting Black Box Models

Frequently Asked Questions (FAQs)

1. What exactly is a "Black Box" AI model? A Black Box AI model is a system where the internal decision-making process is opaque and difficult for humans to understand, even for the developers who built it [1]. Data goes in and results come out, but the inner mechanisms remain a mystery [1]. These models typically involve complex machine learning or deep learning architectures, such as multilayered neural networks with hundreds or thousands of layers, where users can see the input and output layers but cannot interpret what happens in the "hidden layers" in between [1].

2. Why is the "black box" nature of AI models a significant problem for research and drug development? The black box problem is critical in fields like drug development because it creates a lack of transparency and accountability [1]. If a model misdiagnoses a patient or suggests an ineffective drug compound, it is unclear who holds responsibility—the engineers, the company, or the AI itself [1]. Furthermore, the unexplainability of these systems can limit patient autonomy in medical decision-making and introduce potential psychological and financial burdens, as the reasoning behind a high-stakes recommendation remains opaque [23].

3. Is it true that more complex, black box models are always more accurate? No, this is a common myth [19]. There is a widespread belief that a trade-off exists, where higher accuracy necessitates lower interpretability. However, for problems with structured data and meaningful features, there is often no significant performance difference between complex classifiers (like deep neural networks) and simpler, more interpretable classifiers (like logistic regression or decision lists) [19]. The pursuit of accuracy at the cost of explainability can be misguided and potentially harmful for high-stakes decisions.

4. What are "hallucinations" in the context of large language models (LLMs), and why do they occur? "Hallucinations" refer to a phenomenon where a model, such as an LLM, generates a plausible-sounding but factually incorrect or completely nonsensical answer [1]. This can include factual errors, fabricated sources, or logical nonsense. These often occur because the deep learning systems powering these models are so complex that even their creators do not understand exactly what happens inside them, making it difficult to control or prevent erroneous outputs [1].

5. What technological approaches exist to help interpret black box models? Several technological approaches aim to enhance transparency:

- Explainable AI (XAI) and Post-hoc Explanation Methods: Techniques like SHAP (SHapley Additive exPlanations) explain a model's individual predictions by showing how much each feature contributed to the output [24]. LIME (Local Interpretable Model-agnostic Explanations) is another popular method that approximates the black box model locally with an interpretable one [17].

- Hybrid Systems: These integrate explainable models with black box components, allowing for complex data handling while still providing explanations through more transparent sub-components [25].

- Visual Explanation Tools: Methods like Gradient-weighted Class Activation Mapping (Grad-CAM) can visually highlight the regions in an image (e.g., a medical scan) that most influenced the AI's prediction, bridging the gap between neural network operations and human comprehension [25].

Troubleshooting Guides

Issue: My model's predictions are accurate but unexplainable, and stakeholders do not trust it.

- Diagnosis: This is the core black box problem, often stemming from the use of highly complex models like deep neural networks or proprietary systems where logic is protected [1] [19].

- Solution: Implement post-hoc explainability techniques.

- For a global understanding of your model's behavior, use SHAP summary plots to see which features are most important overall [24].

- To explain a single, specific prediction (local interpretability), use a SHAP force plot or LIME [24]. This will detail how each feature value pushed the prediction higher or lower for that specific case.

- Recommended Action: When presenting model results to stakeholders, couple the prediction with the explanation generated by these tools to build trust and facilitate understanding.

Issue: My model appears to be exhibiting bias, such as demographic discrimination in screening applications.

- Diagnosis: The model may have learned spurious or unfair correlations from its training data, which is hidden by its opacity [1]. A famous example is an Amazon recruiting tool that penalized resumes containing the word "women's" [1].

- Solution:

- Use model debugging with XAI [26]. Apply SHAP or similar methods to your model's outputs to identify which features are driving predictions for different demographic groups [24].

- Audit the training data for representativeness and historical biases.

- Recommended Action: If bias is detected, consider re-engineering features, collecting more balanced data, or using a different, more interpretable model form that allows for constraints like monotonicity to prevent illogical relationships [19].

Issue: I need to comply with regulatory standards (like GDPR or the EU AI Act) that require explainability.

- Diagnosis: Regulations are increasingly demanding transparency for AI systems, especially in high-stakes domains like healthcare [25] [26].

- Solution:

- Documentation: Meticulously document the data sources, model selection process, and all steps taken to ensure explainability and fairness.

- Adopt Interpretable Models: Where possible, use inherently interpretable models like linear models, decision trees, or case-based reasoning, as their reasoning is self-evident and does not require a separate explanation model [19].

- Recommended Action: Integrate XAI metrics and assessment methods directly into your model development workflow to ensure you can generate the necessary reports for auditors [27].

Quantitative Data on Explainable AI (XAI)

The following table summarizes key quantitative data and projections for the XAI market, highlighting its growing importance.

| Metric | 2024 Value | 2025 Projection | 2029 Projection | CAGR | Source / Context |

|---|---|---|---|---|---|

| XAI Market Size | $8.1 billion | $9.77 billion | $20.74 billion | 20.6% | Driven by regulatory needs and adoption in healthcare, finance, and education. [26] |

| Companies Prioritizing AI | - | 83% | - | - | A majority of companies consider AI a top business priority. [26] |

| Clinician Trust Increase | - | Up to 30% | - | - | Explaining AI models in medical imaging can boost clinician trust by up to 30%. [26] |

Experimental Protocols for Model Interpretation

Protocol 1: Implementing SHAP for Local Explanation

Objective: To understand the reasoning behind a single prediction made by a complex black box model.

Materials: A trained machine learning model (e.g., XGBoost, Neural Network), a single data instance for which an explanation is needed, the SHAP Python library.

Methodology:

- Initialize an Explainer: Select an explainer suitable for your model (e.g., TreeExplainer for tree-based models, KernelExplainer for model-agnostic use).

- Calculate SHAP Values: Pass your single data instance to the explainer to compute its SHAP values. These values represent the contribution of each feature to the model's output for that specific instance.

- Visualize the Results: Use a force_plot to visualize the output. The plot will show the base value (the average model output) and how each feature's value pushes the prediction higher or lower toward its final value.

Interpretation: The force plot provides an intuitive, visual explanation for why the model made a specific decision, attributing credit to each input feature [24].

Protocol 2: Testing for Multicollinearity in Interpretable Models

Objective: To ensure the interpretability and stability of a linear model by verifying that its predictors are not highly correlated.

Materials: A dataset with defined predictor variables (X), a statistical software package (e.g., Python with statsmodels).

Methodology:

- Calculate Variance Inflation Factor (VIF): For each predictor variable, regress it against all other predictors. The VIF is calculated as 1 / (1 - R²), where R² is from that regression.

- Interpret VIF Scores:

- VIF = 1: No correlation.

- 1 < VIF < 5: Moderate correlation.

- VIF > 5: High correlation; this violates the model assumption [24].

Interpretation: High VIF indicates that the coefficients for the correlated variables are unstable and their individual interpretations are unreliable. To fix this, remove one of the correlated variables, combine them, or use dimensionality reduction techniques like PCA [24].
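The VIF calculation above can be sketched without any third-party packages. The snippet relies on a standard identity: the j-th diagonal entry of the inverse of the predictors' correlation matrix equals 1 / (1 - R²) for predictor j, so no explicit regressions are needed. The helper names and the three toy predictor columns are hypothetical; in practice a library routine (for example in statsmodels) would normally be used instead.

```python
import math

def correlation_matrix(columns):
    """Pearson correlation matrix for a list of equal-length numeric columns."""
    n = len(columns[0])
    means = [sum(col) / n for col in columns]
    centered = [[v - m for v in col] for col, m in zip(columns, means)]
    norms = [math.sqrt(sum(v * v for v in col)) for col in centered]
    k = len(columns)
    return [
        [sum(a * b for a, b in zip(centered[i], centered[j])) / (norms[i] * norms[j])
         for j in range(k)]
        for i in range(k)
    ]

def invert(matrix):
    """Gauss-Jordan inversion with partial pivoting."""
    k = len(matrix)
    aug = [list(row) + [1.0 if i == j else 0.0 for j in range(k)]
           for i, row in enumerate(matrix)]
    for col in range(k):
        pivot = max(range(col, k), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("perfectly collinear predictors: VIF is infinite")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        pivot_value = aug[col][col]
        aug[col] = [v / pivot_value for v in aug[col]]
        for r in range(k):
            if r != col:
                factor = aug[r][col]
                aug[r] = [v - factor * w for v, w in zip(aug[r], aug[col])]
    return [row[k:] for row in aug]

def vif(columns):
    """VIF per predictor: diagonal of the inverse correlation matrix."""
    inverse = invert(correlation_matrix(columns))
    return [inverse[j][j] for j in range(len(columns))]

# Hypothetical predictors (one list per column).
x1 = [1.0, 2.0, 3.0, 4.0, 5.0]
x2 = [2.0, 1.0, 4.0, 3.0, 5.0]  # correlation with x1 is 0.8
x3 = [5.0, 3.0, 4.0, 1.0, 2.0]

vif_pair = vif([x1, x2])         # two predictors: VIF = 1 / (1 - r^2)
vif_all = vif([x1, x2, x3])
print(vif_pair, vif_all)
```

With only two predictors, R² is simply the squared correlation, which makes the pair case easy to verify by hand against the formula in the protocol.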

Visualizing the Black Box Problem and Solutions

The following diagram illustrates the core concepts of black box models and the pathways to achieving explainability.

Diagram Title: Black Box Model Interpretation Pathways

The Scientist's Toolkit: Key Research Reagents for XAI

The table below lists essential tools and concepts for researchers working on interpreting machine learning models.

| Tool / Concept | Type | Primary Function | Key Consideration |

|---|---|---|---|

| SHAP | Post-hoc Explanation Library | Explains any model's output by calculating feature contribution using game theory. | Provides both local and global interpretability; can be computationally expensive. [24] |

| LIME | Post-hoc Explanation Library | Creates a local, interpretable model to approximate the predictions of any black box classifier. | Fast for local explanations; approximation may be unstable if the local surrogate is poor. [17] |

| Grad-CAM | Visual Explanation Tool | Generates visual explanations for decisions from convolutional neural networks (CNNs). | Specific to CNN-based models; highlights important regions in an image. [25] |

| Inherently Interpretable Models | Model Class | Models like linear regression or decision trees that are transparent by design. | May be perceived as less powerful for some tasks, though the accuracy trade-off is often a myth. [19] |

| Variance Inflation Factor (VIF) | Diagnostic Metric | Quantifies the severity of multicollinearity in linear regression models. | A VIF > 5 indicates high multicollinearity, which destabilizes coefficients and harms interpretability. [24] |

XAI in Action: Techniques for Explaining Model Predictions

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between an interpretable model and an explainable black-box model?

An interpretable model is inherently transparent by design—you can understand its reasoning directly from its structure, such as by examining the coefficients in a linear regression or the rules in a decision tree [28] [24]. In contrast, explainable artificial intelligence (XAI) uses post-hoc methods to create secondary, simplified explanations for a complex model whose internal workings remain opaque [28] [17]. This separation means the explanation is an approximation and may not perfectly represent the original model's logic [19].

Q2: Is there always a significant trade-off between model accuracy and interpretability?

No, this is a common misconception. For many problems, especially those with structured data and meaningful features, simpler, interpretable models like logistic regression or shallow decision trees can achieve predictive performance comparable to more complex black boxes like deep neural networks or random forests [19]. The iterative process of working with an interpretable model often allows a data scientist to better understand and refine the data, potentially leading to superior overall accuracy [19].

Q3: When is it absolutely necessary to use an intrinsically interpretable model in drug research?

Intrinsic interpretability is crucial in high-stakes decision-making domains. In drug research, this includes scenarios such as:

- Predicting patient treatment outcomes: Understanding which patient factors lead to a specific prediction is essential for clinical applicability [17].

- Identifying candidate molecules: Researchers need to know which chemical properties or biological activities the model deems important to guide further synthesis and testing [7].

- Regulatory compliance and safety: Models must be auditable and their decisions justifiable to regulatory bodies, requiring full transparency into how predictions are made [7] [19].

Q4: What are the core scope levels of interpretability for a model by design?

The interpretability of a model can be assessed at three primary levels [28]:

- Entirely interpretable: The entire model structure can be easily comprehended by a human (e.g., a very short decision tree or a linear model with only a handful of features).

- Partially interpretable: While the full model is complex, specific components can be interpreted (e.g., examining individual coefficients in a large linear model or single rules in a long decision list).

- Interpretable predictions: The model provides a self-contained explanation for an individual prediction (e.g., the path taken in a decision tree or the nearest neighbors used in a k-NN prediction).

Q5: How can I debug my interpretable model when it behaves unexpectedly?

Debugging involves a systematic review of the model's components and assumptions:

- Inspect model components: For a linear model, check for coefficients with unexpected signs or magnitudes that contradict domain knowledge [24].

- Validate model assumptions: For linear and logistic regression, test for violations of core assumptions like linearity, independence, and homoscedasticity. A failure here can render the model's interpretations unreliable [24].

- Examine problematic predictions: Isolate data points with high prediction error and trace the model's reasoning for those specific cases using its intrinsic structure (e.g., the decision path in a tree) [28].

Troubleshooting Guides

Issue 1: Unstable or Nonsensical Coefficients in Linear Models

| Potential Cause | Diagnostic Check | Recommended Solution |

|---|---|---|

| High Multicollinearity | Calculate Variance Inflation Factor (VIF) for all features. A VIF > 10 indicates severe multicollinearity [24]. | Remove redundant features, combine correlated features into a single predictor, or use regularization (Ridge regression) [24]. |

| Violation of Linearity | Plot residuals against predicted values. A clear pattern (e.g., U-shape) indicates non-linearity [24]. | Apply transformations to the feature (e.g., log, polynomial) or introduce interaction terms to capture non-linear effects. |

| Influential Outliers | Calculate Cook's distance for each data point. | Investigate influential points for data entry errors; if legitimate, consider robust regression techniques. |

Issue 2: Decision Tree is Over-complex and Fails to Generalize

| Potential Cause | Diagnostic Check | Recommended Solution |

|---|---|---|

| Overfitting | Compare performance on training vs. validation set. A large gap indicates overfitting. | Prune the tree by setting a maximum depth or increasing the minimum samples required for a split. Use cross-validation to find the right parameters. |

| Insufficient Data | The tree has very few samples at its leaf nodes. | Collect more data or simplify the tree structure by increasing the min_samples_leaf parameter. |

| Noise in the Data | The tree has many splits that do not offer significant performance gains. | Preprocess data to handle noise, or use ensemble methods like Random Forests which are more robust, acknowledging the trade-off with pure interpretability. |

Issue 3: Model is Interpretable but has Poor Predictive Performance

| Potential Cause | Diagnostic Check | Recommended Solution |

|---|---|---|

| Oversimplified Model | The model has too few parameters to capture the underlying complexity of the data. | Consider a more flexible yet still interpretable model, such as Generalized Additive Models (GAMs) or a RuleFit model that combines linear effects and decision rules [28]. |

| Poor Feature Representation | Features are not informative for the task. | Revisit feature engineering. Create more informative features through domain expertise. |

| The "Rashomon Effect" | Multiple different models (both interpretable and black-box) show similar performance but provide different interpretations [28]. | Acknowledge model multiplicity. Report on a set of well-performing interpretable models rather than a single one to provide a more robust narrative. |

Experimental Protocols & Methodologies

Protocol 1: Validating Assumptions for Linear Models

This protocol ensures that the interpretations from linear models (coefficients, p-values) are reliable.

1. Linearity Check:

- Method: Create partial regression plots (or component-plus-residual plots) for each continuous feature.

- Expected Outcome: The relationship should appear roughly linear. A clear curve suggests a violation.

- Corrective Action: Apply a transformation to the feature (e.g., log, square root) or the target variable.

2. Independence of Errors:

- Method: Use the Durbin-Watson test if data is time-ordered. A value near 2 suggests independence.

- Expected Outcome: Errors are not correlated with each other.

- Corrective Action: If data is sequential, consider using time series models.

3. Homoscedasticity Check:

- Method: Plot residuals versus fitted (predicted) values.

- Expected Outcome: The spread of residuals should be constant across all fitted values (no "fanning" pattern).

- Corrective Action: Transform the dependent variable or use weighted least squares regression.

4. Normality of Errors:

- Method: Plot a Q-Q (Quantile-Quantile) plot of the residuals.

- Expected Outcome: Points should closely follow the diagonal line.

- Corrective Action: For large sample sizes, this assumption is less critical due to the Central Limit Theorem. Transformations of the target variable can help.

5. Multicollinearity Check:

- Method: Calculate the Variance Inflation Factor (VIF) for each predictor.

- Expected Outcome: VIF < 5 is typically acceptable; VIF < 10 is often tolerated.

- Corrective Action: Remove variables with high VIF or use PCA to create uncorrelated components.
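For the independence-of-errors check in step 2 of this protocol, the Durbin-Watson statistic is simple enough to compute directly from the residuals. The sketch below uses the textbook definition (sum of squared successive differences divided by the sum of squared residuals); the two residual series are invented to show the extremes and are not from any real model.

```python
def durbin_watson(residuals):
    """Durbin-Watson statistic for residuals in time order.

    Values near 2 suggest no first-order autocorrelation; values toward 0
    suggest positive autocorrelation and values toward 4 negative.
    """
    numerator = sum(
        (residuals[t] - residuals[t - 1]) ** 2 for t in range(1, len(residuals))
    )
    denominator = sum(e * e for e in residuals)
    return numerator / denominator

# Hypothetical residual series.
positively_correlated = [1.0, 1.0, 1.0, -1.0, -1.0, -1.0]  # long runs of one sign
negatively_correlated = [1.0, -1.0, 1.0, -1.0, 1.0, -1.0]  # sign flips every step

dw_pos = durbin_watson(positively_correlated)
dw_neg = durbin_watson(negatively_correlated)
print(f"positive autocorrelation: {dw_pos:.2f}, negative: {dw_neg:.2f}")
```

The statistic is only meaningful when the rows have a natural ordering (such as time), which is why the protocol restricts it to time-ordered data.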

Protocol 2: Building and Pruning an Interpretable Decision Tree

This protocol outlines steps to create a decision tree that is both accurate and comprehensible.

1. Pre-Pruning (Setting Constraints):

- Method: Before training, set hyperparameters that limit tree growth.

- Key Parameters:

- max_depth: The maximum depth of the tree (e.g., 3-5 for high interpretability).

- min_samples_split: The minimum number of samples required to split an internal node.

- min_samples_leaf: The minimum number of samples required to be at a leaf node.

- max_leaf_nodes: The maximum number of leaf nodes in the tree.

2. Training and Validation:

- Method: Train the tree on a training set and evaluate its performance on a separate validation set.

3. Post-Pruning (Cost-Complexity Pruning):

- Method: Use cost-complexity pruning to remove branches that provide the least gain in performance. Scikit-learn's DecisionTreeClassifier provides the ccp_alpha parameter for this. A cross-validation loop is used to find the optimal ccp_alpha that maximizes validation accuracy.

4. Final Evaluation:

- Method: Evaluate the final pruned tree on a held-out test set.

Decision Tree Pruning Workflow

Table 1: Global Research Output in Explainable AI for Drug Discovery (Data up to June 2024) [7]

| Country | Total Publications | Percentage of 573 Publications | Total Citations | Citations per Publication (TC/TP) |

|---|---|---|---|---|

| China | 212 | 37.00% | 2949 | 13.91 |

| USA | 145 | 25.31% | 2920 | 20.14 |

| Germany | 48 | 8.38% | 1491 | 31.06 |

| United Kingdom | 42 | 7.33% | 680 | 16.19 |

| Switzerland | 19 | 3.32% | 645 | 33.95 |

| Thailand | 19 | 3.32% | 508 | 26.74 |

The Scientist's Toolkit: Essential Reagents for Interpretable ML Research

Table 2: Key "Research Reagents" for Intrinsically Interpretable Modeling

| Item | Function & Explanation |

|---|---|

| Sparse Linear Models | Models that use regularization (Lasso/L1) to drive feature coefficients to zero, creating a simple, short list of the most important predictors. This enhances interpretability by focusing on a minimal feature set [19]. |

| Decision Rules & Lists | A set of IF-THEN statements that make the model's logic explicit and auditable. The RuleFit algorithm, for example, generates a collection of rules from decision trees and combines them with a sparse linear model for a powerful yet interpretable approach [28]. |

| Generalized Additive Models (GAMs) | Models of the form g(y) = f1(x1) + f2(x2) + .... They maintain interpretability because the effect of each feature can be visualized independently, showing its non-linear relationship with the target, which is highly valuable for understanding biological effects [28]. |

| Model-based Boosting | A framework for building interpretable additive models by sequentially adding "weak learners" like linear effects, splines, or small trees. This allows the researcher to control the model's complexity and maintain a transparent structure [28]. |

| SHAP (SHapley Additive exPlanations) | While a post-hoc method, SHAP is invaluable for validating intrinsically interpretable models. It can be used to check if the feature importance from a black-box model aligns with the coefficients of your interpretable model, providing a sanity check [24] [17]. |

Model Selection Logic Flow

Troubleshooting Guides

Guide 1: Resolving SHAP Computation Performance Issues

Problem: Calculating SHAP values is too slow for large datasets or complex models, hindering research progress.

Explanation: SHAP (SHapley Additive exPlanations) calculates the marginal contribution of each feature to the prediction across all possible feature combinations, which is computationally intensive [29] [30]. The computation time grows exponentially with the number of features if implemented naively.

Solution: Leverage model-specific optimizations and hardware acceleration.

- Use TreeSHAP for tree-based models: If using XGBoost, LightGBM, or Random Forest, employ TreeSHAP which reduces complexity from exponential to polynomial time [29].

- Enable GPU acceleration: Libraries like RAPIDS and XGBoost provide GPU support. NVIDIA demonstrated a reduction from 1.4 minutes to 1.56 seconds for SHAP calculation on a dataset with 30K+ samples [29].

- Sample strategically: For global explanations, calculate SHAP values on a representative sample rather than the entire dataset.

- Utilize approximate methods: For very high-dimensional data, consider KernelSHAP with a reduced number of feature perturbations.

Verification: After implementing GPU acceleration, computation time should decrease significantly. Validate that the SHAP values between CPU and GPU implementations remain consistent for a sample of your data.
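To make the cost described in this guide concrete, the following sketch computes exact Shapley values by brute force, enumerating every coalition of features. It is not how the SHAP library works internally: as a simplifying assumption, features outside a coalition are replaced by a single fixed baseline value, whereas SHAP's explainers typically average over background data. The toy model, instance, and baseline are all hypothetical.

```python
from itertools import combinations
from math import factorial

def exact_shapley(predict, instance, baseline):
    """Exact Shapley values via full coalition enumeration.

    Features not in a coalition take their baseline value. The number of
    model evaluations grows as 2**n, which is the bottleneck that TreeSHAP
    and sampling-based approximations are designed to avoid.
    """
    n = len(instance)

    def value(coalition):
        x = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(x)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Shapley weight for coalitions of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                members = set(subset)
                phi[i] += weight * (value(members | {i}) - value(members))
    return phi

def toy_model(x):
    """Hypothetical model: one additive term plus one interaction."""
    return 2.0 * x[0] + x[1] * x[2]

instance = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]

phi = exact_shapley(toy_model, instance, baseline)
base_value = toy_model(baseline)
print(phi, base_value + sum(phi), toy_model(instance))
```

The interaction term is split equally between the two features involved, and the attributions plus the base value add up to the model's prediction, which is the local accuracy property that makes force plots readable.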

Guide 2: Addressing Unreliable LIME Explanations

Problem: LIME (Local Interpretable Model-Agnostic Explanations) provides inconsistent explanations for similar instances.

Explanation: LIME works by perturbing input data and fitting a local surrogate model. The explanations can be sensitive to the kernel width and sampling strategy [31] [32]. The default kernel width of 0.75 × √(number of features) may not be optimal for all datasets.

Solution: Systematically optimize LIME parameters and validate explanations.

- Tune kernel width: Experiment with different kernel widths to find one that produces stable explanations. Start with values between 0.5-1.0 × √(number of features).

- Increase sample size: Increase the number of perturbed samples (num_samples parameter) to improve the stability of the local model.

- Validate across similar instances: Test LIME on multiple similar instances to ensure consistency.

- Cross-validate with domain knowledge: Check if explanations align with domain expertise for known cases.

Verification: Run LIME multiple times on the same instance with different random seeds. The top 3-5 features should remain consistent. If not, increase num_samples or adjust the kernel width.
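The verification step can be turned into a number by measuring how much the top-k feature sets overlap across repeated runs. The sketch below assumes each run has already been reduced to a list of `(feature_name, weight)` pairs (the shape returned by LIME's `as_list()`); the helper names, the Jaccard-based score, and the three example runs are illustrative assumptions rather than part of LIME itself.

```python
import math

def default_kernel_width(num_features):
    """Default width quoted above: 0.75 * sqrt(number of features)."""
    return 0.75 * math.sqrt(num_features)

def top_k_features(explanation, k):
    """Names of the k features with the largest absolute weight."""
    ranked = sorted(explanation, key=lambda item: abs(item[1]), reverse=True)
    return {name for name, _ in ranked[:k]}

def top_k_stability(runs, k=3):
    """Mean pairwise Jaccard overlap of top-k feature sets (1.0 = identical)."""
    sets = [top_k_features(run, k) for run in runs]
    pairs = [(a, b) for i, a in enumerate(sets) for b in sets[i + 1:]]
    return sum(len(a & b) / len(a | b) for a, b in pairs) / len(pairs)

# Hypothetical explanations of one instance under three random seeds.
runs = [
    [("logP", 0.40), ("mol_weight", -0.30), ("h_donors", 0.10), ("rings", 0.05)],
    [("logP", 0.38), ("mol_weight", -0.28), ("rings", 0.12), ("h_donors", 0.04)],
    [("logP", 0.41), ("mol_weight", -0.31), ("h_donors", 0.09), ("rings", 0.02)],
]

stability_top3 = top_k_stability(runs, k=3)
stability_top2 = top_k_stability(runs, k=2)
print(f"kernel width for 16 features: {default_kernel_width(16)}")
print(f"top-3 stability: {stability_top3:.2f}, top-2 stability: {stability_top2:.2f}")
```

A score well below 1.0 for the k you care about is a signal to raise num_samples or revisit the kernel width before trusting the explanation.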

Guide 3: Handling Non-Intuitive SHAP Force Plots

Problem: SHAP force plots are visually overwhelming with many features, making interpretation difficult.

Explanation: Force plots display how each feature contributes to pushing the model output from the base value to the final prediction. With many features, the plot becomes cluttered and hard to interpret [29] [33].

Solution: Use alternative visualization strategies for high-dimensional data.

- Use decision plots instead: Decision plots show the same information as force plots but in a more readable format for many features [33].

- Filter features: Display only the top N most important features.

- Use hierarchical clustering: Group features with similar effects using SHAP's hierarchical clustering feature ordering.

- Aggregate features: For correlated features, consider creating feature groups.

Verification: Compare the insights from force plots with decision plots for the same instances. The key drivers of the prediction should be identically highlighted in both visualizations.

Guide 4: Mitigating Vulnerabilities to Adversarial Attacks

Problem: Both SHAP and LIME explanations can be manipulated, potentially hiding model biases [34].

Explanation: Post-hoc explanation methods that rely on input perturbations can be "gamed" by adversarial scaffolding techniques. An attacker can craft a model that produces the desired explanations while maintaining biased predictions [34].

Solution: Implement safeguards to detect potential manipulation.

- Audit with multiple methods: Cross-validate explanations using both SHAP and LIME.

- Test on known biased cases: Validate explanations on instances where potential biases are understood.

- Check global consistency: Ensure local explanations align with global model behavior.

- Use inherently interpretable models: For critical applications, consider using interpretable models as benchmarks [19].

Verification: Create synthetic test cases with known biases and verify that explanation methods correctly identify them. For example, in a hiring model, ensure that gender or race features are appropriately flagged if influential.

Frequently Asked Questions

Q1: When should I choose SHAP over LIME, and vice versa?

A1: The choice depends on your specific needs for accuracy, speed, and use case:

Table: SHAP vs. LIME Comparison

| Aspect | SHAP | LIME |

|---|---|---|

| Theoretical Foundation | Game-theoretic Shapley values [30] | Local surrogate models [31] |

| Explanation Scope | Both local and global explanations [29] | Primarily local explanations [32] |

| Computation Speed | Slower, especially for exact calculations [35] | Faster, more lightweight [35] |

| Theoretical Guarantees | Has consistency and local accuracy guarantees [30] | No strong theoretical guarantees [31] |

| Ideal Use Case | When you need mathematically consistent feature attribution | When you need quick local explanations for individual predictions |

Choose SHAP when you need mathematically rigorous explanations with consistency guarantees for both local and global interpretation. Prefer LIME when you need fast explanations for individual predictions and can accept weaker theoretical guarantees [35].

Q2: How can I validate that my SHAP or LIME explanations are correct?

A2: Use multiple validation strategies:

- Domain expertise consultation: Present explanations to domain experts to verify they make sense in context [19].

- Cross-method validation: Compare SHAP and LIME explanations for the same instances – while they may not be identical, the top features should generally align.

- Sensitivity analysis: Slightly perturb input features and observe if explanations change reasonably.

- Baseline testing: Verify explanations on cases with known outcomes.

Q3: What are the limitations of post-hoc explanation methods?

A3: Key limitations include:

- Faithfulness concerns: Explanations are approximations and may not fully capture the model's reasoning [19].

- Local scope: LIME provides only local explanations, which may not represent global model behavior [32].

- Computational demands: SHAP can be computationally expensive for large models or datasets [29].

- Vulnerability to manipulation: Both methods can be gamed by adversarial attacks [34].

- Misinterpretation risk: Users may overinterpret explanations as causal relationships when they are merely correlational.

Q4: Is there a significant accuracy trade-off when using interpretable models instead of black boxes?

A4: Not necessarily. Recent research suggests that for structured data with meaningful features, there is often no significant difference in performance between complex black box models and simpler interpretable models [19]. In many such cases, interpretable models achieve comparable performance while providing transparent reasoning, so the accuracy-interpretability trade-off should be checked on your own data rather than assumed [19].

Methodologies & Experimental Protocols

SHAP Analysis Protocol for Drug Development Data

Purpose: To identify key molecular features influencing compound efficacy predictions in drug discovery pipelines.

Materials:

- Compound dataset with structural features and activity measurements

- Trained predictive model (XGBoost, Random Forest, or Neural Network)

- SHAP library (Python)

Procedure:

- Model Training: Train model using standard protocols with train/validation split.

- SHAP Explainer Selection:

- For tree-based models: Use TreeExplainer for exact Shapley values [29]

- For other models: Use KernelExplainer or DeepExplainer for neural networks

- SHAP Value Calculation: Compute SHAP values for validation set (minimum 1000 instances for stable results)

- Global Interpretation: Create summary plots to identify most important features across dataset

- Local Interpretation: Use force plots or decision plots for specific compound predictions [33]

- Dependence Analysis: Plot SHAP dependence plots for top features to understand directional effects

Validation: Compare identified important features with known structure-activity relationships from medicinal chemistry literature.

LIME Explanation Protocol for Clinical Prediction Models

Purpose: To explain individual patient risk predictions for clinical decision support.

Materials:

- Trained clinical prediction model

- Patient dataset with clinical features

- LIME library (Python)

Procedure:

- Instance Selection: Identify specific patient cases requiring explanation

- LIME Explainer Configuration:

- Explanation Generation: For each patient of interest:

- Generate 5000 perturbed samples around the instance

- Fit local surrogate model (linear regression with Lasso regularization)

- Extract top 10 features driving the prediction

- Explanation Validation: Present explanations to clinical experts for face validity assessment

- Stability Testing: Run explanation multiple times with different random seeds to ensure consistency

Validation: Check that explanations align with clinical knowledge and that similar patients receive similar explanations.
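The perturb, weight, fit, and re-seed steps of this protocol can be sketched without any library, which makes the mechanics easier to inspect. The sketch below is not the LIME package API. The `model` function is a hypothetical stand-in for a clinical risk model, the sample count, perturbation scale, and kernel width are arbitrary choices, and plain weighted least squares is swapped in for the Lasso-regularized fit named in the protocol.

```python
import math
import random

def model(x):
    """Hypothetical stand-in for a clinical risk model (ignores feature 2)."""
    return 1.0 / (1.0 + math.exp(-(1.5 * x[0] - 1.0 * x[1])))

def solve(a, b):
    """Solve a small linear system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def local_surrogate(instance, n_samples=2000, scale=0.5, kernel_width=1.0, seed=0):
    """Weighted least-squares fit around `instance`; returns one coefficient per feature."""
    rng = random.Random(seed)
    d = len(instance)
    xtx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xty = [0.0] * (d + 1)
    for _ in range(n_samples):
        delta = [rng.gauss(0.0, scale) for _ in range(d)]
        z = [instance[j] + delta[j] for j in range(d)]
        dist2 = sum(v * v for v in delta)
        w = math.exp(-dist2 / kernel_width ** 2)  # closer samples count more
        row = [1.0] + delta                        # intercept plus centered features
        y = model(z)
        for i in range(d + 1):
            xty[i] += w * row[i] * y
            for j in range(d + 1):
                xtx[i][j] += w * row[i] * row[j]
    return solve(xtx, xty)[1:]                     # drop the intercept

patient = [0.2, -0.1, 0.7]
runs = [local_surrogate(patient, seed=s) for s in range(5)]
mean_coef = [sum(r[j] for r in runs) / len(runs) for j in range(3)]
spread = [max(r[j] for r in runs) - min(r[j] for r in runs) for j in range(3)]
ranking = sorted(range(3), key=lambda j: -abs(mean_coef[j]))
print("mean coefficients:", mean_coef)
print("spread across seeds:", spread)
print("feature ranking:", ranking)
```

A large `spread` relative to the coefficient magnitudes would signal that the explanation depends on the random seed, in which case more samples or a different kernel width may be worth trying.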

Workflow Diagrams

Figure: SHAP Analysis Workflow

Figure: LIME Explanation Process

Research Reagent Solutions

Table: Essential Tools for Post-hoc Explanation Research

| Tool/Resource | Function | Application Context |

|---|---|---|

| SHAP Library (Python) | Computes Shapley values for any model; provides multiple explainers and visualizations [29] [30] | Model interpretation for both tree-based and neural network models |

| LIME Package (Python) | Generates local explanations by perturbing inputs and fitting local surrogate models [31] [32] | Explaining individual predictions for any black box model |

| XGBoost with GPU | Gradient boosting implementation with GPU acceleration for faster training and SHAP computation [29] | High-performance model training and explanation for large datasets |

| SHAP Decision Plots | Visualization technique for displaying how models arrive at predictions, especially effective with many features [33] | Interpreting complex decisions with multiple feature contributions |

| Model Auditing Framework | Systematic approach to validate explanation faithfulness and detect biases [19] [34] | Ensuring reliability of explanations in high-stakes applications |

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between a local and a global explanation? A local explanation zeros in on a single data point or prediction to answer, “How and why did the model arrive at this specific result?” [36]. In contrast, a global explanation looks at the model’s entire decision-making process across a dataset to answer, “How does this model behave overall?” [36] [37]. For example, telling a loan applicant why their application was denied is a local explanation, while describing which features the loan approval model relies on most across all applicants is a global explanation [36].

FAQ 2: When should I use a local explanation method versus a global one? The choice depends on your goal [36] [38]:

- Use local explanations to:

- Use global explanations to:

FAQ 3: Can I use the most important features from a global explanation to justify an individual (local) prediction? Not reliably. A feature that is important globally might not be the main driver for a specific local prediction, and vice versa [36]. For instance, a global analysis might find that credit score is the most important feature for a loan default model. However, a local explanation for a specific applicant might reveal that their high debt-to-income ratio was the primary reason for denial, even though their credit score was moderate [36]. Always use local explanation methods to justify individual outcomes.

FAQ 4: The SHAP method provides both local and global insights. How is that possible? SHAP (SHapley Additive exPlanations) assigns each feature an importance value (a Shapley value) for a given prediction [36] [40]. These values can be interpreted on a per-prediction basis, providing a local explanation for a single instance [37]. By aggregating the absolute Shapley values across many predictions in a dataset, you can generate a global perspective on the model's overall behavior and the average impact of each feature [39].
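To make the local-to-global link concrete, the sketch below computes exact Shapley values by enumerating every feature coalition for a three-feature toy model, then averages their absolute values across instances. It does not use the SHAP library. The `model`, the background rows, and the instances are hypothetical, and brute-force enumeration is only practical for a handful of features.

```python
from itertools import combinations
from math import factorial

def model(x):
    """Hypothetical toy model with an interaction term; stands in for any black box."""
    return 2.0 * x[0] + x[1] * x[2]

def coalition_value(x, subset, background):
    """Mean prediction with features in `subset` fixed to x and the rest taken from background rows."""
    total = 0.0
    for b in background:
        z = [x[j] if j in subset else b[j] for j in range(len(x))]
        total += model(z)
    return total / len(background)

def shapley_values(x, background):
    """Exact Shapley values via the weighted sum over all coalitions."""
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                gain = (coalition_value(x, s | {i}, background)
                        - coalition_value(x, s, background))
                phi[i] += weight * gain
    return phi

background = [[0.0, 0.0, 0.0], [1.0, 1.0, 0.0], [0.0, 1.0, 1.0], [1.0, 0.0, 1.0]]
instances = [[1.0, 1.0, 1.0], [0.0, 1.0, 0.0], [1.0, 0.0, 1.0]]

# Local view: one attribution vector per instance.
local = [shapley_values(x, background) for x in instances]
# Global view: mean absolute attribution per feature across instances.
global_importance = [sum(abs(phi[j]) for phi in local) / len(local) for j in range(3)]
baseline = sum(model(b) for b in background) / len(background)

for x, phi in zip(instances, local):
    print(x, "->", phi, "sum:", sum(phi), "f(x) - baseline:", model(x) - baseline)
print("global importance:", global_importance)
```

For each instance the attributions sum to the prediction minus the average background prediction, which is the local accuracy property. The global ranking is simply a summary of these per-instance values.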

FAQ 5: Are there methods that combine local and global explanations? Yes, this is an active area of research. Methods like GLocalX take a "local-first" approach. They start by generating local explanations (e.g., in the form of decision rules for individual predictions) and then hierarchically aggregate them to build a global, interpretable model that approximates the complex black-box model [41] [42]. This bridges the gap between precise local justifications and comprehensive global understanding.

Troubleshooting Guides

Problem 1: My Global Explanation is Misleading or Oversimplified

Symptoms: The global feature importance plot shows a clear ranking, but you suspect the model uses features differently for different subgroups of data. The explanation seems to "average out" important but rare patterns.

Diagnosis: This is a common limitation of global methods like Partial Dependence Plots (PDPs), which show average effects and can hide heterogeneous relationships [39]. If features are correlated, methods like Permutation Feature Importance can also produce unreliable results [43].

Solution: Use complementary methods to uncover heterogeneity.

- Use Individual Conditional Expectation (ICE) Plots: While PDPs show the average effect, ICE plots show how the model's prediction for each individual instance changes as a feature varies [39]. This can reveal subgroups where the feature has a different, or even opposite, effect.

- Aggregate Local Explanations: Use a method like SHAP or GLocalX to generate explanations for many individual predictions. Then, examine the distribution of feature effects across your dataset. This can reveal if a feature is consistently important or only critical for a specific cluster of instances [40].

- Stratify Your Analysis: Split your dataset into meaningful subgroups (e.g., by disease type, patient demographic) and generate global explanations for each subgroup to see if the model's logic changes.
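The way a PDP can hide opposite subgroup effects is easy to reproduce on a toy case. In the sketch below, the `model`, the two-subgroup data, and the grid are hypothetical and chosen so that the effect of the first feature reverses between subgroups. The PDP is computed as the pointwise average of the ICE curves.

```python
def model(x):
    """Hypothetical model: dose raises the prediction in subgroup 1 and lowers it in subgroup 0."""
    dose, subgroup = x
    return dose if subgroup == 1 else -dose

data = [[0.2, 0], [0.5, 0], [0.8, 0], [0.2, 1], [0.5, 1], [0.8, 1]]
grid = [0.0, 0.5, 1.0]

def ice_curves(data, feature, grid):
    """One curve per instance: predictions as `feature` sweeps the grid, other features fixed."""
    curves = []
    for row in data:
        curve = []
        for v in grid:
            z = list(row)
            z[feature] = v
            curve.append(model(z))
        curves.append(curve)
    return curves

def pdp(curves):
    """Partial dependence is the pointwise average of the ICE curves."""
    return [sum(c[k] for c in curves) / len(curves) for k in range(len(curves[0]))]

curves = ice_curves(data, 0, grid)
pd_curve = pdp(curves)
slopes = [c[-1] - c[0] for c in curves]  # end-to-end change of each ICE curve

print("PDP:", pd_curve)
print("ICE slopes:", slopes)
```

The PDP is flat, which on its own would suggest the feature has no effect, while the ICE slopes show a strong positive effect in one subgroup and a strong negative effect in the other.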

Table: Comparing Methods for a Holistic Global View

| Method | Key Strength | Key Weakness | Best Used For |

|---|---|---|---|

| Partial Dependence Plot (PDP) | Intuitive visualization of a feature's average marginal effect [39]. | Hides heterogeneous relationships (e.g., opposite effects for different subgroups) [39]. | Initial, high-level understanding of a single feature's average impact. |

| Individual Conditional Expectation (ICE) | Uncovers heterogeneous relationships and variation across instances [39]. | Can become cluttered and hard to interpret with many data points [39]. | Diagnosing whether a PDP's average is masking complex behavior. |

| Permutation Feature Importance | Simple, model-agnostic measure of a feature's importance to overall model performance [36]. | Can be unreliable with highly correlated features; requires access to true labels [43] [39]. | Getting a quick, initial ranking of feature relevance for model accuracy. |

| SHAP Summary Plot | Combines feature importance with feature effect, showing both the global importance and the distribution of local effects [40] [39]. | Computationally intensive for large datasets [37]. | A comprehensive, default view of global model behavior based on local explanations. |

Problem 2: My Local Explanations are Unstable or Inconsistent

Symptoms: Two very similar data points receive vastly different local explanations. Small changes in the input data lead to large shifts in the feature importance scores provided by methods like LIME.

Diagnosis: Instability in local explanations can stem from the underlying complexity of the model's decision boundary [36]. For LIME specifically, instability can be caused by the random sampling process used to generate perturbed instances and the sensitivity to the kernel settings that define the local neighborhood [39].

Solution: Implement strategies to verify and stabilize your interpretations.

- Switch to a More Robust Method: If using LIME, consider switching to SHAP. SHAP has a game-theoretic foundation and satisfies a consistency property: if a model changes so that a feature's marginal contribution increases or stays the same, the SHAP value for that feature does not decrease [40].

- Use Model-Specific Explainers: For tree-based models (e.g., Random Forests, XGBoost), use TreeExplainer (the SHAP implementation for trees). It provides exact Shapley values efficiently, eliminating the sampling variability associated with model-agnostic methods [40].

- Check Explanation Stability: Perform sensitivity analysis by slightly perturbing the input of interest and re-generating the explanation. If the explanation changes dramatically, treat it with caution and consider reporting an aggregate explanation over a small neighborhood of similar points.
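The stability check in the last item can be sketched as follows. Everything here is illustrative: the `model` has a deliberately sharp decision boundary, `occlusion_attribution` is a simple stand-in for whichever local explanation method is in use, and the radius and sample count are arbitrary. The function reports both the neighborhood-averaged attribution and the largest shift from the reference explanation.

```python
import random

def model(x):
    """Hypothetical model with a sharp decision boundary at x[0] + x[1] = 1."""
    return 1.0 if x[0] + x[1] > 1.0 else 0.2 * x[0]

def occlusion_attribution(x, baseline):
    """Toy local explanation: prediction change when each feature is reset to its baseline value."""
    full = model(x)
    scores = []
    for j in range(len(x)):
        z = list(x)
        z[j] = baseline[j]
        scores.append(full - model(z))
    return scores

def neighborhood_report(x, baseline, radius=0.05, n=200, seed=0):
    """Return (mean attribution over nearby points, largest shift from the reference explanation)."""
    rng = random.Random(seed)
    reference = occlusion_attribution(x, baseline)
    neighbors = []
    for _ in range(n):
        z = [v + rng.uniform(-radius, radius) for v in x]
        neighbors.append(occlusion_attribution(z, baseline))
    d = len(x)
    mean_attr = [sum(a[j] for a in neighbors) / n for j in range(d)]
    max_shift = max(abs(a[j] - reference[j]) for a in neighbors for j in range(d))
    return mean_attr, max_shift

baseline = [0.0, 0.0]
mean_far, shift_far = neighborhood_report([0.2, 0.2], baseline)     # well inside one region
mean_near, shift_near = neighborhood_report([0.5, 0.49], baseline)  # close to the boundary

print("far from boundary: mean", mean_far, "max shift", shift_far)
print("near boundary:     mean", mean_near, "max shift", shift_near)
```

A small maximum shift suggests the explanation can be reported as is. A large one, as for the point near the boundary, suggests reporting the neighborhood average together with a note on its variability.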

Table: Key Reagent Solutions for Explainable AI Experiments

| Research Reagent (Method) | Function | Primary Scope | Considerations for Use |

|---|---|---|---|

| LIME (Local Interpretable Model-agnostic Explanations) | Explains individual predictions by fitting a simple, local surrogate model (e.g., linear regression) around the instance [36] [39]. | Local | Can be unstable; sensitive to kernel and sampling parameters [39]. |

| SHAP (SHapley Additive exPlanations) | Assigns each feature an importance value for a prediction based on cooperative game theory, satisfying desirable properties like local accuracy and consistency [36] [40]. | Local & Global | Computationally expensive; faster, model-specific versions (e.g., TreeSHAP) are available [37] [40]. |

| Partial Dependence Plots (PDP) | Visualizes the global relationship between a feature and the predicted outcome by marginalizing over the other features [36] [39]. | Global | Assumes feature independence; can be misleading with correlated features [39]. |

| GLocalX | Generates a global interpretable model (a set of rules) by hierarchically aggregating many local rule-based explanations [41] [42]. | Local to Global | Provides a comprehensible global surrogate that is built from faithful local pieces. |

Problem 3: Explaining a Complex Model is Computationally Prohibitive

Symptoms: Generating explanations for an entire dataset takes an impractically long time or requires unsustainable computational resources. This is a common issue with methods like SHAP that involve many model evaluations [37].