Mapping the Creative Mind: A Comprehensive Guide to EEG and ERP Measurements in Conceptual Design Tasks

Abstract

This article provides a comprehensive framework for employing electroencephalography (EEG) and event-related potentials (ERPs) to study the neural correlates of conceptual design. Tailored for researchers and drug development professionals, it bridges foundational theory with advanced application. We explore the electrophysiological signatures of creative cognition, detail robust methodological protocols for experimental design and data acquisition, address common troubleshooting and optimization challenges, and review validation strategies through multi-method comparisons and clinical biomarkers. The synthesis offers a practical guide for leveraging these non-invasive tools to objectively assess cognitive states and neurophysiological mechanisms underlying innovative thinking, with significant implications for cognitive biomarker development and clinical trial design.

The Neural Blueprint: Uncovering EEG and ERP Fundamentals for Design Cognition

Electroencephalography (EEG) is a non-invasive and cost-efficient electrophysiological method for assessing neurophysiological function. Using electrodes placed on the scalp, it measures the electrical activity of large populations of synchronously active neurons [1] [2]. Its temporal resolution in the millisecond range lets researchers track the timing of neural events precisely [1]. Event-related potentials (ERPs) are neural responses extracted from the continuous EEG by time-locking the signal to repeated experimental events and averaging across many trials. They allow investigators to study sensory, perceptual, and cognitive processing stages [1] [2].

The stability of ERP components makes them valuable in both neuroscience research and clinical applications. In clinical settings they are used for early cognitive evaluation, differential diagnosis, and prognostic assessment in a range of brain disorders [2]. In conceptual design research, EEG and ERPs offer a window into the rapid neural processes underlying creativity, problem-solving, and decision-making. These aspects of human cognition are difficult to access through behavioral measures alone.

Key ERP Components and Their Cognitive Correlates

ERP components are characterized by their polarity (positive or negative), latency, amplitude, and scalp distribution. These components serve as neural markers for specific cognitive processes. The table below summarizes major ERP components relevant to conceptual design and cognitive research.

Table 1: Major ERP Components and Their Cognitive Correlates

| Component | Latency (ms) | Polarity | Cognitive Correlate | Research Application |
|---|---|---|---|---|
| N100 | 80-120 | Negative | Early attention | Initial sensory processing [3] |
| P300 | 250-500 | Positive | Attention, context updating | Decision-making, stimulus evaluation [4] |
| N400 | 300-500 | Negative | Semantic processing | Conceptual integration, language processing [3] [4] |
| ERN | 50-100 (response-locked) | Negative | Error detection | Performance monitoring [5] |
| MMN | 100-250 | Negative | Change detection | Pre-attentive processing [1] [5] |

ERP features can also be used to discriminate between cognitive and affective states. For example, pleasant versus unpleasant emotional moods and low versus high cognitive states have been classified from graph-theoretic features of spatio-temporal ERP components. The reported accuracies reach 92% and 89%, respectively [3].

Experimental Protocols and Methodologies

Basic EEG/ERP Experimental Setup

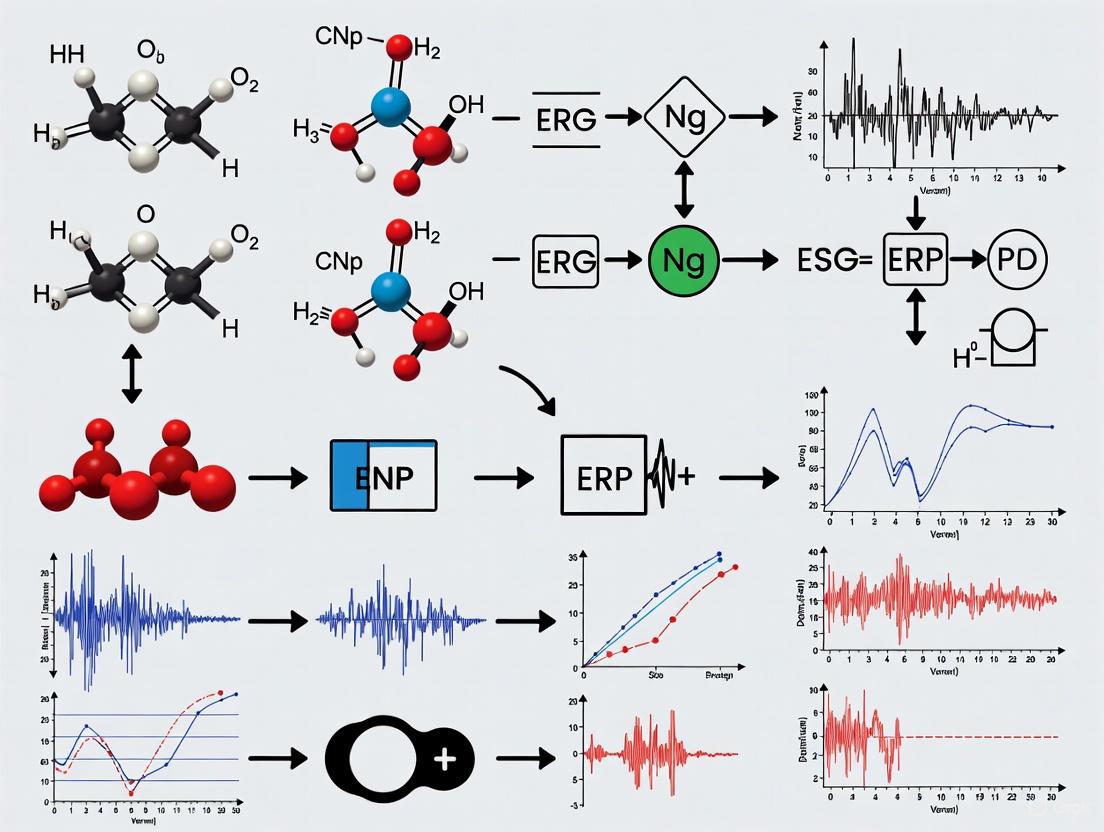

The following diagram illustrates a standard workflow for EEG/ERP data acquisition and analysis:

Detailed Participant Preparation Protocol

Materials and Equipment Required:

- EEG acquisition software (e.g., Neuroscan, BioSemi)

- Digital EEG Amplifier

- Electrode Cap (Easycap or equivalent) with Ag/AgCl sintered ring electrodes

- Abrasive electrolyte gel (Abralyt or equivalent)

- Syringes (without needle) for gel application

- Electrode impedance tester (Checktrode or equivalent)

- Electrically shielded room (recommended)

- Stimulus presentation software (E-Prime, Presentation) [1]

Step-by-Step Procedure:

Equipment Setup: Switch on stimulus generation and data collection equipment at least 30 minutes before data collection begins so that it can stabilize. Check ambient electromagnetic noise with a gauss meter. [1]

Cap Selection and Preparation:

- Measure head circumference using a flexible tape measure around the widest point of the head.

- Select appropriate cap size (standard sizes: 54, 56, 58, 60 cm).

- Verify proper fit by asking the participant to nod and turn head; cap should not shift.

- Snap electrodes into white plastic adaptors on the cap, being careful not to bend lead wires. [1]

Cap Application:

- Position FPz electrode approximately 10% of the nasion-inion distance above the nasion.

- Place cap on participant's head with FPz in this position.

- Adjust the cap so that the Cz electrode lies halfway between nasion and inion and halfway between the left and right preauricular points.

- Verify positioning by measuring nasion-to-inion and preauricular-to-preauricular distances. [1]
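The placement rules above follow the 10-20 logic. Electrodes sit at fixed percentages of measured arcs: 10% from each landmark, then in 20% steps. The Python sketch below converts a participant's nasion-inion and preauricular measurements into distances from the starting landmark. It covers only a small, illustrative subset of electrodes, and the example measurements are hypothetical. With a fitted cap these positions are already built in, so the calculation mainly serves as a check.

```python
"""Sketch: 10-20 electrode positions along measured head arcs (standard library only)."""

# Fraction of the arc, measured from the starting landmark, at which each
# electrode sits in the 10-20 system (10% from each end, then 20% steps).
MIDLINE_FRACTIONS = {"Fpz": 0.10, "Fz": 0.30, "Cz": 0.50, "Pz": 0.70, "Oz": 0.90}
CORONAL_FRACTIONS = {"T7": 0.10, "C3": 0.30, "Cz": 0.50, "C4": 0.70, "T8": 0.90}


def arc_positions(arc_length_cm: float, fractions: dict[str, float]) -> dict[str, float]:
    """Return the distance (cm, rounded to 0.1) from the starting landmark to each electrode."""
    if arc_length_cm <= 0:
        raise ValueError("arc length must be positive")
    return {name: round(arc_length_cm * frac, 1) for name, frac in fractions.items()}


if __name__ == "__main__":
    # Hypothetical measurements for one participant.
    nasion_inion_cm = 36.0   # nasion to inion, over the vertex
    preauricular_cm = 34.0   # left to right preauricular point, over the vertex
    print("From nasion:", arc_positions(nasion_inion_cm, MIDLINE_FRACTIONS))
    print("From left preauricular point:", arc_positions(preauricular_cm, CORONAL_FRACTIONS))
```

With a 36 cm nasion-inion distance, for example, Fpz should lie 3.6 cm above the nasion and Cz 18 cm along the arc.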

Electrode Preparation:

- Apply abrasive electrolyte gel to each electrode using a syringe.

- Gently abrade the skin with a wooden-handled cotton swab to lower electrode impedance.

- Target impedance values below 5 kΩ for optimal signal quality. [1]
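Impedance is usually checked with the amplifier software or a dedicated tester, but the decision rule is simple enough to state as code. The sketch below assumes the impedance readings are already available as a mapping from channel name to kΩ. That input format is hypothetical. The function returns the electrodes that still need preparation, worst first.

```python
"""Sketch: flag electrodes whose impedance exceeds the protocol target."""

IMPEDANCE_LIMIT_KOHM = 5.0  # target from the preparation protocol (< 5 kOhm)


def channels_to_rework(impedances_kohm: dict[str, float],
                       limit_kohm: float = IMPEDANCE_LIMIT_KOHM) -> list[str]:
    """Return channels at or above the limit, worst first, so they can be re-gelled."""
    bad = [ch for ch, z in impedances_kohm.items() if z >= limit_kohm]
    return sorted(bad, key=lambda ch: impedances_kohm[ch], reverse=True)


if __name__ == "__main__":
    # Hypothetical readings (kOhm) from an impedance check.
    readings = {"Fz": 3.2, "Cz": 7.8, "Pz": 4.9, "Oz": 12.5}
    print(channels_to_rework(readings))  # ['Oz', 'Cz']
```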

Experimental Task Instructions:

- Explain the experimental task to the participant.

- Encourage minimal movement and eye blinks during trial periods.

- Conduct practice trials to ensure task understanding.

Data Acquisition Parameters

Table 2: Standard Data Acquisition Parameters for ERP Research

| Parameter | Typical Setting | Considerations |
|---|---|---|
| Sampling Rate | 500-1000 Hz | Must exceed twice the highest frequency of interest (Nyquist criterion); in practice, a rate several times higher is chosen |
| Filter Settings | 0.1-100 Hz bandpass | 50/60 Hz notch filter for line noise |
| Reference | Linked mastoids, Cz, or average reference | Choice affects ERP topography [5] |
| Electrode System | International 10-20 system | Standardized placement for reproducibility [1] |
| Impedance | < 5 kΩ | Critical for signal quality [1] |
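The sampling-rate row above rests on the Nyquist criterion: a signal sampled at f_s can only represent frequencies below f_s/2. The sketch below checks an acquisition setting against that limit and against a more conservative rule-of-thumb margin. The 2.5x factor is an assumption chosen for illustration. It is not a value taken from the sources cited here.

```python
"""Sketch: basic timing and aliasing checks for an EEG acquisition setting."""


def acquisition_summary(sampling_rate_hz: float, lowpass_hz: float,
                        safety_factor: float = 2.5) -> dict:
    """Summarize sample period, Nyquist frequency, and whether the low-pass edge is safe.

    safety_factor is an illustrative rule-of-thumb margin (assumption).
    """
    if sampling_rate_hz <= 0 or lowpass_hz <= 0:
        raise ValueError("rates must be positive")
    nyquist_hz = sampling_rate_hz / 2.0
    return {
        "sample_period_ms": 1000.0 / sampling_rate_hz,  # temporal grain of the recording
        "nyquist_hz": nyquist_hz,
        "meets_nyquist": lowpass_hz < nyquist_hz,       # content must lie below fs/2
        "meets_safety_factor": sampling_rate_hz >= safety_factor * lowpass_hz,
    }


if __name__ == "__main__":
    print(acquisition_summary(500.0, 100.0))  # lower end of the typical range above
    print(acquisition_summary(200.0, 100.0))  # too slow for a 100 Hz low-pass edge
```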

Data Preprocessing and Analysis

Preprocessing Workflow

Preprocessing choices have a substantial impact on subsequent analyses. Recent research shows that decisions such as filter settings and artifact correction can considerably change decoding performance [5]. The following diagram outlines key preprocessing steps:

Preprocessing Steps and Parameters

Table 3: EEG/ERP Preprocessing Steps and Recommended Parameters

| Processing Step | Purpose | Recommended Parameters | Impact on Data |
|---|---|---|---|
| High-Pass Filter | Remove slow drifts | 0.1-0.5 Hz cutoff | Higher cutoffs (e.g., 1 Hz) can increase decoding performance [5] |
| Low-Pass Filter | Reduce high-frequency noise | 30-40 Hz cutoff | Lower cutoffs increase time-resolved decoding performance [5] |
| Artifact Correction | Remove ocular/muscular artifacts | ICA, autoreject | Generally decreases decoding performance but improves interpretability [5] |
| Baseline Correction | Remove pre-stimulus offsets | -200 to 0 ms typical | Longer baseline windows improve decoding performance [5] |
| Epoching | Extract time-locked segments | Typically -200 to 800 ms | Aligns data to experimental events |
| Artifact Rejection | Exclude contaminated trials | Voltage threshold: ±100 μV | Reduces noise but decreases trial count |
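Epoching, baseline correction, and threshold-based artifact rejection from Table 3 can be sketched for a single channel in plain Python. The function below makes these assumptions:

- the continuous signal is in µV;
- event onsets are given as sample indices;
- epochs use the table's typical -200 to 800 ms window and ±100 µV threshold.

Applying the threshold to the absolute baseline-corrected voltage is one common variant; peak-to-peak criteria are also used. Toolboxes such as MNE-Python or EEGLAB implement these steps for multichannel data.

```python
"""Sketch: single-channel epoching, baseline correction, and voltage-threshold rejection."""


def epoch_and_clean(signal_uv, events, sfreq, tmin=-0.2, tmax=0.8, reject_uv=100.0):
    """Cut epochs around events, subtract the pre-stimulus mean, and reject large ones.

    signal_uv: continuous single-channel data in microvolts (list of floats)
    events:    event onsets as sample indices
    sfreq:     sampling rate in Hz
    Returns (kept_epochs, rejected_event_indices).
    """
    start_off = round(tmin * sfreq)   # negative offset: samples before the event
    stop_off = round(tmax * sfreq)    # last sample included after the event
    n_base = -start_off               # baseline = all pre-stimulus samples
    kept, rejected = [], []
    for ev in events:
        first, last = ev + start_off, ev + stop_off + 1
        if first < 0 or last > len(signal_uv):
            rejected.append(ev)       # epoch would run past the recording edges
            continue
        seg = signal_uv[first:last]
        baseline = sum(seg[:n_base]) / n_base if n_base > 0 else 0.0
        seg = [v - baseline for v in seg]
        if max(abs(v) for v in seg) > reject_uv:
            rejected.append(ev)       # exceeds the +/-100 uV criterion
        else:
            kept.append(seg)
    return kept, rejected


if __name__ == "__main__":
    sfreq = 100.0
    signal = [10.0] * 1000            # hypothetical recording with a 10 uV offset
    signal[350] = 300.0               # a simulated artifact
    kept, rejected = epoch_and_clean(signal, [100, 300, 990], sfreq)
    print(len(kept), rejected)        # 1 [300, 990]
```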

Advanced Analysis Techniques

Modern ERP research increasingly employs multivariate analysis approaches:

- Decoding Analysis: Uses classification models to predict experimental conditions from EEG patterns, taking advantage of the multidimensionality of the data [5].

- Time-Frequency Analysis: Examines oscillatory activity in both time and frequency domains, often using wavelet transforms [3].

- Single-Trial Analysis: Focuses on individual trial responses rather than averaged ERPs, providing insight into trial-by-trial variability [3].

- Connectivity Analysis: Measures functional connectivity between brain regions using methods like phase locking value (PLV) or phase lag index (PLI) [2].
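The phase locking value mentioned above measures how consistent the phase difference between two signals is: PLV = |(1/N) Σ exp(i(φ_a - φ_b))|. It equals 1 for a perfectly constant phase lag and is close to 0 for random phase relations. The sketch assumes that instantaneous phases have already been extracted, for example from band-pass filtered signals via the Hilbert transform (a step not shown). It also assumes that the N values are samples or trials at one frequency.

```python
"""Sketch: phase locking value (PLV) between two phase series."""
import cmath
import math


def phase_locking_value(phases_a, phases_b):
    """Return |mean(exp(i * (phi_a - phi_b)))| for equal-length phase sequences (radians)."""
    if len(phases_a) != len(phases_b) or not phases_a:
        raise ValueError("need two non-empty phase series of equal length")
    total = sum(cmath.exp(1j * (a - b)) for a, b in zip(phases_a, phases_b))
    return abs(total) / len(phases_a)


if __name__ == "__main__":
    locked = [0.1 * k for k in range(100)]
    lagged = [p - 0.5 for p in locked]                    # constant 0.5 rad lag
    spread = [2 * math.pi * k / 8 for k in range(8)]      # uniformly spread differences
    print(round(phase_locking_value(locked, lagged), 6))     # 1.0
    print(round(phase_locking_value(spread, [0.0] * 8), 6))  # 0.0
```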

Visualization and Interpretation

Effective visualization of ERP data is essential for interpretation and communication of results. A recent survey of visualization practices in the field revealed that the community lacks consistent nomenclature for plot types and faces challenges in representing the multidimensional nature of ERP data [6]. The following visualization approaches are commonly used:

- ERP Waveforms: Line plots showing voltage changes over time at specific electrodes, often with confidence intervals or standard error shading to represent variability [7].

- Topoplots: Scalp maps displaying spatial distribution of activity at specific time points or averaged across time windows [6].

- Butterfly Plots: Overlaid waveforms from all electrodes, useful for visualizing overall response patterns [6].

Recent recommendations suggest including measures of confidence and variance in ERP plots, such as 95% confidence intervals or standard deviation shading, to provide better information about the reliability of effects and individual variation [7].
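Confidence bands of the kind recommended above can be computed pointwise across participants. The sketch below assumes one averaged waveform per participant, with all waveforms sampled at the same time points. It uses a normal approximation for the critical value. With small samples, a t-distribution critical value (for example from scipy.stats.t.ppf) gives wider and more appropriate intervals.

```python
"""Sketch: pointwise mean and confidence band for an ERP across participants."""
import math
from statistics import NormalDist, mean, stdev


def erp_confidence_band(subject_waves, level=0.95):
    """Return (means, lower, upper) lists, one value per time point.

    subject_waves: list of per-participant waveforms of equal length (uV).
    Uses a normal-approximation critical value (assumption; see lead-in).
    """
    n = len(subject_waves)
    if n < 2:
        raise ValueError("need at least two participants")
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for a 95% band
    means, lower, upper = [], [], []
    for values in zip(*subject_waves):         # iterate over time points
        m = mean(values)
        half_width = z * stdev(values) / math.sqrt(n)  # z times the standard error
        means.append(m)
        lower.append(m - half_width)
        upper.append(m + half_width)
    return means, lower, upper


if __name__ == "__main__":
    waves = [[1.0, 2.0, 3.0], [3.0, 4.0, 5.0], [2.0, 3.0, 4.0]]  # hypothetical data
    m, lo, hi = erp_confidence_band(waves)
    print(m, lo, hi)
```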

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Materials for EEG/ERP Research

| Item | Function | Examples/Specifications |
|---|---|---|
| EEG Amplifier | Records electrical activity from scalp | Neuroscan NuAmps, BioSemi ActiveTwo |
| Electrode Cap | Holds electrodes in standardized positions | Easycap with 32, 64, or 128 channels |
| Electrolyte Gel | Facilitates electrical conduction between skin and electrodes | Abralyt HiCl, Signa Gel |
| Electrode Types | Record brain electrical activity | Ag/AgCl sintered ring electrodes |
| Stimulus Presentation Software | Presents experimental paradigms | E-Prime, Presentation, PsychoPy [8] |
| Data Analysis Toolboxes | Processes and analyzes EEG data | EEGLAB, FieldTrip, MNE-Python, EPAT [2] |
| Impedance Checker | Verifies electrode-skin contact quality | UFI Checktrode |
| Electrically Shielded Room | Reduces environmental electrical noise | Faraday cage, sound-attenuating booth |

Application in Conceptual Design Research

Within conceptual design tasks research, EEG and ERP methodologies offer unique insights into the cognitive processes underlying creative thinking and problem-solving. Specific applications include:

- Tracking Conceptual Shifts: The N400 component can index semantic unexpectedness when designers encounter novel concepts or solutions [3].

- Monitoring Cognitive Workload: The P300 amplitude and latency can reflect cognitive resource allocation during complex design decisions [4].

- Identifying Insight Moments: Specific ERP patterns may correlate with "Aha!" moments during creative problem-solving.

- Evaluating Design Alternatives: Neural responses can provide implicit measures of preference or novelty when designers evaluate different concepts.

The high temporal resolution of EEG/ERP is particularly valuable for capturing the rapid cognitive dynamics of conceptual design. Processes such as stimulus evaluation and semantic integration unfold over tens to hundreds of milliseconds. By implementing the protocols and methods outlined in this document, researchers can investigate the neural basis of design cognition rigorously and reproducibly.

Event-related potentials (ERPs) are measured brain responses that are the direct result of specific sensory, cognitive, or motor events [9]. As a non-invasive electrophysiological technique, ERPs provide excellent temporal resolution on the millisecond scale, allowing researchers to capture the precise timing of neural processes associated with cognitive tasks [10] [9]. The ERP technique involves measuring electrical activity via electroencephalography (EEG) and using signal averaging to extract consistent voltage fluctuations time-locked to stimulus events from the background EEG 'noise' [10] [11]. This averaging process enhances the signal-to-noise ratio, making it possible to observe small amplitude voltage fluctuations that are systematically related to cognitive processing [9].
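The effect of signal averaging described above can be illustrated with a small simulation. The sketch below assumes a hypothetical 5 µV Gaussian-shaped ERP buried in independent Gaussian noise with a 20 µV SD. Under these idealized assumptions, the residual noise in the average shrinks roughly in proportion to 1/√N, where N is the number of trials. Real EEG noise is neither white nor stationary, so the gain in real recordings can be smaller.

```python
"""Sketch: how trial averaging reduces noise in a simulated ERP."""
import math
import random


def residual_noise_rms(n_trials, noise_sd_uv=20.0, n_samples=200, seed=0):
    """Average n_trials noisy copies of a fixed waveform; return the RMS residual noise (uV)."""
    rng = random.Random(seed)
    # Hypothetical "true" ERP: a 5 uV Gaussian-shaped bump centred on sample 100.
    true_erp = [5.0 * math.exp(-((t - 100) / 15.0) ** 2) for t in range(n_samples)]
    average = [0.0] * n_samples
    for _ in range(n_trials):
        for t in range(n_samples):
            # Each trial = same signal + independent Gaussian noise (idealized assumption).
            average[t] += (true_erp[t] + rng.gauss(0.0, noise_sd_uv)) / n_trials
    residual = [a - s for a, s in zip(average, true_erp)]
    return math.sqrt(sum(r * r for r in residual) / n_samples)


if __name__ == "__main__":
    for n in (1, 4, 16, 64):
        # Theory predicts roughly noise_sd / sqrt(n): about 20, 10, 5, 2.5 uV.
        print(n, round(residual_noise_rms(n), 2))
```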

ERPs are characterized by a series of positive and negative voltage deflections, called components, which reflect distinct stages of information processing from perceptual analysis to higher-order cognition [10] [11]. These components are typically named according to their polarity (P for positive, N for negative) and either their ordinal position in the waveform or their typical latency in milliseconds [11] [9]. The N100, for instance, refers to a negative peak occurring around 100 ms post-stimulus, while the P300 indicates a positive peak around 300 ms [9]. Critically, different ERP components are generated by different neural populations and reflect distinct cognitive operations, making them particularly valuable for decomposing the temporal dynamics of complex cognitive tasks [10].

Key ERP Components: From Sensory to Higher-Order Processing

Early Sensory Components: N1 and P1

The P1 and N1 components represent the earliest stages of perceptual processing in their respective modalities. The visual P1 is a positive-going deflection occurring approximately 80-130 ms after visual stimulus onset. It is maximal over occipital scalp sites and reflects early sensory processing in extrastriate visual cortices [10]. It is considered an exogenous component, meaning that it is driven primarily by the physical parameters of the stimulus rather than by cognitive factors [11]. The P1 is followed by the N1 (also called N100), a negative deflection peaking around 100-150 ms post-stimulus. The N1 reflects early selective attention mechanisms and initial perceptual analysis [10] [11]. N1 amplitude is often enhanced for attended compared with unattended stimuli, which suggests a role in early stimulus discrimination and filtering [11]. Both components are largely insensitive to higher-level factors such as semantic content. However, they are highly sensitive to physical stimulus characteristics and are modulated by attention [12].

Intermediate Cognitive Components: N200 and P200

The N200 (or N2) is an endogenous component typically emerging around 200-350 ms post-stimulus that has been associated with conflict detection and stimulus classification processes [11] [12]. In cognitive control paradigms such as Go/No-go tasks, the N200 demonstrates greater amplitude for stimuli requiring response inhibition, suggesting its involvement in cognitive control mechanisms [13] [12]. The P200 (or P2), a positive deflection occurring in a similar time window, reflects attention and discrimination processes as well as task difficulty [11]. Recent research has also identified novel prefrontal subcomponents of these classic potentials, including the prefrontal N1 (pN1) and prefrontal P1 (pP1), which peak over prefrontal sites at approximately 110 ms and 180 ms respectively and have been associated with visual-motor awareness [13]. These prefrontal components appear to originate from the anterior insular cortex and represent early frontal engagement in perceptual decision-making [13].

Higher-Order Cognitive Components: P300 and N400

The P300 (or P3b) is perhaps the most extensively studied ERP component, typically peaking between 250-500 ms after stimulus onset with a maximum amplitude over centroparietal electrode sites [14] [12]. This endogenous component is elicited by task-relevant stimuli that require updating of mental models or context [12]. P300 amplitude is inversely correlated with the probability of stimulus occurrence, with low-probability target stimuli eliciting larger P300s, while its latency is thought to reflect stimulus evaluation time [14] [12]. The component has been localized to a distributed network including temporal, parietal, and frontal regions, and is considered an index of attention-dependent working memory operations [12].

The N400 is a negative-going deflection peaking around 400 ms post-stimulus that is maximally distributed over centro-parietal sites, often with a slight right hemisphere bias for written language [14] [15]. This component is uniquely sensitive to semantic processing and is elicited by meaningful or potentially meaningful stimuli including words, pictures, faces, and environmental sounds [14] [15]. N400 amplitude demonstrates a nearly inverse linear relationship with a word's cloze probability (the probability it will complete a given sentence frame), with highly unexpected words in context eliciting the largest N400 amplitudes [14] [15]. Unlike the P300, the N400 shows remarkable latency stability across tasks and manipulations, with few factors besides aging and neurological conditions affecting its peak timing [14] [15].

Table 1: Key ERP Components in Cognitive Processing

| Component | Typical Latency (ms) | Polarity | Scalp Distribution | Primary Functional Correlates |
|---|---|---|---|---|
| P1 (P100) | 80-130 | Positive | Occipital (visual) | Early sensory processing, initial perceptual analysis |
| N1 (N100) | 100-150 | Negative | Modality-specific | Early selective attention, stimulus discrimination |
| P200 | 150-250 | Positive | Frontocentral | Attention, discrimination processes, task difficulty |
| N200 | 200-350 | Negative | Frontocentral | Conflict detection, stimulus classification, cognitive control |
| P300/P3b | 250-500 | Positive | Centroparietal | Context updating, attention allocation, working memory |
| N400 | 300-500 | Negative | Centroparietal | Semantic processing, meaning integration, access to semantic memory |

Experimental Protocols for Eliciting Key ERP Components

The auditory oddball paradigm represents the gold standard experimental design for eliciting the P300 component [12]. In this protocol, participants hear a random sequence of frequent "standard" tones (e.g., 1000 Hz, 80% probability) and rare "target" tones (e.g., 2000 Hz, 20% probability). They respond to target tones with a button press and ignore standard tones. Stimuli are typically presented with a fixed inter-stimulus interval of 1-2 s. The session should include at least 30-40 target presentations to ensure an adequate signal-to-noise ratio in the averaged ERP [12]. Critical recording parameters are a sampling rate of at least 250 Hz, filter settings of 0.01-30 Hz, and electrodes at Fz, Cz, Pz, and Oz according to the 10-20 system [9]. The P300 is measured as the most positive peak between 250 and 500 ms at Pz. The measurement uses the average of target trials with correct responses only.
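The measurement rule at the end of the oddball protocol can be written out directly. The sketch below assumes that each trial is stored as a dictionary holding target and accuracy flags plus a baseline-corrected Pz waveform. This data layout is hypothetical. The function averages only correct target trials and takes the most positive sample in the 250-500 ms window. A simple maximum like this is sensitive to noise, which is one reason mean-amplitude or fractional-area measures are sometimes preferred.

```python
"""Sketch: P300 peak amplitude and latency from correct target trials at Pz."""


def p300_peak(trials, times_ms, window_ms=(250.0, 500.0)):
    """Average correct target trials, then return (peak_uv, latency_ms) in the window.

    trials: list of dicts with hypothetical keys 'is_target', 'correct', and 'pz'
            (the Pz waveform in uV, one value per entry of times_ms).
    """
    selected = [tr["pz"] for tr in trials if tr["is_target"] and tr["correct"]]
    if not selected:
        raise ValueError("no correct target trials")
    n = len(selected)
    average = [sum(vals) / n for vals in zip(*selected)]
    in_window = [i for i, t in enumerate(times_ms) if window_ms[0] <= t <= window_ms[1]]
    if not in_window:
        raise ValueError("measurement window lies outside the epoch")
    best = max(in_window, key=lambda i: average[i])  # most positive sample
    return average[best], times_ms[best]


if __name__ == "__main__":
    times = [0, 100, 200, 300, 400, 500, 600]
    trials = [
        {"is_target": True, "correct": True, "pz": [0, 1, 2, 3, 8, 4, 1]},
        {"is_target": True, "correct": True, "pz": [0, 1, 2, 3, 6, 4, 1]},
        {"is_target": True, "correct": False, "pz": [0, 0, 0, 50, 0, 0, 0]},  # excluded: error
        {"is_target": False, "correct": True, "pz": [0, 0, 0, 20, 0, 0, 0]},  # excluded: standard
    ]
    print(p300_peak(trials, times))  # (7.0, 400)
```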

The semantic anomaly paradigm, originally developed by Kutas and Hillyard (1980), effectively elicits the N400 component by presenting sentences that end with either expected or semantically anomalous words [14] [15]. In a typical implementation, participants read or listen to sentences presented one word at a time, with 25% of sentences ending with a semantically incongruent word (e.g., "I take my coffee with cream and dog") while the remaining 75% end with congruent words [14]. Each word is typically displayed for 500-600 ms with a stimulus onset asynchrony (SOA) of 800-1000 ms. Participants may be periodically prompted to answer comprehension questions to ensure attention to the stimuli. The N400 effect is quantified as the mean amplitude in the 300-500 ms time window at centroparietal electrode sites (e.g., CPz, Pz) for anomalous versus congruent sentence endings, with larger negative amplitudes indicating greater difficulty with semantic integration [14] [15].
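The N400 effect described above is a difference of mean amplitudes. The sketch assumes condition-averaged waveforms at a single centroparietal site, or an average of CPz and Pz, sampled at the listed times. It uses the 300-500 ms window from the protocol. A more negative result indicates a larger N400 effect.

```python
"""Sketch: N400 effect as a difference of mean amplitudes in 300-500 ms."""


def mean_amplitude(wave_uv, times_ms, start_ms=300.0, end_ms=500.0):
    """Mean voltage of the samples whose times fall inside [start_ms, end_ms]."""
    values = [v for v, t in zip(wave_uv, times_ms) if start_ms <= t <= end_ms]
    if not values:
        raise ValueError("no samples in the measurement window")
    return sum(values) / len(values)


def n400_effect(anomalous_uv, congruent_uv, times_ms):
    """Anomalous minus congruent mean amplitude; more negative means a larger N400 effect."""
    return mean_amplitude(anomalous_uv, times_ms) - mean_amplitude(congruent_uv, times_ms)


if __name__ == "__main__":
    times = [200, 300, 400, 500, 600]
    anomalous = [0.0, -2.0, -4.0, -3.0, 0.0]   # hypothetical grand averages (uV)
    congruent = [0.0, -1.0, -1.0, -1.0, 0.0]
    print(n400_effect(anomalous, congruent, times))  # -2.0
```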

The Go/No-Go task is particularly effective for studying cognitive control processes and eliciting the N200 component associated with response inhibition [13]. In this paradigm, participants are presented with frequent "Go" stimuli (e.g., the letter "X", 70% probability) requiring a rapid button press, and rare "No-Go" stimuli (e.g., the letter "O", 30% probability) requiring response withholding. Stimuli are typically presented for 200-500 ms with a variable inter-trial interval between 1-2 seconds to prevent anticipatory effects. The experiment should include a minimum of 30-40 No-Go trials to ensure adequate averaging. The N200 is measured as the negative peak at frontocentral sites (e.g., FCz) between 200-350 ms post-stimulus, typically showing enhanced amplitude for No-Go compared to Go trials [13]. Recent research using this paradigm has also identified a novel slow negative anticipatory wave over prefrontal sites called the prefrontal negativity (pN) that begins up to 800 ms before stimulus onset and is associated with proactive cognitive control [13].
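The trial structure of the Go/No-Go task can be generated programmatically. The sketch below fixes the exact 70/30 split by shuffling a pre-built list rather than drawing each trial independently. It uses the example letters from the protocol and draws each inter-trial interval uniformly between 1 and 2 s. The uniform jitter and the tuple layout are assumptions. Presentation software such as PsychoPy or E-Prime could consume a list like this.

```python
"""Sketch: randomized Go/No-Go trial list with exact proportions and jittered ITIs."""
import random


def make_go_nogo_sequence(n_trials=200, p_nogo=0.30, iti_range_s=(1.0, 2.0), seed=None):
    """Return a list of (condition, stimulus_letter, iti_seconds) tuples."""
    n_nogo = round(n_trials * p_nogo)
    conditions = ["NoGo"] * n_nogo + ["Go"] * (n_trials - n_nogo)
    rng = random.Random(seed)
    rng.shuffle(conditions)                      # random order, exact 70/30 split
    sequence = []
    for cond in conditions:
        letter = "O" if cond == "NoGo" else "X"  # example stimuli from the protocol
        iti = rng.uniform(*iti_range_s)          # variable ITI to limit anticipation
        sequence.append((cond, letter, iti))
    return sequence


if __name__ == "__main__":
    seq = make_go_nogo_sequence(seed=1)
    n_nogo = sum(1 for cond, _, _ in seq if cond == "NoGo")
    print(len(seq), n_nogo)  # 200 60
```

With the default 200 trials, the 60 No-Go trials comfortably exceed the 30-40 minimum mentioned above.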

Table 2: Key Experimental Paradigms for ERP Research

| Paradigm | Primary Components Elicited | Stimulus Parameters | Task Requirements | Critical Experimental Conditions |
|---|---|---|---|---|
| Auditory Oddball | P300, N200, P200, N100 | Standard tones (80%), Target tones (20%), ISI: 1-2 s | Button press to targets | Target vs. Standard stimuli |
| Semantic Anomaly | N400, P600 | Congruent (75%) vs. Incongruent (25%) sentence endings, SOA: 800-1000 ms | Reading/listening, occasional comprehension questions | Congruent vs. Incongruent sentence endings |
| Go/No-Go | N200, P300, pN | Go stimuli (70%), No-Go stimuli (30%), ISI: 1-2 s | Button press to Go stimuli, withhold for No-Go | Go vs. No-Go stimuli |
| Lexical Decision | N400, P300 | Words (50%), Pseudowords (50%), SOA: 800-1200 ms | Word/nonword decision via button press | Words vs. Pseudowords, High vs. Low frequency words |
| Priming | N400, P300 | Prime-target pairs, SOA: 200-1000 ms | Lexical decision or semantic categorization | Related vs. Unrelated prime-target pairs |

Visualization of ERP Component Timing and Functional Significance

The following diagram illustrates the typical temporal sequence of major ERP components and their functional correlates in cognitive processing:

ERP Component Timeline and Functional Correlates

The Researcher's Toolkit: Essential Materials and Reagent Solutions

Table 3: Essential Research Equipment and Software for ERP Studies

| Category | Specific Items | Purpose/Function | Representative Examples |
|---|---|---|---|
| EEG Recording Equipment | EEG Amplifier System | Signal acquisition and preliminary amplification | BioSemi ActiveTwo, BrainVision actiCHamp, Electrical Geodesics Inc. (EGI) systems |
| | Electrodes/Caps | Electrical signal detection from scalp | Ag/AgCl electrodes, electrode caps (32-256 channels) |
| | Electrolyte Gel | Ensuring conductivity between scalp and electrodes | Electro-Gel, Abralyt HiCl, Signa Gel |
| | Skin Preparation | Reducing impedance at electrode-skin interface | NuPrep abrasive gel, alcohol pads |
| Stimulus Presentation Software | Experiment Programming | Designing and presenting experimental paradigms | E-Prime, Presentation, Psychtoolbox for MATLAB, OpenSesame |
| | Response Collection | Recording participant behavioral responses | Response boxes, computer keyboard, fMRI-compatible button boxes |
| ERP Analysis Tools | Preprocessing Software | Filtering, artifact rejection, epoching | EEGLAB, ERPLAB, BrainVision Analyzer, MNE-Python |
| | Statistical Analysis | Analyzing ERP amplitudes and latencies | R, SPSS, MATLAB with Statistics Toolbox |
| | Source Localization | Estimating neural generators of ERP components | sLORETA, BESA, Brainstorm |
| Specialized Solutions | Conductive Paste | Fixing electrodes in place and maintaining conductivity | Elefix paste, Ten20 conductive paste |
| | Electrode Cleaning | Maintaining electrode integrity and performance | Enzyme-based electrode cleaning solutions |

Applications in Clinical Research and Drug Development

ERP components have proven particularly valuable as biomarkers of synaptic dysfunction in various neurological and psychiatric conditions, offering sensitive measures of cognitive impairment that often precede behavioral manifestations [12]. In Alzheimer's disease research, for example, abnormalities in the P300 and N400 components become more common as neuropathology extends to neocortical association areas, with P300 latency delays serving as a sensitive indicator of cognitive impairment severity [12]. The N400 has demonstrated sensitivity to semantic memory deficits in mild cognitive impairment (MCI) and early Alzheimer's disease, often revealing processing abnormalities in individuals who still perform within normal ranges on behavioral tasks [12]. Similarly, the visual motion-elicited N200 shows marked reduction in amplitude in a subtype of AD patients with impaired motion detection, suggesting specific dysfunction in extrastriate visual cortex [12].

In pharmaceutical research, ERP components provide objective neurophysiological measures for evaluating the cognitive effects of therapeutic compounds [12]. The P300 is especially valuable in clinical trials of cognitive-enhancing drugs. Latency reductions may indicate faster information processing, while amplitude increases may reflect enhanced attention allocation or working-memory updating [12]. The N400 may likewise provide a sensitive measure of improvements in semantic processing in conditions characterized by language deficits. When implementing ERP measures in clinical drug trials, researchers should:

- use well-established paradigms with demonstrated test-retest reliability;
- include appropriate control conditions;
- consider longitudinal designs to track cognitive change over time [12].

A distinct advantage of these electrophysiological measures is that they quantify neural processing directly. They are also less susceptible to practice effects and other confounds that often complicate the interpretation of behavioral outcomes in clinical trials [12].

Application Notes: Brain Oscillations in Conceptual Design

Theoretical Framework and Functional Significance

Brain oscillations represent fundamental electrical rhythms generated by synchronized neuronal activity, serving as crucial mechanisms for coordinating large-scale brain networks during cognitive processes. Within the context of conceptual design tasks, these rhythms facilitate various stages of creative cognition, from initial idea generation to final evaluation. The rhythmic activity is categorized by frequency bands, each with distinct functional correlates that can be monitored via electroencephalography (EEG) to provide objective biomarkers of cognitive states during creative work [16] [17].

The integration of EEG with event-related potential (ERP) methodologies offers a powerful framework for investigating the temporal dynamics of brain activity during specific design events. This approach enables researchers to capture both the spontaneous oscillatory activity that reflects ongoing cognitive states and the transient neural responses to discrete design stimuli or insights [18]. Such multimodal investigation is particularly valuable for understanding the neurocognitive basis of conceptual design, where both sustained states and momentary breakthroughs contribute to creative output.

Quantitative Characterization of Frequency Bands

Table 1: Characteristic Features and Cognitive Correlates of Key Brain Oscillations

| Frequency Band | Frequency Range (Hz) | Primary Cognitive Correlates | Role in Creative Cognition |
|---|---|---|---|
| Theta | 4-8 | Deep relaxation, inward focus, memory consolidation, emotional insight [17] | Facilitates insight generation, associative thinking, and access to remote ideas [16] |
| Alpha | 8-12 | Relaxed yet alert state, reduced anxiety, improved creativity, passive attention [16] [17] | Promotes mental relaxation necessary for divergent thinking and overcoming fixation |
| Beta | 12-30 | Active thinking, concentration, problem-solving, external attention [16] [17] | Supports focused evaluation, convergent thinking, and implementation of design ideas |
| Gamma | >30 | Focused concentration [16] | Enables binding of disparate concepts into coherent ideas |
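Band power in the ranges of the table above can be computed from the power spectrum of an EEG segment. For transparency, the sketch below uses a naive discrete Fourier transform in plain Python. It sums power over half-open bands, so 8 Hz counts as alpha and 12 Hz as beta. The 45 Hz upper gamma edge is an assumption, since the table gives only >30 Hz. In practice, an FFT-based estimate such as Welch's method (for example scipy.signal.welch) is faster and less noisy.

```python
"""Sketch: absolute band power from a naive DFT (illustration only; use an FFT in practice)."""
import cmath
import math

# Band edges in Hz; the gamma upper edge (45 Hz) is an assumption.
BANDS_HZ = {"theta": (4.0, 8.0), "alpha": (8.0, 12.0), "beta": (12.0, 30.0), "gamma": (30.0, 45.0)}


def band_powers(signal, sfreq, bands=BANDS_HZ):
    """Sum squared DFT magnitudes over frequency bins falling in each [low, high) band."""
    n = len(signal)
    if n == 0:
        raise ValueError("empty signal")
    offset = sum(signal) / n
    x = [s - offset for s in signal]  # remove the DC offset
    spectrum = []
    for k in range(n // 2 + 1):       # non-negative frequencies only
        coeff = sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        spectrum.append((k * sfreq / n, abs(coeff) ** 2))
    return {name: sum(p for f, p in spectrum if low <= f < high)
            for name, (low, high) in bands.items()}


if __name__ == "__main__":
    fs = 128.0
    ten_hz = [math.sin(2 * math.pi * 10.0 * t / fs) for t in range(128)]  # 1 s of a 10 Hz sine
    powers = band_powers(ten_hz, fs)
    print(max(powers, key=powers.get))  # alpha
```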

Methodological Considerations for Research

Recent advances in EEG methodology highlight several critical considerations for researching brain oscillations during conceptual design tasks. The choice of preprocessing pipeline significantly influences data quality and interpretability; key steps include filtering, artifact correction, and referencing [5]. Artifact correction with independent component analysis (ICA) or automated methods such as autoreject is methodologically rigorous. However, it may remove neural signals that are systematically associated with task conditions, particularly in paradigms involving eye movements or motor responses [5]. For creative cognition research in naturalistic design environments, emerging approaches combine virtual reality (VR) with EEG. These approaches offer a promising way to study brain oscillations under ecologically valid conditions while maintaining experimental control [19].

Experimental Protocols

Comprehensive Protocol for Investigating Brain Oscillations During Conceptual Design

Experimental Design and Task Paradigm

This protocol outlines a standardized approach for investigating theta, alpha, and beta oscillations during conceptual design tasks, adapted from established EEG methodologies [18] and contemporary research practices [5]. The experimental paradigm consists of three primary conditions:

- Divergent Thinking Condition: Participants generate multiple solutions to open-ended design problems while EEG is recorded. This condition is expected to engage theta and alpha oscillations, which have been associated with ideation and remote association.

- Convergent Thinking Condition: Participants evaluate and select the most promising design concepts. This condition is expected to engage beta oscillations related to focused attention and decision-making.

- Resting State Baseline: Participants maintain quiet wakefulness with eyes open and closed to establish individual baselines for oscillatory activity.

Each condition should last long enough (at least 3 minutes) for stable oscillatory patterns to emerge. It should also be repeated across multiple trials to ensure statistical reliability. Task instructions should be standardized across participants, and design stimuli should be controlled for complexity and domain relevance.

EEG Data Acquisition Parameters

- Equipment: 64-channel Ag/AgCl electrode system with Waveguard cap or equivalent [19]

- Amplifier Settings: Recording at 1024 Hz sampling rate with average reference [19]

- Impedance Management: Maintain electrode impedances below 10 kΩ throughout recording [19]

- Environmental Controls: Electrically shielded room, minimized ambient lighting, comfortable seating

- Synchronization: Use LabStreamingLayer (LSL) for synchronizing EEG with task events [19]

Data Preprocessing Pipeline

Table 2: Recommended Preprocessing Steps for Oscillatory Analysis

| Processing Step | Recommended Parameters | Rationale | Impact on Decoding Performance |
|---|---|---|---|
| High-Pass Filtering | 0.5-1.0 Hz cutoff [5] | Removes slow drifts while preserving oscillatory content | Higher cutoffs increase decoding performance but risk removing genuine neural signals |
| Low-Pass Filtering | 30-40 Hz cutoff [5] | Eliminates high-frequency noise while preserving frequency bands of interest | Lower cutoffs increase performance for time-resolved decoding |
| Artifact Correction | ICA for ocular artifacts; AUTOREJECT for muscle and channel artifacts [5] | Removes non-neural biological signals | Generally decreases decoding performance but improves interpretability |
| Referencing | Average reference [19] | Mitigates impact of reference electrode location | Effect varies by experiment; minimal overall impact |
| Baseline Correction | -200 to 0 ms pre-stimulus for ERPs; entire resting state for oscillations | Removes DC offsets | Longer baseline windows increase decoding performance |
| Detrending | Linear detrending [5] | Removes linear drifts within trials | Consistently improves decoding performance |
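As a rough illustration of the detrending row, the NumPy sketch below removes a least-squares linear trend from every channel of every epoch. It assumes epochs are held in an array of shape (n_epochs, n_channels, n_times); `detrend_epochs` is a hypothetical helper name, not part of any toolbox. If SciPy is available, `scipy.signal.detrend` with `type="linear"` performs the same per-series operation.

```python
import numpy as np


def detrend_epochs(epochs: np.ndarray) -> np.ndarray:
    """Remove a least-squares linear trend (offset + slope) from each channel of each epoch.

    epochs: array of shape (n_epochs, n_channels, n_times).
    """
    epochs = np.asarray(epochs, dtype=float)
    n_times = epochs.shape[-1]
    t = np.arange(n_times, dtype=float)
    # Design matrix with an intercept column and a linear-time column: (n_times, 2)
    design = np.column_stack([np.ones(n_times), t])
    # Treat every (epoch, channel) pair as one column so a single lstsq call fits all trends
    series = epochs.reshape(-1, n_times).T  # (n_times, n_series)
    coefs, *_ = np.linalg.lstsq(design, series, rcond=None)  # (2, n_series)
    residual = series - design @ coefs
    return residual.T.reshape(epochs.shape)
```

Because the fit includes an intercept, linear detrending also removes each trial's mean, so it overlaps with (but does not replace) a baseline correction anchored to a specific pre-stimulus window.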

Advanced Analytical Approaches

Time-Frequency Analysis

For analyzing event-related changes in oscillatory power during design tasks:

- Epoch data around critical design events (e.g., insight moments, design decisions)

- Compute time-frequency representations using Morlet wavelets or similar methods

- Extract power in theta (4-8 Hz), alpha (8-12 Hz), and beta (12-30 Hz) bands

- Normalize power using baseline periods (-500 to -100 ms pre-stimulus)

- Statistical analysis using cluster-based permutation tests to identify significant oscillatory modulations
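The wavelet and baseline steps above can be sketched with NumPy alone. This is a minimal, single-channel illustration rather than a production pipeline: the helper names (`morlet_power`, `baseline_db`, `band_average`), the 7-cycle wavelet width, and the decibel normalization are assumptions chosen for clarity, and toolboxes such as MNE-Python or FieldTrip provide tested implementations.

```python
import numpy as np


def morlet_power(signal, sfreq, freqs, n_cycles=7.0):
    """Power (n_freqs, n_times) of a 1-D signal via complex Morlet wavelet convolution.

    The wavelet is scaled so that a sinusoid of amplitude A at the wavelet's
    centre frequency yields power of roughly A**2 away from the edges.
    """
    signal = np.asarray(signal, dtype=float)
    power = np.empty((len(freqs), signal.size))
    for i, f in enumerate(freqs):
        sigma_t = n_cycles / (2.0 * np.pi * f)  # SD of the Gaussian envelope in seconds
        half = int(np.ceil(3.5 * sigma_t * sfreq))
        if 2 * half + 1 > signal.size:
            raise ValueError(f"signal too short for a {n_cycles}-cycle wavelet at {f} Hz")
        t = np.arange(-half, half + 1) / sfreq  # symmetric time axis centred on 0
        envelope = np.exp(-t**2 / (2.0 * sigma_t**2))
        wavelet = np.exp(2j * np.pi * f * t) * envelope / (envelope.sum() / 2.0)
        coef = np.convolve(signal, wavelet, mode="same")
        power[i] = np.abs(coef) ** 2
    return power


def baseline_db(power, times, window=(-0.5, -0.1)):
    """Express power in decibels relative to the mean power in a pre-stimulus window."""
    mask = (times >= window[0]) & (times <= window[1])
    base = power[:, mask].mean(axis=1, keepdims=True)
    return 10.0 * np.log10(power / base)


def band_average(tfr, freqs, band):
    """Average a time-frequency map over the frequencies inside [low, high)."""
    freqs = np.asarray(freqs)
    sel = (freqs >= band[0]) & (freqs < band[1])
    return tfr[sel].mean(axis=0)
```

Applying `band_average` to the baseline-normalized map with (4, 8), (8, 12), or (12, 30) Hz gives the theta, alpha, or beta time course that would then enter the cluster-based permutation test.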

Functional Connectivity Analysis

To investigate network interactions between brain regions during creative cognition:

- Compute phase-based connectivity metrics (phase-locking value, weighted phase lag index)

- Focus on fronto-parietal and default mode networks given their relevance to creative cognition

- Compare connectivity patterns between different design task conditions

- Relate connectivity measures to behavioral metrics of creativity (originality, appropriateness)
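The two phase-based metrics named above can be written compactly from the analytic signal. The sketch below assumes both channels have already been band-pass filtered to the band of interest and are arranged as (n_trials, n_times); the analytic signal is built with an FFT, mirroring what `scipy.signal.hilbert` does, and the function names are hypothetical. PLV is the length of the mean phase-difference vector across trials; wPLI weights each trial's phase lag by the magnitude of the imaginary cross-spectrum, which makes it less sensitive to zero-lag volume conduction.

```python
import numpy as np


def analytic_signal(x):
    """FFT-based analytic signal along the last axis (same construction as scipy.signal.hilbert)."""
    x = np.asarray(x, dtype=float)
    n = x.shape[-1]
    h = np.zeros(n)
    h[0] = 1.0
    if n % 2 == 0:
        h[n // 2] = 1.0
        h[1:n // 2] = 2.0
    else:
        h[1:(n + 1) // 2] = 2.0
    return np.fft.ifft(np.fft.fft(x, axis=-1) * h, axis=-1)


def plv_wpli(x, y):
    """Phase-locking value and weighted phase lag index across trials.

    x, y: band-pass filtered signals from two channels, shape (n_trials, n_times).
    Returns two arrays of shape (n_times,).
    """
    cross = analytic_signal(x) * np.conj(analytic_signal(y))
    # PLV: length of the mean unit phase-difference vector across trials
    plv = np.abs(np.mean(cross / np.abs(cross), axis=0))
    # wPLI: |mean imaginary cross-spectrum| / mean |imaginary cross-spectrum|
    imag = np.imag(cross)
    num = np.abs(np.mean(imag, axis=0))
    den = np.mean(np.abs(imag), axis=0)
    wpli = np.divide(num, den, out=np.zeros_like(num), where=den > 0)
    return plv, wpli
```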

Diagram 1: Experimental workflow for investigating brain oscillations during conceptual design tasks.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Equipment and Analytical Tools for EEG Research on Creative Cognition

| Category | Item | Specification/Function | Representative Examples |
|---|---|---|---|
| Recording Equipment | EEG Amplifier System | 64+ channels, high sampling rate (≥1024 Hz), low noise | Refa8 (TMSi) amplifier [19] |
| | Electrode Cap | Ag/AgCl electrodes, standard 10/20 positioning | Waveguard cap (ANT Neuro) [19] |
| | Eye Tracking System | Integrated with the head-mounted display (HMD) for naturalistic paradigms | Tobii eye-tracker (HTC Vive Pro Eye) [19] |
| Stimulus Presentation | Virtual Reality System | Immersive environment for ecological design tasks | HTC Vive Pro Eye HMD [19] |
| | Experimental Software | Precise stimulus control and synchronization | Unity3D with LabStreamingLayer [19] |
| Data Analysis | Preprocessing Tools | Automated artifact removal and data quality control | AUTOREJECT package [5] |
| | Feature Extraction | Automated time-series feature generation for machine learning | ROCKET algorithm [20] |
| | Decoding Frameworks | Neural network classifiers for condition prediction | EEGNet [5] |
| Specialized Materials | Electrolyte Gel | Bridges scalp and electrodes to maintain conductivity | Standard EEG electrolyte gels |
| | Abrasive Preparations | Gentle skin exfoliation to reduce impedance | Mild abrasive pastes or prepping solutions |

Diagram 2: Functional roles of theta, alpha, and beta oscillations in creative cognition.

Electroencephalography (EEG) and Event-Related Potentials (ERPs) offer a powerful, millisecond-resolution window into the brain's cognitive processes, making them indispensable for studying the staged, hierarchical nature of conceptual design [21] [22]. Conceptual design involves high-order cognitive functions such as abstract object recognition, semantic processing, and content comprehension, which correspond to the advanced stage of the brain's cognitive processing hierarchy [21]. This document provides detailed Application Notes and Protocols for using EEG/ERP to delineate the cognitive stages of conceptual design, with a specific focus on applications in clinical research and drug development for psychiatry [23].

Research indicates that the brain processes stimuli through sequential stages: a physical attribute processing stage (e.g., processing basic sensory features), an elemental or structural processing stage (e.g., processing composition and melody), and an advanced informational processing stage where perceptual holism and conceptual understanding occur [21]. The following sections provide a structured overview of the ERP components associated with these stages, detailed experimental protocols for their investigation, and advanced analytical techniques for identifying distinct cognitive stages during conceptual design tasks.

Cognitive Stages and Their EEG/ERP Correlates

The brain's cognitive process during conceptual design can be segmented into distinct, hierarchical stages, each characterized by specific ERP components. The table below summarizes the primary cognitive stages, their functions, and accessible ERP biomarkers.

Table 1: Cognitive Processing Stages and Accessible EEG/ERP Biomarkers in Conceptual Design

| Cognitive Stage | Primary Function | ERP Component | Typical Latency (ms) | Key Experimental Paradigm |
|---|---|---|---|---|
| Physical Attribute Processing [21] | Processing fundamental physical attributes of stimuli (e.g., brightness, sound frequency). | Visual C1, P1, N1; auditory N1 [21] | <200 | Passive viewing/listening; simple detection tasks. |
| Elemental/Structural Processing [21] | Processing structural attributes (e.g., image outline, sound rhythm, stimulus structure). | N2, P300 [21] | 200-400 | Oddball paradigms; stimulus categorization. |
| Informational Processing [21] | Abstract object recognition, semantic processing, and content comprehension (perceptual holism). | N400; Information-Related Potentials (IRPs) [21] | >400 | Go/Nogo with informational attributes; semantic anomaly tasks. |

Experimental Protocols for Investigating Conceptual Design

This section outlines standardized protocols for acquiring high-quality EEG/ERP data pertinent to conceptual design research. Adherence to these protocols is critical for ensuring reliability and reproducibility, especially in multi-site clinical trials [24].

Protocol 1: Visual Information Cognition Experiment (VICE)

This protocol is designed to probe the informational processing stage using visually mediated conceptual design [21].

- Objective: To isolate and measure neural correlates of abstract object recognition and perceptual holism during a visual conceptual design task.

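- Stimuli: Meaningful versus meaningless visual forms, e.g., sector-derived positive polygons versus random shapes [21].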

- Paradigm: A variation of the Go/Nogo paradigm where subjects respond to both Go and Nogo stimuli to maintain consistent task engagement and presentation probability [21].

- Procedure:

- Setup: Participants are seated in a sound-attenuated, electrically shielded room. EEG is recorded using a high-density (e.g., 128-channel) system.

- Task: Stimuli are presented in a randomized order. Each trial consists of a fixation cross, followed by a stimulus presentation (e.g., 500 ms), and an inter-trial interval (e.g., 1000-1500 ms).

- Subject Task: Participants are instructed to press a button for all stimuli while internally categorizing them as "meaningful" or "meaningless."

- Data Acquisition: Continuous EEG is recorded at a sampling rate ≥500 Hz with a bandpass filter of 0.1-100 Hz. Electrooculogram (EOG) is simultaneously recorded for artifact correction [21] [25].

- Data Analysis:

- ERP Extraction: EEG data are segmented into epochs time-locked to stimulus onset (-200 to 800 ms). Epochs are baseline-corrected and averaged separately for Go and Nogo conditions.

- IRP Identification: The difference wave (Go minus Nogo) is computed to isolate potentials related to informational attribute processing, particularly positive and negative components occurring after 400 ms [21].

- Single-Trial Decoding: Apply machine learning methods like Common Spatial-Spectral Pattern (CSSP) to decode cognitive states from single-trial EEG [21].
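The ERP extraction and IRP difference-wave steps can be sketched as follows, assuming continuous data in an array of shape (n_channels, n_samples) and stimulus onsets given as sample indices. The helper names are hypothetical, and no filtering or artifact handling is included.

```python
import numpy as np


def extract_epochs(data, sfreq, onsets, tmin=-0.2, tmax=0.8):
    """Cut epochs (n_epochs, n_channels, n_times) from continuous data (n_channels, n_samples)."""
    start = int(round(tmin * sfreq))
    stop = int(round(tmax * sfreq))
    for onset in onsets:
        if onset + start < 0 or onset + stop > data.shape[1]:
            raise ValueError(f"epoch around sample {onset} runs past the recording")
    epochs = np.stack([data[:, onset + start:onset + stop] for onset in onsets])
    times = np.arange(start, stop) / sfreq
    return epochs, times


def baseline_correct(epochs, times, window=(-0.2, 0.0)):
    """Subtract the mean of the pre-stimulus window from every channel of every epoch."""
    mask = (times >= window[0]) & (times < window[1])
    return epochs - epochs[..., mask].mean(axis=-1, keepdims=True)


def go_nogo_difference(data, sfreq, go_onsets, nogo_onsets):
    """Average Go and Nogo epochs separately, then return the Go-minus-Nogo difference wave."""
    go, times = extract_epochs(data, sfreq, go_onsets)
    nogo, _ = extract_epochs(data, sfreq, nogo_onsets)
    go_erp = baseline_correct(go, times).mean(axis=0)
    nogo_erp = baseline_correct(nogo, times).mean(axis=0)
    return go_erp - nogo_erp, times
```

Components after 400 ms in the returned difference wave are the candidates for the informational-attribute potentials described above.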

Protocol 2: Active Auditory Oddball Paradigm

This standardized protocol is highly validated for assessing attentional and cognitive processing stages, including the P300, and is suitable for multi-site trials [22] [24].

- Objective: To elicit and measure the P300 component related to context updating and the N2 component related to stimulus classification, both relevant to the structural and decision stages of conceptual design.

- Stimuli:

- Standard Stimuli: Frequent, regularly occurring tones (e.g., 1000 Hz, 80% probability).

- Target Stimuli: Infrequent, "oddball" tones (e.g., 2000 Hz, 20% probability) to which participants must respond.

- Procedure:

- Participants listen to a series of tones and press a button as quickly and accurately as possible only upon hearing a target tone.

- A block typically consists of 250-300 tones with a stimulus duration of 50-100 ms and a variable inter-stimulus interval (e.g., 1000-1500 ms) [24].

- Data Analysis:

Table 2: Key Research Reagent Solutions for EEG/ERP Experiments

| Category | Item | Specification / Example | Primary Function in Research |
|---|---|---|---|
| Hardware [25] [24] | EEG Acquisition System | 128-channel Geodesic HydroCel System (EGI) [25] | High-density recording of scalp electrical activity. |
| | Electrode Cap | Geodesic Sensor Net | Standardized sensor placement across participants. |
| Software [24] | Stimulus Presentation | MATLAB with Psychtoolbox, E-Prime | Precise control and timing of experimental paradigms. |
| | EEG Processing | EEGLAB, Automagic Pipelines [24] | Pre-processing, artifact removal, and ERP analysis. |
| Analytical Tools [21] [26] | Machine Learning Toolbox | CSSP, MVPA [21] [26] | Single-trial decoding of cognitive states. |
| | Stage Identification | HsMM-EEG Toolbox [26] | Identifying number, duration, and sequence of cognitive stages. |
| Experimental Materials [21] | Visual Stimuli | Sector-derived positive polygons vs. random shapes [21] | Probing informational attributes and perceptual holism. |
| | Auditory Stimuli | Grammar-conforming vs. random digit sequences [21] | Probing semantic and syntactic processing. |

Advanced Analysis: Identifying Cognitive Stages with HsMM-EEG

Beyond traditional ERP analysis, Hidden semi-Markov Model EEG (HsMM-EEG) provides a powerful data-driven method for identifying the precise number, duration, and sequence of unobservable cognitive processing stages during a conceptual design trial [26].

- Principle: HsMM-EEG models the EEG signal as a sequence of hidden states (processing stages), each characterized by a distinct, constant "neuronal signature" (pattern of brain activity) and a variable duration [26].

- Workflow:

- Model Fitting: HsMMs with different numbers of states are fitted to the single-trial EEG data from the entire trial period (from stimulus onset to response).

- Model Selection: The optimal number of states (stages) is identified using leave-one-out cross-validation (LOOCV) to compare model likelihoods.

- Stage Interpretation: The function of each identified stage is inferred by examining how its duration is affected by experimental manipulations (e.g., meaningful vs. meaningless stimuli) and by correlating it with outputs from cognitive models [26].

HsMM-EEG Analysis Workflow

Visualization of Neural Pathways in Perceptual Holism

Brain network analysis reveals that advanced informational processing, or perceptual holism, shares consistent neural pathways across visual and auditory modalities [21]. The following diagram illustrates this unified network for conceptual integration.

Unified Pathway for Conceptual Processing

Electroencephalogram (EEG) and Event-Related Potentials (ERP) provide a non-invasive window into the neural correlates of information processing during conceptual design tasks. These measures offer millisecond temporal resolution, enabling researchers to capture the rapid, dynamic cognitive processes—such as attention, working memory, and mental effort—that underlie creative and analytical thinking [27]. Within the framework of information processing models, the physical and structural attributes of brain signals, like spectral power and functional connectivity, give rise to informational attributes that can be decoded to infer cognitive states like mental workload [27]. This is particularly relevant for conceptual design, a domain characterized by complex problem-solving and fluctuating cognitive demands. This document outlines application notes and experimental protocols for utilizing EEG/ERP in this context, tailored for researchers and drug development professionals investigating neurocognitive function.

Core Neurophysiological Correlates of Information Processing

EEG signals are decomposed into oscillatory bands, each associated with distinct cognitive functions. ERPs, particularly the P300 component, serve as direct markers of cognitive processes such as attention and decision-making. The table below summarizes the key EEG and ERP features relevant to conceptual design research.

Table 1: Key Neurophysiological Correlates of Information Processing

| Measure | Frequency / Component | Cognitive Correlate | Typical Topography | Change with Increased Load |
|---|---|---|---|---|
| Frontal Theta | 4-7 Hz | Mental effort, cognitive control [27] | Frontal-midline | Increase [27] |
| Parietal Alpha | 8-12 Hz | Idling of cortical resources, inhibition of task-irrelevant areas [27] | Parietal-occipital | Decrease (desynchronization) [27] |
| Beta | 16-31 Hz | Active concentration and focused attention [28] | Sensorimotor cortex | Context-dependent |
| Gamma | >31 Hz | High-level information binding and sensory processing [28] | Distributed | Context-dependent |
| P300 ERP | Positive peak ~300 ms | Attention allocation, context updating, decision-making [29] [30] | Centro-parietal | Decreased amplitude, prolonged latency [30] |

The P300 component is a validated neurophysiological marker for cognitive dysfunction. Studies in clinical populations, such as individuals with epilepsy, show that a reduced P300 amplitude and prolonged P300 latency are correlated with impairments in attention and working memory, as confirmed by neuropsychological tests like the Montreal Cognitive Assessment (MoCA) and digit span [30]. This relationship underscores its utility as an objective biomarker for assessing the cognitive impact of neuroactive compounds or pathologies.

Experimental Protocols for EEG/ERP in Conceptual Design

This section provides a detailed methodology for a classic paradigm adapted to probe the cognitive demands of conceptual design.

Application Note: P300 Oddball Paradigm for Assessing Attentional Resource Allocation

Objective: To utilize the auditory P300 oddball paradigm as a tool for evaluating attention and working memory in healthy participants or patients, providing a baseline measure of cognitive function that can be perturbed by pharmacological interventions or task demands [29] [30].

Background: The P300 is evoked when a participant detects an infrequent "target" stimulus within a stream of standard stimuli. Its amplitude is thought to reflect the allocation of attentional resources, while its latency indexes stimulus classification speed [30]. In drug development, this paradigm can objectively quantify a compound's impact on cognitive processing.

Detailed Experimental Protocol

I. Participant Preparation

- Screening: Recruit participants based on study criteria (e.g., age, health status, design expertise). Obtain informed consent.

- Setup: Use a shielded room. Measure and adjust electrode impedances to below 10 kΩ using conductive gel to ensure high-quality signal acquisition [31].

II. Stimuli and Task Design

- Stimuli: Two auditory tones delivered via headphones (e.g., 1000 Hz standard tone, 2000 Hz target tone).

- Paradigm: A block of 300 trials with a 15% probability for the target tone (45 targets) and 85% for the standard tone (255 standards).

- Procedure:

- Instruct participants to press a button as quickly and accurately as possible upon hearing the target tone and to ignore the standard tone.

- Present tones in a random order with a fixed stimulus duration (e.g., 100 ms) and a variable inter-stimulus interval (e.g., 1.5-2 s) to reduce temporal anticipation and habituation.

- Include practice trials before the main experiment.
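A block with the exact target count described above (45 of 300) and jittered inter-stimulus intervals can be generated as below. This is a sketch under stated assumptions: the function name is hypothetical, the order is fully random (no constraint on consecutive targets), and the ISI is drawn from a uniform distribution over 1.5-2 s.

```python
import numpy as np


def make_oddball_block(n_trials=300, p_target=0.15, standard_hz=1000, target_hz=2000,
                       isi_range=(1.5, 2.0), seed=None):
    """Return tone frequencies (Hz) and jittered inter-stimulus intervals (s) for one block.

    The target count is fixed (e.g. 45 of 300) and only the order is randomised.
    """
    rng = np.random.default_rng(seed)
    n_target = int(round(n_trials * p_target))
    tones = np.array([target_hz] * n_target + [standard_hz] * (n_trials - n_target))
    rng.shuffle(tones)  # random order, exact proportions
    isis = rng.uniform(isi_range[0], isi_range[1], size=n_trials)  # uniform on [1.5, 2.0) s
    return tones, isis
```

Fixing the count rather than sampling each trial independently keeps the number of target epochs identical across participants, which simplifies comparisons of averaged P300 amplitudes.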

III. Data Acquisition

- EEG System: Continuous EEG recording from 57+ channels based on the international 10-20 system [31].

- Parameters: Sampling rate ≥ 1000 Hz, online band-pass filter (e.g., 0.1-100 Hz), and appropriate referencing (e.g., linked mastoids or average reference) [31].

- Trigger Synchronization: Send precise event markers to the EEG recording system at the onset of each stimulus to enable offline epoch extraction [31].

IV. Data Preprocessing and Analysis

- Filtering: Apply a zero-phase band-pass filter (e.g., 0.1-30 Hz) and a notch filter (50/60 Hz).

- Epoching: Segment data from -200 ms pre-stimulus to 800 ms post-stimulus around each stimulus event.

- Baseline Correction: Correct each epoch using the pre-stimulus interval.

- Artifact Rejection: Automatically reject epochs containing amplitudes exceeding ±100 µV and manually inspect for residual artifacts (e.g., eye blinks, muscle activity).

- Averaging: Separate and average epochs for target and standard conditions to derive the ERP waveforms.

- Quantification: Automatically detect P300 component at the Pz electrode: measure peak amplitude (µV) and latency (ms) within a 250-500 ms post-stimulus window.
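The rejection, averaging, and quantification steps can be sketched in NumPy as follows. It assumes target epochs are already filtered, baseline-corrected, and expressed in microvolts with shape (n_epochs, n_channels, n_times), and that the caller knows which channel index corresponds to Pz; the helper names are hypothetical. Because single-point peak measures are sensitive to noise, some groups report the mean amplitude over the same window instead.

```python
import numpy as np


def reject_epochs(epochs, threshold_uv=100.0):
    """Drop epochs in which any channel exceeds +/- threshold_uv microvolts."""
    keep = np.all(np.abs(epochs) <= threshold_uv, axis=(1, 2))
    return epochs[keep], keep


def quantify_p300(target_epochs, times, pz_index, threshold_uv=100.0, window=(0.25, 0.5)):
    """Average clean target epochs; return P300 peak amplitude (uV), latency (ms), epochs kept."""
    clean, keep = reject_epochs(target_epochs, threshold_uv)
    if clean.shape[0] == 0:
        raise ValueError("all epochs were rejected")
    erp_pz = clean.mean(axis=0)[pz_index]
    mask = (times >= window[0]) & (times <= window[1])
    peak = int(np.argmax(erp_pz[mask]))  # most positive point in the search window
    return float(erp_pz[mask][peak]), float(times[mask][peak] * 1000.0), int(keep.sum())
```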

The workflow for this protocol is standardized and can be visualized as follows:

The Scientist's Toolkit: Essential Research Reagents and Materials

A successful EEG/ERP experiment relies on a suite of specialized hardware and software. The following table catalogs the key components.

Table 2: Essential Research Reagents and Materials for EEG/ERP Studies

| Item Category | Specific Examples | Function & Application Notes |
|---|---|---|
| EEG Amplifier & Cap | Neuracle Neusen series [31], EMOTIV EPOC X [8], Muse S [28] | Function: Records electrical brain activity. Notes: Research-grade systems (e.g., Neuracle) offer high channel counts and superior signal quality for validation studies. Consumer-grade devices (e.g., Muse) offer accessibility for pilot studies. |
| Conductive Gel | Electro-Gel, Abralyt HiCl | Function: Ensures low impedance between scalp and electrodes. Notes: Essential for high-fidelity ERP studies. Saline-based solutions are used in quick-setup systems. |
| Stimulus Presentation Software | PsychoPy [8], Presentation, E-Prime | Function: Implements the experimental paradigm and delivers visual/auditory stimuli. Notes: Must support precise timing and synchronization with the EEG amplifier via trigger output. |
| ERP Analysis Software | EEGLAB, ERPLAB, MNE-Python, BrainVision Analyzer | Function: Processes raw EEG data (filtering, epoching, artifact rejection, and ERP averaging). Notes: Open-source tools (e.g., EEGLAB) are highly customizable; commercial software offers streamlined workflows. |
| Neuropsychological Batteries | Montreal Cognitive Assessment (MoCA) [30], Digit Span Test [30], Frontal Assessment Battery (FAB) [30], NASA-TLX [27] | Function: Provides subjective and behavioral measures of cognitive function and mental workload. Notes: Used to validate and correlate with objective EEG/ERP metrics. |

Integrated Workflow: From Data Acquisition to Informational Attributes

The journey from raw brain signals to meaningful cognitive insights involves a structured transformation of data attributes. The following diagram illustrates this integrated information processing pipeline, from physical signal acquisition to the final interpretation of informational attributes.

This workflow is operationalized in the following steps:

From Physical to Structural Attributes: The raw, microvolt-scale EEG voltage is first preprocessed to remove noise [28]. Feature extraction then transforms this raw signal into quantifiable structural attributes. This includes calculating spectral power in theta, alpha, beta, and gamma bands [27], or isolating ERP components like the P300 [31].

From Structural to Informational Attributes: Machine learning (ML) and deep learning (DL) models are employed to decode these structural attributes into meaningful informational states. For instance, a support vector machine (SVM) or convolutional neural network (CNN) can be trained to classify patterns of frontal theta and parietal alpha power into discrete levels of mental workload (e.g., low, medium, high) [27]. This step moves from correlation (a change in theta power) to inference (a state of high cognitive load).

Advanced Application: Mental Workload Assessment During Complex Task Performance

For research on conceptual design, which often involves multitasking, assessing mental workload (MWL) is crucial. EEG provides a more direct and continuous measure of MWL compared to subjective questionnaires like the NASA-TLX [27].

Protocol Notes:

- Task Design: Induce varying MWL levels using n-back tasks, mental arithmetic, or simulated design tasks with varying complexity.

- EEG Correlates: Focus on established biomarkers: increased frontal theta power and decreased parietal alpha power [27]. The theta/alpha power ratio has also shown high correlation with objective task load [27].

- Analytical Approach: Use machine learning models for classification. Note that models trained on single-tasking data often see a significant drop in accuracy when applied to multitasking conditions, highlighting the need for ecologically valid task designs [27].
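The theta/alpha ratio can be computed from a simple periodogram, as in the sketch below. The band limits follow those listed earlier (frontal theta 4-7 Hz, parietal alpha 8-12 Hz); pairing frontal theta with parietal alpha, the Hann-windowed periodogram, and the function names are assumptions, and Welch averaging over segments would usually be preferred for noisy recordings.

```python
import numpy as np


def band_power(x, sfreq, band):
    """Power of a 1-D signal within [low, high] Hz from a Hann-windowed periodogram.

    One-sided bins are not doubled; the constant factor cancels in band-power ratios.
    """
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    window = np.hanning(x.size)
    psd = np.abs(np.fft.rfft(x * window)) ** 2 / (sfreq * np.sum(window ** 2))
    freqs = np.fft.rfftfreq(x.size, d=1.0 / sfreq)
    sel = (freqs >= band[0]) & (freqs <= band[1])
    return float(psd[sel].sum() * (freqs[1] - freqs[0]))


def theta_alpha_ratio(frontal, parietal, sfreq, theta=(4.0, 7.0), alpha=(8.0, 12.0)):
    """Frontal theta power divided by parietal alpha power (expected to rise with workload)."""
    return band_power(frontal, sfreq, theta) / band_power(parietal, sfreq, alpha)
```

Computed per sliding window, this ratio gives a continuous workload index that can serve either as a single feature or alongside the raw band powers as classifier inputs.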

From Lab to Protocol: Designing and Executing EEG/ERP Studies for Design Tasks

Electroencephalography (EEG) and Event-Related Potentials (ERPs) provide a powerful, non-invasive window into brain dynamics with millisecond temporal resolution, making them exceptionally suited for studying the rapid cognitive processes inherent to conceptual design [32] [33]. This application note details the core experimental paradigms—the Go/No-Go and Oddball tasks—and introduces innovative modifications tailored for design research. We provide structured protocols, quantitative benchmarks, and visualization tools to enable researchers and drug development professionals to reliably deploy these methods for investigating the neural underpinnings of creativity, inhibition, and novelty detection. The high temporal resolution of EEG allows for the monitoring of brain activity on the same time scale as cognitive processes occur, which is critical for understanding fast cognitive events during design thinking [33].

The Go/No-Go Paradigm: Probing Response Inhibition

The Go/No-Go task is a canonical paradigm for assessing response inhibition, a core executive function crucial for suppressing pre-potent responses and exercising cognitive control [34] [35]. In design research, this translates to the ability to inhibit dominant but uncreative solutions and explore novel alternatives. The paradigm typically involves two stimuli: frequent "Go" stimuli requiring a motor response, and infrequent "No-Go" stimuli requiring the withholding of that response. The cognitive conflict and subsequent inhibition on No-Go trials elicit characteristic ERP components, primarily the N2 and P3 [35].

Protocol for a Modified Visual Go/No-Go Task

This protocol is adapted for investigating design cognition using directional cues [34].

- Objective: To assess neural correlates of response inhibition during a task requiring spatial judgment.

- Equipment: EEG system with 64+ electrodes, 4-way joystick, display screen, soundproof chamber.

- Stimuli: Green equilateral triangles presented as cues and targets with random orientations.

- Procedure:

- Trial Structure: A central fixation cross is displayed for 1200 ms, followed by a cue triangle. After a 500 ms interval, a target triangle appears.

- Task Instruction: Participants must judge if the cue and target directions match. A match constitutes a "Go" trial, requiring a swift joystick movement in the indicated direction. A mismatch constitutes a "No-Go" trial, requiring no action.

- Block Design: The experiment consists of 8 randomized blocks with different Go/No-Go ratios (e.g., 100%:0%, 75%:25%, 50%:50%, 25%:75%). Participants are informed of the ratio before each block.

- Trials per Block:

- Blocks 1 & 2: 144 Go, 0 No-Go

- Blocks 3 & 4: 108 Go, 36 No-Go

- Blocks 5 & 6: 72 Go, 72 No-Go

- Blocks 7 & 8: 36 Go, 108 No-Go

- Rest: Participants rest between blocks.

- EEG Recording: Record with a 1000 Hz sampling rate, reference to the left mastoid. Include electrooculogram (EOG) electrodes to monitor eye movements [34].

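- Key Dependent Variables: Go reaction time, No-Go error rate, and NoGo-P3 amplitude and latency, each compared across Go/No-Go ratios (Table 1) [34].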
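The block structure above can be generated programmatically. In this sketch the four joystick directions are assumed to double as the possible triangle orientations, a Go trial is one where the target matches the cue, and trial order is shuffled within each block; block order is returned as listed, so any randomization of block order is left to the caller. `build_blocks` and the dictionary keys are hypothetical.

```python
import numpy as np

# (n_go, n_nogo) for blocks 1-8, as listed in the protocol
BLOCK_COUNTS = [(144, 0), (144, 0), (108, 36), (108, 36),
                (72, 72), (72, 72), (36, 108), (36, 108)]
# Assumption: the four joystick directions double as the triangle orientations
DIRECTIONS = np.array(["up", "down", "left", "right"])


def build_blocks(block_counts=BLOCK_COUNTS, seed=None):
    """Return one dict per block with the announced Go ratio and shuffled cue/target trials."""
    rng = np.random.default_rng(seed)
    blocks = []
    for n_go, n_nogo in block_counts:
        is_go = np.array([True] * n_go + [False] * n_nogo)
        rng.shuffle(is_go)  # randomise trial order within the block
        cue = rng.choice(DIRECTIONS, size=is_go.size)
        target = cue.copy()  # Go trials: target matches cue
        for i in np.flatnonzero(~is_go):
            # No-Go trial: target points in any direction other than the cue
            target[i] = rng.choice(DIRECTIONS[DIRECTIONS != cue[i]])
        blocks.append({"go_ratio": n_go / (n_go + n_nogo), "is_go": is_go,
                       "cue": cue, "target": target})
    return blocks
```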

Quantitative Data and Expected Outcomes

Table 1: Typical Behavioral and ERP Effects of Go/No-Go Ratio Manipulation [34]

| Go/No-Go Ratio | Go Reaction Time (ms) | No-Go Error Rate (%) | NoGo-P3 Amplitude (μV) | NoGo-P3 Latency (ms) |
|---|---|---|---|---|
| 100%:0% | Baseline (Fastest) | N/A | N/A | N/A |
| 75%:25% | Increases | Highest | Larger | Longer |
| 50%:50% | Moderate | Moderate | Intermediate | Intermediate |
| 25%:75% | Longest | Lowest | Reduced | Shortened |

The pattern in Table 1 indicates that as the proportion of Go trials decreases, the brain's predictive mechanisms adjust: response inhibition becomes easier (lower error rates) and requires less allocation of cognitive resources (reduced NoGo-P3 amplitude) [34].

Experimental Workflow

The following diagram illustrates the sequence and logic of a single trial in the visual Go/No-Go task.

The Oddball Paradigm: Detecting Novelty and Significance

The Oddball paradigm is a fundamental tool for studying attention, working memory, and the brain's response to novelty and deviance [36] [37]. In a typical auditory oddball task, a sequence of stimuli is presented where a frequent "standard" stimulus (e.g., 750 Hz tone) is interspersed with an infrequent "deviant" or "target" stimulus (e.g., 810 Hz tone). The participant's task is to detect the target. This paradigm reliably elicits the P300 (or P3) ERP component, a positive deflection occurring around 300-600 ms post-stimulus, which is associated with context updating and attention allocation [38] [37]. The P3 is often subdivided into the fronto-centrally maximal P3a, linked to novelty processing, and the parietally-maximal P3b, linked to target detection [37].

Protocol for an Active Auditory Oddball Task

- Objective: To elicit and measure the P300 component in response to target stimuli.

- Equipment: EEG system, equipment for binaural delivery of auditory stimuli (e.g., electrostatic headphones).

- Stimuli:

- Standard Stimulus: 750 Hz tone, 200 ms duration. (Probability: ~80%)

- Deviant/Target Stimulus: Higher frequency tone (e.g., 770-810 Hz, individually calibrated), 200 ms duration. (Probability: ~20%) [38].

- Procedure:

- Calibration (Optional): Prior to the main task, determine each participant's frequency discrimination threshold to set a deviant tone that is consistently detectable, ensuring task difficulty is controlled [38].

- Task Instruction: Participants are instructed to listen to the sequence of tones and press a button or mentally count the number of target (deviant) tones they hear.

- Stimulus Presentation: Approximately 500 stimuli are presented in a pseudo-random sequence with an inter-stimulus interval of around 1 second. The presentation of two consecutive deviant tones should be avoided.

- Task Design: The paradigm can be implemented in active (requiring a behavioral response) or passive (subject watches a movie) modes. The active version is required to elicit a robust P3b component [37].

- EEG Recording: Record with a sampling rate ≥ 250 Hz. Use a band-pass filter of 0.1-30 Hz during preprocessing. Independent Component Analysis (ICA) is recommended for artifact removal [39].

- Key Dependent Variables:

- Behavioral: Target detection accuracy, reaction time.

- ERP: Amplitude and latency of the P3b component at parietal electrode sites (e.g., Pz).
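One way to satisfy the constraint that two deviants never follow each other is to place each deviant in a distinct gap between standards, as sketched below. The function name, the 0/1 coding (1 = deviant), and the rounding of the deviant count are assumptions; tone frequencies and the ~1 s inter-stimulus interval would be attached afterwards.

```python
import numpy as np


def oddball_sequence(n_total=500, p_deviant=0.2, seed=None):
    """Pseudo-random 0/1 sequence (1 = deviant) in which no two deviants are adjacent.

    Deviants are dropped into distinct 'gaps' around the standards, so every
    pair of deviants is separated by at least one standard.
    """
    rng = np.random.default_rng(seed)
    n_dev = int(round(n_total * p_deviant))
    n_std = n_total - n_dev
    if n_dev > n_std + 1:
        raise ValueError("too many deviants to avoid consecutive repeats")
    chosen_gaps = set(rng.choice(n_std + 1, size=n_dev, replace=False).tolist())
    seq = []
    for gap in range(n_std + 1):
        if gap in chosen_gaps:
            seq.append(1)  # deviant placed in this gap
        if gap < n_std:
            seq.append(0)  # standard that closes the gap
    return np.array(seq)
```

Sampling gaps without replacement enforces the constraint by construction, avoiding the reject-and-resample loops that become slow when deviants are frequent.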

Quantitative Data and Clinical Utility

Table 2: Characteristic ERP Components in the Oddball Paradigm [38] [37]

| ERP Component | Typical Latency (ms) | Topography | Functional Significance | Clinical Relevance |
|---|---|---|---|---|
| Mismatch Negativity (MMN) | 150-250 | Frontocentral | Preattentive change detection | Reduced in schizophrenia |
| N2 | 200-350 | Frontocentral | Conflict detection, response inhibition | Altered in ADHD and OCD |
| P3a | 250-280 | Frontal/Central | Involuntary orienting to novelty | Sensitive to cognitive decline |
| P3b | 300-600 | Parietal/Central | Context updating, target evaluation | Latency prolonged in dementia |

The P300, particularly the P3b component, is a well-established biomarker in clinical and drug development research. For example, prolonged P300 latency and reduced amplitude are observed in conditions like Alzheimer's disease and schizophrenia, making it a valuable pharmacodynamic marker for assessing pro-cognitive effects of novel therapeutics [40] [37] [23].

Experimental Workflow

The following diagram outlines the core structure and cognitive processes involved in the oddball paradigm.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Equipment and Software for EEG/ERP Research

| Item Category | Specific Example / Specification | Primary Function |
|---|---|---|
| EEG Amplifier | 64-channel DC amplifier (e.g., Brain Products) [34] | Records electrical brain activity from the scalp with high temporal resolution. |
| Recording Cap | Ag/AgCl electrodes arranged in the International 10-20 system [39] | Holds electrodes in standardized positions on the participant's head. |
| Electrode Gel | Electrolytic gel | Ensures stable electrical conductivity between scalp and electrodes. Impedance should be kept < 5 kΩ [39]. |
| Stimulus Presentation Software | MATLAB with Psychtoolbox, Presentation, E-Prime | Precisely controls the timing and presentation of visual and auditory stimuli. |
| Response Device | 4-way joystick, response box, or computer mouse [34] | Records participant's behavioral responses (RT, accuracy). |
| EEG Preprocessing Toolbox | EEGLAB, BrainVision Analyzer | Provides a suite of tools for filtering, artifact removal (e.g., ICA), and epoching continuous EEG data. |
| ERP Analysis Toolbox | ERPLAB, FieldTrip | Facilitates the averaging of epochs, baseline correction, and statistical analysis of ERP components. |

Advanced Applications and Novel Task Design

Moving beyond standard paradigms, the field is embracing novel designs and analytical approaches to better capture the neural dynamics of complex cognition.

- Integrated Visual-Auditory Tests: Tests like the Integrated Visual and Auditory Continuous Performance Test (IVA-2) simultaneously assess multiple attention types (sustained, selective, divided) while EEG is recorded. Machine learning models can then be trained to estimate attention volumes from EEG-derived features with high accuracy (>80%) [39].

- EEG Source Imaging and Connectivity: Advanced analysis techniques move beyond scalp-level sensors to estimate the cortical sources of EEG signals. This is combined with functional connectivity analysis (e.g., using phase locking values) to track how large-scale brain networks interact during cognitive tasks [32] [33].

- Spectral Parameterization: The EEG power spectrum contains both periodic (oscillatory) and aperiodic (1/f-like) components. Automated algorithms (e.g., "specparam") can disentangle these, preventing conflation and providing clearer insights into neurodevelopmental changes and neural communication [32].

- Pharmaco-ERP in Drug Development: ERP markers are increasingly used in early-phase clinical trials to de-risk drug development. They serve as functional target engagement biomarkers, demonstrating that a drug candidate modulates a specific brain function (e.g., P300 for cognition) at a given dose, often before clinical effects are observable [40] [23].
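To make the periodic/aperiodic distinction concrete, the sketch below estimates an aperiodic offset and exponent by fitting a straight line to log power versus log frequency while excluding an assumed alpha range. This is a deliberately simplified stand-in, not the specparam algorithm, which fits the aperiodic component jointly with Gaussian peaks; the fit range and excluded band are illustrative assumptions, and `freqs` must be strictly positive.

```python
import numpy as np


def aperiodic_fit(freqs, power, fit_range=(2.0, 40.0), exclude=((7.0, 14.0),)):
    """Fit log10(power) = offset - exponent * log10(freq) by least squares.

    Frequency ranges in `exclude` (e.g. an alpha peak) are left out of the fit.
    Returns (offset, exponent, flattened), where `flattened` is the log10 power
    minus the fitted aperiodic line over all input frequencies.
    """
    freqs = np.asarray(freqs, dtype=float)
    log_power = np.log10(np.asarray(power, dtype=float))
    mask = (freqs >= fit_range[0]) & (freqs <= fit_range[1])
    for low, high in exclude:
        mask &= ~((freqs >= low) & (freqs <= high))
    slope, offset = np.polyfit(np.log10(freqs[mask]), log_power[mask], 1)
    flattened = log_power - (offset + slope * np.log10(freqs))
    return offset, -slope, flattened
```

Peaks that remain in `flattened` are oscillatory power above the 1/f background, which is the quantity that band-power measures alone can conflate with shifts in the aperiodic exponent.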

Electroencephalography (EEG) and Event-Related Potential (ERP) measurements are fundamental tools in cognitive neuroscience for studying brain dynamics with millisecond temporal resolution. When investigating conceptual design tasks, which involve complex cognitive processes like creativity, problem-solving, and mental model manipulation, ensuring high-quality data acquisition is paramount. This application note details the essential equipment and setup protocols for EEG/ERP research, focusing specifically on the requirements for studying conceptual design processes. We frame this within a broader thesis on neurocognitive measurements during conceptual design tasks, providing researchers, scientists, and drug development professionals with standardized methodologies for reliable data collection.

The core EEG/ERP acquisition system consists of three primary components: electrode caps for signal sensing, amplifiers for signal conditioning and digitization, and often an electrically shielded environment to minimize noise interference. Each component plays a critical role in the signal chain, and optimal selection is necessary to capture the subtle neural correlates of higher-order cognitive functions involved in conceptual design.

Electrode Caps and Electrode Technologies

Electrode caps house the sensors that make contact with the scalp to measure electrical potentials. The choice of electrode technology represents a trade-off between signal quality, preparation time, and participant comfort [41] [42]. This is particularly relevant in conceptual design studies where participants need to remain comfortable and engaged during extended creative tasks.

Table 1: Comparison of EEG Electrode Types for Conceptual Design Research

| Feature | Gel-Based Electrodes | Water-Based (Semi-Dry) Electrodes | Dry Electrodes |
|---|---|---|---|
| Signal Quality | Very high, gold standard [41] | Good, stable [41] | Lower, prone to artifacts [41] |
| Preparation Time | Long (e.g., ~25 min for 32 ch) [42] | Moderate/Short [41] | Short (<5 min for 32 ch) [42] |
| Stability/Duration | High (up to 12 hours) [42] | Moderate (may need remoistening) [41] | Lower (15-60 min recommended) [42] |
| Participant Comfort | Lower (hair gel, abrasion) [41] | Higher [41] | Variable (can be uncomfortable) [41] |
| Best For | High-fidelity ERP components [42] | Longer studies with quick setup [41] | Rapid screening, short design tasks [42] |

Furthermore, electrodes can be categorized as active or passive. Active electrodes incorporate a miniature amplifier within the electrode itself to perform impedance conversion right at the scalp. This design makes the signal more robust against cable motion and environmental noise, allowing for good data quality even with moderately higher electrode-skin impedances [42]. Passive electrodes lack this circuitry and require very low impedance at the skin contact (~5-10 kΩ) to achieve a high signal-to-noise ratio, typically necessitating skin abrasion and conductive gel [42].

EEG Amplifiers

The amplifier is the core data acquisition unit that captures, filters, and digitizes the microvolt-level analog signals from the electrodes. Key specifications must be carefully considered to ensure the faithful recording of neural signals [43].

Table 2: Key Technical Specifications of Research-Grade EEG Amplifiers

| Parameter | Recommended Specification | Rationale and Application Note |
|---|---|---|
| Sampling Rate | ≥ 256 Hz [43] | Must be more than twice the highest frequency of interest (Nyquist theorem). For full-spectrum analysis including gamma bands (~80 Hz), >160 Hz is required; 256 Hz is a common standard [43]. |
| Bandwidth | DC to >80 Hz [43] | Must cover from slow cortical potentials (DC-0.5 Hz) to gamma oscillations (~80 Hz). AC-coupled amplifiers use a high-pass filter to remove DC offsets [43]. |
| Resolution | ≥ 24 bits [43] | Determines the smallest voltage change detectable. Higher bits provide a greater dynamic range to capture both strong and weak signals simultaneously [43]. |
| Input-Referred Noise | < 1 μVrms [43] | The amplifier's internal noise must stay well below the amplitude of the EEG (typically tens of microvolts; many ERP components are only a few microvolts) to avoid contaminating the signal [43]. |
| CMRR | > 80 dB (at 50/60 Hz); 100-110 dB is common [43] | Critical for rejecting common-mode environmental noise like line power (50/60 Hz). A higher CMRR indicates better noise cancellation [43]. |
| Input Impedance | > 100 MΩ [43] | A high input impedance prevents signal attenuation caused by high electrode-skin impedance, which is especially important when using dry electrodes [43]. |
| Impedance Monitor | Required (pre-setup); during recording is desirable [43] | Allows verification of signal quality before and during recording. Monitoring during recording must use frequencies outside the EEG band to avoid data contamination [43]. |
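Several of the specifications in Table 2 reduce to simple arithmetic. The sketch below checks them under stated assumptions: the 375 mV peak-to-peak input range (±187.5 mV), the 10 mV common-mode interference level, and the 500 kΩ dry-electrode impedance are illustrative values rather than the specifications of any particular amplifier, and the impedance calculation treats the electrode and amplifier input as a purely resistive voltage divider.

```python
def min_sampling_rate_hz(highest_freq_hz):
    """Nyquist bound: the sampling rate must exceed twice the highest frequency of interest."""
    return 2 * highest_freq_hz


def lsb_volts(input_range_volts, bits):
    """Voltage step of one ADC count when `bits` span the full peak-to-peak input range."""
    return input_range_volts / 2 ** bits


def cmrr_residual_volts(common_mode_volts, cmrr_db):
    """Common-mode interference left at the input after rejection by an ideal CMRR (dB)."""
    return common_mode_volts / 10 ** (cmrr_db / 20)


def input_fraction(z_input_ohms, z_electrode_ohms):
    """Fraction of the scalp signal seen by the amplifier (resistive voltage divider)."""
    return z_input_ohms / (z_input_ohms + z_electrode_ohms)


# Illustrative values only; real figures come from the amplifier's data sheet
print(f"Sampling rate for 80 Hz gamma: > {min_sampling_rate_hz(80)} Hz")
print(f"24-bit step over a 375 mV range: {lsb_volts(0.375, 24) * 1e9:.2f} nV")
print(f"10 mV common-mode at 100 dB CMRR: {cmrr_residual_volts(0.010, 100) * 1e6:.2f} uV")
print(f"Signal retained (100 MOhm input, 500 kOhm electrode): {input_fraction(100e6, 500e3):.2%}")
```

At these assumed values, one 24-bit step is in the tens of nanovolts, and a 100 MΩ input loses well under 1% of the signal even behind a 500 kΩ dry electrode. In practice, mismatched electrode impedances convert part of the common-mode signal into a differential one, so real-world rejection is worse than the CMRR figure alone suggests.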

Electrically Shielded Environments

While portable systems with active electrodes can yield valid data in home and community settings [44], a controlled laboratory environment remains the gold standard for data quality. Recording inside an electrically shielded room, such as a Faraday cage, is highly recommended to improve the signal-to-noise ratio (SNR) [42]. The shielding blocks external electromagnetic interference from sources such as power lines, radio broadcasts, and electronic equipment, which is crucial for capturing the clean, low-amplitude signals needed to analyze ERPs evoked during cognitive tasks.

Experimental Protocol for ERP Measurement during Conceptual Design Tasks

This protocol outlines the steps for measuring ERPs during a conceptual design task, adapted from established methodologies [45] [18]. The example task involves presenting participants with a series of problem statements (e.g., "Design a sustainable water transport system") followed by the presentation of potential solution concepts, with EEG recorded throughout.

The diagram below illustrates the end-to-end workflow for an ERP experiment in conceptual design research.

Participant Preparation and Electrode Montage

- Informed Consent: Obtain written informed consent using procedures approved by the local Institutional Review Board (IRB) [44].

- Cap Fitting: Measure the participant's head circumference and select an appropriately sized electrode cap. Position the cap according to the international 10-20 system [46] or its high-density extensions (10-10 or 10-5 systems) for greater spatial resolution [46].

- Electrode Preparation:

- For Gel-Based Electrodes: Abrade the skin gently under each electrode site with a blunt-tipped needle and fill the electrodes with conductive gel. Aim for impedances below 10 kΩ for passive systems or below 25-50 kΩ for active systems [42].

- For Sponge-Based Electrodes: Soak the entire cap in an electrolyte solution (e.g., saline or potassium chloride, KCl) for approximately 10 minutes before application. Target impedances of 60-100 kΩ [42].

- For Dry Electrodes: Ensure the cap is snug and each electrode pin makes firm contact with the scalp. Aim for impedances below 500 kΩ [42].
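Most commercial caps already place electrodes at 10-20 positions, but a quick calculation helps verify that a cap is seated correctly. The hypothetical helper below gives the distance of each midline site from the nasion as 10%, 30%, 50%, 70%, and 90% of the nasion-inion arc, as the 10-20 system defines them; Cz should also sit midway between the left and right preauricular points. The 36 cm arc is an arbitrary example value.

```python
# Midline 10-20 sites expressed as fractions of the nasion-inion arc
MIDLINE_FRACTIONS = {"Fpz": 0.10, "Fz": 0.30, "Cz": 0.50, "Pz": 0.70, "Oz": 0.90}


def midline_positions_cm(nasion_inion_cm):
    """Distance (cm, measured along the scalp) of each midline site from the nasion."""
    return {site: round(frac * nasion_inion_cm, 1)
            for site, frac in MIDLINE_FRACTIONS.items()}


# Example: a measured nasion-inion arc of 36 cm (hypothetical participant)
for site, dist in midline_positions_cm(36.0).items():
    print(f"{site}: {dist} cm from nasion")
```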

Equipment and Amplifier Configuration

- Amplifier Connection: Connect the electrode cap to the EEG amplifier. Ensure the ground and reference electrodes are properly placed.

- Parameter Setting: Configure the amplifier software with parameters suitable for ERP acquisition:

- Sampling Rate: 500 Hz or 1000 Hz is recommended for detailed temporal analysis [47].

- Bandwidth: Record from DC (no high-pass filter) or with a 0.1 Hz high-pass, up to a low-pass of 100 Hz or higher, to capture both slow cortical potentials and high-frequency activity [43].

- Impedance Check: Use the amplifier's built-in impedance monitor to verify all channels have acceptable impedance values before starting the recording [43].
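The impedance targets listed under Electrode Preparation can be turned into a simple pass/fail check on the values reported by the impedance monitor. In the sketch below, the function `channels_to_fix` and the channel readings are hypothetical; the active-gel limit uses the upper end of the 25-50 kΩ range, and the sponge limit uses the upper end of the 60-100 kΩ target.

```python
# Upper impedance limits (kΩ) taken from the electrode-preparation guidelines
IMPEDANCE_LIMITS_KOHM = {
    "passive_gel": 10,
    "active_gel": 50,   # upper end of the 25-50 kΩ range
    "sponge": 100,      # upper end of the 60-100 kΩ target
    "dry": 500,
}


def channels_to_fix(impedances_kohm, electrode_type):
    """Return channels above the limit for this electrode type, worst first."""
    limit = IMPEDANCE_LIMITS_KOHM[electrode_type]
    bad = {ch: z for ch, z in impedances_kohm.items() if z > limit}
    return sorted(bad, key=bad.get, reverse=True)


# Hypothetical readings from a pre-recording impedance check
readings = {"Fz": 12.0, "Cz": 8.5, "Pz": 63.0, "Oz": 140.0, "FCz": 49.9}
print(channels_to_fix(readings, "active_gel"))
```

Sorting worst first lets the experimenter re-prepare the most problematic sites before those close to the threshold.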

Data Acquisition and Task Execution

- Task Programming: Implement the conceptual design task using experimental software (e.g., PsychoPy, Presentation, E-Prime). The task should output precise digital triggers marking the onset of each stimulus (e.g., problem statement, solution concept).

- Trigger Synchronization: Connect the stimulus computer to the EEG amplifier via a digital I/O cable or trigger box to synchronize event markers with the continuous EEG data [47].

- Recording: Instruct the participant to minimize movement and blinking during trial presentations. Record the continuous EEG data while the participant performs the task. Monitor data quality in real-time for excessive noise or artifacts.
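Before programming the task in PsychoPy, Presentation, or E-Prime, it can help to generate the trial sequence and its trigger codes independently of the presentation software. The sketch below assumes a hypothetical coding scheme (10 for a problem statement, 21 and 22 for innovative and conventional solution concepts) and a second, invented design problem; sending the codes to the amplifier is left to the presentation software and trigger interface and is not shown.

```python
import random

# Hypothetical trigger codes; a real study would use the lab's own scheme
TRIGGERS = {"problem": 10, "innovative": 21, "conventional": 22}


def build_trial_list(problems, n_per_condition, seed=0):
    """Return a shuffled, balanced list of problem -> solution-concept trials."""
    trials = []
    for problem in problems:
        for condition in ("innovative", "conventional"):
            for _ in range(n_per_condition):
                trials.append({
                    "problem": problem,
                    "condition": condition,
                    # Code sent at problem onset, then at solution-concept onset
                    "triggers": (TRIGGERS["problem"], TRIGGERS[condition]),
                })
    random.Random(seed).shuffle(trials)  # same order for the same seed
    return trials


trials = build_trial_list(
    ["sustainable water transport system", "modular urban seating"],  # second problem is invented
    n_per_condition=20,
)
n_innovative = sum(t["condition"] == "innovative" for t in trials)
print(len(trials), n_innovative, trials[0]["triggers"])
```

A fixed seed makes the trial order reproducible, so the logged sequence can later be matched against the triggers recorded in the EEG file.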

Data Preprocessing and ERP Analysis

The following workflow details the key steps for transforming raw EEG into analyzable ERP data, a process critical for isolating neural responses related to conceptual design.

- Data Import and Filtering: Import the raw data into a preprocessing environment (e.g., EEGLAB, MNE-Python). Apply a high-pass filter (e.g., 0.1 or 1 Hz) to remove slow drifts and a low-pass filter (e.g., 30 or 40 Hz) to suppress high-frequency muscle and line noise [44].

- Epoching and Baseline Correction: Segment the continuous data into epochs time-locked to the stimulus events (e.g., from 200 ms before to 800 ms after stimulus onset). Apply baseline correction by subtracting the mean amplitude of the pre-stimulus period from the entire epoch.

- Artifact Handling: Identify and reject epochs contaminated by large artifacts (e.g., muscle movement, electrode pops) or use advanced techniques like Independent Component Analysis (ICA) to correct for recurring artifacts like eye blinks [45].

- Averaging: Average the artifact-free epochs separately for each experimental condition (e.g., trials with "innovative" vs. "conventional" solutions) to extract the ERPs. Averaging attenuates random activity that is not time-locked to the stimulus (for uncorrelated noise, the residual amplitude falls roughly with the square root of the number of trials), revealing the consistent neural response to the stimulus.
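The preprocessing steps above can be prototyped outside EEGLAB or MNE-Python to make each operation explicit. The following is a minimal NumPy/SciPy sketch on synthetic single-channel data, assuming amplitudes in microvolts, a 0.1-30 Hz zero-phase Butterworth band-pass, a -200 to 800 ms epoch window, and a 100 µV peak-to-peak rejection threshold (an illustrative value). It uses simple threshold rejection in place of ICA, and it injects a P300-like deflection at 300 ms plus one blink-like artifact so the effect of each step can be checked.

```python
import numpy as np
from scipy.signal import butter, sosfiltfilt


def bandpass(data, fs, low=0.1, high=30.0, order=4):
    """Zero-phase Butterworth band-pass along the last (time) axis."""
    sos = butter(order, [low, high], btype="bandpass", fs=fs, output="sos")
    return sosfiltfilt(sos, data, axis=-1)


def make_epochs(data, fs, onsets, tmin=-0.2, tmax=0.8):
    """Cut (n_channels, n_samples) data into (n_epochs, n_channels, n_times)."""
    start, stop = int(round(tmin * fs)), int(round(tmax * fs))
    return np.stack([data[:, o + start:o + stop] for o in onsets])


def baseline_correct(epochs, fs, tmin=-0.2):
    """Subtract each epoch's mean pre-stimulus amplitude, channel by channel."""
    n_base = int(round(-tmin * fs))
    return epochs - epochs[:, :, :n_base].mean(axis=-1, keepdims=True)


def reject_peak_to_peak(epochs, threshold_uv=100.0):
    """Keep epochs whose peak-to-peak amplitude stays below the threshold on every channel."""
    ptp = epochs.max(axis=-1) - epochs.min(axis=-1)  # (n_epochs, n_channels)
    return epochs[(ptp < threshold_uv).all(axis=1)]


# Synthetic data: one channel, 60 s at 500 Hz, white noise in µV
fs = 500
rng = np.random.default_rng(1)
eeg = rng.normal(0.0, 5.0, size=(1, 60 * fs))
onsets = np.arange(1, 51) * fs  # 50 stimuli, one per second (sample indices)

# Add a 5 µV P300-like peak 300 ms after every onset
t_post = np.arange(int(0.8 * fs)) / fs
p300 = 5.0 * np.exp(-((t_post - 0.3) ** 2) / (2 * 0.05 ** 2))
for o in onsets:
    eeg[0, o:o + t_post.size] += p300
# Add one large blink-like artifact to trial 25 so that it gets rejected
eeg[0, onsets[24]:onsets[24] + t_post.size] += 150.0 * np.exp(-((t_post - 0.4) ** 2) / (2 * 0.05 ** 2))

epochs = baseline_correct(make_epochs(bandpass(eeg, fs), fs, onsets), fs)
clean = reject_peak_to_peak(epochs, threshold_uv=100.0)
erp = clean.mean(axis=0)  # average over trials -> (n_channels, n_times)
times = np.arange(erp.shape[-1]) / fs - 0.2
peak_latency = times[np.argmax(erp[0])]
print(f"kept {len(clean)} of {len(epochs)} epochs; peak at {peak_latency * 1000:.0f} ms")
```

Filtering the continuous recording before epoching, as here, keeps filter edge effects out of the epochs; a per-condition ERP is obtained by applying the same averaging to the subset of clean epochs belonging to each condition.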

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Equipment for EEG/ERP Research on Conceptual Design

| Item | Function/Description | Example Use-Case |
|---|---|---|
| Active Gel Electrode Cap | Provides excellent signal quality with reduced preparation time compared to passive gel systems; ideal for capturing high-fidelity ERPs [42]. | The primary choice for most lab-based studies on conceptual design ERPs where signal quality is critical [42] [44]. |
| High-Performance Amplifier | A research-grade amplifier with high sampling rate (≥500 Hz), high resolution (≥24 bits), and high CMRR (>100 dB) for precise signal acquisition [43] [47]. | Faithfully records the entire spectrum of neural activity, from slow cortical potentials to high-frequency gamma oscillations, during complex thinking. |
| Conductive Electrolyte Gel | A gel containing chloride ions to improve conduction and reduce impedance between the electrode and the skin [41]. | Used with wet (gel or sponge) electrodes to establish a stable electrical connection. Ultrasonic gels can be a cost-effective alternative [47]. |
| Faraday Cage / Shielded Room | A room or enclosure that blocks external electromagnetic fields, drastically reducing environmental noise [42]. | Essential for obtaining the cleanest possible data, especially in environments with high levels of electronic interference. |
| Trigger Interface Box | A device that sends precise digital markers from the stimulus presentation computer to the EEG amplifier, synchronizing the external events with the brain data [47]. | Critical for any ERP study to ensure accurate time-locking of brain responses to the presentation of design problems or concepts. |
| Stimulus Presentation Software | Software for designing and running experimental paradigms, capable of sending triggers and recording behavioral responses (e.g., reaction times) [45]. | Used to present the conceptual design task to the participant and log their performance and responses. |

This application note provides a detailed, step-by-step protocol for acquiring electroencephalography (EEG) and event-related potential (ERP) data, specifically contextualized for research involving conceptual design tasks. ERPs, measured using EEG, are electrical brain potentials time-locked to specific sensory, cognitive, or motor events [9]. Their high temporal resolution (on the millisecond scale) makes them well suited to studying the rapid cognitive processes underlying design and creativity [48]. Because higher-order cognitive operations depend on intact early sensory processing, which specific ERP components can probe, the protocol also supports paradigms that verify sensory processing before higher-order effects are examined [1] [49]. Following these procedures closely is critical for obtaining the clean, high-fidelity data needed to draw meaningful conclusions in cognitive neuroscience research.

Materials and Equipment

A successful EEG/ERP experiment requires specific equipment and reagents. The materials listed below constitute a standard setup for a research laboratory.

Table 1: Essential Equipment for EEG/ERP Acquisition

| Category | Specific Item | Function/Importance | Examples/Notes |
|---|---|---|---|
| Data Acquisition | EEG Amplifier | Amplifies microvolt-level brain signals for recording. | e.g., Neuroscan NuAmps, BioSemi [1] |