Mapping the Landscape: A Comprehensive Guide to the Spatial Distribution of Motion Artifacts in Brain Imaging

Abstract

This article provides a systematic review of the spatial distribution of motion artifacts in brain imaging, a critical challenge for researchers, scientists, and drug development professionals. We first explore the foundational physics and heterogeneous patterns of these artifacts across different neuroimaging modalities, including MRI and fNIRS. The review then transitions to methodological advances, covering both hardware-based and deep-learning-driven correction techniques. We further offer a troubleshooting guide for artifact identification and mitigation in experimental design and data processing. Finally, we present a comparative analysis of validation frameworks and benchmark the performance of leading correction algorithms against standardized datasets. This synthesis aims to equip professionals with the knowledge to improve data fidelity in neuroimaging studies and pharmaceutical research.

The Physics and Patterns: Understanding the Origin and Spatially Heterogeneous Nature of Motion Artifacts

Magnetic resonance imaging (MRI) is a cornerstone of modern biomedical research and clinical diagnostics, providing unparalleled soft-tissue contrast without ionizing radiation. However, its susceptibility to subject motion has been a persistent challenge since its inception. In the specific context of brain research, motion artifacts introduce systematic biases that can compromise the integrity of functional connectivity analyses, structural measurements, and the development of quantitative biomarkers [1]. The prolonged data acquisition times required for high-resolution MRI, often spanning several minutes, far exceed the timescale of most physiological motions, including involuntary head movements, cardiac pulsation, and respiration [2]. This fundamental mismatch between acquisition speed and motion dynamics makes the MRI signal particularly vulnerable to corruption.

Motion during MRI acquisition primarily manifests in the final image as blurring (a loss of sharpness) and ghosting (replicated features of the object appearing as displaced copies) [3] [2]. These artifacts are not merely cosmetic; they can obscure anatomical details, mimic pathological conditions, and, most critically for research, lead to spurious brain-behavior associations [1]. Understanding the genesis of these artifacts requires moving from image space to the raw data domain of MRI, known as k-space. The corruption of k-space data by subject movement is the root cause of the ghosting and blurring observed in the final reconstructed image. This technical guide details the fundamental mechanisms by which motion disrupts k-space, explores the spatial distribution of these artifacts in brain imaging, and summarizes the quantitative frameworks and emerging solutions essential for robust neuroscientific research.

k-Space Fundamentals and the Impact of Motion

k-Space as the Fourier Domain of the Image

In MRI, spatial encoding is not performed directly. Instead, the signal is acquired in the spatial frequency domain, known as k-space. Each point in k-space contains information about the spatial frequency and phase of the entire imaged object, rather than representing a specific location [2]. The center of k-space (low spatial frequencies) determines the overall image contrast and signal intensity, while the periphery (high spatial frequencies) encodes the fine details and edges of the image [2].

The final image is reconstructed through an inverse Fourier transform of the acquired k-space data. This process assumes that the object being imaged has remained perfectly stationary throughout the entire data acquisition. Any violation of this assumption—that is, any motion during the scan—introduces inconsistencies in the k-space data, leading to artifacts in the reconstructed image [2].
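The roles of the center and the periphery of k-space can be illustrated with a small numerical sketch. The toy phantom, the function name `split_kspace`, and the radius of 6 samples are assumptions chosen for illustration; the example only shows that the central samples carry the bulk intensity while the outer samples carry edges.

```python
import numpy as np

def split_kspace(image, radius):
    """Reconstruct two images: one from the centre of k-space, one from the periphery."""
    k = np.fft.fftshift(np.fft.fft2(image))          # move the zero-frequency sample to the array midpoint
    ny, nx = image.shape
    ky, kx = np.indices((ny, nx))
    dist = np.hypot(ky - ny // 2, kx - nx // 2)      # distance of each sample from the k-space centre
    centre = dist <= radius
    low = np.fft.ifft2(np.fft.ifftshift(k * centre))    # contrast and coarse shape
    high = np.fft.ifft2(np.fft.ifftshift(k * ~centre))  # edges and fine detail
    return low, high

# Toy phantom (illustrative only): a bright square on a dark background.
phantom = np.zeros((64, 64))
phantom[20:44, 20:44] = 1.0
low, high = split_kspace(phantom, radius=6)
```

Because the Fourier transform is linear, `low + high` reproduces the phantom, and the mean intensity is carried entirely by `low`.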

How Motion Disrupts k-Space Data Consistency

The MRI signal is acquired sequentially, point-by-point or line-by-line, over time. When a subject moves, the spatial position of the signal-generating protons (spins) changes. This movement alters the phase and frequency of the signal they emit, which in turn corrupts the k-space data points being acquired at that moment.

- Phase Shifts: Motion induces phase errors in the MR signal. In k-space, this is equivalent to a complex multiplication of the true k-space data, which results in a convolution in image space, manifesting as ghosting artifacts [2] [4].

- Inconsistent Encoding: Different k-space lines are acquired at different times. If the object moves between the acquisition of these lines, the data becomes inconsistent. Simple Fourier reconstruction interprets this as the object having multiple, discrete positions, leading to replicated "ghosts" in the image [2].

The specific appearance of the artifact is heavily influenced by the k-space trajectory (the order in which k-space is sampled) and the nature of the motion (e.g., periodic vs. sudden, slow drift vs. rapid oscillation) [2].
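The phase-shift mechanism follows from the Fourier shift theorem. As a brief worked statement (the symbols ρ, S, k, and Δr are notation introduced here, not taken from the cited sources): if the object ρ(r) produces the k-space signal S(k), a rigid translation by Δr leaves the magnitude of each sample unchanged and multiplies it by a linear phase.

```latex
S(\mathbf{k}) = \int \rho(\mathbf{r})\, e^{-i 2\pi\, \mathbf{k}\cdot\mathbf{r}}\, d\mathbf{r},
\qquad
S_{\text{moved}}(\mathbf{k}) = e^{-i 2\pi\, \mathbf{k}\cdot\Delta\mathbf{r}}\, S(\mathbf{k})
```

If Δr differs between the times at which individual lines are acquired, each line carries a different phase factor, and the inverse Fourier transform no longer corresponds to a single object position. Rotations are not captured by this expression, because rotating the object also rotates its k-space representation.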

Characterizing Motion Artifacts: Ghosting and Blurring

Ghosting Artefacts

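Ghosting appears as replicated versions of the main object or parts of it, typically displaced along the phase-encoding direction [3]. In conventional line-by-line imaging, this directional preference arises because successive phase-encoding steps are separated by a full repetition time, whereas each frequency-encoded readout is completed within a few milliseconds. Motion is therefore effectively frozen within a single readout but produces inconsistencies between phase-encoding lines, so the artifact spreads along the phase-encoding direction.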

- The N/2 Ghost: A particularly famous and structured ghosting artifact is the Nyquist or N/2 ghost, which appears as a replica displaced by exactly half the field of view in the phase-encoding direction. It is often caused by consistent phase errors between odd and even echoes in echo-planar imaging (EPI) sequences, which can be exacerbated by motion and eddy currents from strong diffusion-sensitizing gradients [3] [4].

- Coherent vs. Incoherent Ghosting: Periodic motion (e.g., from cardiac pulsation) that is synchronized with the k-space acquisition rhythm results in coherent ghosting, where a discrete, well-defined number of replicas are visible. In contrast, random or non-periodic motion leads to incoherent ghosting, which appears as a smeared "noise-like" pattern or multiple overlapping stripes along the phase-encoding direction [2].
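The N/2 ghost can be reproduced with a simplified numerical sketch. It assumes that the only error is a constant phase offset `phi` on every odd phase-encoding line, which is a deliberate simplification of real EPI phase errors; the toy object and the function name are illustrative.

```python
import numpy as np

def add_odd_even_phase_error(image, phi):
    """Apply a constant phase offset phi (radians) to every odd phase-encoding line."""
    k = np.fft.fft2(image)                 # axis 0 is treated as the phase-encoding direction
    k[1::2, :] *= np.exp(1j * phi)         # odd lines pick up the phase error
    return np.fft.ifft2(k)

n = 64
obj = np.zeros((n, n))
obj[24:40, 20:44] = 1.0                    # toy object, illustrative only
phi = 0.3
corrupted = add_odd_even_phase_error(obj, phi)

# Analytic prediction: the attenuated object plus a copy shifted by N/2
# along the phase-encoding axis.
a = (1 + np.exp(1j * phi)) / 2
b = (1 - np.exp(1j * phi)) / 2
predicted = a * obj + b * np.roll(obj, n // 2, axis=0)
```

The alternating modulation can be written as `a + b * (-1)**ky`, and the factor `(-1)**ky` corresponds to a shift of exactly half the field of view, which is why the ghost lands at N/2.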

Blurring Artefacts

Blurring is a diffuse reduction of image sharpness, particularly affecting edges and fine details. It occurs when motion is continuous and slow (e.g., gradual relaxation of neck muscles) during the acquisition. In this scenario, the k-space data for a single object is effectively smeared across multiple spatial locations. Unlike the discrete replication in ghosting, this smearing results in a loss of high-frequency information, which manifests as a blur in the final image [2]. In sequences with interleaved k-space acquisition (e.g., T2-weighted Turbo Spin Echo), even slow drifts can produce significant ghosting rather than simple blurring, because the assumption of consistency between interleaved segments is violated [2].

Table 1: Characteristics of Key Motion-Induced Artefacts in Brain MRI

| Artefact Type | Primary Appearance | Common Causes in Brain MRI | k-Space Corruption Mechanism |
|---|---|---|---|
| Ghosting | Replicated structures along phase-encode direction [3] [2] | Periodic motion (cardiac, tremor), sudden jerks [2] | Inconsistent phase between k-space lines [2] |
| Blurring | Loss of sharpness and edge definition [2] | Slow, continuous drifts [2] | Smearing of high-frequency k-space data [2] |
| N/2 Ghost | Replica shifted by half the FOV [3] | Eddy currents from diffusion gradients, system delays [4] | Consistent phase error between odd/even echoes [4] |
| Signal Loss | Localized signal void | In-flow, spin dephasing in gradients | Corruption of signal generation, not just readout [2] |

Quantitative Assessment of Ghosting

For quality control and objective comparison, the American College of Radiology (ACR) recommends a standardized metric called "Percent Signal Ghosting" [3]. This method uses signal measurements from both the phantom and the background air to quantify the severity of ghosting artifacts.

The formula for the ghosting ratio (G) is: G = | (T + B) - (L + R) | / (2 × S) [3]

Where:

- S = mean signal intensity from a large region of interest (ROI) in the center of the phantom.

- T, B, L, R = mean signal intensities from ROIs placed in the top, bottom, left, and right background areas outside the phantom.

A lower percent ghosting value indicates better image quality, with generally accepted values being less than 1-3% for diagnostic quality [3].
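As a worked example of the formula above, the following sketch computes the ghosting ratio as a percentage. The function name and the ROI values are hypothetical and serve only to show the arithmetic.

```python
def percent_signal_ghosting(s, top, bottom, left, right):
    """Ghosting ratio G = |(T + B) - (L + R)| / (2 * S), returned as a percentage."""
    if s <= 0:
        raise ValueError("mean phantom signal S must be positive")
    ratio = abs((top + bottom) - (left + right)) / (2.0 * s)
    return 100.0 * ratio

# Hypothetical ROI means (arbitrary units), not measured data.
ghosting = percent_signal_ghosting(s=1000.0, top=12.0, bottom=10.0, left=4.0, right=6.0)
```

With these values the numerator is |22 - 10| = 12 and the denominator is 2000, giving a ratio of 0.006, or 0.6%.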

Table 2: Quantitative Metrics for Motion Artefact Assessment

| Metric Name | What It Measures | Application Context |
|---|---|---|
| Percent Signal Ghosting [3] | Intensity of signal replicated in background | ACR Phantom QC; system performance |
| Framewise Displacement (FD) [1] | Magnitude of head movement between volumes | Resting-state fMRI data quality |
| Peak Signal-to-Noise Ratio (PSNR) [5] [6] | Fidelity of corrected image vs. ground truth | Validating motion correction algorithms |
| Structural Similarity Index (SSIM) [5] [6] | Perceptual image quality and structure preservation | Validating motion correction algorithms |
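Of the metrics in Table 2, PSNR is simple enough to write out directly. The sketch below uses the standard definition, 10·log10(range² / MSE); taking the data range from the reference image is an assumption, and other conventions exist. For SSIM, an existing implementation such as `skimage.metrics.structural_similarity` in scikit-image is usually preferable to a hand-written one.

```python
import numpy as np

def psnr(reference, test, data_range=None):
    """Peak signal-to-noise ratio in dB between a reference image and a test image."""
    reference = np.asarray(reference, dtype=float)
    test = np.asarray(test, dtype=float)
    if data_range is None:
        # Assumption: use the dynamic range of the reference image.
        data_range = reference.max() - reference.min()
    mse = np.mean((reference - test) ** 2)
    if mse == 0:
        return float("inf")                  # identical images
    return 10.0 * np.log10(data_range ** 2 / mse)

# Toy check: a uniform error of 0.1 on an image with range 1 gives 20 dB.
ref = np.zeros((32, 32))
ref[8:24, 8:24] = 1.0
value = psnr(ref, ref + 0.1)
```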

Experimental Protocols for Motion Artefact Analysis

Simulating Motion for Method Validation

A critical step in developing and testing motion correction algorithms is the use of simulated motion on known data sets. This provides a ground truth for comparison.

Protocol: In-Silico Motion Simulation

- Data Foundation: Start with a high-quality, motion-free brain MRI dataset, often referred to as the "ground truth" [5].

- Motion Corruption: Apply a simulated motion corruption operator to the k-space of the ground truth data. This can involve:

  - Rigid-Body Transformations: Simulating rotations and translations of the head [5] [2].

  - Phase Perturbations: Introducing simulated phase errors to replicate the effects of motion on the MR signal's phase [2].

  - k-Space Line Displacement: Artificially shifting k-space lines to mimic inconsistencies from movement during phase encoding [2].

- Image Reconstruction: Reconstruct the image from the corrupted k-space using a standard Fourier transform (e.g., inverse FFT) to generate the motion-artifacted image for analysis [2].
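The protocol above can be sketched for the simplest case, a single sudden in-plane translation. This is a minimal illustration under stated assumptions, not the simulation framework of any cited work: lines are assumed to be acquired one per step from the most negative to the most positive spatial frequency, the motion is a pure shift along the phase-encoding axis, and the names `simulate_translation`, `shift_px`, and `start_step` are hypothetical.

```python
import numpy as np

def simulate_translation(image, shift_px, start_step):
    """Corrupt k-space as if the object shifted by shift_px rows at acquisition step start_step."""
    n_lines = image.shape[0]
    k = np.fft.fft2(image)                            # ground-truth k-space (axis 0 = phase encoding)
    ky = np.fft.fftfreq(n_lines)                      # spatial frequency of each line, cycles per pixel
    order = np.argsort(ky)                            # assumed acquisition order of the lines
    moved = np.zeros(n_lines, dtype=bool)
    moved[order[start_step:]] = True                  # lines acquired after the movement
    ramp = np.exp(-2j * np.pi * ky * shift_px)        # Fourier shift theorem: translation = linear phase
    k[moved, :] *= ramp[moved][:, None]
    return np.fft.ifft2(k)                            # standard inverse-FFT reconstruction

# Toy ground truth (illustrative only).
truth = np.zeros((64, 64))
truth[20:44, 24:40] = 1.0
fully_shifted = simulate_translation(truth, shift_px=3, start_step=0)
untouched = simulate_translation(truth, shift_px=3, start_step=64)
ghosted = simulate_translation(truth, shift_px=3, start_step=32)
```

When every line is acquired after the shift, the result is simply the translated object; when the shift happens partway through the scan, the two halves of k-space disagree and the reconstruction contains ghosting.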

Protocol for Ghosting Artefact Quantification (ACR Protocol)

For quantitative quality assurance of an MRI system, the following methodology is employed using a standardized phantom [3]:

- Phantom Imaging: Acquire an MRI scan of a large accreditation phantom, typically using a standard 2D spin-echo or gradient-echo sequence.

- Region of Interest (ROI) Placement:

  - Place a large circular or rectangular ROI in the uniform center of the phantom to measure the mean signal (S).

  - Place four smaller ROIs in the background air outside the phantom, positioned at the top, bottom, left, and right edges of the image (representing areas where ghosting artifacts typically appear).

- Signal Measurement: Record the mean pixel intensity from each of the five ROIs.

- Calculation: Compute the Percent Signal Ghosting using the formula given under "Quantitative Assessment of Ghosting".

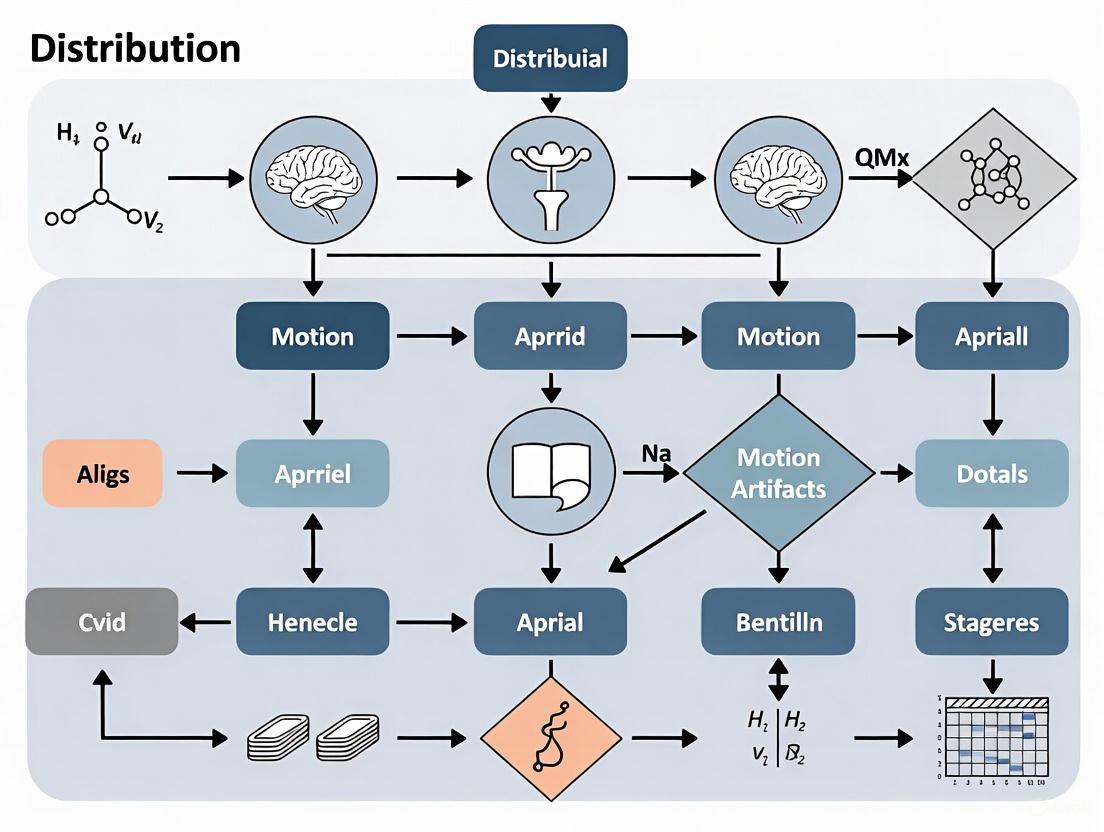

Diagram 1: Motion to Artefact Pathway. This flowchart illustrates the causal relationship between different types of subject motion, their specific disruptive effects on k-space data, and the final artifacts observed in the reconstructed MR image.

Implications for Brain Research and Corrective Methodologies

Impact on Brain-Behavior Associations

Motion artifacts pose a severe threat to the validity of brain-wide association studies (BWAS). Even after standard denoising procedures, residual motion artifacts can introduce systematic biases in functional connectivity (FC) measurements [1]. For instance, head motion has been shown to systematically decrease long-distance connectivity and increase short-range connectivity, a pattern that can be misattributed to neurological or psychiatric conditions [1]. In large datasets such as the Adolescent Brain Cognitive Development (ABCD) Study, a substantial proportion of trait-FC relationships remained affected by motion even after denoising (42% showing overestimation, 38% showing underestimation), highlighting the need for rigorous motion impact assessment in brain research [1].

Emerging Correction Strategies

The field is actively developing sophisticated methods to mitigate motion artifacts, broadly categorized into prospective and retrospective approaches.

- Prospective Motion Correction: These methods adjust the imaging sequence in real time to track and compensate for motion during data acquisition. Examples include optical tracking systems with reflective markers and MR-based navigators (e.g., PROMO, vNavs) that update the scanner's coordinate system [6] [7].

- Retrospective Motion Correction: These techniques operate on already-acquired k-space or image data without requiring sequence modification. Traditional methods include rigid or non-rigid image registration. A recent study applied one such retrospective technique, alignedSENSE, to ultralow-field portable MRI and showed clear improvements in brain image quality by jointly estimating motion and image parameters [7]. More recently, deep learning (DL) has shown remarkable promise [5] [8] [6]:

  - Generative Models: Models like Generative Adversarial Networks (GANs) and Denoising Diffusion Probabilistic Models (DDPM) learn a mapping between motion-corrupted and motion-free images. For example, the Res-MoCoDiff model uses a residual-guided diffusion process to correct motion artifacts efficiently, requiring only four reverse diffusion steps for reconstruction [5].

  - Physics-Informed Deep Learning: These models incorporate knowledge of MRI physics, such as k-space corruption models, into the network's learning process to make corrections more robust and reduce the risk of "hallucinations" where the network creates plausible but incorrect image features [8].

Table 3: Research Reagent Solutions for Motion Artefact Investigation

| Tool / Reagent | Function / Description | Role in Motion Artefact Research |
|---|---|---|
| Digital Phantom (e.g., Modified Shepp-Logan) [2] | Computational model of a human head | Provides a known ground truth for simulating motion artifacts and validating correction algorithms. |
| ACR Large Phantom [3] | Physical standardized object for MRI QC | Enables quantitative, reproducible measurement of ghosting artifacts on a specific scanner. |
| Motion Simulation Framework [5] [6] | Software to apply synthetic motion to k-space | Generates paired datasets (corrupted/clean) for training and testing deep learning models. |
| Navigator Echoes (e.g., Nav1, Nav2) [4] | Additional MR signal acquisitions | Samples system phase information; used to correct for phase errors causing N/2 ghosting. |
| Deep Learning Models (e.g., U-net, Swin Transformer) [5] | Neural network architectures | Serves as the backbone for image-denoising models that learn to remove motion artifacts. |

The corruption of k-space data by subject motion is a fundamental problem in MRI, directly generating the ghosting and blurring artifacts that degrade image quality. In brain research, where quantitative accuracy is paramount, these artifacts are not merely nuisances but significant sources of bias that can lead to spurious scientific conclusions. A deep understanding of the mechanisms—how different motion types create specific phase inconsistencies and data irregularities in k-space—is the first step toward effective mitigation. While the problem is complex and multifaceted, the growing toolbox of prospective tracking, advanced reconstruction, and particularly deep learning-based retrospective correction offers powerful solutions. Future progress will depend on the continued development and rigorous validation of these methods, ensuring that MRI remains a reliable and precise instrument for unlocking the secrets of the brain.

In brain research, particularly in neuroimaging studies using techniques like functional Magnetic Resonance Imaging (fMRI), in-scanner motion presents a significant methodological challenge. Motion artifacts have the potential to systematically bias measures of functional connectivity, especially as in-scanner motion is frequently correlated with key variables of interest such as age, clinical status, and cognitive ability [9]. Understanding the spatial distribution of these artifacts is therefore crucial for interpreting neuroimaging data accurately. This whitepaper explores the biomechanical underpinnings of a consistently observed pattern in motion artifacts: the minimal motion near the atlas vertebra (C1) and the increased motion in frontal cortical regions. We examine the anatomical constraints, present quantitative data on motion distribution, and discuss the implications for researchers and drug development professionals working with neuroimaging data.

Biomechanical Basis of Motion Distribution

Anatomical Constraints of the Upper Cervical Spine

The spatial distribution of head motion within MRI scanners is not random but is fundamentally governed by the biomechanical properties of the cervical spine and the skull's attachment. The upper cervical spine, consisting of the atlas (C1) and axis (C2) vertebrae, forms a highly specialized complex designed for head mobility while protecting the brainstem. However, this mobility is not uniform across all directions.

- Atlanto-occipital Joint (C0-C1): This joint connects the skull to the atlas and primarily allows for flexion and extension (nodding). Its biconvex articular surfaces provide stability but limit other movements [10].

- Atlanto-axial Joint (C1-C2): This joint is specialized for axial rotation (head turning), contributing significantly to the overall rotational capacity of the head [10].

The biomechanical principle of coupling explains why motion is not isolated to a single plane. Coupling refers to the phenomenon where rotation or translation about one axis is consistently associated with simultaneous motion about another axis [10]. In the subaxial cervical spine (below C2), axial rotation inevitably couples with lateral bending in the same direction (ipsilateral coupling), creating complex movement patterns that propagate motion anteriorly.

The Composite Tension Plus (CT+) Model

Cortical expansion and folding, while occurring over a much longer timescale, are also governed by biomechanical principles that inform our understanding of structural constraints. The Composite Tension Plus (CT+) model posits that mechanical tension, mediated by the cytoskeleton of cells, plays a key role in morphogenesis [11]. This model, an update of the earlier Differential Expansion Sandwich Plus (DES+) model, incorporates ten distinct mechanisms to explain cortical expansion and folding. While not directly explaining short-term head motion, the CT+ model underscores that the brain is not a passive entity but possesses its own mechanical properties and internal tensions that may interact with externally imposed motions.

Quantitative Analysis of Motion Distribution

Empirical Evidence from Functional Connectivity Studies

Research specifically investigating motion artifacts in functional connectivity MRI (fcMRI) has quantified the spatial gradient of head motion. Studies using voxel-specific measures of framewise displacement (FD) have consistently demonstrated that motion is minimal near the atlas vertebra and increases with distance from this anchoring point [9]. The following table summarizes key quantitative findings from this research:

Table 1: Quantitative Spatial Distribution of In-Scanner Head Motion

| Brain Region | Relative Motion Level | Primary Direction of Motion | Correlation with Global FD |
|---|---|---|---|
| Near Atlas Vertebra | Minimal | Constrained by biomechanics | High (r ~0.89) [9] |
| Frontal Cortex | High | Y-axis rotation (nodding) [9] | High (r ~0.89) [9] |
| Tissue Boundaries | Signal Artifacts | Partial volume effects [9] | Not specified |

The high correlation (r = 0.89) between voxel-specific motion measures and global framewise displacement (FD) indicates that motion, while spatially heterogeneous, is a whole-brain phenomenon with a consistent pattern [9]. The finding that frontal cortex motion is largely driven by y-axis rotation (the nodding movement) directly links the biomechanical capability of the neck to the resulting artifact pattern in the brain image.
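The geometric reason for this gradient can be shown with a small sketch: for a rigid rotation about a pivot, the displacement of a point grows with its distance from the rotation axis. The coordinate frame (x left-right, y posterior-anterior, z inferior-superior, in mm), the pivot location, and the 1 degree angle are assumptions made for illustration; which scanner axis corresponds to nodding depends on the coordinate convention in use.

```python
import numpy as np

def displacement_from_nod(points_mm, pivot_mm, angle_deg):
    """Displacement (mm) of each point for a nodding rotation about a left-right axis through the pivot."""
    theta = np.deg2rad(angle_deg)
    c, s = np.cos(theta), np.sin(theta)
    rot = np.array([[1.0, 0.0, 0.0],
                    [0.0, c, -s],
                    [0.0, s, c]])                     # rotation about the left-right axis
    rel = np.asarray(points_mm, dtype=float) - np.asarray(pivot_mm, dtype=float)
    moved = rel @ rot.T
    return np.linalg.norm(moved - rel, axis=1)

# Hypothetical pivot near the top of the cervical spine and three points
# at 0, 50 and 150 mm anterior to it.
pivot = np.array([0.0, -20.0, -60.0])
points = pivot + np.array([[0.0, 0.0, 0.0], [0.0, 50.0, 0.0], [0.0, 150.0, 0.0]])
disp = displacement_from_nod(points, pivot, angle_deg=1.0)
```

For a rotation by angle θ, a point at perpendicular distance d from the axis moves by 2·d·sin(θ/2), so a 1 degree nod displaces a point 150 mm from the pivot about three times as far as a point 50 mm away.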

Biomechanical Properties of Spinal Segments

The varying range of motion (ROM) across different spinal segments explains why motion is not uniform. The following table summarizes the biomechanical properties of key cervical segments relevant to in-scanner head movement:

Table 2: Biomechanical Properties of Upper Cervical Spinal Segments

| Spinal Segment | Primary Motions | Coupled Motions | Biomechanical Constraints |
|---|---|---|---|
| C0-C1 (Atlanto-occipital) | Flexion/Extension | Contralateral lateral bending during axial rotation [10] | Biconvex articular surfaces |
| C1-C2 (Atlanto-axial) | Axial Rotation | Lateral flexion to opposite side [10] | Transverse ligament, alar ligaments |
| Subaxial Cervical (C2-C3) | Lateral Bending, Flexion/Extension | Ipsilateral axial rotation during lateral bending [10] | Facet joint orientation, ligamentous constraints |

Experimental Protocols for Motion Assessment

Measuring In-Scanner Motion

The standard methodology for quantifying head motion in fcMRI studies involves deriving estimates from the functional time series itself during image preprocessing [9].

- Image Realignment: Each volume in the fMRI time series is rigidly realigned to a reference volume.

- Parameter Estimation: This realignment process generates six realignment parameters (RPs) for each volume: three translations (x, y, z) and three rotations (pitch, roll, yaw).

- Framewise Displacement (FD) Calculation: The RPs are summarized into a single index of volume-to-volume motion. One common method, implemented in FSL, calculates FD using the matrix root-mean-square formulation derived by Jenkinson et al. [9].

- Voxel-Specific FD: For spatial distribution analysis, voxel-specific measures of FD can be computed directly from the image header to understand regional variations [9].
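The steps above can be sketched in a few lines. This is an illustration of a Jenkinson-style RMS deviation, sqrt(R²/5 · tr(AᵀA) + tᵀt), evaluated between consecutive volumes; it is not FSL's code. The rotation order, the 80 mm sphere radius, the sphere center at the origin, and all function names are assumptions.

```python
import numpy as np

def rigid_matrix(tx, ty, tz, rx, ry, rz):
    """4x4 rigid transform from translations (mm) and rotations (radians).

    The composition order Rz @ Ry @ Rx is an assumption; packages differ.
    """
    cx, sx = np.cos(rx), np.sin(rx)
    cy, sy = np.cos(ry), np.sin(ry)
    cz, sz = np.cos(rz), np.sin(rz)
    rot_x = np.array([[1, 0, 0], [0, cx, -sx], [0, sx, cx]])
    rot_y = np.array([[cy, 0, sy], [0, 1, 0], [-sy, 0, cy]])
    rot_z = np.array([[cz, -sz, 0], [sz, cz, 0], [0, 0, 1]])
    m = np.eye(4)
    m[:3, :3] = rot_z @ rot_y @ rot_x
    m[:3, 3] = [tx, ty, tz]
    return m

def rms_deviation(m_a, m_b, radius_mm=80.0, centre_mm=(0.0, 0.0, 0.0)):
    """RMS displacement over a sphere between two rigid transforms."""
    diff = m_b @ np.linalg.inv(m_a) - np.eye(4)
    a = diff[:3, :3]
    t = diff[:3, 3] + a @ np.asarray(centre_mm, dtype=float)
    return float(np.sqrt(radius_mm ** 2 / 5.0 * np.trace(a.T @ a) + t @ t))

def framewise_displacement(params):
    """FD per volume from rows of (tx, ty, tz, rx, ry, rz); the first volume gets 0."""
    mats = [rigid_matrix(*p) for p in params]
    fd = [0.0] + [rms_deviation(mats[i - 1], mats[i]) for i in range(1, len(mats))]
    return np.array(fd)

# Hypothetical realignment parameters: two pure translations between volumes.
fd = framewise_displacement([(0, 0, 0, 0, 0, 0), (1, 0, 0, 0, 0, 0), (1, 2, 0, 0, 0, 0)])
```

For pure translations the measure reduces to the distance moved, so the example yields 0, 1, and 2 mm. Rotations contribute through the sphere radius, which is why a small rotation can dominate FD far from the pivot.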

Finite Element Modeling in Biomechanics

Finite element (FE) modeling is a computational technique used to simulate and analyze the biomechanics of complex structures like the spine. The following workflow outlines a typical FE approach, as used in studies of spinal fixation techniques [12]:

Diagram 1: Finite Element Model Workflow

This methodology allows researchers to simulate the Range of Motion (ROM) and stress distribution across spinal segments under various loading conditions (flexion, extension, lateral bending, axial rotation) that mimic in-scanner movements [12].

The Scientist's Toolkit: Research Reagents & Materials

Table 3: Essential Resources for Motion Biomechanics and Artifact Research

| Resource Category | Specific Tool / Reagent | Function / Application |
|---|---|---|
| Imaging & Analysis Software | FSL (FMRIB Software Library) [9] | Calculating framewise displacement (FD) from fMRI data. |
| | Simpleware, Geomagic, Hypermesh [12] | Pipeline for creating and meshing finite element models from CT data. |
| | Abaqus [12] | Performing finite element analysis on biomechanical models. |
| Computational Models | Finite Element Model of C0-C3 [12] | Simulating biomechanics and range of motion in the upper cervical spine. |
| | Composite Tension Plus (CT+) Model [11] | Modeling long-term, tension-based cortical expansion and folding. |
| Deep Learning Models | Res-MoCoDiff [5] | Efficient diffusion model for MRI motion artifact correction. |
| | JDAC Framework [13] | Iterative learning framework for joint image denoising and motion artifact correction. |

Implications for Neuroimaging Research and Drug Development

The predictable spatial distribution of motion artifacts has profound implications for the interpretation of neuroimaging data in both basic research and clinical trials.

- Systematic Bias: Because motion is often correlated with clinical status (e.g., greater in pediatric, elderly, or neurologically impaired populations), it can introduce systematic bias into group comparisons [9]. The frontal cortex, a region critical for executive function, decision-making, and social cognition, is particularly vulnerable to such artifact-induced bias.

- Denoising Strategies: Understanding that motion follows a biomechanically determined spatial gradient is essential for developing and selecting effective denoising strategies. Techniques like global signal regression (GSR) or censoring (removing high-motion volumes) must account for this non-uniformity [9].

- Advanced Correction Techniques: Emerging deep learning methods, such as Res-MoCoDiff and the JDAC framework, show promise in retrospectively correcting for motion artifacts [5] [13]. These models can be enhanced by incorporating biomechanical priors regarding the expected spatial pattern of motion.
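Censoring, mentioned among the denoising strategies above, amounts to building a keep/drop mask from the FD trace. The sketch below is a minimal illustration: the 0.5 mm threshold and the option to also drop the volume after each high-motion volume are assumptions, since appropriate settings depend on the study and are not specified in the text.

```python
import numpy as np

def censor_mask(fd, threshold_mm=0.5, also_drop_next=True):
    """Return a boolean mask that is True for volumes to keep.

    threshold_mm and also_drop_next are illustrative defaults, not recommendations.
    """
    fd = np.asarray(fd, dtype=float)
    bad = fd > threshold_mm
    if also_drop_next:
        # Mark the volume following each high-motion volume as well.
        bad = bad | np.r_[False, bad[:-1]]
    return ~bad

# Hypothetical FD trace in mm.
keep = censor_mask([0.0, 0.1, 0.9, 0.2, 0.1])
```

Here the third volume exceeds the threshold, so it and the following volume are dropped and the remaining three are retained.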

The spatial distribution of in-scanner head motion, characterized by minimal movement near the atlas and increasing motion in frontal regions, is a direct consequence of the biomechanical constraints imposed by the upper cervical spine. The principles of spinal coupling and the specific ranges of motion allowed by the atlanto-occipital and atlanto-axial joints create a predictable gradient of motion that manifests as a corresponding spatial pattern of artifacts in neuroimaging data. For researchers and drug development professionals, acknowledging and accounting for this biomechanically determined artifact profile is not merely a technical consideration but a fundamental requirement for ensuring the validity and interpretability of neuroimaging findings. Future work integrating quantitative biomechanical modeling with advanced artifact correction algorithms presents a promising path forward for mitigating this persistent challenge.

In brain magnetic resonance imaging (MRI), patient motion remains a significant challenge that compromises diagnostic quality and research validity. Motion during acquisition generates distinct spatial artifacts—primarily ghosting, blurring, and signal loss—that vary in appearance and severity across different brain regions. These spatial signatures are not random; their manifestation depends on complex interactions between head motion, k-space sampling strategies, and the unique physiological and structural characteristics of specific brain areas. Understanding these patterns is crucial for researchers and drug development professionals who rely on high-quality neuroimaging data to detect subtle longitudinal changes in brain structure and function, particularly in clinical trials for neurodegenerative diseases.

This technical guide explores the spatial distribution of motion artifacts in brain MRI, providing a detailed analysis of their underlying mechanisms, regional susceptibility, and advanced correction methodologies. The aim is to equip scientists with the knowledge and tools necessary to identify, characterize, and mitigate these confounding factors in neuroimaging studies.

Fundamental Mechanisms Linking Motion to Artifact Formation

K-Space Sampling and Motion Interactions

The appearance of motion artifacts in reconstructed MR images is fundamentally determined by how motion states interact with the specific k-space sampling trajectory used during acquisition. K-space represents the raw data of an MRI scan before Fourier transformation into the final image, with the center of k-space determining image contrast and the periphery determining spatial resolution. When motion occurs during data acquisition, it disrupts the phase consistency of the k-space signal, creating inconsistencies that manifest as artifacts in the image domain.

Research by Schauman et al. demonstrates that the severity and nature of motion artifacts depend heavily on the k-space distribution of motion states [14]. Through motion-sampling plots that map motion states directly onto k-space, they identified nine distinct categories of motion artifacts. Their work revealed that artifacts are especially pronounced when motion discontinuities occur near the center of k-space or align with slow phase-encoding directions [14]. This explains why certain motion patterns produce severe artifacts while others have minimal impact on image quality.

Physics of Motion-Induced Signal Changes

The interaction between motion and MRI physics extends beyond simple displacement. Rigid-body head motion in the presence of magnetic field inhomogeneities introduces additional complexity through motion-induced phase shifts. The complete forward model for motion-affected k-space data can be represented as:

Where the motion transform U_t encompasses not only rotation (R_t) and translation (T_t) but also phase shifts induced by position-dependent B0 inhomogeneities (ω_t) that increase with echo time (TE_n):

This formulation explains why T2*-weighted sequences are particularly vulnerable to motion, as the impact of motion-induced B0 inhomogeneity changes increases with longer echo times [15]. The resulting signal loss can be misinterpreted as pathological findings in susceptibility-weighted imaging or quantitative BOLD applications, potentially compromising research conclusions in functional and vascular brain imaging studies.
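The two expressions referred to in this passage are not reproduced in the text. As a hedged sketch only, one form that is consistent with the symbols named there is given below. The symbols y_n (acquired k-space data for echo n), x_n (motion-free image at echo n), M_t (sampling mask selecting the k-space lines acquired during motion state t), F (Fourier transform), and C (coil sensitivities) are assumptions introduced here for illustration, as is the sign convention of the phase term; the cited work [15] should be consulted for the exact formulation.

```latex
y_n = \sum_{t} M_t \, F \, C \, U_t \, x_n,
\qquad
U_t = e^{-i 2\pi\, \omega_t\, \mathrm{TE}_n} \; T_t \, R_t
```

In this reading, the exponential term grows with TE_n for a given off-resonance ω_t, which matches the statement that later echoes are more strongly affected.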

Regional Susceptibility to Motion Artifacts

Different brain regions exhibit varying susceptibility to motion artifacts due to their unique structural characteristics, proximity to tissue interfaces, and functional roles. The table below summarizes the spatial signatures of motion artifacts across key brain areas.

Table 1: Regional Vulnerability to Motion Artifacts in Brain MRI

| Brain Region | Ghosting Patterns | Blurring Effects | Signal Loss | Primary Causes |
|---|---|---|---|---|
| Frontal Cortex | Moderate ghosting in phase-encode direction | Mild to moderate blurring of gyral patterns | Minimal | Proximity to sinuses, susceptibility gradients |
| Brainstem | Severe ghosting along multiple axes | Significant blurring of fine structures | Pronounced in T2*-weighted | Pulsatile motion, proximity to bone |
| Hippocampal Formation | Direction-specific ghosting | High impact on subfield delineation | Moderate | Complex geometry, pulsation from adjacent vessels |
| Corpus Callosum | Anisotropic ghosting patterns | White matter boundary blurring | Minimal in conventional, severe in diffusion | Central location, fiber orientation dependence |
| Visual Cortex | Posterior-anterior ghosting | Moderate impact on layer differentiation | Calcarine fissure specific | Tissue-air interfaces near occipital pole |
| Cerebellum | Multi-directional ghosting | Severe folia pattern degradation | Moderate to severe | Continuous physiological motion, posterior fossa anatomy |

The medial temporal lobe, particularly hippocampal subregions, demonstrates unique vulnerability due to its complex architecture and proximity to cerebrospinal fluid spaces. Studies investigating functional connectivity in these regions note that motion artifacts can significantly alter apparent connectivity measures, potentially confounding research on cognitive dysfunction [16]. Similarly, the brainstem and cerebellum are highly susceptible to both cardiac and respiratory-induced motion, often exhibiting severe blurring that obscures fine structural details essential for neurodegenerative disease monitoring.

Research by Giocomo et al. reveals that spatial memory networks centered on the medial entorhinal cortex show age-related instability in grid cell function, which may compound motion-related artifacts in functional studies of navigation and memory [17]. This intersection of biological vulnerability and technical artifact presents particular challenges for studies of aging and neurodegeneration where both motion and biological signals coexist.

Methodologies for Characterizing Motion Artifacts

Motion-Sampling Plot Framework

Schauman et al. introduced motion-sampling plots as a systematic framework for predicting and classifying motion artifacts based on k-space sampling properties [14]. This methodology enables researchers to map motion states directly onto k-space coordinates and assess their relationship to artifact appearance. The experimental protocol involves:

- Acquisition of 3D MRI data using multiple sampling trajectories (Cartesian, stack-of-stars, kooshball)

- Introduction of controlled motion through healthy volunteers mimicking patient motion while wearing real-time pose-tracking devices

- Systematic variation of motion direction, magnitude, and timing relative to k-space sampling

- Generation of motion-sampling plots that visualize the relationship between motion states and k-space coordinates

This approach has revealed that the k-space distribution of motion states is more predictive of artifact appearance than motion magnitude alone, providing researchers with a powerful tool for optimizing acquisition strategies for specific brain regions [14].
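The idea of mapping motion states onto k-space coordinates can be sketched in a few lines of Python. This is an illustration only, not the implementation used in [14]: the function names, the one-line-per-TR timing model, and the reduction of head pose to a single scalar displacement are all simplifying assumptions.

```python
import numpy as np

def motion_sampling_table(ky_order, kz_order, tr_s, motion_times_s, motion_mm):
    """Attach an interpolated motion value to every acquired k-space line.

    Assumes (hypothetically) that one k-space line is acquired per TR and that
    motion is summarised by one scalar displacement trace from a pose tracker.
    Returns an array with columns (ky, kz, displacement_mm).
    """
    ky_order = np.asarray(ky_order)
    kz_order = np.asarray(kz_order)
    # Acquisition time of each line in the order it was sampled.
    line_times = np.arange(ky_order.size) * tr_s
    # Resample the tracked displacement onto the line acquisition times.
    motion_at_line = np.interp(line_times, motion_times_s, motion_mm)
    return np.column_stack([ky_order, kz_order, motion_at_line])

def central_motion_fraction(table, radius, threshold_mm):
    """Fraction of lines within `radius` of the k-space centre that were
    acquired while the displacement exceeded `threshold_mm`."""
    k_radius = np.hypot(table[:, 0], table[:, 1])
    central = k_radius <= radius
    return float(np.mean(np.abs(table[central, 2]) > threshold_mm))

# Example: linear Cartesian ordering with a 2 mm step halfway through the scan.
ky = np.arange(-4, 4)
table = motion_sampling_table(ky, np.zeros_like(ky), 1.0,
                              [0.0, 3.5, 3.6, 7.0], [0.0, 0.0, 2.0, 2.0])
print(central_motion_fraction(table, radius=1, threshold_mm=1.0))
```

Plotting the third column as colour over the (ky, kz) plane gives a basic motion-sampling plot. The summary fraction is one hypothetical way to express how much of the motion coincides with the centre of k-space.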

Physics-Informed Motion Detection

PHIMO+ represents an advanced methodology for T2* quantification that leverages physics principles to detect motion-corrupted k-space lines [15]. The technique employs a physics-informed loss function based on the empirical correlation coefficient between reconstructed signal intensities and mono-exponentially fitted intensities.

This loss function capitalizes on motion-related B0 inhomogeneity changes that disrupt the expected mono-exponential signal decay in multi-echo GRE sequences [15]. The experimental workflow involves:

- Acquisition of multi-echo GRE data for T2* quantification

- Self-supervised optimization of k-space line exclusion masks based on physics loss

- Data-consistent reconstruction using only motion-free k-space lines

- Central k-space preservation by assuming minimal change in mean image intensity despite motion

This methodology is particularly valuable for mqBOLD applications where accurate T2* quantification is essential for assessing oxygen metabolism, especially in deep gray matter structures susceptible to motion-induced signal loss [15].
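The intuition behind a correlation-based physics loss can be illustrated for a single voxel. The sketch below is not the PHIMO+ implementation from [15]: the log-linear fit, the "1 minus Pearson correlation" form, and the function names are assumptions chosen to show why a motion-disturbed echo raises the loss.

```python
import numpy as np

def monoexp_fit(signal, echo_times_ms):
    """Log-linear least-squares fit of S(TE) = S0 * exp(-TE / T2*).

    Returns the fitted signal at the given echo times.
    """
    log_s = np.log(np.clip(signal, 1e-12, None))
    slope, intercept = np.polyfit(echo_times_ms, log_s, 1)
    return np.exp(intercept + slope * echo_times_ms)

def physics_loss(signal, echo_times_ms):
    """1 - Pearson correlation between measured and fitted decay.

    Close to 0 when the multi-echo signal follows a mono-exponential decay,
    larger when it deviates from it (assumed form, for illustration).
    """
    fitted = monoexp_fit(signal, echo_times_ms)
    r = np.corrcoef(signal, fitted)[0, 1]
    return 1.0 - r

te = np.array([5.0, 10.0, 15.0, 20.0, 25.0, 30.0])   # echo times in ms
clean = 100.0 * np.exp(-te / 40.0)                    # ideal decay, T2* = 40 ms
corrupted = clean.copy()
corrupted[3] *= 0.5                                   # one echo disturbed
print(physics_loss(clean, te), physics_loss(corrupted, te))
```

In a full pipeline this quantity would be evaluated on images reconstructed with candidate exclusion masks, so that masks removing corrupted lines are rewarded with a lower loss.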

Table 2: Advanced Motion Correction Methods in Brain MRI

| Method | Underlying Principle | Best-Suited Brain Regions | Limitations |
|---|---|---|---|
| JDAC Framework [18] | Joint iterative denoising and artifact correction | Whole-brain, especially gray-white matter interfaces | Requires extensive training data |
| PHIMO+ [15] | Physics-informed k-space line detection and exclusion | Brainstem, basal ganglia (T2*-weighted) | Limited to rigid-body motion |
| DIMA [19] | Diffusion models for unsupervised artifact correction | Cortical regions, hippocampal formation | Computational intensity |
| Leverage-Score Sampling [20] | Functional connectome feature selection | Large-scale networks, connectivity studies | Restricted to fMRI applications |

Experimental Protocols for Motion Artifact Characterization

Protocol for Spatial Mapping of Motion Artifacts

Comprehensive characterization of motion artifact spatial signatures requires a standardized experimental approach:

Participant Selection: Include healthy volunteers capable of mimicking controlled head movements during scanning. Sample size justification should consider expected effect sizes for motion-artifact correlations.

Motion Simulation: Implement both continuous (slow drift) and abrupt (sudden jerks) motion patterns using real-time pose tracking [14]. Motion parameters should include translation (x, y, z) and rotation (pitch, roll, yaw) across a range of magnitudes.

Multi-Protocol Acquisition: Acquire data using:

- T1-weighted and T2-weighted structural sequences

- T2*-weighted GRE for susceptibility-weighted contrast

- Diffusion-weighted imaging with multiple b-values

- Resting-state fMRI for functional connectivity assessment

Reference Standard: Include motion-free scans for each participant as a baseline for artifact quantification.

Artifact Assessment: Utilize quantitative metrics including:

- Image intensity variance in homogeneous regions

- Edge sharpness at tissue boundaries

- Signal-to-fluctuation noise ratio in time-series data

- Functional connectivity stability in network hubs

Protocol for Validation of Correction Algorithms

Robust validation of motion correction methods requires carefully designed experiments:

Dataset Curation: Utilize public datasets with paired motion-corrupted and motion-free data where available [21]. The Diff5T dataset provides valuable k-space data for method development and benchmarking [21].

Performance Metrics: Evaluate using quantitative measures including:

- Peak signal-to-noise ratio (PSNR)

- Structural similarity index (SSIM)

- Mean squared error (MSE) in region-of-interest analyses

- Anatomical fidelity using validated segmentation pipelines
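Two of the listed measures, MSE and PSNR restricted to a region of interest, can be computed as follows. This is a minimal sketch: the function names are hypothetical, and using the reference image's intensity range within the ROI as the PSNR peak value is an assumption that should be matched to whatever convention a given benchmark uses.

```python
import numpy as np

def roi_mse(reference, corrected, roi_mask):
    """Mean squared error between two images inside a boolean ROI mask."""
    ref = np.asarray(reference, dtype=float)[roi_mask]
    cor = np.asarray(corrected, dtype=float)[roi_mask]
    return float(np.mean((ref - cor) ** 2))

def roi_psnr(reference, corrected, roi_mask, data_range=None):
    """Peak signal-to-noise ratio in dB inside the ROI.

    `data_range` defaults to the intensity range of the reference within the
    ROI (an assumption; some pipelines use the full-image maximum instead).
    """
    ref = np.asarray(reference, dtype=float)[roi_mask]
    if data_range is None:
        data_range = ref.max() - ref.min()
    mse = roi_mse(reference, corrected, roi_mask)
    if mse == 0:
        return float("inf")   # identical images
    return float(10.0 * np.log10(data_range ** 2 / mse))

reference = np.array([[0.0, 10.0], [20.0, 30.0]])
corrected = reference + 1.0
mask = np.ones_like(reference, dtype=bool)
print(roi_mse(reference, corrected, mask), roi_psnr(reference, corrected, mask))
```

Passing a separate mask for each tissue class yields the per-region comparison that the regional analysis step calls for.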

Regional Analysis: Assess performance separately for:

- Cortical gray matter

- White matter tracts

- Deep gray matter structures

- Posterior fossa contents

Clinical Validation: Correlate motion-corrected image quality with quantitative biomarkers relevant to drug development, such as hippocampal volume in Alzheimer's trials or lesion load in multiple sclerosis studies.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Motion Artifact Research

| Resource | Function | Example Applications |
|---|---|---|
| Diff5T Dataset [21] | 5.0 Tesla diffusion MRI with raw k-space data | Method benchmarking, artifact simulation |
| Cam-CAN Dataset [20] | Multi-modal aging study with functional connectomes | Aging-related motion vulnerability studies |
| Motion-Sampling Plots [14] | Framework for predicting artifact appearance | Sequence optimization, protocol design |
| JDAC Framework [18] | Joint denoising and artifact correction | Processing of low-quality clinical scans |
| PHIMO+ Algorithm [15] | Physics-informed motion correction for T2* mapping | Quantitative susceptibility mapping, mqBOLD |
| DIMA Framework [19] | Unsupervised correction using diffusion models | Clinical data with no motion-free references |
| Leverage-Score Feature Selection [20] | Identification of motion-resistant connectome features | Functional connectivity studies in aging |

Visualization of Motion Artifact Characterization Workflows

[Figure: Motion Artifact Characterization and Correction Workflow]

[Figure: Computational Frameworks for Motion Correction]

The spatial signatures of motion artifacts in brain MRI—ghosting, blurring, and signal loss—follow predictable patterns across brain regions based on interactions between motion parameters, k-space sampling strategies, and regional anatomical characteristics. Understanding these patterns is essential for researchers and drug development professionals seeking to maximize data quality and validity in neuroimaging studies.

Methodologies such as motion-sampling plots, physics-informed motion detection, and joint denoising-artifact correction frameworks provide powerful tools for characterizing and mitigating these artifacts. By incorporating these approaches into standardized experimental protocols and leveraging emerging datasets and algorithms, the neuroscience community can significantly improve the reliability of neuroimaging biomarkers in both basic research and clinical trials.

Future directions in this field include the development of region-specific correction algorithms, real-time motion detection and compensation systems, and standardized quality control pipelines that account for the spatial distribution of motion artifacts. Such advances will be particularly valuable for longitudinal studies tracking subtle changes in brain structure and function, where consistent image quality is essential for detecting treatment effects.

Motion artifacts represent a significant source of noise in neuroimaging, critically impacting the fidelity of data acquisition and interpretation in brain research. These artifacts manifest in distinct patterns across different imaging modalities, each with unique implications for spatial distribution analysis and functional connectivity mapping. Understanding these modality-specific manifestations is paramount for developing effective correction strategies and ensuring the validity of neuroscientific findings, particularly in clinical and drug development contexts where accurate spatial localization of brain activity is essential.

The spatial distribution of motion artifacts is intrinsically linked to the fundamental physical principles underlying each imaging technology. Functional Magnetic Resonance Imaging (fMRI) measures the blood-oxygen-level-dependent (BOLD) signal, which is highly sensitive to head displacement due to the requirement for precise magnetic field homogeneity [22]. Functional Near-Infrared Spectroscopy (fNIRS) detects changes in hemoglobin concentrations through optode-scalp coupling, where motion affects signal quality through optode displacement and altered light transmission paths [23] [24]. Structural MRI, while not measuring dynamic function, suffers from spatial blurring and reconstruction artifacts that compromise anatomical accuracy [14] [18]. These differential mechanisms result in characteristic artifact patterns that necessitate tailored correction approaches for each modality, particularly in research focusing on the spatial organization of brain networks.

Fundamental Principles and Artifact Generation Mechanisms

Physical Basis of Artifact Formation

The generation of motion artifacts in neuroimaging modalities stems from their distinct physical measurement principles. fMRI relies on detecting minute changes in magnetic susceptibility caused by variations in blood oxygenation. Head motion disrupts the static magnetic field homogeneity, leading to spin history effects and phase inconsistencies during k-space sampling [14]. The spatial manifestation of these artifacts depends critically on the interaction between the motion trajectory and k-space sampling pattern, with motion discontinuities near the center of k-space or aligned with slow phase-encoding directions producing particularly severe artifacts [14].

fNIRS operates on different principles, utilizing near-infrared light (650-1000 nm wavelengths) to measure concentration changes in oxygenated (HbO) and deoxygenated hemoglobin (HbR) in cortical tissue [23]. Motion artifacts in fNIRS primarily occur through two mechanisms: optode displacement relative to the scalp, which alters the light-coupling efficiency, and changes in pressure on the scalp, which modulates peripheral blood flow in superficial tissues [24]. Unlike fMRI, fNIRS artifacts are typically channel-specific rather than affecting the entire image, though their impact can be substantial due to the relatively small signal changes associated with neural activity.

Characterization of Motion Artifact Types

Motion artifacts can be categorized based on their temporal characteristics and spatial extent. In fNIRS, researchers have classified artifacts into four distinct types: Type A (sharp spikes with standard deviation >50 from mean within 1 second), Type B (peaks with standard deviation >100 lasting 1-5 seconds), Type C (gentle slopes with standard deviation >300 over 5-30 seconds), and Type D (slow baseline shifts >30 seconds with standard deviation >500) [23]. This classification provides a framework for developing and evaluating targeted correction algorithms.
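The four-type scheme can be expressed as a small lookup on segment duration and deviation. The sketch below is an assumption-laden reading of the classification: the function name is hypothetical, the deviation is taken to be in the same units as the thresholds reported in [23], and the handling of boundary durations (exactly 1, 5, or 30 seconds) is a choice made here rather than something specified in the text.

```python
def classify_fnirs_artifact(duration_s, deviation):
    """Return 'A', 'B', 'C', 'D', or None for a candidate artifact segment.

    duration_s : length of the segment in seconds
    deviation  : deviation from the mean, in the units used by the thresholds
                 quoted above (assumed; check against the source study)
    """
    if duration_s <= 1 and deviation > 50:
        return "A"   # sharp spike
    if 1 < duration_s <= 5 and deviation > 100:
        return "B"   # peak
    if 5 < duration_s <= 30 and deviation > 300:
        return "C"   # gentle slope
    if duration_s > 30 and deviation > 500:
        return "D"   # slow baseline shift
    return None      # does not meet any of the four criteria

for segment in [(0.5, 60), (3, 150), (10, 350), (60, 600), (3, 80)]:
    print(segment, classify_fnirs_artifact(*segment))
```

Detecting the candidate segments in the first place is a separate step that this sketch does not cover.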

In MRI and fMRI, artifacts are often characterized by their appearance in reconstructed images, including ghosting (replication of structures along the phase-encoding direction), spin-history effects (signal loss due to intra-slice motion), and blurring (loss of spatial resolution) [14] [18]. The specific manifestation depends on the sampling strategy (e.g., Cartesian, stack-of-stars, or kooshball trajectories) and the nature of the motion, with recent research identifying nine distinct categories of motion artifacts in 3D MRI [14].

Table 1: Comparative Characteristics of Motion Artifacts Across Neuroimaging Modalities

| Characteristic | fMRI | fNIRS | Structural MRI |
|---|---|---|---|
| Primary Mechanism | Magnetic field inhomogeneity, spin history effects | Optode-scalp decoupling, pressure changes | K-space inconsistencies, sampling errors |
| Spatial Pattern | Whole-image distortions, ghosting along phase-encode direction | Channel-specific artifacts, superficial cortical regions | Volumetric blurring, global resolution loss |
| Temporal Classes | Low-frequency drift, sudden jumps | Type A (spikes), B (peaks), C (slopes), D (baseline shifts) | Transient vs. persistent motion effects |
| Depth Sensitivity | Whole-brain (cortical and subcortical) | Superficial cortex (1-1.5 cm depth) | Whole-brain |
| Sampling Interaction | Strong dependence on k-space trajectory | Minimal sampling rate dependence | Strong dependence on k-space trajectory |

Modality-Specific Artifact Profiles and Spatial Representations

fMRI Artifact Patterns and Spatial Distribution

Functional MRI exhibits distinctive motion artifact patterns that directly impact spatial localization accuracy. The blood-oxygen-level-dependent (BOLD) contrast mechanism underlying fMRI is particularly vulnerable to motion-induced magnetic field fluctuations. Rigid head motion interacts with k-space sampling strategies to produce characteristic artifacts whose appearance can be predicted using motion-sampling plots [14]. These artifacts are especially pronounced when motion discontinuities occur near the center of k-space or align with slow phase-encoding directions [14].

The spatial specificity of fMRI is further complicated by the phenomenon of spatial misregistration between functional scans and anatomical reference images. Even submillimeter movements can cause significant signal variations that mimic true neural activation patterns, particularly in regions near tissue boundaries with strong magnetic susceptibility gradients (e.g., orbitofrontal cortex, temporal lobes) [22]. Recent studies utilizing simultaneous fNIRS-fMRI have revealed that motion artifacts in fMRI can reduce the positive predictive value for detecting true brain activation to as low as 41.5% in within-subject analyses [25], highlighting the critical impact of motion on spatial accuracy.

fNIRS Artifact Patterns and Spatial Considerations

Functional NIRS exhibits a different artifact profile dominated by signal spikes and baseline shifts resulting from optode movement relative to the scalp. The spatial distribution of fNIRS artifacts is inherently linked to probe design and placement. Unlike fMRI, where motion affects the entire image, fNIRS artifacts typically impact specific channels, though their effects can propagate through analysis pipelines [23] [24]. The limited penetration depth of near-infrared light (approximately 1-1.5 cm) confines fNIRS measurements to superficial cortical regions, making them particularly vulnerable to systemic physiological noise from scalp blood flow that can be motion-induced [26].

The reproducibility of fNIRS spatial measurements is significantly affected by variations in optode placement across sessions. Studies have demonstrated that increased shifts in optode position correlate with reduced spatial overlap of detected activation across multiple sessions [27]. This has important implications for longitudinal studies and clinical applications where consistent spatial localization is essential. Research has shown that oxyhemoglobin (HbO) measurements are significantly more reproducible across sessions than deoxyhemoglobin (HbR) measurements [27], suggesting that HbO may be preferable for studies requiring high spatial reliability.

Structural MRI Artifact Patterns

Structural MRI, while not measuring dynamic function, faces unique motion artifact challenges that compromise anatomical accuracy. Motion during acquisition causes k-space inconsistencies that manifest as blurring, ghosting, and resolution loss in reconstructed images [14] [18]. The specific appearance of these artifacts depends on the interaction between the motion trajectory and the k-space sampling pattern, with certain sampling strategies (e.g., Cartesian) being more vulnerable to particular motion types than others (e.g., radial or spiral trajectories) [14].

In 3D MRI acquisitions, motion artifacts demonstrate complex spatial dependencies based on the direction and timing of movement relative to the phase-encoding sequence. Recent research has shown that the severity and nature of artifacts depend heavily on the k-space distribution of motion states, which can be visualized and interpreted using motion-sampling plots [14]. These artifacts are particularly problematic in clinical populations who may have difficulty remaining still, such as children, elderly patients, or individuals with neurological disorders affecting motor control.

Table 2: Quantitative Impact of Motion on Signal Quality and Spatial Accuracy

| Metric | fMRI | fNIRS | Structural MRI |
|---|---|---|---|
| Temporal Resolution | 0.3-2 Hz [22] | Up to 10 Hz (typically 5-10 Hz) [24] | N/A (structural acquisition) |
| Spatial Resolution | 1-3 mm (high) [22] | 1-3 cm (moderate) [28] | 0.5-1 mm (very high) |
| Spatial Overlap with Ground Truth | 47.25% within-subject [25] | Varies with optode placement consistency [27] | Qualitative assessment of anatomical accuracy |
| Positive Predictive Value | 41.5% within-subject [25] | Dependent on artifact correction method [29] | N/A |
| Reproducibility | High with motion correction | HbO more reproducible than HbR [27] | High with motion correction |

Methodologies for Motion Artifact Correction

fMRI Motion Correction Techniques

fMRI motion correction primarily relies on image registration algorithms that realign sequential volumes to a reference image. Tools such as FSL MCFLIRT and AFNI 3dVolReg calculate rigid-body transformation parameters (three translations and three rotations) to minimize variance between volumes [28]. More advanced approaches include prospective motion correction, which adjusts the imaging field of view in real-time based on head tracking, and sampling strategies less sensitive to motion, such as multiband acquisitions and radial sampling trajectories [14].
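The six rigid-body parameters map to a single 4x4 homogeneous transform per volume. The sketch below shows one way to build it. The axis assignment (pitch about x, roll about y, yaw about z) and the rotation order are assumptions made for illustration, since conventions differ between packages, and this is not the parameterization of any specific tool.

```python
import numpy as np

def rigid_body_matrix(tx, ty, tz, pitch, roll, yaw):
    """Build a 4x4 homogeneous rigid-body transform.

    Translations in mm, angles in radians. Rotations are applied in the order
    x (pitch), then y (roll), then z (yaw); this order is an assumption.
    """
    cx, sx = np.cos(pitch), np.sin(pitch)
    cy, sy = np.cos(roll), np.sin(roll)
    cz, sz = np.cos(yaw), np.sin(yaw)
    rx = np.array([[1, 0, 0], [0, cx, -sx], [0, sx, cx]])
    ry = np.array([[cy, 0, sy], [0, 1, 0], [-sy, 0, cy]])
    rz = np.array([[cz, -sz, 0], [sz, cz, 0], [0, 0, 1]])
    m = np.eye(4)
    m[:3, :3] = rz @ ry @ rx      # combined rotation
    m[:3, 3] = [tx, ty, tz]       # translation
    return m

# A 90-degree yaw plus a translation moves the point (1, 0, 0).
point = np.array([1.0, 0.0, 0.0, 1.0])
print(rigid_body_matrix(1.0, 2.0, 3.0, 0.0, 0.0, np.pi / 2) @ point)
```

Realignment then amounts to resampling each volume through the inverse of its estimated transform.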

Recent innovations have explored the use of deep learning approaches for joint image denoising and motion artifact correction. The Joint Image Denoising and Artifact Correction framework employs iterative learning with an adaptive denoising model and an anti-artifact model to progressively improve image quality [18]. This approach incorporates a novel gradient-based loss function designed to maintain the integrity of brain anatomy throughout the correction process, addressing the limitation of traditional methods that often treat denoising and motion correction as separate tasks [18].

fNIRS Motion Correction Algorithms

Multiple algorithmic approaches have been developed to address motion artifacts in fNIRS data, each with distinct strengths and limitations. Wavelet-based methods utilize multiscale decomposition to identify and remove artifact components in the wavelet domain, demonstrating particular efficacy for spike artifacts [23] [29]. Temporal Derivative Distribution Repair employs a robust statistical approach to identify and downweight outliers in the temporal derivative of fNIRS signals, making it effective for both spike and baseline shift artifacts [29].

Other commonly used techniques include spline interpolation (MARA), which identifies artifact segments and replaces them with spline interpolants [29], principal component analysis, which removes components with variance patterns characteristic of motion [23], and correlation-based signal improvement, which exploits the negative correlation between HbO and HbR to identify and remove motion artifacts [29]. Comparative studies have found that the moving average and wavelet methods yield the best outcomes for pediatric data [23], while TDDR and wavelet filtering are most effective for functional connectivity analysis [29].
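Correlation-based signal improvement is compact enough to show in full. The sketch below follows the commonly published formulation, in which the amplitude ratio of the two chromophores is estimated from their standard deviations. The function name is hypothetical, and the method's core assumption (true HbO and HbR are perfectly negatively correlated) is built into the arithmetic.

```python
import numpy as np

def cbsi(hbo, hbr):
    """Correlation-based signal improvement for one fNIRS channel.

    Returns corrected (hbo, hbr) traces. Assumes both inputs are
    concentration-change time series of equal length and that hbr is not
    constant (otherwise the amplitude ratio is undefined).
    """
    hbo = np.asarray(hbo, dtype=float) - np.mean(hbo)
    hbr = np.asarray(hbr, dtype=float) - np.mean(hbr)
    alpha = np.std(hbo) / np.std(hbr)          # amplitude ratio HbO : HbR
    hbo_corrected = 0.5 * (hbo - alpha * hbr)  # shared (artifact) part cancels
    hbr_corrected = -hbo_corrected / alpha     # enforce negative correlation
    return hbo_corrected, hbr_corrected

# Synthetic check: an artifact that moves both signals in the same direction.
true_hbo = np.array([1.0, -1.0, 1.0, -1.0, 1.0, -1.0, 1.0, -1.0])
artifact = 3.0 * np.array([1.0, 1.0, -1.0, -1.0, 1.0, 1.0, -1.0, -1.0])
hbo_c, hbr_c = cbsi(true_hbo + 2.0 * artifact, -true_hbo / 2.0 + artifact)
print(hbo_c)
```

Because the corrected HbR is a scaled copy of the corrected HbO, the two outputs no longer carry independent information.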

Multimodal Integration for Enhanced Correction

The integration of multiple neuroimaging modalities has enabled innovative approaches to motion artifact correction. Simultaneous fNIRS-fMRI recordings allow the use of high-temporal-resolution motion information derived from fMRI to correct fNIRS data. The AMARA-fMRI algorithm reconstructs high-resolution motion traces from slice-level acquisition times in simultaneous multislice fMRI, enabling effective motion correction in fNIRS without requiring MR-compatible accelerometers [28].

This multimodal approach capitalizes on the complementary strengths of each technique: fMRI's high spatial resolution and fNIRS's superior temporal resolution and portability [22]. Studies have demonstrated that such integration improves the detection of activation in deoxyhemoglobin and shows high overlap with fMRI activation when considering both HbO and HbR signals [28]. The spatial correspondence between fNIRS and fMRI detection of task-related activity has been shown to be good in terms of true positive rate, with fNIRS overlapping up to 68% of fMRI activation for group analyses [25].

Experimental Protocols for Artifact Assessment

Protocol Design for Motion Artifact Characterization

Systematic assessment of motion artifacts requires carefully designed experimental protocols that incorporate controlled motion conditions. For fNIRS studies, protocols should include task paradigms known to elicit motion, such as motor execution tasks or language production tasks, particularly in challenging populations like children [23]. These should be combined with periods of rest to establish baseline signal characteristics. The use of structured motion artifact classification (Types A-D) enables standardized quantification and comparison across studies [23].

For fMRI studies, protocols should incorporate various k-space sampling strategies (Cartesian, stack-of-stars, kooshball) to evaluate their interaction with different motion types [14]. The use of real-time pose tracking devices during acquisition allows precise correlation between motion parameters and artifact appearance [14]. Experimental designs should include both task-based and resting-state conditions to evaluate motion effects on both activation maps and functional connectivity measures.

Validation Metrics and Performance Assessment

Rigorous validation of motion correction methods requires multiple complementary metrics. For quantitative comparison, the receiver operating characteristic analysis provides sensitivity-specificity curves that evaluate the ability of correction methods to preserve true signals while removing artifacts [29]. Spatial overlap metrics quantify the correspondence between activation maps or functional networks derived from corrected data and ground truth references [25].
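Spatial overlap between a thresholded map and a reference map reduces to counting voxels or channels. The following sketch computes true positive rate, positive predictive value, and the Dice coefficient from two binary maps. The function name is hypothetical, and the exact overlap definition used in [25] may differ from these standard forms.

```python
import numpy as np

def overlap_metrics(test_map, reference_map):
    """Compare a binary activation map against a binary reference map."""
    test = np.asarray(test_map, dtype=bool)
    ref = np.asarray(reference_map, dtype=bool)
    tp = np.count_nonzero(test & ref)     # active in both
    fp = np.count_nonzero(test & ~ref)    # active only in the test map
    fn = np.count_nonzero(~test & ref)    # active only in the reference
    nan = float("nan")
    return {
        "true_positive_rate": tp / (tp + fn) if tp + fn else nan,
        "positive_predictive_value": tp / (tp + fp) if tp + fp else nan,
        "dice": 2 * tp / (2 * tp + fp + fn) if tp + fp + fn else nan,
    }

print(overlap_metrics([1, 1, 0, 0, 1], [1, 0, 0, 1, 1]))
```

Reporting true positive rate and positive predictive value together avoids rewarding a method that simply labels more of the brain as active.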

Additional important metrics include temporal signal-to-noise ratio, which measures the stability of the signal over time, and graph theory metrics (e.g., network degree, clustering coefficient) for evaluating the impact on functional connectivity patterns [29]. Reproducibility across multiple sessions provides a crucial measure of reliability for longitudinal studies [27]. For structural MRI, qualitative assessment by expert radiologists remains an important validation approach alongside quantitative metrics [18].
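Temporal signal-to-noise ratio is usually taken as the temporal mean divided by the temporal standard deviation of each voxel or channel. A minimal sketch under that definition follows. The function name and the use of the sample standard deviation are assumptions, and any detrending is left to the caller.

```python
import numpy as np

def temporal_snr(timeseries, axis=-1):
    """Temporal SNR (mean / standard deviation) along the time axis.

    Expects at least a 2D array (e.g. voxels x time). Entries with zero
    temporal variance are returned as NaN rather than infinity.
    """
    ts = np.asarray(timeseries, dtype=float)
    mean = ts.mean(axis=axis)
    std = ts.std(axis=axis, ddof=1)
    return np.divide(mean, std, out=np.full_like(mean, np.nan), where=std > 0)

data = np.array([[99.0, 101.0, 99.0, 101.0],   # fluctuating voxel
                 [5.0, 5.0, 5.0, 5.0]])        # constant voxel
print(temporal_snr(data))
```

Comparing this map before and after correction, region by region, shows where a method stabilizes the signal and where it does not.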

Table 3: Performance Comparison of fNIRS Motion Correction Algorithms

| Algorithm | Best For | Advantages | Limitations |
|---|---|---|---|
| Wavelet Filtering | Spike artifacts (Type A), functional connectivity analysis | Automatic, no additional hardware required | Can exacerbate baseline shifts |
| Temporal Derivative Distribution Repair | Mixed artifact types, online processing | Robust statistical foundation, real-time capability | Assumptions about derivative distribution |
| Spline Interpolation | Isolated motion artifacts | Preserves signal shape outside artifacts | Requires accurate artifact detection |
| Principal Component Analysis | Global artifact patterns | Effective for structured noise | May remove neural signal in low-channel setups |
| Correlation-Based Signal Improvement | Co-occurring HbO/HbR artifacts | Physiologically motivated model | Assumes perfect negative HbO-HbR correlation |
| Kalman Filtering | Progressive motion trends | Model-based approach | Requires parameter tuning |

The Scientist's Toolkit: Essential Research Reagents and Materials

Homer2 Software Package: A comprehensive MATLAB-based analysis suite for fNIRS data preprocessing, including implementation of multiple motion correction algorithms such as wavelet filtering and spline interpolation [23].

TechEN-CW6 fNIRS System: A continuous-wave fNIRS system operating at 690 and 830 nm wavelengths, suitable for pediatric and adult studies with configurable source-detector arrangements [23].

NIRSport2 Portable fNIRS System: A continuous-wave portable fNIRS device with 16 LED light sources (760 and 850 nm) and 15 silicon photodiode detectors, enabling flexible experimental setups outside laboratory environments [26].

Multimodal fNIRS-fMRI Probes: MR-compatible optode assemblies integrated into phased-array RF coils, allowing simultaneous acquisition while maintaining precise alignment between modalities [28].

FSL MCFLIRT: FMRIB Software Library module for motion correction in fMRI time-series data using rigid-body transformation with trilinear interpolation [28].

BrainVoyager QX: Comprehensive software package for fMRI analysis including preprocessing, statistical analysis, and visualization, with specialized tools for motion detection and correction [26].

ADNI Dataset: Large-scale public database of MRI scans including T1-weighted structural images used for training and validation of denoising and motion correction algorithms [18].

Real-Time Pose Tracking Systems: MR-compatible motion tracking devices that provide continuous head position data during scanning for prospective motion correction and artifact analysis [14].

Motion artifacts manifest in fundamentally distinct patterns across MRI, fMRI, and fNIRS neuroimaging modalities, each requiring specialized correction approaches tailored to their specific mechanisms and spatial characteristics. The spatial distribution of these artifacts is inextricably linked to the underlying physical principles of each technology, from k-space sampling interactions in fMRI to optode-scalp coupling in fNIRS. Effective artifact mitigation necessitates a comprehensive understanding of these modality-specific manifestations, particularly for research focusing on the spatial organization of brain function.

Advancements in motion correction increasingly leverage multimodal integration and machine learning approaches that jointly address multiple artifact types while preserving neural signals. The development of standardized evaluation metrics and experimental protocols for assessing correction efficacy remains crucial for validating these methods across diverse populations and experimental contexts. As neuroimaging continues to expand into more naturalistic settings and clinical applications, robust motion artifact management will play an increasingly critical role in ensuring the spatial accuracy and reliability of neuroscientific findings.

From Proactive to Post-Hoc: Cutting-Edge Techniques for Motion Artifact Correction

Motion artifacts represent a fundamental challenge in non-invasive brain imaging, significantly impacting the data quality and validity of neuroscientific findings. These artifacts arise from subject movement during data acquisition, causing a range of deleterious effects from signal distortion to complete data loss. In the context of studying the spatial distribution of neural activity, motion introduces systematic biases that can obscure true brain network dynamics and lead to spurious interpretations of functional specialization. The problem is particularly acute in clinical populations where motion control is difficult, potentially confounding research on neurological and psychiatric disorders. Understanding and mitigating these artifacts is therefore not merely a technical exercise but a prerequisite for accurate brain mapping. Hardware-based solutions, including accelerometers, inertial measurement units (IMUs), and optical tracking systems, provide a direct means to quantify and correct for motion effects by capturing head movement with high temporal precision. This section examines these critical technologies, their implementation methodologies, and their role in preserving the spatial fidelity of brain activity maps.

The Spatial Distribution of Motion Artifacts: A Brain Research Perspective

Motion artifacts are not uniform in their impact across the brain; their spatial distribution is influenced by imaging modality, hardware configuration, and neuroanatomy. In functional near-infrared spectroscopy (fNIRS), optode-skin decoupling caused by head movements produces signal anomalies that vary in amplitude and morphology—from rapid spikes to slow baseline shifts—depending on the nature and severity of motion [30]. These artifacts can create false patterns of functional connectivity if uncorrected, particularly in resting-state studies where subtle correlations define network architecture.

For magnetic resonance imaging (MRI), motion disrupts the sequential k-space data acquisition, leading to ghosting, blurring, and signal loss in reconstructed images [2]. The appearance and location of these artifacts depend on the interaction between the motion vector, the pulse sequence, and k-space trajectory. Critically, motion-induced signal changes can systematically vary across brain regions, potentially mimicking disease-related atrophy or activation patterns [31]. This is especially problematic for large-scale brain initiatives and clinical data warehouses, where automated analysis of images with varying motion contamination can introduce profound biases [31].

Table 1: Classification and Spatial Manifestation of Motion Artifacts in Brain Imaging

| Artifact Type | Primary Cause | Spatial Manifestation in Brain Data | Commonly Affected Brain Regions |
|---|---|---|---|
| Spikes (fNIRS) | Sudden optode decoupling | High-amplitude, transient signal deviations | Regions under mobile optodes (prefrontal, motor cortex) |
| Baseline Shifts (fNIRS) | Slow optode displacement | Low-frequency signal drift | All measured regions, particularly problematic for long channels |
| Ghosting (MRI) | Periodic motion | Replicated structures along phase-encode direction | Cortical boundaries, high-contrast interfaces |
| Blurring (MRI) | Continuous motion | Loss of structural definition and edge contrast | Fine structures (hippocampus, brainstem) |
| Signal Loss (MRI) | Spin dephasing | Focal signal dropout | Tissue-bone interfaces, medial temporal lobe |

Understanding this spatial dimension is crucial for developing targeted correction strategies. Hardware-based motion tracking provides the essential kinematic data needed to disambiguate true neural signals from motion-induced artifacts, thereby preserving the topographic integrity of brain maps.

Core Hardware Technologies for Motion Tracking

Accelerometers and Inertial Measurement Units (IMUs)

Operating Principle: Accelerometers measure proper acceleration along one or more axes, while IMUs typically combine triaxial accelerometers, gyroscopes (measuring angular velocity), and magnetometers (sensing orientation relative to Earth's magnetic field) to provide comprehensive motion data [32] [33]. Through sensor fusion algorithms, IMUs can track head position and orientation with high temporal resolution (>100 Hz).
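As an illustration of what sensor fusion means in practice, the following sketch implements a complementary filter, one of the simplest fusion schemes. It is offered as an assumption for illustration, not as the algorithm used in [32] or [33]; commercial IMUs typically rely on more elaborate estimators. The axis convention (x forward, z up), the function name `complementary_pitch`, and the default `alpha` are hypothetical.

```python
import numpy as np

def complementary_pitch(acc, gyro_y, fs, alpha=0.98):
    """Fuse accelerometer and gyroscope readings into a pitch estimate (rad).

    acc    : (n, 3) accelerations in m/s^2; assumed axes x forward, z up
    gyro_y : (n,) angular velocity about the left-right axis in rad/s
    fs     : sampling rate in Hz
    alpha  : weight on the integrated gyroscope path (hypothetical default)
    """
    dt = 1.0 / fs
    # Tilt from the gravity direction: drift-free but noisy during movement.
    pitch_acc = np.arctan2(-acc[:, 0], np.hypot(acc[:, 1], acc[:, 2]))
    pitch = np.empty(len(gyro_y))
    pitch[0] = pitch_acc[0]
    for k in range(1, len(gyro_y)):
        # Integrated gyroscope: smooth, but drifts if the sensor has a bias.
        gyro_path = pitch[k - 1] + gyro_y[k] * dt
        pitch[k] = alpha * gyro_path + (1.0 - alpha) * pitch_acc[k]
    return pitch
```

The design choice is that the gyroscope dominates at short timescales, where it is accurate, while the accelerometer slowly pulls the estimate back toward the gravity-referenced tilt, limiting drift.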

Integration in Brain Imaging: In fNIRS and EEG, these sensors are directly mounted on the head cap or optode holders to capture head movement [33]. The acquired motion data serves two primary functions: (1) as a reference signal for adaptive filtering to remove motion artifacts from physiological signals, and (2) as a quality metric to identify and exclude motion-corrupted epochs from analysis.

Key Methodologies:

- Active Noise Cancellation (ANC): Uses accelerometer output as a reference signal in an adaptive filter to subtract motion artifacts from fNIRS/EEG data [33].

- Accelerometer-Based Motion Artifact Removal (ABAMAR): Identifies motion-contaminated segments based on accelerometer signal magnitude and applies signal correction [33].

- BLind Source Separation, Accelerometer-based Artifact Rejection, and Detection (BLISSA2RD): Combines blind source separation with accelerometer guidance to isolate and remove motion components [33].
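The idea of identifying motion-contaminated segments from accelerometer magnitude can be sketched in a few lines. This is a minimal illustration and not the published ABAMAR algorithm; the function name `flag_motion`, the threshold, and the padding window are hypothetical values that would need tuning for a given sensor and paradigm.

```python
import numpy as np

def flag_motion(acc, fs, threshold, pad_s=0.5):
    """Return a boolean mask marking samples treated as motion-contaminated.

    acc       : (n, 3) accelerometer samples in m/s^2
    fs        : sampling rate in Hz
    threshold : allowed deviation of |acc| from its resting value (m/s^2)
    pad_s     : seconds flagged on each side of a detection (hypothetical)
    """
    magnitude = np.linalg.norm(acc, axis=1)
    # At rest the magnitude sits near gravity; the median estimates that level.
    deviation = np.abs(magnitude - np.median(magnitude))
    hits = deviation > threshold
    # Widen each detection so the onset and decay of the artifact are covered.
    pad = int(round(pad_s * fs))
    kernel = np.ones(2 * pad + 1)
    return np.convolve(hits.astype(float), kernel, mode="same") > 0
```

The resulting mask can serve either to exclude epochs or to mark the segments to which a correction step is applied.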

Optical Motion Tracking Systems

Operating Principle: Optical tracking systems use multiple cameras to track the 3D position of reflective or active markers placed on the subject's head [32]. By triangulating marker positions from different camera viewpoints, these systems provide high-precision spatial coordinates (sub-millimeter accuracy) for rigid-body motion.
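Triangulation from several viewpoints can be written as a small linear least-squares problem. The sketch below uses the standard direct linear transform (DLT) formulation and assumes each camera is already calibrated, so that its 3x4 projection matrix is known; the function name `triangulate` and the camera parameters in the usage lines are illustrative assumptions, not details from [32].

```python
import numpy as np

def triangulate(projections, pixels):
    """Linear (DLT) triangulation of one marker seen by two or more cameras.

    projections : list of 3x4 camera projection matrices (assumed calibrated)
    pixels      : list of (u, v) image coordinates of the marker, one per camera
    """
    rows = []
    for P, (u, v) in zip(projections, pixels):
        # Each view contributes two linear constraints on the homogeneous point.
        rows.append(u * P[2] - P[0])
        rows.append(v * P[2] - P[1])
    A = np.stack(rows)
    # The solution is the right singular vector with the smallest singular value.
    _, _, vt = np.linalg.svd(A)
    X = vt[-1]
    return X[:3] / X[3]

# Usage with two hypothetical cameras 0.5 m apart, both looking along +z.
K = np.array([[800.0, 0.0, 320.0], [0.0, 800.0, 240.0], [0.0, 0.0, 1.0]])
P1 = K @ np.hstack([np.eye(3), np.zeros((3, 1))])
P2 = K @ np.hstack([np.eye(3), np.array([[-0.5], [0.0], [0.0]])])
marker = np.array([0.1, -0.05, 2.0])

def project(P, X):
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]

estimate = triangulate([P1, P2], [project(P1, marker), project(P2, marker)])
```

With noisy pixel measurements the recovered position is only approximate, and additional cameras add rows to the same system, which is one reason multi-camera setups improve precision.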

Integration in Brain Imaging: In MRI research, optical tracking is often implemented for prospective motion correction, where real-time head position data actively adjusts the imaging sequence to maintain consistent spatial encoding [2]. This approach is particularly valuable for high-resolution structural and functional scans where even sub-millimeter movement degrades image quality.

Performance Validation: Studies comparing optical tracking to inertial sensors show that optical systems generally provide superior accuracy for spatial displacement measurements, while IMUs excel at capturing high-frequency vibrations and tremors. The choice between technologies depends on the specific motion profile of interest and practical constraints of the imaging environment.

Table 2: Technical Comparison of Motion Tracking Technologies for Brain Imaging

| Parameter | Accelerometers/IMUs | Optical Tracking Systems | Practical Implications for Brain Research |
|---|---|---|---|
| Spatial Accuracy | Moderate (mm-cm range) | High (sub-mm range) | Optical preferred for precise spatial mapping |
| Temporal Resolution | Very High (>100 Hz) | High (50-200 Hz) | IMUs better for capturing tremor, micro-movements |
| Measurement Volume | Self-contained, unlimited | Limited by camera field-of-view | IMUs suitable for unconstrained movement paradigms |
| Line-of-Sight Requirement | None | Required for all markers | IMUs advantageous for prone positions, head turns |
| Setup Complexity | Low (wearable sensors) | High (camera calibration) | IMUs facilitate rapid subject setup |
| Susceptibility to Interference | Magnetic fields, metallic objects | Ambient light, marker occlusion | Consideration for specific imaging environments (MRI) |
| Integration with Brain Data | Direct synchronization with physiological signals | May require specialized interface hardware | IMUs offer simpler temporal alignment |

Experimental Protocols and Implementation

IMU Integration for fNIRS Motion Correction

Equipment and Sensor Placement:

- IMU Selection: Choose miniaturized IMUs (e.g., MTx, Xsens) with appropriate sampling rates (≥100 Hz) [32].

- Mounting: Securely attach IMUs to the head using double-sided adhesive tape with additional elastic straps to minimize sensor-skin movement [32]. Optimal placement is on the forehead or posterior head regions with minimal hair interference.

- Synchronization: Implement hardware or software triggering to synchronize IMU data acquisition with fNIRS recording using a common pulse signal [32].

Data Processing Workflow:

- Motion Data Acquisition: Record triaxial acceleration, angular velocity, and orientation data throughout the imaging session.

- Motion Artifact Identification: Apply threshold-based detection to accelerometer magnitude to flag motion-contaminated epochs.

- Adaptive Filtering: For ANC approaches, use the accelerometer signal as a reference input to an adaptive filter (e.g., LMS, RLS) to estimate and subtract motion artifacts from fNIRS signals [33].

- Validation: Compare motion-corrected fNIRS signals with simultaneously acquired quality metrics (e.g., short-separation channels) to verify artifact reduction.
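The adaptive filtering step in this workflow can be sketched with a normalized LMS (NLMS) filter, a common variant of LMS chosen here for its step-size stability. This is a minimal single-channel illustration, not the implementation from [33]; the function name `nlms_cancel`, the tap count, and the step size `mu` are hypothetical, and the sketch assumes the artifact is a linear, causal function of the accelerometer reference.

```python
import numpy as np

def nlms_cancel(signal, reference, n_taps=8, mu=0.1, eps=1e-8):
    """Subtract the part of `signal` that is linearly predictable from `reference`.

    signal    : (n,) measured fNIRS channel (physiology + motion artifact)
    reference : (n,) accelerometer-derived reference, sampled synchronously
    n_taps    : length of the adaptive FIR filter (hypothetical default)
    mu        : normalized step size, 0 < mu < 2 (hypothetical default)
    """
    weights = np.zeros(n_taps)
    cleaned = np.array(signal, dtype=float)
    for n in range(n_taps - 1, len(signal)):
        # Most recent reference samples, newest first.
        x = reference[n - n_taps + 1 : n + 1][::-1]
        artifact_estimate = weights @ x
        error = signal[n] - artifact_estimate
        # NLMS update: step scaled by the instantaneous reference power.
        weights += mu * error * x / (x @ x + eps)
        cleaned[n] = error
    return cleaned
```

The error signal is the cleaned output: whatever the filter cannot predict from the accelerometer is retained as physiology. A smaller `mu` adapts more slowly but distorts the retained signal less.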

Diagram 1: IMU-Based Motion Correction Workflow

Optical Tracking for MRI Motion Correction

System Configuration:

- Camera Setup: Position multiple infrared cameras around the scanner bore to maintain line-of-sight to head-mounted markers throughout the examination.

- Marker Configuration: Apply reflective markers in a non-collinear arrangement on a rigid frame attached to the head coil or directly to a custom head cap [31].

- Calibration: Perform system calibration using a reference object to establish the transformation between camera coordinates and scanner coordinates.
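One way to obtain the camera-to-scanner transformation in the calibration step is a least-squares rigid fit between corresponding points on the reference object, measured once in each coordinate system. The sketch below uses the Kabsch algorithm for this purpose; it is an illustrative assumption rather than the calibration procedure of any particular tracking product, and the function name `fit_rigid_transform` is hypothetical.

```python
import numpy as np

def fit_rigid_transform(src, dst):
    """Least-squares rotation R and translation t such that dst ≈ R @ src + t.

    src : (n, 3) reference-object points in camera coordinates
    dst : (n, 3) the same points in scanner coordinates (n >= 3, non-collinear)
    """
    src_centroid = src.mean(axis=0)
    dst_centroid = dst.mean(axis=0)
    # Cross-covariance of the centred point sets.
    H = (src - src_centroid).T @ (dst - dst_centroid)
    U, _, Vt = np.linalg.svd(H)
    # Guard against a reflection so that R is a proper rotation (det = +1).
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = dst_centroid - R @ src_centroid
    return R, t
```

The requirement for non-collinear points in this fit mirrors the non-collinear marker arrangement listed above: collinear points leave rotation about their common axis undetermined.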

Prospective Motion Correction Implementation:

- Real-Time Tracking: Continuously monitor head position relative to the magnet isocenter during sequence execution.

- Coordinate Transformation: Convert optical marker positions to scanner coordinate system using a predetermined transformation matrix.

- Sequence Adjustment: Dynamically update gradient orientations, RF frequencies, and slice positions to compensate for detected motion [2].

- Quality Assurance: Implement motion thresholds to trigger scan reacquisition if correction limits are exceeded.
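The geometric core of these four steps can be sketched with 4x4 homogeneous transforms. The code below is a simplified illustration under stated assumptions: the head motion is given as a rigid transform relative to the reference pose, the function names are hypothetical, and the 5 mm and 5 degree limits are placeholder values, not thresholds from [2]. Real sequences must also update gradient waveforms and RF frequency and phase, which are vendor-specific and omitted here.

```python
import numpy as np

def to_scanner_frame(T_motion_cam, T_cam_to_scanner):
    """Re-express a rigid head motion measured in camera coordinates
    in scanner coordinates (change of basis by conjugation)."""
    return T_cam_to_scanner @ T_motion_cam @ np.linalg.inv(T_cam_to_scanner)

def update_slice(T_motion, center_mm, normal):
    """Move the slice centre and normal with the head so that the same
    anatomy is excited after the motion."""
    R, t = T_motion[:3, :3], T_motion[:3, 3]
    return R @ center_mm + t, R @ normal

def exceeds_limits(T_motion, max_trans_mm=5.0, max_rot_deg=5.0):
    """True if the motion is larger than the (hypothetical) correction limits,
    in which case the affected data would be reacquired."""
    R, t = T_motion[:3, :3], T_motion[:3, 3]
    # Rotation angle from the trace of the rotation matrix.
    cos_angle = np.clip((np.trace(R) - 1.0) / 2.0, -1.0, 1.0)
    rot_deg = np.degrees(np.arccos(cos_angle))
    return bool(np.linalg.norm(t) > max_trans_mm or rot_deg > max_rot_deg)
```

In a real-time loop these functions would be called once per tracking update, with the slice geometry refreshed before the next excitation.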

Diagram 2: Prospective Motion Correction in MRI

Validation Protocols for Motion Correction Systems

Ground Truth Establishment:

- Conduct simultaneous recordings with multiple motion tracking modalities (e.g., IMU + optical) to establish convergent validity [32].

- Perform controlled motion experiments with known movement patterns (e.g., metronome-guided head rotations) to quantify system accuracy.

Performance Metrics:

- Temporal Accuracy: Measure synchronization precision between motion data and brain imaging signals.

- Spatial Accuracy: Quantify positional error against known displacement standards.

- Correction Efficacy: Evaluate improvement in standard image quality metrics (SNR, CNR) and functional connectivity measures after motion correction [30].
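The three metric families above can each be reduced to a short computation. The sketch below is illustrative: the function names are hypothetical, the lag estimate assumes both signals share one sampling rate, and the SNR and CNR formulas follow one common convention (ROI mean divided by the standard deviation of a noise region), which may differ from the definitions used in [30].

```python
import numpy as np

def estimate_lag(a, b, fs):
    """Temporal accuracy: seconds by which signal b trails signal a,
    taken from the peak of their cross-correlation."""
    a = a - a.mean()
    b = b - b.mean()
    xcorr = np.correlate(b, a, mode="full")
    lag_samples = np.argmax(xcorr) - (len(a) - 1)
    return lag_samples / fs

def position_rmse(estimated, truth):
    """Spatial accuracy: RMS Euclidean error between (n, 3) position arrays."""
    return np.sqrt(np.mean(np.sum((estimated - truth) ** 2, axis=1)))

def snr(signal_roi, noise_roi):
    """Signal-to-noise ratio: ROI mean over noise standard deviation."""
    return signal_roi.mean() / noise_roi.std()

def cnr(roi_a, roi_b, noise_roi):
    """Contrast-to-noise ratio between two tissue ROIs."""
    return abs(roi_a.mean() - roi_b.mean()) / noise_roi.std()
```

Comparing these values before and after correction, on the same regions of interest, gives a simple efficacy summary for a given dataset.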

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Materials for Hardware-Based Motion Tracking

| Component | Function/Purpose | Example Products/Specifications |
|---|---|---|
| Miniature IMU | Measures linear acceleration and angular velocity for motion tracking | Xsens MTx (3D accelerometer, 3D gyroscope, 3D magnetometer) [32] |
| Inertial Sensor System | Multi-sensor platform for full-body motion capture | Xsens Motion Tracking System (multiple synchronized MTx units) [32] |
| Optical Motion Capture | High-precision 3D position tracking using reflective markers | Codamotion system with active markers [32] |
| Head-Mounted Marker Set | Rigid attachment of reflective markers to the head for optical tracking | Custom helmet or headband with non-metallic markers for MRI compatibility [31] |
| Synchronization Interface | Temporal alignment of motion data with brain imaging signals | Digital I/O trigger box or dedicated sync pulse generator [32] |
| Motion Simulation Platform | Validation of tracking accuracy using controlled movements | Robotic or manual positioning stages with known displacement [31] |

Discussion and Future Directions

Hardware-based motion tracking represents an essential methodology for protecting the spatial fidelity of brain mapping data against the corrupting influence of subject movement. The complementary strengths of IMUs (high temporal resolution, wearability) and optical tracking (high spatial accuracy) provide researchers with flexible options tailored to their specific experimental needs. As brain research increasingly focuses on distributed network properties and fine-grained topographic organization, the accurate characterization and correction of motion artifacts becomes ever more critical.

Future developments will likely focus on multimodal sensor fusion combining inertial and optical data to leverage their respective advantages, and deep learning approaches that use motion sensor inputs to guide more intelligent artifact correction [34]. Furthermore, the miniaturization and wireless integration of these sensors will facilitate their application in naturalistic imaging environments and special populations where motion is most problematic. By rigorously implementing these hardware solutions, researchers can ensure that the spatial patterns observed in their data reflect genuine brain organization rather than motion-induced artifacts, thereby advancing more accurate models of brain function in health and disease.

Motion artifacts in brain magnetic resonance imaging (MRI) represent a significant challenge in both clinical diagnostics and neuroscientific research. These artifacts, which stem primarily from rigid head motion, degrade image quality and can hinder downstream applications such as image segmentation, registration, and accurate target tracking in MR-guided radiation therapy [5]. Their spatial distribution is not random: head motion can alter the B0 field, resulting in susceptibility artifacts, and can disrupt k-space readout lines, potentially violating the Nyquist criterion and causing characteristic ghosting and ringing patterns [5] [35]. From a research perspective, understanding this spatial distribution is paramount, because motion artifacts may compromise the validity of studies investigating fine-scale brain anatomy and function. Robust correction is therefore not merely a technical exercise in image enhancement but a prerequisite for accurate and reliable brain research findings. Traditional mitigation strategies, including repeated acquisitions and prospective motion tracking, impose substantial workflow burdens and are often impractical in time-sensitive clinical or research settings [36] [35]. These limitations have driven the adoption of deep learning, with Convolutional Neural Networks (CNNs) establishing a strong foundation and newer approaches such as Residual-Guided Diffusion Models (Res-MoCoDiff) extending what is possible in artifact correction.

Convolutional Neural Networks: The Foundational Bedrock

Convolutional Neural Networks (CNNs) have emerged as a dominant class of artificial neural networks for processing data with a grid-like topology, such as images. Their design, inspired by the organization of the animal visual cortex, is built to automatically and adaptively learn spatial hierarchies of features from low- to high-level patterns [37]. A typical CNN architecture is composed of multiple building blocks: convolution layers, pooling layers, and fully connected layers. The convolution and pooling layers are responsible for feature extraction, while the fully connected layers map these extracted features to the final output, such as a classification or segmentation map [37]. The convolution operation itself uses small arrays of learnable parameters called kernels, which are applied across the entire input image to create feature maps. A key feature of this process is weight sharing: because the same kernel is applied at every image position, a learned pattern is detected wherever it occurs (strictly, translation equivariance, which pooling turns into approximate translation invariance), and the model needs far fewer parameters than a fully connected network [37].
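The effect of weight sharing can be demonstrated with a hand-written convolution. The sketch below is a didactic illustration in plain NumPy, not code from [37]; like most deep learning frameworks it computes a cross-correlation (no kernel flip), and the image size and kernel values are arbitrary assumptions.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide one shared kernel over the image ('valid' region, stride 1)."""
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.empty((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            # The same kernel weights are reused at every position.
            out[i, j] = np.sum(image[i : i + kh, j : j + kw] * kernel)
    return out

rng = np.random.default_rng(0)
kernel = rng.normal(size=(3, 3))

# A small pattern on an empty background, and the same pattern moved by (2, 3).
image = np.zeros((16, 16))
image[4:7, 4:7] = rng.normal(size=(3, 3))
moved = np.roll(image, shift=(2, 3), axis=(0, 1))

response = conv2d_valid(image, kernel)
response_moved = conv2d_valid(moved, kernel)

# Parameter count: one shared 3x3 kernel versus a dense map between the
# same input (16x16) and output (14x14) sizes.
shared_params = kernel.size
dense_params = image.size * response.size
```

Shifting the input shifts the feature map by the same amount, so the kernel responds to the pattern regardless of where it sits, and the shared kernel needs 9 weights where a dense layer of the same shape would need 50,176.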

In the specific domain of brain MRI analysis, CNNs have been extensively applied. An overview of deep CNNs for brain image analysis on MRI highlighted their use in segmenting brain lesions, tissues, and sub-cortical structures [38]. The review detailed how these models leverage building blocks like convolution, pooling, and non-linear activation functions (e.g., ReLU) to achieve state-of-the-art performance. CNNs have become the first choice for many problems in computer vision, and their success in brain MR image analysis is evidenced by their overwhelming acceptance within the community, particularly in challenges organized by the Medical Image Computing and Computer-Assisted Intervention (MICCAI) society [38]. A bibliometric analysis of CNNs in medical imaging further underscored their impact, noting that ResNet and U-Net were among the most cited architectures, with brain-related research being the most prevalent disease topic [39]. This established CNN backbone provides the essential context for understanding the evolution towards more sophisticated, generative models like diffusion models for tasks such as motion artifact correction.

Residual-Guided Diffusion Models (Res-MoCoDiff): A Paradigm Shift

Core Principles and Problem Formulation