Mapping the Mind: A Bibliometric Analysis of Cognitive Psychology Trends and Their Impact on Biomedical Research

Abstract

This article provides a comprehensive bibliometric analysis of the cognitive psychology research landscape, tracing the evolution of key terms and concepts over recent decades. It explores the foundational pillars of the field, details advanced methodological approaches for data extraction and analysis using tools like Biblioshiny and CiteSpace, and addresses common challenges in citation analysis and data interpretation. By comparing research trends across high-impact and specialized journals and validating findings through cross-disciplinary indicators, this analysis offers actionable insights for researchers, scientists, and drug development professionals. The findings highlight a significant shift towards neuroscience-integrated topics and identify emerging frontiers with direct implications for clinical diagnostics and therapeutic development.

The Evolving Lexicon of the Mind: Mapping Core Topics and Trends in Cognitive Psychology

Identifying the Most Prevalent Research Topics in Cognitive Psychology

Cognitive psychology, the scientific study of mental processes such as attention, memory, language, and decision-making, continues to evolve rapidly, driven by methodological advancements and interdisciplinary integration. A bibliometric analysis of the field reveals a significant paradigm shift from purely behavioral investigations toward a neuroscience-informed approach that seeks to understand the biological underpinnings of cognitive functions. The proliferation of neuroimaging technologies, sophisticated computational models, and genetic analyses has created a research landscape characterized by its focus on neuroplasticity, working memory mechanisms, and executive function optimization [1] [2]. This evolution reflects the field's response to pressing societal needs, including developing interventions for cognitive aging, neurological rehabilitation, and mental health treatment.

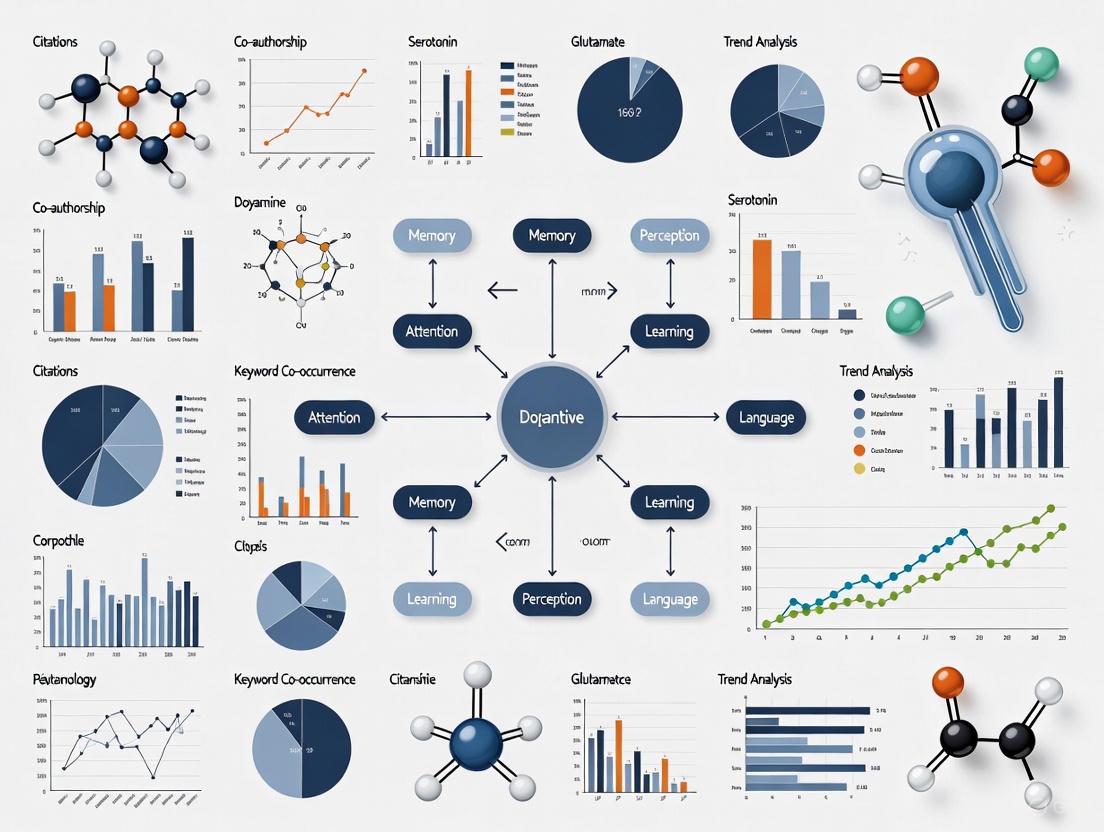

The analytical framework for this whitepaper uses bibliometric data to identify research trends, citation networks, and emerging frontiers. Bibliometric analysis provides quantitative insight into the development of a scientific field by analyzing its literature along several dimensions, including authorship patterns, institutional collaborations, keyword co-occurrence, and citation networks [1]. These measures help locate research hotspots and the field's knowledge base, and they expose collaboration networks and interdisciplinary links. Recent analyses show a steady rise in annual publication output in cognitive psychology, with particular growth in studies that integrate neuroscientific methods and therapeutic applications [1]. The United States leads in both research output and network centrality, with Harvard University as a leading institution, while journals such as "Disability and Rehabilitation" and "Stroke" serve as key outlets for cognitively oriented rehabilitation research [1].
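
As a concrete illustration of the keyword co-occurrence measure mentioned above, the following minimal Python sketch counts how often keyword pairs appear together in the same publication and sums those counts into a weighted degree per keyword. It assumes each record has already been reduced to a list of author keywords; the record format and the example keywords are hypothetical, and dedicated tools such as Biblioshiny and CiteSpace add steps (synonym merging, thresholding, clustering) that are omitted here.

```python
from collections import Counter
from itertools import combinations


def keyword_cooccurrence(records):
    """Count how often each pair of keywords appears in the same record.

    `records` is a list of keyword lists, one per publication. Keywords are
    lower-cased and de-duplicated within a record, so each record adds at
    most one count per keyword pair.
    """
    pair_counts = Counter()
    for keywords in records:
        unique = sorted({k.strip().lower() for k in keywords if k.strip()})
        for a, b in combinations(unique, 2):
            pair_counts[(a, b)] += 1
    return pair_counts


def keyword_degree(pair_counts):
    """Weighted degree of each keyword node: the sum of its co-occurrence counts."""
    degree = Counter()
    for (a, b), n in pair_counts.items():
        degree[a] += n
        degree[b] += n
    return degree


# Hypothetical author-keyword lists standing in for exported bibliographic records.
records = [
    ["Working memory", "Neuroplasticity", "fMRI"],
    ["working memory", "cognitive training"],
    ["Neuroplasticity", "fMRI", "rehabilitation"],
    ["working memory", "fMRI"],
]

pairs = keyword_cooccurrence(records)
print(pairs.most_common(3))
print(keyword_degree(pairs).most_common(3))
```

Normalizing case before counting matters in practice, since databases often store the same keyword with different capitalization.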

Prevalent Research Topics and Quantitative Analysis

Bibliometric analysis of cognitive psychology literature reveals distinct research clusters with varying prevalence and growth trajectories. The table below summarizes the most prominent research topics based on publication volume, citation impact, and recent growth patterns.

Table 1: Prevalent Research Topics in Cognitive Psychology Based on Bibliometric Analysis

| Research Topic | Prevalence Level | Key Focus Areas | Methodological Approaches |
|---|---|---|---|
| Neuroplasticity | Very High | Synaptic plasticity, structural remodeling, functional reorganization, rehabilitation applications | Brain imaging (fMRI, MRI), brain-computer interfaces, neuromodulation techniques [2] |
| Working Memory | High | Capacity limits, training interventions, neural correlates, genetic factors | Computerized cognitive training, fMRI, genetic analysis, behavioral assessment [3] |
| Decision-Making | High | Neural mechanisms, cognitive biases, uncertainty, economic behavior | Behavioral experiments, neuroeconomics paradigms, computational modeling [4] |
| Executive Functions | Moderate-High | Cognitive control, task switching, inhibition, updating | Standardized cognitive batteries, experimental cognitive tasks, ecological assessment [4] |
| Attention | Moderate | Selective attention, sustained attention, multitasking, disorders of attention | Eye-tracking, EEG, continuous performance tasks, dual-task paradigms [4] |

Recent trends indicate particularly strong growth in neuroplasticity research, with emphasis on both adaptive (beneficial) and maladaptive (harmful) processes across different life stages [2]. The mechanisms underlying neuroplasticity and their therapeutic applications represent a dominant research stream, accounting for a significant proportion of recent publications in high-impact journals. Similarly, working memory research maintains a consistently high presence, with studies increasingly focusing on training-induced structural changes in the brain and their genetic correlates [3].

Keyword burst detection reveals that meta-analysis (burst strength = 3.6), assessment tool validation (3.49), and acceptance-based interventions (2.89) are particularly strong emerging trends [1]. These topics reflect the field's maturation toward evidence synthesis, measurement refinement, and clinical application. The research landscape has shifted from a focus on basic cognitive processes toward systematic intervention development, with growing emphasis on methodological standardization and individualized interventions [1].
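
Strength values of this kind are usually produced by burst detection rather than by raw co-occurrence counts; CiteSpace, for example, bases its burst detection on Kleinberg's algorithm. The sketch below is a simplified two-state version for yearly batches, written from the general description of that algorithm rather than from CiteSpace's code. The parameters `s` and `gamma` and the example counts are assumptions, so the strength values it returns are not comparable to those reported in [1].

```python
import math


def kleinberg_bursts(r, d, s=2.0, gamma=1.0):
    """Two-state Kleinberg burst detection for batched counts (simplified sketch).

    r[t]: records in period t containing the keyword
    d[t]: total records in period t
    s: ratio of the burst-state rate to the baseline rate (assumed value)
    gamma: cost multiplier for entering the burst state (assumed value)
    Returns (start, end, weight) tuples with inclusive period indices.
    """
    n = len(r)
    p0 = sum(r) / sum(d)
    if p0 == 0:
        return []  # keyword never appears: nothing can burst
    p1 = min(s * p0, 0.9999)

    def cost(p, rt, dt):
        # Negative log-likelihood of rt hits out of dt under rate p. The binomial
        # coefficient is omitted because it is identical for both states.
        return -(rt * math.log(p) + (dt - rt) * math.log(1 - p))

    emit = [(cost(p0, r[t], d[t]), cost(p1, r[t], d[t])) for t in range(n)]
    up = gamma * math.log(n)  # cost of moving up into the burst state; moving down is free

    # Viterbi pass: best[t][j] is the minimal total cost of ending in state j at t.
    best = [[emit[0][0], emit[0][1] + up]]
    back = [[0, 0]]
    for t in range(1, n):
        row, ptr = [], []
        for j in (0, 1):
            options = [best[t - 1][i] + (up if j > i else 0.0) for i in (0, 1)]
            i_best = 0 if options[0] <= options[1] else 1
            row.append(options[i_best] + emit[t][j])
            ptr.append(i_best)
        best.append(row)
        back.append(ptr)

    # Backtrack the optimal state sequence.
    state = 0 if best[-1][0] <= best[-1][1] else 1
    states = [state]
    for t in range(n - 1, 0, -1):
        state = back[t][state]
        states.append(state)
    states.reverse()

    # Group consecutive burst periods; weight = cost saved by the burst state.
    bursts, t = [], 0
    while t < n:
        if states[t] == 1:
            start = t
            while t + 1 < n and states[t + 1] == 1:
                t += 1
            weight = sum(emit[k][0] - emit[k][1] for k in range(start, t + 1))
            bursts.append((start, t, weight))
        t += 1
    return bursts


# Hypothetical yearly counts: records mentioning a keyword (r) out of all records (d).
r = [1, 1, 1, 20, 25, 22, 1, 1]
d = [100] * 8
print(kleinberg_bursts(r, d))
```

Raising `gamma` makes it harder to enter the burst state, so only longer or sharper surges are reported.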

Detailed Experimental Protocols

Working Memory Training and Neuroplasticity

Objective: To investigate the effects of an 8-week standardized computerized working memory training program on cortical microstructure, morphometric similarity network changes, and associated genetic factors in healthy adults [3].

Participants:

- 76 healthy participants recruited and divided equally between a working memory training (WMT) group (n=38) and a control group (n=38)

- Final analysis included 36 participants per group after exclusions for image quality

- Inclusion criteria: no prior medical treatment or drug use; normal vision; no neurological or psychiatric history; no substance use 24 hours pre-/post-test

- Sample size calculated using G*Power software with statistical power (1-β) of 0.90, alpha level of 0.05, and medium effect size (Cohen's d = 0.5) [3]
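
The sample-size step can be approximated with the normal-theory formula for comparing two means, n per group = 2((z_{1-α/2} + z_{1-β})/d)^2. This is only a sketch: G*Power uses the exact noncentral t distribution, which typically adds a participant or two, and the text does not state which G*Power test family was selected. Because a two-group comparison and a paired or repeated-measures comparison give very different answers for the same d, the sketch computes both; the function names are hypothetical.

```python
import math
from statistics import NormalDist


def n_per_group_two_sample(d, alpha=0.05, power=0.90):
    """Normal-approximation sample size per group for a two-sided comparison
    of two independent means with standardized effect size d."""
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_beta = z.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_beta) / d) ** 2)


def n_paired(d, alpha=0.05, power=0.90):
    """Normal-approximation number of pairs for a two-sided paired comparison,
    where d is the standardized mean of the difference scores."""
    z = NormalDist()
    return math.ceil(((z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)) / d) ** 2)


print(n_per_group_two_sample(0.5))  # 85 per group
print(n_paired(0.5))                # 43 pairs
```

For a pre/post design with two groups, the appropriate calculation also depends on the assumed correlation between repeated measurements, which is why the chosen test family must be reported alongside the effect size.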

Procedure:

- Pre-test: Demographic collection, screening questionnaires, baseline cognitive assessment, and initial MRI scan

- Group Randomization: Participants randomly assigned to WMT or control group

- Training Phase: 40 sessions over 8 weeks (5 sessions per week)

- Post-test: Identical cognitive assessment and MRI scanning repeated after training completion

Working Memory Training Protocol:

- Task: Computerized adaptive running memory task assessing WM updating

- Stimuli: Locations, letters, and animals presented sequentially

- Requirement: Memorize properties of last three stimuli in each trial

- Structure: 30 trials per session, divided into six segments of five trials each

- Adaptive Difficulty: Stimulus presentation time decreased by 100ms when participants achieved ≥3 correct responses in a block

- Duration: Initial 30 minutes daily, reduced to 20 minutes on final day [3]
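
The adaptive rule can be expressed as a simple staircase. This sketch assumes that a "block" is one of the six five-trial segments, that presentation time stays unchanged when fewer than three responses are correct, and that there is a lower bound; the starting time (1500 ms) and the 500 ms floor are hypothetical values not given in the protocol.

```python
def update_presentation_time(current_ms, n_correct, threshold=3, step_ms=100, floor_ms=500):
    """Apply the adaptive rule after one block.

    From the protocol: presentation time drops by `step_ms` when at least
    `threshold` responses in the block are correct. Leaving the time unchanged
    otherwise, and the `floor_ms` lower bound, are assumptions.
    """
    if n_correct >= threshold:
        return max(floor_ms, current_ms - step_ms)
    return current_ms


def run_session(block_scores, start_ms=1500):
    """Trace presentation time across one session's blocks (hypothetical start value)."""
    times = [start_ms]
    for n_correct in block_scores:
        times.append(update_presentation_time(times[-1], n_correct))
    return times


# Hypothetical correct-response counts (out of 5) for the six blocks of one session.
print(run_session([4, 3, 2, 5, 3, 1]))
```

Whether the final presentation time carries over to the next session is not specified in the protocol; carrying it over would make difficulty track performance across the whole 8-week program.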

Control Group Protocol:

- Task: Simple memory task with 90 trials daily

- Stimuli: Single animal presented for 1750ms followed by 9-alternative forced choice recognition

- Requirement: Identify which animal was previously presented

- Duration: Approximately 10 minutes daily [3]

Table 2: Cognitive Assessment Measures for Near-Transfer Effects

| Assessment | Cognitive Domain | Specific Measures | Administration |
|---|---|---|---|
| Update Function Test (UFT) | Working Memory Updating | Accuracy, response time | Pre- and post-test |
| Inhibition Function Test (IFT) | Response Inhibition | Commission errors, inhibition score | Pre- and post-test |
| Switching Function Test (SFT) | Cognitive Flexibility | Switch cost, mixing cost | Pre- and post-test |
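
The table lists switch cost and mixing cost without defining them. The sketch below uses the definitions that are conventional in the task-switching literature, assumed here rather than taken from [3]: switch cost is the mean response time on switch trials minus that on repeat trials within mixed blocks, and mixing cost is the mean response time on repeat trials in mixed blocks minus that in single-task blocks. Excluding error trials is also an assumption, and the trial format and `trial` helper are hypothetical.

```python
from statistics import mean


def switch_and_mixing_costs(trials):
    """Compute switch cost and mixing cost (ms) from correct-trial response times.

    Each trial is a dict with keys:
      'block': 'pure' (single-task block) or 'mixed'
      'transition': 'repeat' or 'switch' (meaningful only in mixed blocks)
      'rt': response time in ms
      'correct': bool
    """
    ok = [t for t in trials if t["correct"]]
    pure = [t["rt"] for t in ok if t["block"] == "pure"]
    repeat = [t["rt"] for t in ok if t["block"] == "mixed" and t["transition"] == "repeat"]
    switch = [t["rt"] for t in ok if t["block"] == "mixed" and t["transition"] == "switch"]
    return {
        # Local cost: switching vs. repeating a task within mixed blocks.
        "switch_cost": mean(switch) - mean(repeat),
        # Global cost: repeat trials in mixed blocks vs. single-task blocks.
        "mixing_cost": mean(repeat) - mean(pure),
    }


def trial(block, transition, rt, correct=True):
    """Hypothetical helper to build one trial record."""
    return {"block": block, "transition": transition, "rt": rt, "correct": correct}


trials = [
    trial("pure", "repeat", 500), trial("pure", "repeat", 520),
    trial("mixed", "repeat", 600), trial("mixed", "repeat", 620),
    trial("mixed", "switch", 700), trial("mixed", "switch", 740),
    trial("mixed", "switch", 2000, correct=False),  # error trial, excluded
]
print(switch_and_mixing_costs(trials))
```

A near-transfer effect would then appear as a larger pre-to-post reduction in these costs in the training group than in the control group.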

Neuroimaging Protocol:

- MRI Acquisition: High-resolution T1-weighted structural images

- Cortical Morphological Measures: Cortical thickness (CT) and fractal dimension (FD)

- Morphometric Similarity Network (MSN): Constructed using multiple morphological features

- Analysis: Between-group comparisons of CT, FD, and MSN topological properties [3]
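
The protocol states only that the MSN is built from multiple morphological features. A common construction, sketched below under that assumption, z-scores each feature across regions and then correlates every pair of regional feature profiles; the mean similarity of a region to all others summarizes its position in the network. The 68-region parcellation, the five features, and the random data are placeholders, not details of the study.

```python
import numpy as np


def morphometric_similarity(features):
    """Build a morphometric similarity network (MSN).

    features: array of shape (n_regions, n_features), e.g. cortical thickness,
    fractal dimension, and other per-region morphometric measures.
    Returns the (n_regions, n_regions) matrix of Pearson correlations between
    regional feature profiles, with the diagonal set to zero.
    """
    x = np.asarray(features, dtype=float)
    # z-score each feature across regions so features on different scales are comparable
    z = (x - x.mean(axis=0)) / x.std(axis=0)
    msn = np.corrcoef(z)  # rows are regions, so this correlates regional profiles
    np.fill_diagonal(msn, 0.0)
    return msn


def regional_similarity(msn):
    """Mean morphometric similarity of each region to all other regions."""
    n = msn.shape[0]
    return msn.sum(axis=1) / (n - 1)


rng = np.random.default_rng(0)
features = rng.normal(size=(68, 5))  # hypothetical: 68 regions x 5 features
msn = morphometric_similarity(features)
print(msn.shape, regional_similarity(msn)[:3].round(3))
```

Between-group comparisons of topological properties would then be computed on each participant's MSN, for example after thresholding it into a graph.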

Genetic Analysis:

- Method: Partial least squares (PLS) analysis

- Data Source: Allen Human Brain Atlas transcriptomic dataset

- Objective: Investigate relationship between microstructural alterations and gene expression

- Enrichment Analysis: Gene Ontology (GO) and pathway analysis for PLS+ and PLS- genes [3]
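
For a single brain map, the first PLS component has a closed form: the gene weights are proportional to the covariance between each gene's regional expression and the map. The sketch assumes expression values have already been mapped onto the same brain regions as the imaging data and uses synthetic data. Published analyses often also estimate weight reliability by bootstrapping and apply significance thresholds before defining PLS+ and PLS- gene lists; this sketch simply splits genes by the sign of their weight, and the function names are hypothetical.

```python
import numpy as np


def pls1_gene_weights(expression, brain_map):
    """First PLS component relating regional gene expression to one brain map.

    expression: (n_regions, n_genes) regional expression matrix
    brain_map: (n_regions,) regional effect, e.g. a training-related change map
    Returns unit-norm gene weights and the regional component scores.
    """
    X = np.asarray(expression, dtype=float)
    y = np.asarray(brain_map, dtype=float)
    X = (X - X.mean(axis=0)) / X.std(axis=0)
    y = (y - y.mean()) / y.std()
    w = X.T @ y              # proportional to each gene's covariance with the map
    w = w / np.linalg.norm(w)
    scores = X @ w           # regional scores; by construction they covary positively with y
    return w, scores


def split_by_sign(weights, gene_names):
    """PLS+ genes (positive weights, descending) and PLS- genes (negative, ascending)."""
    order = np.argsort(weights)
    plus = [gene_names[i] for i in order[::-1] if weights[i] > 0]
    minus = [gene_names[i] for i in order if weights[i] < 0]
    return plus, minus


# Synthetic example: gene g0 tracks the map, gene g1 tracks its inverse.
rng = np.random.default_rng(1)
brain_map = rng.normal(size=40)
expression = rng.normal(size=(40, 50))
expression[:, 0] = brain_map + 0.1 * rng.normal(size=40)
expression[:, 1] = -brain_map + 0.1 * rng.normal(size=40)
genes = [f"g{i}" for i in range(50)]

w, scores = pls1_gene_weights(expression, brain_map)
plus, minus = split_by_sign(w, genes)
print(plus[:3], minus[:3])
```

The ranked PLS+ and PLS- lists are what would be passed on to GO and pathway enrichment analysis.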

Figure 1: Experimental Workflow for Working Memory Training Study

Neuroplasticity Assessment Protocol

Objective: To evaluate neuroplastic changes induced by cognitive training, non-invasive brain stimulation, or pharmacological interventions through multi-modal assessment approaches [2] [5].

Neuroimaging Methods:

- Structural MRI: Measures gray matter volume, cortical thickness, and white matter integrity

- Functional MRI (fMRI): Assesses task-related activation and functional connectivity

- Ultra-High Field MRI: 7T, 11.7T, and emerging 14T scanners providing enhanced spatial resolution

- Morphometric Similarity Network (MSN): Individual-level morphological brain networks using multiple cortical features [3]

Intervention Modalities:

- Computerized Cognitive Training: Adaptive working memory tasks, attention training, process-specific programs

- Non-Invasive Brain Stimulation: Transcranial magnetic stimulation (TMS), transcranial direct current stimulation (tDCS)

- Pharmacological Interventions: Neurotransmitter-targeted compounds, cognitive enhancers

- Combined Approaches: Integrated multimodal interventions for synergistic effects [2]

Outcome Measures:

- Primary Outcomes: Cognitive performance measures, brain structure and function

- Secondary Outcomes: Daily functioning, quality of life, transfer effects

- Biological Correlates: Genetic markers, neurotransmitter levels, neurophysiological indices [3]

Signaling Pathways and Neurobiological Mechanisms

Neuroplasticity involves complex molecular signaling pathways that mediate experience-dependent changes in neural circuitry. The following diagram illustrates key pathways implicated in working memory training-induced neuroplasticity:

Figure 2: Signaling Pathways in Experience-Dependent Neuroplasticity

Working memory training is thought to enhance neurotransmission, particularly in glutamatergic and GABAergic systems within prefrontal and parietal regions [3]. This enhanced activity engages NMDA and AMPA glutamate receptors as well as tropomyosin receptor kinase B (TrkB), the receptor for brain-derived neurotrophic factor (BDNF). Downstream intracellular signaling involves calcium influx, activation of calcium/calmodulin-dependent protein kinase II (CaMKII), and phosphorylation of the cAMP response element-binding protein (CREB). These cascades alter the expression of immediate early genes (c-Fos, Egr1) and effector genes (Arc, BDNF), which in turn support structural plasticity through synaptogenesis, dendritic spine growth, and altered cortical morphology in frontal regions [3]. These molecular and structural changes are proposed to underlie the functional gains seen after training, particularly in working memory updating, executive functions, and attentional control.

Transcriptomic association analyses link working memory training-induced neuroplasticity to specific gene expression patterns. Partial least squares analysis identified two distinct gene sets: PLS+ genes, enriched for synaptic transmission, neural regulation, and energy metabolism; and PLS- genes, associated with intracellular transport, protein modification, and stress responses [3]. This pattern highlights the biological complexity of neuroplasticity and may help explain individual differences in response to cognitive training interventions.

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Materials for Cognitive Psychology and Neuroscience Studies

| Category | Specific Items | Function/Application | Example Use Cases |
|---|---|---|---|
| Neuroimaging Equipment | 3T MRI Scanner, 7T MRI Scanner, 11.7T MRI Scanner, Portable MRI Systems | High-resolution structural and functional brain imaging | Cortical thickness measurement, functional connectivity analysis, microstructural change detection [3] [5] |
| Cognitive Assessment Tools | Computerized Running Memory Task, Update Function Test, Inhibition Function Test, Switching Function Test | Quantifying cognitive performance and training effects | Working memory assessment, executive function evaluation, near-transfer effect measurement [3] |
| Genetic Analysis Resources | Allen Human Brain Atlas, Gene Expression Microarrays, RNA Sequencing Tools | Linking neurobiological changes to genetic correlates | Transcriptome-neuroimaging association studies, genetic enrichment analysis [3] |
| Brain Stimulation Devices | TMS (transcranial magnetic stimulation), tDCS (transcranial direct current stimulation) | Non-invasive neuromodulation | Causal investigation of brain-behavior relationships, therapeutic intervention studies [2] |
| Computational Modeling Software | Morphometric Similarity Network Analysis, Partial Least Squares Regression, Digital Brain Modeling Platforms | Advanced data analysis and theoretical modeling | Individual-level brain network construction, gene-expression correlation analysis, personalized brain simulation [3] [5] |

The Allen Human Brain Atlas serves as a crucial resource for transcriptome-neuroimaging association studies, providing regional gene expression profiles that can be correlated with structural and functional brain measures [3]. Ultra-high field MRI scanners (7T, 11.7T, and emerging 14T systems) enable unprecedented spatial resolution for investigating cortical microstructure and neuroplastic changes [5]. Computerized cognitive training platforms with adaptive difficulty algorithms allow for standardized administration of working memory interventions across diverse populations [3]. Digital brain modeling tools facilitate the creation of personalized brain simulations and digital twins, which are increasingly used to predict disease progression and treatment responses [5].

Emerging Frontiers and Future Directions

Cognitive psychology research is rapidly evolving toward increasingly sophisticated and interdisciplinary approaches. Several emerging frontiers promise to reshape the field in the coming years:

Digital Brain Models and Personalized Simulations: Research is progressing toward increasingly complete and accurate digital brain representations that vary in complexity and scope [5]. Personalized brain models augment general simulations with individual-specific data, as in the Virtual Epileptic Patient, where a patient's neuroimaging data inform in silico simulations. Digital twins are continuously evolving models that update with real-world data from a person over time and could potentially predict neurological disease progression or therapy responses [5]. The most ambitious efforts target full brain replicas: comprehensive digital versions intended to capture every aspect of brain structure and function. Together, these modeling approaches illustrate the growing potential of computational neuroscience to transform both basic research and personalized medicine.

Advanced Neuroimaging Technologies: The field is experiencing simultaneous development of both higher-power and more accessible neuroimaging solutions [5]. Ultra-high field MRI systems (11.7T) provide remarkable spatial resolution, with in-plane resolution of 0.2mm and slice thickness of 1mm achievable in just 4 minutes of acquisition time. Concurrently, portable, cost-effective MRI alternatives are emerging from companies like Hyperfine and PhysioMRI, increasing accessibility and patient comfort. Helium-free operations and rotating gantry designs represent additional innovations that may expand clinical and research applications of neuroimaging.
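
To put the quoted 11.7T figures in perspective, the implied voxel volume can be compared with a 1 mm isotropic voxel, used here as an assumed reference point typical of routine structural imaging:

```latex
V_{11.7\text{T}} = 0.2\,\text{mm} \times 0.2\,\text{mm} \times 1\,\text{mm} = 0.04\,\text{mm}^3,
\qquad
\frac{V_{1\,\text{mm iso}}}{V_{11.7\text{T}}} = \frac{1\,\text{mm}^3}{0.04\,\text{mm}^3} = 25
```

Each voxel is therefore about 25 times smaller in volume, although the 1 mm slice thickness keeps the resolution strongly anisotropic (five times coarser through-plane than in-plane).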

Artificial Intelligence Integration: AI and large language models are increasingly applied to cognitive neuroscience research, with potential to automate labor-intensive processes like brain image segmentation and analysis [5]. Forecasts suggest that up to 40% of working hours in neuroimaging research could be positively impacted by AI, supporting researchers with administrative tasks, decision making, and personalized analysis. The validation and integration of AI tools into research workflows represents a critical frontier for the field.

Neuroethical Considerations: As research advances, important neuroethical questions are emerging regarding neuroenhancement, cognitive privacy, and data security [5]. The development of technologies that might "read minds" or enhance cognitive functions raises complex questions about fairness, accessibility, and the protection of individuals' inner lives. Similarly, digital brain models and twins present privacy challenges, as individuals with rare conditions may become identifiable despite data de-identification efforts. Addressing these neuroethical challenges is essential for responsible innovation in cognitive psychology research.

The convergence of these technological advancements with traditional cognitive psychology paradigms promises to accelerate our understanding of the human mind while creating new opportunities for intervention and enhancement. Future research will likely focus on integrating multiple levels of analysis—from genetic and molecular mechanisms to systems-level neuroscience and computational modeling—to develop comprehensive theories of cognitive function and dysfunction.

The Historical Rise of Neurosciences and Related Technologies in Psychological Discourse

The integration of neuroscience technologies into psychological research has fundamentally transformed how we study, understand, and conceptualize human cognition and behavior. This transformation represents one of the most significant paradigm shifts in the history of psychological science, moving the field from purely behavioral observation to the direct investigation of neural mechanisms underlying mental processes. The introduction of functional magnetic resonance imaging (fMRI) in particular has served as a catalytic force in this integration, creating new interdisciplinary fields and reshaping psychological discourse [6]. This technological revolution has enabled researchers to bridge the historical gap between the mind and the brain, allowing for the non-invasive investigation of neural processes in healthy, awake humans performing complex psychological tasks.

The evolution of this integration reflects a broader movement in scientific thinking about brain organization—from strict localizationism to connectionist approaches and, ultimately, to contemporary network-based theories of brain function [6]. As Arthur Benton presciently noted in 1992, "the traditional concept of discrete areal localization, i.e., linking specific functions and cognitive abilities to specific regions of the brain, is dying (if it is not already dead)" [6]. This shift in theoretical perspective, coupled with advancing technologies, has created the foundation for modern cognitive neuroscience as a bridge between psychological theory and biological implementation.

Historical Development of Neuroscientific Technologies

Pre-fMRI Era: Foundations of Functional Brain Imaging

The conceptual foundations for modern functional neuroimaging began much earlier than many researchers realize. The fundamental principle that brain activity correlates with changes in cerebral blood flow was first documented in the late 19th century. In 1890, British researchers Charles Sherrington and Charles Roy conducted pioneering experiments measuring changes in cerebral blood volume among anesthetized dogs, providing early evidence against the prevailing belief that the rigid skull prevented changes in cerebral blood flow [7]. This foundational work established the vascular principles that would later become the basis for fMRI.

The mid-20th century brought critical advances in quantifying human cerebral blood flow. In 1948, Seymour Kety and Carl Schmidt developed the first reliable, quantitative method for measuring cerebral blood flow in conscious humans under normal conditions using nitrous oxide and applying Fick's principle [7]. This methodological breakthrough provided the first concrete evidence that the brain intrinsically regulates its own blood flow in response to neural activity, overturning decades of conventional wisdom that external factors solely governed cerebral circulation.
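
For reference, the Kety-Schmidt method as it is usually presented combines Fick's principle with the assumption that, once tracer equilibrium is reached, brain tissue concentration equals the venous concentration scaled by a partition coefficient. In the notation assumed here, F/W is blood flow per unit brain mass, C_a(t) and C_v(t) are arterial and jugular venous nitrous oxide concentrations, Q(t) is tracer per unit brain mass, λ is the brain-blood partition coefficient (close to 1 for nitrous oxide), and T is the time at which equilibrium is assumed:

```latex
Q(T) = \frac{F}{W}\int_{0}^{T}\bigl[C_a(t) - C_v(t)\bigr]\,dt,
\qquad
Q(T) = \lambda\,C_v(T)
\;\;\Longrightarrow\;\;
\frac{F}{W} = \frac{\lambda\,C_v(T)}{\int_{0}^{T}\bigl[C_a(t) - C_v(t)\bigr]\,dt}
```

Multiplying by 100 expresses flow in the conventional units of mL per 100 g of brain tissue per minute.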

The application of positron emission tomography (PET) to brain activation studies in the 1980s provided the first practical technology for mapping human brain function. Early PET studies focused primarily on resting-state investigations of cerebral metabolism and blood flow [8]. However, researchers quickly recognized the potential for measuring task-induced brain activity, which led to the development of dedicated data analysis tools such as statistical parametric mapping [8]. These analytical innovations, combined with the ability to measure brain activity during cognitive tasks, made PET the first transformative technology for cognitive neuroscience research.

The fMRI Revolution: A Paradigm Shift

The development of functional magnetic resonance imaging (fMRI) based on the blood-oxygen-level-dependent (BOLD) effect in the early 1990s fundamentally transformed psychological research [6]. The seminal work by Kwong et al. (1992) demonstrated that fMRI could noninvasively detect changes in brain activity during sensory stimulation, opening unprecedented opportunities for mapping the working human brain [6]. The BOLD effect, first described by Ogawa et al. (1990), exploits differences in magnetic properties between oxygenated and deoxygenated hemoglobin, allowing researchers to infer neural activity from vascular changes [7] [9].

The rapid adoption of fMRI stemmed from several distinct advantages over previous technologies. Unlike PET, fMRI involves no ionizing radiation, offers higher spatial resolution, and permits repeated measurements within individuals [8]. Perhaps equally important was the democratizing effect of fMRI on cognitive neuroscience. As researcher Ray Dolan noted, "fMRI democratized access to a powerful technology for investigating the living human brain, allowing a broad cross-section of academic disciplines to pursue new agendas" [8]. This accessibility prompted an exponential increase in neuroimaging publications and facilitated the emergence of genuinely interdisciplinary research teams.

Table 1: Key Technological Developments in Neuroscience

| Year | Development | Key Researchers/Teams | Impact on Psychological Research |
|---|---|---|---|
| 1890 | Cerebral blood flow experiments | Roy & Sherrington | Established link between neural activity and cerebral blood flow |
| 1948 | Quantitative cerebral blood flow (CBF) measurement | Kety & Schmidt | Provided first method to measure human CBF quantitatively |
| 1980s | PET for brain activation studies | Frackowiak, Raichle, others | Enabled first maps of task-induced brain activity |
| 1990 | BOLD effect discovery | Ogawa et al. | Identified MRI contrast mechanism for neural activity |
| 1992 | Early human BOLD fMRI study | Kwong et al. | Demonstrated practical fMRI for mapping human brain function |
| Late 1990s-present | Event-related fMRI | Multiple groups | Enabled more sophisticated cognitive task designs |

The initial period of fMRI research (approximately 1992-2005) was characterized by enthusiastic efforts to map complex psychological functions to specific brain regions [6]. This period saw the identification of specialized cortical areas for functions such as face processing (fusiform face area), language processing, and executive functions [6]. However, this renewed localizationist approach also generated criticism, with some scholars labeling it as "new phrenology" and others highlighting methodological concerns such as inadequate statistical corrections [6].

Evolution of Experimental Paradigms and Analytical Approaches

From Block Designs to Complex Experimental Frameworks

Early fMRI research predominantly employed blocked designs, which presented alternating blocks of experimental and control conditions [8]. This approach, borrowed from PET methodology, allowed researchers to identify brain regions associated with specific cognitive processes through subtraction methodology [8]. While effective for early mapping studies, block designs were limited in their ability to disentangle rapidly evolving cognitive processes.

The development of event-related fMRI in the late 1990s represented a significant methodological advance, enabling researchers to study brain responses to individual trials rather than extended blocks [8]. This innovation allowed for more sophisticated experimental designs that could address questions about the temporal dynamics of cognitive processes and adapt paradigms from cognitive psychology, such as repetition suppression and priming [8]. The implementation of these more flexible designs coincided with the adoption of parametric and factorial experimental approaches that provided enhanced experimental control and more nuanced inferential capabilities [8].

Analytical Evolution: From Activation Mapping to Network Neuroscience

The analytical approaches in fMRI research have evolved substantially from initial focus on localized activations to contemporary emphasis on distributed networks. Early analyses focused primarily on identifying statistically significant activation peaks associated with specific task conditions [9]. The field employed various statistical approaches, including general linear models (GLM), cross-correlation analyses, t-tests, and independent component analysis, with ongoing debates about optimal statistical thresholding [9].
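
The GLM approach can be illustrated for a single voxel. This minimal sketch convolves a block-design boxcar with a double-gamma hemodynamic response function (gamma shapes 6 and 16 with an undershoot ratio of 1/6, SPM-style values used here as an assumption), fits the model by ordinary least squares, and computes a t-statistic for the task contrast, which is the GLM counterpart of the subtraction logic used in block designs. Real pipelines also model drift and temporal autocorrelation and correct for multiple comparisons across voxels; all of that is omitted, and the data are synthetic.

```python
import math

import numpy as np


def canonical_hrf(tr, duration=32.0):
    """Double-gamma HRF sampled every `tr` seconds.

    Gamma shapes 6 (response) and 16 (undershoot), scale 1 s, undershoot
    ratio 1/6 (assumed SPM-style values). The response peaks about 5 s after
    onset. Normalized to unit sum for convenience.
    """
    t = np.arange(0.0, duration, tr)
    response = t ** 5 * np.exp(-t) / math.gamma(6)
    undershoot = t ** 15 * np.exp(-t) / math.gamma(16)
    h = response - undershoot / 6.0
    return h / h.sum()


def block_regressor(n_scans, tr, block_len_s=20.0):
    """Alternating rest/task boxcar (rest first) convolved with the HRF."""
    t = np.arange(n_scans) * tr
    boxcar = ((t // block_len_s) % 2 == 1).astype(float)
    return np.convolve(boxcar, canonical_hrf(tr))[:n_scans]


def glm_t(y, X, contrast):
    """Ordinary least-squares GLM fit and t-statistic for one contrast vector."""
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    dof = X.shape[0] - np.linalg.matrix_rank(X)
    sigma2 = resid @ resid / dof
    c = np.asarray(contrast, dtype=float)
    var_c = sigma2 * c @ np.linalg.pinv(X.T @ X) @ c
    return beta, float(c @ beta / np.sqrt(var_c))


rng = np.random.default_rng(0)
n_scans, tr = 120, 2.0
task = block_regressor(n_scans, tr)
X = np.column_stack([task, np.ones(n_scans)])                 # task regressor + intercept
y = 2.0 * task + 100.0 + rng.normal(scale=0.5, size=n_scans)  # synthetic voxel time series
beta, t_stat = glm_t(y, X, contrast=[1, 0])
print(beta.round(2), round(t_stat, 1))
```

Running the same fit at every voxel and thresholding the resulting t-map is, in essence, what a statistical parametric map contains.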

Throughout the 2000s, research increasingly recognized the limitations of purely localizationist accounts and began focusing on functional connectivity and network analyses [6]. This shift reflected the growing understanding that complex psychological functions emerge from interactions among distributed brain regions rather than isolated activity in specialized areas [6]. The culmination of this trend can be seen in large-scale projects such as the Human Connectome Project, which aims to map macroscopic brain circuits and their relationships to behavior [6].
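
Functional connectivity and network analyses of this kind often start from a correlation matrix between regional time series, which is then thresholded into a graph. The sketch below uses Pearson correlation and proportional thresholding (keeping a fixed fraction of the strongest edges), one of several thresholding strategies; the region count, density, and synthetic signals are illustrative assumptions.

```python
import numpy as np


def functional_connectivity(timeseries):
    """Pearson correlation between regional time series.

    timeseries: array of shape (n_timepoints, n_regions).
    Returns an (n_regions, n_regions) matrix with a zero diagonal.
    """
    fc = np.corrcoef(np.asarray(timeseries, dtype=float), rowvar=False)
    np.fill_diagonal(fc, 0.0)
    return fc


def binary_graph(fc, density=0.1):
    """Proportional thresholding: keep the strongest `density` fraction of edges."""
    n = fc.shape[0]
    iu = np.triu_indices(n, k=1)
    weights = fc[iu]
    n_keep = int(round(density * weights.size))
    keep = np.argsort(weights)[::-1][:n_keep]
    adj = np.zeros((n, n), dtype=bool)
    adj[iu[0][keep], iu[1][keep]] = True
    return adj | adj.T  # symmetric, undirected graph


rng = np.random.default_rng(0)
shared = rng.normal(size=200)
ts = rng.normal(size=(200, 10))
ts[:, :3] += 2.0 * shared[:, None]  # regions 0-2 share a common signal
fc = functional_connectivity(ts)
adj = binary_graph(fc, density=0.1)
print(adj.sum(axis=1))  # node degree
```

Proportional thresholding fixes the number of edges across participants, which keeps graph metrics comparable but can admit weak or noisy edges in participants with low overall connectivity.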

Diagram 1: Evolution of fMRI Analytical Approaches

Bibliometric Landscape of Neuroscience in Psychological Research

Global Trends and Research Output

Bibliometric analyses reveal the dramatic growth and evolving focus of neuroscience research within psychological science. Analysis of 13,590 articles from 1990-2023 shows that the United States, China, and Germany have dominated research output, with China's publications rising from sixth to second globally after 2016, driven by national initiatives like the China Brain Project [10]. The research hotspots have progressively evolved from basic brain mapping to more complex themes including "task analysis," "deep learning," and "brain-computer interfaces" [10].

The integration of AI and neuroscience has emerged as a particularly fast-growing area: 1,208 studies were published between 1983 and 2024, with a notable surge since the mid-2010s [11]. This interdisciplinary convergence has been especially prominent in applications such as neurological imaging, brain-computer interfaces (BCI), and the diagnosis of neurological diseases [11].

Table 2: Bibliometric Trends in Neuroscience Research (1990-2023)

| Metric | 1990-2012 | 2013-2023 | Trend |
|---|---|---|---|
| Total Publications | Gradual growth | Rapid increase | Exponential growth |
| Leading Countries | US, Germany, UK, Italy, France | US, China, Germany, UK, Canada | China's prominence increased |
| Primary Focus | Localization of function | Networks, connectivity, AI integration | Shift from localization to connectivity |
| Key Methodologies | fMRI, PET | fMRI, DTI, machine learning, network analysis | Increasing methodological diversity |
| Interdisciplinary Collaboration | Limited | Extensive | Significant increase |
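
The "exponential growth" label in the table can be checked against annual publication counts by fitting a straight line to log-transformed counts: a good linear fit on the log scale is consistent with exponential growth, and the slope gives an annual growth rate and a doubling time. The annual counts are not reported here, so the example uses hypothetical values that double every five years.

```python
import math


def exponential_growth_fit(years, counts):
    """Fit log(count) = a + b * year by ordinary least squares.

    Counts must be positive. Returns the annual growth rate (e^b - 1) and
    the doubling time ln(2)/b in years (infinite if there is no growth).
    """
    logs = [math.log(c) for c in counts]
    n = len(years)
    mx, my = sum(years) / n, sum(logs) / n
    sxx = sum((x - mx) ** 2 for x in years)
    sxy = sum((x - mx) * (y - my) for x, y in zip(years, logs))
    b = sxy / sxx
    doubling = math.log(2) / b if b > 0 else math.inf
    return math.exp(b) - 1, doubling


# Hypothetical annual counts that double every five years.
years = list(range(2000, 2011))
counts = [100 * 2 ** ((y - 2000) / 5) for y in years]
rate, doubling = exponential_growth_fit(years, counts)
print(round(rate, 4), round(doubling, 2))
```

With real data, systematic curvature in the residuals on the log scale would suggest that growth is slowing or accelerating rather than strictly exponential.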

Specialized Subfields and Their Evolution

The integration of neuroscience and psychology has spawned numerous specialized subfields, each with distinct research trajectories. Neuroeducation has emerged as an interdisciplinary approach combining cognitive neuroscience, psychology, and education to enhance learning [12]. Bibliometric analysis of 1,507 peer-reviewed articles from 2020-2025 shows the United States, Canada, and Spain as leading contributors, with key researchers including Hera Antonopoulou and Steve Masson driving the field forward [12].

Similarly, neuroinformatics has established itself as a pivotal field at the intersection of neuroscience and information science. Analysis of publications in the journal Neuroinformatics reveals enduring research themes including neuroimaging, data sharing, machine learning, and functional connectivity [13]. The journal has seen substantial growth in publications, from 18 articles in its inaugural 2003 volume to a record 65 articles in 2022, reflecting the field's expanding influence [13].

Impact on Psychological Theory and Practice

Reshaping Theoretical Frameworks

The integration of neuroscientific evidence has fundamentally reshaped psychological theories across multiple domains. In language research, systematic reviews of fMRI studies have revealed the dynamic interaction between language and working memory systems, showing involvement beyond traditional language areas to include subcortical structures, particularly the basal ganglia, and widespread right hemispheric regions [14]. This evidence has supported more distributed, network-based models of language processing that transcend classical localizationist models.

The influence of neuroscience has similarly transformed memory research, where neuroimaging findings frequently revealed activations in brain regions not predicted by lesion-deficit models alone [8]. For example, studies of episodic memory identified engagement in regions that would not have been anticipated based solely on lesion studies, highlighting the complementary value of functional neuroimaging for understanding typical brain organization [8].

Clinical Translation and Applications

The transition of fMRI from purely research tool to clinical application represents a significant milestone in the practical impact of neuroscience on psychological practice. Presurgical mapping for patients with brain tumors and other resectable lesions has become the primary clinical application, with BOLD fMRI and diffusion tensor imaging (DTI) providing critical information for surgical planning [9]. Validation studies have demonstrated high correlations between fMRI localization and direct cortical stimulation mapping, establishing fMRI as a reliable noninvasive alternative for identifying eloquent cortex [9].

The application of fMRI for language lateralization has shown particular promise as a potential replacement for the invasive Wada test. Multiple studies have demonstrated high concordance (r = 0.96 in one study of 22 patients) between fMRI lateralization and Wada test results [9]. While memory mapping with fMRI has proven more challenging to implement clinically, validation studies have shown promising correlations between preoperative fMRI hippocampal encoding asymmetry and postoperative memory outcomes [9].
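
Concordance studies of this kind usually summarize fMRI language dominance with a lateralization index, LI = (L - R)/(L + R), computed from activation in homologous left and right regions. The sketch below assumes suprathreshold voxel counts as input and a ±0.2 cutoff for classifying dominance; that cutoff is a common convention but varies across studies and is not taken from [9].

```python
def lateralization_index(left, right):
    """LI = (L - R) / (L + R) from activation measures (e.g. suprathreshold
    voxel counts) in homologous left and right regions of interest."""
    total = left + right
    if total == 0:
        raise ValueError("no suprathreshold activation in either hemisphere")
    return (left - right) / total


def dominance(li, cutoff=0.2):
    """Classify language dominance; the +/-0.2 cutoff is an assumed convention."""
    if li > cutoff:
        return "left"
    if li < -cutoff:
        return "right"
    return "bilateral"


for left, right in [(300, 100), (100, 100), (50, 150)]:
    li = lateralization_index(left, right)
    print(left, right, li, dominance(li))
```

Because voxel counts depend on the statistical threshold chosen, LI values can shift with that threshold, which is one reason threshold-independent variants are also used.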

Diagram 2: Clinical Translation Pathways

The Scientist's Toolkit: Essential Research Solutions

Table 3: Essential Research Reagents and Solutions in fMRI Research

| Item | Function/Purpose | Key Considerations |
|---|---|---|
| BOLD fMRI Protocols | Noninvasive mapping of neural activity via vascular changes | Optimization for specific cognitive domains; parameter selection |
| Event-Related Paradigms | Isolate brain responses to individual trials | Timing parameters; jittering; counterbalancing |
| Statistical Parametric Mapping | Statistical analysis of functional imaging data | Multiple comparison correction; statistical thresholding |
| Functional Connectivity Analysis | Measure temporal correlations between brain regions | Removal of confounds; choice of seed regions |
| Network Analysis Tools | Quantify topological properties of brain networks | Graph theory metrics; thresholding strategies |
| Cognitive Task Batteries | Engage specific psychological processes | Construct validity; performance metrics |
| Data Sharing Platforms | Facilitate open science and reproducibility | Data standardization; anonymization protocols |

Current Trends and Future Directions

The field continues to evolve rapidly, with several emerging trends likely to shape future psychological research. The integration of artificial intelligence and machine learning with neuroscience is accelerating, particularly in applications such as early diagnosis of neurological disorders, analysis of complex neural data, and personalized treatment approaches [11]. The number of publications in this intersection has surged since the mid-2010s, with the United States, China, and the United Kingdom playing pioneering roles [11].

Another significant trend is the focus on large-scale data collection and open science initiatives. Projects such as the Human Connectome Project have demonstrated the value of collecting high-quality neuroimaging data from large samples, leading to even more ambitious efforts to map brain structure and function across development and in various clinical populations [6]. These initiatives are increasingly emphasizing reproducibility, data sharing, and collaborative networks across institutions and countries.

Future directions likely include increased emphasis on multimodal integration, combining fMRI with other techniques such as electroencephalography (EEG), transcranial magnetic stimulation (TMS), and magnetoencephalography (MEG) to leverage the respective strengths of each method [10]. There is also growing interest in personalized neuroscience that accounts for individual differences in brain organization and function, potentially leading to more individualized educational, clinical, and occupational applications [12].

The historical rise of neuroscience in psychological discourse represents a fundamental transformation in how we study and understand the human mind. From early blood flow experiments to contemporary network neuroscience, this integration has progressively reshaped psychological theories, research methods, and clinical applications. As the field continues to evolve, it promises to further bridge the gap between biological mechanisms and psychological phenomena, offering increasingly sophisticated frameworks for understanding the complex relationship between brain, mind, and behavior.

Analyzing the Decline of Topics Aligned with Humanities and the Ascent of Natural Science-Oriented Research

The contemporary academic landscape is characterized by a significant divergence in the trajectories of humanities-oriented and natural science-oriented research. This shift is not merely perceptual but is substantiated by robust quantitative data on funding, faculty numbers, student enrollments, and publication patterns. Over the past decade, the humanities have faced substantial challenges, including declining institutional support and shifting student interests, while natural science fields have expanded their dominance in the research ecosystem [15]. This paper employs bibliometric analysis—the quantitative study of publication patterns—to document and analyze this scholarly reorientation, with particular attention to its manifestation in interdisciplinary fields like cognitive psychology.

Understanding this transition requires examining multiple dimensions of the academic enterprise. The following sections present survey and bibliometric data drawn from the literature, detailed methodological protocols for replicating this analysis, visualization of research workflows, and a discussion of the implications for research evaluation and funding policy.

Quantitative Evidence of Diverging Research Trajectories

Institutional Support and Faculty Composition

Table 1: Trends in Humanities Faculty and Department Resources (2017-2023)

| Metric | Discipline or Institution Type | Trend | Time Period | Magnitude |
|---|---|---|---|---|
| Institutions Awarding Degrees | English | Decline | 2017-2022 | -4% |
| Institutions Awarding Degrees | American Studies | Decline | 2017-2022 | -17% |
| Institutions Awarding Degrees | Religion | Decline | 2017-2022 | -16% |
| Tenure-Line Faculty | English | Decrease | 2020-2023 | 59% of departments reported a decrease |
| Tenure-Line Faculty | History, Anthropology, LOTE | Decrease | 2020-2023 | >40% of departments reported a decrease |
| Non-Tenure-Track Faculty | All Humanities Disciplines | Increase | 2020-2023 | Exceeded 40% of total faculty |
| Department Chair Outlook | Research Universities | Optimistic | 2023-2024 | 51% of chairs |
| Department Chair Outlook | Master's Institutions | Optimistic | 2023-2024 | 29% of chairs |

Data from a national survey of humanities departments reveal a field undergoing significant contraction. From 2017 to 2022, the number of institutions awarding degrees in traditional humanities disciplines declined, with the steepest drops in American studies (-17%) and religion (-16%) [15]. This institutional retreat has been accompanied by shifts in faculty composition. English departments experienced the most widespread losses: 59% reported decreases in tenure-line faculty from 2020 to 2023 [15]. Concurrently, non-tenure-track faculty now make up more than 40% of the faculty across all humanities disciplines, indicating a trend toward precarious academic labor in these fields [15].

The perception of these challenges varies by institutional context. While a slight majority (51%) of department chairs at research universities express optimism about their discipline's future, only 29% of their counterparts at master's institutions share this outlook [15]. This suggests that the humanities' position is increasingly dependent on institutional wealth and research intensity.

Publication Patterns and Research Output

Table 2: Comparative Publication Patterns Across Disciplines

| Discipline Category | 3-Year Publication Rate | 10-Year Publication Rate | Primary Output Formats | Bibliometric Database Coverage |
|---|---|---|---|---|
| Humanities (e.g., History, Philosophy) | ~65% (Range: 46-81%) | ~85% (Range: 61-93%) | Journal articles, books, book chapters | Moderate |
| Visual & Performing Arts | ~21% (Range: 21-25%) | ~32% (Range: 30-34%) | Performances, compositions, exhibitions, critical texts | Limited |
| Natural Sciences | >90% (estimated) | >95% (estimated) | Journal articles, conference proceedings, preprints | Comprehensive |
| Transdisciplinary Research | Growing | Growing | Journal articles | Varies by field |

Fundamental differences in publication patterns between humanities, arts, and sciences further illuminate the divergent trajectories of these fields. Analysis of publication rates reveals that in a typical humanities discipline, approximately 65% of scholars publish at least one article within a 3-year period, rising to about 85% over a decade [16]. In stark contrast, visual and performing arts disciplines exhibit faculty article publication rates of only 21% at the 3-year mark, reaching just 32% after a full decade [16]. These disparities reflect fundamentally different epistemologies and output modalities, with arts scholarship emphasizing performative, compositional, and exhibition-based work rather than textual publication [16].

Natural sciences, which rely heavily on journal articles and conference proceedings, are closely aligned with the output metrics that dominate contemporary research assessment. This alignment creates a systemic advantage for scientific disciplines in an era where bibliometric indicators heavily influence funding and prestige.

Methodological Framework: Bibliometric Analysis Protocols

Data Collection and Extraction Protocol

Experimental Protocol 1: Bibliometric Data Collection from Web of Science

- Objective: To extract comprehensive publication data for analyzing research trends in targeted fields.

- Materials: Web of Science (WOS) Core Collection database subscription, computer with internet access, data storage system.

- Procedure:

- Access the Web of Science Core Collection via institutional subscription.

- Design search queries using Boolean operators and field tags, grouping the terms so the tag applies to all of them (e.g., TS=("digital amnesia" OR "cognitive offloading")).

- Apply publication date filters to establish temporal boundaries (e.g., 2003-2022 for longitudinal analysis).

- Select document types (e.g., "Article," "Review") to refine results.

- Export the full metadata record for each publication, including authors, affiliations, citations, keywords, and references.

- Save data in formats the analysis software can import (e.g., Plain text, BibTeX, or Tab-delimited).

- Validation: Cross-validate search strategy with subject matter experts to ensure comprehensive keyword coverage.

- Notes: Web of Science was selected due to its high coverage of peer-reviewed, high-impact journals and compatibility with bibliometric software [17] [18]. The export setting "Full record and cited references" is recommended for comprehensive analysis.
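As a minimal sketch of what the "Plain text" export contains, the parser below reads it with Python's standard library. It assumes the usual Web of Science field-tagged layout (a two-character tag such as AU, TI, DE or DI followed by a space, continuation lines indented by three spaces, ER closing each record, and FN/VR/EF as file-level lines); the function names `parse_wos_plaintext` and `author_keywords` are hypothetical, and a real export should be checked against this assumed layout before use.

```python
from typing import Dict, List, Optional


def parse_wos_plaintext(text: str) -> List[Dict[str, List[str]]]:
    """Parse a Web of Science field-tagged export into a list of records.

    Assumed layout: 'TG value' lines, three-space continuation lines,
    'ER' ending each record, and 'FN', 'VR', 'EF' as file header/footer.
    """
    records: List[Dict[str, List[str]]] = []
    current: Dict[str, List[str]] = {}
    last_tag: Optional[str] = None
    for raw_line in text.lstrip("\ufeff").splitlines():  # drop a leading BOM
        line = raw_line.rstrip()
        if not line:
            continue
        if line.startswith("   ") and last_tag is not None:
            # Continuation line: another value for the most recent tag.
            current[last_tag].append(line.strip())
            continue
        tag, value = line[:2], line[3:].strip()
        if tag in ("FN", "VR", "EF"):
            continue  # file-level lines, not part of any record
        if tag == "ER":
            records.append(current)
            current, last_tag = {}, None
            continue
        current.setdefault(tag, []).append(value)
        last_tag = tag
    return records


def author_keywords(record: Dict[str, List[str]]) -> List[str]:
    """Split the semicolon-separated author keywords (DE field), lower-cased."""
    joined = " ".join(record.get("DE", []))
    return [kw.strip().lower() for kw in joined.split(";") if kw.strip()]
```

Author lines stay as separate list items, while wrapped keyword lines are rejoined before splitting on semicolons, so a keyword broken across two lines is recovered whole.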

Data Cleaning and Preprocessing Protocol

Experimental Protocol 2: Data Cleaning and Standardization

- Objective: To ensure accuracy and reliability in bibliometric analysis by standardizing dataset.

- Materials: Raw exported data from WOS, bibliometric software (Biblioshiny, CiteSpace), spreadsheet software.

- Procedure:

- Standardize author names by merging variants (e.g., "Smith, J" and "Smith, John").

- Harmonize institutional affiliations (e.g., "Univ Oxford" and "University of Oxford").

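- Merge keywords that denote the same concept for the purposes of the study (e.g., "cognitive overload" and "information overload", where the research question treats them as equivalent).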

- Unify plural and singular keyword forms (e.g., "distractions" to "distraction").

- Remove duplicate entries based on unique identifiers.

- Apply inclusion/exclusion criteria systematically to finalize dataset.

- Quality Control: Implement PRISMA flowchart methodology to document screening and selection process for transparency and replicability [18].

- Output: Cleaned dataset of bibliographic records ready for network analysis and visualization.
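Two of the cleaning steps above, duplicate removal and affiliation harmonization, can be sketched in a few lines. This is an illustrative sketch, not the procedure used in [18]: it assumes each record has been reduced to a dictionary with hypothetical `doi` and `title` keys, keys duplicates on the DOI and falls back to a punctuation-insensitive title only when the DOI is missing, and relies on a hand-curated affiliation mapping. The returned counts are the kind of numbers a PRISMA-style flowchart reports.

```python
import re
from typing import Dict, Iterable, List, Tuple


def normalise_title(title: str) -> str:
    """Lower-case a title and collapse punctuation and whitespace for matching."""
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def harmonise_affiliation(name: str, mapping: Dict[str, str]) -> str:
    """Map a raw affiliation string to its curated canonical form, if one exists."""
    key = " ".join(name.lower().split())
    return mapping.get(key, name.strip())


def deduplicate(
    records: Iterable[Dict[str, str]],
) -> Tuple[List[Dict[str, str]], Dict[str, int]]:
    """Keep the first record per DOI (or per normalised title when the DOI is missing).

    Also returns counts that can feed a PRISMA-style flow diagram.
    """
    seen = set()
    unique: List[Dict[str, str]] = []
    identified = 0
    for rec in records:
        identified += 1
        doi = rec.get("doi", "").strip().lower()
        key = ("doi", doi) if doi else ("title", normalise_title(rec.get("title", "")))
        if key in seen:
            continue  # duplicate of a record already kept
        seen.add(key)
        unique.append(rec)
    counts = {
        "identified": identified,
        "duplicates_removed": identified - len(unique),
        "after_deduplication": len(unique),
    }
    return unique, counts
```

For example, `harmonise_affiliation("Univ  Oxford", {"univ oxford": "University of Oxford"})` returns "University of Oxford". One limitation of this design is that a record with a DOI and a copy without one are not matched, so a title-based pass over the remaining records may still be needed.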

Analytical and Visualization Protocol

Experimental Protocol 3: Network Analysis and Visualization

- Objective: To map intellectual structure and thematic evolution of research fields.

- Materials: Cleaned bibliometric dataset, VOSviewer software, CiteSpace software, Biblioshiny.

- Procedure:

- Import cleaned dataset into analytical software.

- Conduct co-authorship analysis to examine collaboration patterns between institutions and countries.

- Perform keyword co-occurrence analysis to identify prominent research themes.

- Execute cluster analysis to group related concepts and identify emerging trends.

- Apply temporal mapping to visualize thematic evolution over time.

- Generate network visualizations with appropriate layout algorithms and clustering techniques.

- Interpretation: Analyze network density, centrality measures, and cluster composition to identify influential authors, institutions, and research fronts.
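To make the co-authorship step and the density and centrality measures concrete, here is a minimal sketch. It assumes each paper has already been reduced to a list of author countries (for instance, parsed from address fields), uses full counting (each paper adds one to every country pair it links), and the function names are hypothetical. Tools such as VOSviewer and CiteSpace compute these measures internally.

```python
from collections import Counter
from itertools import combinations
from typing import Dict, Iterable, List, Set


def collaboration_edges(papers: Iterable[List[str]]) -> Counter:
    """Count country pairs that co-occur on the same paper (full counting)."""
    edges: Counter = Counter()
    for countries in papers:
        for a, b in combinations(sorted(set(countries)), 2):
            edges[(a, b)] += 1
    return edges


def degree_centrality(edges: Counter) -> Dict[str, float]:
    """Normalised degree centrality: distinct partners / (number of nodes - 1)."""
    partners: Dict[str, Set[str]] = {}
    for a, b in edges:
        partners.setdefault(a, set()).add(b)
        partners.setdefault(b, set()).add(a)
    n = len(partners)
    if n < 2:
        return {node: 0.0 for node in partners}
    return {node: len(nbrs) / (n - 1) for node, nbrs in partners.items()}


def density(edges: Counter) -> float:
    """Share of possible undirected links that are actually present."""
    nodes = {node for pair in edges for node in pair}
    n = len(nodes)
    return 0.0 if n < 2 else len(edges) / (n * (n - 1) / 2)
```

In this edge-based view, a country that only publishes domestically (no cross-country pairs) does not appear in the network at all, which is worth keeping in mind when reading centrality rankings.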

Visualizing Bibliometric Analysis: Workflow and Relationships

Bibliometric Analysis Workflow

The diagram above outlines the systematic process for conducting bibliometric analysis, from research question formulation through interpretation. This workflow underpins the findings presented in this paper and serves as a replicable template for researchers investigating publication trends across disciplines.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Analytical Tools for Bibliometric Research

| Tool/Resource | Type | Primary Function | Application in Analysis |
|---|---|---|---|
| Web of Science Core Collection | Database | Comprehensive citation data | Primary source for bibliographic records and citation metrics |
| VOSviewer | Software | Visualization of scientific landscapes | Creating network maps of co-authorship, co-citation, keyword co-occurrence |
| CiteSpace | Software | Visualizing trends and patterns | Temporal analysis of research fronts, burst detection, cluster analysis |
| Biblioshiny | Software | Bibliometric analysis interface | Data preprocessing, descriptive statistics, basic visualizations |
| Journal Citation Reports | Database | Journal impact metrics | Contextualizing journal influence within specific disciplines |

These analytical tools enable the quantitative documentation of shifting research priorities. The predominance of natural science topics in high-impact journals is reflected in citation metrics and journal influence indicators, creating a self-reinforcing cycle of visibility and funding [19]. Meanwhile, humanities scholarship remains less visible in these dominant metrics systems, not necessarily due to lower quality but because of fundamental differences in publication and citation practices [16].

Case Study: Bibliometric Analysis of Cognitive Psychology Terms

The divergence between humanities-oriented and science-oriented research is particularly evident in interdisciplinary fields. Cognitive psychology, whose intellectual roots include philosophy and other humanities disciplines, has increasingly adopted the methodologies and evaluation criteria of the natural sciences. A bibliometric analysis of digital amnesia research reveals patterns characteristic of scientifically oriented fields: substantial growth in publications (from 195 articles in 2019 to 837 in 2023), significant contributions from China and the United States, and dominant themes such as health, technology, and cognitive overload [18]. This trajectory contrasts sharply with traditional humanities topics, which show more modest growth patterns.
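The growth figures above can be condensed into a compound annual growth rate, which makes publication series of different lengths easier to compare. The calculation below is an illustrative addition, not a metric reported in [18], and the function name is hypothetical.

```python
def compound_annual_growth(start: float, end: float, years: int) -> float:
    """Average yearly growth rate that turns `start` into `end` over `years` years."""
    if start <= 0 or years <= 0:
        raise ValueError("start and years must be positive")
    return (end / start) ** (1 / years) - 1


# Digital amnesia articles: 195 in 2019 and 837 in 2023 (four yearly steps).
rate = compound_annual_growth(195, 837, 2023 - 2019)
print(f"{rate:.1%}")  # prints 43.9%
```

A roughly 4.3-fold increase over four years therefore corresponds to about 44% growth per year.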

Research on learning technology in psychology further demonstrates this scientific orientation, with dominant themes including deep learning, self-efficacy, and the impact of COVID-19, all of which are amenable to quantitative investigation and external funding [20]. The alignment of such psychological research with natural science methodologies has likely contributed to its relative growth compared to more interpretative humanities approaches.

Discussion: Implications for Research Evaluation

The systemic advantages of natural science-oriented research in contemporary bibliometric evaluation create significant policy implications. When universities and funding agencies prioritize metrics like Journal Impact Factor and publication volume, they inherently favor disciplines whose scholarly communication practices align with these indicators [16] [19]. This practice disadvantages fields like visual and performing arts, where scholarship may take the form of performances, compositions, or exhibitions rather than journal articles [16].

The common administrative practice of grouping "Arts and Humanities" together in assessment exercises further distorts evaluation, as these domains exhibit fundamentally different publication patterns [16]. For instance, while 65% of humanities scholars publish an article within three years, only 21% of visual and performing arts faculty do so [16]. Applying the same evaluation criteria to both domains misrepresents their distinct scholarly contributions.

These methodological tensions reflect broader epistemic conflicts about what constitutes valuable knowledge and how it should be documented and assessed. As research policy increasingly emphasizes transdisciplinary collaboration to address complex societal challenges [21], developing evaluation frameworks that recognize diverse forms of scholarly excellence becomes imperative.

Survey and bibliometric data provide compelling evidence of the decline of humanities-oriented topics and the ascent of natural science-oriented research. This shift is multidimensional, reflected in institutional support, faculty composition, publication patterns, and funding flows. The case of cognitive psychology suggests that interdisciplinary fields increasingly adopt natural science methodologies, which helps them maintain visibility and resources in the contemporary research landscape.

Addressing this imbalance requires developing more nuanced evaluation frameworks that recognize disciplinary differences in knowledge production and dissemination. The Leiden Manifesto's principle of discipline-sensitive assessment offers a promising path forward [16]. By aligning evaluation practices with diverse research missions rather than imposing one-size-fits-all metrics, universities and funding agencies can foster a more epistemologically inclusive research ecosystem capable of addressing the complex challenges facing global society.

Keyword Co-Occurrence and Cluster Analysis to Visualize the Intellectual Structure of the Field

This section provides a comprehensive framework for employing keyword co-occurrence and cluster analysis to map the intellectual structure of scientific fields, with a specific focus on applications within cognitive psychology research. These bibliometric techniques enable researchers to quantitatively analyze publication databases to identify conceptual relationships, emerging trends, and knowledge domains. By transforming unstructured textual data into visual network representations, scholars can gain insights into the evolution of research fronts, thematic concentrations, and interdisciplinary connections. The section details methodological protocols from data collection through interpretation, supported by practical implementation guidelines, visualization standards, and analytical workflows adapted for cognitive psychology.

Keyword co-occurrence analysis (KCA) operates on the principle that keywords which frequently co-occur within a body of scientific literature represent conceptual relationships and thematic connections within a research field. When paired with cluster analysis, this methodology enables the identification of discrete intellectual substructures that constitute the broader knowledge domain. In cognitive psychology research, where terminology evolves rapidly and interdisciplinary connections abound, these techniques provide valuable insight into the field's conceptual organization [22].

The theoretical underpinning of this approach rests on the assumption that co-word patterns reflect shared cognitive frameworks among researchers. As scholars working on similar problems use similar terminology, these semantic patterns become measurable through bibliometric analysis. The resulting clusters represent what cognitive psychology researchers recognize as distinct yet interconnected research fronts. Within cognitive psychology specifically, these methods can trace the evolution of concepts like "executive function," "working memory," and "cognitive reserve" across subdomains and methodological approaches [23] [24].

Bibliometric mapping itself aligns with cognitive psychology principles: the visual representations created through these methods externalize the mental models shared by research communities. These maps function as cognitive artifacts that make the field's knowledge structure tangible and analyzable. For drug development professionals, understanding this intellectual landscape is crucial for identifying promising research directions, potential collaborations, and innovation opportunities at the intersection of cognitive psychology and pharmaceutical research.

Data Collection and Preprocessing Protocols

Data Source Selection and Retrieval

The foundation of any robust bibliometric analysis rests on comprehensive data collection. For cognitive psychology research, Scopus is recommended as the primary database due to its extensive coverage of psychological literature and robust application programming interface (API) for data extraction [22]. The search strategy must be carefully designed to capture the relevant literature without introducing bias:

Search Query Formulation: Begin with a pilot search using core terminology (e.g., "cognitive psychology," "executive function," "cognitive assessment") to identify additional relevant terms. The final query should combine these using Boolean operators. For example:

("cognitive psych*" OR "neurocognit*" OR "executive function*") AND (assessment OR test OR measure OR task)

Field Specifications: Restrict searches to title, abstract, and keyword fields to maintain relevance. In Scopus, this is implemented by wrapping the query in the TITLE-ABS-KEY() field code.

Temporal Delimitation: Define appropriate time frames based on research objectives. For tracking evolution, collect data across multiple decades; for current state analysis, focus on recent publications (e.g., 2010-present).

Document Type Filtering: Include only peer-reviewed journal articles, reviews, and conference papers to maintain quality, while excluding editorials, letters, and notes unless specifically relevant.
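Assembling the query programmatically helps keep the documented search string identical across reruns. The sketch below rebuilds the example query from lists of concept terms. The helper names are hypothetical, the quoting rule (quote phrases and wildcard terms) simply mirrors the example above, and the optional date filter assumes Scopus's PUBYEAR field code, which should be checked against the current Scopus advanced-search documentation.

```python
from typing import List, Optional


def or_block(terms: List[str]) -> str:
    """Join synonyms with OR, quoting multi-word phrases and wildcard terms."""
    quoted = [f'"{t}"' if (" " in t or "*" in t) else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def scopus_query(concept_groups: List[List[str]], from_year: Optional[int] = None) -> str:
    """AND together one OR-block per concept and restrict to title/abstract/keywords."""
    body = " AND ".join(or_block(group) for group in concept_groups)
    query = f"TITLE-ABS-KEY({body})"
    if from_year is not None:
        # PUBYEAR > (year - 1) keeps records published in from_year or later.
        query += f" AND PUBYEAR > {from_year - 1}"
    return query


groups = [
    ["cognitive psych*", "neurocognit*", "executive function*"],
    ["assessment", "test", "measure", "task"],
]
print(scopus_query(groups, from_year=2010))
```

Storing `groups` alongside the search date and result counts gives a reproducible record of the retrieval step.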

The retrieval process should be documented thoroughly, including search date, exact query, and result counts at each stage. This ensures reproducibility, a critical consideration for both bibliometric and cognitive psychology research standards [22].

Data Cleaning and Standardization

Raw bibliographic data requires extensive preprocessing to ensure analytical validity. This process, known as data disambiguation, addresses inconsistencies in terminology that would otherwise compromise analysis [22]. For cognitive psychology datasets, implement the following standardization protocol:

Keyword Normalization: Create a thesaurus file that consolidates variant forms of the same concept. For example, "service learning" and "service-learning" should be standardized to a single term [22]. Similarly, in cognitive psychology, "ADHD" and "attention-deficit/hyperactivity disorder" should be normalized.

Term Singularization: Convert plural forms to singular (e.g., "memories" → "memory," "cognitive processes" → "cognitive process") to prevent artificial separation of related concepts.

Acronym Resolution: Replace acronyms with their full forms or create bidirectional mappings (e.g., "ASD" → "autism spectrum disorder," "PASS" → "Planning, Attention, Simultaneous, Successive Processing").

Spelling Variation Reconciliation: Address differences between American and British English (e.g., "behavior" vs. "behaviour") through automated replacement.

The creation of a comprehensive thesaurus file is essential for this process. This file should document all transformations applied to the dataset, enabling transparency and replicability. For cognitive psychology research, domain-specific terminology resources like the American Psychological Association's Thesaurus of Psychological Index Terms can provide valuable guidance for standardization.
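A minimal sketch of the normalization steps above follows. It assumes a tab-separated thesaurus with `label` and `replace by` columns (the two-column layout VOSviewer accepts for thesaurus files), a tiny hypothetical British-to-American spelling table, and a deliberately naive plural rule applied to the last word of each keyword. Thesaurus keys are written in the already normalized (lower-case, singular, American) form because the lookup happens last.

```python
import csv
import io
from typing import Dict

# Hypothetical spelling pairs; a real project would use a curated list.
UK_TO_US = {"behaviour": "behavior", "ageing": "aging"}


def load_thesaurus(tsv_text: str) -> Dict[str, str]:
    """Read 'label<TAB>replace by' rows into a lower-cased lookup table."""
    reader = csv.DictReader(io.StringIO(tsv_text), delimiter="\t")
    return {row["label"].strip().lower(): row["replace by"].strip().lower() for row in reader}


def singularise(word: str) -> str:
    """Naive plural stripping; a lemmatiser is safer (e.g. 'series' is mishandled)."""
    if word.endswith("ies") and len(word) > 4:
        return word[:-3] + "y"          # memories -> memory
    if word.endswith("sses"):
        return word[:-2]                # processes -> process
    if word.endswith("s") and not word.endswith(("ss", "is", "us")):
        return word[:-1]                # distractions -> distraction
    return word                         # analysis, stimulus, stress unchanged


def normalise_keyword(keyword: str, thesaurus: Dict[str, str]) -> str:
    """Lower-case, fix spelling, singularise the head noun, then apply the thesaurus."""
    words = [UK_TO_US.get(w, w) for w in keyword.lower().split()]
    if words:
        words[-1] = singularise(words[-1])
    term = " ".join(words)
    return thesaurus.get(term, term)
```

With a thesaurus that maps `adhd` to `attention-deficit/hyperactivity disorder`, both the acronym and the full form end up as the same node in the co-occurrence network.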

Data Extraction and Matrix Formation

Once cleaned, the keyword data must be transformed into analytical matrices. The primary structure for co-occurrence analysis is the co-occurrence matrix, a symmetric matrix where cells represent the frequency with which pairs of keywords appear together in the same documents [25]. The technical implementation involves:

Frequency Thresholding: Apply minimum occurrence thresholds to filter out rare terms that lack conceptual significance. The probabilistic model proposed by Zhou et al. (2022) can determine statistically significant thresholds rather than relying on arbitrary cutoffs [25].

Matrix Construction: Generate a keyword × keyword matrix where each cell entry \( co_{ij} \) represents the number of documents in which both keyword \( i \) and keyword \( j \) appear.

Normalization (Optional): Apply similarity measures such as cosine similarity or the Jaccard index to normalize co-occurrence frequencies relative to the individual term frequencies: \( \text{cosine}_{ij} = \frac{co_{ij}}{\sqrt{f_i \cdot f_j}} \) and \( \text{jaccard}_{ij} = \frac{co_{ij}}{f_i + f_j - co_{ij}} \), where \( f_i \) and \( f_j \) are the frequencies (document counts) of keywords \( i \) and \( j \) respectively.

This matrix serves as the input for subsequent cluster analysis and network visualization, transforming textual information into quantifiable relational data.
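The three steps above (thresholding, matrix construction, normalization) can be sketched with the standard library alone. In this sketch, which is illustrative and not the implementation of any particular tool, each document is assumed to be a list of already-normalized keywords, a keyword is counted at most once per document, the threshold is a plain minimum frequency rather than the probabilistic model of [25], and the diagonal is left at zero (some tools store \( f_i \) there instead).

```python
from collections import Counter
from itertools import combinations
from math import sqrt
from typing import Dict, List, Tuple


def cooccurrence(docs: List[List[str]], min_freq: int = 1) -> Tuple[Dict[str, int], Counter]:
    """Return document frequencies f_i and pair counts co_ij for frequent keywords."""
    doc_sets = [set(d) for d in docs]  # count a keyword at most once per document
    freq = Counter(k for d in doc_sets for k in d)
    kept = {k: f for k, f in freq.items() if f >= min_freq}  # frequency threshold
    co: Counter = Counter()
    for d in doc_sets:
        for a, b in combinations(sorted(k for k in d if k in kept), 2):
            co[(a, b)] += 1  # each pair stored once, alphabetically ordered
    return kept, co


def to_matrix(kept: Dict[str, int], co: Counter) -> Tuple[List[str], List[List[int]]]:
    """Expand pair counts into a symmetric keyword x keyword matrix (diagonal left at 0)."""
    terms = sorted(kept)
    index = {t: i for i, t in enumerate(terms)}
    matrix = [[0] * len(terms) for _ in terms]
    for (a, b), count in co.items():
        matrix[index[a]][index[b]] = matrix[index[b]][index[a]] = count
    return terms, matrix


def cosine(co_ij: int, f_i: int, f_j: int) -> float:
    return co_ij / sqrt(f_i * f_j)


def jaccard(co_ij: int, f_i: int, f_j: int) -> float:
    return co_ij / (f_i + f_j - co_ij)
```

For example, if "working memory" appears in 3 documents, "attention" in 2, and they share 2, the cosine value is \( 2/\sqrt{6} \approx 0.82 \) and the Jaccard index is \( 2/3 \).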

Analytical Procedures and Methodologies

Cluster Analysis Algorithms

Cluster analysis groups keywords based on their co-occurrence patterns, revealing the conceptual architecture of the research field. Several algorithms are applicable to bibliometric data, each with distinct advantages for cognitive psychology applications:

Table 1: Cluster Analysis Algorithms for Bibliometric Data

| Algorithm | Method Type | Key Characteristics | Cognitive Psychology Applications |
|---|---|---|---|
| K-means | Non-hierarchical | Partitions data into k pre-defined clusters; minimizes within-cluster variance [26] | Identifying distinct cognitive profiles [23]; grouping research topics [24] |
| Hierarchical - Agglomerative | Hierarchical | Builds clusters iteratively by pairing similar cases; creates dendrogram visualization [26] | Mapping conceptual hierarchies in cognitive theories |
| Ward's Method | Hierarchical | Minimizes within-cluster variance during merging; creates compact, spherical clusters [26] | Grouping countries based on information search patterns [26] |
| Single Linkage | Hierarchical | Joins clusters based on nearest neighbors; can create elongated chains [26] | Identifying conceptual bridges between cognitive domains |

For cognitive psychology research, the K-means algorithm is particularly valuable for its efficiency with large datasets and clear cluster boundaries. The algorithm follows this protocol:

Determine Optimal Cluster Number (k): Use the silhouette coefficient to evaluate clustering quality across different k values [23]. The silhouette score measures how similar an object is to its own cluster compared to other clusters, with values ranging from -1 to 1 (higher values indicating better clustering).

Initialize Centroids: Randomly select k data points as initial cluster centers.

Assignment Step: Assign each keyword to the cluster with the nearest centroid based on Euclidean distance in the co-occurrence space.

Update Step: Recalculate cluster centroids as the mean of all points in the cluster.

Iteration: Repeat assignment and update steps until cluster assignments stabilize [24].

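The algorithm minimizes the within-cluster sum of squares \( J = \sum_{j=1}^{k} \sum_{x_i \in C_j} \|x_i - c_j\|^2 \), where \( C_j \) is the set of keywords assigned to cluster \( j \) and \( \|x_i - c_j\|^2 \) is the squared Euclidean distance between keyword vector \( x_i \) and cluster center \( c_j \) [23].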
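The four steps and the objective \( J \) can be sketched as Lloyd's algorithm in plain Python. This sketch assumes each keyword has already been turned into a numeric vector (for instance, its row of the normalized co-occurrence matrix), uses a fixed seed so the random initialization is reproducible, and keeps a centroid in place if its cluster empties. The function names are hypothetical; in practice an established implementation such as scikit-learn's `KMeans` (which adds smarter initialization and multiple restarts) would normally be preferred.

```python
import random
from typing import List, Sequence, Tuple

Point = Sequence[float]


def sq_dist(a: Point, b: Point) -> float:
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(
    points: List[Point], k: int, max_iter: int = 100, seed: int = 0
) -> Tuple[List[int], List[List[float]], float]:
    """Lloyd's algorithm: returns labels, centroids and the objective J."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]  # random initial centres
    labels = [-1] * len(points)
    for _ in range(max_iter):
        # Assignment step: nearest centroid by squared Euclidean distance.
        new_labels = [min(range(k), key=lambda j: sq_dist(p, centroids[j])) for p in points]
        if new_labels == labels:
            break  # assignments are stable, so the algorithm has converged
        labels = new_labels
        # Update step: each centroid becomes the mean of its members.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # keep the old centre if a cluster empties
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    objective = sum(sq_dist(p, centroids[lab]) for p, lab in zip(points, labels))
    return labels, centroids, objective
```

Because the result depends on the initial centroids, running it with several seeds and keeping the labeling with the lowest objective is a common safeguard.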

Determining Optimal Cluster Number

Selecting the appropriate number of clusters is critical for meaningful interpretation. The silhouette method provides a robust approach for determining optimal k values [23]. Implementation protocol:

Compute silhouette scores for k values ranging from 2 to \( \sqrt{n} \) (where n is the number of keywords).

For each k, calculate the average silhouette width across all keywords, where each keyword's score is \( s(i) = \frac{b(i) - a(i)}{\max\{a(i), b(i)\}} \), \( a(i) \) is the mean distance between i and other points in the same cluster, and \( b(i) \) is the mean distance between i and points in the nearest neighboring cluster.

Select the k value that maximizes the average silhouette score.
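The silhouette procedure can be sketched directly from the definition of \( s(i) \). This is a plain-Python illustration with hypothetical function names; it assumes the candidate labelings for each k have already been produced by a clustering run, assigns singleton clusters a score of 0 (the usual convention), and requires at least two clusters per labeling. Libraries such as scikit-learn provide an equivalent `silhouette_score`.

```python
from math import dist
from typing import Dict, List, Sequence


def silhouette_scores(points: List[Sequence[float]], labels: List[int]) -> List[float]:
    """Per-point s(i) = (b(i) - a(i)) / max(a(i), b(i)); needs at least two clusters."""
    clusters = set(labels)
    scores: List[float] = []
    for i, (p, own_label) in enumerate(zip(points, labels)):
        same = [dist(p, q) for j, (q, lab) in enumerate(zip(points, labels))
                if lab == own_label and j != i]
        if not same:
            scores.append(0.0)  # usual convention for a singleton cluster
            continue
        a = sum(same) / len(same)  # mean distance within own cluster
        b = min(  # mean distance to the nearest other cluster
            sum(dist(p, q) for q, lab in zip(points, labels) if lab == c) / labels.count(c)
            for c in clusters if c != own_label
        )
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return scores


def mean_silhouette(points: List[Sequence[float]], labels: List[int]) -> float:
    scores = silhouette_scores(points, labels)
    return sum(scores) / len(scores)


def best_k(points: List[Sequence[float]], labelings: Dict[int, List[int]]) -> int:
    """Pick the k whose clustering has the highest average silhouette width."""
    return max(labelings, key=lambda k: mean_silhouette(points, labelings[k]))
```

As the next paragraph notes, the k chosen this way is a statistical starting point and should still be checked for conceptual interpretability.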

For cognitive psychology applications, also consider conceptual interpretability when finalizing cluster numbers. Statistical optimization should be balanced with theoretical coherence to ensure clusters represent meaningful research domains rather than mathematical artifacts.

Validation and Stability Assessment

Cluster solutions require validation to ensure reliability. Implement the following validation protocol:

Internal Validation: Calculate Dunn indices and within-cluster sum of squares to assess compactness and separation.

Stability Testing: Apply resampling methods (bootstrapping) to determine how consistently keywords cluster across subsamples.

Conceptual Validation: Subject clusters to domain expert review to assess face validity and conceptual coherence within cognitive psychology.

This multi-method validation approach ensures that identified clusters represent genuine intellectual structure rather than random patterning in the data.
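The two internal validation indices can be computed as follows. In this sketch (function names hypothetical), the Dunn index is the smallest distance between points in different clusters divided by the largest distance between two points in the same cluster, so higher values indicate compact, well-separated clusters; the within-cluster sum of squares is measured around each cluster's mean. Both use Euclidean distance on the same keyword vectors that were clustered, and the Dunn index assumes at least two clusters.

```python
from itertools import combinations
from math import dist
from typing import Dict, List, Sequence


def dunn_index(points: List[Sequence[float]], labels: List[int]) -> float:
    """Smallest between-cluster distance divided by the largest cluster diameter."""
    groups: Dict[int, List[Sequence[float]]] = {}
    for p, lab in zip(points, labels):
        groups.setdefault(lab, []).append(p)
    # Diameter: largest distance between two members of the same cluster.
    diameter = max(
        (dist(p, q) for members in groups.values() for p, q in combinations(members, 2)),
        default=0.0,
    )
    # Separation: smallest distance between members of different clusters.
    separation = min(
        dist(p, q)
        for a, b in combinations(groups, 2)
        for p in groups[a]
        for q in groups[b]
    )
    return float("inf") if diameter == 0 else separation / diameter


def within_cluster_ss(points: List[Sequence[float]], labels: List[int]) -> float:
    """Sum of squared distances from each point to its cluster mean."""
    total = 0.0
    for lab in set(labels):
        members = [p for p, other in zip(points, labels) if other == lab]
        centre = [sum(col) / len(members) for col in zip(*members)]
        total += sum(dist(p, centre) ** 2 for p in members)
    return total
```

Stability testing can reuse these functions: recompute them on bootstrap resamples and check how much the values and cluster memberships fluctuate.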

Visualization and Interpretation Frameworks

Network Visualization Principles

Effective visualization transforms analytical output into interpretable intellectual maps. The following principles guide network representation:

Node Positioning: Use force-directed algorithms (e.g., Fruchterman-Reingold) to position strongly connected nodes closer together.

Cluster Differentiation: Represent different clusters with distinct, high-contrast colors applied consistently across figures (e.g., #4285F4, #EA4335, #FBBC05, #34A853) [23].

Node Scaling: Size nodes according to frequency or centrality metrics to visualize term importance.

Label Management: Adjust label sizes proportionally to node importance and use abbreviation strategies to reduce visual clutter.

These principles balance aesthetic clarity with information density, creating visualizations that accurately represent the underlying cognitive psychology knowledge structure while remaining interpretable for diverse audiences including researchers and drug development professionals.
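To show what a force-directed layout does, here is a compact, unweighted Fruchterman-Reingold sketch in plain Python. Every pair of nodes repels with force \( k^2/d \), linked nodes attract with force \( d^2/k \), and each step is capped by a "temperature" that cools over time. The frame size, initial temperature, cooling factor of 0.97, and function name are assumptions chosen for illustration. Co-occurrence weights are ignored here, whereas library implementations such as networkx's `spring_layout` accept a weight attribute.

```python
import math
import random
from typing import Dict, List, Tuple


def fruchterman_reingold(
    nodes: List[str],
    edges: List[Tuple[str, str]],
    iterations: int = 200,
    size: float = 1.0,
    seed: int = 42,
) -> Dict[str, Tuple[float, float]]:
    """Unweighted force-directed layout inside a size x size frame."""
    rng = random.Random(seed)
    pos = {n: [rng.uniform(0, size), rng.uniform(0, size)] for n in nodes}
    k = math.sqrt(size * size / len(nodes))  # ideal distance between linked nodes
    temperature = size / 10  # largest step allowed; shrinks every iteration

    def unit_and_distance(u: str, v: str) -> Tuple[float, float, float]:
        dx, dy = pos[u][0] - pos[v][0], pos[u][1] - pos[v][1]
        d = max(math.hypot(dx, dy), 1e-9)  # avoid division by zero
        return dx / d, dy / d, d

    for _ in range(iterations):
        disp = {n: [0.0, 0.0] for n in nodes}
        for i, u in enumerate(nodes):  # repulsion k^2 / d between every pair
            for v in nodes[i + 1:]:
                ux, uy, d = unit_and_distance(u, v)
                f = k * k / d
                disp[u][0] += ux * f
                disp[u][1] += uy * f
                disp[v][0] -= ux * f
                disp[v][1] -= uy * f
        for u, v in edges:  # attraction d^2 / k along each edge
            ux, uy, d = unit_and_distance(u, v)
            f = d * d / k
            disp[u][0] -= ux * f
            disp[u][1] -= uy * f
            disp[v][0] += ux * f
            disp[v][1] += uy * f
        for n in nodes:  # capped step, then clamp to the frame
            dx, dy = disp[n]
            length = max(math.hypot(dx, dy), 1e-9)
            step = min(length, temperature)
            pos[n][0] = min(size, max(0.0, pos[n][0] + dx / length * step))
            pos[n][1] = min(size, max(0.0, pos[n][1] + dy / length * step))
        temperature *= 0.97  # geometric cooling (an assumed schedule)
    return {n: (p[0], p[1]) for n, p in pos.items()}
```

The fixed seed makes the layout reproducible, which matters when maps from different time periods are compared side by side.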

Intellectual Structure Workflow

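The complete analytical process, from data collection to visualization, follows a systematic workflow (retrieval, cleaning and standardization, co-occurrence matrix construction, clustering and validation, network visualization, and interpretation) that can be implemented using bibliometric software tools.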

Interpretation Guidelines

Transforming visualizations into meaningful insights requires systematic interpretation:

Cluster Labeling: Identify central terms within each cluster to generate descriptive labels for research themes.

Theme Characterization: Analyze the composition of each cluster to determine the core focus (methodological, conceptual, or applied).

Inter-cluster Relationships: Examine connections between clusters to identify interdisciplinary interfaces and knowledge diffusion patterns.

Temporal Evolution: Conduct longitudinal analysis by comparing maps across time periods to track conceptual emergence, convergence, or decline.
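One simple way to implement cluster labeling is to rank each cluster's terms by their within-cluster link strength, meaning the summed co-occurrence weights to other terms in the same cluster, and use the top terms as a provisional label. The sketch below is an assumption-laden illustration (hypothetical function name, pair weights such as those from a co-occurrence count, alphabetical tie-breaking); links between clusters are ignored for labeling but remain relevant for the inter-cluster analysis described above.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def label_clusters(
    clusters: Dict[str, int],
    weights: Dict[Tuple[str, str], int],
    top_n: int = 2,
) -> Dict[int, List[str]]:
    """Name each cluster after its terms with the highest within-cluster link strength."""
    strength: Dict[str, int] = defaultdict(int)
    for (a, b), w in weights.items():
        # Only links inside a single cluster count towards the label.
        if clusters.get(a) is not None and clusters.get(a) == clusters.get(b):
            strength[a] += w
            strength[b] += w
    members: Dict[int, List[str]] = defaultdict(list)
    for term, cid in clusters.items():
        members[cid].append(term)
    # Sort by descending strength, then alphabetically to break ties.
    return {
        cid: sorted(terms, key=lambda t: (-strength[t], t))[:top_n]
        for cid, terms in members.items()
    }
```

The resulting term pairs are a starting point for the descriptive labels; domain experts would still refine them during theme characterization.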

In cognitive psychology research, particular attention should be paid to connections between basic cognitive processes (e.g., attention, memory) and applied domains (e.g., cognitive assessment, intervention), as these interfaces often represent promising research frontiers with implications for drug development and cognitive enhancement.

Applications in Cognitive Psychology Research

Research Front Identification

Keyword co-occurrence and cluster analysis can identify emerging research fronts in cognitive psychology. For example, a cluster showing strong connections between "cognitive reserve," "modifiable risk factors," and "dementia prevention" would indicate an active research domain with implications for preventive interventions and pharmaceutical development [24]. The probabilistic model for co-occurrence analysis developed by Zhou et al. provides statistical rigor in identifying significant relationships beyond random co-occurrence [25].

Recent applications in cognitive psychology have revealed:

- Distinct cognitive profiles in neurodevelopmental disorders through k-means clustering of assessment data [23]

- Interrelationships between cognitive reserve proxies and modifiable risk factors in aging populations [24]

- Emerging connections between cognitive training paradigms and neural plasticity mechanisms

For drug development professionals, these analyses can identify promising targets for cognitive enhancement and reveal potential combination approaches integrating pharmacological and behavioral interventions.

Cognitive Assessment and Profiling

Cluster analysis has direct applications in cognitive assessment beyond literature analysis. The k-means method has been successfully employed to identify distinct cognitive profiles in clinical populations based on standardized assessment scores [23]. For example:

Table 2: Cognitive Profile Clustering Application Based on PASS Theory

| Cluster | Cognitive Characteristics | Clinical Population | Intervention Implications |
|---|---|---|---|
| Planning-Dominant | High planning, moderate attention, low simultaneous processing | ADHD, ASD comorbidities | Strategy-based learning approaches |
| Attention-Deficit | Low attention, variable other domains | ADHD subtypes | Attention training technologies |
| Global Challenge | Low scores across all PASS domains | Intellectual developmental disorders | Comprehensive cognitive support |
| Specific Processing | Discrepancies between successive and simultaneous processing | Specific learning disorders | Modality-specific instructional materials |

This application demonstrates how the same methodological approach can be applied at both the literature level and the individual difference level within cognitive psychology research.
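The profiling workflow runs k-means and then uses silhouette analysis to choose the number of clusters. It can be sketched without dependencies. The cited work used scikit-learn [23], where `sklearn.cluster.KMeans` and `sklearn.metrics.silhouette_score` cover these steps; the pure-Python version below is only an illustration under stated assumptions. It seeds centers with a deterministic farthest-point rule rather than scikit-learn's k-means++ initialization. The PASS standard scores are invented and are not drawn from any study.

```python
import math


def kmeans(points, k, n_iter=100):
    """Plain k-means with deterministic farthest-point seeding."""
    # Seeding: start from the first profile, then repeatedly add the profile
    # farthest from all centers chosen so far
    centers = [list(points[0])]
    while len(centers) < k:
        farthest = max(points, key=lambda p: min(math.dist(p, c) for c in centers))
        centers.append(list(farthest))

    labels = None
    for _ in range(n_iter):
        # Assignment step: each profile joins its nearest center
        new_labels = [min(range(k), key=lambda c: math.dist(p, centers[c])) for p in points]
        if new_labels == labels:
            break  # converged: assignments stopped changing
        labels = new_labels
        # Update step: move each center to the mean of its members
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:
                centers[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels


def silhouette(points, labels):
    """Mean silhouette coefficient (requires at least two non-empty clusters)."""
    scores = []
    for i, p in enumerate(points):
        by_cluster = {}
        for j, q in enumerate(points):
            if j != i:
                by_cluster.setdefault(labels[j], []).append(math.dist(p, q))
        own = by_cluster.get(labels[i])
        if not own:
            scores.append(0.0)  # singleton cluster scores 0 by convention
            continue
        a = sum(own) / len(own)  # mean distance within own cluster
        b = min(sum(d) / len(d) for lab, d in by_cluster.items() if lab != labels[i])
        scores.append((b - a) / max(a, b) if max(a, b) > 0 else 0.0)
    return sum(scores) / len(scores)


# Invented PASS standard scores: (Planning, Attention, Simultaneous, Successive)
profiles = [
    (110, 95, 80, 95), (112, 97, 78, 93), (108, 94, 82, 96),  # planning-dominant pattern
    (98, 72, 97, 99), (96, 70, 99, 101), (100, 74, 95, 98),   # low-attention pattern
    (72, 70, 71, 73), (70, 68, 73, 71), (74, 72, 69, 70),     # globally low pattern
]

# Choose k by the highest mean silhouette, then cluster with that k
best_k = max(range(2, 5), key=lambda k: silhouette(profiles, kmeans(profiles, k)))
labels = kmeans(profiles, best_k)
print(best_k, labels)
```

PASS scores already share a standard-score scale, so no rescaling is applied here. Mixed instruments would normally be standardized before clustering.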

Technical Implementation Toolkit

Successful implementation requires specific analytical tools and resources:

Table 3: Tools and Resources for Bibliometric Analysis

| Tool/Resource | Function | Application Note |
|---|---|---|
| VOSviewer | Network visualization and co-occurrence analysis | Well suited to keyword mapping; provides multiple layout algorithms [22] |
| Scopus API | Bibliographic data retrieval | Primary data source; comprehensive coverage of psychological literature [22] |
| R Bibliometrix | Comprehensive bibliometric analysis | Open-source alternative with full analytical workflow |
| Python scikit-learn | K-means clustering implementation | Flexible parameter tuning for clustering [23] |
| Thesaurus File | Keyword standardization | Critical for data cleaning; must be domain-specific [22] |
| Silhouette Analysis | Cluster validation | Guides the choice of cluster number; assesses solution quality [23] |
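A thesaurus file maps keyword variants to a single preferred form before co-occurrence counting. The sketch below assumes a tab-separated layout with `label` and `replace by` columns, which is how we understand VOSviewer's thesaurus files to be organized. Two further choices are assumptions of this sketch: an empty replacement means "drop this keyword", and matching is case-insensitive. The entries shown are hypothetical.

```python
import csv
import io


def load_thesaurus(text):
    """Parse a two-column, tab-separated thesaurus with 'label' and 'replace by' headers."""
    reader = csv.DictReader(io.StringIO(text), delimiter="\t")
    return {
        row["label"].strip().lower(): (row["replace by"] or "").strip().lower()
        for row in reader
    }


def standardize(keywords, thesaurus):
    """Map keyword variants to preferred forms, dropping blanks and duplicates."""
    result = []
    for keyword in keywords:
        key = keyword.strip().lower()
        term = thesaurus.get(key, key)
        # An empty replacement means "ignore this keyword" (assumption of this sketch)
        if term and term not in result:
            result.append(term)
    return result


# Hypothetical thesaurus entries for cognitive psychology keywords
THESAURUS_TEXT = (
    "label\treplace by\n"
    "wm\tworking memory\n"
    "working-memory\tworking memory\n"
    "executive functions\texecutive function\n"
    "psychology\t\n"
)
thesaurus = load_thesaurus(THESAURUS_TEXT)
print(standardize(["WM", "Working-memory", "Executive functions", "Psychology", "attention"], thesaurus))
# ['working memory', 'executive function', 'attention']
```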

Advanced Analytical Techniques

Temporal Evolution Mapping

Tracking conceptual evolution requires specialized longitudinal approaches:

Time Slicing: Divide data into consecutive time periods (e.g., 5-year intervals) and compare cluster structures across periods.

Emergence Detection: Identify keywords showing significant frequency increases or new strong co-occurrence relationships.

Trajectory Analysis: Map the movement of specific concepts between clusters over time, indicating theoretical repositioning.

These techniques reveal how cognitive psychology paradigms shift, such as the transition from behaviorist to cognitive to cognitive neuroscience frameworks, providing historical context for current research fronts.
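Time Slicing and Emergence Detection can be combined in a short routine. This is a minimal sketch assuming hypothetical records of the form `(year, keywords)`. It compares each keyword's document share, rather than its raw count, between the last two slices, so overall growth in publication volume does not inflate every term. The thresholds `min_count` and `min_ratio` are illustrative assumptions, and at least two slices are required.

```python
from collections import Counter, defaultdict


def time_slices(records, start, width=5):
    """Group (year, keywords) records into consecutive slices of `width` years."""
    slices = defaultdict(list)
    for year, keywords in records:
        if year >= start:
            slice_start = start + width * ((year - start) // width)
            slices[slice_start].append(set(keywords))
    return dict(sorted(slices.items()))


def emerging_terms(slices, min_count=2, min_ratio=2.0):
    """Keywords whose document share grew by at least `min_ratio` in the latest slice."""
    starts = list(slices)
    prev_docs, last_docs = slices[starts[-2]], slices[starts[-1]]
    prev = Counter(k for doc in prev_docs for k in doc)
    last = Counter(k for doc in last_docs for k in doc)
    emerging = {}
    for term, count in last.items():
        if count < min_count:
            continue  # too rare to call a trend
        share_last = count / len(last_docs)
        share_prev = prev[term] / len(prev_docs)
        ratio = float("inf") if share_prev == 0 else share_last / share_prev
        if ratio >= min_ratio:
            emerging[term] = ratio
    return emerging


# Hypothetical records: (publication year, author keywords)
records = [
    (2011, ["working memory", "attention"]),
    (2012, ["working memory"]),
    (2013, ["attention", "cognitive training"]),
    (2014, ["working memory", "attention"]),
    (2016, ["cognitive training", "neuroplasticity"]),
    (2017, ["neuroplasticity", "working memory"]),
    (2018, ["cognitive training", "neuroplasticity"]),
    (2019, ["attention"]),
]
slices = time_slices(records, start=2010)
emerging = emerging_terms(slices)
print(sorted(emerging.items()))
# [('cognitive training', 2.0), ('neuroplasticity', inf)]
```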

Cross-Disciplinary Integration Analysis

Cognitive psychology increasingly intersects with other fields. Cross-disciplinary analysis protocols include:

Interface Identification: Detect clusters containing terminology from multiple disciplines (e.g., cognitive psychology and computer science in "computational modeling").

Bridge Concept Detection: Identify keywords that consistently co-occur with terms from different domains, serving as conceptual bridges.

Knowledge Flow Analysis: Track citation patterns between clusters to determine directional influence.

For drug development professionals, these analyses can identify promising interdisciplinary collaboration opportunities and knowledge transfer pathways between basic cognitive research and applied pharmaceutical development.
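One way to quantify Bridge Concept Detection is the participation coefficient from network science. For each keyword i it computes P_i = 1 − Σ_s (k_is / k_i)², where k_i is the keyword's total co-occurrence weight and k_is is the weight of its links to terms from domain s. P_i is 0 when all of a keyword's partners come from one domain and grows as its links spread evenly across domains. The method is named plainly here as a substitute technique. This sketch assumes each keyword has already been assigned a domain, for example from the subject category of its source journals. The assignments and weights are hypothetical.

```python
from collections import defaultdict


def participation_coefficients(edges, domain_of):
    """P_i = 1 - sum_s (k_is / k_i)^2 over the domains s of i's co-occurrence partners."""
    total = defaultdict(float)
    per_domain = defaultdict(lambda: defaultdict(float))
    for (a, b), weight in edges.items():
        total[a] += weight
        total[b] += weight
        # Credit each endpoint with the domain of its partner
        per_domain[a][domain_of[b]] += weight
        per_domain[b][domain_of[a]] += weight
    return {
        term: 1.0 - sum((w / total[term]) ** 2 for w in per_domain[term].values())
        for term in total
    }


# Hypothetical co-occurrence weights and domain assignments
edges = {
    ("computational modeling", "working memory"): 4,
    ("computational modeling", "neural network"): 4,
    ("attention", "working memory"): 6,
    ("neural network", "deep learning"): 5,
}
domain_of = {
    "computational modeling": "psychology",
    "working memory": "psychology",
    "attention": "psychology",
    "neural network": "computer science",
    "deep learning": "computer science",
}
scores = participation_coefficients(edges, domain_of)
print(max(scores, key=scores.get))  # computational modeling
```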

Validation Frameworks

Robust validation requires multiple approaches:

Benchmarking: Compare results with established taxonomies such as the APA PsycInfo Classification Categories and Codes.

Expert Survey: Engage domain specialists in qualitative evaluation of cluster coherence and labeling.

Predictive Validation: Test whether current cluster structures predict future research trends or citation patterns.

These validation methods ensure that the intellectual structure identified through algorithmic approaches aligns with the conceptual organization recognized by the cognitive psychology research community.
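For Benchmarking, one simple agreement score is cluster purity. It is the share of terms that belong to the majority reference category of their cluster. The sketch below assumes each keyword has been mapped by hand to a reference category; the category labels are illustrative only and are not official APA codes. Purity rises trivially as the number of clusters grows. A chance-corrected index such as the adjusted Rand index (`sklearn.metrics.adjusted_rand_score` in scikit-learn) is a common complement.

```python
from collections import Counter, defaultdict


def purity(cluster_of, reference_of):
    """Share of terms that belong to their cluster's majority reference category."""
    shared = [t for t in cluster_of if t in reference_of]
    counts = defaultdict(Counter)
    for term in shared:
        counts[cluster_of[term]][reference_of[term]] += 1
    return sum(max(c.values()) for c in counts.values()) / len(shared)


# Hypothetical algorithmic clusters and hand-assigned reference categories
cluster_of = {
    "working memory": 0, "attention": 0, "executive function": 0,
    "dementia": 1, "cognitive reserve": 1, "neuroplasticity": 1,
}
reference_of = {
    "working memory": "Cognitive Processes",
    "attention": "Cognitive Processes",
    "executive function": "Cognitive Processes",
    "dementia": "Neuropsychology",
    "cognitive reserve": "Neuropsychology",
    "neuroplasticity": "Cognitive Processes",
}
print(purity(cluster_of, reference_of))  # about 0.83 (5 of 6 terms)
```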

Keyword co-occurrence and cluster analysis provide powerful methodological approaches for visualizing the intellectual structure of cognitive psychology research. When implemented with rigorous data collection, appropriate analytical protocols, and thoughtful interpretation frameworks, these techniques reveal the conceptual architecture of the field, emerging research fronts, and interdisciplinary connections. For drug development professionals and cognitive psychology researchers alike, these insights support strategic research planning, identification of innovation opportunities, and navigation of the complex knowledge landscape. Continued refinement of these methods, particularly through integration with natural language processing and machine learning, promises deeper insights into the evolving structure of cognitive science knowledge.

Bibliometric analysis is a powerful statistical method for exploring large volumes of scientific publication data. It traces how a specific field has evolved and highlights emerging areas of research [27]. This data-driven approach uses quantitative indicators to map the structure, trends, and impact of research by examining publication patterns, citation networks, and authorship data [28]. Within cognitive psychology and related scientific fields, bibliometric analysis gives researchers, institutions, and policymakers objective tools to track knowledge development from seminal works to current studies, identify research frontiers, and make informed strategic decisions about funding and research direction [29] [28].

The methodology has evolved from its origins in library science and documentation into an essential tool for navigating today's vast scientific landscape. Its recent growth stems from increased scientific output, advanced computational tools, and greater accessibility of bibliographic databases [30]. For cognitive psychology researchers and drug development professionals, bibliometric analysis offers a systematic way to track publication volume and growth trends. It also maps interdisciplinary connections among psychology, artificial intelligence, neuroscience, and pharmaceutical research [31].

Fundamental Principles of Bibliometric Analysis

Key Components and Metrics

Bibliometric analysis relies on several core components and metrics that provide different perspectives on the research landscape:

Citation Analysis: Examines the frequency and patterns of citations that publications, authors, or journals receive, serving as an indicator of scholarly impact and influence within the scientific community [28]. High citation counts often correlate with foundational works in a field.

Co-citation Analysis: Explores relationships between articles that are frequently cited together, revealing intellectual connections and clustering within research domains [28]. This method helps identify schools of thought and thematic relationships between seminal works.

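Bibliographic Coupling: Links publications based on shared references, indicating intellectual similarities or influences between current research fronts [28]. Coupling depends only on the reference lists of the citing papers. It can therefore be computed as soon as a paper is published, without waiting for citations to accumulate. This makes it particularly valuable for mapping recent developments in fast-evolving fields.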

Keyword Co-occurrence Analysis: Identifies conceptual structure by tracking recurring keywords and their relationships, revealing dominant themes and emerging topics through term frequency and association patterns [27] [28].

Authorship and Collaboration Analysis: Focuses on productivity patterns of individual authors or institutions and the extent of research collaboration, highlighting influential contributors and knowledge networks [28].
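The difference between co-citation and bibliographic coupling is easiest to see on a toy example. The sketch below takes hypothetical reference lists keyed by citing paper; the paper and reference identifiers are invented. Coupling strength counts the references that two citing papers share. Co-citation counts how many citing papers list two cited works together.

```python
from collections import Counter
from itertools import combinations


def bibliographic_coupling(references):
    """Shared-reference counts for every pair of citing papers."""
    strength = {}
    for p, q in combinations(sorted(references), 2):
        shared = len(references[p] & references[q])
        if shared:
            strength[(p, q)] = shared
    return strength


def co_citation(references):
    """Number of citing papers in which each pair of cited works appears together."""
    counts = Counter()
    for cited in references.values():
        counts.update(combinations(sorted(cited), 2))
    return counts


# Hypothetical data: citing paper -> set of cited works
references = {
    "P1": {"A", "B", "C"},
    "P2": {"B", "C", "D"},
    "P3": {"A", "D"},
}
print(bibliographic_coupling(references))  # {('P1', 'P2'): 2, ('P1', 'P3'): 1, ('P2', 'P3'): 1}
print(co_citation(references)[("B", "C")])  # 2
```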

Comparison with Other Review Methodologies

Bibliometric analysis occupies a distinct position among literature review methodologies, each with specific strengths and applications:

Table 1: Comparison of Literature Review Methods

| Method | Focus | Scope | Data Type | Primary Application |
|---|---|---|---|---|
| Bibliometric Analysis | Mapping intellectual structure and trends | Typically broad (hundreds to thousands of publications) | Quantitative publication data | Identifying research trends, networks, and emerging topics |
| Systematic Literature Review | Answering specific research questions | Usually narrow and focused | Qualitative synthesis of evidence | Evidence-based conclusions on specific phenomena |
| Meta-Analysis | Statistical synthesis of effect sizes | Empirical studies with comparable statistics | Quantitative effect size data | Estimating overall effect strength and direction across studies |

Bibliometric analysis distinguishes itself through its capacity to handle exceptionally large datasets that would be unmanageable through manual review methods, while providing macro-level insights into the evolution of research fields [30]. Unlike systematic reviews that typically address narrowly focused research questions, bibliometric analysis excels at revealing the broad intellectual landscape and structural dynamics of a domain [30]. While meta-analysis statistically combines results from multiple studies on specific relationships, bibliometric analysis maps the conceptual and social networks that constitute a research field [30].

Methodological Framework

Step-by-Step Bibliometric Process

Conducting a rigorous bibliometric analysis requires careful planning and execution across multiple phases:

Table 2: Bibliometric Analysis Step-by-Step Process

| Step | Key Activities | Tools & Techniques | Output |
|---|---|---|---|
| 1. Define Research Scope | Formulate research questions; Determine temporal range; Establish inclusion/exclusion criteria | Problem formulation frameworks | Clear research objectives and boundaries |
| 2. Data Collection | Select appropriate databases; Develop comprehensive search strategy; Export bibliographic data | Databases: Scopus, Web of Science, PubMed; Boolean search operators | Comprehensive dataset in .csv, .xls, or .ris format |
| 3. Data Cleaning | Remove duplicates; Standardize author names, affiliations, and keywords; Filter irrelevant records | Reference management software (EndNote, Zotero); Text processing tools | Refined, accurate dataset ready for analysis |
| 4. Select Analytical Techniques | Choose performance analysis and science mapping methods aligned with research questions | VOSviewer, CiteSpace, Bibliometrix | Appropriate methodology selection |
| 5. Execute Analysis | Perform selected bibliometric analyses; Identify patterns and relationships | R, Python, VOSviewer, Bibliometrix | Analytical results and identified trends |
| 6. Visualize Results | Create network maps, conceptual structures, collaboration patterns | Visualization tools (VOSviewer, Gephi, Tableau) | Intuitive visual representations of findings |
| 7. Interpret and Report | Contextualize results; Discuss implications; Identify future research directions | Academic writing frameworks; Statistical reporting | Comprehensive research report or publication |
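Step 3 (Data Cleaning) usually begins by removing records retrieved from more than one database. This is a minimal sketch, assuming each record is a dict with hypothetical `doi`, `title`, and `year` fields. A record counts as a duplicate if its normalized DOI, or its normalized title plus year, has already been seen. Real Scopus or Web of Science exports use their own field names, and fuzzy title matching may be needed in practice. The DOIs below are made up.

```python
import re


def normalize_title(title):
    # Lowercase and collapse punctuation/whitespace runs into single spaces
    return re.sub(r"[^a-z0-9]+", " ", title.lower()).strip()


def normalize_doi(doi):
    # Lowercase and strip a resolver prefix such as https://doi.org/
    doi = (doi or "").strip().lower()
    return re.sub(r"^https?://(dx\.)?doi\.org/", "", doi)


def deduplicate(records):
    """Keep the first record for each DOI or title+year key."""
    seen = set()
    unique = []
    for rec in records:
        keys = {("title", normalize_title(rec.get("title", "")), rec.get("year"))}
        doi = normalize_doi(rec.get("doi"))
        if doi:
            keys.add(("doi", doi))
        if keys & seen:
            continue  # matches an earlier record on DOI or title+year
        seen |= keys
        unique.append(rec)
    return unique


# Hypothetical merged export from three databases
records = [
    {"doi": "10.1000/xyz123", "title": "Working Memory Training: A Meta-Analysis", "year": 2019, "source": "Scopus"},
    {"doi": "https://doi.org/10.1000/XYZ123", "title": "Working memory training - a meta-analysis", "year": 2019, "source": "WoS"},
    {"doi": "", "title": "Working memory training: a meta-analysis", "year": 2019, "source": "PubMed"},
    {"doi": "10.1000/abc456", "title": "Attention and Aging", "year": 2021, "source": "WoS"},
]
print([r["source"] for r in deduplicate(records)])  # ['Scopus', 'WoS']
```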

Experimental Protocol for Bibliometric Research

For researchers implementing bibliometric studies, particularly in cognitive psychology and drug development domains, the following detailed protocols ensure methodological rigor:

Protocol 1: Tracking Evolutionary Trends

This protocol examines how research topics in cognitive psychology have evolved from seminal works to current studies:

Data Source Selection: Use multiple databases (Web of Science and Scopus) for comprehensive coverage [28] [30]. For cognitive psychology, also include PsycINFO if accessible. Records retrieved from more than one database must be deduplicated before analysis.

Search Strategy: Develop a systematic search query over the title, abstract, and keyword fields. Example for cognitive psychology: (cognit* OR "executive function" OR "working memory" OR "attention" OR "decision making") AND (psycholog* OR neurosci*). Apply document-type and language filters as appropriate.

Temporal Segmentation: Divide the dataset into time slices (e.g., 5-year periods) to analyze trends and shifts in research focus [32].

Co-word Analysis Implementation: Use VOSviewer or Bibliometrix to perform co-word analysis and identify conceptual networks within each time period [30]. Set a minimum keyword occurrence threshold suited to the dataset size (typically 5-10 occurrences).

Thematic Evolution Analysis: Track keyword emergence, disappearance, and persistence across time periods to map conceptual development [27].

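Burst Detection: Apply a burst detection algorithm (e.g., Kleinberg's algorithm, as implemented in CiteSpace) to identify keywords whose frequency rises sharply over a short period, which can indicate emerging research fronts [32].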
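CiteSpace's burst detection is based on Kleinberg's algorithm. The sketch below is a simplified two-state version of Kleinberg's batched model, written from the model's general description rather than from CiteSpace's code. Each year is in either a baseline state, with keyword share p0 equal to the overall rate, or a burst state, with share s × p0. A year's cost is the binomial negative log-likelihood of its observed count, and entering the burst state costs gamma × ln(n) for n years. The function name, the defaults s = 2 and gamma = 1, and the counts are assumptions for illustration.

```python
import math


def kleinberg_two_state(r, d, s=2.0, gamma=1.0):
    """Return a 0/1 state per period (1 = burst) via Viterbi over two states.

    r: keyword-bearing documents per period; d: total documents per period.
    """
    n = len(r)
    if sum(r) == 0:
        return [0] * n  # keyword never appears: no burst possible
    p0 = sum(r) / sum(d)
    p = [p0, min(s * p0, 0.9999)]  # baseline and burst rates
    up = gamma * math.log(n)       # cost of moving from baseline to burst

    def emit(state, rt, dt):
        # Binomial negative log-likelihood, dropping the coefficient shared by both states
        return -(rt * math.log(p[state]) + (dt - rt) * math.log(1 - p[state]))

    # The sequence starts in the baseline state, so starting in burst pays `up`
    cost = [emit(0, r[0], d[0]), up + emit(1, r[0], d[0])]
    back = []
    for t in range(1, n):
        step_cost, step_back = [], []
        for j in (0, 1):
            # Only the 0 -> 1 transition carries a cost
            cands = [cost[i] + (up if (i, j) == (0, 1) else 0.0) for i in (0, 1)]
            best = min((0, 1), key=lambda i: cands[i])
            step_cost.append(cands[best] + emit(j, r[t], d[t]))
            step_back.append(best)
        cost = step_cost
        back.append(step_back)

    # Backtrack from the cheaper final state
    state = min((0, 1), key=lambda j: cost[j])
    states = [state]
    for step_back in reversed(back):
        state = step_back[state]
        states.append(state)
    return states[::-1]


# Hypothetical yearly counts for one keyword, 100 records per year
years = list(range(2010, 2018))
r = [2, 2, 2, 2, 15, 18, 2, 2]
d = [100] * 8
states = kleinberg_two_state(r, d)
print([y for y, st in zip(years, states) if st == 1])  # [2014, 2015]
```

Raising gamma makes the burst state harder to enter, so only sharper or longer rises are flagged. Raising s requires a larger jump above the baseline rate.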

Protocol 2: Forecasting Future Research Directions

This advanced protocol utilizes machine learning to predict future trends based on current bibliometric indicators:

Indicator Selection: Compile multiple predictive variables including publication growth rates, citation accumulation patterns, review-to-research article ratios, and patent references [32].