Multivoxel Pattern Analysis for Memory Representations: From Neural Decoding to Clinical Applications

Abstract

This article provides a comprehensive examination of multivoxel pattern analysis (MVPA) as a tool for investigating distributed neural representations of memory. Written for researchers, scientists, and drug development professionals, it covers the foundational principles of MVPA, contrasts it with traditional univariate fMRI approaches, and establishes its theoretical basis for studying memory engrams and reinstatement. The review details methodological workflows, from trial estimation and feature selection to classifier training with Support Vector Machines (SVM), and presents practical applications in decoding episodic memory states. We address critical troubleshooting considerations for optimizing MVPA in memory studies, including data preprocessing, dimensionality reduction, and cross-validation strategies. Finally, we assess MVPA's utility by comparing its sensitivity to univariate methods and showcasing its emerging role as a biomarker in clinical populations with memory impairments, such as major depressive disorder and opioid use disorder. This synthesis aims to equip researchers with the knowledge to implement MVPA and highlights its potential for transforming memory research and therapeutic development.

Theoretical Foundations: How MVPA Reveals Distributed Memory Codes

In functional neuroimaging, the predominant approach to data analysis has historically been the mass-univariate method, typified by the General Linear Model (GLM). This framework treats each voxel in the brain independently, testing for condition-specific effects one voxel at a time [1]. While powerful for identifying which brain regions are globally engaged during a task, this approach inherently disregards the information embedded in the covariance between voxels. It operates on the principle of functional segregation, seeking to localize cognitive functions to specific anatomic regions. However, a fundamental challenge in neuroscience is that the brain is a distributed processing system, with even basic tasks requiring the coordinated activity of neurons across multiple regions [1]. The information underlying complex cognitive functions, including memory, is often encoded in distributed spatial patterns of neural activity rather than isolated, peak responses in dedicated modules.

Multivariate Pattern Analysis (MVPA) represents a paradigm shift, moving beyond the "where" question to address "how" information is represented in the brain. The foundational insight of MVPA is that the pattern of activity across a population of voxels—even those showing only weak or moderate responses—can carry meaningful information about a stimulus, task, or cognitive state [2]. The origins of MVPA in fMRI are often traced to a seminal 2001 paper by Haxby et al., which investigated the distributed representation of faces and objects in the ventral temporal cortex [2] [1]. This study demonstrated that object categories are encoded as distinct patterns of neural activity and that these distinctive patterns persist even when the most category-selective voxels are excluded from analysis. This finding stood in stark contrast to a strong modular account of brain function and highlighted the superior sensitivity of multivariate methods for decoding the information content of neural population codes.

Core Principles: Contrasting Mass-Univariate and Multivariate Approaches

The distinction between mass-univariate analysis and MVPA is not merely a technical choice but reflects different models of cortical organization and information representation. The table below summarizes the fundamental differences between these two analytical frameworks.

Table 1: Core Differences Between Mass-Univariate Analysis and MVPA

| Feature | Mass-Univariate Analysis (GLM) | Multivariate Pattern Analysis (MVPA) |
|---|---|---|
| Unit of Analysis | Individual voxels tested independently | Patterns of activity across multiple voxels analyzed jointly |
| Primary Question | Where does a specific condition evoke significant activity? | What information is encoded in a region's distributed activity pattern? |
| Handling of Covariance | Disregards covariance between voxels | Leverages covariance between voxels as a source of information |
| Sensitivity | Sensitive to the mean activation level of a region | Sensitive to the distributed pattern, even with weak mean activation |
| Model of Representation | Functional specialization / modularity | Distributed population coding |
| Typical Output | Statistical parametric maps | Classification accuracy, pattern similarity, model performance |

MVPA is not a single algorithm but a diverse family of methods united by the common principle of analyzing neural responses as patterns [2]. These methods are specifically designed to identify spatial and/or temporal patterns in the data that differentiate between cognitive tasks, perceptual states, or subject groups [1]. The enhanced sensitivity of MVPA is particularly valuable for detecting subtle, yet behaviorally relevant, neural signals that are spatially intermixed and would be missed by analyses that focus on the overall activation level of a region [3].
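The contrast between the two frameworks can be illustrated with a small simulation. The sketch below is not taken from any cited study: it assumes a hypothetical ROI of 50 voxels in which two conditions evoke opposite-signed patterns whose average across voxels is zero, so the ROI-mean response is the same in both conditions while the multivoxel pattern differs. All variable names and parameter values are illustrative.

```python
import numpy as np
from sklearn.model_selection import StratifiedKFold, cross_val_score
from sklearn.svm import SVC

rng = np.random.default_rng(0)
n_trials, n_voxels = 40, 50  # trials per condition, voxels in a hypothetical ROI

# Condition-specific pattern whose mean across voxels is exactly zero,
# so it cannot shift the ROI-average response.
pattern = rng.standard_normal(n_voxels)
pattern -= pattern.mean()

# Simulated single-trial patterns: signal plus independent voxel noise.
cond_a = 0.5 * pattern + rng.standard_normal((n_trials, n_voxels))
cond_b = -0.5 * pattern + rng.standard_normal((n_trials, n_voxels))

# Univariate-style summary: difference in ROI-average activation.
roi_mean_difference = cond_a.mean() - cond_b.mean()

# Multivariate summary: cross-validated accuracy of a linear SVM.
X = np.vstack([cond_a, cond_b])
y = np.repeat([0, 1], n_trials)
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
accuracy = cross_val_score(SVC(kernel="linear"), X, y, cv=cv).mean()

print(f"ROI mean difference: {roi_mean_difference:.3f}")
print(f"Decoding accuracy:   {accuracy:.2f}")
```

In this toy setting the ROI-mean difference stays near zero while the classifier separates the conditions well above the 50% chance level, which is the situation described in the "Sensitivity" row of the table.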

Key MVPA Variants and Experimental Protocols

Several MVPA variants have been developed to address different research questions. The following sections outline the purpose and provide a detailed experimental protocol for four major types of MVPA.

Decoding

- Conceptual Overview: Decoding, or pattern classification, is the most common form of MVPA. It uses machine learning classifiers (e.g., Support Vector Machines, linear discriminant analysis) to determine if a brain region's activity pattern contains enough information to predict a stimulus, cognitive state, or behavior. The classifier is trained on a subset of brain data with known conditions and then tested on a held-out dataset to assess its predictive accuracy [4] [1].

- Detailed Experimental Protocol:

- Experimental Design: A block, event-related, or hybrid design is used to present stimuli or tasks. A hybrid blocked fast-event-related design has been reported to work well for MVPA: it combines the rest periods of a block design with the randomly alternating trials of a rapid event-related design, which mitigates participant anticipation and strategy while allowing for feedback periods [5].

- fMRI Data Acquisition: Standard BOLD fMRI data is collected. The design must include multiple independent examples (trials) of each condition of interest to enable training and testing of the classifier.

- Preprocessing: Data undergo standard preprocessing (motion correction, slice-time correction, normalization, smoothing). Because excessive smoothing can blur the fine-grained spatial patterns that MVPA relies on, smoothing kernels are typically kept small or omitted.

- Feature Selection: A region of interest (ROI) is defined (anatomically or functionally), or a searchlight approach is used, in which a small spherical neighborhood of voxels is moved across the entire brain and a classifier is trained and tested at each location.

- Pattern Assembly: For each trial or time point, a pattern of activity (a vector of beta weights or raw signal) is extracted from the selected voxels.

- Classification:

- Patterns and their associated condition labels are split into training and test sets using cross-validation.

- A classifier is trained on the training set to learn the mapping between activity patterns and conditions.

- The trained classifier predicts the conditions in the held-out test set.

- Validation: Classification accuracy is calculated as the proportion of correctly predicted test trials and is compared statistically against chance level (e.g., 50% for two balanced classes), for example with a permutation test.
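The classification and validation steps can be sketched with scikit-learn. This is a minimal illustration under stated assumptions rather than a prescribed pipeline: the trial patterns are simulated, the run structure (6 runs, 8 trials per condition per run) is hypothetical, and leave-one-run-out cross-validation is one common choice for keeping training and test data independent.

```python
import numpy as np
from sklearn.model_selection import LeaveOneGroupOut, permutation_test_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

rng = np.random.default_rng(1)
n_runs, trials_per_condition, n_voxels = 6, 8, 100  # hypothetical design

# Simulated trial-wise patterns: two conditions with opposite-signed signal.
signal = 0.4 * rng.standard_normal(n_voxels)
X_parts, y_parts, run_parts = [], [], []
for run in range(n_runs):
    for label, sign in ((0, -1.0), (1, 1.0)):
        noise = rng.standard_normal((trials_per_condition, n_voxels))
        X_parts.append(sign * signal + noise)
        y_parts.append(np.full(trials_per_condition, label))
        run_parts.append(np.full(trials_per_condition, run))
X = np.vstack(X_parts)            # (n_trials, n_voxels)
y = np.concatenate(y_parts)       # condition labels
runs = np.concatenate(run_parts)  # scanner run of each trial

# Scaling is fit inside each training fold, so no test data leaks into it.
classifier = make_pipeline(StandardScaler(), SVC(kernel="linear", C=1.0))

# Leave-one-run-out accuracy, plus a null distribution obtained by
# shuffling labels within runs and repeating the cross-validation.
accuracy, null_accuracies, p_value = permutation_test_score(
    classifier, X, y, groups=runs, cv=LeaveOneGroupOut(),
    n_permutations=200, scoring="accuracy", random_state=0,
)
print(f"accuracy = {accuracy:.2f}, permutation p = {p_value:.3f}")
```

With real data, `X` would hold the beta estimates or signal values extracted in the pattern assembly step, and `runs` would hold the actual run index of each trial.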

Representational Similarity Analysis (RSA)

- Conceptual Overview: RSA investigates the structure of neural representations by comparing the similarity of activity patterns evoked by different conditions. Instead of decoding categories, it characterizes the "representational space" by creating a neural Representational Dissimilarity Matrix (RDM), which quantifies how dissimilar each condition is from every other condition based on their neural activity patterns. This neural RDM can then be compared to RDMs derived from computational models, behavioral data, or other brain regions to test hypotheses about what information is being represented [4].

- Detailed Experimental Protocol:

- Stimulus Set: A set of stimuli or conditions is designed to systematically probe a representational space (e.g., different categories of memory items, faces with varying emotions).

- fMRI Data Acquisition & Preprocessing: Same as for decoding.

- Pattern Estimation: For each condition, a stable pattern of activity is estimated from the voxels within an ROI or searchlight.

- Construct Neural RDM: For all pairs of conditions, the dissimilarity between their activity patterns is calculated (e.g., using 1 - Pearson correlation or Euclidean distance). This results in a symmetric matrix where each cell represents the neural dissimilarity between two conditions.

- Model Comparison: RDMs from competing theoretical models (e.g., a semantic model vs. a perceptual model of memory representations) are created. The models' RDMs are compared to the neural RDM using a second-order similarity measure (e.g., Kendall's Tau or Spearman's rank correlation). The model that best correlates with the neural RDM is considered the better account of the information represented in that brain region.
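The RDM construction and model comparison steps can be sketched with NumPy and SciPy. The example assumes eight hypothetical conditions drawn from two categories and simulates their voxel patterns; the "categorical" and "random" model RDMs are illustrative stand-ins for competing theoretical models.

```python
import numpy as np
from scipy.spatial.distance import pdist, squareform
from scipy.stats import spearmanr

rng = np.random.default_rng(2)
n_conditions, n_voxels = 8, 60
category = np.repeat([0, 1], 4)  # hypothetical: 4 conditions per category

# Simulated condition patterns: a shared category pattern plus noise.
category_patterns = rng.standard_normal((2, n_voxels))
patterns = category_patterns[category] + 0.5 * rng.standard_normal(
    (n_conditions, n_voxels)
)

# Neural RDM: 1 - Pearson correlation for every pair of conditions.
neural_rdm = squareform(pdist(patterns, metric="correlation"))

# Model RDMs: 0 for same-category pairs and 1 otherwise, versus a random model.
categorical_rdm = (category[:, None] != category[None, :]).astype(float)
random_rdm = squareform(rng.random(n_conditions * (n_conditions - 1) // 2))

# Compare only the upper triangle; the diagonal and the mirrored
# lower triangle carry no additional information.
upper = np.triu_indices(n_conditions, k=1)
rho_categorical, _ = spearmanr(neural_rdm[upper], categorical_rdm[upper])
rho_random, _ = spearmanr(neural_rdm[upper], random_rdm[upper])
print(f"categorical model: rho = {rho_categorical:.2f}")
print(f"random model:      rho = {rho_random:.2f}")
```

Rank-based comparison is used because the relationship between model and neural dissimilarities is not assumed to be linear.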

Pattern Expression

- Conceptual Overview: This method investigates how a specific, pre-defined neural representation is expressed in the brain under different contexts or over time. A "reference pattern" is first identified (e.g., a pattern associated with a specific memory during encoding) and its expression is then measured in other parts of the dataset (e.g., during a subsequent retrieval period or rest). This is particularly useful for tracking the reactivation of memory traces [4].

- Detailed Experimental Protocol:

- Define a Reference Pattern: A neural activity pattern is established from a "localizer" task or a specific experimental phase (e.g., the average pattern for a successfully encoded memory).

- Apply the Reference Pattern: The similarity (e.g., dot product) between this reference pattern and brain activity patterns is measured in a separate set of data (e.g., during a post-encoding rest period or during a retrieval test).

- Correlate with Behavior: The magnitude of pattern expression (similarity) is then correlated with behavioral outcomes. For instance, one can test whether the strength of a memory pattern's reactivation during rest predicts subsequent recall accuracy.
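A minimal sketch of the three steps follows. Everything here is simulated and hypothetical: each subject gets a unit-length reference pattern, rest-period volumes that contain that pattern at a subject-specific strength, and a recall score that is constructed to depend on that strength, so the resulting brain-behavior correlation is built into the simulation rather than being an empirical finding.

```python
import numpy as np
from scipy.stats import pearsonr

rng = np.random.default_rng(3)
n_subjects, n_voxels, n_rest_volumes = 20, 80, 50  # hypothetical sizes

# Hypothetical per-subject reactivation strength that drives the simulation.
reactivation_strength = rng.uniform(0.0, 1.0, n_subjects)

expression = np.empty(n_subjects)
for s in range(n_subjects):
    # Step 1: reference pattern (e.g., from encoding), scaled to unit length.
    reference = rng.standard_normal(n_voxels)
    reference /= np.linalg.norm(reference)

    # Simulated rest data: the reference pattern embedded in voxel noise.
    rest = reactivation_strength[s] * reference + 0.5 * rng.standard_normal(
        (n_rest_volumes, n_voxels)
    )

    # Step 2: pattern expression = dot product of each volume with the
    # reference, averaged over the rest period.
    expression[s] = (rest @ reference).mean()

# Step 3: relate pattern expression to a (simulated) behavioral outcome.
recall_accuracy = reactivation_strength + 0.1 * rng.standard_normal(n_subjects)
r, p = pearsonr(expression, recall_accuracy)
print(f"expression vs. recall: r = {r:.2f}, p = {p:.4f}")
```

Normalizing the reference pattern keeps expression values comparable across subjects whose reference patterns differ in overall amplitude.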

Voxel-Wise Encoding Models

- Conceptual Overview: Encoding models take a fundamentally different approach. Instead of predicting the stimulus from the brain activity (decoding), they aim to predict brain activity from a detailed model of the stimulus or cognitive state. These models characterize the "tuning" of individual voxels or patterns to specific features (e.g., visual Gabor filters, semantic features). A well-fit encoding model can effectively predict how the brain will respond to novel stimuli [4] [1].

- Detailed Experimental Protocol:

- Stimulus Feature Specification: A set of features that quantitatively describe the stimuli is defined. For memory, this could be a semantic model where each word is represented as a vector in a high-dimensional semantic space.

- Model Training: For each voxel, a model (e.g., linear regression) is trained to map the stimulus features to the voxel's activation profile across a large set of training stimuli.

- Model Testing: The quality of the encoding model is tested by using it to predict brain activity for a set of novel, held-out stimuli. The correlation between the predicted and actual brain activity is measured.

- Inference: The spatial distribution of well-predicted voxels reveals which brain regions represent the feature space described by the model.
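The training and testing steps can be sketched as a regularized linear regression fit to every voxel at once. The data are simulated: the feature matrix stands in for a stimulus feature space, and by construction only the first half of the hypothetical voxels are tuned to those features. Ridge regression is used here as one common choice; the source only specifies "e.g., linear regression".

```python
import numpy as np
from sklearn.linear_model import Ridge
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(4)
n_stimuli, n_features, n_voxels = 200, 10, 30  # hypothetical sizes

# Stimulus features and simulated voxel responses.
features = rng.standard_normal((n_stimuli, n_features))
true_weights = rng.standard_normal((n_features, n_voxels))
true_weights[:, n_voxels // 2:] = 0.0  # second half of voxels is untuned
responses = features @ true_weights + rng.standard_normal((n_stimuli, n_voxels))

# Hold out novel stimuli for testing.
F_train, F_test, R_train, R_test = train_test_split(
    features, responses, test_size=0.25, random_state=0
)

# One ridge model per voxel, fit jointly as a multi-output regression.
model = Ridge(alpha=1.0).fit(F_train, R_train)
predicted = model.predict(F_test)

def columnwise_correlation(a, b):
    """Pearson correlation between matching columns of a and b."""
    a = a - a.mean(axis=0)
    b = b - b.mean(axis=0)
    return (a * b).sum(axis=0) / np.sqrt((a**2).sum(axis=0) * (b**2).sum(axis=0))

# Prediction accuracy per voxel on the held-out stimuli.
voxel_r = columnwise_correlation(predicted, R_test)
print("mean r, tuned voxels:  ", voxel_r[: n_voxels // 2].mean().round(2))
print("mean r, untuned voxels:", voxel_r[n_voxels // 2:].mean().round(2))
```

Mapping `voxel_r` back onto the brain corresponds to the inference step: voxels with high held-out prediction accuracy are the ones whose responses the feature space describes.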

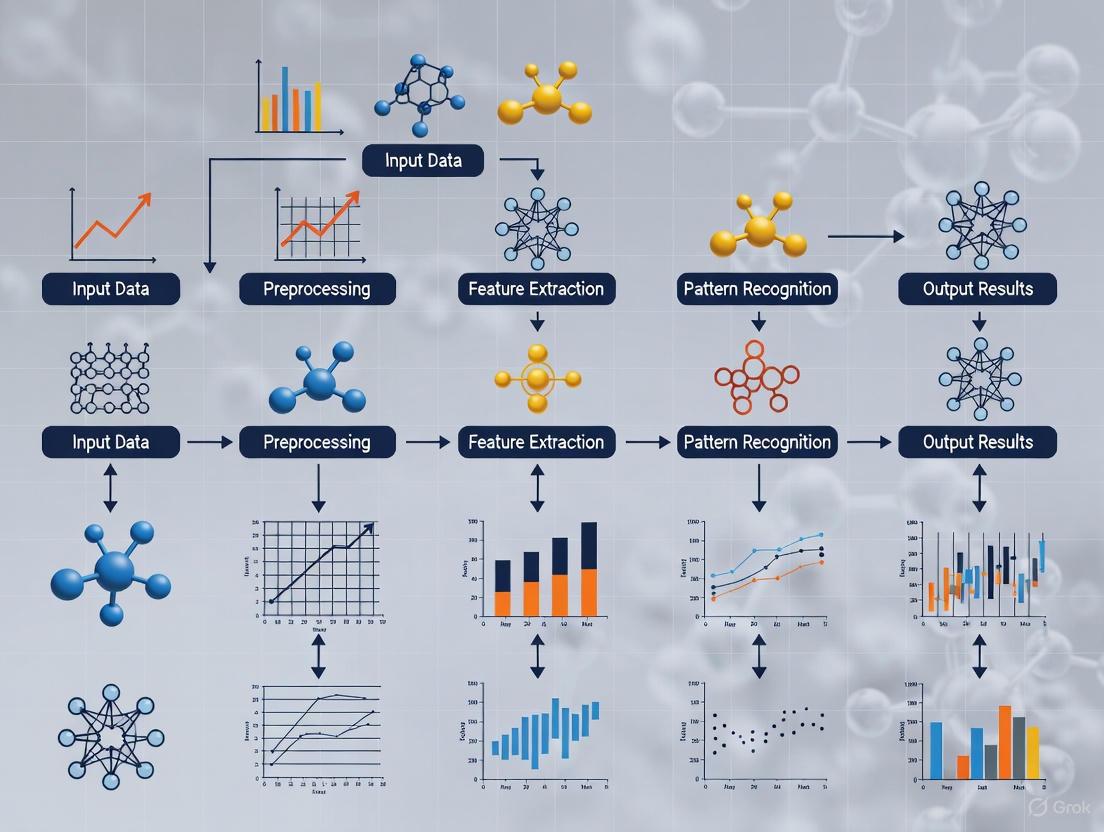

The logical relationships and applications of these MVPA variants in a research context can be visualized as a workflow.

Successful implementation of MVPA requires a combination of software tools, computational resources, and methodological knowledge. The following table details key components of the MVPA research toolkit.

Table 2: Research Reagent Solutions for MVPA

| Tool / Resource | Function / Purpose | Examples & Notes |
|---|---|---|
| MVPA Software Suites | Provides integrated pipelines for classification, RSA, and related analyses. | PyMVPA, PRoNTo, The Decoding Toolbox, CoSMoMVPA, MVPA-light. Many offer integration with popular fMRI analysis platforms. |
| General-Purpose Programming Languages | Offer maximum flexibility for custom analysis design and implementation. | Python (with scikit-learn, NumPy, SciPy) and R (with caret, e1071 packages) are most common. MATLAB with custom scripts is also widely used. |
| Classifier Algorithms | The core engine for decoding analyses; learns the mapping between brain patterns and experimental conditions. | Support Vector Machines (SVM) are most popular due to robustness in high-dimensional spaces. Others include Linear Discriminant Analysis (LDA), Logistic Regression, and k-Nearest Neighbors (k-NN). |
| High-Performance Computing (HPC) | Provides the computational power needed for intensive calculations, especially for whole-brain searchlight analysis. | University HPC clusters, cloud computing services (AWS, Google Cloud). Essential for processing large datasets in a feasible time. |
| Visualization Tools | Critical for interpreting and presenting complex multivariate results. | Includes brain map renderers (e.g., BrainNet Viewer, PyCortex) and statistical plotting libraries (e.g., Matplotlib in Python, ggplot2 in R). |

MVPA in Action: Application to Memory Representations Research

The application of MVPA has profoundly impacted memory research by providing a window into the content and dynamics of memory representations. While traditional univariate analyses could identify regions involved in memory processes (e.g., hippocampus during encoding), MVPA can decode the specific content of a memory being encoded or retrieved. For instance, MVPA can distinguish neural activity patterns associated with different categories of studied items (e.g., faces vs. houses) in the medial temporal lobe and ventral temporal cortex, even when overall activation levels are similar [2] [1].

Furthermore, MVPA is uniquely suited to track the fate of memory traces over time. Using pattern expression and similarity analyses, researchers have been able to demonstrate the reactivation of memory traces during post-encoding rest periods and sleep, linking the strength of this reactivation to subsequent memory performance. RSA allows for testing sophisticated models of the structure of memory representations, such as how the semantic relatedness between words is reflected in the similarity of their associated neural patterns. This moves beyond simple category decoding to understand the organizing principles of memory storage.

A critical consideration for MVPA in clinical and pharmacological settings is its potential as a sensitive biomarker. In developmental and clinical neuroimaging, MVPA techniques have shown promise for identifying distributed patterns of brain activity or structure that can distinguish healthy from diseased brains, predict disease onset, or differentiate treatment responders from non-responders [1]. For memory disorders like Alzheimer's disease, MVPA could potentially detect subtle alterations in the neural codes for memory before overt behavioral symptoms or gross volumetric changes become apparent.

MVPA represents a fundamental advancement in the analysis of neuroimaging data, shifting the focus from localized regional activation to the information content of distributed patterns. Its superior sensitivity makes it an indispensable tool for modern cognitive neuroscience, particularly for probing complex and latent cognitive states like memory representations. As the field moves toward more data-driven approaches and the integration of neuroimaging with other data types, the principles and methods of MVPA will continue to be central to unlocking the neural codes of human cognition.

The Reinstatement Framework describes a core process in systems neuroscience whereby the reactivation of specific memory traces, or engrams, in the hippocampus triggers the corresponding reactivation of distributed memory representations in the neocortex. This hippocampo-neocortical dialogue is fundamental to episodic memory retrieval. Modern neuroscientific investigations, leveraging tools like multivoxel pattern analysis (MVPA), are delineating the mechanisms of this process across multiple scales, from cellular ensembles in rodents to large-scale networks in humans. This framework posits that the hippocampus does not store the entire memory content but acts as an index that points to and reactivates the neocortical sites where specific sensory and contextual details are stored [6]. Understanding reinstatement is crucial for research into memory updating, consolidation, and the cognitive deficits observed in neuropsychiatric disorders, offering potential targets for therapeutic intervention.

Key Experimental Evidence for the Reinstatement Framework

The Reinstatement Framework is supported by convergent evidence from rodent models, which allow for causal manipulations of engram ensembles, and human intracranial and fMRI studies, which provide detailed temporal and spatial dynamics of neural activity during memory retrieval.

Cellular-Level Evidence from Rodent Models: Engram Reconstruction

A pivotal 2025 study by Lei et al. provided direct evidence for a dynamic reinstatement process involving the reconstruction of hippocampal engrams during the recall of remote memories [7] [8].

- Core Finding: The recall of a systems-consolidated, remote memory does not simply reactivate the original hippocampal engram formed during learning. Instead, it recruits a new ensemble of hippocampal engram cells for all subsequent retrievals [7] [8].

- Mechanism of Recruitment: The silencing of the original engram cells, facilitated by adult hippocampal neurogenesis, enables the recruitment of new engram cells. This new ensemble integrates newly experienced contextual information into the existing remote memory trace, a process termed systems reconsolidation [7].

- Circuit Coordination: The medial prefrontal cortex (mPFC) projects to and coordinates the activity between the new hippocampal engram and the valence-related engram in the basolateral amygdala (BLA), thereby integrating contextual and emotional information [7].

Table 1: Key Findings from Engram Reconstruction Study

| Aspect | Finding | Functional Significance |
|---|---|---|
| Hippocampal Role | Re-engaged during remote memory recall (Systems Reconsolidation) | Creates a labile state for memory updating [7] [8] |
| Engram Fate | Original engram silenced; new engram recruited | Prevents interference, allows incorporation of new information [7] |
| Role of Neurogenesis | Necessary for silencing original engrams | Facilitates the recruitment of new engram cells [7] |
| Cross-System Integration | mPFC coordinates hippocampus and amygdala activity | Integrates updated context with original memory valence [7] |

Systems-Level Evidence from Human Studies: Coordinated Reinstatement

Consistent with the predictions of the framework, a 2019 human intracranial EEG (iEEG) study demonstrated tightly coordinated reinstatement between the hippocampus and neocortex during episodic memory retrieval [9].

- Temporal Dissociation: The study revealed a double dissociation in the timing and content of reinstatement. The hippocampus showed early reinstatement of item-context associations, while the lateral temporal cortex (LTC) showed later reinstatement of item-specific information [9].

- Mechanism of Coordination: The quality of item-specific reinstatement in the LTC was predicted by the magnitude of gamma-band phase synchronization between the hippocampus and LTC. This suggests that inter-regional oscillatory coupling is a mechanism for coordinating reinstatement [9].

- Representational Format: The hippocampus primarily reinstated bound item-context representations (e.g., an object in a specific location), whereas neocortical areas reinstated more invariant item information, consistent with the hippocampus acting as an index for neocortical content [9].

Table 2: Characteristics of Representational Reinstatement in Human Hippocampus and Neocortex

| Characteristic | Hippocampus | Lateral Temporal Cortex (LTC) |
|---|---|---|
| Representational Content | Item-context associations | Item-specific information |
| Timing of Reinstatement | Early (~0-0.5s post-stimulus) | Later (~1-3s post-stimulus) |
| Key Frequency Band | Not Specified | Gamma (30-100 Hz) |
| Theoretical Role | Index or pointer | Storage of sensory details |
| Dependence on Coordination | Initiates reinstatement | Reinstatement quality depends on hippocampal-LTC gamma synchronization [9] |

Experimental Protocols for Investigating Reinstatement

The following protocols detail the methodologies used in the key studies cited, providing a template for investigating the reinstatement framework.

Protocol: Tracing Engram Ensembles Across Memory States

This protocol is adapted from Lei et al. (2025) for investigating systems reconsolidation in rodent models [7].

Objective: To trace the fate of hippocampal engram ensembles from memory acquisition through systems reconsolidation and test memory updating.

Materials and Reagents:

- Triple-event labeling tool: A genetically engineered system for labeling neurons active during three distinct events (e.g., acquisition, remote recall, and a new context).

- Activity-dependent promoters: such as c-Fos-tTA or TRAP for labeling engram ensembles.

- Two-photon microscope: for longitudinal in vivo imaging of engram ensemble activity.

- Contextual Fear Conditioning (CFC) apparatus: consisting of distinct contexts.

- Viral vectors: for expression of actuators (e.g., opsins) or sensors (e.g., GCaMP).

Procedure:

- Label Acquisition Engram: Subject mice to CFC in Context A. Use the activity-dependent system to label the hippocampal (e.g., DG, CA1) engram ensemble formed during this training.

- Systems Consolidation: House mice in their home cage for a remote time period (e.g., 21 days).

- Label Recall Engram: On day 21, re-expose mice to Context A to trigger remote memory recall. Simultaneously, label the reactivated engram ensemble using a distinct reporter.

- Memory Updating Test: Expose mice to a novel, safe Context B. Test fear response in both the original (A) and new (B) contexts to assess generalization and updating.

- In Vivo Imaging: Throughout the procedure, use two-photon calcium imaging to track the activity of both the original and recall-recruited engram ensembles.

- Functional Manipulation: Use opto- or chemogenetics to inhibit either the original or new engram ensemble during specific phases to test their necessity for recall and updating.

Analysis:

- Quantify the overlap between engram ensembles from different phases.

- Correlate the activity of new engram cells with behavioral expression of updated memory.

- Use immunohistochemistry (e.g., for c-Fos) to validate ensemble activity and neurogenesis (e.g., for doublecortin) [7].
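The first analysis step, quantifying ensemble overlap, is often summarized by comparing the observed fraction of double-labeled cells with the fraction expected if the two labels were independent. The sketch below illustrates that general idea on boolean masks; it is an assumption about how overlap might be computed, not the specific procedure used in the cited study, and the function name and toy data are hypothetical.

```python
import numpy as np

def ensemble_overlap(mask_a, mask_b):
    """Overlap statistics for two ensembles defined over the same cells.

    mask_a, mask_b: boolean-like arrays, True where a cell carries the label.
    """
    mask_a = np.asarray(mask_a, dtype=bool)
    mask_b = np.asarray(mask_b, dtype=bool)
    n_cells = mask_a.size
    both = np.logical_and(mask_a, mask_b).sum()
    either = np.logical_or(mask_a, mask_b).sum()
    return {
        # Fraction of all cells carrying both labels.
        "observed": both / n_cells,
        # Fraction expected if the two labels were statistically independent.
        "chance": (mask_a.sum() / n_cells) * (mask_b.sum() / n_cells),
        # Shared cells relative to cells carrying at least one label.
        "jaccard": both / either if either > 0 else 0.0,
    }

# Toy example with 8 cells: 3 labeled at acquisition, 2 at recall, 1 shared.
acquisition = [1, 1, 0, 0, 1, 0, 0, 0]
recall = [0, 1, 0, 0, 0, 1, 0, 0]
result = ensemble_overlap(acquisition, recall)
print(result)
```

An observed overlap close to the chance value would be consistent with recall recruiting a largely different ensemble, whereas an overlap well above chance would indicate reactivation of the original cells.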

Protocol: Human iEEG for Tracking Reinstatement Dynamics

This protocol is adapted from the 2019 Nature Communications study for use in epilepsy patients with implanted electrodes [9].

Objective: To simultaneously track the content and timing of representational reinstatement in the hippocampus and neocortex during episodic memory retrieval.

Materials and Reagents:

- Intracranial EEG (iEEG) electrodes: implanted in the hippocampus and lateral temporal cortex (LTC).

- Virtual Reality (VR) system: for a spatial navigation memory task.

- Computational resources: for multivariate pattern analysis (MVPA) and time-frequency analysis.

Procedure:

- Task Design: Participants perform a VR task. During encoding, they navigate a room and encounter objects in specific locations. During retrieval, they are presented with objects in either the same (congruent) or a different (incongruent) context and must make a memory judgment.

- Data Acquisition: Simultaneously record iEEG from hippocampus and LTC during both encoding and retrieval phases.

- Representational Feature Extraction: For each brain region, construct feature vectors by concatenating time-frequency resolved power values (e.g., 1-100 Hz) in sliding time windows (e.g., 500 ms) across all electrode contacts in that region.

- Calculate Encoding-Retrieval Similarity (ERS): For each trial, compute the similarity (e.g., using Spearman's Rho) between the feature vector at a given retrieval time window and all feature vectors from the encoding period. This generates a temporal ERS map.

- Statistical Contrasting: Identify significant reinstatement by contrasting ERS maps for:

- Item-context association: Congruent vs. Incongruent trials.

- Item-specific information: Same Item vs. Different Item trials.

- Phase Synchronization Analysis: Compute phase-locking value (PLV) in the gamma band between hippocampus and LTC electrodes. Correlate PLV with the strength of neocortical item reinstatement.
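The encoding-retrieval similarity step can be sketched as a Spearman correlation between every encoding window and every retrieval window. The feature vectors below are simulated stand-ins for the concatenated time-frequency power values, and the planted reinstatement event (encoding window 3 reappearing at retrieval window 8) is purely illustrative.

```python
import numpy as np
from scipy.stats import rankdata

def ers_map(encoding, retrieval):
    """Spearman correlation between all encoding and retrieval windows.

    encoding, retrieval: arrays of shape (n_windows, n_features), where the
    features are power values flattened across frequencies and contacts.
    Returns an array of shape (n_encoding_windows, n_retrieval_windows).
    """
    def unit_ranks(x):
        # Rank within each window, then center and scale to unit length so
        # that a dot product between rows equals their Spearman correlation.
        ranks = rankdata(x, axis=1)
        ranks = ranks - ranks.mean(axis=1, keepdims=True)
        return ranks / np.linalg.norm(ranks, axis=1, keepdims=True)

    return unit_ranks(encoding) @ unit_ranks(retrieval).T

rng = np.random.default_rng(6)
n_windows, n_features = 12, 40  # hypothetical sizes

encoding = rng.standard_normal((n_windows, n_features))
retrieval = rng.standard_normal((n_windows, n_features))
# Plant a reinstatement event: retrieval window 8 resembles encoding window 3.
retrieval[8] = encoding[3] + 0.3 * rng.standard_normal(n_features)

ers = ers_map(encoding, retrieval)
peak = np.unravel_index(ers.argmax(), ers.shape)
print("peak (encoding window, retrieval window):", peak)
```

Computing one such map per trial and then contrasting maps between trial types (congruent vs. incongruent, same item vs. different item) corresponds to the statistical contrasting step.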

Analysis:

- Use cluster-based permutation tests to identify significant time-frequency clusters in ERS contrasts.

- Employ regression models to test if hippocampal reinstatement strength or hippocampal-LTC gamma synchronization predicts LTC reinstatement strength [9].
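The phase-locking value used as a predictor in these regressions can be sketched with SciPy's filtering and Hilbert-transform routines. This is a simplified illustration under several assumptions: the signals are simulated, the PLV is computed across time samples within a single epoch (across-trial variants are also common), and the 30-100 Hz band and filter order are illustrative choices.

```python
import numpy as np
from scipy.signal import butter, hilbert, sosfiltfilt

def gamma_plv(x, y, fs, band=(30.0, 100.0)):
    """Phase-locking value between two signals within a frequency band."""
    # Zero-phase band-pass filter so the filtering itself adds no phase lag.
    sos = butter(4, band, btype="bandpass", fs=fs, output="sos")
    phase_x = np.angle(hilbert(sosfiltfilt(sos, x)))
    phase_y = np.angle(hilbert(sosfiltfilt(sos, y)))
    # Length of the mean unit vector of the phase differences:
    # 1 = constant phase lag, values near 0 = no consistent relationship.
    return np.abs(np.mean(np.exp(1j * (phase_x - phase_y))))

fs = 1000.0                      # sampling rate in Hz (hypothetical)
t = np.arange(0, 2.0, 1 / fs)    # one 2-second epoch
rng = np.random.default_rng(7)

# Simulated "hippocampal" signal with a 60 Hz component, an "LTC" signal
# sharing that rhythm at a fixed lag, and an unrelated noise signal.
hippocampus = np.sin(2 * np.pi * 60 * t) + 0.5 * rng.standard_normal(t.size)
ltc_coupled = np.sin(2 * np.pi * 60 * t - np.pi / 4) + 0.5 * rng.standard_normal(t.size)
ltc_uncoupled = rng.standard_normal(t.size)

plv_coupled = gamma_plv(hippocampus, ltc_coupled, fs)
plv_uncoupled = gamma_plv(hippocampus, ltc_uncoupled, fs)
print(f"coupled PLV = {plv_coupled:.2f}, uncoupled PLV = {plv_uncoupled:.2f}")
```

Trial-wise PLV values computed this way could then enter a regression alongside hippocampal reinstatement strength to predict LTC reinstatement strength.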

Visualization of the Reinstatement Framework

The following diagrams illustrate the core concepts and experimental workflows of the reinstatement framework.

Diagram: Systems Reconsolidation and Engram Reconstruction

Diagram 1: Engram Dynamics in Systems Reconsolidation. This workflow depicts how remote memory recall triggers a shift from the original hippocampal engram to a new one, facilitated by neurogenesis and coordinated by mPFC-amygdala circuits to enable memory updating [7] [8].

Diagram: Human Hippocampal-Neocortical Reinstatement

Diagram 2: Temporal Dynamics of Human Memory Reinstatement. This sequence illustrates the content-specific and temporally dissociated reinstatement process, initiated by the hippocampus and followed by neocortical item reinstatement, coordinated via gamma-band synchronization [9].

The Scientist's Toolkit: Research Reagent Solutions

This table outlines essential reagents and tools for designing experiments on the reinstatement framework, with a focus on the protocols described above.

Table 3: Essential Research Reagents for Investigating Memory Reinstatement

| Reagent / Tool | Function | Example Use Case |
|---|---|---|
| Activity-Dependent Labeling Systems (c-Fos-tTA, TRAP) | Tags neurons that are active during a specific behavioral event with fluorescent reporters or actuators. | Labeling engram ensembles during memory acquisition, recall, or updating in rodent models [7] [6]. |
| Triple-Event Labeling Tool | Allows for sequential labeling of three distinct, experience-dependent neuronal populations. | Tracing engram ensembles across learning, remote recall, and exposure to a novel context [7]. |
| Two-Photon Calcium Imaging | Enables longitudinal, high-resolution imaging of neural activity in live, behaving animals. | Tracking the activity dynamics of labeled engram ensembles over days and weeks [7]. |
| Opto-/Chemogenetics (DREADDs, Channelrhodopsin) | Allows for precise excitation or inhibition of specific neural populations. | Testing the causal necessity of original vs. new engram ensembles for memory recall and updating [7] [6]. |
| Multivoxel Pattern Analysis (MVPA) | A computational technique for detecting distributed patterns of brain activity in fMRI data that represent specific stimuli or cognitive states. | Decoding the content of memory representations (e.g., items, contexts) from human fMRI or iEEG data [4] [9]. |
| Representational Similarity Analysis (RSA) | Quantifies the similarity between neural activity patterns, relating them to computational models or behavioral data. | Testing if neural representations during retrieval resemble those from encoding, or differ between brain regions [4] [9]. |
| Intracranial EEG (iEEG) | Records electrophysiological activity directly from the human brain, providing high temporal resolution. | Measuring the precise timing of reinstatement and inter-regional synchronization during memory retrieval [9]. |
| Virtual Reality (VR) Paradigms | Creates controlled, immersive, and ecologically valid environments for memory tasks. | Presenting complex episodic memories with dissociable item and context components during human neuroimaging [9]. |

In cognitive neuroscience, memory is not conceived as a unitary or static entity but as a dynamic process involving distinct representational states. The differentiation between active and latent memory representations provides a powerful framework for understanding how information is maintained, accessed, and used across time. From a causal perspective, a genuine memory representation must maintain a verifiable causal connection to a past event [10]. Active representations are characterized by their immediate availability for cognitive operations, typically sustained through persistent neural activity. In contrast, latent representations (also termed "activity-silent," "passive," or "silent" states) hold information that is not directly accessible but can be reactivated through specific manipulations; they are thought to be maintained through short-term synaptic plasticity rather than sustained firing [11] [12]. This application note explores how Multivoxel Pattern Analysis (MVPA) can be leveraged to identify, distinguish, and investigate these distinct mnemonic states within the context of memory research, with particular emphasis on establishing causal linkages between neural activity and mnemonic function.

The theoretical foundation for this approach rests on what has been termed the reinstatement framework, which provides a mechanistic basis for the causal linkage between an experience, the memory trace encoding it, and the subsequent episodic memory of that experience. This framework highlights the crucial role of hippocampal engrams in encoding patterns of neocortical activity that, when reactivated, constitute the neural representation of an episodic memory [10]. MVPA offers the necessary analytical sensitivity to detect the subtle, distributed pattern changes associated with these representational states, making it an indispensable tool for contemporary memory neuroscience.

Theoretical Foundations: A Causal Account of Memory Representations

Defining Characteristics of Active and Latent States

The distinction between active and latent memory representations transcends mere phenomenological description and incorporates fundamental differences in neural mechanisms, cognitive function, and causal efficacy. Active states are defined by their direct causal influence on ongoing cognition and behavior, supported by persistent neural activity in specific brain networks. These representations are readily accessible for conscious recall, cognitive manipulation, and guiding action, making them the "working" component of working memory [11].

Latent states, meanwhile, represent information that is stored but not currently in a state of active processing. These representations are thought to be maintained through synaptic mechanisms rather than sustained neural firing, offering a metabolically efficient form of storage [11]. The causal connection to past events remains intact in latent states, though it requires specific triggers (such as a "ping" or TMS pulse) to become behaviorally manifest. This transition between states is dynamic and task-dependent, allowing the cognitive system to flexibly manage its limited attentional and working memory resources [11].

The Reinstatement Framework and Causal Linkage

The causal perspective on memory representations demands that a genuine memory maintains a demonstrable connection to the specific past event it purports to represent. The reinstatement framework provides a mechanistic account of how this causal linkage is established and maintained [10]. According to this framework:

- Hippocampal engrams during encoding capture patterns of neocortical activity associated with an experience

- These encoded patterns serve as a blueprint for subsequent reactivation

- During retrieval, partial cues can trigger the hippocampal network to reinstate the original neocortical patterns

- This reinstatement constitutes the neural basis of episodic recollection

Critically, the reinstatement framework accommodates the fact that retrieved episodic information only partially determines the content of an active memory representation, which typically comprises a combination of retrieved information with semantic, schematic, and situational information [10]. This explains both the reconstructive nature of memory and the potential for gradual distortion over time, especially for remote memories where re-encoding operations create an extended causal chain from the original experience to the currently accessible memory trace.

Table 1: Neural and Functional Characteristics of Active and Latent Memory Representations

| Characteristic | Active Representations | Latent Representations |

|---|---|---|

| Neural Mechanism | Persistent neural activity | Short-term synaptic plasticity, activity-silent maintenance |

| Metabolic Cost | High | Low |

| Cognitive Accessibility | Immediately available for recall and manipulation | Requires reactivation (e.g., via retro-cue, TMS, or visual impulse) |

| Temporal Dynamics | Sustained throughout retention period | Can be maintained during delays after neural activity returns to baseline |

| Functional Role | Online processing, immediate guidance of behavior | Robust maintenance of prospectively relevant information |

| MVPA Detectability | Directly decodable from pattern analysis | May require reactivation for reliable detection |

MVPA Approaches for Detecting Memory States

Core MVPA Methodologies

Multivoxel Pattern Analysis encompasses a suite of techniques that leverage distributed patterns of brain activity to infer mental representations and processes. For memory research, several MVPA approaches have proven particularly valuable:

- Decoding: Classifiers are trained to distinguish between neural activity patterns associated with different experimental conditions or stimulus categories. Successful decoding indicates that a brain region contains information about the decoded dimension [13].

- Representational Similarity Analysis (RSA): Characterizes neural representations by comparing patterns of activity associated with different items or conditions, creating a neural representational space that can be related to behavioral measures or computational models [13].

- Cross-decoding: Tests whether classifiers trained on one set of conditions (e.g., perception) can generalize to another (e.g., memory retrieval), revealing common neural representations across domains [13].

- Pattern Expression and Voxel-wise Encoding Models: These approaches either measure the expression of specific activity patterns or model the relationship between stimulus features and neural responses [4].

These methods are particularly well-suited to investigating the active versus latent distinction because they can detect information in neural activity patterns even when overall activation levels do not differ between conditions—a crucial capability for identifying latent representations that may not involve sustained increases in regional activity [13].

Establishing Causal Links with MVPA

MVPA strengthens causal accounts of memory through several methodological strengths:

- Item-specific analysis: Unlike mass-univariate approaches that often average across trials, MVPA can track specific representations across time, allowing researchers to establish that the same neural pattern present during encoding reappears during retrieval [13].

- Reinstatement detection: Cross-decoding approaches can directly test whether neural patterns during retrieval resemble those during encoding, providing evidence for the causal reinstatement of earlier brain states [14].

- State reactivation: By applying classifiers trained on perception to memory delay periods, MVPA can detect when latent representations are brought into an active state [15].

These capabilities make MVPA uniquely positioned to address fundamental questions about the causal relationships between experience, neural representation, and subsequent memory.
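The item-specific logic described above can be illustrated with an encoding-retrieval similarity index: if patterns are reinstated, the correlation between an item's encoding and retrieval patterns should exceed the correlation between patterns of different items. The following is a minimal sketch on synthetic data, not a method taken from the cited studies; the function name `reinstatement_index`, the array shapes, and the simulated signal strengths are all illustrative assumptions.

```python
import numpy as np

def reinstatement_index(encoding, retrieval):
    """Mean same-item minus mean different-item encoding-retrieval correlation.

    encoding, retrieval: arrays of shape (n_items, n_voxels); row i of each
    array is assumed to hold the pattern for the same item.
    """
    n_items = encoding.shape[0]
    # Top-right block of the stacked correlation matrix:
    # rows index encoding items, columns index retrieval items.
    sim = np.corrcoef(encoding, retrieval)[:n_items, n_items:]
    same_item = np.diag(sim).mean()
    different_item = sim[~np.eye(n_items, dtype=bool)].mean()
    return same_item - different_item

# Synthetic example (assumption): each item has a voxel pattern that is
# partially re-expressed at retrieval, embedded in independent noise.
rng = np.random.default_rng(0)
n_items, n_voxels = 20, 150
item_codes = rng.normal(size=(n_items, n_voxels))
encoding = item_codes + rng.normal(size=(n_items, n_voxels))
retrieval = 0.5 * item_codes + rng.normal(size=(n_items, n_voxels))

index_signal = reinstatement_index(encoding, retrieval)

# Control: retrieval patterns that carry no item information.
noise_only = rng.normal(size=(n_items, n_voxels))
index_null = reinstatement_index(encoding, noise_only)
```

In a real analysis the two arrays would hold trial-wise or item-wise pattern estimates from a region of interest, and the index would be tested across participants rather than read off a single value.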

Experimental Protocols for Investigating Active and Latent States

Retro-Cue Working Memory Paradigm

The retro-cue paradigm has emerged as a powerful tool for investigating the active-latent distinction in visual working memory. This protocol leverages cueing during the maintenance delay to manipulate the state of memory representations.

Procedure:

- Encoding: Present a memory array (e.g., 2-4 colored squares) for 500ms [11].

- Initial Delay: Implement a 1s retention period with central fixation [11].

- Retro-Cue: Display a directional cue (arrow or color indicator) for 200ms that indicates which item(s) will be probed first. This cues the indicated item(s) into an active state while uncued items transition to a latent state [11].

- Secondary Encoding (Optional): In some paradigms, a second memory array (1-2 items) may be presented 1.5s after the first retro-cue, creating a complex working memory scenario with both active and latent components [11].

- Probe Phase: Present test stimuli at intervals to assess memory for both cued (active) and uncued (latent) items.

Key Manipulations:

- Active Load: Vary the number of items that must be maintained in an active state [11].

- Spatial Configuration: Manipulate the distance between memory items, as spatial proximity can affect the dissociation between active and latent states [11].

- Timing Parameters: Adjust delay durations to examine the temporal dynamics of state transitions.

Neural Measures:

- fMRI with MVPA to decode content-specific representations during delay periods.

- iEEG to measure spectral power changes (increased high-frequency activity, HFA, with decreased low-frequency activity, LFA) associated with successful memory [14].

This paradigm has revealed that active and latent memories may draw on independent cognitive resources when items are spatially distinct, but this dissociation breaks down for items in close proximity [11].

Visual Impulse ("Ping") Reactivation Protocol

The visual impulse paradigm provides a method for reactivating latent representations, allowing researchers to study the transition from latent to active states.

Procedure:

- Cue Phase: Present a feature-based cue (e.g., orientation or color) indicating which attribute participants should attend to in the upcoming target [15].

- Delay Period: Implement an extended delay (5.5-7.5s) during which participants maintain the cued information.

- Ping Manipulation: In experimental trials, present a brief (100ms) visual impulse (often a neutral stimulus such as a uniform circle) 2.5s after cue offset [15].

- Target Presentation: Display the test stimulus requiring a response based on the maintained information.

- Comparison Conditions: Include no-ping trials as a control condition.

Neural Measures:

- fMRI with cross-task decoding: Train classifiers on perceptual representations and test on delay-period activity [15].

- Functional connectivity between early visual cortex and frontoparietal regions.

- Pattern similarity analysis to compare neural representations during perception and maintenance.

This approach has demonstrated that visual impulses can transform non-sensory template representations into sensory-like formats during attentional preparation, revealing the latent sensory representations that are not directly decodable in no-ping conditions [15].

Free-Recall with Intracranial EEG

Combining free-recall tasks with intracranial EEG (iEEG) offers unprecedented temporal and spatial resolution for studying the neural signatures of successful memory formation and retrieval.

Procedure:

- Stimulus Presentation: Present lists of words (typically 12-15 items) for 1600ms each, with 750-1000ms inter-stimulus intervals [14].

- Distractor Task: Implement a 20s arithmetic task following list presentation to prevent rehearsal of recency items [14].

- Retrieval Phase: Signal recall period with auditory tone and visual cue, allowing 30-45s for free recall of list items [14].

- Response Recording: Digitally record vocal responses for subsequent scoring.

Neural Measures:

- iEEG spectral power across multiple frequency bands.

- Multivariate classifiers trained to predict memory success based on distributed spectral power patterns [14].

- Cross-decoding to identify common neural signatures of successful encoding and retrieval.

This protocol has revealed that similar patterns of increased high-frequency activity in prefrontal, medial temporal, and inferior parietal cortices—accompanied by widespread decreases in low-frequency power—predict successful memory function during both encoding and retrieval [14].
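The spectral features used in such classifiers can be sketched as band-limited power estimates per channel and epoch. The example below computes log power in a low-frequency and a high-frequency band from a simple FFT periodogram. It is an illustration only: the sampling rate, the band limits (3-8 Hz and 70-150 Hz), the helper name `band_power`, and the synthetic signals are assumptions, not parameters reported in the source.

```python
import numpy as np

def band_power(signal, fs, f_lo, f_hi):
    """Log10 of mean periodogram power of a 1-D signal within [f_lo, f_hi) Hz."""
    freqs = np.fft.rfftfreq(signal.size, d=1.0 / fs)
    power = np.abs(np.fft.rfft(signal)) ** 2 / signal.size
    in_band = (freqs >= f_lo) & (freqs < f_hi)
    return np.log10(power[in_band].mean())

fs = 1000.0                    # assumed sampling rate in Hz
t = np.arange(1600) / fs       # one 1600 ms epoch, matching the item duration
rng = np.random.default_rng(1)

# Toy epochs (assumption): the "remembered" epoch has stronger 100 Hz and
# weaker 5 Hz activity than the "forgotten" epoch.
remembered = (0.5 * np.sin(2 * np.pi * 5 * t)
              + 1.0 * np.sin(2 * np.pi * 100 * t)
              + rng.normal(scale=0.5, size=t.size))
forgotten = (1.0 * np.sin(2 * np.pi * 5 * t)
             + 0.5 * np.sin(2 * np.pi * 100 * t)
             + rng.normal(scale=0.5, size=t.size))

LFA_BAND = (3.0, 8.0)      # assumed low-frequency band
HFA_BAND = (70.0, 150.0)   # assumed high-frequency band

features = {
    name: {
        "lfa": band_power(epoch, fs, *LFA_BAND),
        "hfa": band_power(epoch, fs, *HFA_BAND),
    }
    for name, epoch in [("remembered", remembered), ("forgotten", forgotten)]
}
```

Stacking such features across channels yields the distributed spectral pattern on which a multivariate classifier can be trained. Wavelet or multitaper estimates are common alternatives to the plain periodogram used here.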

Table 2: Comparison of Experimental Paradigms for Studying Active and Latent Memory

| Paradigm | Primary Memory Process | State Manipulation | Key Neural Correlates | MVPA Approaches |

|---|---|---|---|---|

| Retro-Cue Working Memory | Working memory maintenance | Retro-cue directs attention to specific items during delay | Sustained content-specific patterns in sensory and parietal cortices | Decoding of maintained information; Pattern similarity across states |

| Visual Impulse Reactivation | Attentional preparation | Visual impulse reactivates latent sensory templates | Enhanced sensory cortex decoding after ping; Altered V1-frontoparietal connectivity | Cross-task decoding (perception to attention); Representational similarity analysis |

| Free-Recall with iEEG | Episodic memory encoding and retrieval | Natural variation in memory success | Increased HFA with decreased LFA in prefrontal-MTL-parietal network | Multivariate classification of successful states; Cross-decoding encoding/retrieval |

Analytical Framework: MVPA for State Discrimination

Multivariate Classification of Memory States

The core analytical approach for distinguishing active and latent states involves training multivariate classifiers to identify patterns of neural activity associated with different mnemonic conditions:

Classification Pipeline:

- Feature Selection: Identify relevant neural features (voxels in fMRI, channels in iEEG/EEG, frequency bands in electrophysiology).

- Model Training: Use machine learning classifiers (e.g., L2-penalized regression, SVM) to distinguish conditions of interest (e.g., high vs. low memory load, cued vs. uncued items, successful vs. failed retrieval).

- Validation: Employ cross-validation to assess generalizability, using independent data not included in training.

- Interpretation: Examine feature weights to identify brain regions contributing most to classification.

For the active-latent distinction, classifiers can be trained to:

- Distinguish between neural activity during maintenance of active versus latent representations [11]

- Identify when latent representations are reactivated [15]

- Predict memory success based on neural states during encoding or retrieval [14]
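The classification pipeline above can be sketched with scikit-learn. This is a minimal illustration on synthetic data, assuming trial-wise patterns in a samples-by-voxels array, a binary condition label (for example cued vs. uncued), and a run label per trial for leave-one-run-out cross-validation. The choice of univariate feature selection with `k=50`, the SVM settings, and the simulated effect size are assumptions made for the example.

```python
import numpy as np
from sklearn.feature_selection import SelectKBest, f_classif
from sklearn.model_selection import LeaveOneGroupOut, cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import LinearSVC

rng = np.random.default_rng(0)
n_runs, trials_per_run, n_voxels = 6, 20, 200
runs = np.repeat(np.arange(n_runs), trials_per_run)      # run label per trial
labels = np.tile([0, 1], n_runs * trials_per_run // 2)   # e.g. cued vs. uncued

# Synthetic patterns (assumption): the first 20 voxels carry condition signal.
X = rng.normal(size=(labels.size, n_voxels))
X[:, :20] += 0.7 * labels[:, None]

# Feature selection sits inside the pipeline so that it is refit on the
# training folds only; selecting voxels on all data would bias the accuracy.
decoder = make_pipeline(
    StandardScaler(),
    SelectKBest(f_classif, k=50),
    LinearSVC(C=1.0, max_iter=10000),
)

# Validation: leave-one-run-out cross-validation.
scores = cross_val_score(decoder, X, labels, groups=runs, cv=LeaveOneGroupOut())
mean_accuracy = scores.mean()

# Interpretation: refit on all trials and map the weights back to voxel space.
decoder.fit(X, labels)
selected = decoder[1].get_support()
weights = np.zeros(n_voxels)
weights[selected] = decoder[-1].coef_.ravel()
```

Keeping every data-dependent step inside the cross-validated pipeline is the main design choice here, since it keeps the test folds independent of training.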

Cross-Decoding and Representational Similarity Analysis

Cross-decoding approaches provide particularly powerful tests for the active-latent distinction by examining whether neural patterns are shared across different cognitive states:

Cross-Decoding Logic:

- Train classifiers to distinguish stimulus categories during perception

- Test whether these classifiers can distinguish the same categories during memory maintenance or retrieval

- Successful cross-decoding indicates common neural representations across states
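The cross-decoding logic can be sketched as follows: a classifier is fit on one state (perception) and scored, without refitting, on another (memory). The data are synthetic and assume that both states share a category axis in voxel space, with a weaker signal during memory. The helper `simulate`, the gains, and the trial counts are hypothetical.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression

rng = np.random.default_rng(0)
n_voxels = 100
category_axis = rng.normal(size=n_voxels)   # hypothetical shared category code

def simulate(n_trials, signal_gain):
    """Return (patterns, labels) with a category signal of the given strength."""
    y = rng.integers(0, 2, size=n_trials)
    noise = rng.normal(size=(n_trials, n_voxels))
    X = noise + signal_gain * np.outer(2 * y - 1, category_axis)
    return X, y

X_perception, y_perception = simulate(120, signal_gain=0.30)
X_memory, y_memory = simulate(80, signal_gain=0.15)   # weaker signal in memory

# LogisticRegression applies an L2 penalty by default.
clf = LogisticRegression(C=1.0, max_iter=1000)
clf.fit(X_perception, y_perception)            # train on perception only
cross_accuracy = clf.score(X_memory, y_memory) # test on memory, no refitting
```

Above-chance `cross_accuracy` in real data would be consistent with a shared representational format. A null result is harder to interpret, because a representation could be present in a transformed format that the perception-trained classifier does not capture.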

Representational Similarity Analysis (RSA): RSA moves beyond simple classification to characterize the structure of neural representational spaces:

- Compute representational dissimilarity matrices (RDMs) for neural data by correlating activity patterns across conditions

- Create model RDMs based on cognitive theories or behavioral data

- Compare neural and model RDMs to test theoretical predictions

RSA can reveal whether the representational structure of active memories resembles that of latent memories or perceptual representations, providing insight into the format of information in different mnemonic states [13].
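The three RSA steps can be sketched in a few lines. The example below builds a neural RDM from correlation distance, defines a simple categorical model RDM, and compares the two using the Spearman correlation of their upper triangles. The condition structure, the helper names, and the synthetic patterns are illustrative assumptions.

```python
import numpy as np
from scipy.stats import spearmanr

def neural_rdm(patterns):
    """RDM of 1 - Pearson r between rows of a (n_conditions, n_voxels) array."""
    return 1.0 - np.corrcoef(patterns)

def compare_rdms(rdm_a, rdm_b):
    """Spearman correlation between the upper triangles of two RDMs."""
    upper = np.triu_indices_from(rdm_a, k=1)
    rho, _ = spearmanr(rdm_a[upper], rdm_b[upper])
    return rho

rng = np.random.default_rng(0)
categories = np.repeat([0, 1], 4)            # 8 conditions in 2 hypothetical categories
prototypes = rng.normal(size=(2, 120))       # one voxel pattern per category
patterns = prototypes[categories] + rng.normal(size=(8, 120))

rdm = neural_rdm(patterns)
# Model RDM: 0 for same-category pairs, 1 for different-category pairs.
model_rdm = (categories[:, None] != categories[None, :]).astype(float)
rho = compare_rdms(rdm, model_rdm)
```

Only the off-diagonal upper triangle enters the comparison, because the RDM is symmetric and its diagonal is zero by construction. A rank correlation is used so that the model only needs to predict the ordering of dissimilarities, not their scale.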

Table 3: Research Reagent Solutions for MVPA Memory Studies

| Research Tool | Function | Example Application | Key Considerations |

|---|---|---|---|

| Multi-voxel Pattern Analysis (MVPA) | Decodes information from distributed neural activity patterns | Identifying content-specific representations during memory delays | Enhanced sensitivity compared to mass-univariate approaches; Requires careful feature selection [4] |

| Representational Similarity Analysis (RSA) | Quantifies similarity structure of neural representations | Testing whether memory representations resemble perceptual representations | Model-based approach allows direct theory testing; Can integrate across imaging modalities [13] |

| Intracranial EEG (iEEG) | Records neural activity with high spatiotemporal resolution | Identifying spectral signatures of successful memory formation and retrieval | Clinical populations only; Excellent for localization and high-frequency activity [14] |

| Functional Connectivity MVPA (fc-MVPA) | Data-driven analysis of functional connectivity patterns | Identifying network alterations in clinical populations with memory deficits | Model-free approach; Can reveal novel network signatures [16] |

| Visual Impulse Stimulation | Reactivates latent neural representations | Converting latent memory templates to active sensory-like formats | Useful for probing representational states without behavioral reports [15] |

| Hierarchical Drift Diffusion Modeling (HDDM) | Computational modeling of decision processes | Linking memory states to decision dynamics in value-based choices | Integrates neural measures with computational accounts of behavior [17] |

Visualizing the Causal Framework and Analytical Workflow

[Figure: Causal Framework of Memory Representations]

[Figure: MVPA Analytical Workflow for State Detection]

The distinction between active and latent memory representations provides a productive framework for understanding the dynamic nature of mnemonic function. MVPA offers a powerful set of tools for investigating this distinction, allowing researchers to track specific representational content across different cognitive states and establish causal links between neural patterns and memory performance. The experimental protocols and analytical approaches outlined here provide a foundation for designing studies that can dissect the neural mechanisms underlying these distinct representational formats.

Future directions in this field include:

- Integrating MVPA with computational models to formalize theories of state transitions

- Developing real-time decoding approaches to manipulate memory states experimentally

- Combining neurostimulation with MVPA to test causal hypotheses about representation maintenance

- Applying these approaches to clinical populations to understand memory deficits in terms of disrupted state dynamics

As MVPA methodologies continue to evolve, they will undoubtedly provide increasingly sophisticated insights into the fundamental nature of memory representations and their causal role in cognition.

In memory research, a fundamental challenge is to move beyond simply identifying where in the brain a memory is stored and towards understanding how that memory is represented at the neural population level. Traditional functional magnetic resonance imaging (fMRI) analysis methods, predominantly the mass-univariate General Linear Model (GLM), have a significant limitation: they treat the covariance in activity between neighboring voxels as uninformative noise, effectively discarding it during analysis [18]. This approach overlooks the rich information embedded in the fine-grained spatial patterns of brain activity that constitute the neural population code for a memory trace.

Multivoxel Pattern Analysis (MVPA) represents a paradigm shift by treating this voxel covariance not as noise, but as the primary signal of interest [18] [4]. It operates on the principle that information is distributed across populations of neurons, and that even subtle, coordinated activity changes across many voxels can be computationally decoded to reveal the content of cognitive states, including specific memories [19]. This Application Note details how MVPA leverages voxel covariance to elucidate memory representations, providing the theoretical rationale, supporting evidence, and practical protocols for its application in cognitive neuroscience and drug development research.

Theoretical Foundation: From Mass-Univariate to Multivariate Analysis

The Limitation of the Mass-Univariate Framework

The standard GLM approach analyzes each voxel's time course independently, testing for linear correlations with a predefined model of the experimental task [18]. It is expressed as Y = Xβ + ϵ, where Y is the BOLD signal at a single voxel, X is the design matrix, β represents the model parameters, and ϵ is the error term [18]. Statistical inference then creates a map of "activated" regions. However, this method is blind to the information that may be present in the combined, patterned activity across multiple voxels, a pattern that may be more representative of a memory engram than the strong activation of any single region.
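To make the voxel-by-voxel nature of this model concrete, the sketch below fits Y = Xβ + ϵ by ordinary least squares to every voxel of a synthetic data set and computes a t-statistic for the task regressor. It is a simplified illustration: the boxcar design without hemodynamic convolution, the white-noise error, and all sizes are assumptions, and real packages add steps such as autocorrelation modeling.

```python
import numpy as np

rng = np.random.default_rng(0)
n_scans, n_voxels = 120, 50

# Design matrix X: a boxcar task regressor plus an intercept column.
task = np.tile(np.r_[np.ones(10), np.zeros(10)], n_scans // 20)
X = np.column_stack([task, np.ones(n_scans)])

# Synthetic data (assumption): only the first 5 voxels respond to the task.
true_beta = np.zeros((2, n_voxels))
true_beta[0, :5] = 2.0
true_beta[1, :] = 100.0
Y = X @ true_beta + rng.normal(size=(n_scans, n_voxels))

# Each column of Y is fit independently of every other column (voxel).
beta_hat, *_ = np.linalg.lstsq(X, Y, rcond=None)

# t-statistic for the task effect at each voxel (contrast c = [1, 0]).
residuals = Y - X @ beta_hat
dof = n_scans - np.linalg.matrix_rank(X)
sigma2 = (residuals ** 2).sum(axis=0) / dof
c = np.array([1.0, 0.0])
var_c = c @ np.linalg.inv(X.T @ X) @ c
t_map = (c @ beta_hat) / np.sqrt(sigma2 * var_c)
```

Because each voxel's fit ignores all other voxels, nothing in `t_map` reflects how voxels covary across trials, which is the information MVPA exploits.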

MVPA as a Supervised Classification Problem

MVPA reframes fMRI analysis as a pattern classification problem [18]. The "features" are the fMRI signals (e.g., beta weights or raw activity) from a cluster of voxels, and the "classes" are the experimental conditions (e.g., memory for face A vs. face B). A classifier, such as a Support Vector Machine (SVM), is trained to identify the unique spatial pattern of activity associated with each condition [18]. Once trained, the classifier can be tested on new data to determine if it can accurately decode the memory state from the brain's activity pattern alone. The success of this decoding provides direct evidence that the distributed pattern contains information about the memory.

Table 1: Core Differences Between GLM and MVPA Approaches

| Feature | General Linear Model (GLM) | Multivoxel Pattern Analysis (MVPA) |

|---|---|---|

| Unit of Analysis | Single Voxel | Pattern across Multiple Voxels |

| Voxel Covariance | Treated as noise, often smoothed | Treated as the primary signal |

| Model Dependency | Model-based; requires a reference function | Model-free; data-driven |

| Primary Output | Statistical parametric maps of activation | Classifier accuracy in decoding states |

| Sensitivity | High for strong, focal activation | High for subtle, distributed patterns |

Key Evidence: Voxel Covariance Carries Memory-Specific Information

Empirical studies consistently demonstrate that the information decoded by MVPA is often not accessible through univariate analyses, underscoring the functional significance of voxel covariance.

A compelling example comes from fear generalization research. In a study of spider fear, individuals with high fear were more likely to behaviorally classify ambiguous flower-spider morphs as spiders [19]. While univariate analyses showed generally heightened activation in fear-related brain regions in high-fear individuals, they could not account for the specific biased categorization of ambiguous stimuli [19]. This finding suggests that the neural representation of the ambiguous stimulus in high-fear individuals more closely resembles that of a true spider—a difference that likely resides in the fine-grained pattern of activity across voxels, which MVPA is designed to detect.

Furthermore, research in Major Depressive Disorder (MDD) has utilized functional connectivity MVPA (fc-MVPA) to link distributed network patterns to cognitive deficits, including memory impairment [16]. A 2025 study by our group identified six whole-brain clusters with altered functional connectivity in MDD, including the left cerebellar crus I and dorsal anterior cingulate cortex [16]. Post-hoc analyses revealed 24 specific patterns of disrupted connectivity, some of which correlated with performance on memory tasks in healthy controls—associations that were absent in MDD patients [16]. This demonstrates that the covariance structure of large-scale brain networks, measurable with MVPA, is a biomarker for cognitive and memory dysfunction.

The following diagram illustrates the fundamental workflow of an MVPA approach to decoding memory representations, highlighting how distributed patterns are used for classification.

Experimental Protocols for Memory Research

This section provides detailed methodologies for implementing MVPA in studies of memory.

Protocol: Basic MVPA Decoding of Memory Content

Objective: To determine whether distinct memory contents (e.g., memories for faces vs. scenes) can be decoded from fMRI activity patterns.

Materials:

- 3T or higher MRI scanner.

- Standard pre-processing software (e.g., FSL, SPM).

- MVPA software toolbox (e.g., PyMVPA, LIBSVM, The Decoding Toolbox).

Procedure:

Experimental Design:

- Employ a block or event-related design where participants encode or recall distinct categories of stimuli (e.g., faces, scenes, objects). Use a sufficient number of trials per condition (>20) to ensure robust pattern estimation.

- Stimulus Presentation: Present stimuli using software like PsychoPy or E-Prime. Ensure precise timing synchronization with the scanner.

fMRI Data Acquisition:

- Acquire T1-weighted anatomical scans (1 mm isotropic).

- Acquire T2*-weighted BOLD fMRI scans (e.g., TR=2000 ms, TE=30 ms, voxel size=3-4 mm isotropic) [16].

Data Preprocessing:

- Perform standard steps: slice-time correction, motion correction, and spatial smoothing with a small kernel (e.g., 4-6 mm FWHM). Note: Excessive smoothing can blur fine-grained patterns.

- Normalize data to a standard stereotaxic space (e.g., MNI).

- For each trial or block, extract parameter estimates (beta weights) using a GLM, creating one activation pattern per trial or block for each subject, labeled by condition.

MVPA Execution:

- Feature Selection: Define a region of interest (ROI) based on an anatomical atlas (e.g., hippocampus, ventral temporal cortex) or a functional localizer.

- Training: Train a linear Support Vector Machine (SVM) classifier on a subset of the data ("training set") to distinguish between the spatial activity patterns of two memory conditions (e.g., Face memory vs. Scene memory) [18].

- Testing & Validation: Test the trained classifier on the held-out data ("test set"). Use cross-validation (e.g., k-fold, leave-one-run-out) to obtain an unbiased estimate of decoding accuracy [18].

- Statistical Analysis: Compare decoding accuracy against chance level (e.g., 50% for two classes) using a t-test. Group-level inference can be performed using one-sample t-tests on individual subject accuracies.
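The group-level test in the final step can be sketched as a one-sample t-test of subject-wise accuracies against chance. The accuracy values below are invented purely to make the example runnable and do not come from any study; the one-sided conversion assumes the hypothesis that accuracy exceeds chance.

```python
import numpy as np
from scipy import stats

# Hypothetical cross-validated accuracies, one per subject (made-up values).
accuracies = np.array([0.58, 0.61, 0.55, 0.49, 0.63,
                       0.57, 0.52, 0.60, 0.56, 0.54])
chance = 0.5   # two balanced classes

t_stat, p_two_sided = stats.ttest_1samp(accuracies, popmean=chance)

# Convert to a one-sided p-value for the directional hypothesis
# "mean accuracy is greater than chance".
if t_stat > 0:
    p_one_sided = p_two_sided / 2
else:
    p_one_sided = 1 - p_two_sided / 2
```

With more than two classes, or with unbalanced classes, `chance` would need to be adjusted accordingly.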

Protocol: Functional Connectivity MVPA (fc-MVPA) for Network-Level Memory Signatures

Objective: To identify whole-brain functional connectivity patterns that differentiate memory performance or clinical groups, moving beyond localized activity.

Materials:

- As in the basic MVPA decoding protocol above, plus connectivity analysis tools (e.g., CONN toolbox, in-house scripts).

Procedure:

Data Acquisition & Preprocessing:

- Acquire resting-state or task-based fMRI data.

- Preprocess data with additional steps to reduce confounds: regression of nuisance signals (white matter, CSF, motion parameters), and band-pass filtering (0.008-0.09 Hz) for resting-state analysis.

fc-MVPA Analysis (Data-Driven):

- The whole-brain fMRI data is subjected to a dimensionality reduction technique, such as Principal Component Analysis (PCA) [16].

- The analysis identifies clusters of voxels that show significant differences in their whole-brain functional connectivity patterns between groups (e.g., Healthy Controls vs. MDD) or conditions [16].

- These clusters serve as seed regions for post-hoc seed-based correlation analysis to characterize the specific networks that are altered.

Correlation with Behavior:

- Extract connectivity strength measures from the identified aberrant networks.

- Correlate these connectivity values with neurocognitive scores (e.g., composite memory score from the CNS-VS battery) [16]. The disruption of typical brain-behavior relationships is a key finding in clinical populations [16].
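The denoising step of this procedure (nuisance regression followed by 0.008-0.09 Hz band-pass filtering) can be sketched with NumPy alone. This is a simplified sequential version for illustration and not the implementation of any particular toolbox. The function name `denoise`, the FFT-based filter, the TR of 2 s, and the synthetic signals are assumptions.

```python
import numpy as np

def denoise(bold, confounds, tr, f_lo=0.008, f_hi=0.09):
    """Regress out confounds, then band-pass filter each voxel time course.

    bold: (n_scans, n_voxels); confounds: (n_scans, n_confounds); tr in seconds.
    """
    n_scans = bold.shape[0]
    # 1) Nuisance regression by ordinary least squares (intercept included).
    design = np.column_stack([confounds, np.ones(n_scans)])
    beta, *_ = np.linalg.lstsq(design, bold, rcond=None)
    cleaned = bold - design @ beta
    # 2) Band-pass: zero all Fourier components outside [f_lo, f_hi].
    freqs = np.fft.rfftfreq(n_scans, d=tr)
    spectrum = np.fft.rfft(cleaned, axis=0)
    spectrum[(freqs < f_lo) | (freqs > f_hi)] = 0.0
    return np.fft.irfft(spectrum, n=n_scans, axis=0)

# Synthetic example (assumption): a 0.05 Hz signal of interest contaminated
# by slow drift, a fast 0.2 Hz oscillation, and a motion-like confound.
rng = np.random.default_rng(0)
tr, n_scans = 2.0, 300
t = np.arange(n_scans) * tr
in_band = np.sin(2 * np.pi * 0.05 * t)
drift = np.sin(2 * np.pi * t / 300.0)
fast = np.sin(2 * np.pi * 0.2 * t)
motion = rng.normal(size=(n_scans, 1))

bold = ((in_band + drift + fast)[:, None]
        + 2.0 * motion
        + 0.1 * rng.normal(size=(n_scans, 3)))
filtered = denoise(bold, motion, tr)
```

In practice the confound matrix would hold white matter, CSF, and motion regressors, and the filtered time courses would then feed into the connectivity computation.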

Table 2: Key Reagents and Computational Tools for MVPA

| Category | Item | Function/Description |

|---|---|---|

| Software & Libraries | PyMVPA | A Python package specifically designed for MVPA, providing a high-level interface for analysis [18]. |

| Software & Libraries | LIBSVM | A widely used library for implementing Support Vector Machines, integrated into many toolboxes [18]. |

| Software & Libraries | SPSS, R, Python (Pandas/NumPy) | For general statistical analysis and data manipulation of behavioral and results data [20]. |

| Experimental Tools | PsychoPy | Open-source software for precise stimulus presentation and behavioral data collection. |

| Experimental Tools | Computerized Neurocognitive Assessment (e.g., CNS-VS) | Standardized battery to assess memory and other cognitive domains for correlation with neural data [16]. |

| Data | High-Resolution fMRI BOLD Data | The primary raw data; blood-oxygen-level-dependent signal reflecting neural activity. |

| Data | T1-Weighted Anatomical Scans | High-resolution images for co-registration and normalization of functional data. |

Visualization and Data Interpretation

Effective visualization is critical for interpreting the high-dimensional data generated by MVPA. The following diagram outlines the specific workflow for the fc-MVPA protocol described above.

Key Outputs and Interpretation:

- Classification Accuracy: The primary metric. Significance indicates that the brain region(s) analyzed contain decodable information about the experimental condition.

- Weight Maps: The classifier assigns a weight to each voxel. Visualizing these weights can reveal which voxels most strongly contribute to the discrimination, though caution is needed in their neurobiological interpretation.

- Representational Similarity Analysis (RSA): An extension of MVPA that compares the similarity of neural activity patterns to a model of cognitive representations, providing insight into the structure of memory space [4].

- Network Correlation Plots: For fc-MVPA, scatter plots showing the correlation between functional connectivity strength and memory performance powerfully illustrate disrupted brain-behavior relationships in clinical populations [16].

The covariance between voxels is not noise but a critical feature of the brain's population code for memory. MVPA provides a powerful, sensitive toolkit to read this code, revealing how memories are represented in distributed neural patterns and how these representations are disrupted in disease. For drug development, MVPA offers a potential biomarker to track the efficacy of cognitive-enhancing therapeutics by quantifying restoration of normative neural patterns, moving beyond mere behavioral endpoints to direct neural validation.

Multivoxel pattern analysis (MVPA) has revolutionized the field of cognitive neuroscience by providing a suite of powerful analytical tools to investigate how information is represented in distributed patterns of brain activity. Unlike traditional univariate analyses that examine overall signal amplitude averaged across a brain region, MVPA leverages the rich information contained in the spatial configuration of activity across multiple voxels simultaneously [21] [22]. For memory researchers, this approach offers unprecedented insight into the neural population codes that support the formation, maintenance, and retrieval of memories.

The core premise of MVPA is that different cognitive states—including different memories—elicit distinct, decodable patterns of neural activity [13]. This framework has proven particularly valuable for testing sophisticated cognitive theories about the format, durability, and transformation of memory representations [13]. Within the MVPA framework, three primary variants have emerged as essential tools for neuroscience research: decoding models, representational similarity analysis (RSA), and encoding models. Each offers unique strengths for probing different aspects of memory representations and their underlying neural mechanisms.

Core MVPA Variants: Theoretical Foundations and Comparative Analysis

Decoding Models

Theoretical Foundation and Implementation

Decoding, often considered the most direct form of MVPA, operates as a classifier that predicts cognitive states or stimulus categories from distributed patterns of brain activity [21] [13]. In a typical decoding analysis, a machine learning classifier is trained to distinguish between experimental conditions (e.g., different memory categories) based on their multi-voxel activity patterns within a subset of the data. The trained model is then tested on independent data to determine if it can accurately decode the conditions solely from brain activity patterns [21]. This approach demonstrates that a brain region contains information about the decoded dimension.

In memory research, decoding has been powerfully employed to track the contents of memory even in the absence of external stimuli. For instance, several studies have successfully decoded which specific item a participant is holding in working memory during delay periods, revealing the neural underpinnings of memory maintenance [13]. Similarly, decoding approaches have been used to detect the reactivation of specific memories during retrieval tasks [23].

Strengths and Limitations

The primary strength of decoding lies in its ability to identify whether specific information is represented in a brain region, offering superior sensitivity compared to univariate methods [21] [4]. However, successful decoding does not necessarily reveal how that information is represented, that is, the format or structure of the neural representation [21]. Additionally, decoding analyses typically require categorizing stimuli into discrete classes, which may not fully capture the continuous nature of many memory representations [21].

Representational Similarity Analysis (RSA)

Theoretical Foundation and Implementation

Representational Similarity Analysis (RSA) moves beyond simple classification to characterize the internal structure of neural representations by examining the geometric relationships between activity patterns evoked by different conditions [21] [22]. Rather than asking "what" information is represented, RSA investigates "how" that information is structured in representational space.

The core computational tool in RSA is the Representational Dissimilarity Matrix (RDM), which quantifies the pairwise dissimilarities between activity patterns for all tested conditions [22] [24]. In fMRI research, RDMs are typically created by calculating the dissimilarity (e.g., 1 - correlation) between multi-voxel activity patterns for each pair of conditions or stimuli [22]. These neural RDMs can then be compared to theoretical models of mental representations or to behavioral data, enabling researchers to test specific hypotheses about the dimensions that structure memory representations [22] [13].

A particularly powerful application of RSA in memory research involves comparing neural representations across different modalities, species, or timepoints [21] [24]. For example, Kriegeskorte and colleagues pioneered this approach by comparing neural representations in human and monkey inferior temporal cortex, revealing conserved representational hierarchies across species [24].

Strengths and Limitations RSA's primary advantage is its ability to characterize the internal structure of neural representations without requiring an explicit mapping between voxels and model features [21] [24]. This makes it ideal for testing cognitive theories about the organization of memory representations [13]. However, RSA is typically less sensitive than decoding for detecting whether specific information is present in a brain region, and its results can be influenced by the choice of dissimilarity metric [24].

Encoding Models

Theoretical Foundation and Implementation Encoding models take an inverse approach to decoding by predicting brain activity patterns from computational models of stimulus features or cognitive states [13] [24]. Whereas decoding models predict mental states from brain activity, encoding models predict brain activity from features of stimuli or hypothesized mental representations.

In practice, encoding models estimate the relationship between stimulus features (e.g., visual properties, semantic features, or model-derived representations) and the corresponding brain activity patterns [13]. These models can then be evaluated by their ability to predict brain activity in response to novel stimuli. In memory research, encoding models have been used to determine which feature spaces best capture the neural representations of remembered items, shedding light on the format of memory storage [13].
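A minimal sketch of this fit-then-predict logic follows, assuming a linear (ridge-regularized) mapping from features to voxel responses and synthetic, zero-mean data. The choice of ridge regression, the helper names, and all array sizes are assumptions for illustration; the studies cited here may use different estimators.

```python
import numpy as np

def fit_ridge(features, responses, alpha=1.0):
    """Closed-form ridge regression: one weight vector per voxel.

    features: (n_stimuli, n_features); responses: (n_stimuli, n_voxels).
    No intercept is fitted, so inputs are assumed to be mean-centered.
    """
    n_features = features.shape[1]
    gram = features.T @ features + alpha * np.eye(n_features)
    return np.linalg.solve(gram, features.T @ responses)

def voxelwise_correlation(predicted, observed):
    """Pearson r between predicted and observed responses, per voxel."""
    p = predicted - predicted.mean(axis=0)
    o = observed - observed.mean(axis=0)
    return (p * o).sum(axis=0) / np.sqrt((p ** 2).sum(axis=0) * (o ** 2).sum(axis=0))

# Synthetic data: responses are a noisy linear function of stimulus features.
rng = np.random.default_rng(1)
n_train, n_test, n_features, n_voxels = 120, 40, 10, 30
true_weights = rng.normal(size=(n_features, n_voxels))
train_features = rng.normal(size=(n_train, n_features))
test_features = rng.normal(size=(n_test, n_features))
train_responses = train_features @ true_weights + rng.normal(size=(n_train, n_voxels))
test_responses = test_features @ true_weights + rng.normal(size=(n_test, n_voxels))

# Fit on training stimuli, then evaluate predictions for novel stimuli.
weights = fit_ridge(train_features, train_responses, alpha=1.0)
prediction_r = voxelwise_correlation(test_features @ weights, test_responses)
```

Competing feature spaces can be compared by repeating the fit with each one and contrasting their held-out prediction accuracies.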

Strengths and Limitations Encoding models provide a direct bridge between computational models of cognition and neural implementation, offering strong interpretability for testing cognitive theories [13]. They can handle continuous, high-dimensional feature spaces and naturally accommodate complex, naturalistic stimuli. However, they require explicit feature specification and can be computationally intensive, particularly with complex feature models [13] [24].

Table 1: Comparative Analysis of Key MVPA Variants

| Feature | Decoding Models | Representational Similarity Analysis | Encoding Models |
|---|---|---|---|
| Primary Question | What information is represented? | How is information structured? | What features drive neural responses? |
| Analytical Approach | Classify conditions from brain activity | Compare neural representational geometries | Predict brain activity from feature models |
| Key Output | Classification accuracy | Representational Dissimilarity Matrix (RDM) | Feature weight maps, prediction accuracy |
| Theoretical Scope | Condition discrimination | Representational structure | Feature representation |
| Memory Research Applications | Content-specific reactivation, memory decoding | Memory space organization, integration | Feature-based memory models |

Experimental Protocols for Memory Research

Protocol 1: Decoding Memory Contents

Experimental Design This protocol examines whether specific memory contents can be decoded from neural activity patterns during memory retrieval [13]. Participants study a set of items (e.g., images, words) during an encoding phase. During retrieval, they are presented with cues and attempt to recall the associated items while fMRI data is collected. The analysis focuses on classifying which specific item is being recalled based on distributed activity patterns.

Step-by-Step Procedure

- Data Acquisition: Acquire fMRI data during the retrieval task using a standard EPI sequence (TR = 2 s, TE = 30 ms, voxel size = 3 × 3 × 3 mm) [25]

- Preprocessing: Perform standard preprocessing including slice-time correction, motion correction, spatial smoothing (FWHM 4-6mm), and normalization to standard space

- Beta Estimation: Use a general linear model (GLM) with separate regressors for each trial type to estimate condition-specific beta weights for each voxel [22]

- Feature Selection: Select voxels within a priori regions of interest (e.g., hippocampus, medial temporal lobe, prefrontal cortex) based on the research question

- Classifier Training: Train a linear support vector machine (SVM) classifier using leave-one-run-out cross-validation to distinguish between activity patterns associated with different memory categories [25]

- Statistical Analysis: Assess whether classification accuracy exceeds chance level using permutation tests or t-tests against chance performance
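The classifier training and statistical analysis steps above can be sketched with scikit-learn as follows. The data are synthetic stand-ins for trial-wise beta patterns within an ROI, and the run count, trial count, and SVM settings are assumptions chosen for illustration only.

```python
import numpy as np
from sklearn.model_selection import LeaveOneGroupOut, permutation_test_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic "beta patterns": 6 runs x 2 memory categories x 10 trials, 100 voxels.
rng = np.random.default_rng(2)
n_runs, trials_per_class, n_voxels = 6, 10, 100
class_signal = rng.normal(scale=0.5, size=(2, n_voxels))  # made-up category patterns

X, y, runs = [], [], []
for run in range(n_runs):
    for label in (0, 1):
        noise = rng.normal(size=(trials_per_class, n_voxels))
        X.append(class_signal[label] + noise)
        y += [label] * trials_per_class
        runs += [run] * trials_per_class
X, y, runs = np.vstack(X), np.array(y), np.array(runs)

# Scaling lives inside the pipeline so it is re-fitted on training runs only.
classifier = make_pipeline(StandardScaler(), SVC(kernel="linear", C=1.0))

# Leave-one-run-out accuracy plus a label-permutation null distribution.
accuracy, null_scores, p_value = permutation_test_score(
    classifier, X, y, groups=runs, cv=LeaveOneGroupOut(),
    n_permutations=100, scoring="accuracy", random_state=0,
)
```

Passing the run labels as `groups` keeps all trials from a run in the same fold, which avoids leakage from within-run temporal autocorrelation. More permutations than the 100 used here would normally be run for a reported p-value.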

Troubleshooting Tips

- Ensure sufficient trial numbers (typically 15-30 trials per condition) for reliable pattern estimation

- For low trial numbers, consider using cross-validated Mahalanobis distance rather than correlation-based metrics [24]

- Address potential confounds by including motion parameters and other nuisance regressors in the GLM

Protocol 2: RSA for Memory Integration

Experimental Design This protocol investigates how related memories become integrated in neural representational space [23]. Participants learn overlapping paired associates (AB and BC pairs) and are later tested on inferred relationships (AC). The analysis examines whether neural representations of related memories become more similar through integration.

Step-by-Step Procedure

- Data Acquisition and Preprocessing: Follow similar acquisition and preprocessing steps as in Protocol 1

- Pattern Estimation: Estimate activity patterns for each condition of interest (e.g., individual memory items) using a GLM with separate regressors for each condition

- RDM Construction: Calculate pairwise dissimilarities between all conditions using an appropriate distance metric (e.g., cross-validated Mahalanobis distance, correlation distance) to create neural RDMs [24]

- Model RDM Specification: Create theoretical model RDMs representing hypothesized representational structures (e.g., categorical organization, temporal structure, semantic similarity)

- Model Comparison: Compare neural RDMs to model RDMs using rank correlation (Spearman's ρ) or other appropriate similarity measures [22]

- Statistical Inference: Assess statistical significance using bootstrapping procedures or permutation tests that account for the non-independence of dissimilarity values [24]
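The model comparison and inference steps above might look like the sketch below, which correlates the upper triangles of a neural and a model RDM with Spearman's ρ and builds a null distribution by shuffling condition labels. The function name `rdm_permutation_test`, the categorical model RDM, and the synthetic patterns are all illustrative assumptions.

```python
import numpy as np
from scipy.stats import spearmanr

def rdm_permutation_test(neural_rdm, model_rdm, n_permutations=1000, seed=0):
    """Spearman correlation between two RDMs with a condition-label permutation test.

    Rows and columns of the neural RDM are shuffled together, so dissimilarities
    that share a condition stay linked under the null.
    """
    rng = np.random.default_rng(seed)
    n = neural_rdm.shape[0]
    upper = np.triu_indices(n, k=1)
    observed, _ = spearmanr(neural_rdm[upper], model_rdm[upper])
    null = np.empty(n_permutations)
    for i in range(n_permutations):
        order = rng.permutation(n)
        shuffled = neural_rdm[np.ix_(order, order)]
        null[i], _ = spearmanr(shuffled[upper], model_rdm[upper])
    p_value = (np.sum(null >= observed) + 1) / (n_permutations + 1)
    return observed, p_value

# Synthetic example: 12 conditions drawn from 3 hypothetical categories.
rng = np.random.default_rng(3)
categories = np.repeat([0, 1, 2], 4)
prototypes = rng.normal(size=(3, 60))
patterns = prototypes[categories] + 0.7 * rng.normal(size=(12, 60))

neural_rdm = 1.0 - np.corrcoef(patterns)
model_rdm = (categories[:, None] != categories[None, :]).astype(float)  # 0 within, 1 between
rho, p_value = rdm_permutation_test(neural_rdm, model_rdm, n_permutations=500)
```

Permuting whole conditions, rather than individual RDM cells, is what lets the test respect the non-independence of dissimilarity values.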

Troubleshooting Tips

- Use cross-validated distance measures to reduce bias in dissimilarity estimates [24]

- For whole-brain analyses, consider a searchlight approach to map representational structures across the brain

- When comparing across participants, use appropriate random-effects statistics for RDM comparisons
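To illustrate why cross-validated distances reduce bias, the sketch below compares a same-partition squared distance with a cross-validated one for two conditions that share an identical true pattern. The function `crossvalidated_distance` is a simplified, hypothetical helper: with `precision=None` it computes a cross-validated squared Euclidean distance, and the Mahalanobis variant additionally requires an estimate of the inverse noise covariance, which is not estimated here.

```python
import numpy as np

def crossvalidated_distance(a_part1, b_part1, a_part2, b_part2, precision=None):
    """Cross-validated squared distance between conditions A and B.

    Each argument is a pattern estimate of shape (n_voxels,) from one of two
    independent data partitions (for example, odd and even runs). `precision`
    is an optional inverse noise covariance (n_voxels x n_voxels).
    """
    diff1 = a_part1 - b_part1
    diff2 = a_part2 - b_part2
    if precision is None:
        return float(diff1 @ diff2)
    return float(diff1 @ precision @ diff2)

# Synthetic case: A and B have the SAME true pattern, so the true distance is 0.
rng = np.random.default_rng(4)
n_voxels = 500
true_pattern = rng.normal(size=n_voxels)
a1, b1, a2, b2 = (true_pattern + rng.normal(size=n_voxels) for _ in range(4))

naive = float((a1 - b1) @ (a1 - b1))                  # inflated by noise, always >= 0
crossval = crossvalidated_distance(a1, b1, a2, b2)    # fluctuates around 0
```

Because noise is independent across partitions, its contribution averages to zero in the cross-validated product, whereas the same-partition estimate squares the noise and is therefore positively biased.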

Table 2: Key Analytical Decisions in MVPA for Memory Research

| Analytical Step | Options | Recommendations for Memory Research |
|---|---|---|
| Pattern Estimation | Beta weights, % signal change, t-values | Beta weights from single-trial GLMs for trial-wise estimates [22] |
| Similarity/Distance Metric | Correlation, Euclidean distance, Mahalanobis distance | Cross-validated Mahalanobis for accuracy; correlation for stability [24] |
| Classification Algorithm | Linear SVM, Pattern Correlation, Logistic Regression | Linear SVM for robustness to noise [25] |
| Statistical Inference | Permutation tests, Bootstrap, T-tests | Permutation tests for classification; bootstrap for RDM comparisons [24] |
| Multiple Comparisons Correction | FDR, FWE, Cluster-based | FDR for searchlight analyses; FWE for ROI-based [25] |

Visualization and Computational Tools

MVPA Analytical Workflow

[Diagram: core analytical workflows for the three main MVPA variants in memory research]

RSA Workflow for Memory Integration Analysis

[Diagram: workflow for conducting RSA to investigate memory integration]

Table 3: Essential Tools and Software for MVPA in Memory Research

| Tool Category | Specific Tools | Key Functionality | Application in Memory Research |
|---|---|---|---|
| Analysis Software | rsatoolbox (Python) [24], CoSMoMVPA, The Decoding Toolbox | RSA implementation, searchlight analysis, cross-validation | Comparing neural representational spaces, whole-brain mapping of memory representations |
| Programming Environments | Python (NumPy, SciPy, scikit-learn), MATLAB | Data manipulation, statistical analysis, machine learning | Custom analysis pipelines, novel methodological development |
| fMRI Preprocessing | fMRIPrep, SPM, FSL, AFNI | Data quality control, motion correction, normalization | Standardized preprocessing ensuring data quality for pattern analysis |
| Statistical Packages | Nilearn (Python), R | Advanced statistical testing, multiple comparisons correction | Validating statistical significance of decoding and RSA results |
| Visualization Tools | PyPlot, Seaborn, BrainNet Viewer | RDM visualization, brain mapping, result presentation | Communicating representational geometries and brain-wide patterns |

Advanced Applications in Memory Research

Tracking Representational Change in Memory Formation

Recent research has leveraged MVPA to track how neural representations transform during memory formation. For instance, a 2025 study [12] used RSA to measure "representational change" (RC) during insight-driven learning. The researchers quantified how activity patterns in ventral occipito-temporal cortex reconfigured when participants achieved insight solutions to visual problems. They found that stronger representational change during learning predicted better subsequent memory, linking dynamic representational transformations to successful memory formation [12].

This approach demonstrates how RSA can capture the reorganization of neural representations as new memories form—a process that would be invisible to traditional univariate analyses. By examining the geometric relationships between activity patterns before and after learning, researchers can quantify the degree of representational change supporting memory formation.
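One simple way to put a number on such change is sketched below: for each item, representational change is taken as 1 minus the Pearson correlation between its pre-learning and post-learning patterns. This is an illustrative assumption and not necessarily the RC measure used in [12]; the data and the `shift` variable controlling how much each item reconfigures are synthetic.

```python
import numpy as np

def representational_change(pre_patterns, post_patterns):
    """Per-item change: 1 - Pearson r between pre- and post-learning patterns.

    Both inputs have shape (n_items, n_voxels), with rows in the same item order.
    """
    pre = pre_patterns - pre_patterns.mean(axis=1, keepdims=True)
    post = post_patterns - post_patterns.mean(axis=1, keepdims=True)
    r = (pre * post).sum(axis=1) / np.sqrt((pre ** 2).sum(axis=1) * (post ** 2).sum(axis=1))
    return 1.0 - r

# Synthetic data: items differ in how strongly their pattern is reconfigured.
rng = np.random.default_rng(5)
n_items, n_voxels = 20, 80
pre = rng.normal(size=(n_items, n_voxels))
shift = np.linspace(0.0, 2.0, n_items)[:, None]   # hypothetical reconfiguration strength
post = (pre
        + shift * rng.normal(size=(n_items, n_voxels))
        + 0.2 * rng.normal(size=(n_items, n_voxels)))   # measurement noise

change = representational_change(pre, post)
```

In an actual study, the per-item `change` values would then be related to a behavioral outcome such as subsequent memory, for example with a correlation or a mixed-effects model.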

Cross-Modal Memory Integration

MVPA has uniquely enabled the investigation of how memories integrate information across different modalities and episodes. The cross-decoding approach—training a classifier on one type of representation and testing it on another—has been particularly valuable here [13]. For example, researchers have demonstrated that activity patterns during memory retrieval resemble those during initial perception, suggesting partial reinstatement of perceptual representations during recall [13].
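The cross-decoding logic can be sketched as follows: a classifier is trained on perception patterns and tested on retrieval patterns. For brevity this example uses a correlation-based nearest-centroid classifier (the "Pattern Correlation" option) rather than an SVM, and it assumes synthetic data in which retrieval patterns are weaker copies of the perceptual category codes.

```python
import numpy as np

def correlation_classify(train_patterns, train_labels, test_patterns):
    """Assign each test pattern the label of the most correlated class mean."""
    classes = np.unique(train_labels)
    centroids = np.vstack([train_patterns[train_labels == c].mean(axis=0) for c in classes])
    n_test = test_patterns.shape[0]
    # np.corrcoef stacks both inputs row-wise; keep the test-by-centroid block.
    corr = np.corrcoef(test_patterns, centroids)[:n_test, n_test:]
    return classes[np.argmax(corr, axis=1)]

rng = np.random.default_rng(6)
n_trials, n_voxels = 40, 120
category_patterns = rng.normal(size=(2, n_voxels))   # made-up category codes
labels = np.repeat([0, 1], n_trials // 2)

# Perception: full-strength category codes plus noise.
perception = category_patterns[labels] + rng.normal(size=(n_trials, n_voxels))
# Retrieval: weaker version of the same codes plus noise (assumed reinstatement).
retrieval = 0.5 * category_patterns[labels] + rng.normal(size=(n_trials, n_voxels))

predicted = correlation_classify(perception, labels, retrieval)
cross_decoding_accuracy = float(np.mean(predicted == labels))
```

Above-chance accuracy in this train-on-perception, test-on-retrieval scheme is what would be read as evidence that the two phases share a pattern code.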

Similarly, studies of memory integration have used RSA to show how overlapping memories develop similar neural representations. As participants learn related information, the neural representations of associated items become more similar in representational space, providing a neural signature of memory integration [23]. This representational convergence supports the flexible extraction of inferential relationships beyond directly experienced associations.

Future Directions and Clinical Applications

The application of MVPA in memory research continues to evolve, with several promising directions emerging. The integration of MVPA with computational models of memory is becoming increasingly sophisticated, allowing researchers to test formal mathematical models of memory processes against neural data [13]. Similarly, the combination of MVPA with pharmacological interventions offers new opportunities for understanding the neurochemical basis of memory representations and developing novel cognitive enhancers.

For drug development professionals, MVPA approaches offer potential biomarkers for evaluating cognitive-enhancing compounds. By providing sensitive measures of memory representation quality and organization, MVPA could detect subtle treatment effects that might be missed by behavioral measures alone. Furthermore, the ability to track representational changes during learning and memory integration offers new targets for therapeutic intervention in memory disorders.

As MVPA methodologies continue to advance, they promise to yield increasingly precise characterizations of the neural codes that support human memory, bridging the gap between cognitive theory, neural implementation, and clinical application.

Methodological Pipeline and Applications in Memory Research

Multivoxel pattern analysis (MVPA) has revolutionized cognitive neuroscience by enabling researchers to decode cognitive states and mental content from distributed patterns of brain activity. In memory research specifically, MVPA provides a methodological framework for moving beyond simple localization of memory-related activity toward a detailed understanding of the informational content and format of memory traces. Unlike traditional univariate analyses, which examine the average activity within a region, MVPA uses pattern classification algorithms to detect subtle information distributed across multiple voxels simultaneously [26]. This approach has proven particularly valuable for studying memory representations: it can detect when a neural pattern present at encoding is reinstated at retrieval, track the transformation of memories over time, and distinguish between different types of memory content even within the same brain region.