Navigating the Labyrinth: Methodological Challenges in Cognitive Language Analysis for Clinical and Translational Research

Abstract

This article synthesizes current methodological challenges and innovations in cognitive language analysis, a field pivotal for understanding neurological health and developing biomarkers for drug development. It explores foundational issues, from the systemic nature of cognition to the critical role of linguistic diversity, before reviewing established and emerging methodologies, including task-based paradigms and Large Language Models (LLMs). The discussion extends to troubleshooting common pitfalls in study design and statistical analysis, and culminates in frameworks for the validation and comparative assessment of analytical approaches. Aimed at researchers and drug development professionals, this review provides a comprehensive roadmap for generating robust, reproducible, and clinically meaningful insights from cognitive language data.

Theoretical Foundations and the Complexity of Measuring the Language-Cognition Interface

Technical Support Center: Troubleshooting Guides & FAQs

This section provides targeted support for common methodological challenges encountered in cognitive language analysis research, framed within the ongoing theoretical shift from modular to systemic models of cognition.

Frequently Asked Questions

Q1: Our neuroimaging data show inconsistent activation in traditional language areas (e.g., Broca's area) across participants. Is this an experimental error? A: Not necessarily. This variability likely reflects genuine individual differences in neurocognitive organization rather than technical error. Research shows that the exact extent, location, and boundaries of language-related regions of interest (ROIs) typically vary from one person to another [1]. This suggests that each person has a slightly different language faculty, both cognitively and neurobiologically, largely because of the different developmental trajectories followed when acquiring their language(s) [1].

- Recommended Action: Instead of averaging across participants, analyze individual differentiability and functional connectivity patterns. Consider that language functions are supported by entire brain circuits rather than specific, fixed areas [2].

Q2: How can we statistically account for the influence of non-linguistic factors (e.g., inflammation, social activity) on cognitive performance in our language studies? A: Systemic factors are crucial mediators. Evidence indicates that systemic inflammation, quantified via biomarkers like IL-6, partially mediates age-related deficits in processing speed [3]. Furthermore, a clinical-pathologic study found that the association of locus coeruleus tangle density with lower cognitive performance was partially mediated by the level of social activity [4].

- Recommended Action: Incorporate baseline measures of inflammatory biomarkers (e.g., IL-6, CRP) and standardized questionnaires on social engagement [3] [4] as covariates or mediators in your statistical models.

Q3: Our model assumes language processing is a series of discrete stages, but our behavioral data show significant overlap. Is our model wrong? A: Likely. The classic view of encapsulated, sequential processing stages is being challenged by network-based and dynamic models. Recent MEG studies show that large-scale cortical functional networks underlying cognition activate in structured, cyclical patterns with timescales of 300–1,000 ms, rather than in strict, feed-forward sequences [5]. In other words, the overarching flow of cortical network activity appears to be inherently cyclical [5].

- Recommended Action: Employ time-sensitive analytical methods like hidden Markov modeling (HMM) or temporal interval network density analysis (TINDA) to capture the dynamic, cyclical nature of network activations [5].

Q4: We are only studying standardized language, but our findings feel incomplete. How can we improve the ecological validity of our research? A: This is a recognized limitation. A more comprehensive neurocognitive approach to language must pursue a unitary explanation of linguistic variation, including the diversity of sociolinguistic phenomena and non-standard language varieties [1]. The brain processes different language varieties (e.g., formal vs. casual) by recruiting different, and sometimes extra, cognitive resources [1].

- Recommended Action: Expand stimulus sets to include diverse linguistic registers, sociolects, and dialogic (casual conversation) in addition to monologic (formal speech) language samples [1].

Summarized Quantitative Data

The table below consolidates key quantitative findings from seminal studies on systemic factors in cognitive aging, providing a reference for experimental design and hypothesis generation.

Table 1: Summary of Key Quantitative Findings from Cognitive Aging Studies

| Study Focus | Key Biomarker/Measure | Population | Statistical Finding | Cognitive Domain Affected |
|---|---|---|---|---|
| Systemic Inflammation & Aging [3] | IL-6, TNF-α, CRP | 47 Young (M=22.3 yrs) & 46 Older (M=71.2 yrs) Adults | IL-6 partially mediated age-related difference in processing speed. | Processing Speed, Short-term Memory |
| Brain Morphology & Inflammation [6] | IL-6, CRP; Cortical Gray Matter Volume | 408 Midlife Adults (30-54 yrs) | Cortical gray matter volume partially mediated the association of inflammation with cognitive performance. | Spatial Reasoning, Short-term Memory, Verbal Proficiency, Learning & Memory, Executive Function |
| Locus Coeruleus Pathology & Social Activity [4] | LC Tangle Density; Social Activity Score | 142 Older Adults (NCI & CI) | Social activity partially mediated the association between greater LC tangle density and lower cognitive performance. | Global Cognition (Episodic, Semantic, Working Memory, Visuospatial, Perceptual Speed) |
| Cortical Network Cycles [5] | Cycle Strength (S) | 55 Participants (MEG UK) | Cycle strength was significant (S = 0.066) and greater than permutations (P < 0.001). | Large-scale Cognitive Functions (as per network states) |

Experimental Protocols

Protocol 1: Assessing Systemic Inflammation as a Mediator of Cognitive Aging

Objective: To determine the extent to which systemic inflammation mediates age-related differences in cognitive performance [3].

- Participant Recruitment: Recruit two distinct age groups (e.g., young adults: 18-30 years; older adults: 60+ years). Screen for general health, excluding individuals with severe psychological disorders, excessive smoking/drinking, or major neurological conditions [3].

- Blood Sample Collection & Analysis: Collect fasting blood samples from each participant. Centrifuge samples to obtain serum or plasma. Use high-sensitivity enzyme-linked immunosorbent assay (ELISA) kits to quantify serum concentrations of inflammatory biomarkers such as IL-6, TNF-α, and CRP [3].

- Cognitive Assessment: Administer standardized neuropsychological tests. Key domains should include processing speed and short-term memory [3].

- Statistical Mediation Analysis: Perform regression-based mediation analysis (e.g., using the PROCESS macro for SPSS or similar) to test if the effect of the independent variable (Age Group) on the dependent variable (Cognitive Score) is significantly reduced when the mediator (e.g., IL-6 level) is included in the model [3].
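The mediation step above can be sketched without a dedicated macro. The following is a minimal illustration, not the analysis used in [3]: it estimates the indirect effect as the product of two ordinary least squares coefficients and attaches a percentile bootstrap interval. All data are simulated, and the variable names (`age_group`, `il6`, `speed`) and effect sizes are assumptions chosen only for demonstration.

```python
import numpy as np

def ols_coefs(y, X):
    """OLS coefficients for y ~ intercept + X (intercept is element 0)."""
    design = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

def indirect_effect(x, m, y):
    """Product-of-coefficients estimate a*b for the path x -> m -> y."""
    a = ols_coefs(m, x)[1]                          # path a: x -> m
    b = ols_coefs(y, np.column_stack([x, m]))[2]    # path b: m -> y, adjusting for x
    return a * b

def bootstrap_ci(x, m, y, n_boot=2000, seed=0):
    """Percentile bootstrap 95% interval for the indirect effect."""
    rng = np.random.default_rng(seed)
    n = len(x)
    estimates = np.empty(n_boot)
    for i in range(n_boot):
        idx = rng.integers(0, n, n)                 # resample participants with replacement
        estimates[i] = indirect_effect(x[idx], m[idx], y[idx])
    return np.percentile(estimates, [2.5, 97.5])

# Simulated, purely hypothetical data (not taken from any cited study).
rng = np.random.default_rng(42)
n = 200
age_group = rng.integers(0, 2, n).astype(float)     # 0 = young, 1 = older
il6 = 0.8 * age_group + rng.normal(0, 1, n)         # mediator
speed = -0.5 * il6 - 0.3 * age_group + rng.normal(0, 1, n)

total = ols_coefs(speed, age_group)[1]                               # path c
direct = ols_coefs(speed, np.column_stack([age_group, il6]))[1]      # path c'
ab = indirect_effect(age_group, il6, speed)
ci_low, ci_high = bootstrap_ci(age_group, il6, speed)

print(f"total={total:.3f} direct={direct:.3f} indirect={ab:.3f}")
print(f"95% bootstrap CI for indirect effect: [{ci_low:.3f}, {ci_high:.3f}]")
```

With linear models fitted on the same sample, the total effect equals the direct effect plus the indirect effect, so partial mediation shows up as a direct effect that is smaller in magnitude than the total effect while the bootstrap interval for the indirect effect excludes zero.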

Protocol 2: Investigating Cyclical Cortical Network Dynamics with MEG

Objective: To identify and characterize the cyclical activation patterns of large-scale cortical functional networks during rest [5].

- Data Acquisition: Acquire magnetoencephalography (MEG) data from participants during a wakeful resting state (e.g., 5-10 minutes with eyes open). Simultaneously record electrocardiography (ECG) and electrooculography (EOG) to identify and remove artifacts related to heartbeats and eye blinks [5].

- Source Reconstruction & Parcellation: Preprocess MEG data (filtering, artifact removal). Use beamforming algorithms (e.g., LCMV) to reconstruct source-level activity. Parcel the source data into a standard set of brain regions (e.g., using the AAL or HCP-MMP atlas) [5].

- Network State Identification with HMM: Train a Hidden Markov Model (HMM) on the source-reconstructed data to identify a set of recurring, discrete brain states (e.g., K=12 states). The HMM will infer the multivariate spectral signature (power and coherence) of each state and the timing of their activations [5].

- Temporal Interval Network Density Analysis (TINDA): For each recurring state n, identify all intervals between its subsequent activations. For every other state m, calculate the Fractional Occupancy (FO) asymmetry, that is, the difference in the probability of state m occurring in the first versus the second half of the state-n-to-n intervals. This reveals whether state m tends to precede or follow state n [5].

- Cycle Strength Calculation: From the full FO asymmetry matrix, compute the overall cycle strength (S), which quantifies the robustness of a global cyclical pattern in network activations. Compare the observed S to a null distribution generated by permuting network state labels [5].
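The FO asymmetry step can be illustrated with a small sketch. This is a simplified reading of the description above, not the published TINDA implementation from [5]: it assumes a hard-assigned state sequence (one integer label per sample), drops the middle sample of odd-length intervals, and uses the sign convention that positive values mean state m tends to follow state n. The function name `fo_asymmetry` is hypothetical, and the cycle strength S and its permutation test are not covered.

```python
import numpy as np

def fo_asymmetry(states, n_states):
    """FO asymmetry matrix: entry [n, m] is the mean occupancy of state m in the
    first half of the intervals between visits to state n, minus its mean
    occupancy in the second half."""
    states = np.asarray(states)
    asym = np.full((n_states, n_states), np.nan)
    for n in range(n_states):
        active = np.flatnonzero(states == n)
        if active.size < 2:
            continue
        # Positions where state n switches off and later comes back on.
        breaks = np.flatnonzero(np.diff(active) > 1)
        first_half, second_half = [], []
        for b in breaks:
            segment = states[active[b] + 1 : active[b + 1]]   # samples with n inactive
            if segment.size < 2:
                continue
            half = segment.size // 2
            first_half.append(np.bincount(segment[:half], minlength=n_states) / half)
            second_half.append(np.bincount(segment[-half:], minlength=n_states) / half)
        if first_half:
            asym[n] = np.mean(first_half, axis=0) - np.mean(second_half, axis=0)
            asym[n, n] = np.nan                               # undefined for the reference state
    return asym

# Toy sequence with a perfect 0 -> 1 -> 2 -> 0 cycle, 5 samples per visit.
toy_states = np.tile(np.repeat([0, 1, 2], 5), 20)
asym = fo_asymmetry(toy_states, 3)
print(asym)
```

In the toy sequence, state 1 always fills the first half of the intervals between visits to state 0 and state 2 always fills the second half, so `asym[0, 1]` is 1.0 and `asym[0, 2]` is -1.0. Real state time courses would give much smaller values.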

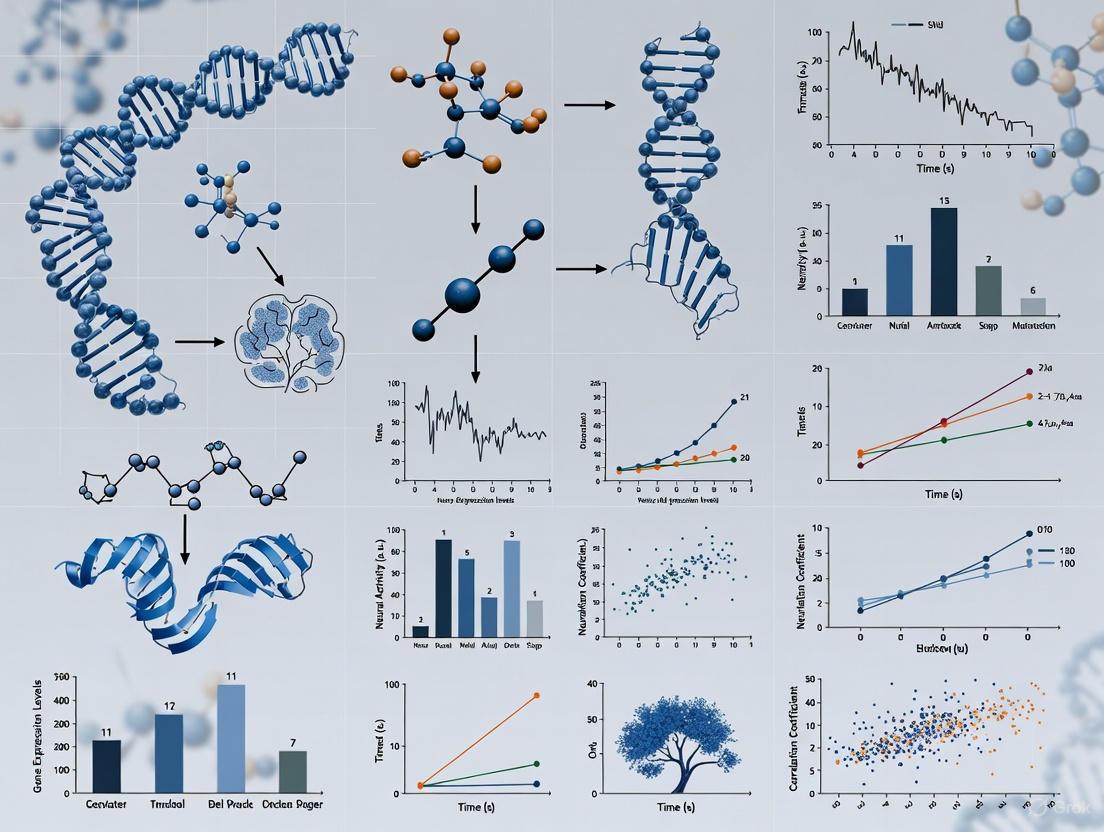

System Visualization Diagrams

Diagram 1: Systemic Inflammation to Cognitive Deficit Pathway

Diagram 2: Dynamic Network Cycle Model of Cognition

Diagram 3: Troubleshooting Workflow for Cognitive Models

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Analytical Tools for Systemic Cognitive Research

| Item / Reagent | Function / Utility in Research | Example Application |
|---|---|---|
| High-Sensitivity ELISA Kits | Precisely quantify low levels of systemic inflammatory biomarkers (e.g., IL-6, TNF-α, CRP) from blood serum/plasma. | Establishing a biochemical correlate for cognitive performance differences [3] [6]. |
| Hidden Markov Model (HMM) Toolboxes (e.g., SPM12, HMM-MAR) | Identify recurring, discrete brain states from continuous neuroimaging data (MEG, fMRI) and their timing of activation. | Modeling the stochastic yet structured temporal dynamics of large-scale brain networks [5]. |
| Temporal Interval Network Density Analysis (TINDA) | A custom method to quantify asymmetries in network activation probabilities over variable timescales, revealing cyclical patterns. | Detecting the overarching cyclical structure of functional network activations beyond simple Markovian transitions [5]. |
| Social Activity Questionnaire | A standardized scale to assess frequency of engagement in common social activities (e.g., visiting friends, volunteer work). | Measuring a putative reserve factor that mediates the link between brain pathology and cognitive outcomes [4]. |
| Structural MRI & Freesurfer | Provide high-resolution anatomical scans and automated quantification of global/regional brain morphology (e.g., cortical thickness, gray matter volume). | Assessing brain structure as a potential mediator between systemic factors (inflammation) and cognitive function [6]. |

Research into the interplay between language and executive function (EF) presents a complex methodological landscape. EF refers to the higher-order cognitive processes—including inhibitory control, working memory, and cognitive flexibility—that are essential for goal-oriented problem-solving in daily life [7]. A core challenge in this domain is that these cognitive processes are not directly observable; researchers must instead design specific tasks to sample behavior and then infer the underlying cognitive skills from the resulting scores [8]. This process is fraught with difficulties, from "construct irrelevant variance" (where tasks tap into unintended cognitive skills) to the inherent "noise" in human performance and questions about whether findings from a controlled task will generalize to real-world communication [8]. The following guides and FAQs are designed to help you navigate these challenges in your experimental work.

Frequently Asked Questions (FAQs)

FAQ 1: My experiment reliably produces robust group-level effects, but I am struggling to use the same task for individual differences research. Why is this?

It is a common but problematic assumption that a task which works well for detecting group-level effects will automatically be valid and reliable for measuring differences between individuals. Tasks popular in group-level designs often have relatively small between-participant variability, making them well-powered to detect an average effect but unsuitable for rank-ordering individuals [9]. Furthermore, the difference scores commonly used in subtractive designs are notoriously noisy and unreliable estimates of an individual's effect size [9]. Solutions include moving away from simple difference scores and employing hierarchical modeling on trial-level data to derive more reliable individual effect sizes [9].

FAQ 2: When studying populations with neurodevelopmental disorders (NDDs), my language and EF assessments sometimes produce conflicting results between performance-based tasks and parent reports. Which should I trust?

This inconsistency is a documented methodological challenge. For instance, in studies of bilingual children with autism spectrum disorder (ASD), performance-based tasks often reveal EF advantages (e.g., in working memory and inhibitory control) compared to monolingual peers, while parent-reported measures sometimes fail to detect these differences [7]. This does not mean one measure is inherently "wrong"; they may be capturing different facets of functioning. Performance-based tasks measure capacity under controlled conditions, while questionnaires often reflect behavior in daily life. The best practice is to use a multi-method assessment approach that combines both types of measures to gain a more complete picture [7].

FAQ 3: I am using a linguistic stimuli set where each item is unique and cannot be repeated across conditions for a single participant. How can I adapt this for an individual differences design?

This is a fundamental challenge in psycholinguistics. In group-level studies, this is often solved by creating counterbalanced experiment versions where different participants see different items in different conditions. However, this solution is inappropriate for individual-differences designs because it introduces massive item-level variability and means participants are not completing comparable tasks [9]. To address this, researchers must take extra steps to ensure their measurement instrument is both valid and reliable for individual assessment, which may involve creating new stimulus sets specifically designed for this purpose, rather than repurposing ones from group-level studies [9].

FAQ 4: Can playful, ecologically valid interventions really produce measurable changes in executive functions?

Yes. A growing body of research suggests that short, socially-engaging, and playful interventions can effectively enhance EFs. One study demonstrated that a brief 15-minute playful interaction, which involved co-created physical movement and imagination with an adult, led to improved performance on the Flanker task (a measure of attentional control and inhibition) in children aged 6-10, whereas a control group that engaged in non-playful physical activity did not show this improvement [10]. These activities are thought to be effective because they are multidimensional, simultaneously engaging cognitive, emotional, and social functions in an enjoyable context, which may support better generalization of skills [10].

Troubleshooting Common Experimental Issues

Issue 1: Low Reliability in Subtractive Task Scores

- Problem: The difference scores (e.g., Stroop effect, Ambiguity resolution cost) calculated for your participants show poor test-retest reliability, weakening your ability to correlate them with other measures.

- Solution: Avoid relying on simple difference scores. Instead, use hierarchical (multilevel) models to analyze trial-level data. This method provides more reliable estimates of individual effect sizes by partialing out nuisance variation and appropriately modeling the data structure [9].
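To make the contrast with raw difference scores concrete, the sketch below applies a simple empirical-Bayes shrinkage (partial pooling) to per-participant effects computed from trial-level data. This is a deliberately simplified stand-in for a full hierarchical model, not the procedure recommended in [9]; the function name `shrunken_effects`, the simulated reaction times, and all parameter values are assumptions for illustration.

```python
import numpy as np

def shrunken_effects(rt_incongruent, rt_congruent):
    """Return raw and partially pooled per-participant effects.

    Both inputs have shape (participants, trials). Each raw difference score is
    pulled toward the group mean in proportion to its estimated unreliability.
    """
    raw = rt_incongruent.mean(axis=1) - rt_congruent.mean(axis=1)
    # Squared standard error of each participant's difference score.
    se2 = (rt_incongruent.var(axis=1, ddof=1) / rt_incongruent.shape[1]
           + rt_congruent.var(axis=1, ddof=1) / rt_congruent.shape[1])
    grand_mean = raw.mean()
    # Method-of-moments estimate of true between-participant variance.
    tau2 = max(raw.var(ddof=0) - se2.mean(), 0.0)
    weight = tau2 / (tau2 + se2)          # 0 = fully pooled, 1 = no pooling
    return raw, grand_mean + weight * (raw - grand_mean)

# Simulated, hypothetical Stroop-like data: 40 participants, 30 trials per condition.
rng = np.random.default_rng(1)
n_participants, n_trials = 40, 30
true_effect = rng.normal(60, 20, n_participants)              # ms
congruent = rng.normal(600, 150, (n_participants, n_trials))
incongruent = rng.normal(600 + true_effect[:, None], 150, (n_participants, n_trials))

raw, shrunk = shrunken_effects(incongruent, congruent)
print("spread of raw difference scores:", raw.std().round(1))
print("spread of shrunken estimates:  ", shrunk.std().round(1))
```

The spread of the raw scores is inflated by trial-level noise, and the shrunken estimates remove part of that inflation. A multilevel model fitted to the trial-level data achieves the same kind of pooling while also handling item effects and unbalanced designs.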

Issue 2: Generalizability from Lab to Real World

- Problem: You find a significant effect of an EF training intervention on a lab-based language task, but you do not see transfer to naturalistic conversation or daily communication.

- Solution: Increase the ecological validity of your interventions and assessments. Consider incorporating socially-rich, playful paradigms that mirror real-world interactions [10]. When assessing outcomes, complement standardized lab tasks with natural language sampling or informant reports to capture a broader range of functioning [8].

Issue 3: Controlling for Confounding Variables in Bilingualism Studies

- Problem: When comparing monolingual and bilingual groups on EF tasks, it is difficult to rule out confounding factors like socio-economic status, cultural background, or language proficiency.

- Solution: Move beyond simple group comparisons. Carefully document and measure potential confounding variables, including language proficiency and dominance using standardized tools. Consider using longitudinal designs or statistical methods like regression that allow you to control for these factors when examining the relationship between bilingual experience and EF outcomes [7].

The table below summarizes key quantitative findings from recent research on the language-EF relationship, particularly in clinical populations.

Table 1: Key Quantitative Findings from Recent Research

| Study Focus | Participant Groups | EF Assessment Method | Key Finding | Statistical Outcome |
|---|---|---|---|---|
| Bilingualism in ASD [7] | 463 monolingual & 404 bilingual children with ASD | Performance-based tasks (e.g., working memory, cognitive flexibility) | Bilingual children showed EF advantages | Significant improvements in performance-based measures |
| Bilingualism in ASD [7] | 463 monolingual & 404 bilingual children with ASD | Parent-reported questionnaires | Inconsistency with performance-based measures | Parent-reported measures sometimes failed to detect bilingual-related differences |
| Social Playfulness [10] | 62 children (6-10 years) | Flanker Task (Response Times) | Playful interaction improved attentional performance | Significant improvement in response times post-intervention (p < .05) |

Detailed Experimental Protocols

Protocol 1: Investigating EF Benefits in Bilingual Children with Neurodevelopmental Disorders

This protocol is based on the methodologies synthesized in a recent scoping review [7].

- Participant Recruitment:

  - Recruit children aged 4-12 years with a confirmed diagnosis of a neurodevelopmental disorder (e.g., Autism Spectrum Disorder).

  - Create two matched groups: bilingual children (exposed to two or more languages) and monolingual children. Groups should be matched on age, non-verbal IQ, and socioeconomic status where possible.

- Executive Function Assessment:

  - Performance-based Tasks: Administer a battery of computerized or direct-assessment tasks. Key domains to assess include:

    - Inhibitory Control: Flanker Task [10] or Stroop Task.

    - Working Memory: Digit Span or N-back tasks.

    - Cognitive Flexibility: Dimensional Change Card Sort Task.

  - Parent-reported Measures: Use standardized questionnaires such as the Behavior Rating Inventory of Executive Function (BRIEF) to capture EF in everyday settings.

- Data Analysis:

  - Use multivariate analysis of covariance (MANCOVA) to compare performance across the two groups on the EF task battery, controlling for relevant covariates like verbal IQ.

  - Analyze correlations and potential discrepancies between performance-based and parent-reported EF scores.

Protocol 2: A Short Playful Interaction to Modulate Executive Functions

This protocol is adapted from a study demonstrating the immediate effects of social play on EF [10].

- Participant Setup:

  - Recruit typically developing children in the target age range (e.g., 6-10 years).

  - Obtain informed consent from parents and assent from children.

- Baseline Measures:

  - EF Task: Have the child complete a pre-test version of the Flanker task to establish a baseline for attentional control and inhibitory control [10].

  - Mood Scale: Administer a child-friendly mood questionnaire (e.g., an adapted Positive and Negative Affect Schedule - PANAS).

- Intervention:

  - Experimental Group (Playful Interaction): The experimenter engages the child in a 15-minute co-created playful activity. This should be characterized by novelty, unpredictability, and positive social exchange. Examples include spontaneous imaginative play or inventing new physical games together [10].

  - Control Group (Physical Activity): The experimenter and child engage in a structured, non-playful physical activity of equivalent duration, such as repetitive calisthenics or a simple, rule-bound game.

- Post-Intervention Measures:

  - Immediately after the interaction, re-administer the Flanker task and the mood scale.

  - Also, administer a brief measure of social connection towards the experimenter.

- Data Analysis:

  - Conduct a mixed-model ANOVA with time (pre vs. post) as a within-subjects factor and group (playful vs. control) as a between-subjects factor on Flanker task response times and accuracy.

  - Analyze changes in mood and social connection using t-tests or non-parametric equivalents.
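For the 2 (time) x 2 (group) design described above, the group-by-time interaction can be checked with a simple calculation: with two time points and two groups, the interaction F statistic of the mixed ANOVA equals the square of a pooled-variance t statistic computed on pre-to-post change scores. The sketch below shows that calculation. The function name `interaction_t` and the response times are hypothetical and do not come from [10].

```python
import numpy as np

def interaction_t(pre_a, post_a, pre_b, post_b):
    """Pooled-variance t statistic comparing pre-to-post change between two groups.

    Returns (t, degrees_of_freedom); t**2 equals the group x time interaction F
    in a 2 x 2 mixed ANOVA on the same data.
    """
    change_a = np.asarray(post_a, float) - np.asarray(pre_a, float)
    change_b = np.asarray(post_b, float) - np.asarray(pre_b, float)
    n_a, n_b = len(change_a), len(change_b)
    pooled_var = ((n_a - 1) * change_a.var(ddof=1)
                  + (n_b - 1) * change_b.var(ddof=1)) / (n_a + n_b - 2)
    se = np.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (change_a.mean() - change_b.mean()) / se, n_a + n_b - 2

# Hypothetical Flanker response times in ms (four children per group).
playful_pre, playful_post = [500, 520, 510, 530], [470, 480, 490, 500]
control_pre, control_post = [505, 515, 525, 535], [500, 515, 520, 535]

t_stat, df = interaction_t(playful_pre, playful_post, control_pre, control_post)
print(f"t({df}) = {t_stat:.2f}, equivalent interaction F(1, {df}) = {t_stat**2:.2f}")
```

A negative t here means response times dropped more in the playful group than in the control group. A real analysis would also report a p-value and check the accuracy data, which this sketch omits.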

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Language and EF Research

| Item/Tool Name | Function/Brief Explanation | Example Application |
|---|---|---|
| Flanker Task [10] | Measures attentional control and inhibitory control by requiring participants to respond to a target while ignoring flanking distractors. | Assessing the effect of a brief intervention on inhibitory control [10]. |
| Natural Language Processing (NLP) Libraries (e.g., spaCy, NLTK) [11] | Software libraries for automated text analysis. Can extract features like lexical diversity, syntactic complexity, and semantic coherence from transcribed speech. | Objectively quantifying language deterioration in neurodegenerative diseases or improvements following therapy [12]. |
| Behavior Rating Inventory of Executive Function (BRIEF) | A parent- or teacher-reported questionnaire that assesses EF in an everyday environment. | Capturing real-world manifestations of EF challenges that may not be apparent in lab-based tasks, especially in NDD populations [7]. |
| Social Playfulness Paradigm [10] | A standardized, yet flexible, protocol for engaging participants in co-created, novel, and positive social play. | Used as an ecologically valid intervention to test the malleability of EFs in a socially engaging context [10]. |
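As an illustration of the lexical diversity features mentioned in the table, the sketch below computes a type-token ratio (TTR) and a moving-average TTR from a transcript. It uses only the Python standard library with a naive regular-expression tokenizer, which is an assumption made to keep the example self-contained. A real pipeline would more likely rely on the tokenizers in the NLP libraries named above, and the sample sentence is invented.

```python
import re

def tokenize(text):
    """Naive lowercase word tokenizer (letters and apostrophes only)."""
    return re.findall(r"[a-z']+", text.lower())

def type_token_ratio(tokens):
    """Number of distinct word forms divided by the total number of tokens."""
    return len(set(tokens)) / len(tokens) if tokens else 0.0

def moving_average_ttr(tokens, window=10):
    """Mean TTR over all sliding windows, which reduces sensitivity to text length."""
    if len(tokens) < window:
        return type_token_ratio(tokens)
    ratios = [len(set(tokens[i:i + window])) / window
              for i in range(len(tokens) - window + 1)]
    return sum(ratios) / len(ratios)

sample = "The cat sat on the mat"
tokens = tokenize(sample)
print(type_token_ratio(tokens))               # 5 distinct forms over 6 tokens
print(moving_average_ttr(tokens, window=3))   # every 3-word window is fully distinct
```

Plain TTR falls as transcripts get longer, so a windowed variant is usually the safer choice when speech samples differ in length across participants or sessions.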

Experimental Workflow and Relationship Diagrams

Bilingualism and EF Research Paradigm

Language Analysis Pipeline for Cognitive Assessment

The Critical Challenge of Linguistic and Participant Diversity

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center provides practical solutions for researchers confronting the critical challenges of linguistic and participant diversity in cognitive language analysis. The following guides address specific methodological issues that may arise during your experiments.

Frequently Asked Questions

Q1: Our neuroimaging findings, based on English speakers, do not replicate in a study involving a tonal language. What could be the cause? A: This is a fundamental issue of linguistic typology. Different languages engage neural networks differently. For example, processing lexical tone in a language like Mandarin relies more heavily on regions like the right superior temporal gyrus compared to non-tonal languages like English [13]. Your experimental design must account for these structural differences at the phonological, morphological, and syntactic levels, rather than assuming universal processing mechanisms.

Q2: We are struggling to recruit participants from diverse ethnic backgrounds for our early-phase clinical trial on a cognitive drug. What are the key barriers? A: The barriers are multifactorial, as qualitative interviews with clinical researchers confirm [14]. Key challenges include:

- Distrust of Healthcare Systems: Historical abuses, like the Tuskegee Syphilis Study, have created a lasting legacy of mistrust among some populations [15].

- Practical and Logistical Hurdles: Potential participants often face challenges related to transportation, the need to take time off work, and a lack of access to the academic medical centers where trials are typically conducted [14] [15].

- Lack of Awareness and Referral: Community clinicians may not refer patients from underserved groups, and the process often requires a "self-driven" referral, which creates an additional barrier [14].

- Language and Cultural Insensitivity: A lack of bilingual staff and materials, combined with unconscious bias from healthcare professionals, can prevent equitable recruitment [15].

Q3: A drug candidate in our development pipeline is showing a signal of cognitive impairment in Phase I. How should we proceed? A: This finding warrants a rigorous, phased assessment of cognitive safety [16]. You should:

- Confirm with Sensitive Tools: Ensure you are using objective, sensitive, and comprehensive cognitive assessments, not just subjective reports or basic psychomotor tests. Regulatory guidance (e.g., FDA UCM430374) emphasizes the need for sensitive measures of reaction time, attention, and memory from first-in-human studies [16].

- Determine Dose-Response: Investigate if the cognitive effect is dose-dependent to help establish a safe therapeutic window [16].

- Evaluate Risk-Benefit: Contextualize the cognitive risk against the drug's intended indication and mechanism. A cognitive side effect may be more acceptable in a life-saving cancer drug than in a treatment for a chronic, non-life-threatening condition [16].

Q4: How can we improve the diversity of participants in our neurolinguistics study to make our findings more generalizable? A: Moving beyond a reliance on WEIRD (Western, Educated, Industrialized, Rich, and Democratic) populations requires proactive strategies [13]. Recommendations from clinical research include [14] [15]:

- Community Outreach: Partner with local community organizations and health systems to build trust and recruit participants directly.

- Diversify Research Staff: Actively recruit staff from diverse backgrounds to help build rapport and cultural competence.

- Remove Logistical Barriers: Provide incentives that cover transportation, parking, and meals. Offer flexible scheduling outside standard working hours.

- Set and Monitor Enrollment Goals: Pre-define targets for the recruitment of underrepresented groups and track progress diligently.

Q5: Our deep learning model for linguistic neural decoding performs poorly when decoding speech from a new subject. Is the model faulty? A: Not necessarily. This is a classic challenge of inter-subject variability. Brain responses to the same linguistic stimulus can vary significantly from one person to another due to individual developmental trajectories and unique neural "wiring" [13] [17]. The solution often involves:

- Subject-Specific Finetuning: Allowing the model to adapt to a specific individual's neural data.

- Larger and More Diverse Datasets: Training models on data from a much wider variety of speakers to improve generalizability.

- Alignment Training: Using techniques that explicitly align the neural activity patterns of different subjects in a shared model space [17].
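One simple way to picture cross-subject alignment is an orthogonal Procrustes fit, which finds the rotation that best maps one subject's response patterns onto another's for the same stimuli. The sketch below is an illustrative stand-in chosen for brevity and is not the alignment method described in [17]. The function name, the simulated "responses", and the dimensions are all assumptions.

```python
import numpy as np

def procrustes_rotation(source, target):
    """Orthogonal matrix R that minimizes ||source @ R - target|| (Frobenius norm).

    Rows are stimuli (or time points) shared by both subjects; columns are features.
    """
    u, _, vt = np.linalg.svd(source.T @ target)
    return u @ vt

# Simulated, hypothetical data: subject B is a rotated, noisy copy of subject A.
rng = np.random.default_rng(7)
n_stimuli, n_features = 200, 8
subject_a = rng.normal(size=(n_stimuli, n_features))
true_rotation, _ = np.linalg.qr(rng.normal(size=(n_features, n_features)))
subject_b = subject_a @ true_rotation.T + 0.05 * rng.normal(size=(n_stimuli, n_features))

rotation = procrustes_rotation(subject_b, subject_a)
error_before = np.linalg.norm(subject_b - subject_a)
error_after = np.linalg.norm(subject_b @ rotation - subject_a)
print(f"mismatch before alignment: {error_before:.1f}, after: {error_after:.1f}")
```

A decoder trained in subject A's space could then be applied to `subject_b @ rotation`. In practice the rotation should be estimated on held-out shared stimuli so that the alignment itself does not leak test information.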

Troubleshooting Experimental Protocols

Issue: Inconsistent or Noisy Neural Signals in Diverse Participant Cohorts

Methodology: When working with a diverse cohort, standard pre-processing pipelines may fail. Implement an advanced signal processing workflow that accounts for greater anatomical and functional variability [17] [13].

- Individualized Head Models: For EEG/MEG, use subject-specific MRI scans to create accurate head models rather than relying on template heads. This is crucial for accounting for anatomical differences that affect signal transmission.

- Parameter Optimization: Critically evaluate and adjust noise-cancellation and artifact rejection parameters (e.g., for eye blinks or muscle movement) for each subject or demographic subgroup. Default settings may be biased toward the majority population in initial training data.

- Functional Localization: For fMRI analyses, avoid relying solely on atlas-based Regions of Interest (ROIs). Instead, use functional localizer tasks to individually define key language regions (e.g., Broca's area, Wernicke's area) for each participant, as their exact location and extent can vary [13].

Quantitative Data on Research Trends and Disparities

Table 1: Emerging Hot Topics in Neuroimaging of Spoken Language (2000-2024)

A 25-year bibliometric analysis of 8,085 articles reveals the following trends based on keyword burst detection [18].

| Hot Topic | Projected Trend Rationale |
|---|---|
| Classification | Driven by the rapid growth of artificial intelligence and machine learning applications for analyzing brain data. |
| Alzheimer's Disease | Motivated by the aging population in developed countries, increasing focus on cognitive decline and its language biomarkers. |
| Oscillations | Growing interest in the role of neural oscillations (brain waves) as a fundamental mechanism for language processing. |

Table 2: Participant Diversity Gap in U.S. Clinical Research

An analysis of 32,000 participants in new drug trials in 2020 highlights significant underrepresentation compared to the U.S. Census population [15].

| Demographic Group | U.S. Census Population | Representation in Clinical Trials (2020) | Disparity (percentage points) |
|---|---|---|---|
| Black or African American | ~14% | 8% | -6 |
| Hispanic or Latino | ~19% | 11% | -8 |
| Asian | ~6% | 6% | ~0 |
| Adults 65+ | ~17%* | 30% | +13 |

*Note: The 65+ population figure is based on current census estimates for comparison. The +13 percentage points for older adults indicates a relative over-representation in this specific dataset, though they are often underrepresented in other research contexts [15].
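The disparity column is a simple difference in percentage points, and the same inputs also yield a representation ratio that can be tracked against pre-defined enrollment goals. The sketch below reproduces that arithmetic from the approximate figures in Table 2. The dictionary names and the ratio metric are illustrative additions, not part of the cited analysis [15].

```python
# Approximate shares in percent, copied from Table 2.
census_share = {
    "Black or African American": 14,
    "Hispanic or Latino": 19,
    "Asian": 6,
    "Adults 65+": 17,
}
trial_share = {
    "Black or African American": 8,
    "Hispanic or Latino": 11,
    "Asian": 6,
    "Adults 65+": 30,
}

# Disparity in percentage points: trial share minus population share.
disparity_pp = {group: trial_share[group] - census_share[group] for group in census_share}

# Representation ratio: 1.0 means enrollment matches the population share.
representation_ratio = {group: trial_share[group] / census_share[group] for group in census_share}

for group in census_share:
    print(f"{group}: {disparity_pp[group]:+d} pp, ratio {representation_ratio[group]:.2f}")
```

The ratio is often easier to compare across groups of very different size, because a gap of a few percentage points matters more for a small group than for a large one.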

Experimental Protocols for Assessing Cognitive Safety

Protocol: Comprehensive Cognitive Safety Assessment in Early Drug Development

This protocol outlines a methodology for evaluating the potential cognitive-impairing effects of new drug compounds, crucial for both CNS and non-CNS drugs [16].

Objective: To characterize the cognitive safety profile of a novel compound, determining dose-response relationships and identifying any off-target pharmacological effects on the central nervous system.

Design: A randomized, double-blind, placebo- and active-controlled, single- or multiple-dose study. The active control (e.g., a known sedating antihistamine) serves as a benchmark to validate the sensitivity of the assessment.

Population: Healthy volunteers or a targeted patient population, screened for relevant medical history.

Cognitive Assessment Battery: The core of the protocol is a computerized cognitive assessment tool that is sensitive, reliable, and comprehensive. It should probe multiple cognitive domains [16]:

- Psychomotor Speed: Simple Reaction Time, Choice Reaction Time.

- Attention: Divided Attention, Selective Attention, and Sustained Attention tasks.

- Executive Function: Task-switching, Planning, and Inhibition (e.g., Stop-Signal Task).

- Episodic Memory: Verbal and Visual Memory tasks (immediate and delayed recall).

- Working Memory: N-back tasks or Spatial Working Memory.

Procedure:

- Baseline Assessment: Participants complete the cognitive battery and provide self-report ratings of mood and alertness prior to dosing.

- Dosing: Participants are randomized to receive a single dose of the novel compound (at one of several dose levels), a placebo, or the active control.

- Post-Dose Assessment: Cognitive testing is repeated at predetermined timepoints post-dose (e.g., 1, 3, 6 hours) to capture effects around peak drug concentration and across the elimination phase.

- Statistical Analysis: Perform an ANOVA with post-hoc comparisons to test for significant differences between each active dose and placebo on the primary cognitive endpoints. The magnitude of any impairment can be benchmarked against the active control and against known impairers like alcohol [16].
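As a rough illustration of the statistical step, the sketch below computes a one-way ANOVA F statistic on change-from-baseline scores at a single timepoint and then benchmarks each dose against the active control with a standardized mean difference. All data, group labels, and function names are hypothetical. A real analysis would also account for repeated timepoints and obtain p-values from a statistics package.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of score lists."""
    all_scores = [x for g in groups for x in g]
    grand = mean(all_scores)
    ss_between = sum(len(g) * (mean(g) - grand) ** 2 for g in groups)
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    return (ss_between / df_between) / (ss_within / df_within), df_between, df_within


def cohens_d(treated, placebo):
    """Standardized mean difference using the pooled standard deviation."""
    n1, n2 = len(treated), len(placebo)
    m1, m2 = mean(treated), mean(placebo)
    v1 = sum((x - m1) ** 2 for x in treated) / (n1 - 1)
    v2 = sum((x - m2) ** 2 for x in placebo) / (n2 - 1)
    pooled_sd = (((n1 - 1) * v1 + (n2 - 1) * v2) / (n1 + n2 - 2)) ** 0.5
    return (m1 - m2) / pooled_sd


# Hypothetical change-from-baseline reaction times (ms); higher means slower.
change = {
    "placebo": [2, -3, 1, 0, 4, -1],
    "low_dose": [5, 3, 8, 2, 6, 4],
    "high_dose": [15, 18, 12, 20, 16, 14],
    "active_control": [25, 22, 28, 24, 27, 23],
}

f_stat, df_b, df_w = one_way_anova_f(list(change.values()))
print(f"F({df_b}, {df_w}) = {f_stat:.1f}")

benchmark = cohens_d(change["active_control"], change["placebo"])
for arm in ("low_dose", "high_dose"):
    d = cohens_d(change[arm], change["placebo"])
    print(f"{arm}: d = {d:.2f} ({d / benchmark:.0%} of the active-control effect)")
```

Expressing each dose effect as a fraction of the active-control effect is one simple way to implement the benchmarking idea; other scalings are equally defensible.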

Research Workflow and Signaling Pathway Diagrams

[Diagram: Inclusive Research Workflow]

[Diagram: Dual-Stream Language Processing]

The Scientist's Toolkit: Key Research Reagents

Table 3: Essential Tools for Cognitive Language Research

| Tool / Solution | Function in Research |
|---|---|
| Sensitive Cognitive Batteries (CANTAB, CogState) | Objective, computer-based assessments to detect subtle drug-induced cognitive impairment in clinical trials [16]. |
| Functional MRI (fMRI) | Non-invasive neuroimaging technique used to localize language processing in the brain with high spatial resolution [18] [17]. |
| Electroencephalography (EEG) | Non-invasive technique with high temporal resolution, ideal for tracking the rapid dynamics of speech processing and neural oscillations [18] [17]. |
| Electrocorticography (ECoG) | Invasive recording technique providing signal with high spatial and temporal resolution, often used in speech neuroprosthetics research [17]. |
| Large Language Models (LLMs) | Used in neural decoding to map brain activity to linguistic representations and generate text or speech from neural signals [17]. |
| Diversity & Inclusion Frameworks | Structured protocols and community partnerships to ensure participant recruitment reflects real-world population diversity [14] [15]. |

This technical support guide addresses the critical methodological challenges and confounding variables that researchers face in cognitive language analysis. A confounding variable is an extraneous factor that systematically changes along with the variables being studied, potentially distorting the results and leading to incorrect conclusions. Properly identifying and controlling for these confounds is essential for producing valid, reproducible research in psycholinguistics and cognitive science.

The following sections provide troubleshooting guidance, experimental protocols, and resources to help researchers design more robust studies that account for the complex interplay between participant characteristics, task demands, and environmental factors.

## Frequently Asked Questions (FAQs)

Q1: What are the most critical participant-related confounding variables in cognitive language studies?

Participant age, handedness, linguistic background, and sensory experiences can significantly confound results. For example, a 2025 consensus paper emphasizes that individual differences in sensorimotor experiences, cultural background, and cognitive strategies create substantial variability in embodied language effects [19]. Age-related differences in cognitive processing affect how linguistic stimuli are handled, while handedness strongly influences horizontal space-valence associations (left-handers typically associate positive concepts with the left side, contrary to right-handers) [20]. Bilingual and multilingual speakers process language differently than monolingual speakers, and these differences are not merely categorical but exist on a continuum of language experience [21].

Q2: How does task ecological validity affect experimental outcomes?

Low ecological validity—when laboratory tasks don't reflect real-world language use—threatens the generalizability of findings. Research highlights a problematic gap between "cognition in artificial experimental settings and cognition in the wild" [19]. Tasks that explicitly ask participants to evaluate valence, for instance, produce stronger space-valence associations than those where valence remains task-irrelevant [20]. Similarly, understanding language in casual conversations (which rely heavily on implicature and context) recruits different cognitive resources than processing formal linguistic stimuli [1].

Q3: What technical issues commonly affect cognitive assessments and how can they be resolved?

Technical problems often stem from internet connectivity, browser compatibility, and caching issues. For timed cognitive assessments, even minor connection interruptions can freeze tests because "the server is pinged every 5 seconds... to ensure answers are recorded properly and the timer is working" [22]. Recommended solutions include using Google Chrome or Firefox, clearing browser cache before assessments, ensuring a stable private internet connection with minimal connected devices, and using incognito/private browsing modes to eliminate extension interference [23] [22].

Q4: How can researchers better account for linguistic diversity in study design?

Traditional approaches that minimize linguistic variation produce "biologically implausible objects/processes" [1]. Instead, researchers should embrace diversity by including typologically diverse languages, non-standard varieties, and participants with varying linguistic experiences. This includes recognizing that "bilingualism is a dynamic multifaceted experience that shapes cognition and the brain" [21] and that the cognitive foundations of language will be better understood through examining different functions of language, sociolinguistic phenomena, and developmental paths [1].

## Troubleshooting Common Experimental Issues

### Problem: Inconsistent Space-Valence Association Results

Issue: Unexpected variability in how participants associate vertical/horizontal space with positive/negative concepts.

Solution:

- Control for handedness: Left-handed participants show reversed horizontal space-valence associations [20]. Record and account for handedness in your analysis.

- Consider cultural background: Horizontal space-valence associations may be stronger in non-Western cultures [20]. Document participants' cultural backgrounds.

- Explicitly state task relevance: Effects are stronger when valence evaluation is directly requested [20]. Clearly define whether valence is task-relevant or task-irrelevant in your methodology.

### Problem: Unaccounted Individual Differences Affecting Language Processing

Issue: High variability in psycholinguistic responses even when controlling for standard demographic factors.

Solution:

- Measure and control for affect: Negative affect consistently predicts daily cognitive failures at both within-person and between-person levels [24]. Incorporate brief affect measures into your study protocol.

- Document domain expertise: Experts (e.g., wine specialists, ballet dancers) show enhanced mental simulation in their domains of expertise [19]. Account for specialized knowledge that might influence language processing.

- Consider sensory experiences: Individuals with congenital blindness or anosmia process sensory language differently despite having comparable conceptual knowledge [19]. Screen for and document atypical sensory experiences.

### Problem: Technical Disruptions During Cognitive Assessments

Issue: Cognitive assessment platforms freezing, timing inaccurately, or failing to save data.

Solution:

- Ensure stable connectivity: Use a dedicated, secure private network with minimal connected devices during timed tests [22].

- Clear cache regularly: Browser cache conflicts with updated assessment code can cause errors [22].

- Use supported browsers: Google Chrome and Firefox are the browsers recommended for these assessment platforms [23] [22].

- Document technical issues: Note any error messages, take screenshots, and record browser/device information for troubleshooting [22].

## Quantitative Data Synthesis

Table 1: Effect Sizes for Space-Valence Associations (Meta-Analysis Findings) [20]

| Dimension | Effect Size (r) | Number of Experiments | Key Moderating Factors |
|---|---|---|---|
| Vertical | 0.440 | 111 | Explicit valence evaluation tasks show larger effects |
| Horizontal | 0.310 | 88 | Handedness, cultural background |
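To put the two correlations on more familiar scales, the sketch below converts each r to variance explained and to an approximate Cohen's d. The conversion d = 2r / sqrt(1 - r^2) is a standard approximation that assumes two equally sized groups, so the resulting d values are illustrative and are not reported in the meta-analysis itself.

```python
import math


def r_to_d(r):
    """Approximate Cohen's d from a correlation (assumes equal group sizes)."""
    return 2 * r / math.sqrt(1 - r ** 2)


def variance_explained(r):
    """Proportion of variance shared between the two variables."""
    return r ** 2


# Effect sizes taken from the table above.
for dimension, r in {"Vertical": 0.440, "Horizontal": 0.310}.items():
    print(f"{dimension}: r = {r:.3f}, r^2 = {variance_explained(r):.3f}, "
          f"d = {r_to_d(r):.2f}")
```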

Table 2: Affect-Cognition Relationships in Daily Diary Studies [24]

| Affect Type | Sample | Within-Person β | Between-Person β | Significance |
|---|---|---|---|---|
| Negative Affect | Singapore | 0.21 | 0.58 | p < 0.001 |
| Negative Affect | US | 0.08 | 0.28 | p < 0.001 |
| Positive Affect | Singapore | 0.01 | -0.04 | Not significant |
| Positive Affect | US | 0.02 | -0.11 | Between-person only (p < 0.001) |

## Experimental Protocols

### Protocol 1: Assessing Space-Valence Associations with Handedness Controls

Background: This protocol measures how individuals associate vertical and horizontal space with positive and negative concepts while controlling for key confounding variables.

Materials:

- Stimulus presentation software (e.g., PsychoPy, E-Prime)

- Handedness inventory (e.g., Edinburgh Handedness Inventory)

- Cultural background questionnaire

- Positive/negative concept stimuli (words or images)

Procedure:

- Administer handedness and cultural background questionnaires

- Randomize presentation of positive and negative concepts

- For vertical dimension: Measure response time/accuracy for upper vs. lower screen locations

- For horizontal dimension: Measure response time/accuracy for left vs. right screen locations

- Counterbalance trial order across participants

- Explicitly state whether valence evaluation is task-relevant or task-irrelevant

Analysis Notes:

- Separate analysis for left-handed and right-handed participants

- Consider cultural background as a covariate

- Report effect sizes using correlation coefficients (r) [20]
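The analysis notes can be sketched as follows. The example assumes that "congruent" is coded as the positive-right / negative-left mapping, so a reversed association in left-handers would appear as a negative effect. The data, the coding scheme, and the function names are hypothetical. The effect size uses the common conversion r = sqrt(t^2 / (t^2 + df)) from a one-sample t-test against zero.

```python
import math
from statistics import mean, stdev


def congruency_effect(trials):
    """Mean RT on incongruent trials minus mean RT on congruent trials (ms)."""
    congruent = [t["rt"] for t in trials if t["congruent"]]
    incongruent = [t["rt"] for t in trials if not t["congruent"]]
    return mean(incongruent) - mean(congruent)


def effect_size_r(effects):
    """Signed r from a one-sample t-test of per-participant effects against 0."""
    n = len(effects)
    t = mean(effects) / (stdev(effects) / math.sqrt(n))
    df = n - 1
    return math.copysign(math.sqrt(t ** 2 / (t ** 2 + df)), t)


# Hypothetical per-participant congruency effects (ms), split by handedness.
effects_by_hand = {
    "right": [22, 35, 18, 40, 27],
    "left": [-15, -30, -8, -21],
}
for hand, effects in effects_by_hand.items():
    print(f"{hand}-handed: mean effect {mean(effects):.1f} ms, "
          f"r = {effect_size_r(effects):.2f}")
```

Analyzing the two handedness groups separately, as the notes recommend, keeps opposite-signed effects from cancelling out in a pooled average.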

### Protocol 2: Daily Diary Method for Affect-Cognition Relationships

Background: This protocol captures naturalistic variations in affect and cognitive failures using daily diary methods to minimize recall bias.

Materials:

- Daily affect measure (e.g., PANAS - Positive and Negative Affect Schedule)

- Cognitive failures questionnaire

- Online survey platform or telephone interview protocol

Procedure:

- Collect baseline demographic and socioeconomic data

- For 7-8 consecutive days, administer daily surveys

- Time surveys consistently (e.g., end-of-day assessments)

- Ensure high response rates through participant reminders

Analysis Notes:

- Use multilevel modeling to separate within-person and between-person effects

- Control for demographic and socioeconomic variables

- Report standardized beta coefficients (β) for effect sizes [24]
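Before fitting a multilevel model, daily scores are commonly split into a between-person component (each person's mean relative to the sample) and a within-person component (each day's deviation from that person's own mean). The sketch below shows only this person-mean centering step, using hypothetical diary data and a hypothetical function name. The model itself would be fitted afterward in a dedicated statistics package.

```python
from statistics import mean


def decompose_affect(diary):
    """Split daily scores into between-person and within-person components.

    diary maps a participant id to that participant's list of daily scores.
    Returns (between, within):
      between[pid] = person mean minus the mean of all person means
      within[pid]  = each daily score minus that person's own mean
    """
    person_means = {pid: mean(days) for pid, days in diary.items()}
    grand_mean = mean(list(person_means.values()))
    between = {pid: m - grand_mean for pid, m in person_means.items()}
    within = {pid: [d - person_means[pid] for d in days]
              for pid, days in diary.items()}
    return between, within


# Hypothetical negative-affect ratings across seven diary days.
diary = {
    "p01": [1, 2, 1, 3, 2, 1, 4],
    "p02": [3, 4, 4, 5, 3, 4, 5],
}
between, within = decompose_affect(diary)
print(between)
print(within["p01"])
```

Entering both components as predictors lets the model estimate separate within-person and between-person coefficients, which is the distinction the table of β values reports.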

## Visualization of Methodological Relationships

[Diagram: Confounding Variables and Mitigation Relationships]

This diagram illustrates how participant characteristics (yellow) and task features (green) influence research outcomes (blue), alongside mitigation strategies (red) that help control for these confounding effects.

## The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Methodological Tools for Cognitive Language Research

| Tool/Resource | Function | Application Notes |
|---|---|---|
| Daily Diary Methods | Captures naturalistic variations in affect and cognition | Minimizes recall bias; ideal for studying within-person fluctuations [24] |
| Multilevel Modeling | Separates within-person and between-person effects | Essential for daily diary data; accounts for nested data structures [24] |
| Handedness Inventories | Controls for lateralization effects | Critical for spatial cognition studies; reveals reversed effects in left-handers [20] |
| Cultural Background Measures | Accounts for cultural variation in cognitive processing | Particularly important for horizontal spatial associations [20] |
| Language Experience Questionnaires | Quantifies bilingual/multilingual experience | Treats bilingualism as continuous rather than categorical [21] |
| Cognitive Assessment Platforms | Measures specific cognitive abilities | Ensure stable internet connection; use recommended browsers [23] [22] |
| Meta-Analytic Procedures | Synthesizes effect sizes across studies | Reveals robust patterns despite interstudy heterogeneity [20] |

From Traditional Paradigms to AI: A Toolkit for Cognitive Language Analysis

Technical Support Center: FAQs & Troubleshooting Guides

This technical support center is designed to assist researchers and laboratory professionals in navigating common methodological challenges encountered in experiments on cognitive task complexity and second language (L2) analysis.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental theoretical disagreement I must account for when designing tasks to manipulate cognitive complexity? Your experimental design will be influenced by one of two primary theoretical frameworks. Robinson's Cognition Hypothesis posits that increasing cognitive demands along resource-directing dimensions (e.g., increasing the number of elements to consider) can simultaneously enhance linguistic complexity and accuracy by directing learners' attention to specific aspects of the language code [25] [26]. In contrast, Skehan's Limited Attentional Capacity Model suggests that humans have a finite attentional capacity, leading to trade-off effects where increases in one area (e.g., complexity) may result in decreases in another (e.g., fluency or accuracy) [26] [27]. Your choice of framework will shape your hypotheses and the interpretation of your results.

Q2: How can I effectively manipulate task complexity in a narrative speaking task? A robust method is to vary the number of elements a participant must manage. For example:

- Simple Task: Provide a narrative task based on pictures involving only two characters [27].

- Complex Task: Use a similar narrative task but introduce more characters or elements, for example, three or more individuals with differing characteristics or goals [27]. This increases cognitive load by requiring the learner to track more information and relationships.

Q3: Our lab is observing inconsistent effects of task complexity on participant performance. What could be the cause? Inconsistent findings are a recognized challenge in this field and can arise from several sources:

- Unvalidated Manipulations: Studies may fail to empirically confirm that their "complex" task was actually perceived as more cognitively demanding. It is good practice to use learner self-ratings, expert judgments, and time-on-task measures to validate your complexity manipulations [25].

- Modality Differences: Theoretical models were largely developed for oral interaction, but their predictions may not hold perfectly for written production or technology-mediated contexts [26]. The modality of your task (oral vs. written) must be considered.

- Individual Differences: Factors like a participant's working memory capacity and language aptitude can significantly moderate how they respond to complex tasks and feedback [28]. Measuring these variables can help explain variance in your results.
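A manipulation check of the kind described under "Unvalidated Manipulations" can be sketched as a paired comparison on each validation measure. The example below uses hypothetical self-rated effort and time-on-task data from the same participants on both task versions. The function name and the rating scale are assumptions made for illustration.

```python
from statistics import mean


def manipulation_check(simple, complex_):
    """Compare paired scores from the same participants on both task versions.

    Returns the mean complex-minus-simple difference and the proportion of
    participants whose score was higher on the complex task.
    """
    diffs = [c - s for s, c in zip(simple, complex_)]
    prop_higher = sum(d > 0 for d in diffs) / len(diffs)
    return mean(diffs), prop_higher


# Hypothetical data for five participants (same order in every list).
measures = {
    "self_rated_effort_1to9": ([3, 4, 2, 5, 3], [6, 7, 5, 6, 4]),
    "time_on_task_seconds": ([95, 110, 102, 120, 99], [140, 150, 128, 119, 133]),
}
for name, (simple, complex_) in measures.items():
    diff, prop = manipulation_check(simple, complex_)
    print(f"{name}: mean difference {diff:.1f}, "
          f"higher on complex task for {prop:.0%} of participants")
```

If the measures disagree (for example, higher ratings but no increase in time-on-task), that is a signal to revisit the manipulation before interpreting performance differences.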

Q4: What is the role of task "closure" or "openness," and how does it impact outcomes? Task closure refers to whether a task has a single, predetermined solution (closed) or a wide range of acceptable solutions (open). Contrary to some early claims, recent research found that open tasks can elicit greater lexical diversity in L2 writing than closed tasks [25]. This suggests that the constraint of a single correct answer may limit linguistic exploration. The choice between open and closed tasks should be deliberate, based on whether the research goal is convergent problem-solving or divergent, creative language use.

Troubleshooting Common Experimental Issues

Problem: Participants show improved fluency but decreased accuracy on complex tasks.

- Diagnosis: This is a classic signature of a trade-off effect, consistent with Skehan's Limited Attentional Capacity Model [26]. The cognitive demands of the task are exceeding the participants' available attentional resources, forcing a prioritization of meaning over form.

- Solution: Consider the goal of your task. If accuracy is the primary target, reduce the task's cognitive demands so that they fit within participants' available attentional resources.

Problem: High levels of participant anxiety or frustration during complex task performance.

- Diagnosis: High cognitive load can manifest as stress and a feeling of being pressed for time [25]. This affective response can interfere with language retrieval and production.

- Solution:

- Validate Task Difficulty: Ensure your complexity manipulation is appropriately calibrated for your participant population's proficiency level.

- Provide Clear Instructions: Give participants explicit, step-by-step instructions for what the task entails to reduce procedural uncertainty.

- Implement a "Tech Check": If the task is technology-mediated, conduct a rehearsal session before data collection to familiarize participants with the interface and troubleshoot technical issues like microphone or connectivity problems [30].

Problem: Technical failures disrupt technology-mediated task-based experiments.

- Diagnosis: Issues can range from participant-side connectivity problems to software glitches in your chosen platform (e.g., LMS, video conferencing) [30].

- Solution: Adopt a proactive support protocol:

- Prevention: Provide participants with clear guidelines on system requirements and conduct a mandatory tech check session [30].

- Preparedness: Create a simple step-by-step troubleshooting guide for common issues (e.g., "microphone not detected," "video frozen") [30].

- Support Channel: Establish a dedicated and rapid-response communication channel (e.g., a separate chat room or hotline) for technical support during experiments to minimize downtime [30].

Experimental Protocols & Data Synthesis

Protocol 1: Manipulating Cognitive Complexity in Oral Narrative Tasks

This protocol is adapted from a study examining cognitive processes during oral production [27].

- Objective: To investigate the effects of task complexity on cognitive processes and oral performance.

- Participants: L2 learners at a targeted proficiency level (e.g., intermediate).

- Task Design (Resource-Directing):

- Simple Task: A picture-based story narration or decision-making task involving two characters with minimal conflicting features.

- Complex Task: A parallel task involving six characters (three men, three women) with more detailed and potentially conflicting personal information, requiring more reasoning to complete (e.g., finding the best matches for a dating show) [27].

- Procedure:

- Participants are randomly assigned to a task sequence (simple-complex or complex-simple) with a washout period (e.g., two weeks) to minimize practice effects [27].

- Participants complete the task individually, with their production recorded via audio and video.

- Immediately after the task, a stimulated recall session is conducted. Participants watch the video of their own performance and verbalize their thought processes at the time of production, using their L1 to ensure depth of reporting [27].

- Data Analysis:

- Transcription: Audio recordings are transcribed.

- Performance Analysis: CALF (complexity, accuracy, lexis, fluency) measures are calculated from transcripts:

- Complexity: Subordination index (clauses per T-unit), Phrasal elaboration (complex nominals per clause).

- Accuracy: Proportion of error-free clauses.

- Fluency: Speech rate (syllables per minute).

- Lexical Diversity: Measure such as D-value or Type-Token Ratio.

- Process Analysis: Stimulated recall protocols are coded using a framework like Levelt's (1989) speech production model, categorizing comments as related to Conceptualization, Formulation, or Monitoring [27].
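Once a transcript has been segmented and hand-coded, the performance measures listed above reduce to simple ratios. The sketch below assumes the clause, T-unit, error-free clause, and syllable counts have already been produced by human coders. The function name and all counts are hypothetical, and the D-value is not computed because it requires a dedicated procedure.

```python
def calf_measures(tokens, n_clauses, n_t_units, n_error_free_clauses,
                  n_syllables, duration_seconds):
    """Compute basic performance ratios from hand-coded transcript counts."""
    types = {token.lower() for token in tokens}
    return {
        "subordination_index": n_clauses / n_t_units,       # clauses per T-unit
        "accuracy": n_error_free_clauses / n_clauses,       # error-free clauses
        "speech_rate": n_syllables / (duration_seconds / 60),  # syllables/min
        "type_token_ratio": len(types) / len(tokens),
    }


# Hypothetical excerpt and coder counts for one participant.
tokens = "the man likes the woman and the woman likes the man".split()
result = calf_measures(tokens, n_clauses=12, n_t_units=8,
                       n_error_free_clauses=9, n_syllables=150,
                       duration_seconds=60)
for name, value in result.items():
    print(f"{name}: {value:.2f}")
```

Type-token ratio falls as samples get longer, so comparisons across tasks are more meaningful when sample length is held constant or a length-robust index is used instead.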

Protocol 2: Investigating Task Complexity and Written Corrective Feedback

This protocol integrates cognitive load with feedback timing, based on research into individual differences [28].

- Objective: To examine the effects of immediate vs. delayed written corrective feedback on the acquisition of a specific grammatical structure (e.g., French past tense) during collaborative writing, and the moderating role of working memory and aptitude.

- Participants: Learners studying the target language (e.g., university-level L2 French students).

- Task Design: A collaborative text-editing or story-completion task focusing on the use of the target structure.

- Procedure:

- Pretest: Participants individually write a text (e.g., a story) to establish a baseline.

- Treatment: Participants are randomly assigned to one of three groups for a collaborative writing task:

- Immediate CF Group: Receives metalinguistic corrective feedback during the writing process.

- Delayed CF Group: Receives the same feedback one week after the task.

- Task-Only Group: Completes the task but receives no feedback.

- Posttests: Participants individually write new texts in an immediate posttest and a delayed posttest (e.g., 2-4 weeks later).

- Individual Difference Measures: Participants complete a working memory test (e.g., a backwards digit span task) and a language aptitude test (e.g., LLAMA F) [28].

- Data Analysis:

- Primary Analysis: Group comparisons on posttest scores to measure learning gains.

- Moderation Analysis: Regression analyses to determine if working memory predicts gains for the Immediate CF group, and if language aptitude predicts gains for the Delayed CF group [28].
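The moderation step can be sketched as a separate simple regression within each feedback group, with learning gain (posttest minus pretest) as the outcome. The data, field names, and helper functions below are hypothetical. A full analysis would add significance tests and could model the group-by-predictor interaction directly.

```python
from statistics import mean


def ols_fit(x, y):
    """Least-squares slope and intercept for one predictor."""
    mx, my = mean(x), mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def gain_slope(rows, group, predictor):
    """Slope of learning gain on a predictor within one feedback group."""
    subset = [r for r in rows if r["group"] == group]
    gains = [r["post"] - r["pre"] for r in subset]
    return ols_fit([r[predictor] for r in subset], gains)[0]


# Hypothetical scores: wm = backwards digit span, aptitude = aptitude test score.
rows = [
    {"group": "immediate", "wm": 4, "aptitude": 50, "pre": 10, "post": 13},
    {"group": "immediate", "wm": 5, "aptitude": 70, "pre": 11, "post": 16},
    {"group": "immediate", "wm": 6, "aptitude": 40, "pre": 9, "post": 16},
    {"group": "delayed", "wm": 5, "aptitude": 40, "pre": 10, "post": 12},
    {"group": "delayed", "wm": 4, "aptitude": 60, "pre": 12, "post": 16},
    {"group": "delayed", "wm": 6, "aptitude": 80, "pre": 10, "post": 16},
]
print("Immediate CF, gain per working memory unit:",
      gain_slope(rows, "immediate", "wm"))
print("Delayed CF, gain per aptitude point:",
      gain_slope(rows, "delayed", "aptitude"))
```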

Quantitative Data Synthesis

The following tables summarize empirical findings on the effects of increased cognitive task complexity.

Table 1: Effects of Increased Cognitive Task Complexity on L2 Written Performance

| Linguistic Dimension | Effect of Increased Complexity | Key Study Findings |
|---|---|---|
| Lexical Complexity | ↑ Increase | Greater lexical diversity reported [25]. |
| Syntactic Complexity | Mixed Effects | Decreased subordination (clauses/T-unit), but increased phrasal elaboration (coordinate phrases/clause); no significant change in Mean Length of T-Unit [26]. |
| Accuracy | Mixed Effects | Lower proportion of target-like use (TLU) of articles [25]; No significant differences found in other accuracy measures under online planning [26]. |
| Fluency | — | No significant differences found under online planning conditions [26]. |
| Functional Adequacy | ↓ Decrease | Detrimental effects on content, organization, and overall scores [26]. |

Table 2: Effects of Increased Cognitive Task Complexity on L2 Oral Performance

| Linguistic Dimension | Effect of Increased Complexity | Key Study Findings & Context |
|---|---|---|
| Syntactic Complexity | Inverted-U Pattern | The middle-complexity task often yielded the most balanced performance, not the most complex [29]. |
| Accuracy | ↑ Increase | Complex tasks enhanced accuracy, but sometimes at the cost of lexical diversity [29]. |
| Lexical Diversity | ↓ Decrease | Can be constrained by the demands of a complex task [29]. |
| Fluency | Influenced by Sequence | Ascending sequences fostered fluency gains; starting with a complex task initially reduced speaking speed [29]. |

Conceptual Diagrams of Theoretical Frameworks

[Diagram: Task Complexity Decision Pathway]

[Diagram: Cognitive Processes in L2 Oral Production]

The Scientist's Toolkit: Key Research Reagents & Materials

Table 3: Essential Materials for Task-Based Complexity Research

| Item | Function in Research |
|---|---|
| Stimulated Recall Protocol | A methodological tool to gain insight into participants' cognitive processes during task performance. Immediately after completing a task, participants watch a video recording of their performance and verbalize their thoughts, which are then coded and analyzed [27]. |
| Working Memory Measure | An assessment to quantify an individual's limited attentional capacity, a key individual difference variable. Often measured using tasks like a backwards digit span, where participants must recall a sequence of numbers in reverse order. This capacity can moderate how learners handle complex tasks and feedback [28]. |
| Language Aptitude Test | A test to measure a learner's inherent propensity for language learning, such as the LLAMA F test. This aptitude can influence the effectiveness of different instructional approaches, such as the timing of corrective feedback [28]. |
| Cognitive Load Validation Measures | A multi-pronged approach to ensure task manipulations are perceived as intended. Includes learner self-rating scales (perceived difficulty/mental effort), expert judgment, and objective measures like time-on-task [25]. |
| Technology-Mediated Platform (TMTBLT) | A digital environment (e.g., video conferencing, custom LMS) used to deliver tasks and/or provide feedback. It allows for controlled presentation of stimuli, recording of performance, and enables research on online planning and digital interaction [31] [29]. |

Frequently Asked Questions

Q1: What is a double dissociation and why is it methodologically superior to a single dissociation? A double dissociation is demonstrated when two patients (or groups) show opposite patterns of spared and impaired cognitive functions [32]. Specifically, Patient A with a lesion in brain area "X" shows impairment in function "1" but not function "2", while Patient B with a lesion in brain area "Y" shows impairment in function "2" but not function "1" [33]. This is superior to a single dissociation, which can be misleading. A single dissociation (where a lesion affects one function but not another) might occur simply because the test for the unaffected function is less sensitive or demanding, not because the underlying brain systems are truly independent [32]. Double dissociation provides much stronger evidence for the functional independence of two cognitive processes and their localization to distinct brain areas [34] [32].

Q2: My research involves a neurodegenerative disease that affects diffuse brain networks, not a single, focal lesion. Can I still use the double dissociation logic? Yes, the logic of double dissociation can be adapted for groups of patients with different neurological conditions that affect multiple neural systems [32]. For instance, one can compare patients with Korsakoff's syndrome (KS) to those with Huntington's disease (HD). Research has shown that patients with KS exhibit severe deficits in explicit memory but relatively intact implicit, procedural memory. Conversely, patients with HD show the opposite pattern: intact explicit memory but impaired implicit memory [32]. This double dissociation suggests that explicit and implicit memory are subserved by dissociable neural networks (thalamic regions in KS and striatal regions in HD).

Q3: What are the most common pitfalls in designing a neuropsychological battery to uncover dissociations? Common pitfalls include [34]:

- Using a Single Test: A single test score can typically only indicate the presence or absence of "brain damage somewhere." It cannot distinguish between different types of pathologies or lesion locations [34].

- Ignoring Unimpaired Performance: The pattern of a patient's unimpaired test scores is as critical for brain-function analysis as the pattern of their deficits. A poor score on one test only demonstrates abnormal functioning, which could have many causes [34].

- Confounding Test Sensitivity with Localization: Using two tests where one is simply more sensitive to brain damage in general (e.g., a fluid intelligence test) than the other (e.g., a crystallized ability test) can create a false impression of a single dissociation. This has, for example, historically led to the incorrect conclusion that alcoholism causes more right-hemisphere damage [34].

Q4: In cross-language research, what methodological steps are critical when using an interpreter to ensure data trustworthiness? When a language barrier exists between researchers and participants, key methodological steps include [35]:

- Maintaining Conceptual Equivalence: The interpreter must translate the conceptual meaning of words and phrases, not just provide a literal translation. This is especially critical for complex concepts or healthcare terminology [35].

- Documenting Interpreter Credentials: Ideally, interpreters should be certified by a professional association (e.g., the American Translators Association) or possess verified sociolinguistic language competence to minimize translation errors [35].

- Making the Interpreter Visible: The interpreter's role and how they were used in the research process (e.g., during data collection and analysis) must be explicitly described in the methodology, rather than being rendered "invisible" [35].

Troubleshooting Common Experimental Challenges

| Challenge | Root Cause | Solution |
|---|---|---|
| False Positive Localization | Mass univariate lesion mapping (e.g., VLSM) can be biased toward areas near vascular trunks because lesions from stroke are not randomly distributed. A voxel may appear significant because it is often damaged alongside a truly critical area, not because it is critical itself [36]. | Employ multivariate or network-level lesion mapping approaches. These methods can identify disconnection syndromes and are less biased by common lesion locations [36]. |
| Inability to Replicate a Known Double Dissociation | The neuropsychological tests used may lack specificity or may not be comparable in sensitivity. If one test is inherently more difficult, it can create a performance difference that is misinterpreted as a dissociation [34] [32]. | Carefully pilot tests to ensure they are matched for difficulty and cognitive demand. The double dissociation is most reliable when it is based on the ratio or difference between test scores, not on absolute scores [34]. |
| Poor Generalizability of Language Task Results | Language samples are highly sensitive to the specific testing conditions, such as the type of discourse or the interlocutor. A score from one constrained task (e.g., describing a sandwich) may not predict performance in a different context (e.g., arguing with a spouse) [8]. | Acknowledge the purpose-specific nature of test validity. A test valid for diagnosing severity may not be valid for measuring treatment-induced change. Use multiple tasks to sample across different language contexts [8]. |
| Uninterpretable Null Results in Lesion Mapping | In mass univariate analyses, if a cognitive function is subserved by multiple critical brain regions, damage to any one region might not consistently cause a deficit if the others are intact. Each intact region acts as a counter-example, drastically reducing statistical power [36]. | Ensure a very large sample size to have sufficient power to detect effects that may be present but subtle. Be cautious in interpreting null effects, as they may reflect a lack of power rather than a true lack of association [36]. |

Experimental Protocols

Protocol 1: Establishing a Classic Double Dissociation with Focal Lesion Patients

This protocol is used to demonstrate that two cognitive functions are independent and depend on distinct brain regions.

- Participant Selection: Identify two carefully matched patients or two groups of patients. The critical criterion is that they have focal lesions in different, pre-specified brain areas (e.g., Patient/Group A with a left frontal lobe lesion; Patient/Group B with a left temporoparietal lesion) [32] [33].

- Task Selection and Administration: Select two tasks that are theorized to tap into two distinct cognitive functions (e.g., a speech production task and a language comprehension task). Administer both tasks to all participants.

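- Data Analysis: Analyze the pattern of performance. A double dissociation is demonstrated if:

  - Patient/Group A is impaired on the first task but performs normally (or significantly better) on the second task.

  - Patient/Group B shows the reverse pattern: impaired on the second task but normal (or significantly better) on the first task.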

- Interpretation: This pattern provides strong evidence that the brain region damaged in Group A is necessary for function 1, and the region damaged in Group B is necessary for function 2.
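The crossover pattern in this protocol can be checked numerically. The following is a minimal sketch, assuming each patient score is standardized against a control sample and that a z-score below -2 counts as impaired. The cutoff, the function names, and all sample values are illustrative assumptions and are not taken from the cited protocol.

```python
from statistics import mean, stdev

def z_score(patient_score, control_scores):
    """Standardize a patient's score against a control sample."""
    return (patient_score - mean(control_scores)) / stdev(control_scores)

def is_double_dissociation(a_scores, b_scores, controls, cutoff=-2.0):
    """Check the crossover pattern for two patients (or two group means).

    a_scores, b_scores: dicts mapping task name -> score.
    controls: dict mapping task name -> list of control scores.
    cutoff: z-score below which performance counts as impaired (assumed).
    """
    za = {t: z_score(a_scores[t], controls[t]) for t in ("task1", "task2")}
    zb = {t: z_score(b_scores[t], controls[t]) for t in ("task1", "task2")}
    # A: impaired on task1, spared on task2. B: the reverse.
    a_pattern = za["task1"] < cutoff and za["task2"] >= cutoff
    b_pattern = zb["task2"] < cutoff and zb["task1"] >= cutoff
    return a_pattern and b_pattern

# Hypothetical scores for illustration only.
controls = {"task1": [48, 50, 52, 49, 51], "task2": [30, 31, 29, 32, 28]}
patient_a = {"task1": 35, "task2": 30}
patient_b = {"task1": 50, "task2": 18}
result = is_double_dissociation(patient_a, patient_b, controls)
print(result)
```

Z-scores from a control sample this small are only a rough guide, and the check says nothing about whether the two tasks are matched for difficulty, which still has to be established separately.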

Protocol 2: Conducting a Voxel-Based Lesion-Symptom Mapping (VLSM) Analysis

This modern, computational protocol identifies brain regions where tissue damage is statistically associated with a specific behavioral deficit across a large group of patients [36] [37].

- Data Collection:

- Lesion Delineation: For each patient, use the structural MRI or CT scan to manually trace the lesion boundary, producing a binary lesion map that can be registered to a standard brain template.

- Behavioral Assessment: Administer a standardized neuropsychological test battery (e.g., the Standard Language Test of Aphasia - SLTA) to obtain a quantitative score for the function of interest [37].

- Preprocessing: Normalize all individual lesion maps into a common stereotaxic space to allow for voxel-by-voxel comparison across patients.

- Statistical Analysis (Mass Univariate Approach):

- For each voxel in the brain, divide patients into two groups: those with a lesion at that voxel and those without.

- Conduct a statistical test to compare the behavioral scores of the two groups (e.g., a t-test for continuous scores, or the Liebermeister test for binary outcomes).

- Correct for multiple comparisons across all voxels (e.g., using False Discovery Rate or permutation testing) and control for potential confounds like total lesion volume [36].

- Output: The result is a statistical map of the brain highlighting voxels where the presence of a lesion is significantly associated with impairment on the behavioral measure.
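The mass univariate steps above can be sketched in a few lines. This is a minimal illustration in pure Python, assuming binary lesion maps stored as one row per patient and one column per voxel, a Welch t statistic, and a max-statistic permutation test for the multiple-comparison correction. The function names, the `min_n` cutoff, and the synthetic data are assumptions made for the example. The sketch does not control for total lesion volume and is no substitute for dedicated VLSM software.

```python
import random
from statistics import mean, variance

def welch_t(spared, lesioned):
    """Welch t statistic; positive when lesioned patients score lower."""
    se = (variance(spared) / len(spared) + variance(lesioned) / len(lesioned)) ** 0.5
    return (mean(spared) - mean(lesioned)) / se if se > 0 else 0.0

def voxel_t_map(lesions, scores, min_n=3):
    """One t statistic per voxel; None where either group is too small to test."""
    t_map = []
    for v in range(len(lesions[0])):
        lesioned = [s for row, s in zip(lesions, scores) if row[v] == 1]
        spared = [s for row, s in zip(lesions, scores) if row[v] == 0]
        if len(lesioned) < min_n or len(spared) < min_n:
            t_map.append(None)
        else:
            t_map.append(welch_t(spared, lesioned))
    return t_map

def vlsm(lesions, scores, n_perm=500, alpha=0.05, seed=0):
    """Return (observed t map, corrected threshold, significant voxel indices)."""
    rng = random.Random(seed)
    observed = voxel_t_map(lesions, scores)
    shuffled = list(scores)
    null_max = []
    for _ in range(n_perm):
        # Break the lesion-behavior link, then keep the largest t across voxels.
        rng.shuffle(shuffled)
        null_max.append(max(t for t in voxel_t_map(lesions, shuffled) if t is not None))
    null_max.sort()
    threshold = null_max[min(int((1 - alpha) * n_perm), n_perm - 1)]
    significant = [v for v, t in enumerate(observed) if t is not None and t > threshold]
    return observed, threshold, significant

# Synthetic data: 20 patients, 4 voxels. Only voxel 0 is tied to low scores.
scores = [(10 if i < 10 else 20) + i % 5 for i in range(20)]
lesions = [[1 if i < 10 else 0, i % 2, 1 if i % 3 == 0 else 0, 1 if i == 0 else 0]
           for i in range(20)]
t_map, threshold, significant = vlsm(lesions, scores)
print(significant)
```

Voxel 3 is lesioned in only one patient, so it is skipped rather than tested, which mirrors the common practice of restricting the analysis to voxels damaged in a minimum number of patients.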

The Scientist's Toolkit: Essential Research Reagents & Materials

| Item | Function / Rationale |

|---|---|

| Standardized Neuropsychological Battery (e.g., SLTA) | A comprehensive set of 26 subtests designed to assess a wide range of language and cognitive functions. It allows for the profiling of strengths and weaknesses necessary to identify dissociations [37]. |

| High-Resolution Structural MRI/CT Scans | Provides the anatomical data required for precise delineation (tracing) of brain lesion locations and volumes for lesion-symptom mapping [36]. |

| VLSM Software (e.g., MRIcron, NiiStat) | Specialized computational tools that perform voxel-based statistical analysis, comparing behavioral scores across patients with and without lesions at each voxel in the brain [36]. |

| Standard Brain Template and Atlas (e.g., MNI, AAL) | A common stereotaxic coordinate space (e.g., MNI) together with an anatomical labeling scheme (e.g., AAL). Normalizing individual patient brains to this space allows for group-level statistical analysis and direct comparison of results across studies [36]. |

| Certified Interpreter / Translator | In cross-language research, a professional with sociolinguistic competence is critical to ensure conceptual equivalence during participant interviews and data translation, preserving the validity of qualitative data [35]. |

| Tasks Matched for Difficulty and Cognitive Demand | Carefully selected or designed experimental tasks. To argue for a true dissociation, tasks must be comparable in sensitivity to avoid confounding test difficulty with functional specialization [34] [32]. |

The Promise and Peril of Large Language Models (LLMs) in Analysis and Workflow Automation

Technical Support Center: Troubleshooting Guides and FAQs

This support center provides targeted solutions for methodological challenges encountered when using Large Language Models (LLMs) in cognitive language analysis and pharmaceutical research.

Frequently Asked Questions (FAQs)

Q1: Why does my LLM-based analysis fail on complex reasoning tasks that human subjects handle with more time? A: LLMs, particularly reasoning models, need substantial internal computation for complex problems, much as humans need more time. Research shows a positive correlation between human solving time and LLM computational effort (measured in reasoning tokens): problems that take humans longer also require more tokens from LLMs, with arithmetic the least demanding and abstract reasoning (such as the ARC challenge) the most costly for both [38]. In this sense, the "cost of thinking" is similar for humans and models.

Q2: How can I measure the cognitive load of human subjects interacting with LLM-driven interfaces? A: Electroencephalography (EEG) is a key tool. You can monitor specific frequency bands:

- Frontal Theta (4-7 Hz): Increased activity indicates higher working memory demand and cognitive load [39].

- Alpha (8-12 Hz): Suppression reflects heightened cognitive engagement [39].

Event-Related Potentials (ERPs) such as the P300 component provide further insight into the temporal dynamics of attention and decision-making during LLM interactions [39].
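Band power in the theta and alpha ranges can be estimated from a single channel with a Fourier transform. The sketch below uses a naive DFT in pure Python on a synthetic signal. The sampling rate, the signal amplitudes, and the `band_power` helper are assumptions for illustration. A real analysis would apply artifact rejection and an established spectral estimator (for example Welch's method) to recorded EEG.

```python
import cmath
import math

def band_power(signal, fs, low, high):
    """Mean-square power contributed by frequencies in [low, high] Hz (naive DFT)."""
    n = len(signal)
    power = 0.0
    for k in range(1, n // 2):
        freq = k * fs / n
        if low <= freq <= high:
            coeff = sum(x * cmath.exp(-2j * math.pi * k * i / n)
                        for i, x in enumerate(signal))
            # Factor 2 accounts for the matching negative-frequency bin.
            power += 2 * abs(coeff) ** 2 / n ** 2
    return power

# Synthetic channel: a 6 Hz (theta) component with amplitude 2
# plus a 10 Hz (alpha) component with amplitude 1, sampled for 2 s.
fs = 128
times = [i / fs for i in range(2 * fs)]
signal = [2.0 * math.sin(2 * math.pi * 6 * s) + 1.0 * math.sin(2 * math.pi * 10 * s)
          for s in times]

theta = band_power(signal, fs, 4, 7)
alpha = band_power(signal, fs, 8, 12)
print(theta, alpha, theta / alpha)
```

Comparing these two values across conditions, rather than reading either in isolation, is what supports the load and engagement interpretations described above.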

Q3: Our initial LLM prototype works, but scaling it to a production-grade workflow has caused reliability and cost issues. What's wrong? A: This is a classic scaling failure, often caused by relying on manual "prompt engineering" or "context engineering." Hand-maintained prompts are fragile: they need constant tweaking and break when new data or rules arrive. The solution is a shift to automated workflow architecture, where context, instructions, and task breakdowns are generated and managed by code, not by hand. This involves decomposing tasks into atomic steps and automating context generation from live data sources [40].

Q4: What's the best way to validate an LLM's output against known scientific data to avoid hallucinations? A: Implement an automated validation layer. For instance, after an LLM drafts a summary or analysis, use scripts to validate the output against an expected schema or known data points. This is a core component of scalable, automated workflow architecture. Any discrepancies should be flagged for review or automatic reprocessing [40].
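A validation layer of this kind can be quite small. The sketch below assumes the LLM is asked to return JSON, then checks the output against an expected schema and a table of known reference values. The field names, the reference value, and the 5% tolerance are hypothetical and chosen only to illustrate the idea.

```python
import json

# Hypothetical schema and reference data for illustration.
EXPECTED_SCHEMA = {"measure": str, "value": (int, float), "source_id": str}
KNOWN_VALUES = {"mean_length_of_utterance": 4.2}

def validate_output(raw_text, schema=EXPECTED_SCHEMA, known=KNOWN_VALUES, tolerance=0.05):
    """Return a list of problems; an empty list means the output passed."""
    try:
        data = json.loads(raw_text)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]

    problems = []
    for field, expected_type in schema.items():
        if field not in data:
            problems.append(f"missing field: {field}")
        elif not isinstance(data[field], expected_type):
            problems.append(f"wrong type for field: {field}")

    # Cross-check the reported value against known data, when available.
    measure, value = data.get("measure"), data.get("value")
    if isinstance(measure, str) and measure in known and isinstance(value, (int, float)):
        reference = known[measure]
        if abs(value - reference) > tolerance * abs(reference):
            problems.append(f"{measure}={value} deviates from reference {reference}")
    return problems

good = '{"measure": "mean_length_of_utterance", "value": 4.3, "source_id": "S01"}'
bad = '{"measure": "mean_length_of_utterance", "value": 9.9, "source_id": "S01"}'
print(validate_output(good))
print(validate_output(bad))
print(validate_output("The MLU was high."))
```

A non-empty problem list is the signal to flag the output for review or to trigger automatic reprocessing.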

Troubleshooting Guides

Issue: High Cognitive Load in Study Participants Using LLM Tools

Symptoms: User frustration, task abandonment, or EEG data showing elevated frontal theta activity [39].

| Step | Action | Diagnostic Method | Expected Outcome |

|---|---|---|---|

| 1 | Simplify the LLM output. Avoid information overload. | User feedback surveys & EEG analysis of alpha suppression. | Reduced self-reported frustration. |

| 2 | Implement a step-by-step reasoning process. Let the LLM "think" in stages. | Compare token count and task success rate before and after. | Improved task accuracy and lower frontal theta power in EEG [38] [39]. |

| 3 | Use Retrieval-Augmented Generation (RAG) to ground responses in verified sources. | Check outputs for citations and fact-check against source material. | Increased output accuracy and user trust. |

Issue: Scaling a Research LLM Prototype to a Robust, Automated Workflow

Symptoms: Exploding costs, inconsistent outputs, constant manual tweaking of prompts, and inability to handle new data or rules [41] [40].

| Step | Action | Diagnostic Method | Expected Outcome |

|---|---|---|---|

| 1 | Architecture Review. Move from monolithic prompts to a decomposed workflow with atomic steps. | Code audit to identify monolithic prompt structures. | A clear map of discrete tasks (e.g., "extract entities," "validate," "summarize"). |

| 2 | Automate Context. Replace manual context with code that introspects live data (e.g., database schemas) to generate dynamic context. | Measure the time spent manually updating context prompts. | Drastic reduction in developer maintenance time for context updates [40]. |

| 3 | Implement Guardrails. Use a centralized AI gateway to manage LLM access, apply security filters, control costs, and log all interactions. | Monitor cost dashboards and audit logs for policy violations. | Predictable costs, enforced compliance, and auditable LLM interactions [41]. |
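Steps 1 and 2 of this guide can be illustrated with a small sketch: context is generated by introspecting a live database schema, and the task is split into atomic steps that each do one thing. The table names, the step functions, and the `stub_llm` stand-in are hypothetical. A real pipeline would call an actual model, ideally through the gateway described in step 3.

```python
import sqlite3

def build_context(conn):
    """Generate schema context from the live database instead of writing it by hand."""
    lines = []
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type='table' ORDER BY name").fetchall()
    for (table,) in tables:
        cols = conn.execute(f"PRAGMA table_info({table})").fetchall()
        lines.append(f"{table}({', '.join(f'{c[1]} {c[2]}' for c in cols)})")
    return "\n".join(lines)

def extract_tables(state, llm):
    prompt = f"Schema:\n{state['context']}\nName the tables needed for: {state['question']}"
    state["tables"] = [t.strip() for t in llm(prompt).split(",")]
    return state

def validate_tables(state, llm):
    known = {line.split("(")[0] for line in state["context"].splitlines()}
    # Drop any table name the model invented.
    state["tables"] = [t for t in state["tables"] if t in known]
    return state

def summarize(state, llm):
    state["summary"] = llm(f"Summarize a query plan using tables: {', '.join(state['tables'])}")
    return state

def run_workflow(conn, question, llm):
    state = {"question": question, "context": build_context(conn)}
    for step in (extract_tables, validate_tables, summarize):
        state = step(state, llm)
    return state

def stub_llm(prompt):
    """Deterministic stand-in for a model call; 'imaging' is a deliberate hallucination."""
    if prompt.startswith("Schema:"):
        return "sessions, transcripts, imaging"
    return "PLAN: " + prompt

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sessions (id INTEGER, participant TEXT)")
conn.execute("CREATE TABLE transcripts (session_id INTEGER, text TEXT)")
state = run_workflow(conn, "Which participants have transcripts?", stub_llm)
print(state["context"])
print(state["tables"])
```

Because the context is rebuilt from the database on every run, adding a table changes the prompt without anyone editing it by hand.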

Experimental Protocols and Data

Protocol 1: Measuring the "Cost of Thinking" in LLMs vs. Humans

This protocol assesses the parallel cognitive costs between humans and reasoning LLMs [38].

- Stimuli: Prepare a set of problems across seven classes (e.g., numeric arithmetic, intuitive reasoning, ARC challenge).

- Human Subjects: Present problems to participants and measure response time in milliseconds.

- LLM Model: Present the same problems to a reasoning LLM and measure the number of internal reasoning tokens generated before the final answer.

- Analysis: Correlate average human response time per problem class with average tokens used by the LLM for that class.

Table: Problem Class Difficulty for Humans and LLMs [38]

| Problem Class | Avg. Human Solving Time (ms) | Avg. LLM Reasoning Tokens | Relative Cost |

|---|---|---|---|

| Numeric Arithmetic | ~1,200 | ~150 | Low |

| Logical Deduction | ~2,450 | ~300 | Medium |

| ARC Challenge | ~4,100 | ~650 | High |
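The analysis step of this protocol reduces to a correlation between two per-class averages. The sketch below computes a Pearson coefficient from the three approximate rows of the table above. The `pearson_r` helper is written out for illustration, and three data points are far too few to support inference, so this only demonstrates the calculation.

```python
def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5

# Approximate per-class averages taken from the table above.
human_ms = [1200, 2450, 4100]    # Numeric Arithmetic, Logical Deduction, ARC Challenge
llm_tokens = [150, 300, 650]

r = pearson_r(human_ms, llm_tokens)
print(round(r, 3))
```

With per-problem rather than per-class data, a rank-based coefficient may be the safer choice, since response times are typically skewed.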

Protocol 2: EEG Analysis of Cognitive Load During LLM Interaction

This protocol uses EEG to quantify the cognitive impact of LLM-assisted tasks [39].

- Setup: Fit participants with an EEG cap. Define a complex problem-solving task.

- Conditions: Participants perform the task (a) without assistance and (b) with assistance from an LLM-based tool.

- Recording: Record EEG signals throughout the task, focusing on frontal theta power and alpha power.

- Analysis: Use a framework like the Interaction-Aware Language Transformer (IALT) to analyze EEG data. Compare cognitive load markers (theta power) and engagement markers (alpha suppression) between the two conditions.

Table: Key EEG Metrics for Cognitive State Assessment [39]

| EEG Metric | Frequency Band | Cognitive Correlate | Indicator of Positive LLM Interaction |

|---|---|---|---|

| Frontal Theta | 4-7 Hz | Working Memory Load | Decrease in power |

| Frontal Alpha | 8-12 Hz | Cognitive Engagement | Suppression (decrease) |

| Frontal Alpha Asymmetry | 8-12 Hz | Emotional Valence | Shift towards left-frontal activity |

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Components for LLM-Based Cognitive and Pharmaceutical Research

| Item | Function in Research | Example Application |

|---|---|---|

| Reasoning LLMs | Models trained to break down problems step-by-step, mimicking a reasoning process. | Solving complex math problems or generating hypotheses in drug discovery [38] [42]. |

| EEG with Theta/Alpha Analysis | Provides real-time, objective neural data on a subject's cognitive load and engagement. | Quantifying the cognitive impact of an LLM-based decision-support tool [39]. |

| Retrieval-Augmented Generation (RAG) | A technique that grounds an LLM's responses in a curated database of factual information. | Building a drug discovery assistant that cites verified scientific literature, reducing hallucinations [40]. |

| AI Gateway & Guardrails | Centralized software to manage LLM access, enforce policies, control costs, and log interactions. | Ensuring compliance and cost-effectiveness when scaling an LLM prototype across a research organization [41]. |

| Automated Workflow Architecture | A code-driven system that decomposes tasks and auto-generates context, replacing manual prompt engineering. | Creating a robust, scalable pipeline for automated data analysis that adapts to new experimental schemas [40]. |

Experimental Workflow Visualizations

[Figure: Automated Workflow Architecture]

[Figure: EEG Analysis Protocol]

Frequently Asked Questions (FAQs)

Q1: What are the primary data fusion strategies for combining different data types, such as behavioral tasks and neuroimaging?

Data fusion strategies are key for integrating disparate data modalities. The main techniques are [43]:

- Early Fusion: This involves combining raw or preprocessed data from different modalities at the input stage. For example, you might concatenate text embeddings with image features before feeding them into a model.

- Late Fusion: Each modality is processed separately (e.g., using a vision model for images and a language model for text), and their outputs are merged at the end, often through weighted averaging or voting.

- Hybrid Fusion: This approach blends early and late fusion, allowing for intermediate interactions between modalities during processing. A video analysis system might use early fusion for audio-video frame alignment and late fusion to combine predictions from separate speech and gesture models [43].
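The difference between early and late fusion is easiest to see side by side. The sketch below assumes a behavioral feature vector and an imaging feature vector for one participant, plus per-modality model outputs expressed as probabilities. All names, values, and weights are hypothetical.

```python
def early_fusion(behavioral_features, imaging_features):
    """Early fusion: concatenate features so one model sees both modalities."""
    return list(behavioral_features) + list(imaging_features)

def late_fusion(probabilities, weights):
    """Late fusion: weighted average of predictions from separate models."""
    total = sum(weights)
    return sum(p * w for p, w in zip(probabilities, weights)) / total

# Hypothetical inputs for one participant.
behavioral = [0.62, 1.40]        # e.g., accuracy, mean response time (s)
imaging = [0.10, 0.30, 0.20]     # e.g., activation in three regions

fused_input = early_fusion(behavioral, imaging)

# Each modality has its own model; trust the behavioral model twice as much.
fused_prediction = late_fusion([0.8, 0.6], weights=[2, 1])
print(fused_input, round(fused_prediction, 3))
```

In practice, features are usually standardized per modality before concatenation so that no single scale dominates the fused vector.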

Q2: How can we address the challenge of temporal misalignment between behavioral responses and neuroimaging data, such as EEG or fMRI?

Temporal misalignment is a common issue due to the different sampling rates and physiological latencies of various signals. Effective methods include [43] [44]:

- Temporal Alignment Techniques: These methods synchronize sequential data, such as matching transcribed speech to specific video frames or neural events. Techniques like Dynamic Time Warping (DTW) can be used to account for variable latencies in recorded activities during data averaging, which improves the accuracy of identifying task-induced brain activity [43] [44].

- Neural Tracking: Cortical activity automatically tracks the dynamics of speech and a range of linguistic properties. This makes temporally continuous decoding from evoked brain activity possible in principle and helps align brain recordings with external stimuli [17].
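Dynamic Time Warping, mentioned above, can be written as a short dynamic program. This is a minimal sketch of the textbook algorithm on one-dimensional sequences with an absolute-difference cost. The example series are synthetic, and real EEG or behavioral traces would normally be resampled and normalized first.

```python
def dtw_distance(a, b):
    """Dynamic Time Warping distance between two 1-D sequences."""
    inf = float("inf")
    n, m = len(a), len(b)
    # cost[i][j] = best cumulative cost aligning a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            step = abs(a[i - 1] - b[j - 1])
            cost[i][j] = step + min(cost[i - 1][j],      # a advances, b holds
                                    cost[i][j - 1],      # b advances, a holds
                                    cost[i - 1][j - 1])  # both advance
    return cost[n][m]

# The second series is the first with a one-sample onset delay.
template = [0, 1, 2, 1, 0]
delayed = [0, 0, 1, 2, 1, 0]
print(dtw_distance(template, delayed))
```

A sample-by-sample comparison would penalize the delayed response even though its shape is identical, which is the latency problem DTW is meant to absorb.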

Q3: What computational models are best suited for linking neuroimaging data to behavioral outcomes in cognitive tasks?

Choosing the right model depends on whether your research goal is mechanistic explanation or prediction.