Optimizing Cognitive Load in Virtual Reality: Strategies for Enhanced Task Performance in Biomedical Research and Clinical Applications

Abstract

This article provides a comprehensive analysis of cognitive load optimization in virtual reality (VR) environments, tailored for researchers, scientists, and drug development professionals. It synthesizes the latest research on foundational theories, advanced measurement methodologies including neurophysiological tools and AI-driven analytics, practical optimization techniques for VR interfaces and tasks, and comparative validation of VR technologies. By integrating evidence from recent studies, this resource aims to guide the development of more effective VR-based cognitive training, clinical assessments, and therapeutic interventions, ultimately enhancing research outcomes and patient care in biomedical contexts.

Understanding Cognitive Load: The Foundation of Effective VR Design for Clinical and Research Applications

Core Concepts & FAQs for Researchers

What is Cognitive Load Theory in a VR Research Context?

Cognitive Load Theory (CLT) posits that an individual's working memory has a limited capacity for processing new information. In Virtual Reality (VR) research, this theory helps us understand how the mental demands of a virtual environment and a primary task interact. Managing these demands is crucial for preventing overload, which can impair learning, performance, and data validity in experimental settings [1].

The theory categorizes cognitive load into three distinct types [1]:

- Intrinsic Cognitive Load: The mental effort inherent to the complexity of the primary task itself (e.g., learning a surgical procedure or solving a complex puzzle in VR).

- Extraneous Cognitive Load: The mental effort imposed by the way information or the task is presented. This is often influenced by suboptimal instructional design or technical issues within the VR system (e.g., a confusing interface, poor controller tracking, or blurry visuals).

- Germane Cognitive Load: The mental effort devoted to processing new information, forming mental schemas, and achieving long-term learning. Effective VR design aims to maximize this type of load.

Frequently Asked Questions on Cognitive Load in VR

Q1: Our study shows high extraneous cognitive load in the VR group. What are the most common design-related causes?

High extraneous load is frequently caused by factors that distract users from the core learning or task objectives. Key contributors based on recent research include:

- Lack of Onboarding: Presenting learners with a complex VR interface without prior hands-on training significantly increases extraneous cognitive load [2].

- Technical Usability Problems: A poorly designed user interface, complicated control schemes, or hardware issues force users to dedicate mental resources to operating the system instead of focusing on the task [1].

- Inappropriate Interactivity: While often assumed to be beneficial, higher interactivity does not automatically lead to better learning and can sometimes be a source of extraneous load if not carefully integrated with the learning objectives [3].

Q2: We want to maximize germane cognitive load for learning. What VR design principles support this?

To foster germane load, which is directly linked to schema construction and learning, design should focus on reducing extraneous load and effectively managing intrinsic load. Evidence-based principles include:

- Optimize Intrinsic Motivation: VR environments that enhance intrinsic motivation have been shown to increase germane cognitive load, thereby improving learning outcomes [3].

- Ensure Congruent Feedback: Cognitive and haptic feedback need to be congruent to effectively foster learning [4].

- High Usability: Systems with high usability scores are associated with excellent learning outcomes and allow more cognitive resources to be directed toward germane processes [1].

Q3: How can we quantitatively measure the different types of cognitive load in our VR experiments?

Researchers can employ both subjective questionnaires and objective physiological measures:

- Subjective Scales: Leppink's 10-item questionnaire is a validated tool that provides separate scores for intrinsic, extraneous, and germane cognitive loads on an 11-point scale [1].

- Objective Physiological Measures:

  - Eye-Tracking: Pupil diameter, measured with eye-tracking integrated into VR headsets, is a reliable and quantifiable biomarker for cognitive load, as it exhibits a linear relationship with cognitive demand [5].

  - Electroencephalogram (EEG): Scalp measurements of electrical activity can effectively discriminate between different workload levels. Specific patterns, such as frontal theta power increases and alpha power decreases, are correlated with higher cognitive workload [6].
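As a minimal sketch of how the subjective scale could be scored, the snippet below averages item ratings into three subscale scores. The item-to-subscale mapping (items 1-3 intrinsic, 4-6 extraneous, 7-10 germane) reflects the commonly reported structure of Leppink's instrument but is an assumption here and should be checked against the version you administer. The function name `score_leppink` is hypothetical.

```python
from statistics import mean

# Assumed item-to-subscale mapping (1-based item numbers). Verify this
# against the questionnaire version actually administered.
SUBSCALES = {
    "intrinsic": (1, 2, 3),
    "extraneous": (4, 5, 6),
    "germane": (7, 8, 9, 10),
}


def score_leppink(ratings):
    """Return the mean rating per subscale for one participant.

    `ratings` holds the 10 item responses in questionnaire order,
    each on the 0-10 (11-point) scale.
    """
    if len(ratings) != 10:
        raise ValueError("expected exactly 10 item ratings")
    if any(not 0 <= r <= 10 for r in ratings):
        raise ValueError("ratings must lie on the 0-10 scale")
    return {
        name: mean(ratings[i - 1] for i in items)
        for name, items in SUBSCALES.items()
    }


if __name__ == "__main__":
    print(score_leppink([6, 7, 5, 2, 3, 1, 8, 9, 7, 8]))
```

Keeping the three subscale scores separate, rather than summing them, preserves the distinction between load types that the questionnaire is designed to capture.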

Q4: Our participants sometimes report cybersickness. How is this related to cognitive load?

Cybersickness is not just a comfort issue; it is an important experimental confounder. Studies have found significant correlations between reported cybersickness and increased cognitive load. The symptoms of cybersickness compete for limited cognitive resources, thereby increasing extraneous cognitive load and potentially skewing your performance data [4].

Technical Troubleshooting Guide: Mitigating Extraneous Cognitive Load

This guide addresses common technical problems in VR experiments that can artificially inflate extraneous cognitive load, compromising data quality.

| Problem Category | Specific Issue | Troubleshooting Steps | Direct Link to Extraneous Cognitive Load |
|---|---|---|---|
| System Performance & Software | General bugs, errors, and inconsistent performance [7]. | Perform a full reboot of the headset (not just sleep mode) [7]. | Unstable performance forces the user to constantly adapt to a changing environment, consuming working memory resources. |
| Display & Visuals | Blurry or unfocused image [7] [8]. | 1. Adjust the IPD (interpupillary distance) setting on the headset [7]. 2. Clean the lenses with a microfiber cloth [8]. 3. Ensure the headset is fitted correctly [7]. | A blurry image requires additional mental effort to decipher visual information, adding perceptual effort that is unrelated to the task itself. |
| Controller & Tracking | Controllers not tracking or "tracking lost" errors [7] [8]. | 1. Replace controller batteries [7]. 2. Ensure the play area is well-lit (but avoid direct sunlight) [7]. 3. Avoid reflective surfaces and small string lights [7]. 4. Clean the headset's external tracking cameras [7]. 5. Re-pair controllers via the companion app [8]. | Unreliable tracking breaks immersion and forces the user to consciously correct their movements, adding a layer of mental effort unrelated to the task. |
| Guardian System | Guardian boundary not staying set, or boundary warnings appearing frequently [8]. | Set up a new boundary in a well-lit area, free of obstructions and repetitive patterns [8]. | Constant boundary warnings pull the user's attention away from the experimental task and into the physical world, causing task-switching and distraction. |

Experimental Protocols & Methodologies

Protocol 1: Evaluating a VR Skill Training Intervention

This protocol is adapted from a field study on IVR for procedural skill learning and demonstrates how to structure a multi-session experiment while measuring cognitive load [4] [1].

Objective: To assess the effectiveness and cognitive impact of a VR simulation for training a procedural skill (e.g., chest tube insertion) compared to traditional methods.

Key Methodological Details:

- Participants: Recruit subjects naive to both the procedural skill and VR (e.g., medical students) [1].

- VR Intervention: Use a commercially available VR headset (e.g., Meta Quest 2). The training should include multiple repetitions over at least two separate sessions to account for learning curves [1].

- Crucial Controls: Include a tutorial session to familiarize users with the VR interface. This step is critical for reducing extraneous cognitive load in the main training sessions [2].

- Primary Outcomes:

  - Technical Skill: Assessed using a validated tool like the Objective Structured Assessment of Technical Skills (OSATS) on a physical mannequin [1].

  - Knowledge Test: Multiple-choice tests administered pre- and post-training [1].

  - Cognitive Load & Usability: Measured immediately after the intervention using Leppink's scale for cognitive load and the System Usability Scale (SUS) [1].
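For the usability outcome, a minimal sketch of standard SUS scoring is shown below: odd-numbered items contribute (response - 1), even-numbered items contribute (5 - response), and the sum is multiplied by 2.5 to give a 0-100 score. The function name `sus_score` is illustrative and not taken from the cited study.

```python
def sus_score(responses):
    """Convert ten SUS item responses (1-5 Likert) into a 0-100 score.

    Odd-numbered items are positively worded, even-numbered items are
    negatively worded, so the two sets are scored in opposite directions.
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 responses")
    if any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("each response must be an integer from 1 to 5")

    total = 0
    for item_number, response in enumerate(responses, start=1):
        if item_number % 2 == 1:
            total += response - 1   # positively worded item
        else:
            total += 5 - response   # negatively worded item
    return total * 2.5


if __name__ == "__main__":
    print(sus_score([4, 2, 5, 1, 4, 2, 4, 2, 5, 1]))
```

Scoring SUS and the cognitive load scale per participant makes it straightforward to test whether lower usability scores coincide with higher extraneous load ratings.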

Protocol 2: Passive Monitoring of Cognitive Workload via EEG in VR

This protocol outlines a method for objectively measuring cognitive workload during an interactive VR task using EEG, adapted from a study using an n-back task in VR [6].

Objective: To passively classify levels of cognitive workload in an interactive and immersive virtual environment using electroencephalogram (EEG) signals.

Key Methodological Details:

- VR Task: An adapted n-back task within an immersive, game-like environment. Participants are presented with a sequence of stimuli (e.g., colored balls) and must indicate when the current stimulus matches the one from n steps back. The value of n (e.g., 1, 2, 3) systematically modulates the intrinsic cognitive workload [6].

- Apparatus:

  - VR System: A head-mounted display (HMD) like the HTC VIVE with motion-tracked controllers.

  - EEG System: A high-density EEG system (e.g., 64-channel). To integrate with the VR headset, use a protective cap to prevent electrolyte gel from contaminating the HMD. A wireless amplifier is recommended for freedom of movement [6].

- EEG Metrics: Analyze spatio-spectral features known to correlate with cognitive workload. Key indicators include frontal theta power increases and alpha power decreases at higher workload levels [6].
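As a rough illustration of a spectral workload feature, the sketch below computes theta and alpha band power for a single channel with a plain discrete Fourier transform and reports their ratio. The band edges (4-8 Hz and 8-13 Hz), the use of a theta/alpha ratio as a summary index, and the synthetic test signal are all assumptions made for illustration; they are not taken from [6]. A real pipeline would add artifact rejection, windowing, and a more robust spectral estimator.

```python
import cmath
import math


def band_power(signal, fs, lo, hi):
    """Sum of squared DFT magnitudes over bins with lo <= f < hi (Hz)."""
    n = len(signal)
    power = 0.0
    for k in range(n // 2 + 1):
        freq = k * fs / n
        if lo <= freq < hi:
            # Naive DFT coefficient for bin k; fine for short epochs.
            coeff = sum(
                x * cmath.exp(-2j * math.pi * k * t / n)
                for t, x in enumerate(signal)
            )
            power += abs(coeff) ** 2
    return power


def theta_alpha_ratio(signal, fs):
    """Ratio of theta (4-8 Hz) to alpha (8-13 Hz) power (assumed bands)."""
    theta = band_power(signal, fs, 4.0, 8.0)
    alpha = band_power(signal, fs, 8.0, 13.0)
    return theta / alpha


def synthetic_channel(fs=128, seconds=2, theta_amp=2.0, alpha_amp=1.0):
    """Hypothetical test signal: a 6 Hz plus a 10 Hz sinusoid."""
    return [
        theta_amp * math.sin(2 * math.pi * 6 * t / fs)
        + alpha_amp * math.sin(2 * math.pi * 10 * t / fs)
        for t in range(fs * seconds)
    ]


if __name__ == "__main__":
    print(theta_alpha_ratio(synthetic_channel(), fs=128))
```

Computing the feature per block (1-back, 2-back, 3-back) and per electrode region would give the kind of feature table that a workload classifier could be trained on.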

The Scientist's Toolkit: Research Reagents & Essential Materials

This table details key hardware, software, and assessment tools required for conducting rigorous research on cognitive load in VR contexts.

| Item Name | Specification / Version | Primary Function in Research |
|---|---|---|
| Standalone VR Headset | Meta Quest 2 or 3 [1] | Provides a fully immersive, untethered virtual environment for participants. Ideal for field studies and flexible lab setups. |
| VR-Integrated Eye-Tracking | Tobii Ocumen (e.g., in Pico Neo 3 Pro Eye) [5] | Provides objective, real-time measurement of pupil diameter as a reliable biomarker for cognitive load. |
| Electroencephalogram (EEG) | 64-channel wireless system (e.g., from BrainVision, g.tec) [6] | Measures electrical brain activity to passively discriminate between different levels of cognitive workload. |
| Cognitive Load Questionnaire | Leppink's 10-item scale [1] | A validated subjective instrument that provides separate quantitative scores for intrinsic, extraneous, and germane cognitive load. |
| System Usability Scale (SUS) | 10-item standard questionnaire [1] | Assesses the perceived usability of the VR system. Poor usability is a major contributor to extraneous cognitive load. |
| VR Simulation Software | Custom or commercial (e.g., Vantari VR) [1] | Presents the experimental task or training scenario. The design of this software is the primary independent variable manipulated to affect cognitive load. |

The Critical Role of Cognitive Load in VR Task Performance and Learning Outcomes

Core Concepts: Cognitive Load in VR Research

Cognitive load refers to the total amount of mental effort being used in working memory. In Virtual Reality (VR) research, managing cognitive load is paramount, as the immersive, multi-sensory nature of VR can easily overwhelm a user's cognitive capacity, hindering both task performance and knowledge acquisition [4] [9]. The table below summarizes the key principles and their importance for VR-based research and training.

Table 1: Key Principles of Cognitive Load in VR

| Principle | Description | Implication for VR Research |
|---|---|---|
| Intrinsic Load | Mental effort required by the inherent complexity of the task or subject matter [9]. | Complex tasks (e.g., surgical procedures, machinery operation) naturally demand high cognitive resources. |
| Extraneous Load | Mental effort wasted on non-essential elements due to poor instructional or environmental design [9]. | VR-specific distractions like complicated UI, unrealistic interactions, or visual clutter can overload users. |
| Germane Load | Mental effort devoted to schema construction and deep learning [9]. | Well-designed VR experiences direct cognitive resources toward effective learning and skill automation. |
| Cognitive Overload | When total cognitive load exceeds working memory capacity. | Leads to frustration, reduced performance, and poorer learning outcomes [4] [9]. |

Troubleshooting Guide: Common Scenarios & Solutions

This section addresses specific, high-priority challenges researchers and practitioners may encounter when designing or evaluating VR tasks.

Table 2: Troubleshooting Common Cognitive Load Issues in VR

| Scenario & Symptoms | Root Cause | Solution & Preventive Measures |
|---|---|---|
| Scenario 1: Poor Learning Outcomes Despite High Immersion. Symptoms: Users report high presence and enjoyment but perform poorly on subsequent knowledge tests [4] [9]. | High Extraneous Load: The immersive fidelity of VR may be creating non-essential processing demands, diverting attention from core learning content [10]. | Apply Cognitive Load Theory (CLT) Principles: • Use signaling to highlight critical information. • Provide pre-training on key concepts before the VR experience. • Segment complex tasks into manageable parts [9]. |
| Scenario 2: User Frustration During Skill Acquisition. Symptoms: Users make errors, appear agitated, and have low task completion rates, especially when merging cognitive and physical tasks [4]. | Intrinsic-Extraneous Load Mismatch: The cognitive demand of understanding the procedure and the haptic (touch) feedback may be incongruent, increasing mental demand [4]. | Scaffold Haptic-Cognitive Integration: • Design haptic feedback to be directly and intuitively congruent with the cognitive goal. • Implement the VR training alongside or after initial hands-on training, not necessarily before it [4]. |
| Scenario 3: Inconsistent Cognitive Load Measurements. Symptoms: Physiological data (e.g., EEG, pupil dilation) and subjective self-reports do not align, making analysis difficult. | Measurement Discrepancy: Different metrics capture different aspects of cognitive load (e.g., physiological arousal vs. perceived effort), and may be confounded by factors like pupillary light reflex [5]. | Use a Multi-Modal Assessment Approach: • Triangulate data: Combine physiological sensors (EEG, eye-tracking), performance metrics (accuracy, time), and validated subjective scales (NASA-TLX). • For eye-tracking, use algorithms that separate neurological pupillary response from light-induced changes [5]. |

Frequently Asked Questions (FAQs)

Q1: Does higher immersion in VR always lead to better learning? A1: No. While high immersion can increase motivation and presence, it does not automatically improve learning outcomes. For novice learners, the high sensory fidelity can impose significant extraneous cognitive load, potentially leading to poorer immediate knowledge retention compared to traditional methods like videos or live demonstrations [10] [9]. The benefit of immersion is often dependent on the type of knowledge being taught and the quality of instructional design [10].

Q2: What is the most effective way to measure cognitive load in a VR study? A2: The most robust approach is a multi-modal method that combines several measures:

- Physiological Measures: EEG to track brain activity patterns (e.g., theta and alpha band power) or eye-tracking to monitor task-evoked pupillary response [6] [5].

- Performance Measures: Task accuracy, completion time, and error rates.

- Subjective Measures: Standardized questionnaires like the NASA-Task Load Index (TLX) administered after the task.

Using multiple methods helps to build a more complete and reliable picture of the user's cognitive state [11].
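For the subjective component, one simple option is the unweighted ("raw") NASA-TLX score, which is the mean of the six subscale ratings. The sketch below assumes ratings on a 0-100 scale and uses hypothetical dictionary keys; the weighted variant based on pairwise comparisons is not shown.

```python
from statistics import mean

# The six NASA-TLX subscales; key names are illustrative.
TLX_DIMENSIONS = (
    "mental_demand",
    "physical_demand",
    "temporal_demand",
    "performance",
    "effort",
    "frustration",
)


def raw_tlx(ratings):
    """Return the unweighted mean of the six subscale ratings (0-100)."""
    missing = [d for d in TLX_DIMENSIONS if d not in ratings]
    if missing:
        raise ValueError(f"missing subscale ratings: {missing}")
    if any(not 0 <= ratings[d] <= 100 for d in TLX_DIMENSIONS):
        raise ValueError("ratings must lie between 0 and 100")
    return mean(ratings[d] for d in TLX_DIMENSIONS)


if __name__ == "__main__":
    example = {
        "mental_demand": 70,
        "physical_demand": 20,
        "temporal_demand": 50,
        "performance": 40,
        "effort": 60,
        "frustration": 30,
    }
    print(raw_tlx(example))
```

Reporting the six subscales alongside the overall score is often more informative in VR studies, since physical demand and frustration can reflect interface problems rather than task difficulty.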

Q3: How can I design a VR user interface (UI) to minimize unnecessary cognitive load? A3: Follow principles of inclusive and accessible design:

- Ensure High Contrast: Maintain a minimum contrast ratio of 4.5:1 for text and critical UI elements against the background to reduce visual processing effort [12] [13].

- Simplify Layouts: Avoid clutter and use clear, intuitive visual communication. Do not rely on color alone to convey information [14].

- Provide Customization: Allow users to adjust settings like text size and UI scale to suit their needs [14].

- Use Adaptive Layouts: Anchor UI elements to the user's field of view rather than a fixed world space to prevent users from "losing" the interface [14].
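The 4.5:1 contrast guideline can be checked programmatically. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio for sRGB colors. It assumes flat foreground and background colors given as 8-bit RGB tuples, which is a simplification for VR scenes where the background behind a UI panel varies; the function names are illustrative.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) color with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1.0 up to 21.0."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


def meets_minimum(foreground, background, threshold=4.5):
    """True if the color pair reaches the given contrast threshold."""
    return contrast_ratio(foreground, background) >= threshold


if __name__ == "__main__":
    white = (255, 255, 255)
    print(round(contrast_ratio((0, 0, 0), white), 2))
    print(meets_minimum((118, 118, 118), white))
    print(meets_minimum((119, 119, 119), white))
```

A check like this can be run over the UI color palette before a study to rule out low-contrast text as a source of extraneous load.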

Experimental Protocols & Methodologies

This section provides a detailed methodology for a key experiment cited in the field, allowing for replication and adaptation.

Protocol: Assessing Cognitive Load in an Interactive VR N-Back Task

This protocol is adapted from a study that successfully used EEG to discriminate between levels of cognitive workload in an interactive VR environment [6].

1. Objective: To reliably measure and classify cognitive workload levels during an interactive VR task using physiological and behavioral data.

2. Participants:

- Recruit 15+ participants (ages 18-35).

- Screen participants to confirm normal or corrected-to-normal vision and to exclude color blindness and high susceptibility to motion sickness [6].

3. Equipment & Setup:

- VR System: HTC VIVE or equivalent headset with 6-degree-of-freedom tracking and motion-tracked hand controllers.

- Physiological Recording: Wireless EEG system with a sufficient number of electrodes (e.g., 32-channel), positioned according to the international 10-20 system. An amplifier is placed on the participant's back.

- Experimental Area: A clear space approximately 1m in front of the recording computer [6].

4. Experimental Task:

- The task is an adapted n-back task within a game-like VR environment.

- Stimuli: A series of colored balls (red, blue, purple, green, yellow) appear on a virtual podium.

- Procedure: For each trial, the participant uses the hand controller to pick up a ball. They must then place it in a target receptacle if the ball's color matches the color presented 'n' trials back. Otherwise, they place it in a different receptacle.

- Workload Manipulation: The factor 'n' is varied (e.g., 1-back, 2-back, 3-back) to systematically increase cognitive workload. Each participant completes multiple blocks for each workload level [6].

5. Data Collection:

- EEG Data: Continuous recording throughout the task. Key metrics include power spectral bands (theta, alpha, beta) over frontal, central, and parietal locations [6].

- Behavioral Data: Task accuracy and response time for each trial.

- Subjective Data: Post-task self-reporting on perceived mental effort.
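The trial logic of the n-back task described above can be sketched as follows. The code generates a random color sequence, marks which trials are targets for a given n, and computes accuracy from the participant's match/no-match decisions. Uniform random sampling of colors, the exclusion of the first n trials from scoring, and all function names are assumptions for illustration rather than details of the cited study.

```python
import random

COLORS = ("red", "blue", "purple", "green", "yellow")


def make_sequence(length, seed=None):
    """Draw a random color sequence (uniform sampling is an assumption)."""
    rng = random.Random(seed)
    return [rng.choice(COLORS) for _ in range(length)]


def target_flags(sequence, n):
    """True where a trial's color matches the color n trials back."""
    return [
        i >= n and sequence[i] == sequence[i - n]
        for i in range(len(sequence))
    ]


def accuracy(sequence, responses, n):
    """Proportion of correct match/no-match decisions.

    `responses[i]` is True if the participant placed ball i in the
    'match' receptacle. The first n trials have no reference stimulus
    and are left out of the score.
    """
    if len(responses) != len(sequence):
        raise ValueError("one response per trial is required")
    scored = list(zip(target_flags(sequence, n), responses))[n:]
    if not scored:
        raise ValueError("sequence is too short for this n")
    return sum(target == resp for target, resp in scored) / len(scored)


if __name__ == "__main__":
    seq = ["red", "blue", "red", "green", "red", "green"]
    resp = [False, False, True, True, True, False]
    print(target_flags(seq, 2))
    print(accuracy(seq, resp, 2))
```

In practice the target rate is usually controlled rather than left to chance, so a production version would construct sequences with a fixed proportion of targets per block.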

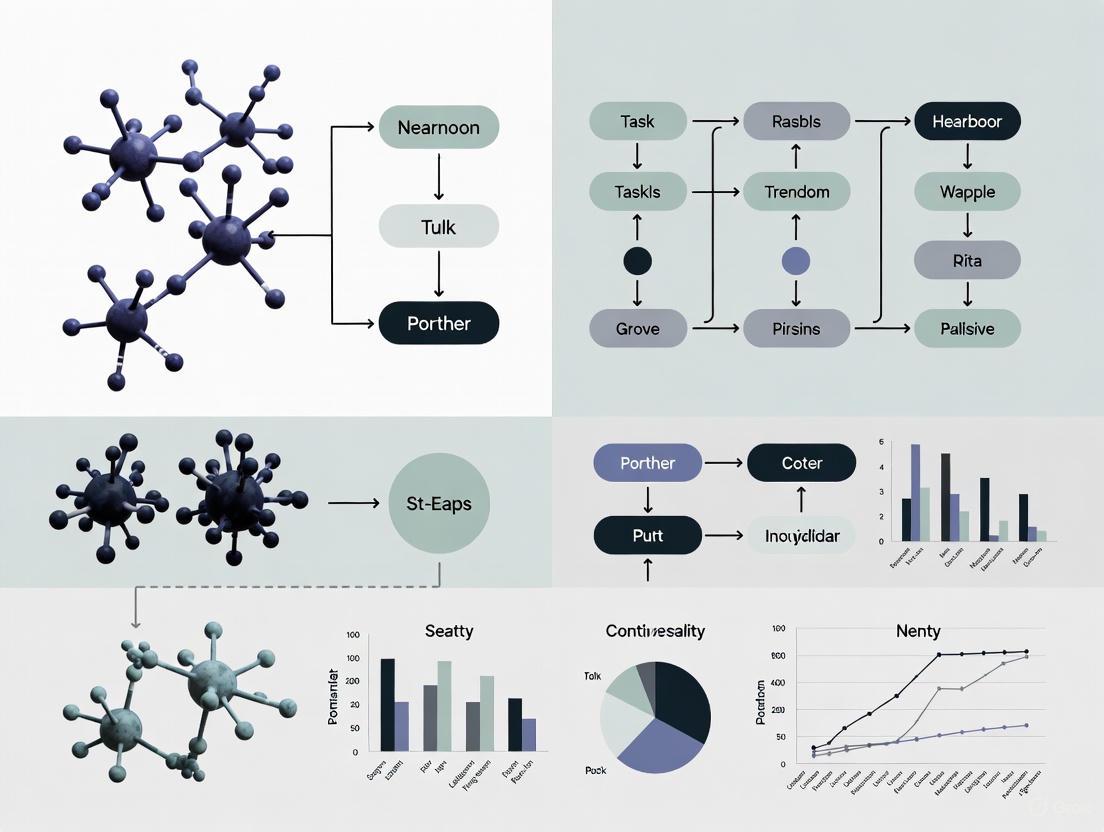

The workflow and logical relationships of this experimental protocol are summarized in the diagram below.

Protocol: A Multiple-Day Field Study in an Authentic Classroom

This protocol is based on a field study that investigated cognitive load over a multi-day training program, providing a template for longitudinal research in realistic settings [4].

1. Objective: To examine the interaction between cognitive load, self-efficacy, and learning outcomes when using IVR as a complement to hands-on skill training.

2. Participants & Design:

- Recruit a sizable cohort (e.g., 54 undergraduate students).

- Employ a between-subjects design with at least three groups:

  - Group 1 (Control): Receives only practical, hands-on training.

  - Group 2 (IVR-Before): Uses Immersive VR training before the hands-on session.

  - Group 3 (IVR-After): Uses Immersive VR training after the hands-on session [4].

3. Materials & Measures:

- VR Learning Module: A professionally developed IVR application relevant to the training domain (e.g., molecular biology procedures).

- Assessment Tools:

  - Cognitive Load: A validated self-report questionnaire administered after training sessions.

  - Learning Outcomes: A test of procedural knowledge specific to the trained skill.

  - Self-Efficacy: A scale measuring participants' confidence in performing the trained skill.

  - Cybersickness: A simulator sickness questionnaire [4].

4. Procedure:

- The study is conducted over multiple days in a real classroom setting.

- On designated days, groups complete their assigned training regimen (IVR, hands-on, or both in sequence).

- After the training interventions on each day, participants complete the assessment measures (cognitive load, etc.) [4].

5. Data Analysis:

- Use statistical analyses (e.g., ANOVA) to check for significant differences in learning outcomes, cognitive load, and self-efficacy between the groups.

- Conduct correlation analyses to examine the relationships between cognitive load, self-efficacy, and cybersickness [4].
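The group comparison in the analysis step can be illustrated with a hand-rolled one-way ANOVA F statistic, shown below for three groups. This is a sketch for understanding the computation only: the scores are made-up placeholder values, not data from the cited study, and a real analysis would use a statistics package to obtain the p-value and check assumptions such as homogeneity of variance.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a one-way ANOVA.

    `groups` is a list of lists, one list of scores per condition.
    """
    k = len(groups)
    n_total = sum(len(g) for g in groups)
    if k < 2 or n_total <= k:
        raise ValueError("need at least 2 groups and more scores than groups")

    grand_mean = mean(x for g in groups for x in g)
    group_means = [mean(g) for g in groups]

    # Variation of group means around the grand mean.
    ss_between = sum(
        len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, group_means)
    )
    # Variation of individual scores around their own group mean.
    ss_within = sum(
        (x - m) ** 2 for g, m in zip(groups, group_means) for x in g
    )

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


if __name__ == "__main__":
    # Placeholder learning-outcome scores for the three groups.
    control = [1, 2, 3]
    ivr_before = [2, 3, 4]
    ivr_after = [5, 6, 7]
    print(one_way_anova_f([control, ivr_before, ivr_after]))
```

The F statistic is then compared against the F distribution with the returned degrees of freedom to decide whether the group means differ.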

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for VR Cognitive Load Research

| Tool / Solution | Function in Research | Example Use Case |
|---|---|---|
| Head-Mounted Display (HMD) with Eye-Tracking | Presents the immersive virtual environment and passively collects high-fidelity gaze data and pupil diameter, a key biomarker for cognitive load [5]. | Tracking pupillary response changes as task difficulty (n-back level) increases to infer cognitive workload in real-time [6] [5]. |
| Wireless Electroencephalogram (EEG) | Measures electrical activity from the scalp, providing direct insight into brain states associated with different levels of cognitive workload [6]. | Discriminating between low, medium, and high workload levels by analyzing spatio-spectral features (e.g., frontal theta and parietal alpha power) [6]. |
| Machine Learning Algorithms | Analyzes complex, multi-modal physiological and behavioral data streams to model and predict cognitive load as a continuous value [11]. | Developing a real-time cognitive load inference engine that adapts the VR content based on the user's current cognitive state to prevent overload [11]. |
| Validated Subjective Scales | Provides a standardized self-report measure of the user's perceived mental effort, complementing objective data. | Using the NASA-TLX after a VR training session to gauge subjective levels of mental demand, frustration, and effort [4]. |
| Cognitive Load Theory Framework | Provides a theoretical foundation for instructional design, helping to structure VR experiences to manage intrinsic, extraneous, and germane load [9]. | Designing a VR safety training module by segmenting complex procedures and using signaling to highlight hazards, thereby reducing extraneous load [9]. |

Cognitive Load Theory (CLT) and Its Application to Immersive Virtual Environments

Troubleshooting Guide: Common CLT-VR Research Challenges

This section addresses specific technical and methodological issues researchers may encounter when designing and conducting experiments on Cognitive Load Theory in Virtual Reality.

FAQ 1: Why do novice participants sometimes show lower knowledge retention in highly immersive VR conditions compared to traditional learning methods?

Answer: This is a documented phenomenon where high immersion can impose extraneous cognitive load, hindering initial knowledge acquisition for novices [9]. The sensory richness and interactivity of VR, while engaging, may overwhelm working memory when learners are first encountering complex material [15] [16].

- Solution A: Implement Scaffolded Immersion. Begin with simpler, less immersive presentations of the content (e.g., a 2D video or PowerPoint) before introducing the full VR simulation. This allows novices to build foundational schemas without being overloaded [9].

- Solution B: Integrate Cognitive Load-Aware Design. Use instructional design principles like signaling (highlighting key information) and guided narration to direct attention to essential elements and reduce unnecessary cognitive processing [9].

FAQ 2: How can we effectively measure cognitive load in real-time during a VR experiment without interrupting the task?

Answer: Direct subjective questionnaires interrupt flow. Instead, researchers can use a multi-modal approach for more objective, real-time assessment [15].

- Solution A: Neurophysiological Tools. Use tools like Electroencephalography (EEG) or functional Near-Infrared Spectroscopy (fNIRS) to obtain continuous, objective data on cognitive engagement and workload [15].

- Solution B: Integrated Dual-Task Probes. Implement a secondary, simple task (e.g., a periodic auditory cue requiring a button press) within the VR environment. Performance on this secondary task (e.g., reaction time, accuracy) serves as a behavioral probe of cognitive load, with slower responses indicating higher load [16].
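A dual-task probe is typically summarized as the slowing of secondary-task responses relative to a single-task baseline. The sketch below computes that cost as a percentage. It assumes a baseline block in which the auditory probe is performed alone, which the text above does not specify, and the function name and reaction times are illustrative.

```python
from statistics import mean


def dual_task_cost(baseline_rts_ms, dual_task_rts_ms):
    """Percentage slowing of probe reaction time under dual-task load.

    Positive values mean responses to the secondary probe were slower
    while performing the primary VR task, which is read as higher load.
    """
    if not baseline_rts_ms or not dual_task_rts_ms:
        raise ValueError("both conditions need at least one reaction time")
    baseline = mean(baseline_rts_ms)
    dual = mean(dual_task_rts_ms)
    return 100.0 * (dual - baseline) / baseline


if __name__ == "__main__":
    # Hypothetical probe reaction times in milliseconds.
    probe_alone = [300, 320, 280]
    probe_during_vr = [360, 390, 330]
    print(dual_task_cost(probe_alone, probe_during_vr))
```

Expressing the cost relative to each participant's own baseline reduces the influence of individual differences in overall response speed.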

FAQ 3: Our VR training in the lab shows good results, but skills are not transferring well to real-world contexts. What could be the cause?

Answer: Poor context transfer is often linked to high cognitive load during VR training, which can inhibit the formation of robust, long-term motor memories [16]. If the VR environment is overly complex or different from the real world, learners may struggle to apply their skills.

- Solution: Optimize Fidelity and Reduce Extraneous Load. Ensure the VR task's cognitive and motor demands closely match the real-world context. Use worked examples and simplify non-essential interactive elements during the initial learning phase to free up cognitive resources for schema construction [17] [16].

FAQ 4: What are the key considerations for managing cognitive load for neurodiverse participants in VR studies?

Answer: Neurodivergent individuals (e.g., with ADHD, ASD, dyslexia) may experience differences in working memory and information processing, making them more susceptible to cognitive overload in complex environments like VR [18].

- Solution: Adopt an Inclusive Framework. Apply the FEDIS framework to your VR design [18]:

- Format: Offer content in multiple, simultaneous formats (e.g., text with audio narration) to leverage different processing pathways.

- Environment: Minimize non-essential visual and auditory clutter within the VR scene.

- Delivery: Allow for self-pacing and provide clear, concise instructions.

- Instruction: Break down complex tasks into smaller, sequenced steps.

Experimental Protocols for CLT in VR

Below are detailed methodologies from key studies investigating cognitive load in virtual environments.

Protocol 1: Comparing Instructional Modalities for Technical Training

This protocol is designed to directly compare the cognitive load and effectiveness of VR against traditional teaching methods [9].

| Aspect | Description |
|---|---|
| Objective | To examine the relative effectiveness and cognitive load imposed by VR-based instruction versus conventional methods (PowerPoint, real-person demonstration) for novice learners. |
| Participants | 106 undergraduate students with no prior subject-matter experience. Participants are randomly assigned to one of three conditions. |
| Independent Variable | Instructional modality (PowerPoint, Real-Person Demonstration, Immersive VR Simulation). |
| Dependent Variables | Immediate knowledge retention (20-item multiple-choice test), cognitive ability (Raven's Progressive Matrices), learning styles (Honey & Mumford questionnaire). |
| Procedure | 1. Pre-test assessment of cognitive ability and learning styles. 2. Random assignment to one instructional condition for the same technical content (e.g., operating a five-axis CNC machine). 3. Immediate post-test knowledge assessment. |
| Key Findings | A significant main effect of instructional method was found. The real-person demonstration group achieved the highest mean score, followed by the PowerPoint and VR groups. This suggests that for novices, immersive VR may impose additional cognitive demands that hinder immediate knowledge acquisition [9]. |

Protocol 2: Measuring Cognitive Load in Visuomotor Adaptation

This protocol uses a dual-task paradigm to quantify cognitive load during a motor learning task in VR [16].

| Aspect | Description |
|---|---|
| Objective | To examine differences in cognitive load between a Head-Mounted Display (HMD-VR) and a Conventional Screen (CS) during visuomotor adaptation and its relationship to long-term retention. |
| Participants | 36 healthy participants, randomized into CS, HMD-VR, or cross-over groups. |
| Independent Variable | Training environment (CS vs. HMD-VR). |
| Dependent Variables | Cognitive load (measured via a secondary auditory reaction-time task), explicit and implicit adaptation components, long-term retention (after 24 hours). |
| Procedure | 1. Participants perform a visuomotor adaptation task (e.g., reaching while a cursor is rotated) while simultaneously responding to random auditory tones. 2. The attentional demands (cognitive load) are measured by the reaction time and accuracy on the secondary task. 3. Participants return after 24 hours for a retention test in the same or a different environment. |
| Key Findings | Cognitive load was significantly greater in HMD-VR than in CS. This increased load was correlated with decreased use of explicit learning mechanisms and poorer long-term retention and context transfer [16]. |

Research Workflow and Visualizations

The following diagram illustrates a generalized experimental workflow for a CLT-VR study, integrating elements from the cited protocols. It outlines the key methodological components and their logical sequence in a robust CLT-VR study.

[Figure: Experimental Workflow for CLT-VR Research]

The Scientist's Toolkit: Research Reagent Solutions

This table details key materials and tools essential for conducting research at the intersection of Cognitive Load Theory and Virtual Reality.

| Item Name | Category | Function in CLT-VR Research |
|---|---|---|
| Head-Mounted Display (HMD) | Hardware | Presents the immersive virtual environment (e.g., Oculus Quest). Key for manipulating the level of immersion [9] [16]. |
| Neurophysiological Recording Tools (EEG, fNIRS) | Measurement | Provides objective, real-time data on cognitive states. EEG measures electrical brain activity, while fNIRS measures blood oxygenation, both serving as proxies for cognitive load [15]. |
| Dual-Task Probe | Software/Methodology | A secondary task (e.g., auditory tone reaction) used to measure attentional demands. Slower reaction times indicate higher cognitive load from the primary VR task [16]. |
| Unity 3D / Unreal Engine | Software | Game engine development platforms used to design and control the interactive VR environment and experimental logic [16]. |
| Raven's Progressive Matrices | Assessment | A non-verbal test used to assess participants' fluid intelligence (cognitive ability), which can be a covariate or moderating variable in learning outcomes [9]. |
| Cognitive Load Scale | Assessment | A subjective self-report questionnaire (e.g., NASA-TLX) administered post-task to gauge a participant's perceived mental effort [9] [19]. |
| Machine Learning Models (CNNs, RNNs) | Data Analysis | Used to classify and predict cognitive load levels from multimodal data streams (e.g., EEG, eye-tracking), enhancing the accuracy of load assessment [15]. |

For researchers in neuroscience and drug development, virtual reality (VR) offers unprecedented control for studying brain function and behavior. However, the validity of these experiments depends on a stable technical setup and a deep understanding of the neurobiological principles at play. This guide provides essential troubleshooting for common VR experimental issues and summarizes key contemporary research on how the brain processes virtual environments, with a special focus on optimizing cognitive load.

Frequently Asked Questions (FAQs) & Troubleshooting

This section addresses common technical problems that can disrupt data collection and introduce confounding variables in VR experiments.

Q: My headset tracking is inconsistent or the Guardian system keeps failing. What should I do?

- A: Tracking issues are often related to the experimental environment.

- Lighting: Ensure you are in a well-lit, indoor area. Avoid direct sunlight, which can damage the headset and interfere with tracking [7].

- Reflective Surfaces: Cover or avoid large mirrors and small string lights (e.g., Christmas lights), as they can confuse the tracking cameras [7].

- Camera Lenses: Gently clean the headset's four external tracking cameras with a microfiber cloth to remove smears or dust [7].

- Reboot: Perform a full reboot of the headset through the power menu, not just sleep mode [7].

Q: The visual display is blurry, or using the headset causes discomfort and nausea.

- A: This can often be resolved by proper hardware adjustment.

- IPD Adjustment: Adjust the Inter-Pupillary Distance (IPD) slider on the headset (e.g., Oculus Quest has physical presets) to match the distance between your eyes. Trial and error can find the optimal setting for visual clarity [7].

- Headset Fit: Ensure the headset is fitted correctly. The top strap should support the weight, and the side straps should secure it without being overly tight [7].

- VR Sickness: Discomfort and nausea are symptoms of VR sickness. Users may need to gradually acclimate to VR through shorter sessions [7].

Q: One of my controllers is not being detected by the headset.

- A:

- Battery: The most common solution is to replace the AA battery in the controller. Tracking quality can also decline as the battery depletes [7].

- Re-Pairing: If a new battery doesn't work, re-pair the controller via the companion Oculus app on a phone [20].

- Reboot: As with many issues, a full headset reboot can often resolve controller connectivity problems [7].

Q: My headset won't update, or an app keeps freezing.

- A:

Key Experimental Protocols & Data

The following studies provide foundational methodologies for investigating neurobiological processes and cognitive load in VR.

Investigating Neuro-Immune Anticipation in VR

This protocol details a paradigm for studying how the brain anticipates virtual threats and triggers a physiological immune response [21].

Experimental Workflow: The diagram below outlines the core procedures and measurements for investigating neural and immune responses to virtual threats.

Key Research Reagent Solutions:

| Item | Function in Experiment |

|---|---|

| Infectious Avatars | Virtual human faces displaying clear signs of infection; serve as the pathogenic threat stimulus. |

| EEG/fMRI | Measures brain activity in multisensory-motor and salience network areas in response to avatars. |

| Flow Cytometry | Analyzes frequency and activation of innate lymphoid cells (ILCs) from blood samples. |

| Peripersonal Space (PPS) Paradigm | A visuo-tactile task that measures the spatial extent of the body's defensive buffer zone. |

Summary of Key Quantitative Findings:

| Measurement | Finding | Significance |

|---|---|---|

| PPS Extension | PPS expanded to farther distances when infectious avatars approached [21]. | Indicates the brain's defensive mechanism anticipates threats before they are close. |

| Early Neural Detection (EEG) | A significant neural response difference to infectious vs. neutral avatars was detected at 129-150 ms [21]. | Shows the brain differentiates pathogenic threats from neutral stimuli very early in processing. |

| Innate Lymphoid Cell (ILC) Activation | Virtual and real infections induced similar, stronger modulation of ILC frequency/activation vs. neutral avatars [21]. | Demonstrates a virtual threat can trigger a measurable, adaptive immune system preparation. |

Cognitive Load-Driven Personalization of VR Memory Palaces

This protocol uses physiological data to dynamically adjust a VR environment, personalizing it to optimize cognitive load and enhance memory performance [22].

Experimental Workflow: The diagram below illustrates the closed-loop system for creating a personalized VR memory palace based on real-time cognitive load assessment.

Key Research Reagent Solutions:

| Item | Function in Experiment |

|---|---|

| EEG Headset (used with an Oculus Quest 2 HMD) | Monitors participant's Beta wave activity as a correlate of focus and cognitive load during the VR task. |

| Polynomial Regression Model | Algorithm used to model individual cognitive load profiles from the physiological EEG data. |

| Grasshopper Software | A visual programming environment used to dynamically adjust spatial variables in the VR memory palace based on the cognitive load model. |

The Scientist's Toolkit: Essential Conceptual Frameworks

Beyond specific protocols, understanding these key concepts is critical for designing robust VR experiments.

The Sense of Agency (SoA) and Ownership (SoO) in VR

VR is a powerful tool for manipulating the Sense of Agency (SoA)—the feeling of controlling one's actions—and the Sense of Body Ownership (SoO)—the feeling that a virtual body is one's own [23]. These are foundational to embodiment and can be selectively manipulated.

- Manipulating SoA: Alter the relationship between real and virtual actions, such as introducing temporal or spatial misalignment between a user's movement and the virtual body's response [23].

- Manipulating SoO: Alter the characteristics of the virtual body, such as its physical appearance (realistic vs. object-like) or the synchrony of visuotactile feedback (e.g., a virtual ball hitting the hand) [23].

Key Insight: These senses can be dissociated. For example, changes in a virtual body's appearance (affecting SoO) may not impact the feeling of control (SoA). This allows for precise experimental control over components of self-consciousness [23].

Cognitive Load in VR Learning and Design

Managing cognitive load is essential for effective VR applications, particularly in training and educational contexts.

- Realism vs. Cognitive Load: Contrary to intuition, highly realistic and authentic VR environments do not necessarily lead to better learning. One study found that a minimalistic VR environment led to higher student motivation, suggesting that simpler designs can reduce distractions and extraneous cognitive load [24].

- Field Study Results: A multi-day field study of molecular biology skills training found that using IVR (Immersive Virtual Reality) led to higher levels of cognitive load and, in some cases, lower learning outcomes and self-efficacy compared to practical training alone. This highlights the importance of carefully integrating VR into instructional frameworks rather than assuming it is inherently superior [25].

Best Practices for VR Clinical Trials (VR-CORE Framework)

For therapeutic VR development, the Virtual Reality Clinical Outcomes Research Experts (VR-CORE) committee proposes a methodological framework [26]:

- VR1 Studies: Focus on content development using human-centered design principles, involving patients and providers iteratively to define needs and create desirable VR treatments.

- VR2 Studies: Initial feasibility and efficacy testing. These trials focus on acceptability, tolerability, and initial clinical signals in a small sample.

- VR3 Studies: Randomized Controlled Trials (RCTs) that compare the VR treatment to a control condition to evaluate efficacy with clinically important outcomes [26].

The Impact of Substance Use Disorders and Neurodegenerative Conditions on Cognitive Load Capacity

The study of cognitive load capacity—the finite amount of mental resources available in working memory for learning and task performance—is critical for developing effective virtual reality (VR) interventions. This capacity is significantly compromised by both Substance Use Disorders (SUDs) and neurodegenerative conditions, which impair key cognitive domains such as executive function, working memory, and attention [27] [28]. Cognitive Load Theory posits that learning is optimized when instructional design minimizes extraneous load, manages intrinsic load, and promotes germane load [29]. Within VR research, this principle is paramount; immersive environments, while engaging, can impose substantial cognitive demands that may overwhelm already compromised systems [4]. Understanding the specific impact of these clinical conditions on cognitive load is therefore not merely theoretical but a practical necessity for designing VR task scenarios that are both effective and ecologically valid for these populations. The goal is to create adaptive technologies that can personalize cognitive demand in real-time, thereby supporting rehabilitation and cognitive training where it is most needed.

Quantitative Data Synthesis: Cognitive Outcomes in Clinical Populations and VR Interventions

The tables below synthesize key quantitative findings on cognitive impairment in clinical populations and the outcomes of VR-based interventions.

Table 1: Cognitive Dysfunction and VR Intervention Effects in Substance Use Disorders (SUDs)

| Aspect | Key Quantitative Findings | Relevant Source / Context |

|---|---|---|

| Prevalence & Impact of Cognitive Deficits in SUDs | Cognitive deficits are shown to increase the likelihood of relapse [28]. Recovery of cognitive function is predictive of increased treatment adherence and decreased relapse rates [28]. | VRainSUD Usability Study |

| VR Intervention Outcomes (General) | In a systematic review of 20 RCTs, 17 studies (85%) demonstrated positive effects on at least one outcome variable. Proximal outcomes (e.g., craving) frequently improved. Regarding clinically meaningful outcomes, 7 out of 10 studies (70%) reported substance use reduction and abstinence [30]. | Systematic Review of VR for SA |

| VR Modality Effectiveness | VR interventions utilizing cue exposure therapy (n=10) and cognitive-behavioural therapy (n=5) were most frequent. VR shows significant promise for alcohol and nicotine disorders [30]. | Systematic Review of VR for SA |

| Usability & Feasibility | The VRainSUD platform received a total Post-Study System Usability Questionnaire (PSSUQ) score of 2.72 ± 1.92, indicating high satisfaction (on the PSSUQ, lower scores indicate greater satisfaction). The "System Usefulness" subscale scored 1.76 ± 1.37 [28]. | VRainSUD Usability Study |

Table 2: Cognitive Impairment and VR Intervention Effects in Neurodegenerative Conditions

| Aspect | Key Quantitative Findings | Relevant Source / Context |

|---|---|---|

| Prevalence of Alzheimer's Disease & MCI | An estimated 7.2 million Americans age 65 and older live with Alzheimer's dementia. This number is projected to grow to 13.8 million by 2060 [31]. | 2025 Alzheimer's Facts & Figures |

| Impact of MCI on Function | MCI involves a decline in cognitive abilities more pronounced than expected for age. Performance in complex Instrumental Activities of Daily Living (iADLs) declines notably [32]. | VR Study on MCI |

| VR Intervention Outcomes in MCI | A dual cognitive-motor VR intervention in MCI patients showed a significant intragroup effect on cognitive function and geriatric depression in both experimental and control groups (p < 0.001), with large effect sizes. The completion rate in the VR group was 82.35%, compared to 70.59% in the traditional training group [32]. | VR Study on MCI |

Experimental Protocols for Cognitive Load and VR Research

Protocol: Usability Testing of a VR Cognitive Training Platform (VRainSUD)

This protocol is designed to assess the feasibility and acceptability of a VR cognitive training tool for patients with SUDs, for whom cognitive deficits are a barrier to treatment.

- Objective: To evaluate the usability, ease of use, and participant satisfaction with the VRainSUD cognitive training platform in an inpatient SUD treatment population [28].

- Population: Adults (age ≥18) with a diagnosed SUD receiving inpatient treatment. Exclusion criteria include concurrent gaming addiction or neurological conditions [28].

- Materials:

- Hardware: Oculus Quest 2 headset for a fully immersive, untethered experience [28].

- Software: The VRainSUD platform, built in Unreal Engine, comprising multiple cognitive tasks targeting memory, executive functioning, and processing speed [28].

- Assessment Tools: A researcher-designed survey and the standardized Post-Study System Usability Questionnaire (PSSUQ) [28].

- Procedure:

- Recruitment & Consent: Therapists refer eligible patients. Interested participants provide informed consent [28].

- Setup & Familiarization: The session is conducted in a room with sufficient space. Participants receive a brief explanation of the platform and a familiarization period with the VR headset and controllers [28].

- Usability Task Execution: Participants complete a script of 9 tasks designed to test core platform functions. Two researchers are present: one to assist and guide, and another to observe and record key performance indicators (e.g., time to complete tasks, ability to follow instructions, physical controller use) [28].

- Post-Study Assessment: Immediately after the VR session, participants complete the online survey and the PSSUQ. The PSSUQ uses a 7-point Likert scale (1="Strongly Agree", 7="Strongly Disagree") to measure system usefulness, information quality, and interface quality [28].

- Data Analysis: Descriptive statistics characterize the sample and questionnaire responses. Mean PSSUQ scores are calculated for the total scale and subscales, with lower scores indicating greater satisfaction. Completion times and observational data are analyzed to identify usability bottlenecks [28].
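The scoring step described above can be sketched in a few lines. This is a minimal illustration, not the VRainSUD authors' analysis code: the function name `score_pssuq` and the example data are hypothetical, and the item-to-subscale mapping is an assumption based on the commonly cited 19-item PSSUQ layout, so it should be checked against the questionnaire version actually administered.

```python
from statistics import mean

# ASSUMPTION: item-to-subscale mapping for the 19-item PSSUQ (1-indexed items).
SUBSCALES = {
    "system_usefulness": range(1, 9),     # items 1-8
    "information_quality": range(9, 16),  # items 9-15
    "interface_quality": range(16, 19),   # items 16-18
    "total": range(1, 20),                # items 1-19
}

def score_pssuq(responses):
    """Return mean scores per subscale for one participant.

    `responses` maps item number -> rating on the 7-point scale
    (1 = Strongly Agree ... 7 = Strongly Disagree). Items that were
    not answered are left out of the dict and ignored in the mean.
    Lower means indicate greater satisfaction.
    """
    scores = {}
    for name, items in SUBSCALES.items():
        answered = [responses[i] for i in items if i in responses]
        if any(not 1 <= r <= 7 for r in answered):
            raise ValueError("ratings must be between 1 and 7")
        scores[name] = mean(answered) if answered else None
    return scores

# Hypothetical participant: mostly "2", one "4", item 12 skipped.
example = {i: 2 for i in range(1, 20) if i != 12}
example[3] = 4
print(score_pssuq(example))
```

Averaging only the answered items keeps a skipped question from distorting the subscale mean; group-level means and standard deviations can then be computed across participants.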

Protocol: A Dual Motor and VR-Based Cognitive Intervention for MCI

This protocol evaluates a combined intervention aimed at improving cognitive function and mental health in older adults with Mild Cognitive Impairment.

- Objective: To assess the effectiveness of a dual intervention (motor training plus immersive VR-based cognitive training simulating an iADL) on cognitive functions, depression, and the ability to perform iADLs in patients with MCI [32].

- Population: Older adults (men and women) with a diagnosis of MCI. Participants are randomized to an experimental group (VR cognitive training) or an active control group (traditional cognitive training) [32].

- Materials:

- Motor Training Equipment: Materials for aerobic, balance, and resistance activities.

- VR System: An immersive VR head-mounted display (HMD) system running software that simulates an Instrumental Activity of Daily Living.

- Assessment Tools:

- Montreal Cognitive Assessment (MoCA) for global cognitive function.

- Short Geriatric Depression Scale (SGDS).

- Instrumental Activities of Daily Living (IADL) scale [32].

- Procedure:

- Baseline Assessment: All participants undergo pre-testing with the MoCA, SGDS, and IADL scales [32].

- Intervention Phase (6 weeks):

- Both Groups: Receive 40-minute sessions of group-based motor training (aerobic, balance, resistance) [32].

- Experimental Group: Following motor training, participants receive cognitive training using the immersive VR iADL simulation [32].

- Control Group: Following motor training, participants receive traditional, non-VR cognitive training [32].

- Post-Intervention Assessment: After 12 sessions over 6 weeks, all participants are re-tested using the same measures as the baseline assessment [32].

- Data Analysis: Use repeated-measures ANOVA or similar statistical tests to compare within-group and between-group changes in MoCA, SGDS, and IADL scores from pre-test to post-test. Analyze completion rates and the level of difficulty achieved in the training tasks as secondary outcomes [32].
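As a rough illustration of the pre/post comparison in the analysis step, the sketch below computes a paired t statistic for within-group change and a pooled-variance t statistic on gain scores for the between-group comparison. In a two-group, two-time-point design, the square of the gain-score t equals the F value of the group-by-time interaction in a mixed ANOVA. The scores are invented placeholders, not data from the cited study, and p-values are deliberately left to a statistics package.

```python
from math import sqrt
from statistics import mean, stdev

def paired_t(pre, post):
    """Within-group change: paired t statistic and degrees of freedom."""
    diffs = [b - a for a, b in zip(pre, post)]
    n = len(diffs)
    return mean(diffs) / (stdev(diffs) / sqrt(n)), n - 1

def gain_score_t(pre_a, post_a, pre_b, post_b):
    """Between-group difference in change (pooled-variance t on gain scores)."""
    ga = [b - a for a, b in zip(pre_a, post_a)]
    gb = [b - a for a, b in zip(pre_b, post_b)]
    na, nb = len(ga), len(gb)
    pooled_var = ((na - 1) * stdev(ga) ** 2 + (nb - 1) * stdev(gb) ** 2) / (na + nb - 2)
    t = (mean(ga) - mean(gb)) / sqrt(pooled_var * (1 / na + 1 / nb))
    return t, na + nb - 2

# HYPOTHETICAL MoCA scores, for illustration only.
vr_pre, vr_post = [21, 22, 20, 23, 19, 22], [24, 24, 23, 25, 22, 23]
ctl_pre, ctl_post = [21, 20, 22, 23, 20, 21], [23, 21, 24, 24, 22, 22]

t_within, df_within = paired_t(vr_pre, vr_post)
t_between, df_between = gain_score_t(vr_pre, vr_post, ctl_pre, ctl_post)
print(f"VR group change: t({df_within}) = {t_within:.2f}")
print(f"Group x time (gain scores): t({df_between}) = {t_between:.2f}")
```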

Visualizing Workflows: From Research Concepts to Experimental Systems

Conceptual Framework: Cognitive Load in Clinical VR Research

Technical Implementation: Adaptive VR Training System (CLAd-VR)

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Resources for Cognitive Load and VR Research with Clinical Populations

| Item / Solution | Function / Application in Research |

|---|---|

| Oculus Quest 2 / Meta Quest 3 | Standalone VR Head-Mounted Display (HMD). Provides a fully immersive experience without being tethered to a PC, enhancing mobility and ease of use in clinical settings. Essential for delivering the VR intervention [28] [29]. |

| Unreal Engine | A powerful game engine platform used for developing high-fidelity, interactive virtual environments. Allows researchers to create and customize cognitive training tasks and ecological scenarios (e.g., iADL simulations) [28]. |

| Emotiv EPOC X EEG Headset | A wearable electroencephalography (EEG) device with 14 electrodes. Used for real-time, objective measurement of neural activity as a physiological correlate of cognitive load. Critical for adaptive systems like CLAd-VR [29]. |

| Lab Streaming Layer (LSL) | An open-source software framework for synchronizing multi-modal data streams in real-time. Used to align EEG data with in-game events and performance metrics from the VR environment, ensuring temporal precision for analysis [29]. |

| Post-Study System Usability Questionnaire (PSSUQ) | A standardized 19-item questionnaire measuring user satisfaction with system usability. Provides reliable metrics on system usefulness, information quality, and interface quality, crucial for evaluating patient acceptance of VR tools [28]. |

| Montreal Cognitive Assessment (MoCA) | A widely used and validated cognitive screening tool. Effective for assessing global cognitive function (attention, memory, executive functions, etc.) in populations like MCI before and after interventions [32]. |

Frequently Asked Questions: Troubleshooting Guides for Researchers

Q1: Our VR intervention for patients with SUDs is showing high dropout rates. What are the key usability factors we should check?

A: High dropout often links to poor usability and high extraneous cognitive load. Systematically assess your platform using the following checklist:

- Controller Intuitiveness: During initial familiarization, observe if patients can naturally use the controllers. High error rates or need for constant guidance indicates a problem [28].

- Clarity of Instructions: Analyze feedback on "Information Quality" from the PSSUQ. If scores are high (indicating dissatisfaction), your in-VR instructions are likely unclear. Simplify text, add voice-overs, and use icons [28].

- Cognitive Load of Navigation: Ensure the menu system for selecting tasks is simple. Complex navigation drains cognitive resources before the actual training begins. A streamlined, intuitive interface is key for an SUD population with potential cognitive deficits [28].

Q2: When testing VR in an older adult population with MCI, many participants report cybersickness. How can we mitigate this?

A: Cybersickness can confound cognitive load measurements and lead to attrition. Implement these strategies:

- Minimize Vection: Avoid artificial locomotion (e.g., joystick movement) where the visual scene moves but the vestibular system detects no motion. Use teleportation for navigation instead [32].

- Ensure Stable Frame Rates: Maintain a high, stable frame rate (e.g., 90 Hz) to prevent latency-induced nausea. Optimize your VR environment's graphics to prevent drops [4].

- Shorter, More Frequent Sessions: Begin with shorter exposure times (5-10 minutes) and gradually increase duration over multiple sessions to promote adaptation [32].

- Leverage Correlation Findings: Note that cybersickness has been correlated with higher cognitive load and lower self-efficacy. Mitigating sickness may therefore directly improve cognitive capacity for the task itself [4].
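To make the frame-rate recommendation checkable, one option is to log per-frame render times during pilot sessions and count how often they exceed the budget for the target refresh rate. The sketch below assumes a hypothetical list of frame times in milliseconds; the 10% tolerance is an arbitrary placeholder, not a value from the cited sources.

```python
def frame_budget_ms(refresh_hz):
    """Time available to render one frame at the given refresh rate."""
    return 1000.0 / refresh_hz

def dropped_frame_ratio(frame_times_ms, refresh_hz=90.0, tolerance=1.1):
    """Fraction of frames whose render time exceeded budget * tolerance."""
    limit = frame_budget_ms(refresh_hz) * tolerance
    slow = sum(1 for t in frame_times_ms if t > limit)
    return slow / len(frame_times_ms)

# HYPOTHETICAL frame-time log (milliseconds) from a pilot session.
log = [10.8, 11.0, 11.1, 10.9, 22.3, 11.0, 10.7, 23.1, 11.2, 11.0]
print(round(frame_budget_ms(90), 2))  # 11.11
print(dropped_frame_ratio(log))       # 0.2
```

At 90 Hz each frame has roughly 11.1 ms, so the two frames above 22 ms in this made-up log each span about two refresh intervals.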

Q3: Our goal is "far transfer" – we want VR cognitive training to improve real-world function in patients with SUDs. What type of VR task design is most promising?

A: Far transfer is a significant challenge. Move beyond simple, abstract cognitive tasks:

- Incorporate Ecological Validity: Design VR scenarios that simulate real-world high-risk situations for relapse, such as a party with social pressure to drink or a stressful situation that triggers craving. This bridges the gap between the clinic and daily life [30] [33].

- Target Specific Executive Functions: Focus on domains critically impaired in SUDs, such as response inhibition, decision-making, and set-shifting. Create tasks that directly train these functions within a meaningful context [27] [28].

- Combine CBT with VR: Do not use VR in isolation. Integrate it with established therapeutic frameworks like Cognitive Behavioral Therapy. For example, a VR scenario can expose a patient to a trigger, and the system can then guide them through CBT-based coping strategies in real-time [30].

Q4: We are developing an adaptive VR system. What is the most reliable method for real-time cognitive load assessment to drive the adaptations?

A: A multi-modal approach is superior to relying on a single metric:

- Primary Method: Physiological Sensing (EEG): Use a wearable EEG headset to capture brain activity. Specific features such as increased frontal theta power and decreased parietal alpha power are well-validated neural markers of increasing cognitive workload. Machine learning models (e.g., LSTM networks) can classify this data into low/optimal/high load states in real-time with high accuracy (e.g., >90%) [29] [34].

- Secondary Method: Performance Metrics: Continuously log in-VR performance data, such as task completion time, error rates (e.g., tool collisions in a simulation), and instances of skipped steps. This behavioral data provides a concrete, objective correlate of the user's functional state [29].

- Tertiary Method: Subjective Reports (For Calibration): Periodically, use short verbal or in-VR prompts for a subjective rating of mental effort. This is not for real-time control but for validating and calibrating your physiological and performance-based models [29].
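The EEG features named above can be illustrated with a simple band-power computation. The sketch below is an assumption-laden toy: it uses a plain discrete Fourier transform on synthetic sine waves, and it combines the two markers into a frontal-theta / parietal-alpha ratio, which is one common way to summarize them but is not confirmed as the method of the cited systems. Function names and band edges (4-8 Hz theta, 8-13 Hz alpha) are illustrative choices; a real pipeline would add artifact rejection and a trained classifier.

```python
import cmath
import math

def band_power(signal, fs, f_lo, f_hi):
    """Summed DFT power of `signal` for frequencies in [f_lo, f_hi) Hz."""
    n = len(signal)
    mean_val = sum(signal) / n
    centered = [x - mean_val for x in signal]  # remove DC offset
    total = 0.0
    for k in range(1, n // 2):
        if f_lo <= k * fs / n < f_hi:
            coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                        for t, x in enumerate(centered))
            total += abs(coeff) ** 2 / n
    return total

def workload_index(frontal, parietal, fs):
    """ASSUMED index: frontal theta power divided by parietal alpha power."""
    return band_power(frontal, fs, 4.0, 8.0) / band_power(parietal, fs, 8.0, 13.0)

def sine(freq, amp, fs, seconds):
    """Synthetic test signal standing in for one EEG channel."""
    return [amp * math.sin(2 * math.pi * freq * i / fs)
            for i in range(int(fs * seconds))]

fs = 128  # Hz, hypothetical sampling rate
relaxed = workload_index(sine(6, 1.0, fs, 2), sine(10, 2.0, fs, 2), fs)
loaded = workload_index(sine(6, 2.0, fs, 2), sine(10, 1.0, fs, 2), fs)
print(relaxed < loaded)  # True: stronger theta and weaker alpha raise the index
```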

Troubleshooting Guides & FAQs

Troubleshooting High Cognitive Load in VR Experiments

Q1: My study participants are reporting high mental demand and frustration. What could be the cause?

High cognitive load and frustration often occur when Immersive Virtual Reality (IVR) is paired directly with hands-on training without adequate instructional support [4]. This can manifest as low learning outcomes and reduced self-efficacy among participants.

- Recommended Action: Consider restructuring your protocol so that IVR training is not conducted concurrently with physical task execution. Allow for a period of knowledge consolidation between virtual and practical sessions.

Q2: Participant performance is lower in the VR group compared to the control group. Is this normal?

Yes, some studies have found that IVR groups can demonstrate higher levels of cognitive load and lower learning outcomes and self-efficacy scores compared to control groups using only practical training [4]. This highlights the importance of optimizing the VR instructional framework.

- Recommended Action: Ensure your experimental design includes a control group. Use standardized metrics like the NASA-Task Load Index to quantitatively compare cognitive load between groups [35].

Q3: How can I reduce the cognitive load caused by my VR interface design?

High visual complexity and poor interface design are significant contributors to extraneous cognitive load.

- Recommended Action:

- Contrast & Color: Ensure a minimum contrast ratio of 4.5:1 for text-to-background. Avoid extreme contrasts like pure black/white to prevent visual fatigue; use dark gray and subtle gradients instead [36].

- Color Saturation: Use high-saturation colors sparingly, only for critical interactive elements or warnings, as overuse can cause eye strain [36].

- Information Layering: Avoid presenting too much information simultaneously. Use a multi-channel approach (visual, auditory, tactile) to distribute cognitive resources [37].
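The 4.5:1 contrast check can be automated for interface color palettes. The sketch below assumes the ratio is computed with the WCAG relative-luminance formula, which is the usual definition behind a 4.5:1 threshold; the cited source does not state which formula it uses, and the example colors are hypothetical.

```python
def _linearize(channel_8bit):
    """Convert an 8-bit sRGB channel to linear light (WCAG formula)."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

def relative_luminance(rgb):
    """Relative luminance of an (R, G, B) color with 0-255 channels."""
    r, g, b = (_linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def contrast_ratio(fg, bg):
    """Contrast ratio between two colors, from 1.0 (identical) to 21.0."""
    l1, l2 = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (l1 + 0.05) / (l2 + 0.05)

def meets_minimum(fg, bg, minimum=4.5):
    return contrast_ratio(fg, bg) >= minimum

# HYPOTHETICAL palette: light gray text on a dark gray panel,
# avoiding the pure black/white extreme.
print(meets_minimum((230, 230, 230), (40, 40, 40)))   # True
print(meets_minimum((120, 120, 120), (90, 90, 90)))   # False
```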

Q4: During long loading times, my participants feel "trapped" and agitated. How can I improve this experience?

Waiting in VR, especially with non-interactive loading screens, can cause negative emotions and distorted time perception, increasing cognitive friction [38].

- Recommended Action: Implement an interactive loading interface. Research shows that interactive elements can shorten perceived waiting times and increase positive emotions, thereby managing cognitive load more effectively [38].

Experimental Protocols & Methodologies

Table 1: Summary of Key Cognitive Load Assessment Methods

| Method Type | Specific Tool/Metric | Measured Aspect | Application Context |

|---|---|---|---|

| Subjective Measure | NASA-Task Load Index (TLX) [35] | Mental, Physical, and Temporal Demand, Effort, Performance, Frustration | Broadly applicable for post-task assessment in navigation and complex tasks [35]. |

| Subjective Measure | Paas Scale [35] | Perceived Mental Effort | Often used in educational psychology and learning studies [35]. |

| Physiological Measure | Electrodermal Activity (EDA) [35] | Arousal and Cognitive Effort (Skin Conductance Response) | Suitable for real-life and VR navigation studies; reliable indicator of cognitive load variation [35]. |

| Behavioral Measure | Task Performance Score [35] | Accuracy and efficiency in task completion | Used as a behavioral measure of performance, e.g., in memory binding tasks [35]. |

| Behavioral & Physiological | Eye-Tracking [39] | Gaze patterns and pupillometry (linked to cognitive load) | Used in VR eye-tracking experiments to optimize system design [39]. |
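For the NASA-TLX row in the table above, scoring is simple enough to sketch directly. The example shows both the unweighted "Raw TLX" (mean of the six subscales) and the weighted score, where each subscale's weight is the number of times it was chosen across the 15 pairwise comparisons. The ratings and weights are invented placeholders, and the dictionary keys are hypothetical names.

```python
DIMENSIONS = ("mental", "physical", "temporal", "performance", "effort", "frustration")

def raw_tlx(ratings):
    """Unweighted (Raw TLX) score: mean of the six 0-100 subscale ratings."""
    return sum(ratings[d] for d in DIMENSIONS) / len(DIMENSIONS)

def weighted_tlx(ratings, weights):
    """Weighted score; weights are tallies from the 15 pairwise comparisons."""
    if sum(weights[d] for d in DIMENSIONS) != 15:
        raise ValueError("weights must sum to 15")
    return sum(ratings[d] * weights[d] for d in DIMENSIONS) / 15

# HYPOTHETICAL participant data.
ratings = {"mental": 70, "physical": 20, "temporal": 55,
           "performance": 40, "effort": 65, "frustration": 50}
weights = {"mental": 5, "physical": 0, "temporal": 3,
           "performance": 2, "effort": 4, "frustration": 1}

print(raw_tlx(ratings))                          # 50.0
print(round(weighted_tlx(ratings, weights), 2))  # 60.33
```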

Detailed Protocol: Investigating Cognitive Load During Navigation in VR [35]

This protocol validates VR as a method for cognitive load analysis in ecological, non-static contexts.

- Objective: To compare travelers' cognitive load in a real-life train station versus a VR simulation of the same environment, and to examine the effect of expertise (novice vs. expert travelers).

- Participants: Recruit both regular (experts) and occasional (novices) travelers.

- Task: Participants must find relevant information in the train station (real and virtual).

- Measures & Apparatus:

- Physiological: Electrodermal Activity (EDA) is recorded to measure cognitive effort.

- Subjective: The NASA-TLX questionnaire is administered post-task to assess perceived workload.

- Behavioral: A memory test (recognition of relevant factual and contextual information seen in the station) is used to measure performance.

- Procedure:

- Participants perform the navigation task in both the real-world location and the high-fidelity VR model (order counterbalanced).

- EDA is recorded throughout the task.

- Immediately after the task, participants complete the NASA-TLX.

- Following the task, the memory recognition test is administered.

- Key Finding: The study found no significant difference in cognitive load indicators (EDA, NASA-TLX, memory performance) between real-life and VR conditions, establishing VR as a valid and reliable method for ecological cognitive load research [35].
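The counterbalanced order in the procedure can be assigned programmatically. The sketch below alternates the two condition orders separately within each expertise group so that order is balanced for novices and experts alike; stratifying by expertise is an assumption made here for illustration, since the protocol only states that order was counterbalanced. Participant IDs are hypothetical.

```python
from itertools import cycle

ORDERS = (("real", "vr"), ("vr", "real"))

def assign_orders(participants):
    """Alternate condition order within each expertise group.

    `participants` is a list of (participant_id, expertise) tuples,
    in enrollment order. Returns {participant_id: (first, second)}.
    """
    order_cycles = {}
    plan = {}
    for pid, expertise in participants:
        group_cycle = order_cycles.setdefault(expertise, cycle(ORDERS))
        plan[pid] = next(group_cycle)
    return plan

# HYPOTHETICAL enrollment list.
enrolled = [("P1", "expert"), ("P2", "novice"), ("P3", "expert"), ("P4", "novice")]
for pid, order in assign_orders(enrolled).items():
    print(pid, order)
```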

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for VR Cognitive Load Research

| Item | Function in Research |

|---|---|

| VR Headset with Pancake Optics (e.g., Meta Quest 3/Pro) | Provides high visual clarity. Key metrics like Pixels Per Degree (PPD), sharpness (MTF), and contrast ratio directly influence visual comfort and cognitive load [40]. |

| Electrodermal Activity (EDA) Sensor | A physiological tool for measuring cognitive load indirectly via skin conductance response, which rises with cognitive effort [35]. |

| NASA-TLX Software / Questionnaire | The gold-standard subjective tool for quantifying a user's perceived mental workload across multiple dimensions [35]. |

| Eye-Tracking Module (Integrated with VR Headset) | Provides behavioral data on gaze and pupillometry, which are robust indicators of visual attention distribution and cognitive load [39]. |

| Quality Function Deployment (QFD) & AHP Model | A computational method to translate user cognitive needs (e.g., low-load demand) into prioritized VR design elements, reducing trial-and-error in system development [37]. |

| Convolutional Neural Network (CNN) Prediction Model | Used to predict user cognitive load and satisfaction based on design input data, allowing for pre-emptive optimization of VR systems before user testing [37]. |

Methodological Workflow & Cognitive Load Theory Diagrams

Diagram 1: VR system optimization workflow based on user cognitive needs, integrating AHP-QFD and CNN models [37] [39].

Diagram 2: Cognitive Load Theory (CLT) framework, showing the three load types that compete for limited working memory resources [35].

Measuring and Applying Cognitive Load Metrics: Advanced Tools and Techniques for VR Research

FAQs and Troubleshooting Guides

Experimental Design and Setup

Q1: How should I structure a VR experiment to effectively combine EEG and fNIRS?

A combined EEG-fNIRS experiment requires a hybrid design that accommodates the temporal characteristics of both signals.

- Design Paradigm: For the EEG component, you will need event-related markers for each stimulus presentation to analyze Event-Related Potentials (ERPs) or time-frequency responses. For the fNIRS component, which tracks the slower hemodynamic response, you should analyze data across entire blocks of trials. A sample visual task would, therefore, include multiple trial events (for EEG) nested within a larger presentation block (for fNIRS) [41].

- Task Difficulty Calibration: To optimize cognitive load in VR, ensure task difficulty is individualized. Mismatched difficulty can lead to cognitive overload (frustration, errors) or underload (wasted effort, boredom), hindering learning. Using physiological measures to gauge cognitive load allows for real-time task adjustment [42].
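The nested design described above (trial-level markers for EEG inside longer blocks for fNIRS) can be laid out as a schedule before implementing it in the VR engine. This is a minimal sketch: the function name, the number of blocks, and all durations are hypothetical defaults, not values from the cited guidance.

```python
def build_schedule(n_blocks=4, trials_per_block=10, trial_s=2.0, rest_s=20.0):
    """Return (blocks, events) for a hybrid EEG-fNIRS design.

    blocks: (block_index, onset_s, duration_s) tuples for fNIRS block analysis.
    events: (onset_s, block_index, trial_index) markers for EEG epoching.
    A rest period precedes every block so the hemodynamic signal can
    return toward baseline.
    """
    blocks, events = [], []
    t = rest_s  # initial baseline rest
    for b in range(n_blocks):
        block_dur = trials_per_block * trial_s
        blocks.append((b, t, block_dur))
        for k in range(trials_per_block):
            events.append((t + k * trial_s, b, k))
        t += block_dur + rest_s
    return blocks, events

blocks, events = build_schedule()
print(blocks[:2])   # [(0, 20.0, 20.0), (1, 60.0, 20.0)]
print(len(events))  # 40
```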

Q2: What are the best practices for integrating EEG electrodes and fNIRS optodes on a single cap?

Co-registering EEG and fNIRS sensors on the same cap is technically challenging but critical for data quality.

- Cap Selection: Use a cap with a large number of slits (e.g., 128 or 160) and black fabric. The dark material reduces unwanted optical reflection, thereby improving fNIRS signal quality. The numerous slits provide the flexibility needed to place both types of sensor holders [41].

- Montage Planning: EEG and fNIRS sensors often compete for the same scalp locations. You must define your montage based on your research question and brain region of interest (e.g., the prefrontal cortex for cognitive control or the occipital lobe for visual tasks). Software tools like the MATLAB-based ArrayDesigner can help plan optimal sensor layouts [41].

- Secure Placement: A common challenge is that elastic fabric caps can lead to inconsistent pressure and variable distance between the fNIRS source and detector. For higher-precision studies, consider customized solutions using 3D-printed or thermoplastic helmets to ensure stable and consistent optode-scalp contact across participants [43].

Signal Acquisition and Quality

Q3: My fNIRS signal is noisy. What are the common sources of artifact and how can I mitigate them?

fNIRS signals are susceptible to several physiological and motion artifacts.

- Motion Artifacts: While fNIRS is more robust to motion than fMRI, it is not immune. For experiments involving movement (e.g., in VR), it is recommended to use an accelerometer to record head movements. The data from the accelerometer can then be used with advanced signal processing techniques, like adaptive filtering, to clean the motion artifacts from the fNIRS signal [44].

- Physiological Confounds: Signals originating from heart rate, respiration, and blood pressure changes can contaminate the fNIRS data. These can be removed using a variety of methods, including band-pass filtering, Principal Component Analysis (PCA), or including them as regressors in a General Linear Model (GLM) [44].

- Other Sources: Perspiration can alter the optical characteristics of the scalp-sensor interface. While this can cause signal drift, the effects may stabilize once the sensor is saturated and can be eliminated during processing [44].
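The accelerometer-based adaptive filtering mentioned above can be sketched with a least-mean-squares (LMS) filter. This is a minimal illustration, not the method of any cited study: the function name `lms_motion_filter`, the tap count, the step size, and the synthetic data are all assumptions chosen for the example.

```python
import math
import random


def lms_motion_filter(signal, reference, n_taps=4, mu=0.01):
    """Remove the part of `signal` that is linearly predictable from `reference`.

    signal     -- one fNIRS channel (list of floats)
    reference  -- accelerometer trace sampled on the same clock
    n_taps, mu -- filter length and step size (illustrative defaults)
    """
    weights = [0.0] * n_taps
    cleaned = []
    for i in range(len(signal)):
        # Most recent n_taps reference samples, zero-padded at the start.
        window = [reference[i - k] if i >= k else 0.0 for k in range(n_taps)]
        artifact_estimate = sum(w * x for w, x in zip(weights, window))
        residual = signal[i] - artifact_estimate  # residual is the cleaned sample
        # LMS update: nudge the weights along the error gradient.
        weights = [w + 2 * mu * residual * x for w, x in zip(weights, window)]
        cleaned.append(residual)
    return cleaned


def mse(a, b):
    """Mean squared error between two equally long sequences."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


# Synthetic demo: a slow "hemodynamic" sine plus an artifact driven by head motion.
random.seed(0)
fs = 10.0  # Hz, assumed sampling rate
n = 2000
hemo = [math.sin(2 * math.pi * 0.1 * i / fs) for i in range(n)]
accel = [random.gauss(0.0, 1.0) for _ in range(n)]
raw = [h + 0.8 * a for h, a in zip(hemo, accel)]
cleaned = lms_motion_filter(raw, accel)
```

On real recordings the step size has to be tuned to the accelerometer's signal power, and the filter only removes artifact components that are linearly related to the recorded motion.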

Q4: How do I achieve precise synchronization between EEG and fNIRS systems?

Accurate temporal alignment of EEG and fNIRS data streams is fundamental for multimodal analysis.

- Synchronization Methods: There are two primary methods. The first uses a unified processor to acquire both signals simultaneously, which achieves high-precision synchronization but requires a more intricate system design. The second involves using separate systems (e.g., NIRScout and BrainAMP) and synchronizing them via software like the Lab Streaming Layer (LSL) protocol or via shared hardware triggers sent from the stimulus computer [43] [41].

- Trigger Delay: When using hardware triggers, be aware of any potential delay. For instance, with some fNIRS systems, the trigger delay from the acquisition software is typically very short, not exceeding 5 milliseconds [44].
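When two separate systems log the same hardware triggers, one simple way to align their clocks offline is to fit a linear map (offset plus drift) between the paired trigger timestamps. The sketch below assumes hypothetical timestamp lists and a hypothetical helper `fit_clock_map`; it is a generic least-squares fit, not part of the LSL API or any vendor software.

```python
def fit_clock_map(t_src, t_dst):
    """Least-squares fit of t_dst = slope * t_src + offset from paired triggers."""
    n = len(t_src)
    mean_s = sum(t_src) / n
    mean_d = sum(t_dst) / n
    sxx = sum((s - mean_s) ** 2 for s in t_src)
    sxy = sum((s - mean_s) * (d - mean_d) for s, d in zip(t_src, t_dst))
    slope = sxy / sxx              # relative clock drift
    offset = mean_d - slope * mean_s  # constant clock offset
    return slope, offset


def to_dst_clock(t, slope, offset):
    """Map a timestamp from the source clock onto the destination clock."""
    return slope * t + offset


# Hypothetical trigger times (seconds) as logged by each system.
fnirs_triggers = [0.0, 10.0, 20.0, 30.0]
eeg_triggers = [1.002 * t + 2.5 for t in fnirs_triggers]

slope, offset = fit_clock_map(fnirs_triggers, eeg_triggers)
fnirs_sample_on_eeg_clock = to_dst_clock(15.0, slope, offset)
```

A slope different from 1 indicates drift between the two clocks, so triggers spread across the whole session give a better fit than a single trigger at the start.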

Q5: The prefrontal cortex activation from my fNIRS data doesn't increase with task difficulty as expected. Is this an error?

Not necessarily. In highly demanding multitasking environments, a lack of increase in Prefrontal Cortex (PFC) activation may reflect a phenomenon known as "cognitive disengagement" or "neural efficiency," where the brain actively limits resource engagement to manage an overwhelming cognitive load. This finding, which challenges the traditional linear view of PFC activation, underscores the importance of triangulating your fNIRS data with performance metrics and subjective reports to correctly interpret the results [45].

Data Processing and Analysis

Q6: What are the key steps for preprocessing fNIRS data before statistical analysis?

A robust preprocessing pipeline is essential for deriving meaningful hemodynamic responses.

- Signal Quality Check: Inspect all channels and reject those with poor signal quality.

- Convert Raw Light Intensity: Use the Modified Beer-Lambert Law to convert raw light intensity signals into changes in oxygenated (HbO) and deoxygenated (HbR) hemoglobin concentration [44].

- Artifact Removal: Identify and correct for motion artifacts.

- Filtering: Apply high-pass and/or low-pass filtering to remove drift and high-frequency physiological noise (e.g., cardiac signals) outside the frequency band of interest for the hemodynamic response [46].

- Confound Regression: Use a GLM with regressors for systemic physiological confounds to improve the specificity of the brain activity signal [46].
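The conversion step in this pipeline can be illustrated with a two-wavelength form of the Modified Beer-Lambert Law. This is a sketch under stated assumptions: the wavelengths (760 and 850 nm), the approximate extinction coefficients, the source-detector distance, and a single wavelength-independent differential pathlength factor (DPF) are illustrative placeholders, and the function name `mbll` is hypothetical. Use the values documented for your own device and analysis package in practice.

```python
import math

# Approximate molar extinction coefficients in cm^-1 / M as (HbO, HbR).
# Illustrative placeholders only; not for use in a real analysis.
EXTINCTION = {760: (586.0, 1548.5), 850: (1058.0, 691.3)}


def mbll(i_baseline, i_current, distance_cm=3.0, dpf=6.0):
    """Convert intensity at 760 and 850 nm into (delta_HbO, delta_HbR) in mol/L.

    i_baseline, i_current -- dicts mapping wavelength (nm) to light intensity
    distance_cm, dpf      -- assumed optode spacing and pathlength factor
    """
    # Change in optical density per wavelength (base-10 convention).
    d_od = {wl: -math.log10(i_current[wl] / i_baseline[wl]) for wl in EXTINCTION}
    (a, b), (c, d) = EXTINCTION[760], EXTINCTION[850]
    path = distance_cm * dpf
    det = a * d - b * c
    # Solve the 2x2 system d_od = path * E @ [dHbO, dHbR] by Cramer's rule.
    d_hbo = (d * d_od[760] - b * d_od[850]) / (det * path)
    d_hbr = (a * d_od[850] - c * d_od[760]) / (det * path)
    return d_hbo, d_hbr


# Usage: a 2% intensity drop at 850 nm and a 1% rise at 760 nm.
delta_hbo, delta_hbr = mbll({760: 1.0, 850: 1.0}, {760: 1.01, 850: 0.98})
```

With these inputs the result is a small HbO increase and a small HbR decrease, the pattern typically associated with increased regional activation.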

Q7: How can I fuse EEG and fNIRS data to get a more complete picture of brain activity?

Data fusion leverages the complementary strengths of both modalities.

- Analysis Approach: Fusion can occur at different levels. Asymmetric integration uses the features of one modality to inform the analysis of the other (e.g., using EEG-derived timings to model the fNIRS hemodynamic response). Symmetric fusion methods, like structured sparse multiset Canonical Correlation Analysis (ssmCCA), treat both modalities equally to find a shared latent variable, pinpointing brain regions where both electrical and hemodynamic activity are consistently detected [47]. This approach can reveal core neural networks, such as the Action Observation Network, more reliably than unimodal analyses [47].
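As a rough illustration of asymmetric integration, event times taken from the EEG recording can be convolved with a hemodynamic response function (HRF) to build a regressor for the fNIRS GLM. The single-gamma HRF below (peaking about 5 seconds after onset) and the helper names `gamma_hrf` and `hrf_regressor` are assumptions for this sketch, not the model used in the cited work.

```python
import math


def gamma_hrf(fs, duration_s=20.0, shape=6.0, scale=1.0):
    """Single-gamma HRF sampled at fs Hz (assumed shape; peak at (shape-1)*scale s)."""
    n = int(duration_s * fs)
    norm = scale ** shape * math.gamma(shape)
    return [
        ((k / fs) ** (shape - 1)) * math.exp(-(k / fs) / scale) / norm
        for k in range(n)
    ]


def hrf_regressor(onsets_s, n_samples, fs):
    """Sum one HRF per event onset (non-negative times in seconds, fNIRS clock)."""
    hrf = gamma_hrf(fs)
    regressor = [0.0] * n_samples
    for onset in onsets_s:
        start = int(round(onset * fs))
        for k, h in enumerate(hrf):
            if start + k < n_samples:
                regressor[start + k] += h
    return regressor


# Usage: one EEG-derived event at 2.0 s, fNIRS sampled at 10 Hz for 30 s.
regressor = hrf_regressor([2.0], n_samples=300, fs=10.0)
peak_time_s = regressor.index(max(regressor)) / 10.0
```

The resulting regressor can be entered as a column in the GLM design matrix alongside the physiological confound regressors.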

Experimental Protocols for Cognitive Load Assessment in VR

Protocol 1: Multimodal Assessment in a VR Driving Simulator

This protocol is adapted from a study designed to measure cognitive load in adolescents with ASD, an approach that is highly relevant for optimizing cognitive load in VR scenarios [42].

- Objective: To fuse multimodal physiological data to classify cognitive load during a VR-based driving task, enabling future individualization of task difficulty.

- Participants: A target group of 20+ participants.

- Equipment:

- VR-based driving simulator.

- EEG system (e.g., from Brain Products).

- fNIRS system (mobile or full-head).

- Eye-tracker integrated into the VR headset.

- Peripheral physiology sensors (ECG, EDA, respiration belt).

- Experimental Design:

- Participants perform driving tasks in VR under varying levels of difficulty.

- The ground truth for cognitive load is established by a clinically trained rater who observes participant behavior and performance, which is particularly useful for populations who may not provide accurate self-reports.

- Data Modalities Recorded:

- EEG: To capture millisecond-scale electrical brain dynamics.

- fNIRS: To localize hemodynamic changes in the prefrontal cortex.

- Eye Gaze: Pupil dilation and blink rate from the eye-tracker.

- Peripheral Physiology: Heart rate, heart rate variability, skin conductance level, and respiration.

- Performance Metrics: Steering wheel movements, lane deviation, speed control, and error rates.

- Analysis:

- Extract features from all modalities (e.g., EEG band power, fNIRS HbO concentration, pupil diameter, heart rate).

- Use machine learning classifiers (e.g., SVM, LDA, ANN) to classify cognitive load levels (low vs. high) based on the rater's assessment.

- Compare classification accuracy using single modalities versus fused multimodal information (e.g., feature-level or decision-level fusion).
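The difference between feature-level and decision-level fusion in the analysis step can be sketched as follows. The modality names, feature values, probabilities, and function names are hypothetical; in a real pipeline the per-modality probabilities would come from trained classifiers such as the SVM, LDA, or ANN models listed above.

```python
def feature_level_fusion(features_by_modality):
    """Concatenate per-modality feature vectors into one vector for one classifier."""
    fused = []
    for name in sorted(features_by_modality):  # fixed order keeps columns stable
        fused.extend(features_by_modality[name])
    return fused


def decision_level_fusion(prob_high, weights=None, threshold=0.5):
    """Combine per-modality P(high load) by weighted averaging, then threshold."""
    if weights is None:
        weights = {name: 1.0 for name in prob_high}
    total = sum(weights[name] for name in prob_high)
    p = sum(weights[name] * prob_high[name] for name in prob_high) / total
    label = "high" if p >= threshold else "low"
    return label, p


# Hypothetical values for one time window.
features = {"eeg": [4.2, 1.1], "fnirs": [0.03], "pupil": [3.8]}
fused_vector = feature_level_fusion(features)

probs = {"eeg": 0.8, "fnirs": 0.6, "pupil": 0.3}
label, p_high = decision_level_fusion(probs)
```

Feature-level fusion lets a single model learn interactions between modalities, whereas decision-level fusion keeps each modality's classifier independent, which makes it easier to drop a modality when its signal quality is poor.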

Protocol 2: Investigating Cognitive Disengagement with Mobile fNIRS

This protocol is based on research that revealed the "cognitive disengagement" effect during complex multitasking [45].

- Objective: To measure cognitive load in a high-immersion, ecologically valid VR multitasking paradigm using a mobile fNIRS device.

- Participants: 30+ participants (e.g., undergraduates).

- Equipment:

- A mobile, multi-channel fNIRS device.

- A VR system capable of running complex, multitasking scenarios.

- Experimental Design:

- Single-Task Condition: Participants perform a focused, primary task in VR.

- Multitask Condition: Participants perform the primary task while simultaneously managing several secondary tasks (e.g., responding to auditory cues, monitoring displays).

- Measures:

- fNIRS: Record hemodynamic activity from the prefrontal cortex.

- Performance: Score and error rates for both primary and secondary tasks.

- Subjective Load: Administer the NASA-TLX questionnaire after each condition.

- Analysis:

- Compare PFC activation, performance scores, and subjective ratings between single-task and multitask conditions.

- Correlate PFC activation with performance. A key finding would be decreased or unchanged PFC activation coupled with worse performance in the multitask condition, indicating potential cognitive disengagement.
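A minimal sketch of this analysis step is shown below: a Pearson correlation between PFC activation and performance, and a descriptive check for the disengagement pattern. The data values and function names are hypothetical, and the check is only a screening heuristic; a proper analysis would use inferential statistics across participants.

```python
import math


def mean(values):
    return sum(values) / len(values)


def pearson_r(x, y):
    """Pearson correlation coefficient between two equally long sequences."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def suggests_disengagement(pfc_single, pfc_multi, perf_single, perf_multi, tolerance=0.0):
    """True when PFC activation does not rise in multitasking while performance drops."""
    pfc_not_higher = mean(pfc_multi) <= mean(pfc_single) + tolerance
    performance_worse = mean(perf_multi) < mean(perf_single)
    return pfc_not_higher and performance_worse


# Hypothetical per-participant values (HbO change in arbitrary units, score in %).
pfc_single, pfc_multi = [0.50, 0.60, 0.55], [0.45, 0.50, 0.52]
perf_single, perf_multi = [90.0, 85.0, 88.0], [70.0, 60.0, 66.0]

r_multi = pearson_r(pfc_multi, perf_multi)
flag = suggests_disengagement(pfc_single, pfc_multi, perf_single, perf_multi)
```

The subjective NASA-TLX ratings should be examined alongside this flag, since high reported load together with flat PFC activation supports the disengagement interpretation more strongly than either measure alone.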

Data Presentation and Specifications

Table 1: Key Specifications of EEG and fNIRS for Cognitive Load Research

| Feature | EEG (Electroencephalography) | fNIRS (functional Near-Infrared Spectroscopy) |
|---|---|---|
| What it Measures | Electrical potentials from post-synaptic neuronal activity [43] | Concentration changes in oxygenated (HbO) and deoxygenated hemoglobin (HbR) [43] |
| Temporal Resolution | Excellent (millisecond precision) [41] | Poor (slow hemodynamic response, ~3-6 seconds) [41] |
| Spatial Resolution | Relatively Low [43] | Good (~1-2 cm) [44] |
| Key Advantages | Direct measure of neural electrical activity; high temporal resolution; portable [43] | Good spatial resolution; less sensitive to motion artifacts than fMRI; portable; non-invasive [43] [44] |
| Main Limitations | Susceptible to EMG/EOG artifacts; poor spatial resolution and depth penetration [43] | Limited to cortical surface; sensitive to systemic physiological confounds (e.g., blood pressure) [44] |
| Typical Cognitive Load Biomarkers | Increase in frontal theta power; decrease in alpha power [48] [42] | Increase in prefrontal cortex HbO concentration [42] [45] (though may decrease in overload) |

Table 2: Cognitive Load Metrics Overview

| Metric Category | Examples | Brief Description | Considerations for VR |
|---|---|---|---|
| Subjective Measures | NASA-TLX [48], SWAT [48] | Self-report questionnaires assessing mental demand, effort, frustration, etc. | Intrusive; breaks immersion; may not be suitable for all populations (e.g., ASD) [42]. |
| Performance Measures | Task accuracy, reaction time, error rate [48] | Direct metrics of how well the user is performing the task. | Easy to collect in VR; may not be sensitive enough if the task is too easy/hard. |
| Physiological (Brain) | EEG (Theta/Alpha power) [48] [42], fNIRS (PFC HbO) [42] [45] | Direct and indirect measures of brain activity related to cognitive effort. | Requires specialized equipment; can be correlated to provide a more robust assessment [47]. |
| Physiological (Other) | Pupil Dilation [42], Heart Rate Variability [42], Skin Conductance [42] | Measures of autonomic nervous system arousal, which is linked to cognitive load. | Pupillometry can be integrated into VR headsets; other sensors may require additional setup. |

Workflow and Signaling Pathways

[Figure: Cognitive Load Measurement and Adaptive VR Workflow]

[Figure: Multimodal Fusion for Cognitive Load Estimation]

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Equipment and Software for Multimodal VR Research

| Item Category | Specific Examples / Models | Critical Function |
|---|---|---|
| VR Platform | Custom driving simulator [42], Immersive VR systems [4] | Presents controlled, ecologically valid environments and tasks to elicit cognitive load. |
| EEG System | Brain Products amplifiers [41], actiCAP snap electrodes [41] | Measures millisecond-scale electrical brain activity (e.g., theta/alpha power) related to cognitive processing. |
| fNIRS System | Continuous Wave systems (e.g., BIOPAC, NIRx, Hitachi ETG-4100) [44] [46] [47] | Measures hemodynamic changes (HbO/HbR) in the cortex to localize brain activity with good spatial resolution. |
| Peripheral Physiology | ECG for heart rate, EDA for skin conductance, Respiration belt, Eye-tracker [42] | Captures autonomic nervous system responses (arousal) that are correlated with cognitive load and effort. |
| Integrated Caps | actiCAP with 128+ slits (black fabric) [41], Custom 3D-printed helmets [43] | Enables stable and precise co-registration of EEG electrodes and fNIRS optodes on the scalp. |
| Synchronization Solution | Lab Streaming Layer (LSL) [41], Shared hardware triggers [41] | Ensures precise temporal alignment of data streams from all recording devices and task events. |
| Analysis Software/Tools | MATLAB, Structured Sparse Multiset CCA (ssmCCA) [47], Machine Learning libraries (SVM, LDA, ANN) [42] | Used for signal processing, artifact removal, data fusion, and ultimately classifying cognitive load levels. |

Troubleshooting Guides & FAQs

Common Eye-Tracking Calibration Issues

Q: What are the most common causes of poor eye-tracking calibration and how can I resolve them?

A: Poor calibration often stems from issues in detecting the pupil center and corneal reflection. Below is a summary of common problems and their solutions.

Table: Common Eye-Tracking Calibration Issues and Solutions

| Problem | Description | Recommended Solution |
|---|---|---|
| Absent Corneal Reflection | The corneal reflection is elongated, broken, or missing [49]. | Position the camera and IR lamp as close as possible to the bottom of the display and level. Avoid eccentric gaze positions >35 degrees [49]. |
| Glare | Additional bright spots in the image around the eyes [49]. | Remove reflective surfaces (e.g., glasses, sparkly makeup). Cover jewelry with matte tape. Use search windows to restrict where the algorithm looks for landmarks [49]. |
| Individual Differences | Poor tracking in individuals with light irises, very large pupils, or conditions like cataracts [49]. | Consider relaxing acceptable calibration thresholds, removing data, or switching to monocular tracking if only one eye tracks well [49]. |
| Drift | Tracking quality degrades over time [49]. | Incorporate drift checks into your experiment at intervals. Recalibrate only if drift becomes unacceptable [49]. |
| Environmental Reflections | Additional reflective spots caused by other infrared light sources (e.g., sunlight, overhead lighting) [49]. | Cover windows and turn off overhead lighting where necessary [49]. |
| Blinking During Calibration | Poor calibration for a specific target due to participant blinking [49]. | Recalibrate if a blink occurs during calibration. If it happens during validation, consider analyzing the data subset before or after the blink [49]. |
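The periodic drift check recommended in the table can be sketched as a comparison between recorded gaze positions and known target positions, converted to degrees of visual angle. The geometry (a flat display viewed roughly head-on), the 1.0 degree threshold, and the function names are assumptions for this example; use the threshold appropriate for your tracker and task.

```python
import math


def gaze_error_deg(gaze_px, target_px, px_size_cm, viewing_distance_cm):
    """Approximate angular error between a gaze sample and a target near screen centre."""
    offset_px = math.hypot(gaze_px[0] - target_px[0], gaze_px[1] - target_px[1])
    offset_cm = offset_px * px_size_cm
    return math.degrees(math.atan2(offset_cm, viewing_distance_cm))


def needs_recalibration(errors_deg, threshold_deg=1.0):
    """Flag recalibration when the mean drift-check error exceeds the threshold."""
    return sum(errors_deg) / len(errors_deg) > threshold_deg


# Hypothetical drift check with three targets, 0.025 cm pixels, 60 cm viewing distance.
targets = [(960, 540), (860, 540), (1060, 540)]
gaze = [(975, 548), (880, 530), (1072, 551)]
errors = [gaze_error_deg(g, t, 0.025, 60.0) for g, t in zip(gaze, targets)]
recalibrate = needs_recalibration(errors)
```

Running this check at fixed intervals and recalibrating only when the flag is raised keeps interruptions to a minimum, in line with the recommendation to recalibrate only if drift becomes unacceptable.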

Q: The user can only select items on one part of the screen after calibration. What should I do?