Psychometric Validation of Immersive VR for Executive Function: A New Paradigm for Biomedical Research and Clinical Assessment


Abstract

This article synthesizes current evidence and methodologies for the psychometric validation of immersive Virtual Reality (VR) assessments of executive functioning (EF), a critical cognitive domain for mental health and neurological disorders. Targeting researchers, scientists, and drug development professionals, it explores the foundational promise of VR to enhance ecological validity and test sensitivity beyond traditional tools. The content details practical application and development strategies, addresses key methodological challenges like cybersickness and reliability, and provides a framework for rigorous validation against established benchmarks. By outlining future directions, this review serves as a comprehensive resource for integrating validated, scalable VR-based cognitive assessments into clinical trials and biomedical research.

The Paradigm Shift: Why Immersive VR is Revolutionizing Executive Function Assessment

The Ecological Validity Gap in Traditional Neuropsychological Tests

Neuropsychological assessments are fundamental for diagnosing cognitive impairments, yet a significant gap exists between their controlled testing environments and the complex demands of real-world functioning. This guide objectively compares traditional executive function assessments against emerging immersive Virtual Reality (VR)-based paradigms, focusing on their ecological validity—the degree to which test performance predicts daily functioning. We synthesize current experimental data demonstrating that while traditional tests are robust and standardized, they often lack verisimilitude (representativeness of daily tasks) and veridicality (predictive power for daily outcomes). In contrast, immersive VR assessments show considerable promise in bridging this ecological validity gap by simulating complex, everyday activities within controlled laboratory settings. This analysis provides researchers and clinicians with a comparative framework for selecting, developing, and validating ecologically valid cognitive assessment tools.

Ecological validity in neuropsychology refers to the functional and predictive relationship between a person's performance on a set of neuropsychological tests and their behavior in real-world settings [1]. This concept comprises two principal components:

- Verisimilitude: The degree to which a neuropsychological test mirrors the demands of a person’s daily living activities that it aims to evaluate.

- Veridicality: The extent to which test performance predicts an individual’s functioning in their daily living activities [1] [2].

Traditional neuropsychological tests, despite their robustness and standardization, were largely developed to assess cognitive "constructs" (e.g., working memory) without explicit regard for their ability to predict "functional" behavior [2]. For instance, the Wisconsin Card Sorting Test (WCST) and the Stroop test, while sensitive to certain brain injuries, were not originally designed to predict a patient's ability to navigate real-world challenges like managing finances or responding appropriately to traffic signals [2]. This fundamental disconnect creates the "ecological validity gap"—a chasm between what is measured in the clinic and what is required in everyday life.

Comparative Analysis: Traditional vs. Immersive VR Assessments

The following table summarizes the core differences between traditional and immersive VR-based assessments of executive function, highlighting the specific factors contributing to the ecological validity gap.

Table 1: Objective Comparison Between Traditional and Immersive VR Neuropsychological Assessments

| Feature | Traditional Assessments | Immersive VR Assessments |
|---|---|---|
| Ecological Validity | Limited ecological validity; accounts for only 18-20% of variance in everyday executive ability [1]. | High potential; environments replicate real-world complexity and daily activities (e.g., Virtual Multiple Errands Test) [1] [3]. |
| Testing Environment | Sterile, highly controlled clinic room; abstract, static stimuli (paper-and-pencil or 2D computer screens) [1] [4]. | Simulated real-world environments (e.g., supermarkets, streets); dynamic, multi-sensory stimuli via Head-Mounted Displays (HMDs) [5] [6]. |
| Task Impurity Problem | High risk; scores reflect variance from non-targeted EF and non-EF processes, complicating interpretation [1]. | Potentially mitigated; complex tasks engage targeted EFs within an integrated, realistic context [1] [3]. |
| Data Collection | Primarily accuracy and reaction time, recorded manually or by simple computerized tasks. | Automated, high-density data logging: performance metrics, movement paths, response latencies, and decision-making sequences [3]. |
| Sensitivity to Subtle Deficits | Less effective at detecting subtle changes in EF and early cognitive decline in healthy or non-clinical populations [1]. | Enhanced sensitivity; can detect prodromal stages of cognitive decline and subtle intraindividual changes [1] [3]. |
| Patient Engagement | Can be low due to the repetitive, abstract nature of the tasks [3]. | High; immersive and gamified elements capture attention and improve motivation [1] [3]. |
| Key Limitations | Low generalizability to daily life, task impurity, cultural/educational biases [2] [7]. | Cybersickness (e.g., dizziness, nausea), high costs, lack of standardized protocols, and technical challenges [1] [3]. |

Experimental Protocols and Validation Data

Protocol: The Virtual Multiple Errands Test (VMET)

The VMET is a prime example of a function-led assessment designed to bridge the ecological validity gap.

- Objective: To assess executive functions like planning, cognitive flexibility, and inhibitory control within a simulated daily task context [1].

- Methodology: Participants are immersed in a virtual environment (e.g., a shopping district or supermarket) via a Head-Mounted Display (HMD). They are given a set of errands to complete (e.g., "buy a loaf of bread," "find out the time of a movie"), but must adhere to specific rules (e.g., "items must be bought in a particular order," "certain zones are off-limits") [1].

- Data Collected: The system automatically logs a rich dataset, including:

  - Task Completion Time: Total time to complete all errands.

  - Rule Breaks: Number of times pre-established rules were violated.

  - Task Sequencing Errors: Inefficiencies or errors in the order of completed tasks.

  - Navigation Efficiency: Pathfinding routes and distances traveled.

- Validation Data: Studies validate the VMET by comparing its outcomes with both traditional executive function tests (e.g., Trail Making Test, Stroop) and measures of real-world functioning, such as caregiver reports or direct observation of daily activities [1]. Correlations with traditional tests address concurrent validity, whereas correlations with real-world measures address veridicality; verisimilitude stems from the design of the task itself.
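The outcome variables listed above can be derived automatically from a session log. The Python sketch below is illustrative only: it assumes a hypothetical log made of timestamped floor-plane positions, the order in which errands were completed, and a pre-counted number of rule breaks. The scoring rules (position-by-position sequencing errors, efficiency as optimal over travelled distance) are our assumptions, not the published VMET scoring.

```python
import math
from dataclasses import dataclass


@dataclass
class VmetSession:
    """Hypothetical per-participant log; real VMET versions define their own formats."""
    positions: list[tuple[float, float, float]]  # (time_s, x_m, z_m) on the floor plane
    errand_order: list[str]    # errands in the order the participant completed them
    required_order: list[str]  # order imposed by the task rules
    rule_breaks: int           # logged rule violations (e.g., entering an off-limits zone)


def path_length(positions):
    """Total distance travelled: sum of straight-line steps between consecutive samples."""
    return sum(math.dist(a[1:], b[1:]) for a, b in zip(positions, positions[1:]))


def sequencing_errors(done, required):
    """Assumed rule: one error per position where the completed errand differs from
    the required one, plus one per missing or extra errand."""
    mismatches = sum(1 for d, r in zip(done, required) if d != r)
    return mismatches + abs(len(required) - len(done))


def summarize(session, optimal_path_m):
    """Return the four VMET-style outcome variables for one session."""
    travelled = path_length(session.positions)
    return {
        "completion_time_s": session.positions[-1][0] - session.positions[0][0],
        "rule_breaks": session.rule_breaks,
        "sequencing_errors": sequencing_errors(session.errand_order, session.required_order),
        # 1.0 = shortest possible route; lower values indicate detours.
        "navigation_efficiency": optimal_path_m / travelled if travelled > 0 else float("nan"),
    }
```

Navigation efficiency here needs an externally supplied optimal route length (`optimal_path_m`), for example computed from the environment's navigation mesh; that input is also an assumption.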

Protocol: Virtual Reality Spatial Navigation Assessment

Spatial memory and navigation are critical cognitive functions highly relevant to daily life and early indicators of conditions like Alzheimer's disease.

- Objective: To assess spatial memory and learning by evaluating a participant's ability to form and use a cognitive map of a virtual environment [8].

- Methodology: Using a virtual Morris Water Maze or a town square paradigm, participants must learn the location of a hidden target or a specific route across multiple trials. These tasks tap into egocentric (body-centered) and allocentric (world-centered) spatial reference frames [8].

- Data Collected:

  - Learning Curve: Reduction in time or path length to target over trials.

  - Search Strategy: Qualitative analysis of the paths taken (e.g., direct vs. random searching).

  - Probe Trial Performance: Time spent in the correct quadrant when the target is removed, indicating memory retention.

- Validation Data: Performance on these VR tasks shows stronger correlations with real-world wayfinding ability than traditional paper-based spatial tests [8]. Furthermore, these tasks have proven sensitive in differentiating patients with Mild Cognitive Impairment (MCI) from healthy older adults, often more so than traditional measures [4] [8].
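The learning-curve and probe-trial measures can be computed from position samples, as in the sketch below. It assumes 2D (x, y) coordinates sampled at a constant rate, an arena centred at a known point, x increasing eastward and y northward. The function names and quadrant convention are hypothetical, and search-strategy classification is left out because it typically relies on qualitative or study-specific criteria.

```python
import math


def path_length(xy):
    """Distance travelled along a trajectory of (x, y) samples."""
    return sum(math.dist(a, b) for a, b in zip(xy, xy[1:]))


def learning_curve(trials):
    """Path length per learning trial; a downward trend indicates spatial learning."""
    return [path_length(t) for t in trials]


def quadrant(x, y, cx, cy):
    """Quadrant relative to the arena centre (cx, cy): 0=NE, 1=NW, 2=SW, 3=SE."""
    if x >= cx:
        return 0 if y >= cy else 3
    return 1 if y >= cy else 2


def time_in_target_quadrant(probe, target_quadrant, center=(0.0, 0.0)):
    """Share of probe-trial samples in the quadrant that held the (now removed) target.

    With a constant sampling rate, the share of samples equals the share of time.
    Chance level is 0.25.
    """
    hits = sum(1 for x, y in probe if quadrant(x, y, *center) == target_quadrant)
    return hits / len(probe)
```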

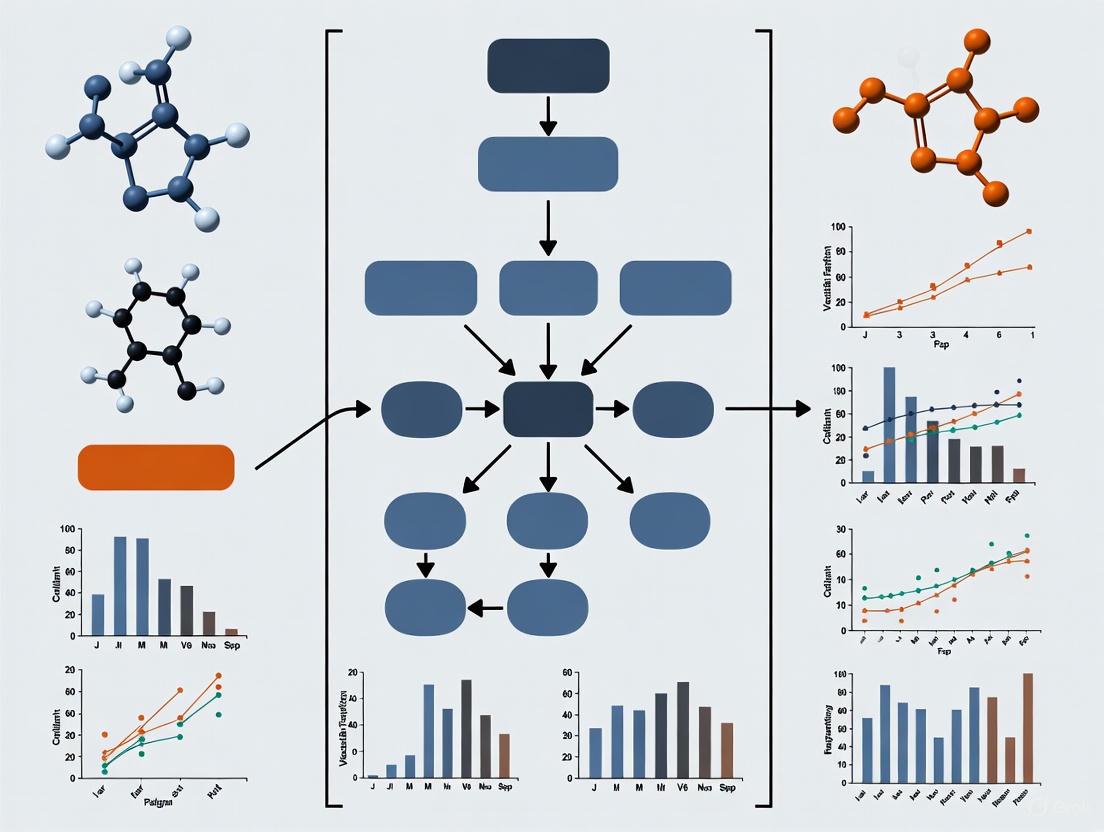

Visualizing the Assessment Workflow

The following diagram illustrates the logical workflow and key differentiators when employing traditional versus immersive VR assessment paradigms.

The Scientist's Toolkit: Essential Research Reagents & Solutions

For researchers aiming to develop or implement immersive VR assessments for executive function, the following tools and considerations are essential.

Table 2: Key Research Reagent Solutions for Immersive VR Assessment Development

| Item / Solution | Function & Rationale | Examples / Specifications |
|---|---|---|
| Head-Mounted Display (HMD) | Provides visual and auditory immersion, creating a sense of "presence" in the virtual environment, which is crucial for ecological validity [5] [6]. | Oculus Rift/Meta Quest, HTC Vive, Valve Index. Key specs: resolution, field of view, refresh rate, integrated audio. |
| Spatial Audio System | Renders 3D sound to enhance realism and provide critical cues for navigation and situational awareness [9]. | First-Order Ambisonics (FOA) with head-tracking; binaural audio playback. |
| Game Engine | Software platform for designing, building, and deploying interactive virtual environments. | Unity 3D, Unreal Engine; both allow control over stimuli, task logic, and data logging. |
| Data Logging Framework | Automated capture of granular behavioral data beyond simple accuracy, which is a key advantage of VR [3]. | Custom scripts within game engines to record timestamps, object interactions, player coordinates, and task outcomes. |
| Validation Battery | A set of established measures used to validate the new VR tool against traditional tests and real-world outcomes (veridicality) [1]. | NIH EXAMINER [10], Trail Making Test (TMT), Stroop Test, self-report or informant-based measures of daily functioning. |
| Cybersickness Questionnaire | Monitors potential adverse effects (e.g., nausea, dizziness) that can threaten data validity and participant comfort [1]. | Simulator Sickness Questionnaire (SSQ) or similar. |
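Scoring the SSQ follows a fixed weighting scheme. The sketch below reflects the widely used Kennedy et al. (1993) scoring as we understand it: 16 symptoms rated 0 to 3, each contributing to one or two of the Nausea (N), Oculomotor (O), and Disorientation (D) subscales. Verify the item mapping and weights against the instrument version used in a given study before relying on it.

```python
# SSQ items mapped to the subscales they load on (N = nausea, O = oculomotor,
# D = disorientation), following the Kennedy et al. (1993) scheme as we understand it.
SSQ_ITEMS = {
    "general discomfort": "NO",
    "fatigue": "O",
    "headache": "O",
    "eyestrain": "O",
    "difficulty focusing": "OD",
    "increased salivation": "N",
    "sweating": "N",
    "nausea": "ND",
    "difficulty concentrating": "NO",
    "fullness of head": "D",
    "blurred vision": "OD",
    "dizzy (eyes open)": "D",
    "dizzy (eyes closed)": "D",
    "vertigo": "D",
    "stomach awareness": "N",
    "burping": "N",
}
WEIGHTS = {"N": 9.54, "O": 7.58, "D": 13.92}
TOTAL_WEIGHT = 3.74


def score_ssq(ratings: dict[str, int]) -> dict[str, float]:
    """Weighted subscale and total scores from 0-3 symptom ratings."""
    raw = {"N": 0, "O": 0, "D": 0}
    for item, loads in SSQ_ITEMS.items():
        rating = ratings[item]
        if rating not in (0, 1, 2, 3):
            raise ValueError(f"{item}: rating must be 0-3, got {rating}")
        for sub in loads:
            raw[sub] += rating
    scores = {sub: raw[sub] * WEIGHTS[sub] for sub in raw}
    # The total uses the sum of the three raw subscale sums, not the weighted scores.
    scores["Total"] = (raw["N"] + raw["O"] + raw["D"]) * TOTAL_WEIGHT
    return scores
```

Administering the SSQ before and after exposure and analyzing the change score is one common way to separate VR-induced symptoms from baseline discomfort.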

The evidence indicates a clear and significant ecological validity gap in traditional neuropsychological tests. While they remain valuable for specific diagnostic purposes, their ability to predict real-world functioning is limited. Immersive VR assessments emerge as a powerful complementary paradigm, offering enhanced ecological validity through realistic simulations, engaging multi-sensory environments, and the capacity for rich, automated data collection. For researchers and clinicians focused on understanding cognitive health in the context of daily life, VR technology provides a transformative toolset. Future work must prioritize standardizing VR protocols, mitigating cybersickness, and conducting rigorous psychometric validation to fully integrate these tools into clinical and research practice, ultimately bridging the gap between the clinic and the real world.

Executive functions (EFs) are higher-order cognitive processes essential for goal-directed behavior, controlling and coordinating a wide range of mental processes and everyday behaviors [11]. Within neuropsychology, a consensus identifies three core executive functions: inhibition (the ability to suppress irrelevant stimuli or automatic responses), cognitive flexibility (the capacity to shift between mental sets or tasks), and working memory (a system for temporarily storing and manipulating information) [12] [11]. These core functions form the foundation for more complex, higher-order EFs such as reasoning, planning, and problem-solving [11]. Traditionally, these cognitive domains have been assessed using standardized paper-and-pencil or computerized neuropsychological tests. However, these traditional methods have been criticized for their limited ecological validity—their poor ability to predict real-world functioning and represent the complexity of daily life tasks [12] [11].

Virtual reality (VR) technology presents a paradigm shift in cognitive assessment and training by creating immersive, interactive, and ecologically valid environments. VR addresses fundamental limitations of traditional methods by simulating real-world activities in controlled settings, thereby enhancing the generalizability of findings to daily functioning [12] [13]. A key advantage of VR is its capacity to provide a safe environment for practicing skills while allowing for objective, automatic measurement of responses [12]. Furthermore, the immersive nature of VR can heighten user engagement and motivation, which are critical factors for effective cognitive training and assessment [14] [15]. This guide provides a comparative analysis of VR-based approaches for evaluating and training the core executive functions, synthesizing current experimental data and methodologies to inform researchers and drug development professionals.

Comparative Efficacy: VR vs. Traditional Executive Function Assessments

The validity and effectiveness of VR-based tools are supported by a growing body of meta-analytic evidence and individual empirical studies. The following table summarizes key quantitative findings comparing VR-based and traditional approaches to executive function assessment and training.

Table 1: Comparative Validity and Efficacy of VR-Based Executive Function Assessments and Interventions

| Core Executive Function | Study Population | Key Comparative Finding | Effect Size / Correlation | Source |
|---|---|---|---|---|
| Overall Executive Function | Mixed (Healthy & Clinical) | Significant correlation between VR-based assessments and traditional paper-and-pencil tests [12]. | Statistically significant correlations (meta-analysis) [12] | PMC11595626 |
| Working Memory & Inhibitory Control | Young Adults with Intellectual Developmental Disabilities (IDD) | Significant improvement after VR-based cognitive training in a pilot study [16]. | Improvement post-VR training [16] | Healthcare12171705 |
| Global Cognitive Function | Older Adults with Mild Cognitive Impairment (MCI) | VR interventions significantly improved global cognition compared to control groups [17]. | Hedges' g = 0.6 (95% CI: 0.29 to 0.90) [17] | PMC12634598 |
| Global Memory & Executive Functioning | Individuals with Substance Use Disorders (SUD) | Significant improvement when VR cognitive training was added to treatment as usual [13]. | Statistically significant time × group interaction (p < 0.001) [13] | fnbeh.2025.1653783 |
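For readers less familiar with the effect-size metric in the table, the sketch below computes Hedges' g for a single two-group comparison from hypothetical summary statistics. It uses the pooled-SD standardized mean difference, the common small-sample correction J = 1 - 3/(4(n1 + n2) - 9), and a normal-approximation 95% CI. It does not reproduce the pooled g = 0.6, which combines many study-level estimates.

```python
import math


def hedges_g(m1, sd1, n1, m2, sd2, n2, z=1.96):
    """Bias-corrected standardized mean difference with an approximate 95% CI."""
    # Pooled standard deviation across the two groups.
    sp = math.sqrt(((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2))
    d = (m1 - m2) / sp
    # Small-sample correction factor J shrinks d, which is biased upward in small samples.
    j = 1 - 3 / (4 * (n1 + n2) - 9)
    g = j * d
    # Large-sample approximation to the sampling variance of d, rescaled by J^2.
    var_g = j**2 * ((n1 + n2) / (n1 * n2) + d**2 / (2 * (n1 + n2)))
    half_width = z * math.sqrt(var_g)
    return g, (g - half_width, g + half_width)
```

For example, `hedges_g(10, 2, 20, 8, 2, 20)` (treatment mean 10, control mean 8, SD 2, 20 per group) gives d = 1.0 and g slightly below 1.0 after correction.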

Beyond validity, the type of VR technology used appears to moderate efficacy, particularly in clinical populations. A systematic review and network meta-analysis focusing on older adults with Mild Cognitive Impairment (MCI) compared the effectiveness of different VR technologies and found that all types significantly improved global cognition compared to control groups. However, their relative efficacy varied, as detailed in the table below.

Table 2: Comparative Efficacy of VR Immersion Levels on Global Cognition in MCI

| VR Immersion Level | Description | Relative Efficacy in MCI | Cumulative Ranking (SUCRA) | Source |
|---|---|---|---|---|
| Semi-Immersive VR | Large screen-based simulations with partial sensory involvement [15]. | Most effective for improving global cognition [18]. | 87.8% [18] | ScienceDirect 152586102500458X |
| Non-Immersive VR | Computer-based applications with minimal sensory integration [15]. | Second most effective [18]. | 84.2% [18] | ScienceDirect 152586102500458X |
| Immersive VR | Head-Mounted Displays (HMDs) providing multisensory engagement [15]. | Less effective than semi- and non-immersive types [18]. | 43.6% [18] | ScienceDirect 152586102500458X |
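SUCRA values summarize the rank probabilities produced by a network meta-analysis: 100% would mean a treatment is certainly the best and 0% certainly the worst. The sketch below computes SUCRA from a hypothetical rank-probability matrix; the percentages in the table come from the cited review [18], not from this code. SUCRA reflects relative ranking, not the size of the effect.

```python
def sucra(rank_probs):
    """SUCRA for each treatment from a matrix of rank probabilities.

    rank_probs[j][b] is the probability that treatment j is ranked (b + 1)-th best.
    SUCRA_j is the mean, over b = 1..a-1, of the cumulative probability that
    treatment j is among the b best, where a is the number of treatments.
    """
    a = len(rank_probs)
    out = []
    for probs in rank_probs:
        if abs(sum(probs) - 1.0) > 1e-6:
            raise ValueError("each row of rank probabilities must sum to 1")
        cum, total = 0.0, 0.0
        for p in probs[:-1]:  # the last cumulative value is always 1 and is excluded
            cum += p
            total += cum
        out.append(total / (a - 1))
    return out
```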

Experimental Protocols for VR-Based Executive Function Assessment

A critical step in establishing the utility of VR tools is their validation against established gold-standard measures. The following section outlines detailed methodologies from key studies that have developed and validated VR-based paradigms for assessing core EFs.

Protocol 1: Meta-Analytic Validation of VR-Based EF Assessments

A 2024 meta-analysis investigated the concurrent validity of VR-based assessments of executive function against traditional neuropsychological tests [12].

- Objective: To investigate the concurrent validity between VR-based assessments and traditional neuropsychological assessments of executive function and its subcomponents (cognitive flexibility, attention, and inhibition) [12].

- Literature Search: A systematic search of PubMed, Web of Science, and ScienceDirect was conducted for articles published from 2013 to 2023. Keywords included "Virtual Reality" AND "Executive function*" [12].

- Study Selection: From 1605 initially identified articles, nine studies meeting the inclusion criteria were selected. Criteria included the use of VR-based EF assessments, publication in English, and provision of sufficient data to calculate correlation coefficients with traditional tests [12].

- Data Analysis: Pearson's r correlation values were extracted and transformed into Fisher's z values for analysis using Comprehensive Meta-Analysis Software (CMA) Version 3. Heterogeneity was assessed using I², with random-effects models applied when heterogeneity was high [12].

- Key Outcomes: The results revealed statistically significant correlations between VR-based assessments and traditional measures across all executive function subcomponents, supporting the concurrent validity of VR tools [12].
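The analysis steps above (Fisher's z transformation, an I² heterogeneity check, and random-effects pooling) can be sketched as follows. This minimal version uses the DerSimonian-Laird estimator, one common random-effects method; we assume, but have not verified, that it approximates what CMA reports, and any inputs are hypothetical study-level correlations and sample sizes.

```python
import math


def pool_correlations(rs, ns):
    """Random-effects pooled correlation via Fisher's z and DerSimonian-Laird."""
    # Fisher's r-to-z transform; the sampling variance of z is about 1 / (n - 3).
    zs = [math.atanh(r) for r in rs]
    vs = [1.0 / (n - 3) for n in ns]
    w = [1.0 / v for v in vs]

    # Fixed-effect estimate and Cochran's Q for heterogeneity.
    z_fe = sum(wi * zi for wi, zi in zip(w, zs)) / sum(w)
    q = sum(wi * (zi - z_fe) ** 2 for wi, zi in zip(w, zs))
    df = len(rs) - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0

    # DerSimonian-Laird estimate of the between-study variance tau^2.
    c = sum(w) - sum(wi**2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0

    # Random-effects weights add tau^2 to each study's own variance.
    w_re = [1.0 / (v + tau2) for v in vs]
    z_re = sum(wi * zi for wi, zi in zip(w_re, zs)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    lo, hi = z_re - 1.96 * se, z_re + 1.96 * se

    # Back-transform to the correlation scale for reporting.
    return {"r": math.tanh(z_re), "ci": (math.tanh(lo), math.tanh(hi)), "I2": i2, "tau2": tau2}
```

When I² is near zero, tau² collapses to zero and the random-effects estimate equals the fixed-effect one, which is why a random-effects model is a safe default when heterogeneity is uncertain.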

Protocol 2: The Corsi Block-Tapping Task (CBTT) in VR

A 2024 pilot study utilized a classic paradigm adapted for a non-immersive screen to assess visuospatial working memory in young adults with intellectual developmental disabilities [16].

- Objective: To measure visuospatial short-term and working memory [16].

- Task Procedure:

  - Setup: Nine squares are displayed on a screen [16].

  - Encoding Phase: The squares light up in a variable sequence, one by one [16].

  - Recall Phase: The participant must reproduce the sequence by selecting the squares in the correct order [16].

  - Task Progression: Sequence length varies between two and eight elements depending on the participant's performance; the test typically uses 20 sequences [16].

- Outcome Measures: The primary outcome is the number of correct sequences (score range 0-20), with a higher score indicating better visuospatial working memory [16].
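The adaptive procedure described above can be sketched as follows. The source specifies nine blocks, lengths from two to eight, and 20 sequences; the 1-up/1-down length rule, the starting length of two, and forward (same-order) recall are assumptions for illustration, and `respond` is a hypothetical stand-in for however the interface collects the participant's taps.

```python
import random

N_BLOCKS = 9
MIN_LEN, MAX_LEN = 2, 8
N_TRIALS = 20


def next_length(current, correct):
    """Hypothetical 1-up/1-down rule: lengthen after a correct recall, shorten after an error."""
    step = 1 if correct else -1
    return min(MAX_LEN, max(MIN_LEN, current + step))


def make_sequence(length, rng):
    """Random sequence of distinct block indices (0-8) to be lit one by one."""
    return rng.sample(range(N_BLOCKS), length)


def run_session(respond, seed=0, start_len=MIN_LEN):
    """Present N_TRIALS sequences; respond(seq) returns the tapped order.

    Returns the number of exactly reproduced sequences (0-20) and the lengths shown.
    """
    rng = random.Random(seed)
    length, score, lengths = start_len, 0, []
    for _ in range(N_TRIALS):
        seq = make_sequence(length, rng)
        lengths.append(length)
        correct = respond(seq) == seq  # forward recall: order must match exactly
        score += correct
        length = next_length(length, correct)
    return score, lengths
```

A simulated participant who always succeeds climbs to length 8 and scores 20; one whose span is four oscillates between lengths 4 and 5 after the first three trials. A backward-recall variant would compare the response with `list(reversed(seq))` instead.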

Protocol 3: The Stop Signal Task (SST) in VR

The same pilot study used the SST to assess response inhibition, a key facet of the core executive function of inhibition [16].

- Objective: To assess response inhibition, the ability to cancel a prepotent motor response [16].

- Task Procedure:

  - Go Task: Participants are instructed to respond quickly to a left or right arrow presentation by pressing corresponding keys ('q' for left, 'p' for right) [16].

  - Stop Task: On a subset of trials, a "stop signal" (e.g., an auditory tone) is presented shortly after the arrow, instructing the participant to withhold the keypress [16].

  - Tracking Algorithm: The delay between the go signal and the stop signal (the stop-signal delay, SSD) is typically adjusted based on performance to maintain a specific success rate (e.g., 50% successful stops) [16].

- Outcome Measures: The primary outcome is the Stop Signal Reaction Time (SSRT), which estimates the speed of the internal inhibitory process. A shorter SSRT indicates better inhibitory control [16].
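The tracking procedure and SSRT estimate can be sketched as follows. After a successful stop the delay increases (making the next stop harder) and after a failed stop it decreases, which drives stopping success toward about 50%. The 50 ms step, the 0 to 900 ms bounds, and all function names are hypothetical. The estimator shown is the integration method (the go RT at the quantile given by the probability of responding on stop trials, minus the mean SSD), one of several estimators in use; it omits refinements such as handling go omissions.

```python
import math


def update_ssd(ssd_ms, stopped, step_ms=50, lo=0, hi=900):
    """One-up/one-down staircase on the stop-signal delay (SSD)."""
    ssd_ms += step_ms if stopped else -step_ms
    return max(lo, min(hi, ssd_ms))


def ssrt_integration(go_rts, ssds, stop_failed):
    """Estimate SSRT (ms) with the integration method.

    go_rts: reaction times on go trials (omissions excluded in this simplified sketch)
    ssds: stop-signal delay on each stop trial
    stop_failed: True where the participant responded despite the stop signal
    """
    p_respond = sum(stop_failed) / len(stop_failed)
    rts = sorted(go_rts)
    # The go RT at quantile p(respond | signal) approximates when inhibition finishes.
    idx = max(0, math.ceil(p_respond * len(rts)) - 1)
    return rts[idx] - sum(ssds) / len(ssds)
```

The simpler mean method (mean go RT minus mean SSD) gives similar values when tracking holds stopping success near 50%.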

The following diagram illustrates the typical workflow for validating a VR-based executive function assessment, synthesizing the key steps from the described protocols.

The Scientist's Toolkit: Essential Reagents for VR EF Research

Implementing a rigorous VR-based executive function research program requires specific technological components and methodological considerations. The table below details key solutions and their functions.

Table 3: Key Research Reagent Solutions for VR Executive Function Research

| Tool / Solution | Function in VR EF Research | Representative Examples |
|---|---|---|
| Head-Mounted Display (HMD) | Provides immersive visual and auditory stimulation, creating a sense of presence in the virtual environment. | Oculus Rift, HTC Vive [19] [15] |
| VR Software Development Platform | Enables the creation of custom virtual environments and cognitive tasks tailored to specific research questions. | Unity, Unreal Engine |
| Traditional Neuropsychological Tests | Serve as the gold standard for validating VR-based assessments through correlation analysis. | Trail Making Test (TMT), Stroop Color-Word Test (SCWT), Corsi Block-Tapping Task [12] [16] [11] |
| Cybersickness Questionnaire | Monitors adverse effects such as dizziness and nausea that can confound cognitive performance data. | Simulator Sickness Questionnaire (SSQ) [11] |
| User Experience Questionnaire | Assesses subjective engagement, presence, and immersion, which are thought to mediate intervention efficacy. | Custom or standardized usability scales [11] |
| Performance Data Logging | Automatically records objective, high-fidelity data on user behavior and task performance within the VR environment. | In-built software analytics capturing reaction time, errors, navigation paths [12] [13] |
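In practice, performance logging lives inside the game engine (for example, a C# script in Unity). The Python sketch below only illustrates one possible event schema and CSV export; the field names and the `SessionLogger` class are hypothetical.

```python
import csv
import io
import time
from dataclasses import asdict, dataclass, fields


@dataclass
class VrEvent:
    """One logged row; this schema is hypothetical and should mirror the task's outcomes."""
    t_s: float     # seconds since session start (monotonic clock)
    event: str     # e.g. "position", "grab", "response", "error"
    x: float = float("nan")
    y: float = float("nan")
    z: float = float("nan")
    detail: str = ""


class SessionLogger:
    """Buffers events in memory and exports them as CSV, one row per event."""

    def __init__(self):
        self._t0 = time.monotonic()
        self.events: list[VrEvent] = []

    def log(self, event, x=float("nan"), y=float("nan"), z=float("nan"), detail=""):
        # A monotonic clock avoids jumps if the system clock is adjusted mid-session.
        self.events.append(VrEvent(time.monotonic() - self._t0, event, x, y, z, detail))

    def to_csv_text(self):
        buf = io.StringIO()
        writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(VrEvent)])
        writer.writeheader()
        for e in self.events:
            writer.writerow(asdict(e))
        return buf.getvalue()
```

Keeping one row per event, rather than one row per trial, preserves the fine-grained behavioral record and lets trial-level scores be recomputed later under different scoring rules.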

When employing these tools, researchers must adhere to several critical methodological considerations. First, validation is paramount; any novel VR assessment must be rigorously validated against established traditional measures to ensure it accurately captures the intended cognitive construct [11]. Second, cybersickness must be proactively monitored and reported, as its symptoms can negatively impact cognitive performance and threaten the validity of the results [11]. Finally, the level of immersion (immersive, semi-immersive, non-immersive) should be selected based on the target population and clinical objectives, as it can significantly influence intervention outcomes [18].

The integration of virtual reality into the assessment and training of core executive functions represents a significant advancement in cognitive neuroscience and neuropsychology. A growing body of evidence indicates that VR-based tools correlate significantly with traditional measures while offering a combination of ecological validity, precise measurement, and heightened user engagement [12] [13] [15]. For researchers and drug development professionals, VR provides a sensitive and functionally relevant platform for detecting subtle cognitive changes and evaluating intervention efficacy. Future work should focus on standardizing VR protocols, establishing robust normative data, and further exploring the neural mechanisms underlying cognitive improvements driven by immersive experiences.

This comparison guide evaluates the performance of immersive Virtual Reality (VR) assessments of executive function against traditional neuropsychological tests. Framed within the broader thesis of psychometric validation for immersive VR, we synthesize current evidence suggesting that VR-based assessments offer superior ecological validity, with the potential to predict real-world functional outcomes more effectively than conventional paper-and-pencil tests. Data from meta-analyses and controlled trials across clinical and healthy populations indicate that VR assessments show significant concurrent validity with traditional measures while capturing cognitive-behavioral complexities that traditional methods miss. This analysis provides researchers and drug development professionals with an evidence-based framework for adopting VR technologies that enhance the predictive power of neuropsychological evaluations.

Executive functions (EF), the higher-order cognitive processes that include inhibition, cognitive flexibility, and working memory, are crucial for managing daily activities across various populations. Traditional neuropsychological assessments, such as the Trail Making Test (TMT), Stroop Color-Word Test (SCWT), and Wisconsin Card Sorting Test (WCST), have long been the gold standard for evaluating EF in clinical and research settings [12]. Despite their robust psychometric properties and widespread use, these traditional methods have limited ecological validity, meaning their results generalize poorly to real-world functioning [12] [1]. This limitation arises because traditional tests abstract cognitive processes into isolated, non-contextual tasks that fail to simulate the complexity of everyday activities [12]. Consequently, they account for only 18% to 20% of the variance in everyday executive ability, creating a significant gap between clinic-based assessments and real-world cognitive functioning [1].
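Because variance explained is a squared correlation, the 18% to 20% figure translates into only moderate test-to-function correlations. Assuming the figure is an R² between test scores and everyday-function measures, the implied correlation is:

```latex
R^2 = r^2 \in [0.18,\ 0.20] \;\Longrightarrow\; r = \sqrt{R^2} \in \left[\sqrt{0.18},\ \sqrt{0.20}\right] \approx [0.42,\ 0.45]
```

Put differently, roughly 80% of the variance in everyday executive ability is left unexplained by these tests.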

Immersive VR technology addresses this fundamental limitation by creating controlled, interactive environments that replicate real-world scenarios. By situating cognitive assessment within simulated daily activities—such as navigating a virtual kitchen or planning tasks in a virtual environment—VR-based assessments introduce representativeness (verisimilitude), a key component of ecological validity [1]. This paradigm shift enables more accurate prediction of functional outcomes, a critical requirement for both clinical diagnostics and evaluating cognitive outcomes in pharmaceutical trials.

Performance Comparison: VR vs. Traditional Assessment Modalities

The following analysis compares immersive VR-based executive function assessments against traditional paper-and-pencil tests across key psychometric and practical dimensions, synthesizing findings from recent meta-analyses and empirical studies.

Table 1: Comparative Analysis of Assessment Modalities

| Feature | Traditional Paper-and-Pencil Tests | Immersive VR-Based Assessments |
|---|---|---|
| Ecological Validity | Low; abstract tasks with limited real-world resemblance [1] | High; immersive simulations of daily activities [12] |
| EF Subcomponent Correlation | Serve as the reference measures in concurrent-validity studies [12] | Significant correlations with traditional measures across all EF subcomponents [12] |
| Real-World Variance Accounted For | 18-20% of variance in everyday executive ability [1] | Expected to be higher, but not quantified in the studies reviewed here |
| Contextual Control | Limited; standardized administration but lacking real-world context [12] | High; controlled environments simulating real-world challenges [1] |
| Assessment Capabilities | Primarily isolated cognitive functions [1] | Integrated cognitive-motor metrics in realistic scenarios [12] |
| Participant Engagement | Subject to boredom, fatigue, and variable effort [1] | Enhanced immersion and attention capture [1] |
| Data Collection Richness | Limited to accuracy, response time, and error counts | Multi-dimensional: movement tracking, decision pathways, and behavioral metrics |

Table 2: Quantitative Outcomes from Clinical and Healthy Populations

| Study Population | Intervention | Cognitive Domain (Measure) | Post-Intervention Finding | Follow-Up Finding / Test Statistic | Reported Significance |
|---|---|---|---|---|---|
| PD-MCI [20] | 4-week iVR EF training | Prospective Memory | Improved in time-based and verbal-response tasks | Significant improvements sustained at 2-month follow-up | p < 0.05 |
| PD-MCI [20] | 4-week iVR EF training | Inhibition (Stroop Test) | Significant improvements post-intervention | Effects sustained at 2-month follow-up | p < 0.05 |
| Healthy Older Adults [20] | 4-week iVR EF training | Planning (Zoo Map Test) | Significant improvements post-intervention | Effects sustained at 2-month follow-up | p < 0.05 |
| Substance Use Disorders [21] | 6-week VR cognitive training | Global Memory | Statistically significant time × group interaction | F(1, 75) = 36.42 | p < 0.001 |
| Substance Use Disorders [21] | 6-week VR cognitive training | Overall Executive Function | Statistically significant time × group interaction | F(1, 75) = 20.05 | p < 0.001 |

Experimental Protocols and Methodologies

Meta-Analytic Validation of Concurrent Validity

A 2024 meta-analysis investigated the concurrent validity between VR-based and traditional neuropsychological assessments of executive function, focusing specifically on subcomponents including cognitive flexibility, attention, and inhibition [12].

Methodology:

- Literature Search: Systematic searches of PubMed, Web of Science, and ScienceDirect databases (2013-2023) identified 1605 articles, with 9 studies meeting full inclusion criteria after screening.

- Inclusion Criteria: Studies required to use both VR-based assessments and traditional executive function measures, provide sufficient correlation data, and be published in English as full-text articles.

- Quality Assessment: Two independent reviewers assessed study quality using the QUADAS-2 (Quality Assessment of Diagnostic Accuracy Studies 2) checklist.

- Data Analysis: Comprehensive Meta-Analysis Software (CMA) Version 3 was employed to examine associations. Pearson's r values were transformed into Fisher's z for analysis, with random-effects models applied due to high heterogeneity (I² > 50%).

- Sensitivity Analysis: Conducted to confirm robustness of findings after excluding lower-quality studies.

Key Findings: The analysis revealed statistically significant correlations between VR-based assessments and traditional measures across all executive function subcomponents, supporting the concurrent validity of VR assessments as alternatives to traditional methods [12].

VR Cognitive Training in Substance Use Disorders

A 2025 study evaluated the effectiveness of a 6-week VR-based cognitive training program (VRainSUD-VR) on neuropsychological outcomes in individuals with Substance Use Disorders (SUD) [21].

Methodology:

- Design: Non-randomized design with control group, pre- and post-test assessments, and convenience sampling.

- Participants: 47 patients with SUD assigned to either experimental group (EG: VRainSUD-VR + treatment as usual, n=25) or control group (CG: treatment as usual only, n=22).

- Intervention: The VRainSUD-VR program was implemented by trained psychologists from the treatment center over 6 weeks.

- Assessment: Cognitive and treatment outcomes (e.g., dropout rates) were assessed at pre- and post-test. Evaluation was conducted by different professionals than those implementing the intervention to reduce bias.

- Measures: Executive functioning, global memory, and processing speed were assessed using standardized neuropsychological measures.

- Statistical Analysis: Time × group interactions were tested, with significance set at p < 0.05.

Key Findings: Statistically significant time × group interactions were found for overall executive functioning [F(1, 75) = 20.05, p < 0.001] and global memory [F(1, 75) = 36.42, p < 0.001], suggesting that adding the VR intervention improved cognitive functions in this SUD sample, although the non-randomized design limits causal inference [21].

VR Cognitive Training in Parkinson's Disease and Healthy Aging

A 2025 multicenter, double-blind randomized controlled trial evaluated the efficacy of immersive VR cognitive training targeting executive functions in Parkinson's disease patients with mild cognitive impairment (PD-MCI) and healthy older adults (HC) [20].

Methodology:

- Design: Double-blind randomized controlled trial with 2-month follow-up.

- Participants: 30 PD-MCI patients randomized into cognitive training (PD-CT) or active placebo (PD-AP) groups; 30 age- and education-matched healthy controls assigned to cognitive training (HC-CT) or active placebo (HC-AP) groups.

- Intervention: 4-week executive function training delivered at home through a combined approach of telemedicine and immersive VR.

- Measures: Prospective memory (assessed using time-based and verbal-response tasks), executive functions (assessed using Stroop test, Zoo Map test), with effects tracked at post-intervention and 2-month follow-up.

- Statistical Analysis: Linear mixed-effects models (LME) and regression analyses to identify drivers of improvement.

Key Findings: The PD-CT group exhibited significant improvements in prospective memory and inhibition abilities (Stroop test) with effects sustained at 2-month follow-up. The HC-CT group showed improvements in planning abilities (Zoo Map test). Regression analyses revealed that prospective memory enhancements were primarily driven by improved inhibition and shifting abilities [20].

Conceptual Framework and Workflows

VR Assessment Predictive Advantage

VR Mechanisms for Real-World Prediction

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Research Reagent Solutions for VR Executive Function Assessment

| Tool/Reagent | Function/Application in VR EF Research |
|---|---|
| Immersive VR Head-Mounted Display (HMD) | Creates fully immersive 3D environments for ecological assessment; replaces real-world sensory input with controlled virtual stimuli [1]. |
| VR Executive Function Tasks (e.g., CAVIR) | Interactive virtual scenarios (e.g., kitchen tasks) that assess daily life cognitive functions; provide objective measurement of real-world functional capacity [12]. |
| Traditional Neuropsychological Batteries (D-KEFS, CANTAB) | Gold-standard reference measures for establishing concurrent validity of VR assessments; include tests such as Trail Making Test and Stroop Test [12]. |
| Cybersickness Monitoring Tools | Essential for controlling adverse effects (dizziness, nausea) that may confound cognitive performance metrics in VR environments [1]. |
| Telemedicine Integration Platforms | Enable remote administration of VR assessments and interventions; enhance accessibility for home-based testing and monitoring [20]. |
| Data Acquisition and Analytics Software | Captures multi-dimensional performance metrics (response accuracy, movement tracking, decision latency) for comprehensive cognitive profiling [12]. |
| User Experience Assessment Measures | Evaluate participant engagement, presence, and immersion factors that influence cognitive performance in VR environments [1]. |

The evidence synthesized in this comparison guide demonstrates that immersive VR-based assessments of executive function represent a significant advancement over traditional neuropsychological tests, primarily through their enhanced capacity for real-world functional prediction. Quantitative data across multiple clinical populations—including Parkinson's disease, substance use disorders, and healthy aging—consistently show that VR assessments maintain strong concurrent validity with traditional measures while addressing their fundamental limitation of ecological validity.

For researchers and drug development professionals, these findings have substantial implications. VR technologies offer a methodology for evaluating cognitive outcomes in clinical trials that more accurately predicts how patients will function in daily life, potentially providing more sensitive measures of treatment efficacy. The ability to detect subtle cognitive changes through immersive, ecologically valid assessments could accelerate the development of cognitive-enhancing interventions and provide more meaningful endpoints for clinical trials across neurological and psychiatric conditions.

Executive functions (EF) are higher-order cognitive processes essential for guiding, directing, and managing cognition, emotion, and behavior to achieve goals. These include core components such as inhibitory control, cognitive flexibility, and working memory, which collectively support complex functions like reasoning, planning, and problem-solving [1]. Traditional neuropsychological assessments of EF, while well-validated, face significant criticism for their limited ecological validity—they often fail to predict real-world functioning accurately because they isolate cognitive processes in artificial, controlled environments that lack the dynamic complexity of daily life [1] [22]. This limitation is particularly problematic for detecting subtle cognitive impairments in early-stage or mild conditions, where accurate assessment is crucial for timely intervention [23] [24].

Immersive Virtual Reality (VR) has emerged as a transformative tool for EF assessment, addressing these limitations by creating ecologically valid testing environments. By using head-mounted displays (HMDs) to simulate realistic, multi-sensory scenarios, immersive VR can reproduce the cognitive demands of everyday activities within a controlled, standardized setting [1] [22]. This review systematically evaluates the current landscape of immersive VR-based EF assessments, examining their psychometric properties, comparative efficacy against traditional tools, methodological protocols, and implementation frameworks. The analysis aims to provide researchers and clinicians with a comprehensive evidence base for adopting VR technologies in both clinical practice and cognitive neuroscience research.

Psychometric Properties of Immersive VR EF Assessments

The validation of immersive VR tools for EF assessment involves evaluating key psychometric properties, including construct validity, ecological validity, sensitivity, and reliability. Construct validity is frequently established by correlating VR task performance with traditional neuropsychological tests measuring similar EF constructs. For instance, a study comparing a VR-Stroop task to the Delis-Kaplan Executive Function System (D-KEFS) Color-Word Interference Test found a strong association between the two, supporting the VR task's construct validity for assessing inhibition [22]. Similarly, the EXIT 360° tool demonstrated significant correlations with conventional paper-and-pencil tests like the Trail Making Test and Stroop Test, confirming its convergent validity [23].

Ecological validity, the degree to which test performance predicts real-world functioning, is a major advantage of VR assessments. Studies indicate that VR-based measures correlate more strongly than traditional tests with indices of everyday executive functioning, such as parent-rated behavior questionnaires. In adolescent populations, VR-Stroop task performance was a better predictor of daily EF challenges than paper-and-pencil inhibition tests, capturing real-world cognitive demands more effectively [22]. The Virtual Action Planning-Supermarket (VAP-S), a shopping task, detected executive difficulties in multiple sclerosis patients that were missed by traditional tests, demonstrating superior generalizability to daily life [24].
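A claim that one test predicts everyday functioning better than another is a comparison of two correlations that share a variable: both tests are correlated with the same criterion (for example, BRIEF ratings) and with each other. One standard option is the Meng, Rosenthal and Rubin (1992) Z test. The sketch below implements it assuming both correlations have the same sign and are oriented so that a larger value means stronger prediction; the function name and inputs are illustrative, and [22] may have used a different method.

```python
import math
from statistics import NormalDist


# Hypothetical helper for illustration; not the analysis reported in [22].
def compare_dependent_correlations(r_a, r_b, r_ab, n):
    """Meng, Rosenthal & Rubin (1992) Z test for two correlations sharing one variable.

    r_a:  correlation of predictor A (e.g., VR score) with the criterion (e.g., BRIEF)
    r_b:  correlation of predictor B (e.g., paper test) with the same criterion
    r_ab: correlation between the two predictors
    n:    number of participants with all three scores
    """
    z_a, z_b = math.atanh(r_a), math.atanh(r_b)
    r2_mean = (r_a ** 2 + r_b ** 2) / 2
    f = min(1.0, (1 - r_ab) / (2 * (1 - r2_mean)))
    h = (1 - f * r2_mean) / (1 - r2_mean)
    z = (z_a - z_b) * math.sqrt((n - 3) / (2 * (1 - r_ab) * h))
    p_two_sided = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_two_sided


# Hypothetical values, not results from [22].
z, p = compare_dependent_correlations(r_a=0.50, r_b=0.30, r_ab=0.40, n=100)
print(f"Z = {z:.2f}, p = {p:.3f}")
```

Testing the difference directly avoids the common error of concluding superiority merely because one correlation is significant and the other is not.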

Sensitivity refers to an instrument's ability to detect subtle cognitive deficits. VR tools often show enhanced sensitivity in identifying mild EF impairments in populations such as Parkinson's disease (PD), multiple sclerosis (MS), and mild cognitive impairment (MCI). For example, the EXIT 360° tool distinguished PD patients from healthy controls with higher diagnostic accuracy than traditional tests, indicating its sensitivity to early executive dysfunction [23]. In MS patients, the VAP-S revealed significant impairments in task efficiency during the familiarization phase, whereas traditional tests showed no group differences, highlighting VR's capacity to detect minor executive deficits [24].
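Diagnostic accuracy of this kind is commonly expressed as the area under the ROC curve (AUC), which equals the probability that a randomly chosen patient scores as more impaired than a randomly chosen control. A minimal sketch using the Mann-Whitney formulation, assuming higher scores indicate more impairment (swap the groups or negate scores otherwise); the function name is hypothetical.

```python
# Hypothetical helper for illustration; not the EXIT 360° or VAP-S scoring code.
def auc_mann_whitney(patient_scores, control_scores):
    """AUC = P(patient score > control score), counting ties as one half.

    Assumes higher scores indicate more impairment (e.g., completion time).
    """
    wins = 0.0
    for p in patient_scores:
        for c in control_scores:
            if p > c:
                wins += 1.0
            elif p == c:
                wins += 0.5
    return wins / (len(patient_scores) * len(control_scores))


# Hypothetical reaction times in milliseconds.
print(auc_mann_whitney([410, 455, 390, 470], [350, 380, 400, 360]))  # 0.9375
```

An AUC of 0.5 means chance-level discrimination and 1.0 means perfect separation, so "higher diagnostic accuracy" claims can be compared on a common scale across tools.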

Reliability evidence for VR EF assessments, including test-retest reliability and internal consistency, is less frequently reported. A systematic review noted that many studies fail to address psychometric properties comprehensively, with limited data on reliability [1]. Tools like Nesplora Aquarium have demonstrated robust validity and reduced test fatigue [25]; reduced fatigue may favor more stable scores, although validity evidence alone does not establish reliability. Future studies should prioritize evaluating and reporting reliability metrics to strengthen the psychometric foundation of VR assessments.
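For studies that do collect reliability data, both indices named above can be computed directly. A sketch assuming complete data with participants as rows: `icc_2_1` gives the two-way random-effects, absolute-agreement, single-measure ICC (Shrout and Fleiss ICC(2,1)) for test-retest sessions, and `cronbach_alpha` gives internal consistency across items or trial blocks. Both helper names are hypothetical, and published tools may report other ICC forms.

```python
from statistics import mean, variance


# Hypothetical helper: ICC(2,1) from a two-way ANOVA decomposition.
def icc_2_1(scores):
    """scores: one row per participant, one column per session (k >= 2)."""
    n, k = len(scores), len(scores[0])
    grand = mean(v for row in scores for v in row)
    row_means = [mean(row) for row in scores]
    col_means = [mean(row[j] for row in scores) for j in range(k)]
    ss_rows = k * sum((m - grand) ** 2 for m in row_means)   # between participants
    ss_cols = n * sum((m - grand) ** 2 for m in col_means)   # between sessions
    ss_total = sum((v - grand) ** 2 for row in scores for v in row)
    ss_error = ss_total - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n)


# Hypothetical helper: Cronbach's alpha across items or trial blocks.
def cronbach_alpha(item_scores):
    """item_scores: one row per participant, one column per item (k >= 2)."""
    k = len(item_scores[0])
    item_vars = [variance(row[j] for row in item_scores) for j in range(k)]
    total_var = variance(sum(row) for row in item_scores)
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


sessions = [[10, 12], [12, 14], [15, 17], [9, 11]]  # hypothetical test and retest scores
print(round(icc_2_1(sessions), 3))  # 0.778
```

In this example every participant improves by exactly 2 points at retest, so rank order is perfectly preserved but the absolute-agreement ICC is below 1; that is why the ICC form should always be reported alongside the value.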

Table 1: Key Psychometric Properties of Select Immersive VR EF Assessments

| VR Assessment Tool | Target EF Constructs | Validation Population | Construct Validity Evidence | Ecological Validity Evidence |
|---|---|---|---|---|
| EXIT 360° [23] | Planning, decision-making, problem-solving, working memory | Parkinson's Disease (PD), Healthy Controls | Correlation with TMT, Stroop Test, FAB | High discriminant accuracy for PD vs. controls |
| VR-Stroop (ClinicaVR) [22] | Inhibitory control, Attention | Adolescents, Healthy Adults | Correlation with D-KEFS Color-Word Interference Test | Better predictor of daily EF (BRIEF) than traditional Stroop |
| VAP-S [24] | Planning, multitasking, cognitive flexibility | Multiple Sclerosis (MS), Stroke | Correlations with traditional executive tests (TMT, Verbal Fluency) | Detects everyday executive problems not captured by traditional tests |
| VR Cubism [26] | Visuospatial reasoning, problem-solving, planning | Older Adults, Mild Cognitive Impairment (MCI) | N/A (Feasibility Study) | High usability and acceptance for community-dwelling older adults |
| Nesplora Aquarium [25] | Attention, Working Memory | Adults | Correlation with traditional DST and CBT | High ecological validity, reduced test fatigue |

Comparative Efficacy: Immersive VR vs. Traditional Tools

Detection Sensitivity and Diagnostic Accuracy

Immersive VR assessments frequently demonstrate superior sensitivity in identifying EF impairments compared to traditional pencil-and-paper tests, particularly in early-stage or subtle cognitive disorders. In a study with relapsing-remitting multiple sclerosis (RRMS) patients, the VAP-S shopping task revealed significant differences in performance metrics—including longer trajectory distance, increased task duration, and more stops—during the familiarization phase, whereas a battery of traditional neuropsychological tests showed no significant differences between patients and healthy controls [24]. Similarly, the EXIT 360° assessment demonstrated higher diagnostic accuracy in distinguishing individuals with Parkinson's disease from healthy controls compared to traditional tests, suggesting that VR tools are better equipped to detect the subtle executive deficits that characterize the early stages of neurodegenerative diseases [23].
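Group differences such as the longer VAP-S trajectories in RRMS are often reported as standardized effect sizes. A minimal sketch of Hedges' g (Cohen's d with the usual small-sample correction), assuming two independent groups; the function name and example values are hypothetical.

```python
import math
from statistics import mean, variance


# Hypothetical helper for illustration; not the statistics reported in [24].
def hedges_g(patients, controls):
    """Standardized mean difference (patients - controls) with small-sample correction."""
    n1, n2 = len(patients), len(controls)
    pooled_sd = math.sqrt(((n1 - 1) * variance(patients)
                           + (n2 - 1) * variance(controls)) / (n1 + n2 - 2))
    d = (mean(patients) - mean(controls)) / pooled_sd
    correction = 1 - 3 / (4 * (n1 + n2) - 9)  # approximate bias correction J
    return d * correction


# Hypothetical task durations in minutes.
print(hedges_g([12, 14, 16], [8, 10, 12]))
```

Reporting effect sizes alongside p-values lets readers judge whether a VR metric separates groups by a practically meaningful margin, not just a statistically detectable one.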

Ecological Validity and Predictive Power

A key advantage of immersive VR is its enhanced ecological validity, meaning test performance more accurately predicts real-world functioning. A study with adolescents found that performance on a VR-Stroop task was a more accurate reflection of everyday executive functioning, as reported by parents on the Behavioral Rating Inventory of Executive Function (BRIEF), than a traditional pencil-and-paper Stroop test [22]. This indicates that VR environments, which incorporate realistic distractions and multi-step tasks, better mimic the cognitive challenges of daily life, thereby improving the generalizability of assessment results.

Influence of Demographic and Confounding Factors

Traditional neuropsychological test performance can be influenced by factors such as age, education, and prior experience with computers or tests. Interestingly, VR assessments appear to be more resilient to some of these confounding variables. One study found that performance on PC-based versions of cognitive tasks was influenced by age and computing experience, whereas performance on their VR counterparts was largely independent of these factors, with gaming experience being a minor predictor for only one task [25]. This resilience suggests that VR could provide a more equitable assessment platform for diverse populations, minimizing bias related to technological familiarity.
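The demographic analysis described above amounts to regressing task scores on age and computing experience separately for each format and comparing the coefficients. A least-squares sketch with two predictors, solved in closed form from centered sums; the helper name and toy data are hypothetical, and a full analysis would also need standard errors and significance tests, for example from a statistics package.

```python
from statistics import mean


# Hypothetical helper for illustration; not the model used in [25].
def ols_two_predictors(x1, x2, y):
    """Return (intercept, b1, b2) for y = b0 + b1*x1 + b2*x2 by ordinary least squares."""
    m1, m2, my = mean(x1), mean(x2), mean(y)
    c1 = [v - m1 for v in x1]
    c2 = [v - m2 for v in x2]
    cy = [v - my for v in y]
    s11 = sum(a * a for a in c1)
    s22 = sum(b * b for b in c2)
    s12 = sum(a * b for a, b in zip(c1, c2))
    s1y = sum(a * c for a, c in zip(c1, cy))
    s2y = sum(b * c for b, c in zip(c2, cy))
    det = s11 * s22 - s12 ** 2
    if det == 0:
        raise ValueError("predictors are perfectly collinear")
    # Solve the 2x2 normal equations on centered data.
    b1 = (s22 * s1y - s12 * s2y) / det
    b2 = (s11 * s2y - s12 * s1y) / det
    return my - b1 * m1 - b2 * m2, b1, b2


# Hypothetical data: age (years), computing experience (rating), task score.
age = [60, 65, 70, 75, 80, 72]
experience = [1, 4, 2, 5, 3, 0]
score = [10 + 0.5 * a - 2 * e for a, e in zip(age, experience)]
print(ols_two_predictors(age, experience, score))
```

Fitting the same model to PC and VR scores and comparing the coefficients (or their standardized versions, after z-scoring each variable) is one way to express "less affected by age and computer experience" quantitatively.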

User Engagement and Test Experience

User experience is a critical component of effective assessment. Multiple studies report that immersive VR assessments are rated more highly than traditional methods on measures of enjoyment, engagement, and usability [26] [25]. The engaging, game-like nature of VR tasks can lead to higher participant motivation and reduced test anxiety [26]. Furthermore, VR formats have been shown to sustain attention and potentially reduce test fatigue, which is crucial for obtaining reliable results, especially in longer assessment batteries or with clinically fatigued populations like MS patients [25].

Table 2: Comparative Analysis: Immersive VR vs. Traditional EF Assessments

| Comparison Dimension | Immersive VR Assessments | Traditional Pencil-Paper/PC Assessments |
|---|---|---|
| Ecological Validity | High - Simulates real-world contexts and demands [1] [22] [24] | Low - Abstract, decontextualized tasks [1] [24] |
| Sensitivity to Mild Impairment | High - Detects subtle deficits in PD, MS, MCI [23] [24] | Limited - Less sensitive to early or subtle dysfunction [23] [24] |
| Stimulus Control | High - Standardized yet dynamic environments [1] [22] | High - Standardized but static and simplistic [22] |
| Influence of Demographics | Lower - Less affected by age and computer experience [25] | Higher - Affected by age, education, and test-taking experience [25] |
| User Engagement & Experience | High - Rated as more engaging, enjoyable, and usable [26] [25] | Variable - Can be perceived as repetitive or boring [25] |
| Key Advantage | Enhanced ecological validity and sensitivity for real-world prediction. | Well-established norms and extensive validation history. |

Methodological Protocols for VR EF Assessment

Common Experimental Paradigms and Workflows

Research utilizing immersive VR to assess executive functions typically follows a structured experimental workflow designed to ensure standardization, safety, and data integrity. The process generally begins with participant screening using established cognitive screeners like the Montreal Cognitive Assessment (MoCA) to characterize the sample and apply inclusion/exclusion criteria [23] [26]. This is often followed by a baseline assessment with traditional neuropsychological tests to enable convergent validity analyses [23] [24].

A critical next step is a VR familiarization phase, where participants are introduced to the HMD and the virtual environment without the pressure of formal assessment. This phase helps mitigate the effects of novelty and allows researchers to monitor for cybersickness [23] [24]. Participants then undertake the core VR assessment task, which is typically a simulated daily activity requiring the integration of multiple executive functions.

The following diagram illustrates a generalized experimental workflow, integrating common elements from the reviewed studies [23] [26] [24]:

Experimental Workflow for VR EF Assessment

Key VR EF Tasks and Measured Outcomes

The reviewed studies employ a variety of VR paradigms designed to mimic real-world activities. The EXIT 360° assessment immerses users in a household environment where they must complete a series of seven everyday subtasks (e.g., unlocking a door, choosing a person) to escape the house. It provides metrics such as a Total Score (based on correct/incorrect answers) and Total Reaction Time, assessing planning, decision-making, and problem-solving [23].

The Virtual Action Planning-Supermarket (VAP-S) requires participants to navigate a virtual supermarket and collect specific grocery items according to a list. Key outcome measures include total test duration, distance traveled, number of incorrect actions, and number of stops, which index planning efficiency, cognitive flexibility, and error monitoring [24].

The VR-Stroop task (within ClinicaVR: Classroom) presents color-word interference stimuli in a virtual classroom setting. It measures reaction time and commission errors to assess inhibitory control and attention in a context with realistic distractions [22].

VR Cubism, used as a cognitive stimulation activity, involves manipulating and assembling 3D puzzle pieces. While often used for training, its metrics of completion time and accuracy provide insights into visuospatial reasoning and problem-solving [26].
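VAP-S-style outcomes such as duration, distance traveled, and number of stops can be derived from a time-stamped position log. The sketch below assumes positions sampled in metres on the horizontal plane (x, z) and uses hypothetical thresholds for what counts as a stop (speed below 0.1 m/s sustained for at least 1 s); these values and the function name are illustrative, not the published VAP-S scoring rules.

```python
import math


# Hypothetical helper; stop_speed and min_stop_duration are illustrative thresholds.
def trajectory_metrics(samples, stop_speed=0.1, min_stop_duration=1.0):
    """samples: list of (t_seconds, x_m, z_m) positions sorted by time."""
    duration = samples[-1][0] - samples[0][0]
    distance = 0.0
    stops = 0
    slow_time = 0.0   # length of the current low-speed run
    counted = False   # whether the current low-speed run was already counted
    for (t0, x0, z0), (t1, x1, z1) in zip(samples, samples[1:]):
        step = math.hypot(x1 - x0, z1 - z0)
        distance += step
        dt = t1 - t0
        speed = step / dt if dt > 0 else 0.0
        if speed < stop_speed:
            slow_time += dt
            if slow_time >= min_stop_duration and not counted:
                stops += 1
                counted = True
        else:
            slow_time = 0.0
            counted = False
    return {"duration_s": duration, "distance_m": distance, "stops": stops}


# Hypothetical log: walk 3 m, pause 2 s, walk 2 m, sampled every 0.5 s.
log = [(i * 0.5, min(i * 0.5, 3.0) + max(0.0, i * 0.5 - 5.0), 0.0) for i in range(15)]
print(trajectory_metrics(log))
```

Because stop counts depend heavily on the speed and duration thresholds, any such scoring rule would need to be fixed in advance and reported for results to be comparable across studies.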

Technical Implementation and Research Toolkit

Successful implementation of immersive VR assessments requires a cohesive ecosystem of hardware, software, and measurement tools. This "Researcher's Toolkit" ensures the creation of standardized, engaging, and psychometrically sound assessment experiences.

Table 3: Essential Research Toolkit for Immersive VR EF Assessment

| Toolkit Component | Example Products/Platforms | Function in VR EF Research |
|---|---|---|
| Hardware: HMD | Meta Quest 2 [26], other mobile-powered headsets [23] | Presents immersive 360° environments; allows head-tracking for navigation. |
| Software: VR Platform | NeuroVirtual 3D [27] | Provides a free, open-source platform for building and running custom VR experiments without advanced programming. |
| Software: Specific Assessment | EXIT 360° [23], VAP-S [24], ClinicaVR [22], VR Cubism [26] | Implements standardized tasks for assessing specific EF components in ecological scenarios. |
| Validation: Traditional Tests | Trail Making Test (TMT), Stroop Test, FAB [23] [24] | Serves as a gold-standard reference for establishing convergent validity of the VR tool. |
| Measurement: UX/Cybersickness | System Usability Scale (SUS) [23] [26], iUXVR questionnaire [28], SSQ [22] | Quantifies usability, sense of presence, aesthetic experience, and adverse effects like nausea. |
| Data Collection: Biosensors | EEG, Zephyr Bioharness [27] | Records psychophysiological data (e.g., EEG, heart rate) synchronized with in-task events for enhanced sensitivity. |

The technology stack often begins with a head-mounted display like the Meta Quest 2, which provides a stand-alone, accessible immersive experience [26]. For creating custom paradigms, platforms like NeuroVirtual 3D are critical. This open-source software allows clinicians and researchers to build and modify virtual environments using a drag-and-drop interface without needing advanced programming skills, significantly improving accessibility [27]. The platform supports integration with various input devices and biosensors, enabling the collection of rich, multi-modal data.
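Synchronizing biosensor data with in-task events typically means extracting a window of physiological samples after each logged event. A minimal sketch, assuming sensor and event timestamps are already on a common clock and sensor timestamps are sorted; the function name and window length are hypothetical, and this is not the NeuroVirtual 3D API.

```python
from bisect import bisect_left
from statistics import mean


# Hypothetical helper for illustration; window_s is an assumed analysis choice.
def event_locked_means(sensor_times, sensor_values, event_times, window_s=5.0):
    """Mean sensor value in [event, event + window_s) for each event, or None if empty."""
    results = []
    for ev in event_times:
        lo = bisect_left(sensor_times, ev)
        hi = bisect_left(sensor_times, ev + window_s)
        window = sensor_values[lo:hi]
        results.append(mean(window) if window else None)
    return results


# Hypothetical 1 Hz heart-rate samples and two task events (seconds from task start).
hr_times = [0, 1, 2, 3, 4, 5, 6, 7, 8, 9]
hr_bpm = [72, 74, 80, 83, 81, 78, 90, 92, 88, 85]
print(event_locked_means(hr_times, hr_bpm, [2, 6], window_s=3.0))
```

Binary search keeps the lookup fast for long recordings; in practice, clock drift between devices is the harder problem and is usually handled by a shared trigger or timestamping service.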

A crucial, though often under-reported, aspect of the toolkit is the protocol for monitoring cybersickness and evaluating the user experience (UX). Specialized questionnaires like the iUXVR [28] and the System Usability Scale (SUS) [23] [26] are essential for ensuring that the VR tool is not only effective but also acceptable and safe for the target population, which is a prerequisite for valid assessment.
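SUS scoring follows a fixed rule: odd-numbered (positively worded) items contribute the rating minus 1, even-numbered items contribute 5 minus the rating, and the 0-40 sum is multiplied by 2.5 to give a 0-100 score. A minimal sketch with a hypothetical function name.

```python
# Hypothetical helper implementing the standard SUS scoring rule.
def sus_score(responses):
    """responses: ten ratings on a 1-5 scale, in questionnaire order (item 1 first)."""
    if len(responses) != 10 or any(r not in (1, 2, 3, 4, 5) for r in responses):
        raise ValueError("SUS needs ten responses on a 1-5 scale")
    # Index 0 is item 1 (odd, positively worded); index 1 is item 2 (even, negatively worded).
    total = sum((r - 1) if i % 2 == 0 else (5 - r) for i, r in enumerate(responses))
    return total * 2.5


print(sus_score([4, 2, 5, 1, 4, 2, 5, 2, 4, 1]))  # 85.0
```

The alternating item direction is the most common source of scoring errors, so computing SUS programmatically rather than by hand is a small but useful safeguard.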

Limitations and Future Research Directions

Despite the promising potential of immersive VR for EF assessment, the field faces several challenges. A significant limitation is the inconsistent reporting of psychometric properties. A systematic review highlighted that many studies fail to adequately address construct validity, test-retest reliability, and internal consistency, raising concerns about the practical utility of some VR tools [1]. Furthermore, cybersickness—a form of motion sickness induced by VR—remains a barrier, potentially confounding cognitive performance metrics. Alarmingly, only 21% of studies in a recent review evaluated cybersickness, and only 26% assessed user experience [1].
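Where cybersickness is measured with the Simulator Sickness Questionnaire (SSQ), subscale and total scores are weighted sums of 16 symptom ratings (0-3). The sketch below uses the item mapping and weights commonly cited from Kennedy et al. (1993); the dictionary keys and function name are hypothetical, and the mapping should be checked against the questionnaire version actually administered.

```python
# Symptom-to-subscale mapping as commonly cited for the SSQ (Kennedy et al., 1993).
# Key names are hypothetical labels; verify against the administered version.
NAUSEA = {"general_discomfort", "increased_salivation", "sweating", "nausea",
          "difficulty_concentrating", "stomach_awareness", "burping"}
OCULOMOTOR = {"general_discomfort", "fatigue", "headache", "eyestrain",
              "difficulty_focusing", "difficulty_concentrating", "blurred_vision"}
DISORIENTATION = {"difficulty_focusing", "nausea", "fullness_of_head", "blurred_vision",
                  "dizzy_eyes_open", "dizzy_eyes_closed", "vertigo"}
ALL_ITEMS = NAUSEA | OCULOMOTOR | DISORIENTATION  # 16 distinct symptoms


# Hypothetical helper for illustration.
def ssq_scores(ratings):
    """ratings: mapping from each of the 16 symptom keys to a 0-3 severity rating."""
    missing = ALL_ITEMS - set(ratings)
    if missing:
        raise ValueError(f"missing SSQ items: {sorted(missing)}")
    raw_n = sum(ratings[s] for s in NAUSEA)
    raw_o = sum(ratings[s] for s in OCULOMOTOR)
    raw_d = sum(ratings[s] for s in DISORIENTATION)
    return {
        "nausea": raw_n * 9.54,
        "oculomotor": raw_o * 7.58,
        "disorientation": raw_d * 13.92,
        "total": (raw_n + raw_o + raw_d) * 3.74,
    }


# Hypothetical post-session ratings: mild nausea, moderate dizziness with eyes open.
post = {s: 0 for s in ALL_ITEMS}
post.update(nausea=1, dizzy_eyes_open=2)
print(ssq_scores(post))
```

Collecting SSQ both before and after the VR session allows change scores to be computed, which helps separate VR-induced symptoms from baseline discomfort.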

Future research should prioritize the standardization and psychometric validation of existing VR tools. Larger sample sizes and multi-center studies are needed to establish robust normative data [1]. There is also a need to explore the integration of biosensors (e.g., EEG, eye-tracking, heart rate monitors) with VR systems to capture real-time, objective physiological correlates of cognitive effort and performance, potentially increasing the sensitivity of assessments [1] [27]. Finally, longitudinal studies are required to determine the prognostic value of VR assessments for tracking cognitive decline or evaluating response to intervention in neurodegenerative and neurodevelopmental disorders [23] [24].

Immersive VR represents a paradigm shift in the neuropsychological assessment of executive functions. By combining the ecological validity of real-world tasks with the rigorous control of a laboratory setting, VR tools offer a powerful and engaging method for detecting subtle cognitive impairments that traditional tests often miss. Evidence to date confirms that these assessments demonstrate good construct and ecological validity, often outperforming traditional tools in predicting daily functioning and showing resilience to demographic confounds.

However, for VR to achieve widespread clinical adoption, the field must address key challenges, including the standardization of protocols, comprehensive psychometric evaluation, and vigilant monitoring of cybersickness. Future work focusing on multi-modal data integration and longitudinal validation will solidify the role of immersive VR as an indispensable component of the cognitive assessment toolkit, ultimately enabling earlier diagnosis and more personalized cognitive interventions.

Building Validated Tools: From Development to Application in Clinical and Research Populations

Selecting appropriate technological foundations is crucial for developing valid and reliable immersive virtual reality assessments of executive function. This guide provides an objective comparison of head-mounted display options and development platforms based on current research evidence and methodological requirements for psychometric validation.

Head-Mounted Display Technologies: Comparative Analysis

Table 1: Technical Specifications and Application Contexts of HMD Technologies

| HMD Type | Technical Features | Research Advantages | Limitations & Considerations | Best Application Context |
|---|---|---|---|---|
| Standalone VR (Oculus Quest, Vive Focus Plus, Pico Neo) | 6-degrees-of-freedom tracking, inside-out tracking, mobile processors [29] | Wireless operation, accessible pricing, suitable for home-based assessments [29] | Potential processing limitations for complex scenes, limited battery life | Large-scale studies, remote data collection, home-based assessments [29] |
| PC-Connected VR (HTC Vive Pro) | External tracking, high-resolution displays, GPU processing [30] | Higher fidelity graphics, precise tracking, lower latency [31] | Tethered operation, higher cost, complex setup | Laboratory settings requiring maximum visual fidelity [30] |
| Augmented Reality (Microsoft HoloLens) | See-through displays, spatial mapping, gesture recognition [32] | Maintains connection to physical environment, reduces simulator sickness [32] | Limited field of view, higher cost, visual perception differences [30] | Assessments requiring interaction with real-world objects [32] |
| Smartphone-Powered HMD | 360° video delivery, head movement tracking, accessible technology [33] | Low cost, high accessibility, reduced simulation sickness [33] | Limited interactivity, graphic simplicity | Screening tools, large-scale assessments, low-budget research [33] |

Research indicates significant perceptual differences between AR and VR environments that must be considered during assessment design. A 2023 psychophysical study demonstrated users are more sensitive to size discrimination in VR (JND: 6) compared to video see-through AR (JND: 17.05), with virtual objects perceived as larger in VR environments [30]. These perceptual variations can influence assessment outcomes and must be accounted for during task design and interpretation.
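A JND of this kind is read off a psychometric function: the proportion of "comparison looks larger" responses is plotted against comparison size, the 50% point gives the point of subjective equality (PSE), and half the distance between the 25% and 75% points is one common JND definition. A systematic shift of the PSE is how "perceived as larger" shows up in such data. The sketch below uses linear interpolation instead of the curve fitting a psychophysical study would normally use, so it is an approximation; the names and data are hypothetical and this is not the procedure of [30].

```python
# Hypothetical helpers; linear interpolation stands in for a fitted psychometric curve.
def interpolate_threshold(levels, p_larger, target):
    """Comparison level where the proportion of 'larger' responses reaches target.

    levels must be ascending and p_larger non-decreasing.
    """
    points = list(zip(levels, p_larger))
    for (x0, p0), (x1, p1) in zip(points, points[1:]):
        if p0 <= target <= p1 and p1 > p0:
            return x0 + (target - p0) * (x1 - x0) / (p1 - p0)
    raise ValueError("target proportion not bracketed by the data")


def pse_and_jnd(levels, p_larger):
    x25 = interpolate_threshold(levels, p_larger, 0.25)
    x50 = interpolate_threshold(levels, p_larger, 0.50)
    x75 = interpolate_threshold(levels, p_larger, 0.75)
    return x50, (x75 - x25) / 2


# Hypothetical comparison sizes (% of the standard) and response proportions.
print(pse_and_jnd([80, 90, 100, 110, 120], [0.0, 0.25, 0.5, 0.75, 1.0]))
```

A larger JND means a coarser discrimination threshold, so tasks that depend on judging object size may behave differently across AR and VR displays even when the task logic is identical.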

The choice between HMDs and flat screen displays (FSD) involves trade-offs between immersion and practicality. Studies comparing HMD and FSD versions of the same executive function assessment found that while children preferred HMDs and reported higher presence, FSD versions provided viable performance measures suitable for remote testing [31]. This suggests that technological selection should align with specific research questions and practical constraints rather than assuming higher immersion always yields superior data.
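If the same participants complete both the HMD and FSD versions, agreement between formats can be summarized with Bland-Altman statistics: the mean difference (bias) and the 95% limits of agreement (bias ± 1.96 SD of the differences). A minimal sketch under that within-subject assumption; the function name is hypothetical and this is not necessarily the analysis used in [31].

```python
from statistics import mean, stdev


# Hypothetical helper for illustration; assumes paired HMD and FSD scores per participant.
def bland_altman(hmd_scores, fsd_scores):
    """Return (bias, (lower, upper)) 95% limits of agreement for HMD minus FSD."""
    diffs = [a - b for a, b in zip(hmd_scores, fsd_scores)]
    bias = mean(diffs)
    sd = stdev(diffs)
    return bias, (bias - 1.96 * sd, bias + 1.96 * sd)


# Hypothetical task scores for five participants tested in both formats.
print(bland_altman([22, 25, 19, 30, 27], [21, 24, 20, 28, 26]))
```

Correlation alone cannot show that two formats are interchangeable; the limits of agreement show how far an individual's score might shift when switching from HMD to FSD.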

Development Platforms and Software Considerations

Table 2: Development Approach Comparison for Executive Function Assessments

| Development Approach | Implementation Requirements | Advantages | Validation Evidence | Ideal Use Case |
|---|---|---|---|---|
| Custom-Built Native Applications | C#/C++ programming, game engine expertise, 3D modeling [29] | Maximum customization, optimal performance, full control over data collection [34] | Stronger psychometric properties when properly validated [33] | Large-scale studies with specific technical requirements [34] |
| Game Engine-Based Solutions (Unity, Unreal) | Visual scripting, asset management, platform deployment tools [34] | Rapid prototyping, cross-platform deployment, extensive documentation [34] | Mixed evidence; depends on implementation quality [1] | Most academic research contexts, proof-of-concept studies [34] |
| 360° Video Environments | 360° camera equipment, video editing software, basic HMD integration [33] | Rapid development, high ecological validity for specific scenarios, reduced simulation sickness [33] | Demonstrated validity in EXIT 360° for Parkinson's disease assessment [33] | Situational assessments with limited interaction requirements [33] |
| Adapted Traditional Tests | Basic VR development skills, understanding of original test principles [12] | Direct comparison to existing literature, easier validation against established measures [12] | Good concurrent validity with traditional measures (r=0.4-0.7) [12] | Initial VR validation studies, bridging traditional and novel methods [12] |

When developing VR-based assessments that may be classified as medical devices, researchers must consider regulatory requirements early in the development process. According to medical device standards, software that performs "patient-specific analysis and provides patient-specific diagnosis or treatment recommendations" typically falls under regulatory oversight [29]. Implementing quality management systems and risk management processes during development can prevent costly rework and facilitate regulatory approval when necessary [29].

The international VR-CORE working group recommends a structured framework for developing therapeutic VR applications, mirroring the FDA Phase I-III pharmacotherapy model [34]. This includes:

- VR1 Studies: Focus on content development using human-centered design principles with patient and provider input

- VR2 Trials: Evaluate feasibility, acceptability, tolerability, and initial clinical efficacy

- VR3 Trials: Randomized controlled studies comparing VR interventions to control conditions [34]

Experimental Protocols for Validation Studies

Figure 1: Experimental Workflow for VR Assessment Validation

A 2024 meta-analysis established robust concurrent validity between VR-based assessments and traditional executive function measures across multiple cognitive domains, with significant correlations for cognitive flexibility, attention, and inhibition [12]. This supports VR assessments as valid alternatives to traditional methods when properly validated.

The EXIT 360° assessment protocol provides an exemplary methodology for comprehensive validation:

- Participant Preparation: Familiarization phase with the device and virtual environment to control for adverse effects [33]

- Assessment Structure: Seven subtasks performed in 360° domestic environments assessing multiple executive function components simultaneously [33]

- Data Collection: Total score (range 7-14) and total reaction time across all tasks [33]

- Usability Assessment: System Usability Scale administered post-assessment to evaluate technological acceptability [33]

This protocol successfully distinguished people with Parkinson's Disease from healthy controls with higher diagnostic accuracy than traditional neuropsychological tests, demonstrating the sensitivity of well-designed VR assessments [33].

Implementation Challenges and Methodological Considerations

Table 3: Research Reagent Solutions for VR Executive Function Assessment

| Research Need | Solution Options | Functional Purpose | Implementation Example |
|---|---|---|---|
| Ecological Validity | 360° video environments, interactive virtual scenarios, real-world task simulation [33] | Increases generalizability to daily functioning, enhances test sensitivity [1] | EXIT 360° household environments for Parkinson's assessment [33] |
| Adverse Effects Monitoring | Simulator Sickness Questionnaire, System Usability Scale, continuous symptom assessment [1] | Ensures participant safety, maintains data quality, controls for confounding variables [1] | Pre-assessment familiarization phase in EXIT 360° protocol [33] |
| Motor Response Capture | Inertial measurement units, controller tracking, hand tracking, eye tracking [32] | Enables naturalistic movement assessment, provides multi-modal data sources [32] | HMD-WRIT test using IMU for functional mobility assessment [32] |
| Data Integration & Analysis | Automated scoring algorithms, performance metrics, integration with traditional tests [12] | Facilitates comparison with established measures, enables multi-modal analysis [12] | Correlation analysis between VR metrics and traditional EF tests [12] |

Figure 2: Psychometric Validation Framework for VR Assessments

Critical methodological considerations emerge from recent systematic reviews. Only 21% of VR assessment studies adequately evaluated cybersickness, and just 26% included comprehensive user experience assessments [1]. This represents a significant methodological gap, as cybersickness can negatively correlate with cognitive task performance (r=-0.32) and threaten assessment validity [1].

The task impurity problem presents another key consideration, where scores on executive function tasks reflect variance from multiple cognitive processes beyond the targeted construct [1]. VR assessments can address this through careful task design that isolates specific executive components while maintaining ecological validity.

Based on current evidence, researchers should:

- Align technology selection with research questions - Consider whether the enhanced immersion of HMDs is necessary or if FSD implementations would suffice given their remote testing capabilities [31]

- Implement comprehensive adverse effects monitoring - Systematically assess and report cybersickness symptoms to ensure data quality [1]

- Follow structured validation frameworks - Adopt phased approaches (VR1-VR3) that incorporate human-centered design and rigorous psychometric evaluation [34]

- Address perceptual differences between platforms - Account for known variations in size perception and depth estimation between AR and VR environments [30]

The rapidly evolving landscape of HMD technologies and development platforms offers unprecedented opportunities for ecologically valid executive function assessment. By making informed decisions based on current evidence and methodological best practices, researchers can develop validated tools that advance our understanding of cognitive functioning in health and disease.

The psychometric validation of executive function (EF) assessments is undergoing a transformative shift with the integration of immersive virtual reality (VR). Traditional neuropsychological assessments, while valuable, suffer from significant limitations in ecological validity—they lack similarity to real-world tasks and fail to simulate the complexity of everyday activities [12]. Executive functions, the high-level cognitive processes essential for goal-directed behavior, are crucial for managing complex daily activities, and impairments significantly impact disease management, academic performance, and independent living [35]. The emerging paradigm utilizes VR technology to create controlled yet ecologically rich environments that mirror real-life cognitive challenges, enabling more accurate assessment of executive functions like planning, cognitive flexibility, and inhibition [12] [35]. This guide objectively compares current VR-based assessment platforms through the critical lens of psychometric validation, providing researchers and drug development professionals with experimental data and methodologies advancing the field.

Experimental Foundations: Protocols and Validation Metrics

Key Study Methodologies in VR Assessment Research

VRainSUD Usability Protocol (Substance Use Disorders): This study employed a usability testing framework with 17 patients receiving inpatient treatment for Substance Use Disorders (SUD) at an addiction treatment center [36]. Participants completed nine distinct cognitive training tasks within the VR platform, targeting memory, executive functioning, and processing speed. The methodology combined quantitative key performance indicator (KPI) tracking, notably time to complete tasks, with researcher observations. After the session, participants completed a survey and the standardized Post-Study System Usability Questionnaire (PSSUQ). The PSSUQ uses a 7-point Likert scale (1 = Strongly Agree, 7 = Strongly Disagree) to measure system usefulness, information quality, and interface quality, so lower scores indicate greater satisfaction [36]. Statistical analysis used descriptive statistics and ANOVA tests to compare completion times and PSSUQ scores across demographic variables.
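The PSSUQ anchors 1 = Strongly Agree, so subscale scores are usually reported as item means, with lower values indicating better perceived usability. The sketch below shows one way to score a single participant's 19-item responses. The item ranges assigned to each subscale (items 1-8 system usefulness, 9-15 information quality, 16-18 interface quality, 1-19 overall) follow commonly cited groupings for the 19-item version. They are an assumption here and should be checked against the questionnaire version actually administered. Excluding unanswered (N/A) items from each mean is also an assumption, and the function name `score_pssuq` is hypothetical.

```python
"""Sketch of per-participant PSSUQ scoring for the 19-item version.

Assumed item groupings (verify against the version used): 1-8 system usefulness,
9-15 information quality, 16-18 interface quality, 1-19 overall.
Ratings run 1 (Strongly Agree) to 7 (Strongly Disagree); lower = better.
"""
from statistics import mean
from typing import Optional

SUBSCALES = {
    "system_usefulness": range(1, 9),
    "information_quality": range(9, 16),
    "interface_quality": range(16, 19),
    "overall": range(1, 20),
}


def score_pssuq(responses: dict[int, Optional[int]]) -> dict[str, Optional[float]]:
    """Average the answered items in each subscale; None marks an N/A response."""
    scores: dict[str, Optional[float]] = {}
    for name, items in SUBSCALES.items():
        answered = [responses[i] for i in items if responses.get(i) is not None]
        for value in answered:
            if not 1 <= value <= 7:
                raise ValueError(f"PSSUQ ratings must be 1-7, got {value}")
        # A subscale with no answered items has no defined score.
        scores[name] = mean(answered) if answered else None
    return scores
```

Group-level figures such as those in Table 1 would then be the mean and standard deviation of these per-participant scores.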

Nesplora Ice Cream Normative Study Protocol (Neurodegenerative Populations): This research established normative data for a VR-based executive function assessment in 419 healthy adults aged 17-80 [35]. The study employed a cross-sectional normative design with participants recruited from nine testing sites. The Nesplora Ice Cream test presented participants with a virtual scenario requiring the operation of an ice cream truck, embedding executive function tasks within an ecologically valid context. Researchers utilized empirical analysis to identify key EF factors and cluster analysis to define age groups. Confirmatory factor analysis validated the test's three-factor structure (planning, learning, and flexibility), while descriptive statistics provided normative baselines based on age and gender [35].

Meta-Analytic Validation Protocol: A comprehensive meta-analysis investigated the concurrent validity between VR-based and traditional neuropsychological assessments of executive function [12]. Following PRISMA guidelines, researchers systematically reviewed 1,605 articles from PubMed, Web of Science, and ScienceDirect (2013-2023) and ultimately included nine studies that met strict inclusion criteria. The analysis focused on correlation coefficients (Pearson's r) between VR assessments and traditional measures such as the Trail Making Test (TMT) and the Stroop Color-Word Test (SCWT). Effect sizes were calculated using Comprehensive Meta-Analysis Software, with Pearson's r values transformed to Fisher's z for analysis. Heterogeneity was assessed using I², with random-effects models applied when appropriate [12].
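The r-to-z pooling described in this protocol can be sketched in a few lines. This minimal illustration assumes the DerSimonian-Laird random-effects estimator and a 95% normal-approximation interval. The source does not state which estimator Comprehensive Meta-Analysis Software was configured with, and the function name `pool_correlations` is hypothetical. Each correlation is converted with z = atanh(r) and weighted by the inverse of its sampling variance, 1/(n - 3). The pooled z is then back-transformed with tanh.

```python
"""Random-effects pooling of correlations via Fisher's z (DerSimonian-Laird sketch)."""
import math


def pool_correlations(studies: list[tuple[float, int]]) -> dict[str, float]:
    """studies: (Pearson r, sample size n) pairs; each n must exceed 3."""
    z = [math.atanh(r) for r, _ in studies]      # Fisher r-to-z transform
    v = [1.0 / (n - 3) for _, n in studies]      # sampling variance of each z
    w = [1.0 / vi for vi in v]                   # fixed-effect inverse-variance weights

    z_fixed = sum(wi * zi for wi, zi in zip(w, z)) / sum(w)
    q = sum(wi * (zi - z_fixed) ** 2 for wi, zi in zip(w, z))  # Cochran's Q
    df = len(studies) - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0        # I^2 as a percentage

    # DerSimonian-Laird estimate of the between-study variance tau^2.
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0

    w_re = [1.0 / (vi + tau2) for vi in v]       # random-effects weights
    z_re = sum(wi * zi for wi, zi in zip(w_re, z)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))
    return {
        "r_pooled": math.tanh(z_re),             # back-transform to the r metric
        "ci_low": math.tanh(z_re - 1.96 * se),
        "ci_high": math.tanh(z_re + 1.96 * se),
        "Q": q,
        "I2": i2,
        "tau2": tau2,
    }
```

In this formulation, `I2` expresses the share of observed variability attributable to between-study differences rather than sampling error. When `tau2` is zero, the random-effects result reduces to the fixed-effect estimate.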

Quantitative Validation Data from Key Studies

Table 1: Psychometric Performance Metrics of VR Assessment Platforms

| Assessment Platform | Study Population | Sample Size | Primary Validation Method | Key Quantitative Results |
|---|---|---|---|---|
| VRainSUD [36] | SUD Patients | 17 | Usability Testing (PSSUQ) | Total PSSUQ: 2.72 ± 1.92; System Usefulness: 1.76 ± 1.37; Information Quality: 3.00 ± 1.95 |
| Nesplora Ice Cream [35] | Healthy Adults (17-80 years) | 419 | Normative Data Collection | Three-factor structure confirmed (Planning, Learning, Flexibility); No significant gender differences found |
| VR Executive Function Assessment [12] | Mixed (Children to Older Adults) | 9 Studies (Meta-analysis) | Concurrent Validity | Statistically significant correlations with traditional measures across all EF subcomponents |

Table 2: Cognitive Domains Targeted by VR Assessment Scenarios

| Platform/Scenario | Target Population | Primary Cognitive Domains Assessed | Ecological Context | Psychometric Advantages |
|---|---|---|---|---|
| VRainSUD [36] | Substance Use Disorders | Memory, Executive Function, Processing Speed | Personalized cognitive training tasks | High usability scores; Quickly adapted by VR-naive patients |
| Nesplora Ice Cream [35] | Neurodegenerative Conditions | Planning, Learning, Cognitive Flexibility | Operating a virtual ice cream truck | Established normative data; High ecological validity |
| CAVIR [12] | Mood Disorders, Psychosis | Daily Life Cognitive Functions | Interactive VR kitchen scenario | Correlates with TMT-B, CANTAB, Fluency test |

Visualization of Experimental Workflows

[Figure: VR Assessment Validation Workflow]

[Figure: VRainSUD Development and Testing Pipeline]

The Researcher's Toolkit: Essential Materials and Methods

Table 3: Research Tools and Materials for VR Assessment Development

| Tool Category | Specific Tools/Platforms | Research Function | Key Characteristics |
|---|---|---|---|
| VR Hardware | Oculus Quest 2 [36] | Standalone VR immersion | Wireless freedom, inside-out tracking, Android 10 OS |
| Game Engines | Unreal Engine 4.27.2 [36] | VR environment development | Blueprints scripting, scalable architecture, visual engagement |
| Assessment Interfaces | CAVIR (VR kitchen scenario) [12] | Daily life cognitive function assessment | Interactive virtual environment, real-world task simulation |
| Usability Metrics | Post-Study System Usability Questionnaire (PSSUQ) [36] | Perceived system satisfaction | 19-item, 7-point Likert scale, three subscales (system usefulness, information quality, interface quality) |
| Traditional Validation Measures | Trail Making Test (TMT), Stroop Color-Word Test (SCWT) [12] | Concurrent validity assessment | Established psychometric properties, gold standard comparison |
| Statistical Analysis Tools | IBM SPSS Version 28 [36], Comprehensive Meta-Analysis Software [12] | Data analysis and meta-analysis | Quantitative statistical testing, effect size calculation, heterogeneity assessment |

Comparative Analysis of VR Assessment Approaches

Psychometric Validation Across Platforms

The search for ecological validity in executive function assessment has yielded distinct VR approaches across clinical populations. For Substance Use Disorders, VRainSUD demonstrates that usability and patient engagement are critical initial validation steps. The platform achieved favorable system usefulness scores (1.76 ± 1.37 on the PSSUQ, where lower scores indicate greater satisfaction). Participants also adapted quickly to the VR controllers despite limited prior experience [36]. This engagement factor is particularly valuable in SUD populations, where cognitive deficits increase the likelihood of relapse and treatment adherence is crucial [36].

For neurodegenerative and general clinical applications, the Nesplora Ice Cream test emphasizes normative data collection and factor structure validation. The confirmation of its three-factor structure (planning, learning, and flexibility) provides a robust psychometric foundation for detecting executive dysfunction across the adult lifespan [35]. The establishment of age-stratified normative data enables clinicians to identify meaningful deviations from expected performance levels, crucial for early detection of neurodegenerative conditions.
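Identifying a meaningful deviation from age-stratified norms usually means expressing an individual's score relative to the mean and standard deviation of the matching age band. The sketch below illustrates that step under stated assumptions. The age bands, means, and SDs are hypothetical placeholders, not the published Nesplora Ice Cream norms. Converting z to a percentile assumes approximately normal scores, and the -1.5 SD flagging cut-off is an arbitrary illustrative threshold. The names `NormBand` and `normative_z` are likewise hypothetical.

```python
"""Sketch: locating an individual score against age-stratified norms.

All normative values below are hypothetical placeholders, not published norms.
"""
from dataclasses import dataclass
from statistics import NormalDist


@dataclass(frozen=True)
class NormBand:
    min_age: int
    max_age: int  # inclusive
    mean: float
    sd: float


# Hypothetical normative table for one EF factor score (higher = better).
NORMS = [
    NormBand(17, 39, 100.0, 15.0),
    NormBand(40, 59, 95.0, 15.0),
    NormBand(60, 80, 88.0, 16.0),
]


def normative_z(score: float, age: int, norms: list[NormBand] = NORMS) -> tuple[float, float]:
    """Return (z-score, percentile) relative to the matching age band."""
    for band in norms:
        if band.min_age <= age <= band.max_age:
            z = (score - band.mean) / band.sd
            # Percentile assumes scores are approximately normal within the band.
            return z, NormalDist().cdf(z) * 100
    raise ValueError(f"age {age} outside normative range")


# Example: flag scores more than 1.5 SD below the age-band mean (assumed cut-off).
z, pct = normative_z(70.0, age=65)
flagged = z < -1.5
```

The same raw score can fall inside the expected range for one age band and outside it for another. This is why age-stratified norms matter for early detection.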

The meta-analytic evidence confirms that VR-based assessments demonstrate statistically significant correlations with traditional measures across all executive function subcomponents, including cognitive flexibility, attention, and inhibition [12]. This concurrent validity supports VR's use as a viable alternative to traditional assessments while offering superior ecological validity through real-world activity simulation in controlled virtual environments.

Methodological Considerations for Research Applications

Population-Specific Customization: Effective VR scenarios must address unique population characteristics. SUD patients responded positively to the cognitive training tasks but required additional instructions for certain exercises [36]. This suggests a need for adaptable difficulty and clearer task instructions. For neurodegenerative applications, the Nesplora test's normative sample spans a wide age range (17-80 years). This demonstrates the importance of accounting for developmental and degenerative cognitive changes in normative data [35].

Technical Implementation Factors: The selection of appropriate hardware and software platforms significantly impacts research outcomes. The Oculus Quest 2 provided sufficient mobility and graphical capability for immersive cognitive training [36], while Unreal Engine's Blueprints scripting system enabled sustainable, compartmentalized logic structures [36]. These technical decisions directly influence both participant experience and the scalability of research implementations.

Validation Framework Design: Comprehensive VR assessment validation requires multi-method approaches combining traditional neuropsychological measures, usability metrics, and ecological behavioral observations. The integration of quantitative performance data (task completion time, accuracy) with qualitative feedback creates a complete validity picture, addressing both psychometric rigor and real-world applicability [36] [12] [35].

The comparative analysis of VR assessment platforms reveals a field maturing toward standardized psychometric validation while continuing to innovate in ecological scenario design. VRainSUD performed well in usability testing with SUD inpatients, and the Nesplora Ice Cream test has been applied in normative population assessment. Together, these results suggest that ecological validity and psychometric rigor are not mutually exclusive goals. For researchers and drug development professionals, these platforms offer promising methodologies for detecting subtle executive function changes in clinical trials and for monitoring treatment outcomes. Future development should focus on three areas: standardizing validation protocols across diverse populations, enhancing personalization algorithms based on individual cognitive profiles, and establishing international normative databases for VR-based cognitive assessment. As the technology evolves, VR scenarios promise to bridge the critical gap between laboratory assessment and real-world cognitive functioning. This should ultimately improve early detection and intervention for both substance use and neurodegenerative conditions.

Executive functions (EFs) represent a set of higher-order cognitive processes—including planning, cognitive flexibility, inhibitory control, and working memory—that are essential for goal-directed behavior and functional independence [35] [10]. The psychometric validation of immersive virtual reality (VR) assessments for these functions represents a significant advancement in neuropsychological measurement, addressing critical limitations of traditional paper-and-pencil tests. Traditional assessments often lack ecological validity, failing to capture the complexity of real-world cognitive challenges that individuals face daily [35]. VR technology bridges this gap by creating immersive, interactive environments that simulate real-life situations, thus offering a more comprehensive evaluation of cognitive abilities within context-rich scenarios [35].

Within this innovative framework, Key Performance Indicators (KPIs) serve as the fundamental metrics for quantifying cognitive performance in virtual environments. Accuracy, reaction time, and error rates provide objective, quantifiable data essential for establishing the reliability, validity, and sensitivity of VR-based assessments. For researchers, scientists, and drug development professionals, these KPIs are not merely performance scores but crucial biomarkers that can detect subtle cognitive changes, track disease progression, and measure therapeutic intervention efficacy [10]. The precise measurement of these indicators allows for the development of robust normative data and the identification of clinically significant impairments in conditions such as traumatic brain injury, attention-deficit/hyperactivity disorder (ADHD), Alzheimer's disease, and Parkinson's disease [35].
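To illustrate how accuracy, reaction time, and error rates can be derived from raw trial data, the sketch below assumes a simple go/no-go task structure. Omission errors are missed responses on go trials, and commission errors are responses on no-go trials. The `Trial` record and its fields are hypothetical. Restricting reaction-time statistics to correct go trials is a common convention, adopted here as an assumption rather than as the scoring rule of any specific platform.

```python
"""Sketch: deriving accuracy, reaction-time and error-rate KPIs from trial logs.

Assumes a go/no-go style task; the Trial type and its fields are hypothetical.
"""
from dataclasses import dataclass
from statistics import mean, median
from typing import Optional


@dataclass
class Trial:
    is_go: bool               # True if the participant should respond
    rt_ms: Optional[float]    # reaction time in ms, None if no response was made


def compute_kpis(trials: list[Trial]) -> dict[str, Optional[float]]:
    go = [t for t in trials if t.is_go]
    nogo = [t for t in trials if not t.is_go]
    hits = [t for t in go if t.rt_ms is not None]
    omissions = len(go) - len(hits)                        # missed go trials
    commissions = sum(t.rt_ms is not None for t in nogo)   # responses on no-go trials
    correct = len(hits) + (len(nogo) - commissions)        # hits + correct withholds
    hit_rts = [t.rt_ms for t in hits]                      # RTs on correct go trials only
    return {
        "accuracy": correct / len(trials) if trials else None,
        "omission_rate": omissions / len(go) if go else None,
        "commission_rate": commissions / len(nogo) if nogo else None,
        "mean_rt_ms": mean(hit_rts) if hit_rts else None,
        "median_rt_ms": median(hit_rts) if hit_rts else None,
    }
```

Reporting the median alongside the mean is a design choice that limits the influence of occasional very slow responses. This can matter when comparing clinical groups.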

This article provides a comparative analysis of current VR executive function assessments, focusing on their experimental protocols, psychometric properties, and the key performance indicators they employ. By framing this discussion within the broader context of psychometric validation, we aim to establish a rigorous foundation for evaluating the scientific merit and clinical utility of these emerging digital assessment tools.

Comparative Analysis of VR Executive Function Assessments

The following analysis compares two prominent computerized cognitive assessments alongside established VR tools, evaluating their methodologies, key performance indicators, and psychometric properties. This comparison highlights the distinct approaches to measuring cognitive constructs in digital environments.

Table 1: Comparative Analysis of Executive Function Assessments

| Assessment Tool | Primary Cognitive Constructs Measured | Key Performance Indicators (KPIs) | Administration Format | Reported Psychometric Data |
|---|---|---|---|---|
| Nesplora Ice Cream Test [35] | Planning, Learning, Flexibility | Factor scores for core EF components; Descriptive norms by age & gender. | Virtual Reality (Immersive) | Validity: Confirmatory Factor Analysis supported 3-factor structure. Reliability: High internal consistency. Norms: Data from 419 participants (ages 17-80). |
| Freeze Frame Assessment [10] | Inhibitory Control, Sustained Attention | Accuracy thresholds (1-7), Commission/omission errors, Mean reaction time. | Computerized (Flat Screen) | Validity: Modest association with NIH EXAMINER (accounted for 6.8% of variance). Usability: Average completion time of ~4 minutes. |