Resolving Vestibular Conflicts in Virtual Reality: A Neuroscience Framework for Research and Therapy

Abstract

This article synthesizes current research on vestibular-sensory conflicts in virtual reality (VR) environments, a critical challenge in neuroscience research and clinical applications. We explore the foundational mechanisms of sensory conflict and integration, detailing how discrepancies between visual, vestibular, and proprioceptive inputs induce cybersickness and balance disturbances. The content examines innovative methodological approaches, including galvanic vestibular stimulation (GVS) and machine learning diagnostics, that are advancing both the study and management of vestibular dysfunction. Practical troubleshooting strategies for optimizing VR experimental protocols and mitigating adverse effects are presented, alongside comparative validation of VR against conventional vestibular assessment tools. This comprehensive resource equips researchers and clinical professionals with evidence-based frameworks for designing VR experiments that account for vestibular conflicts while leveraging these insights for therapeutic innovation.

The Neuroscience of Sensory Conflict: Understanding Vestibular-Visual Mismatch in VR

Frequently Asked Questions (FAQs) for Researchers

Q1: What is sensory conflict theory in the context of VR? Sensory conflict theory is the most cited and widely accepted explanation for motion sickness in virtual environments. It proposes that discomfort arises from a mismatch or incongruence between afferent signals from the visual, vestibular, and somatic sensory systems and the brain's internal model of expected sensory patterns based on past experience [1] [2]. In VR, this often manifests as a visual-vestibular conflict: your eyes signal that you are moving through the virtual world, while your vestibular system in the inner ear reports that your body is stationary [3] [2] [4].

Q2: What are the common symptoms, and how are they measured in experimental settings? Symptoms, collectively known as cybersickness or visually induced motion sickness (VIMS), include nausea, dizziness, headaches, oculomotor strain, disorientation, and general discomfort [1] [3] [2]. In research, the most common tool for subjective measurement is the Simulator Sickness Questionnaire (SSQ), which quantifies symptoms across sub-scales like nausea, oculomotor, and disorientation [1] [3]. Objective measures include electroencephalography (EEG), which can detect increases in slow-wave (Delta, Theta) power in temporo-occipital regions correlated with increasing discomfort [3].
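The SSQ subscores are weighted sums of item ratings. The minimal scoring sketch below assumes the common 16-item form, with each symptom rated 0-3, and the widely used scoring weights (nausea ×9.54, oculomotor ×7.58, disorientation ×13.92, total ×3.74). The snake_case item keys are hypothetical labels. Check the item list and weights against the version of the questionnaire your lab administers.

```python
"""Minimal SSQ scoring sketch (assumed 16-item form, ratings 0-3)."""

# Item-to-subscale mapping; some items count toward two subscales.
NAUSEA = ("general_discomfort", "increased_salivation", "sweating", "nausea",
          "difficulty_concentrating", "stomach_awareness", "burping")
OCULOMOTOR = ("general_discomfort", "fatigue", "headache", "eyestrain",
              "difficulty_focusing", "difficulty_concentrating", "blurred_vision")
DISORIENTATION = ("difficulty_focusing", "nausea", "fullness_of_head", "blurred_vision",
                  "dizzy_eyes_open", "dizzy_eyes_closed", "vertigo")
ALL_ITEMS = frozenset(NAUSEA + OCULOMOTOR + DISORIENTATION)  # 16 unique items

WEIGHTS = {"nausea": 9.54, "oculomotor": 7.58, "disorientation": 13.92}
TOTAL_WEIGHT = 3.74


def score_ssq(ratings):
    """Return weighted nausea, oculomotor, disorientation, and total scores."""
    missing = ALL_ITEMS - set(ratings)
    if missing:
        raise ValueError(f"missing items: {sorted(missing)}")
    for item, value in ratings.items():
        if value not in (0, 1, 2, 3):
            raise ValueError(f"{item}: rating must be 0-3, got {value!r}")
    raw = {
        "nausea": sum(ratings[i] for i in NAUSEA),
        "oculomotor": sum(ratings[i] for i in OCULOMOTOR),
        "disorientation": sum(ratings[i] for i in DISORIENTATION),
    }
    scores = {name: raw[name] * WEIGHTS[name] for name in raw}
    # The total uses the sum of the three raw subscale sums, not the weighted scores.
    scores["total"] = sum(raw.values()) * TOTAL_WEIGHT
    return scores


# Usage: score a pre- and post-exposure administration and report the change.
pre = score_ssq({item: 0 for item in ALL_ITEMS})
post = score_ssq({item: 1 for item in ALL_ITEMS})
print({k: round(post[k] - pre[k], 2) for k in post})
```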

Q3: Can improving hardware specifications alone eliminate VR-induced discomfort? While advanced hardware is crucial, it is often insufficient on its own. Hardware improvements like high-resolution displays, low-latency tracking (<20 ms), and full 6-degree-of-freedom (6DOF) headsets reduce visual delays and improve immersion, thereby lessening conflict [5]. However, they cannot fully resolve the fundamental locomotion-based sensory mismatch that occurs when a user's visual system perceives motion while their body is physically stationary. Addressing this requires a systems-level approach that includes hardware, software, and experimental design [5].

Q4: What are some experimental methodologies to mitigate sensory conflict? Research points to several effective methodologies:

- Motion Coupling: Using motion platforms to provide synchronized vestibular cues that match the visual flow. In one study, synchronized visual-vestibular motion was rated the most enjoyable condition and significantly reduced subjective misery scores [1].

- Providing Visual Anchors: Incorporating a self-referenced visual element, like an artificial horizon or a virtual nose, can provide a stable frame of reference and reduce conflict [1].

- Graded Exposure: Starting with short, simple VR sessions and gradually increasing duration and complexity can help users adapt [6].
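Graded exposure can be written down as an explicit progression rule so that it is applied the same way for every participant. The rule below is hypothetical. If the previous session's SSQ total stayed under a threshold, it lengthens the next session by a fixed step; otherwise it shortens it. The step, ceiling, and threshold values are illustrative placeholders, not validated cut-offs.

```python
def next_session_minutes(previous_minutes, previous_ssq_total,
                         step=2.0, max_minutes=20.0, ssq_threshold=20.0):
    """Hypothetical graded-exposure rule (all defaults are placeholders).

    Tolerated session (SSQ total below threshold): lengthen by `step`, up to
    `max_minutes`. Otherwise: shorten by `step`, but never below `step`.
    """
    if previous_ssq_total < ssq_threshold:
        return min(previous_minutes + step, max_minutes)
    return max(previous_minutes - step, step)


def replay_schedule(start_minutes, ssq_totals, **rule_kwargs):
    """Return the session lengths implied by a sequence of post-session SSQ totals."""
    durations = [start_minutes]
    for total in ssq_totals:
        durations.append(next_session_minutes(durations[-1], total, **rule_kwargs))
    return durations


print(replay_schedule(4.0, [5.0, 5.0, 30.0, 5.0]))
```

Stepping back after a poorly tolerated session, rather than stopping outright, keeps the participant in the habituation sequence. Whether that is appropriate depends on your ethics approval and stopping criteria.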

Troubleshooting Guide for Common Experimental Issues

| Problem & Symptom | Potential Root Cause | Recommended Solution for Researchers |
|---|---|---|
| Severe nausea and dizziness in participants [2] (high SSQ nausea scores; participant reports of vertigo) | Strong visual-vestibular conflict; vection (illusion of self-motion) without corresponding physical motion [4]. | 1. Integrate a motion platform for synchronized vestibular stimulation [1]. 2. Add a stable visual reference (e.g., a cockpit, an artificial horizon) to the virtual scene [1]. 3. Reduce vection intensity by slowing down visual flow speeds. |
| Rapid onset of headaches and eye strain [3] (high SSQ oculomotor scores; observed squinting) | High latency between physical head movement and visual update; poor gaze stabilization; overly complex graphics [6] [5]. | 1. Verify that system latency is below 20 ms [5]. 2. Simplify visual stimuli for initial experiments, using muted colors and simple shapes [6]. 3. Ensure the frame rate is high and stable to prevent flicker. |
| General disorientation and postural instability [2] [6] (high SSQ disorientation scores; observed swaying) | Sensory mismatch leading to postural control issues; lack of proprioceptive feedback. | 1. Have participants sit during experiments to enhance postural stability [5]. 2. For locomotion, use omnidirectional treadmills (ODTs) to align proprioceptive and vestibular cues with visual motion [5]. 3. Schedule controlled rest breaks between experimental blocks to reset sensory integration [3]. |
| Participant drop-out due to intolerance | High individual susceptibility; lack of an adaptation period. | 1. Screen for susceptibility before the trial using a brief VR exposure and the SSQ. 2. Design a habituation protocol of multiple short sessions that gradually increase in intensity [6]. 3. Allow participants to control the pace of navigation where experimentally feasible. |
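The latency and frame-rate checks recommended in the table can be automated from logged timestamps. This is a minimal sketch. It assumes you already log (a) the display time of each rendered frame and (b) paired timestamps for a physical head movement and the matching on-screen change, for example from an external photodiode rig. How those timestamps are obtained depends on your hardware and is not shown. The 90 Hz target, the 1.5× late-frame criterion, and the 20 ms budget are illustrative parameters.

```python
import statistics


def frame_pacing(frame_times_s, target_hz=90.0, late_factor=1.5):
    """Summarize frame pacing from per-frame display timestamps (seconds)."""
    intervals = [b - a for a, b in zip(frame_times_s, frame_times_s[1:])]
    if not intervals:
        raise ValueError("need at least two frame timestamps")
    late_limit = late_factor / target_hz  # an interval this long suggests a dropped frame
    late = sum(1 for dt in intervals if dt > late_limit)
    return {
        "mean_fps": 1.0 / statistics.mean(intervals),
        "late_fraction": late / len(intervals),
        "worst_interval_ms": 1000.0 * max(intervals),
    }


def motion_to_photon(motion_times_s, photon_times_s, budget_ms=20.0):
    """Latency between paired physical-motion and display-change timestamps."""
    if len(motion_times_s) != len(photon_times_s) or not motion_times_s:
        raise ValueError("need equal-length, non-empty timestamp lists")
    lat_ms = [1000.0 * (p - m) for m, p in zip(motion_times_s, photon_times_s)]
    return {
        "median_ms": statistics.median(lat_ms),
        "max_ms": max(lat_ms),
        "over_budget_fraction": sum(1 for x in lat_ms if x > budget_ms) / len(lat_ms),
    }
```

Reporting these summaries per session makes it possible to flag blocks with frame drops or over-budget latency before analyzing sickness scores.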

Key Experimental Protocols & Data

Protocol 1: Investigating Visual-Vestibular Synchronization

This methodology is adapted from a study using a motion-coupled VR system to directly test sensory conflict theory [1].

- Objective: To determine if synchronizing physical motion with visual roll cues reduces cybersickness compared to visual motion alone.

- Apparatus:
  - VR System: Head-Mounted Display (HMD) with head-tracking (e.g., HTC VIVE).
  - Motion Platform: A 3-Degree-of-Freedom (DoF) motion platform (e.g., Motion Systems PS-3TM-350 V3).
  - Stabilization: Chair with headrest and seatbelt to constrain head movement and isolate vestibular stimuli.

- Experimental Conditions:
  - Stationary: Visual rotation only (platform stationary).
  - Synchronous: Visual and physical motion perfectly synchronized.
  - Self-Referenced: Vestibular motion from the platform, but with a visually stable, self-referenced environment.

- Procedure:
  - Participants are seated on the platform and equipped with the HMD.
  - They are exposed to a visual stimulus simulating roll motion (like a merry-go-round) under the three conditions in randomized order.
  - Each condition lasts for a predefined period (e.g., 10-15 minutes).
  - Subjective misery (Misery Scale, MISC) and comfort levels are recorded after each condition. The Simulator Sickness Questionnaire (SSQ) is administered post-session.

- Key Findings: The synchronous condition was rated the most enjoyable and led to a significant decrease in subjective cybersickness scores, consistent with the prediction that reducing sensory conflict mitigates discomfort [1].
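The procedure presents the three conditions in randomized order. One way to randomize while keeping order effects balanced across the sample is full counterbalancing. With three conditions there are 3! = 6 possible orders. Cycling through a shuffled list of them gives each condition every serial position equally often whenever the sample size is a multiple of 6. This is a design suggestion, not necessarily the scheme used in [1].

```python
import random
from itertools import permutations

CONDITIONS = ("Stationary", "Synchronous", "Self-Referenced")


def assign_orders(n_participants, conditions=CONDITIONS, seed=None):
    """Assign each participant one of the len(conditions)! orders, cycling through all."""
    orders = list(permutations(conditions))
    random.Random(seed).shuffle(orders)  # randomize which order is handed out first
    return [orders[i % len(orders)] for i in range(n_participants)]


for pid, order in enumerate(assign_orders(6, seed=42), start=1):
    print(f"P{pid:02d}: {' -> '.join(order)}")
```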

Protocol 2: EEG Investigation of Sensory Mismatch

This protocol uses EEG to study the neurophysiological correlates of increasing sensory conflict [3].

- Objective: To correlate the power of EEG frequency bands and information flow between brain areas with increasing levels of subjective VIMS.

- Apparatus:
  - VR System: HMD with a head-tracked avatar.
  - EEG System: High-density EEG (e.g., 128-channel).

- Procedure:
  - Participants wear an EEG cap and HMD. A baseline EEG is recorded with eyes closed.
  - In the VR environment, the participant's avatar is moved externally. The movement speed and freedom are increased stepwise according to a predefined protocol, creating increasing sensory mismatch.
  - Participants are not allowed to move, ensuring a conflict between visual motion and vestibular stillness.
  - After each movement period, a resting-state EEG is recorded, and the SSQ is administered.

- Data Analysis:
  - Spectral Power: Calculate power in standard frequency bands (Delta, Theta, Alpha, Beta).
  - Transfer Entropy (TE): A measure of directed information flow between different EEG channels.

- Key Findings: With increasing VIMS, the proportion of slow EEG waves (1–10 Hz) increases, especially in temporo-occipital regions. Furthermore, there is a general decrease in information flow, particularly in brain areas involved in processing vestibular signals and detecting self-motion [3].
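The spectral-power step can be sketched with NumPy. This is a minimal Welch-style estimate for a single channel, using Hann-windowed, 50%-overlapping segments. It uses the Delta/Theta/Alpha/Beta limits given elsewhere in this article (1-3, 4-7, 8-13, 13-20 Hz). The 2-second segment length and the absence of artifact rejection or re-referencing are simplifying assumptions, and the pipeline used in [3] may differ.

```python
import numpy as np

# Band limits (Hz) as given in this article's EEG table.
BANDS = {"delta": (1.0, 3.0), "theta": (4.0, 7.0), "alpha": (8.0, 13.0), "beta": (13.0, 20.0)}


def welch_psd(x, fs, nperseg):
    """One-sided PSD: average of Hann-windowed periodograms with 50% overlap."""
    x = np.asarray(x, dtype=float)
    if nperseg % 2:
        raise ValueError("use an even segment length")
    window = np.hanning(nperseg)
    scale = fs * np.sum(window ** 2)  # density scaling (power per Hz)
    spectra = []
    for start in range(0, x.size - nperseg + 1, nperseg // 2):
        seg = x[start:start + nperseg]
        seg = seg - seg.mean()  # remove the segment's DC offset
        spectra.append(np.abs(np.fft.rfft(window * seg)) ** 2 / scale)
    if not spectra:
        raise ValueError("signal is shorter than one segment")
    psd = np.mean(spectra, axis=0)
    psd[1:-1] *= 2.0  # fold negative frequencies into the one-sided spectrum
    return np.fft.rfftfreq(nperseg, d=1.0 / fs), psd


def band_powers(x, fs, segment_s=2.0, bands=BANDS):
    """Absolute power per band; divide by the total to get relative power."""
    nperseg = int(round(segment_s * fs))
    nperseg += nperseg % 2  # keep the segment length even
    freqs, psd = welch_psd(x, fs, nperseg)
    df = freqs[1] - freqs[0]
    return {name: float(psd[(freqs >= lo) & (freqs <= hi)].sum() * df)
            for name, (lo, hi) in bands.items()}
```

In the protocol above, these values would be computed per channel for the eyes-closed baseline and for each resting-state block, then expressed relative to baseline.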

The Scientist's Toolkit: Key Research Reagents & Materials

The following table details essential hardware and software for constructing a VR neuroscience research platform focused on sensory conflict.

| Item / Solution | Function in Research | Specification Considerations |
|---|---|---|
| Head-Mounted Display (HMD) | Presents the controlled visual stimulus; induces vection. | 6DOF tracking is essential [5]. High resolution and wide field of view for immersion. Low persistence and latency to minimize lag-induced conflict [5]. |
| Motion Platform | Provides synchronized or conflicting vestibular cues to test sensory conflict theory. | 3-DoF platforms can simulate roll, pitch, and heave. Platform accuracy and synchronization with visual frames are critical [1]. |
| Omnidirectional Treadmill (ODT) | Allows natural locomotion in VR, providing proprioceptive feedback that matches visual motion. | Key for studying locomotion-based sickness. Specs include low latency (<20 ms) and high positional/angular accuracy [5]. |
| EEG System | Measures neural correlates of sensory conflict and cybersickness objectively. | High-density systems (e.g., 128-channel) are preferred. Capable of detecting subtle changes in power spectra (increased Delta/Theta power) [3]. |
| Simulator Sickness Questionnaire (SSQ) | The gold-standard subjective metric for quantifying symptoms. | Provides a total score and subscores (Nausea, Oculomotor, Disorientation). Must be administered before, during, and after exposure for valid baselines and measures [1] [3]. |
| Motion Tracking System | Precisely tracks head and body movement for synchronizing visual and physical motion. | Systems with sub-millimeter accuracy and very low latency are required to avoid introducing additional conflict [1] [5]. |

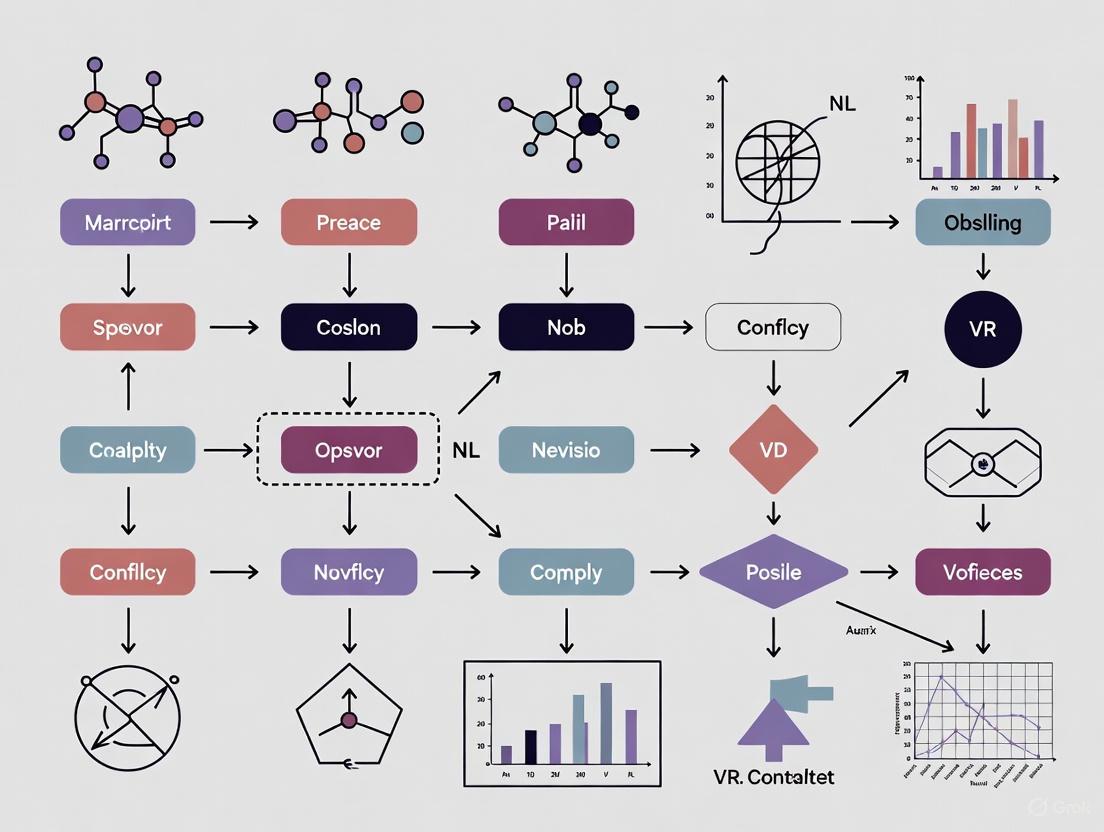

Experimental Workflow and Signaling Pathways

The following diagram illustrates the core mechanism of sensory conflict theory and the brain's response as measured in experimental settings.

This diagram maps the pathway from the initial sensory mismatch to the objectively measurable neural signals and subjective reports collected in experiments. The key takeaway for researchers is that the subjective experience of cybersickness has a clear, quantifiable neurophysiological signature that can be captured with tools like EEG.

Vestibular System Anatomy and Its Role in Spatial Orientation and Balance

Troubleshooting Guide: Vestibular Conflicts in VR Neuroscience

This guide helps diagnose and resolve common vestibular-related issues encountered during VR-based neuroscience experiments.

| Symptom / Problem | Potential Cause | Diagnostic Checks | Recommended Solution |
|---|---|---|---|
| High incidence of VR sickness (nausea, dizziness) [7] [8] | Visual-vestibular conflict: the visual system perceives motion while the vestibular system signals stasis [7]. | Verify VR task design; ensure no artificial viewpoint movement during seated tasks [7]. | Implement "Improved Handheld Controller Movement" strategies that adjust virtual pitch/FOV based on real-world head acceleration [8]. |
| Postural instability & increased body sway in subjects [9] | Central suppression of vestibular input; common in PPPD or due to anxious, conscious balance control [9]. | Perform posturography; observe whether sway increases with eye closure or on uneven ground [9]. | Incorporate vestibular physical therapy principles; train subjects in VR under safe, controlled conditions to recalibrate sensorimotor integration [10]. |
| Impaired spatial orientation & navigation in VR [9] | Dysfunctional spatial updating due to vestibular loss (BVP) or central suppression of vestibular signals (PPPD) [9]. | Administer a 3D Real-World Pointing Task (3D-RWPT) to test spatial memory and updating [9]. | Use VR paradigms without optic flow to isolate proprioceptive mismatches; validate with bedside spatial orientation tests [9] [7]. |
| Subject reports exhaustion & frustration, but not nausea [7] | High cognitive strain from resolving persistent sensorimotor mismatches [7]. | Use questionnaires (e.g., SSQ) to differentiate sickness from cognitive load; check task difficulty [7]. | Adjust task difficulty; introduce mismatches gradually to promote adaptation without excessive cognitive load [7]. |
| Worsening of symptoms in challenging balance conditions [9] | Loss of vestibular function (BVP), where vision/somatosensation cannot compensate [9]. | Test stance and gait with eyes closed and on a foam surface; check for corrective saccades via vHIT [9]. | For BVP models, ensure visual or somatosensory cues are available. For PPPD models, reduce non-physiological muscular co-contraction through training [9]. |

Frequently Asked Questions (FAQs)

Q1: What is the core anatomical difference between peripheral and central vestibular systems?

The peripheral vestibular system is located in the inner ear. Each inner ear contains five sensory organs: three semicircular canals (detecting rotational head movements) and two otolith organs (the utricle and saccule, detecting linear acceleration and gravity). The central vestibular system comprises the parts of the central nervous system (brainstem, cerebellum, etc.) that process the balance signals sent from the peripheral organs [10].

Q2: How can I isolate a proprioceptive mismatch from a visual-vestibular conflict in a VR experiment?

To isolate a proprioceptive mismatch, design a VR task where the participant remains seated and the virtual scene contains no optic flow or viewpoint movement. This removes the classic visual-vestibular conflict. Instead, introduce a mismatch between the participant's real hand position and the position of their virtual hand or a manipulated virtual object. This creates a conflict purely between visual and proprioceptive input [7].

Q3: Our VR study includes older adults. Are they more susceptible to VR sickness and dizziness?

Contrary to common assumption, recent evidence suggests that older adults may experience weaker VR sickness symptoms than younger participants. One study found that younger participants reported higher (worse) Simulator Sickness Questionnaire (SSQ) scores. This supports the feasibility of using VR with sensorimotor mismatches for rehabilitation in older populations [7].

Q4: What is the functional link between the vestibular system and a patient's sense of spatial orientation?

The vestibular system provides essential head movement information that the brain uses to continuously update your mental representation of your body's position and motion relative to the environment. This process, called spatial updating, is critical for orientation. Impairment, as seen in Bilateral Vestibulopathy (BVP) or Persistent Postural-Perceptual Dizziness (PPPD), leads to poor accuracy in tasks like pointing to remembered targets after a body rotation [9].

Q5: What is the key mechanistic difference in spatial orientation deficits between BVP and PPPD patients?

Both patient groups show similar deficits in spatial orientation tasks. However, in BVP the cause is an actual loss of peripheral vestibular input. In PPPD, peripheral function is normal, but there is likely an anxiety-driven central suppression of vestibular signals: the brain fails to use the available vestibular information effectively to update spatial awareness [9].

Table 1: Spatial Orientation Performance in Vestibular Disorders (3D-RWPT)

| Cohort | Mean Angular Deviation (Overall) | Mean Angular Deviation (Vestibular-Specific Subtasks) | Spatial Orientation Discomfort |
|---|---|---|---|
| Healthy Controls (HC) | 7.77° ± 2.86° | 4.45° ± 2.33° | Low [9] |
| Bilateral Vestibulopathy (BVP) | 9.62° ± 3.21° | 8.11° ± 5.51° | High [9] |
| Persistent Postural-Perceptual Dizziness (PPPD) | 9.16° ± 3.85° | 6.62° ± 4.46° | High [9] |

Table 2: VR Sickness and User Experience Findings

| Factor | Impact on VR Sickness / User Experience | Key Finding |
|---|---|---|
| Sensorimotor Mismatch | No significant increase in classic VR sickness (nausea) [7]. | Mismatch group reported higher exhaustion/frustration, indicating cognitive strain [7]. |
| Age | Negative correlation with SSQ scores [7]. | Older participants experienced weaker VR sickness symptoms than younger participants [7]. |
| Visual-Vestibular Conflict | Primary cause of VR-induced vertigo and nausea [8]. | Can be mitigated by mapping real-world head acceleration to virtual character movement [8]. |

Detailed Experimental Protocols

Protocol 1: The 3D Real-World Pointing Task (3D-RWPT)

Purpose: To assess spatial orientation and memory by measuring the accuracy of pointing to remembered targets after whole-body rotation [9].

Methodology:

- Setup: The participant is seated on a rotatable chair in a room with multiple static, real-world visual targets.

- Encoding Phase: The participant views and memorizes the locations of all targets.

- Rotation Phase: The participant is passively rotated around the yaw axis with their eyes closed. This requires using vestibular input (or its suppression) to update their position relative to the targets.

- Pointing Phase: After rotation stops, the participant, still eyes-closed, must point to the location of a specified memorized target.

- Measurement: The angular deviation between the pointed direction and the actual target direction is measured.

- Paradigms: The test includes subtasks that emphasize either a cognitive (mental rotation) or a vestibular (body rotation) paradigm [9].

Inclusion Criteria:

- Age between 18 and 65 years.

- Normal scores on a dementia screening test (e.g., Montreal Cognitive Assessment, MoCA).

- For patient groups, diagnosis must be confirmed by an experienced neurotologist according to Bárány Society criteria (e.g., for PPPD or BVP) [9].
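The angular deviation in the 3D-RWPT Measurement step is the angle between two 3D direction vectors, both taken from the same origin (for example, a tracked hand or pointer). One is the direction the participant pointed; the other is the true direction to the target. This is a minimal sketch, assuming both directions are already expressed in one coordinate frame. Computing atan2 of the cross and dot products keeps small angles numerically accurate, which acos of a normalized dot product does not.

```python
import math
import statistics


def angular_deviation_deg(pointed, target):
    """Unsigned angle in degrees between two 3D direction vectors."""
    ax, ay, az = pointed
    bx, by, bz = target
    cross = (ay * bz - az * by, az * bx - ax * bz, ax * by - ay * bx)
    dot = ax * bx + ay * by + az * bz
    cross_norm = math.hypot(*cross)
    if cross_norm == 0.0 and dot == 0.0:
        raise ValueError("direction vectors must be non-zero")
    return math.degrees(math.atan2(cross_norm, dot))


def summarize(trials):
    """Mean and SD of angular deviation over (pointed, target) trial pairs."""
    errors = [angular_deviation_deg(p, t) for p, t in trials]
    return statistics.mean(errors), statistics.stdev(errors)
```

Computing the summary separately for the cognitive and vestibular subtasks gives per-participant values comparable in form to those in Table 1.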

Protocol 2: VR Motor Task with Sensorimotor Mismatch

Purpose: To study the effects of proprioceptive mismatches on VR sickness and motor learning, isolating them from visual-vestibular conflicts [7].

Methodology:

- Setup: Participants are seated and wear a head-mounted display (HMD). They use a motion-tracked controller in a virtual environment.

- Task: A ball-throwing task is performed in VR.

- Intervention Groups:
  - Mismatch Group: Experiences a deliberate, consistent discrepancy between the real hand position and the virtual hand/ball position.
  - Error-based Group: Task difficulty is adjusted based on performance, without artificial mismatch.
  - Errorless Group: Performs the task in a simplified, low-error condition.

- Controls: The VR scene is designed with no optic flow or viewpoint movement to avoid visual-vestibular conflicts. Only the user's virtual arm and the ball move.

- Outcome Measures:
  - VR Sickness: Measured using the Simulator Sickness Questionnaire (SSQ).
  - User Experience: Assessed via custom questionnaires on exhaustion, frustration, etc. [7].
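The mismatch manipulation amounts to transforming the tracked hand position before it is rendered. This is a minimal sketch, assuming positions in metres in a y-up coordinate frame. Here the mismatch is a fixed yaw rotation about a pivot (for example an estimated shoulder position) plus a constant offset. The class and parameter names are hypothetical and not taken from [7]. With the default values the transform is the identity, which corresponds to the groups without artificial mismatch.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Mismatch:
    """Hypothetical constant visuomotor mismatch applied to every tracked frame."""
    yaw_deg: float = 0.0             # rotation about the vertical (y) axis
    pivot: tuple = (0.0, 0.0, 0.0)   # point the rotation is centred on (m)
    offset: tuple = (0.0, 0.0, 0.0)  # translation added after rotating (m)

    def apply(self, real_pos):
        """Map the real hand position to the rendered (virtual) position."""
        px, py, pz = self.pivot
        x, y, z = real_pos[0] - px, real_pos[1] - py, real_pos[2] - pz
        c, s = math.cos(math.radians(self.yaw_deg)), math.sin(math.radians(self.yaw_deg))
        # Rotate in the horizontal x-z plane; the visible direction of a positive
        # angle depends on the engine's handedness convention.
        rx, rz = c * x + s * z, -s * x + c * z
        ox, oy, oz = self.offset
        return (rx + px + ox, y + py + oy, rz + pz + oz)


mismatch_group = Mismatch(yaw_deg=15.0, pivot=(0.2, 1.4, 0.0))  # illustrative values
other_groups = Mismatch()                                       # identity transform
print(mismatch_group.apply((0.2, 1.2, 0.5)), other_groups.apply((0.2, 1.2, 0.5)))
```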

The Scientist's Toolkit: Research Reagent Solutions

| Essential Material / Tool | Function in Vestibular & VR Research |
|---|---|
| Head-Mounted Display (HMD) with 6-DoF Tracking | Creates an immersive visual environment and tracks head movements in six degrees of freedom, crucial for studying the vestibulo-ocular reflex (VOR) and inducing sensory conflicts [7]. |
| Video-Head Impulse Test (vHIT) System | Quantifies the function of the semicircular canals in the high-frequency range of the VOR by measuring eye velocity in response to rapid, passive head rotations [9]. |
| Stabilometer Platform / Posturography | Measures postural sway and balance control under various conditions (e.g., eyes open/closed, on foam), helping to differentiate between organic (BVP) and functional (PPPD) stance disorders [9]. |
| 3D Real-World Pointing Task (3D-RWPT) | A bedside clinical test that provides a simple measure of spatial memory and updating abilities, sensitive to vestibular dysfunction and central suppression of vestibular input [9]. |
| Simulator Sickness Questionnaire (SSQ) | A standardized psychometric tool for quantifying symptoms of VR sickness, with subscales for nausea, oculomotor issues, and disorientation [7]. |

Experimental Workflow and Vestibular Signaling Pathways

Neural Mechanisms of Visual-Vestibular Integration for Self-Motion Perception

Technical Support Center

Troubleshooting Guides

Visual-Vestibular Conflict & Motion Sickness

Issue: Users experience severe motion sickness (VIMS) during VR experiments

- Cause: Mismatch between visual motion cues in the VR environment and absent or conflicting vestibular signals from the inner ear [3] [11].

- Solution:
  - Gradually expose participants to increasing levels of VR intensity to build tolerance [3].
  - Implement "teleporting" movement instead of continuous visual motion in the virtual environment to reduce sensory conflict [11].
  - Incorporate brief, scheduled resting-state periods with eyes closed during the experiment to alleviate symptoms [3].

Issue: Postural instability and ataxia observed after VR exposure

- Cause: Visual-vestibular conflict (VVC) stimulation disrupts normal balance processing. Objective postural instability often occurs after the conflict, not during it [12].

- Solution:
  - Monitor participants' equilibrium immediately after the VR session, not just during exposure.
  - Ensure the testing environment is safe for potential post-experiment imbalance, with support available if needed.

VR Hardware and Software

Issue: Blurry image in the VR headset

- Cause: Poor fit of the VR headset [13].

- Solution: Instruct the participant to move the headset up and down on their face until vision is clear, then tighten the headset dial and adjust the strap [13].

Issue: Image not centered in the VR headset

- Cause: The VR headset is not calibrated correctly [13].

- Solution: While in a module, instruct the participant to look straight ahead and then press the 'C' button on the keyboard [13].

Issue: Lagging image or tracking issues

- Cause: Low frame rate or incorrect base station setup [13].

- Solution:

Issue: Controller or tracker not detected

- Cause: The device is not turned on, is not charged, or needs pairing [13].

- Solution:
  - Ensure the controller/tracker is turned on and fully charged.
  - Re-pair the device through the SteamVR menu [13].

Frequently Asked Questions (FAQs)

Q1: What is the neurophysiological basis of motion sickness in VR? A1: Visually induced motion sickness (VIMS) arises from a sensory conflict between visual information indicating self-motion (vection) and vestibular/somatosensory signals indicating that the body is stationary [3]. This conflict is processed in different neural pathways, leading to subjective autonomic symptoms (nausea) and, later, objective postural instability [12]. In EEG recordings, this state is associated with an increase in slow-wave activity (Delta, Theta, Alpha) in temporo-occipital regions and a general decrease in information flow between brain areas [3].

Q2: Which brain regions are critical for integrating visual and vestibular cues? A2: Key integration sites include the dorsal medial superior temporal area (MSTd) and the ventral intraparietal area (VIP) [14]. These areas contain neurons that respond selectively to both optic flow patterns (visual cues for self-motion) and physical translations in darkness (vestibular cues), making them prime neural substrates for multisensory integration of heading information [14].

Q3: How does the brain weight visual vs. vestibular information? A3: The brain uses a near-optimal, reliability-weighted averaging strategy, formalized by Bayesian cue-integration models and, more generally, by Bayesian causal inference models [15]. Each cue is weighted according to its reliability (the inverse of its variance), so the combined estimate is more precise than either cue alone: the combined reliability is the sum of the individual cue reliabilities [15].

Q4: What experimental measures can capture the neural effects of VIMS? A4: Electroencephalography (EEG) is well-suited for studying VIMS as it can be used during body movement in VR [3]. Key metrics include:

- Spectral Power: A shift to lower frequencies (1-10 Hz) with increasing VIMS intensity [3].

- Transfer Entropy (TE): An information-theoretic measure that shows a decrease in information flow between brain areas, particularly those involved in vestibular processing and self-motion detection, during high VIMS [3].
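Transfer entropy from a source signal Y to a target X measures how much Y's past improves prediction of X's next value, beyond what X's own past already provides. The sketch below makes this concrete with a simple plug-in estimator on discretized signals, using a history length of one sample. The quantile binning and the history length are assumptions. EEG studies such as [3] may use different estimators, embeddings, and bias corrections.

```python
import math
from collections import Counter


def discretize(signal, n_bins=4):
    """Map samples to equal-count (quantile) bins 0..n_bins-1 by rank."""
    order = sorted(range(len(signal)), key=lambda i: signal[i])
    symbols = [0] * len(signal)
    for rank, i in enumerate(order):
        symbols[i] = rank * n_bins // len(signal)
    return symbols


def transfer_entropy(source, target):
    """Plug-in TE(source -> target) in bits with a history length of one sample.

    TE = sum p(x1, x0, y0) * log2[ p(x1 | x0, y0) / p(x1 | x0) ],
    where x0/x1 are the current/next target symbols and y0 the current source symbol.
    """
    if len(source) != len(target) or len(target) < 2:
        raise ValueError("need two equal-length sequences")
    x1s, x0s, y0s = target[1:], target[:-1], source[:-1]
    n = len(x1s)
    c_xxy = Counter(zip(x1s, x0s, y0s))
    c_xy = Counter(zip(x0s, y0s))
    c_xx = Counter(zip(x1s, x0s))
    c_x = Counter(x0s)
    te = 0.0
    for (x1, x0, y0), count in c_xxy.items():
        p_full = count / c_xy[(x0, y0)]    # p(x1 | x0, y0)
        p_self = c_xx[(x1, x0)] / c_x[x0]  # p(x1 | x0)
        te += (count / n) * math.log2(p_full / p_self)
    return te
```

For two channels, one would compare `transfer_entropy(discretize(a), discretize(b))` with the reverse direction. Plug-in estimates are biased upward when there are many bins or few samples, so comparing against time-shuffled surrogate data is a common safeguard.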

Experimental Protocols & Methodologies

Protocol 1: EEG Investigation of VIMS during Controlled VR Conflict

This protocol is designed to systematically study the neurophysiological correlates of increasing visual-vestibular conflict [3].

1. Participant Preparation & Habituation

- Apply EEG cap.

- Attach VR headset (HMD).

- Habituation Phase (10 minutes): Participants freely move their head and upper body for 5 minutes to acclimate to the VR environment, followed by 5 minutes of stillness without movement to establish a baseline state without induced sickness [3].

2. Baseline EEG Recording (2 minutes)

- Participants hold still and keep their eyes closed. This recording serves as the individual baseline for EEG activity [3].

3. Movement Period with EEG Recording

- Initiate continuous EEG recording.

- The avatar in the VR environment is moved externally based on a pre-defined protocol.

- Key Manipulation: Movement speed and freedom are increased step-wise to systematically elevate the level of sensory mismatch [3].

- Participants have no control over the avatar's movement during this period.

4. Resting-State Period (2 minutes)

- After 5 minutes of movement, participants hold still and close their eyes while EEG recording continues [3].

5. Subjective Symptom Assessment

- After each resting-state period, administer the Simulator Sickness Questionnaire (SSQ) to quantify the subjective level of motion sickness [3].

- Repeat steps 3-5 to gather data across multiple intensity levels.

6. Data Analysis

- EEG Analysis: Calculate band power (e.g., Delta: 1-3 Hz, Theta: 4-7 Hz, Alpha: 8-13 Hz) and bivariate transfer entropy between electrode sites.

- Correlation: Associate changes in EEG power and information flow (TE) with SSQ scores across mismatch levels [3].
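The correlation step can be sketched with a rank correlation, which does not assume that SSQ scores and EEG measures are linearly related. Below is a minimal Spearman implementation (the Pearson correlation of average ranks, so ties are handled). Choosing Spearman is an assumption here. Because each participant contributes several mismatch levels, proper inference across participants would need a repeated-measures or mixed-effects model rather than a single pooled correlation.

```python
import math


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they span."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman correlation = Pearson correlation of the average-rank vectors."""
    if len(x) != len(y) or len(x) < 3:
        raise ValueError("need two equal-length sequences of at least 3 values")
    rx, ry = average_ranks(x), average_ranks(y)
    mx, my = sum(rx) / len(rx), sum(ry) / len(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / math.sqrt(var_x * var_y)
```

Typical use would be `spearman_rho(theta_power_by_level, ssq_total_by_level)`, where both argument names are hypothetical per-level summaries for one participant.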

Protocol 2: Psychophysical Heading Discrimination Task

This protocol, adapted from non-human primate studies, investigates the behavioral integration of visual and vestibular cues for self-motion perception [15].

1. Stimulus Conditions

- Visual (Optic Flow): Present a cloud of moving dots simulating self-motion through a 3D environment. The heading direction (left or right of straight ahead) is controlled.

- Vestibular (Inertial Motion): Use a motion platform to deliver passive physical translations. The heading direction is similarly controlled.

- Combined: Present congruent visual and vestibular cues simultaneously.

2. Task Procedure (2-Alternative Forced Choice)

- On each trial, present one of the three stimulus conditions.

- The participant's task is to report whether their perceived heading direction was to the "left" or "right" of straight ahead.

- Vary the heading direction around straight ahead across trials to generate a psychometric function.

3. Data Analysis

- Fit psychometric functions for each condition.

- Extract the discrimination threshold (a measure of precision) and the point of subjective equality (a measure of accuracy).

- Test for Bayesian optimal integration by checking whether the threshold in the combined condition is lower than in either unimodal condition, and whether it matches the reduction predicted from the unimodal cue reliabilities [15].
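The fitting and prediction steps above can be sketched without external libraries. In this sketch, a cumulative Gaussian is fitted by maximum likelihood over a grid of candidate means (the PSE) and standard deviations (used as the threshold). The optimal-integration prediction for the combined threshold is then computed from the two unimodal thresholds. Several simplifications are assumptions: the grid search, the omission of a lapse-rate term, and the use of σ as the threshold (some labs use another criterion, such as 84% rightward). Dedicated psychophysics fitting tools offer more robust fits.

```python
import math


def cum_gauss(x, mu, sigma):
    """P('rightward' response) for heading x under a cumulative Gaussian."""
    return 0.5 * (1.0 + math.erf((x - mu) / (sigma * math.sqrt(2.0))))


def fit_psychometric(headings, n_right, n_total, mu_grid, sigma_grid):
    """Maximum-likelihood cumulative-Gaussian fit by grid search (no lapse term).

    Returns (pse, threshold): the fitted mean and SD. All sigma_grid values
    must be positive.
    """
    eps = 1e-9  # keeps log() finite if a prediction reaches exactly 0 or 1
    best, best_ll = None, -math.inf
    for mu in mu_grid:
        for sigma in sigma_grid:
            ll = 0.0
            for h, k, n in zip(headings, n_right, n_total):
                p = min(max(cum_gauss(h, mu, sigma), eps), 1.0 - eps)
                ll += k * math.log(p) + (n - k) * math.log(1.0 - p)
            if ll > best_ll:
                best, best_ll = (mu, sigma), ll
    return best


def predicted_combined_threshold(sigma_vis, sigma_vest):
    """Optimal-integration prediction from 1/σ²_comb = 1/σ²_vis + 1/σ²_vest."""
    return math.sqrt(sigma_vis**2 * sigma_vest**2 / (sigma_vis**2 + sigma_vest**2))
```

Each condition is fitted separately. A combined-condition threshold that lies below both unimodal thresholds and close to `predicted_combined_threshold(sigma_vis, sigma_vest)` is the behavioral signature of near-optimal integration.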

Signaling Pathways and Neural Workflows

Diagram: Neural Pathway for Visual-Vestibular Integration

Diagram: Experimental Workflow for VIMS Study

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and tools used in research on visual-vestibular integration.

| Item | Function & Application |
|---|---|
| Virtual Reality System | Presents controlled visual motion stimuli (optic flow) to induce vection and create precisely timed visual-vestibular conflicts [3] [11]. |
| Motion Platform | Provides physical inertial motion (vestibular stimulation) to deliver congruent or conflicting vestibular cues in combination with visual stimuli [15] [14]. |
| Electroencephalography (EEG) | Measures millisecond-level changes in brain electrical activity; used to identify spectral power shifts (increased Delta/Theta) and decreased information flow (Transfer Entropy) during VIMS [3] [16]. |
| Eye Tracker | Monitors eye movements and pupil response; critical for controlling for the effects of pursuit eye movements on optic flow and for assessing oculomotor symptoms of VIMS [16]. |
| Simulator Sickness Questionnaire (SSQ) | A standardized self-report metric to quantify the subjective intensity of motion sickness symptoms (nausea, oculomotor, disorientation) during and after VR exposure [3]. |
| Force Plates/Posturography | Objectively measures postural stability and sway to quantify the ataxia and balance disturbances that result from visual-vestibular conflict, often after the VR exposure has ended [12] [13]. |
| Computational Modeling Software | Implements Bayesian causal inference models (e.g., maximum likelihood estimation) to quantitatively predict how the brain weights and combines visual and vestibular cues based on their reliability [15]. |

Table: EEG Spectral Power Changes During VIMS

This table summarizes the changes in EEG frequency bands associated with increasing levels of visually induced motion sickness, based on findings from [3].

| Frequency Band | Frequency Range | Change During Severe VIMS | Brain Regions Most Affected |
|---|---|---|---|
| Delta | 1-3 Hz | Significant Increase | Temporo-Occipital |
| Theta | 4-7 Hz | Significant Increase | Temporo-Occipital |
| Alpha | 8-13 Hz | Significant Increase | Temporo-Occipital |
| Beta | 13-20 Hz | No significant change reported | - |
| Gamma | 21-40 Hz | No significant change reported | - |

Table: Bayesian Cue Integration in Heading Perception

This table outlines the key equations and principles of the Bayesian optimal integration model that explains how visual and vestibular cues are combined, as described in [15].

| Concept | Formula | Explanation |
|---|---|---|
| Combined Estimate | μ_comb = (w_vis * μ_vis) + (w_vest * μ_vest) | The combined heading estimate is a weighted average of the individual cue estimates. |
| Cue Weight | w_i = r_i / (r_vis + r_vest), where r_i = 1/σ_i² | The weight of each cue is proportional to its reliability (inverse variance). |
| Combined Reliability | 1/σ²_comb = 1/σ²_vis + 1/σ²_vest | The reliability of the combined estimate is greater than that of either cue alone. |
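The three relations in this table can be checked numerically. The sketch below assumes Gaussian cue noise. The heading values (in degrees) are hypothetical, and `combine_cues` is an illustrative helper, not code from [15].

```python
from dataclasses import dataclass


@dataclass
class Combined:
    mu: float      # combined heading estimate
    sigma: float   # standard deviation of the combined estimate
    w_vis: float   # weight on the visual cue
    w_vest: float  # weight on the vestibular cue


def combine_cues(mu_vis: float, sigma_vis: float,
                 mu_vest: float, sigma_vest: float) -> Combined:
    """Reliability-weighted (maximum-likelihood) fusion of two Gaussian cues."""
    r_vis = 1.0 / sigma_vis ** 2    # reliability = inverse variance
    r_vest = 1.0 / sigma_vest ** 2
    w_vis = r_vis / (r_vis + r_vest)
    w_vest = r_vest / (r_vis + r_vest)
    mu = w_vis * mu_vis + w_vest * mu_vest
    # Combined reliability is the sum of the individual reliabilities.
    sigma = (1.0 / (r_vis + r_vest)) ** 0.5
    return Combined(mu, sigma, w_vis, w_vest)


if __name__ == "__main__":
    # Hypothetical trial: vision signals 10 deg (sigma 2), vestibular signals 0 deg (sigma 4).
    c = combine_cues(10.0, 2.0, 0.0, 4.0)
    print(f"heading={c.mu:.2f} deg, sigma={c.sigma:.2f}, w_vis={c.w_vis:.2f}")
```

With these inputs the visual cue is four times as reliable as the vestibular cue. Its weight is therefore 0.80, the combined heading is 8.00°, and the combined σ (about 1.79) is smaller than either single-cue σ.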

Bayesian Computational Models of Multisensory Integration and Weighting

Technical Support Center: Troubleshooting Vestibular Conflicts in VR Neuroscience

Frequently Asked Questions (FAQs)

FAQ 1: What is the core computational challenge causing cybersickness in VR experiments? The core challenge is the visual-vestibular conflict. In VR, your visual system signals self-motion (vection), while your vestibular organs report no corresponding acceleration or movement. The brain struggles to resolve this sensory mismatch. Bayesian models frame this as a causal inference problem, where the brain must decide whether visual and vestibular cues come from a common cause (and should be integrated) or independent causes (and should be segregated) [3] [17].

FAQ 2: How can I quantitatively measure the level of conflict or sickness in my participants? You can use a combination of subjective questionnaires and objective neural measures:

- Subjective: The Simulator Sickness Questionnaire (SSQ) is a standard tool for quantifying nausea, disorientation, and oculomotor symptoms [3].

- Objective: Electroencephalography (EEG) can track conflict-related brain activity. Increasing conflict and sickness correlate with increased power in slow EEG waves (1-10 Hz), especially in temporo-occipital regions. Vestibular-Evoked Myogenic Potentials (VEMPs) can also objectively measure changes in vestibular processing after VR exposure [3] [18].
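SSQ subscale scores are weighted sums of item ratings. The sketch below uses the conventional 16-item mapping and weights from Kennedy et al. (1993). The item names are hypothetical keys; check both the mapping and the weights against the questionnaire version used in your lab.

```python
# Item-to-subscale mapping of the 16-item SSQ; each item is rated 0 (none) to 3 (severe).
# Some items load on two subscales by design.
NAUSEA = ["general_discomfort", "increased_salivation", "sweating", "nausea",
          "difficulty_concentrating", "stomach_awareness", "burping"]
OCULOMOTOR = ["general_discomfort", "fatigue", "headache", "eyestrain",
              "difficulty_focusing", "difficulty_concentrating", "blurred_vision"]
DISORIENTATION = ["difficulty_focusing", "nausea", "fullness_of_head", "blurred_vision",
                  "dizzy_eyes_open", "dizzy_eyes_closed", "vertigo"]

ALL_ITEMS = sorted(set(NAUSEA) | set(OCULOMOTOR) | set(DISORIENTATION))


def score_ssq(ratings: dict[str, int]) -> dict[str, float]:
    """Return weighted Nausea, Oculomotor, Disorientation and Total SSQ scores."""
    missing = [item for item in ALL_ITEMS if item not in ratings]
    if missing:
        raise ValueError(f"missing SSQ items: {missing}")
    if any(not 0 <= ratings[item] <= 3 for item in ALL_ITEMS):
        raise ValueError("SSQ ratings must be between 0 and 3")
    n_raw = sum(ratings[item] for item in NAUSEA)
    o_raw = sum(ratings[item] for item in OCULOMOTOR)
    d_raw = sum(ratings[item] for item in DISORIENTATION)
    return {
        "nausea": n_raw * 9.54,
        "oculomotor": o_raw * 7.58,
        "disorientation": d_raw * 13.92,
        "total": (n_raw + o_raw + d_raw) * 3.74,
    }
```

Scoring each administration this way, for example after every rest period in a stepped protocol, produces per-block scores that can be lined up against the EEG measures.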

FAQ 3: My Bayesian model isn't weighting sensory cues correctly. What could be wrong? Incorrect cue weighting often stems from inaccurate reliability estimates. In a Bayes-optimal model, cues should be weighted by their relative reliabilities (inverse variance). Ensure your model's likelihood functions accurately reflect the true noise characteristics of your sensory inputs (e.g., visual reliability for spatial tasks is often higher than auditory) [19] [20]. Furthermore, remember that the "principle of inverse effectiveness" often holds: multisensory integration benefits are largest when individual unisensory cues are weak [19].
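One common diagnostic compares two weights. The first is the weight predicted from unisensory response variability. The second is the weight actually observed in cue-conflict trials. The sketch below assumes separate visual-only, vestibular-only, and conflict blocks with known cue values. The function names and example data are hypothetical.

```python
import statistics


def predicted_visual_weight(vis_only: list[float], vest_only: list[float]) -> float:
    """MLE-predicted visual weight from the spread of unisensory responses."""
    r_vis = 1.0 / statistics.variance(vis_only)    # reliability = 1 / variance
    r_vest = 1.0 / statistics.variance(vest_only)
    return r_vis / (r_vis + r_vest)


def observed_visual_weight(combined: list[float], mu_vis: float, mu_vest: float) -> float:
    """Empirical visual weight: where the mean combined response falls between the cues."""
    if mu_vis == mu_vest:
        raise ValueError("cue-conflict trials need different visual and vestibular values")
    return (statistics.mean(combined) - mu_vest) / (mu_vis - mu_vest)


if __name__ == "__main__":
    w_pred = predicted_visual_weight([9.0, 11.0, 9.0, 11.0], [-2.0, 2.0, -2.0, 2.0])
    w_obs = observed_visual_weight([7.0, 9.0, 8.0, 8.0], mu_vis=10.0, mu_vest=0.0)
    print(f"predicted={w_pred:.2f}, observed={w_obs:.2f}")
```

If the observed weight departs systematically from the predicted one, the likelihood widths in the model probably do not reflect the participant's actual sensory noise.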

FAQ 4: Can a participant's prior expectations really influence multisensory integration in VR? Yes. Prior expectations are a formal component of Bayesian models. Research shows that prior beliefs about causal structure (e.g., a strong "common-cause prior" that sight and sound originate from the same event) can override sensory evidence and dictate whether signals are integrated or segregated. In communicative contexts, for instance, the brain has a stronger prior to integrate vocal and bodily signals that share intent [21].

Troubleshooting Guides

Problem: Participants experience rapid onset of nausea and disorientation.

- Potential Cause: Excessive and unresolvable sensory conflict, where the visual motion signal is strong but completely uncorrelated with vestibular input.

- Solution:

- Calibrate Conflict Levels: Design your VR exposure protocol to start with low-mismatch scenarios (e.g., slow, predictable movements) and gradually increase freedom and speed [3].

- Implement Rest Periods: Follow movement periods with 2-minute resting-state intervals where participants close their eyes. This provides relief and allows for neural recording in a baseline state [3].

- Provide a Habituation Phase: Allow participants 5-10 minutes to get used to the VR environment and their avatar before starting the experimental intervention [3].

Problem: Neural data (e.g., EEG) shows inconsistent results during multisensory tasks.

- Potential Cause: High variability in how participants perform causal inference—some may optimally integrate cues while others use sub-optimal heuristic strategies.

- Solution:

- Use Computational Modeling: Fit participant behavior (e.g., in a spatial localization task) with a Bayesian Causal Inference model. This can identify which strategy a participant is using and isolate the influence of their priors versus sensory likelihoods [21].

- Analyze Information Flow: Calculate measures like Transfer Entropy from EEG data. During intense motion sickness, information flow decreases, especially in brain areas processing vestibular signals and self-motion. This might indicate the brain's strategy to handle an unresolvable conflict [3].
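Transfer entropy TE(X→Y) measures how much the past of X improves prediction of Y's next sample beyond Y's own past. The sketch below is a simple plug-in estimator using quantile binning, a history length of 1, and a lag of 1. It is an illustrative assumption, not the estimator used in [3]. For real EEG, practitioners typically prefer bias-corrected or nearest-neighbour estimators and surrogate-data significance tests.

```python
import math
from collections import Counter

import numpy as np


def _discretize(x: np.ndarray, n_bins: int) -> list[int]:
    """Map a continuous signal to (approximately) equiprobable bins via its quantiles."""
    inner_edges = np.quantile(x, np.linspace(0.0, 1.0, n_bins + 1)[1:-1])
    return np.digitize(x, inner_edges).tolist()


def transfer_entropy(source, target, n_bins: int = 4) -> float:
    """Plug-in estimate of TE(source -> target) in bits (history length 1, lag 1)."""
    s = _discretize(np.asarray(source, dtype=float), n_bins)
    t = _discretize(np.asarray(target, dtype=float), n_bins)
    triples = list(zip(t[1:], t[:-1], s[:-1]))          # (y_next, y_past, x_past)
    n = len(triples)
    c_full = Counter(triples)
    c_past = Counter((yp, xp) for _, yp, xp in triples)  # (y_past, x_past)
    c_self = Counter((yn, yp) for yn, yp, _ in triples)  # (y_next, y_past)
    c_y = Counter(yp for _, yp, _ in triples)            # y_past
    te = 0.0
    for (yn, yp, xp), count in c_full.items():
        p_full = count / c_past[(yp, xp)]     # p(y_next | y_past, x_past)
        p_self = c_self[(yn, yp)] / c_y[yp]   # p(y_next | y_past)
        te += (count / n) * math.log2(p_full / p_self)
    return te


if __name__ == "__main__":
    # Synthetic check: y copies x with a one-sample delay, so information flows x -> y.
    rng = np.random.default_rng(0)
    x = rng.standard_normal(5000)
    y = np.zeros_like(x)
    y[1:] = x[:-1] + 0.1 * rng.standard_normal(4999)
    print(transfer_entropy(x, y), transfer_entropy(y, x))
```

The asymmetry between the two directions is what carries the "directed" interpretation. A drop in TE between channel pairs across mismatch levels would correspond to the reduced information flow described above.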

Problem: Difficulty modeling the dynamic weighting of cues in a real-world task.

- Potential Cause: Using a static model when cue reliabilities change over time or context.

- Solution: Implement a dynamic, learning-enabled model. Crossmodal synaptic plasticity rules can allow a model to learn the relative reliabilities of cues in real-time by capturing stimulus statistics, as demonstrated in robotic spatial localization tasks [20].
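As a much simpler stand-in for a learning-enabled model, cue weights can be made adaptive by tracking each cue's error variance online. This is explicitly a swapped-in technique: an exponentially weighted variance estimator, not the crossmodal synaptic plasticity rule of [20]. It also assumes that ground-truth feedback (for example, a calibration target) is available on every update. The class and parameter names are hypothetical.

```python
class OnlineReliabilityWeighter:
    """Track each cue's error variance online and derive inverse-variance weights.

    Assumes ground-truth feedback on every update; this is an exponentially
    weighted variance estimator, not a synaptic plasticity learning rule.
    """

    def __init__(self, cues=("vis", "vest"), alpha=0.05, init_var=1.0):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.var = {cue: float(init_var) for cue in cues}

    def update(self, estimates: dict, truth: float) -> None:
        for cue, estimate in estimates.items():
            err = estimate - truth
            # Exponential forgetting lets the weights follow changes in reliability.
            self.var[cue] = (1 - self.alpha) * self.var[cue] + self.alpha * err ** 2

    def weights(self) -> dict:
        reliab = {cue: 1.0 / max(v, 1e-12) for cue, v in self.var.items()}
        total = sum(reliab.values())
        return {cue: r / total for cue, r in reliab.items()}

    def fuse(self, estimates: dict) -> float:
        w = self.weights()
        return sum(w[cue] * estimates[cue] for cue in estimates)
```

The forgetting rate `alpha` sets the trade-off. A larger value adapts faster when a cue degrades (for example, when visual noise is increased mid-session) but gives noisier weight estimates.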

Table 1: Quantitative EEG Changes During Visually Induced Motion Sickness (VIMS) [3]

| Brain Region | EEG Frequency Band | Change During Severe VIMS | Functional Interpretation |
|---|---|---|---|
| Temporo-occipital | Delta (1-3 Hz) | Significant Increase | Reduced information processing capacity |
| Temporo-occipital | Theta (4-7 Hz) | Significant Increase | State of drowsiness/discomfort |
| Temporo-occipital | Alpha (8-13 Hz) | Significant Increase | Idling/functional inhibition of cortical areas |
| Widespread | Information Flow (Transfer Entropy) | General Decrease | Reduced transmission and processing of sensory information |

Table 2: Core Principles of Multisensory Integration for Model Design [19]

| Principle | Description | Implication for Bayesian Modeling |
|---|---|---|
| Superadditivity | Multisensory response > sum of unisensory responses. | Often occurs with weak stimuli; can be encoded in the model's decision function. |
| Inverse Effectiveness | Multisensory benefit is greatest when unisensory cues are weakest. | The model should account for dynamic changes in cue reliability. |
| Temporal Window | Stimuli are integrated within a specific time window. | The model's likelihood should incorporate temporal disparity as a cue for segregation. |

Detailed Experimental Protocols

Protocol 1: EEG Investigation of Vestibular Conflict in VR

This protocol is adapted from a study investigating how increasing visual-vestibular mismatch induces motion sickness and alters brain activity [3].

Participant Preparation:

- Recruit healthy, VR-inexperienced subjects.

- Apply a high-density EEG cap according to standard procedures.

- Attach the VR head-mounted display (HMD).

Habituation & Baseline Recording (10 minutes):

- Allow the participant to freely explore a neutral VR environment for 5 minutes to reduce novelty effects.

- Instruct the participant to remain still for 5 minutes with no visual motion.

- Record a 2-minute baseline EEG with eyes closed.

Experimental Intervention:

- Initiate continuous EEG recording.

- Expose the participant to predefined, externally controlled avatar movements in VR.

- Key: Use a stepped protocol where movement speed and freedom are incrementally increased every 5 minutes.

- After each 5-minute movement block, conduct a 2-minute resting-state EEG with eyes closed.

- Administer the Simulator Sickness Questionnaire (SSQ) after each rest period to quantify subjective experience [3].

Data Analysis:

- Preprocess EEG data (filtering, artifact removal).

- Calculate the power spectral density for standard frequency bands (Delta, Theta, Alpha, Beta).

- Compute Transfer Entropy between electrode pairs to assess directed information flow.

- Correlate EEG metrics (e.g., low-frequency power in temporo-occipital channels) with SSQ scores across mismatch levels.
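The spectral step can be sketched with NumPy alone, using a Welch-style average of Hann-windowed periodograms over 50%-overlapping segments. The band limits follow this document's EEG tables but are treated as half-open intervals, so 13 Hz is counted only in beta. Power is in arbitrary units, so only relative comparisons (across bands, blocks, or mismatch levels) are meaningful. The function name, segment length, and sampling rate are assumptions.

```python
import numpy as np

# Band limits in Hz, treated as half-open [low, high) intervals.
BANDS_HZ = {"delta": (1.0, 3.0), "theta": (4.0, 7.0), "alpha": (8.0, 13.0), "beta": (13.0, 20.0)}


def band_powers(signal, fs: float, seg_seconds: float = 2.0) -> dict:
    """Welch-style band power for one EEG channel (arbitrary units)."""
    x = np.asarray(signal, dtype=float)
    seg_len = int(seg_seconds * fs)
    if seg_len < 2 or len(x) < seg_len:
        raise ValueError("signal is shorter than one analysis segment")
    step = seg_len // 2                        # 50% overlap between segments
    window = np.hanning(seg_len)
    scale = fs * np.sum(window ** 2)
    spectra = []
    for start in range(0, len(x) - seg_len + 1, step):
        seg = x[start:start + seg_len]
        seg = (seg - seg.mean()) * window      # remove DC offset, then taper
        spectra.append(np.abs(np.fft.rfft(seg)) ** 2 / scale)
    psd = np.mean(spectra, axis=0)             # averaged periodogram
    freqs = np.fft.rfftfreq(seg_len, d=1.0 / fs)
    df = freqs[1] - freqs[0]
    return {
        name: float(psd[(freqs >= lo) & (freqs < hi)].sum() * df)
        for name, (lo, hi) in BANDS_HZ.items()
    }


if __name__ == "__main__":
    fs = 250.0                                 # assumed sampling rate
    t = np.arange(0, 60, 1 / fs)
    rng = np.random.default_rng(1)
    eeg = np.sin(2 * np.pi * 10 * t) + 0.1 * rng.standard_normal(t.size)
    print(band_powers(eeg, fs))                # a 10 Hz rhythm should dominate alpha
```

Once you have one low-frequency power value and one SSQ total per block, `np.corrcoef(power_per_block, ssq_per_block)[0, 1]` gives a Pearson correlation. A rank-based correlation may be preferable given the ordinal questionnaire data.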

Protocol 2: Psychophysics and Modeling of Audiovisual Integration

This protocol uses a spatial localization task to fit a Bayesian Causal Inference model, revealing the role of priors [21].

Stimuli Design:

- Create audiovisual clips of a speaker.

- Manipulate the spatial disparity between the sound source (voice) and the visual source (speaker's body) across trials (e.g., from congruent to highly disparate).

- Manipulate the pragmatic correspondence:

  - Communicative Condition: Speaker addresses the participant with head, gaze, and speech.

  - Non-communicative Condition: Speaker looks down and produces a meaningless vocalization.

Task Procedure:

- Participants report the perceived location of the sound in each trial.

- The ventriloquist effect is measured as the bias of the auditory position toward the visual position.

Computational Modeling:

- Fit participant responses with a Bayesian Causal Inference model.

- The model estimates:

  - Sensory likelihoods: The reliabilities of auditory and visual spatial cues.

  - Common-cause prior (P_common): The prior belief that the two cues originate from the same source.

- Compare model variants to test whether P_common is higher in the communicative condition, which would indicate that pragmatic expectations guide integration.
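The forward part of the Bayesian Causal Inference model can be sketched as follows. This is the standard Gaussian formulation with a Gaussian spatial prior and model averaging. It shows how P_common and the cue reliabilities determine both the posterior probability of a common cause and the ventriloquist bias. All parameter values and function names are hypothetical. Actually fitting participants would additionally require simulating response distributions and maximizing the likelihood of the observed responses over (σ_a, σ_v, σ_p, P_common), which is not shown.

```python
import math


def _lik_common(x_v, x_a, var_v, var_a, var_p, mu_p):
    """p(x_v, x_a | C=1): both cues generated by a single source location."""
    denom = var_v * var_a + var_v * var_p + var_a * var_p
    quad = ((x_v - x_a) ** 2 * var_p
            + (x_v - mu_p) ** 2 * var_a
            + (x_a - mu_p) ** 2 * var_v) / denom
    return math.exp(-0.5 * quad) / (2 * math.pi * math.sqrt(denom))


def _lik_separate(x_v, x_a, var_v, var_a, var_p, mu_p):
    """p(x_v, x_a | C=2): each cue generated by its own independent source."""
    tv, ta = var_v + var_p, var_a + var_p
    quad = (x_v - mu_p) ** 2 / tv + (x_a - mu_p) ** 2 / ta
    return math.exp(-0.5 * quad) / (2 * math.pi * math.sqrt(tv * ta))


def bci_auditory_estimate(x_v, x_a, sigma_v, sigma_a,
                          sigma_p=20.0, mu_p=0.0, p_common=0.5):
    """Return (posterior probability of a common cause, model-averaged auditory location)."""
    var_v, var_a, var_p = sigma_v ** 2, sigma_a ** 2, sigma_p ** 2
    l_c1 = _lik_common(x_v, x_a, var_v, var_a, var_p, mu_p)
    l_c2 = _lik_separate(x_v, x_a, var_v, var_a, var_p, mu_p)
    post_c1 = l_c1 * p_common / (l_c1 * p_common + l_c2 * (1 - p_common))
    # Optimal location estimates under each causal structure.
    s_fused = ((x_v / var_v + x_a / var_a + mu_p / var_p)
               / (1 / var_v + 1 / var_a + 1 / var_p))
    s_aud_alone = (x_a / var_a + mu_p / var_p) / (1 / var_a + 1 / var_p)
    # Model averaging: weight each estimate by the posterior over causal structures.
    s_hat = post_c1 * s_fused + (1 - post_c1) * s_aud_alone
    return post_c1, s_hat


if __name__ == "__main__":
    for disparity in (5.0, 40.0):   # visual offset (deg) from a sound at 0 deg
        print(disparity, bci_auditory_estimate(disparity, 0.0, sigma_v=2.0, sigma_a=8.0))
```

At small disparity the posterior favours a common cause, and the auditory estimate is pulled toward the visual position (the ventriloquist effect). At large disparity the cues are segregated. Raising `p_common`, as hypothesized for the communicative condition, increases the range of disparities over which integration occurs. For extreme disparities both likelihoods can underflow, and a log-domain implementation would then be safer.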

Experimental Workflow and Signaling Pathways

The diagram below outlines the logical workflow and neural pathways involved in processing multisensory conflict in VR, from the initial stimulus to the perceptual and neural outcomes.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Computational Tools for VR Multisensory Research

| Item / Tool | Function / Description | Example Application |
|---|---|---|
| Head-Mounted Display (HMD) | Presents the controlled visual virtual environment. | Inducing calibrated visual-vestibular conflict for studying cybersickness [3] [18]. |
| Electroencephalography (EEG) | Records electrical activity from the scalp with millisecond temporal resolution. | Tracking changes in brain rhythm power (e.g., increase in theta) during motion sickness [3]. |
| Vestibular-Evoked Myogenic Potentials (VEMP) | Measures vestibular system function via neck muscle responses. | Objectively quantifying changes in vestibular processing after VR exposure [18]. |
| Simulator Sickness Questionnaire (SSQ) | A standardized scale for quantifying subjective symptoms of motion sickness. | Correlating subjective discomfort with objective neural and physiological measures [3] [17]. |
| Bayesian Causal Inference Model | A computational framework to formalize how the brain arbitrates between integrating or segregating sensory cues. | Fitting behavioral data from spatial tasks to quantify the strength of a participant's common-cause prior [21]. |
| Transfer Entropy Analysis | An information-theoretic measure of directed information flow between time series. | Analyzing how sensory conflict reduces information transfer between brain regions from EEG data [3]. |
| Crossmodal Plasticity Learning Rules | Algorithmic rules that allow models to adapt synaptic weights based on sensory experience. | Enabling computational models to learn cue reliabilities in real time, mimicking developmental learning [20]. |

How VR-Induced Sensory Conflicts Manifest as Cybersickness and Balance Impairments

FAQs: Understanding Cybersickness and Balance in VR Research

Q1: What is the primary physiological mechanism behind cybersickness? The most widely accepted explanation is sensory conflict theory. This theory posits that cybersickness arises from a mismatch between visual inputs, which signal self-motion within the virtual environment, and vestibular/proprioceptive inputs, which indicate that the body is stationary [22] [23] [24]. This incongruence is thought to disrupt processing in the vestibular network, leading to symptoms such as nausea, dizziness, and disorientation [23].

Q2: Are certain populations more susceptible to VR-induced balance impairments? Research indicates that susceptibility varies. While one study found that older adults experienced weaker VR sickness symptoms compared to younger participants in a seated motor task [7], another study highlights that the aging balance system, with degenerative changes to sensory inputs, may be more affected by visual perturbations in VR [25]. Interestingly, patients with existing vestibular loss may be less susceptible to the visual-vestibular mismatch that causes cybersickness [26].

Q3: What are the most effective experimental methods for quantifying cybersickness? Subjective questionnaires are the most common method, but objective measures are also used.

- Subjective Measures: The Simulator Sickness Questionnaire (SSQ) [7] [23] [27] and the Virtual Reality Sickness Questionnaire (VRSQ) [22] are standard tools.

- Objective Measures: Neuroimaging techniques like functional near-infrared spectroscopy (fNIRS) can measure cortical activity in brain regions associated with multisensory integration (e.g., temporoparietal junction, angular gyrus) and correlate it with symptom severity [23]. Posturography can also objectively measure balance sway [25].

Q4: Can VR itself be used as a tool for vestibular rehabilitation? Yes. For patients with vestibular dysfunction, VR provides a controlled means of safely exposing them to sensory conflicts [28] [26]. This exposure is intended to drive vestibular compensation and accelerate habituation. Studies have shown VR-based vestibular rehabilitation to be as effective as conventional therapy, with high levels of patient satisfaction [28].

Troubleshooting Guide: Common Experimental Challenges & Solutions

| Symptom / Issue | Possible Cause | Recommended Solution |
|---|---|---|
| High dropout rates due to nausea [25] | Visual-vestibular conflict; prolonged exposure; low frame rates [24]. | ✓ Implement user-initiated techniques: "flamingo pose" balance training or leaning into virtual turns [29]. ✓ Shorten exposure times and incorporate mandatory breaks. ✓ Ensure a high, stable frame rate (e.g., 90 Hz) and optimize graphics to reduce latency [24]. |
| Significant postural sway during/after VR exposure | Intense visual perturbance; conflicting sensory inputs affecting balance control [25]. | ✓ For assessment: Use VR HMD sensors or mobile posturography to objectively quantify sway velocity [25]. ✓ For therapy: Start with low-intensity visual environments and gradually increase perturbance as tolerance builds [26]. |
| Variable susceptibility confounding group results | Individual differences in age, vestibular function, or prior VR experience [7] [24]. | ✓ Pre-screen participants for vestibular history and VR experience. ✓ Stratify random allocation to experimental groups based on these factors. ✓ Use a sham-controlled design for interventions (e.g., sham tDCS) [23]. |
| Lack of engagement in repetitive VR rehab exercises | Monotonous therapeutic content. | ✓ Leverage VR's strength by designing immersive, interactive, and game-like exercises to improve adherence [26] [25]. |

Summarized Quantitative Data from Key Studies

Table 1: Quantitative Findings on Cybersickness and Intervention Efficacy

| Study Focus | Key Metric | Result | Citation |
|---|---|---|---|
| Seated VR Walk | Increase in VRSQ Symptoms | Eye strain (+0.66), General discomfort (+0.6), Headache (+0.43) | [22] |
| Galvanic Vestibular Stimulation (GVS) | Change in Motion Sickness | Beneficial GVS: 26% reduction; Detrimental GVS: 56% increase | [30] |
| Vibration Stimulation | Ride Duration on VR Rollercoaster | Sham: 478 s; Low vibration: 568 s; Medium vibration: 623 s | [29] |
| Music Intervention | Reduction in Motion Sickness | Joyful Music: 57.3%; Soft Music: 56.7%; Stirring Music: 48.3% | [27] |
| Age vs. Sickness | SSQ Score Correlation | Younger participants reported higher (worse) SSQ scores | [7] |

Table 2: Core Components of a VR Neuroscience Toolkit

| Research Reagent / Tool | Function in VR Vestibular Research |
|---|---|
| Head-Mounted Display (HMD) | Presents the immersive virtual environment; often contains built-in sensors (gyroscopes, accelerometers) for tracking head movement and quantifying postural sway [22] [25]. |
| Simulator Sickness Questionnaire (SSQ) | A standard self-report tool for quantifying the severity of cybersickness symptoms (nausea, oculomotor, disorientation) after VR exposure [7] [23]. |
| Transcranial Direct Current Stimulation (tDCS) | A non-invasive brain stimulation technique. Cathodal tDCS over the right temporoparietal junction can modulate cortical activity to reduce cybersickness [23]. |
| Galvanic Vestibular Stimulation (GVS) | A technique that uses electrical current to manipulate vestibular afferent signals, allowing researchers to directly alter vestibular sensory conflict and test its causal role in motion sickness [30]. |
| Functional Near-Infrared Spectroscopy (fNIRS) | A neuroimaging method well suited to measuring cortical activity during VR experiences because of its portability and tolerance of movement. It detects changes in blood oxygenation in brain areas such as the TPJ [23]. |

Detailed Experimental Protocols

Protocol 1: Inducing and Measuring Cybersickness with a Seated VR Walk

This protocol is adapted for studying cybersickness in populations with limited mobility [22].

- Apparatus: Meta Quest 2 HMD, a rotating chair, and a 360-degree video of a visually engaging environment (e.g., a walk through the Venice Canals).

- Procedure:

- Participants complete pre-exposure questionnaires (VRSQ, I-PANAS-SF for emotions).

- Participants sit on a rotating chair and experience the 15-minute VR walk. They are instructed to rotate gently to follow the virtual scenery.

- The testing room should be silent to minimize external sensory input.

- Immediately after the experience, participants complete the VRSQ and I-PANAS-SF again, followed by the Spatial Presence Experience Scale (SPES) and Flow State Scale (FSS).

- Analysis: Compare pre- and post-VRSQ scores to quantify cybersickness. High flow and positive affect scores despite cybersickness symptoms indicate the experience's engaging nature.
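The pre/post comparison is a within-subject design. The sketch below assumes one total VRSQ score per participant per time point, since the scoring details are not given here. It computes the mean change and a paired t statistic. A p-value would come from the t distribution with n − 1 degrees of freedom (for example via `scipy.stats.ttest_rel`). For small samples of ordinal scores, a Wilcoxon signed-rank test may be preferable. The function name and data are hypothetical.

```python
import math
import statistics


def paired_change(pre: list[float], post: list[float]) -> tuple[float, float, int]:
    """Return (mean post-minus-pre change, paired t statistic, degrees of freedom)."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need at least two participants with both pre and post scores")
    diffs = [b - a for a, b in zip(pre, post)]
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)
    if sd_diff == 0:
        raise ValueError("all differences are identical; t is undefined")
    t_stat = mean_diff / (sd_diff / math.sqrt(len(diffs)))
    return mean_diff, t_stat, len(diffs) - 1


if __name__ == "__main__":
    pre_vrsq = [0.0, 1.0, 0.0, 2.0, 1.0]    # hypothetical totals
    post_vrsq = [2.0, 2.0, 1.0, 4.0, 3.0]
    print(paired_change(pre_vrsq, post_vrsq))
```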

Protocol 2: Applying tDCS to Modulate Cybersickness

This protocol uses neuromodulation to target the neural correlates of cybersickness [23].

- Apparatus: tDCS stimulator (e.g., ActivaDose), fNIRS system (e.g., NIRSport2), VR-HMD for a rollercoaster simulation.

- Procedure:

- Participants are randomly assigned to cathodal tDCS or sham stimulation groups.

- For the cathodal group, the cathode electrode is placed over CP6 (right TPJ) and the anode over Cz. A 2 mA current is applied for 20 minutes. The sham group receives only a brief current ramp.

- Before and after stimulation, participants undergo fNIRS scanning to measure cortical activity; the pre-stimulation scan serves as the baseline.

- Participants then experience a VR rollercoaster while fNIRS data is collected.

- The SSQ is administered after the VR exposure.

- Analysis: Compare SSQ scores between groups. Analyze fNIRS data for changes in oxyhemoglobin concentration in the TPJ, angular gyrus, and superior parietal lobule.

Figure 1: Experimental workflow for tDCS modulation of cybersickness.

Protocol 3: Using GVS to Validate Sensory Conflict Theory

This protocol directly tests the causal role of vestibular conflict in motion sickness [30].

- Apparatus: GVS system and a motion platform for passive lateral translations, operated in a dark room.

- Procedure:

- Using a computational model, design two specific GVS waveforms: a "Beneficial" waveform predicted to reduce vestibular sensory conflict and a "Detrimental" waveform predicted to increase it.

- Participants are exposed to 40 minutes of passive lateral translations in the dark under three conditions: Beneficial GVS, No GVS (sham), and Detrimental GVS.

- Motion sickness symptoms are tracked in real time using a scale such as the Misery Scale (MISC).

- Analysis: Compare the rate of motion sickness development (MISC rate per minute) across the three conditions. A statistical model tests for a significant linear effect of GVS condition on symptom severity.
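A simplified version of this analysis fits each participant's MISC time course with a line, taking the slope as the rate per minute. It then tests a linear trend across conditions coded −1 (Beneficial), 0 (No GVS), and +1 (Detrimental). This per-participant contrast with a one-sample t statistic is a swapped-in simplification of the statistical model in [30], which may instead be a mixed-effects model. The condition labels and helper names are hypothetical.

```python
import math
import statistics

import numpy as np

# Linear-trend codes: sickness rate is predicted to increase from Beneficial to Detrimental GVS.
CODES = {"beneficial": -1.0, "none": 0.0, "detrimental": 1.0}


def misc_rate(times_min, misc_scores) -> float:
    """Least-squares slope of MISC score over time (MISC units per minute)."""
    slope, _intercept = np.polyfit(times_min, misc_scores, deg=1)
    return float(slope)


def linear_trend_t(rates_per_participant: list[dict]) -> tuple[float, float]:
    """Mean linear contrast across participants and its one-sample t statistic."""
    contrasts = [sum(CODES[c] * rates[c] for c in CODES) for rates in rates_per_participant]
    mean_c = statistics.mean(contrasts)
    sd_c = statistics.stdev(contrasts)
    return mean_c, mean_c / (sd_c / math.sqrt(len(contrasts)))
```

A positive mean contrast with a large t is the pattern predicted by sensory conflict theory: sickness builds fastest under Detrimental GVS and slowest under Beneficial GVS.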

Figure 2: Signaling pathway of VR-induced sensory conflict leading to symptoms.

Advanced Techniques: GVS, Machine Learning, and VR Protocols for Vestibular Research

Galvanic Vestibular Stimulation (GVS) as a Tool for Manipulating Vestibular Input

Galvanic Vestibular Stimulation (GVS) is a non-invasive technique that applies low-amperage electrical currents to the mastoid processes behind the ears to modulate vestibular system activity [31] [32]. In virtual reality (VR) neuroscience research, GVS serves as a crucial tool for investigating vestibular function and managing sensory conflicts that arise between visual, vestibular, and proprioceptive systems [33] [34]. By artificially generating vestibular signals that can be carefully controlled and dissociated from other sensory inputs, researchers can systematically probe the vestibular system's contributions to posture, gaze control, spatial navigation, and self-motion perception [31] [35].

The relevance of GVS has grown significantly with the expansion of VR applications, where conflicts between visual flow (indicating self-motion) and absent or contradictory vestibular signals (indicating no physical movement) often trigger visually induced motion sickness (VIMS) [33] [34]. Within this context, GVS provides a method to manipulate vestibular input deliberately, offering insights into both the fundamental mechanisms of sensory integration and potential therapeutic interventions for sensory processing disorders.

Key Concepts: Vestibular Conflicts in VR

The Neural Basis of Vestibular Conflict

The vestibular system comprises peripheral organs (semicircular canals and otolith organs) and central pathways that integrate sensory information for balance and spatial orientation [31]. In natural environments, inputs from vestibular, visual, and proprioceptive systems are congruent. In VR, however, sensory mismatches occur when visual stimuli suggest self-motion while vestibular signals indicate static position [33] [34].

GVS acts directly on vestibular afferent nerve fibers, most likely at their terminals near the synapse with the vestibular hair cells, rather than on the hair cells themselves [36]. This stimulation creates artificial signals that the brain interprets as head movement or tilt, allowing researchers to study how the central nervous system resolves conflicting sensory information [31] [36].

GVS as a Probe for Vestibular Processing

Table 1: GVS Stimulation Modalities and Their Primary Applications

| Stimulation Type | Waveform Characteristics | Primary Research Applications | Key Effects |
|---|---|---|---|
| Directional GVS | Square waves or pulses [31] | Investigating vestibular contributions to postural control, gaze stabilization, and self-motion perception [31] | Direction-specific postural sway, nystagmus, and perception of body tilt [31] [37] |
| Noisy GVS (nGVS) | Randomly fluctuating currents [35] | Enhancing sensory integration for spatial cognition; therapeutic applications in balance disorders [35] [32] | Improved spatial memory, reduced postural sway, enhanced balance [35] [38] |
| Sinusoidal GVS | Oscillating currents at specific frequencies [36] | Assessing frequency-dependent vestibular responses; studying vestibulo-ocular reflexes [36] | Frequency-locked postural and ocular responses [36] |

The Scientist's Toolkit: Essential Materials and Equipment

Table 2: Essential Research Reagents and Equipment for GVS Experiments

| Item | Function/Description | Technical Considerations |
|---|---|---|
| Constant Current Stimulator | Delivers precise electrical currents regardless of impedance changes [37] | CE-certified for human research; capable of generating various waveforms (pulse, sinusoidal, noisy) with adjustable parameters [37] |
| Electrode Preparation Gel | Cleans skin and reduces impedance at electrode sites [37] | Commercial skin preparation gels (e.g., Nuprep) improve signal conduction and comfort [37] |
| Conductive Electrode Paste | Ensures stable electrical connection between electrode and skin [37] | High-conductivity paste (e.g., Ten20 Conductive Neurodiagnostic Electrode Paste) minimizes current dispersal [37] |
| Surface Electrodes | Apply current transcutaneously to mastoid processes [31] [32] | Typically round metallic plates (≈8 mm diameter) or carbon rubber electrodes; secured with adhesive tape [36] |
| VR Head-Mounted Display (HMD) | Presents controlled visual environments [35] [34] | High refresh rate, wide field of view, and precise head tracking enhance immersion and experimental control [33] |
| Motion Tracking System | Quantifies postural responses and movement kinematics [36] | Critical for measuring GVS-induced postural sway and behavioral responses [36] |

Experimental Protocols for VR Neuroscience

Basic GVS Setup and Calibration

Protocol Details:

- Electrode Placement: Clean the skin over both mastoid processes with preparation gel to reduce impedance [37]. Apply electrodes with conductive paste, ensuring good contact and secure placement [37] [36].

- Stimulation Parameters: For initial setup, use low-intensity currents (0.5-1.0 mA) with 15-second duration and 2-3 second fade-in/fade-out periods to minimize discomfort [37].

- Sham Stimulation: Implement a credible sham condition with brief fade-in only (not reaching target intensity) to control for placebo effects [37].

- Parameter Refinement: Adjust current intensity based on participant feedback and experimental requirements, typically staying within 0.5-3 mA range for human studies [36].
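The stimulation parameters above can be expressed as a current-versus-time profile before being sent to a stimulator. The sketch builds a trapezoidal envelope (linear fade-in, plateau, fade-out) and enforces the 3 mA ceiling mentioned in this section. It also builds a sham profile that fades in only part of the way and then returns to zero. Several choices are assumptions: the 1 kHz sample rate, the sign convention for polarity, and the sham peak of 50% of target. Driving an actual stimulator is device-specific and is not shown.

```python
import numpy as np

MAX_CURRENT_MA = 3.0   # upper bound for human studies cited in this section


def gvs_profile(target_ma: float, duration_s: float = 15.0, ramp_s: float = 2.5,
                fs: float = 1000.0, sham: bool = False, sham_peak_fraction: float = 0.5):
    """Return (time in s, current in mA) for a ramped GVS trial or a sham trial.

    The sign of target_ma encodes polarity (a hypothetical convention).
    """
    if abs(target_ma) > MAX_CURRENT_MA:
        raise ValueError(f"|target| exceeds {MAX_CURRENT_MA} mA safety limit")
    n = int(round(duration_s * fs))
    t = np.arange(n) / fs
    if sham:
        # Brief fade-in that stops short of the target, then fades back to zero.
        rise = np.clip(t / ramp_s, 0.0, 1.0) * sham_peak_fraction
        fall = np.clip((2 * ramp_s - t) / ramp_s, 0.0, 1.0) * sham_peak_fraction
        envelope = np.minimum(rise, fall)
    else:
        ramp_up = np.clip(t / ramp_s, 0.0, 1.0)                  # fade-in
        ramp_down = np.clip((duration_s - t) / ramp_s, 0.0, 1.0)  # fade-out
        envelope = np.minimum(ramp_up, ramp_down)
    return t, target_ma * envelope
```

Generating active and sham profiles with the same function keeps the fade-in identical across conditions, which is what makes the sham credible.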

Spatial Memory Assessment with nGVS in VR

Protocol Details:

- Stimulation Parameters: Apply nGVS with currents typically between 100 and 500 µA at frequencies around 100 Hz [35]. The random current fluctuations are believed to enhance the detection of weak vestibular signals through stochastic resonance [35].

- VR Environment: Create an ecologically valid virtual environment with object occlusions and spatial landmarks that require allocentric (world-centered) spatial coding [35].

- Task Structure: Implement an encoding phase where participants explore and learn object locations, followed by a recall phase where they navigate to remembered locations [35].

- Outcome Measures: Quantify spatial memory performance using path length (total distance traveled to find objects) and time to completion [35]. In [35], both metrics improved significantly under nGVS compared with sham stimulation.
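Both outcome measures can be computed directly from logged tracking data. The sketch assumes the VR engine logs a timestamp (in seconds) and a position per frame for each recall trial. The logging format and function names are hypothetical.

```python
import math


def path_length(positions: list[tuple[float, ...]]) -> float:
    """Total distance traveled: sum of Euclidean steps between consecutive samples."""
    return sum(math.dist(a, b) for a, b in zip(positions, positions[1:]))


def time_to_completion(timestamps_s: list[float]) -> float:
    """Elapsed time from the first to the last logged sample of a trial."""
    if not timestamps_s:
        raise ValueError("empty trial")
    return timestamps_s[-1] - timestamps_s[0]


if __name__ == "__main__":
    trial = [(0.0, 0.0), (3.0, 4.0), (3.0, 10.0)]   # hypothetical floor-plane positions (m)
    print(path_length(trial), time_to_completion([0.0, 1.5, 4.0]))
```

High-frequency tracking jitter inflates path length. Smoothing or downsampling the positions consistently across nGVS and sham conditions therefore keeps the comparison fair.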

Subjective Postural Vertical Assessment

Objective: Quantify the effect of GVS on perceived body orientation relative to gravity [37].

Methodology:

- Setup: Participants sit blindfolded on a tilting chair that can be manually controlled to various tilt angles [37]. Padding minimizes somatosensory cues from the chair.

- Stimulation Conditions: Apply three conditions in randomized order: right-sided anodal GVS, left-sided anodal GVS, and sham stimulation [37].

- Procedure: For each condition, perform multiple trials (e.g., 8 tilts) starting from different angles. Participants indicate when they perceive themselves to be perfectly upright [37].

- Data Analysis: Measure the deviation from true vertical (Subjective Postural Vertical) under each stimulation condition. In [37], right-anodal GVS produced a significant deviation (approximately 0.87° on average), whereas left-anodal GVS produced less consistent effects.
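The per-condition summary can be sketched as follows. Each trial records the chair angle at which the participant reported being upright. The sketch averages these angles per condition and expresses each condition's mean relative to sham. The sign convention (positive = rightward tilt), the condition labels, and the function name are hypothetical.

```python
import statistics
from collections import defaultdict


def spv_summary(trials: list[tuple[str, float]], reference: str = "sham") -> dict:
    """Mean SPV per condition and its shift relative to the reference condition."""
    by_condition = defaultdict(list)
    for condition, angle_deg in trials:
        by_condition[condition].append(angle_deg)
    if reference not in by_condition:
        raise ValueError(f"no trials for reference condition {reference!r}")
    ref_mean = statistics.mean(by_condition[reference])
    return {
        cond: {
            "mean_deg": statistics.mean(angles),
            "shift_vs_ref_deg": statistics.mean(angles) - ref_mean,
            "n": len(angles),
        }
        for cond, angles in by_condition.items()
    }
```

With about eight tilts per condition, the per-participant shifts can then be compared across right-anodal and left-anodal stimulation to examine the asymmetry.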

Troubleshooting Common Experimental Issues

Participant Discomfort and Motion Sickness

Issue: Participants experience discomfort, dizziness, or nausea during or after GVS application, particularly when combined with VR exposure [33] [34].

Solutions:

- Current Ramping: Implement gradual fade-in and fade-out periods (2-3 seconds) rather than abrupt current onset/offset [37].

- Intensity Titration: Begin with lower currents (0.2-0.5 mA) and gradually increase to target intensity based on individual tolerance [37] [36].

- Session Duration: Limit initial exposure sessions to 5-15 minutes, particularly when combining GVS with potentially provocative VR stimuli [33].

- Symptom Monitoring: Use standardized questionnaires (SSQ, FMS) before, during, and after experiments to quantify symptoms [34].

Excessive Skin Impedance

Issue: High or variable skin impedance reduces stimulation efficacy and increases discomfort.

Solutions:

- Thorough Skin Preparation: Clean mastoid areas with alcohol wipes followed by specialized skin preparation gels (e.g., Nuprep) to remove oils and dead skin cells [37].

- Quality Electrode Paste: Use high-conductivity electrode paste (e.g., Ten20) and ensure adequate application without bridging between electrodes [37].

- Electrode Security: Secure electrodes firmly with adhesive tape or bandages to maintain consistent contact throughout the experiment.

Unclear or Asymmetrical Responses

Issue: GVS produces inconsistent, asymmetrical, or absent behavioral responses across participants.

Solutions:

- Individual Calibration: Account for known inter-individual differences in vestibular sensitivity by calibrating current intensity for each participant [37].

- Postural Context: Standardize initial posture and head position relative to torso, as these factors influence vestibulospinal responses [36].

- Control Conditions: Include adequate sham stimulation and within-subject designs to control for individual response variability [37].

- Response Verification: Implement simple response verification tasks (e.g., standing posture with eyes closed) to confirm basic GVS efficacy before main experiments.

Frequently Asked Questions (FAQs)

Q1: What specific vestibular structures does GVS activate? GVS primarily stimulates the neural afferents rather than the vestibular hair cells themselves [36]. It affects both semicircular canal and otolith afferents, though there is ongoing debate about potential differences in sensitivity between these systems [37]. The stimulation creates a neural firing pattern that the brain interprets as head acceleration or tilt [31].

Q2: How does nGVS differ from traditional GVS, and when should I use each? Traditional directional GVS uses square waves or pulses to create predictable vestibular illusions of body sway or rotation [31]. Noisy GVS (nGVS) employs randomly fluctuating currents that are thought to enhance sensory integration through stochastic resonance [35]. Use directional GVS when studying specific vestibulo-motor responses or creating controlled perceptual illusions. Use nGVS for therapeutic applications or when aiming to improve overall vestibular processing without inducing strong directional biases [35] [32].

Q3: What are the most important safety considerations for GVS? GVS is generally considered safe when standard protocols are followed [32]. Key safety measures include: (1) using constant current stimulators that prevent dangerous current spikes; (2) implementing current limits (typically ≤3 mA for human research); (3) excluding participants with known neurological conditions, vestibular disorders, or metal implants in the head/neck region; and (4) closely monitoring participant comfort and discontinuing immediately upon report of significant discomfort [37] [36].

Q4: Why might GVS effects be asymmetrical between left and right stimulation? Recent research has documented asymmetrical effects, with right-sided anodal GVS producing more consistent effects on subjective postural vertical than left-sided stimulation [37]. This may relate to known right-hemispheric dominance in cortical vestibular processing, though the exact mechanisms require further investigation [37].

Q5: How can I verify that my GVS setup is working correctly? Simple verification methods include: (1) having participants stand with eyes closed and feet together and observing the characteristic postural sway toward the anode during stimulation; (2) measuring nystagmus responses (primarily torsional) if eye movement recording equipment is available; and (3) subjective reports of body tilt or rotation sensations from participants [31] [36].

Noisy GVS (nGVS) Protocols for Enhancing Postural Control and Stability

Frequently Asked Questions (FAQs)

Q1: What is the fundamental mechanism by which nGVS improves postural stability? nGVS applies a low-intensity, random electrical current (zero-mean Gaussian white noise) transcutaneously over the mastoid processes behind the ears. This stimulation modulates the firing activity of vestibular afferent nerves. The mechanism is linked to stochastic resonance, where the addition of a low level of noise can enhance the detection and transmission of weak sensory signals in neural systems, thereby improving the brain's ability to process vestibular information for balance and postural control [39].

Q2: How do I determine the optimal nGVS stimulation intensity for a participant? The optimal intensity is participant-specific and should be determined empirically. An established method applies a range of stimulation strengths (e.g., peak amplitudes of 0, 200, 400, 600, 800, and 1000 µA) while the participant stands quietly. The intensity that yields the minimal root mean square (RMS) of center of pressure (CoP) sway, indicating the best standing stability, is selected as the optimal intensity for subsequent experiments [39].
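The selection rule above reduces to "compute CoP sway RMS per intensity, then take the minimum". The sketch below assumes one quiet-standing trial per intensity, stored as an (n_samples, 2) array of mediolateral and anteroposterior CoP positions. It uses the resultant (two-dimensional) RMS about the mean; the source does not specify whether a resultant or single-axis RMS was used, so treat that as an assumption. The function names and the synthetic data are hypothetical.

```python
import numpy as np


def cop_rms(cop_xy):
    """Resultant RMS of CoP displacement about its mean for an (n_samples, 2) array."""
    cop = np.asarray(cop_xy, dtype=float)
    centered = cop - cop.mean(axis=0)
    return float(np.sqrt(np.mean(np.sum(centered**2, axis=1))))


def select_optimal_intensity(trials):
    """trials: dict mapping peak amplitude (uA) -> CoP array from one quiet-standing trial."""
    rms_by_intensity = {ua: cop_rms(cop) for ua, cop in trials.items()}
    best = min(rms_by_intensity, key=rms_by_intensity.get)
    return best, rms_by_intensity


# Synthetic 30-s trials at 100 Hz; sway is made smallest at 400 uA purely for illustration.
rng = np.random.default_rng(1)
amps = [0, 200, 400, 600, 800, 1000]
trials = {ua: rng.normal(0.0, 5 + abs(ua - 400) / 100, size=(3000, 2)) for ua in amps}
best, table = select_optimal_intensity(trials)
print(best)  # 400 for this synthetic data
```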

Q3: Our participants sometimes experience motion sickness in VR environments. Could nGVS help with this? The primary documented effects of nGVS in this context are on postural control and spatial memory, but there is evidence that GVS can also mitigate motion sickness. That effect is thought to be mediated by a different neural pathway: modulation of the nucleus tractus solitarius (NTS) and vestibular nuclei, which helps suppress the conflicting sensory signals that trigger symptoms such as nausea and dizziness [35]. Whether nGVS reduces VR-induced cybersickness in healthy participants remains an open question for further research.

Q4: What are the typical neurophysiological changes observed in the brain after nGVS? Electroencephalography (EEG) studies show that nGVS can significantly increase EEG power across the theta, alpha, beta, and gamma frequency bands, particularly in the left parietal lobe during both standing and walking tasks. Post-stimulation effects also include changes in EEG activity in the precentral gyrus and right parietal lobe, suggesting that nGVS can modulate cortical regions involved in sensorimotor processing and spatial orientation [39].

Q5: Are the effects of nGVS limited only to the stimulation period? No, research indicates there is a post-stimulation effect. Changes in brain activity, as measured by EEG, can persist after the nGVS has been turned off. This suggests that nGVS can induce short-term neuroplastic changes in the brain, making it a promising tool for therapeutic applications [39].

Troubleshooting Guide

Problem 1: Inconsistent or Lack of Improvement in Postural Stability

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Sub-optimal stimulation intensity | Systematically test a range of currents (0–1000 µA) and measure CoP sway RMS during quiet standing [39]. | Re-calibrate and use the intensity that produces the smallest CoP sway RMS [39]. |
| Poor electrode-skin contact | Check electrode impedance; ensure skin is clean and dry before application. | Use abrasive prepping gel and high-conductivity electrode gel; secure electrodes firmly with tape [39]. |
| Vestibular vs. Visual Mismatch | Assess if the VR visual flow is highly incongruent with vestibular cues. | Simplify the VR visual scene or introduce more congruent self-motion cues to reduce sensory conflict [3]. |

Problem 2: Participant Discomfort or Skin Irritation

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| High stimulation intensity | Check if the current is significantly above the participant's determined optimal level. | Reduce the intensity to the lowest effective level; never exceed safety guidelines. |
| Electrode gel allergy or reaction | Inquire about skin sensitivities; inspect skin for redness. | Switch to a hypoallergenic electrode gel. |
| Prolonged stimulation | Review the protocol duration. | Ensure stimulation sessions are of a standard length (e.g., 6 minutes in some protocols) with adequate breaks [39]. |

Problem 3: Excessive Noise in Neurophysiological Data (e.g., EEG) During nGVS

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Direct electrical interference from nGVS device | Run a test recording with nGVS on and no participant. | Use high-quality, shielded EEG systems; ensure proper grounding; employ artifact removal algorithms (e.g., ICA) during data processing [39]. |
| Motion artifacts | Observe if noise correlates with participant movement. | Instruct the participant to minimize non-task-related head movements where possible. |

Table 1: Effects of nGVS on Postural Control Metrics

| Study Population | Sample Size (n) | Key Outcome Measure | Result with nGVS | Statistical Significance (p-value) |
|---|---|---|---|---|
| BVH Patients & Healthy Subjects [39] | 17 (10 Healthy, 7 BVH) | CoP Sway (RMS) | Significantly Reduced | p < 0.05 |
| BVH Patients & Healthy Subjects [39] | 17 (10 Healthy, 7 BVH) | 2 Hz Head Yaw Quality During Walking | Significantly Improved | p < 0.05 |

Table 2: Effects of nGVS on Spatial Memory Performance in VR

| Study Population | Sample Size (n) | Key Outcome Measure | Result with nGVS | Effect Size (Cliff's Delta) |
|---|---|---|---|---|
| Healthy Adults [35] | 32 | Path Length (PL) | Significantly Shorter | δ = -0.773 to -0.789 (Subtask 2 & 3) |
| Healthy Adults [35] | 32 | Time to Completion (TTC) | Significantly Reduced | Not Reported |

Detailed Experimental Protocols

Protocol 1: nGVS Effects on Postural Stability During Standing and Walking

Objective: To investigate the effects of nGVS on postural stability during standing and walking with head turns.

Materials:

- nGVS Stimulator (e.g., DC-STIMULATOR PLUS)

- Carbon rubber electrodes with conductive gel

- Force plate (e.g., AMTI force plates)

- Motion capture system (e.g., VICON)

- EEG system with 32+ channels

- Metronome

Procedure:

- Electrode Placement: Clean the skin over the mastoid processes bilaterally. Place electrodes coated with conductive gel and secure them.

- Intensity Calibration: Determine the optimal nGVS intensity for each participant by having them stand on a force plate for 30 seconds under different stimulation levels (0–1000 µA). Select the intensity that minimizes CoP sway RMS.

- Experimental Task:
  - Participants perform a block of trials before (pre-stimulation) and after (post-stimulation) a 6-minute period of nGVS at their optimal intensity.
  - Each trial consists of:
    - 5-second Walking: Participants walk while turning their head horizontally every 500 ms (2 Hz) in time with an auditory metronome cue.
    - 5-second Standing: Participants stand still.
  - Motion capture and EEG data are recorded simultaneously throughout the trials.

- Data Analysis:
  - Calculate CoP RMS from force plate data during standing phases.
  - Analyze head movement quality (accuracy, smoothness) from motion capture data during walking.
  - Process EEG data to compute frequency band power (theta, alpha, beta, gamma) in regions of interest like the parietal lobe.
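The band-power step can be sketched for a single EEG channel with a Hann-windowed periodogram, using NumPy only. Several details are assumptions, not taken from the protocol: the band edges (4–8, 8–13, 13–30 and 30–45 Hz) are a common convention, a single periodogram is used rather than Welch averaging, and averaging channels into a parietal region of interest is left to the caller. The function and constant names are hypothetical.

```python
import numpy as np

# Assumed band edges in Hz (a common convention; the protocol does not specify them).
BANDS = {"theta": (4, 8), "alpha": (8, 13), "beta": (13, 30), "gamma": (30, 45)}


def band_powers(eeg, fs):
    """Absolute band power of one EEG channel from a Hann-windowed periodogram."""
    x = np.asarray(eeg, dtype=float)
    x = x - x.mean()  # remove DC offset
    win = np.hanning(len(x))
    spectrum = np.fft.rfft(x * win)
    freqs = np.fft.rfftfreq(len(x), d=1.0 / fs)
    # One-sided power spectral density, normalised by the window energy (units^2/Hz).
    psd = np.abs(spectrum) ** 2 / (fs * np.sum(win**2))
    if len(x) % 2 == 0:
        psd[1:-1] *= 2  # double all bins except DC and Nyquist
    else:
        psd[1:] *= 2  # odd length: no Nyquist bin
    df = freqs[1] - freqs[0]
    return {name: float(psd[(freqs >= lo) & (freqs < hi)].sum() * df)
            for name, (lo, hi) in BANDS.items()}


# A pure 10 Hz sine of amplitude 2 has total power A^2 / 2 = 2, almost all in alpha.
fs = 250
t = np.arange(1000) / fs
print(band_powers(2.0 * np.sin(2 * np.pi * 10 * t), fs))
```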

Protocol 2: nGVS Effects on Spatial Memory in VR

Objective: To assess the impact of nGVS on spatial learning and memory within a virtual reality environment.

Materials:

- nGVS Stimulator and electrodes

- Virtual Reality headset and development platform (e.g., Unity)

- Custom VR spatial navigation task

Procedure:

- Setup: Apply nGVS electrodes as described in Protocol 1.

- Study Design: Use a within-subjects or between-subjects design with two conditions: with-nGVS (active stimulation) and without-nGVS (sham/control).

- VR Task: Participants perform a VR spatial memory task. This typically involves learning and recalling the locations of objects placed in a complex, ecologically valid virtual environment (e.g., with object occlusions and specific lighting).

- Stimulation: Apply nGVS at a pre-determined, safe intensity throughout the learning and recall phases for the active condition.

- Data Collection: Primary metrics are Path Length (PL) and Time to Completion (TTC) for finding the remembered objects. Participant navigation paths are logged from the VR platform.

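- Data Analysis: For a between-subjects design, use a non-parametric test such as the Mann-Whitney U test to compare PL and TTC between the with-nGVS and without-nGVS conditions; for a within-subjects design, use a paired test such as the Wilcoxon signed-rank test. Calculate effect sizes using Cliff's Delta.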
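Cliff's delta and the Mann-Whitney U statistic both count pairwise comparisons between the two conditions, which the following sketch makes explicit. Cliff's delta is the proportion of (x, y) pairs with x > y minus the proportion with x < y. U counts pairs with x > y plus half the ties, so delta = 2U / (n·m) - 1. For p-values, a library routine such as `scipy.stats.mannwhitneyu` is the usual choice. The path lengths below are made-up numbers, and the function names are hypothetical.

```python
import numpy as np


def cliffs_delta(x, y):
    """Cliff's delta: P(X > Y) - P(X < Y) over all (x, y) pairs; ranges from -1 to 1."""
    x = np.asarray(x, dtype=float)[:, None]
    y = np.asarray(y, dtype=float)[None, :]
    return float((np.sum(x > y) - np.sum(x < y)) / (x.size * y.size))


def mann_whitney_u(x, y):
    """U statistic for sample x: pairs with x > y plus half of tied pairs."""
    x = np.asarray(x, dtype=float)[:, None]
    y = np.asarray(y, dtype=float)[None, :]
    return float(np.sum(x > y) + 0.5 * np.sum(x == y))


# Hypothetical path lengths (virtual metres) for the two conditions.
with_ngvs = [41.2, 38.5, 44.0, 39.1]
without_ngvs = [52.3, 47.8, 55.1, 49.0]
print(cliffs_delta(with_ngvs, without_ngvs))  # -1.0: every with-nGVS path is shorter
```

A negative delta means the with-nGVS condition tends to have smaller values, which matches the sign convention of the shorter path lengths reported in Table 2.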

The Scientist's Toolkit: Key Research Reagents & Materials

| Item Name | Function in nGVS Research | Example/Specification |
|---|---|---|
| nGVS Stimulator | Delivers precise, low-current electrical noise signal. | DC-STIMULATOR PLUS (NeuroConn GmbH); capable of generating zero-mean Gaussian white noise [39]. |
| Electrodes | Transcutaneous delivery of current to the vestibular system. | Carbon rubber electrodes (e.g., 25-35 cm²); used with conductive gel to reduce impedance [39]. |
| Force Platform | Quantifies static postural control by measuring center of pressure (CoP). | AMTI force plates; used to calculate CoP sway root mean square (RMS) [39]. |
| Motion Capture System | Quantifies dynamic postural control, gait, and head movement. | VICON system with multiple cameras; tracks body and head kinematics during walking tasks [39]. |
| Electroencephalography (EEG) | Records brain activity to assess cortical effects of nGVS. | 32-channel or higher systems; used to analyze changes in spectral power (theta, alpha, beta, gamma) [39]. |
| Virtual Reality (VR) System | Provides controlled, immersive environments for spatial navigation and sensory conflict studies. | Head-Mounted Display (HMD); paired with a 3D development platform like Unity to create spatial tasks [35]. |

Conceptual and Experimental Workflows

Diagram 1: Proposed Neural Pathways of nGVS Effects. nGVS stimulates vestibular afferents, which project to multiple brain regions. Modulation of brainstem circuits is linked to improved postural control, while influence on hippocampal and striatal networks enhances spatial memory. Changes in parietal cortical activity contribute to optimized sensorimotor integration during navigation.

Diagram 2: Generic Workflow for an nGVS Experiment. This flowchart outlines the common steps in a typical nGVS research protocol, from participant setup and crucial intensity calibration to pre-post testing and data analysis.

Technical Support Center: FAQs & Troubleshooting

This section provides direct answers to common technical issues encountered during VR-based vestibular assessment experiments, particularly those utilizing environmental simulations like subway platforms.

Frequently Asked Questions (FAQs)

Q1: During the subway scene simulation, participants report increased dizziness and sway. Is this a system error or an expected response? A: This is an expected and scientifically documented response, not necessarily a system error. Research shows that for individuals with vestibular hypofunction, moving visual scenes (like a virtual subway) accompanied by audio can significantly increase postural sway, which is a key metric in these assessments [40] [41]. You should verify that your system's tracking is functioning correctly, but the symptom itself is a valid experimental observation.

Q2: The VR image is lagging or has tracking issues during the experiment. How can this be resolved? A: Image lag and tracking issues can severely impact data quality. Please follow these steps:

- Check Frame Rate: Press the 'F' key on the keyboard to display the frame rate; it should be at least 90 fps for a smooth experience [42].

- Restart Systems: Restart the computer and the VR headset. For the headset, you can press the button on the link box twice [42].

- Inspect Base Stations: Ensure the base stations are correctly positioned with a clear line of sight to the headset and trackers. You can run a room setup in SteamVR to reconfigure the play area [42].
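If your pipeline also logs a timestamp for every rendered frame, a short script can document that each session met the 90 fps target. This is an assumption: the 'F'-key overlay described above only displays the rate. The sketch reports the mean frame rate and the fraction of frames that took more than 1.5 times the 90 fps frame budget (about 11.1 ms). The 1.5x threshold, the timestamp format and the function name are hypothetical choices, not features of the Virtualis or SteamVR software.

```python
import numpy as np


def frame_rate_report(frame_times_s, target_fps=90.0, late_factor=1.5):
    """Summarise per-frame timestamps (seconds) against a target frame rate."""
    t = np.asarray(frame_times_s, dtype=float)
    if t.size < 2:
        raise ValueError("need at least two frame timestamps")
    dt = np.diff(t)  # frame-to-frame intervals
    budget = 1.0 / target_fps  # about 11.1 ms per frame at 90 fps
    return {
        "mean_fps": float(1.0 / dt.mean()),
        "late_frame_fraction": float(np.mean(dt > late_factor * budget)),
    }


# One second of perfectly paced frames, then a log with a single doubled interval.
print(frame_rate_report(np.arange(91) / 90.0))
print(frame_rate_report(np.cumsum([0.0] + [1 / 90] * 89 + [2 / 90])))
```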

Q3: A participant's VR headset is not being detected by the system. What are the first steps to troubleshoot this? A: This is typically a connection issue.

- First, verify that the link box (the intermediate box between the PC and headset) is powered ON [42].

- Unplug all connections from the link box and firmly reconnect them [42].

- Finally, reset the headset via the SteamVR application [42].

Q4: The force plates used for measuring postural sway are not being detected by the Virtualis application. A:

- Check the physical USB connection to the computer or hub [42].

- Run an automatic hardware detection within the Virtualis application. This is typically found under Administration > Devices [42].

Q5: How can I ensure our VR system and software are up-to-date for consistent experimental conditions? A:

- Virtualis Application: If connected to the internet, the application should prompt you automatically for updates. For manual updates or systems without web access, contact your local sales representative [42].

- SteamVR: Manually check for updates under the 'Steam' tab in the Steam client by selecting 'Check for Steam Client Updates' [42].

- Windows: Check for updates manually via: Start button > Settings > Update & Security > Windows Update > Check for updates [42].

Troubleshooting Guide for Common Hardware Issues

Table 1: Troubleshooting Common VR Hardware Problems

| Problem | Possible Reason | Solution |
|---|---|---|