Source Memory Assessment Techniques: From Foundational Concepts to Digital Biomarkers in Clinical Research

Abstract

This article provides a comprehensive overview of contemporary source memory assessment techniques, tailored for researchers and drug development professionals. It explores the fundamental cognitive architecture of source memory and its distinction from other memory systems. The scope spans traditional laboratory paradigms, the emergence of digital and virtual reality-based tools, and the critical validation of these methods against established biomarkers. The article further discusses optimization strategies for data fidelity and compares the sensitivity of various assessment types, concluding with their pivotal role in creating cognitive endpoints for clinical trials and early intervention strategies in neurodegenerative and psychiatric diseases.

Deconstructing Source Memory: Cognitive Architecture and Theoretical Frameworks

Conceptual Foundation of Source Memory

Source memory is a critical neuropsychological construct that refers to the ability to recall the contextual details of learned information, such as the spatial, temporal, or situational circumstances in which the information was acquired [1]. This form of memory goes beyond simple item recognition (remembering the content itself) to encompass memory for the source's specific characteristics [2] [1]. For instance, source memory enables an individual to remember not just a particular fact, but where and when it was learned, or from which specific list it originated.

The distinction between item memory and source memory has significant clinical implications. Research indicates these memory types can be dissociated in various populations; for example, patients with frontal lobe lesions often demonstrate disproportionate impairment in source memory while maintaining relatively preserved item memory, whereas medial temporal lobe amnesics typically show the opposite pattern [1]. This dissociation underscores the importance of specialized assessment protocols that can differentially evaluate these memory components.

Contemporary research has expanded our understanding of source memory through innovative paradigms. A 2025 virtual reality study demonstrated that source memory contributions to overall memory performance vary across the lifespan, playing a more substantial role in supporting long-term recall in older adults compared to younger individuals [2]. Furthermore, investigations into false memories have revealed that action complexity significantly influences source memory accuracy, with simple actions more likely to generate forward false memories through automatic mental simulation than complex, multi-step actions [3].

Quantitative Data Synthesis: Source Memory Performance Across Paradigms

Table 1: Quantitative Findings from Source Memory Research (2024-2025)

| Study Focus | Population | Key Metric | Performance Findings | Citation |
|---|---|---|---|---|
| Virtual Reality Assessment | 676 participants (12-85 years) | Source memory contribution to recall | Stronger association in older adults; enhanced long-term recall | [2] |
| False Memory Formation | Experimental participants | Recognition accuracy for action phases | Higher false acceptance for forward vs. backward phases (simple actions only) | [3] |
| Semantic Structure & Recall | 28 young adults (20-34 years) | Central detail recall | Content similarity between events systematically influenced recall | [4] |
| Semantic Structure & Recall | 28 older adults (64-83 years) | Central detail recall | Similar benefit from semantic structure as young adults | [4] |
| Aging & Mnemonic Strategies | Young (18-30) vs. Older (60-75) adults | Word recall with method of loci | Older adults showed equivalent recall to young adults with semantically congruent associations | [5] |

Table 2: Demographic Patterns in Self-Reported Cognitive Disability (2013-2023)

| Demographic Factor | 2013 Rate | 2023 Rate | Change (percentage points) | Notes |
|---|---|---|---|---|
| Overall Population | 5.3% | 7.4% | +2.1 | Steady increase since 2016 |
| Age 18-39 | 5.1% | 9.7% | +4.6 | Nearly doubled |
| Age 70+ | 7.3% | 6.6% | -0.7 | Slight decline |
| Income <$35K | 8.8% | 12.6% | +3.8 | Highest rate increase |
| Income >$75K | 1.8% | 3.9% | +2.1 | Remains lowest rate |
| No High School Diploma | 11.1% | 14.3% | +3.2 | Consistently highest rates by education |

Experimental Protocols for Source Memory Assessment

Virtual Reality-Based Source Memory Assessment

The Suite Test represents a novel approach to source memory assessment through immersive virtual reality technology [2]. This protocol offers enhanced ecological validity by simulating real-world memory demands.

Materials and Setup:

- Hardware: VR headset with motion tracking capabilities

- Software: Suite Test VR environment (furniture shop scenario)

- Recording system for response accuracy and timing

Procedure:

- Environment Familiarization: Participants are immersed in a 360-degree VR furniture store environment.

- Task Instructions: Participants receive audio instructions to group specific furniture items according to different customer families.

- Encoding Phase: Participants interact with furniture items while contextual information (source details) is embedded naturally.

- Source Memory Task: Participants must identify which customer family requested specific items or the location context of items.

- Recall Tests: Immediate recall, short-term delayed recall, and long-term delayed recall are administered.

- Recognition Trial: Final recognition phase assesses source discrimination accuracy.

Data Analysis:

- Source memory accuracy calculated as percentage correct for contextual attributions

- Response time measures for source identification

- Analysis of relationship between source memory and recall performance across age groups

This protocol has demonstrated particular utility in assessing age-related differences, revealing that older adults benefit more from source memory tasks for supporting long-term recall compared to younger participants [2].

Naturalistic Event Recall Paradigm

This protocol examines how semantic structure influences source memory across multiple timepoints in both young and older adults [4].

Materials:

- Stimuli: 8 short videos (3.5-4.5 minutes) depicting life situations

- Video conferencing platform (Microsoft Teams)

- Gorilla Experiment Builder for stimulus presentation

- Audio recording equipment for narrative capture

Procedure:

- Session 1 (Day 1 - Encoding):

- Participants watch 8 videos with preceding titles

- Immediate recall of 4 selected videos prompted by titles

- Audio recordings collected of narrative descriptions

- Session 2 (Day 2 - 24-hour Delay):

- Recall of same 4 videos from Day 1

- Audio recordings collected

- Session 3 (Day 8 - 1-week Delay):

- Recall of all 8 original videos

- Audio recordings collected

Coding and Analysis:

- Transcript Preparation: Verbatim transcription of audio recordings

- Event Segmentation: Identification of discrete events within narratives

- Detail Classification:

- Central details: Essential to storyline (characters, main events)

- Peripheral details: Contextual and perceptual information

- Semantic Network Analysis: Transformation of narratives into event networks based on semantic similarity

- Centrality Metrics: Calculation of semantic connectivity between events

This protocol has revealed that content similarity between events systematically influences recall across testing sessions similarly in both young and older adults, with semantic structure particularly predicting central (but not peripheral) detail recall [4].
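The semantic network and centrality steps can be illustrated with a minimal sketch. It assumes each segmented event is available as a short text string and uses bag-of-words cosine similarity as a stand-in for whatever similarity measure the original study [4] used, which is not specified here. The function names `cosine` and `semantic_centrality` are hypothetical.

```python
import math
from collections import Counter


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def semantic_centrality(events: list) -> list:
    """Mean similarity of each event to every other event in the narrative.

    Assumption: bag-of-words similarity approximates semantic similarity;
    a real pipeline would more likely use sentence embeddings.
    """
    bags = [Counter(text.lower().split()) for text in events]
    centrality = []
    for i, bag in enumerate(bags):
        sims = [cosine(bag, other) for j, other in enumerate(bags) if j != i]
        centrality.append(sum(sims) / len(sims) if sims else 0.0)
    return centrality


# Hypothetical event descriptions from one recalled narrative
events = [
    "anna opens the door",
    "anna closes the door",
    "a dog barks outside",
]
scores = semantic_centrality(events)
```

In this toy example the first two events share most of their words and therefore receive higher centrality than the third, which is the property the protocol relates to central detail recall.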

Action Complexity and False Memory Paradigm

This experimental approach examines how action complexity affects source memory and false memory formation [3].

Materials:

- Stimuli: Photographs depicting simple vs. complex actions

- Computer-based recognition task

- Timing software for response capture

Procedure:

- Encoding Phase:

- Participants view static photos depicting unfolding actions

- Two conditions: Simple single actions vs. complex multi-step actions

- Presentation duration standardized across participants

- Retention Interval: 15-minute delay with distractor task

- Recognition Test:

- Presentation of original photos plus distractor images

- Distractors represent moments temporally forward or backward from original action

- Participants indicate whether they previously saw each image

- Response accuracy and confidence ratings collected

Data Analysis:

- False alarm rates compared between forward and backward distractors

- Analysis of simple vs. complex action conditions

- Signal detection analysis (d') for sensitivity measurement

This protocol has demonstrated that false memories due to implicit forward simulations occur primarily for simple actions but not for complex actions, suggesting different cognitive mechanisms based on action complexity [3].
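The signal detection step in the data analysis can be sketched as follows. This is a minimal illustration, not the analysis code of the cited study [3]: the log-linear correction for extreme rates is an assumption added here, and all counts are hypothetical.

```python
from statistics import NormalDist


def corrected_rate(count: int, total: int) -> float:
    """Log-linear correction (assumption): keeps rates away from 0 and 1
    so that the z-transform stays finite."""
    return (count + 0.5) / (total + 1)


def d_prime(hits: int, n_old: int, false_alarms: int, n_new: int) -> float:
    """Sensitivity index d' = z(hit rate) - z(false alarm rate)."""
    z = NormalDist().inv_cdf
    return z(corrected_rate(hits, n_old)) - z(corrected_rate(false_alarms, n_new))


# Hypothetical counts for the simple-action condition
hits, n_old = 40, 50
d_forward = d_prime(hits, n_old, false_alarms=20, n_new=50)   # forward distractors
d_backward = d_prime(hits, n_old, false_alarms=10, n_new=50)  # backward distractors
```

A lower d' for forward than for backward distractors would correspond to the forward false memory pattern described above; running the same comparison separately for simple and complex actions gives the contrast of interest.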

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Source Memory Research

| Tool/Resource | Primary Function | Application Notes | Representative Use |
|---|---|---|---|
| Suite Test VR Platform | Virtual reality-based memory assessment | Provides ecological validity; minimizes examiner variability; includes embedded source memory tasks | Assessment of source memory contributions across lifespan [2] |
| Method of Loci Materials | Mnemonic strategy implementation | Enhanced with semantic congruence for older adults; requires pre-existing knowledge integration | Episodic memory improvement in aging populations [5] |
| Naturalistic Video Stimuli | Ecologically valid encoding materials | Short films depicting life situations; enable analysis of semantic event structure | Investigation of semantic influences on event recall [4] |
| Action Photograph Series | Controlled stimulus sets for false memory research | Simple vs. complex action sequences; enables forward/backward memory distortion analysis | Testing false memory formation through mental simulation [3] |
| Eye-Tracking Systems | Measurement of overt attention during memory tasks | Correlates with internal attentional orienting in working memory; less engagement in long-term memory tasks | Dissociating attention mechanisms in working vs. long-term memory [6] |
| Neuropsychological Batteries | Standardized cognitive assessment (e.g., ACE-III) | Participant screening; ensures cognitive health in aging studies | Exclusion of participants with significant cognitive impairment [4] |

Methodological Considerations and Future Directions

The assessment of source memory requires careful consideration of several methodological factors. First, the complexity of to-be-remembered material significantly influences memory accuracy, with simple actions more prone to specific types of false memories than complex, multi-step actions [3]. Second, assessment timing and delay intervals critically impact the pattern of results, as source memory contributions to overall recall vary across immediate, short-term, and long-term testing periods [2].

Recent technological advances, particularly virtual reality platforms, offer promising avenues for enhancing the ecological validity of source memory assessment while maintaining experimental control [2]. However, researchers should note that recognition accuracy and confidence may differ between real-life and VR modalities, necessitating careful interpretation of findings [2].

Future research should address the neural mechanisms underlying source memory, building on evidence of frontal lobe involvement in source monitoring [1]. Additionally, investigating individual differences in source memory capacity across the lifespan will inform targeted interventions for populations with source memory deficits, potentially leveraging the semantically congruent mnemonic strategies that have shown promise in older adults [5].

Source memory is a critical component of episodic memory, defined as the neurocognitive system that enables human beings to remember past experiences [7]. Specifically, source memory refers to the ability to recall the contextual details surrounding a memory episode—such as where, when, or from whom information was acquired [7]. This differs from item memory, which simply involves recognizing previously encountered information without contextual details. The cognitive psychology of source memory has generated significant research interest due to its disproportionate vulnerability to neurological conditions, aging, and brain injury compared to other memory forms [7].

Theoretical models of source memory have evolved substantially, with most contemporary frameworks emphasizing the central role of the prefrontal cortex (PFC) in successful source monitoring [7] [8]. Neuroimaging and neuropsychological studies consistently demonstrate that the prefrontal cortex plays a less essential role in memory for items themselves than in memory for the spatio-temporal context in which those items were learned [7]. This paper synthesizes key theoretical models and their experimental support, providing researchers with structured protocols for assessing source memory in both basic and applied settings.

Key Theoretical Models and Supporting Evidence

Prefrontal Cortex (PFC) Mediation Model

The PFC mediation model represents a dominant framework in source memory research, proposing that the lateral prefrontal cortex is fundamentally required for retrieving contextual details about memories [7]. This model distinguishes between two memory processes: (1) item memory, which involves recognizing previously encountered information, and (2) source memory, which requires recalling the contextual details of the encounter.

Table 1: Key Brain Regions Implicated in Source Memory

| Brain Region | Function in Source Memory | Evidence Source |
|---|---|---|
| Lateral Prefrontal Cortex (PFC) | Strategic memory retrieval, monitoring processes | Patient lesion studies [7] |
| Medial Temporal Lobe (MTL) | Binding item and context during encoding | fMRI-EEG multimodal studies [9] |
| Medial Prefrontal Cortex | Reality monitoring (internal vs. external sources) | Brain stimulation studies [8] |
| Temporoparietal Cortex | Internal source monitoring | Systematic review of brain stimulation [8] |
| Precuneus and Left Angular Gyrus | External source monitoring | Brain stimulation studies [8] |

Supporting evidence comes from patient studies demonstrating that individuals with focal lesions in lateral PFC show significant source memory deficits while often maintaining relatively preserved item memory [7]. Event-related potential (ERP) studies further reveal that both older adults and PFC patients exhibit a reduced early positive-going old/new effect compared to young controls, indicating neural processing differences during source retrieval [7]. The model has been refined through non-invasive brain stimulation studies that establish causal—rather than correlational—relationships between PFC function and source monitoring abilities [8].

Frontal Theory of Aging and Source Memory

The frontal theory of aging represents a specialized application of the PFC model, suggesting that age-related source memory declines result from disproportionate deterioration of prefrontal cortex function compared to other brain regions [7]. This model posits that older adults fall on a continuum between young adults and those with frank PFC damage in terms of source memory performance.

Table 2: Age-Related Differences in Memory Performance

| Memory Measure | Young Adults | Older Adults | PFC Patients |
|---|---|---|---|
| Item Hit Rate | Normal | Decreased | Normal |
| False Alarm Rate | Normal | No change | Increased |
| Source Accuracy | Normal | Disproportionately impaired | Disproportionately impaired |
| Early Old/New ERP Effect | Prominent | Reduced | Reduced |
| Left Frontal Negativity (600-1200 ms) | Not observed | Prominent | Smaller and delayed |

Interestingly, this model accommodates the counterintuitive finding that older adults sometimes show greater prefrontal activation than young adults during source memory tasks, interpreted as a compensatory mechanism for declining efficiency in other brain regions [7]. This compensation hypothesis suggests that cumulative declines in posterior memory processing regions place increased demands on prefrontal executive functions, resulting in recruitment of additional neural resources to maintain task performance.

Source Monitoring Framework

The source monitoring framework conceptualizes source memory as a decision process rather than a direct retrieval process. This model proposes three distinct subprocesses: (1) internal source monitoring (discriminating between self-generated sources), (2) reality monitoring (distinguishing between internal and external sources), and (3) external source monitoring (discriminating between different external sources) [8].

Brain stimulation studies provide causal evidence for distinct neural correlates underlying these subprocesses. Internal source monitoring depends on the lateral prefrontal and temporoparietal cortices, while reality monitoring engages the medial prefrontal and temporoparietal cortices, and external source monitoring relies on the precuneus and left angular gyrus [8]. This framework has particular clinical relevance for understanding conditions like schizophrenia, where source monitoring deficits are prominent.

Developmental Perspective on Source Memory Formation

Emerging research examines the development of source memory in children, revealing the importance of medial temporal lobe (MTL) maturation. Multimodal analysis combining EEG and fMRI has identified cortical sources of EEG signals during memory encoding that predict subsequent source memory performance in children aged 4-8 years [9].

Two specific EEG components—the P2 and late slow wave (LSW)—have been source-localized to MTL cortical areas, validating the MTL's crucial role in both early-stage information processing and late-stage memory integration [9]. The P2 effect was localized to all six tested subregions of cortical MTL in both hemispheres, while the LSW effect was specifically localized to the parahippocampal cortex and entorhinal cortex [9]. This developmental model highlights the progressive maturation of neural networks supporting source memory, with implications for understanding typical and atypical memory development.

Experimental Protocols for Source Memory Assessment

Item-Source Recognition Paradigm

The item-source recognition paradigm represents a standard approach for simultaneously assessing item memory and source memory [7]. This protocol presents participants with stimuli from different sources during the encoding phase, followed by a test phase that evaluates both item recognition and source identification.

Materials and Setup:

- Stimulus presentation software (e.g., E-Prime, PsychoPy)

- 200-400 study items (words, images, or sentences)

- Two distinct source contexts (e.g., male/female voice, left/right screen location, List 1/List 2)

- EEG recording equipment (optional, for neural correlates)

Procedure:

- Encoding Phase: Present items sequentially, each associated with a specific source context.

- Distractor Task: Implement a 5-10 minute mathematical or verbal task to prevent rehearsal.

- Test Phase: Present old and new items in random sequence.

- Item Recognition: For each test item, participants first indicate "old" or "new."

- Source Identification: For items judged "old," participants identify the source context.

- Data Collection: Record accuracy and reaction time for both item and source judgments.

Data Analysis:

- Calculate corrected item recognition accuracy (hit rate minus false alarm rate)

- Compute source memory accuracy (proportion correct for items correctly recognized)

- Compare performance across experimental conditions or participant groups
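The first two analysis steps can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `Trial` record and its field names are hypothetical, and source accuracy is computed conditionally on correct item recognition, as the protocol describes.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Trial:
    is_old: bool                  # item was studied
    said_old: bool                # participant responded "old"
    true_source: Optional[str]    # None for new items
    chosen_source: Optional[str]  # None when the response was "new"


def score(trials: list) -> dict:
    """Corrected recognition (hit rate - false alarm rate) and source
    accuracy conditional on a correct 'old' judgment."""
    old = [t for t in trials if t.is_old]
    new = [t for t in trials if not t.is_old]
    hits = [t for t in old if t.said_old]
    hit_rate = len(hits) / len(old)
    fa_rate = sum(t.said_old for t in new) / len(new)
    source_correct = sum(t.chosen_source == t.true_source for t in hits)
    return {
        "corrected_recognition": hit_rate - fa_rate,
        "source_accuracy": source_correct / len(hits) if hits else float("nan"),
    }


# Hypothetical data: 4 studied items (3 recognized, 2 with correct source), 2 new items
trials = [
    Trial(True, True, "male", "male"),
    Trial(True, True, "female", "female"),
    Trial(True, True, "male", "female"),
    Trial(True, False, "female", None),
    Trial(False, True, None, "male"),
    Trial(False, False, None, None),
]
result = score(trials)
```

With two source contexts, chance for the conditional source score is 0.5, which is worth keeping in mind when comparing groups.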

EEG-ERP Protocol for Neural Correlates

This protocol measures event-related potentials (ERPs) during source memory tasks to capture the neural time course of retrieval processes [7]. The method is particularly valuable for identifying timing differences in cognitive processing across populations.

Materials and Setup:

- High-density EEG system (64-128 channels)

- Electrically shielded and sound-attenuated testing room

- Stimulus presentation system synchronized with EEG acquisition

- ERP analysis software (e.g., EEGLAB, ERPLAB)

Procedure:

- Participant Preparation: Apply EEG cap following standard positioning guidelines.

- Impedance Check: Ensure all electrode impedances are below 5 kΩ.

- Task Administration: Implement item-source recognition paradigm.

- EEG Recording: Continuous recording during test phase with event markers.

- Data Preprocessing: Filtering, artifact rejection, eye-blink correction.

- ERP Analysis: Epoch extraction (-200 to 1200 ms), baseline correction.

- Component Measurement: Focus on early old/new effect (300-500 ms) and late frontal effect (600-1200 ms).

Key ERP Components:

- Early Old/New Effect: Widespread positive component (300-500 ms) associated with item recognition

- Late Frontal Effect: Sustained positivity at prefrontal sites (700+ ms) linked to source retrieval
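The epoching, baseline correction, and component measurement steps can be sketched on already-averaged waveforms. This is a simplified, pure-Python illustration, not an EEGLAB or ERPLAB workflow: it assumes one channel, ERPs stored as lists of microvolt values, and a matching list of sample times in milliseconds. The function names are hypothetical.

```python
def window_mean(signal, times_ms, start_ms, end_ms):
    """Mean of the samples whose time stamp falls in [start_ms, end_ms)."""
    samples = [v for v, t in zip(signal, times_ms) if start_ms <= t < end_ms]
    if not samples:
        raise ValueError("no samples in the requested window")
    return sum(samples) / len(samples)


def baseline_corrected_amplitude(erp, times_ms, window, baseline=(-200, 0)):
    """Mean amplitude in `window` after subtracting the pre-stimulus baseline."""
    base = window_mean(erp, times_ms, *baseline)
    return window_mean(erp, times_ms, *window) - base


def old_new_effect(old_erp, new_erp, times_ms, window=(300, 500)):
    """Old-minus-new difference in baseline-corrected mean amplitude."""
    return (baseline_corrected_amplitude(old_erp, times_ms, window)
            - baseline_corrected_amplitude(new_erp, times_ms, window))


# Synthetic example: epoch from -200 to 1200 ms sampled every 4 ms (250 Hz)
times = list(range(-200, 1200, 4))
new_erp = [1.0 for _ in times]                                      # flat waveform
old_erp = [1.0 + (3.0 if 300 <= t < 500 else 0.0) for t in times]   # added positivity
effect = old_new_effect(old_erp, new_erp, times)
```

Passing a later window to the same function gives the corresponding measure for the late frontal effect.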

Non-Invasive Brain Stimulation Protocol

Non-invasive brain stimulation techniques like transcranial magnetic stimulation (TMS) and transcranial direct current stimulation (tDCS) enable causal investigation of brain-behavior relationships in source memory [8].

Materials and Setup:

- TMS or tDCS system with neuronavigation (recommended)

- MRI-compatible brain marker set for individual neuronavigation

- Source memory task programmed for computer administration

- Sham stimulation capability for controlled conditions

Procedure:

- Target Identification: Determine stimulation coordinates based on individual MRI or standardized system.

- Stimulation Protocol: Apply stimulation before or during task performance.

- Control Condition: Implement sham stimulation using identical procedures.

- Task Administration: Administer source memory task following stimulation.

- Data Analysis: Compare source memory performance between active and sham conditions.

Stimulation Parameters (example for tDCS):

- Intensity: 1-2 mA

- Duration: 20-30 minutes

- Electrode Size: 25-35 cm²

- Target Regions: Based on source monitoring subprocess of interest
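Because intensity and electrode size are listed separately, it can help to derive the nominal current density they imply when planning or reporting a montage. The helper below is a simple arithmetic sketch; current density is not a parameter named in the protocol above, and the function name is hypothetical.

```python
def current_density(intensity_ma: float, electrode_area_cm2: float) -> float:
    """Nominal current density in mA/cm^2 (intensity divided by electrode area)."""
    if electrode_area_cm2 <= 0:
        raise ValueError("electrode area must be positive")
    return intensity_ma / electrode_area_cm2


# Extremes of the example parameter ranges listed above
lowest = current_density(1.0, 35.0)    # 1 mA over a 35 cm^2 electrode
highest = current_density(2.0, 25.0)   # 2 mA over a 25 cm^2 electrode
```

Across the listed ranges, the nominal density therefore spans roughly 0.03 to 0.08 mA/cm².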

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Source Memory Research

| Research Tool | Function/Application | Example Use in Source Memory Research |
|---|---|---|
| High-Density EEG Systems | Recording neural activity with high temporal resolution | Measuring ERP components during source retrieval [7] |
| fMRI Equipment | Localizing neural activity with high spatial resolution | Identifying brain regions engaged during source encoding [9] |
| TMS/tDCS Systems | Non-invasive brain stimulation for causal inference | Establishing causal role of PFC in source monitoring [8] |
| E-Prime/PsychoPy Software | Precisely controlled stimulus presentation | Administering item-source recognition paradigms [7] |
| Neuronavigation Systems | Precisely targeting brain stimulation | Ensuring accurate stimulation of target regions [8] |
| Structural MRI | Individual anatomical reference | Guiding stimulation targets and interpreting results [8] |

Integrated Neural Pathways in Source Memory

The integrated neural pathway illustrates how source memory processing unfolds across multiple brain systems. During encoding, perceptual information undergoes item-context binding primarily in the medial temporal lobe, while contextual tags are associated through prefrontal cortex engagement [9] [10]. During retrieval, cues trigger strategic monitoring processes that depend on both MTL and PFC systems before a final source decision is made [7] [8]. This pathway highlights the dynamic interaction between temporal and frontal regions throughout the source memory lifecycle, with the PFC playing particularly critical roles in strategic retrieval and monitoring functions that enable accurate source identification.

Distinguishing Source Memory from Working and Long-Term Memory

Source memory refers to the neurocognitive ability to recall the contextual details surrounding a memory episode, such as the spatial, temporal, and sensory characteristics of the original learning event. This complex memory function can be dissociated from the contents of the memory itself and relies on distinct neural circuitry, primarily involving the prefrontal cortex and medial temporal lobe regions. Within the broader framework of memory systems, source memory operates at the intersection of working memory, which maintains and manipulates transient information, and long-term memory, which stores information more permanently. Understanding these distinctions is particularly crucial for developing precise assessment techniques in clinical and research settings, especially for neurodegenerative conditions like Alzheimer's disease and related dementias where source memory deficits often present as early markers [11] [12].

The accurate differentiation of these memory systems has profound implications for diagnosing cognitive impairment, evaluating therapeutic efficacy in drug development, and advancing fundamental memory research. This document provides researchers and drug development professionals with standardized protocols and analytical frameworks for distinguishing these memory systems, with particular emphasis on source memory assessment techniques relevant to clinical trial methodologies and experimental neuroscience.

Comparative Framework of Memory Systems

Table 1: Key Characteristics of Working, Long-Term, and Source Memory

| Feature | Working Memory | Long-Term Memory | Source Memory |
|---|---|---|---|
| Capacity | Limited (≈4-9 items) [13] | Virtually unlimited [13] | Context-dependent |
| Duration | 20-30 seconds without rehearsal [13] | Minutes to lifetime [13] | Variable; often degraded faster than factual content |
| Primary Function | Active maintenance and manipulation of temporary information [14] | Storage and retrieval of enduring information [13] | Contextual attribution and reality monitoring |
| Neural Correlates | Prefrontal cortex; Contralateral Delay Activity (CDA) [14] | Hippocampus and medial temporal lobe [13] [15] | Prefrontal-hippocampal interactions |
| Assessment Methods | Change detection tasks, n-back paradigms [14] [16] | Recall, recognition, priming tests [13] | Source monitoring paradigms |
| Vulnerability to Neurodegeneration | Early degradation in Alzheimer's disease [11] | Progressive impairment across dementia stages [12] | Early significant impairment in dementia [12] |

Table 2: Quantitative Assessment Metrics Across Memory Systems

| Assessment Tool | Memory System Measured | Administration Time | Key Parameters | Clinical Applications |
|---|---|---|---|---|
| Change Detection Task [14] [16] | Working Memory | 5-10 minutes | Capacity (K), Precision, CDA amplitude [14] | Early cognitive impairment screening |
| Delayed Estimation Task [16] | Visual Working Memory | 10-15 minutes | Response distribution, Swap errors [16] | Large-scale cognitive phenotyping |
| Montreal Cognitive Assessment (MoCA) [12] | Multiple domains | 10-15 minutes | Total score (0-30), Domain subscores | Dementia screening and monitoring |
| Spaced Learning Protocol [15] | Long-term memory encoding | 60 minutes | Retention intervals, Retrieval accuracy [15] | Therapeutic intervention evaluation |
| Source Monitoring Paradigm | Source memory | 15-20 minutes | Source attribution accuracy, Confidence ratings | Reality monitoring assessment in trials |

Experimental Protocols for Memory Assessment

Change Detection Protocol for Working Memory Assessment

Purpose: To quantify visual working memory capacity and active maintenance mechanisms using contralateral delay activity (CDA) measurement [14].

Materials: Computer system with precision timing capability, EEG setup with 64+ channels, stimulus presentation software, lateralized color squares or shapes.

Procedure:

- Participant Preparation: Apply EEG electrodes according to the 10-20 system, with particular attention to posterior sites (P7, P8, PO7, PO8).

- Trial Structure:

- Fixation cross (500 ms)

- Memory array (100 ms) displaying 2-4 colored squares lateralized to left or right visual field

- Retention interval (900-1000 ms) with blank screen

- Test array (2000 ms) requiring same/different judgment for one probed item

- Dual-Task Condition: Introduce a simple foveal discrimination task (e.g., C vs. mirror-reversed C) during the retention interval to assess active maintenance disruption [14].

- Data Collection: Minimum of 200 trials per set size condition, counterbalanced across visual fields.

- CDA Calculation: Compute difference waves between contralateral and ipsilateral hemispheres during retention interval, focusing on 300-600 ms post-stimulus window.

Analysis:

- Calculate working memory capacity: K = S × (H - F), where S = set size, H = hit rate, F = false alarm rate

- Quantify CDA amplitude as mean voltage 400-600 ms post-stimulus

- Compare CDA disruption between single-task and dual-task conditions [14]
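The capacity formula and the CDA amplitude measure can be sketched as follows. This is a minimal illustration, assuming hit and false alarm rates are already computed per set size and that contralateral and ipsilateral waveforms are averaged single-channel lists aligned to memory-array onset. The function names and all numeric values are hypothetical.

```python
def cowan_k(set_size: int, hit_rate: float, fa_rate: float) -> float:
    """Working memory capacity estimate: K = S x (H - F)."""
    return set_size * (hit_rate - fa_rate)


def cda_amplitude(contra, ipsi, times_ms, window=(400, 600)):
    """Mean contralateral-minus-ipsilateral voltage within the analysis window."""
    diff = [c - i for c, i, t in zip(contra, ipsi, times_ms)
            if window[0] <= t <= window[1]]
    if not diff:
        raise ValueError("no samples in the requested window")
    return sum(diff) / len(diff)


# Hypothetical behavioral data for set size 4
k = cowan_k(set_size=4, hit_rate=0.85, fa_rate=0.10)

# Synthetic waveforms: constant -2.0 uV contralateral, -0.5 uV ipsilateral
times = list(range(0, 1000, 4))
contra = [-2.0 for _ in times]
ipsi = [-0.5 for _ in times]
cda = cda_amplitude(contra, ipsi, times)
```

Computing `cda_amplitude` separately for single-task and dual-task trials gives the disruption contrast named in the last analysis step.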

Source Memory Assessment Protocol

Purpose: To evaluate contextual memory binding and source attribution accuracy.

Materials: Audio recording equipment, diverse stimulus set (images, words), contextual detail database.

Procedure:

- Encoding Phase:

- Present 100 items (50 images, 50 words) across two sessions (different days/times)

- Vary presentation context: spatial location (left/right screen), temporal order, sensory modality (visual/auditory), speaker gender (for words)

- Incorporate intentional encoding instructions: "Remember both the item and its presentation details"

- Retention Interval: 20-minute distractor task

- Retrieval Phase:

- Present recognition test with 150 items (100 old, 50 new)

- For each item identified as "old," administer source judgment: "Where was this item presented?" with forced-choice options

- Collect confidence ratings (1-4 scale) for each source attribution

- Experimental Manipulations:

- Include misleading contextual information for subset of trials

- Vary retention intervals (20 minutes vs. 48 hours) to assess durability

Analysis:

- Calculate source memory accuracy: Proportion of correct source attributions for correctly recognized items

- Compute source monitoring discrimination: d' for source judgments

- Analyze confidence-accuracy relationship for source versus item memory

- Assess binding errors: Frequency of contextual feature recombination
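The confidence-accuracy analysis can be illustrated with a short sketch. It assumes each source judgment is stored as a pair of confidence rating (1-4) and correctness, a hypothetical data layout that is not prescribed by the protocol.

```python
from collections import defaultdict


def accuracy_by_confidence(judgments):
    """Proportion of correct source attributions at each confidence level.

    `judgments` is an iterable of (confidence, correct) pairs, where
    confidence is 1-4 and correct is a bool.
    """
    counts = defaultdict(lambda: [0, 0])  # confidence -> [n_correct, n_total]
    for confidence, correct in judgments:
        counts[confidence][0] += int(correct)
        counts[confidence][1] += 1
    return {c: n_correct / n_total
            for c, (n_correct, n_total) in sorted(counts.items())}


# Hypothetical source judgments for one participant
judgments = [(1, False), (1, True), (2, True), (2, False),
             (3, True), (4, True), (4, True)]
calibration = accuracy_by_confidence(judgments)
```

Accuracy that rises with confidence indicates a well-calibrated participant; applying the same function to item judgments allows the source versus item comparison named above.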

Long-Term Memory Consolidation Protocol

Purpose: To evaluate protein synthesis-dependent long-term memory formation using spaced learning paradigms [15].

Materials: Complex educational material (e.g., biology curriculum), distractor tasks, retention assessment tools.

Procedure:

- Stimulus Preparation: Compress target information into three 20-minute instructional periods

- Spacing Protocol:

- First learning episode: 20 minutes intensive instruction

- First distractor period: 10 minutes of unrelated cognitive tasks

- Second learning episode: 20 minutes same content, different presentation

- Second distractor period: 10 minutes

- Third learning episode: 20 minutes review and integration

- Retention Testing: Administer comprehensive tests at multiple intervals (immediately, 24 hours, 1 week, 1 month)

- Control Condition: Implement massed learning (60-minute continuous instruction) for comparison

Analysis:

- Compare retention rates between spaced and massed conditions across time intervals

- Calculate learning efficiency: Score increase per instructional hour

- Assess durability of memory traces through forgetting curves

- Evaluate protein synthesis dependence through pharmacological interventions when ethically permissible
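
The retention and efficiency measures listed above can be sketched as follows. The function names and score dictionaries are hypothetical; the sketch assumes that both conditions receive 60 minutes of instruction in total and that retention is expressed relative to the immediate test.

```python
def learning_efficiency(post_score, pre_score, instruction_minutes):
    """Score increase per instructional hour."""
    if instruction_minutes <= 0:
        raise ValueError("instruction_minutes must be positive")
    return (post_score - pre_score) / (instruction_minutes / 60.0)


def forgetting_curve(scores_by_interval):
    """Retention at each interval as a fraction of the immediate score.

    scores_by_interval: dict such as
      {"immediate": 80, "24h": 60, "1wk": 52, "1mo": 44}
    """
    baseline = scores_by_interval["immediate"]
    if baseline <= 0:
        raise ValueError("immediate score must be positive")
    return {label: score / baseline for label, score in scores_by_interval.items()}


def retention_advantage(spaced_scores, massed_scores):
    """Difference in retained fraction (spaced minus massed) per interval."""
    spaced = forgetting_curve(spaced_scores)
    massed = forgetting_curve(massed_scores)
    return {label: spaced[label] - massed[label] for label in spaced if label in massed}
```

Expressing retention as a fraction of the immediate score separates durability from differences in initial learning; raw scores can be compared alongside it.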

Research Reagent Solutions

Table 3: Essential Materials for Memory Assessment Research

| Reagent/Resource | Function/Application | Specification Notes |

|---|---|---|

| EEG/ERP Systems | Neural correlates of working memory (CDA) [14] | 64+ channels; precision timing capability |

| fMRI Compatibility Tools | Functional imaging during source retrieval | High-resolution (3T+); hippocampal-focused protocols |

| Standardized Cognitive Batteries | Clinical memory assessment | MoCA, AD8 [12] |

| Custom Stimulus Presentation Software | Experimental control and timing | Millisecond precision; parallel port synchronization |

| Neuropsychological Assessment Tools | Baseline cognitive screening | Validated for target population |

| Biochemical Assay Kits | Protein synthesis markers (CREB) | LTP-related protein detection [15] |

| Data Analysis Platforms | Modeling of memory parameters | QCE-VWM framework implementation [16] |

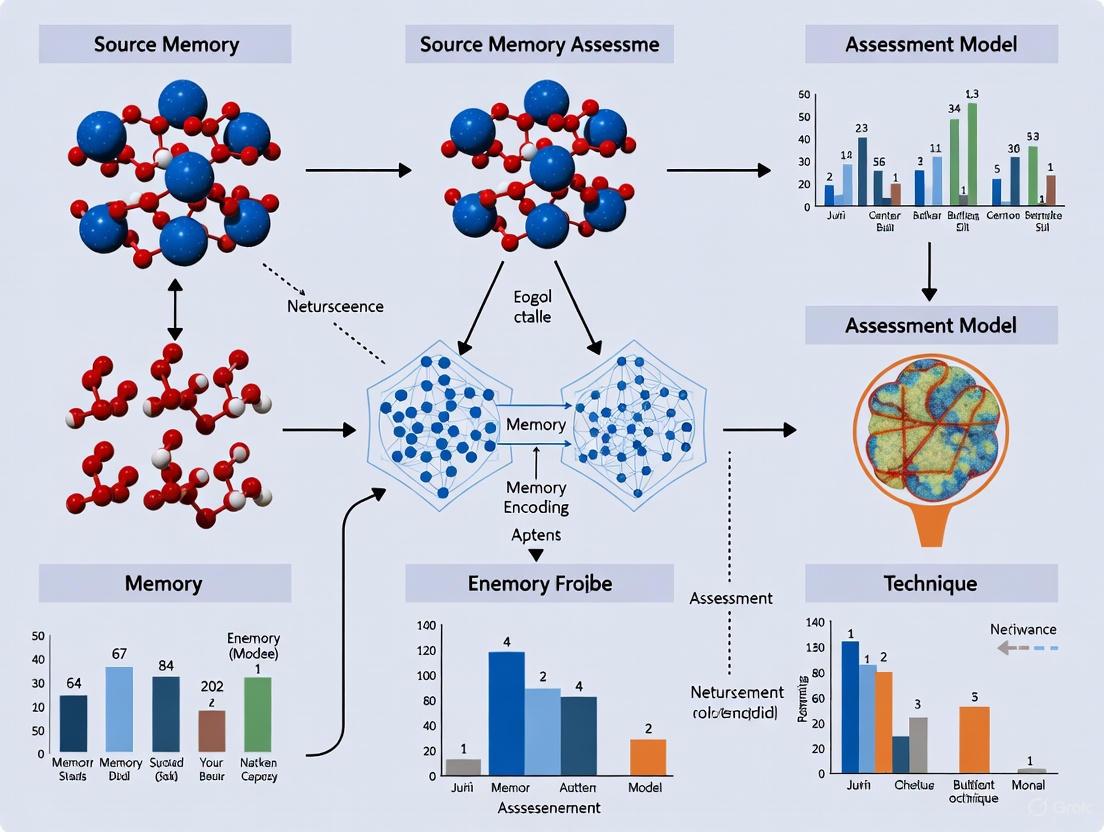

Visualization of Memory Assessment Workflows

Diagram 1: Comprehensive Memory Assessment Workflow for Clinical Trials

Diagram 2: Interrelationships Among Memory Systems

Data Interpretation Guidelines

Working Memory Performance Analysis:

- Normal CDA amplitude progression: Linear increase with set size, plateau at capacity limit [14]

- Pathological indicators: Reduced capacity (K<3), flattened CDA slope, excessive disruption during dual-task conditions

- Pharmacological sensitivity: CDA measures show sensitivity to cholinergic manipulation
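
As an illustration of the capacity criterion above, K can be estimated from change-detection performance. The sketch below assumes a single-probe change-detection task and uses Cowan's formula, K = N × (hit rate − false alarm rate); the source does not state which estimator is intended, and the function names are hypothetical.

```python
def cowan_k(set_size, hit_rate, false_alarm_rate):
    """Cowan's K for single-probe change detection: N * (H - FA)."""
    return set_size * (hit_rate - false_alarm_rate)


def capacity_profile(results, threshold=3.0):
    """Estimate K at each set size and flag reduced capacity.

    results: {set_size: (hit_rate, false_alarm_rate)}
    threshold: K below this value is flagged (K<3 per the guideline above).
    """
    k_by_size = {n: cowan_k(n, h, fa) for n, (h, fa) in results.items()}
    # Capacity is taken as the largest K across set sizes (the plateau).
    capacity = max(k_by_size.values())
    return k_by_size, capacity, capacity < threshold
```

For example, `cowan_k(4, 0.85, 0.10)` gives a K of about 3 items.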

Source Memory Interpretation Framework:

- Binding deficit pattern: Preserved item memory with impaired source attribution suggests medial temporal-prefrontal dysfunction

- Reality monitoring errors: Confusion between imagined and perceived events indicates specific prefrontal involvement

- Progression tracking: Source memory deficits often precede item memory decline in neurodegenerative conditions

Long-Term Memory Consolidation Metrics:

- Spaced learning efficacy: Significantly enhanced retention compared to massed learning (typically >30% improvement) [15]

- Protein synthesis dependence: Disruption by translational inhibitors indicates true LTM formation

- Clinical correlation: Poor consolidation predicts more rapid cognitive decline in mild cognitive impairment

Application in Clinical Trial Design

Integrating these differentiated memory assessments into clinical trials for cognitive-enhancing therapeutics requires:

- Endpoint Selection: Combine working memory (CDA amplitude), source memory (binding accuracy), and long-term memory (consolidation rate) as complementary endpoints

- Population Stratification: Use memory profiles to identify homogeneous patient subgroups for targeted interventions

- Dosing Optimization: Employ sensitive working memory measures (CDA disruption) for early phase dose-finding studies

- Mechanism Elucidation: Differential improvement across memory systems reveals drug mechanism:

- Prefrontal-targeted compounds improve source memory

- Hippocampal-targeted agents enhance consolidation

- Network-stabilizing drugs reduce working memory interference

These protocols establish standardized methodology for distinguishing memory systems in research and clinical applications, with particular utility for clinical trial design and therapeutic development for neurodegenerative conditions.

The Neuroanatomical Correlates of Source Monitoring

Source monitoring, the cognitive process of determining the origin of memories, is a critical function supported by a distributed neural network. This Application Note details the key neuroanatomical correlates and experimental protocols for investigating source memory. We provide a structured overview of the brain regions involved, standardized methodologies for functional neuroimaging assessments, and a toolkit of research reagents. The content is designed to support researchers and drug development professionals in the rigorous assessment of source memory, which is often impaired in neuropsychiatric disorders and age-related cognitive decline.

Source memory is a facet of episodic memory that enables an individual to recall the contextual details (e.g., time, place, or sensory modality) associated with a memory, as opposed to the memory content itself. This function is paramount for constructing an accurate and reliable autobiographical narrative. Deficits in source monitoring are recognized as sensitive early markers of cognitive dysfunction in conditions such as Alzheimer's disease and other dementias. Framed within a broader thesis on advancing source memory assessment techniques, this document synthesizes current evidence on the underlying neuroanatomy and provides detailed protocols for its investigation in research and clinical trial settings.

Neuroanatomical Foundations of Source Monitoring

Source monitoring relies on a coordinated network of prefrontal and medial temporal lobe regions, with additional contributions from posterior parietal and lateral temporal cortices. The following table summarizes the key brain regions and their proposed functional roles in source memory processes.

Table 1: Key Neuroanatomical Correlates of Source Monitoring

| Brain Region | Functional Role in Source Monitoring |

|---|---|

| Prefrontal Cortex (PFC) | Provides top-down cognitive control; supports strategic retrieval, monitoring, and evaluation of contextual details; crucial for resolving source conflict. |

| Hippocampus | Binds disparate contextual features (e.g., spatial, temporal) into a coherent episodic memory trace; essential for the initial encoding and subsequent retrieval of source information. |

| Anterior Cingulate Cortex (ACC) | Monitors for response conflict and competition between memory sources; involved in error detection during memory retrieval. |

| Posterior Parietal Cortex | Directs attention to retrieved memory representations, including their contextual features, and maintains focus on them. |

| Lateral Temporal Cortex | Involved in the storage and retrieval of semantic knowledge, which aids in attributing memories to the correct source. |

The Prefrontal Cortex (PFC), particularly the dorsolateral (dlPFC) and ventrolateral (vlPFC) subdivisions, is central to strategic retrieval and verification processes. Its interaction with the Hippocampus is critical, as the hippocampus is responsible for the binding of item and context during encoding [17]. The Anterior Cingulate Cortex (ACC) contributes by monitoring for conflicts between potentially misattributed sources [18] [19]. Furthermore, alterations in large-scale brain networks are implicated; for instance, hyperconnectivity of the Default Mode Network (DMN) may underpin the excessive self-focused attention that leads to source misattributions in certain pathologies [19].

Experimental Protocols for Assessing Source Monitoring

This section outlines standardized protocols for investigating the neural correlates of source memory using functional neuroimaging.

Functional Magnetic Resonance Imaging (fMRI) Protocol

This protocol is designed to map the brain networks involved in source memory retrieval with high spatial resolution.

- Objective: To identify and compare the neural activity associated with successful item recognition versus source memory retrieval.

- Materials & Setup:

- 3T MRI scanner with standard head coil.

- Presentation software (e.g., E-Prime, PsychoPy).

- MR-compatible audio-visual systems.

- Stimuli & Task Design:

- Encoding Phase: Participants are presented with a series of words or images. Each item is paired with a specific contextual feature (e.g., presented on the left/right side of the screen, spoken in a male/female voice, or associated with a specific task like "size" or "animacy" judgment).

- Retrieval Phase: Conducted inside the scanner. Participants complete a two-step test:

- Item Recognition: Old/new judgment for presented stimuli.

- Source Memory: For items correctly identified as "old," participants must identify the contextual source (e.g., "Was the word on the left or right?").

- fMRI Acquisition Parameters:

- Sequence: T2*-weighted echo-planar imaging (EPI) for BOLD contrast.

- Voxel Size: 3x3x3 mm³ (or smaller for higher resolution).

- TR/TE: TR = 2000 ms, TE = 30 ms.

- Slices: Whole-brain coverage (~37 axial slices).

- Field of View: 220 mm.

- Data Analysis:

- Preprocessing (slice-timing correction, realignment, normalization, smoothing) using SPM, FSL, or AFNI.

- First-level general linear model (GLM) contrasting BOLD activity during:

- Correct Source Trials > Correct Item-Only Trials

- Incorrect Source Trials > Correct Source Trials (for error-related activity)

- Group-level random-effects analysis to identify consistent activation clusters; the resulting peaks can be compared with coordinate-based meta-analytic maps (e.g., from Activation Likelihood Estimation [18]).

Diagram 1: fMRI source memory task workflow.

Functional Near-Infrared Spectroscopy (fNIRS) Protocol

This protocol offers a more ecologically valid approach for studying populations such as older adults or patients, for whom scanner environments are suboptimal [20].

- Objective: To monitor prefrontal cortex hemodynamics during source memory encoding and retrieval in a naturalistic setting.

- Materials & Setup:

- Continuous-wave fNIRS system (e.g., Oxymon Mk III, Artinis).

- Optodes configured to cover the dorsolateral and frontopolar PFC.

- A quiet testing room with a standard computer monitor.

- Stimuli & Task Design:

- fNIRS Acquisition Parameters:

- Measured Chromophores: Oxyhemoglobin (O2Hb) and Deoxyhemoglobin (HHb).

- Source-Detector Distances: 3 cm to ensure cortical penetration.

- Sampling Rate: ≥ 10 Hz.

- Data Analysis:

- Conversion of optical density to concentration changes using the Modified Beer-Lambert Law.

- Filtering (e.g., band-pass 0.01-0.2 Hz) to remove physiological noise.

- Block-average analysis to compare O2Hb/HHb changes during task conditions versus baseline.

- Statistical comparison (e.g., t-tests) of hemodynamic responses between successful and unsuccessful source memory trials.
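
The block-average step above can be sketched in plain Python. This is a simplified illustration that assumes the signal has already been converted to concentration changes and filtered; the function name, the 5-second baseline, and the 20-second response window are assumptions, not values from the protocol.

```python
def block_average(signal, onsets_s, fs, pre_s=5.0, post_s=20.0):
    """Baseline-corrected mean response across task blocks.

    signal:   sequence of O2Hb (or HHb) concentration changes
    onsets_s: block onset times in seconds
    fs:       sampling rate in Hz
    pre_s:    baseline duration before each onset (assumed 5 s)
    post_s:   response window after each onset (assumed 20 s)
    """
    pre = int(round(pre_s * fs))
    post = int(round(post_s * fs))
    epochs = []
    for onset in onsets_s:
        centre = int(round(onset * fs))
        start, stop = centre - pre, centre + post
        if start < 0 or stop > len(signal):
            continue  # skip blocks that are not fully covered by the recording
        segment = signal[start:stop]
        baseline = sum(segment[:pre]) / pre
        epochs.append([x - baseline for x in segment])
    if not epochs:
        raise ValueError("no complete blocks available for averaging")
    n = len(epochs)
    # Average sample by sample across blocks.
    return [sum(column) / n for column in zip(*epochs)]
```

Running the function separately on blocks with successful and unsuccessful source retrieval yields the two averaged responses that the final statistical comparison contrasts.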

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Reagents for Source Memory Research

| Item | Function/Application |

|---|---|

| Virtual Reality (VR) Suite Test | A 360-degree VR-based neuropsychological assessment that immerses participants in a realistic environment (e.g., a furniture shop) to test source memory in an ecologically valid context [21]. |

| fNIRS System | A non-invasive optical neuroimaging tool to monitor cortical activation (via O2Hb/HHb) during cognitive tasks. Ideal for ecological setups and special populations due to its portability and tolerance for movement [20]. |

| Activation Likelihood Estimation (ALE) | A coordinate-based meta-analysis method (implemented in software such as GingerALE) that identifies consistent brain activation patterns across multiple functional neuroimaging experiments [18]. |

| Presentation Software | Software platforms (e.g., E-Prime, PsychoPy) for designing and running precisely timed cognitive experiments and stimuli delivery. |

| Standardized Word & Image Databases | Normed sets of stimuli (e.g., nouns, concrete images) to control for variables like frequency, concreteness, and emotional valence across experimental conditions. |

Data Visualization and Analysis Workflow

The path from raw neuroimaging data to interpretable results involves a multi-stage workflow. The following diagram outlines the critical steps for data processing and the integration of behavioral and neural data, which can be facilitated by machine learning approaches for pattern classification [22].

Diagram 2: Data analysis workflow from acquisition to interpretation.

Application Notes: Key Findings and Data Synthesis

Source memory, the ability to recall the contextual details of a learned item (e.g., where, when, or from whom information was acquired), demonstrates distinct developmental trajectories across the human lifespan. Research utilizing diverse methodologies—from longitudinal cohort studies to virtual reality-based assessments—reveals that the integrity of source memory is a sensitive marker of cognitive health, from early development through advanced aging. The following data synthesis consolidates key quantitative findings from recent and seminal studies.

Table 1: Lifespan Study Designs and Source Memory Performance Metrics

| Study / Dataset | Sample Size & Age Range | Study Design | Key Source Memory Finding | Associated Cognitive/Neural Correlates |

|---|---|---|---|---|

| Dallas Lifespan Brain Study (DLBS) [23] [24] | N=464; 21-89 years at baseline | Longitudinal (3 timepoints over ~10 years) | Data released for hypothesis testing; demonstrated brain network breakdown across lifespan [23]. | Amyloid & tau PET, structural & functional MRI, comprehensive neuropsychological battery [24]. |

| Virtual Reality (Suite Test) [2] | N=676; 12-85 years | Cross-sectional | Performance on VR source memory task was more strongly associated with recall in older adults, enhancing long-term recall [2]. | Ecological validity; relationship with immediate, short-term, and long-term delayed recall [2]. |

| Longitudinal Child Development Study [25] | N=135; 4-8 years at baseline | Longitudinal (3 years, cohort-sequential) | Accelerated rates of change in binding (fact/source combinations) between 5 and 7 years [25]. | Linear increases in item memory (facts or sources) between 4 and 10 years [25]. |

| ERP Study on Aging [26] | Young (M=22) vs. Older (M=66) adults | Cross-sectional | Young adults significantly outperformed older adults on source memory, despite measures intended to enhance performance [26]. | Lack of robust late right-prefrontal ERP effect in older adults, suggesting distinct cortical networks [26]. |

| Computational Memory Capacity [27] | N=636; 18-88 years | Cross-sectional | Computational memory capacity of brain networks, derived from connectomes, declines with age [27]. | Strongest decline in lateral frontal, cingulate, precuneus, and inferior parietal regions [27]. |

| Cognitively Informed Protocol (CogI) [28] | N=268; 9-89 years | Experimental (2x2 between-participants) | CogI bolstered recall of contacts across lifespan; older adults recalled fewer contacts overall [28]. | Efficacy was independent of interview modality (interviewer-led vs. self-led) [28]. |

Table 2: Age-Specific Vulnerabilities and Intervention Efficacy

| Age Group | Key Source Memory Characteristic | Potential Neurocognitive Basis | Intervention/Protocol Efficacy |

|---|---|---|---|

| Childhood (4-10 years) | Rapid development of binding processes between 5-7 years [25]. | Maturation of basic mnemonic and frontal lobe processes [25]. | Not directly assessed in the studies reviewed here; standard cognitive interview protocols are effective for children's memory generally [28]. |

| Young Adulthood | Peak performance; serves as benchmark for older group comparisons [26] [27]. | Optimal organization and computational capacity of brain connectomes [27]. | Cognitively informed protocols effective [28]. |

| Older Adulthood | Disproportionate decline compared to item memory [26]. | Frontal lobe dysfunction; reduced right prefrontal activity (ERP/fMRI); degradation of brain network connections [26] [27]. | Benefits more from source memory tasks for delayed recall [2]; Cognitively Informed Protocols bolster recall [28]. |

Experimental Protocols

This section provides detailed methodologies for key experimental paradigms cited in the application notes, enabling replication and implementation in research settings.

Protocol: Longitudinal Assessment of Source Memory in Childhood

This protocol is adapted from a longitudinal, cohort-sequential study designed to track the development of binding and source memory in early and middle childhood [25].

- Objective: To examine developmental changes in children's ability to bind items (facts) with their context (source) in memory.

- Materials and Setup:

- Stimuli: A set of novel facts presented via video to ensure consistency across longitudinal waves. Example: "A diamond can be burned."

- Sources: Two distinct sources (e.g., a male experimenter and a female experimenter, or two different puppets) who deliver the facts in the video.

- Environment: Quiet testing room.

- Procedure:

- Encoding Phase: Participants watch a video where the two sources take turns teaching several novel facts. Each fact is presented once.

- Retention Interval: A one-week delay is implemented between encoding and retrieval.

- Retrieval Phase:

- Item Memory Test: Participants are first asked to recall all the facts they remember (e.g., "What new facts did you learn last week?").

- Source Memory Test: For each correctly recalled fact, the participant is asked to identify the source ("Who told you that?"). The available responses should include the two experimental sources, "guess," "know," and an extra-experimental source (e.g., "learned it somewhere else").

- Data Analysis:

- Item Memory Score: Percentage of facts correctly recalled.

- Source Memory (Binding) Score: Percentage of correctly recalled facts for which the source is also correctly identified.

- Error Analysis: Categorize errors as intra-experimental (wrong source within the experiment) or extra-experimental (source outside the experiment).

- Longitudinal Design: Employ a cohort-sequential design. Three cohorts (e.g., ages 4, 6, and 8) are followed for three years with annual assessments.
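
The scoring rules in the Data Analysis step can be sketched as follows. The source labels (`source_a`, `source_b`, `elsewhere`) are hypothetical, and treating "guess" and "know" responses as a separate "no attribution" category is an assumption; the protocol does not specify how they are scored.

```python
from collections import Counter

# Hypothetical labels for the two sources shown in the video.
EXPERIMENTAL_SOURCES = {"source_a", "source_b"}


def score_child(n_facts_taught, recalled):
    """Score one child's session.

    recalled: list of (true_source, reported_source) pairs,
              one per correctly recalled fact.
    Returns (item score %, binding score %, outcome counts).
    """
    item_score = 100.0 * len(recalled) / n_facts_taught

    outcomes = Counter()
    for true_source, reported in recalled:
        if reported == true_source:
            outcomes["correct"] += 1
        elif reported in EXPERIMENTAL_SOURCES:
            outcomes["intra_experimental_error"] += 1
        elif reported == "elsewhere":
            outcomes["extra_experimental_error"] += 1
        else:
            # Assumption: "guess" / "know" count as no source attribution.
            outcomes["no_attribution"] += 1

    # Binding score is conditional on the fact having been recalled.
    binding = 100.0 * outcomes["correct"] / len(recalled) if recalled else 0.0
    return item_score, binding, dict(outcomes)
```

Computing the same three outputs at each annual wave gives the per-child trajectories that the cohort-sequential design compares across ages.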

Protocol: Virtual Reality-Based Source Memory Assessment (Suite Test)

This protocol details the procedure for the Suite Test, a novel VR-based assessment that embeds source memory within an ecologically valid task [2].

- Objective: To assess visual memory and source memory within a simulated real-world environment to enhance ecological validity.

- Materials and Setup:

- Hardware: A virtual reality headset (e.g., Oculus Rift, HTC Vive) and controllers.

- Software: The Suite Test software, which creates a 360-degree VR environment of a furniture shop.

- Stimuli: Sets of furniture items, each set ordered by a different virtual customer family.

- Procedure:

- Encoding/Task Phase: Participants are immersed in the VR furniture shop. A voice-over instructs them to group and pack specific sets of furniture items that were ordered by different families of customers. Participants click on the relevant furniture items to "pack" them. This process inherently encodes the items (furniture) and their sources (customer families).

- Distractor/Interference: A period of non-memory tasks may be incorporated.

- Retrieval Phase:

- Immediate and Delayed Recall: Participants are asked to recall the furniture items they were instructed to pack.

- Source Memory Task: Participants are specifically asked to recall which customer family ordered which set of furniture items.

- Recognition Trial: Participants may be presented with items and must indicate if they were among the target items.

- Data Analysis:

- Calculate accuracy for item recall (furniture), source memory (customer-family binding), and recognition.

- Analyze the relationship between source memory performance and performance on other recall tasks (immediate, short-term delayed, long-term delayed) across different age groups.
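
One simple way to examine the relationship described in the last analysis step is a within-group Pearson correlation. The sketch below is illustrative only: the record layout (age group, source memory accuracy, recall score) is hypothetical, and the published analysis may have used a different statistical model.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation undefined for constant data")
    return sxy / sqrt(sxx * syy)


def correlation_by_age_group(records):
    """records: iterable of (age_group, source_accuracy, recall_score)."""
    groups = {}
    for age_group, source_accuracy, recall_score in records:
        xs, ys = groups.setdefault(age_group, ([], []))
        xs.append(source_accuracy)
        ys.append(recall_score)
    return {group: pearson_r(xs, ys) for group, (xs, ys) in groups.items()}
```

Repeating the call with immediate, short-term delayed, and long-term delayed recall scores gives one coefficient per recall type and age group.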

Protocol: Event-Related Potential (ERP) Study of Source Memory in Aging

This protocol is for investigating the neural correlates of source memory retrieval in young and older adults using high-density EEG, replicating and extending previous work [26].

- Objective: To identify age-related differences in the neural dynamics of source memory retrieval, with a focus on frontal lobe contributions.

- Materials and Setup:

- Equipment: 62-channel EEG recording system.

- Stimuli: Two temporally distinct lists of sentences, each containing two unassociated nouns (e.g., "The chef cooked the meat.").

- Software: Stimulus presentation software (e.g., E-Prime, Presentation) and ERP analysis toolbox (e.g., EEGLAB, ERPLAB).

- Procedure:

- Encoding Phase:

- Present each sentence in its entirety on a monitor.

- To enhance encoding, have participants make a pleasant/unpleasant judgment about each sentence.

- Present each sentence twice within its list to increase exposure.

- Use shorter lists (e.g., 8 words per list) to reduce memory load for older adults.

- Retrieval Phase:

- Present studied and unstudied nouns one at a time.

- Item Recognition: For each noun, participants first make an "old/new" judgment.

- Source Memory: For each noun judged "old," participants subsequently indicate from which list (List 1 or List 2) it originated.

- EEG Recording: Continuous EEG is recorded throughout the retrieval phase, time-locked to the presentation of each test noun.

- Data Analysis:

- Behavioral: Calculate measures of item discrimination (d') and source memory accuracy.

- ERP: Analyze Episodic Memory (EM) effects by contrasting ERPs for correctly identified "old" items versus correct rejections of "new" items.

- Early Posterior EM Effect: Calculate mean amplitude 400-800 ms at left parietal sites.

- Late Prefrontal EM Effect: Calculate mean amplitude 600-1000+ ms at right frontal sites.

- Statistical Comparison: Compare the magnitude and topography of these EM effects between young and older adult groups.
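
The mean-amplitude measures above can be sketched for a single electrode as follows. The function names and the epoch layout (a list of microvolt samples starting at a known pre-stimulus time) are assumptions; in practice these values would usually be extracted with an ERP toolbox such as ERPLAB.

```python
def mean_amplitude(erp_uv, fs, epoch_start_ms, win_start_ms, win_end_ms):
    """Mean amplitude of an averaged ERP within a latency window.

    erp_uv:         averaged waveform in microvolts for one site
    fs:             sampling rate in Hz
    epoch_start_ms: latency of the first sample (e.g. -200 for a 200 ms baseline)
    """
    i0 = int(round((win_start_ms - epoch_start_ms) * fs / 1000.0))
    i1 = int(round((win_end_ms - epoch_start_ms) * fs / 1000.0))
    if i0 < 0 or i1 > len(erp_uv) or i0 >= i1:
        raise ValueError("window falls outside the epoch")
    window = erp_uv[i0:i1]
    return sum(window) / len(window)


def em_effect(old_erp, new_erp, fs, epoch_start_ms, window_ms):
    """Old-minus-new difference in mean amplitude for one window."""
    start, end = window_ms
    old = mean_amplitude(old_erp, fs, epoch_start_ms, start, end)
    new = mean_amplitude(new_erp, fs, epoch_start_ms, start, end)
    return old - new


# Windows taken from the protocol above; the late window is open-ended
# there ("1000+ ms"), so 1000 ms is used as an illustrative upper bound.
EARLY_POSTERIOR_MS = (400, 800)
LATE_PREFRONTAL_MS = (600, 1000)
```

Computing `em_effect` per participant at the left parietal and right frontal sites gives the values entered into the young versus older group comparison.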

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials and Tools for Source Memory Research Across the Lifespan

| Tool Category | Specific Item/Technique | Function in Source Memory Research |

|---|---|---|

| Neuroimaging Ligands | 18F-AV-45 (Florbetapir) [24] | Binds to amyloid-beta plaques in the brain for Positron Emission Tomography (PET) imaging. |

| Neuroimaging Ligands | 18F-AV-1451 (Flortaucipir) [24] | Binds to tau neurofibrillary tangles for in vivo PET imaging. |

| Cognitive Assessment Batteries | CANTAB [24] | Computerized battery assessing multiple domains (e.g., spatial working memory, verbal recognition). |

| Cognitive Assessment Batteries | NIH Toolbox [24] | Standardized set of measures for cognitive, motor, sensory, and emotional function. |

| Cognitive Assessment Batteries | Hopkins Verbal Learning Test [24] | Standardized test for verbal episodic memory and learning. |

| Virtual Reality Platforms | Suite Test VR Environment [2] | A 360-degree VR furniture shop designed to assess visual and source memory with high ecological validity. |

| Electrophysiology Tools | High-Density EEG (62+ channels) [26] | Records millisecond-level electrical brain activity to study neural correlates of memory retrieval. |

| Computational Modeling | Reservoir Computing Framework [27] | A machine-learning approach to model and measure the computational memory capacity of individual brain connectomes. |

The Methodological Toolkit: From Traditional Paradigms to Digital Biomarkers

Source memory, a critical subdomain of episodic memory, refers to the cognitive ability to recall the contextual details surrounding a specific memory, such as where, when, or how the information was acquired. Unlike item memory, which simply assesses whether a stimulus is recognized, source memory evaluates the retrieval of contextual attributes, making it a more sensitive measure for detecting subtle cognitive changes. The Source-Memory Task Framework is a well-established classical laboratory paradigm designed to systematically assess this ability in both healthy and clinical populations. Its primary application in clinical and pharmaceutical research is to evaluate the efficacy of novel therapeutic agents, particularly for conditions like Alzheimer's disease and other related dementias where memory impairment is a core symptom. Within the broader thesis on source memory assessment techniques, this framework stands out for its robustness, reliability, and ability to dissect the specific cognitive components affected by neurological conditions or modulated by pharmacological interventions. The following sections provide a detailed breakdown of the experimental protocols, data presentation, and key resources required to implement this paradigm effectively.

Key Experimental Protocols

The implementation of the source-memory task can be broken down into three consecutive phases: a study phase, a distractor phase, and a test phase. The following protocol details a standard auditory source memory task, which can be adapted for visual or other sensory modalities.

Protocol: Standard Auditory Source-Memory Task

Objective: To assess a participant's ability to recognize items and remember whether they were originally presented in a male or female voice.

Materials and Equipment:

- Sound-attenuated testing booth: To minimize external auditory distractions.

- Computer with experimental software (e.g., E-Prime, PsychoPy): For precise stimulus presentation and response recording.

- High-quality headphones: For clear audio delivery.

- Pre-recorded word lists: Two lists of 40 words each, one spoken by a male voice and one by a female voice.

Procedure:

Study Phase:

- Instruction: Participants are informed that they will hear a series of words and should try to remember both the words and the voice (male or female) that spoke them. They are told that their memory for both will be tested later.

- Stimulus Presentation: A total of 80 words are presented auditorily through headphones. The presentation is typically randomized, with 40 words in a male voice and 40 in a female voice. Each word is presented for 2-3 seconds with a 1-second inter-stimulus interval.

Distractor Phase:

- Duration: A retention interval of 5-10 minutes.

- Task: Participants engage in a non-verbal, non-memory-based task to prevent rehearsal. A common distractor is a simple motor task or a visual pattern recognition task unrelated to the study stimuli.

Test Phase:

- Instruction: Participants are presented with a series of words on a computer screen. For each word, they must make two decisions:

- Item Memory Decision: Indicate whether the word is "Old" (heard during the study phase) or "New" (not heard during the study phase).

- Source Memory Decision: If the word is judged "Old," they must then identify the source, i.e., whether it was originally presented in the "Male" or "Female" voice.

- Stimulus Presentation: A randomized list of 160 words is presented visually. This list includes:

- 40 "Old" words from the male voice.

- 40 "Old" words from the female voice.

- 80 "New" words (foils).

- Response Collection: Participants input their responses using a button box or keyboard, with each trial timed.

Data Extraction and Primary Variables:

- Item Memory Accuracy: The proportion of "Old" items correctly identified as "Old" (Hit Rate) versus "New" items incorrectly identified as "Old" (False Alarm Rate). These are used to calculate sensitivity indices like d'.

- Source Memory Accuracy: For items correctly identified as "Old," the proportion of correct source attributions (e.g., correctly identifying a word as having been in the "Male" voice).

- Reaction Time: The time taken to make both item and source decisions, which can be analyzed for correct trials to assess processing efficiency.

Protocol: Drug Intervention Study Using Source Memory

Objective: To evaluate the effect of a novel cognitive enhancer (e.g., CT1812) on source memory performance in a population with Mild Cognitive Impairment (MCI).

Design: Randomized, double-blind, placebo-controlled, crossover design.

Procedure:

Screening and Baseline:

- Participants (e.g., n=50 with amnestic MCI) are screened for eligibility, including APOE ε4 genotyping.

- All participants complete a baseline source-memory task (as described in the Standard Auditory Source-Memory Task protocol above) and a standard neuropsychological battery (e.g., ADAS-Cog).

Intervention Periods:

- Participants are randomly assigned to one of two sequences:

- Sequence A: Drug (e.g., CT1812, 500 mg/day) for 12 weeks, followed by a 4-week washout, then Placebo for 12 weeks.

- Sequence B: Placebo for 12 weeks, followed by a 4-week washout, then Drug for 12 weeks.

- The source-memory task and neuropsychological battery are administered at the end of each 12-week period.

Data Analysis:

- The primary outcome measure is the within-participant difference in source memory accuracy between the end of the drug period and the end of the placebo period.

- Secondary measures include changes in item memory accuracy, reaction times, and scores on the neuropsychological battery.

- Subgroup analyses may be conducted based on factors like APOE ε4 carrier status.
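
A minimal sketch of the primary comparison is given below. It computes a paired t statistic on within-participant differences and deliberately ignores period and carryover effects, which a full crossover analysis (for example, a mixed model with sequence and period terms) would need to address. The function name and input layout are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def paired_crossover_effect(drug_scores, placebo_scores):
    """Paired comparison of end-of-period source memory accuracy.

    drug_scores, placebo_scores: per-participant values in the same order.
    Returns (mean difference, t statistic, degrees of freedom).
    """
    if len(drug_scores) != len(placebo_scores) or len(drug_scores) < 2:
        raise ValueError("need paired scores for at least two participants")
    diffs = [d - p for d, p in zip(drug_scores, placebo_scores)]
    n = len(diffs)
    mean_diff = mean(diffs)
    sd_diff = stdev(diffs)  # sample standard deviation of the differences
    if sd_diff == 0:
        raise ValueError("t statistic undefined when all differences are equal")
    t_stat = mean_diff / (sd_diff / sqrt(n))
    return mean_diff, t_stat, n - 1
```

Because each participant serves as their own control, the test operates on the differences rather than on two independent group means.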

Data Presentation and Analysis

The quantitative data derived from source-memory tasks are typically summarized using descriptive and inferential statistics. The following tables provide a structured overview of key performance metrics and a hypothetical dataset from a drug intervention study.

Table 1: Core Performance Metrics in a Standard Source-Memory Task

| Metric | Formula/Description | Interpretation |

|---|---|---|

| Item Memory Sensitivity (d') | z(Hit Rate) - z(False Alarm Rate) | Measures the ability to discriminate between old and new items, independent of response bias. A higher d' indicates better item memory. |

| Source Memory Accuracy | (Number of Correct Source Attributions) / (Number of Correct "Old" Judgments) | The proportion of correctly recognized items for which the source was also correctly identified. Directly measures source memory performance. |

| Item Memory Reaction Time (ms) | Mean response time for correct "Old" and correct "New" judgments. | Reflects the processing speed for item recognition decisions. |

| Source Memory Reaction Time (ms) | Mean response time for correct source judgments. | Reflects the processing speed for retrieving contextual details, often slower than item memory RT. |
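
The item-memory and reaction-time metrics in Table 1 can be computed with the Python standard library, as sketched below. The clamping of hit and false alarm rates of exactly 0 or 1 is one common convention and is an assumption here, as are the function names.

```python
from statistics import NormalDist, mean


def d_prime(hits, n_old, false_alarms, n_new):
    """Item memory sensitivity: z(hit rate) - z(false alarm rate)."""

    def rate(count, total):
        # Clamp to [1/(2N), 1 - 1/(2N)] so the z-transform stays finite.
        return min(max(count / total, 1 / (2 * total)), 1 - 1 / (2 * total))

    z = NormalDist().inv_cdf
    return z(rate(hits, n_old)) - z(rate(false_alarms, n_new))


def mean_correct_rt(trials):
    """Mean reaction time (ms) over correct trials only.

    trials: iterable of (rt_ms, is_correct) pairs.
    Returns None when there are no correct trials.
    """
    rts = [rt for rt, is_correct in trials if is_correct]
    return mean(rts) if rts else None
```

With the 80 old and 80 new test words of the standard auditory task, 60 hits and 16 false alarms correspond to a hit rate of 0.75 and a false alarm rate of 0.20.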

Table 2: Hypothetical Data from a Drug Intervention Study (CT1812 vs. Placebo)

| Participant Group | Item Memory (d') | Source Memory Accuracy (%) | Item Memory RT (ms) | Source Memory RT (ms) |

|---|---|---|---|---|

| Placebo Group (n=25) | 1.2 (±0.3) | 65.5 (±7.2) | 890 (±105) | 1250 (±150) |

| CT1812 Group (n=25) | 1.6 (±0.4) | 74.8 (±6.5) | 820 (±95) | 1120 (±135) |

| p-value | p < 0.05 | p < 0.01 | p < 0.05 | p < 0.05 |

Note: Data presented as Mean (Standard Deviation). RT = Reaction Time.

Visualization of Experimental Workflow

To illustrate the logical flow of the source-memory task framework and its underlying cognitive processes, the following diagrams were generated using the Graphviz DOT language [29] [30].

Title: Source Memory Task Workflow

Title: Source Memory Neural Pathway

The Scientist's Toolkit: Research Reagent Solutions

The following table details the essential materials, tools, and software required to implement the source-memory task framework in a rigorous research or drug development context.

Table 3: Essential Research Reagents and Materials for Source-Memory Studies

| Item | Function/Application in Research | Example Specifications |
|---|---|---|
| Stimulus Presentation Software | Precisely controls the timing and sequence of auditory and visual stimuli during the task, ensuring experimental rigor and reproducibility. | E-Prime, PsychoPy, Presentation. |
| Neuropsychological Battery | Provides a comprehensive assessment of cognitive domains beyond episodic memory, allowing for correlation and validation of task findings. | ADAS-Cog, RBANS, CERAD-NB. |
| Audio Stimulus Library | Serves as the standardized, pre-validated set of auditory stimuli (words, non-words, sounds) for which source context (e.g., voice, location) is manipulated. | 500+ neutral nouns, recorded in multiple voices (male/female, left/right speaker). |
| Data Analysis Software | Used for statistical analysis of behavioral data (accuracy, reaction time) and calculation of derived metrics like d'. | R, Python (Pandas, SciPy), SPSS, JASP. |
| Experimental Control Hardware | Ensures accurate response capture and timing. A sound-attenuated booth is critical for auditory tasks to prevent contamination from external noise. | Button Boxes, High-Quality Headphones, Sound-Attenuated Booth. |
| Biomarker Assay Kits | In clinical trials, these are used to measure pharmacodynamic effects of a drug or to stratify patients based on underlying pathology (e.g., Aβ, tau, APOE). | ELISA or Simoa kits for Aβ42, p-tau; PCR for APOE genotyping. |

The assessment of complex cognitive functions, such as source memory, has long faced a fundamental challenge: the tension between experimental control and real-world relevance. Traditional laboratory tasks often employ simple, artificial stimuli that lack the contextual richness of everyday memory experiences, thereby limiting their ecological validity—the extent to which findings can be generalized to real-world settings [31] [32]. Virtual Reality (VR) technology presents a paradigm shift, offering researchers unprecedented capability to create immersive, controlled, yet ecologically plausible environments for assessing cognitive processes. For source memory assessment specifically—which involves remembering not just an item but the contextual details of its encounter—VR enables the creation of rich, multi-sensory encoding contexts that closely mirror real-world experiences while maintaining experimental rigor [33] [34].

The ecological validity of VR experiments can be understood through two complementary approaches: verisimilitude, concerned with how closely the experimental setting resembles the real world, and veridicality, which examines the empirical relationship between laboratory findings and real-world functioning [31] [34]. Research indicates that VR successfully balances both approaches, creating immersive environments that feel authentic to participants while generating data that corresponds meaningfully to real-world cognitive performance [31] [32] [34].

Theoretical Foundations: How VR Enhances Ecological Validity

Immersive Context Reinstatement for Source Memory

VR enhances source memory assessment through its unique capacity for context reinstatement, a critical mechanism in episodic memory retrieval. Unlike traditional methods that might use simple visual cues, VR can recreate complex environments that closely mimic the original encoding context, providing rich spatial and sensory cues that facilitate more accurate source monitoring [33]. This capability is particularly valuable for assessing the perceptual motor, executive function, and learning and memory domains that are crucial for real-world functioning [34].

Neuroimaging evidence suggests that deeper semantic processing during encoding—such as that engaged by immersive VR environments—strengthens memory traces and enhances communication between brain regions responsible for episodic memory formation, including the prefrontal cortex, hippocampus, and posterior parietal regions [33]. By creating environments that naturally elicit such deep processing, VR provides a more valid assessment of an individual's true memory capabilities.

Psychological and Physiological Validation

Comparative studies have demonstrated that VR environments elicit psychological and physiological responses that closely mirror those observed in real-world settings. Research comparing a room-scale cylindrical VR setup with head-mounted displays (HMDs) found that both were ecologically valid for audio-visual perceptive parameters [31]. Both setups also showed potential for representing real-world conditions in terms of EEG change metrics or asymmetry features, suggesting that VR can validly capture neural correlates of cognitive processing [31].

A 2025 study directly addressed ecological validity by comparing in-situ, room-scale cylindrical VR, and HMD conditions, finding that although HMDs were perceived as more immersive, both VR tools demonstrated ecological validity for audio-visual perceptive parameters [31]. For psychological restoration metrics, neither VR tool perfectly replicated the in-situ experiment, but the cylindrical VR setup was slightly more accurate than HMDs, highlighting how different VR implementations may vary in their ecological validity for specific cognitive domains [31].

Current Applications and Empirical Support

VR in Cognitive Assessment and Clinical Screening

VR-based assessment tools have shown particular promise in clinical neuropsychology, where traditional assessments often fail to predict real-world functional performance. The CAVIRE-2 (Cognitive Assessment using VIrtual REality) system represents an advanced application specifically designed to assess all six domains of cognition through 13 virtual scenarios simulating both basic and instrumental activities of daily living [34].

Table 1: Performance Metrics of CAVIRE-2 VR Cognitive Assessment System

| Metric | Results | Significance |
|---|---|---|
| Concurrent Validity | Moderate correlation with MoCA | Supports similarity to established cognitive assessment [34] |
| Test-Retest Reliability | ICC = 0.89 (95% CI = 0.85–0.92) | Demonstrates strong measurement consistency [34] |
| Discriminative Ability | AUC = 0.88 (95% CI = 0.81–0.95) | Effectively distinguishes cognitive status [34] |
| Optimal Cut-off Score | < 1850 (88.9% sensitivity, 70.5% specificity) | Provides clinical decision threshold [34] |

A validation study with 280 participants aged 55-84 years found that CAVIRE-2 demonstrated good test-retest reliability with an Intraclass Correlation Coefficient of 0.89 and good internal consistency (Cronbach's alpha = 0.87) [34]. The system displayed good discriminative ability with an area under the curve (AUC) of 0.88, effectively distinguishing between cognitively healthy individuals and those with mild cognitive impairment [34].
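To make the reported cut-off easier to interpret, the sensitivity and specificity in Table 1 can be converted into Youden's J and likelihood ratios. The short sketch below only does this arithmetic on the two published figures (88.9% and 70.5%); the function name is introduced here for illustration, and the derived values are not reported in the source study.

```python
def screening_summary(sensitivity, specificity):
    """Derive Youden's J and likelihood ratios from sensitivity and specificity."""
    return {
        "youden_j": sensitivity + specificity - 1.0,
        "lr_positive": sensitivity / (1.0 - specificity),
        "lr_negative": (1.0 - sensitivity) / specificity,
    }


# Sensitivity and specificity at the < 1850 cut-off, as listed in Table 1
summary = screening_summary(sensitivity=0.889, specificity=0.705)
for name, value in summary.items():
    print(f"{name}: {value:.3f}")
```

A positive likelihood ratio of about 3 and a negative likelihood ratio of about 0.16 suggest that, at this threshold, a score above the cut-off is more informative for ruling impairment out than a score below it is for ruling impairment in.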

VR Gamification of Cognitive Tasks

Gamified VR cognitive tasks have demonstrated the ability to replicate established behavioral patterns while improving ecological validity and reducing task duration. A 2025 study examining gamified versions of three cognitive tasks (Visual Search, Whack-the-Mole, and Corsi block-tapping test) found that these tasks replicated typical performance patterns observed with their traditional counterparts despite using more ecologically valid stimuli and fewer trials [32].

Table 2: Performance Comparison Across Administration Modalities in Gamified Cognitive Tasks

| Cognitive Task | VR-Lab Condition | Desktop-Lab Condition | Desktop-Remote Condition | Statistical Significance |
|---|---|---|---|---|
| Visual Search RT (s) | 1.24 | 1.49 | 1.44 | P<.001 (VR-Lab vs. Desktop-Lab); P=.008 (VR-Lab vs. Desktop-Remote) [32] |
| Whack-the-Mole d' | 3.79 | 3.62 | 3.75 | P=.49 (not significant) [32] |
| Whack-the-Mole RT (s) | 0.41 | 0.48 | 0.64 | P<.001 (VR-Lab vs. Desktop-Remote); P<.001 (Desktop-Lab vs. Desktop-Remote) [32] |
| Corsi Block Span | 5.48 | 5.68 | 5.24 | P=.24 (not significant) [32] |

The study found that administration modality influenced certain performance measures, particularly reaction times. In the Visual Search task, reaction times were significantly faster in the VR-Lab condition (mean 1.24 seconds) than in both Desktop-Lab (mean 1.49 seconds) and Desktop-Remote (mean 1.44 seconds) conditions [32]. These findings support the feasibility of using gamified VR tasks for scalable and ecologically valid cognitive assessment while maintaining measurement precision.

Experimental Protocol: VR-Based Source Memory Assessment

Apparatus and Software Configuration

Hardware Requirements:

- Head-Mounted Display (HMD): Use a commercially available VR HMD with a minimum resolution of 1920×1080 per eye and a refresh rate of at least 90 Hz. HMDs with inside-out tracking are preferred because they remove the need for external sensors [31] [32].

- Computing System: A VR-capable computer with a dedicated graphics card (e.g., NVIDIA GeForce RTX 3060 or equivalent) and sufficient RAM (≥16 GB) to render complex virtual environments without perceptible latency [35].

- Response Controllers: Use motion-tracked controllers that allow naturalistic interaction with virtual objects. Controller input should be recorded with millisecond precision for accurate reaction time measurement [32].

Software Development:

- Game Engine: Develop the virtual environment using a cross-platform 3D engine such as Unity or Unreal Engine, which support multiple VR SDKs and offer robust ecosystems for creating immersive experiences [36].

- Data Logging: Implement comprehensive event logging that records participant movements, interactions, timestamps, and behavioral responses. Data should be output in a structured format (e.g., CSV or JSON) for subsequent analysis [32] [34].
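The data-logging requirement can be sketched as a flat, timestamped event record written one JSON object per line. The example below is a language-neutral illustration in Python, not the logging code of any cited system; in a Unity or Unreal project the same schema would be implemented in the engine's own language. The field names, event labels, and the `VREvent` and `EventLogger` classes are assumptions made for this sketch.

```python
import json
import os
import tempfile
import time
from dataclasses import asdict, dataclass, field
from typing import Optional


def now_ms() -> float:
    """Monotonic timestamp in milliseconds (for intervals, not wall-clock time)."""
    return time.perf_counter() * 1000.0


@dataclass
class VREvent:
    participant_id: str
    phase: str                      # e.g. "encoding", "distractor", "retrieval"
    event_type: str                 # hypothetical labels such as "item_grabbed"
    environment: str
    item_id: Optional[str] = None
    response: Optional[str] = None
    timestamp_ms: float = field(default_factory=now_ms)


class EventLogger:
    """Appends one JSON object per line so partial sessions remain readable."""

    def __init__(self, path: str) -> None:
        self.path = path

    def log(self, event: VREvent) -> None:
        with open(self.path, "a", encoding="utf-8") as handle:
            handle.write(json.dumps(asdict(event)) + "\n")


def read_events(path: str) -> list:
    """Load a JSON Lines log back into a list of dictionaries."""
    with open(path, encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]


# Demonstration with a throwaway directory and made-up events
with tempfile.TemporaryDirectory() as tmp_dir:
    log_path = os.path.join(tmp_dir, "P001_events.jsonl")
    logger = EventLogger(log_path)
    logger.log(VREvent("P001", "encoding", "item_grabbed", "kitchen", item_id="kettle"))
    logger.log(VREvent("P001", "retrieval", "source_judgment", "test_room",
                       item_id="kettle", response="kitchen"))
    records = read_events(log_path)

print(records[0]["event_type"], records[1]["response"])
```

Writing each event as soon as it occurs, rather than buffering the whole session, means a crash or early withdrawal still leaves an analyzable file. A monotonic clock is used because reaction times are differences between timestamps.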

Virtual Environment Design

Encoding Context Creation:

- Design multiple distinct virtual environments (e.g., kitchen, office, garden) that serve as unique source contexts. Each environment should contain 20-30 interactable objects with varied semantic associations [33] [34].

- Incorporate multi-sensory elements including spatial audio cues and visual details that strengthen environmental distinctiveness. Environments should maintain a balance between ecological plausibility and experimental control [31].

- Implement a naturalistic interaction paradigm where participants perform goal-directed tasks (e.g., "prepare a meal" or "find your keys") to ensure deep semantic processing of items within their contexts [33].

Experimental Procedure:

- Encoding Phase: Participants complete tasks in 4-6 different virtual environments, with each session lasting approximately 5 minutes. In each environment, participants interact with 10 critical items that are later tested for source memory [33].

- Distractor Phase: Following encoding, participants engage in a 10-minute nonverbal distractor task to prevent rehearsal.

- Retrieval Phase: Participants are presented with the critical items encountered during encoding (40 targets when four environments are used, with proportionally more for five or six) plus new items (20 lures). For each item they make an item recognition (old/new) judgment and, for items judged old, a source judgment (which environment) [33].
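The retrieval phase described above can be sketched as a randomized trial list that mixes targets with lures while keeping the correct source attached to each target for later scoring. This is a minimal illustration assuming four environments with 10 critical items each and 20 lures; the environment names, item identifiers, and the `build_retrieval_trials` helper are hypothetical.

```python
import random


def build_retrieval_trials(encoded_items, lure_items, seed=0):
    """Combine targets (with their source environment) and lures, then shuffle.

    encoded_items: dict mapping environment name -> list of item identifiers
    lure_items: list of item identifiers never shown during encoding
    """
    trials = [
        {"item": item, "status": "old", "source": environment}
        for environment, items in encoded_items.items()
        for item in items
    ]
    trials += [{"item": item, "status": "new", "source": None} for item in lure_items]
    random.Random(seed).shuffle(trials)  # seeded so the order is reproducible
    return trials


# Hypothetical stimulus set: 4 environments x 10 critical items, plus 20 lures
environments = ["kitchen", "office", "garden", "garage"]
encoded = {env: [f"{env}_item_{i:02d}" for i in range(10)] for env in environments}
lures = [f"lure_{i:02d}" for i in range(20)]

trials = build_retrieval_trials(encoded, lures, seed=42)
print(len(trials), trials[0])
```

Using a per-participant seed gives each participant a different but reproducible order, which helps when a session has to be audited or re-run.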

Data Analysis and Interpretation

Primary Dependent Variables:

- Item Recognition Accuracy: Hit rate (proportion of old items correctly judged old) minus false-alarm rate (proportion of new items incorrectly judged old), i.e., corrected recognition.

- Source Memory Accuracy: Proportion of correct source attributions for items correctly recognized as old.

- Response Latencies: Reaction times for correct versus incorrect source judgments.
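The three dependent variables listed above can be computed from trial-level records as in the following sketch. It assumes each trial is a dictionary with the hypothetical keys `status`, `source`, `response`, `source_response`, and `source_rt_ms`; these names and the tiny made-up data set are for illustration only.

```python
from statistics import mean


def score_retrieval(trials):
    """Compute corrected recognition, conditional source accuracy, and mean RTs."""
    old = [t for t in trials if t["status"] == "old"]
    new = [t for t in trials if t["status"] == "new"]
    hits = [t for t in old if t["response"] == "old"]
    false_alarms = [t for t in new if t["response"] == "old"]

    hit_rate = len(hits) / len(old)
    fa_rate = len(false_alarms) / len(new)

    # Source accuracy is conditional on a correct "old" judgment
    correct = [t for t in hits if t["source_response"] == t["source"]]
    incorrect = [t for t in hits if t["source_response"] != t["source"]]

    return {
        "hit_rate": hit_rate,
        "false_alarm_rate": fa_rate,
        "corrected_recognition": hit_rate - fa_rate,
        "source_accuracy": len(correct) / len(hits) if hits else None,
        "rt_correct_source_ms": mean(t["source_rt_ms"] for t in correct) if correct else None,
        "rt_incorrect_source_ms": mean(t["source_rt_ms"] for t in incorrect) if incorrect else None,
    }


# Made-up responses from one participant: 4 old items, 2 new items
example_trials = [
    {"status": "old", "source": "kitchen", "response": "old", "source_response": "kitchen", "source_rt_ms": 1100},
    {"status": "old", "source": "office", "response": "old", "source_response": "kitchen", "source_rt_ms": 1500},
    {"status": "old", "source": "garden", "response": "old", "source_response": "garden", "source_rt_ms": 1300},
    {"status": "old", "source": "kitchen", "response": "new", "source_response": None, "source_rt_ms": None},
    {"status": "new", "source": None, "response": "new", "source_response": None, "source_rt_ms": None},
    {"status": "new", "source": None, "response": "old", "source_response": "office", "source_rt_ms": 1400},
]

scores = score_retrieval(example_trials)
print(scores)
```

Conditioning source accuracy on hits keeps it from being confounded with item memory: a participant who recognizes few items is not additionally penalized on the source measure for items they never judged as old.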

Statistical Approach:

- Use repeated-measures ANOVA to examine effects of encoding context, item type, and their interaction on memory performance.

- For clinical applications, establish cutoff scores using receiver operating characteristic (ROC) analysis to maximize sensitivity and specificity for identifying cognitive impairment [34].

- Consider implementing multilevel modeling to account for both within-participant and between-participant variance, particularly when examining individual differences in source memory performance.
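The ROC-based cut-off selection mentioned above can be sketched without external libraries. The example assumes that lower scores indicate impairment (matching a "score < cut-off" decision rule), selects the threshold that maximizes Youden's J, and computes the AUC as the probability that a randomly chosen impaired participant scores lower than a randomly chosen healthy one. The scores and labels are invented for illustration, and Youden's J is one common optimality criterion rather than the one confirmed for any cited study.

```python
def roc_points(scores, impaired):
    """(cutoff, sensitivity, specificity) for the rule 'score < cutoff => impaired'."""
    n_pos = sum(impaired)
    n_neg = len(impaired) - n_pos
    points = []
    for cutoff in sorted(set(scores)) + [max(scores) + 1]:
        true_pos = sum(1 for s, y in zip(scores, impaired) if y and s < cutoff)
        true_neg = sum(1 for s, y in zip(scores, impaired) if not y and s >= cutoff)
        points.append((cutoff, true_pos / n_pos, true_neg / n_neg))
    return points


def best_cutoff(points):
    """Pick the cutoff maximizing Youden's J = sensitivity + specificity - 1."""
    return max(points, key=lambda p: p[1] + p[2] - 1)


def auc(scores, impaired):
    """AUC via pairwise comparison; ties between groups count as one half."""
    pos = [s for s, y in zip(scores, impaired) if y]
    neg = [s for s, y in zip(scores, impaired) if not y]
    wins = sum((p < n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Invented total scores; True marks participants with cognitive impairment
scores = [1500, 1620, 1700, 1790, 1900, 1820, 1880, 1950, 2010, 2100]
impaired = [True, True, True, True, True, False, False, False, False, False]

cutoff, sensitivity, specificity = best_cutoff(roc_points(scores, impaired))
area = auc(scores, impaired)
print(f"cutoff < {cutoff}: sensitivity = {sensitivity:.2f}, "
      f"specificity = {specificity:.2f}, AUC = {area:.2f}")
```

In a screening context, false negatives are often costlier than false positives, so a threshold that favors sensitivity over the Youden optimum may be preferable. Any cut-off derived this way should be validated in an independent sample.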

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for VR Source Memory Assessment

| Item | Specification | Function/Justification |

|---|---|---|

| VR Head-Mounted Display | Meta Quest 3/3S, Apple Vision Pro, or equivalent with inside-out tracking | Provides immersive visual experience while tracking head movements for spatial navigation assessment [36] [32] |

| VR Development Platform | Unity 3D with XR Interaction Toolkit | Enables creation of interactive virtual environments with precise object manipulation and data logging capabilities [36] |

| Physiological Monitoring | Consumer-grade EEG/HR sensors (e.g., Muse headband, Polar H10) | Captures physiological correlates of cognitive processing (e.g., EEG asymmetry features, heart rate variability) [31] |

| Spatial Audio SDK | Steam Audio, Oculus Spatializer | Creates realistic soundscapes that enhance environmental immersion and provide additional contextual cues [31] |