Text Mining Psychology Journals: Advanced NLP Approaches for Terminology Extraction and Clinical Insight

Abstract

This article provides a comprehensive guide to text mining methodologies specifically for analyzing terminology in psychology and biomedical literature. It explores foundational concepts, details advanced techniques like sentiment analysis and deep learning, and addresses common challenges in data quality and model optimization. Aimed at researchers and drug development professionals, the content covers practical applications from hypothesis generation to clinical decision support, evaluating model performance and synthesizing key takeaways for future research directions in mental health and pharmaceutical development.

The Fundamentals of Text Mining in Psychological Science

Defining Text Mining and Natural Language Processing (NLP) in a Clinical Context

In clinical and psychological research, the vast majority of information is stored as unstructured text, including clinical notes, therapeutic transcripts, and scientific literature. Text Mining (TM) and Natural Language Processing (NLP) are computational techniques that transform this unstructured text into structured, analyzable data. While often used interchangeably, they represent overlapping but distinct concepts. Natural Language Processing (NLP), a subfield of artificial intelligence (AI), is concerned with the interaction between computers and human language. It provides the foundational techniques for understanding linguistic structure, enabling computers to read and interpret human language by performing tasks such as tokenization (breaking text into words or phrases), part-of-speech tagging, and named entity recognition (identifying specific entities like drugs or disorders) [1] [2]. Text Mining (TM), also known as text analytics, is a broader process that uses NLP techniques to extract meaningful patterns, trends, and knowledge from large volumes of text [3]. In essence, NLP provides the grammatical and syntactic tools, while TM applies these tools to solve specific research and clinical problems.

The clinical significance of these technologies is profound. They empower researchers and clinicians to systematically analyze data sources that were previously too vast or complex to assess manually, such as electronic health records (EHRs), transcripts of psychotherapy sessions, and vast corpora of scientific literature [3] [1] [2]. This capability is crucial for a field like psychology, where nuanced language can contain critical indicators of mental state, treatment efficacy, and disease progression.

Clinical Applications and Quantitative Evidence

TM and NLP facilitate a wide range of applications in clinical psychology and psychiatry. These can be broadly categorized into several key areas, each with demonstrated quantitative success.

Table 1: Key Application Areas of TM/NLP in Clinical Contexts

| Application Area | Description | Exemplary Study & Performance |
|---|---|---|
| Risk Prediction & Hospitalization | Predicting the risk of psychiatric hospitalization by mining outpatient clinical notes. | Text mining of narrative notes for patients with Severe Persistent Mental Illness (SPMI) significantly improved re-hospitalization risk models, confirming known risk factors like treatment dropout [4]. |
| Symptom & Disorder Screening | Identifying trauma-related symptoms or specific mental illnesses from textual descriptions. | In a global sample (n=5,048), combining language features from stressful event descriptions with self-report data achieved good accuracy for probable PTSD screening (AUC >0.7) [5]. |
| Extraction of Patient Characteristics | Identifying critical psychosocial factors from Electronic Health Records (EHRs) that impact care. | A 2025 study successfully used Named Entity Recognition (NER) to extract characteristics like "living alone" and "non-adherence" from clinical notes with high recall (0.75-0.90) and specificity (≥0.99) [6]. |
| Understanding Patient Perspective | Analyzing patient language from interviews or online postings to gauge psychopathology or emotional state. | Studies have deployed TM to identify semantic features of diseases like autism, analyze emotional content in anxiety, and examine the psychological state of specific populations [3]. |
| Analysis of Intervention Dynamics | Studying the constituent conversations of Mental Health Interventions (MHI) to understand what makes them effective. | NLP has been used to study patient clinical presentation, provider characteristics, and relational dynamics in therapy, with text features contributing more to model accuracy than audio markers [1]. |

The application of these methods is expanding rapidly. A 2022 narrative review of NLP for mental illness detection found an upward trend in research, with deep learning methods increasingly outperforming traditional machine learning approaches [2]. Furthermore, a 2023 systematic review noted rapid growth in the field since 2019, characterized by increased sample sizes and the use of large language models [1].

Experimental Protocols

To ensure reproducibility and rigor in research, detailed experimental protocols are essential. The following outlines a generalized TM/NLP workflow adapted for clinical psychological research.

Generic Text Mining Workflow for Clinical Text

This protocol provides a high-level framework for mining clinical or research text, such as EHR notes or psychology journal abstracts.

Table 2: Key Research Reagents & Computational Tools

| Tool Category | Examples | Function in Research |
|---|---|---|
| Programming Environments | Python, R | Provide the core ecosystem and libraries for implementing TM/NLP pipelines. |
| NLP Libraries & Frameworks | SpaCy [6], NLTK, Transformers (Hugging Face) | Offer pre-built functions for tasks like tokenization, NER, and leveraging pre-trained models (e.g., BERT, SciBERT [7]). |
| Machine Learning Libraries | scikit-learn, Keras, PyTorch | Provide algorithms for building classification, clustering, and other predictive models. |
| Text Mining Software | Tropes [3], SPSS Text Analysis for Surveys [3], ALCESTE [3] | Standalone software packages for quantitative text analysis, often with graphical user interfaces. |
| Validation Frameworks | scikit-learn (metrics), custom gold standards [6] | Tools and methodologies for assessing model performance against a human-created benchmark. |

Protocol Steps:

- Problem Formulation & Corpus Creation: Define the specific clinical or research question (e.g., "Identify methodological terminology in psychology abstracts"). Assemble a collection of relevant text documents (the corpus) based on clear inclusion criteria. Data sources can include EHRs, interview transcripts, or scientific abstracts from databases like PubMed [3] [7].

- Text Pre-processing: Clean and structure the raw text. This involves:

  - Tokenization: Splitting text into individual words or tokens.

  - Lemmatization/Stemming: Reducing words to their base or dictionary form (e.g., "running" → "run").

  - Removing Stop Words: Filtering out common but low-information words (e.g., "the," "and," "is").

  - Spelling Correction: Addressing typos and inconsistencies, common in clinical notes [4] [6].

- Feature Engineering & Representation: Convert the pre-processed text into a numerical representation that machine learning models can understand. This can be simple (e.g., Bag-of-Words, TF-IDF) or complex (e.g., word embeddings like word2vec, or contextual embeddings from models like SciBERT) [1] [7].

- Knowledge Extraction / Model Training: Apply data mining techniques to extract patterns. This can be:

  - Unsupervised: Using methods like clustering to discover inherent thematic groupings in the data without pre-defined labels [7].

  - Supervised: Training a classification or prediction model (e.g., Logistic Regression, Support Vector Machines) using a labeled dataset (the "ground truth") [1] [2]. The ground truth could be clinician ratings, patient self-reports, or annotations by expert raters [1].

- Validation & Interpretation: Evaluate the model's performance against a held-out test set or a manually created "golden standard" [6]. Use appropriate metrics (e.g., recall, precision, F1-score, AUC-ROC) and interpret the results in the clinical context [3] [6].
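The pre-processing and feature-representation steps above can be sketched with the Python standard library alone. This is a minimal illustration, not the pipeline used in any cited study: the stop-word list, the sample sentences, and the function names `preprocess` and `tf_idf` are all assumptions made for the example, and lemmatization and spelling correction are omitted.

```python
import math
import re
from collections import Counter

# Tiny illustrative stop-word list (an assumption; real pipelines use fuller lists).
STOP_WORDS = {"the", "and", "is", "a", "of", "in", "to", "was", "with"}

def preprocess(text):
    """Lowercase, tokenize on letter runs (keeping hyphenated words), drop stop words."""
    tokens = re.findall(r"[a-z]+(?:-[a-z]+)*", text.lower())
    return [t for t in tokens if t not in STOP_WORDS]

def tf_idf(docs):
    """Return one {term: weight} dict per document, using tf * log(N / df)."""
    tokenized = [preprocess(d) for d in docs]
    n_docs = len(tokenized)
    # Document frequency: in how many documents each term appears.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        total = len(tokens) or 1
        vectors.append(
            {t: (c / total) * math.log(n_docs / df[t]) for t, c in counts.items()}
        )
    return vectors

# Made-up sentences standing in for clinical or abstract text.
corpus = [
    "The patient reported anxiety and insomnia.",
    "Anxiety was treated with cognitive therapy.",
    "The follow-up showed remission of insomnia.",
]
vectors = tf_idf(corpus)
for doc_vector in vectors:
    print(sorted(doc_vector.items(), key=lambda kv: -kv[1])[:2])
```

Terms that occur in fewer documents receive higher weights, which is why "patient" outweighs "anxiety" in the first sentence. In practice a library implementation would normally replace this hand-rolled version.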

Protocol: Sentiment Analysis for Psychological Stress Detection

This specific protocol outlines the methodology for using sentiment analysis to detect psychological pressure, as exemplified in a 2025 study on college students' employment stress [8].

Aim: To automatically identify signals of psychological stress in text data (e.g., student forum posts, interview transcripts) using deep learning-based sentiment analysis.

Workflow Diagram:

Methodological Details:

- Data Collection: Gather text data from relevant sources, such as online forums, social media platforms, or transcribed interviews. The sample should be representative to mitigate sampling and voluntary response biases [8].

- Annotation and Ground Truth: Label the data based on psychological theory (e.g., Lazarus and Folkman’s Transactional Model of Stress). Labels can indicate stress levels or emotional valence, often derived from self-reports or clinician ratings [1] [8].

- Model Training & Comparison:

  - BERT (Bidirectional Encoder Representations from Transformers): A powerful transformer-based model that generates deep, contextualized word embeddings. Fine-tune a pre-trained BERT model on the specific clinical corpus [8].

  - CNN (Convolutional Neural Network): Effective for extracting local features from text, such as key phrases indicative of stress.

  - Hybrid BERT-CNN Model: Leverages BERT's contextual understanding and CNN's proficiency in detecting local patterns. This hybrid approach has been shown to achieve superior performance in accuracy, F1 score, and recall for sentiment analysis tasks in psychological domains [8].

- Evaluation: Compare the performance of all models using standard metrics. The hybrid model is expected to outperform the others, providing a more robust tool for early detection of psychological stress [8].
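The evaluation step can be illustrated with a small comparison harness. The sketch below assumes three stress-level labels and computes accuracy, macro-averaged recall, and macro-averaged F1 for each model's predictions. The labels and predictions are invented purely to exercise the code and do not reproduce results from [8]; `model_a` and `model_b` are placeholder names, not the BERT, CNN, or hybrid models themselves.

```python
def evaluate(y_true, y_pred):
    """Accuracy plus macro-averaged recall and F1 over the labels seen in y_true."""
    labels = sorted(set(y_true))
    recalls, f1s = [], []
    for label in labels:
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        precision = tp / (tp + fp) if tp + fp else 0.0
        recall = tp / (tp + fn) if tp + fn else 0.0
        f1 = (2 * precision * recall / (precision + recall)
              if precision + recall else 0.0)
        recalls.append(recall)
        f1s.append(f1)
    accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
    return {
        "accuracy": accuracy,
        "macro_recall": sum(recalls) / len(labels),
        "macro_f1": sum(f1s) / len(labels),
    }

# Hypothetical gold labels and model outputs (illustration only).
y_true = ["low", "low", "high", "high", "medium", "medium"]
predictions = {
    "model_a": ["low", "low", "high", "medium", "medium", "medium"],
    "model_b": ["low", "medium", "high", "high", "medium", "low"],
}
for name, y_pred in predictions.items():
    print(name, evaluate(y_true, y_pred))
```

Macro-averaging weights every stress level equally, which matters when high-stress posts are rare relative to the other classes.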

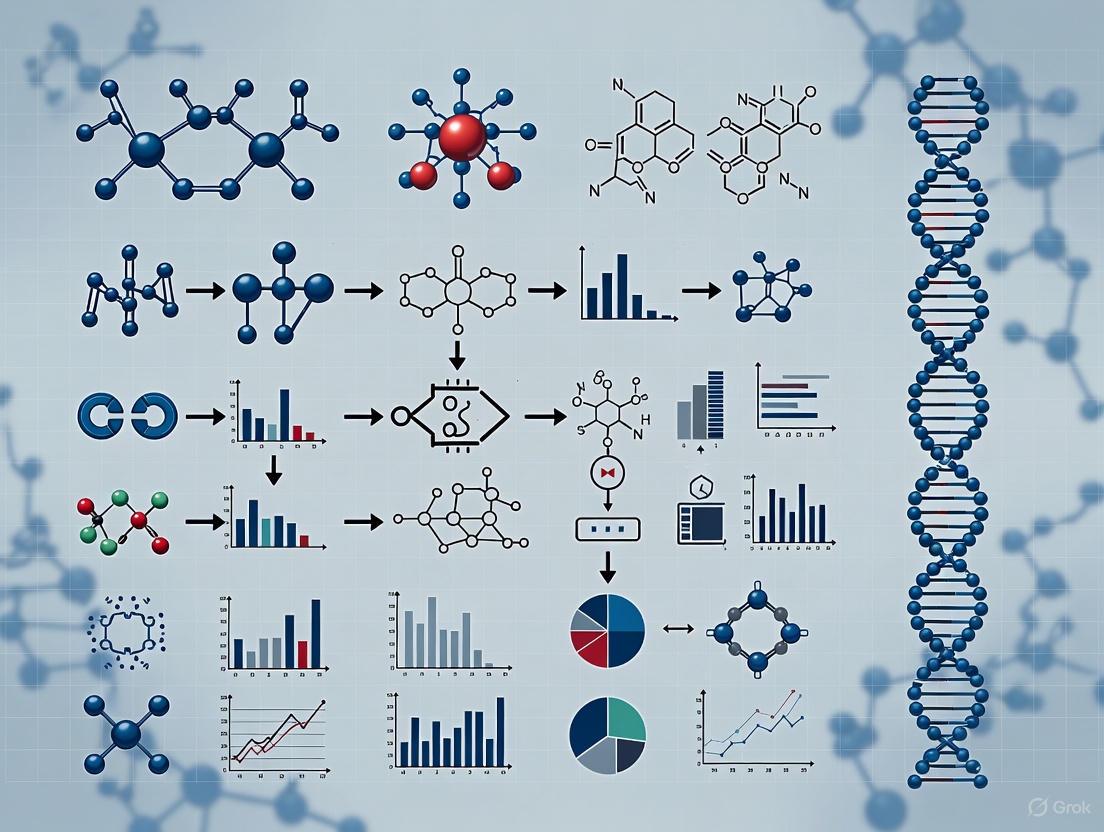

Visualization of a Standard Text Mining Pipeline

The following diagram illustrates the logical flow of a standard TM/NLP pipeline as applied in a clinical or research context, integrating the components and protocols described above.

Workflow Diagram:

Text mining approaches are fundamental to processing the vast and complex literature in psychology and drug development. These fields generate extensive unstructured text data, from clinical notes and research articles to patient-reported outcomes. Tokenization, Lemmatization, and Named Entity Recognition (NER) form the foundational pipeline that transforms this unstructured text into structured, analyzable data [9] [10]. These techniques enable researchers to identify key terminology, extract meaningful patterns, and uncover relationships within psychological literature, thereby accelerating insight generation and drug development processes.

The global NLP market, valued at approximately $27.73 billion in 2022 and projected to grow at a CAGR of 40.4%, underscores the critical importance of these technologies in research and industry applications [10]. For psychology journal terminology research, these methods provide systematic approaches for cataloging psychological constructs, symptom descriptions, treatment modalities, and pharmacological concepts across extensive scientific corpora.

Core Terminology and Technical Foundations

Tokenization

Tokenization serves as the initial text processing step, breaking down raw text into smaller constituent units called tokens [9] [10]. These tokens typically represent words, subwords, or phrases that become the basic units for all subsequent analysis. In psychology research, effective tokenization must handle specialized terminology including psychological constructs (e.g., "cognitive dissonance"), assessment tools (e.g., "Beck Depression Inventory"), and pharmacological compounds (e.g., "selective serotonin reuptake inhibitor").

The tokenization process involves several technical considerations particularly relevant to scientific text:

- Delimiter Selection: Determining appropriate boundaries for tokens using spaces, punctuation, or custom rules [9]

- Language-Specific Challenges: Managing domain-specific punctuation, hyphenated terms, and academic writing conventions

- Context Preservation: Maintaining the relationship between tokens while creating discrete analytical units

Advanced tokenization methods have evolved to address various research needs, each with distinct advantages for psychological text mining:

Table: Tokenization Methods and Applications

| Method Type | Description | Psychology Research Applications |
|---|---|---|
| Word Tokenization | Splits text based on spaces and punctuation into complete words [9] | Basic processing of journal abstracts; patient narratives |
| Subword Tokenization | Breaks words into smaller meaningful units (e.g., prefixes, stems, suffixes) [9] | Handling specialized terminology; morphological analysis |
| Sentence Tokenization | Divides text into complete sentences using punctuation cues [9] | Document segmentation; analysis of rhetorical structure |
| N-gram Tokenization | Creates overlapping word groups of size 'n' (e.g., bigrams, trigrams) [9] | Identifying multi-word concepts; phrase pattern recognition |
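Three of the methods in the table (word, sentence, and n-gram tokenization) can be sketched with regular expressions. This is a simplified illustration under stated assumptions: the function names are hypothetical, the sentence splitter naively breaks on any terminal punctuation and would mis-split abbreviations such as "e.g.", and subword tokenization is left out because it requires a trained vocabulary.

```python
import re

def word_tokenize(text):
    """Split into words (keeping hyphenated terms whole) and punctuation marks."""
    return re.findall(r"\w+(?:-\w+)*|[^\w\s]", text)

def sentence_tokenize(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]

def ngrams(tokens, n):
    """Return overlapping n-token tuples (bigrams for n=2, trigrams for n=3)."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

text = "Cognitive dissonance is uncomfortable. People reduce it by changing beliefs."
sentences = sentence_tokenize(text)
words = word_tokenize(sentences[0])
print(sentences)
print(words)
print(ngrams(["major", "depressive", "disorder"], 2))
```

Bigrams and trigrams are the simplest way to surface multi-word constructs such as "major depressive disorder" that word-level tokens split apart.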

Lemmatization

Lemmatization is the process of reducing words to their base or dictionary form, known as the lemma [11] [10]. This technique employs vocabulary and morphological analysis to group different inflected forms of a word, ensuring that words with the same core meaning are recognized as identical for analysis. For psychology terminology research, this is particularly valuable for normalizing verb tenses, noun plurals, and adjectival forms while preserving semantic integrity.

The linguistic sophistication of lemmatization differentiates it from simpler stemming approaches:

- Stemming crudely chops off word suffixes using heuristic rules, often producing non-words (e.g., "studies" → "studi") and leaving irregular forms such as "better" unchanged [11]

- Lemmatization utilizes vocabulary and morphological analysis to return valid base forms (e.g., "running" → "run", "better" → "good") [11]

This precision makes lemmatization essential for psychology research where maintaining semantic accuracy is critical for understanding nuanced constructs and relationships.
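The contrast can be made concrete with a toy example. The sketch below assumes a deliberately crude suffix-stripping stemmer (not a published stemming algorithm) and a four-entry lookup table standing in for a real lemmatizer's vocabulary; both are hypothetical and exist only to show why the two approaches diverge.

```python
# Suffixes tried in order; an illustrative list, not a real stemmer's rule set.
SUFFIXES = ("ies", "ing", "ed", "es", "s")

def crude_stem(word):
    """Strip the first matching suffix if at least three characters remain."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            return word[: -len(suffix)]
    return word

# Stand-in for dictionary-backed lemmatization (entries chosen for the example).
LEMMA_TABLE = {
    "running": "run",
    "better": "good",
    "studies": "study",
    "anxieties": "anxiety",
}

def lemmatize(word):
    """Return the dictionary form when known, otherwise the word unchanged."""
    return LEMMA_TABLE.get(word, word)

for word in ("running", "studies", "better"):
    print(f"{word}: stem={crude_stem(word)!r} lemma={lemmatize(word)!r}")
```

The stemmer yields fragments such as "runn" and "stud" and cannot relate "better" to "good", whereas the lookup returns valid base forms for every entry it knows.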

Named Entity Recognition (NER)

Named Entity Recognition (NER) is an information extraction technique that identifies and classifies key elements in text into predefined categories such as person names, organizations, locations, medical codes, time expressions, quantities, percentages, and more [12] [11] [10]. For psychology and pharmacological research, NER systems are typically customized to detect domain-specific entities including:

- Psychological constructs: Disorders, symptoms, therapies

- Pharmacological entities: Drug names, compounds, mechanisms of action

- Assessment tools: Inventories, scales, questionnaires

- Biological entities: Genes, proteins, neurological structures

NER operates through either rule-based systems using carefully crafted patterns or machine learning approaches that learn to recognize entities from annotated examples [11]. Modern NER systems increasingly utilize deep learning models, particularly bidirectional transformers, which have demonstrated state-of-the-art performance on biomedical text extraction tasks [13] [14].
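A rule-based recognizer of the kind described above can be sketched as a dictionary (gazetteer) lookup. The term list, the entity labels, and the function name `find_entities` are assumptions chosen for illustration; a production system would draw its terms from a curated lexicon and would usually be combined with a learned model.

```python
import re

# Hypothetical mini-gazetteer mapping surface forms to entity types.
GAZETTEER = {
    "major depressive disorder": "DISORDER",
    "depressive disorder": "DISORDER",
    "cognitive behavioral therapy": "INTERVENTION",
    "sertraline": "DRUG",
    "beck depression inventory": "ASSESSMENT",
}

# Longer terms are listed first so that, when two terms could start at the
# same position, the longer one is tried before the shorter one.
_PATTERN = re.compile(
    r"\b("
    + "|".join(re.escape(t) for t in sorted(GAZETTEER, key=len, reverse=True))
    + r")\b",
    re.IGNORECASE,
)

def find_entities(text):
    """Return (start, end, surface_form, label) for each non-overlapping match."""
    return [
        (m.start(), m.end(), m.group(0), GAZETTEER[m.group(0).lower()])
        for m in _PATTERN.finditer(text)
    ]

sentence = "Patients with major depressive disorder received sertraline."
for entity in find_entities(sentence):
    print(entity)
```

Because the regular expression scans left to right and matches never overlap, "major depressive disorder" is reported once rather than also yielding the nested "depressive disorder".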

Quantitative Analysis of Technique Performance

The effectiveness of NLP techniques is quantitatively evaluated across multiple dimensions including accuracy, computational efficiency, and domain adaptability. The following table summarizes key performance metrics for the core techniques as applied to biomedical and psychological text:

Table: Performance Metrics of Core NLP Techniques

| Technique | Accuracy Range | Computational Efficiency | Domain Adaptation Requirements | Primary Evaluation Metrics |
|---|---|---|---|---|
| Tokenization | 95-99% [9] | High | Low to moderate (language-specific rules) | Boundary accuracy, consistency |
| Lemmatization | 90-97% [11] | Moderate | High (domain-specific dictionaries) | Lemma accuracy, linguistic validity |
| NER | 80-95% (biomedical domains) [15] | Low to variable (model-dependent) | Significant (domain-specific training data) | Precision, Recall, F1-score |

Recent advances in transformer-based models have substantially improved NER performance in biomedical contexts. For instance, specialized models like BioBERT and ClinicalBERT have achieved F1 scores of 89.8% and higher on biomedical named entity recognition tasks, significantly outperforming general-domain models [13]. These domain-adapted models are particularly relevant for psychology and pharmacology research where terminology is highly specialized.
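For reference, entity-level precision, recall, and F1 are commonly computed by exact span matching, as in the sketch below. The scoring convention (a prediction counts only if start, end, and label all match) is an assumption here, since the cited benchmarks may score differently, and the spans are invented for the example.

```python
def span_prf(gold, predicted):
    """Exact-match precision, recall, and F1 over (start, end, label) spans."""
    gold, predicted = set(gold), set(predicted)
    tp = len(gold & predicted)  # spans found with correct boundaries and label
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(gold) if gold else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return precision, recall, f1

# Hypothetical gold annotations and system output for one document.
gold_spans = {(14, 39, "DISORDER"), (49, 59, "DRUG"), (70, 73, "INTERVENTION")}
pred_spans = {(14, 39, "DISORDER"), (20, 39, "DISORDER"), (49, 59, "DRUG")}

precision, recall, f1 = span_prf(gold_spans, pred_spans)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
```

Under exact matching, the partially overlapping span (20, 39) counts as a false positive, which is one reason boundary errors depress NER scores on long clinical terms.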

Experimental Protocols and Methodologies

Protocol 1: Tokenization of Psychology Journal Text

Objective: Implement and evaluate tokenization methods on psychology literature to optimize terminology extraction.

Materials:

- Text corpus from psychology journals (e.g., APA PsycArticles)

- Computational environment with Python 3.8+

- NLP libraries: SpaCy, NLTK, Stanza [12] [16]

Methodology:

- Corpus Preparation: Collect and compile journal abstracts focusing on psychopharmacology

- Text Normalization: Convert text to lowercase, preserve hyphenated terms, handle academic citations

- Tokenizer Configuration:

  - Implement whitespace tokenization as baseline

  - Configure linguistic tokenizers (SpaCy) with custom rules for psychological terminology

  - Apply subword tokenization (WordPiece) for complex compound terms

- Evaluation: Manually annotate 1000 tokens from psychology text as gold standard

- Validation: Calculate token boundary accuracy against human-annotated standard

Technical Considerations:

- Preserve specialized hyphenated terms (e.g., "meta-analysis", "follow-up")

- Handle in-text citations and reference formatting

- Manage statistical expressions and numerical ranges
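The evaluation and technical considerations of Protocol 1 can be sketched as follows. The regular expression is one hypothetical set of custom rules (bracketed citations, numeric ranges, hyphenated terms), not the configuration of any particular library, and the "gold standard" is a hand-written token list for a single made-up sentence.

```python
import re

TOKEN_PATTERN = re.compile(
    r"""
      \[\d+(?:[,\-]\s*\d+)*\]                  # bracketed citations: [12], [3, 7]
    | \d+(?:\.\d+)?(?:-\d+(?:\.\d+)?)?%?       # numbers and ranges: 0.75-0.90, 40%
    | [A-Za-z]+(?:-[A-Za-z]+)*                 # words, hyphenated terms kept whole
    | [^\w\s]                                  # any other punctuation mark
    """,
    re.VERBOSE,
)

def tokenize(text):
    """Rule-based tokenizer implementing the custom patterns above."""
    return TOKEN_PATTERN.findall(text)

def spans(text, tokens):
    """Locate each token in reading order and return its (start, end) offsets."""
    out, cursor = [], 0
    for tok in tokens:
        start = text.index(tok, cursor)
        out.append((start, start + len(tok)))
        cursor = start + len(tok)
    return out

def boundary_accuracy(text, predicted, gold):
    """Share of gold-standard tokens whose exact boundaries were reproduced."""
    predicted_spans = set(spans(text, predicted))
    gold_spans = spans(text, gold)
    return sum(s in predicted_spans for s in gold_spans) / len(gold_spans)

text = "A meta-analysis of follow-up data [4] found recall of 0.75-0.90."
gold = ["A", "meta-analysis", "of", "follow-up", "data", "[4]",
        "found", "recall", "of", "0.75-0.90", "."]

print("whitespace baseline:", boundary_accuracy(text, text.split(), gold))
print("custom rules:", boundary_accuracy(text, tokenize(text), gold))
```

The whitespace baseline misses two of the eleven gold tokens because it fuses the final range with the full stop; the rule-based tokenizer matches the gold standard on this sentence, though a real evaluation would use the full annotated sample.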

Protocol 2: Lemmatization for Terminology Normalization

Objective: Standardize psychological terminology through lemmatization to improve concept mapping.

Materials:

- Tokenized psychology text from Protocol 1

- Domain-specific dictionaries (e.g., APA Dictionary of Psychology)

- Libraries: SpaCy, WordNet, domain-customized lemmatizers [12] [16]

Methodology:

- Baseline Establishment: Apply standard English lemmatization using out-of-the-box tools

- Domain Adaptation:

  - Create custom rules for psychological terminology (e.g., "reinforcing" → "reinforce")

  - Add domain-specific exceptions (e.g., "mania" should not lemmatize to "manic")

- Validation Framework:

  - Develop test set of 500 psychological terms with expert-validated lemmas

  - Compare lemmatization accuracy across standard and adapted systems

- Performance Assessment: Measure lemmatization accuracy and impact on downstream tasks

Technical Considerations:

- Distinguish between clinical terminology and common language

- Preserve proper nouns (assessment tool names, researcher names)

- Handle acronyms and abbreviations appropriately
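A minimal sketch of the baseline-versus-adapted comparison in Protocol 2 follows. Everything here is a stand-in: `baseline_lemmatize` imitates an out-of-the-box lemmatizer with a tiny lookup table, the protected terms and domain rules are hypothetical examples, and the five-item test set merely illustrates the shape of the expert-validated set described above.

```python
# Stand-in vocabulary for a general-purpose lemmatizer (illustrative only).
GENERAL_TABLE = {"disorders": "disorder", "therapies": "therapy", "was": "be"}

def baseline_lemmatize(token):
    """Imitates a generic lemmatizer: lowercase, then look up a known lemma."""
    lowered = token.lower()
    return GENERAL_TABLE.get(lowered, lowered)

# Hypothetical domain resources: acronyms and instrument names left untouched,
# plus custom rules for psychological terminology.
PROTECTED = {"CBT", "MMPI-2", "Beck"}
DOMAIN_RULES = {"reinforcing": "reinforce", "ruminating": "ruminate"}

def adapted_lemmatize(token):
    """Apply domain protections and rules first, then fall back to the baseline."""
    if token in PROTECTED:
        return token
    lowered = token.lower()
    if lowered in DOMAIN_RULES:
        return DOMAIN_RULES[lowered]
    return baseline_lemmatize(token)

def accuracy(lemmatizer, test_set):
    """Fraction of (token, expected_lemma) pairs the lemmatizer gets right."""
    return sum(lemmatizer(tok) == lemma for tok, lemma in test_set) / len(test_set)

TEST_SET = [
    ("disorders", "disorder"),
    ("therapies", "therapy"),
    ("reinforcing", "reinforce"),
    ("CBT", "CBT"),
    ("MMPI-2", "MMPI-2"),
]

print("baseline:", accuracy(baseline_lemmatize, TEST_SET))
print("adapted:", accuracy(adapted_lemmatize, TEST_SET))
```

Checking protected terms before any lowercasing is the design choice that keeps acronyms and assessment-tool names intact.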

Protocol 3: NER for Psychological Construct Extraction

Objective: Extract and classify psychological entities from research literature for terminology mapping.

Materials:

- Annotated psychology text corpora (e.g., PsyTAR, CEGS N-GRID)

- Pre-trained biomedical NER models (BioBERT, ClinicalBERT) [13]

- Annotation guidelines for psychological entities

Methodology:

- Entity Schema Definition:

  - Disorder entities: depression, anxiety, schizophrenia

  - Intervention entities: CBT, mindfulness, pharmacotherapy

  - Assessment entities: Hamilton Rating Scale, MMPI-2

  - Outcome entities: remission, relapse, symptom reduction

- Model Selection and Training:

  - Fine-tune pre-trained biomedical transformers on psychology-specific corpus

  - Implement ensemble approaches combining rule-based and ML methods

- Annotation Protocol:

  - Dual-annotator process with psychologist consultation

  - Adjudication process for annotation disagreements

- Evaluation:

  - Standard precision, recall, F1-measure against test set

  - Domain-specific evaluation on rare terminology

Technical Considerations:

- Address entity boundary challenges (e.g., "major depressive disorder" vs. "depressive disorder")

- Handle entity ambiguity (e.g., "mania" as symptom vs. diagnosis)

- Manage novel terminology and emerging constructs
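The dual-annotator and adjudication steps can be supported by a small comparison script that separates agreed spans from those needing review. This is a sketch under assumptions: annotations are represented as `(start, end, label)` tuples, the helper names `mark` and `compare_annotations` are hypothetical, and the sentence and labels are invented to reproduce the boundary and ambiguity issues listed above.

```python
def mark(text, phrase, label):
    """Build a (start, end, label) annotation for the first occurrence of phrase."""
    start = text.index(phrase)
    return (start, start + len(phrase), label)

def compare_annotations(ann_a, ann_b):
    """Split two annotators' spans into agreements and overlapping conflicts."""
    a, b = set(ann_a), set(ann_b)
    agreed = sorted(a & b)
    only_a, only_b = sorted(a - b), sorted(b - a)
    # Two differing annotations conflict when their character ranges overlap.
    conflicts = [
        (x, y) for x in only_a for y in only_b
        if x[0] < y[1] and y[0] < x[1]
    ]
    return agreed, conflicts

text = "History of major depressive disorder with episodes of mania; started CBT."

annotator_a = [
    mark(text, "major depressive disorder", "DISORDER"),
    mark(text, "mania", "SYMPTOM"),
    mark(text, "CBT", "INTERVENTION"),
]
annotator_b = [
    mark(text, "depressive disorder", "DISORDER"),  # boundary disagreement
    mark(text, "mania", "DISORDER"),                # label disagreement
    mark(text, "CBT", "INTERVENTION"),
]

agreed, conflicts = compare_annotations(annotator_a, annotator_b)
print("agreed:", agreed)
for left, right in conflicts:
    print("adjudicate:", text[left[0]:left[1]], left[2], "vs",
          text[right[0]:right[1]], right[2])
```

The two conflicts flagged here correspond to the boundary case ("major depressive disorder" versus "depressive disorder") and the ambiguity case ("mania" as symptom versus diagnosis), which are the items an adjudicator would resolve.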

Diagram 1: Text Processing Workflow for Psychology Terminology Extraction

Research Reagent Solutions

Implementing the described protocols requires specific computational tools and resources. The following table details essential research reagents for psychology terminology text mining:

Table: Essential Research Reagents for Psychology Terminology Text Mining

| Reagent Category | Specific Tools/Libraries | Primary Function | Application Notes |
|---|---|---|---|
| Core NLP Libraries | SpaCy, NLTK, Stanza [12] [16] | Text processing pipeline implementation | SpaCy preferred for production use; NLTK for education |
| Domain-Specific Models | BioBERT, ClinicalBERT, SciBERT [13] | Pre-trained models for scientific text | Fine-tuning required for psychology-specific tasks |
| Annotation Tools | BRAT, Prodigy, INCEpTION | Manual annotation of training data | Critical for creating domain-specific training sets |
| Evaluation Frameworks | scikit-learn, Hugging Face Evaluate | Performance metric calculation | Standardized evaluation across experiments |
| Specialized Lexicons | UMLS Metathesaurus, APA Dictionary | Domain knowledge integration | Improves lemmatization and entity recognition accuracy |

Advanced Applications in Psychology and Drug Development

The integration of tokenization, lemmatization, and NER enables sophisticated research applications in psychology and pharmacology. These techniques form the foundation for:

Adverse Drug Event Monitoring: Systematic reviews demonstrate that NER and relation extraction can identify adverse drug events from clinical notes with high precision, supporting pharmacovigilance efforts [14]. This is particularly relevant for psychopharmacology where side effect terminology is complex and nuanced.

Drug-Target Interaction Discovery: Advanced NLP pipelines incorporating these core techniques can extract drug-target relationships from literature, accelerating drug repurposing and discovery research [13] [17]. For psychological treatments, this enables mapping between pharmacological mechanisms and therapeutic outcomes.

Terminology Ontology Development: The processed output from these techniques supports the creation and expansion of psychological terminology ontologies, facilitating better knowledge organization and retrieval across the research literature.

Diagram 2: NER Annotation Process for Psychology Text

Tokenization, lemmatization, and named entity recognition constitute essential components of the text mining pipeline for psychology journal terminology research. When properly implemented with domain adaptation, these techniques enable researchers to transform unstructured psychological literature into structured, analyzable data supporting both basic research and applied drug development. The experimental protocols outlined provide methodological rigor for implementing these approaches, while the quantitative benchmarks establish performance expectations for real-world applications. As NLP methodologies continue advancing, particularly with transformer-based architectures, these core techniques will remain fundamental to extracting meaningful insights from the growing corpus of psychological and pharmacological literature.

The exponential growth of biomedical literature has created a pressing need for efficient tools to manage and extract knowledge from vast volumes of textual data [3]. Text mining (TM), which combines natural language processing (NLP), artificial intelligence, and statistical analysis, has emerged as a critical methodology for automating the discovery and retrieval of information from unstructured text [3]. Within psychiatry and psychology, these approaches are particularly valuable for facilitating complex research tasks that would be prohibitively time-consuming using traditional manual methods [3]. This systematic review synthesizes current evidence on TM applications in psychiatric and psychological research, with particular emphasis on methodological protocols and quantitative findings that demonstrate the transformative potential of computational approaches for understanding mental health phenomena, patient perspectives, and research trends.

Major Application Areas and Quantitative Findings

A systematic review of the literature identified four principal domains where text mining approaches are actively applied in psychiatric and psychological research [3]. The distribution of research across these domains and their characteristic data sources are summarized in Table 1.

Table 1: Core Application Areas of Text Mining in Psychiatry and Psychology

| Application Area | Primary Objective | Common Data Sources | Representative Techniques |
|---|---|---|---|
| Psychopathology | Identify disease-specific semantic features; compare language between clinical and control groups [3] | Written narratives; interviews; research transcripts [3] | Tokenization; lemmatization; cluster analysis; latent semantic indexing [3] |
| Patient Perspective | Understand patient experiences, attitudes, and behaviors; screen for disorders [3] | Internet postings; qualitative studies; social media [3] | Bag-of-words models; classification algorithms; sentiment analysis [3] |
| Medical Records | Improve safety, quality of care, and treatment description; identify disorders from clinical notes [3] | Electronic Health Records (EHRs); clinical notes [3] | Named entity recognition; co-occurrence analysis; logistic regression [3] |
| Medical Literature | Identify new scientific information; track methodological transparency and research trends [3] [7] | Biomedical literature databases; journal abstracts [3] [7] | Glossary-based extraction; contextualized embeddings; clustering [7] |

Recent large-scale studies demonstrate the quantitative impact of TM. An analysis of 85,452 psychology abstracts published between 1995 and 2024 found that 78.16% contained method-related keywords, with an average of 1.8 terms per abstract, indicating a significant shift toward greater methodological transparency in reporting [7]. Another systematic review screened 1,103 citations and identified 38 studies as concrete applications of TM in psychiatric research, revealing the diverse and growing utilization of these methods [3].

Experimental Protocols for Key Application Areas

This protocol is designed to track the presence and semantic evolution of methodological terminology in psychology and psychiatry research abstracts [7].

1. Research Question Formulation: Define the specific research question, typically focusing on the prevalence and thematic grouping of methodological terms over time [7].

2. Data Collection and Corpus Creation:

   - Source Identification: Identify relevant scholarly databases (e.g., PsycINFO, MEDLINE, Web of Science) [3] [7].

   - Search Strategy: Develop a comprehensive search strategy using a balanced approach of sensitivity (finding all relevant studies) and precision (excluding irrelevant ones) [18].

   - Inclusion/Exclusion Criteria: Define criteria a priori using a framework like PICOS (Population, Intervention, Comparison, Outcomes, Study design) [3] [18]. Common criteria include publication date range, article type (e.g., empirical studies), and language.

   - Data Retrieval: Extract abstracts and relevant metadata (e.g., publication year) from selected studies to form the analysis corpus [7].

3. Text Pre-processing:

   - Tokenization: Split text into individual words or tokens [3] [7].

   - Stopword Removal: Filter out common, low-information words (e.g., "the," "and") [3] [7].

   - Lemmatization: Reduce words to their base or dictionary form (e.g., "running" to "run") [3].

4. Glossary-Based Term Extraction:

   - Utilize a curated, domain-specific glossary of methodological terms (e.g., "randomized controlled trial," "factor analysis," "bootstrapping") as a gold standard [7].

   - Perform term identification in the corpus using direct and fuzzy string matching to account for morphological variations [7].

5. Semantic Vectorization and Clustering:

   - Encoding: Convert extracted terms into numerical representations using contextualized language models like SciBERT, which generates context-aware embeddings [7].

   - Clustering: Apply clustering algorithms (e.g., k-means) to the unified term vectors to identify thematic groupings of methodological concepts. Both standard and weighted unsupervised approaches can be used [7].

6. Trend and Frequency Analysis:

   - Analyze the frequency of extracted terms and the composition of clusters over time to identify emerging and fading methodological trends [7].
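Steps 4 and 6 of this protocol (glossary-based extraction with direct and fuzzy matching, followed by frequency counts per year) can be sketched with the standard library. The three-term glossary comes from the examples above, but the abstracts, the similarity cutoff of 0.85, and the function names are assumptions for illustration; the matching procedure in [7] may differ, and the SciBERT encoding and clustering of step 5 are not shown.

```python
import difflib
import re
from collections import Counter, defaultdict

GLOSSARY = ["randomized controlled trial", "factor analysis", "bootstrapping"]

def extract_terms(abstract, glossary=GLOSSARY, cutoff=0.85):
    """Return glossary terms found by exact or fuzzy match against word n-grams."""
    tokens = re.findall(r"[a-z]+", abstract.lower())
    found = set()
    for term in glossary:
        n = len(term.split())
        # Candidate phrases: every run of n consecutive tokens in the abstract.
        candidates = sorted(
            {" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)}
        )
        if term in candidates:
            found.add(term)  # direct match
        elif difflib.get_close_matches(term, candidates, n=1, cutoff=cutoff):
            found.add(term)  # fuzzy match (spelling or inflection variant)
    return found

def term_trends(records):
    """Count, per publication year, how many abstracts mention each term."""
    trends = defaultdict(Counter)
    for year, abstract in records:
        trends[year].update(extract_terms(abstract))
    return trends

# Made-up (year, abstract) records for illustration.
records = [
    (1998, "A factor analysis of the questionnaire was performed."),
    (2020, "We ran a randomised controlled trial with bootstrapping."),
    (2021, "Exploratory factor analyses supported a randomized controlled trial design."),
]
trends = term_trends(records)
for year in sorted(trends):
    print(year, dict(trends[year]))
```

The fuzzy step is what lets "randomised controlled trial" and "factor analyses" map onto their glossary entries; the cutoff trades missed variants against false matches and would need tuning on real abstracts.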

Diagram: Text Mining Analysis Workflow

Protocol 2: Screening for Mental Health Conditions from Textual Data

This protocol outlines a method for automated screening of specific psychiatric conditions, such as depression or post-traumatic stress disorder, from narrative text [3].

1. Objective Definition: Clearly define the condition or psychological state to be identified and the purpose of screening [3].

2. Data Source Selection:

   - Select appropriate text sources, which may include medical records, online forum posts, interview transcripts, or social media data [3].

   - Ensure ethical compliance and data anonymization procedures are in place.

3. Gold Standard Establishment:

   - Create a reference standard for validation by having clinical experts label a subset of the data [3].

   - This "gold standard" is used to train supervised machine learning models and validate results [3].

4. Feature Extraction:

   - Linguistic Pre-processing: Apply tokenization and stopword removal [3].

   - Feature Engineering: Transform text into analyzable features, for example with bag-of-words representations [3].

5. Model Development and Validation:

   - Algorithm Selection: Implement classification algorithms (e.g., logistic regression) to distinguish between classes (e.g., presence/absence of a condition) [3].

   - Validation: Assess model performance by calculating sensitivity, specificity, predictive values, or using receiver operating characteristic (ROC) curve analysis against the gold standard [3].
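The validation metrics named in step 5 can be computed directly from gold-standard labels and model scores. The sketch below is illustrative: the labels, scores, and the 0.5 decision threshold are invented, and the AUC is computed by the pairwise-ranking definition (the probability that a randomly chosen positive case scores higher than a randomly chosen negative one), which is equivalent to the area under the ROC curve.

```python
def _ratio(numerator, denominator):
    """Safe division that returns 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0

def screening_metrics(y_true, y_pred):
    """Sensitivity, specificity, and predictive values for binary labels (1/0)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    return {
        "sensitivity": _ratio(tp, tp + fn),
        "specificity": _ratio(tn, tn + fp),
        "ppv": _ratio(tp, tp + fp),
        "npv": _ratio(tn, tn + fn),
    }

def roc_auc(y_true, scores):
    """AUC via pairwise comparison of positive and negative scores (ties = 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return _ratio(wins, len(pos) * len(neg))

# Hypothetical clinician labels (1 = condition present) and model scores.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.4, 0.3, 0.6, 0.2, 0.1, 0.7]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed threshold of 0.5

print(screening_metrics(y_true, y_pred))
print("AUC:", roc_auc(y_true, scores))
```

Sensitivity and specificity depend on the chosen threshold, whereas the AUC summarizes ranking quality across all thresholds, so reporting both gives a fuller picture of a screening tool.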

Table 2: Essential Resources for Text Mining in Psychiatry and Psychology

| Tool/Resource | Type | Primary Function | Example Applications |
|---|---|---|---|
| Curated Methodological Glossary [7] | Lexical Resource | Serves as a gold-standard reference for identifying domain-specific terminology. | Extracting method-related keywords from scientific abstracts [7]. |
| Contextualized Language Models (e.g., SciBERT) [7] | Computational Algorithm | Generates context-aware embeddings (numerical representations) of words and phrases. | Capturing semantic meaning of terms for clustering and trend analysis [7]. |
| Clustering Algorithms (e.g., k-means) [7] | Statistical Method | Groups terms or documents into thematic clusters based on similarity in vector space. | Identifying underlying thematic groupings in methodological terminology [7]. |
| Classification Algorithms (e.g., Logistic Regression) [3] | Statistical Method | Classifies text into predefined categories (e.g., presence/absence of a condition). | Screening for depression or PTSD from narrative text [3]. |
| Natural Language Processing (NLP) Techniques (Tokenization, Lemmatization) [3] [7] | Text Pre-processing | Structures raw, unstructured text for analysis by breaking it down into components and standardizing words. | Fundamental first step in any text mining pipeline to prepare data for analysis [3] [7]. |
| Validation Metrics (Sensitivity, Specificity, ROC) [3] | Evaluation Framework | Quantifies the performance and accuracy of TM tools against a gold standard. | Validating a TM tool designed to screen for depressive disorders in medical records [3]. |

Diagram: Text Mining Analysis Pathways

This systematic review synthesizes the major application areas of text mining in psychiatry and psychology, detailing specific experimental protocols and quantifying the impact of these methodologies. The evidence demonstrates that TM approaches are fundamentally advancing research in psychopathology, patient perspectives, medical records, and the scientific literature itself. The increasing presence of methodological terminology in psychology abstracts, coupled with the development of sophisticated NLP pipelines for semantic analysis, signals a move toward greater methodological transparency—a crucial development in the context of psychology's replication crisis. Future research should focus on standardizing TM protocols across institutions, developing more domain-specific lexicons, and exploring the ethical implications of automated analysis of sensitive mental health data. As these methodologies continue to mature, their integration into mainstream psychiatric and psychological research holds the promise of unlocking deeper insights from textual data at a scale previously unimaginable.

Leveraging Text Mining for Exploratory Hypothesis Generation in Drug Development

The early stages of drug development are characterized by the critical need to generate viable scientific hypotheses from an exponentially growing body of biomedical literature. Text mining, a branch of artificial intelligence that combines natural language processing (NLP) and information retrieval, provides powerful tools to transform unstructured text into structured, analyzable data for this purpose [19]. Within the specific context of psychology and neuropharmacology research, these approaches can systematically extract hidden relationships between pharmacological constructs, mental states, and behavioral outcomes described in scientific literature. The application of text mining facilitates exploratory hypothesis generation by identifying non-obvious connections between drugs, psychological constructs, and physiological mechanisms, enabling researchers to formulate testable predictions about drug efficacy, safety, and mechanisms of action with greater speed and empirical grounding [19].

The challenge of drug-drug interaction (DDI) prediction exemplifies this need. Adverse drug reactions cause significant morbidity and mortality, with studies showing drug-drug interactions responsible for 0.57% of hospital admissions [19]. Text mining approaches can address this by systematically extracting pharmacokinetic and pharmacodynamic parameters from literature and databases, creating a foundation for computational DDI prediction models [19]. Similarly, in psychological research, text mining can operationalize complex constructs by identifying their manifestations in clinical notes or research literature, creating bridges between psychological terminology and pharmacological mechanisms.

Key Applications and Quantitative Evidence

Text mining supports hypothesis generation in drug development through several distinct approaches, each with demonstrated efficacy in extracting and structuring biomedical information. The table below summarizes the primary applications and their documented performance metrics.

Table 1: Performance Metrics of Text Mining Applications in Healthcare and Drug Development

| Application Area | Specific Task | Recall | Specificity | Precision/F1-Score | Data Source |

|---|---|---|---|---|---|

| Patient Characterization [20] | Identification of "Language Barrier" using Rule-Based Query | 0.99 | 0.96 | Not Reported | Electronic Health Records (EHRs) |

| Patient Characterization [20] | Identification of "Living Alone" using NER Model | 0.86 (Test); 0.81 (Validation) | 0.94 (Test); 1.00 (Validation) | Not Reported | Electronic Health Records (EHRs) |

| Patient Characterization [20] | Identification of "Cognitive Frailty" using NER Model | 0.59 (Test); 0.73 (Validation) | 0.76 (Test); 0.96 (Validation) | Not Reported | Electronic Health Records (EHRs) |

| Patient Characterization [20] | Identification of "Non-Adherence" using NER Model | 0.75 (Test); 0.90 (Validation) | 0.99 (Test); 0.99 (Validation) | Not Reported | Electronic Health Records (EHRs) |

| Literature-Based Discovery [19] | DDI Prediction via Similarity Measurements (INDI Framework) | Not Reported | Not Reported | Not Reported | Multiple Databases (DrugBank, DIDB, etc.) |

| Text Visualization [21] | Keyword Frequency Analysis using Word Clouds | Not Applicable | Not Applicable | Not Applicable | Customer Feedback, Documents, Interviews |

The data illustrates that text mining performance is highly dependent on the complexity of the target terminology. Rule-based methods excel with unambiguous terms (e.g., "language barrier"), while Named Entity Recognition (NER) models are more effective for conceptually complex or variably expressed constructs (e.g., "cognitive frailty") [20]. This has direct implications for psychology and drug development, where construct validity is paramount. The process of using multiple operational definitions (e.g., different text mining approaches for the same construct), known as converging operations, strengthens the validity of the extracted information and the hypotheses generated from it [22] [23].

Experimental Protocols and Methodologies

Protocol: Rule-Based Text Mining for Structured Data Extraction

This protocol is designed to extract well-defined terms and relationships from textual data, such as specific pharmacokinetic parameters or psychological construct names from structured abstracts.

1. Research Reagent Solutions

Table 2: Essential Materials for Rule-Based Text Mining

| Item Name | Function/Description |

|---|---|

| Structured Query Language (SQL) Database (e.g., Microsoft SQL Server, accessed through SQL Server Management Studio) | A relational database used to store textual data and execute rule-based queries [20]. |

| Predefined Terminology List | A comprehensive list of keywords and phrases related to the target constructs (e.g., drug names, enzyme identifiers, psychological scales) [20]. |

| Rule-Based Query Script | A set of SQL scripts containing Boolean logic (AND, OR, NOT) and proximity operators to identify co-occurrences of key terms [20]. |

2. Procedure

- Step 1: Data Acquisition and Preprocessing. Identify and gather target text corpora (e.g., PubMed abstracts, clinical trial reports, psychology journal articles). Clean the data by standardizing formatting and correcting common optical character recognition errors.

- Step 2: Database Ingestion. Import the preprocessed text corpus into an SQL database. Structure the data into relevant tables (e.g., a table for article metadata and a table for the full text).

- Step 3: Rule Formulation. Develop an initial set of search rules based on domain knowledge. For example, to find articles on serotonin-related depression, a rule might be:

(SSRI OR "selective serotonin reuptake inhibitor") AND (depression OR "major depressive disorder")

- Step 4: Query Execution and Iteration. Execute the rule-based query on the database. Manually review a sample of the results (e.g., the first 35 discrepancies) to identify false positives and false negatives [20].

- Step 5: Rule Refinement. Refine the query rules iteratively based on the manual review to improve accuracy. This may involve adding exclusion terms or adjusting proximity parameters. Limit iterations (e.g., to five) to prevent an excessively long and conflicting rule set [20].

- Step 6: Output and Structured Data Generation. The final query output is a structured dataset (e.g., a table) listing the retrieved documents and the identified term co-occurrences, ready for hypothesis generation.
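As a rough illustration of Steps 2 to 4, the sketch below loads a few abstracts into an in-memory SQLite database and runs the example Boolean rule from Step 3 as a SQL query. SQLite stands in for whichever SQL database is actually used; the table name `abstracts`, its columns, and the four example texts are assumptions made for this example. Substring matching with `LIKE` is deliberately crude and does not implement proximity operators.

```python
import sqlite3

# In-memory database standing in for the project's SQL server (assumption).
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE abstracts (id INTEGER PRIMARY KEY, text TEXT)")
conn.executemany(
    "INSERT INTO abstracts (id, text) VALUES (?, ?)",
    [
        (1, "SSRI treatment reduced symptoms of major depressive disorder."),
        (2, "A selective serotonin reuptake inhibitor was tested in anxiety."),
        (3, "Cognitive therapy for depression in adolescents."),
        (4, "SSRI exposure and depression relapse rates."),
    ],
)

# (SSRI OR "selective serotonin reuptake inhibitor")
#   AND (depression OR "major depressive disorder")
# SQLite's LIKE is case-insensitive for ASCII characters by default.
RULE = """
SELECT id FROM abstracts
WHERE (text LIKE '%SSRI%'
       OR text LIKE '%selective serotonin reuptake inhibitor%')
  AND (text LIKE '%depression%'
       OR text LIKE '%major depressive disorder%')
ORDER BY id
"""

matched_ids = [row[0] for row in conn.execute(RULE)]
print(matched_ids)
```

Abstract 2 mentions an SSRI but no depression term, and abstract 3 the reverse, so neither satisfies the conjunction. Reviewing such near misses by hand is what drives the refinement loop in Step 5.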

The following workflow diagram summarizes this protocol:

Protocol: Named Entity Recognition (NER) for Complex Constructs

This protocol uses machine learning to identify and classify complex, variably expressed entities in text, such as symptoms, cognitive states, or social behaviors described in clinical notes.

1. Research Reagent Solutions

Table 3: Essential Materials for NER Model Development

| Item Name | Function/Description |

|---|---|

| Annotated Text Corpus | A "golden standard" dataset where human experts have tagged (annotated) all mentions of the target entities in the text [20]. |

| Computational Environment (e.g., Python with PyTorch/TensorFlow) | A programming environment with deep learning libraries for building and training NER models. |

| Pre-trained Language Model (e.g., BERT, ClinicalBERT) | A model pre-trained on a large corpus that understands contextual relationships in language, which can be fine-tuned for specific NER tasks. |

2. Procedure

- Step 1: Golden Standard Creation. A subset of the text data (e.g., clinical notes, research abstracts) is manually reviewed by domain experts. They annotate the text, marking the spans of text that correspond to the target entities (e.g., [non-adherence] or [cognitive frailty]) [20].

- Step 2: Data Partitioning. The annotated corpus is divided into three sets: a training set (to teach the model), a test set (to evaluate its performance), and an optional validation set (for final tuning) [20].

- Step 3: Model Selection and Training. A pre-trained language model is selected. The training set is used to fine-tune this model, a process where the model learns to recognize the patterns of the target entities based on the human annotations.

- Step 4: Model Evaluation. The fine-tuned model is run on the test set. Its performance is calculated by comparing its predictions against the human annotations using metrics like recall, specificity, and F1-score [20].

- Step 5: Prediction on New Data. The validated model is deployed to extract entities from new, unannotated text corpora. The output is a structured list of detected entities and their context.
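The evaluation in Step 4 can be sketched at the span level, assuming each annotation is stored as a `(start, end, label)` tuple and that only exact matches count as correct. The function name, label names, and character offsets below are hypothetical. Specificity is not computed here because true negatives are not well defined for spans; it is usually reported at the document or patient level instead.

```python
def span_scores(gold_spans, predicted_spans):
    """Exact-match precision, recall, and F1 over (start, end, label) spans."""
    gold, pred = set(gold_spans), set(predicted_spans)
    true_pos = len(gold & pred)
    precision = true_pos / len(pred) if pred else 0.0
    recall = true_pos / len(gold) if gold else 0.0
    denominator = precision + recall
    f1 = 2 * precision * recall / denominator if denominator else 0.0
    return precision, recall, f1


# Hypothetical expert annotations and model predictions for one note.
gold = [
    (0, 13, "NON_ADHERENCE"),
    (40, 57, "COGNITIVE_FRAILTY"),
    (80, 92, "LIVING_ALONE"),
]
predicted = [
    (0, 13, "NON_ADHERENCE"),
    (40, 57, "COGNITIVE_FRAILTY"),
    (100, 110, "LIVING_ALONE"),    # wrong location
    (120, 130, "NON_ADHERENCE"),   # spurious mention
]

precision, recall, f1 = span_scores(gold, predicted)
print(precision, recall, f1)
```

Exact matching is strict; a relaxed variant that accepts overlapping spans may be more appropriate when annotators disagree on entity boundaries.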

The workflow for this protocol is captured in the diagram below:

Visualization and Interpretation for Hypothesis Generation

The final stage of the exploratory process involves visualizing the extracted information to reveal patterns and relationships that suggest novel hypotheses.

Word Clouds and Tag Clouds are simple yet effective tools for initial exploration. They display word frequency graphically, giving greater prominence to words that appear more frequently in the source text [21]. For instance, mining clinical notes of patients experiencing a specific drug side effect might reveal frequently co-occurring psychological terms like "agitation" or "apathy," suggesting a potential drug-effect hypothesis that can be tested further.
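The frequency counts that a word cloud renders can be computed in a few lines. The sketch below is a minimal illustration using only the standard library; the stop-word list and the three clinical notes are invented for the example and are not drawn from any real record.

```python
import re
from collections import Counter

# Tiny illustrative stop-word list (assumption); real pipelines use fuller lists.
STOPWORDS = {"the", "and", "of", "with", "was", "a", "after",
             "reported", "patient", "noted"}


def term_frequencies(notes):
    """Count non-stop-word tokens across a collection of notes."""
    counts = Counter()
    for note in notes:
        tokens = re.findall(r"[a-z]+", note.lower())
        counts.update(t for t in tokens if t not in STOPWORDS)
    return counts


# Hypothetical notes from patients reporting the same side effect.
notes = [
    "Patient reported agitation and insomnia after dose increase.",
    "Agitation noted with apathy.",
    "Apathy and agitation reported.",
]

freqs = term_frequencies(notes)
top_terms = freqs.most_common(2)
print(top_terms)
```

A word-cloud renderer would scale each term's font size by these counts; the counts themselves are often more useful for deciding which co-occurring terms merit a formal hypothesis test.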

For more complex relationship mapping, Sankey Diagrams are ideal. These diagrams visualize the flow or proportional relationship from one set of values (nodes) to another [21]. In the context of DDI and psychology, a Sankey diagram could illustrate the strength of association between a specific drug class, the psychological constructs it most frequently co-occurs with in literature, and the reported clinical outcomes.

The following diagram illustrates a generic text mining workflow for hypothesis generation, integrating the elements discussed:

This systematic approach—from data extraction through to visualization—enables researchers to move from vast, unstructured text to specific, data-driven hypotheses about drug mechanisms and effects in the context of psychological science.

The field of psychology is increasingly turning to computational methods to understand the intricate relationship between language and mental processes. Linguistic patterns offer a unique window into psychological constructs, revealing insights that traditional assessment methods may miss. This foundation is critical for advancing text mining approaches in psychology journal terminology research, allowing researchers and drug development professionals to systematically decode the language of the mind. By establishing robust theoretical links between specific language features and psychological states, we can develop more precise tools for diagnosis, treatment monitoring, and therapeutic development.

Theoretical Framework and Key Linguistic Markers

Substantial research has demonstrated that language patterns can reveal important psychological information that individuals may not disclose directly. Analysis of natural language can uncover true feelings and attitudes through detectable linguistic patterns, even when individuals are attempting impression management [24]. This capability makes linguistic analysis particularly valuable for psychological assessment where social desirability biases may affect self-report measures.

Table 1: Established Linguistic Correlates of Psychological Constructs

| Psychological Construct | Linguistic Marker | Direction of Association | Theoretical Interpretation |

|---|---|---|---|

| Depression | First-person singular pronouns | Increase [25] | Heightened self-focus or self-immersed perspective |

| Depression | Negative emotion words | Increase [25] | Elevated negative affect |

| Depression | Sadness words | Increase [25] | Specific emotional experience |

| Depression | Positive emotion words | Decrease [25] | Anhedonia or reduced positive affect |

| Anxiety | Negative emotion words | Increase [25] | General negative emotionality |

| Anxiety | Negations | Increase [25] | Cognitive patterns of contradiction |

| Anxiety | Anxiety-specific words | Increase [25] | Disorder-specific preoccupations |

| Deception | Self-references | Decrease [24] | Reduced personal ownership of statements |

| Deception | Negative emotion terms | Increase [24] | Potential discomfort with deception |

The differentiation between overlapping conditions represents a particular challenge and opportunity for linguistic analysis. Research examining both depression and anxiety has found that while some language features are shared between these frequently co-occurring conditions, others show relative specificity [25]. This discrimination is vital for developing targeted interventions and understanding the distinct cognitive and emotional processes underlying these conditions.

Quantitative Data Synthesis

Table 2: Effect Sizes and Statistical Measures for Linguistic-Psychological Associations

| Linguistic Feature | Psychological Construct | Effect Size/Statistical Measure | Sample Characteristics | Data Source |

|---|---|---|---|---|

| First-person singular pronouns | Depression | Significant association (p<0.05) [25] | 486 participants with varying depression/anxiety | Clinical interviews |

| First-person singular pronouns | Anxiety | Significant association (p<0.05) [25] | 486 participants with varying depression/anxiety | Clinical interviews |

| Negative emotion words | Depression | Significant association (p<0.05) [25] | 486 participants with varying depression/anxiety | Clinical interviews |

| Negative emotion words | Anxiety | Significant association (p<0.05) [25] | 486 participants with varying depression/anxiety | Clinical interviews |

| Sadness words | Depression | Relatively specific marker [25] | 486 participants with varying depression/anxiety | Clinical interviews |

| Positive emotion words | Depression | Negative association [25] | 486 participants with varying depression/anxiety | Clinical interviews |

| Anxiety words | Anxiety | Relatively specific marker [25] | 486 participants with varying depression/anxiety | Clinical interviews |

| Negations | Anxiety | Relatively specific marker [25] | 486 participants with varying depression/anxiety | Clinical interviews |

The emerging challenge of Large Language Models (LLMs) in linguistic analysis must be acknowledged in contemporary research. Recent investigations have found that although the use of LLMs slightly reduces the predictive power of linguistic patterns over authors' personal traits, significant changes are infrequent, and LLMs do not fully diminish this predictive power [26]. However, some theoretically established lexical-based linguistic markers do lose reliability when LLMs are involved in the writing process, necessitating methodological adjustments in future research.

Experimental Protocols and Methodologies

Protocol: Clinical Interview Transcription for Linguistic Analysis

Purpose: To collect natural language samples for quantifying linguistic markers of depression and anxiety while controlling for comorbid conditions.

Materials and Equipment:

- Audio recording equipment

- Transcription software or services

- Linguistic Inquiry and Word Count (LIWC) software or equivalent

- Statistical analysis software (R, Python, or specialized text analysis packages)

Procedure:

- Participant Recruitment: Recruit participants with varying levels of target psychological constructs (e.g., currently depressed, currently anxious, comorbid conditions, and healthy controls) [25].

- Structured Interviews: Conduct clinical interviews using established protocols (e.g., Anxiety and Related Disorders Interview Schedule for DSM-5) administered by trained clinical interviewers [25].

- Audio Recording: Record all interview sessions with participant consent.

- Verbatim Transcription: Transcribe interviews in clean-verbatim style, omitting filler words but preserving all substantive content.

- Word Count Threshold: Apply inclusion criteria based on minimum word count (e.g., 200 words) to ensure sufficient linguistic data [25].

- Linguistic Analysis: Process transcripts through validated text analysis programs (e.g., LIWC) which contain dictionaries of categories related to social, psychological and part of speech dimensions [24].

- Statistical Analysis: Correlate linguistic features with clinician-rated measures of psychological constructs, controlling for relevant covariates.
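The word-count threshold and dictionary-based scoring steps can be sketched as follows. This is not LIWC and does not use its dictionaries; the three short word lists are hypothetical stand-ins chosen only to show the mechanics of computing a category rate (percentage of tokens falling in a category), which is the kind of feature later correlated with clinician ratings.

```python
import re

# Hypothetical mini-lexicons (NOT the LIWC dictionaries).
CATEGORIES = {
    "first_person_singular": {"i", "me", "my", "mine", "myself"},
    "negative_emotion": {"sad", "hopeless", "worried", "afraid", "tired"},
    "negations": {"no", "not", "never", "cannot"},
}
MIN_WORDS = 200  # inclusion threshold, following the example in the protocol


def category_rates(transcript, min_words=MIN_WORDS):
    """Return percent of tokens per category, or None if the sample is too short."""
    tokens = re.findall(r"[a-z']+", transcript.lower())
    if len(tokens) < min_words:
        return None
    return {
        name: 100 * sum(token in words for token in tokens) / len(tokens)
        for name, words in CATEGORIES.items()
    }


# Short demo sentence; the threshold is lowered only so the example runs.
sample = "I feel sad and I cannot sleep, my mind never stops."
rates = category_rates(sample, min_words=5)
print(rates)
```

Contractions such as "I'm" are kept as single tokens by this tokenizer and would not match the entry "i", which is one reason validated dictionaries and tokenizers are preferred for actual studies.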

Validation Measures:

- Compare language-based classifications with gold standard clinical assessments

- Calculate sensitivity, specificity, and predictive values against expert ratings [3]

- Establish reliability measures for linguistic coding

Protocol: Text Mining for Psychiatric Research Applications

Purpose: To systematically extract useful biomedical information from unstructured text for psychiatric research using automated text mining approaches.

Materials and Equipment:

- Text mining software (Taltac, Tropes, Sphinx, ALCESTE, or custom solutions)

- Corpus of documents (medical records, interview transcripts, online postings)

- High-performance computing resources for large datasets

- Validation datasets with expert ratings

Procedure:

- Corpus Creation: Define inclusion criteria and assemble a collection of documents from relevant sources (medical records, HTML files, web postings, clinical notes) [3].

- Pre-processing: Introduce structure to the corpus through:

- Tokenization (splitting text into individual words or phrases)

- Stopword removal (eliminating common but uninformative words)

- Lemmatization (reducing words to their base or dictionary form)

- Part-of-speech tagging [3]

- Pattern Extraction: Apply knowledge extraction methods such as:

- Classification algorithms for categorizing texts

- Clustering techniques for identifying natural groupings

- Association rules for discovering co-occurrence patterns

- Trend analysis for tracking changes over time [3]

- Model Development: Build predictive models using machine learning approaches appropriate for the specific research question.

- Validation: Assess model performance against gold standards using measures such as:

- Sensitivity and specificity calculations

- Receiver Operating Characteristic (ROC) curves

- Cross-validation techniques [3]
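Cross-validation, the last validation technique listed, can be sketched as a generator of train/test index splits. The function name `k_fold_indices` and the fixed seed are assumptions for illustration; the sketch does not stratify by class, which would usually be advisable when the target condition is rare.

```python
import random


def k_fold_indices(n_items, k, seed=0):
    """Yield (train_indices, test_indices) for each of k folds."""
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)      # reproducible shuffle
    folds = [indices[i::k] for i in range(k)]  # k roughly equal folds
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        yield train, test


# Ten hypothetical documents split into five folds.
splits = list(k_fold_indices(10, 5))
for train, test in splits:
    print(sorted(test), len(train))
```

Each document appears in exactly one test fold, so averaging a metric across folds uses every labelled document for evaluation once.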

Application Areas:

- Psychopathology (observational studies focusing on mental illnesses)

- Patient perspective (patients' thoughts and opinions)

- Medical records (safety issues, quality of care, treatment descriptions)

- Medical literature (identification of new scientific information) [3]

Visualization of Research Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Materials and Software for Linguistic-Psychological Research

| Item Name | Type/Category | Function/Purpose | Example Sources/References |

|---|---|---|---|

| Linguistic Inquiry and Word Count (LIWC) | Software tool | Automated text analysis program that evaluates linguistic features across social, psychological and part of speech dimensions [24] | University of Oregon studies [24] |

| Clinical Interview Protocols | Assessment tool | Structured formats for collecting natural language samples during clinical assessment | ADIS-5L (Anxiety and Related Disorders Interview Schedule) [25] |

| Text Mining Software Platforms | Software tool | Suites for pre-processing, pattern extraction, and analysis of textual data | Taltac, Tropes, Sphinx, ALCESTE [3] |

| Validation Datasets | Data resource | Gold-standard corpora with expert ratings for validating language-based classifications | Congressional Records with demographic data [26] |

| Computational Linguistics Algorithms | Methodological approach | Techniques from linguistics, cognitive science, and AI for automated language processing | Natural Language Processing (NLP), machine learning [25] |

| Specialized Lexicons | Data resource | Curated word lists representing psychological constructs (e.g., negative emotion words, anxiety words) | Depression and anxiety lexicons [25] |

The integration of these tools and methods creates a robust infrastructure for advancing text mining approaches in psychological research. As the field evolves, particularly with the influence of Large Language Models, methodological adjustments will be necessary to maintain the validity and reliability of linguistic markers for psychological assessment. Future research should focus on developing LLM-resistant linguistic markers and validation protocols that can account for these technological influences on natural language [26].

Methodologies and Real-World Applications in Mental Health and Pharma

This application note provides a structured framework for implementing a text mining pipeline tailored to research on psychological journal terminology. We detail a protocol from data acquisition to knowledge extraction, incorporating a real-world case study that analyzes methodological terminology trends in psychology abstracts. The guidelines are designed to equip researchers and drug development professionals with reproducible methods for conducting meta-research and terminology analysis at scale.

Text mining and Natural Language Processing (NLP) techniques have become indispensable for analyzing large-scale scientific literature, offering opportunities to extract meaningful patterns from massive bodies of scholarly text [7]. Within psychology, these methods are particularly valuable for investigating reporting quality, methodological transparency, and the evolution of domain-specific terminology [8]. The replication crisis in psychology has underscored the critical need for rigorous methodology and transparent reporting, making the automated analysis of methodological language a crucial area of research [7]. This document outlines a comprehensive, end-to-end pipeline to facilitate such analyses, with a specific focus on tracking methodological terminology in psychology journals.

Protocol: End-to-End Text Mining Pipeline

Stage 1: Data Collection and Preprocessing

Objective: To gather and prepare a corpus of psychological research abstracts for terminology analysis.

Materials & Reagents:

- Computing Environment: Python 3.8+ or R 4.0+ with necessary libraries.

- Data Sources: Access to bibliographic databases (e.g., PubMed, PsycINFO, Web of Science) via API or bulk download.

- Software Libraries: For Python: requests (API calls), BeautifulSoup/Scrapy (web scraping), pandas (data manipulation), NLTK/spaCy (NLP tasks). For R: rvest (scraping), dplyr (data manipulation), tm/textmineR (text mining).

Procedure:

- Data Retrieval:

- Define search parameters to target psychology journals and a specific time range (e.g., 1995-2024).

- Use database-specific APIs (e.g., PubMed E-utilities) to execute searches and retrieve metadata, including titles, abstracts, publication years, and DOIs.

- Store the raw results in a structured format (e.g., CSV, JSON).

- Data Cleaning and Preprocessing:

- Text Normalization: Convert all text to lowercase.

- Tokenization: Split text into individual words or tokens.

- Remove Punctuation and Numbers: Eliminate non-alphanumeric characters and digits unless numerically significant.

- Handle Special Characters and Encoding: Ensure consistent UTF-8 encoding.

- Remove Stop Words: Filter out common, uninformative words (e.g., "the," "and," "is").

- Lemmatization: Reduce words to their base or dictionary form (e.g., "running" → "run").

Troubleshooting Tip: If API rate limits are encountered, implement throttling (e.g., time.sleep() between requests) or use batch processing.
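The cleaning steps listed above can be sketched with the standard library alone. The stop-word set here is a small illustrative sample, and lemmatization is omitted because it requires a library such as NLTK or spaCy; the function name `preprocess` is hypothetical.

```python
import re

# Small illustrative stop-word list (assumption); NLTK and spaCy ship fuller ones.
STOP_WORDS = {"the", "and", "is", "a", "of", "in", "to", "was", "were"}


def preprocess(abstract):
    """Lowercase, strip punctuation and digits, tokenize, and drop stop words."""
    text = abstract.lower()                # text normalization
    text = re.sub(r"[^a-z\s]", " ", text)  # remove punctuation and numbers
    tokens = text.split()                  # whitespace tokenization
    return [t for t in tokens if t not in STOP_WORDS]


tokens = preprocess("The Effect Size was 0.45 in the randomized, controlled trial.")
print(tokens)
```

Two side effects are worth noting: hyphenated terms such as "pre-registration" are split into two tokens, and non-ASCII letters are discarded. If such terms are in the glossary, the character filter should be relaxed or multi-word terms should be matched before this step.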

Stage 2: Terminology Extraction

Objective: To identify and extract method-related keywords from the preprocessed text corpus.

Materials & Reagents:

- Curated Glossary: A domain-specific dictionary of target terminology. For methodological analysis, this may include terms like "randomized controlled trial," "factor analysis," "longitudinal," "pre-registration," "effect size," "covariate" [7].

- Software Libraries: Python: spaCy (for phrase matching); R: stringr, textmineR.

Procedure:

- Glossary Development: Compile a list of relevant methodological terms. This can be derived from methodological textbooks, reporting guidelines (e.g., APA Manual [7]), or through preliminary exploratory analysis of the corpus.

- Term Extraction: Apply a two-pronged approach [7]:

- Exact String Matching: Identify exact occurrences of glossary terms within the abstracts.

- Fuzzy String Matching: Use algorithms (e.g., Levenshtein distance) to account for minor spelling variations or typos.

- Validation: Manually review a random sample of extracted terms to assess precision and recall, refining the glossary as needed.
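The two-pronged matching approach can be sketched as an exact lookup followed by a Levenshtein-distance fallback. The three-term glossary, the function names, and the distance threshold of 1 are assumptions for this example and not parameters reported by the cited study.

```python
def levenshtein(a, b):
    """Edit distance between two strings (insertions, deletions, substitutions)."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution or match
            ))
        previous = current
    return previous[-1]


# Hypothetical mini-glossary of methodological terms.
GLOSSARY = ["longitudinal", "covariate", "effect size"]


def match_term(candidate, glossary=GLOSSARY, max_distance=1):
    """Return (glossary_term, match_type), or None if nothing is close enough."""
    if candidate in glossary:
        return candidate, "exact"
    for term in glossary:
        if levenshtein(candidate, term) <= max_distance:
            return term, "fuzzy"
    return None


print(match_term("covariate"))
print(match_term("longitudnal"))  # misspelling with one missing letter
print(match_term("regression"))
```

A small threshold keeps fuzzy matching conservative; larger values recover more misspellings but can conflate distinct short terms, which is what the manual validation step is meant to catch.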

Stage 3: Semantic Analysis and Clustering

Objective: To explore the semantic relationships between extracted terms and group them into meaningful thematic clusters.

Materials & Reagents:

- Embedding Model: A pre-trained language model capable of generating contextualized word embeddings, such as SciBERT (optimized for scientific text) [7].

- Clustering Algorithm: scikit-learn for Python (K-means, DBSCAN) or stats for R.

Procedure:

- Text Vectorization:

- For each extracted term, use the SciBERT model to generate a contextualized embedding. This involves processing every sentence where the term appears and averaging the resulting embeddings to create a unified vector representation for the term [7].

- Dimensionality Reduction: Apply techniques like Uniform Manifold Approximation and Projection (UMAP) or t-Distributed Stochastic Neighbor Embedding (t-SNE) to reduce the vectors to 2 or 3 dimensions for visualization.

- Clustering:

- Implement an unsupervised clustering algorithm like K-means on the full-dimensionality vectors.

- Determine the optimal number of clusters (k) using metrics such as the elbow method or silhouette score.

- Analyze the composition of each cluster to interpret the thematic grouping of methodological terms (e.g., a cluster for statistical methods, another for research designs) [7].
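The averaging and clustering steps can be illustrated on toy data. In the sketch below, hand-written 2-dimensional vectors stand in for SciBERT sentence-level embeddings (which are much higher-dimensional), and a bare-bones k-means with a naive "first k points" initialization stands in for a library implementation such as scikit-learn's. All vectors and names are hypothetical.

```python
import math


def mean_vector(vectors):
    """Average a list of equal-length vectors component by component."""
    n = len(vectors)
    return [sum(component) / n for component in zip(*vectors)]


def k_means(points, k, iterations=20):
    """Minimal k-means; centroids start at the first k points (naive init)."""
    centroids = [list(p) for p in points[:k]]
    assignments = [0] * len(points)
    for _ in range(iterations):
        # Assignment step: nearest centroid by Euclidean distance.
        assignments = [
            min(range(k), key=lambda c: math.dist(p, centroids[c]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        for c in range(k):
            members = [p for p, a in zip(points, assignments) if a == c]
            if members:
                centroids[c] = mean_vector(members)
    return assignments


# Toy "contextual embeddings": one vector per sentence in which a term occurs.
occurrences = {
    "anova": [[1.0, 0.1], [0.8, 0.3]],
    "longitudinal": [[0.1, 1.0], [0.0, 0.8]],
    "regression": [[0.9, 0.0], [1.1, 0.2]],
    "cross-sectional": [[0.2, 0.9], [0.2, 1.1]],
}

# One unified vector per term, obtained by averaging its occurrences.
term_vectors = {term: mean_vector(vecs) for term, vecs in occurrences.items()}
terms = list(term_vectors)
assignments = k_means([term_vectors[t] for t in terms], k=2)
print(dict(zip(terms, assignments)))
```

In this toy setup the statistical-method terms and the research-design terms fall into separate clusters. With real embeddings, initialization and the choice of k matter considerably more, which is why the elbow method or silhouette score is recommended.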

Stage 4: Trend Analysis and Knowledge Extraction

Objective: To quantify the prevalence of methodological terms over time and synthesize findings into actionable knowledge.

Procedure:

- Temporal Analysis:

- Calculate the annual frequency and relative prevalence (percentage of abstracts containing at least one methodological term) for the entire glossary and for individual clusters.

- Fit regression models (linear or nonlinear) to identify statistically significant trends in terminology usage [7].

- Knowledge Synthesis:

- Interpret the trends in the context of the psychological research landscape (e.g., an increase in "pre-registration" may reflect a growing emphasis on research transparency).

- Relate the semantic clusters to established methodological paradigms in psychology.

- Formulate conclusions regarding the evolution of methodological awareness and reporting practices in the field.
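The temporal analysis can be sketched as two small functions: one computing annual relative prevalence, the other fitting an ordinary least-squares slope to it. The twelve records are fabricated purely for illustration, and the sketch estimates only the slope; testing its statistical significance would need a statistics package.

```python
def annual_prevalence(records):
    """records: iterable of (year, has_method_term) pairs -> {year: percent}."""
    totals, hits = {}, {}
    for year, has_term in records:
        totals[year] = totals.get(year, 0) + 1
        hits[year] = hits.get(year, 0) + (1 if has_term else 0)
    return {year: 100 * hits[year] / totals[year] for year in sorted(totals)}


def ols_slope(xs, ys):
    """Slope of the least-squares line through (xs, ys)."""
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    variance = sum((x - mean_x) ** 2 for x in xs)
    return covariance / variance


# Illustrative records: (publication year, abstract contains >= 1 method term).
records = [
    (2020, True), (2020, False), (2020, False), (2020, False),
    (2021, True), (2021, True), (2021, False), (2021, False),
    (2022, True), (2022, True), (2022, True), (2022, False),
]

prevalence = annual_prevalence(records)
slope = ols_slope(list(prevalence), list(prevalence.values()))
print(prevalence, slope)
```

The slope is expressed in percentage points per year; the same two functions can be applied per cluster by filtering the records to abstracts containing at least one term from that cluster.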

A 2025 study analyzed 85,452 psychology abstracts to investigate the prevalence and semantic structure of methodological terminology over three decades [7]. The following tables summarize the core quantitative findings and the experimental protocol of this study, which serves as a model implementation of the pipeline described above.

Table 1: Summary Results of Terminology Analysis in Psychology Abstracts

| Metric | Value | Interpretation |

|---|---|---|

| Total Abstracts Analyzed | 85,452 | Large-scale corpus enabling robust trend analysis [7]. |

| Abstracts with ≥1 Method Term | 78.16% | High penetration of methodological language in the field [7]. |

| Average Terms per Abstract | 1.8 | Indicates common reporting of multiple methodological aspects [7]. |

| Trend in Term Prevalence | Significant Increase | Suggests a shift towards greater methodological transparency over time [7]. |

Table 2: Detailed Protocol for the Referenced Case Study

| Pipeline Stage | Specific Implementation in Case Study |

|---|---|

| Data Collection | Collected 85,452 abstracts from psychology journals spanning 1995-2024 [7]. |

| Terminology Extraction | Used a curated glossary of 365 method-related keywords with exact and fuzzy string matching [7]. |

| Semantic Analysis | Terms were encoded using the SciBERT model, averaging embeddings across contextual occurrences [7]. |

| Clustering | Applied both standard and weighted k-means clustering, yielding 6 and 10 thematic clusters, respectively [7]. |

| Trend Analysis | Performed frequency and regression analysis to identify increasing trends in methodological term usage [7]. |

Visualization of the Text Mining Pipeline

The following diagram, generated using Graphviz, illustrates the logical workflow and data flow of the complete text mining pipeline, from initial data collection to final knowledge extraction.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Resources

| Item Name | Function/Benefit | Example/Notes |

|---|---|---|

| Curated Terminology Glossary | Serves as the gold-standard reference for targeted term extraction, ensuring analysis relevance and precision [7]. | A list of 365 method-related terms; can be tailored to specific sub-domains (e.g., clinical trials, psychometrics). |

| Contextual Language Model (SciBERT) | Generates context-aware embeddings for text, capturing semantic meaning more effectively than static models for scientific text [7]. | Pre-trained model; superior for encoding methodological terms found in scholarly abstracts [7]. |

| Clustering Algorithm (K-means) | An unsupervised machine learning method that groups semantically similar terms into thematic clusters without pre-defined labels [7]. | Requires selection of cluster number (k); use elbow method for guidance. |

| Visualization Palette | A set of colors for creating accessible and meaningful charts and diagrams, ensuring interpretability for all readers, including those with color vision deficiencies [27] [28]. | Use categorical palettes for clusters. Ensure sufficient contrast (≥3:1 ratio) against background [28]. |

| Bibliographic Database API | Programmable interface for large-scale, automated collection of scholarly metadata (titles, abstracts, authors, etc.) [7]. | PubMed E-utilities, IEEE Xplore, Springer Nature, or Web of Science APIs. |

Sentiment Analysis and Deep Learning for Identifying Psychological Phenomena

Application Notes

The integration of sentiment analysis and deep learning offers a powerful, scalable methodology for identifying psychological phenomena within large-scale textual data. This approach is particularly valuable for psychology journal terminology research, where it can transform unstructured text into quantifiable insights about internal states such as emotions, motives, and attitudes [29]. The shift from manual coding and traditional dictionary methods to advanced artificial intelligence (AI) models significantly enhances the scope, accuracy, and efficiency of psychological text analysis.

- Core Functionality: These technologies operate by processing human-generated text data using syntactic and semantic analysis. Deep learning models, particularly those based on transformer architectures, can decode not only explicit statements but also implicit emotional content, sarcasm, and contextual nuances that are essential for accurate psychological assessment [30].

- Key Applications in Psychological Research: In research settings, these tools can be deployed to analyze data from social media platforms, clinical notes, therapy transcripts, and scientific literature. Specific applications include tracking public mental health trends, identifying expressions of specific disorders in patient narratives, and mapping the prevalence of psychological concepts within academic corpora [29] [31] [30].

- Advantages over Traditional Methods: Manual coding is resource-intensive and difficult to scale, and simple dictionary methods often generate false positives. Deep learning models, by contrast, learn complex patterns from manually coded data and offer a closer approximation of human judgment across various psychological coding tasks [29].

Experimental Protocols

Protocol 1: A Hybrid Deep Learning Framework for Topic-Based Sentiment Analysis

This protocol outlines a methodology for automatically discovering topics and classifying sentiment within short texts, such as social media posts, which can be adapted for analyzing expressions of psychological phenomena [31].

1. Data Collection and Preprocessing

- Data Source: Collect a corpus of relevant short texts (e.g., from Twitter, forum posts, or journal abstracts) using API access or pre-existing datasets.

- Text Cleaning: Remove URLs, user mentions, and punctuation. Convert text to lowercase and correct common misspellings.

- Tokenization: Split text into individual words or tokens.

- Stopword Removal: Filter out common, low-information words.

- Lemmatization: Reduce words to their base or dictionary form (e.g., "running" to "run").
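The preprocessing steps above can be sketched in a few lines of Python. This is a minimal illustration, not the pipeline used in [31]: the stopword set and the lemma lookup table are tiny hypothetical stand-ins for the full resources a library such as spaCy or NLTK would provide, and misspelling correction is omitted.

```python
import re

# Illustrative stand-ins only; a real pipeline would use a complete stopword
# list and a proper lemmatizer instead of these small hand-made tables.
STOPWORDS = {"i", "am", "the", "a", "an", "and", "to", "of", "is", "so", "my", "about"}
LEMMA_LOOKUP = {"running": "run", "feeling": "feel", "felt": "feel", "worries": "worry"}


def preprocess(text):
    """Clean, tokenize, filter, and lemmatize one short text."""
    text = re.sub(r"https?://\S+", " ", text)   # remove URLs first, before punctuation is stripped
    text = re.sub(r"@\w+", " ", text)           # remove user mentions
    text = text.lower()                         # normalize case
    text = re.sub(r"[^a-z0-9\s]", " ", text)    # remove punctuation
    tokens = text.split()                       # whitespace tokenization
    tokens = [t for t in tokens if t not in STOPWORDS]
    return [LEMMA_LOOKUP.get(t, t) for t in tokens]


example = "@user I am feeling anxious about running late... https://example.org/post"
print(preprocess(example))
```

The order of the cleaning steps matters: URLs and mentions are removed before punctuation so that their fragments do not survive as tokens.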

2. Unsupervised Topic Identification using Latent Dirichlet Allocation (LDA)

- Objective: To automatically discover latent psychological themes or phenomena within the text corpus without pre-defined labels.

- Procedure:

- a. Represent the preprocessed text corpus as a document-term matrix.

- b. Apply the LDA algorithm to identify a pre-specified number of topics (k). The optimal k can be determined by evaluating model performance metrics like coherence score.

- c. For each discovered topic, extract the most probable words and automatically generate a human-readable label based on sentiment and aspect terms present in the topic [31].

- Output: A set of k topics, each defined by a label and a distribution of keywords.
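To make steps a to c concrete, the sketch below builds a document-term matrix and fits LDA with collapsed Gibbs sampling in plain Python. It is an illustrative toy under stated assumptions: the corpus, the hyperparameters (alpha, beta, iterations), and all function names are hypothetical, the inference method is one common choice rather than necessarily the one used in [31], and coherence-based selection of k and automatic labeling are not shown. In practice a library such as Gensim would normally be used.

```python
import random
from collections import Counter


def build_document_term_matrix(docs):
    """Step a: turn tokenized documents into a vocabulary and rows of term counts."""
    vocab = sorted({token for doc in docs for token in doc})
    rows = []
    for doc in docs:
        counts = Counter(doc)
        rows.append([counts[term] for term in vocab])
    return vocab, rows


def fit_lda(matrix, k, iterations=200, alpha=0.1, beta=0.01, seed=0):
    """Step b: collapsed Gibbs sampling for LDA; returns k topic-term distributions."""
    rng = random.Random(seed)
    n_terms = len(matrix[0])
    # Expand each count row back into a list of term ids (one entry per token).
    docs = [[w for w, c in enumerate(row) for _ in range(c)] for row in matrix]
    doc_topic = [[0] * k for _ in docs]
    topic_term = [[0] * n_terms for _ in range(k)]
    topic_total = [0] * k

    def update(d, w, z, delta):
        doc_topic[d][z] += delta
        topic_term[z][w] += delta
        topic_total[z] += delta

    # Random initial topic assignment for every token.
    assignments = []
    for d, doc in enumerate(docs):
        zs = [rng.randrange(k) for _ in doc]
        for w, z in zip(doc, zs):
            update(d, w, z, 1)
        assignments.append(zs)

    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                update(d, w, assignments[d][i], -1)  # remove the token's current assignment
                weights = [
                    (doc_topic[d][t] + alpha)
                    * (topic_term[t][w] + beta)
                    / (topic_total[t] + n_terms * beta)
                    for t in range(k)
                ]
                z = rng.choices(range(k), weights=weights)[0]
                assignments[d][i] = z
                update(d, w, z, 1)

    return [
        [(topic_term[t][w] + beta) / (topic_total[t] + n_terms * beta) for w in range(n_terms)]
        for t in range(k)
    ]


def top_terms(vocab, topic_term_probs, n=3):
    """Step c (partial): the n most probable terms per topic, as input for labeling."""
    return [
        [vocab[w] for w in sorted(range(len(vocab)), key=lambda w: -probs[w])[:n]]
        for probs in topic_term_probs
    ]


corpus = [
    ["sleep", "insomnia", "tired", "sleep"],
    ["insomnia", "night", "tired"],
    ["exam", "stress", "deadline", "stress"],
    ["deadline", "exam", "pressure"],
]
vocab, matrix = build_document_term_matrix(corpus)
topics = fit_lda(matrix, k=2)
for number, terms in enumerate(top_terms(vocab, topics)):
    print(f"Topic {number}: {terms}")
```

Each returned row is a probability distribution over the vocabulary, which corresponds to the "distribution of keywords" named in the output of this step.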

3. Supervised Sentiment Analysis using a Hybrid Deep Learning Model

- Objective: To classify the sentiment (e.g., Positive, Negative, Neutral) of each text document within the identified topics.

- Model Architecture: A hybrid model combining Bidirectional Long Short-Term Memory (BiLSTM) and Gated Recurrent Unit (GRU) layers.

- Procedure:

- a. Word Embedding: Represent each word in the text as a dense vector using pre-trained word embeddings (e.g., GloVe or Word2Vec).

- b. Feature Learning: Feed the sequence of word vectors into the hybrid BiLSTM-GRU model.

- c. Classification: The model's final layers (e.g., a Global Average Pooling layer followed by a softmax layer) output the sentiment polarity [31].

- d. Training: Train the model on a manually labeled subset of data, using categorical cross-entropy as the loss function.
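The classification and training steps (c and d) rest on three small computations: pooling the recurrent outputs, converting scores to probabilities with softmax, and measuring error with categorical cross-entropy. The sketch below works through them in plain Python. The hidden states, weights, and bias are made-up numbers standing in for the output of the BiLSTM-GRU layers, which are not reproduced here; a deep learning framework would be used for the actual model.

```python
import math


def global_average_pool(sequence):
    """Average a sequence of hidden-state vectors into one fixed-length vector."""
    length = len(sequence)
    width = len(sequence[0])
    return [sum(step[i] for step in sequence) / length for i in range(width)]


def dense(vector, weights, bias):
    """Linear layer: one output score (logit) per sentiment class."""
    return [sum(x * w for x, w in zip(vector, row)) + b for row, b in zip(weights, bias)]


def softmax(logits):
    """Convert logits to probabilities; subtracting the max keeps exp() stable."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_cross_entropy(probs, true_index):
    """Loss for one example: negative log-probability of the correct class."""
    return -math.log(probs[true_index])


# Hypothetical recurrent outputs for a two-token text (hidden size 2).
hidden_states = [[0.2, -0.1], [0.6, 0.3]]
# Hypothetical weights for three classes: Positive, Negative, Neutral.
weights = [[1.0, 0.5], [-1.0, 0.2], [0.1, -0.3]]
bias = [0.0, 0.1, 0.0]

pooled = global_average_pool(hidden_states)
probs = softmax(dense(pooled, weights, bias))
print("class probabilities:", [round(p, 3) for p in probs])
print("loss if the true class is Positive:", round(categorical_cross_entropy(probs, 0), 3))
```

During training, the optimizer adjusts the embedding, recurrent, and dense parameters to reduce this loss averaged over the labeled examples.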

Protocol 2: Fine-Tuning a Pre-trained Transformer for Psychological Phenomena Detection

This protocol leverages state-of-the-art transformer models, which have shown high performance in capturing psychological internal states from text [29].

1. Data Preparation and Manual Coding

- Sampling: Select a representative subset of the textual corpus for manual coding.

- Coding Scheme: Develop a precise coding scheme that defines the psychological constructs of interest (e.g., "achievement motivation," "cognitive dissonance," "emotional instability") [29] [32].

- Human Coding: Have multiple trained coders independently annotate the text samples based on the scheme. Calculate inter-coder reliability (e.g., Krippendorff's alpha) and confirm that it meets a pre-specified agreement threshold before proceeding [29].
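For categorical codes, Krippendorff's alpha can be computed as 1 minus the ratio of observed to expected disagreement, both derived from a coincidence matrix of label pairs. The sketch below implements the nominal-data variant only; the function name and the toy annotations are illustrative assumptions, and established statistical packages should be preferred for reported results.

```python
import math
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Alpha for nominal labels.

    units: one list per coded text, holding the label from each coder
    (None marks a missing code).
    """
    coincidences = Counter()
    for labels in units:
        values = [v for v in labels if v is not None]
        m = len(values)
        if m < 2:
            continue  # a text coded by fewer than two coders carries no agreement information
        # Every ordered pair of codes from different coders, weighted by 1 / (m - 1).
        for first, second in permutations(values, 2):
            coincidences[(first, second)] += 1 / (m - 1)

    totals = Counter()
    for (first, _), weight in coincidences.items():
        totals[first] += weight
    n = sum(totals.values())
    if n <= 1:
        return math.nan  # not enough pairable codes

    observed = sum(w for (a, b), w in coincidences.items() if a != b) / n
    expected = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n * (n - 1))
    if expected == 0:
        return math.nan  # undefined when only one category was ever used
    return 1 - observed / expected


# Two hypothetical coders labeling four texts for "achievement motivation".
codes = [
    ["present", "present"],
    ["present", "absent"],
    ["absent", "absent"],
    ["absent", "absent"],
]
print(round(krippendorff_alpha_nominal(codes), 3))
```

A value of 1 indicates perfect agreement and 0 indicates agreement no better than chance; the acceptable threshold should be fixed in the coding protocol before annotation begins.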

2. Model Selection and Fine-Tuning

- Model Choice: Select a transformer model pre-trained on biomedical or general domain text. Examples include BioBERT, ClinicalBERT, and BioMed-RoBERTa [13].

- Fine-Tuning: The pre-trained model is further trained (fine-tuned) on the manually coded dataset. This process adapts the model's general language knowledge to the specific task of identifying the target psychological phenomena.

3. Model Evaluation and Deployment

- Performance Assessment: Evaluate the fine-tuned model on a held-out test set of manually coded data. Use metrics such as accuracy, precision, recall, and F1-score.

- Inference: Deploy the best-performing model to automatically code the entire, larger textual corpus for the defined psychological phenomena.
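The evaluation metrics named in the performance assessment step can be computed directly from gold-standard and predicted labels. The sketch below is a minimal illustration for a single target category; the function name and the toy labels are assumptions made for the example.

```python
def evaluate(gold, predicted, positive_label):
    """Accuracy, precision, recall, and F1 for one target category."""
    pairs = list(zip(gold, predicted))
    true_pos = sum(g == positive_label and p == positive_label for g, p in pairs)
    false_pos = sum(g != positive_label and p == positive_label for g, p in pairs)
    false_neg = sum(g == positive_label and p != positive_label for g, p in pairs)

    accuracy = sum(g == p for g, p in pairs) / len(pairs)
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical held-out test set: human codes versus model output.
gold = ["anxiety", "anxiety", "none", "none", "anxiety", "none"]
predicted = ["anxiety", "none", "none", "anxiety", "anxiety", "none"]
for name, value in evaluate(gold, predicted, positive_label="anxiety").items():
    print(f"{name}: {value:.3f}")
```

For rare psychological categories, precision, recall, and F1 are usually more informative than accuracy, because a model that never predicts the category can still score high accuracy.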

Data Presentation

Performance Metrics of Text Mining Methods for Identifying Internal States

The following table summarizes findings from a systematic evaluation of various text mining methods against the gold standard of manual human coding [29].

| Method Category | Example Methods | Key Characteristics | Reported Performance |

|---|---|---|---|

| Dictionary Methods | LIWC, Custom-Made Dictionaries | Uses pre-defined word lists; prone to false positives; performs well on infrequent categories. | Lower performance compared to machine learning; generates more false positives [29]. |

| Supervised Machine Learning | Fine-tuned Large Language Models (e.g., BERT) | Learns patterns from manually coded data; requires a labeled training set. | Highest performance across various coding tasks for internal states [29]. |

| Zero-Shot Classification | Instructing GPT-4 with text prompts | Does not require task-specific training data; uses instructions to perform coding. | Promising, but falls short of the performance of models trained on manually analyzed data [29]. |
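The false-positive problem of dictionary methods is easy to reproduce. The sketch below scores texts by the share of tokens that match a word list; the word list is a hypothetical mini-dictionary written for this example and is not taken from LIWC or any published lexicon.

```python
import re

# Hypothetical mini-dictionary for illustration only.
POSITIVE_EMOTION_TERMS = {"happy", "hopeful", "calm", "relieved"}


def dictionary_score(text, terms):
    """Share of tokens that appear in the dictionary (0.0 for empty text)."""
    tokens = re.findall(r"[a-z']+", text.lower())
    hits = [token for token in tokens if token in terms]
    return len(hits) / len(tokens) if tokens else 0.0


genuine = "I feel calm and hopeful today"
negated = "I have not felt happy or calm in months"

print(round(dictionary_score(genuine, POSITIVE_EMOTION_TERMS), 3))
print(round(dictionary_score(negated, POSITIVE_EMOTION_TERMS), 3))
```

Both texts receive a non-zero positive-emotion score, although the second one reports the absence of positive emotion. Word counting ignores negation and context, which is one reason models trained on manually coded data tend to outperform dictionaries.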

Key Deep Learning Models for Biomedical and Psychological Text Analysis

This inventory details state-of-the-art transformer models that can be leveraged for psychological text analysis, particularly in clinical or scientific contexts [13].

| Model Name | Full Form | Pre-training Corpus | Potential Application in Psychology Research |

|---|---|---|---|

| BioBERT | Bidirectional Encoder Representations from Transformers for Biomedical Text Mining | PubMed, PMC | Analyzing psychology journal articles and scientific literature [13]. |

| ClinicalBERT | Clinical Bidirectional Encoder Representations from Transformers | MIMIC-III | Identifying psychological phenomena in electronic health records and clinical notes [13]. |

| BioMed-RoBERTa | BioMedical Robustly optimized BERT | Semantic Scholar | Large-scale analysis of psychological concepts in academic text [13]. |

Workflow Visualization

Hybrid Deep Learning Sentiment Analysis

Psychological Phenomena Detection Protocol

The Scientist's Toolkit

Research Reagent Solutions for Psychological Text Mining

This table details key software libraries and platforms essential for implementing the described experimental protocols.

| Tool Name | Function | Key Features for Psychology Research |

|---|---|---|

| Hugging Face [13] | Provides access to thousands of pre-trained transformer models. | Easy access to state-of-the-art models like BioBERT and ClinicalBERT for fine-tuning on psychological tasks. |

| spaCy [13] | Industrial-strength Natural Language Processing (NLP) library. | Provides robust pipelines for text preprocessing, including tokenization, lemmatization, and part-of-speech tagging. |

| NLTK [13] | A leading platform for building Python programs to work with human language data. | Useful for educational purposes and implementing classic NLP techniques and dictionary methods. |

| Gensim [13] | A library for topic modeling and document similarity. | Enables the implementation of unsupervised topic modeling algorithms like LDA to discover latent psychological themes. |

| Spark NLP [13] | An open-source text processing library for advanced NLP. | Offers scalable, production-grade NLP for analyzing very large datasets, such as massive social media corpora. |

Developing Domain-Specific Ontologies for Drug Abuse and Mental Health Terminology

The exponential growth of user-generated content on web and social media platforms presents a novel opportunity for conducting timely and insightful epidemiological surveillance of substance use behaviors and mental health trends [33]. Harnessing these vast, unstructured textual data sources requires sophisticated computational approaches that can accurately identify and interpret complex domain-specific terminology. Domain-specific ontologies provide the necessary structured framework, formally defining key concepts, their properties, and the relationships between them, thereby enabling powerful text mining and natural language processing (NLP) applications [33]. This document outlines detailed application notes and protocols for developing and utilizing such ontologies, with a specific focus on the drug abuse and mental health domains, framed within the context of psychological research and terminology.

Domain-Specific Ontology Development Protocol

The development of a robust domain ontology is a systematic process. The following protocol, adapted from the established Ontology Development 101 methodology, provides a step-by-step guide [33].

Phase 1: Planning and Scoping

- Define Domain and Scope: Clearly articulate the ontology's boundaries. For drug abuse, this may encompass substances, use behaviors, disorders, treatments, and associated psychological concepts. Scope is typically defined using competency questions the ontology should answer (e.g., "What are the slang terms for opioids?").

- Reuse Existing Ontologies: Identify and integrate concepts from relevant pre-existing ontologies and terminologies to ensure interoperability and reduce redundant effort.

Phase 2: Implementation and Population

- Enumerate Terms: Compile a comprehensive list of key terms from diverse sources, including clinical literature (e.g., DSM-5), authoritative glossaries [35] [36], and web-based user-generated content to capture colloquial language.

- Define Classes and Hierarchy: Organize terms into a hierarchical class structure (e.g., Opioid is a subclass of Drug). Establish object properties to define relationships between classes (e.g., isTreatmentFor).

- Create Instances: Populate the ontology with specific instances of classes (e.g., "Buprenorphine" is an instance of the class MedicationAssistedTreatment).
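The class hierarchy, object properties, and instances described in this phase can be pictured as a small data structure. The sketch below is a deliberately simplified, hypothetical miniature: it reuses the class and property names from the examples above, while the entries Treatment, Heroin, and OpioidUseDisorder are added purely for illustration. A real ontology would be authored in OWL with a dedicated editor or library.

```python
# Hypothetical miniature ontology for illustration only.
SUBCLASS_OF = {
    "Opioid": "Drug",
    "MedicationAssistedTreatment": "Treatment",
}
INSTANCE_OF = {
    "Buprenorphine": "MedicationAssistedTreatment",
    "Heroin": "Opioid",
}
OBJECT_PROPERTIES = [
    # (subject class, property, object class)
    ("MedicationAssistedTreatment", "isTreatmentFor", "OpioidUseDisorder"),
]


def ancestors(cls):
    """All superclasses of a class, nearest first."""
    chain = []
    while cls in SUBCLASS_OF:
        cls = SUBCLASS_OF[cls]
        chain.append(cls)
    return chain


def is_a(instance, cls):
    """True if the instance belongs to cls directly or through the hierarchy."""
    direct = INSTANCE_OF.get(instance)
    return direct is not None and (direct == cls or cls in ancestors(direct))


print(is_a("Heroin", "Drug"))             # membership inherited through Opioid
print(is_a("Buprenorphine", "Treatment"))
print(is_a("Buprenorphine", "Drug"))      # not asserted in this toy hierarchy
```

Because membership is inherited through the hierarchy, a query for a broad class also retrieves instances that were only asserted under one of its subclasses.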

Phase 3: Evaluation and Application

- Quality Evaluation: Assess the ontology using tools and best practices from the semantic web community. This includes checking for logical consistency and coverage of domain concepts.

- Integration with Machine Learning: The ontology can be integrated with NLP and ML pipelines to dramatically reduce false alarm rates by adding external knowledge to the statistical learning process [33]. For example, ontological concepts can be used as features in a classifier or to validate the output of a statistical model.
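One simple way to use an ontology to validate the output of a statistical model is to keep a positive prediction only when the text also mentions a term that the ontology maps to the target class. The sketch below illustrates that idea under stated assumptions: the lexicon, the classifier scores, the threshold, and the function name are all hypothetical and are not drawn from the DAO or from [33].

```python
# Hypothetical lexicon mapping surface forms (including slang) to ontology classes.
ONTOLOGY_LEXICON = {
    "oxycodone": "Opioid",
    "oxy": "Opioid",
    "heroin": "Opioid",
    "naloxone": "Antagonist",
}


def validate_predictions(predictions, lexicon, target_class, threshold=0.5):
    """Keep texts the classifier flags AND that mention a term of the target class."""
    kept = []
    for text, score in predictions:
        tokens = set(text.lower().split())
        mentions_class = any(lexicon.get(token) == target_class for token in tokens)
        if score >= threshold and mentions_class:
            kept.append(text)
    return kept


# Hypothetical classifier scores for "post describes opioid use".
scored_posts = [
    ("took oxy again last night", 0.91),
    ("my coke zero went flat", 0.77),   # high score but no opioid term: a false alarm
    ("naloxone saved him", 0.40),       # below the decision threshold
]
print(validate_predictions(scored_posts, ONTOLOGY_LEXICON, target_class="Opioid"))
```

The same lookup can instead feed the classifier as an additional feature (for example, a count of matched terms per ontology class), which lets the model weigh ontological evidence rather than apply it as a hard filter.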

Table 1: Quantitative Profile of the Drug Abuse Ontology (DAO) as of 2022 [33]

| Component | Count | Description |

|---|---|---|

| Classes | 315 | Broad categories (e.g., Drug, Symptom, Treatment). |

| Relationships | 31 | Connections between classes (e.g., hasSideEffect). |

| Instances | 814 | Specific examples of classes (e.g., "naloxone" is an instance of Antagonist). |

The following workflow diagram illustrates the core ontology development process.

Application Note: Integrating Ontologies with Text Mining Workflows

Integrating a domain-specific ontology like the DAO into a text mining pipeline for psychology journal research significantly enhances the ability to extract meaningful information from unstructured text. This application note details two primary methods for this integration.

Semantic Annotation and Knowledge Graph Construction

This process involves identifying entities in text and linking them to concepts in the ontology.

- Protocol: