The Evolving Language of Cognition: From Theoretical Foundations to AI-Driven Applications in Psychological Science

Abstract

This article charts the evolution of cognitive language research in psychology publications, tracing its path from foundational theories to its current state as a multidisciplinary, data-driven science. Aimed at researchers, scientists, and drug development professionals, it explores the paradigm shift from idealized linguistic models to a focus on diversity and neural mechanisms. The review critically examines the rise of advanced methodologies such as neuroimaging and AI, addresses persistent cognitive and methodological roadblocks, and evaluates new validation frameworks. By synthesizing findings across these four themes (theoretical foundations, methodologies, roadblocks, and validation), the article maps the field's trajectory and its implications for developing cognitive assessments, therapeutics, and computational tools in biomedical research.

From Idealization to Real-World Cognition: The Theoretical Shift in Language Science

The Traditional Focus on Language as an Idealized System

This whitepaper examines the traditional paradigm in linguistics and cognitive science that treats language as an idealized, homogeneous system. This approach, championed by foundational figures like Saussure and Chomsky, deliberately isolated language's core structure from the complexities of its real-world use. Framed within the broader thesis of how cognitive language research has evolved, this paper argues that while this methodology yielded significant initial progress, it has also resulted in biologically and cognitively implausible models. The field is now undergoing a paradigm shift, moving toward a neurocognitive approach that embraces linguistic diversity—including typological variations, sociolinguistic phenomena, and diverse developmental paths—as essential for a complete understanding of the language-ready brain [1]. This evolution mirrors a broader trend in psychological research toward incorporating quantitative data and robust experimental protocols to validate and refine theoretical models.

For decades, the central goal of linguistics and the cognitive science of language has been to unearth the fundamental, universal properties that underlie all human languages. To achieve this, theorists have consistently employed a strategy of idealization, abstracting away from the immense diversity and variability inherent in everyday language use. This approach construes languages as invariant systems emerging from an ocean of regional, social, and individual variations [1].

The intellectual heritage of this tradition is profound. It can be traced back to Ferdinand de Saussure, who famously distinguished between langue (the abstract, systematic language structure of a community) and parole (the individual, variable acts of speech) [1]. This distinction positioned linguistics as the scientific study of langue. Later, Noam Chomsky refined this further by arguing that linguistic theory is primarily concerned with ideal speaker-listeners in perfectly homogeneous speech communities, thereby filtering out the "noise" of performance errors and sociolinguistic variation [1].

This whitepaper will deconstruct this traditional focus, analyzing its theoretical underpinnings, the specific dimensions of diversity it overlooks, and the consequent limitations for a true science of the mind and brain. It will then outline the modern, data-driven shift toward a science that views language diversity not as a problem to be solved, but as a core source of insight.

Theoretical Foundations and the Drive for Universals

The traditional approach is built on the premise that the human brain is equipped with an innate, domain-specific language faculty (sometimes termed "universal grammar"). The vast and rapid acquisition of language by children, despite highly variable input, is presented as the primary evidence for this innate capacity. The object of study, therefore, becomes this internal, biological capacity rather than its external, messy manifestations.

A key methodological practice has been to rely on a narrow empirical base. As noted in recent literature, "most of this research has relied on a small set of languages, most notably, widely spoken Indo-European languages, like English or Spanish," while largely ignoring "non-WEIRD (Western, Educated, Industrialized, Rich, Democratic) societies/subjects" [1]. This was done under the assumption that the core computational system of language would reveal itself most clearly in standardized, formal varieties.

However, this level of abstraction creates a significant problem for interdisciplinary research, particularly for neuroscience. As critics have pointed out, the radical idealization of language phenomena can "produce biologically implausible objects/processes" [1]. There exists a fundamental explanatory gap between the abstract elements of linguistic theory (like rules and representations) and the identifiable biological units and processes discovered by neuroscience [1]. The challenge is to bridge this gap by developing cognitive and neural models that can account for the full spectrum of linguistic behavior, not just its idealized core.

The Overlooked Dimensions of Linguistic Diversity

The traditional focus on an idealized system has led to the systematic neglect of several key dimensions of linguistic variation. A comprehensive neurocognitive approach to language must account for at least the following four domains of diversity.

- Functional Diversity (Multifunctionality of Language): Language is used for a wide range of functions beyond the mere transmission of factual information. It is used for social bonding, expressing identity, structuring our own thoughts, and more. The cognitive resources and neural substrates recruited can differ significantly depending on whether one is engaging in a casual conversation full of implicatures versus delivering a formal lecture [1].

- Sociolinguistic Diversity: Every language exists in a multitude of social and geographical varieties (dialects, sociolects, registers). A cognitively realistic model must explain how speakers seamlessly navigate between these varieties—for instance, how a bilingual person selects the appropriate language or how a speaker switches from a formal to an informal register [1].

- Typological Diversity (Cross-Linguistic Variation): The world's languages exhibit stunning structural diversity at all levels: phonological, morphological, syntactic, and lexical. Relying on a small subset of typologically similar languages risks mistaking the specific properties of those languages for universal features of the language faculty. A robust cognitive science of language must be tested against and account for this full range of structural possibilities [1].

- Individual and Developmental Diversity: Language processing differs from one person to another, influenced by unique developmental trajectories, neurodiversity, and individual cognitive differences [1]. For example, individuals with cognitive conditions like autism may bear a "different human linguisticality, but still a functional one" [1]. Furthermore, the brain regions involved in language, while showing general patterns, exhibit notable individual differences in their exact extension and location [1].

Table 1: Key Dimensions of Linguistic Diversity Overlooked by the Idealized Model

| Dimension of Diversity | Description | Example | Cognitive Implication |
|---|---|---|---|
| Functional Diversity | The different purposes for which language is used. | Social bonding vs. conveying information. | Recruitment of different cognitive resources and neural networks depending on the communicative goal. |
| Sociolinguistic Diversity | The existence of different dialects, sociolects, and registers within a language. | Switching between a formal register at work and a casual register with friends. | Requires sophisticated cognitive control and context-management systems. |
| Typological Diversity | The structural differences between the world's languages. | Different word orders, case systems, or sound inventories. | Suggests the language faculty is a highly malleable cognitive device rather than a rigid, pre-specified template. |
| Individual/Developmental Diversity | Differences in language acquisition and processing across individuals and neurotypes. | Unique developmental paths in monolingual and bilingual children; language in neurodiverse populations. | Indicates that there is no single "standard" neural implementation of the language faculty. |

The Empirical Shift: Quantitative Data and Experimental Methods

The evolution beyond the idealized model is being driven by methodological advances that prioritize quantitative data collection and rigorous, reproducible experimental protocols. This shift aligns with the broader "quantitative turn" in psychological and cognitive research.

Table 2: Types of Quantitative and Qualitative Data in Language Research

| Data Type | Description | Examples in Language Research |
|---|---|---|
| Quantitative Data | Data that can be counted or measured numerically [2]. | Reaction times in psycholinguistic tasks, accuracy rates, neuroimaging data (fMRI activation levels, ERP amplitudes), corpus statistics (word frequency). |
| Discrete Data | Quantitative data with fixed, separate values [2]. | Number of words recalled in a memory test, number of grammatical errors, bounce rate in a web-based experiment. |
| Continuous Data | Quantitative data that can take any value within a range [2]. | Voice pitch (Hz), reading speed (words per minute), duration of a gaze fixation. |
| Qualitative Data | Non-numerical, descriptive data [2]. | Transcripts of conversational interactions, introspective reports, case studies of language disorders. |

Modern Experimental Protocols and Tools

Modern research into linguistic diversity leverages powerful tools and platforms that facilitate the collection of high-quality, reproducible data from diverse populations.

- Online Experiment Platforms (e.g., Gorilla): Tools like Gorilla Experiment Builder have changed data collection by allowing researchers to design and deploy behavioral and cognitive experiments online without extensive coding [3]. This enables the rapid recruitment of large, diverse samples, moving beyond the traditional reliance on university student populations. One researcher reports launching a study, going to lunch, and coming back to 400 participant responses [3].

- Controlled Experiments and A/B Testing: These are cornerstone methods for establishing causality. In language research, this could involve manipulating a variable (e.g., sentence complexity, speaker accent) and measuring its effect on a dependent variable (e.g., comprehension accuracy, reaction time) [2].

- Interdisciplinary Inferences: Fields like comparative psychology, cognitive archaeology, and experimental semiotics use experiments on extant species (including humans) to make inferences about the cognitive and linguistic abilities of extinct hominins, thus contributing to the broader thesis of language evolution [4].
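
The controlled-experiment logic described in this list (manipulate one variable, measure its effect on a dependent variable) can be illustrated with a minimal sketch. The reaction times below are invented for illustration, the function name `permutation_test` is hypothetical, and a permutation test is only one of several reasonable analyses; none of this is prescribed by the sources cited here.

```python
import random
from statistics import mean


def permutation_test(group_a, group_b, n_permutations=5000, seed=0):
    """Two-sided permutation test on the difference in group means."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    observed = mean(group_a) - mean(group_b)
    pooled = list(group_a) + list(group_b)
    n_a = len(group_a)
    extreme = 0
    for _ in range(n_permutations):
        # Randomly reassign condition labels and recompute the difference.
        rng.shuffle(pooled)
        diff = mean(pooled[:n_a]) - mean(pooled[n_a:])
        if abs(diff) >= abs(observed):
            extreme += 1
    # Add-one correction keeps the estimated p-value strictly above zero.
    p_value = (extreme + 1) / (n_permutations + 1)
    return observed, p_value


# Invented reaction times in milliseconds (not real data).
simple_rt = [512, 498, 530, 505, 521, 490, 515, 508]    # simple sentences
complex_rt = [598, 610, 575, 620, 590, 605, 585, 612]   # complex sentences

observed_diff, p = permutation_test(complex_rt, simple_rt)
print(f"Mean difference: {observed_diff:.1f} ms, p = {p:.4f}")
```

With these invented values the mean difference is 89.5 ms. In a real study the same comparison would usually be made with a mixed-effects model that accounts for participants and items, which this sketch deliberately omits.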

The Scientist's Toolkit: Essential Research Reagents and Materials

For researchers embarking on experimental studies of language diversity, the following tools and "reagents" are essential.

Table 3: Key Research Reagent Solutions for Language Cognition Studies

| Item | Function/Brief Explanation |
|---|---|
| Online Experiment Platform (e.g., Gorilla) | A platform for building, deploying, and managing online behavioral experiments. It allows for the collection of validated reaction time and accuracy data from a global participant pool [3]. |
| Linguistic Stimulus Sets | Carefully controlled sets of words, sentences, or texts that vary on specific parameters (e.g., frequency, complexity, semantic content). These are the fundamental inputs for any language processing experiment. |
| Eye-Tracking System | Apparatus for measuring eye movements and gaze fixation. Used to study real-time language processing in reading, scene viewing, and spoken language comprehension. |
| Neuroimaging Resources (fMRI, EEG/MEG) | Functional Magnetic Resonance Imaging (fMRI) locates neural activity, while Electroencephalography (EEG) and Magnetoencephalography (MEG) track its millisecond-level timing. |
| Data Analysis Software (R, Python, SPSS) | Software environments for statistical analysis and visualization of quantitative data. Crucial for analyzing behavioral responses, neural data, and corpus statistics [3]. |
| Diagram-as-Code Tools (e.g., Mermaid, Eraser) | Tools that use a text-based syntax to generate consistent, version-controlled diagrams for experimental workflows and theoretical models, aiding in reproducibility and clear communication [5]. |

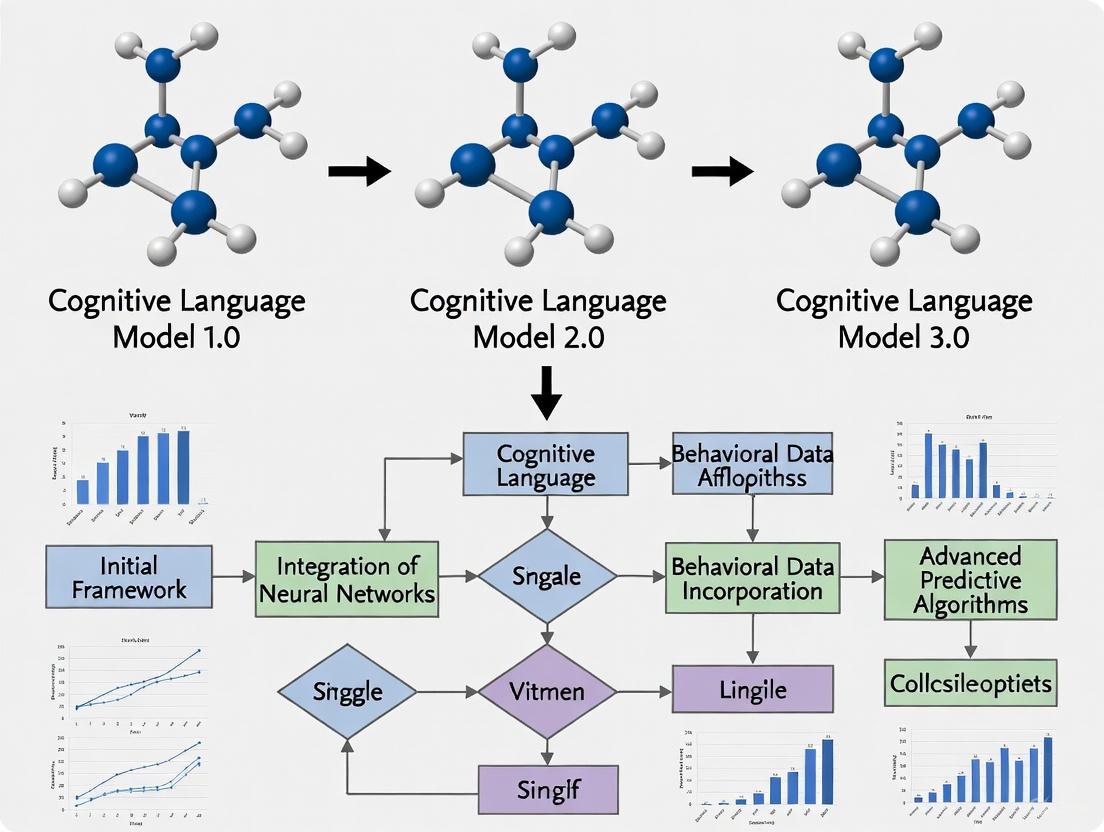

Visualizing the Paradigm Shift in Language Research

The evolution from an idealized to a diversity-focused approach can be conceptualized as a shift in research paradigms, as illustrated below.

Diagram 1: The conceptual shift from an idealized system paradigm to a diversity-focused paradigm in language research.

The traditional focus on language as an idealized system served an important purpose in the early development of linguistics and cognitive science, providing a clear, if simplified, object of study. However, this paradigm has reached its limits. A new consensus is emerging that the path to a truly explanatory science of language lies in directly confronting and explaining its pervasive diversity. This involves integrating insights from typology, sociolinguistics, developmental psychology, and neuroscience, and leveraging modern quantitative methods and experimental tools. By making linguistic diversity a central explanandum rather than a nuisance variable, the cognitive science of language is evolving to build a more comprehensive, biologically grounded, and accurate understanding of humanity's most distinctive trait.

Language, the hallmark of the human condition, is fundamentally characterized by diversity. Contemporary cognitive science and neuroscience have increasingly recognized that understanding this linguistic variation is not merely an adjunct to research but essential for constructing biologically plausible models of language processing. This whitepaper argues that a comprehensive neurocognitive approach to language must account for four key dimensions of diversity: functional multifunctionality, sociolinguistic variation, typological differences between languages, and diverse developmental paths. By integrating recent experimental findings and theoretical advances, we demonstrate how embracing linguistic diversity provides critical insights into the core properties of human language, its cognitive architecture, and its neurological foundations, ultimately leading to more accurate models of how the brain processes language in its natural, varied contexts.

The cognitive science of language has undergone a significant evolution in perspective. Traditional approaches, influenced by Saussure's focus on langue over parole and Chomsky's idealization of homogeneous speech communities, often treated linguistic variation as noise to be minimized [1]. This pursuit of universal properties, while fruitful, created biologically implausible models that failed to account for how language is actually processed by human brains in diverse real-world contexts [1]. The emerging paradigm recognizes that variation permeates every level of language, from phonological processing to syntactic structures, and that this diversity holds the key to understanding the true nature of human linguisticality.

This whitepaper situates this theoretical shift within broader developments in psychological research, where individual differences and population diversity are increasingly recognized as crucial explanatory factors rather than confounds. We explore how this evolution in perspective enables more comprehensive models of language processing, informs our understanding of language evolution and development, and provides novel pathways for clinical applications in neurological rehabilitation and cognitive enhancement.

Theoretical Foundations: Four Dimensions of Linguistic Diversity

A robust cognitive model of language must account for four interconnected dimensions of linguistic variation that reflect the true extent of diversity in human language capacities.

The Multifunctionality of Language

Language serves multiple functions beyond simple information transfer, including social bonding, conceptual structuring, and internal thought processes. Each function potentially recruits distinct cognitive resources and neurological substrates [1]. For instance, casual conversations relying heavily on implicatures and shared knowledge engage different processing mechanisms than formal exchanges where explicit information dominates [1]. This functional diversity necessitates cognitive models that can account for how the same linguistic system adapts to different communicative goals and contexts.

Sociolinguistic Diversity

Language varies systematically across social groups, geographical regions, and contextual settings. Crucially, this variation is not merely superficial but impacts core cognitive processes. Bilingual speakers and those who navigate multiple dialects demonstrate remarkable cognitive flexibility in selecting appropriate linguistic varieties based on context [1]. This management of sociolinguistic diversity requires cognitive control mechanisms that interface with the core language faculty, suggesting that the boundaries between "language" and "other" cognitive systems may be more permeable than traditionally assumed.

Typological Differences Between Languages

The world's approximately 7,000 languages exhibit remarkable structural diversity at all levels: phonological, morphological, syntactic, and lexical [1]. Despite this diversity, the human cognitive system acquires and processes any language with apparent ease. This tension between structural diversity and processing uniformity presents both a challenge and opportunity for cognitive models. Examining how the brain processes typologically distinct languages (e.g., isolating versus polysynthetic languages) provides a natural experiment for determining which aspects of language processing are universal versus language-specific.

Diverse Developmental Paths

Language development follows different trajectories across individuals, influenced by genetic predispositions, environmental factors, and neurocognitive differences. Even within neurotypical populations, psycholinguistic responses to identical linguistic stimuli show significant individual variation [1]. This diversity is even more pronounced in neurodiverse populations, where alternative developmental paths can result in functional but distinct linguistic abilities [1]. Understanding these varied developmental trajectories is essential for constructing complete models of the language faculty.

Table 1: Key Dimensions of Linguistic Variation and Their Cognitive Implications

| Dimension of Diversity | Key Aspects | Cognitive Implications |
|---|---|---|
| Functional Multifunctionality | Information transfer, social functions, conceptual structuring | Recruitment of different cognitive resources based on communicative function |
| Sociolinguistic Diversity | Dialects, sociolects, registers, multilingualism | Interface between language faculty and cognitive control systems |
| Typological Differences | Phonological, morphological, syntactic variation across languages | Identification of universal versus language-specific processing mechanisms |
| Diverse Developmental Paths | Neurotypical variation, neurodiversity, bilingual acquisition | Malleability of language faculty and multiple routes to linguistic competence |

Methodological Approaches: Experimental Paradigms for Studying Linguistic Diversity

Investigating the cognitive correlates of linguistic diversity requires innovative methodological approaches that move beyond traditional paradigms focused on homogeneous groups and standardized stimuli.

Cross-Linguistic Experimental Designs

Cross-linguistic comparisons provide powerful natural experiments for testing the universality of cognitive processes. These studies require carefully designed stimuli that are comparable across languages while respecting their structural differences. Key methodological considerations include:

- Stimulus Development: Creating matched stimuli that account for phonological, morphological, and syntactic differences while controlling for frequency, complexity, and psycholinguistic variables.

- Participant Selection: Ensuring representative sampling across language groups, including speakers from diverse educational and socioeconomic backgrounds.

- Task Design: Developing tasks that are culturally and linguistically appropriate for all participant groups, avoiding biases toward particular linguistic structures.
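
The stimulus-development step above can be sketched as a simple matching procedure. This is a minimal illustration under stated assumptions: the `Item` class, the `distance` weighting, the `greedy_match` helper, and all frequency and length values are hypothetical and invented for the example. Real studies would draw these values from corpus norms and would typically match on more variables.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    word: str
    log_freq: float  # invented log-frequency value, for illustration only
    length: int      # invented length in segments, for illustration only


def distance(a, b, length_weight=0.5):
    """Weighted mismatch between two items on frequency and length."""
    return abs(a.log_freq - b.log_freq) + length_weight * abs(a.length - b.length)


def greedy_match(list_a, list_b, max_distance=1.0):
    """Pair each item in list_a with its closest unused item in list_b.

    Items with no candidate within max_distance are left unmatched.
    """
    remaining = list(list_b)
    pairs = []
    for a in list_a:
        if not remaining:
            break
        best = min(remaining, key=lambda b: distance(a, b))
        if distance(a, best) <= max_distance:
            pairs.append((a, best))
            remaining.remove(best)
    return pairs


english = [Item("dog", 2.1, 3), Item("table", 1.8, 4), Item("window", 1.5, 5)]
spanish = [Item("perro", 2.0, 4), Item("mesa", 1.9, 4),
           Item("ventana", 1.4, 7), Item("silla", 0.8, 4)]

pairs = greedy_match(english, spanish)
matched_words = [(a.word, b.word) for a, b in pairs]
print(matched_words)
```

Greedy matching is order-dependent and not guaranteed to find the best overall pairing; it is used here only because it is short. An item such as "window" that has no sufficiently close counterpart is dropped rather than paired badly, which mirrors the trade-off between stimulus-set size and match quality.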

Neuroimaging Approaches to Variation

Modern neuroimaging techniques have revealed that the exact extension, location, and boundaries of language-related regions of interest (RoIs) vary across individuals [1]. This neurological variation correlates with differences in language experience, including multilingualism and exposure to different dialects. Methodological best practices include:

- Individualized Localizers: Using participant-specific functional localizers rather than relying solely on standardized coordinates.

- Cross-Population Validation: Testing neural models across diverse populations, including non-WEIRD (Western, Educated, Industrialized, Rich, Democratic) societies.

- Naturalistic Paradigms: Employing ecologically valid stimuli, including conversational speech and narrative comprehension, rather than focusing exclusively on decontextualized linguistic items.
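
The idea behind individualized localizers can be sketched as follows. One common strategy, assumed here rather than taken from the cited sources, is to select each participant's most responsive voxels within a shared anatomical or group-level parcel. The function name `top_fraction_voxels`, the flat voxel lists, and all contrast values are hypothetical and invented for illustration.

```python
def top_fraction_voxels(contrast_values, parcel_mask, fraction=0.10):
    """Return sorted indices of one participant's most responsive voxels in a parcel.

    contrast_values: per-voxel localizer contrast (e.g., sentences minus a control).
    parcel_mask: per-voxel booleans marking the shared search region.
    """
    in_parcel = [i for i, inside in enumerate(parcel_mask) if inside]
    n_keep = max(1, round(len(in_parcel) * fraction))
    # Rank voxels inside the parcel by this participant's own contrast values.
    ranked = sorted(in_parcel, key=lambda i: contrast_values[i], reverse=True)
    return sorted(ranked[:n_keep])


# Ten voxels; the shared parcel covers voxels 2 through 9.
parcel = [False, False] + [True] * 8

# Invented contrast values for two participants (not real data).
participant_1 = [0.1, 0.2, 3.1, 2.9, 0.5, 0.4, 0.3, 0.2, 0.1, 0.0]
participant_2 = [0.0, 0.1, 0.2, 0.4, 0.3, 0.6, 2.7, 3.3, 0.5, 0.2]

roi_1 = top_fraction_voxels(participant_1, parcel, fraction=0.25)
roi_2 = top_fraction_voxels(participant_2, parcel, fraction=0.25)
print(roi_1, roi_2)
```

The two participants end up with different voxels inside the same parcel, which is the point of the approach: a single standardized coordinate would capture the peak response of neither.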

Longitudinal Developmental Studies

Tracking language development across diverse populations provides insights into how linguistic variation emerges and stabilizes in cognitive systems. These studies examine:

- Bilingual Acquisition: How children exposed to multiple languages develop the cognitive mechanisms for managing linguistic diversity.

- Atypical Development: How neurodiverse populations (e.g., individuals with autism spectrum condition) develop alternative but functional linguistic systems.

- Lifespan Changes: How language processing adapts to cognitive changes across the lifespan, from childhood through aging.

Table 2: Essential Methodological Considerations for Studying Linguistic Diversity

| Methodological Approach | Key Techniques | Applications to Diversity Research |
|---|---|---|
| Cross-Linguistic Comparison | Matched stimulus design, structural priming, eye-tracking | Identifying universal versus language-specific processing mechanisms |
| Neuroimaging of Variation | fMRI with individual localizers, ERP, fNIRS, oscillation coupling | Mapping individual differences in neural organization for language |
| Developmental Tracking | Longitudinal design, microgenetic analysis, parental reporting | Understanding alternative pathways to linguistic competence |
| Computational Modeling | Connectionist models, Bayesian inference, agent-based simulation | Testing how diverse inputs shape language acquisition and processing |

Cognitive and Neurological Evidence: How Diversity Shapes Language Processing

Neural Correlates of Linguistic Diversity

Neuroimaging evidence increasingly demonstrates that linguistic diversity is reflected in brain organization and function. Rather than displaying a fixed neural architecture, the language network shows remarkable adaptability:

- Individual Variation: Core language regions show considerable individual differences in exact location, extent, and functional connectivity [1]. This variation is not random but correlates with individuals' linguistic experiences and cognitive styles.

- Experience-Dependent Plasticity: Bilinguals and multilinguals show structural and functional differences in language-related regions and networks, particularly in areas associated with cognitive control [1].

- Sociolinguistic Processing: Processing different dialects and registers engages brain regions beyond the classic perisylvian language network, including areas associated with social cognition and executive function [1].

Cognitive Adaptations for Managing Diversity

The human cognitive system employs several adaptive mechanisms to manage linguistic diversity:

- Language Control: Bilinguals and bidialectals develop enhanced cognitive control mechanisms for selecting appropriate linguistic varieties and suppressing interference [1].

- Predictive Processing: Listeners and readers rapidly adapt their predictive processing strategies based on linguistic variety, register, and speaker characteristics.

- Contextual Integration: The cognitive system integrates extralinguistic context more heavily when processing varieties with higher contextual dependence, such as informal registers.
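
The predictive-processing mechanism listed above is often formalized with Bayesian updating. The sketch below is one such formalization, chosen for brevity and not drawn from the cited sources: a listener tracks how likely a given speaker is to use a dialectal variant and revises that expectation after each utterance. The function names and the observation sequence are hypothetical.

```python
def update_beta(alpha, beta, observations):
    """Update a Beta(alpha, beta) belief with binary observations.

    Each observation is 1 if the speaker used the dialectal variant, else 0.
    """
    for heard_variant in observations:
        if heard_variant:
            alpha += 1
        else:
            beta += 1
    return alpha, beta


def expected_probability(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)


prior = (1, 1)                     # uniform prior: no expectation either way
observations = [1, 1, 0, 1, 1, 1]  # invented sequence of six utterances

posterior = update_beta(*prior, observations)
prior_mean = expected_probability(*prior)
posterior_mean = expected_probability(*posterior)
print(prior_mean, posterior_mean)
```

After five variant uses in six utterances, the listener's expectation moves from 0.5 to 0.75. A stronger prior (larger `alpha` and `beta`) would make the listener adapt more slowly, which is one way to model differences in prior exposure to a variety.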

Diagram 1: Cognitive Architecture for Managing Linguistic Diversity. The model illustrates how contextual cues engage control systems that modulate core language processing to accommodate linguistic variation.

Research Reagent Solutions: Essential Tools for Diversity Research

Table 3: Essential Research Reagents and Tools for Studying Linguistic Diversity

| Research Tool Category | Specific Examples | Function in Diversity Research |
|---|---|---|
| Standardized Assessment Batteries | Cross-linguistic naming tests, Multilingual Aphasia Examination | Providing comparable measures across diverse linguistic populations |
| Neuroimaging Stimulus Sets | Multilingual corpus-based stimuli, Dialectal speech recordings | Engaging neural processing of diverse linguistic forms while controlling for acoustic and psycholinguistic variables |
| Eye-Tracking Paradigms | Visual World Paradigm with dialectal variations, Cross-linguistic reading studies | Tracking real-time processing of diverse linguistic structures across populations |
| Computational Modeling Platforms | Connectionist models of bilingual processing, Bayesian models of language variation | Testing theoretical accounts of how diversity emerges and is processed |
| Genetic Analysis Tools | Polygenic risk scoring for language disorders, Gene expression analysis in model systems | Investigating biological foundations of individual differences in language abilities |

Implications and Applications: From Theory to Practice

Theoretical Implications for Cognitive Science

The incorporation of linguistic diversity into cognitive models has profound theoretical implications:

- Language Faculty Reconsidered: The language faculty appears more malleable and experience-dependent than traditionally conceived, emerging in slightly different ways across individuals [1].

- Universal Grammar Revisited: Rather than positing fixed linguistic universals, the focus shifts to universal computational capacities that can accommodate tremendous structural diversity.

- Brain-Language Relationships: The relationship between brain structure and language function is better understood as a dynamic, experience-dependent system rather than a fixed modular architecture.

Clinical and Applied Implications

Understanding linguistic diversity has practical applications across multiple domains:

- Neurological Rehabilitation: Language-based interventions can leverage the modulating effects of language on cognitive and neurological systems for therapeutic purposes [6].

- Educational Practices: Teaching methods can be optimized for diverse learner profiles by understanding alternative developmental pathways to linguistic competence.

- Assessment Tools: Diagnostic instruments can be improved by accounting for normal variation in language abilities across different populations.

Diagram 2: Research to Application Pipeline. The diagram illustrates how theoretical advances in understanding linguistic diversity inform methodological innovation, leading to empirical findings with practical applications across multiple domains.

Future Directions: Advancing the Cognitive Science of Linguistic Diversity

The cognitive science of linguistic diversity is still emerging, with several promising directions for future research:

- Integrating Multiple Timescales: Research should integrate evolutionary, developmental, and real-time processing perspectives on linguistic diversity [1].

- Gene-Language Environment Interactions: Investigating how genetic predispositions interact with diverse linguistic environments to shape language capacities.

- Computational Modeling of Variation: Developing models that can account for the emergence and maintenance of linguistic diversity within and across individuals.

- Clinical Translation: Applying insights from diversity research to develop more sensitive assessment tools and more effective interventions for language disorders.

Table 4: Priority Research Areas for Advancing the Cognitive Science of Linguistic Diversity

| Research Area | Key Questions | Required Methodological Advances |
|---|---|---|
| Neurodiversity and Language | How do neurodiverse populations develop alternative but functional language systems? | Development of appropriate assessment tools for non-standard language abilities |
| Cross-Linguistic Cognitive Neuroscience | To what extent does processing different language types engage distinct neural mechanisms? | Large-scale collaborative studies across diverse language communities |
| Social and Cultural Dimensions | How do cultural models of language shape cognitive processing? | Integration of anthropological and psychological approaches |
| Lifespan Perspectives | How does management of linguistic diversity change across the lifespan? | Longitudinal studies tracking language abilities in diverse populations over time |

The pivotal role of linguistic variation in cognitive models represents more than just an expansion of research scope—it constitutes a fundamental reorientation of how we conceptualize human language. By embracing diversity as a core feature rather than a complication, cognitive science and neuroscience can develop more accurate, biologically plausible models of language processing that account for the full range of human linguistic capabilities. This approach not only enhances our theoretical understanding but also promises more effective applications in clinical, educational, and technological domains. As the field moves forward, integrating diverse perspectives, methods, and populations will be essential for unraveling the complex interplay between language, cognition, and the brain.

The study of language is undergoing a profound transformation, moving from abstract cognitive models to mechanistic explanations grounded in the neurobiological infrastructure of the human brain. This shift represents a fundamental evolution in psychological research, where language is no longer viewed merely as a modular cognitive faculty but as a complex adaptive system implemented in biological tissue. The emerging paradigm emphasizes implementational causality, that is, explaining how language processes are physically realized in neural circuits, and seeks to bridge the historical gap between linguistic computation and its biological substrate [7]. This transition mirrors broader trends in cognitive science toward integrated approaches that respect both the computational nature of mind and its physical instantiation in the brain.

The drive toward neurobiological frameworks stems from growing recognition that language behavior represents the output of a physically realized system in the human brain, described as a "sparsely connected recurrent network of biological neurons and chemical synapses" [7]. This perspective demands mechanistic descriptions of language processing that are grounded in and constrained by the characteristics of the neurobiological substrate, moving beyond high-level algorithmic accounts to models that operate in the universal "machine language" of neurobiology [7]. The core challenge lies in explaining how the computational machinery supporting language operations is implemented in neurobiological infrastructure across multiple spatial scales, from single neurons and synapses to cortical layers, microcolumns, brain regions, and large-scale networks.

Core Theoretical Foundations: From Cognition to Implementation

Neurobiological Causal Models

Neurobiological causal modeling represents a groundbreaking approach that fundamentally differs from traditional experimental and cognitive modeling strategies. Whereas traditional approaches infer processing theories from input-output relations or attempt to map these relations algorithmically through cognitive modeling, neurobiological causal modeling builds functional equations directly from established neurobiological principles without making ad hoc assumptions about algorithmic procedures and component parts [7]. This methodology intends to model the generators of language behavior at the level of implementational causality, providing a mechanistic description of language processing that is firmly grounded in the causal characteristics of the actual language system [7].

A key advantage of this approach is its ability to draw upon extensive knowledge from neuroanatomy, neurophysiology, and biophysics to inform model construction. The implementational building blocks derived from these knowledge sources provide necessary constraints for a computational neurobiology of language that ultimately integrates across all levels of description [7]. This represents a synthetic rather than reductive approach—systematically assembling computational language models from known neurobiological primitives at the implementational level, which contrasts with approaches that merely attempt to constrain existing neurocognitive architectures to increase their biological plausibility [7].

Essential Neurobiological Component Parts

The language system is physically implemented using fundamental neurobiological components with specific computational properties that differ significantly from simplified artificial neural networks. Biological neurons exhibit a diverse range of electrophysiological behaviors, including tonic spiking, bursting, and adaptation, with this diversity likely having functional significance for information processing [7]. Crucially, neuronal spike responses result from the integration of synaptic inputs on the spatial structure of the dendritic tree, which amounts to more than linear summation and gives rise to complex, nonlinear processing effects not captured by simpler point neurons [7].
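The contrast between a point neuron and nonlinear dendritic integration can be illustrated with a toy model. The sketch below is not taken from [7]; the branch threshold, the size of the supralinear boost, and the function names are assumptions chosen only to show that the same total input can produce different outputs depending on where the synapses sit.

```python
import numpy as np

def point_neuron(inputs, weights):
    """Point neuron: a single linear sum over all synapses."""
    return float(np.dot(inputs, weights))

def dendritic_neuron(inputs, weights, branch_ids, threshold=1.5):
    """Two-stage neuron: each branch sums its own synapses and adds a
    supralinear boost once a threshold is crossed; the soma adds branches."""
    drive = inputs * weights
    total = 0.0
    for branch in np.unique(branch_ids):
        branch_sum = drive[branch_ids == branch].sum()
        # hypothetical branch nonlinearity (assumed, not from the source)
        total += branch_sum + (2.0 if branch_sum >= threshold else 0.0)
    return float(total)

weights = np.ones(4)
branch_ids = np.array([0, 0, 1, 1])
clustered = np.array([1.0, 1.0, 0.0, 0.0])  # both active synapses on one branch
scattered = np.array([1.0, 0.0, 1.0, 0.0])  # one active synapse per branch

print(point_neuron(clustered, weights), point_neuron(scattered, weights))
print(dendritic_neuron(clustered, weights, branch_ids),
      dendritic_neuron(scattered, weights, branch_ids))
```

The point neuron cannot distinguish the two input patterns, whereas the branch-wise model responds more strongly to the clustered one.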

The synaptic architecture of the brain follows specific biological constraints often overlooked in cognitive models. Neurons connect via either excitatory or inhibitory synapses but not both simultaneously, and synapses do not change sign during learning and development—a fundamental difference from most connectionist and deep learning models of language processing [7]. Major synapse types include fast and slow excitatory and inhibitory varieties that generate postsynaptic currents with different polarity, amplitudes, and rise and decay time scales, creating a rich temporal dynamic for neural computation [7]. Synaptic learning and memory are subserved by a variety of unsupervised learning principles, including activity-dependent, short-term synaptic changes that form the biological basis for learning [7].
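The sign constraint described above can be imposed on a simulated network by clipping weights after every update. The following is a minimal sketch under stated assumptions: the 3:1 excitatory-to-inhibitory split, the random stand-in for a learning rule, and the function name `sign_constrained_update` are illustrative and do not come from [7].

```python
import numpy as np

def sign_constrained_update(weights, delta, is_excitatory):
    """Apply a weight change while keeping each presynaptic neuron's
    outgoing synapses excitatory (>= 0) or inhibitory (<= 0)."""
    updated = weights + delta
    exc = is_excitatory[:, None]  # rows are presynaptic neurons
    # clip so that no synapse crosses zero and changes sign
    return np.where(exc, np.maximum(updated, 0.0), np.minimum(updated, 0.0))

rng = np.random.default_rng(0)
is_excitatory = np.array([True, True, True, False])  # assumed split
magnitudes = rng.uniform(0.1, 1.0, size=(4, 5))
weights = np.where(is_excitatory[:, None], magnitudes, -magnitudes)

for _ in range(100):
    delta = rng.normal(0.0, 0.2, size=weights.shape)  # stand-in learning signal
    weights = sign_constrained_update(weights, delta, is_excitatory)

print(weights.round(2))
```

Standard connectionist training omits the clipping step, which is why individual weights in such models are free to change sign.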

Table 1: Core Neurobiological Components of Language Processing

| Component | Key Properties | Functional Significance for Language |
|---|---|---|
| Biological Neurons | Diverse firing patterns (tonic spiking, bursting, adaptation); Nonlinear dendritic integration | Enables complex temporal processing; Provides rich computational capabilities beyond linear summation |
| Synapses | Excitatory OR inhibitory (not both); Fast/slow varieties with different time courses; Do not change sign during learning | Creates precise temporal dynamics for processing; Constrains learning mechanisms in biological implementations |
| Cortical Microcircuits | Structured laminar organization; Sparsely connected recurrent networks; Multiple spatial scales | Supports hierarchical processing; Enables integration across time scales from milliseconds to lifetime |
| Neural Assemblies | Formed through correlation learning; Driven by cortical connectivity patterns | Basis for discrete circuits for cognitive computations; Explains emergence of semantic areas and hubs |

Key Experimental Evidence and Neural Mechanisms

Speech Segmentation and Word Recognition

Groundbreaking research has identified specific neural mechanisms for transforming continuous sound into distinct words, centered on the superior temporal gyrus (STG). This region, located just above the ear, was historically considered responsible only for low-level sound processing, but new evidence reveals its sophisticated role in linguistic segmentation [8]. Using electrocorticography with high-density electrodes placed directly on the brain surface, researchers discovered that the STG displays a rhythmic cycle of activity with a distinct "reset" signal at the end of spoken words, serving as a biological marker that punctuates the speech stream [8].

This segmentation mechanism operates on relative rather than absolute time, with neural trajectories stretching or compressing to fit word duration. This normalization means that short words like "cat" and long words like "hippopotamus" trigger the same complete cycle of processing, maintaining a consistent representation regardless of duration [8]. Crucially, the mechanism is experience-dependent: the neural marker for word boundaries disappears when listening to unfamiliar languages, which would explain why foreign languages often sound like an unbroken blur of noise. Bilingual individuals show boundary detection for both known languages, with signal clarity correlating with proficiency level [8].
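The idea of relative timing can be made concrete by resampling activity traces of different durations onto a common 0-to-1 axis. The sketch below is an analysis illustration only, not the method used in [8]; the sinusoidal `toy_cycle` response, the sampling rate, and the number of resampling points are assumptions.

```python
import numpy as np

def normalize_duration(trajectory, n_points=50):
    """Resample a 1-D activity trace onto relative time (0 = onset, 1 = offset)."""
    trajectory = np.asarray(trajectory, dtype=float)
    original_time = np.linspace(0.0, 1.0, len(trajectory))
    relative_time = np.linspace(0.0, 1.0, n_points)
    return np.interp(relative_time, original_time, trajectory)

def toy_cycle(n_samples):
    """Hypothetical word-length response: one full cycle across the word."""
    phase = np.linspace(0.0, 2.0 * np.pi, n_samples)
    return np.sin(phase)

short_word = toy_cycle(30)    # e.g. 300 ms sampled at 100 Hz (assumed)
long_word = toy_cycle(120)    # e.g. 1200 ms at the same rate (assumed)

short_norm = normalize_duration(short_word)
long_norm = normalize_duration(long_word)
max_gap = float(np.max(np.abs(short_norm - long_norm)))
print(f"largest difference after normalization: {max_gap:.4f}")
```

If activity truly scales with word duration, traces for short and long words should nearly coincide once expressed in relative time, as they do for this toy signal.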

Phonological Recoding of Visual Symbols

The brain's ability to associate visual symbols with phonological representations represents another key mechanism in language processing. Research on learning associations between unknown visual symbols (Japanese Katakana characters) and arbitrary monosyllabic names revealed that event-related potentials (ERPs) are linearly affected by the strength of visual-phonological associations in specific time windows [9]. These effects begin around 200ms post-stimulus on right occipital sites and extend to around 345ms on left occipital sites, indicating rapid integration of visual and phonological information [9].

fMRI evidence further demonstrates that the left fusiform gyrus is progressively modulated by the strength of visual-phonological associations, suggesting this region's involvement in the brain network supporting phonological recoding processes [9]. This finding highlights the importance of cross-modal integration in language processing and demonstrates how arbitrary symbols become associated with linguistic representations through experience-dependent plasticity mechanisms.

Semantic Representation and Circuit Formation

Brain-constrained deep neural networks provide insights into how semantic representations and circuits form in the cerebral cortex. These models demonstrate that discrete circuits for cognitive computations emerge through correlation learning and specific cortical connectivity patterns, explaining the emergence of specialized semantic areas and hubs [10]. The feature correlational properties of concepts explain neurocognitive differences between processing proper names and category terms, as well as why circuits for concrete and abstract concepts differ, with the latter particularly reliant on language systems [10].

These models successfully simulate the formation of mechanisms for symbol and concept processing, including verbal working memory, learning of large symbol vocabularies, semantic binding in specific cortical areas, and attention focusing modulated by symbol type [10]. The networks analyze neuronal assembly activity to deliver putative mechanistic correlates of higher cognitive processes, developing candidate explanations founded in established neurobiological principles rather than merely simulating behavioral outcomes.

Experimental Protocols and Methodologies

Electrocorticography for Neural Speech Segmentation

Objective: To pinpoint where and how speech segmentation occurs in the cortex by capturing high-precision neural activity during speech perception [8].

Participants: Patients undergoing intracranial monitoring for epilepsy surgery, with electrode grids placed directly on the cortical surface for clinical purposes [8].

Stimuli and Tasks:

- Participants listen to radio news clips while neural activity is recorded

- Participants complete bistable speech tasks using looped audio recordings that can be perceived as different words depending on boundary placement (e.g., "turbo" vs. "boater")

- Multilingual participants listen to sentences in both native and unfamiliar languages to test experience-dependence of segmentation

Data Acquisition:

- High-density electrocorticography grids placed on the superior temporal gyrus

- Recording of cortical activity with high temporal and spatial resolution

- Synchronization of audio stimuli with neural recording

Analysis Approach:

- Identification of neural "reset" signals coinciding with word boundaries

- Comparison of neural activity patterns during bistable perception

- Cross-language comparison of segmentation signals

- Computational comparison with deep learning models (HuBERT) trained on speech [8]

Artificial Learning of Visual-Phonological Associations

Objective: To track the acquisition of novel visual-phonological associations and identify associated neural changes [9].

Participants: Healthy adults with no prior exposure to Japanese Katakana characters.

Learning Protocol:

- Day 1: Association learning phase with 24 unknown visual symbols (Japanese Katakana) and 24 arbitrary monosyllabic names

- Manipulation of association strength through varied proportions of correct and erroneous associations displayed during a two-alternative forced choice task

- ERP recording during learning to track changes in visual symbol processing

Testing Protocol:

- Day 2: fMRI session during matching task

- Continued manipulation of association strength as a probe for identifying phonological recoding regions

Neural Measures:

- Event-Related Potentials (ERPs): Recorded during learning phase to track temporal dynamics of association formation

- Functional MRI: Acquired during matching task to identify brain regions involved in phonological recoding

Analysis Focus:

- Linear effects of association strength on ERP components in specific time windows

- Gradual effects of association strength on fMRI activation in candidate regions

- Identification of left fusiform gyrus involvement in phonological recoding [9]
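The "linear effect of association strength" in the analysis focus above amounts to regressing a neural measure on the manipulated strength. The sketch below uses simulated numbers, not data from [9]; the four strength levels, the noise level, and the assumed slope of 2 microvolts are placeholders chosen only to show the shape of the analysis.

```python
import numpy as np

def linear_effect(strength, amplitude):
    """Ordinary least-squares slope and intercept of amplitude on strength."""
    slope, intercept = np.polyfit(strength, amplitude, deg=1)
    return float(slope), float(intercept)

rng = np.random.default_rng(1)
# hypothetical association-strength levels (proportion of correct pairings)
strength = np.repeat([0.25, 0.5, 0.75, 1.0], 30)
# simulated mean amplitude in one time window (microvolts); NOT real data
amplitude = 2.0 * strength + rng.normal(0.0, 0.5, size=strength.size)

slope, intercept = linear_effect(strength, amplitude)
print(f"slope = {slope:.2f} uV per unit strength, intercept = {intercept:.2f} uV")
```

A real ERP analysis would typically fit this per participant, electrode, and time window, and correct for multiple comparisons; those steps are omitted here.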

Multimodal Communication and Conceptual Alignment

Objective: To examine how communicative interactions shape conceptual representations and neural encoding of referents [11].

Participants: 71 pairs of unacquainted participants engaged in cooperative referential communication.

Experimental Protocol:

- Participants perform two interleaved interactional tasks describing and locating 16 novel geometrical objects (Fribbles)

- Recording of spontaneous interactions (approximately one hour) using multiple cameras, head-mounted microphones, and motion-tracking (Kinect)

- Collection of written descriptions and conceptual dimension ratings for each Fribble before and after interaction

- fMRI measurement of neural responses to each Fribble during one-back working memory task

- Additional fMRI during visual presentation of eight animated movies (35 minutes total) to enable functional hyperalignment across participants

Data Collected:

- High-quality video from three cameras and audio from head-mounted microphones

- Motion-tracking data throughout interactions

- Speech transcripts of all communicative interactions

- Behavioral conceptual representations (descriptions and dimensional ratings)

- Neural representations (fMRI responses to each referent)

Analytical Opportunities:

- Relationship between communicative behaviors and conceptual alignment

- Neural changes following face-to-face dialogue

- Multimodal analysis of communication dynamics [11]
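Functional hyperalignment, mentioned in the protocol above, maps each participant's voxel space into a shared space using responses to a common stimulus such as the movies. One common building block is an orthogonal Procrustes rotation; the sketch below shows only that single step on synthetic data and is an assumption about the general technique, not the pipeline used in [11].

```python
import numpy as np

def procrustes_rotation(source, target):
    """Orthogonal matrix R minimizing ||source @ R - target|| (Frobenius norm)."""
    u, _, vt = np.linalg.svd(source.T @ target)
    return u @ vt

rng = np.random.default_rng(2)
n_timepoints, n_voxels = 200, 10   # assumed toy dimensions

# reference participant's responses to the shared movie (synthetic)
target = rng.normal(size=(n_timepoints, n_voxels))
# second participant: same signal expressed in a rotated voxel space
true_rotation, _ = np.linalg.qr(rng.normal(size=(n_voxels, n_voxels)))
source = target @ true_rotation.T

rotation = procrustes_rotation(source, target)
aligned = source @ rotation
error = float(np.linalg.norm(aligned - target))
print(f"alignment error: {error:.2e}")
```

Once a rotation has been estimated from the movie data, the same matrix can be applied to the responses evoked by each Fribble so that patterns become comparable across participants.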

Visualization of Key Mechanisms and Workflows

[Diagram: Neurobiological causal modeling framework]

[Diagram: Neural word segmentation mechanism]

Table 2: Essential Research Reagents and Solutions for Neurobiological Language Research

| Resource Category | Specific Examples | Function/Application | Key Considerations |
|---|---|---|---|
| Neuroimaging Modalities | High-density electrocorticography; Task-based and resting-state fMRI; fNIRS; MEG | Maps neural activity with high spatiotemporal resolution; Identifies network correlates of language processes | Electrocorticography provides direct neural recording but requires clinical populations; fMRI offers spatial precision but limited temporal resolution |
| Computational Modeling Tools | Brain-constrained deep neural networks; Adaptive dynamical systems; Recurrent neural network simulations | Implements neurobiological principles in silico; Tests mechanistic hypotheses; Bridges computational theory and biological implementation | Must incorporate biological constraints (e.g., separate excitatory/inhibitory connections, dendritic computation) |
| Behavioral Paradigms | Artificial language learning; Bistable speech tasks; Referential communication games; Multimodal interaction tasks | Controls linguistic experience; Tests causal hypotheses; Examines real-time language processing and acquisition | Enables tracking of learning and plasticity effects; Allows experimental manipulation of key variables |
| Stimulus Sets | Japanese Katakana characters; Novel object referents (Fribbles); Controlled speech samples; Bistable speech stimuli | Provides unknown symbols for learning studies; Controls for prior experience; Enables perceptual manipulation | Must control for psycholinguistic variables; Enables cross-linguistic comparisons |
| Data Resources | NEBULA101 dataset; CABB multimodal corpus; Shared neuroimaging datasets | Provides multimodal data for analysis; Enables replication and secondary analysis; Supports development of novel analytical approaches | Follows FAIR principles; Enables large-scale analysis of individual differences |

Implications and Future Directions

Theoretical Implications for Cognitive Science

The rise of neurobiological frameworks necessitates rethinking fundamental concepts in cognitive science. The traditional view of language as an isolated modular function is giving way to understanding it as a dynamic system branching out and connecting to more general cognitive mechanisms [12]. This perspective recognizes that language aptitude and performance interact with broader cognitive domains, including memory, fluid reasoning, auditory abilities, and even musicality, giving rise to "neurocognitive profiles" that reflect the integrated organization of the human cognitive system [12].

This integrated view has particular relevance for understanding multilingualism, where knowing and using multiple languages demands fundamental cognitive reorganization with specific psycho-neurobiological correlates [12]. Research shows that bilingual infants and children display different patterns of visual attention, perceptual development, and executive function compared to monolingual peers, suggesting that language experience shapes cognitive processes beyond the linguistic domain [13]. These findings challenge modular conceptions of language and support theories that emphasize the interactive nature of cognitive systems.

Clinical and Translational Applications

Neurobiological frameworks for language have significant implications for understanding and treating communication disorders. By identifying specific neural mechanisms underlying language processes, these approaches enable more targeted interventions for conditions such as aphasia, dyslexia, and developmental language disorder. The identification of the superior temporal gyrus as a hub for speech segmentation [8] and the left fusiform gyrus involvement in phonological recoding [9] provides specific targets for neuromodulation therapies.

The discovery of neurophysiological biomarkers of treatment response in various psychiatric conditions [14] [15] further demonstrates the clinical relevance of these approaches. As research identifies specific neural signatures associated with symptom dimensions, it becomes possible to develop optimized interventions that directly target these neurobiological mechanisms. The success of "closed-loop" stimulation strategies for movement disorders and epilepsy has generated interest in similar approaches for psychiatric disorders, though these must account for disorder-specific time constants relating neural changes to behavioral improvements [15].

Future Research Trajectories

Future research in neurobiological language frameworks will likely focus on several key directions. First, there is growing emphasis on naturalistic language processing—studying how the brain processes language in ecologically valid contexts rather than highly controlled laboratory settings. The CABB dataset, which includes multimodal recordings of face-to-face communicative interactions, represents an important step in this direction [11].

Second, research will increasingly examine developmental trajectories of neural language mechanisms, tracking how systems like the superior temporal gyrus word segmentation signal emerge during infancy and childhood [8]. This developmental perspective is essential for understanding how genetic predispositions and experience interact to shape the neural infrastructure for language.

Finally, the field will continue to develop more sophisticated brain-constrained models that incorporate additional neurobiological principles, such as distinct neuron types, realistic synaptic plasticity rules, and multi-scale organization from microcircuits to large-scale networks [7] [10]. These models will provide increasingly accurate simulations of how linguistic computations emerge from neural processes, ultimately leading to a comprehensive computational neurobiology of language that integrates across all levels of description from cells to cognition.

Integrating Animal and Human Communication Studies to Understand Language Evolution

The evolution of human language represents one of the most significant transitions in the history of life on Earth. Understanding this transition requires integrating insights from two traditionally separate domains: the study of animal communication systems and the investigation of human language capabilities. This integration demands moving beyond superficial comparisons to examine the deep cognitive foundations shared across species while acknowledging the unique computational properties of human language [16] [17]. The central challenge lies in distinguishing homologous traits (shared due to common ancestry) from analogous ones (similar due to convergent evolutionary pressures) [18].

Recent theoretical advances suggest that the "royal road" to understanding language evolution may lie not in animal communication systems per se, but in animal cognition more broadly [16]. This perspective shift acknowledges that communication systems in non-human animals typically permit expression of only a small subset of the concepts that species can represent and manipulate productively. For instance, honeybees possess excellent colour vision and can remember flower colours, yet their dance communication system only encodes spatial location information [16]. By contrast, human language exhibits the remarkable capacity to express virtually any concept within our conceptual storehouse, whereas animal communication systems appear intrinsically limited to a restricted set of fitness-relevant messages relating to food, danger, aggression, or other immediate concerns [16].

This whitepaper provides a comprehensive framework for integrating comparative approaches to illuminate the biological and cognitive foundations of human language, with particular emphasis on methodological considerations for interdisciplinary research.

Theoretical Foundations: Mentalistic vs. Referentialist Frameworks

Contrasting Models of Reference

A fundamental theoretical division separates referentialist from mentalistic perspectives on communication. Referentialist frameworks, dominant in behaviourist psychology and some philosophical traditions, posit direct linkage between utterances and their real-world referents [16]. In contrast, mentalistic perspectives, which represent the mainstream in modern cognitive science, view words as expressing mind-internal concepts rather than referring directly to things in the world [16].

Table 1: Comparison of Referentialist vs. Mentalistic Frameworks

| Aspect | Referentialist Framework | Mentalistic Framework |
|---|---|---|
| Nature of reference | Direct link between signals and world | Indirect process mediated by mental representations |
| Focus of analysis | Observable relationships between signals and referents | Internal cognitive processes and representations |
| Treatment of concepts | Often avoided or reduced to behavioural dispositions | Central to explanation; concepts ≠ words |
| Biological grounding | Intuition of "referential drive" useful for language acquisition | Compatible with modern cognitive neuroscience |

The mentalistic perspective conceptualizes communication as a two-stage process: first, a mental representation of an entity is activated; second, an utterance is produced that may elicit a similar representation in the listener [16]. This model applies across species, suggesting that the first stage—forming non-verbal conceptual representations—represents an important continuity between animal and human cognition.

Defining Communication Across Species

The question of what constitutes "communication" remains contested across disciplines. Biological accounts define signals as structures or acts that alter the behaviour of other organisms, evolved because of that effect, and are effective because the receiver's response has also evolved [18]. Informational frameworks focus on statistical correlations between signals and states of the world [18]. Intentional approaches emphasize voluntary signal production with particular communicative intentions [18]. These divergent definitions highlight the challenge of creating unified theoretical frameworks spanning human and animal communication.
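The informational framework can be operationalized by measuring how much a signal reduces uncertainty about the state of the world, for example with mutual information. The sketch below is a generic illustration rather than a procedure from [18]; the two call types and the contingency counts are invented for the example.

```python
import numpy as np

def mutual_information(joint_counts):
    """Mutual information (in bits) from a signal-by-state contingency table."""
    joint = np.asarray(joint_counts, dtype=float)
    joint = joint / joint.sum()
    p_signal = joint.sum(axis=1, keepdims=True)
    p_state = joint.sum(axis=0, keepdims=True)
    nonzero = joint > 0
    ratio = joint[nonzero] / (p_signal @ p_state)[nonzero]
    return float(np.sum(joint[nonzero] * np.log2(ratio)))

# rows: two hypothetical call types; columns: predator present / absent
informative = [[45, 5], [5, 45]]
uninformative = [[25, 25], [25, 25]]

mi_informative = mutual_information(informative)
mi_uninformative = mutual_information(uninformative)
print(f"{mi_informative:.3f} bits vs {mi_uninformative:.3f} bits")
```

A purely informational measure of this kind says nothing about whether the signal evolved for its effect or was produced intentionally, which is where the biological and intentional definitions diverge.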

Key Comparative Domains: Semantics, Syntax, and Pragmatics

Semantic Capabilities Across Species

The semantic capabilities of non-human animals reveal both continuities and discontinuities with human language. Research demonstrates that many species form rich mental concepts that far exceed what their communication systems can express [16]. The critical evolutionary transition may therefore involve changes in externalization mechanisms rather than conceptual capabilities themselves.

Table 2: Comparative Semantic Capabilities Across Species

| Species | Demonstrated Conceptual Capabilities | Communicative Expression | Gap Analysis |
|---|---|---|---|
| Non-human primates | Complex social knowledge, tool use concepts, numerical cognition | Limited repertoire of vocalizations and gestures primarily for immediate contexts | Large gap between conceptual repertoire and communicative expression |
| Honeybees | Colour vision, spatial memory, floral patterns | Dance communication encodes only spatial location | Specialized system for specific ecological domain |
| Cetaceans | Social relationship tracking, behavioural coordination | Complex vocalizations with potential for signature calls | Intermediate gap with some limited flexibility |

A crucial insight from comparative analysis is that the absence of a concept in a species' communication system does not constitute evidence that the species lacks that concept [16]. This observation fundamentally reorients the search for language precursors toward general cognitive capacities rather than specifically communicative behaviours.

Syntactic Capabilities and Sequential Structure

Human language exhibits hierarchical syntactic structure that enables discrete infinity—the capacity to generate an infinite number of expressions from finite elements. The evolutionary origins of this capacity remain hotly debated. While some animal communication systems exhibit sequential structure (e.g., birdsong), these typically lack evidence of hierarchical embedding or compositionality [17].

Research on zebra finches suggests they may be more sensitive to acoustic properties of individual song elements than to sequential properties, potentially indicating a fundamental difference in how sequential information is processed compared to human syntactic processing [17]. However, cultural evolution experiments with humans demonstrate that compositional structure can emerge through iterated learning when initially holistic systems are transmitted across generations [19].

Pragmatic and Intentional Aspects

Pragmatic aspects of communication—how signals are used and interpreted in context—reveal important continuities between animal and human communication, particularly in gestural communication among great apes [18]. The extent to which animal signals are produced voluntarily versus automatically remains controversial, with different systems showing varying degrees of flexibility.

Intentionality represents a particularly challenging domain for comparative analysis. While some animal signals appear produced with goals of influencing others, it remains controversial whether they are produced with Gricean intentions requiring metarepresentational abilities [18].

Experimental Approaches: Laboratory Models of Language Evolution

Iterated Learning Paradigms

Iterated learning experiments provide a powerful methodological bridge for studying language evolution in the laboratory. These paradigms involve transmitting artificial languages across "generations" of learners, allowing researchers to observe the emergence of linguistic structure under controlled conditions [19] [20].

Diagram 1: Iterated learning experimental workflow

These experiments demonstrate that structural properties of language—including compositionality and Zipfian frequency distributions—emerge as adaptations for learnability and transmission, even without pressure to communicate meanings [19]. This suggests that some fundamental properties of language may arise from general cognitive constraints rather than specifically communicative pressures.
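A transmission chain of this kind can be sketched in a few lines. The toy model below is not the protocol of [19] or [20]: the 3-by-3 meaning space, the two-letter signals, the bottleneck of five pairs, and the learner's part-by-part generalization rule are all assumptions made to keep the example small.

```python
import random
from collections import Counter

SHAPES, COLORS, LETTERS = range(3), range(3), "abcdef"

def most_common(chars, rng):
    """Most frequent character, or a random letter if nothing was observed."""
    return Counter(chars).most_common(1)[0][0] if chars else rng.choice(LETTERS)

def learn_and_produce(observed, rng):
    """Reproduce seen signals; generalize part by part for unseen meanings."""
    language = {}
    for shape in SHAPES:
        for color in COLORS:
            if (shape, color) in observed:
                language[(shape, color)] = observed[(shape, color)]
                continue
            # assumed learner bias: reuse the commonest part seen per feature
            firsts = [s[0] for (sh, _), s in observed.items() if sh == shape]
            seconds = [s[1] for (_, co), s in observed.items() if co == color]
            language[(shape, color)] = most_common(firsts, rng) + most_common(seconds, rng)
    return language

def run_chain(generations=10, bottleneck=5, seed=0):
    """Pass a language down a chain; each learner sees only `bottleneck` pairs."""
    rng = random.Random(seed)
    language = {(s, c): rng.choice(LETTERS) + rng.choice(LETTERS)
                for s in SHAPES for c in COLORS}  # initial holistic language
    history = [language]
    for _ in range(generations):
        seen = dict(rng.sample(sorted(language.items()), bottleneck))
        language = learn_and_produce(seen, rng)
        history.append(language)
    return history

history = run_chain()
for meaning, signal in sorted(history[-1].items()):
    print(meaning, signal)
```

Comparing `history[0]` with `history[-1]` gives a rough view of how much the signals have come to share parts across meanings with the same shape or colour; a real study would quantify this with a structure measure and many chains.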

Whole-to-Part Learning and Segmentation

A critical finding from experimental studies is the importance of whole-to-part learning in language evolution. Rather than building complexity from simple elements, human learners often extract parts from initially unanalyzed wholes [19]. This process drives the emergence of segmental structure through cultural transmission.

Laboratory models show that initially unsegmented sequences develop part-based structure over generations, with transitional probabilities within units becoming higher than transitional probabilities across unit boundaries—precisely the statistical pattern that facilitates segmentation in natural language [19]. This emergent segmentation subsequently makes the systems more learnable, creating a feedback loop where structure begets better learning which begets more structure.
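The statistical pattern described here, high transitional probability inside a unit and lower probability across unit boundaries, can be computed directly from a sequence. The sketch below uses an invented syllable stream built from three made-up units; it is an illustration of the measure, not data from [19].

```python
from collections import Counter

def transitional_probabilities(sequence):
    """P(next | current) for every adjacent pair in a sequence of syllables."""
    pair_counts = Counter(zip(sequence, sequence[1:]))
    first_counts = Counter(sequence[:-1])
    return {pair: n / first_counts[pair[0]] for pair, n in pair_counts.items()}

# hypothetical units and ordering, for illustration only
units = {"A": ["tu", "pi"], "B": ["go", "la"], "C": ["bi", "da"]}
order = "ABCACBABCBACCABACB"
stream = [syllable for unit in order for syllable in units[unit]]

tp = transitional_probabilities(stream)
unit_pairs = {("tu", "pi"), ("go", "la"), ("bi", "da")}
within = [tp[pair] for pair in sorted(unit_pairs)]
across = [p for pair, p in tp.items() if pair not in unit_pairs]

print("within-unit:", within)
print("across-boundary:", sorted(across))
```

A learner that posits boundaries wherever the transitional probability dips would recover the three units from this stream.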

Emergence of Zipfian Distributions

The frequency distribution of words in human languages follows a characteristic power law (Zipf's law), where a small number of items occur with very high frequency while most occur rarely. Experimental work shows that this distributional structure emerges through cultural transmission and facilitates learning [19].

Diagram 2: Emergence of Zipfian distributions through cultural evolution

This skewed distribution facilitates various aspects of language learning, including word segmentation, cross-situational word learning, and acquisition of grammatical categories [19]. The cultural evolution of this distribution illustrates how population-level linguistic phenomena emerge from individual-level learning and production biases.
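Zipf's law states that the frequency of the item at rank r is roughly proportional to r raised to the power of minus alpha, with alpha close to 1 for natural language. A rough way to check a frequency list against this pattern is a least-squares fit in log-log space, sketched below on synthetic corpora. Log-log regression is a crude estimator chosen here for brevity; it is an assumption of this example and not the analysis used in [19].

```python
import numpy as np
from collections import Counter

def zipf_exponent(tokens):
    """Slope magnitude of log(frequency) against log(rank) by least squares."""
    counts = np.array(sorted(Counter(tokens).values(), reverse=True), dtype=float)
    ranks = np.arange(1, len(counts) + 1)
    slope, _ = np.polyfit(np.log(ranks), np.log(counts), deg=1)
    return float(-slope)

# synthetic corpora: word k appears about 1000 / k times, or a flat 20 times
zipfian = [f"w{k}" for k in range(1, 51) for _ in range(round(1000 / k))]
uniform = [f"w{k}" for k in range(1, 51) for _ in range(20)]

alpha_zipfian = zipf_exponent(zipfian)
alpha_uniform = zipf_exponent(uniform)
print(f"alpha (skewed) = {alpha_zipfian:.2f}, alpha (flat) = {alpha_uniform:.2f}")
```

Applied to the output of successive generations in a transmission chain, the same function gives a simple index of whether the distribution is becoming more skewed.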

Methodological Framework: Integrated Comparative Approaches

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Methodological Approaches for Integrated Language Evolution Research

| Method Category | Specific Approaches | Research Application | Key Considerations |
|---|---|---|---|
| Comparative cognition protocols | Reverse-reward contingency tasks, delayed match-to-sample, object permanence tests | Assessing conceptual capabilities independent of communication | Controls for perceptual and motor biases; species-appropriate motivation |
| Vocal learning assays | Isolation rearing, vocal playback, operant conditioning | Quantifying vocal flexibility and learning mechanisms | Distinguishing production versus perception learning; natural versus artificial contexts |
| Neurogenetic tools | FOXP2 sequencing, gene expression analysis, neuroimaging | Linking genetic and neural mechanisms to communication abilities | Accounting for pleiotropy; establishing causal versus correlational relationships |
| Cultural evolution paradigms | Iterated learning, artificial language learning | Studying emergence of structural properties | Balancing ecological validity with experimental control; transmission chain design |

Cross-Species Experimental Protocols

Effective comparative research requires standardized protocols that can be adapted across species while respecting their unique ecological and perceptual characteristics:

Conceptual Representation Protocol:

- Habituation Phase: Subjects are repeatedly presented with exemplars from one conceptual category

- Violation-of-Expectation Testing: Novel stimuli either match or violate the established category

- Response Measurement: Looking time, neural activity, or behavioural responses indicate detection of violations

- Control Conditions: Ensure responses reflect conceptual rather than perceptual differences

Vocal Learning Assessment Protocol:

- Baseline Recording: Document species-typical vocal repertoire in natural context

- Social Manipulation: Alter social environment or introduce novel vocal models

- Production Analysis: Quantify acoustic changes in vocal output over time

- Contextual Usage Testing: Determine whether novel vocalizations are used appropriately

Phylogenetic Comparative Methods

Reconstructing evolutionary trajectories requires specialized phylogenetic methods:

Homology Assessment Protocol:

- Character Mapping: Identify communicative traits across related species

- Phylogenetic Parsimony Analysis: Determine likely evolutionary history of traits

- Convergence Testing: Identify analogous traits through independent contrast analysis

- Ancestral State Reconstruction: Infer traits of common ancestors

These methods enable researchers to distinguish traits shared through common descent from those arising through convergent evolution, providing crucial evidence about evolutionary sequences [18].
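The parsimony and ancestral-state steps in the protocol above can be illustrated with the Fitch algorithm, a standard small-parsimony method for discrete traits. The sketch below shows only the bottom-up pass on a rooted binary tree; the tree shape, the species labels, and the binary trait coding are hypothetical and carry no empirical claim.

```python
def fitch(tree, tip_states):
    """Fitch small-parsimony pass on a rooted binary tree.

    tree: nested 2-tuples with tip names (str) at the leaves.
    Returns (candidate state set at the root, minimum number of changes).
    """
    if isinstance(tree, str):
        return {tip_states[tree]}, 0
    left_set, left_cost = fitch(tree[0], tip_states)
    right_set, right_cost = fitch(tree[1], tip_states)
    shared = left_set & right_set
    if shared:
        # children agree on at least one state: no change needed here
        return shared, left_cost + right_cost
    # children disagree: keep both options and count one change
    return left_set | right_set, left_cost + right_cost + 1

# hypothetical tree and trait coding (1 = trait present, 0 = absent)
tree = ((("sp1", "sp2"), "sp3"), ("sp4", "sp5"))
trait = {"sp1": 1, "sp2": 1, "sp3": 0, "sp4": 0, "sp5": 0}

root_states, changes = fitch(tree, trait)
print(root_states, changes)
```

For this toy tree the most parsimonious history has the trait absent at the root and gained once on the branch leading to sp1 and sp2, which would make it a shared derived trait of that pair rather than an ancestral one.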

Future Directions: Embracing Linguistic and Cognitive Diversity

Future progress in understanding language evolution requires moving beyond traditional model systems and embracing the full diversity of human languages and animal communication systems [21]. Just as biology has benefited from studying extremophiles—species living in extreme environments—language science will benefit from investigating typologically diverse languages and non-standard varieties [21].

This expanded comparative approach should include:

- Studying communication systems in less-researched species with exceptional capabilities

- Investigating how different human language structures shape and are shaped by cognitive processes

- Examining language development in diverse cultural and linguistic environments

- Exploring communication in neurodiverse populations to understand variant forms of human linguisticality

Recent research on bilingualism demonstrates the value of this approach, revealing how different language experiences shape cognitive processes including visual attention, perceptual development, and executive function [13]. Bilingual infants, for instance, attend to faces differently from monolingual infants, looking longer at the mouth than at the eyes, and this pattern persists into school age [13]. These findings illustrate how varied language experiences can lead to different developmental trajectories in cognitive domains related to communication.

Integrating animal and human communication studies requires recognizing that the cognitive foundations of language extend beyond specifically communicative capacities. The remarkable expressivity of human language builds upon conceptual representation systems shared with other animals, combined with unique mechanisms for externalizing these concepts through combinatorial and hierarchical systems [16].

Laboratory models of cultural evolution demonstrate how structural properties of language can emerge through iterated learning, providing crucial insights into how individual-level cognitive processes give rise to population-level linguistic structure [19] [20]. Meanwhile, comparative studies reveal both deep continuities in cognitive capacities and striking discontinuities in communicative expression across species.

Moving forward, a comprehensive understanding of language evolution will require interdisciplinary collaboration across linguistics, cognitive science, neuroscience, genetics, and animal behaviour, united by shared methodological frameworks and theoretical perspectives that embrace the true diversity of communication systems across species and human cultures.

The study of language evolution has undergone a fundamental transformation, shifting from static, homogeneous models to a dynamic framework that views language as a complex adaptive system (CAS). This paradigm change reframes language as a system that emerges from the interactions of adaptive agents, possesses non-linear dynamics, and evolves through cultural transmission and cognitive selection. Within psychological and psycholinguistic research, this shift provides a powerful new lens for understanding how cognitive biases at the individual level scale up to shape the structure and evolution of language at the population level over time. This whitepaper details the core principles of this paradigm shift, its evidence base, and the methodological innovations it brings to research on cognitive language.

The Core Principles of the New Paradigm

Defining a Complex Adaptive System (CAS)

A Complex Adaptive System (CAS) is a collection of diverse, interacting agents whose interactions and adaptations give rise to complex, emergent system-level behaviors that are not predictable from the properties of the individual agents alone [22]. In such systems, the behavior of the whole is more than the sum of its parts, and the system is characterized by path dependence and self-organization [22].

- Key Characteristics: CAS are marked by a sufficient number of elements that interact in rich, non-linear ways. These interactions feed back on themselves (recurrency), and the agents within the system update their strategies based on input from other agents. Consequently, the overall system operates under far-from-equilibrium conditions and exhibits emergence, where macro-level patterns arise from micro-level interactions without central control [22].

Language as a Complex Adaptive System

The application of CAS theory to language posits that language is not a static, homogeneous object but a dynamic system perpetually shaped by learning, use, and transmission. Language is seen as socially and culturally situated, highly sensitive to small initial differences, and determined by multiple components interacting in complex, often chaotic, ways [23]. This view allows researchers to model language evolution as a process where linguistic structure arises from the actions of populations of interacting, adaptive individuals [24].

Contrasting Paradigms: From Homogeneity to CAS

The table below summarizes the fundamental differences between the traditional, homogeneous view of language and the modern, CAS-based view.

Table 1: Key Differences Between the Homogeneous and CAS Views of Language

| Feature | Traditional Homogeneous View | Complex Adaptive System View |
|---|---|---|
| System Nature | Static, closed, and rule-governed | Dynamic, open, and adaptive |
| Primary Focus | Internal, invariant structure (e.g., universal grammar) | Interaction and adaptation among agents |
| Change Dynamics | Linear and predictable | Non-linear and path-dependent [22] |
| Key Mechanism | Innate biological endowment | Cultural transmission and cognitive selection [24] [25] |
| Outcome | Homogeneous, idealized competence | Diverse, emergent, and stable conventions |
| Modeling Approach | Formal, mathematical logic | Agent-based, iterated learning, and game-theoretic models [24] |

Evidence from Language Evolution Research

Empirical and computational research strongly supports the CAS framework, revealing how cognitive biases drive language change.

Cognitive Selection in Language Change

Research bridging psycholinguistics and historical linguistics demonstrates that words compete for survival based on their cognitive properties. A large-scale serial-reproduction experiment—where stories were passed down a chain of participants—revealed that words with certain psycholinguistic properties are more likely to survive retelling [25].

- Experimental Protocol: A "serial reproduction" or "telephone game" paradigm was used. A participant reads a story and then retells it from memory to the next participant, who then retells it to the next, and so on down a transmission chain. The survival of specific word forms is tracked across generations of retellings.

- Findings: Words that are acquired earlier in life, are more concrete, and carry higher emotional arousal were significantly more likely to survive [25]. The same properties predicted increases in word frequency over the past 200 years in two large historical corpora, so the experimental effect also appears at the historical scale [25]. Together, these results support cognitive selection as a key mechanism in language evolution.
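
The logic of a transmission chain can be sketched as a simple simulation in which each retelling keeps or drops a word with a probability that depends on its psycholinguistic norms. The logistic form, the weights, the z-scored norm values, and the function names are assumptions made for illustration; they are not the model or estimates reported in [25].

```python
import math
import random

def retention_probability(word, weights=(-0.3, 0.4, 0.3), bias=1.5):
    """Chance that a word survives one retelling (hypothetical logistic model).

    `word["norms"]` holds z-scored (age of acquisition, concreteness, arousal).
    A negative weight on age of acquisition favours early-acquired words.
    """
    aoa, concreteness, arousal = word["norms"]
    w_aoa, w_conc, w_arousal = weights
    score = bias + w_aoa * aoa + w_conc * concreteness + w_arousal * arousal
    return 1 / (1 + math.exp(-score))

def run_chain(words, generations, rng):
    """Pass a word list down a chain; return the surviving forms per generation."""
    history = [[w["form"] for w in words]]
    current = list(words)
    for _ in range(generations):
        # A dropped word cannot reappear later in the chain.
        current = [w for w in current if rng.random() < retention_probability(w)]
        history.append([w["form"] for w in current])
    return history

# Hypothetical story vocabulary with invented norm values.
story = [
    {"form": "dog", "norms": (-1.2, 1.4, 0.5)},
    {"form": "fire", "norms": (-0.8, 1.1, 1.3)},
    {"form": "notion", "norms": (1.3, -1.5, -0.8)},
    {"form": "premise", "norms": (1.6, -1.2, -0.6)},
]

history = run_chain(story, generations=8, rng=random.Random(42))
for generation, survivors in enumerate(history):
    print(generation, survivors)
```

Averaging survival over many simulated chains, and comparing the result with observed retellings, is one way such a model could be checked against experimental data.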

Computational Modeling of Emergent Language

Computational models serve as virtual laboratories for testing hypotheses about language emergence and evolution under controlled conditions.

- Agent-Based Models: These simulate populations of autonomous agents that communicate to establish shared linguistic conventions. A seminal example is the Naming Game, where agents negotiate names for objects until a consensus emerges, demonstrating how local interactions can lead to global coherence without a central controller [24].

- Iterated Learning Models (ILM): These models focus on cultural transmission across generations. Each generation learns from the (often imperfect and incomplete) data produced by the previous generation. ILMs have shown that learning biases can lead to the spontaneous emergence of compositionality, a core feature of human language, because compositional systems pass more reliably through a learning bottleneck, where each learner sees only a subset of the language [24].

- Evolutionary Game Theory: This approach models language use as a strategic interaction where agents adopt communication strategies that maximize mutual understanding and fitness. It helps explain the stability of linguistic conventions and norms [24].
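
A minimal sketch of the Naming Game for a single object illustrates how consensus can arise from pairwise interactions alone. This follows one common minimal formulation (success collapses both inventories to the winning word, failure adds the word to the hearer) and is not necessarily the exact variant discussed in [24]. The population size, seed, and step limit are arbitrary choices.

```python
import random

def naming_game(n_agents=20, max_steps=100_000, seed=1):
    """Run a minimal Naming Game for one object.

    Returns (steps until consensus, agreed word), or (None, None) if the
    population has not converged within `max_steps` interactions.
    """
    rng = random.Random(seed)
    inventories = [set() for _ in range(n_agents)]
    next_word_id = 0
    for step in range(1, max_steps + 1):
        speaker, hearer = rng.sample(range(n_agents), 2)
        if not inventories[speaker]:
            # A speaker with no name for the object invents one.
            inventories[speaker].add(f"w{next_word_id}")
            next_word_id += 1
        word = rng.choice(sorted(inventories[speaker]))
        if word in inventories[hearer]:
            # Success: both agents discard all competing names.
            inventories[speaker] = {word}
            inventories[hearer] = {word}
        else:
            # Failure: the hearer remembers the new name.
            inventories[hearer].add(word)
        # Consensus: every agent holds the same single name.
        if all(len(inv) == 1 and inv == inventories[0] for inv in inventories):
            return step, next(iter(inventories[0]))
    return None, None

steps, consensus_word = naming_game()
print(f"consensus on {consensus_word!r} after {steps} interactions")
```

No agent has a global view of the population, yet a single convention wins out. This is the kind of local-to-global coherence the agent-based approach is meant to demonstrate.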

The logical relationships between the core components of language as a CAS are visualized below.

Methodological Innovations for Research

Studying language as a CAS requires a toolkit that can handle complexity, adaptation, and emergence.

Key Research Reagents and Solutions

The following table details essential methodological "reagents" for conducting research in this paradigm.

Table 2: Research Reagent Solutions for Studying Language as a CAS

| Research Reagent | Function & Explanation |
|---|---|
| Agent-Based Modeling Platforms (e.g., NetLogo) | Software environments for building simulations of interacting agents to observe the emergent outcomes of simple local rules, such as the formation of lexical conventions. |
| Computational Learning Models (e.g., RNNs, Transformers) | Neural network architectures used to model language acquisition and processing in iterated learning experiments, testing if linguistic structure emerges from data-driven learning [24]. |
| Psycholinguistic Norms Databases | Curated datasets containing properties like Age of Acquisition, Concreteness, and Emotional Arousal for thousands of words, used to predict their survival and evolution [25]. |
| Serial Reproduction Protocols | Experimental frameworks for studying cultural transmission in the lab, directly testing how cognitive biases filter language over "generations" of participants [25]. |
| Historical Language Corpora | Large, digitized collections of texts from different historical periods, enabling the tracking of word frequency and grammatical change over time to validate model predictions [25]. |
| Qualitative Mapping Tools (e.g., Resource/Agent Maps) | Techniques for visually mapping the interdependencies between key resources and the behaviors of adaptive agents in a system, providing a holistic appreciation of complex dynamics [26]. |

A Workflow for Integrating Cognitive and Evolutionary Models

The methodology for connecting micro-level cognitive processes to macro-level language patterns involves a recursive cycle of computational and experimental research, as illustrated below.

Implications for Drug Development and Scientific Communication

While the primary focus is on language, the CAS paradigm has profound implications for adjacent fields, including psychology and drug development.

- Understanding Health Behaviors: Health-related practices can themselves be understood as complex adaptive systems. Interventions must account for the fact that behavior change is socially situated, non-linear, and sensitive to initial conditions [23].

- Managing Pharmaceutical Systems: The drug development ecosystem is a classic CAS, involving interactions between regulators, patients, physicians, suppliers, and payers. Tools like Resource/Agent Maps (RAM) can help managers model these complex interactions, anticipate the systemic impacts of policy changes (e.g., pricing regulations), and design more resilient and effective systems [26].

The paradigm shift from viewing language as a homogeneous, static entity to understanding it as a complex adaptive system represents a major advancement in cognitive and psychological research. This framework successfully bridges the gap between the micro-level of individual cognition and the macro-level of historical language change. By leveraging computational models, rigorous experimentation, and large-scale data analysis, researchers are now equipped to unravel the complex, emergent, and adaptive nature of language, offering profound insights not only into linguistics but also into the dynamics of other complex human systems.

The Toolbox Transformation: Neuroimaging, Computational Modeling, and Clinical Translation

The study of language, once confined to behavioral observation and lesion studies, has been fundamentally transformed by neuroimaging. This revolution has enabled researchers to move from inferring brain function from damage to directly observing the dynamic, networked neural activity that underpins human communication. The evolution of cognitive language research in psychology and neuroscience is marked by a paradigm shift from localized, modular models of language function to a network-oriented understanding. This article details how the complementary use of functional Magnetic Resonance Imaging (fMRI), Electroencephalography (EEG), and functional Near-Infrared Spectroscopy (fNIRS) is mapping the brain's intricate language networks, providing unprecedented insights for basic research and therapeutic drug development.

Decoding the Neuroimaging Toolkit: Principles and Applications

To fully leverage neuroimaging data, researchers must understand the fundamental principles and capabilities of each modality. The following table provides a comparative summary of these core techniques.

Table 1: Core Neuroimaging Modalities for Language Research

| Technique | Measured Signal | Spatial Resolution | Temporal Resolution | Key Strengths | Primary Limitations |

|---|---|---|---|---|---|

| fMRI | Blood Oxygen Level Dependent (BOLD) response [27] | High (millimeter-level) [27] | Low (sampling rate of about 0.33-2 Hz; the BOLD response lags neural activity by 4-6 s) [27] | Excellent whole-brain coverage, including subcortical structures; indispensable for localization [27] | Expensive, immobile equipment; sensitive to motion artifacts; low temporal resolution [27] |