Think-Aloud Protocols: A Comprehensive Guide for Researching Cognitive Processes in Biomedical Science


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on utilizing think-aloud protocols (TAP) to investigate cognitive processes. It covers the foundational theory of TAP, explores its practical application in clinical and biomedical research settings, addresses common methodological challenges and optimization strategies, and examines the latest scientific evidence validating its use against other methods. The guide synthesizes current best practices and empirical findings to equip scientists with the knowledge to effectively implement this powerful qualitative tool for uncovering insights into reasoning, problem-solving, and decision-making.

What Are Think-Aloud Protocols? Unlocking the Inner Workings of the Mind

The think-aloud protocol bridges classical psychological inquiry and contemporary cognitive research, enabling direct observation of thought processes that typically remain inaccessible. Participants give a continuous verbal report of their thoughts as they work through a task, which gives researchers a window into cognitive mechanisms, decision-making processes, and problem-solving strategies [1] [2]. Initially developed within psychological science, the method has since spread to usability engineering, educational research, clinical science, and pharmaceutical development.

The theoretical foundations of think-aloud protocols trace back to the work of K. Anders Ericsson and Herbert A. Simon, who pioneered protocol analysis as a rigorous approach for studying cognitive processes [1] [3]. Their research established that verbalizing thoughts concurrently during task performance could provide valid data on cognitive processes without significantly altering the thought processes themselves [3]. Clayton Lewis later adapted these techniques for usability testing at IBM, establishing their practical value for evaluating user interfaces and product designs [1]. A once-niche psychological method thus evolved into a cross-disciplinary research tool, and recent studies report that thinking aloud produces minimal reactivity effects on the stream of consciousness, which supports its methodological robustness [4].

Contemporary Applications in Cognitive Research

Expanding Beyond Usability Testing

While think-aloud protocols remain a cornerstone of user experience (UX) research—with 86% of UX practitioners reporting their use in usability testing [5]—their application has significantly expanded into scientific research domains. In clinical research contexts, think-aloud protocols have been deployed to map the cognitive processes underlying scientific hypothesis generation. Researchers using visual interactive analysis tools like VIADS (a visual interactive analysis tool for filtering and summarizing large data sets coded with hierarchical terminologies) employed think-aloud protocols to identify specific cognitive events during hypothesis formulation, including "Seeking connections" (23% of cognitive events) and "Using analysis results" (30% of cognitive events) [6].

Cognitive psychology has similarly embraced think-aloud protocols to investigate reasoning processes. Recent research utilizing verbal Cognitive Reflection Tests (vCRT) has employed think-aloud protocols to distinguish between reflective and unreflective thinking, demonstrating that most correct responses involve conscious reflection while most lured responses lack such deliberation [3]. This application highlights how think-aloud methods can arbitrate between competing theoretical accounts of human reasoning by providing direct evidence of cognitive processes rather than relying solely on outcome-based measures.

Methodological Variations and Research Settings

Table 1: Think-Aloud Protocol Variants and Their Research Applications

| Protocol Type | Definition | Best Use Cases | Research Advantages |
|---|---|---|---|
| Concurrent Think-Aloud (CTA) | Participants verbalize thoughts in real-time while performing tasks [7] | Identifying in-the-moment cognitive processes; usability testing [8] | Provides immediate access to thoughts; minimizes recall bias [1] |
| Retrospective Think-Aloud (RTA) | Participants view recordings of their performance afterward and describe their earlier thought processes [7] [1] | Complex tasks where verbalization might interfere with performance [1] | Reduces cognitive load during task performance; allows more complete verbal reports [1] |

The choice between concurrent and retrospective approaches depends on research objectives and the cognitive demands of the target task. Concurrent protocols offer direct access to unfolding thoughts but may increase cognitive load, while retrospective protocols provide more reflective commentary but risk memory inaccuracies [1]. Recent research has demonstrated the viability of both approaches across diverse settings, from controlled laboratory studies to remote testing environments [8] [5].

Experimental Evidence and Validation Studies

Quantifying Cognitive Processes in Hypothesis Generation

A rigorous 2024 study investigated cognitive processes during data-driven hypothesis generation in clinical research [6]. This controlled experiment employed think-aloud protocols to identify and quantify specific cognitive events as researchers analyzed National Ambulatory Medical Care Survey (NAMCS) datasets. The study implemented a 2×2 design comparing clinical researchers using VIADS versus other analytical tools (SPSS, SAS, R), with participants blocked by experience level.

Table 2: Cognitive Events During Hypothesis Generation (Adapted from [6])

| Cognitive Event | Frequency (%) | Definition | Research Implication |
|---|---|---|---|
| Using analysis results | 30% | Applying analytical outputs to formulate hypotheses | Indicates data-driven reasoning processes |
| Seeking connections | 23% | Attempting to identify relationships between variables | Reveals associative thinking patterns |
| Analogy | Not specified | Drawing comparisons to prior research or knowledge | Demonstrates role of prior knowledge in discovery |
| Use PICOT | Not specified | Applying Patient, Intervention, Comparison, Outcome, Time framework | Shows structured approach to hypothesis formulation |

The research yielded several critical findings: participants using the VIADS tool demonstrated the lowest mean number of cognitive events per hypothesis with the smallest standard deviation, suggesting this visualization tool may guide cognitive processes more efficiently than traditional statistical packages [6]. Furthermore, the study established that "Using analysis results" and "Seeking connections" represented the most frequent cognitive activities during hypothesis generation, together accounting for over 50% of all cognitive events [6].
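The frequency figures above come from counting coded cognitive events. As a rough illustration of that tallying step, the following sketch computes each event code's share of all coded events and the number of events per hypothesis. The `coded_segments` records, participant IDs, and function names are hypothetical and are not taken from the cited study [6].

```python
from collections import Counter

# Hypothetical coded segments: (participant_id, hypothesis_id, event_code).
# These rows are invented for illustration only.
coded_segments = [
    ("P01", "H1", "Analyze data"),
    ("P01", "H1", "Using analysis results"),
    ("P01", "H1", "Seeking connections"),
    ("P01", "H2", "Using analysis results"),
    ("P02", "H1", "Analogy"),
    ("P02", "H1", "Using analysis results"),
    ("P02", "H1", "Use PICOT"),
    ("P02", "H2", "Seeking connections"),
]


def event_percentages(segments):
    """Return each event code's share of all coded events, in percent."""
    counts = Counter(code for _, _, code in segments)
    total = sum(counts.values())
    return {code: 100.0 * n / total for code, n in counts.most_common()}


def events_per_hypothesis(segments):
    """Return the number of coded events for each (participant, hypothesis)."""
    return Counter((pid, hid) for pid, hid, _ in segments)


percentages = event_percentages(coded_segments)
per_hypothesis = events_per_hypothesis(coded_segments)

for code, pct in percentages.items():
    print(f"{code}: {pct:.1f}%")
```

The per-hypothesis counts are the kind of quantity behind the "events per hypothesis" comparison between tools; the mean and standard deviation can be taken over those counts within each group.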

Validation of Methodological Efficacy

Recent research has directly addressed concerns about potential reactivity effects, that is, whether thinking aloud alters the very cognitive processes researchers aim to study. A 2025 study comparing Think-Aloud to Silent Think protocols found "the stream of consciousness was minimally reactive to the Think Aloud protocol, with no significant differences in meta-awareness and topic shifting rates" [4]. Of the 21 thought qualities and 18 content topics analyzed, only three qualities and one topic differed significantly between conditions, which supports the method's validity for examining natural thought processes.

Similarly, a 2023 study on verbal Cognitive Reflection Tests demonstrated that thinking aloud did not significantly disrupt test performance compared to control conditions, indicating that the method provides a valid window into typical cognitive functioning [3]. This growing body of validation research strengthens the foundation for using think-aloud protocols in rigorous cognitive research settings.

Detailed Experimental Protocol: Clinical Research Hypothesis Generation

Research Design and Materials

The following protocol adapts methodology from the VIADS clinical research study [6] for broader application in cognitive process research:

Study Design: 2×2 between-subjects design comparing tool usage (specialized visualization tool vs. standard analytical software) and researcher experience (experienced vs. inexperienced), with block randomization of participants.
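One way to carry out block randomization for this design is sketched below: participants are grouped by experience level, shuffled within each block, and then alternated between the two tool conditions. This is a minimal sketch under assumptions; the condition labels, participant IDs, and the fixed seed are illustrative and do not reproduce the allocation procedure of the cited study [6].

```python
import random


def block_randomize(participants,
                    conditions=("visualization_tool", "standard_software"),
                    seed=0):
    """Assign participants to conditions within experience-level blocks.

    participants: list of (participant_id, experience_level) tuples.
    Returns a dict mapping participant_id -> condition.
    """
    rng = random.Random(seed)  # fixed seed so the allocation is reproducible

    # Group participant IDs by experience level (the blocking factor).
    blocks = {}
    for pid, level in participants:
        blocks.setdefault(level, []).append(pid)

    assignment = {}
    for members in blocks.values():
        rng.shuffle(members)
        # Alternate conditions through the shuffled block so group sizes
        # differ by at most one within each experience level.
        for i, pid in enumerate(members):
            assignment[pid] = conditions[i % len(conditions)]
    return assignment


# Hypothetical roster: 16 participants, half experienced.
participants = [
    (f"P{i:02d}", "experienced" if i % 2 else "inexperienced")
    for i in range(1, 17)
]
assignment = block_randomize(participants)
```

Blocking on experience keeps the two tool conditions balanced on the second factor of the 2×2 design, which simple randomization does not guarantee with small samples.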

Materials and Equipment:

- Datasets: Preprocessed datasets from relevant domains (e.g., medical records, experimental data)

- Analytical Tools: Dependent on condition (specialized research software or standard statistical packages)

- Recording Equipment: Screen capture software (e.g., BB Flashback) and audio recording equipment

- Transcription Service: Professional transcription for verbal protocols

- Coding Framework: Preliminary coding scheme based on cognitive theory

Participant Selection and Training

Participant Criteria:

- Recruit representative researchers from target domain

- Stratify by experience level using pre-established criteria (years of research experience, publications, specific methodological expertise)

- Target sample size: 16+ participants for quantitative analysis (based on [6])

Training Protocol:

- Tool-specific training: 60-minute standardized training for experimental condition tools

- Think-aloud practice: Demonstration and practice session with unrelated task

- Standardized instructions for think-aloud procedure

Experimental Procedure

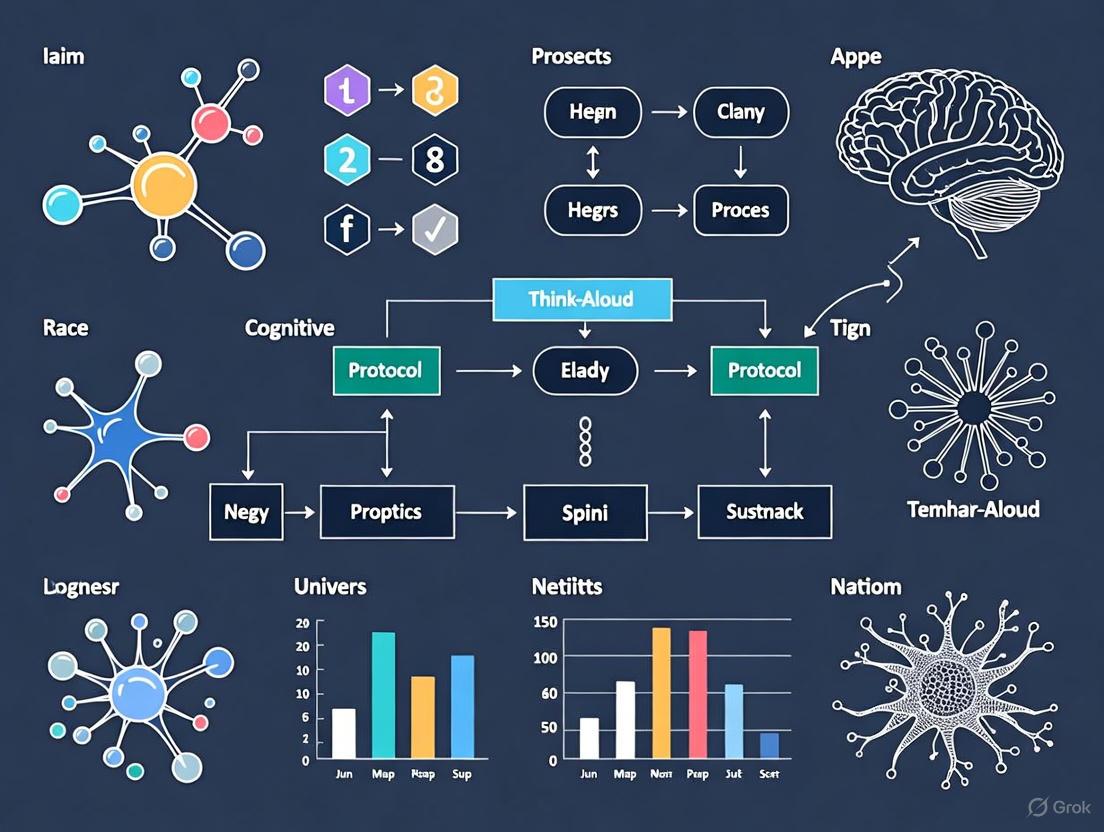

Diagram 1: Experimental workflow for think-aloud study

- Preparation Phase (15 minutes)
  - Obtain informed consent
  - Explain think-aloud procedure with demonstration
  - Answer participant questions
- Data Collection Phase (120 minutes)
  - Present research task and datasets
  - Participant works independently while verbalizing thoughts
  - Facilitator provides neutral prompts if silence exceeds 15 seconds
  - Record simultaneous screen activity and audio
- Retrospective Phase (30 minutes, for RTA designs)
  - Review video recording of session
  - Participant describes thought processes at key decision points
- Post-session Processing
  - Transcribe verbal protocols verbatim
  - Verify transcription accuracy against recordings
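The 15-second silence rule in the data collection phase can also be checked after the session from a timestamped transcript, for example to audit how often prompting was warranted. The sketch below assumes utterances are available as `(start_s, end_s)` pairs in seconds; that data layout and the function name are assumptions for illustration.

```python
SILENCE_THRESHOLD_S = 15.0  # silence limit stated in the procedure above


def find_silences(utterances, session_end, threshold=SILENCE_THRESHOLD_S):
    """Return (gap_start, gap_end) pairs where silence exceeded the threshold.

    utterances: list of (start_s, end_s) tuples sorted by start time.
    session_end: time in seconds at which the recording stops.
    """
    gaps = []
    last_end = 0.0
    for start, end in utterances:
        if start - last_end > threshold:
            gaps.append((last_end, start))
        # max() guards against overlapping utterance timestamps.
        last_end = max(last_end, end)
    if session_end - last_end > threshold:
        gaps.append((last_end, session_end))
    return gaps


# Hypothetical utterance timings for a 90-second excerpt.
utterances = [(0.0, 8.5), (10.0, 21.0), (40.0, 52.0), (55.0, 60.0)]
silences = find_silences(utterances, session_end=90.0)
print(silences)
```

Each returned gap marks a point where a neutral prompt would have been expected under the procedure.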

Data Analysis Framework

Qualitative Analysis:

- Transcription: Verbatim transcription of verbal protocols

- Coding: Apply cognitive event coding framework to transcripts

- Theme Development: Identify patterns and group into overarching themes

- Validation: Inter-coder reliability assessment and consensus meetings
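The source does not name a specific reliability statistic. Cohen's kappa is one common choice when two coders assign a single code to each segment, and the sketch below computes it from scratch. The code lists are hypothetical, with single letters standing in for event codes.

```python
from collections import Counter


def cohens_kappa(codes_a, codes_b):
    """Cohen's kappa for two coders who labeled the same segments."""
    if len(codes_a) != len(codes_b) or not codes_a:
        raise ValueError("both coders must label the same non-empty set of segments")
    n = len(codes_a)

    # Observed agreement: share of segments given the same code.
    observed = sum(a == b for a, b in zip(codes_a, codes_b)) / n

    # Chance agreement: product of each coder's marginal proportions per code.
    freq_a = Counter(codes_a)
    freq_b = Counter(codes_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)

    if expected == 1.0:  # both coders used one identical code throughout
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Hypothetical codes: U = Using analysis results, S = Seeking connections, A = Analogy.
coder_a = ["U", "U", "S", "S", "A", "U", "S", "A"]
coder_b = ["U", "U", "S", "A", "A", "U", "S", "S"]
kappa = cohens_kappa(coder_a, coder_b)
print(f"kappa = {kappa:.3f}")
```

Kappa corrects raw percent agreement for agreement expected by chance, so it is lower than the 75% raw agreement in this example.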

Quantitative Analysis:

- Frequency Analysis: Count cognitive events by type and participant

- Comparative Statistics: Independent t-tests between experimental conditions

- Quality Assessment: Expert rating of hypothesis quality (significance, validity, feasibility)
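For the comparative statistics step, the independent-samples t statistic can be computed as in the sketch below, which uses the pooled-variance (Student) form and only the standard library. The sample values are invented, and the equal-variance assumption is a simplification chosen for illustration.

```python
from math import sqrt
from statistics import mean, variance


def independent_t(sample_a, sample_b):
    """Student's t statistic (pooled variance) and degrees of freedom."""
    n_a, n_b = len(sample_a), len(sample_b)
    df = n_a + n_b - 2
    # Pooled variance weights each sample variance by its degrees of freedom.
    pooled = ((n_a - 1) * variance(sample_a) + (n_b - 1) * variance(sample_b)) / df
    standard_error = sqrt(pooled * (1 / n_a + 1 / n_b))
    t = (mean(sample_a) - mean(sample_b)) / standard_error
    return t, df


# Hypothetical cognitive events per hypothesis in two tool conditions.
tool_group = [4.0, 5.0, 6.0, 5.0]
control_group = [7.0, 8.0, 9.0, 8.0]
t_stat, df = independent_t(tool_group, control_group)
print(f"t = {t_stat:.3f}, df = {df}")
```

This returns only the statistic and degrees of freedom; a library routine such as `scipy.stats.ttest_ind` would additionally report a p-value.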

Essential Research Reagents and Materials

Table 3: Essential Research Materials for Think-Aloud Studies

| Material/Software | Function | Research Application |
|---|---|---|
| Screen Recording Software (e.g., BB Flashback) | Captures participant interactions with research tools | Essential for retrospective analysis and validating cognitive events [6] |
| High-Quality Audio Recording | Captures clear verbal protocols | Ensures accurate transcription of cognitive processes [6] |
| Professional Transcription Service | Creates verbatim text of verbal reports | Provides raw data for cognitive event coding [6] |
| Visual Analytic Tools (e.g., VIADS) | Enables interactive data exploration | Facilitates study of hypothesis generation in complex datasets [6] |
| Statistical Analysis Packages (e.g., R, SPSS, SAS) | Provides control condition for comparative studies | Enables comparison of cognitive processes across different research tools [6] |
| Coding Framework | Systematic classification of cognitive events | Enables quantitative analysis of qualitative data [6] |

Implementation Guidelines for Research Settings

Optimizing Protocol Administration

Successful implementation of think-aloud protocols requires careful attention to methodological details:

Participant Instruction Framework:

- Provide clear examples of desired verbalization using think-aloud demonstrations [2]

- Emphasize that participants should verbalize everything that comes to mind without self-editing [7]

- Explicitly request descriptions of actions, expectations, frustrations, and reasoning processes [7]

- Conduct practice sessions with unrelated tasks to build comfort with verbalization [2]

Facilitation Guidelines:

- Use neutral prompts when participants fall silent (e.g., "What are you thinking now?") [7]

- Avoid leading questions or interpretive statements that may bias verbal reports [1]

- Maintain awareness of potential cognitive load effects, particularly with complex research tasks [2]

Addressing Methodological Challenges

Recent survey research with UX practitioners reveals common implementation challenges and solutions [5]:

- Participant Verbalization Difficulties: Some participants struggle with continuous verbalization; practice sessions and gentle prompting can improve compliance

- Analysis Burden: Transcription and coding are resource-intensive; leveraging multiple coders with reliability checks enhances rigor

- Balancing Validity and Efficiency: Practitioners report tension between comprehensive analysis and practical constraints; establishing clear coding priorities helps manage this tension

Industry surveys indicate that 95% of trained UX practitioners use think-aloud protocols despite these challenges, reflecting the method's unique value for accessing cognitive processes [5].

The think-aloud protocol has evolved from its origins in classical psychology to become a validated methodological approach for studying cognitive processes across diverse research domains. Contemporary applications in clinical research, cognitive psychology, and scientific discovery demonstrate its versatility and robustness. The experimental protocol and implementation guidelines provided here offer researchers a framework for applying this powerful method to investigate the cognitive mechanisms underlying complex reasoning, problem-solving, and discovery processes in scientific and professional contexts.

As research continues to validate and refine think-aloud methodologies, their application promises to yield increasingly sophisticated insights into the cognitive processes that drive scientific innovation and professional decision-making across fields including pharmaceutical development, clinical research, and data science.

The think-aloud protocol is a qualitative data collection technique in which participant verbalizations provide direct, real-time access to ongoing cognitive processes during a task [2]. This method is foundational to research on scientific reasoning and problem-solving, allowing investigators to identify the underlying cognitive mechanisms of complex processes like data-driven hypothesis generation in clinical research [6]. By capturing the stream of consciousness, researchers can move beyond merely observing actions to understanding the motives, rationale, and perceptions that drive those actions, accessing data that would otherwise be hidden in the participant's mind [2]. This application note details the protocols and methodologies for effectively implementing this technique in a research setting.

Experimental Protocol: Data-Driven Hypothesis Generation

This protocol is adapted from a controlled human-subject study investigating how clinical researchers generate scientific hypotheses while analyzing large datasets [6].

Objective

To identify and characterize the sequence of cognitive events (e.g., "Seeking connections," "Using analysis results") that occur during data-driven scientific hypothesis generation by clinical researchers.

Materials and Reagents

Table 1: Research Reagent Solutions and Key Materials

| Item | Function in the Protocol |
|---|---|
| Visual Interactive Analysis Tool (e.g., VIADS) | Enables visualization, filtering, and summarization of large datasets coded with hierarchical terminologies (e.g., ICD codes) for the test group [6]. |
| Control Analytical Tools (e.g., SPSS, SAS, R, Excel) | Standard data analysis tools used by the control group for comparison [6]. |
| Preprocessed Datasets (e.g., from NAMCS) | Provides standardized, aggregated data (e.g., ICD-9-CM code frequencies) for all participants to analyze [6]. |
| Audio-Visual Recording System (e.g., BB Flashback) | Captures screen activity and participant-facilitator conversations for later transcription and coding [6]. |
| Professional Transcription Service | Converts audio recordings into accurate text transcripts for qualitative analysis [6]. |
| Coding Framework (A priori codebook) | A structured set of codes (e.g., "Analogy," "Use PICOT") for identifying cognitive events in transcripts [6]. |

Procedure

- Participant Recruitment and Randomization: Recruit clinical researchers with varying levels of experience. Use block randomization to assign participants to either the test group (using a specific interactive tool) or the control group (using their tool of choice) [6].
- Tool Training (Test Group Only): Conduct a one-hour training session for the test group on the specific functionalities of the interactive analysis tool (e.g., VIADS) [6].
- Think-Aloud Orientation: Begin the study session with a demonstration of the think-aloud protocol. Model the process using a task similar to what the participant will encounter.
  - Example Demonstration: "As you participate today, I would like you to do what we call 'think out loud.' What that means is that I want you to say out loud what you are thinking as you work. Let me show you what I mean... I would expect there to be an icon that says 'text'... but I don't see that here. I'm confused by that. I'm going to look in other places for it..." [2].
  - Participant Practice: Have the participant practice the technique with a simple, unrelated task to ensure comprehension [2].
- Data Analysis and Hypothesis Generation Session: Provide the preprocessed dataset to the participant. The task is to analyze the data and develop research hypotheses within a defined period (e.g., a 2-hour session). The participant must continuously verbalize their thought process following the think-aloud protocol. The facilitator may use neutral prompts (e.g., "What are you thinking right now?") if the participant falls silent [6] [2].
- Data Recording: Record the entire session's screen activity and audio using appropriate software [6].
- Data Transcription: Transcribe the audio recording verbatim using a professional service. The transcription should be checked for accuracy by a content expert [6].

Data Analysis and Cognitive Event Coding

Cognitive Event Coding Framework

The transcribed recordings are coded for specific cognitive events based on a pre-established conceptual framework [6]. The coder should independently review the transcripts, marking instances of these events.

Table 2: Cognitive Events and Frequencies in Hypothesis Generation

| Cognitive Event Code | Description | Representative Frequency in Hypothesis Generation [6] |
|---|---|---|
| Using analysis results | Interpreting or referring to the output of data analyses. | 30% |
| Seeking connections | Actively looking for relationships or patterns between variables or concepts. | 23% |
| Analogy | Comparing current analysis results or patterns to prior studies or known concepts. | Defined in codebook [6] |
| Use PICOT | Formulating a hypothesis using the Patient, Intervention, Comparison, Outcome, Time framework. | Defined in codebook [6] |
| Analyze data | The act of performing a specific analytical operation on the dataset. | Defined in codebook [6] |

Data Analysis Strategy

Analysis can be performed at multiple levels: per hypothesis, per participant, or per group (tool used). The frequency of cognitive events can be aggregated and compared between groups using statistical tests like independent t-tests [6]. The sequence of cognitive events can be mapped for each hypothesis to model the hypothesis generation process.
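One simple way to map event sequences is to count transitions between consecutive cognitive events within each hypothesis and then pool the counts. The sketch below does this for two invented sequences; the event orderings and hypothesis IDs are hypothetical and are not results from the cited study [6].

```python
from collections import Counter


def transition_counts(sequence):
    """Count transitions between consecutive cognitive events in one sequence."""
    return Counter(zip(sequence, sequence[1:]))


# Hypothetical ordered event codes for two hypotheses.
sequences = {
    "H1": ["Analyze data", "Using analysis results", "Seeking connections",
           "Using analysis results", "Use PICOT"],
    "H2": ["Analyze data", "Using analysis results", "Analogy", "Use PICOT"],
}

# Pool transitions across hypotheses; transitions never span two hypotheses
# because each sequence is counted separately before summing.
pooled = Counter()
for events in sequences.values():
    pooled += transition_counts(events)

for (src, dst), n in pooled.most_common():
    print(f"{src} -> {dst}: {n}")
```

The pooled counts can be read as edge weights of a directed graph over event codes, which gives a compact picture of the typical path toward a hypothesis.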

Critical Considerations for Protocol Implementation

- Facilitator Guidance: The facilitator must gently redirect participants who stop verbalizing or who begin offering opinions instead of their immediate thoughts. Prompts should be neutral, such as, "Please keep telling me what you are thinking" [2].

- Protocol Limitations: The act of thinking aloud consumes cognitive resources, which may slightly reduce the capacity a participant can devote to the primary task. Participants also cannot articulate every aspect of their subconscious thought processes [2].

- Quality Assurance: To ensure coding reliability, two independent coders should code the transcripts. The coders must then compare results, discuss discrepancies, and reach a consensus, refining the coding principles as needed [6].
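To prepare for the consensus discussion described under Quality Assurance, the segments on which the two coders disagree can be listed automatically. The following sketch assumes each coder produced one code per transcript segment in the same order; the transcript snippets and the function name are invented for illustration.

```python
def coding_disagreements(segments, codes_a, codes_b):
    """Return (index, segment_text, code_a, code_b) for each segment coded differently."""
    if not (len(segments) == len(codes_a) == len(codes_b)):
        raise ValueError("segments and both code lists must have equal length")
    return [
        (i, text, a, b)
        for i, (text, a, b) in enumerate(zip(segments, codes_a, codes_b))
        if a != b
    ]


# Hypothetical transcript segments and the codes assigned by two coders.
segments = [
    "Let me run the frequency table for these codes.",
    "The counts went up, so maybe visits are increasing.",
    "This looks like what we saw in an earlier study.",
    "Is there a link between age group and this diagnosis?",
]
coder_a = ["Analyze data", "Using analysis results", "Analogy", "Seeking connections"]
coder_b = ["Analyze data", "Using analysis results", "Seeking connections", "Seeking connections"]

to_discuss = coding_disagreements(segments, coder_a, coder_b)
agreement = 1 - len(to_discuss) / len(segments)

for index, text, a, b in to_discuss:
    print(f"segment {index}: coder A = {a!r}, coder B = {b!r} | {text}")
```

Keeping the segment index alongside both codes makes it easy to record the consensus decision and to update the coding principles when the same kind of disagreement recurs.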

Concurrent vs. Retrospective Think-Aloud Protocols

Think-aloud protocols are a foundational methodology for studying human cognitive processes, enabling researchers to gain direct insight into the problem-solving and decision-making strategies of participants. These protocols are particularly valuable in fields requiring an understanding of complex cognitive tasks, such as drug development and clinical decision-making. The method operates on the premise that having participants verbalize their thoughts provides a window into their internal reasoning, offering data that is often inaccessible through mere observation of external behaviors [2]. There are two primary types of think-aloud protocols: the Concurrent Think-Aloud (CTA), where participants verbalize their thoughts in real-time while performing a task, and the Retrospective Think-Aloud (RTA), where participants describe their thought processes after task completion, often aided by a recording of their actions [9] [10]. This article provides a detailed comparison of these two formats, structured for researchers and scientists engaged in cognitive process research.

Core Conceptual Differences and Theoretical Underpinnings

The choice between CTA and RTA is not merely logistical; it is rooted in their distinct theoretical impacts on data quality and participant cognition.

- Concurrent Think-Aloud (CTA) requires participants to perform a task and verbalize their thoughts simultaneously. This method aims to capture the stream of consciousness with minimal retrospection or interpretation. However, a significant theoretical consideration is the dual cognitive load imposed on the participant. The processes of thinking about the task and verbalizing those thoughts compete for cognitive resources, which can potentially alter the very thought process being studied [10]. This can sometimes slow down task completion but may also foster a more measured, deliberate approach [11].

- Retrospective Think-Aloud (RTA) involves participants performing the task in silence first. Immediately after, they are shown a video recording (or other replay) of their session and are asked to retrospectively report their thoughts during the activity. A key advantage is that it avoids interfering with the primary task performance. However, its main theoretical vulnerability is the risk of post-rationalization and fabrication of thoughts, as participants may unconsciously fill gaps in their memory or provide socially desirable explanations for their actions. There is also the potential for forgetting fleeting but critical thoughts [10].

The table below summarizes the core characteristics and theoretical trade-offs of each method.

Table 1: Fundamental Characteristics of CTA and RTA

| Feature | Concurrent Think-Aloud (CTA) | Retrospective Think-Aloud (RTA) |
|---|---|---|
| Definition | Real-time verbalization during task performance. | Post-hoc verbalization after task completion, aided by a recording. |
| Primary Data | Raw, in-the-moment thoughts and immediate reactions. | Recalled thoughts, often with interpretation and justification. |
| Key Theoretical Advantage | Access to unfiltered, sequential thought processes. | Avoids interference with natural task performance and cognitive load. |
| Key Theoretical Disadvantage | Potential for dual cognitive load, altering the natural process. | Risk of memory decay, post-rationalization, and fabrication. |

Quantitative Comparison and Empirical Findings

Empirical studies have quantified the differential impacts of CTA and RTA, particularly when these protocols are used in conjunction with other research technologies like eye-tracking.

A 2020 study provides relevant empirical evidence [10]. Managers used a simulation game for decision-making, with one group following CTA and another following RTA, while both groups were monitored with eye-tracking. CTA significantly distorted the eye-tracking data, whereas the data gathered with RTA provided independent evidence of participant behavior that was not confounded by the verbalization method. This suggests that, for research on complex decision-making processes, RTA is the more suitable companion to eye-tracking because it interferes less with natural perceptual behavior [10].

Furthermore, a 2018 international survey of 197 User Experience (UX) practitioners revealed industry trends in the application of these methods. The survey found that think-aloud protocols are among the most widely used methods for detecting usability problems, with 86% of respondents using them. Notably, concurrent protocols were more popular than retrospective ones. The same survey highlighted that practitioners almost always probe participants for more information and explicitly request them to verbalize specific content, adapting the classical protocol for practical efficiency [5].

The following table synthesizes key empirical findings and their implications for research design.

Table 2: Empirical Findings and Methodological Implications

| Aspect | Concurrent Think-Aloud (CTA) | Retrospective Think-Aloud (RTA) |
|---|---|---|
| Impact on Primary Data | Can slow task completion [11]; may distort complementary metrics like eye-tracking patterns [10]. | Less interference with primary task performance and correlated physiological data [10]. |
| Data Completeness | Ideas may be lost if information is difficult to verbalize or processes are automatic [10]. | Participants may omit or forget details, especially without a replay cue [10]. |
| Industry Adoption | More commonly used in practice than RTA [5]. | Less common than CTA, but valued in specific contexts [5]. |
| Best Suited For | Capturing the sequential flow of conscious thought during less automated tasks. | Studying tasks where uninterrupted performance is critical, or when combined with eye-tracking. |

Detailed Experimental Protocols for Implementation

For researchers aiming to implement these methods, adherence to standardized protocols is crucial for data validity and reliability.

Protocol for Concurrent Think-Aloud (CTA)

- Preparation and Briefing: The researcher begins by explaining the purpose of the "think-aloud" method to the participant. It is critical to emphasize that the goal is to hear their thoughts, not to receive their opinions or design suggestions [2].

- Demonstration: The researcher conducts a live demonstration using a simple, analogous task. For example: "I am going to think out loud as I try to send a text message on this mobile phone. OK, I'm looking at the home screen... I would expect an icon that says 'messages'... I don't see it, so I'm confused. I'll look in other places..." [2]. This models the desired type of commentary.

- Participant Practice: The participant is given a short, unrelated practice task to perform while thinking aloud. This allows them to become comfortable with the process, and the researcher can provide feedback on the volume and relevance of their verbalizations [2].

- Task Execution: The participant begins the actual experimental task while continuously verbalizing their thoughts. The researcher should use neutral prompts if the participant falls silent (e.g., "Please keep talking.") but avoid leading questions [10] [5].

- Data Recording: The entire session—including audio, screen capture, and any other biometric data like eye-tracking—is recorded for subsequent protocol analysis [11].

Protocol for Retrospective Think-Aloud (RTA)

- Preparation and Silent Task Performance: The participant is informed that they will perform a task first and then describe their thoughts afterward. They perform the core task in silence, without any requirement to verbalize [10].

- Recording the Session: The participant's performance is fully recorded (e.g., video and screen capture). In studies involving eye-tracking, a gaze cursor is often superimposed on the recording [10].

- Stimulated Recall Interview: Immediately after the task, the researcher replays the recording for the participant. The participant is instructed to describe what they were thinking at specific points during the task. The researcher can pause the playback at key moments (e.g., before a decision point, during a hesitation) to prompt for recall [10].

- Data Collection: The participant's retrospective commentary is recorded and transcribed. This data is then synchronized with the recording of their original actions for analysis.
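The synchronization step in the RTA protocol can be approximated by attaching each retrospective comment to the most recent logged action at or before the replay timestamp at which the comment was made. The sketch below assumes both the action log and the comments carry timestamps in seconds on the same replay clock; the log entries and comment texts are hypothetical.

```python
from bisect import bisect_right


def align_comments(actions, comments):
    """Pair each retrospective comment with the latest action at or before it.

    actions: list of (time_s, action_label) sorted by time.
    comments: list of (replay_time_s, comment_text).
    Returns a list of (replay_time_s, action_label_or_None, comment_text).
    """
    action_times = [t for t, _ in actions]
    aligned = []
    for replay_t, text in comments:
        # Index of the last action whose timestamp is <= replay_t.
        idx = bisect_right(action_times, replay_t) - 1
        label = actions[idx][1] if idx >= 0 else None
        aligned.append((replay_t, label, text))
    return aligned


# Hypothetical action log from the silent task and comments from the replay.
actions = [(0.0, "open dataset"), (35.0, "apply filter"), (80.0, "view chart")]
comments = [
    (12.0, "I was looking for a familiar variable."),
    (35.0, "I wanted to narrow things down here."),
    (95.0, "The chart made the pattern obvious."),
]
aligned = align_comments(actions, comments)

for replay_t, label, text in aligned:
    print(f"{replay_t:>5.1f}s  [{label}]  {text}")
```

Nearest-preceding-action matching is only one possible alignment rule; pausing the replay at predefined decision points, as described in the stimulated recall step, would instead tie each comment to the pause point directly.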

[Diagram: key decision points for selecting and applying the appropriate think-aloud protocol]

The Researcher's Toolkit: Essential Materials and Reagents

Successful application of think-aloud protocols requires both methodological rigor and the right technological tools. The table below outlines the essential "research reagents" for this type of cognitive research.

Table 3: Essential Toolkit for Think-Aloud Protocol Research

| Tool/Resource | Function/Description | Example Use-Case |
|---|---|---|
| Audio/Video Recording System | Captures participant verbalizations and physical actions. | Core equipment for creating a permanent record of all CTA and RTA sessions [11]. |
| Screen Capture Software | Records all on-screen interactions. | Essential for software usability studies and for creating the stimulus video for RTA sessions [10]. |
| Eye-Tracker | Records gaze position and pupil movement. | Used to understand visual attention; best paired with RTA to avoid data distortion [10]. |
| Protocol Analysis Software | Facilitates coding and analysis of verbal data. | Software like Observer XT is used to transcribe commentary and code it into themes for quantitative analysis [11]. |
| Structured Observation Checklist | A pre-determined list of behaviors and codes. | Serves as the researcher's shorthand for marking observed actions and reactions during live sessions [11]. |
| Stimulated Recall Recording | A video replay of the participant's own task performance. | The critical stimulus used to prompt and cue memory during a Retrospective Think-Aloud session [10]. |

Both concurrent and retrospective think-aloud protocols offer powerful, yet distinct, pathways for investigating the cognitive processes of researchers, clinicians, and other professionals. The choice between them is not a matter of which is universally superior, but which is most appropriate for the specific research context. Concurrent Think-Aloud provides direct access to the real-time flow of thought but risks altering the process through cognitive load. Retrospective Think-Aloud preserves the integrity of the primary task performance but relies on the fallible processes of memory and recall. By understanding their theoretical trade-offs, empirical impacts, and implementing the detailed protocols outlined, scientists can make an informed methodological choice that optimizes the validity and depth of their research into complex cognitive systems.

Verbalization, in the form of think-aloud protocols, serves as a critical methodology for accessing and understanding unobservable cognitive processes. The fundamental premise is that having individuals verbalize their thoughts while engaging in a task provides direct insight into the internal cognitive mechanisms governing decision-making, problem-solving, and reasoning [9]. This approach is particularly valuable in research fields such as judgment and decision-making (JDM), where developing and testing theories about hidden cognitive processes is a primary challenge [12]. As an increasing amount of research migrates to online survey formats, the collection of typed open-text explanations—a modern adaptation of the spoken think-aloud protocol—has become an exceptionally easy and low-cost method for gathering qualitative data on cognitive processes [12]. This document outlines the scientific basis, application notes, and detailed experimental protocols for utilizing verbalization in cognitive process research.

Theoretical and Empirical Basis

Cognitive Foundation of Verbalization

The think-aloud protocol operates on the principle of concurrent verbalization, where participants narrate their thoughts in real-time during a task. This verbalization acts as a stream of consciousness that externalizes internal cognitive events, including goals, plans, confusions, assumptions, and decisions [9]. In scientific and clinical reasoning, this method helps researchers understand complex processes like data-driven hypothesis generation, which involves searching for a problem in knowledge-rich domains and relies heavily on divergent thinking [6]. Unlike pure introspection, which may involve retroactive explanation and confabulation, concurrent verbalization aims to capture thoughts as they occur, providing a more direct window into ongoing cognitive processes.

Evidence from Cognitive Research

Empirical studies across diverse domains validate that verbalizations reveal distinct cognitive patterns. A study on hypothesis generation in clinical research using a think-aloud protocol identified and quantified specific cognitive events, demonstrating how researchers engage with data and form scientific hypotheses [6]. The table below summarizes key quantitative findings from this study, illustrating the distribution of cognitive events during hypothesis generation.

Table 1: Cognitive Events in Scientific Hypothesis Generation (Adapted from [6])

| Cognitive Event | Mean Percentage of Total Events | Primary Function in Cognition |

|---|---|---|

| Using analysis results | 30% | Applying data observations to form hypothesis premises |

| Seeking connections | 23% | Identifying relationships between variables and concepts |

| Analogy | 11% | Leveraging prior knowledge or similar cases |

| Using PICOT | 9% | Structuring clinical research questions (Patient, Intervention, Comparison, Outcome, Time) |

| Data observation | 8% | Noticing trends, patterns, or anomalies in data |

| Background knowledge | 7% | Incorporating existing expertise and domain knowledge |

| Hypothesizing | 6% | Formulating an educated guess about variable relationships |

| Other events | 6% | Miscellaneous cognitive activities |
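Percentages like those in the table come from counting how often each code was assigned across all coded transcript segments. The following is a minimal sketch of that tabulation; the `coded_segments` list is hypothetical illustration data, not data from the cited study.

```python
from collections import Counter

# Hypothetical coded segments: one cognitive-event label per transcript segment.
coded_segments = [
    "Using analysis results", "Seeking connections", "Using analysis results",
    "Analogy", "Data observation", "Seeking connections",
    "Using analysis results", "Hypothesizing", "Using PICOT", "Background knowledge",
]

def event_distribution(labels):
    """Return {event: percentage of all coded events}, most frequent first."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {event: round(100 * n / total, 1) for event, n in counts.most_common()}

distribution = event_distribution(coded_segments)
for event, pct in distribution.items():
    print(f"{event}: {pct}%")
```

Reporting percentages rather than raw counts keeps participants or groups comparable even when their sessions produced different numbers of segments.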

Furthermore, research on data sensemaking behaviors, which employed a combination of in-depth interviews and think-aloud tasks, identified a framework of data-centric sensemaking activities, including inspecting data, engaging with content, and placing data within broader contexts [13]. These clusters of activities provide a structured understanding of the cognitive processes involved in complex data interpretation.

Application Notes: Protocols for Cognitive Research

Experimental Workflow for Think-Aloud Protocols

The following diagram, generated using Graphviz, outlines the standard workflow for designing and executing a study incorporating the think-aloud protocol. This workflow integrates both concurrent and retrospective verbalization methods.
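As a rough illustration of how such a workflow can be written down for Graphviz, the sketch below builds DOT text from an ordered list of stages. The stage names are assumptions taken from the procedure steps described in this guide, not from the original figure, and the helper `to_dot` is hypothetical.

```python
# Stage names are assumptions drawn from the procedure in this guide.
STAGES = [
    "Participant preparation",
    "Tool training",
    "Task introduction",
    "Study session (concurrent verbalization)",
    "Screen and audio recording",
    "Transcription",
    "Retrospective session",
    "Coding and analysis",
]
OPTIONAL_STAGES = {"Tool training", "Retrospective session"}

def to_dot(stages, optional=()):
    """Render a linear workflow as Graphviz DOT text; optional stages are dashed."""
    lines = ["digraph think_aloud_workflow {", "  rankdir=TB;", "  node [shape=box];"]
    for i, stage in enumerate(stages):
        style = ", style=dashed" if stage in optional else ""
        lines.append(f'  s{i} [label="{stage}"{style}];')
    for i in range(len(stages) - 1):
        lines.append(f"  s{i} -> s{i + 1};")
    lines.append("}")
    return "\n".join(lines)

dot = to_dot(STAGES, OPTIONAL_STAGES)
print(dot)
```

The printed text can be saved to a `.dot` file and rendered with the Graphviz `dot` command-line tool.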

Cognitive Framework of Hypothesis Generation

Based on research into data-driven scientific hypothesis generation, the following diagram maps the cognitive events and their relationships during this complex process. This framework is particularly relevant for clinical and scientific research settings.

Detailed Experimental Protocols

Concurrent Think-Aloud Protocol for Hypothesis Generation

This protocol is adapted from a study on clinical researchers generating data-driven scientific hypotheses [6].

4.1.1 Objective

To identify and characterize the cognitive events and processes involved in data-driven scientific hypothesis generation by clinical researchers.

4.1.2 Materials and Reagents

Table 2: Essential Research Materials for Think-Aloud Studies

| Item | Specification/Example | Primary Function in Research |

|---|---|---|

| Dataset | Preprocessed National Ambulatory Medical Care Survey (NAMCS) data with ICD-9-CM codes [6] | Provides a realistic and relevant context for hypothesis generation by domain experts. |

| Analysis Tools | VIADS (Visual Interactive Analysis Tool), SPSS, SAS, R, Excel [6] | Enables participants to interact with, filter, and visualize data during the cognitive task. |

| Recording Software | BB Flashback for Windows or similar screen capture software [6] | Synchronously records screen activity and audio for subsequent transcription and analysis. |

| Transcription Service | Professional transcription service verified by a content expert [6] | Produces accurate verbatim transcripts of verbal reports for reliable coding. |

| Coding Framework | Preliminary conceptual framework of hypothesis generation process [6] | Provides the initial codebook and structure for identifying cognitive events in verbal data. |

4.1.3 Procedure

- Participant Preparation: Recruit clinical researchers with varying levels of experience. Obtain informed consent for audio and screen recording.

- Tool Training (If applicable): For groups using specialized tools like VIADS, provide a standardized one-hour training session prior to the main study session. Control groups use tools of their choice (e.g., SPSS, R).

- Task Introduction: Provide participants with the dataset and a clear instruction: "Analyze these datasets and develop research hypotheses. Please verbalize all your thoughts, decisions, and reasoning processes as you work, even if they seem incomplete or trivial."

- Study Session: Allow a defined period (e.g., 2 hours) for the task. The study facilitator may be present to remind participants to keep verbalizing if they fall silent.

- Data Recording: Use screen recording software to capture all participant interactions with the data and analysis tools, synchronized with audio recording of their verbalizations.

- Data Transcription: Employ a professional service to transcribe the audio recordings. A content expert should review transcripts for accuracy, particularly concerning domain-specific terminology.

- Retrospective Protocol (Optional): Use the screen recording as a stimulus for a retrospective think-aloud session, asking participants to elaborate on their thought processes at specific moments.

Open-Text Box Protocol for Online Surveys

This protocol provides a text-based alternative to spoken think-aloud for online survey environments [12].

4.2.1 Objective

To gather qualitative data on the cognitive processes behind specific quantitative responses in online survey studies.

4.2.2 Procedure

- Survey Design: Following a key quantitative question in an online survey, immediately present an open-text box with the prompt: "Please explain your response."

- Data Collection: Collect the typed explanations provided by participants. These are typically short (one or two sentences) and directly related to the preceding question.

- Data Analysis: Analyze responses using a pragmatic and reflexive content analysis approach. This involves:

  - Developing a coding scheme to categorize responses based on inferred cognitive processes.

  - Using a second, independent coder to validate the categorization and calculate inter-coder agreement to ensure reliability.

  - Employing reflexivity to acknowledge and mitigate researcher bias throughout the analysis.

Analysis Methods for Verbal Data

Content Analysis of Verbal Protocols

The analysis of transcribed verbal data typically involves a structured content analysis approach [12]. This process requires the development of a coding scheme—a set of categories or "codes" representing different cognitive events or themes. Two coders independently assign these codes to segments of the transcribed text. The reliability of the analysis is quantified by calculating inter-coder agreement. Discrepancies are resolved through discussion to reach a consensus. This method is highly flexible and, when combined with reflexivity—a constant awareness of the researcher's potential biases—provides a scientifically rigorous framework for interpreting qualitative verbal data [12].
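Inter-coder agreement is often summarized with Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The text above does not name a specific statistic, so the choice of kappa here is an assumption. The sketch below is a minimal implementation for two coders; the label lists are hypothetical.

```python
from collections import Counter

def cohens_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders who labelled the same segments in the same order."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of segments.")
    n = len(coder_a)
    # Proportion of segments on which the coders agree.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n
    # Agreement expected by chance, from each coder's label frequencies.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a.keys() | freq_b.keys()) / (n * n)
    if expected == 1.0:
        return 1.0  # both coders used one identical label throughout
    return (observed - expected) / (1 - expected)

# Hypothetical codes assigned by two independent coders to six segments.
coder_a = ["connect", "analysis", "analysis", "analogy", "connect", "analysis"]
coder_b = ["connect", "analysis", "connect", "analogy", "connect", "analysis"]

kappa = cohens_kappa(coder_a, coder_b)
# Segment indices to take into the consensus discussion.
disagreements = [i for i, (a, b) in enumerate(zip(coder_a, coder_b)) if a != b]
print(f"kappa = {kappa:.3f}, segments to discuss: {disagreements}")
```

Listing the disagreeing segments alongside the statistic supports the consensus step, since those are the segments the coders need to discuss.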

After coding, the frequency and distribution of cognitive events can be analyzed quantitatively. For instance, in the hypothesis generation study, the unit of analysis can be each individual hypothesis, and the number and type of cognitive events per hypothesis can be compared between different groups (e.g., users of different analytical tools, or experienced vs. inexperienced researchers) using statistical tests like independent t-tests [6]. This quantitative summary of qualitative data allows for robust comparisons and helps validate the utility of the think-aloud method in uncovering differences in cognitive processes.
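A between-group comparison of this kind can be sketched with the standard library alone. The function below computes the pooled-variance (Student's) independent-samples t statistic and its degrees of freedom; the two samples are hypothetical counts of cognitive events per hypothesis. A p-value requires the t distribution, for which a statistics package such as SciPy (`scipy.stats.ttest_ind`) would normally be used.

```python
from math import sqrt
from statistics import mean, variance

def independent_t(sample_a, sample_b):
    """Pooled-variance independent-samples t statistic and degrees of freedom."""
    n_a, n_b = len(sample_a), len(sample_b)
    if n_a < 2 or n_b < 2:
        raise ValueError("Each group needs at least two observations.")
    df = n_a + n_b - 2
    pooled_var = ((n_a - 1) * variance(sample_a) + (n_b - 1) * variance(sample_b)) / df
    if pooled_var == 0:
        raise ValueError("Both groups have zero variance; t is undefined.")
    std_err = sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean(sample_a) - mean(sample_b)) / std_err, df

# Hypothetical cognitive events per hypothesis in two groups.
tool_group = [4, 5, 6, 5, 4, 6]
control_group = [6, 7, 8, 7, 6, 8]

t_stat, df = independent_t(tool_group, control_group)
print(f"t({df}) = {t_stat:.3f}")
```

The pooled form assumes roughly equal variances in the two groups; with small, unbalanced samples, Welch's variant is a common alternative.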

The think-aloud protocol is an established technique for studying human cognitive processes by having participants verbalize their thoughts in real-time during an activity [14]. This method serves as a "window on the soul," allowing researchers to discover what users truly think about a design or process, revealing misconceptions, and uncovering the underlying reasons for decision-making pathways [15]. In scientific research contexts, particularly those involving complex problem-solving and hypothesis generation, this protocol provides invaluable access to the cognitive mechanisms that drive scientific discovery.

The application of think-aloud protocols extends beyond traditional usability testing into sophisticated research domains, including clinical research and data-driven hypothesis generation [6]. By capturing the verbalized thought processes of researchers and scientists, this method enables the identification of specific cognitive events—such as "Seeking connections" or "Using analysis results"—that constitute the foundational elements of scientific reasoning and discovery [6]. The method's robustness, flexibility, and relatively low implementation cost make it particularly suitable for studying the complex cognitive processes employed by researchers, scientists, and drug development professionals in their work [15].

Theoretical Framework: Cognitive Processes in Scientific Research

Scientific hypothesis generation represents an advanced cognitive process that relies heavily on divergent thinking, particularly in knowledge-rich domains such as clinical medicine and drug development [6]. Unlike diagnostic reasoning, which typically begins with a known problem, data-driven scientific hypothesis generation involves searching for problems or focus areas—a process termed "open discovery" [6]. The think-aloud protocol effectively captures this process by documenting how researchers identify unusual phenomena, observe trends in data, and utilize analogies from prior knowledge.

A conceptual framework of the hypothesis generation process reveals several critical cognitive events that can be systematically coded and analyzed [6]. These include "Analyze data," "Seek connections," "Use PICOT" (Patient, Intervention, Comparison, Outcome, Type of study), and "Analogy," where researchers compare prior studies with current analysis results [6]. Understanding these cognitive events provides crucial insights into the scientific reasoning process, enabling the development of better tools and methodologies to support research activities across scientific domains.

Application Note 1: Usability Testing of Scientific Software and Tools

Experimental Protocol for Usability Testing

The think-aloud method can be effectively implemented for usability testing of scientific software, including visual analytic tools, data analysis platforms, and laboratory information management systems. The following protocol provides a standardized methodology for evaluating scientific software usability:

- Participant Recruitment: Recruit representative users, including researchers, scientists, and technicians with varying levels of experience and expertise relevant to the software being tested [15].

- Task Design: Develop representative tasks that reflect common research activities, such as data import, analysis, visualization, and export functions.

- Briefing Session: Explain the think-aloud process to participants, potentially showing a short video demo of a think-aloud session to vividly explain what's expected [15].

- Test Session: Ask participants to verbalize their thoughts continuously while performing the designated tasks. Use gentle, neutral prompts like "What are you thinking now?" to maintain verbalization flow [15] [16].

- Data Collection: Record screen activity and audio for subsequent analysis. Take notes on observed behaviors, difficulties, and verbalized thought processes.

- Post-session Interview: Conduct a brief structured interview to clarify any observed behaviors or comments from the session.

Data Collection and Analysis Methods

Data collected from think-aloud usability tests should include both qualitative and quantitative measures for comprehensive analysis:

Table 1: Usability Metrics for Scientific Software Evaluation

| Metric Category | Specific Measures | Data Collection Method |

|---|---|---|

| Task Performance | Success rate, time on task, error rate | Direct observation, screen recording |

| Cognitive Process | Misconceptions, confusion points, aha moments | Verbal protocol transcription |

| User Satisfaction | Frustration expressions, positive comments | Verbal protocol, post-session interview |

| Software Usability | Workflow interruptions, interface confusion | Facilitator observations, verbal protocol |

The analysis should focus on identifying patterns of misunderstanding, workflow obstacles, and cognitive barriers that impede efficient use of the scientific software. The qualitative data should be coded for specific usability issues, while quantitative metrics provide supporting evidence for prioritization of improvements [15].
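The task-performance measures can be derived from simple per-attempt records. The sketch below assumes a hypothetical `TaskAttempt` record and reports the error measure as mean errors per attempt, which is only one possible definition of "error rate".

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class TaskAttempt:
    participant: str
    task: str
    completed: bool
    seconds: float
    errors: int

def summarize(attempts):
    """Per-task success rate, mean time on task, and mean errors per attempt."""
    by_task = {}
    for attempt in attempts:
        by_task.setdefault(attempt.task, []).append(attempt)
    return {
        task: {
            "success_rate": sum(a.completed for a in group) / len(group),
            "mean_seconds": mean([a.seconds for a in group]),
            "mean_errors": mean([a.errors for a in group]),
        }
        for task, group in by_task.items()
    }

# Hypothetical observations from two participants on two tasks.
attempts = [
    TaskAttempt("P1", "data import", True, 120, 1),
    TaskAttempt("P2", "data import", False, 300, 3),
    TaskAttempt("P1", "export", True, 60, 0),
    TaskAttempt("P2", "export", True, 90, 1),
]

summary = summarize(attempts)
for task, metrics in summary.items():
    print(task, metrics)
```

Tasks with a low success rate or a high error count are natural starting points when reviewing the coded verbal protocol for the underlying misconceptions.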

Application Note 2: Data-Driven Hypothesis Generation in Clinical Research

Experimental Protocol for Hypothesis Generation Studies

Think-aloud protocols have been successfully applied to study the cognitive processes underlying data-driven hypothesis generation in clinical research [6]. The following detailed methodology can be implemented to capture these complex cognitive events:

Participant Selection and Group Assignment:

- Recruit clinical researchers with varying experience levels (experienced vs. inexperienced based on pre-established criteria including years of study design experience, data analysis experience, and publication history) [6].

- Use block randomization to assign participants to experimental and control groups.
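Block randomization keeps group sizes balanced by shuffling assignments within fixed-size blocks. The following is a minimal sketch. The block size of 4, the participant IDs, and the choice to randomize separately within each experience level are assumptions for illustration.

```python
import random

def block_randomize(participant_ids, rng, arms=("experimental", "control"), block_size=4):
    """Assign participants to arms in shuffled blocks so arm sizes stay balanced."""
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms.")
    assignments = {}
    for start in range(0, len(participant_ids), block_size):
        # Each block contains every arm equally often, in random order.
        block = list(arms) * (block_size // len(arms))
        rng.shuffle(block)
        for pid, arm in zip(participant_ids[start:start + block_size], block):
            assignments[pid] = arm
    return assignments

# Hypothetical participant IDs, grouped by experience level.
strata = {
    "experienced": ["E01", "E02", "E03", "E04"],
    "inexperienced": ["N01", "N02", "N03", "N04", "N05", "N06", "N07", "N08"],
}

rng = random.Random(2024)  # fixed seed so the allocation can be reproduced and audited
allocation = {}
for level, ids in strata.items():
    allocation.update(block_randomize(ids, rng))

for pid, arm in allocation.items():
    print(pid, arm)
```

Every complete block contributes equally to each arm, so the groups can differ in size only when the final block is incomplete.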

Tool Training:

- For the experimental group, provide standardized training on the specific analytical tool being studied (e.g., one-hour training session for VIADS - a visual interactive analytic tool) [6].

- Allow control group participants to use any analytical tools they prefer (e.g., SPSS, SAS, R, Excel).

Data Set Preparation:

- Utilize appropriate scientific datasets (e.g., extracted from the National Ambulatory Medical Care Survey with preprocessed diagnostic and procedural codes and their frequencies) [6].

- Provide complete documentation for all data elements.

Study Session:

- Conduct a 2-hour study session where participants analyze datasets and develop hypotheses [6].

- Instruct participants to continuously verbalize their thought processes following the think-aloud protocol.

- Record screen activity and audio using appropriate software (e.g., BB Flashback).

Data Processing:

- Transcribe recordings professionally and verify accuracy.

- Code transcripts for cognitive events based on an established conceptual framework.

Cognitive Event Coding and Analysis Framework

The transcription data should be systematically coded for cognitive events using a standardized framework:

Table 2: Cognitive Events in Hypothesis Generation

| Cognitive Event | Description | Frequency in Clinical Research |

|---|---|---|

| Seeking connections | Looking for relationships between variables | 23% of total cognitive events [6] |

| Using analysis results | Applying statistical findings to hypothesis formation | 30% of total cognitive events [6] |

| Analogy | Comparing with prior research or knowledge | To be coded based on transcriptions [6] |

| Use PICOT | Formalizing hypotheses using structured framework | To be coded based on transcriptions [6] |

| Data exploration | Initial examination of dataset characteristics | To be coded based on transcriptions [6] |

The coded data should be analyzed at multiple levels: per hypothesis generation instance, per participant, and across experimental groups. Independent t-tests can compare cognitive events between groups (e.g., VIADS vs. control groups, experienced vs. inexperienced researchers) [6].
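The multi-level summary can be sketched by first counting events per hypothesis and then averaging those counts at the participant or group level. The records below are hypothetical, and the tuple layout `(group, participant, hypothesis_id, cognitive_event)` is an assumption for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical coded records: (group, participant, hypothesis_id, cognitive_event)
records = [
    ("VIADS", "P1", "H1", "Seeking connections"),
    ("VIADS", "P1", "H1", "Using analysis results"),
    ("VIADS", "P1", "H2", "Analogy"),
    ("control", "P2", "H1", "Using analysis results"),
    ("control", "P2", "H1", "Seeking connections"),
    ("control", "P2", "H1", "Use PICOT"),
    ("control", "P2", "H2", "Data exploration"),
]

def events_per_hypothesis(rows):
    """Count coded events for each (group, participant, hypothesis) combination."""
    counts = defaultdict(int)
    for group, participant, hypothesis, _event in rows:
        counts[(group, participant, hypothesis)] += 1
    return dict(counts)

def mean_events_by(level_index, per_hypothesis):
    """Average events per hypothesis, grouped by key position (0 = group, 1 = participant)."""
    grouped = defaultdict(list)
    for key, n in per_hypothesis.items():
        grouped[key[level_index]].append(n)
    return {name: mean(values) for name, values in grouped.items()}

per_hypothesis = events_per_hypothesis(records)
by_group = mean_events_by(0, per_hypothesis)
by_participant = mean_events_by(1, per_hypothesis)
print(by_group)
print(by_participant)
```

Using the hypothesis as the base unit means the per-hypothesis counts can feed directly into a between-group test.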

Visualization of Research Workflows

[Figure: Think-Aloud Usability Testing Workflow]

[Figure: Hypothesis Generation Cognitive Process]

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Essential Tools for Cognitive Process Research

| Tool Category | Specific Tools | Research Application |

|---|---|---|

| Visualization Tools | VIADS, SPSS, R, Python (Pandas, NumPy, SciPy) | Visual interactive analysis of large health datasets coded with hierarchical terminologies [6] [17] |

| Diagramming Tools | Graphviz, PlantUML, Mermaid.js | Representing structural information as diagrams; creating various diagram types from text definitions [18] [19] |

| Data Collection Tools | BB Flashback, screen recording software, audio recording equipment | Capturing screen activity and verbal protocols during study sessions [6] |

| Qualitative Analysis Tools | Transcription software, qualitative coding applications | Systematic coding and analysis of verbal protocol transcripts for cognitive events [6] |

| Statistical Analysis Tools | SPSS, SAS, R, Excel | Performing statistical analysis on coded cognitive events and hypothesis quality metrics [6] [17] |

Advanced Applications and Future Directions

The integration of think-aloud protocols with emerging technologies offers promising avenues for advancing cognitive process research in scientific domains. Eye tracking combined with think-aloud protocols can show not only where researchers look when analyzing data visualizations but also why they look there [16]. This multimodal approach can reveal viewing patterns and priorities that participants are unaware of and that verbalization alone may not capture.

Future applications could include the development of specialized tools with native support for BPMN (Business Process Model and Notation)-based process mining in scientific workflows [20]. Such tools could leverage think-aloud protocols to better understand how researchers interact with complex process models and identify opportunities for optimizing scientific workflows. The continued refinement of think-aloud methodologies will further enhance our understanding of cognitive processes in scientific research, ultimately accelerating discovery and innovation across scientific domains.

Executing Think-Aloud Studies: A Step-by-Step Guide for Robust Research

Think-Aloud Protocols (TAP) represent a foundational methodology for capturing unstructured data on human cognitive processes during task performance. This qualitative research method requires participants to verbalize their ongoing thoughts, providing researchers with a unique window into problem-solving strategies, decision-making pathways, and perceptual reactions [14] [1]. Within scientific domains including drug development and healthcare research, TAP enables the systematic examination of how professionals interpret complex data, navigate diagnostic processes, and operate sophisticated systems. The method has been validated across diverse fields from usability testing to medical education, establishing its robustness for studying the cognitive underpinnings of professional tasks [14] [21].

The two primary variants—Concurrent Think-Aloud (CTA) and Retrospective Think-Aloud (RTA)—offer distinct approaches to data collection during cognitive process research. CTA captures verbalizations simultaneously with task performance, providing immediate access to unfolding cognitive events. Conversely, RTA collects verbal reports after task completion, typically using recorded sessions as memory prompts [1] [10]. Selection between these methodologies requires careful consideration of research objectives, cognitive load implications, and the nature of the cognitive processes under investigation.

Methodological Foundations and Comparative Analysis

Conceptual Frameworks and Definitions

Concurrent Think-Aloud (CTA) involves continuous verbalization during task execution, providing real-time access to cognitive processes as they occur. Participants articulate their thoughts, expectations, and decision-making rationales while actively engaged with the experimental task [2] [1]. This approach aims to capture cognitive processes with minimal reconstruction or post-hoc rationalization, potentially providing richer data on the intermediate steps between stimulus and response [22].

Retrospective Think-Aloud (RTA) delays verbalization until after task completion, using video recordings, screen captures, or eye-tracking replays to stimulate participant recall [1] [10]. This method reduces dual-task interference but introduces potential memory decay and reconstruction biases. RTA participants first complete tasks silently, then retrospectively report their cognitive processes while reviewing their performance [21] [10].

Empirical Comparative Evidence

Table 1: Quantitative Comparisons Between CTA and RTA Methodologies

| Comparison Metric | Concurrent TAP (CTA) | Retrospective TAP (RTA) | Research Evidence |

|---|---|---|---|

| Protocol segments elicited | Higher number | Fewer segments | Kuusela & Paul, 2000 [22] |

| Insights into intermediate decision steps | More comprehensive | Less comprehensive | Kuusela & Paul, 2000 [22] |

| Statements about final choices | Fewer statements | More statements | Kuusela & Paul, 2000 [22] |

| Task performance | Potentially reduced due to cognitive load | Better task performance | Van den Haak et al., 2004 [21] |

| Observable usability problems | More observable problems | Fewer observable problems | Van den Haak et al., 2004 [21] |

| Compatibility with eye-tracking | Significant distortion of eye-movement data | Minimal impact on eye-tracking metrics | Špiláková et al., 2020 [10] |

Table 2: Practical Implementation Considerations

| Implementation Factor | Concurrent TAP (CTA) | Retrospective TAP (RTA) |

|---|---|---|

| Cognitive load | High (dual-task interference) | Low (sequential tasking) |

| Memory reliability | Not dependent on recall | Subject to memory decay |

| Session duration | Generally shorter | Longer (task + review phases) |

| Participant training | Requires practice examples | Requires clear review procedure |

| Data analysis complexity | Higher volume of verbal data | Potential for post-rationalization |

| Equipment needs | Audio recording sufficient | Requires session recording capability |

Research by Kuusela and Paul demonstrated that CTA generally outperforms RTA for revealing decision-making processes, generating more protocol segments and providing greater insights into intermediate cognitive steps [22]. However, RTA offers the advantage of generating more statements about final choices, potentially providing better data on decision outcomes [22].

Van den Haak and colleagues compared these methods in evaluating online library catalogs, finding that the two methods detected comparable numbers and types of usability problems overall, although CTA surfaced more problems through observation of participant behavior [21]. The methods also differed in task performance: participants in RTA conditions performed better, likely because CTA's dual-task requirement (performing while verbalizing) creates cognitive load that can interfere with primary task execution [21].

When combined with eye-tracking for decision-making research, RTA demonstrates significant methodological advantages. Špiláková et al. found that CTA significantly distorts eye-tracking data, while RTA provides independent behavioral evidence without interfering with natural eye movement patterns [10]. This has important implications for research studying visual attention patterns during complex cognitive tasks.

Experimental Protocols and Application Notes

Standardized Protocol for Concurrent Think-Aloud

Participant Briefing and Training: Begin with a standardized explanation of the CTA method: "I'm going to ask you to think aloud as you work through some tasks. That means I'd like you to say everything you're thinking, what you're looking at, what you're trying to do, and what you're wondering about. Just pretend you're alone in the room speaking to yourself" [2]. Model the process with a demonstration using a practice task unrelated to the research focus. For example, demonstrate thinking aloud while using a stapler: "I'm looking at this stapler and expecting to find some indication of how to open it. I don't see any arrows or instructions, so I'm going to try pulling this part back..." [2]. Then provide a practice task for the participant with constructive feedback.

Data Collection Phase: During task execution, the researcher should use neutral prompts when verbalizations cease: "Remember to keep talking" or "What are you thinking now?" [2]. Avoid leading questions or interpretive responses. If participants ask for help or clarification, respond with: "Right now, I'm just interested in how you would approach this without my help" [2]. Record both audio and screen activity for subsequent analysis.

Moderator Guidelines: Position yourself as a passive observer rather than an interactive participant. Provide minimal intervention while ensuring the participant continues verbalizing. Document observations noting timestamps corresponding to significant behaviors, expressions of confusion, or task difficulties [2] [8].
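Timestamped notes or transcripts also make it possible to audit where verbalization lapsed and a neutral prompt was (or should have been) given. The sketch below flags gaps between utterances. The 15-second threshold and the `(start_seconds, end_seconds)` input format are assumptions for illustration, not values prescribed by the protocol.

```python
def find_silences(utterances, min_gap_seconds=15.0):
    """Return (gap_start, gap_end) pairs with no logged speech for at least min_gap_seconds.

    utterances: list of (start_seconds, end_seconds) tuples sorted by start time.
    """
    gaps = []
    previous_end = None
    for start, end in utterances:
        if previous_end is not None and start - previous_end >= min_gap_seconds:
            gaps.append((previous_end, start))
        # Track the latest end time seen so overlapping utterances are handled.
        previous_end = end if previous_end is None else max(previous_end, end)
    return gaps

# Hypothetical utterance timings from one session, in seconds.
utterances = [(0, 12), (14, 30), (52, 60), (61, 70), (95, 100)]

gaps = find_silences(utterances)
for gap_start, gap_end in gaps:
    print(f"Silence from {gap_start}s to {gap_end}s ({gap_end - gap_start}s)")
```

Comparing the flagged gaps with the moderator's prompt log shows whether prompting was applied consistently across participants.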

Standardized Protocol for Retrospective Think-Aloud

Silent Task Performance Phase: Instruct participants: "Please work through these tasks as you normally would, without feeling any need to verbalize your thoughts. We'll discuss your approach afterward" [21] [10]. Ensure high-quality recording of screen activity, interactions, and if possible, facial expressions or eye-tracking data. This recording will serve as the retrieval cue in the subsequent phase.

Stimulated Recall Phase: Set up the playback system and instruct participants: "As we watch the recording of your session, I'd like you to describe what you were thinking at each point during the tasks. Please pause the recording whenever you have something to report" [10]. Use neutral prompts such as: "Can you remember what you were thinking here?" or "What was your reasoning at this point?" [21]. Avoid leading questions that might suggest particular thought processes.

Minimizing Reconstruction Bias: To reduce post-hoc rationalization, emphasize that you're interested in their actual thoughts during the task, not justifications for their actions. Encourage reporting of even fragmentary thoughts, uncertainties, or minor impressions [10]. Consider focusing on specific decision points or interaction sequences where cognitive processes are of particular theoretical interest.

Decision Framework for Method Selection

When to Prefer Concurrent TAP:

- Research questions focus on immediate cognitive processes rather than decision outcomes [22]

- Studying novice performance where thought processes are more accessible to consciousness

- Lower complexity tasks where dual-task interference is minimal [21]

- Need to capture fleeting impressions or immediate reactions to interface elements

- Resource constraints limit capacity for extended session duration or recording equipment

When to Prefer Retrospective TAP:

- Research involves high cognitive load tasks where simultaneous verbalization would interfere with performance [10]

- Combining with eye-tracking or other physiological measures that could be compromised by concurrent verbalization [10]

- Studying expert performance involving automated procedures difficult to articulate concurrently

- Need to understand final decision rationales and outcome evaluations [22]

- Participants have difficulty verbalizing during task performance (e.g., children, special populations)

Mixed-Methods Approaches: For comprehensive research programs, consider sequential implementation of both methods. CTA can identify problematic areas for deeper investigation using RTA, or RTA can follow CTA to explore specific decision points in greater depth [21].
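The criteria above can be turned into a simple first-pass checklist. The sketch below encodes a subset of them as boolean flags. The `StudyContext` fields and the tie-breaking rules are hypothetical simplifications, and the output is a prompt for discussion rather than a substitute for methodological judgment.

```python
from dataclasses import dataclass

@dataclass
class StudyContext:
    focus_on_intermediate_steps: bool = False
    focus_on_final_rationale: bool = False
    high_cognitive_load_task: bool = False
    uses_eye_tracking: bool = False
    can_record_sessions: bool = True

def suggest_variant(ctx):
    """Return ('CTA' | 'RTA' | 'mixed' | 'either', reasons) as a first-pass suggestion."""
    if not ctx.can_record_sessions:
        return "CTA", ["RTA needs a session recording for stimulated recall"]
    cta, rta = [], []
    if ctx.focus_on_intermediate_steps:
        cta.append("intermediate cognitive steps are the focus")
    if ctx.focus_on_final_rationale:
        rta.append("final decision rationales are the focus")
    if ctx.high_cognitive_load_task:
        rta.append("concurrent verbalization may interfere with a demanding task")
    if ctx.uses_eye_tracking:
        rta.append("concurrent verbalization can distort eye-movement data")
    if cta and rta:
        return "mixed", cta + rta
    if rta:
        return "RTA", rta
    if cta:
        return "CTA", cta
    return "either", []

variant, reasons = suggest_variant(StudyContext(uses_eye_tracking=True))
print(variant, reasons)
```

Returning the reasons alongside the suggestion keeps the rationale visible, which helps when documenting the method choice in a study protocol.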

Essential Research Reagent Solutions

Table 3: Essential Materials and Tools for TAP Implementation

| Research Tool | Function/Purpose | Implementation Notes |

|---|---|---|

| Digital Recording System | Captures screen activity, audio, and facial expressions | Essential for RTA; enables transcription and analysis of verbal reports [1] |

| Stimulated Recall Platform | Playback system for retrospective sessions with pause controls | Enables cued recall in RTA; should synchronize multiple data streams [10] |

| Protocol Transcription Software | Converts verbal reports to text for analysis | Enables qualitative coding of cognitive processes; should include timestamp references [1] |

| Task Scenario Templates | Standardized task descriptions with success criteria | Ensures consistency across participants; should reflect real-world use cases [8] |

| Participant Briefing Scripts | Standardized instructions for thinking aloud | Minimizes researcher bias; includes demonstration examples [2] |

| Neutral Prompting Protocol | Pre-defined non-leading prompts for moderators | Reduces researcher influence; maintains methodological consistency [2] |

| Qualitative Coding Framework | System for categorizing cognitive processes | Enables quantitative analysis of qualitative data; should establish inter-rater reliability [14] |

Methodological rigor in think-aloud research requires careful alignment between research questions and protocol selection. Concurrent TAP offers direct access to unfolding cognitive processes but may interfere with primary task performance. Retrospective TAP minimizes interference but introduces potential memory and reconstruction biases. The decision framework presented here enables researchers to make informed methodological choices based on their specific research context, cognitive process of interest, and practical constraints. When implemented with appropriate protocols and tools, both methods provide valuable insights into the cognitive processes underlying complex decision-making in scientific and healthcare domains.

Participant selection and screening constitute a critical foundation for the validity and reliability of studies employing think-aloud protocols in cognitive process research. Within drug development and scientific research, understanding the cognitive mechanisms behind hypothesis generation, problem-solving, and decision-making is paramount. The think-aloud protocol, a process data method involving participants verbalizing their thoughts concurrently while performing tasks, provides a window into these internal processes [23] [9]. However, the richness of this data is inherently dependent on the careful selection of participants who possess the relevant domain-specific expertise and experiential knowledge. This application note outlines detailed protocols and frameworks for targeting the right participant profile, ensuring the collection of high-quality, actionable cognitive process data in a scientific context.

Core Principles of Participant Selection for Think-Aloud Protocols

Selecting participants for think-aloud studies in specialized fields diverges from quantitative sampling methods. The goal is not statistical representation but deep qualitative insight into cognitive processes.

- Purposive Sampling: Researchers intentionally select individuals based on pre-defined criteria related to specific experiences, knowledge, or qualities [24] [25]. This ensures participants can provide meaningful data on the phenomenon under investigation.

- Sample Size Considerations: Samples are typically small, often ranging from 20 to 50 respondents [24], allowing for in-depth analysis without aiming for statistical generalization. In highly specialized domains, samples may be even smaller; a study with clinical researchers involved 16 participants [6].

- Key Selection Criteria: Primary criteria often include domain expertise, specific task experience, and demographic or professional characteristics relevant to the research question. In a study on hypothesis generation, participants were clinical researchers categorized by experience levels based on years in study design, data analysis, and publication history [6].

Table 1: Core Selection Criteria for Think-Aloud Studies in Scientific Research

| Criterion Category | Description | Example from Clinical Research [6] |
|---|---|---|
| Professional Expertise | Years of experience, specific technical skills, and professional qualifications. | Years of study design and data analysis experience; number of publications. |
| Domain Knowledge | Deep understanding of the specific scientific field or subject matter. | Clinical research background; familiarity with medical datasets and ICD codes. |
| Task Proficiency | Demonstrated ability to perform the activities required by the study task. | Experience with data analysis tools (e.g., SPSS, SAS, R, or specific tools like VIADS). |
| Experiential Grouping | Stratification of participants based on experience level for comparative analysis. | Block randomization into "experienced" and "inexperienced" clinical researcher groups. |
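The criteria in Table 1 can be turned into an explicit screening rule so that every candidate is judged the same way. The following is a minimal sketch, not the procedure used in [6]: the field names, the `Candidate` class, and especially the numeric cut-offs (`MIN_YEARS`, `MIN_PUBLICATIONS`) are hypothetical and should be replaced with the values defined in your own protocol.

```python
from dataclasses import dataclass

# Hypothetical thresholds for illustration; set these from your own protocol.
MIN_YEARS = 5.0
MIN_PUBLICATIONS = 10


@dataclass(frozen=True)
class Candidate:
    candidate_id: str
    clinical_background: bool   # domain knowledge (Table 1)
    years_study_design: float   # professional expertise
    years_data_analysis: float  # professional expertise
    publications: int           # professional expertise
    uses_analysis_tools: bool   # task proficiency (e.g., SPSS, SAS, R)


def is_eligible(c: Candidate) -> bool:
    """Inclusion screen: clinical background and analysis-tool experience."""
    return c.clinical_background and c.uses_analysis_tools


def experience_group(c: Candidate) -> str:
    """Label a candidate 'experienced' or 'inexperienced' (assumed rule)."""
    meets_years = min(c.years_study_design, c.years_data_analysis) >= MIN_YEARS
    meets_pubs = c.publications >= MIN_PUBLICATIONS
    return "experienced" if meets_years and meets_pubs else "inexperienced"


def screen(candidates: list[Candidate]) -> dict[str, str]:
    """Return {candidate_id: experience group} for eligible candidates only."""
    return {c.candidate_id: experience_group(c) for c in candidates if is_eligible(c)}


# Made-up candidates, purely to show the rule in use.
pool = [
    Candidate("P01", True, 8, 7, 15, True),
    Candidate("P02", True, 2, 1, 3, True),
    Candidate("P03", False, 10, 10, 30, True),  # excluded: no clinical background
]
shortlist = screen(pool)
```

Writing the rule down in this form makes the inclusion and stratification decisions auditable, which matters when group membership is later used for comparative analysis.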

Methodological Protocols for Participant Screening and Selection

This section provides a detailed, actionable protocol for screening and selecting participants in studies using think-aloud protocols.

Protocol: Defining Participant Profiles and Recruitment

Objective: To systematically identify, screen, and enroll participants who meet the precise expertise requirements for the cognitive process research study.

Materials: Participant database or recruitment tools, pre-screening questionnaire, informed consent forms.

Workflow Diagram: The following diagram illustrates the sequential workflow for participant screening and selection.

Procedure:

- Define Participant Profiles: Based on the research objectives, explicitly define the characteristics of the target participants. For a study on data analysis tools, this involved defining "experienced" and "inexperienced" clinical researchers using concrete metrics like publication count and years of design experience [6].

- Develop Pre-Screening Instrument: Create a short questionnaire or interview script to assess the defined criteria. This instrument should directly evaluate the key competencies and experiences required.

- Administer Pre-Screen and Initial Filter: Potential participants complete the pre-screening instrument. Their responses are evaluated against the inclusion/exclusion criteria to create a shortlist of eligible candidates.

- Obtain Informed Consent: Eligible candidates are provided with detailed information about the study, including the use of recording devices for screen activity and audio, and their consent is obtained [6].

- Confirm Group Assignment: Finalize the assignment of participants to experimental or control groups using a method like block randomization to ensure balanced groups [6].
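The final step above, block randomization, can be sketched as follows. This is one common variant (permuted blocks within each experience stratum), offered as an assumption about how such an assignment might be implemented rather than as the exact procedure of [6]. The function name, arm labels, block size, and seed are all illustrative.

```python
import random
from collections import defaultdict


def block_randomize(participants, arms=("experimental", "control"),
                    block_size=4, seed=0):
    """Assign participants to arms using permuted blocks within each stratum.

    participants: list of (participant_id, stratum) tuples, e.g.
                  ("P01", "experienced").
    Returns a dict {participant_id: arm}.
    """
    if block_size % len(arms) != 0:
        raise ValueError("block_size must be a multiple of the number of arms")

    rng = random.Random(seed)  # fixed seed keeps the allocation reproducible

    # Group participant IDs by stratum so each stratum is balanced separately.
    by_stratum = defaultdict(list)
    for pid, stratum in participants:
        by_stratum[stratum].append(pid)

    assignment = {}
    for pids in by_stratum.values():
        for start in range(0, len(pids), block_size):
            # Each block holds every arm equally often, in shuffled order.
            block = list(arms) * (block_size // len(arms))
            rng.shuffle(block)
            for pid, arm in zip(pids[start:start + block_size], block):
                assignment[pid] = arm
    return assignment


# Illustrative roster: 8 experienced and 8 inexperienced participants.
roster = [(f"E{i}", "experienced") for i in range(8)] + \
         [(f"I{i}", "inexperienced") for i in range(8)]
allocation = block_randomize(roster)
```

Because every complete block contains each arm equally often, the arms stay balanced within each experience level even if recruitment stops early; only a final incomplete block can introduce a small imbalance.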

Protocol: Integrating Think-Aloud and Cognitive Interviewing Techniques

Objective: To effectively implement the think-aloud protocol during the study session and probe deeper into cognitive processes.

Materials: Pre-defined task materials, audio and screen recording equipment (e.g., BB Flashback) [6], interview protocol with cognitive probes.

Procedure:

- Think-Aloud Training: Before the task begins, train participants in the think-aloud technique. Demonstrate concurrent verbalization yourself, as participants may find it unfamiliar [25], and instruct them to verbalize their thoughts continuously as they work.

- Task Administration: Participants are then given a specific task to complete, such as analyzing a dataset to generate scientific hypotheses [6].

- Data Recording: Record the entire session, including screen activity and audio, for subsequent transcription and analysis [6].

- Interviewer Probing: After the task or at natural breakpoints, use semi-structured cognitive probes to explore thought processes more deeply. Probes can target specific cognitive stages [24]:

  - Comprehension: "What do you think this term means in this context?"

  - Recall: "How did you remember that specific piece of information?"

  - Judgment: "How certain are you about that conclusion?"

  - Response: "What was your reasoning for selecting that answer?"

  - General: "Can you tell me more about how you reached that decision?" [24]
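To keep probing consistent across sessions and moderators, the probes listed above can be stored as a fixed lookup and assembled into a per-session script. The sketch below is illustrative: the probe wording comes from the list above, while the stage keys and the `build_probe_script` helper are hypothetical names introduced here.

```python
# Probe wording is taken from the list above; the dictionary keys and the
# helper function are illustrative, not part of any cited protocol.
COGNITIVE_PROBES = {
    "comprehension": "What do you think this term means in this context?",
    "recall": "How did you remember that specific piece of information?",
    "judgment": "How certain are you about that conclusion?",
    "response": "What was your reasoning for selecting that answer?",
    "general": "Can you tell me more about how you reached that decision?",
}


def build_probe_script(stages):
    """Return numbered probe lines for the requested stages, in order."""
    unknown = [s for s in stages if s not in COGNITIVE_PROBES]
    if unknown:
        raise KeyError(f"Unknown probe stage(s): {unknown}")
    return [f"{i}. [{s}] {COGNITIVE_PROBES[s]}"
            for i, s in enumerate(stages, start=1)]


# Example: a short script for a breakpoint after a hypothesis is stated.
script = build_probe_script(["comprehension", "judgment", "general"])
```

Fixing the wording in one place means moderators read the same non-leading question each time, and the stage label can be carried into the transcript to simplify later coding.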

Application in Drug Development and Clinical Research

The principles of participant selection are highly relevant to the drug development pipeline, particularly in early discovery phases where expert reasoning is crucial.

Connecting Cognitive Research to Drug Discovery: The process of hypothesis generation is fundamental to early drug discovery, where targets are identified and validated [26]. Understanding how researchers analyze complex biological data to form these hypotheses can streamline this initial phase. Think-aloud protocols can be used to study the cognitive processes of discovery scientists as they identify novel drug targets or interpret high-throughput screening data [26] [27].

Table 2: Research Reagent Solutions for Cognitive Process Studies

| Item / Solution | Function in Research |
|---|---|
| Visual Interactive Analysis Tool (e.g., VIADS) | Provides the interface and data environment for participants to perform analytical tasks, enabling the study of tool-guided hypothesis generation [6]. |
| Pre-screening Questionnaire | A tool to systematically filter and select participants based on pre-defined expertise criteria, ensuring the recruitment of the correct participant profile [6]. |
| Audio and Screen Recording Software (e.g., BB Flashback) | Captures the full context of the participant's actions and verbalizations, which are later transcribed and coded for cognitive events [6]. |
| Cognitive Probe Protocol | A semi-structured set of questions used by the interviewer to delve deeper into the participant's thought processes after a task, uncovering comprehension, recall, and judgment mechanisms [24] [25]. |
| Coding Scheme for Transcripts | A framework of defined cognitive events (e.g., "Seeking connections," "Using analysis results") used to quantitatively and qualitatively analyze the transcribed verbal data [6] [23]. |
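Once transcripts are coded with such a scheme, two routine computations are event frequencies and agreement between independent coders. The sketch below computes Cohen's kappa from first principles for two coders who labeled the same transcript segments. The segment labels are made-up example data, the two event names are borrowed from the table above, and the choice of Cohen's kappa is an assumption; the source does not state which agreement statistic was used.

```python
from collections import Counter


def cohen_kappa(coder_a, coder_b):
    """Cohen's kappa for two coders labeling the same ordered segments."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must label the same non-empty set of segments")
    n = len(coder_a)

    # Observed agreement: share of segments given the same code by both coders.
    observed = sum(a == b for a, b in zip(coder_a, coder_b)) / n

    # Chance agreement: product of each coder's marginal rate, summed over codes.
    freq_a, freq_b = Counter(coder_a), Counter(coder_b)
    labels = freq_a.keys() | freq_b.keys()
    expected = sum(freq_a[label] * freq_b[label] for label in labels) / (n * n)

    if expected == 1.0:  # both coders used one identical code throughout
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Made-up codes for four transcript segments (illustration only).
SEEK, USE = "Seeking connections", "Using analysis results"
coder_a = [SEEK, SEEK, USE, USE]
coder_b = [SEEK, SEEK, USE, SEEK]

kappa = cohen_kappa(coder_a, coder_b)   # observed 0.75, chance 0.5
event_counts = Counter(coder_a)         # frequency of each cognitive event
```

In this toy example the coders agree on three of four segments, and chance agreement is 0.5, so kappa is 0.5. Reporting kappa alongside raw agreement guards against overstating reliability when one code dominates the transcript.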

The following diagram maps how participant selection and cognitive data collection integrate with and inform key stages of the broader drug discovery and development workflow.

Rigorous participant selection and screening are not merely preliminary steps but are integral to the success of think-aloud studies aimed at understanding cognitive processes in scientific and drug development research. By employing purposive sampling, defining clear expertise-based criteria, and implementing structured protocols that combine think-aloud methods with cognitive interviewing, researchers can ensure the collection of high-fidelity data. The insights gleaned from such meticulously conducted studies have the potential to refine analytical tools, optimize research workflows, and ultimately accelerate the path from scientific question to therapeutic solution.

Crafting Effective Tasks and Clear Instructions for Unbiased Data Collection

In cognitive process research, particularly within drug development, the integrity of collected data is paramount. Biased data can skew research outcomes, leading to flawed conclusions with significant scientific and financial repercussions [28]. The think-aloud protocol, a primary method for eliciting verbal reports on cognitive processes, is especially vulnerable to biases introduced through poorly designed tasks and instructions [1]. This section provides detailed application notes and protocols for crafting experimental tasks and instructions that minimize bias. The principles outlined are essential for researchers and scientists aiming to ensure the validity and reliability of their data in high-stakes environments.

Understanding Bias in Verbal Data Collection

Common Biases and Their Impact on Research

Bias in data collection refers to systematic errors that influence results in a particular direction [28]. In the context of think-aloud protocols for cognitive research, several biases are particularly relevant:

- Confirmation Bias: This occurs when researchers, consciously or unconsciously, design tasks or phrase instructions in ways that lead participants toward expected outcomes [28]. For example, in a study on drug decision-making, leading questions might steer participants to report focusing on specific efficacy data while overlooking side effects.

- Selection Bias: This bias arises when the participant pool or the sampled cognitive tasks do not represent the full spectrum of the population or cognitive processes under investigation [28]. If a cognitive study on diagnostic reasoning only uses simple cases, the collected verbal data will not reflect the complex, ambiguous scenarios encountered in real-world practice.

- Response Bias: This includes factors like social desirability, where participants may alter their verbal reports to present themselves as more competent or rational thinkers [28]. Participants might also try to provide what they believe the researcher wants to hear rather than their genuine thought processes.

The impact of such biases can be profound. In drug development, biased data collection can blind companies to market opportunities, stifle innovation, decrease decision-making quality, and ultimately put patients at risk if cognitive processes related to drug safety evaluations are not accurately understood [28].

The Think-Aloud Protocol: Foundations and Vulnerabilities

The think-aloud protocol is a method used to gather data in usability testing, psychology, and a range of social sciences, including decision-making and process tracing research [1]. It involves participants thinking aloud as they perform specified tasks, verbalizing whatever comes into their mind, including what they are looking at, thinking, doing, and feeling.

There are two primary types of think-aloud protocols:

- Concurrent Think-Aloud: Collected during the task execution.

- Retrospective Think-Aloud: Gathered after the task as the participant walks back through the steps they took, often prompted by a video recording [1].

While the concurrent protocol may provide more complete data, the retrospective approach has less chance of interfering with task performance [1]. Both are vulnerable to bias if the tasks and instructions are not meticulously designed.

Protocols for Designing Unbiased Tasks and Instructions

A General Framework for Experimental Protocols

A robust experimental protocol is like a recipe for running your experiment. It must be thorough enough that a competent psychologist from outside the lab could run the study correctly from the script alone [29]. The protocol should typically include the following sections:

- Setting Up: The protocol should begin with all procedures required before the first participant arrives. This includes rebooting computers, applying specific settings (e.g., for screen color temperature, volume), and arranging the workspace. Set-up should be complete at least 10 minutes before the participant is expected [29].

- Greeting and Consent: Guide participants to the lab space so that finding it does not become a source of stress. Once they are settled, the first formal activity is obtaining informed consent. Researchers should emphasize the main points of the consent document and create a welcoming environment [29].

- Instructions and Practice: This is a critical phase for mitigating bias.